手把手带你部署K8s二进制集群

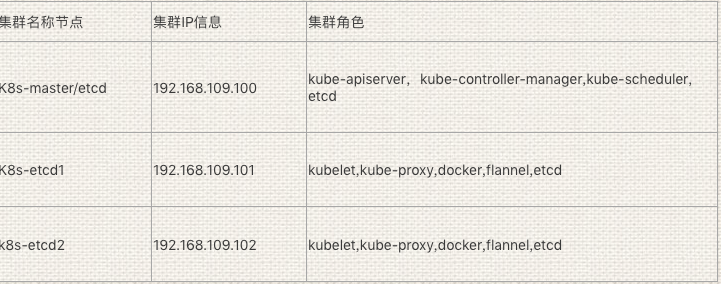

集群环境准备:

【etcd集群证书生成】

#mkdir -p k8s/{k8s-cert,etcd-cert}

#cd k8s/etcd-cert/

#cat > ca-config.json <<EOF

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"www": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

EOF #cat > ca-csr.json <<EOF

{

"CN": "etcd CA",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Beijing",

"ST": "Beijing"

}

]

}

EOF #cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

#cat > server-csr.json <<EOF

{

"CN": "etcd",

"hosts": [

"192.168.109.100",

"192.168.109.101",

"192.168.109.102"

],

"key": {

"algo": "rsa",

"size":

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing"

}

]

}

EOF

#cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=www server-csr.json | cfssljson -bare server

[root@#k8s-master etcd-cert]# ls

ca-config.json ca.csr ca-csr.json ca-key.pem ca.pem server.csr server-csr.json server-key.pem server.pem

Ps:如果在生成证书过程中出现没有cfssl命令时候,需要通过下载安装

curl -L https://pkg.cfssl.org/R1.2/cfssl_linux-amd64 -o /usr/local/bin/cfssl

curl -L https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64 -o /usr/local/bin/cfssljson

curl -L https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64 -o /usr/local/bin/cfssl-certinfo

chmod +x /usr/local/bin/cfssl /usr/local/bin/cfssljson /usr/local/bin/cfssl-certinfo

【安装etcd节点】

#tar zvf etcd-v3.3.10-linux-amd64.tar.gz #将解压的etcd二进制软件包解压到

# cd etcd-v3.3.10-linux-amd64

#mkdir /opt/etcd/{cfg,bin,ssl} -p #创建etcd配置配置/启动/证书/文件

[root@k8s-master soft]# mv ./etcd-v3.3.10-linux-amd64/{etcd,etcdctl} /opt/etcd/bin/ #将etcd下解压之后的etcd和etcdctl两个启动文件拷贝到bin目录下

【etcd证书植入到etcd目录】

[root@k8s-master k8s]# cp /root/k8s/etcd-cert/{ca*pem,server*pem} /opt/etcd/ssl/ #将在etcd节点生成的证书拷贝到新建的/opt/etcd/ssl中

[root@k8s-master k8s]# vim /opt/etcd/cfg/etcd

#[Member]

ETCD_NAME="etcd01"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.109.100:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.109.100:2379" #[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.109.100:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.109.100:2379"

ETCD_INITIAL_CLUSTER="etcd01=https://192.168.109.100:2380,etcd02=https://192.168.109.101:2380,etcd03=https://192.168.109.102:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

参数详解:

ETCD_NAME 节点名称

ETCD_DATA_DIR 数据目录

ETCD_LISTEN_PEER_URLS 集群通信监听地址

ETCD_LISTEN_CLIENT_URLS 客户端访问监听地址

ETCD_INITIAL_ADVERTISE_PEER_URLS 集群通告地址

ETCD_ADVERTISE_CLIENT_URLS 客户端通告地址

ETCD_INITIAL_CLUSTER 集群节点地址

ETCD_INITIAL_CLUSTER_TOKEN 集群Token

ETCD_INITIAL_CLUSTER_STATE 加入集群的当前状态,new是新集群,existing表示加入已有集群

【添加systemd】

#vim /usr/lib/systemd/system/etcd.service #配置etcd服务由systemd管理

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target [Service]

Type=notify

EnvironmentFile=${WORK_DIR}/cfg/etcd

ExecStart=${WORK_DIR}/bin/etcd \

--name=\${ETCD_NAME} \

--data-dir=\${ETCD_DATA_DIR} \

--listen-peer-urls=\${ETCD_LISTEN_PEER_URLS} \

--listen-client-urls=\${ETCD_LISTEN_CLIENT_URLS},http://127.0.0.1:2379 \

--advertise-client-urls=\${ETCD_ADVERTISE_CLIENT_URLS} \

--initial-advertise-peer-urls=\${ETCD_INITIAL_ADVERTISE_PEER_URLS} \

--initial-cluster=\${ETCD_INITIAL_CLUSTER} \

--initial-cluster-token=\${ETCD_INITIAL_CLUSTER_TOKEN} \

--initial-cluster-state=new \

--cert-file=${WORK_DIR}/ssl/server.pem \

--key-file=${WORK_DIR}/ssl/server-key.pem \

--peer-cert-file=${WORK_DIR}/ssl/server.pem \

--peer-key-file=${WORK_DIR}/ssl/server-key.pem \

--trusted-ca-file=${WORK_DIR}/ssl/ca.pem \

--peer-trusted-ca-file=${WORK_DIR}/ssl/ca.pem

Restart=on-failure

LimitNOFILE=65536 [Install]

WantedBy=multi-user.target

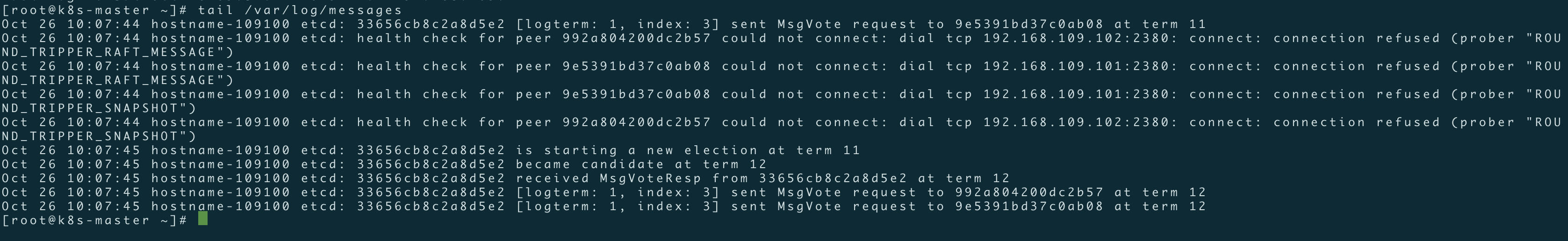

Ps:配置完毕第一个etcd节点之后,启动一个节点的话,是无法正常启动的,需要保证其它两个节点etcd服务处理监听状态~

将第一个etcd节点的etcd配置文件/证书文件/二进制启动文件/systemd管理的etcd启动文件拷贝到其它节点上去(ps:拷贝到其它节点之后,注意修改etcd配置文件中对应的IP信息)

[root@k8s-master k8s]# scp -r /opt/etcd/ root@192.168.109.101:/opt/

[root@k8s-master k8s]# scp -r /usr/lib/systemd/system/etcd.service root@192.168.109.101:/usr/lib/systemd/system/etcd.service

[root@k8s-master k8s]# scp -r /opt/etcd/ root@192.168.109.102:/opt/

[root@k8s-master k8s]# scp -r /usr/lib/systemd/system/etcd.service root@192.168.109.102:/usr/lib/systemd/system/etcd.service

#systemctl daemon-reload

#systemctl enable etcd

#systemctl start etcd

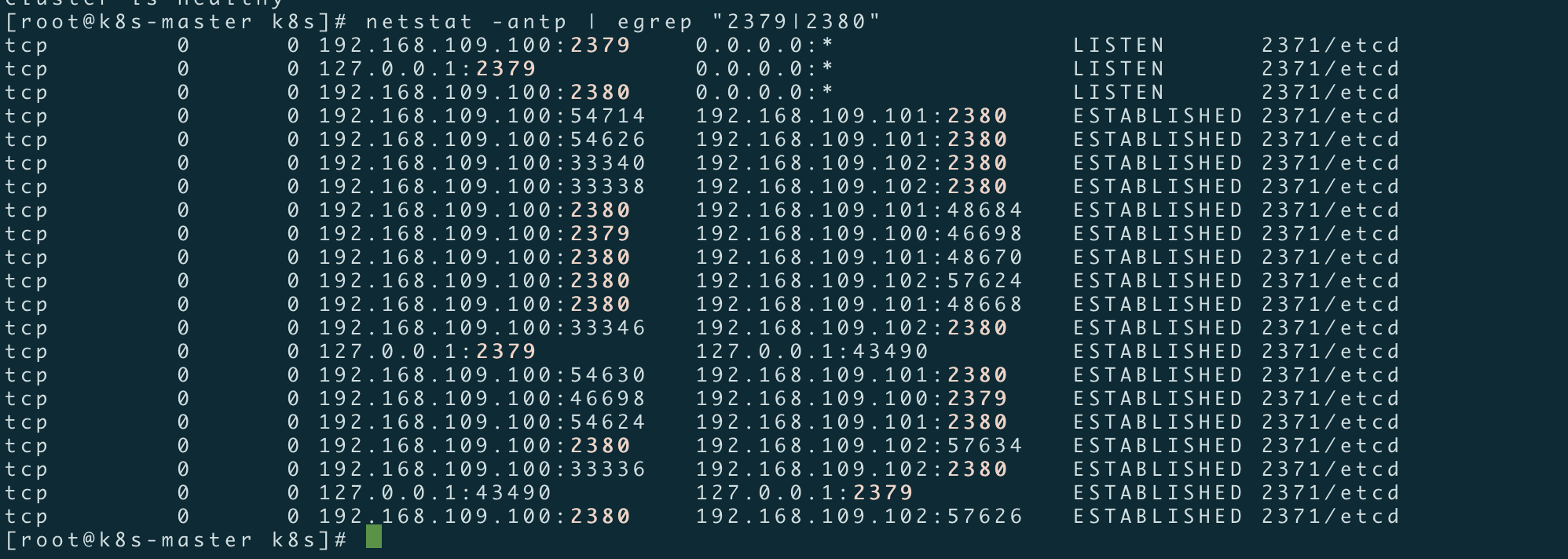

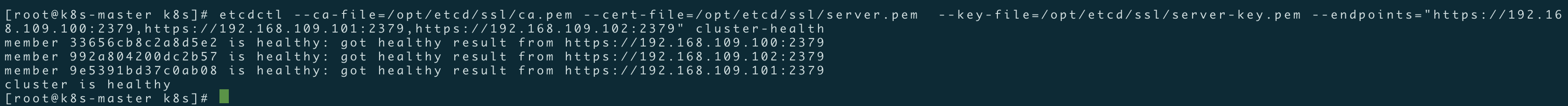

ETCD集群节点状态检查

[root@k8s-master k8s]# ln -s /opt/etcd/bin/etcdctl /usr/bin/

[root@k8s-master k8s]# etcdctl --ca-file=/opt/etcd/ssl/ca.pem --cert-file=/opt/etcd/ssl/server.pem --key-file=/opt/etcd/ssl/server-key.pem --endpoints="https://192.168.109.100:2379,https://192.168.109.101:2379,https://192.168.109.102:2379" cluster-health

member 33656cb8c2a8d5e2 is healthy: got healthy result from https://192.168.109.100:2379

member 992a804200dc2b57 is healthy: got healthy result from https://192.168.109.102:2379

member 9e5391bd37c0ab08 is healthy: got healthy result from https://192.168.109.101:2379

cluster is healthy

【k8s-node1/2节点部署docker】

Docker安装

[root@k8s-node01 ~]# yum install -y yum-utils device-mapper-persistent-data lvm2

[root@k8s-node01 ~]# yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

[root@k8s-node01 ~]# yum makecache fast

[root@k8s-node01 ~]#yum -y install docker-ce

[root@k8s-node01 ~]# curl -sSL https://get.daocloud.io/daotools/set_mirror.sh | sh -s http://f1361db2.m.daocloud.io #配置docker加速器

【写入分配的子网段到etcd,提供给flanneld使用】

[root@k8s-node1 ~]# etcdctl --ca-file=/opt/etcd/ssl/ca.pem --cert-file=/opt/etcd/ssl/server.pem --key-file=/opt/etcd/ssl/server-key.pem --endpoints="https://192.168.109.100:2379,https://192.168.109.101:2379,https://192.168.109.102:2379" set /coreos.com/network/config '{ "Network": "172.17.0.0/16", "Backend": {"Type": "vxlan"}}'

{ "Network": "172.17.0.0/16", "Backend": {"Type": "vxlan"}}

[root@k8s-node1 ~]# /opt/etcd/bin/etcdctl --ca-file=/opt/etcd/ssl/ca.pem --cert-file=/opt/etcd/ssl/server.pem --key-file=/opt/etcd/ssl/server-key.pem --endpoints="https://192.168.109.100:2379,https://192.168.109.101:2379,https://192.168.109.102:2379" get /coreos.com/network/config

{ "Network": "172.17.0.0/16", "Backend": {"Type": "vxlan"}}

【在所有node节点部署flanneld服务】

https://github.com/coreos/flannel/releases

[root@k8s-node1 k8s]# mkdir -p /opt/kubernetes/{bin,cfg/ssl}

[root@k8s-node1 sort]# tar zxvf flannel-v0.10.0-linux-amd64.tar.gz

[root@k8s-node1 sort]# mv flannel mk-docker-opts.sh /opt/kubernetes/bin/ #将二进制启动文件拷贝到/opt/kubernetes/bin目录

[root@k8s-node1 ~]# vim /opt/kubernetes/cfg/flanneld #配置flanneld网络

FLANNEL_OPTIONS="--etcd-endpoints=https://192.168.109.100:2379,https://192.168.109.101:2379,https://192.168.109.102:2379 -etcd-cafile=/opt/etcd/ssl/ca.pem -etcd-certfile=/opt/etcd/ssl/server.pem -etcd-keyfile=/opt/etcd/ssl/server-key.pem"

[root@k8s-node1 ~]# vim /usr/lib/systemd/system/flanneld.service 在node1以及node2节点配置flanned启动脚本,由systemd管理

Description=Flanneld overlay address etcd agent

After=network-online.target network.target

Before=docker.service [Service]

Type=notify

EnvironmentFile=/opt/kubernetes/cfg/flanneld

ExecStart=/opt/kubernetes/bin/flanneld --ip-masq $FLANNEL_OPTIONS

ExecStartPost=/opt/kubernetes/bin/mk-docker-opts.sh -k DOCKER_NETWORK_OPTIONS -d /run/flannel/subnet.env

Restart=on-failure [Install]

WantedBy=multi-user.target

[root@k8s-node1 ~]# vim /usr/lib/systemd/system/docker.service #修改设置docker由systemd管理(默认安装docker之后会妇女在systemd管理的docker启动文件,这里修改是为了整合flanneld网络)

[Unit]

Description=Docker Application Container Engine

Documentation=https://docs.docker.com

After=network-online.target firewalld.service

Wants=network-online.target [Service]

Type=notify

EnvironmentFile=/run/flannel/subnet.env

ExecStart=/usr/bin/dockerd $DOCKER_NETWORK_OPTIONS

ExecReload=/bin/kill -s HUP $MAINPID

LimitNOFILE=infinity

LimitNPROC=infinity

LimitCORE=infinity

TimeoutStartSec=0

Delegate=yes

KillMode=process

Restart=on-failure

StartLimitBurst=3

StartLimitInterval=60s [Install]

WantedBy=multi-user.target

将对node1所做的配置复用拷贝到另一个节点

[root@k8s-node1 ~]# scp -r /opt/kubernetes/ root@192.168.109.102:/opt/kubernetes/

[root@k8s-node1 ~]# scp -r /usr/lib/systemd/system/{flanneld.service,docker.service} root@192.168.109.102:/usr/lib/systemd/system/

启动flaneld/docker服务

在node1以及node2节点上启动flanneld以及docker服务,并配置自启动;

# systemctl enable flanneld

#systemctl restart flanneld

# systemctl restart docker

# systemctl enable docker

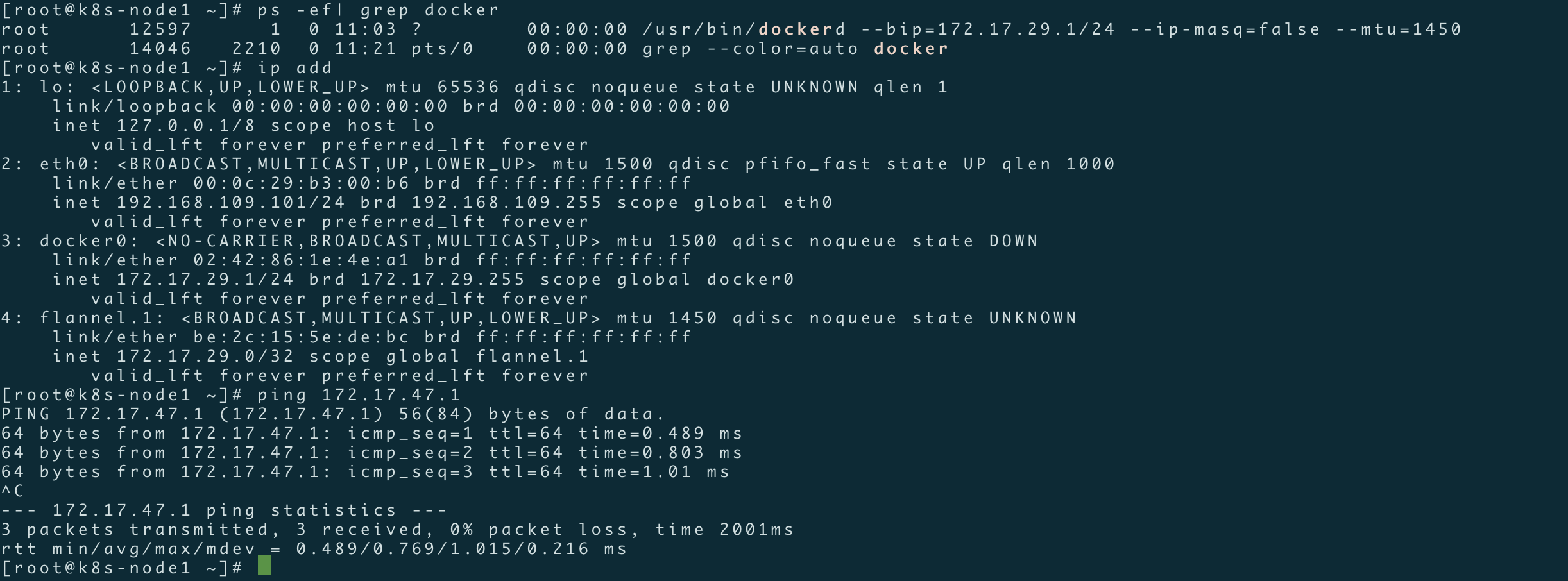

检测是否生效

确保docker和flanneld.1在同一个网段

测试不通节点互通,在当前节点访问另一个node节点docker0 IP

【Master节点】

https://github.com/kubernetes/kubernetes/releases

[root@k8s-master ~]# mkdir -p /opt/kubernetes/{cfg,bin,ssl}

[root@#hostname-109100 ~]# tar zxvf kubernetes-server-linux-amd64.tar.gz

[root@k8s-master soft]# mv ./kubernetes/server/bin/{kube-apiserver,kube-controller-manager,kube-scheduler,kubectl} /opt/kubernetes/bin/

Master证书的生成

[root@#hostname-109100 k8s-cert]# vim ca-config.json

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"kubernetes": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

[root@#hostname-109100 k8s-cert]# vim ca-csr.json

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Beijing",

"ST": "Beijing",

"O": "k8s",

"OU": "System"

}

]

}

[root@#hostname-109100 k8s-cert]# cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

[root@#hostname-109100 k8s-cert]#vim server-csr.json

{

"CN": "kubernetes",

"hosts": [

"10.0.0.1",

"127.0.0.1",

"192.168.109.100",

"192.168.109.101",

"192.168.109.102",

"192.168.109.103",

"192.168.109.104",

"192.168.109.105",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

[root@#hostname-109100 k8s-cert]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes server-csr.json | cfssljson -bare server

[root@#hostname-109100 k8s-cert]# vim admin-csr.json

{

"CN": "admin",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "system:masters",

"OU": "System"

}

]

}

[root@#hostname-109100 k8s-cert]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes admin-csr.json | cfssljson -bare admin

[root@#hostname-109100 k8s-cert]# vim kube-proxy-csr.json

{

"CN": "system:kube-proxy",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

[root@#hostname-109100 k8s-cert]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

[root@k8s-master k8s]# cp /root/k8s/k8s-cert/{ca.pem,ca-key.pem,server.pem,server-key.pem} /opt/kubernetes/ssl/ #将生成的ca.pem,ca.pem, server.pem,server-key.pem四个证书拷贝到创建的/opt/kubernetes/ssl/目录中

[root@#hostname-109100 k8s]# BOOTSTRAP_TOKEN=0fb61c46f8991b718eb38d27b605b008 #自定义tokey变量值

[root@k8s-master k8s]# cat > token.csv <<EOF

> ${BOOTSTRAP_TOKEN},kubelet-bootstrap,10001,"system:kubelet-bootstrap"

> EOF

[root@#hostname-109100 k8s]# cat token.csv

0fb61c46f8991b718eb38d27b605b008,kubelet-bootstrap,10001,"system:kubelet-bootstrap"

[root@#hostname-109100 k8s]# mv token.csv /opt/kubernetes/cfg/ #将token.csv文件拷贝到kubernetes的主目录(cfg)里;

[root@k8s-master k8s]# vim /opt/kubernetes/cfg/kube-apiserver

KUBE_APISERVER_OPTS="--logtostderr=false \

--log-dir=/opt/kubernetes/logs \

--v=4 \

--etcd-servers=https://192.168.109.100:2379,https://192.168.109.101:2379,https://192.168.109.102:2379 \

--bind-address=192.168.109.100 \

--secure-port=6443 \

--advertise-address=192.168.109.100 \

--allow-privileged=true \

--service-cluster-ip-range=10.0.0.0/24 \

--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,ResourceQuota,NodeRestriction \

--authorization-mode=RBAC,Node \

--kubelet-https=true \

--enable-bootstrap-token-auth \

--token-auth-file=/opt/kubernetes/cfg/token.csv \

--service-node-port-range=30000-50000 \

--tls-cert-file=/opt/kubernetes/ssl/server.pem \

--tls-private-key-file=/opt/kubernetes/ssl/server-key.pem \

--client-ca-file=/opt/kubernetes/ssl/ca.pem \

--service-account-key-file=/opt/kubernetes/ssl/ca-key.pem \

--etcd-cafile=/opt/etcd/ssl/ca.pem \

--etcd-certfile=/opt/etcd/ssl/server.pem \

--etcd-keyfile=/opt/etcd/ssl/server-key.pem"

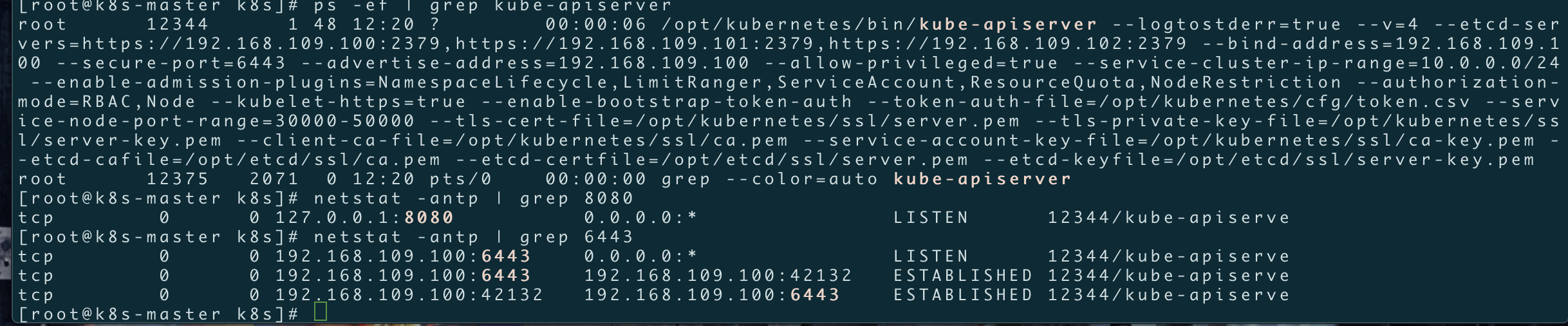

参数说明:

--logtostderr 启用日志

---v 日志等级

--etcd-servers etcd集群地址

--bind-address 监听地址

--secure-port https安全端口

--advertise-address 集群通告地址

--allow-privileged 启用授权

--service-cluster-ip-range Service虚拟IP地址段

--enable-admission-plugins 准入控制模块

--authorization-mode 认证授权,启用RBAC授权和节点自管理

--enable-bootstrap-token-auth 启用TLS bootstrap功能,后面会讲到

--token-auth-file token文件

--service-node-port-range Service Node类型默认分配端口范围

[root@#hostname-109100 ~]# vim /usr/lib/systemd/system/kube-apiserver.service #设置systemd管理kube-apiserver服务启动

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes [Service]

EnvironmentFile=-/opt/kubernetes/cfg/kube-apiserver

ExecStart=/opt/kubernetes/bin/kube-apiserver $KUBE_APISERVER_OPTS

Restart=on-failure [Install]

WantedBy=multi-user.target

[root@k8s-master k8s]# systemctl restart kube-apiserver

[root@k8s-master k8s]# systemctl enable kube-apiserver

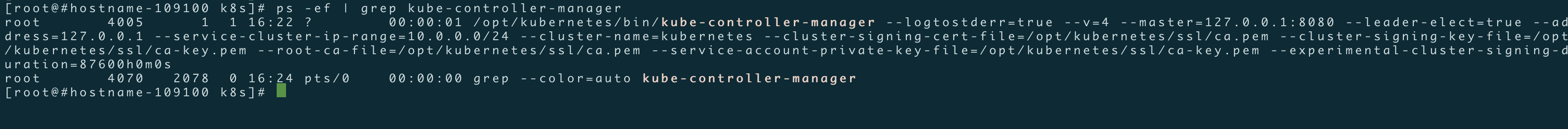

[root@#hostname-109100 k8s]# vim /opt/kubernetes/cfg/kube-controller-manager #配置kube-controller-manager文件

KUBE_CONTROLLER_MANAGER_OPTS="--logtostderr=true \

--v=4 \

--master=127.0.0.1:8080 \

--leader-elect=true \

--address=127.0.0.1 \

--service-cluster-ip-range=10.0.0.0/24 \

--cluster-name=kubernetes \

--cluster-signing-cert-file=/opt/kubernetes/ssl/ca.pem \

--cluster-signing-key-file=/opt/kubernetes/ssl/ca-key.pem \

--root-ca-file=/opt/kubernetes/ssl/ca.pem \

--service-account-private-key-file=/opt/kubernetes/ssl/ca-key.pem \

--experimental-cluster-signing-duration=87600h0m0s"

[root@#hostname-109100 k8s]# vim /usr/lib/systemd/system/kube-controller-manager.service #配置kube-controller-manager服务启动

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/kubernetes/kubernetes [Service]

EnvironmentFile=-/opt/kubernetes/cfg/kube-controller-manager

ExecStart=/opt/kubernetes/bin/kube-controller-manager $KUBE_CONTROLLER_MANAGER_OPTS

Restart=on-failure [Install]

WantedBy=multi-user.target

[root@#hostname-109100 k8s]# systemctl restart kube-controller-manager

[root@#hostname-109100 k8s]# systemctl enable kube-controller-manager

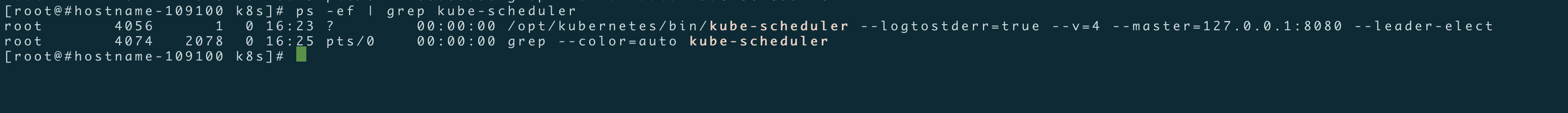

[root@#hostname-109100 k8s]# vim /opt/kubernetes/cfg/kube-scheduler #创建schduler配置文件

KUBE_SCHEDULER_OPTS="--logtostderr=true \

--v=4 \

--master=127.0.0.1:8080 \

--leader-elect"

参数详解:

--master #连接本地的apiserver

--leader-elect #当该组件启动多个时,自动选举(HA)

[root@k8s-master k8s]# vim /usr/lib/systemd/system/kube-scheduler.service #systemd管理scheduler

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/kubernetes/kubernetes [Service]

EnvironmentFile=-/opt/kubernetes/cfg/kube-scheduler

ExecStart=/opt/kubernetes/bin/kube-scheduler $KUBE_SCHEDULER_OPTS

Restart=on-failure [Install]

WantedBy=multi-user.target

[root@#hostname-109100 k8s]# systemctl enable kube-scheduler

[root@#hostname-109100 k8s]# systemctl restart kube-scheduler

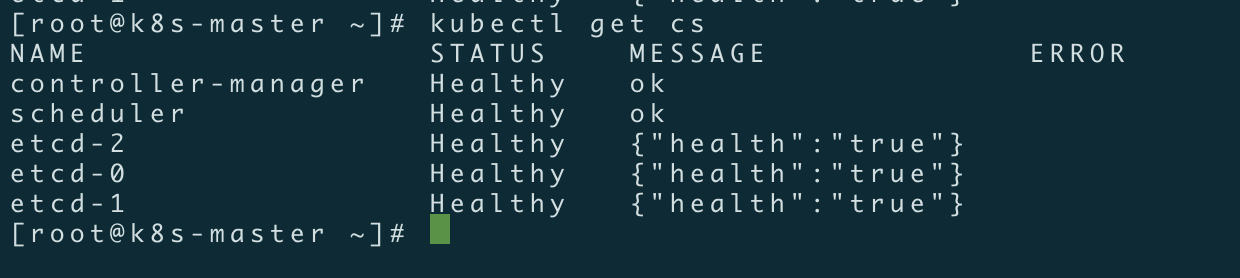

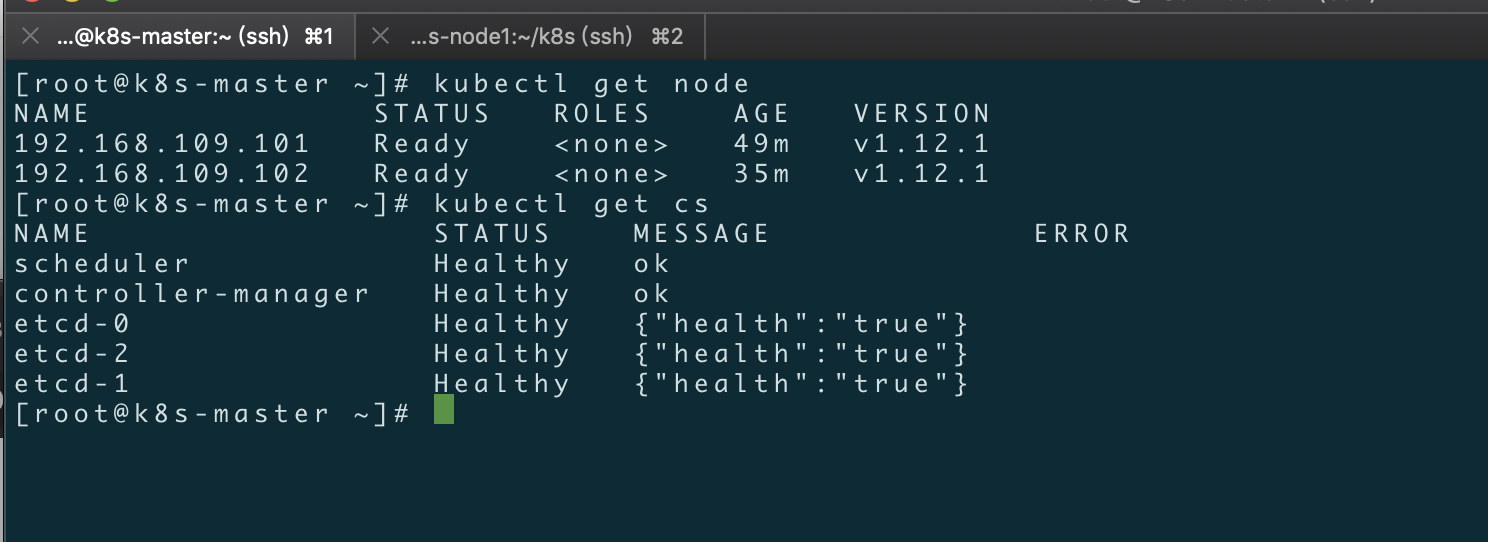

当所有的组件启动成功之后,通过kubectl工具查看当前集群组件状态;

[root@k8s-master ~]# ln -s /opt/kubernetes/bin/kubectl /usr/bin/

[root@k8s-master ~]# kubectl get cs #检查k8s集群状态

[root@k8s-master ~]# cat /opt/kubernetes/cfg/token.csv

0fb61c46f8991b718eb38d27b605b008,kubelet-bootstrap,10001,"system:kubelet-bootstrap"

[root@k8s-master ~]# kubectl create clusterrolebinding kubelet-bootstrap --clusterrole=system:node-bootstrapper --user=kubelet-bootstrap

[root@hostname-109100 k8s]# vim kubeconfig.sh #由于配置kubeconfig文件步骤较为繁琐,这里给出一个关于kubeconfig脚本,在生成kubernetes证书目录下执行生成kubeconfig文件

BOOTSTRAP_TOKEN=0fb61c46f8991b718eb38d27b605b008

APISERVER=$1

SSL_DIR=$2

export KUBE_APISERVER="https://$APISERVER:6443"

kubectl config set-cluster kubernetes \

--certificate-authority=$SSL_DIR/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=bootstrap.kubeconfig

kubectl config set-credentials kubelet-bootstrap \

--token=${BOOTSTRAP_TOKEN} \

--kubeconfig=bootstrap.kubeconfig

kubectl config set-context default \

--cluster=kubernetes \

--user=kubelet-bootstrap \

--kubeconfig=bootstrap.kubeconfig

kubectl config use-context default --kubeconfig=bootstrap.kubeconfig

kubectl config set-cluster kubernetes \

--certificate-authority=$SSL_DIR/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=kube-proxy.kubeconfig

kubectl config set-credentials kube-proxy \

--client-certificate=$SSL_DIR/kube-proxy.pem \

--client-key=$SSL_DIR/kube-proxy-key.pem \

--embed-certs=true \

--kubeconfig=kube-proxy.kubeconfig

kubectl config set-context default \

--cluster=kubernetes \

--user=kube-proxy \

--kubeconfig=kube-proxy.kubeconfig

kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig

[root@hostname-109100 k8s]# sh kubeconfig.sh 192.168.109.100 /root/k8s/k8s-cert/ #指定master主机IP地址后面跟上k8s证书目录;

[root@hostname-109100 k8s]# scp bootstrap.kubeconfig kube-proxy.kubeconfig root@192.168.109.101:/opt/kubernetes/cfg/

[root@hostname-109100 k8s]# scp bootstrap.kubeconfig kube-proxy.kubeconfig root@192.168.109.102:/opt/kubernetes/cfg/

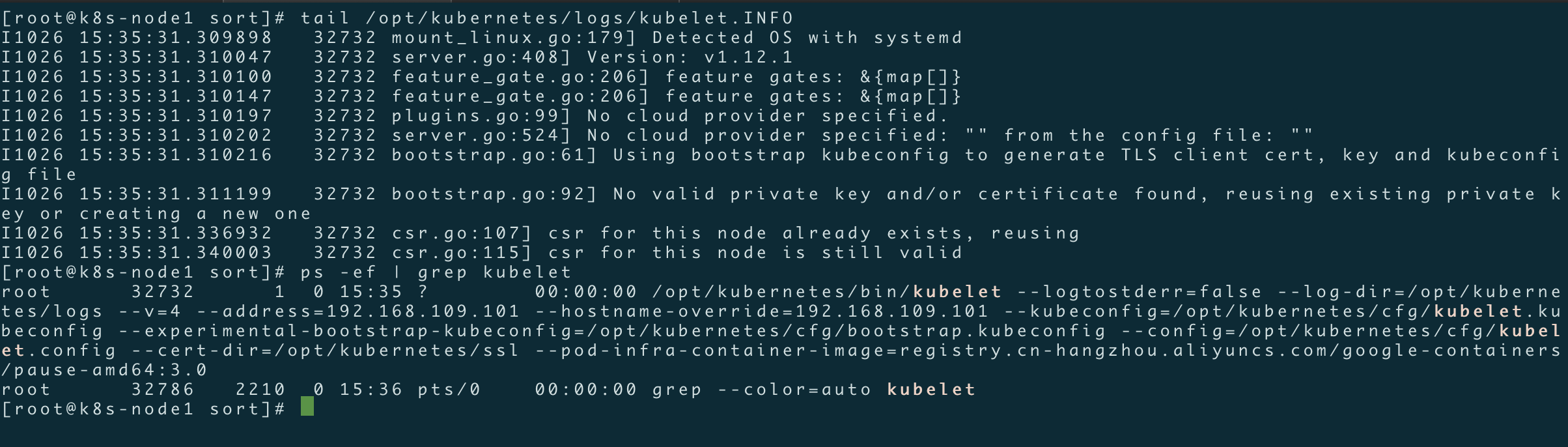

【node节点部署kubelet/kube-proxy组件】

[root@k8s-node1 sort]# tar zxvf kubernetes-server-linux-amd64.tar.gz

[root@k8s-node1 sort]# mv /root/sort/kubernetes/server/bin/{kubelet,kube-proxy} /opt/kubernetes/bin/ #将解压之后二进制文件拷贝到/opt/kubernetes/bin目录下

[root@k8s-node1 ~]# vim /opt/kubernetes/cfg/kubelet

KUBELET_OPTS="--logtostderr=false \

--log-dir=/opt/kubernetes/logs \

--v=4 \

--address=192.168.109.101 \

--hostname-override=192.168.109.101 \

--kubeconfig=/opt/kubernetes/cfg/kubelet.kubeconfig \

--experimental-bootstrap-kubeconfig=/opt/kubernetes/cfg/bootstrap.kubeconfig \

--config=/opt/kubernetes/cfg/kubelet.config \

--cert-dir=/opt/kubernetes/ssl \

--pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google-containers/pause-amd64:3.0"

参数说明:

--hostname-override 在集群中显示的主机名

--kubeconfig 指定kubeconfig文件位置,会自动生成

--bootstrap-kubeconfig 指定刚才生成的bootstrap.kubeconfig文件

--cert-dir 颁发证书存放位置

--pod-infra-container-image 管理Pod网络的镜像

[root@k8s-node01 k8s]# vim /opt/kubernetes/cfg/kubelet.config

kind: KubeletConfiguration

apiVersion: kubelet.config.k8s.io/v1beta1

address: 192.168.109.101

port: 10250

cgroupDriver: cgroupfs

clusterDNS:

- 10.0.0.2

clusterDomain: cluster.local.

failSwapOn: false

[root@k8s-node1 ~]# vim /usr/lib/systemd/system/kubelet.service

[Unit]

Description=Kubernetes Kubelet

After=docker.service

Requires=docker.service [Service]

EnvironmentFile=/opt/kubernetes/cfg/kubelet

ExecStart=/opt/kubernetes/bin/kubelet $KUBELET_OPTS

Restart=on-failure

KillMode=process [Install]

WantedBy=multi-user.target

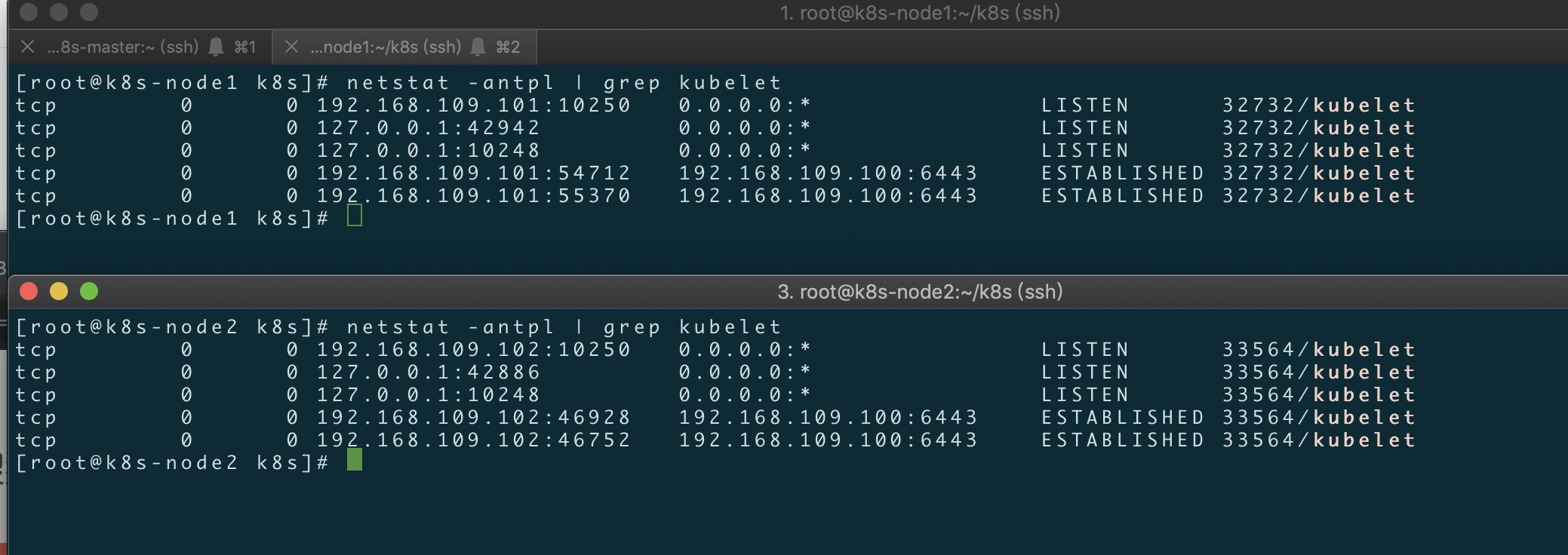

[root@k8s-node1 ~]# systemctl restart kubelet

[root@k8s-node1 sort]# systemctl enable kubelet

[root@k8s-node1 ~]# scp /opt/kubernetes/bin/{kubelet,kube-proxy} root@192.168.109.102:/opt/kubernetes/bin/ #将kubelet二进制文件拷贝到另一个node节点

[root@k8s-node1 ~]# scp /opt/kubernetes/cfg/{kubelet,kubelet.config} root@192.168.109.102:/opt/kubernetes/cfg/ #将kubelet配置文件拷贝到另一个node节点

[root@k8s-node1 k8s]# scp usr/lib/systemd/system/kubelet.service root@192.168.109.102:usr/lib/systemd/system/kubelet.service #将systemd管理的kubelet文件拷贝到另一个node节点

上述两个node节点kubelet启动没问题之后,接下来在k8s-master节点手动允许node节点加入k8s集群;

[root@k8s-master ~]# kubectl get csr #检查请求的签名node:

NAME AGE REQUESTOR CONDITION

node-csr-EjFlCMMd_g_yLx8Flhux0OB_I_2HgRD1uVP-lbwgOfc 30m kubelet-bootstrap Pending

node-csr-lVtFTCGPMj-K1RmC-EPhqNDdyIuV-E0wN99CApKBxYo 41s kubelet-bootstrap Pending

[root@k8s-master ~]# kubectl certificate approve 【请求签名名称NAME】

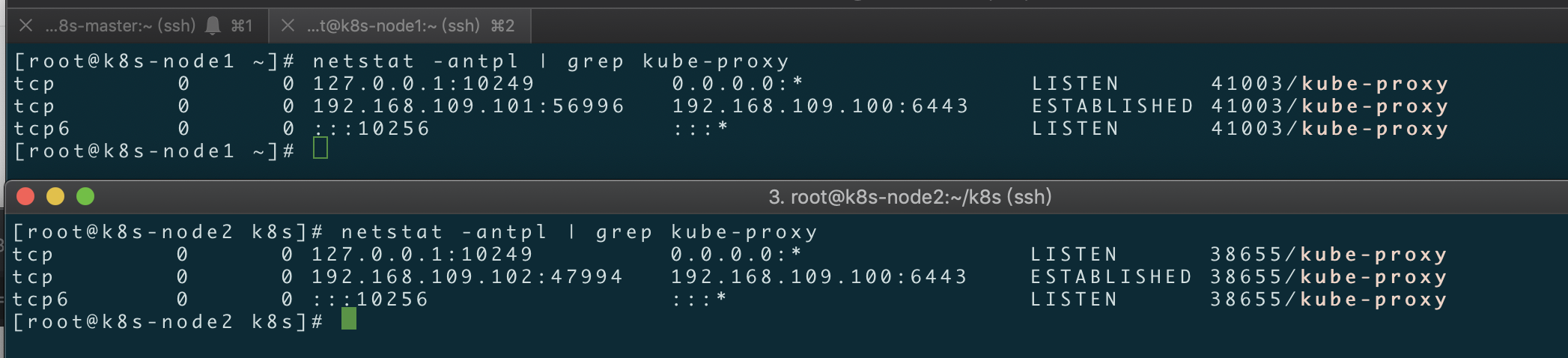

【node节点部署kube-proxy组件】

[root@k8s-node1 ~]# vim /opt/kubernetes/cfg/kube-proxy

KUBE_PROXY_OPTS="--logtostderr=false \

--log-dir=/opt/kubernetes/logs \

--v=4 \

--hostname-override=192.168.109.101 \

--cluster-cidr=10.0.0.0/24 \

--proxy-mode=ipvs \

--kubeconfig=/opt/kubernetes/cfg/kube-proxy.kubeconfig"

[root@k8s-node1 ~]# vim /usr/lib/systemd//system/kube-proxy.service

[Unit]

Description=Kubernetes Proxy

After=network.target [Service]

EnvironmentFile=-/opt/kubernetes/cfg/kube-proxy

ExecStart=/opt/kubernetes/bin/kube-proxy $KUBE_PROXY_OPTS

Restart=on-failure [Install]

WantedBy=multi-user.target

scp /opt/kubernetes/cfg/kube-proxy root@192.168.109.102:/opt/kubernetes/cfg/

scp /usr/lib/systemd/system/kube-proxy.service root@192.168.109.102:/usr/lib/systemd//system/

systemctl daemon-reload

systemctl enable kube-proxy

systemctl restart kube-proxy

到目前为止,整个集群部署完毕,查看集群状态正常!

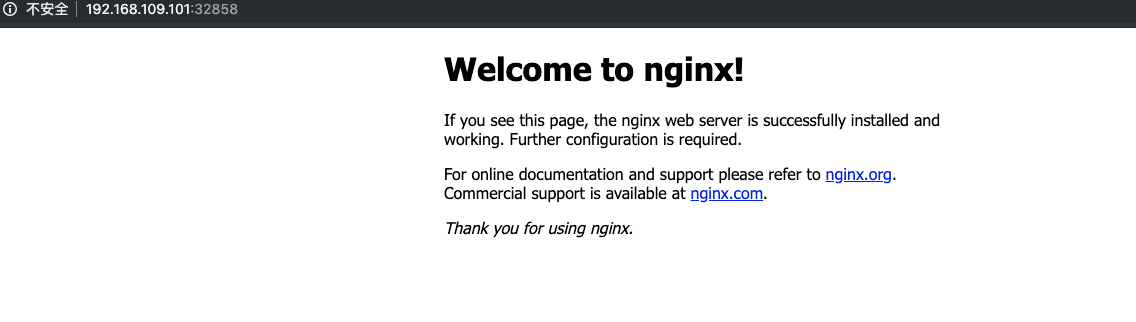

通过kubectl创建一个nginx容器,并访问,看看集群是否正常!

[root@k8s-master ~]# kubectl run nginx --image=nginx --replicas=3

kubectl run --generator=deployment/apps.v1beta1 is DEPRECATED and will be removed in a future version. Use kubectl create instead.

deployment.apps/nginx created

[root@k8s-master ~]# kubectl expose deployment nginx --port=88 --target-port=80 --type=NodePort

service/nginx exposed

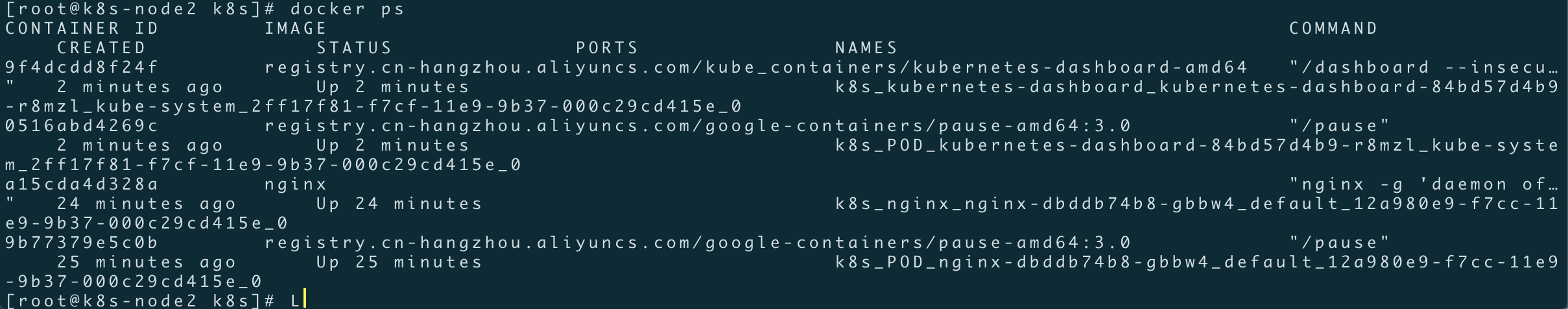

【部署Dashboard】

[root@k8s-master ~]# vim dashboard-rbac.yaml

apiVersion: v1

kind: ServiceAccount

metadata:

labels:

k8s-app: kubernetes-dashboard

addonmanager.kubernetes.io/mode: Reconcile

name: kubernetes-dashboard

namespace: kube-system

--- kind: ClusterRoleBinding

apiVersion: rbac.authorization.k8s.io/v1beta1

metadata:

name: kubernetes-dashboard-minimal

namespace: kube-system

labels:

k8s-app: kubernetes-dashboard

addonmanager.kubernetes.io/mode: Reconcile

roleRef:

apiGroup: rbac.authorization.k8s.io

kind: ClusterRole

name: cluster-admin

subjects:

- kind: ServiceAccount

name: kubernetes-dashboard

namespace: kube-system

[root@k8s-master ~]#

[root@k8s-master ~]# vim dashboard-deployment.yaml

apiVersion: apps/v1beta2

kind: Deployment

metadata:

name: kubernetes-dashboard

namespace: kube-system

labels:

k8s-app: kubernetes-dashboard

kubernetes.io/cluster-service: "true"

addonmanager.kubernetes.io/mode: Reconcile

spec:

selector:

matchLabels:

k8s-app: kubernetes-dashboard

template:

metadata:

labels:

k8s-app: kubernetes-dashboard

annotations:

scheduler.alpha.kubernetes.io/critical-pod: ''

spec:

serviceAccountName: kubernetes-dashboard

containers:

- name: kubernetes-dashboard

image: registry.cn-hangzhou.aliyuncs.com/kube_containers/kubernetes-dashboard-amd64:v1.8.1

resources:

limits:

cpu: 100m

memory: 300Mi

requests:

cpu: 100m

memory: 100Mi

ports:

- containerPort: 9090

protocol: TCP

livenessProbe:

httpGet:

scheme: HTTP

path: /

port: 9090

initialDelaySeconds: 30

timeoutSeconds: 30

tolerations:

- key: "CriticalAddonsOnly"

operator: "Exists"

[root@k8s-master ~]# vim dashboard-service.yaml

apiVersion: v1

kind: Service

metadata:

name: kubernetes-dashboard

namespace: kube-system

labels:

k8s-app: kubernetes-dashboard

kubernetes.io/cluster-service: "true"

addonmanager.kubernetes.io/mode: Reconcile

spec:

type: NodePort

selector:

k8s-app: kubernetes-dashboard

ports:

- port: 80

targetPort: 9090

[root@k8s-master ~]# kubectl apply -f dashboard-rbac.yaml

[root@k8s-master ~]# kubectl apply -f dashboard-deployment.yaml

[root@k8s-master ~]# kubectl apply -f dashboard-service.yaml

浏览器访问:http://192.168.109.102:48343

END

到这里整个K8S二进制集群部署就告一段落,过程比较复杂,如有问题请在博客下方留言或者加入博客左边的QQ群,入群交流沟通;

手把手带你部署K8s二进制集群的更多相关文章

- 内网环境上部署k8s+docker集群:集群ftp的yum源配置

接触docker已经有一年了,想把做的时候的一些知识分享给大家. 因为公司机房是内网环境无法连接外网,所以这里所有的部署都是基于内网环境进行的. 首先,需要通过ftp服务制作本地的yum源,可以从ht ...

- 这一篇 K8S(Kubernetes)集群部署 我觉得还可以!!!

点赞再看,养成习惯,微信搜索[牧小农]关注我获取更多资讯,风里雨里,小农等你,很高兴能够成为你的朋友. 国内安装K8S的四种途径 Kubernetes 的安装其实并不复杂,因为Kubernetes 属 ...

- K8S部署Redis Cluster集群

kubernetes部署单节点redis: https://www.cnblogs.com/zisefeizhu/p/14282299.html Redis 介绍 • Redis代表REmote DI ...

- K8S部署Redis Cluster集群(三主三从模式) - 部署笔记

一.Redis 介绍 Redis代表REmote DIctionary Server是一种开源的内存中数据存储,通常用作数据库,缓存或消息代理.它可以存储和操作高级数据类型,例如列表,地图,集合和排序 ...

- 手把手教你用Docker部署一个MongoDB集群

MongoDB是一个介于关系数据库和非关系数据库之间的产品,是非关系数据库当中最像关系数据库的.支持类似于面向对象的查询语言,几乎可以实现类似关系数据库单表查询的绝大部分功能,而且还支持对数据建立索引 ...

- kubernetes kubeadm部署高可用集群

k8s kubeadm部署高可用集群 kubeadm是官方推出的部署工具,旨在降低kubernetes使用门槛与提高集群部署的便捷性. 同时越来越多的官方文档,围绕kubernetes容器化部署为环境 ...

- Kubernetes 学习笔记(二):本地部署一个 kubernetes 集群

前言 前面用到过的 minikube 只是一个单节点的 k8s 集群,这对于学习而言是不够的.我们需要有一个多节点集群,才能用到各种调度/监控功能.而且单节点只能是一个加引号的"集群&quo ...

- ingress-nginx 的使用 =》 部署在 Kubernetes 集群中的应用暴露给外部的用户使用

文章转载自:https://mp.weixin.qq.com/s?__biz=MzU4MjQ0MTU4Ng==&mid=2247488189&idx=1&sn=8175f067 ...

- .net core i上 K8S(一)集群搭建

1.前言 以前搭建集群都是使用nginx反向代理,但现在我们有了更好的选择——K8S.我不打算一上来就讲K8S的知识点,因为知识点还是比较多,我打算先从搭建K8S集群讲起,我也是在搭建集群的过程中熟悉 ...

随机推荐

- mybatis 变更xml文件目录

mybatis的xml默认读取的是resources目录,这个目录是可以变化的.我习惯于将mapper文件和xml放到一起或相邻目录下. 如图: 具体操作: 以mybatis-plus为例 boots ...

- docker安装mysql笔记

首先 查找镜像 docker search mysql 拉取镜像 : docker pull mysql 拉取成功后,查看本地镜像: docker images 可以看到本地有两个镜像(redis是我 ...

- Vue v-bind与v-model的区别

v-bind 缩写 : 动态地绑定一个或多个特性,或一个组件 prop 到表达式. 官网举例 <!-- 绑定一个属性 --> <img v-bind:src=" ...

- Unicode 字符和UTF编码的理解

Unicode 编码的由来 我们都知道,计算机的内部全部是由二进制数字0, 1 组成的, 那么计算机就没有办法保存我们的文字, 这怎么行呢? 于是美国人就想了一个办法(计算机是由美国人发明的),也把文 ...

- CSS-服务器端字体笔记

服务器端字体 在CSS3中可以使用@font-face属性来利用服务器端字体. @font-face 属性的使用方法: @font-face{ font-family:webFont; src:ur ...

- intellij IDEA github clone 指定分支代码

1.问题描述 在实际开发中,我们通常会使用idea克隆一个新项目(clone),通常情况下,我们默认克隆的是master分支,但是如果master分支只是一个空文件夹而已,真正的代码在develop分 ...

- 【故障处理】 DBCA建库报错CRS-2566

[故障处理] DBCA建库报错CRS-2566 PRCR-1071 PRCR-1006 一.1 BLOG文档结构图 一.2 前言部分 一.2.1 导读和注意事项 各位技术爱好者, ...

- day 19 作业

今日作业 1.什么是对象?什么是类? 对象是特征与技能的结合体,类是一系列对象相同的特征与技能的结合体 2.绑定方法的有什么特点 由对象来调用称之为对象的绑定方法,不同的对象调用该绑定方法,则会将不同 ...

- Python学习日记(二十六) 封装和几个装饰器函数

封装 广义上的封装,它其实是一种面向对象的思想,它能够保护代码;狭义上的封装是面向对象三大特性之一,能把属性和方法都藏起来不让人看见 私有属性 私有属性表示方式即在一个属性名前加上两个双下划线 cla ...

- MySQL/MariaDB数据库的主从级联复制

MySQL/MariaDB数据库的主从级联复制 作者:尹正杰 版权声明:原创作品,谢绝转载!否则将追究法律责任. 一.主从复制类型概述 1>.主从复制 博主推荐阅读: https://ww ...