Model Ensembling: Blending and Stacking

1. blending

- Blending learns a weight for each model's predictions and then combines those predictions linearly.
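As a minimal sketch of "learn weights, then combine linearly", the snippet below fits one weight per model by least squares on hold-out predictions. Everything in it is an assumption made for illustration: the data is synthetic, the three "models" are just noisy copies of the target, and least squares stands in for the logistic-regression blender used in the script that follows.

```python
import numpy as np

rng = np.random.default_rng(0)

# Synthetic stand-ins: true targets plus three "models" with different noise.
n_holdout, n_test = 200, 50
y_holdout = rng.random(n_holdout)
y_test = rng.random(n_test)
noise_levels = [0.05, 0.10, 0.20]  # hypothetical model quality

# One column of predictions per model.
P_holdout = np.column_stack(
    [y_holdout + rng.normal(0, s, n_holdout) for s in noise_levels])
P_test = np.column_stack(
    [y_test + rng.normal(0, s, n_test) for s in noise_levels])

# Learn one weight per model by least squares on the hold-out predictions.
weights, *_ = np.linalg.lstsq(P_holdout, y_holdout, rcond=None)

# Blend: a linear combination of the model predictions.
blend_holdout = P_holdout @ weights
blend_test = P_test @ weights


def mse(pred, target):
    """Mean squared error between predictions and targets."""
    return float(np.mean((pred - target) ** 2))


print("weights:", weights)
print("hold-out MSE of the blend:", mse(blend_holdout, y_holdout))
```

On the data used to fit the weights, the blend can never be worse than any single model, because putting all the weight on one model is one of the candidate solutions. Whether that carries over to unseen data has to be checked on a separate set.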

"""Kaggle competition: Predicting a Biological Response.

Blending {RandomForests, ExtraTrees, GradientBoosting} + stretching to
[0,1]. The blending scheme is related to the idea Jose H. Solorzano
presented here:
http://www.kaggle.com/c/bioresponse/forums/t/1889/question-about-the-process-of-ensemble-learning/10950#post10950
'''You can try this: In one of the 5 folds, train the models, then use
the results of the models as 'variables' in logistic regression over
the validation data of that fold'''. Or at least this is the
implementation of my understanding of that idea :-)

The predictions are saved in test.csv. The code below created my best
submission to the competition:
- public score (25%): 0.43464
- private score (75%): 0.37751
- final rank on the private leaderboard: 17th over 711 teams :-)

Note: if you increase the number of estimators of the classifiers,
e.g. n_estimators=1000, you get a better score/rank on the private
test set.

Copyright 2012, Emanuele Olivetti.
BSD license, 3 clauses.
"""

from __future__ import division
import numpy as np
import load_data  # local helper module that ships with the original script
# sklearn.cross_validation was removed in scikit-learn 0.20; use model_selection
from sklearn.model_selection import StratifiedKFold
from sklearn.ensemble import RandomForestClassifier, ExtraTreesClassifier
from sklearn.ensemble import GradientBoostingClassifier
from sklearn.linear_model import LogisticRegression


def logloss(attempt, actual, epsilon=1.0e-15):
    """Logloss, i.e. the score of the bioresponse competition.
    """
    attempt = np.clip(attempt, epsilon, 1.0-epsilon)
    return - np.mean(actual * np.log(attempt) +
                     (1.0 - actual) * np.log(1.0 - attempt))


if __name__ == '__main__':

    np.random.seed(0)  # seed to shuffle the train set

    n_folds = 10
    verbose = True
    shuffle = False

    X, y, X_submission = load_data.load()

    if shuffle:
        idx = np.random.permutation(y.size)
        X = X[idx]
        y = y[idx]

    # List of (train_indices, test_indices) pairs, one per fold
    skf = list(StratifiedKFold(n_splits=n_folds).split(X, y))

    clfs = [RandomForestClassifier(n_estimators=100, n_jobs=-1, criterion='gini'),
            RandomForestClassifier(n_estimators=100, n_jobs=-1, criterion='entropy'),
            ExtraTreesClassifier(n_estimators=100, n_jobs=-1, criterion='gini'),
            ExtraTreesClassifier(n_estimators=100, n_jobs=-1, criterion='entropy'),
            GradientBoostingClassifier(learning_rate=0.05, subsample=0.5, max_depth=6, n_estimators=50)]

    print("Creating train and test sets for blending.")

    # One column per base classifier
    dataset_blend_train = np.zeros((X.shape[0], len(clfs)))
    dataset_blend_test = np.zeros((X_submission.shape[0], len(clfs)))

    for j, clf in enumerate(clfs):
        print(j, clf)
        dataset_blend_test_j = np.zeros((X_submission.shape[0], len(skf)))
        for i, (train, test) in enumerate(skf):
            print("Fold", i)
            X_train = X[train]
            y_train = y[train]
            X_test = X[test]
            y_test = y[test]
            clf.fit(X_train, y_train)
            # Out-of-fold predictions become the blender's training features
            y_submission = clf.predict_proba(X_test)[:, 1]
            dataset_blend_train[test, j] = y_submission
            dataset_blend_test_j[:, i] = clf.predict_proba(X_submission)[:, 1]
        # Average the per-fold predictions on the submission set
        dataset_blend_test[:, j] = dataset_blend_test_j.mean(1)

    print()
    print("Blending.")
    clf = LogisticRegression()
    clf.fit(dataset_blend_train, y)
    y_submission = clf.predict_proba(dataset_blend_test)[:, 1]

    print("Linear stretch of predictions to [0,1]")
    y_submission = (y_submission - y_submission.min()) / (y_submission.max() - y_submission.min())

    print("Saving Results.")
    tmp = np.vstack([range(1, len(y_submission)+1), y_submission]).T
    np.savetxt(fname='submission.csv', X=tmp, fmt='%d,%0.9f',
               header='MoleculeId,PredictedProbability', comments='')

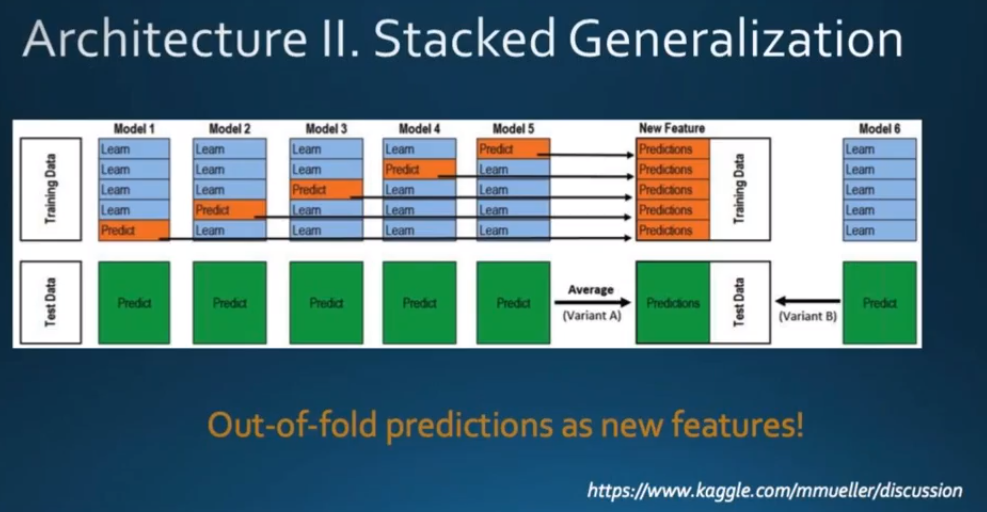

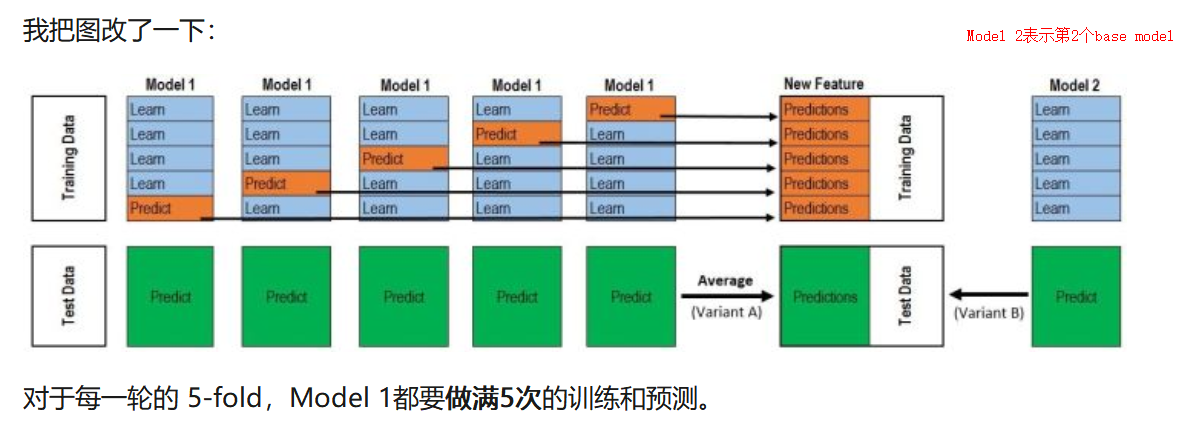

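2. stacking

- The core of stacking: make out-of-fold predictions on the training set and use them to train a higher-level learner.

- The stacking training procedure:

1) Split the training set. Randomly divide the training data into m roughly equal parts.

2) Train on the split training set while also predicting on the test set. Train on m-1 parts and predict the remaining part; at the same time, use the model trained on those same m-1 parts to predict the real test set. Repeat this m times, then stack the m sets of training-set predictions into one column and average the m sets of test-set predictions into one column.

3) Repeat step 2 with k classifiers. This yields k columns of training-set predictions and k columns of test-set predictions.

4) Train on the data from step 3. Fit the second-level model on the k columns of training-set predictions together with the true training labels, and use the k columns of test-set predictions as its test set.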
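For comparison, scikit-learn (0.22 and later) ships `StackingClassifier`, which follows nearly the same recipe. There is one difference from step 2 above. The second-level model is still trained on cross-validated predictions, but for the test set each base model is refit on the full training set instead of averaging m fold models. The sketch below uses synthetic data and two arbitrary base models, both chosen only for illustration.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import StackingClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

# Synthetic binary classification data (an assumption for the example).
X, y = make_classification(n_samples=300, n_features=10, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0)

# k = 2 first-level classifiers, given as (name, estimator) pairs.
base_models = [
    ("lr", LogisticRegression(max_iter=1000)),
    ("tree", DecisionTreeClassifier(max_depth=3, random_state=0)),
]

# cv=5 plays the role of m: the final estimator is trained on
# 5-fold cross-validated predictions of the base models.
stack = StackingClassifier(
    estimators=base_models,
    final_estimator=LogisticRegression(),
    cv=5,
)
stack.fit(X_train, y_train)

prediction = stack.predict(X_test)

# Second-level features for the test set: for a binary problem this is
# one probability column per base model.
meta_features = stack.transform(X_test)
print(meta_features.shape, stack.score(X_test, y_test))
```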

# -*- coding: utf-8 -*-
import numpy as np
from sklearn.model_selection import StratifiedKFold
from sklearn.svm import SVC
from sklearn.ensemble import RandomForestClassifier
from sklearn.neighbors import KNeighborsClassifier
import xgboost as xgb
from sklearn.ensemble import ExtraTreesClassifier
from sklearn.linear_model import LogisticRegression


def load_data():
    # Placeholder: must return (train_x, train_y, test) as NumPy arrays.
    raise NotImplementedError("load_data() must return (train_x, train_y, test)")


def stacking(train_x, train_y, test):
    """ stacking
    input: train_x, train_y, test
    output: predictions for test
    clfs: the 5 first-level classifiers
    dataset_blend_train: first-level predictions, used as the second-level train_x
    dataset_blend_test: test set for the second-level classifier
    """
    # The 5 first-level classifiers
    clfs = [SVC(C=3, kernel="rbf"),
            RandomForestClassifier(n_estimators=100, max_features="log2", max_depth=10, min_samples_leaf=1, bootstrap=True, n_jobs=-1, random_state=1),
            KNeighborsClassifier(n_neighbors=15, n_jobs=-1),
            xgb.XGBClassifier(n_estimators=100, objective="binary:logistic", gamma=1, max_depth=10, subsample=0.8, n_jobs=-1, random_state=1),
            ExtraTreesClassifier(n_estimators=100, criterion="gini", max_features="log2", max_depth=10, min_samples_split=2, min_samples_leaf=1, bootstrap=True, n_jobs=-1, random_state=1)]

    # train_x and test for the second-level classifier
    # (np.int was removed in NumPy 1.24; the builtin int is equivalent)
    dataset_blend_train = np.zeros((train_x.shape[0], len(clfs)), dtype=int)
    dataset_blend_test = np.zeros((test.shape[0], len(clfs)), dtype=int)

    # Each of the 5 classifiers predicts over 8 folds
    n_folds = 8
    skf = StratifiedKFold(n_splits=n_folds, shuffle=True, random_state=1)
    for i, clf in enumerate(clfs):
        # Test-set predictions of this classifier, one column per fold
        dataset_blend_test_j = np.zeros((test.shape[0], n_folds))
        for j, (train_index, test_index) in enumerate(skf.split(train_x, train_y)):
            tr_x = train_x[train_index]
            tr_y = train_y[train_index]
            clf.fit(tr_x, tr_y)
            dataset_blend_train[test_index, i] = clf.predict(train_x[test_index])
            dataset_blend_test_j[:, j] = clf.predict(test)
        # Majority vote over the folds (assumes 0/1 labels):
        # 1 if at least n_folds//2 + 1 folds predicted 1, else 0
        dataset_blend_test[:, i] = dataset_blend_test_j.sum(axis=1) // (n_folds//2 + 1)

    # Second-level classifier makes the final prediction
    # (the l1 penalty needs a solver that supports it, e.g. liblinear)
    clf = LogisticRegression(penalty="l1", tol=1e-6, C=1.0, random_state=1, solver="liblinear")
    clf.fit(dataset_blend_train, train_y)
    prediction = clf.predict(dataset_blend_test)
    return prediction


def main():
    (train_x, train_y, test) = load_data()
    prediction = stacking(train_x, train_y, test)
    return prediction


if __name__ == "__main__":
    prediction = main()

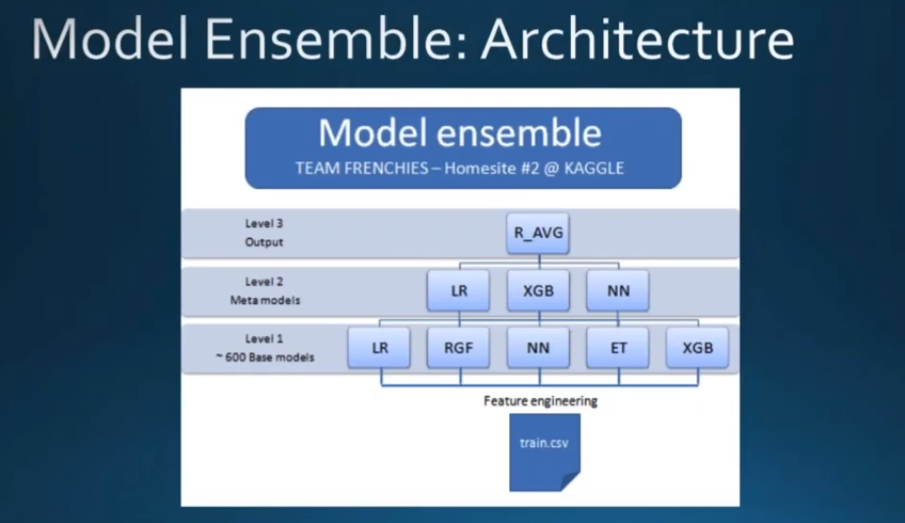

ensemble model

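Some fairly concise resources:

- 1. https://zhuanlan.zhihu.com/p/26890738

- 2. Data Application Lab (数据应用学院), Kaggle hands-on case study open course, Part 2: https://www.youtube.com/watch?v=BS4SY3HhVDI&t=4320s

The illustration above uses 5 folds; with 2 folds it is even simpler.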

-----------------------------------------------------------------------------------------------------------------------

- Example: Kaggle's Titanic competition

# Out-of-fold predictions
import numpy as np
from sklearn.model_selection import KFold

# `train` and `test` are the Titanic training and test sets loaded earlier
TrainingData = train.shape[0]  # 891 rows
TestData = test.shape[0]  # 418 rows

# 5 folds (recent scikit-learn only accepts random_state together with shuffle=True)
kf = KFold(n_splits=5, shuffle=True, random_state=2017)


# X_train, y_train, X_test are the original data as NumPy arrays
def get_oof(clf, X_train, y_train, X_test):
    # oof_train holds one prediction per training row
    oof_train = np.zeros((TrainingData, ))  # shape (891,)
    # oof_test holds one prediction per test row
    oof_test = np.zeros((TestData, ))  # shape (418,)
    # oof_test_skf holds the test-set predictions from each of the 5 folds;
    # averaging over the folds (axis=0) gives the final test-set predictions
    oof_test_skf = np.empty((5, TestData))  # shape (5, 418)

    for i, (train_index, test_index) in enumerate(kf.split(X_train)):
        # kf_X_train: the 4 parts used for training in this fold (712 or 713 rows)
        kf_X_train = X_train[train_index]
        kf_y_train = y_train[train_index]
        # kf_X_test: the held-out part of the training data (the validation
        # set), 178 or 179 rows
        kf_X_test = X_train[test_index]

        clf.fit(kf_X_train, kf_y_train)  # train the model

        # The predictions become a new feature for the second-level model.
        # Each base model contributes one column, so the number of new
        # features equals the number of first-level base models.
        oof_train[test_index] = clf.predict(kf_X_test)  # fills all 891 rows after 5 folds
        # Predict the real test set X_test with the model from this fold
        oof_test_skf[i, :] = clf.predict(X_test)  # row i of the (5, 418) array

    # After the 5 folds, average the test-set predictions over the folds:
    # (5, 418) -> (418,)
    oof_test[:] = oof_test_skf.mean(axis=0)
    # Return column vectors: oof_train is (891, 1), oof_test is (418, 1)
    return oof_train.reshape(-1, 1), oof_test.reshape(-1, 1)
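What happens to those columns next is step 4 of the procedure: the columns from all base models are placed side by side and a second-level model is trained on them. The sketch below is one possible way to do that, under stated assumptions. The helper `oof_columns` is a hypothetical, self-contained variant of `get_oof`, the data is synthetic rather than the Titanic set, the two base models are arbitrary, and class probabilities are used in place of hard labels.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import KFold, train_test_split
from sklearn.neighbors import KNeighborsClassifier


def oof_columns(clf, X_train, y_train, X_test, n_splits=5):
    """Return (n_train, 1) out-of-fold and (n_test, 1) fold-averaged predictions."""
    kf = KFold(n_splits=n_splits, shuffle=True, random_state=2017)
    oof_train = np.zeros(X_train.shape[0])
    oof_test_folds = np.empty((n_splits, X_test.shape[0]))
    for i, (tr_idx, val_idx) in enumerate(kf.split(X_train)):
        clf.fit(X_train[tr_idx], y_train[tr_idx])
        # Probability of class 1 for the held-out rows and for the test set.
        oof_train[val_idx] = clf.predict_proba(X_train[val_idx])[:, 1]
        oof_test_folds[i, :] = clf.predict_proba(X_test)[:, 1]
    return oof_train.reshape(-1, 1), oof_test_folds.mean(axis=0).reshape(-1, 1)


# Synthetic stand-in for the real data.
X, y = make_classification(n_samples=400, n_features=8, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0)

base_models = [
    RandomForestClassifier(n_estimators=50, random_state=0),
    KNeighborsClassifier(n_neighbors=15),
]

# One (train column, test column) pair per base model.
columns = [oof_columns(m, X_train, y_train, X_test) for m in base_models]
meta_train = np.concatenate([c[0] for c in columns], axis=1)  # (n_train, k)
meta_test = np.concatenate([c[1] for c in columns], axis=1)   # (n_test, k)

# Second-level model: trained on the out-of-fold columns and true labels.
second_level = LogisticRegression()
second_level.fit(meta_train, y_train)
final_prediction = second_level.predict(meta_test)
print(meta_train.shape, meta_test.shape)
```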

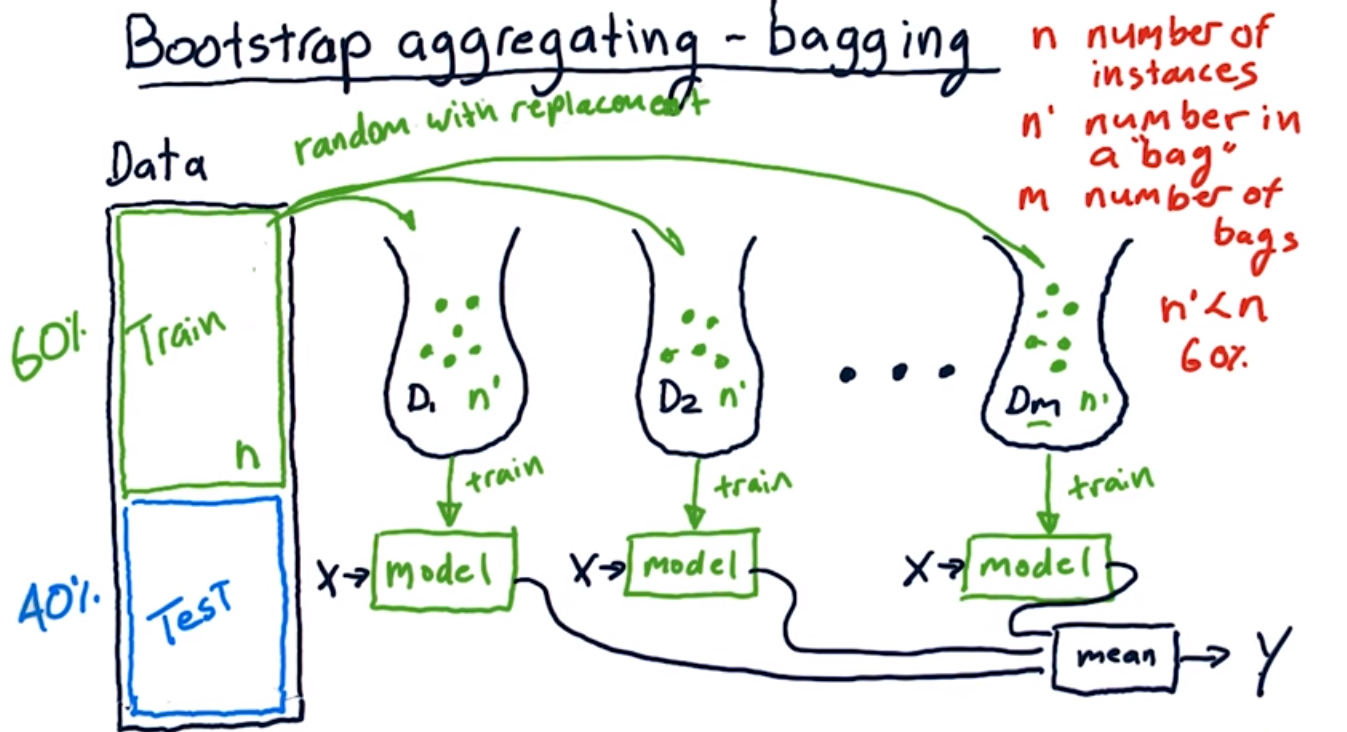

-------------------------------------------------Supplement----------------------------------------------

A few figures from Udacity
