A Beginner's Guide to Docker

Docker

1 Installing Docker

yum install docker

[root@topcheer ~]# systemctl start docker

[root@topcheer ~]# mkdir -p /etc/docker

[root@topcheer ~]# vim /etc/docker/daemon.json # configure the Aliyun (Alibaba Cloud) registry mirror

{

"registry-mirrors": ["XXXXXXXXXXXXXXXX"]

}

[root@topcheer ~]# systemctl daemon-reload # reload the configuration files

[root@topcheer ~]# systemctl restart docker # restart Docker

[root@topcheer ~]#
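The daemon.json edited above is plain JSON, so it can also be generated and checked from a script before the daemon is restarted. The following is a minimal sketch, not the author's procedure: the mirror URL is a hypothetical placeholder, and the file is written to a scratch directory rather than to /etc/docker.

```python
import json
import tempfile
from pathlib import Path

# Hypothetical mirror URL; substitute the accelerator address issued for your account.
MIRROR_URL = "https://example-mirror.invalid"


def write_daemon_config(path: Path, mirrors: list[str]) -> dict:
    """Write a daemon.json containing only the registry-mirrors key and return it."""
    config = {"registry-mirrors": mirrors}
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(json.dumps(config, indent=2) + "\n", encoding="utf-8")
    # Re-read the file so that a syntax error surfaces here,
    # not when the daemon restarts.
    return json.loads(path.read_text(encoding="utf-8"))


# Written to a scratch directory here; the real file is /etc/docker/daemon.json.
scratch = Path(tempfile.mkdtemp()) / "docker" / "daemon.json"
written = write_daemon_config(scratch, [MIRROR_URL])
print(scratch.read_text(encoding="utf-8"))
```

Parsing the file back is the useful part: a stray comma in daemon.json is easier to find here than after a failed restart.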

2 Docker commands

2.1 Docker help commands

docker version

[root@topcheer ~]# docker version

Client:

Version: 1.13.1

API version: 1.26

Package version: docker-1.13.1-103.git7f2769b.el7.centos.x86_64

Go version: go1.10.3

Git commit: 7f2769b/1.13.1

Built: Sun Sep 15 14:06:47 2019

OS/Arch: linux/amd64

Server:

Version: 1.13.1

API version: 1.26 (minimum version 1.12)

Package version: docker-1.13.1-103.git7f2769b.el7.centos.x86_64

Go version: go1.10.3

Git commit: 7f2769b/1.13.1

Built: Sun Sep 15 14:06:47 2019

OS/Arch: linux/amd64

Experimental: false

[root@topcheer ~]#

docker info

[root@topcheer ~]# docker info

Containers: 1

Running: 0

Paused: 0

Stopped: 1

Images: 1

Server Version: 1.13.1

Storage Driver: overlay2

Backing Filesystem: xfs

Supports d_type: true

Native Overlay Diff: true

Logging Driver: journald

Cgroup Driver: systemd

Plugins:

Volume: local

Network: bridge host macvlan null overlay

Swarm: inactive

Runtimes: docker-runc runc

Default Runtime: docker-runc

Init Binary: /usr/libexec/docker/docker-init-current

containerd version: (expected: aa8187dbd3b7ad67d8e5e3a15115d3eef43a7ed1)

runc version: 9c3c5f853ebf0ffac0d087e94daef462133b69c7 (expected: 9df8b306d01f59d3a8029be411de015b7304dd8f)

init version: fec3683b971d9c3ef73f284f176672c44b448662 (expected: 949e6facb77383876aeff8a6944dde66b3089574)

Security Options:

seccomp

WARNING: You're not using the default seccomp profile

Profile: /etc/docker/seccomp.json

selinux

Kernel Version: 3.10.0-957.el7.x86_64

Operating System: CentOS Linux 7 (Core)

OSType: linux

Architecture: x86_64

Number of Docker Hooks: 3

CPUs: 4

Total Memory: 1.777 GiB

Name: topcheer

ID: SR5A:YSH6:3YGH:YEZ4:PWLB:EEVE:3L5S:Z5AR:ARIA:SDGX:CZI5:MJ7O

Docker Root Dir: /var/lib/docker

Debug Mode (client): false

Debug Mode (server): false

Registry: https://index.docker.io/v1/

Experimental: false

Insecure Registries:

127.0.0.0/8

Registry Mirrors:

https://lara9y80.mirror.aliyuncs.com

Live Restore Enabled: false

Registries: docker.io (secure)

[root@topcheer ~]#

docker --help

[root@topcheer ~]# docker --help

Usage: docker COMMAND

A self-sufficient runtime for containers

Options:

--config string Location of client config files (default "/root/.docker")

-D, --debug Enable debug mode

--help Print usage

-H, --host list Daemon socket(s) to connect to (default [])

-l, --log-level string Set the logging level ("debug", "info", "warn", "error", "fatal") (default "info")

--tls Use TLS; implied by --tlsverify

--tlscacert string Trust certs signed only by this CA (default "/root/.docker/ca.pem")

--tlscert string Path to TLS certificate file (default "/root/.docker/cert.pem")

--tlskey string Path to TLS key file (default "/root/.docker/key.pem")

--tlsverify Use TLS and verify the remote

-v, --version Print version information and quit

Management Commands:

container Manage containers

image Manage images

network Manage networks

node Manage Swarm nodes

plugin Manage plugins

secret Manage Docker secrets

service Manage services

stack Manage Docker stacks

swarm Manage Swarm

system Manage Docker

volume Manage volumes

Commands:

attach Attach to a running container

build Build an image from a Dockerfile

commit Create a new image from a container's changes

cp Copy files/folders between a container and the local filesystem

create Create a new container

diff Inspect changes on a container's filesystem

events Get real time events from the server

exec Run a command in a running container

export Export a container's filesystem as a tar archive

history Show the history of an image

images List images

import Import the contents from a tarball to create a filesystem image

info Display system-wide information

inspect Return low-level information on Docker objects

kill Kill one or more running containers

load Load an image from a tar archive or STDIN

login Log in to a Docker registry

logout Log out from a Docker registry

logs Fetch the logs of a container

pause Pause all processes within one or more containers

port List port mappings or a specific mapping for the container

ps List containers

pull Pull an image or a repository from a registry

push Push an image or a repository to a registry

rename Rename a container

restart Restart one or more containers

rm Remove one or more containers

rmi Remove one or more images

run Run a command in a new container

save Save one or more images to a tar archive (streamed to STDOUT by default)

search Search the Docker Hub for images

start Start one or more stopped containers

stats Display a live stream of container(s) resource usage statistics

stop Stop one or more running containers

tag Create a tag TARGET_IMAGE that refers to SOURCE_IMAGE

top Display the running processes of a container

unpause Unpause all processes within one or more containers

update Update configuration of one or more containers

version Show the Docker version information

wait Block until one or more containers stop, then print their exit codes

Run 'docker COMMAND --help' for more information on a command.

[root@topcheer ~]#

2.2 Image commands

docker images

[root@topcheer ~]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

docker.io/hello-world latest fce289e99eb9 8 months ago 1.84 kB

[root@topcheer ~]#

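REPOSITORY: the repository the image comes from

TAG: the image tag

IMAGE ID: the image ID

CREATED: when the image was created

SIZE: the image size

A repository can hold multiple tags, each representing a different version of the image; a specific image is identified as REPOSITORY:TAG.

If you do not specify a tag, for example you write only ubuntu, Docker uses ubuntu:latest by default.

docker search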

[root@topcheer ~]# docker search redis

INDEX NAME DESCRIPTION STARS OFFICIAL AUTOMATED

docker.io docker.io/redis Redis is an open source key-value store th... 7342 [OK]

docker.io docker.io/bitnami/redis Bitnami Redis Docker Image 127 [OK]

docker.io docker.io/sameersbn/redis 77 [OK]

docker.io docker.io/grokzen/redis-cluster Redis cluster 3.0, 3.2, 4.0 & 5.0 56

docker.io docker.io/rediscommander/redis-commander Alpine image for redis-commander - Redis m... 31 [OK]

docker.io docker.io/kubeguide/redis-master redis-master with "Hello World!" 29

docker.io docker.io/redislabs/redis Clustered in-memory database engine compat... 23

docker.io docker.io/arm32v7/redis Redis is an open source key-value store th... 17

docker.io docker.io/redislabs/redisearch Redis With the RedisSearch module pre-load... 17

docker.io docker.io/oliver006/redis_exporter Prometheus Exporter for Redis Metrics. Su... 15

docker.io docker.io/webhippie/redis Docker images for Redis 10 [OK]

docker.io docker.io/s7anley/redis-sentinel-docker Redis Sentinel 9 [OK]

docker.io docker.io/insready/redis-stat Docker image for the real-time Redis monit... 8 [OK]

docker.io docker.io/redislabs/redisgraph A graph database module for Redis 8 [OK]

docker.io docker.io/arm64v8/redis Redis is an open source key-value store th... 6

docker.io docker.io/bitnami/redis-sentinel Bitnami Docker Image for Redis Sentinel 6 [OK]

docker.io docker.io/centos/redis-32-centos7 Redis in-memory data structure store, used... 4

docker.io docker.io/redislabs/redismod An automated build of redismod - latest Re... 4 [OK]

docker.io docker.io/circleci/redis CircleCI images for Redis 2 [OK]

docker.io docker.io/frodenas/redis A Docker Image for Redis 2 [OK]

docker.io docker.io/runnable/redis-stunnel stunnel to redis provided by linking conta... 1 [OK]

docker.io docker.io/tiredofit/redis Redis Server w/ Zabbix monitoring and S6 O... 1 [OK]

docker.io docker.io/wodby/redis Redis container image with orchestration 1 [OK]

docker.io docker.io/cflondonservices/redis Docker image for running redis 0

docker.io docker.io/xetamus/redis-resource forked redis-resource 0 [OK]

[root@topcheer ~]#

docker pull

[root@topcheer ~]# docker pull docker.io/redis

Using default tag: latest

Trying to pull repository docker.io/library/redis ...

latest: Pulling from docker.io/library/redis

b8f262c62ec6: Pull complete

93789b5343a5: Pull complete

49cdbb315637: Pull complete

2c1ff453e5c9: Pull complete

9341ee0a5d4a: Pull complete

770829e1df34: Pull complete

Digest: sha256:5dcccb533dc0deacce4a02fe9035134576368452db0b4323b98a4b2ba2d3b302

Status: Downloaded newer image for docker.io/redis:latest

[root@topcheer ~]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

docker.io/redis latest 63130206b0fa 9 days ago 98.2 MB

docker.io/hello-world latest fce289e99eb9 8 months ago 1.84 kB

[root@topcheer ~]#
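The pull above printed "Using default tag: latest" because no tag was given. As a rough sketch of that REPOSITORY:TAG rule, the function below splits a reference into name and tag. It is a simplified illustration, not Docker's own parser: digest references (name@sha256:...) are not handled, and `localhost:5000/app` is a hypothetical reference included only to show a registry port.

```python
def split_image_reference(reference: str) -> tuple[str, str]:
    """Split 'name[:tag]' into (name, tag), defaulting the tag to 'latest'.

    Simplified: digest references (name@sha256:...) are not handled.
    """
    # A colon counts as the tag separator only if it comes after the last slash;
    # otherwise it belongs to a registry host:port prefix.
    last_slash = reference.rfind("/")
    last_colon = reference.rfind(":")
    if last_colon > last_slash:
        return reference[:last_colon], reference[last_colon + 1:]
    return reference, "latest"


for ref in ("ubuntu", "docker.io/redis", "topcher/tomcat:1.0.1", "localhost:5000/app"):
    print(ref, "->", split_image_reference(ref))
```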

docker rmi

[root@topcheer ~]# docker rmi 63130206b0fa

Untagged: docker.io/redis:latest

Untagged: docker.io/redis@sha256:5dcccb533dc0deacce4a02fe9035134576368452db0b4323b98a4b2ba2d3b302

Deleted: sha256:63130206b0fa808e4545a0cb4a1f14f6d40b8a7e2e6fda0a31fd326c2ac0971c

Deleted: sha256:9476758634326bb436208264d0541e9a0d42e4add35d00c2a7408f810223013d

Deleted: sha256:0f3d9de16a216bfa5e2c2bd0e3c2ba83afec01a1b326d9f39a5ea7aecc112baf

Deleted: sha256:452d665d4efca3e6067c89a332c878437d250312719f9ea8fff8c0e350b6e471

Deleted: sha256:d6aec371927a9d4bfe4df4ee8e510624549fc08bc60871ce1f145997f49d4d37

Deleted: sha256:2957e0a13c30e89650dd6c00644c04aa87ce516284c76a67c4b32cbb877de178

Deleted: sha256:2db44bce66cde56fca25aeeb7d09dc924b748e3adfe58c9cc3eb2bd2f68a1b68

[root@topcheer ~]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

docker.io/hello-world latest fce289e99eb9 8 months ago 1.84 kB

[root@topcheer ~]#

2.3 Container commands

docker run

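Common OPTIONS (some take a single dash, some a double dash):

--name="container name": assign a name to the container;

-d: run the container in the background and print the container ID, i.e. start a detached (daemon-style) container;

-i: run the container in interactive mode, usually combined with -t;

-t: allocate a pseudo-TTY for the container, usually combined with -i;

-P: publish the container's exposed ports to random host ports;

-p: publish specific ports, in one of four formats:

ip:hostPort:containerPort

ip::containerPort

hostPort:containerPort

containerPort

[root@topcheer ~]# docker run -it centos /bin/bash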

[root@3d2a94b63807 /]# cd /

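[root@3d2a94b63807 /]# ll

docker ps

Common OPTIONS:

-a: list all containers, both currently running and previously run;

-l: show the most recently created container;

-n: show the n most recently created containers;

-q: quiet mode, print only container IDs;

--no-trunc: do not truncate the output.

Leaving a container: exit stops the container and leaves it; Ctrl+P followed by Ctrl+Q leaves (detaches) without stopping it.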

[root@topcheer ~]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

3d2a94b63807 centos "/bin/bash" 3 minutes ago Up 3 minutes nostalgic_darwin

[root@topcheer ~]#

docker stop

[root@topcheer ~]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

3d2a94b63807 centos "/bin/bash" 3 minutes ago Up 3 minutes nostalgic_darwin

[root@topcheer ~]# docker stop 3d2a94b63807

3d2a94b63807

docker start

[root@topcheer ~]# docker start 3d2a94b63807

3d2a94b63807

[root@topcheer ~]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

3d2a94b63807 centos "/bin/bash" 6 minutes ago Up 17 seconds nostalgic_darwin

[root@topcheer ~]#

docker rm

[root@topcheer ~]# docker rm -f $(docker ps -a -q)

3d2a94b63807

299b22d3d143

[root@topcheer ~]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

[root@topcheer ~]#

docker run -d

[root@topcheer ~]# docker run -d centos

3c618cadb296fd013384201958f175085395a505a0aa1f7727e3c24b744b0b7f

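[root@topcheer ~]#

Problem: if you now run docker ps -a, you will find that the container has already exited.

An important point: for a Docker container to keep running in the background, it must have a foreground process.

If the command the container runs is not one that stays running (such as top or tail), the container exits automatically. This is how Docker works. Take a web container running nginx as an example: normally you would start the service with

service nginx start

However, this runs nginx as a background (daemon) process, so nothing is left running in the container's foreground, and a container started this way exits as soon as it has started, because from Docker's point of view it has nothing left to do.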
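The difference between a command that backgrounds itself and one that stays in the foreground can be seen without Docker. The sketch below is only an analogy under stated assumptions: two small Python child programs stand in for `service nginx start` and for a foreground server, and the measured lifetime of the main process stands in for the lifetime of the container.

```python
import subprocess
import sys
import time

# Stand-in for `service nginx start`: launch a worker in the background, then return.
DAEMONIZING = """
import subprocess, sys
subprocess.Popen(
    [sys.executable, "-c", "import time; time.sleep(1)"],
    stdin=subprocess.DEVNULL, stdout=subprocess.DEVNULL, stderr=subprocess.DEVNULL,
)
"""

# Stand-in for a foreground server: the main process itself does the work.
FOREGROUND = "import time; time.sleep(1)"


def main_process_lifetime(program: str) -> float:
    """Run `program` as a main process and return how long that process lived."""
    start = time.monotonic()
    subprocess.run([sys.executable, "-c", program], check=True)
    return time.monotonic() - start


background_lifetime = main_process_lifetime(DAEMONIZING)
foreground_lifetime = main_process_lifetime(FOREGROUND)
print(f"daemonizing main process lived {background_lifetime:.2f}s")
print(f"foreground main process lived {foreground_lifetime:.2f}s")
```

The daemonizing program's main process ends almost immediately even though its worker is still sleeping, which mirrors a container whose startup command returns while the service carries on in the background.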

So the best solution is to run the program you want as a foreground process.

docker logs

* -t adds timestamps

* -f follows the log output as new lines arrive

* --tail N shows only the last N lines
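The idea behind --tail N, keeping only the last N lines of a stream, can be sketched in a few lines. This illustrates the concept only and says nothing about how Docker implements it; the log lines are made up for the example.

```python
from collections import deque
from typing import Iterable


def tail(lines: Iterable[str], count: int) -> list[str]:
    """Return the last `count` lines, keeping at most `count` lines in memory."""
    return list(deque(lines, maxlen=count))


# Made-up log lines for the example.
log = [f"hello zzyy #{i}" for i in range(1, 8)]
print(tail(log, 3))
```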

[root@topcheer ~]# docker run -d centos /bin/sh -c "while true;do echo hello zzyy;sleep 2;done"

6c4bb3ce4c35a5380b553e686b806a1581bfb8dd0a115f63fa9b14da6195e667

[root@topcheer ~]# docker ps -a

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

6c4bb3ce4c35 centos "/bin/sh -c 'while..." 6 seconds ago Up 4 seconds eloquent_shannon

3c618cadb296 centos "/bin/bash" About a minute ago Exited (0) About a minute ago upbeat_jepsen

[root@topcheer ~]# docker logs -f -t --tail 6c4bb3ce4c35

"docker logs" requires exactly 1 argument(s).

See 'docker logs --help'.

Usage: docker logs [OPTIONS] CONTAINER

Fetch the logs of a container

[root@topcheer ~]# docker ps -a

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

6c4bb3ce4c35 centos "/bin/sh -c 'while..." 47 seconds ago Up 46 seconds eloquent_shannon

3c618cadb296 centos "/bin/bash" 2 minutes ago Exited (0) 2 minutes ago upbeat_jepsen

[root@topcheer ~]# docker logs -tf --tail10 6c4bb3ce4c35

unknown flag: --tail10

See 'docker logs --help'.

[root@topcheer ~]# docker logs -tf --tail 10 6c4bb3ce4c35

2019-09-22T10:23:14.595414000Z hello zzyy

2019-09-22T10:23:16.597109000Z hello zzyy

2019-09-22T10:23:18.600019000Z hello zzyy

2019-09-22T10:23:20.602673000Z hello zzyy

2019-09-22T10:23:22.605026000Z hello zzyy

2019-09-22T10:23:24.625059000Z hello zzyy

docker top: list the processes running in a container

[root@topcheer ~]# docker top 6c4bb3ce4c35

UID PID PPID C STIME TTY TIME CMD

root 116050 116030 0 18:21 ? 00:00:00 /bin/sh -c while true;do echo hello zzyy;sleep 2;done

root 116250 116050 2 18:25 ? 00:00:00 sleep 2

[root@topcheer ~]#

docker inspect: show the container's low-level details

[root@topcheer ~]# docker inspect 6c4bb3ce4c35

[

{

"Id": "6c4bb3ce4c35a5380b553e686b806a1581bfb8dd0a115f63fa9b14da6195e667",

"Created": "2019-09-22T10:21:57.924998005Z",

"Path": "/bin/sh",

"Args": [

"-c",

"while true;do echo hello zzyy;sleep 2;done"

],

"State": {

"Status": "running",

"Running": true,

"Paused": false,

"Restarting": false,

"OOMKilled": false,

"Dead": false,

"Pid": 116050,

"ExitCode": 0,

"Error": "",

"StartedAt": "2019-09-22T10:21:58.43216616Z",

"FinishedAt": "0001-01-01T00:00:00Z"

},

"Image": "sha256:67fa590cfc1c207c30b837528373f819f6262c884b7e69118d060a0c04d70ab8",

"ResolvConfPath": "/var/lib/docker/containers/6c4bb3ce4c35a5380b553e686b806a1581bfb8dd0a115f63fa9b14da6195e667/resolv.conf",

"HostnamePath": "/var/lib/docker/containers/6c4bb3ce4c35a5380b553e686b806a1581bfb8dd0a115f63fa9b14da6195e667/hostname",

"HostsPath": "/var/lib/docker/containers/6c4bb3ce4c35a5380b553e686b806a1581bfb8dd0a115f63fa9b14da6195e667/hosts",

"LogPath": "",

"Name": "/eloquent_shannon",

"RestartCount": 0,

"Driver": "overlay2",

"MountLabel": "system_u:object_r:svirt_sandbox_file_t:s0:c71,c940",

"ProcessLabel": "system_u:system_r:svirt_lxc_net_t:s0:c71,c940",

"AppArmorProfile": "",

"ExecIDs": null,

"HostConfig": {

"Binds": null,

"ContainerIDFile": "",

"LogConfig": {

"Type": "journald",

"Config": {}

},

"NetworkMode": "default",

"PortBindings": {},

"RestartPolicy": {

"Name": "no",

"MaximumRetryCount": 0

},

"AutoRemove": false,

"VolumeDriver": "",

"VolumesFrom": null,

"CapAdd": null,

"CapDrop": null,

"Dns": [],

"DnsOptions": [],

"DnsSearch": [],

"ExtraHosts": null,

"GroupAdd": null,

"IpcMode": "",

"Cgroup": "",

"Links": null,

"OomScoreAdj": 0,

"PidMode": "",

"Privileged": false,

"PublishAllPorts": false,

"ReadonlyRootfs": false,

"SecurityOpt": null,

"UTSMode": "",

"UsernsMode": "",

"ShmSize": 67108864,

"Runtime": "docker-runc",

"ConsoleSize": [

0,

0

],

"Isolation": "",

"CpuShares": 0,

"Memory": 0,

"NanoCpus": 0,

"CgroupParent": "",

"BlkioWeight": 0,

"BlkioWeightDevice": null,

"BlkioDeviceReadBps": null,

"BlkioDeviceWriteBps": null,

"BlkioDeviceReadIOps": null,

"BlkioDeviceWriteIOps": null,

"CpuPeriod": 0,

"CpuQuota": 0,

"CpuRealtimePeriod": 0,

"CpuRealtimeRuntime": 0,

"CpusetCpus": "",

"CpusetMems": "",

"Devices": [],

"DiskQuota": 0,

"KernelMemory": 0,

"MemoryReservation": 0,

"MemorySwap": 0,

"MemorySwappiness": -1,

"OomKillDisable": false,

"PidsLimit": 0,

"Ulimits": null,

"CpuCount": 0,

"CpuPercent": 0,

"IOMaximumIOps": 0,

"IOMaximumBandwidth": 0

},

"GraphDriver": {

"Name": "overlay2",

"Data": {

"LowerDir": "/var/lib/docker/overlay2/d8d3dca6c9115b3c782bf358a744475e78f5e62b627cca79e10a34e754310933-init/diff:/var/lib/docker/overlay2/7bc85336eb8ca768f43d8eb3d5f27bdf35fa99068be45c84622d18c0f87c90bd/diff",

"MergedDir": "/var/lib/docker/overlay2/d8d3dca6c9115b3c782bf358a744475e78f5e62b627cca79e10a34e754310933/merged",

"UpperDir": "/var/lib/docker/overlay2/d8d3dca6c9115b3c782bf358a744475e78f5e62b627cca79e10a34e754310933/diff",

"WorkDir": "/var/lib/docker/overlay2/d8d3dca6c9115b3c782bf358a744475e78f5e62b627cca79e10a34e754310933/work"

}

},

"Mounts": [],

"Config": {

"Hostname": "6c4bb3ce4c35",

"Domainname": "",

"User": "",

"AttachStdin": false,

"AttachStdout": false,

"AttachStderr": false,

"Tty": false,

"OpenStdin": false,

"StdinOnce": false,

"Env": [

"PATH=/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin"

],

"Cmd": [

"/bin/sh",

"-c",

"while true;do echo hello zzyy;sleep 2;done"

],

"Image": "centos",

"Volumes": null,

"WorkingDir": "",

"Entrypoint": null,

"OnBuild": null,

"Labels": {

"org.label-schema.build-date": "",

"org.label-schema.license": "GPLv2",

"org.label-schema.name": "CentOS Base Image",

"org.label-schema.schema-version": "1.0",

"org.label-schema.vendor": "CentOS"

}

},

"NetworkSettings": {

"Bridge": "",

"SandboxID": "d5f116b329f01e9bab7f985282fd568e379c8e7aa4fcc3677b9b025ded771149",

"HairpinMode": false,

"LinkLocalIPv6Address": "",

"LinkLocalIPv6PrefixLen": 0,

"Ports": {},

"SandboxKey": "/var/run/docker/netns/d5f116b329f0",

"SecondaryIPAddresses": null,

"SecondaryIPv6Addresses": null,

"EndpointID": "825091555bc0adfdf32667650884ec2b6274c44c787291870de32ec2cee8575b",

"Gateway": "172.17.0.1",

"GlobalIPv6Address": "",

"GlobalIPv6PrefixLen": 0,

"IPAddress": "172.17.0.2",

"IPPrefixLen": 16,

"IPv6Gateway": "",

"MacAddress": "02:42:ac:11:00:02",

"Networks": {

"bridge": {

"IPAMConfig": null,

"Links": null,

"Aliases": null,

"NetworkID": "fe000671b1b7f9a2e634f409bd33ada7bed50e818a28c1d9c8245aba67b1b625",

"EndpointID": "825091555bc0adfdf32667650884ec2b6274c44c787291870de32ec2cee8575b",

"Gateway": "172.17.0.1",

"IPAddress": "172.17.0.2",

"IPPrefixLen": 16,

"IPv6Gateway": "",

"GlobalIPv6Address": "",

"GlobalIPv6PrefixLen": 0,

"MacAddress": "02:42:ac:11:00:02"

}

}

}

}

]

[root@topcheer ~]#
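Because docker inspect prints JSON, the few fields you usually care about can be pulled out with a short script. The sketch below works on a trimmed copy of the output above; the field names are the ones shown there, and the `summary` layout is just one possible choice.

```python
import json

# Trimmed copy of the `docker inspect` output above; only a few fields are kept.
INSPECT_OUTPUT = """
[
  {
    "Id": "6c4bb3ce4c35a5380b553e686b806a1581bfb8dd0a115f63fa9b14da6195e667",
    "State": {"Status": "running", "Running": true, "Pid": 116050},
    "Name": "/eloquent_shannon",
    "NetworkSettings": {"Gateway": "172.17.0.1", "IPAddress": "172.17.0.2"}
  }
]
"""

# inspect returns a JSON array with one object per inspected container.
container = json.loads(INSPECT_OUTPUT)[0]
summary = {
    "name": container["Name"].lstrip("/"),
    "status": container["State"]["Status"],
    "pid": container["State"]["Pid"],
    "ip": container["NetworkSettings"]["IPAddress"],
}
print(summary)
```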

docker exec -it

[root@topcheer ~]# docker exec -it 6c4bb3ce4c35 /bin/bash

[root@6c4bb3ce4c35 /]# ll

total 12

-rw-r--r--. 1 root root 12090 Aug 1 01:10 anaconda-post.log

lrwxrwxrwx. 1 root root 7 Aug 1 01:09 bin -> usr/bin

drwxr-xr-x. 5 root root 340 Sep 22 10:21 dev

drwxr-xr-x. 1 root root 66 Sep 22 10:21 etc

drwxr-xr-x. 2 root root 6 Apr 11 2018 home

lrwxrwxrwx. 1 root root 7 Aug 1 01:09 lib -> usr/lib

lrwxrwxrwx. 1 root root 9 Aug 1 01:09 lib64 -> usr/lib64

drwxr-xr-x. 2 root root 6 Apr 11 2018 media

drwxr-xr-x. 2 root root 6 Apr 11 2018 mnt

drwxr-xr-x. 2 root root 6 Apr 11 2018 opt

dr-xr-xr-x. 251 root root 0 Sep 22 10:21 proc

dr-xr-x---. 2 root root 114 Aug 1 01:10 root

drwxr-xr-x. 1 root root 21 Sep 22 10:21 run

lrwxrwxrwx. 1 root root 8 Aug 1 01:09 sbin -> usr/sbin

drwxr-xr-x. 2 root root 6 Apr 11 2018 srv

dr-xr-xr-x. 13 root root 0 Sep 2 01:15 sys

drwxrwxrwt. 7 root root 132 Aug 1 01:10 tmp

drwxr-xr-x. 13 root root 155 Aug 1 01:09 usr

drwxr-xr-x. 18 root root 238 Aug 1 01:09 var

[root@6c4bb3ce4c35 /]#

[root@topcheer ~]# docker attach 6c4bb3ce4c35

hello zzyy

hello zzyy

hello zzyy

hello zzyy

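attach connects directly to the terminal of the container's startup command and does not start a new process.

exec opens a new terminal in the container and can start new processes.

docker cp

docker cp CONTAINER_ID:path-in-container destination-path-on-host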

[root@topcheer ~]# docker cp 6c4bb3ce4c35:/tmp/yum.log /tmp/yum.log

[root@topcheer ~]# cd /tmp

[root@topcheer tmp]# ll

总用量 144

-rw-r--r--. 1 root root 1148 8月 31 18:29 anaconda.log

drwxr-xr-x. 2 root root 18 8月 31 18:17 hsperfdata_root

-rw-r--r--. 1 root root 415 8月 31 18:29 ifcfg.log

-rwx------. 1 root root 836 8月 31 18:27 ks-script-zj2XPa

-rw-r--r--. 1 root root 0 8月 31 18:28 packaging.log

-rw-r--r--. 1 root root 0 8月 31 18:28 program.log

-rw-r--r--. 1 root root 0 8月 31 18:28 sensitive-info.log

drwx------. 2 wgr wgr 25 8月 31 18:31 ssh-FYigK4SAU4OM

drwx------. 2 wgr wgr 25 9月 2 09:18 ssh-zKscLR1XtYju

-rw-r--r--. 1 root root 0 8月 31 18:28 storage.log

drwx------. 3 root root 17 8月 31 18:29 systemd-private-6a7934172f6c411fbf39074aa3902f99-bolt.service-Y8qrWS

drwx------. 3 root root 17 8月 31 18:29 systemd-private-6a7934172f6c411fbf39074aa3902f99-colord.service-7Jig8H

drwx------. 3 root root 17 8月 31 18:28 systemd-private-6a7934172f6c411fbf39074aa3902f99-cups.service-bBt1J6

drwx------. 3 root root 17 8月 31 18:31 systemd-private-6a7934172f6c411fbf39074aa3902f99-fwupd.service-Gm5QpN

drwx------. 3 root root 17 8月 31 18:28 systemd-private-6a7934172f6c411fbf39074aa3902f99-rtkit-daemon.service-VEQfTp

drwx------. 3 root root 17 8月 31 18:31 systemd-private-6a7934172f6c411fbf39074aa3902f99-systemd-hostnamed.service-TulnOV

drwx------. 3 root root 17 8月 31 18:28 systemd-private-6a7934172f6c411fbf39074aa3902f99-systemd-machined.service-Jxxmt6

drwx------. 3 root root 17 9月 2 09:16 systemd-private-7b6d429e399747c496a317824a2e8642-bolt.service-LFuHXZ

drwx------. 3 root root 17 9月 2 09:16 systemd-private-7b6d429e399747c496a317824a2e8642-colord.service-LRGmIL

drwx------. 3 root root 17 9月 2 09:16 systemd-private-7b6d429e399747c496a317824a2e8642-cups.service-Qktpb4

drwx------. 3 root root 17 9月 2 09:18 systemd-private-7b6d429e399747c496a317824a2e8642-fwupd.service-aSrZvk

drwx------. 3 root root 17 9月 2 09:15 systemd-private-7b6d429e399747c496a317824a2e8642-rtkit-daemon.service-nW4tNf

drwx------. 2 root root 6 9月 22 17:34 tmp.Bl496ZWqxn

drwx------. 2 root root 6 9月 22 17:33 tmp.K31L5zqugc

drwx------. 2 wgr wgr 6 8月 31 18:31 tracker-extract-files.1000

drwx------. 2 root root 6 9月 2 09:15 vmware-root_6298-692293416

drwx------. 2 root root 6 8月 31 18:28 vmware-root_6346-994818392

-rw-------. 1 root root 0 8月 1 09:09 yum.log

-rw-------. 1 root root 133031 9月 2 09:19 yum_save_tx.2019-09-02.09-19.4iKsVG.yumtx

[root@topcheer tmp]#

attach Attach to a running container # attach the current shell to a running container

build Build an image from a Dockerfile # build a custom image from a Dockerfile

commit Create a new image from a container's changes # commit the current container as a new image

cp Copy files/folders from the container's filesystem to the host path # copy files or directories from a container to the host

create Create a new container # create a new container, like run, but without starting it

diff Inspect changes on a container's filesystem # show changes to a container's filesystem

events Get real time events from the server # stream real-time container events from the Docker daemon

exec Run a command in an existing container # run a command in an existing container

export Stream the contents of a container as a tar archive # export a container's contents as a tar archive [counterpart of import]

history Show the history of an image # show how an image was built up

images List images # list the images on this system

import Create a new filesystem image from the contents of a tarball # create a new filesystem image from a tarball [counterpart of export]

info Display system-wide information # display system-wide information

inspect Return low-level information on a container # show detailed information about a container

kill Kill a running container # kill the specified container

load Load an image from a tar archive # load an image from a tar archive [counterpart of save]

login Register or Login to the docker registry server # register with or log in to a Docker registry server

logout Log out from a Docker registry server # log out of the current Docker registry

logs Fetch the logs of a container # print a container's logs

port Lookup the public-facing port which is NAT-ed to PRIVATE_PORT # look up the host port mapped to a container port

pause Pause all processes within a container # pause a container

ps List containers # list containers

pull Pull an image or a repository from the docker registry server # pull an image or repository from a Docker registry server

push Push an image or a repository to the docker registry server # push an image or repository to a Docker registry server

restart Restart a running container # restart a running container

rm Remove one or more containers # remove one or more containers

rmi Remove one or more images # remove one or more images [an image can be removed only when no container uses it; otherwise remove those containers first or force removal with -f]

run Run a command in a new container # create a new container and run a command in it

save Save an image to a tar archive # save an image to a tar archive [counterpart of load]

search Search for an image on the Docker Hub # search Docker Hub for images

start Start a stopped container # start a container

stop Stop a running container # stop a container

tag Tag an image into a repository # tag an image in a repository

top Lookup the running processes of a container # show the processes running in a container

unpause Unpause a paused container # resume a paused container

version Show the docker version information # show the Docker version

wait Block until a container stops, then print its exit code # wait for a container to stop, then print its exit code

3 Docker images

3.1 What a Docker image is

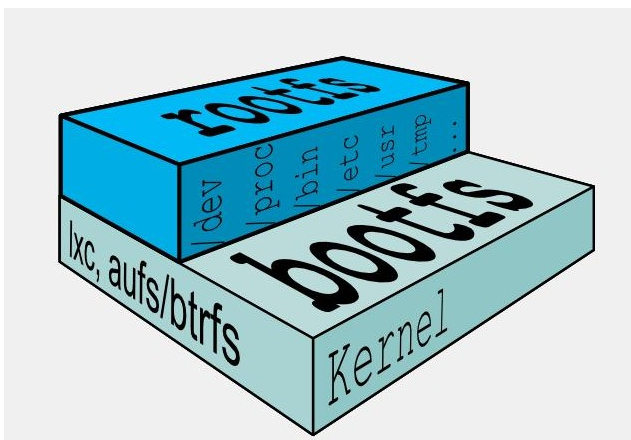

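UnionFS (union file system): a union file system is a layered, lightweight, high-performance file system. It records each modification to the file system as a commit and stacks these commits layer by layer, and it can mount different directories under a single virtual file system (unite several directories into a single virtual filesystem). The union file system is the foundation of Docker images. Images inherit from one another through layering: starting from a base image (one with no parent image), you can build all kinds of application-specific images.

Characteristic: several file systems are loaded at the same time, but from the outside only one file system is visible. Union mounting stacks the layers on top of each other, so the final file system contains all the files and directories of the underlying layers.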
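The "many layers, one visible file system" behavior can be modeled with plain dictionaries. This is a toy model under stated assumptions, not how a real union file system is implemented: each layer maps a path to its content, layers are listed bottom-first, and all layer contents are hypothetical.

```python
def union_view(layers: list[dict[str, str]]) -> dict[str, str]:
    """Merge layers listed bottom-first; a path in a higher layer hides the same path below."""
    merged: dict[str, str] = {}
    for layer in layers:
        merged.update(layer)
    return merged


# Hypothetical layer contents, mapping path -> file content.
base = {"/bin/sh": "shell", "/etc/os-release": "base OS"}
runtime = {"/usr/bin/java": "jvm"}
app = {"/etc/os-release": "customized", "/app/app.war": "application"}

view = union_view([base, runtime, app])
print(sorted(view))
print(view["/etc/os-release"])
```

The merged view contains every path from every layer, and where two layers define the same path, the upper one wins.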

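How Docker images are loaded

A Docker image is made up of file systems stacked layer by layer; this layered file system is UnionFS. bootfs (boot file system) mainly contains the bootloader and the kernel; the bootloader's main job is to load the kernel. Linux loads bootfs when it boots, and bootfs is the bottom layer of a Docker image. This layer is the same as on a typical Linux/Unix system and contains the boot loader and the kernel. Once booting completes, the whole kernel is in memory, control of memory passes from bootfs to the kernel, and the system unmounts bootfs.

rootfs (root file system) sits on top of bootfs. It contains the standard directories and files of a typical Linux system, such as /dev, /proc, /bin and /etc. The rootfs is what distinguishes one operating system distribution from another, for example Ubuntu or CentOS.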

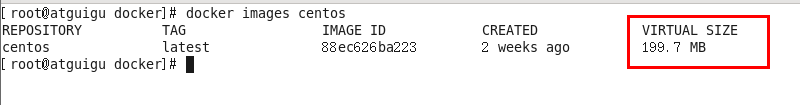

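A CentOS installed in a virtual machine usually takes several GB, so why is the Docker image only about 200 MB?

A slimmed-down OS can have a very small rootfs: it only needs the most basic commands, tools and libraries, because it uses the host's kernel directly and only has to provide the rootfs itself. This also shows that across Linux distributions the bootfs is essentially the same while the rootfs differs, so different distributions can share a bootfs.

Layered Docker images

Take the pull above as an example: during the download you can see that the Docker image is fetched one layer at a time.

The biggest benefit of layering is resource sharing.

For example, if several images are built from the same base image, the host needs to keep only one copy of the base image on disk and load only one copy into memory to serve all the containers. Every layer of an image can be shared in this way.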
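The saving from shared layers is easy to estimate. In the sketch below, the image names, layer names and sizes are all hypothetical; it compares the disk usage when every image carries private copies of its layers with the usage when each distinct layer is stored once.

```python
# Hypothetical images, each a list of (layer digest, size in MB), base layer first.
images = {
    "app-a": [("base", 200), ("jdk", 250), ("a-code", 10)],
    "app-b": [("base", 200), ("jdk", 250), ("b-code", 20)],
    "app-c": [("base", 200), ("c-code", 5)],
}


def naive_size(images: dict[str, list[tuple[str, int]]]) -> int:
    """Disk usage if every image stored private copies of all its layers."""
    return sum(size for layers in images.values() for _, size in layers)


def shared_size(images: dict[str, list[tuple[str, int]]]) -> int:
    """Disk usage when each distinct layer is stored once and shared."""
    unique = {digest: size for layers in images.values() for digest, size in layers}
    return sum(unique.values())


print(naive_size(images), "MB without sharing")
print(shared_size(images), "MB with shared layers")
```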

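Characteristics

Docker image layers are all read-only. When a container starts, a new writable layer is added on top of the image. This layer is usually called the "container layer", and everything below it is called the "image layer".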
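A read-only image with a writable layer on top can be modeled with Python's `ChainMap`, which reads through all of its mappings but writes only to the first one. This is a toy model with hypothetical file contents, not a description of the storage driver.

```python
from collections import ChainMap
from types import MappingProxyType

# Hypothetical image layer, wrapped so that it cannot be modified.
image_layer = MappingProxyType(
    {"/webapps/docs/index.html": "docs", "/conf/server.xml": "v1"}
)


def start_container(image) -> ChainMap:
    """Return a view with a fresh, empty writable layer on top of the image."""
    return ChainMap({}, image)


first = start_container(image_layer)
second = start_container(image_layer)

# Writes always land in the first mapping, i.e. the container layer.
first["/conf/server.xml"] = "v2"
first["/tmp/scratch"] = "temp"

print(first["/conf/server.xml"])   # this container sees its own change
print(second["/conf/server.xml"])  # another container still sees the image's file
print(first.maps[0])               # contents of the first container's writable layer
```

Two containers started from the same image share the image layer, and each one's changes stay in its own container layer.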

3.2 Committing an image

docker commit -m="commit message" -a="author" CONTAINER_ID target-image-name[:tag]

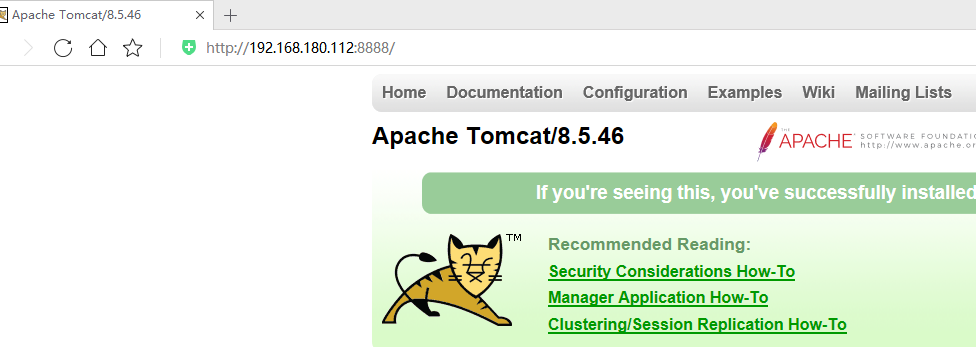

First pull the official tomcat image and run it:

[root@topcheer tmp]# docker run -it -p 8888:8080 tomcat

Using CATALINA_BASE: /usr/local/tomcat

Using CATALINA_HOME: /usr/local/tomcat

Using CATALINA_TMPDIR: /usr/local/tomcat/temp

Using JRE_HOME: /usr/local/openjdk-8

Using CLASSPATH: /usr/local/tomcat/bin/bootstrap.jar:/usr/local/tomcat/bin/tomcat-juli.jar

22-Sep-2019 13:28:56.568 INFO [main] org.apache.catalina.startup.VersionLoggerListener.log Server version name: Apache Tomcat/8.5.46

22-Sep-2019 13:28:56.572 INFO [main] org.apache.catalina.startup.VersionLoggerListener.log Server built: Sep 16 2019 18:16:19 UTC

22-Sep-2019 13:28:56.572 INFO [main] org.apache.catalina.startup.VersionLoggerListener.log Server version number: 8.5.46.0

22-Sep-2019 13:28:56.572 INFO [main] org.apache.catalina.startup.VersionLoggerListener.log OS Name: Linux

22-Sep-2019 13:28:56.572 INFO [main] org.apache.catalina.startup.VersionLoggerListener.log OS Version: 3.10.0-957.el7.x86_64

22-Sep-2019 13:28:56.572 INFO [main] org.apache.catalina.startup.VersionLoggerListener.log Architecture: amd64

22-Sep-2019 13:28:56.572 INFO [main] org.apache.catalina.startup.VersionLoggerListener.log Java Home: /usr/local/o

-p hostPort:containerPort

-P assign random host ports

-i: interactive

-t: terminal
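The four -p formats listed in section 2.3 (ip:hostPort:containerPort, ip::containerPort, hostPort:containerPort, containerPort) can be told apart just by counting the colon-separated parts. The parser below is a simplified sketch with hypothetical names (`PortMapping`, `parse_publish`): it assumes IPv4 addresses and ignores port ranges and protocol suffixes such as /tcp.

```python
from typing import NamedTuple, Optional


class PortMapping(NamedTuple):
    host_ip: Optional[str]
    host_port: Optional[int]  # None means no host port was specified
    container_port: int


def parse_publish(spec: str) -> PortMapping:
    """Parse one of the four -p formats (IPv4 only, no port ranges or /tcp suffix)."""
    parts = spec.split(":")
    if len(parts) == 1:  # containerPort
        return PortMapping(None, None, int(parts[0]))
    if len(parts) == 2:  # hostPort:containerPort
        return PortMapping(None, int(parts[0]), int(parts[1]))
    if len(parts) == 3:  # ip:hostPort:containerPort or ip::containerPort
        ip, host, container = parts
        return PortMapping(ip, int(host) if host else None, int(container))
    raise ValueError(f"unsupported port spec: {spec!r}")


for spec in ("8888:8080", "127.0.0.1:8888:8080", "127.0.0.1::8080", "8080"):
    print(spec, "->", parse_publish(spec))
```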

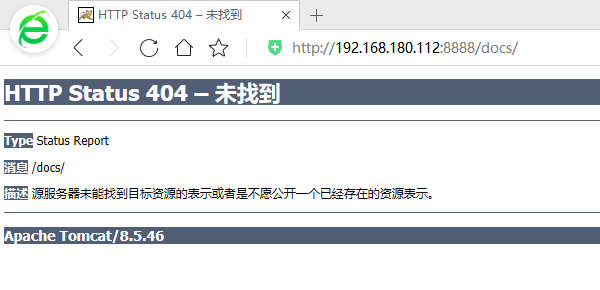

Delete files

[root@topcheer tmp]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

5910b3a257ff tomcat "catalina.sh run" 3 minutes ago Up 3 minutes 0.0.0.0:8888->8080/tcp brave_knuth

6c4bb3ce4c35 centos "/bin/sh -c 'while..." 3 hours ago Up 3 hours eloquent_shannon

[root@topcheer tmp]# docker exec -it 5910b3a257ff /bin/bash

root@5910b3a257ff:/usr/local/tomcat# ll

bash: ll: command not found

root@5910b3a257ff:/usr/local/tomcat# ls -l

total 124

-rw-r--r--. 1 root root 19318 Sep 16 18:19 BUILDING.txt

-rw-r--r--. 1 root root 5407 Sep 16 18:19 CONTRIBUTING.md

-rw-r--r--. 1 root root 57011 Sep 16 18:19 LICENSE

-rw-r--r--. 1 root root 1726 Sep 16 18:19 NOTICE

-rw-r--r--. 1 root root 3255 Sep 16 18:19 README.md

-rw-r--r--. 1 root root 7139 Sep 16 18:19 RELEASE-NOTES

-rw-r--r--. 1 root root 16262 Sep 16 18:19 RUNNING.txt

drwxr-xr-x. 2 root root 4096 Sep 20 01:40 bin

drwxr-sr-x. 1 root root 22 Sep 22 13:28 conf

drwxr-sr-x. 2 root staff 78 Sep 20 01:40 include

drwxr-xr-x. 2 root root 4096 Sep 20 01:40 lib

drwxrwxrwx. 1 root root 177 Sep 22 13:28 logs

drwxr-sr-x. 3 root staff 151 Sep 20 01:40 native-jni-lib

drwxrwxrwx. 2 root root 30 Sep 20 01:40 temp

drwxr-xr-x. 7 root root 81 Sep 16 18:17 webapps

drwxrwxrwx. 1 root root 22 Sep 22 13:28 work

root@5910b3a257ff:/usr/local/tomcat#

root@5910b3a257ff:/usr/local/tomcat/webapps# ls -l

total 8

drwxr-xr-x. 3 root root 4096 Sep 20 01:40 ROOT

drwxr-xr-x. 15 root root 4096 Sep 20 01:40 docs

drwxr-xr-x. 6 root root 83 Sep 20 01:40 examples

drwxr-xr-x. 5 root root 87 Sep 20 01:40 host-manager

drwxr-xr-x. 5 root root 103 Sep 20 01:40 manager

root@5910b3a257ff:/usr/local/tomcat/webapps# rm -rf docs/

root@5910b3a257ff:/usr/local/tomcat/webapps#

Commit the image

[root@topcheer tmp]# docker ps -l

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

5910b3a257ff tomcat "catalina.sh run" 6 minutes ago Up 6 minutes 0.0.0.0:8888->8080/tcp brave_knuth

[root@topcheer tmp]# docker commit -a="wgr" -m "test del docs" 5910b3a257ff topcher/tomcat:1.0.1

sha256:3d8737216a1e91c4b2f66a054eeb7e48031f5bff7a05a4a5ce4e5c519cc40084

[root@topcheer tmp]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

topcher/tomcat 1.0.1 3d8737216a1e 22 seconds ago 508 MB

docker.io/tomcat latest 8973f493aa0a 2 days ago 508 MB

docker.io/centos latest 67fa590cfc1c 4 weeks ago 202 MB

docker.io/hello-world latest fce289e99eb9 8 months ago 1.84 kB

[root@topcheer tmp]#

Run the image

[root@topcheer tmp]# docker run -it -p 8080:8080 topcher/tomcat:1.0.1

Using CATALINA_BASE: /usr/local/tomcat

Using CATALINA_HOME: /usr/local/tomcat

Using CATALINA_TMPDIR: /usr/local/tomcat/temp

Using JRE_HOME: /usr/local/openjdk-8

Using CLASSPATH: /usr/local/tomcat/bin/bootstrap.jar:/usr/local/tomcat/bin/tomcat-juli.jar

22-Sep-2019 13:38:55.628 INFO [main] org.apache.catalina.startup.VersionLoggerListener.log Server version name: Apache Tomcat/8.5.46

22-Sep-2019 13:38:55.631 INFO [main] org.apache.catalina.startup.VersionLoggerListener.log Server built: Sep 16 2019 18:16:19 UTC

22-Sep-2019 13:38:55.632 INFO [main] org.apache.catalina.startup.VersionLoggerListener.log Server version number: 8.5.46.0

22-Sep-2019 13:38:55.632 INFO [main] org.apache.catalina.startup.VersionLoggerListener.log OS Name: Linux

22-Sep-2019 13:38:55.632 INFO [main] org.apache.catalina.startup.VersionLoggerListener.log OS Version: 3.10.0-957.el7.x86_64

22-Sep-2019 13:38:55.632 INFO [main] org.apache.catalina.startup.VersionLoggerListener.log Architecture: amd64

22-Sep-2019 13:38:55.632 INFO [main] org.apache.catalina.startup.VersionLoggerListener.log Java Home: /usr/local/openjdk-8/jre

22-Sep-2019 13:38:55.632 INFO [main] org.apache.catalina.startup.VersionLoggerListener.log JVM Version: 1.8.0_222-b10

22-Sep-2019 13:38:55.632 INFO [main] org.apache.catalina.startup.VersionLoggerListener.log JVM Vendor: Oracle Corporation

22-Sep-2019 13:38:55.632 INFO [main] org.apache.catalina.startup.VersionLoggerListener.log CATALINA_BASE: /usr/local/tomcat

22-Sep-2019 13:38:55.632 INFO [main] org.apache.catalina.startup.VersionLoggerListener.log CATALINA_HOME: /usr/local/tomcat

This is indeed the image we just committed.

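4 Docker data volumes

4.1 Concept

First, a look at the idea behind Docker:

An application and its runtime environment are packaged together and run as a container. The running state can come and go with the container, but we want the data to be persistent.

We also want containers to be able to share data with one another.

Data produced inside a Docker container is lost when the container is removed, unless you create a new image with docker commit so that the data is saved as part of the image.

To preserve data in Docker, we use volumes.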

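4.2 Purpose

A volume is a directory or file that exists in one or more containers. Docker mounts it into the container, but it is not part of the union file system, so it bypasses the Union File System and provides features for persistent storage and data sharing:

Volumes are designed for data persistence and are completely independent of the container's life cycle, so Docker does not delete a mounted data volume when the container is removed.

Characteristics:

1: data volumes can share or reuse data between containers

2: changes in a volume take effect immediately

3: changes in a data volume are not included when an image is updated

4: a data volume's life cycle lasts until no container uses it

Container persistence is somewhat like the rdb and aof files in Redis.

Inheriting and sharing data between containers is similar to a Maven parent project.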
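The life-cycle difference between a container's own layer and a volume can be imitated with two scratch directories. This is only an analogy and does not use Docker: one directory stands in for the container's writable layer, the other for a host-side volume, and the file names are made up.

```python
import shutil
import tempfile
from pathlib import Path

# Two scratch directories stand in for the host-side volume and the container's own layer.
volume_dir = Path(tempfile.mkdtemp(prefix="volume-"))
container_dir = Path(tempfile.mkdtemp(prefix="container-"))

# The "container" writes one file into its own layer and one into the mounted volume.
(container_dir / "cache.txt").write_text("lost on removal")
(volume_dir / "data.txt").write_text("kept on removal")

# Removing the container discards its writable layer but leaves the volume alone.
shutil.rmtree(container_dir)

print(container_dir.exists())
print((volume_dir / "data.txt").read_text())
```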

4.3 Adding a data volume from the command line

docker run -it -v /absolute-host-directory:/directory-in-container image-name

[root@topcheer tmp]# docker run -it -v /wgrData:/containerData 67fa590cfc1c /bin/bash

[root@a518695bb7bc /]# ls -l

total 12

-rw-r--r--. 1 root root 12090 Aug 1 01:10 anaconda-post.log

lrwxrwxrwx. 1 root root 7 Aug 1 01:09 bin -> usr/bin

drwxr-xr-x. 2 root root 6 Sep 22 13:50 containerData

drwxr-xr-x. 5 root root 360 Sep 22 13:50 dev

drwxr-xr-x. 1 root root 66 Sep 22 13:50 etc

drwxr-xr-x. 2 root root 6 Apr 11 2018 home

lrwxrwxrwx. 1 root root 7 Aug 1 01:09 lib -> usr/lib

lrwxrwxrwx. 1 root root 9 Aug 1 01:09 lib64 -> usr/lib64

drwxr-xr-x. 2 root root 6 Apr 11 2018 media

drwxr-xr-x. 2 root root 6 Apr 11 2018 mnt

drwxr-xr-x. 2 root root 6 Apr 11 2018 opt

dr-xr-xr-x. 265 root root 0 Sep 22 13:50 proc

dr-xr-x---. 2 root root 114 Aug 1 01:10 root

drwxr-xr-x. 1 root root 21 Sep 22 13:50 run

lrwxrwxrwx. 1 root root 8 Aug 1 01:09 sbin -> usr/sbin

drwxr-xr-x. 2 root root 6 Apr 11 2018 srv

dr-xr-xr-x. 13 root root 0 Sep 2 01:15 sys

drwxrwxrwt. 7 root root 132 Aug 1 01:10 tmp

drwxr-xr-x. 13 root root 155 Aug 1 01:09 usr

drwxr-xr-x. 18 root root 238 Aug 1 01:09 var

[root@a518695bb7bc /]# cd containerData/

[root@a518695bb7bc containerData]# touch wgr.txt

touch: cannot touch 'wgr.txt': Permission denied

## Permission denied here; the fix (an extra parameter) is covered later

[root@topcheer /]# cd wgrData

[root@topcheer wgrData]# ll

总用量 0

[root@topcheer wgrData]# touch wgr.txt

[root@topcheer wgrData]#

[root@a518695bb7bc containerData]# ls -l

total 0

-rw-r--r--. 1 root root 0 Sep 22 13:50 wgr.txt

[root@a518695bb7bc containerData]#

[root@topcheer wgrData]# docker inspect a518695bb7bc

[

{

"Id": "a518695bb7bc4c72983d69351ac7d55f8ede9b104639646a8f19a7d22a6e965d",

"Created": "2019-09-22T13:50:02.271544718Z",

"Path": "/bin/bash",

"Args": [],

"State": {

"Status": "running",

"Running": true,

"Paused": false,

"Restarting": false,

"OOMKilled": false,

"Dead": false,

"Pid": 126235,

"ExitCode": 0,

"Error": "",

"StartedAt": "2019-09-22T13:50:02.8043339Z",

"FinishedAt": "0001-01-01T00:00:00Z"

},

"Image": "sha256:67fa590cfc1c207c30b837528373f819f6262c884b7e69118d060a0c04d70ab8",

"ResolvConfPath": "/var/lib/docker/containers/a518695bb7bc4c72983d69351ac7d55f8ede9b104639646a8f19a7d22a6e965d/resolv.conf",

"HostnamePath": "/var/lib/docker/containers/a518695bb7bc4c72983d69351ac7d55f8ede9b104639646a8f19a7d22a6e965d/hostname",

"HostsPath": "/var/lib/docker/containers/a518695bb7bc4c72983d69351ac7d55f8ede9b104639646a8f19a7d22a6e965d/hosts",

"LogPath": "",

"Name": "/priceless_mccarthy",

"RestartCount": 0,

"Driver": "overlay2",

"MountLabel": "system_u:object_r:svirt_sandbox_file_t:s0:c554,c859",

"ProcessLabel": "system_u:system_r:svirt_lxc_net_t:s0:c554,c859",

"AppArmorProfile": "",

"ExecIDs": null,

"HostConfig": {

"Binds": [

"/wgrData:/containerData"

],

"ContainerIDFile": "",

"LogConfig": {

"Type": "journald",

"Config": {}

},

"NetworkMode": "default",

"PortBindings": {},

"RestartPolicy": {

"Name": "no",

"MaximumRetryCount": 0

},

"AutoRemove": false,

"VolumeDriver": "",

"VolumesFrom": null,

"CapAdd": null,

"CapDrop": null,

"Dns": [],

"DnsOptions": [],

"DnsSearch": [],

"ExtraHosts": null,

"GroupAdd": null,

"IpcMode": "",

"Cgroup": "",

"Links": null,

"OomScoreAdj": 0,

"PidMode": "",

"Privileged": false,

"PublishAllPorts": false,

"ReadonlyRootfs": false,

"SecurityOpt": null,

"UTSMode": "",

"UsernsMode": "",

"ShmSize": 67108864,

"Runtime": "docker-runc",

"ConsoleSize": [

0,

0

],

"Isolation": "",

"CpuShares": 0,

"Memory": 0,

"NanoCpus": 0,

"CgroupParent": "",

"BlkioWeight": 0,

"BlkioWeightDevice": null,

"BlkioDeviceReadBps": null,

"BlkioDeviceWriteBps": null,

"BlkioDeviceReadIOps": null,

"BlkioDeviceWriteIOps": null,

"CpuPeriod": 0,

"CpuQuota": 0,

"CpuRealtimePeriod": 0,

"CpuRealtimeRuntime": 0,

"CpusetCpus": "",

"CpusetMems": "",

"Devices": [],

"DiskQuota": 0,

"KernelMemory": 0,

"MemoryReservation": 0,

"MemorySwap": 0,

"MemorySwappiness": -1,

"OomKillDisable": false,

"PidsLimit": 0,

"Ulimits": null,

"CpuCount": 0,

"CpuPercent": 0,

"IOMaximumIOps": 0,

"IOMaximumBandwidth": 0

},

"GraphDriver": {

"Name": "overlay2",

"Data": {

"LowerDir": "/var/lib/docker/overlay2/5ec60cedcc924e4e1308efa93cff63dcdf046209923df890790fffe89906f52a-init/diff:/var/lib/docker/overlay2/7bc85336eb8ca768f43d8eb3d5f27bdf35fa99068be45c84622d18c0f87c90bd/diff",

"MergedDir": "/var/lib/docker/overlay2/5ec60cedcc924e4e1308efa93cff63dcdf046209923df890790fffe89906f52a/merged",

"UpperDir": "/var/lib/docker/overlay2/5ec60cedcc924e4e1308efa93cff63dcdf046209923df890790fffe89906f52a/diff",

"WorkDir": "/var/lib/docker/overlay2/5ec60cedcc924e4e1308efa93cff63dcdf046209923df890790fffe89906f52a/work"

}

},

"Mounts": [

{

"Type": "bind",

"Source": "/wgrData",

"Destination": "/containerData",

"Mode": "",

"RW": true,

"Propagation": "rprivate"

}

],

"Config": {

"Hostname": "a518695bb7bc",

"Domainname": "",

"User": "",

"AttachStdin": true,

"AttachStdout": true,

"AttachStderr": true,

"Tty": true,

"OpenStdin": true,

"StdinOnce": true,

"Env": [

"PATH=/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin"

],

"Cmd": [

"/bin/bash"

],

"Image": "67fa590cfc1c",

"Volumes": null,

"WorkingDir": "",

"Entrypoint": null,

"OnBuild": null,

"Labels": {

"org.label-schema.build-date": "",

"org.label-schema.license": "GPLv2",

"org.label-schema.name": "CentOS Base Image",

"org.label-schema.schema-version": "1.0",

"org.label-schema.vendor": "CentOS"

}

},

"NetworkSettings": {

"Bridge": "",

"SandboxID": "99fff9167aad470c7e05b16c4f0a7995a8b65ec62bbd8b547e526618f6ad426b",

"HairpinMode": false,

"LinkLocalIPv6Address": "",

"LinkLocalIPv6PrefixLen": 0,

"Ports": {},

"SandboxKey": "/var/run/docker/netns/99fff9167aad",

"SecondaryIPAddresses": null,

"SecondaryIPv6Addresses": null,

"EndpointID": "51a7cabaa6a8ec85f43faca98bb1f12ad8cdc7e7bc9c323aa689ec209b557405",

"Gateway": "172.17.0.1",

"GlobalIPv6Address": "",

"GlobalIPv6PrefixLen": 0,

"IPAddress": "172.17.0.5",

"IPPrefixLen": 16,

"IPv6Gateway": "",

"MacAddress": "02:42:ac:11:00:05",

"Networks": {

"bridge": {

"IPAMConfig": null,

"Links": null,

"Aliases": null,

"NetworkID": "fe000671b1b7f9a2e634f409bd33ada7bed50e818a28c1d9c8245aba67b1b625",

"EndpointID": "51a7cabaa6a8ec85f43faca98bb1f12ad8cdc7e7bc9c323aa689ec209b557405",

"Gateway": "172.17.0.1",

"IPAddress": "172.17.0.5",

"IPPrefixLen": 16,

"IPv6Gateway": "",

"GlobalIPv6Address": "",

"GlobalIPv6PrefixLen": 0,

"MacAddress": "02:42:ac:11:00:05"

}

}

}

}

]

[root@topcheer wgrData]#

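4.4 Testing

If the host modifies the data while the container is stopped, is the change still visible in the container afterwards?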

[root@topcheer wgrData]# docker stop a518695bb7bc

a518695bb7bc

[root@topcheer wgrData]# ll

总用量 0

-rw-r--r--. 1 root root 0 9月 22 21:50 wgr.txt

[root@topcheer wgrData]# vim wgr.txt

[root@topcheer wgrData]# docker ps -a

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

a518695bb7bc 67fa590cfc1c "/bin/bash" 13 minutes ago Exited (137) About a minute ago priceless_mccarthy

936835c7272b topcher/tomcat:1.0.1 "catalina.sh run" 24 minutes ago Up 24 minutes 0.0.0.0:8080->8080/tcp angry_northcutt

5910b3a257ff tomcat "catalina.sh run" 34 minutes ago Up 34 minutes 0.0.0.0:8888->8080/tcp brave_knuth

6c4bb3ce4c35 centos "/bin/sh -c 'while..." 3 hours ago Up 3 hours eloquent_shannon

[root@topcheer wgrData]# docker start a518695bb7bc

a518695bb7bc

[root@topcheer wgrData]# docker exec -it a518695bb7bc /bin/bash

[root@a518695bb7bc /]# ll

total 12

-rw-r--r--. 1 root root 12090 Aug 1 01:10 anaconda-post.log

lrwxrwxrwx. 1 root root 7 Aug 1 01:09 bin -> usr/bin

drwxr-xr-x. 2 root root 21 Sep 22 14:02 containerData

drwxr-xr-x. 5 root root 360 Sep 22 14:03 dev

drwxr-xr-x. 1 root root 66 Sep 22 13:50 etc

drwxr-xr-x. 2 root root 6 Apr 11 2018 home

lrwxrwxrwx. 1 root root 7 Aug 1 01:09 lib -> usr/lib

lrwxrwxrwx. 1 root root 9 Aug 1 01:09 lib64 -> usr/lib64

drwxr-xr-x. 2 root root 6 Apr 11 2018 media

drwxr-xr-x. 2 root root 6 Apr 11 2018 mnt

drwxr-xr-x. 2 root root 6 Apr 11 2018 opt

dr-xr-xr-x. 267 root root 0 Sep 22 14:03 proc

dr-xr-x---. 2 root root 114 Aug 1 01:10 root

drwxr-xr-x. 1 root root 21 Sep 22 13:50 run

lrwxrwxrwx. 1 root root 8 Aug 1 01:09 sbin -> usr/sbin

drwxr-xr-x. 2 root root 6 Apr 11 2018 srv

dr-xr-xr-x. 13 root root 0 Sep 2 01:15 sys

drwxrwxrwt. 7 root root 132 Aug 1 01:10 tmp

drwxr-xr-x. 13 root root 155 Aug 1 01:09 usr

drwxr-xr-x. 18 root root 238 Aug 1 01:09 var

[root@a518695bb7bc /]# cd containerData/

[root@a518695bb7bc containerData]# ll

total 4

-rw-r--r--. 1 root root 8 Sep 22 14:02 wgr.txt

[root@a518695bb7bc containerData]# cat wgr.txt

eqweqeq

[root@a518695bb7bc containerData]#

Adding permission

[root@topcheer wgrData]# docker run -it --privileged=true -v /wgrData1:/containerData1 67fa590cfc1c /bin/bash

[root@2de3c8ed278e /]# ll

total 12

-rw-r--r--. 1 root root 12090 Aug 1 01:10 anaconda-post.log

lrwxrwxrwx. 1 root root 7 Aug 1 01:09 bin -> usr/bin

drwxr-xr-x. 2 root root 6 Sep 22 14:19 containerData1

drwxr-xr-x. 15 root root 3120 Sep 22 14:19 dev

drwxr-xr-x. 1 root root 66 Sep 22 14:19 etc

drwxr-xr-x. 2 root root 6 Apr 11 2018 home

lrwxrwxrwx. 1 root root 7 Aug 1 01:09 lib -> usr/lib

lrwxrwxrwx. 1 root root 9 Aug 1 01:09 lib64 -> usr/lib64

drwxr-xr-x. 2 root root 6 Apr 11 2018 media

drwxr-xr-x. 2 root root 6 Apr 11 2018 mnt

drwxr-xr-x. 2 root root 6 Apr 11 2018 opt

dr-xr-xr-x. 272 root root 0 Sep 22 14:19 proc

dr-xr-x---. 2 root root 114 Aug 1 01:10 root

drwxr-xr-x. 1 root root 21 Sep 22 14:19 run

lrwxrwxrwx. 1 root root 8 Aug 1 01:09 sbin -> usr/sbin

drwxr-xr-x. 2 root root 6 Apr 11 2018 srv

dr-xr-xr-x. 13 root root 0 Sep 2 01:15 sys

drwxrwxrwt. 7 root root 132 Aug 1 01:10 tmp

drwxr-xr-x. 13 root root 155 Aug 1 01:09 usr

drwxr-xr-x. 18 root root 238 Aug 1 01:09 var

[root@2de3c8ed278e /]# cd containerData1/

[root@2de3c8ed278e containerData1]# touch wgr.txt

[root@2de3c8ed278e containerData1]#

Restricting permission (read-only mount)

[root@topcheer wgrData]# docker stop 936835c7272b

936835c7272b

[root@topcheer wgrData]# docker run -it -v /wgrData2:/containerData2:ro 67fa590cfc1c /bin/bash

[root@377e0b8a96a2 /]#

"Mounts": [

{

"Type": "bind",

"Source": "/wgrData2",

"Destination": "/containerData2",

"Mode": "ro",

"RW": false,

"Propagation": "rprivate"

}

],
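The -v forms used in sections 4.3 and 4.4 all follow the pattern host-path:container-path, with an optional :ro suffix for a read-only mount. As a minimal sketch, the helper below assembles that argument and only prints the resulting docker run commands, so it can be tried without a Docker daemon. The name volume_spec is hypothetical and not part of Docker; the paths and image ID are the ones from the transcripts above.

```shell
# volume_spec is a hypothetical helper, not a Docker command.
# It builds the value passed to `docker run -v` from its parts.
volume_spec() {
  host_dir=$1        # directory on the host, must be absolute
  container_dir=$2   # mount point inside the container, must be absolute
  mode=${3:-}        # optional, for example "ro" for a read-only mount

  case $host_dir in
    /*) ;;
    *) echo "host path must be absolute: $host_dir" >&2; return 1 ;;
  esac
  case $container_dir in
    /*) ;;
    *) echo "container path must be absolute: $container_dir" >&2; return 1 ;;
  esac

  if [ -n "$mode" ]; then
    printf '%s:%s:%s\n' "$host_dir" "$container_dir" "$mode"
  else
    printf '%s:%s\n' "$host_dir" "$container_dir"
  fi
}

# Dry run: print the commands instead of starting containers.
echo "docker run -it -v $(volume_spec /wgrData /containerData) 67fa590cfc1c /bin/bash"
echo "docker run -it -v $(volume_spec /wgrData2 /containerData2 ro) 67fa590cfc1c /bin/bash"
```

Rejecting relative host paths mirrors the rule stated in 4.3. Docker itself would treat a first part that does not start with / as a volume name rather than a host directory.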

4.5 Adding volumes with a Dockerfile

The VOLUME instruction in a Dockerfile adds one or more data volumes to an image.

[root@topcheer mydocker]# vim Dockerfile

[root@topcheer mydocker]# docker build -f Dockerfile -t wgr/centos .

Sending build context to Docker daemon 2.048 kB

Step 1/4 : FROM centos

---> 67fa590cfc1c

Step 2/4 : VOLUME /dataVolumeContainer1 /dataVolumeContainer2

---> Running in 1fece8932e92

---> 5c15da2cfe9a

Removing intermediate container 1fece8932e92

Step 3/4 : CMD echo "finished,--------success1"

---> Running in 708260afecce

---> 8039778cf467

Removing intermediate container 708260afecce

Step 4/4 : CMD /bin/bash

---> Running in 54e07ae3feb5

---> fb7e3d506043

Removing intermediate container 54e07ae3feb5

Successfully built fb7e3d506043

[root@topcheer mydocker]# cat Dockerfile

# volume test

FROM centos

VOLUME ["/dataVolumeContainer1","/dataVolumeContainer2"]

CMD echo "finished,--------success1"

CMD /bin/bash

[root@topcheer mydocker]#

[root@topcheer mydocker]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

wgr/centos latest fb7e3d506043 About a minute ago 202 MB

mytomcat9 latest 6c243064a028 20 hours ago 749 MB

myip 1.2 00a0a1f80e36 20 hours ago 271 MB

myip latest 420c99c3b707 20 hours ago 271 MB

mycentosfile 1.1 f022cd7b9017 20 hours ago 395 MB

topcher/tomcat 1.0.1 3d8737216a1e 23 hours ago 508 MB

docker.io/tomcat latest 8973f493aa0a 3 days ago 508 MB

docker.io/centos latest 67fa590cfc1c 4 weeks ago 202 MB

docker.io/hello-world latest fce289e99eb9 8 months ago 1.84 kB

[root@topcheer mydocker]# docker run -it wgr/centos /bin/bash

[root@a63d98e5a625 /]# ll

total 12

-rw-r--r--. 1 root root 12090 Aug 1 01:10 anaconda-post.log

lrwxrwxrwx. 1 root root 7 Aug 1 01:09 bin -> usr/bin

drwxr-xr-x. 2 root root 6 Sep 23 12:52 dataVolumeContainer1

drwxr-xr-x. 2 root root 6 Sep 23 12:52 dataVolumeContainer2

drwxr-xr-x. 5 root root 360 Sep 23 12:52 dev

drwxr-xr-x. 1 root root 66 Sep 23 12:52 etc

drwxr-xr-x. 2 root root 6 Apr 11 2018 home

lrwxrwxrwx. 1 root root 7 Aug 1 01:09 lib -> usr/lib

lrwxrwxrwx. 1 root root 9 Aug 1 01:09 lib64 -> usr/lib64

drwxr-xr-x. 2 root root 6 Apr 11 2018 media

drwxr-xr-x. 2 root root 6 Apr 11 2018 mnt

drwxr-xr-x. 2 root root 6 Apr 11 2018 opt

dr-xr-xr-x. 208 root root 0 Sep 23 12:52 proc

dr-xr-x---. 2 root root 114 Aug 1 01:10 root

drwxr-xr-x. 1 root root 21 Sep 23 12:52 run

lrwxrwxrwx. 1 root root 8 Aug 1 01:09 sbin -> usr/sbin

drwxr-xr-x. 2 root root 6 Apr 11 2018 srv

dr-xr-xr-x. 13 root root 0 Sep 23 12:25 sys

drwxrwxrwt. 7 root root 132 Aug 1 01:10 tmp

drwxr-xr-x. 13 root root 155 Aug 1 01:09 usr

drwxr-xr-x. 18 root root 238 Aug 1 01:09 var

[root@a63d98e5a625 /]# cd dataVolumeContainer

bash: cd: dataVolumeContainer: No such file or directory

[root@a63d98e5a625 /]# cd dataVolumeContainer1

[root@a63d98e5a625 dataVolumeContainer1]# ll

total 0

[root@a63d98e5a625 dataVolumeContainer1]# touch 1.txt

[root@a63d98e5a625 dataVolumeContainer1]#

[root@topcheer mydocker]#

[root@topcheer mydocker]# docker inspect a63d98e5a625

[

{

"Id": "a63d98e5a6256f77f457ae99346d6e6e2dc538c747a0ac5ed8632337694dd72b",

"Created": "2019-09-23T12:52:45.588897445Z",

"Path": "/bin/bash",

"Args": [],

"State": {

"Status": "running",

"Running": true,

"Paused": false,

"Restarting": false,

"OOMKilled": false,

"Dead": false,

"Pid": 18139,

"ExitCode": 0,

"Error": "",

"StartedAt": "2019-09-23T12:52:49.795395625Z",

"FinishedAt": "0001-01-01T00:00:00Z"

},

"Image": "sha256:fb7e3d506043d6ee7ca70b2dd2c18eb053d2a9fcc11b812c536f852a53d8c6cf",

"ResolvConfPath": "/var/lib/docker/containers/a63d98e5a6256f77f457ae99346d6e6e2dc538c747a0ac5ed8632337694dd72b/resolv.conf",

"HostnamePath": "/var/lib/docker/containers/a63d98e5a6256f77f457ae99346d6e6e2dc538c747a0ac5ed8632337694dd72b/hostname",

"HostsPath": "/var/lib/docker/containers/a63d98e5a6256f77f457ae99346d6e6e2dc538c747a0ac5ed8632337694dd72b/hosts",

"LogPath": "",

"Name": "/stoic_lamport",

"RestartCount": 0,

"Driver": "overlay2",

"MountLabel": "system_u:object_r:svirt_sandbox_file_t:s0:c816,c976",

"ProcessLabel": "system_u:system_r:svirt_lxc_net_t:s0:c816,c976",

"AppArmorProfile": "",

"ExecIDs": null,

"HostConfig": {

"Binds": null,

"ContainerIDFile": "",

"LogConfig": {

"Type": "journald",

"Config": {}

},

"NetworkMode": "default",

"PortBindings": {},

"RestartPolicy": {

"Name": "no",

"MaximumRetryCount": 0

},

"AutoRemove": false,

"VolumeDriver": "",

"VolumesFrom": null,

"CapAdd": null,

"CapDrop": null,

"Dns": [],

"DnsOptions": [],

"DnsSearch": [],

"ExtraHosts": null,

"GroupAdd": null,

"IpcMode": "",

"Cgroup": "",

"Links": null,

"OomScoreAdj": 0,

"PidMode": "",

"Privileged": false,

"PublishAllPorts": false,

"ReadonlyRootfs": false,

"SecurityOpt": null,

"UTSMode": "",

"UsernsMode": "",

"ShmSize": 67108864,

"Runtime": "docker-runc",

"ConsoleSize": [

0,

0

],

"Isolation": "",

"CpuShares": 0,

"Memory": 0,

"NanoCpus": 0,

"CgroupParent": "",

"BlkioWeight": 0,

"BlkioWeightDevice": null,

"BlkioDeviceReadBps": null,

"BlkioDeviceWriteBps": null,

"BlkioDeviceReadIOps": null,

"BlkioDeviceWriteIOps": null,

"CpuPeriod": 0,

"CpuQuota": 0,

"CpuRealtimePeriod": 0,

"CpuRealtimeRuntime": 0,

"CpusetCpus": "",

"CpusetMems": "",

"Devices": [],

"DiskQuota": 0,

"KernelMemory": 0,

"MemoryReservation": 0,

"MemorySwap": 0,

"MemorySwappiness": -1,

"OomKillDisable": false,

"PidsLimit": 0,

"Ulimits": null,

"CpuCount": 0,

"CpuPercent": 0,

"IOMaximumIOps": 0,

"IOMaximumBandwidth": 0

},

"GraphDriver": {

"Name": "overlay2",

"Data": {

"LowerDir": "/var/lib/docker/overlay2/fc0dec9c7dd31f34f9d63168c5555aa9bdc85eaef29c562b65895bf26b068aa7-init/diff:/var/lib/docker/overlay2/7bc85336eb8ca768f43d8eb3d5f27bdf35fa99068be45c84622d18c0f87c90bd/diff",

"MergedDir": "/var/lib/docker/overlay2/fc0dec9c7dd31f34f9d63168c5555aa9bdc85eaef29c562b65895bf26b068aa7/merged",

"UpperDir": "/var/lib/docker/overlay2/fc0dec9c7dd31f34f9d63168c5555aa9bdc85eaef29c562b65895bf26b068aa7/diff",

"WorkDir": "/var/lib/docker/overlay2/fc0dec9c7dd31f34f9d63168c5555aa9bdc85eaef29c562b65895bf26b068aa7/work"

}

},

"Mounts": [

{

"Type": "volume",

"Name": "3cef2f791e18ba2f31798ef27ab1f066f012d5b4e2447e0d4cf2d15bb76af352",

"Source": "/var/lib/docker/volumes/3cef2f791e18ba2f31798ef27ab1f066f012d5b4e2447e0d4cf2d15bb76af352/_data",

"Destination": "/dataVolumeContainer2",

"Driver": "local",

"Mode": "",

"RW": true,

"Propagation": ""

},

{

"Type": "volume",

"Name": "fa71d12b3a7f55457b3f2f57ca72b0620ea234fd03fba760534480758183944d",

"Source": "/var/lib/docker/volumes/fa71d12b3a7f55457b3f2f57ca72b0620ea234fd03fba760534480758183944d/_data",

"Destination": "/dataVolumeContainer1",

"Driver": "local",

"Mode": "",

"RW": true,

"Propagation": ""

}

],

"Config": {

"Hostname": "a63d98e5a625",

"Domainname": "",

"User": "",

"AttachStdin": true,

"AttachStdout": true,

"AttachStderr": true,

"Tty": true,

"OpenStdin": true,

"StdinOnce": true,

"Env": [

"PATH=/usr/local/sbin:/usr/local/bin:/usr/sbin:/usr/bin:/sbin:/bin"

],

"Cmd": [

"/bin/bash"

],

"Image": "wgr/centos",

"Volumes": {

"/dataVolumeContainer1": {},

"/dataVolumeContainer2": {}

},

"WorkingDir": "",

"Entrypoint": null,

"OnBuild": null,

"Labels": {

"org.label-schema.build-date": "",

"org.label-schema.license": "GPLv2",

"org.label-schema.name": "CentOS Base Image",

"org.label-schema.schema-version": "1.0",

"org.label-schema.vendor": "CentOS"

}

},

"NetworkSettings": {

"Bridge": "",

"SandboxID": "4bd5f69d0dffd043bb7948d327839f0ab92780a9e4aa74cc62e4555a47c35902",

"HairpinMode": false,

"LinkLocalIPv6Address": "",

"LinkLocalIPv6PrefixLen": 0,

"Ports": {},

"SandboxKey": "/var/run/docker/netns/4bd5f69d0dff",

"SecondaryIPAddresses": null,

"SecondaryIPv6Addresses": null,

"EndpointID": "69971af973442c794869f43d21a152b8530d648da8b1967e419fde7db0efac13",

"Gateway": "172.17.0.1",

"GlobalIPv6Address": "",

"GlobalIPv6PrefixLen": 0,

"IPAddress": "172.17.0.3",

"IPPrefixLen": 16,

"IPv6Gateway": "",

"MacAddress": "02:42:ac:11:00:03",

"Networks": {

"bridge": {

"IPAMConfig": null,

"Links": null,

"Aliases": null,

"NetworkID": "c7d7aaeb71644a84fdda020955a64ae3a2905c8369a08536c24c956bdba11b58",

"EndpointID": "69971af973442c794869f43d21a152b8530d648da8b1967e419fde7db0efac13",

"Gateway": "172.17.0.1",

"IPAddress": "172.17.0.3",

"IPPrefixLen": 16,

"IPv6Gateway": "",

"GlobalIPv6Address": "",

"GlobalIPv6PrefixLen": 0,

"MacAddress": "02:42:ac:11:00:03"

}

}

}

}

]

[root@topcheer mydocker]# cd /var/lib/docker/volumes/fa71d12b3a7f55457b3f2f57ca72b0620ea234fd03fba760534480758183944d/_data

[root@topcheer _data]# ll

总用量 0

-rw-r--r--. 1 root root 0 9月 23 20:53 1.txt

[root@topcheer _data]#

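If accessing a mounted host directory from inside the container fails with "cannot open directory .: Permission denied", adding the --privileged=true parameter to the docker run command that mounts the directory resolves it. On an SELinux-enabled host such as this one, appending :z or :Z to the -v mount is a narrower alternative, since --privileged grants the container far more than file access.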

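4.6 Data volume containers

4.6.1 Concept

A named container mounts data volumes, and other containers share the data by mounting from this (parent) container. The container that mounts the data volumes is called a data volume container.

4.6.2 Experiment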

[root@topcheer _data]# docker run -it --name dc02 --volumes-from stoic_lamport wgr/centos

[root@d8e6cc3bad6f /]# ll

total 12

-rw-r--r--. 1 root root 12090 Aug 1 01:10 anaconda-post.log

lrwxrwxrwx. 1 root root 7 Aug 1 01:09 bin -> usr/bin

drwxr-xr-x. 2 root root 19 Sep 23 12:53 dataVolumeContainer1

drwxr-xr-x. 2 root root 6 Sep 23 12:52 dataVolumeContainer2

drwxr-xr-x. 5 root root 360 Sep 23 13:05 dev

drwxr-xr-x. 1 root root 66 Sep 23 13:05 etc

drwxr-xr-x. 2 root root 6 Apr 11 2018 home

lrwxrwxrwx. 1 root root 7 Aug 1 01:09 lib -> usr/lib

lrwxrwxrwx. 1 root root 9 Aug 1 01:09 lib64 -> usr/lib64

drwxr-xr-x. 2 root root 6 Apr 11 2018 media

drwxr-xr-x. 2 root root 6 Apr 11 2018 mnt

drwxr-xr-x. 2 root root 6 Apr 11 2018 opt

dr-xr-xr-x. 220 root root 0 Sep 23 13:05 proc

dr-xr-x---. 2 root root 114 Aug 1 01:10 root

drwxr-xr-x. 1 root root 21 Sep 23 13:05 run

lrwxrwxrwx. 1 root root 8 Aug 1 01:09 sbin -> usr/sbin

drwxr-xr-x. 2 root root 6 Apr 11 2018 srv

dr-xr-xr-x. 13 root root 0 Sep 23 12:25 sys

drwxrwxrwt. 7 root root 132 Aug 1 01:10 tmp

drwxr-xr-x. 13 root root 155 Aug 1 01:09 usr

drwxr-xr-x. 18 root root 238 Aug 1 01:09 var

[root@d8e6cc3bad6f /]# cd dataVolumeContainer1

[root@d8e6cc3bad6f dataVolumeContainer1]# ll

total 0

-rw-r--r--. 1 root root 0 Sep 23 12:53 1.txt

[root@d8e6cc3bad6f dataVolumeContainer1]#

[root@a63d98e5a625 /]# cd dataVolumeContainer2

[root@a63d98e5a625 dataVolumeContainer2]# ll

total 0

-rw-r--r--. 1 root root 0 Sep 23 13:06 2.txt

[root@a63d98e5a625 dataVolumeContainer2]#

[root@topcheer ~]# docker run -it --name dc03 --volumes-from stoic_lamport wgr/centos

[root@24ee76550315 /]# cd /dataVolumeContainer2

[root@24ee76550315 dataVolumeContainer2]# ll

total 0

-rw-r--r--. 1 root root 0 Sep 23 13:06 2.txt

[root@24ee76550315 dataVolumeContainer2]#

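Conclusion: configuration and data can be passed between containers, and a data volume stays available for as long as any container uses it.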

5 Dockerfile详解

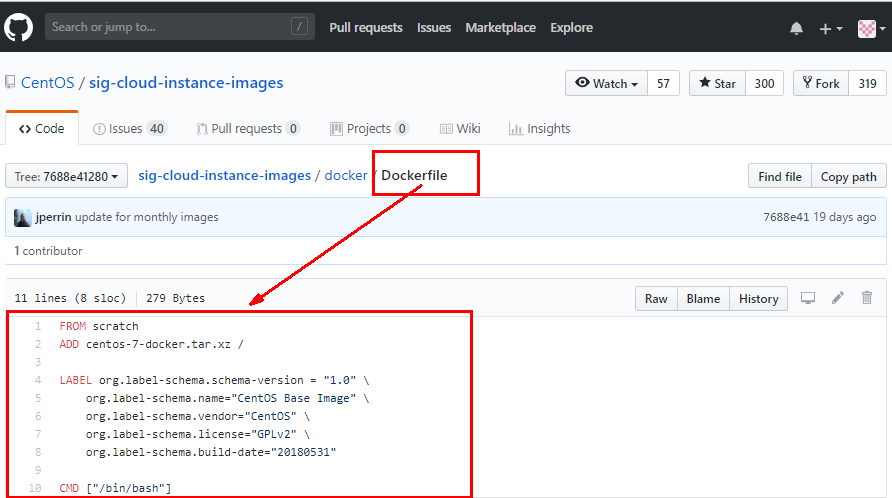

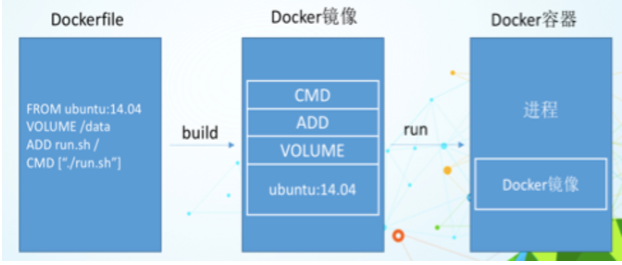

Dockerfile是用来构建Docker镜像的构建文件,是由一系列命令和参数构成的脚本。

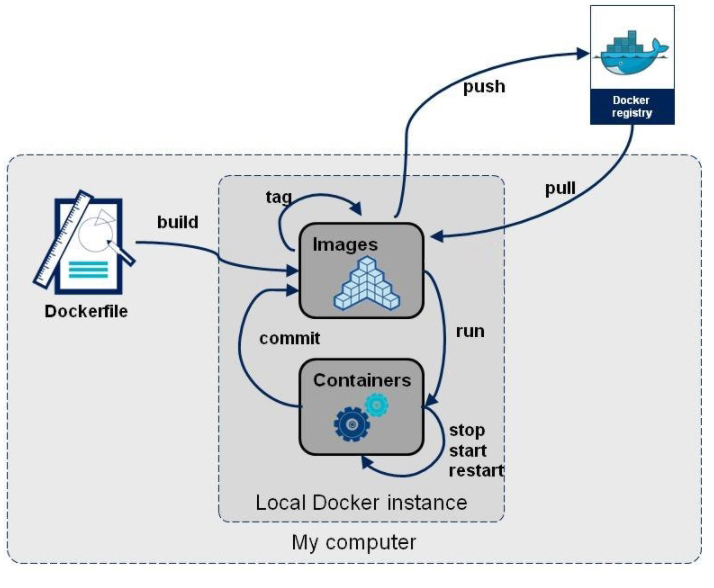

编写Dockerfile文件 --- docker build --- docker run

如图,centos为例

5.1 DockerFile构建过程解析

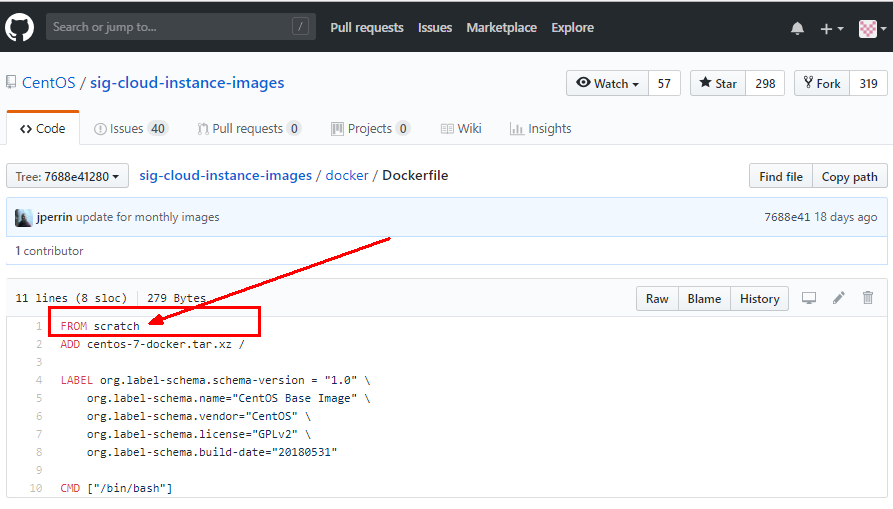

Dockerfile内容基础知识

1:每条保留字指令都必须为大写字母且后面要跟随至少一个参数

2:指令按照从上到下,顺序执行

3:#表示注释

4:每条指令都会创建一个新的镜像层,并对镜像进行提交

Docker执行Dockerfile的大致流程

(1)docker从基础镜像运行一个容器

(2)执行一条指令并对容器作出修改

(3)执行类似docker commit的操作提交一个新的镜像层

(4)docker再基于刚提交的镜像运行一个新容器

(5)执行dockerfile中的下一条指令直到所有指令都执行完成
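The top-to-bottom order in the steps above is what produces the Step i/N lines in docker build output. The sketch below numbers the instructions of a Dockerfile in the same way, without contacting Docker. list_steps is a hypothetical helper, and it assumes one instruction per line with no backslash continuations; the sample Dockerfile is the volume test from section 4.5.

```shell
# list_steps is a hypothetical helper: it numbers the instructions in a
# Dockerfile the way `docker build` numbers its "Step i/N" lines.
# Assumption: one instruction per line, no backslash line continuations.
list_steps() {
  dockerfile=$1
  # Drop blank lines and comment lines; what is left are instructions.
  total=$(grep -Ev '^[[:space:]]*(#|$)' "$dockerfile" | wc -l | tr -d ' ')
  step=0
  grep -Ev '^[[:space:]]*(#|$)' "$dockerfile" | while IFS= read -r line; do
    step=$((step + 1))
    printf 'Step %s/%s : %s\n' "$step" "$total" "$line"
  done
}

# Example input: the volume-test Dockerfile from section 4.5.
demo_dir=$(mktemp -d)
cat > "$demo_dir/Dockerfile" <<'EOF'
# volume test
FROM centos
VOLUME ["/dataVolumeContainer1","/dataVolumeContainer2"]
CMD echo "finished,--------success1"
CMD /bin/bash
EOF

list_steps "$demo_dir/Dockerfile"
```

For that file it prints four steps, from Step 1/4 : FROM centos to Step 4/4 : CMD /bin/bash, which matches the numbering in the build log of section 4.5. The comment line is skipped and does not count as a step.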

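Summary

From the perspective of application software, the Dockerfile, the Docker image, and the Docker container represent three different stages of the software:

The Dockerfile is the raw material of the software

The Docker image is the deliverable of the software

The Docker container can be regarded as the software in its running state.

The Dockerfile is aimed at development, the Docker image becomes the delivery standard, and the Docker container concerns deployment and operations. All three are indispensable, and together they form the foundation of the Docker system.

1 Dockerfile: you first define a Dockerfile, which specifies everything the process needs. That includes the code or files to execute, environment variables, dependency packages, the runtime environment, dynamic link libraries, the operating system distribution, service processes, and kernel processes (when the application process has to interact with system services and kernel processes, you need to consider how to design namespace permission control), and so on;

2 Docker image: once the Dockerfile is defined, docker build produces a Docker image, and the service actually starts when that image is run;

3 Docker container: the container is what directly provides the service.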

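5.2 Dockerfile instructions

| Instruction | Description |
|---|---|
| FROM | Base image: the image that the new image is built on |
| MAINTAINER | Name and email address of the image maintainer (deprecated in newer Docker versions in favor of LABEL) |
| RUN | Command to run while the image is being built |
| EXPOSE | Port that the container exposes to the outside |
| WORKDIR | Working directory the terminal lands in by default after the container is created |
| ENV | Sets environment variables during the image build |
| ADD | Copies files from the host directory into the image; ADD also handles URLs and extracts tar archives automatically |
| COPY | Similar to ADD: copies files and directories into the image. Files/directories at <source path> in the build context directory are copied to <destination path> in a new layer of the image |
| VOLUME | Container data volume, used to store and persist data |
| CMD | A Dockerfile can contain multiple CMD instructions, but only the last one takes effect; CMD is replaced by the arguments given after the image name in docker run |
| ENTRYPOINT | Like CMD, ENTRYPOINT specifies the program and arguments the container starts with |
| ONBUILD | Runs a command when a Dockerfile that inherits from this image is built; the parent image's ONBUILD is triggered once a child image inherits from it |

Note: the vast majority of images on Docker Hub are built by installing and configuring the required software on top of a base image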

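5.3 Worked example: a custom mycentos image

The goal of the custom mycentos image is to give our own image the following: a default path after login, the vim editor, and ifconfig support for viewing the network configuration.

Write the Dockerfile

FROM centos

MAINTAINER wgr<wang.gr@topcheer.com>

ENV MYPATH /usr/local

WORKDIR $MYPATH

RUN yum -y install vim

RUN yum -y install net-tools

EXPOSE 80

CMD echo $MYPATH

CMD echo "success--------------ok"

CMD /bin/bash

Start the build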

[root@topcheer myfile]# docker build -t mycentosfile:1.1 .

Sending build context to Docker daemon 2.048 kB

Step 1/10 : FROM centos

---> 67fa590cfc1c

Step 2/10 : MAINTAINER wgr<wang.gr@topcheer.com>

---> Running in 1f88baf9b360

---> 871c31a91729

Removing intermediate container 1f88baf9b360

Step 3/10 : ENV MYPATH /usr/local

---> Running in b069dd98cebf

---> 084266f310f4

Removing intermediate container b069dd98cebf

Step 4/10 : WORKDIR $MYPATH

---> 4d957d2ce926

Removing intermediate container fe5768a9a5b5

Step 5/10 : RUN yum -y install vim

---> Running in fd8a0b061957

Loaded plugins: fastestmirror, ovl

Determining fastest mirrors

* base: mirror.jdcloud.com

* extras: centos.ustc.edu.cn

* updates: centos.ustc.edu.cn

Resolving Dependencies

--> Running transaction check

---> Package vim-enhanced.x86_64 2:7.4.629-6.el7 will be installed

--> Processing Dependency: vim-common = 2:7.4.629-6.el7 for package: 2:vim-enhanced-7.4.629-6.el7.x86_64

--> Processing Dependency: which for package: 2:vim-enhanced-7.4.629-6.el7.x86_64

--> Processing Dependency: perl(:MODULE_COMPAT_5.16.3) for package: 2:vim-enhanced-7.4.629-6.el7.x86_64

--> Processing Dependency: libperl.so()(64bit) for package: 2:vim-enhanced-7.4.629-6.el7.x86_64

--> Processing Dependency: libgpm.so.2()(64bit) for package: 2:vim-enhanced-7.4.629-6.el7.x86_64

--> Running transaction check

---> Package gpm-libs.x86_64 0:1.20.7-6.el7 will be installed

.....................

Complete!

---> 67a4329fa503

Removing intermediate container e92c8b523c7c

Step 7/10 : EXPOSE 80

---> Running in bf6935680423

---> e47d782ab0f5

Removing intermediate container bf6935680423

Step 8/10 : CMD echo $MYPATH

---> Running in e0c51d8c13ba

---> 850284459ab5

Removing intermediate container e0c51d8c13ba

Step 9/10 : CMD echo "success--------------ok"

---> Running in 339022b46c36

---> 7117b7f8d635

Removing intermediate container 339022b46c36

Step 10/10 : CMD /bin/bash

---> Running in ad662d3129a4

---> f022cd7b9017

Removing intermediate container ad662d3129a4

Successfully built f022cd7b9017

[root@topcheer myfile]#

Run

[root@topcheer myfile]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

mycentosfile 1.1 f022cd7b9017 27 seconds ago 395 MB

topcher/tomcat 1.0.1 3d8737216a1e 2 hours ago 508 MB

docker.io/tomcat latest 8973f493aa0a 2 days ago 508 MB

docker.io/centos latest 67fa590cfc1c 4 weeks ago 202 MB

docker.io/hello-world latest fce289e99eb9 8 months ago 1.84 kB

[root@topcheer myfile]# docker run -it mycentosfile:1.1

[root@48e1ce50cb3f local]# ll

total 0

drwxr-xr-x. 2 root root 6 Apr 11 2018 bin

drwxr-xr-x. 2 root root 6 Apr 11 2018 etc

drwxr-xr-x. 2 root root 6 Apr 11 2018 games

drwxr-xr-x. 2 root root 6 Apr 11 2018 include

drwxr-xr-x. 2 root root 6 Apr 11 2018 lib

drwxr-xr-x. 2 root root 6 Apr 11 2018 lib64

drwxr-xr-x. 2 root root 6 Apr 11 2018 libexec

drwxr-xr-x. 2 root root 6 Apr 11 2018 sbin

drwxr-xr-x. 5 root root 49 Aug 1 01:09 share

drwxr-xr-x. 2 root root 6 Apr 11 2018 src

[root@48e1ce50cb3f local]# pwd

/usr/local

[root@48e1ce50cb3f local]# vim 1.txt

[root@48e1ce50cb3f local]# ifconfig

eth0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 172.17.0.6 netmask 255.255.0.0 broadcast 0.0.0.0

inet6 fe80::42:acff:fe11:6 prefixlen 64 scopeid 0x20<link>

ether 02:42:ac:11:00:06 txqueuelen 0 (Ethernet)

RX packets 8 bytes 656 (656.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 8 bytes 656 (656.0 B)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

lo: flags=73<UP,LOOPBACK,RUNNING> mtu 65536

inet 127.0.0.1 netmask 255.0.0.0

inet6 ::1 prefixlen 128 scopeid 0x10<host>

loop txqueuelen 1000 (Local Loopback)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

[root@48e1ce50cb3f local]#

[root@topcheer myfile]#

[root@topcheer myfile]# docker history f022cd7b9017

IMAGE CREATED CREATED BY SIZE COMMENT

f022cd7b9017 2 minutes ago /bin/sh -c #(nop) CMD ["/bin/sh" "-c" "/b... 0 B

7117b7f8d635 2 minutes ago /bin/sh -c #(nop) CMD ["/bin/sh" "-c" "ec... 0 B

850284459ab5 2 minutes ago /bin/sh -c #(nop) CMD ["/bin/sh" "-c" "ec... 0 B

e47d782ab0f5 2 minutes ago /bin/sh -c #(nop) EXPOSE 80/tcp 0 B

67a4329fa503 2 minutes ago /bin/sh -c yum -y install net-tools 69 MB

4b7b749294d0 2 minutes ago /bin/sh -c yum -y install vim 124 MB

4d957d2ce926 3 minutes ago /bin/sh -c #(nop) WORKDIR /usr/local 0 B

084266f310f4 3 minutes ago /bin/sh -c #(nop) ENV MYPATH=/usr/local 0 B

871c31a91729 3 minutes ago /bin/sh -c #(nop) MAINTAINER wgr<wang.gr@... 0 B

67fa590cfc1c 4 weeks ago /bin/sh -c #(nop) CMD ["/bin/bash"] 0 B

<missing> 4 weeks ago /bin/sh -c #(nop) LABEL org.label-schema.... 0 B

<missing> 4 weeks ago /bin/sh -c #(nop) ADD file:4e7247c06de9ad1... 202 MB

[root@topcheer myfile]#
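The mycentos Dockerfile contains three CMD lines, and docker history lists a layer for each, yet the container started /bin/bash. As a small illustration of the rule that only the last CMD takes effect, the sketch below picks the last CMD line out of a Dockerfile. last_cmd is a hypothetical helper and assumes every CMD sits on a single line.

```shell
# last_cmd is a hypothetical helper: print the CMD instruction that wins,
# that is, the last CMD line in the given Dockerfile.
last_cmd() {
  grep -E '^[[:space:]]*CMD[[:space:]]' "$1" | tail -n 1
}

# Example input: the CMD lines of the mycentos Dockerfile from this section.
demo_dir=$(mktemp -d)
cat > "$demo_dir/Dockerfile" <<'EOF'
FROM centos
CMD echo $MYPATH
CMD echo "success--------------ok"
CMD /bin/bash
EOF

last_cmd "$demo_dir/Dockerfile"
```

Run against these lines it prints CMD /bin/bash, the command the container above actually started with.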

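5.4 CMD/ENTRYPOINT in detail

Both specify the command to run when a container starts

CMD

A Dockerfile can contain multiple CMD instructions, but only the last one takes effect; CMD is replaced by the arguments given after the image name in docker run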

[root@topcheer myfile]# docker run -it 3d8737216a1e ls -l

total 124

-rw-r--r--. 1 root root 19318 Sep 16 18:19 BUILDING.txt

-rw-r--r--. 1 root root 5407 Sep 16 18:19 CONTRIBUTING.md

-rw-r--r--. 1 root root 57011 Sep 16 18:19 LICENSE

-rw-r--r--. 1 root root 1726 Sep 16 18:19 NOTICE

-rw-r--r--. 1 root root 3255 Sep 16 18:19 README.md

-rw-r--r--. 1 root root 7139 Sep 16 18:19 RELEASE-NOTES

-rw-r--r--. 1 root root 16262 Sep 16 18:19 RUNNING.txt

drwxr-xr-x. 2 root root 4096 Sep 20 01:40 bin

drwxr-sr-x. 1 root root 22 Sep 22 13:28 conf

drwxr-sr-x. 2 root staff 78 Sep 20 01:40 include

drwxr-xr-x. 2 root root 4096 Sep 20 01:40 lib

drwxrwxrwx. 1 root root 177 Sep 22 13:28 logs

drwxr-sr-x. 3 root staff 151 Sep 20 01:40 native-jni-lib

drwxrwxrwx. 2 root root 30 Sep 20 01:40 temp

drwxr-xr-x. 1 root root 18 Sep 22 13:33 webapps

drwxrwxrwx. 1 root root 22 Sep 22 13:28 work

[root@topcheer myfile]#

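Note: the default command of the tomcat image is CMD ["catalina.sh", "run"] (shown as "catalina.sh run" in the docker ps output earlier); the ls -l typed after the image ID replaces it.

ENTRYPOINT

Arguments given after the image name in docker run are passed to ENTRYPOINT as arguments, forming a new combined command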

[root@topcheer myfile]# docker build -f dockerfile1 -t myip .

Sending build context to Docker daemon 2.048 kB

Step 1/3 : FROM centos

---> 67fa590cfc1c

Step 2/3 : RUN yum install -y curl

---> Running in 24d685efc352

Loaded plugins: fastestmirror, ovl

Determining fastest mirrors

* base: mirrors.aliyun.com

* extras: mirrors.huaweicloud.com

* updates: mirrors.huaweicloud.com

Resolving Dependencies

--> Running transaction check

---> Package curl.x86_64 0:7.29.0-51.el7_6.3 will be updated

---> Package curl.x86_64 0:7.29.0-54.el7 will be an update

--> Processing Dependency: libcurl = 7.29.0-54.el7 for package: curl-7.29.0-54.el7.x86_64

--> Running transaction check

---> Package libcurl.x86_64 0:7.29.0-51.el7_6.3 will be updated

---> Package libcurl.x86_64 0:7.29.0-54.el7 will be an update

--> Finished Dependency Resolution

Dependencies Resolved

================================================================================

Package Arch Version Repository Size

================================================================================

Updating:

curl x86_64 7.29.0-54.el7 base 270 k

Updating for dependencies:

libcurl x86_64 7.29.0-54.el7 base 222 k

Transaction Summary

================================================================================

Upgrade 1 Package (+1 Dependent package)

Total download size: 493 k

Downloading packages:

Delta RPMs disabled because /usr/bin/applydeltarpm not installed.

warning: /var/cache/yum/x86_64/7/base/packages/libcurl-7.29.0-54.el7.x86_64.rpm: Header V3 RSA/SHA256 Signature, key ID f4a80eb5: NOKEY

Public key for libcurl-7.29.0-54.el7.x86_64.rpm is not installed

--------------------------------------------------------------------------------

Total 988 kB/s | 493 kB 00:00

Retrieving key from file:///etc/pki/rpm-gpg/RPM-GPG-KEY-CentOS-7

Importing GPG key 0xF4A80EB5:

Userid : "CentOS-7 Key (CentOS 7 Official Signing Key) <security@centos.org>"

Fingerprint: 6341 ab27 53d7 8a78 a7c2 7bb1 24c6 a8a7 f4a8 0eb5

Package : centos-release-7-6.1810.2.el7.centos.x86_64 (@CentOS)

From : /etc/pki/rpm-gpg/RPM-GPG-KEY-CentOS-7

Running transaction check

Running transaction test

Transaction test succeeded

Running transaction

Updating : libcurl-7.29.0-54.el7.x86_64 1/4

Updating : curl-7.29.0-54.el7.x86_64 2/4

Cleanup : curl-7.29.0-51.el7_6.3.x86_64 3/4

Cleanup : libcurl-7.29.0-51.el7_6.3.x86_64 4/4

Verifying : libcurl-7.29.0-54.el7.x86_64 1/4

Verifying : curl-7.29.0-54.el7.x86_64 2/4

Verifying : curl-7.29.0-51.el7_6.3.x86_64 3/4

Verifying : libcurl-7.29.0-51.el7_6.3.x86_64 4/4

Updated:

curl.x86_64 0:7.29.0-54.el7

Dependency Updated:

libcurl.x86_64 0:7.29.0-54.el7

Complete!

---> ed86a4b09c55

Removing intermediate container 24d685efc352

Step 3/3 : CMD curl -s http://ip.cn

---> Running in c98ca5fa9fed

---> 420c99c3b707

Removing intermediate container c98ca5fa9fed

Successfully built 420c99c3b707

[root@topcheer myfile]#

[root@topcheer myfile]# cat dockerfile1

FROM centos

RUN yum install -y curl

CMD [ "curl", "-s", "http://ip.cn" ]

Adding the -i argument

[root@topcheer myfile]# docker run 420c99c3b707 -i

container_linux.go:235: starting container process caused "exec: \"-i\": executable file not found in $PATH"

/usr/bin/docker-current: Error response from daemon: oci runtime error: container_linux.go:235: starting container process caused "exec: \"-i\": executable file not found in $PATH".

[root@topcheer myfile]#

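The error says the executable file cannot be found: executable file not found. As mentioned earlier, whatever follows the image name is the command, and at run time it replaces the default value of CMD. So the -i here replaced the original CMD instead of being appended to curl -s http://ip.cn. And -i is not a command at all, so of course it cannot be found.

So if we want to add the -i argument, we have to type the complete command again: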

$ docker run myip curl -s http://ip.cn -i

[root@topcheer myfile]# docker build -f dockerfile2 -t myip:1.2 .

Sending build context to Docker daemon 3.072 kB

Step 1/3 : FROM centos

---> 67fa590cfc1c

Step 2/3 : RUN yum install -y curl

---> Using cache

---> ed86a4b09c55

Step 3/3 : ENTRYPOINT curl -s http://ip.cn

---> Running in 695e59ae2f9f

---> 00a0a1f80e36

Removing intermediate container 695e59ae2f9f

Successfully built 00a0a1f80e36

[root@topcheer myfile]#

[root@topcheer myfile]# cat dockerfile2

FROM centos

RUN yum install -y curl

ENTRYPOINT [ "curl", "-s", "http://ip.cn" ]

[root@topcheer myfile]#

[root@topcheer myfile]# docker run 00a0a1f80e36 -i

HTTP/1.1 301 Moved Permanently

Date: Sun, 22 Sep 2019 16:21:12 GMT

Transfer-Encoding: chunked

Connection: keep-alive

Cache-Control: max-age=3600

Expires: Sun, 22 Sep 2019 17:21:12 GMT

Location: https://ip.cn/

Server: cloudflare

CF-RAY: 51a59c51fca7d356-LAX

[root@topcheer myfile]#
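The two runs above differ only in which instruction holds the curl command. A rough way to picture what Docker does with the arguments typed after the image name is the shell function below. It is a simplified model of the exec (JSON array) form only, and `resolve_command` is a name made up for this sketch, not a Docker command.

```shell
# Simplified model of how `docker run IMAGE [ARG...]` chooses the process to
# start when ENTRYPOINT and CMD are written in exec (JSON array) form.
#   $1 = ENTRYPOINT as a space-separated string ("" if the image has none)
#   $2 = default CMD as a space-separated string ("" if the image has none)
#   remaining arguments = whatever was typed after the image name
resolve_command() {
  entrypoint="$1"
  default_cmd="$2"
  shift 2
  if [ "$#" -gt 0 ]; then
    tail_args="$*"            # run-time arguments replace the default CMD
  else
    tail_args="$default_cmd"  # nothing typed: fall back to CMD
  fi
  if [ -n "$entrypoint" ]; then
    # ENTRYPOINT is kept and the rest is appended to it
    printf '%s\n' "$entrypoint${tail_args:+ $tail_args}"
  else
    printf '%s\n' "$tail_args"
  fi
}

resolve_command "" "curl -s http://ip.cn"      # dockerfile1, no extra arguments
resolve_command "" "curl -s http://ip.cn" -i   # dockerfile1 with -i
resolve_command "curl -s http://ip.cn" "" -i   # dockerfile2 with -i
```

The second call prints only -i, which is the "executable" Docker failed to find. The third prints curl -s http://ip.cn -i, which matches the response headers returned by the ENTRYPOINT image.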

5.5 Custom image: Tomcat9

[root@topcheer myfile]# mkdir -p /zzyyuse/mydockerfile/tomcat9

[root@topcheer myfile]# cd /zzyyuse/mydockerfile/tomcat9/

[root@topcheer tomcat9]# mv touch touch.txt

[root@topcheer tomcat9]# ll

total 202568

-rw-r--r--. 1 root root 12326996 Sep 23 00:29 apache-tomcat-9.0.26.tar.gz

-rw-r--r--. 1 root root 195094741 Sep 23 00:44 jdk-8u221-linux-x64.tar.gz

-rw-r--r--. 1 root root 8 Sep 23 00:26 touch.txt

[root@topcheer tomcat9]# vim dockerfile

[root@topcheer tomcat9]# docker build -f dockerfile -t mytomcat9 .

Sending build context to Docker daemon 207.4 MB

Step 1/15 : FROM centos

---> 67fa590cfc1c

Step 2/15 : MAINTAINER wgr<wang.gr@Topcheer.com>

---> Running in 1d226a95e4bd

---> 1757ce5df080

Removing intermediate container 1d226a95e4bd

Step 3/15 : COPY touch.txt /usr/local/cincontainer.txt

---> 47027886f2b6

Removing intermediate container 7f9c861f6ebf

Step 4/15 : ADD jdk-8u221-linux-x64.tar.gz /usr/local/

---> af6a09494e41

Removing intermediate container 1ce823526620

Step 5/15 : ADD apache-tomcat-9.0.26.tar.gz /usr/local/

---> 30ed83402115

Removing intermediate container 63f92f905d88

Step 6/15 : RUN yum -y install vim

---> Running in 52768f621694

Complete!

---> 1a786e61417c

Removing intermediate container 52768f621694

Step 7/15 : ENV MYPATH /usr/local

---> Running in a9ffa71dea83

---> 3e22143a0c16

Removing intermediate container a9ffa71dea83

Step 8/15 : WORKDIR $MYPATH

---> 6371b1f9c73c

Removing intermediate container 0f276bf3ce88

Step 9/15 : ENV JAVA_HOME /usr/local/jdk1.8.0_221

---> Running in 41ccc23b039d

---> 41a86caa4a67

Removing intermediate container 41ccc23b039d

Step 10/15 : ENV CLASSPATH $JAVA_HOME/lib/dt.jar:$JAVA_HOME/lib/tools.jar

---> Running in d8b2069614ec

---> b2d06aada292

Removing intermediate container d8b2069614ec

Step 11/15 : ENV CATALINA_HOME /usr/local/apache-tomcat-9.0.26

---> Running in b8129aaa2c20

---> 6f4277b94c01

Removing intermediate container b8129aaa2c20

Step 12/15 : ENV CATALINA_BASE /usr/local/apache-tomcat-9.0.26

---> Running in 310832c60e55

---> 965e54b0e595

Removing intermediate container 310832c60e55

Step 13/15 : ENV PATH $PATH:$JAVA_HOME/bin:$CATALINA_HOME/lib:$CATALINA_HOME/bin

---> Running in e9c4f9fe44a2

---> 7102c04d53b2

Removing intermediate container e9c4f9fe44a2

Step 14/15 : EXPOSE 8080

---> Running in 329adfcaba35

---> 601bffd46d5a

Removing intermediate container 329adfcaba35

Step 15/15 : CMD /usr/local/apache-tomcat-9.0.26/bin/startup.sh && tail -F /usr/local/apache-tomcat-9.0.26/bin/logs/catalina.out

---> Running in 1ecc7244a41f

---> 6c243064a028

Removing intermediate container 1ecc7244a41f

Successfully built 6c243064a028

Dockerfile

[root@topcheer tomcat9]# cat dockerfile

FROM centos

MAINTAINER wgr<wang.gr@Topcheer.com>

# Copy touch.txt from the host build context into the container as /usr/local/cincontainer.txt

COPY touch.txt /usr/local/cincontainer.txt

# Add Java and Tomcat to the container (ADD unpacks local tar.gz archives automatically)

ADD jdk-8u221-linux-x64.tar.gz /usr/local/

ADD apache-tomcat-9.0.26.tar.gz /usr/local/

# Install the vim editor

RUN yum -y install vim

# Set WORKDIR, the directory you land in when you enter the container

ENV MYPATH /usr/local

WORKDIR $MYPATH

# Configure the Java and Tomcat environment variables

ENV JAVA_HOME /usr/local/jdk1.8.0_221

ENV CLASSPATH $JAVA_HOME/lib/dt.jar:$JAVA_HOME/lib/tools.jar

ENV CATALINA_HOME /usr/local/apache-tomcat-9.0.26

ENV CATALINA_BASE /usr/local/apache-tomcat-9.0.26

ENV PATH $PATH:$JAVA_HOME/bin:$CATALINA_HOME/lib:$CATALINA_HOME/bin

# Port the container listens on at run time

EXPOSE 8080

# Start Tomcat when the container starts

CMD /usr/local/apache-tomcat-9.0.26/bin/startup.sh && tail -F /usr/local/apache-tomcat-9.0.26/bin/logs/catalina.out

[root@topcheer tomcat9]#
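One detail in the CMD line is worth checking: it tails a file under bin/logs. As far as I know, Tomcat's catalina.sh writes catalina.out to $CATALINA_BASE/logs unless CATALINA_OUT is set, so the tail probably never sees any output at that path. Because tail -F keeps retrying a missing file, the container would still stay up. The sketch below mirrors that default; `catalina_out_path` is a name made up for this example.

```shell
# Mirror of the default used by Tomcat's catalina.sh (to my knowledge):
# CATALINA_BASE falls back to CATALINA_HOME, and catalina.out is written to
# $CATALINA_BASE/logs unless CATALINA_OUT is already set.
catalina_out_path() {
  base="${CATALINA_BASE:-$CATALINA_HOME}"
  printf '%s\n' "${CATALINA_OUT:-$base/logs/catalina.out}"
}

# Values taken from the ENV lines of the Dockerfile above
CATALINA_HOME=/usr/local/apache-tomcat-9.0.26
CATALINA_BASE=/usr/local/apache-tomcat-9.0.26
unset CATALINA_OUT
catalina_out_path
```

With the values from this Dockerfile the function prints /usr/local/apache-tomcat-9.0.26/logs/catalina.out, which is the path a tail -F in CMD would presumably need.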

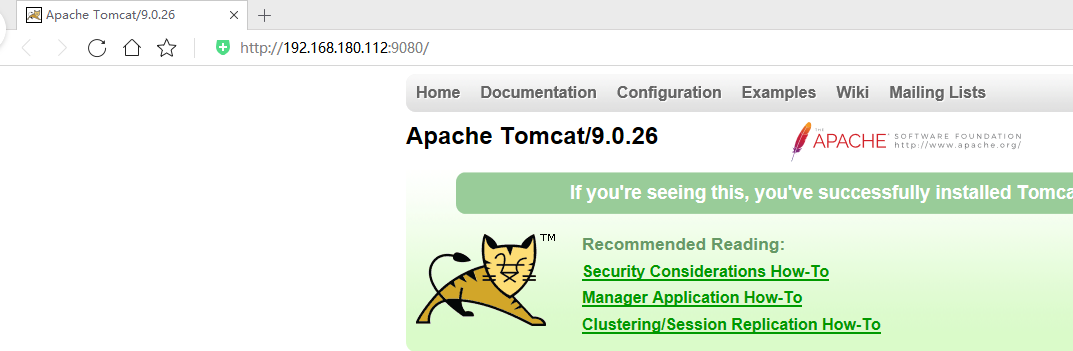

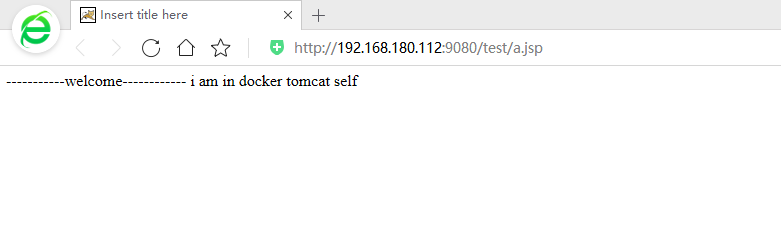

Run the container

[root@topcheer tomcat9]# docker run -d -p 9080:8080 --name myt9 -v /zzyyuse/mydockerfile/tomcat9/test:/usr/local/apache-tomcat-9.0.26/webapps/test -v /zzyyuse/mydockerfile/tomcat9/tomcat9logs/:/usr/local/apache-tomcat-9.0.26/logs --privileged=true mytomcat9

caf65bdc80f404157081f45f74a3056150504a80a44d7217f31ab95bf604c053

[root@topcheer tomcat9]#
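The docker run line above is long, so here is the same invocation assembled one option at a time. This is a dry run that only prints the command; `print_run_command`, `HOST_DIR` and `TOMCAT_HOME` are names made up for the sketch.

```shell
# Dry run: build the docker run command from the transcript piece by piece
# and print it, without contacting the Docker daemon.
print_run_command() {
  HOST_DIR=/zzyyuse/mydockerfile/tomcat9          # host side of the mounts
  TOMCAT_HOME=/usr/local/apache-tomcat-9.0.26     # container side of the mounts
  set -- docker run -d                            # -d: run in the background
  set -- "$@" -p 9080:8080                        # host port 9080 -> container port 8080
  set -- "$@" --name myt9                         # container name
  set -- "$@" -v "$HOST_DIR/test:$TOMCAT_HOME/webapps/test"   # web application directory
  set -- "$@" -v "$HOST_DIR/tomcat9logs/:$TOMCAT_HOME/logs"   # Tomcat log directory
  set -- "$@" --privileged=true                   # extended privileges, as in the original command
  set -- "$@" mytomcat9                           # image built from the Dockerfile
  printf '%s\n' "$*"
}

print_run_command
```

Both -p and -v take the form host:container, so anything placed in the test directory on the host shows up under webapps/test inside the container, and Tomcat's logs land in tomcat9logs on the host.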

[root@topcheer tomcat9]# ll

total 202572

-rw-r--r--. 1 root root 12326996 Sep 23 00:29 apache-tomcat-9.0.26.tar.gz

-rw-r--r--. 1 root root 929 Sep 23 00:47 dockerfile

-rw-r--r--. 1 root root 195094741 Sep 23 00:44 jdk-8u221-linux-x64.tar.gz

drwxr-xr-x. 2 root root 6 Sep 23 00:51 test