TensorFlow recurrent neural networks: fitting and predicting a sin curve with a basic RNN and an LSTM network

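Time-series forecasting has long been an important research problem. Statistics offers a variety of models for time series, and deep learning, which has become popular in recent years, also provides methods for sequence prediction. Within deep learning, the same sequence models are also applied to natural language problems, among others.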

I found code online that uses TensorFlow's RNN and LSTM implementations to predict a sin curve, and it works well.
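Before the TensorFlow programs, it may help to see the prediction task on its own. The NumPy-only sketch below builds the same kind of samples as the article's `build_data`: n consecutive values sin(k), ..., sin(k + n - 1) as input and sin(k + n) as target. The helper name `make_windows`, the fixed seed, and the sample count of 5 are assumptions made for illustration. It also checks the angle-addition identity sin(a + 1) = 2 cos(1) sin(a) - sin(a - 1), which shows that the target is an exact linear function of the last two inputs.

```python
import numpy as np


def make_windows(starts, n):
    """Return (x, y) with x[i, j, 0] = sin(starts[i] + j) and y[i, 0] = sin(starts[i] + n)."""
    starts = np.asarray(starts, dtype=np.float64)
    offsets = np.arange(n)                             # 0, 1, ..., n-1
    x = np.sin(starts[:, None] + offsets)[..., None]   # [num_samples, n, 1]
    y = np.sin(starts + n)[:, None]                    # [num_samples, 1]
    return x, y


rng = np.random.RandomState(0)
starts = rng.uniform(1, 50, size=5)   # hypothetical sample count; the article draws 2000
x, y = make_windows(starts, n=10)
print(x.shape, y.shape)               # (5, 10, 1) (5, 1)

# sin(a + 1) = 2*cos(1)*sin(a) - sin(a - 1), with a = k + n - 1,
# so the target follows exactly from the last two inputs of each window.
y_from_last_two = 2 * np.cos(1.0) * x[:, -1, :] - x[:, -2, :]
print(np.allclose(y, y_from_last_two))  # True
```

Because the target is this simple, the task is mainly a smoke test: a small recurrent network should be able to drive the error close to zero.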

LSTM:

#encoding:UTF-8 import random

import numpy as np

import tensorflow as tf

from tensorflow.contrib.rnn.python.ops import core_rnn

from tensorflow.contrib.rnn.python.ops import core_rnn_cell def build_data(n):

xs = []

ys = []

for i in range(0, 2000):

k = random.uniform(1, 50) x = [[np.sin(k + j)] for j in range(0, n)]

y = [np.sin(k + n)] # x[i] = sin(k + i) (i = 0, 1, ..., n-1)

# y[i] = sin(k + n)

xs.append(x)

ys.append(y) train_x = np.array(xs[0: 1500])

train_y = np.array(ys[0: 1500])

test_x = np.array(xs[1500:])

test_y = np.array(ys[1500:])

return (train_x, train_y, test_x, test_y) length = 10

time_step_size = length

vector_size = 1

batch_size = 10

test_size = 10 # build data

(train_x, train_y, test_x, test_y) = build_data(length)

print(train_x.shape, train_y.shape, test_x.shape, test_y.shape) X = tf.placeholder("float", [None, length, vector_size])

Y = tf.placeholder("float", [None, 1]) # get lstm_size and output predicted value

W = tf.Variable(tf.random_normal([10, 1], stddev=0.01))

B = tf.Variable(tf.random_normal([1], stddev=0.01)) def seq_predict_model(X, w, b, time_step_size, vector_size):

# input X shape: [batch_size, time_step_size, vector_size]

# transpose X to [time_step_size, batch_size, vector_size]

X = tf.transpose(X, [1, 0, 2]) # reshape X to [time_step_size * batch_size, vector_size]

X = tf.reshape(X, [-1, vector_size]) # split X, array[time_step_size], shape: [batch_size, vector_size]

X = tf.split(X, time_step_size, 0) # LSTM model with state_size = 10

cell = core_rnn_cell.BasicLSTMCell(num_units=10,

forget_bias=1.0,

state_is_tuple=True)

outputs, _states = core_rnn.static_rnn(cell, X, dtype=tf.float32) # Linear activation

return tf.matmul(outputs[-1], w) + b, cell.state_size pred_y, _ = seq_predict_model(X, W, B, time_step_size, vector_size)

loss = tf.square(tf.subtract(Y, pred_y))

train_op = tf.train.GradientDescentOptimizer(0.001).minimize(loss) with tf.Session() as sess:

tf.global_variables_initializer().run() # train

for i in range(50):

# train

for end in range(batch_size, len(train_x), batch_size):

begin = end - batch_size

x_value = train_x[begin: end]

y_value = train_y[begin: end]

sess.run(train_op, feed_dict={X: x_value, Y: y_value}) # randomly select validation set from test set

test_indices = np.arange(len(test_x))

np.random.shuffle(test_indices)

test_indices = test_indices[0: test_size]

x_value = test_x[test_indices]

y_value = test_y[test_indices] # eval in validation set

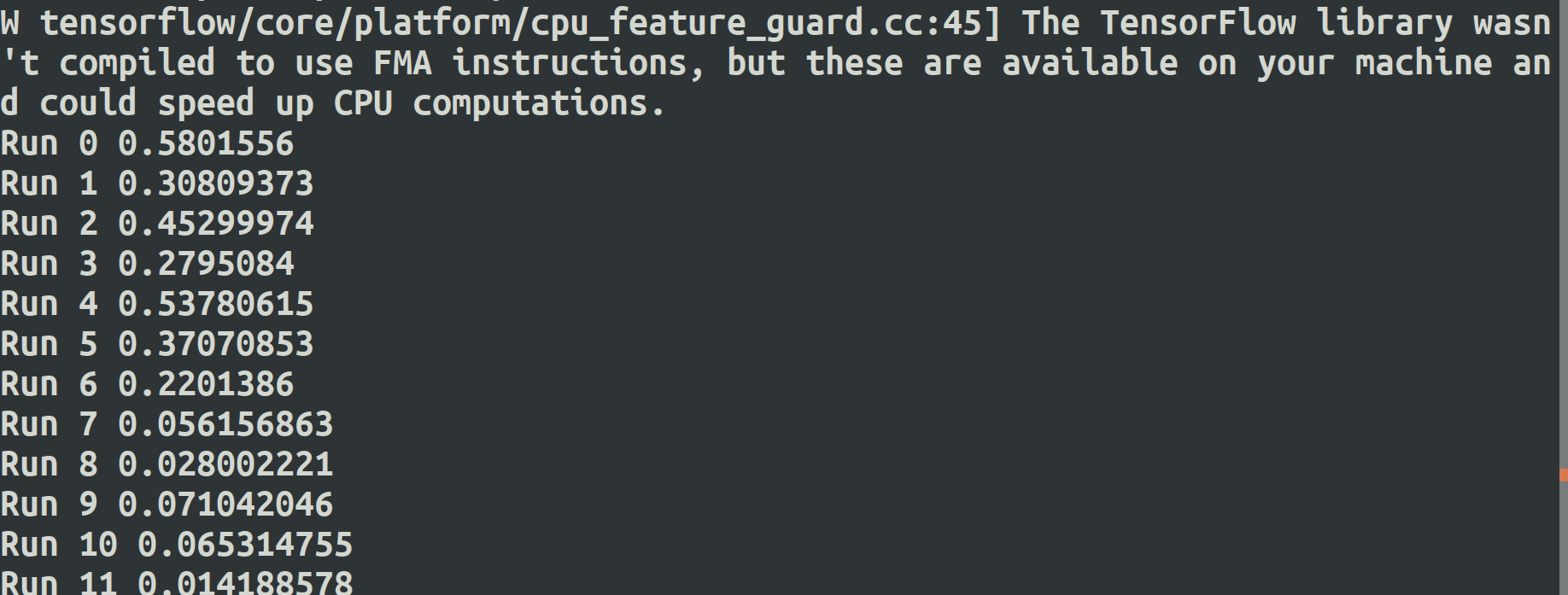

val_loss = np.mean(sess.run(loss,

feed_dict={X: x_value, Y: y_value}))

print('Run %s' % i, val_loss) for b in range(0, len(test_x), test_size):

x_value = test_x[b: b + test_size]

y_value = test_y[b: b + test_size]

pred = sess.run(pred_y, feed_dict={X: x_value})

for i in range(len(pred)):

print(pred[i], y_value[i], pred[i] - y_value[i])
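To make the shapes inside `seq_predict_model` concrete, here is a minimal NumPy sketch of the forward pass of an LSTM with 10 units followed by the linear output layer. It is not the library's implementation: the single `kernel` acting on the concatenated `[x, h]`, the gate order `i, j, f, o`, and adding `forget_bias` to the forget gate are assumptions modelled on how `BasicLSTMCell` is usually described, and the weights are random rather than trained. The names `lstm_step` and `lstm_predict` are hypothetical.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def lstm_step(x, c, h, kernel, bias, forget_bias=1.0):
    """One LSTM step. x: [batch, input]; c and h: [batch, units]."""
    gates = np.concatenate([x, h], axis=1) @ kernel + bias   # [batch, 4 * units]
    # assumed order: input gate, candidate, forget gate, output gate
    i, j, f, o = np.split(gates, 4, axis=1)
    new_c = c * sigmoid(f + forget_bias) + sigmoid(i) * np.tanh(j)
    new_h = np.tanh(new_c) * sigmoid(o)
    return new_c, new_h


def lstm_predict(x_seq, kernel, bias, w, b, units=10):
    """x_seq: [batch, time, input] -> prediction [batch, 1] from the last output."""
    batch = x_seq.shape[0]
    c = np.zeros((batch, units))
    h = np.zeros((batch, units))
    for t in range(x_seq.shape[1]):          # unrolled over time, like static_rnn
        c, h = lstm_step(x_seq[:, t, :], c, h, kernel, bias)
    return h @ w + b                         # same role as tf.matmul(outputs[-1], w) + b


rng = np.random.RandomState(0)
units, vector_size = 10, 1
kernel = rng.normal(scale=0.1, size=(vector_size + units, 4 * units))
bias = np.zeros(4 * units)
w = rng.normal(scale=0.01, size=(units, 1))
b = np.zeros(1)

k = rng.uniform(1, 50, size=3)
x_seq = np.sin(k[:, None] + np.arange(10))[..., None]   # [3, 10, 1]
pred = lstm_predict(x_seq, kernel, bias, w, b)
print(pred.shape)   # (3, 1)
```

The loop over `t` corresponds to the list of 10 per-step tensors that `tf.split` produces in the program above; only the output of the last step is passed to the output layer.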

Basic RNN:

    import random

    import numpy as np
    import tensorflow as tf
    # Internal module paths from TensorFlow 1.0/1.1. The same names are also
    # exported publicly as tf.contrib.rnn.static_rnn and
    # tf.contrib.rnn.BasicRNNCell (TensorFlow 1.x only).
    from tensorflow.contrib.rnn.python.ops import core_rnn
    from tensorflow.contrib.rnn.python.ops import core_rnn_cell


    def build_data(n):
        """Build 2000 samples: n consecutive sin values and the value that follows."""
        xs = []
        ys = []
        for i in range(0, 2000):
            k = random.uniform(1, 50)
            # x[j] = sin(k + j)  (j = 0, 1, ..., n-1)
            # y    = sin(k + n)
            x = [[np.sin(k + j)] for j in range(0, n)]
            y = [np.sin(k + n)]
            xs.append(x)
            ys.append(y)

        # first 1500 samples for training, remaining 500 for testing
        train_x = np.array(xs[0: 1500])
        train_y = np.array(ys[0: 1500])
        test_x = np.array(xs[1500:])
        test_y = np.array(ys[1500:])
        return (train_x, train_y, test_x, test_y)


    length = 10
    time_step_size = length
    vector_size = 1
    batch_size = 10
    test_size = 10

    # build data
    (train_x, train_y, test_x, test_y) = build_data(length)
    print(train_x.shape, train_y.shape, test_x.shape, test_y.shape)

    X = tf.placeholder("float", [None, length, vector_size])
    Y = tf.placeholder("float", [None, 1])

    # output layer: maps the last RNN output (10 units) to one predicted value
    W = tf.Variable(tf.random_normal([10, 1], stddev=0.01))
    B = tf.Variable(tf.random_normal([1], stddev=0.01))


    def seq_predict_model(X, w, b, time_step_size, vector_size):
        # input X shape: [batch_size, time_step_size, vector_size]
        # transpose X to [time_step_size, batch_size, vector_size]
        X = tf.transpose(X, [1, 0, 2])
        # reshape X to [time_step_size * batch_size, vector_size]
        X = tf.reshape(X, [-1, vector_size])
        # split X into a list of time_step_size tensors,
        # each of shape [batch_size, vector_size]
        X = tf.split(X, time_step_size, 0)

        # basic RNN cell with 10 hidden units
        cell = core_rnn_cell.BasicRNNCell(num_units=10)
        # The zero initial state has exactly batch_size rows, so every feed
        # must contain batch_size sequences (test_size == batch_size here).
        initial_state = tf.zeros([batch_size, cell.state_size])
        outputs, _states = core_rnn.static_rnn(cell, X, initial_state=initial_state)

        # linear activation on the output of the last time step
        return tf.matmul(outputs[-1], w) + b, cell.state_size


    pred_y, _ = seq_predict_model(X, W, B, time_step_size, vector_size)
    # per-sample squared error; minimize() differentiates the sum over the batch
    loss = tf.square(tf.subtract(Y, pred_y))
    train_op = tf.train.GradientDescentOptimizer(0.001).minimize(loss)

    with tf.Session() as sess:
        tf.global_variables_initializer().run()

        # train for 50 epochs
        for i in range(50):
            # one pass over the training set in mini-batches; the "+ 1" keeps
            # the final batch, which range() would otherwise skip
            for end in range(batch_size, len(train_x) + 1, batch_size):
                begin = end - batch_size
                x_value = train_x[begin: end]
                y_value = train_y[begin: end]
                sess.run(train_op, feed_dict={X: x_value, Y: y_value})

            # randomly select validation set from test set
            test_indices = np.arange(len(test_x))
            np.random.shuffle(test_indices)
            test_indices = test_indices[0: test_size]
            x_value = test_x[test_indices]
            y_value = test_y[test_indices]

            # eval in validation set
            val_loss = np.mean(sess.run(loss,
                                        feed_dict={X: x_value, Y: y_value}))
            print('Run %s' % i, val_loss)

        # predict the whole test set: prediction, target, error
        for b in range(0, len(test_x), test_size):
            x_value = test_x[b: b + test_size]
            y_value = test_y[b: b + test_size]
            pred = sess.run(pred_y, feed_dict={X: x_value})
            for i in range(len(pred)):
                print(pred[i], y_value[i], pred[i] - y_value[i])
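The basic RNN program creates its zero initial state with a hard-coded `batch_size`, so it only accepts feeds of exactly 10 sequences. The NumPy sketch below shows the recurrence under the usual formulation h_t = tanh([x_t, h_{t-1}] · kernel + bias), with the zero state sized from the input instead, so any batch size works. The function name `rnn_predict`, the random untrained weights, and the batch sizes tried are assumptions for illustration only.

```python
import numpy as np


def rnn_predict(x_seq, kernel, bias, w, b):
    """x_seq: [batch, time, input] -> prediction [batch, 1] from the last hidden state."""
    units = kernel.shape[1]
    h = np.zeros((x_seq.shape[0], units))    # zero state sized from the input batch
    for t in range(x_seq.shape[1]):
        x_t = x_seq[:, t, :]
        h = np.tanh(np.concatenate([x_t, h], axis=1) @ kernel + bias)
    return h @ w + b


rng = np.random.RandomState(0)
units, vector_size, length = 10, 1, 10
kernel = rng.normal(scale=0.1, size=(vector_size + units, units))
bias = np.zeros(units)
w = rng.normal(scale=0.01, size=(units, 1))
b = np.zeros(1)

shapes = []
for batch in (1, 10, 37):                    # hypothetical batch sizes
    k = rng.uniform(1, 50, size=batch)
    x_seq = np.sin(k[:, None] + np.arange(length))[..., None]
    shapes.append(rnn_predict(x_seq, kernel, bias, w, b).shape)
print(shapes)        # [(1, 1), (10, 1), (37, 1)]

rnn_params = kernel.size + bias.size
print(rnn_params)    # 120
```

In the TensorFlow program, the same flexibility can be had by passing `dtype=tf.float32` to `static_rnn` instead of `initial_state`, as the LSTM version does, which lets the zero state follow the batch dimension of the input. With 10 units and a scalar input, the recurrent part of this cell has 120 parameters; an LSTM with four gate blocks of the same width would have four times as many.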
