On tf.nn.softmax_cross_entropy_with_logits and tf.clip_by_value

In order to train our model, we need to define what it means for the model to be good. Well, actually, in machine learning we typically define what it means for a model to be bad. We call this the cost, or the loss, and it represents how far off our model is from our desired outcome. We try to minimize that error, and the smaller the error margin, the better our model is.

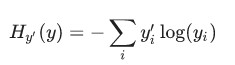

One very common, very nice function to determine the loss of a model is called "cross-entropy." Cross-entropy arises from thinking about information-compressing codes in information theory, but it winds up being an important idea in lots of areas, from gambling to machine learning. It's defined as:

$$H_{y'}(y) = -\sum_i y'_i \log(y_i)$$

Where y is our predicted probability distribution, and y′ is the true distribution (the one-hot vector with the digit labels). In some rough sense, the cross-entropy is measuring how inefficient our predictions are for describing the truth. Going into more detail about cross-entropy is beyond the scope of this tutorial, but it's well worth understanding.
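As a worked example of $H_{y'}(y) = -\sum_i y'_i \log(y_i)$, take an assumed one-hot label over three classes and a made-up prediction, using the natural log:

$$
y' = (0,\ 0,\ 1), \qquad y = (0.1,\ 0.2,\ 0.7)
$$

$$
H_{y'}(y) = -\left(0 \cdot \log 0.1 + 0 \cdot \log 0.2 + 1 \cdot \log 0.7\right) = -\log 0.7 \approx 0.357
$$

Only the true class contributes, so with one-hot labels the cross-entropy reduces to the negative log-probability the model assigns to the correct class.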

To implement cross-entropy we need to first add a new placeholder to input the correct answers:

y_ = tf.placeholder(tf.float32, [None, 10])

Then we can implement the cross-entropy function,

cross_entropy = tf.reduce_mean(-tf.reduce_sum(y_ * tf.log(y), reduction_indices=[1]))

First, tf.log computes the logarithm of each element of y. Next, we multiply each element of y_ with the corresponding element of tf.log(y). Then tf.reduce_sum adds the elements in the second dimension of y, due to the reduction_indices=[1] parameter. Finally, tf.reduce_mean computes the mean over all the examples in the batch.
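The four steps can be mirrored in plain Python to make the axes concrete. This is a sketch, not TensorFlow: lists of rows stand in for the [None, 10] tensors (three classes here for brevity), the sample values are made up, and `cross_entropy` is a hypothetical helper. It assumes every predicted probability is strictly positive, because Python's `math.log(0)` raises an error instead of returning -inf.

```python
import math

def cross_entropy(y_true, y_pred):
    """Hypothetical plain-Python mirror of
    tf.reduce_mean(-tf.reduce_sum(y_ * tf.log(y), reduction_indices=[1]))
    for a batch given as a list of rows."""
    per_example = []
    for labels, probs in zip(y_true, y_pred):
        # tf.log(y), then y_ * tf.log(y), element by element
        terms = [t * math.log(p) for t, p in zip(labels, probs)]
        # reduce_sum over dimension 1 (the classes), then negate
        per_example.append(-sum(terms))
    # reduce_mean over dimension 0 (the examples in the batch)
    return sum(per_example) / len(per_example)

# Made-up batch of two examples, three classes each
y_ = [[0.0, 0.0, 1.0],
      [0.0, 1.0, 0.0]]
y = [[0.1, 0.2, 0.7],
     [0.3, 0.4, 0.3]]
print(cross_entropy(y_, y))  # about 0.636, the mean of -log(0.7) and -log(0.4)
```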

Note that in the source code, we don't use this formulation, because it is numerically unstable. Instead, we apply tf.nn.softmax_cross_entropy_with_logits on the unnormalized logits (e.g., we call softmax_cross_entropy_with_logits on tf.matmul(x, W) + b), because this more numerically stable function internally computes the softmax activation. In your code, consider using tf.nn.softmax_cross_entropy_with_logits instead.
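To see the instability concretely, here is a plain-Python sketch (not TensorFlow code) of the "softmax first, log afterwards" formulation. The helper names and the logits are made up for illustration; `ieee_log` is a hypothetical stand-in that returns -inf for 0, as floating-point log does in TensorFlow, because Python's `math.log(0)` raises instead.

```python
import math

def ieee_log(p):
    # Hypothetical helper: math.log(0.0) raises ValueError, whereas a
    # floating-point log (as in tf.log) returns -inf, so mimic that here.
    return float("-inf") if p == 0.0 else math.log(p)

def naive_softmax(logits):
    # Plain softmax with no max-subtraction
    exps = [math.exp(z) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def naive_cross_entropy(labels, logits):
    # -sum(y' * log(softmax(z))): normalize first, take the log afterwards
    probs = naive_softmax(logits)
    return -sum(t * ieee_log(p) for t, p in zip(labels, probs))

logits = [0.0, -1000.0]  # exp(-1000) underflows to 0.0
print(naive_softmax(logits))                     # [1.0, 0.0]
print(naive_cross_entropy([0.0, 1.0], logits))   # inf
print(naive_cross_entropy([1.0, 0.0], logits))   # nan, from 0 * -inf
```

A perfectly reasonable pair of logits thus yields an infinite loss or a NaN once softmax rounds a probability to exactly 0.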

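In short: if you compute the cross-entropy with

cross_entropy = tf.reduce_mean(-tf.reduce_sum(y_ * tf.log(y), reduction_indices=[1]))

you need tf.clip_by_value to bound the values passed to log, because log can produce NaN. When a predicted probability is exactly 0, log returns -inf, and a zero label times that -inf gives NaN (a negative input such as log(-3) would also give NaN). Clipping keeps the input to log inside a safe range so that no NaN appears. It is used like this: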

cross_entropy = -tf.reduce_sum(y_*tf.log(tf.clip_by_value(y_conv, 1e-10, 1.0)))

Later, someone proposed a better way to avoid NaN:
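A plain-Python sketch of what the clipped formula computes for a single example. `clip_by_value` and `clipped_cross_entropy` here are hypothetical helpers that only mirror the elementwise clamp; they are not TensorFlow's implementation, and the saturated probabilities are made up.

```python
import math

def clip_by_value(values, lo, hi):
    # Hypothetical helper: elementwise clamp into [lo, hi], like the
    # forward computation of tf.clip_by_value
    return [min(max(v, lo), hi) for v in values]

def clipped_cross_entropy(labels, probs, eps=1e-10):
    # -sum(y_ * log(clip(y_conv, 1e-10, 1.0))): log never receives 0
    clipped = clip_by_value(probs, eps, 1.0)
    return -sum(t * math.log(p) for t, p in zip(labels, clipped))

probs = [1.0, 0.0]  # a made-up saturated softmax output
print(clipped_cross_entropy([0.0, 1.0], probs))  # about 23.03, i.e. -log(1e-10)
print(clipped_cross_entropy([1.0, 0.0], probs))  # finite (zero) instead of nan
```

The clamp caps the worst-case loss per entry at -log(1e-10), about 23.03, instead of letting it reach inf or NaN.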

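cross_entropy = -tf.reduce_sum(y_*tf.log(y_conv + 1e-10))

The reasoning is that tf.clip_by_value passes no gradient to entries outside [1e-10, 1.0], so a probability that has collapsed to 0 stops receiving gradient, whereas adding 1e-10 keeps log finite without cutting the gradient off. For details, see the discussion at http://stackoverflow.com/questions/33712178/tensorflow-nan-bug.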
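Both fixes keep the loss finite; the difference shows up in the gradient. The sketch below writes out by hand the derivative of one loss term -t·log(·) with respect to a predicted probability p under each fix. The helpers `grad_clipped` and `grad_epsilon` are hypothetical, and the clipped one encodes the fact that tf.clip_by_value passes no gradient to entries outside [lo, hi].

```python
EPS = 1e-10

def grad_clipped(t, p, lo=EPS, hi=1.0):
    # d/dp of -t * log(clip(p, lo, hi)).
    # Outside [lo, hi] the clip passes no gradient, so the mask is 0 there.
    inside = 1.0 if lo <= p <= hi else 0.0
    return -t / min(max(p, lo), hi) * inside

def grad_epsilon(t, p, eps=EPS):
    # d/dp of -t * log(p + eps): nonzero whenever t > 0
    return -t / (p + eps)

# True class (t = 1) whose predicted probability has collapsed to 0:
print(grad_clipped(1.0, 0.0))  # -0.0, no gradient at all
print(grad_epsilon(1.0, 0.0))  # about -1e10, a strong push to raise p
```

Inside the clipping range the two gradients agree (for example, both give -2.0 at p = 0.5); they only differ for probabilities that have already hit 0, which is exactly the case the fix is meant for.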

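If you use tf.nn.softmax_cross_entropy_with_logits directly, none of the above is a concern any more: it works on the unnormalized logits and takes care of this problem automatically.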
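For comparison, a plain-Python sketch of computing the loss straight from logits with the standard log-sum-exp trick: log(softmax(z)) is rewritten as z - logsumexp(z), with max(z) subtracted before exponentiating, so no probability is ever rounded to 0 and then logged. This illustrates the idea only; it is not TensorFlow's kernel, and `stable_softmax_xent` is a hypothetical name for a single-example version.

```python
import math

def log_softmax(logits):
    # log(softmax(z))_i = z_i - logsumexp(z). Subtracting max(z) first keeps
    # exp() from overflowing, and the log is taken of a sum that is >= 1.
    m = max(logits)
    lse = m + math.log(sum(math.exp(z - m) for z in logits))
    return [z - lse for z in logits]

def stable_softmax_xent(labels, logits):
    # Same quantity as -sum(y' * log(softmax(z))), computed from raw logits
    return -sum(t * lp for t, lp in zip(labels, log_softmax(logits)))

print(stable_softmax_xent([0.0, 1.0], [0.0, -1000.0]))  # 1000.0
print(stable_softmax_xent([1.0, 0.0], [1000.0, 0.0]))   # effectively zero, no overflow or nan
```

On the extreme logits the naive formulation turns into inf or NaN, while this version returns the exact finite loss; on ordinary logits the two agree to rounding error.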
