HiBench成长笔记——(3) HiBench测试Spark

很多内容之前的博客已经提过,这里不再赘述,详细内容参照本系列前面的博客:https://www.cnblogs.com/ratels/p/10970905.html

创建并修改配置文件conf/spark.conf

cp conf/spark.conf.template conf/spark.conf

参考:https://github.com/Intel-bigdata/HiBench/blob/master/docs/run-sparkbench.md,设置属性为下列值

# Spark home hibench.spark.home /opt/cloudera/parcels/CDH--.cdh5./lib/spark # Spark master # standalone mode: spark://xxx:7077 # YARN mode: yarn-client hibench.spark.master yarn-client

执行脚本

bin/workloads/micro/wordcount/prepare/prepare.sh

返回信息

[root@node1 prepare]# ./prepare.sh patching args= Parsing conf: /home/cf/app/HiBench-master/conf/hadoop.conf Parsing conf: /home/cf/app/HiBench-master/conf/hibench.conf Parsing conf: /home/cf/app/HiBench-master/conf/spark.conf Parsing conf: /home/cf/app/HiBench-master/conf/workloads/micro/wordcount.conf probe -.cdh5./lib/hadoop/../../jars/hadoop-mapreduce-client-jobclient--cdh5.14.2-tests.jar start HadoopPrepareWordcount bench hdfs -.cdh5./bin/hadoop --config /etc/hadoop/conf.cloudera.yarn fs -rm -r -skipTrash hdfs://node1:8020/HiBench/Wordcount/Input Deleted hdfs://node1:8020/HiBench/Wordcount/Input Submit MapReduce Job: /opt/cloudera/parcels/CDH--.cdh5./bin/hadoop --config /etc/hadoop/conf.cloudera.yarn jar /opt/cloudera/parcels/CDH--.cdh5./lib/hadoop/../../jars/hadoop-mapreduce-examples--cdh5. -D mapreduce.randomtextwriter.bytespermap= -D mapreduce.job.maps= -D mapreduce.job.reduces= hdfs://node1:8020/HiBench/Wordcount/Input The job took seconds. finish HadoopPrepareWordcount bench

执行脚本

bin/workloads/micro/wordcount/spark/run.sh

返回信息

[root@node1 spark]# ./run.sh patching args= Parsing conf: /home/cf/app/HiBench-master/conf/hadoop.conf Parsing conf: /home/cf/app/HiBench-master/conf/hibench.conf Parsing conf: /home/cf/app/HiBench-master/conf/spark.conf Parsing conf: /home/cf/app/HiBench-master/conf/workloads/micro/wordcount.conf probe -.cdh5./lib/hadoop/../../jars/hadoop-mapreduce-client-jobclient--cdh5.14.2-tests.jar start ScalaSparkWordcount bench hdfs -.cdh5./bin/hadoop --config /etc/hadoop/conf.cloudera.yarn fs -rm -r -skipTrash hdfs://node1:8020/HiBench/Wordcount/Output Deleted hdfs://node1:8020/HiBench/Wordcount/Output hdfs -.cdh5./bin/hadoop --config /etc/hadoop/conf.cloudera.yarn fs -du -s hdfs://node1:8020/HiBench/Wordcount/Input Export env: SPARKBENCH_PROPERTIES_FILES=/home/cf/app/HiBench-master/report/wordcount/spark/conf/sparkbench/sparkbench.conf Export env: HADOOP_CONF_DIR=/etc/hadoop/conf.cloudera.yarn Submit Spark job: /opt/cloudera/parcels/CDH--.cdh5./lib/spark/bin/spark-submit --properties- --executor-cores --executor-memory 4g /home/cf/app/HiBench-master/sparkbench/assembly/target/sparkbench-assembly-7.1-SNAPSHOT-dist.jar hdfs://node1:8020/HiBench/Wordcount/Input hdfs://node1:8020/HiBench/Wordcount/Output // :: INFO remote.RemoteActorRefProvider$RemotingTerminator: Shutting down remote daemon. finish ScalaSparkWordcount bench

查看report/hibench.report

Type Date Time Input_data_size Duration(s) Throughput(bytes/s) Throughput/node HadoopWordcount -- :: ScalaSparkWordcount -- ::

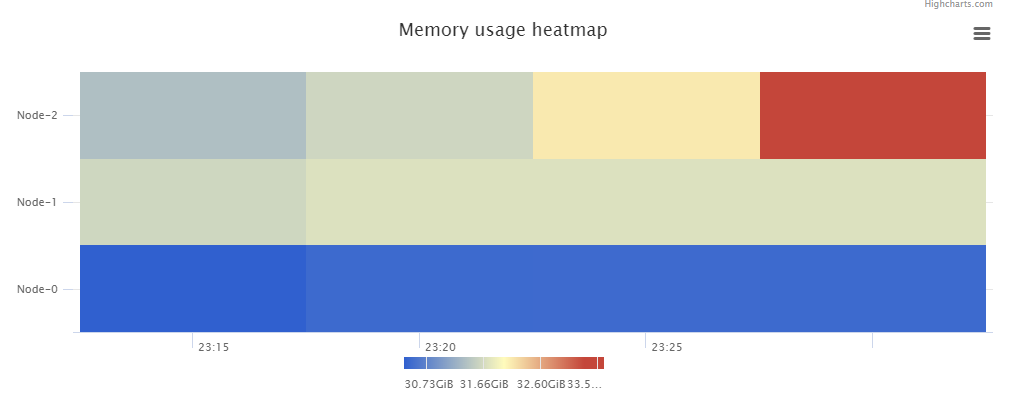

\report\wordcount\spark下有多个文件:monitor.log是原始日志,bench.log是scheduler.DAGScheduler和scheduler.TaskSetManager信息,monitor.html可视化了系统的性能信息,\conf\wordcount.conf、\conf\sparkbench\spark.conf和\conf\sparkbench\sparkbench.conf是本次任务的环境变量

monitor.html中包含了Memory usage heatmap等统计图:

根据官方文档 https://github.com/Intel-bigdata/HiBench/blob/master/docs/run-sparkbench.md ,还可以修改 hibench.scale.profile 调整测试的数据规模,修改 hibench.default.map.parallelism 和 hibench.default.shuffle.parallelism 调整并行化,修改hibench.yarn.executor.num、hibench.yarn.executor.cores、spark.executor.memory和spark.driver.memory控制Spark executor 的数量、核数、内存和driver的内存。

HiBench成长笔记——(3) HiBench测试Spark的更多相关文章

- HiBench成长笔记——(1) HiBench概述

测试分类 HiBench共计19个测试方向,可大致分为6个测试类别:分别是micro,ml(机器学习),sql,graph,websearch和streaming. 2.1 micro Benchma ...

- HiBench成长笔记——(4) HiBench测试Spark SQL

很多内容之前的博客已经提过,这里不再赘述,详细内容参照本系列前面的博客:https://www.cnblogs.com/ratels/p/10970905.html 和 https://www.cnb ...

- HiBench成长笔记——(6) HiBench测试结果分析

Scan Join Aggregation Scan Join Aggregation Scan Join Aggregation Scan Join Aggregation Scan Join Ag ...

- HiBench成长笔记——(5) HiBench-Spark-SQL-Scan源码分析

run.sh #!/bin/bash # Licensed to the Apache Software Foundation (ASF) under one or more # contributo ...

- HiBench成长笔记——(2) CentOS部署安装HiBench

安装Scala 使用spark-shell命令进入shell模式,查看spark版本和Scala版本: 下载Scala2.10.5 wget https://downloads.lightbend.c ...

- HiBench成长笔记——(7) 阅读《The HiBench Benchmark Suite: Characterization of the MapReduce-Based Data Analysis》

<The HiBench Benchmark Suite: Characterization of the MapReduce-Based Data Analysis>内容精选 We th ...

- HiBench成长笔记——(10) 分析源码execute_with_log.py

#!/usr/bin/env python2 # Licensed to the Apache Software Foundation (ASF) under one or more # contri ...

- HiBench成长笔记——(9) 分析源码monitor.py

monitor.py 是主监控程序,将监控数据写入日志,并统计监控数据生成HTML统计展示页面: #!/usr/bin/env python2 # Licensed to the Apache Sof ...

- HiBench成长笔记——(8) 分析源码workload_functions.sh

workload_functions.sh 是测试程序的入口,粘连了监控程序 monitor.py 和 主运行程序: #!/bin/bash # Licensed to the Apache Soft ...

随机推荐

- Windows平台VC++ 6.0 下的网络编程学习 - 简单的测试winsock.h头文件

最近学习数据结构和算法学得有点累了(貌似也没那么累...)...找了本网络编程翻了翻当做打一个小基础吧,打算一边继续学习数据结构一边也看看网络编程相关的... 简单的第一次尝试,就大致梳理一下看书+自 ...

- 基于贝叶斯模型和KNN模型分别对手写体数字进行识别

首先,我们准备了0~9的训练集和测试集,这些手写体全部经过像素转换,用0,1表示,有颜色的区域为0,没有颜色的区域为1.实现代码如下: # 图片处理 # 先将所有图片转为固定宽高,比如32*,然后再进 ...

- springboot RESTful Web Service

参考:http://spring.io/guides/gs/rest-service-cors/

- c++ 读取、保存单张图片

转载:https://www.jb51.net/article/147896.htm 实际上就是以二进制形式打开文件,将数据保存到内存,在以二进制形式输出到指定文件.因此对于有图片的文件,也可以用这种 ...

- 第1节 Scala基础语法:5、6、7、8、基础-申明变量和常用类型,表达式,循环,定义方法和函数

4. Scala基础 4.1. 声明变量 package cn.itcast.scala object VariableDemo { def main(args: Array[Strin ...

- eclipse启动时权限不够的问题

eclipse启动时权限不够的问题 2009年04月28日 19:19:00 tomey21 阅读数 1445 安装好后每次都要用root权限运行,比较郁闷,摸索了一下,修改一下相关目录的权限就可 ...

- AWS-DDNS

1. DDNS 2. 在 Linux 实例上设置动态 DNS 2.1 Ubuntu 2.2 Amazon Linux 2 2.3 Arch Linux 2.4 其他Linux系统 3. 更多相关 1. ...

- Day11 - L - 邂逅明下 HDU - 2897

当日遇到月,于是有了明.当我遇到了你,便成了侣.那天,日月相会,我见到了你.而且,大地失去了光辉,你我是否成侣?这注定是个凄美的故事.(以上是废话)小t和所有世俗的人们一样,期待那百年难遇的日食.驻足 ...

- Controller生命周期

1. 实例化 alloc/init, initWithNibName 2.awakeFromNib 从nib创建Controller对象 3.get/set outlets 4. viewDidLoa ...

- HTTP关键词收集

[HTTP协议][客户端][服务器端][HTTPS][Web服务器][域名][DNS][IP地址][虚拟服务器][虚拟主机][中转服务器][HTTP/1.1规范][域名解析][Web托管服务][代理] ...