记一次cdh6.3.2版本spark写入phoniex的错误:Incompatible jars detected between client and server. Ensure that phoenix-[version]-server.jar is put on the classpath of HBase in every region server:

Caused by: java.lang.reflect.InvocationTargetException

at sun.reflect.NativeConstructorAccessorImpl.newInstance0(Native Method)

at sun.reflect.NativeConstructorAccessorImpl.newInstance(NativeConstructorAccessorImpl.java:62)

at sun.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:45)

at java.lang.reflect.Constructor.newInstance(Constructor.java:423)

at com.wanmi.sbc.dw.spark.app.RecommendAnalysisApp$$anonfun$run$2.apply(RecommendAnalysisApp.scala:33)

at com.wanmi.sbc.dw.spark.app.RecommendAnalysisApp$$anonfun$run$2.apply(RecommendAnalysisApp.scala:33)

at scala.collection.TraversableLike$$anonfun$map$1.apply(TraversableLike.scala:234)

at scala.collection.TraversableLike$$anonfun$map$1.apply(TraversableLike.scala:234)

at scala.collection.mutable.HashSet.foreach(HashSet.scala:78)

at scala.collection.TraversableLike$class.map(TraversableLike.scala:234)

at scala.collection.mutable.AbstractSet.scala$collection$SetLike$$super$map(Set.scala:46)

at scala.collection.SetLike$class.map(SetLike.scala:92)

at scala.collection.mutable.AbstractSet.map(Set.scala:46)

at com.wanmi.sbc.dw.spark.app.RecommendAnalysisApp$.run(RecommendAnalysisApp.scala:33)

at com.wanmi.sbc.dw.spark.app.RecommendAnalysisApp.run(RecommendAnalysisApp.scala)

... 11 more

Caused by: java.sql.SQLException: ERROR 2006 (INT08): Incompatible jars detected between client and server. Ensure that phoenix-[version]-server.jar is put on the classpath of HBase in every region server: Can't find method newStub in org.apache.phoenix.coprocessor.generated.MetaDataProtos$MetaDataService!

at org.apache.phoenix.exception.SQLExceptionCode$Factory$1.newException(SQLExceptionCode.java:494)

at org.apache.phoenix.exception.SQLExceptionInfo.buildException(SQLExceptionInfo.java:150)

at org.apache.phoenix.query.ConnectionQueryServicesImpl.checkClientServerCompatibility(ConnectionQueryServicesImpl.java:1326)

at org.apache.phoenix.query.ConnectionQueryServicesImpl.ensureTableCreated(ConnectionQueryServicesImpl.java:1162)

at org.apache.phoenix.query.ConnectionQueryServicesImpl.createTable(ConnectionQueryServicesImpl.java:1501)

at org.apache.phoenix.query.DelegateConnectionQueryServices.createTable(DelegateConnectionQueryServices.java:119)

at org.apache.phoenix.schema.MetaDataClient.createTableInternal(MetaDataClient.java:2721)

at org.apache.phoenix.schema.MetaDataClient.createTable(MetaDataClient.java:1114)

at org.apache.phoenix.compile.CreateTableCompiler$1.execute(CreateTableCompiler.java:192)

at org.apache.phoenix.jdbc.PhoenixStatement$2.call(PhoenixStatement.java:408)

at org.apache.phoenix.jdbc.PhoenixStatement$2.call(PhoenixStatement.java:391)

at org.apache.phoenix.call.CallRunner.run(CallRunner.java:53)

at org.apache.phoenix.jdbc.PhoenixStatement.executeMutation(PhoenixStatement.java:390)

at org.apache.phoenix.jdbc.PhoenixStatement.executeMutation(PhoenixStatement.java:378)

at org.apache.phoenix.jdbc.PhoenixStatement.executeUpdate(PhoenixStatement.java:1806)

at org.apache.phoenix.query.ConnectionQueryServicesImpl$12.call(ConnectionQueryServicesImpl.java:2569)

at org.apache.phoenix.query.ConnectionQueryServicesImpl$12.call(ConnectionQueryServicesImpl.java:2532)

at org.apache.phoenix.util.PhoenixContextExecutor.call(PhoenixContextExecutor.java:76)

at org.apache.phoenix.query.ConnectionQueryServicesImpl.init(ConnectionQueryServicesImpl.java:2532)

at org.apache.phoenix.jdbc.PhoenixDriver.getConnectionQueryServices(PhoenixDriver.java:255)

at org.apache.phoenix.jdbc.PhoenixEmbeddedDriver.createConnection(PhoenixEmbeddedDriver.java:150)

at org.apache.phoenix.jdbc.PhoenixDriver.connect(PhoenixDriver.java:221)

at java.sql.DriverManager.getConnection(DriverManager.java:664)

at java.sql.DriverManager.getConnection(DriverManager.java:208)

at org.apache.phoenix.mapreduce.util.ConnectionUtil.getConnection(ConnectionUtil.java:113)

at org.apache.phoenix.mapreduce.util.ConnectionUtil.getInputConnection(ConnectionUtil.java:58)

at org.apache.phoenix.mapreduce.util.PhoenixConfigurationUtil.getSelectColumnMetadataList(PhoenixConfigurationUtil.java:354)

at org.apache.phoenix.spark.PhoenixRDD.toDataFrame(PhoenixRDD.scala:118)

at org.apache.phoenix.spark.SparkSqlContextFunctions.phoenixTableAsDataFrame(SparkSqlContextFunctions.scala:39)

at com.wanmi.sbc.dw.spark.recommend.db.PhoenixDb.select(PhoenixDb.scala:40)

at com.wanmi.sbc.dw.spark.recommend.CommonDimensionData.user_recommend_goods_data$lzycompute(CommonDimensionData.scala:222)

at com.wanmi.sbc.dw.spark.recommend.CommonDimensionData.user_recommend_goods_data(CommonDimensionData.scala:222)

at com.wanmi.sbc.dw.spark.recommend.CommonDimensionData.<init>(CommonDimensionData.scala:254)

at com.wanmi.sbc.dw.spark.recommend.DataAnalysis.<init>(DataAnalysis.scala:16)

at com.wanmi.sbc.dw.spark.recommend.hot.HotGoodsRecommend.<init>(HotGoodsRecommend.scala:19)

... 26 more

Caused by: java.lang.IllegalArgumentException: Can't find method newStub in org.apache.phoenix.coprocessor.generated.MetaDataProtos$MetaDataService!

at org.apache.hadoop.hbase.util.Methods.call(Methods.java:47)

at org.apache.hadoop.hbase.protobuf.ProtobufUtil.newServiceStub(ProtobufUtil.java:1537)

at org.apache.hadoop.hbase.client.HTable$12.call(HTable.java:996)

at java.util.concurrent.FutureTask.run(FutureTask.java:266)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)

at java.lang.Thread.run(Thread.java:748)

Caused by: java.lang.NoSuchMethodException: org.apache.phoenix.coprocessor.generated.MetaDataProtos$MetaDataService.newStub(org.apache.hadoop.hbase.shaded.com.google.protobuf.RpcChannel)

at java.lang.Class.getMethod(Class.java:1786)

at org.apache.hadoop.hbase.util.Methods.call(Methods.java:39)

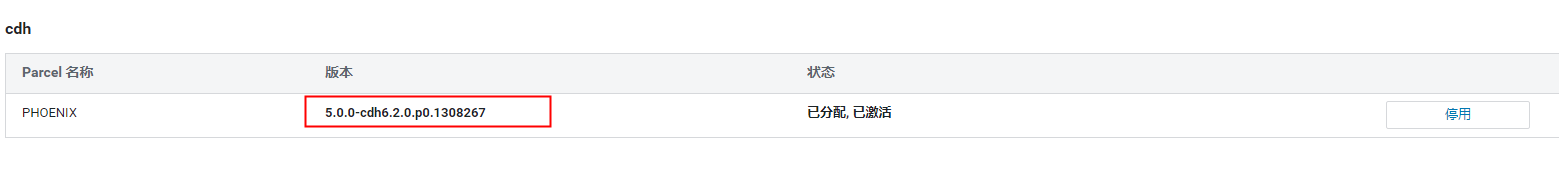

使用parall包安装的phoniex

在cdh集群执行spark读取phoniex数据是报上面错误

spark-shell --jars hdfs://hdfs地址:8020/spark/yarn/phoenix-core-5.0.0-HBase-2.0.jar,hdfs://hdfs地址:8020/spark/yarn/phoenix-spark-5.0.0-HBase-2.0.jar,hdfs://hdfs地址:8020/spark/yarn/hppc-0.7.2.jar,hdfs://hdfs地址:8020/spark/yarn/htrace-core-3.0.4.jar,hdfs://hdfs地址:8020/spark/yarn/htrace-core-3.1.0-incubating.jar,hdfs://hdfs地址:8020/spark/yarn/htrace-core-3.2.0-incubating.jar,hdfs://hdfs地址:8020/spark/yarn/htrace-core4-4.2.0-incubating.jar,hdfs://hdfs地址:8020/spark/yarn/tephra-api-0.6.0.jar,hdfs://hdfs地址:8020/spark/yarn/tephra-core-0.6.0.jar,hdfs://hdfs地址:8020/spark/yarn/tephra-api-0.14.0-incubating.jar,hdfs://hdfs地址:8020/spark/yarn/tephra-core-0.14.0-incubating.jar,hdfs://hdfs地址:8020/spark/yarn/twill-zookeeper-0.8.0.jar,hdfs://hdfs地址:8020/spark/yarn/twill-api-0.8.0.jar,hdfs://hdfs地址:8020/spark/yarn/twill-common-0.8.0.jar,hdfs://hdfs地址:8020/spark/yarn/twill-core-0.8.0.jar,hdfs://hdfs地址:8020/spark/yarn/twill-discovery-api-0.8.0.jar,hdfs://hdfs地址:8020/spark/yarn/twill-discovery-core-0.8.0.jar,hdfs://hdfs地址:8020/spark/yarn/disruptor-3.3.6.jar,hdfs://hdfs地址:8020/spark/yarn/antlr4-runtime-4.7.jar,hdfs://hdfs地址:8020/spark/yarn/antlr-2.7.7.jar,hdfs://hdfs地址:8020/spark/yarn/antlr-runtime-3.4.jar,hdfs://hdfs地址:8020/spark/yarn/antlr-runtime-3.5.2.jar

使用idea开发环境没问题

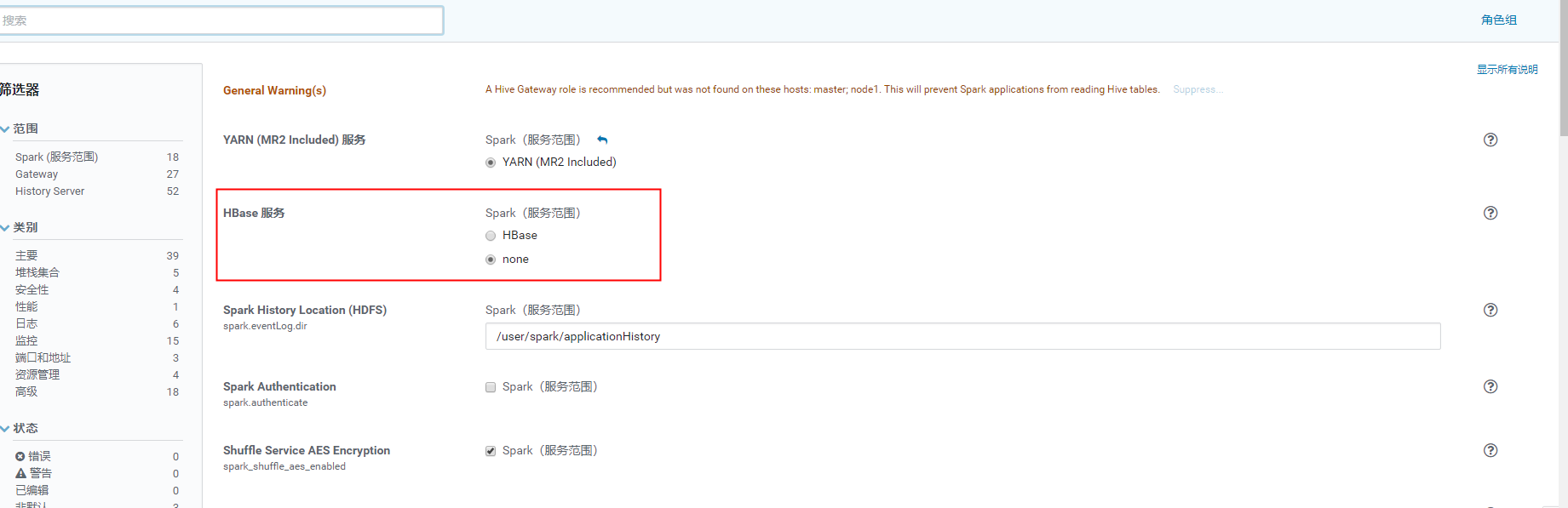

排查了1天,最大可能是cdh的 hbase的jar包依赖冲突的问题

选择将hbase服务依赖去掉,spark启动不加载hbase的classpath

将hbase相关jar copy到spark的classpath ,在运行代码成功了

cp $HBASE_HOME/lib/hbase-* $SPARK_HOME/jars

val df= spark.read.format("org.apache.phoenix.spark").options(Map("table" -> "RECOMMEND.HOT_GOODS_RECOMMEND", "zkUrl" -> "master:2181", "skipNormalizingIdentifier" -> "true")).load()

成功读取

太南了!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!

记一次cdh6.3.2版本spark写入phoniex的错误:Incompatible jars detected between client and server. Ensure that phoenix-[version]-server.jar is put on the classpath of HBase in every region server:的更多相关文章

- Hbase Region Server整体架构

Region Server的整体架构 本文主要介绍Region的整体架构,后续再慢慢介绍region的各部分具体实现和源码 RegionServer逻辑架构图 RegionServer职责 1. ...

- habse Region server挂掉

2019-04-28 15:57:28,355 INFO org.apache.hadoop.hbase.regionserver.HeapMemoryManager: heapOccupancyPe ...

- hbase源码系列(三)Client如何找到正确的Region Server

客户端在进行put.delete.get等操作的时候,它都需要数据到底存在哪个Region Server上面,这个定位的操作是通过HConnection.locateRegion方法来完成的. loc ...

- region xx not deployed on any region server

ERROR: Region { meta => month_hotstatic,860010-2288000000_201405_5_exit_00000047486,1400144486405 ...

- 三 Client 如何找到正确的 Region Server

客户端在进行put.delete.get等操作的时候,它都需要数据到底存在哪个Region Server上面,这个定位的操作是通过 Connection.locateRegion方法来完成的. loc ...

- 码农飞升记-02-OracleJDK是什么?OracleJDK的版本怎么选择?

目录 1.Oracle JDK 是什么? 2.Oracle JDK 版本如何选择? 1.Java SE 发布节奏以及不同版本的差距 1.Java SE 8 以及之前版本的发布节奏和不同版本的差距 1. ...

- The server does not support version 3.0 of the J2EE Web module specification

1.错误: 在eclipse中使用run->run on server的时候,选择tomcat6会报错误:The server does not support version 3.0 of t ...

- Solution(项目部署):The server does not support version 3.0 of the J2EE Web module specification

1.错误: 在eclipse中使用run->run on server的时候,选择tomcat6会报错误:The server does not support version 3.0 of t ...

- HBase原理–所有Region切分的细节都在这里了

本文由 网易云发布. 作者:范欣欣(本篇文章仅限内部分享,如需转载,请联系网易获取授权.) Region自动切分是HBase能够拥有良好扩张性的最重要因素之一,也必然是所有分布式系统追求无限 ...

- SQL Server ->> Memory Allocation Mechanism and Performance Analysis(内存分配机制与性能分析)之 -- Minimum server memory与Maximum server memory

Minimum server memory与Maximum server memory是SQL Server下配置实例级别最大和最小可用内存(注意不等于物理内存)的服务器配置选项.它们是管理SQL S ...

随机推荐

- 基于 MySQL + Tablestore 分层存储架构的大规模订单系统实践-架构篇

简介: 本文简要介绍了基于 MySQL 结合 Tablestore 的大规模订单系统方案.这种方案支持大数据存储.高性能数据检索.SQL搜索.实时与全量数据分析,且部署简单.运维成本低. 作者 ...

- boom lab分析

单步调试: (gdb) bt #1 0x0000000000401347 in strings_not_equal () #2 0x0000000000400eee in phase_1 () #3 ...

- 8.prometheus监控--监控Mysql8.0

一.环境搭建 docker-compose安装mysql mkdir /data/mysql -p cd /data/mysql cat > docker-compose.yaml <&l ...

- Swift实现判断目录下是否存在指定文件功能

本文主要讲解以下这段名为 isDataJsonFilePathExists 的私有函数的 Swift 代码实现细节,该函数的作用是检查指定文件或文件夹是否存在,其返回值类型为 Bool 类型,如果存在 ...

- MacOS安装和使用标注软件“labelImg”教程

原文发布于:https://blog.zhaoxuan.site/archives/16.html: 第一时间获取最新文章请关注博客个人站:https://blog.zhaoxuan.site. 简介 ...

- NASM语法

NASM汇编语言的语法很简单,由4部分组成: label:instruction operands; comment 这4部分都是可选的.一条语句可以没有label,没有comment,甚至连inst ...

- mybaits-plus实现自定义字典转换

需求:字典实现类似mybatis-plus中@EnumValue的功能,假设枚举类中应用使用code,数据库存储对应的value 思路:Mybatis支持对Executor.StatementHand ...

- LOTO示波器软件PC缓存(波形录制与回放)功能

当打开PC缓存功能后, 软件将采用先进先出的原则排队对示波器采集的每一帧数据, 进行帧缓存. 当发现屏幕中有感兴趣的波形掠过时, 鼠标点击软件的(暂停)按钮, 可以选择回看某一帧的波形.一帧数据的量 ...

- ansible api调用及二次封装详解

ansible 2.7调用 程序 import json from collections import namedtuple from ansible.parsing.dataloader impo ...

- T2T-ViT:更多的局部结构信息,更高效的主干网络 | ICCV 2021

论文提出了T2T-ViT模型,引入tokens-to-token(T2T)模块有效地融合图像的结构信息,同时借鉴CNN结果设计了deep-narrow的ViT主干网络,增强特征的丰富性.在ImageN ...