Consolidate and Move On: Understanding HashMap, ConcurrentHashMap, and Hashtable in Java

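I. Preface

Much of what we learn or use is eventually forgotten, but if we understand the principles behind it and commit them to memory, the knowledge tends to stick. A common technique is comparative memorization: put several easily confused concepts side by side and compare them. This article applies that technique to HashMap and Hashtable.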

II. HashMap Basics

2.1 Introduction to HashMap

HashMap is a hash table that stores key-value mappings.

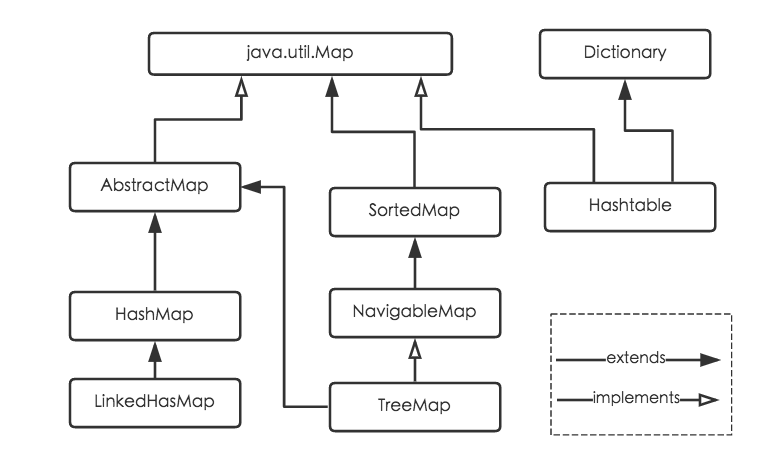

HashMap extends the AbstractMap class and implements the Map, Cloneable, and java.io.Serializable interfaces.

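HashMap is not synchronized, which means it is not thread-safe. Both its keys and its values may be null (there can be at most one null key). In addition, the mappings in a HashMap are not ordered.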
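The null handling described above can be checked with a small sketch. The class name NullKeyDemo and the helper acceptsNullKey are hypothetical names chosen for illustration; the comparison with Hashtable relies on the documented behavior that Hashtable rejects null keys and values with a NullPointerException.

```java
import java.util.HashMap;
import java.util.Hashtable;
import java.util.Map;

final class NullKeyDemo {
    /** Returns true if the map accepts a null key, false if it throws NullPointerException. */
    static boolean acceptsNullKey(Map<String, String> map) {
        try {
            map.put(null, "value");
            return true;
        } catch (NullPointerException e) {
            return false;
        }
    }

    public static void main(String[] args) {
        Map<String, String> hashMap = new HashMap<>();
        hashMap.put(null, "first");
        hashMap.put(null, "second");   // overwrites: only one null key can exist
        hashMap.put("k", null);        // null values are allowed as well

        System.out.println(hashMap.size());            // 2
        System.out.println(hashMap.get(null));         // second
        System.out.println(hashMap.containsKey("k"));  // true, although get("k") returns null

        System.out.println(acceptsNullKey(new HashMap<>()));    // true
        System.out.println(acceptsNullKey(new Hashtable<>()));  // false
    }
}
```

Because get() returns null both for a missing key and for a key mapped to null, containsKey() is the way to tell the two cases apart.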

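Two parameters affect the performance of a HashMap instance: the initial capacity and the load factor. The capacity is the number of buckets in the hash table, and the initial capacity is simply the capacity at the time the table is created. The load factor measures how full the hash table may get before its capacity is automatically increased. When the number of entries exceeds the product of the load factor and the current capacity, the table is rehashed (its internal data structures are rebuilt) so that it has approximately twice as many buckets. The default load factor of 0.75 is a trade-off between time and space costs. A higher load factor reduces the space overhead but increases the lookup cost (reflected in most HashMap operations, including get and put). When choosing the initial capacity, take the expected number of entries and the load factor into account so as to minimize the number of rehash operations. If the initial capacity is greater than the maximum number of entries divided by the load factor, no rehash will ever occur.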
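The sizing rule in the last two sentences can be worked through with numbers. The following is a minimal sketch, assuming 1000 expected entries and the default load factor; the class CapacitySizing and its two helper methods are hypothetical names, and roundUpToPowerOfTwo only imitates the rounding loop of the JDK 1.6 constructor.

```java
import java.util.HashMap;
import java.util.Map;

final class CapacitySizing {
    /** Smallest power of two that is >= n, capped at 1 << 30. */
    static int roundUpToPowerOfTwo(int n) {
        int capacity = 1;
        while (capacity < n && capacity < (1 << 30)) {
            capacity <<= 1;
        }
        return capacity;
    }

    /** An initial capacity that is greater than expectedEntries / loadFactor. */
    static int initialCapacityFor(int expectedEntries, float loadFactor) {
        return (int) (expectedEntries / loadFactor) + 1;
    }

    public static void main(String[] args) {
        int expected = 1000;
        int requested = initialCapacityFor(expected, 0.75f);  // 1334
        int buckets = roundUpToPowerOfTwo(requested);         // 2048
        int threshold = (int) (buckets * 0.75f);              // 1536
        System.out.println(requested + " " + buckets + " " + threshold);

        // With this initial capacity the 1000 puts below stay under the threshold.
        Map<Integer, String> map = new HashMap<>(requested);
        for (int i = 0; i < expected; i++) {
            map.put(i, "v" + i);
        }
        System.out.println(map.size()); // 1000
    }
}
```

With the defaults (capacity 16, load factor 0.75) the threshold is only 12, so a map that is known to grow large benefits from an explicit initial capacity.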

2.2 Reading the HashMap Source

package java.util;

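import java.io.*;

public class HashMap<K,V>

extends AbstractMap<K,V>

implements Map<K,V>, Cloneable, Serializable

{

// The default initial capacity is 16; it must be a power of two.

static final int DEFAULT_INITIAL_CAPACITY = 16;

// The maximum capacity (a power of two, at most 1 << 30); a larger requested capacity is replaced by this value.

static final int MAXIMUM_CAPACITY = 1 << 30;

// The default load factor.

static final float DEFAULT_LOAD_FACTOR = 0.75f;

// The Entry array that stores the data; its length is always a power of two.

// HashMap uses separate chaining: each Entry is the head of a singly linked list.

transient Entry[] table;

// The size of the HashMap: the number of key-value pairs it holds.

transient int size;

// The threshold that decides when the capacity must grow (threshold = capacity * load factor).

int threshold;

// The actual load factor.

final float loadFactor;

// The number of times the HashMap has been structurally modified.

transient volatile int modCount;

// Constructor that takes an initial capacity and a load factor.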

public HashMap(int initialCapacity, float loadFactor) {

if (initialCapacity < 0)

throw new IllegalArgumentException("Illegal initial capacity: " +

initialCapacity);

// The capacity of a HashMap can be at most MAXIMUM_CAPACITY.

if (initialCapacity > MAXIMUM_CAPACITY)

initialCapacity = MAXIMUM_CAPACITY;

if (loadFactor <= 0 || Float.isNaN(loadFactor))

throw new IllegalArgumentException("Illegal load factor: " +

loadFactor);

// Find the smallest power of two that is greater than or equal to initialCapacity.

int capacity = 1;

while (capacity < initialCapacity)

capacity <<= 1;

// Set the load factor.

this.loadFactor = loadFactor;

// Set the threshold: once the number of stored entries reaches it, the capacity is doubled.

threshold = (int)(capacity * loadFactor);

// Create the Entry array that holds the data.

table = new Entry[capacity];

init();

}

// Constructor that takes an initial capacity.

public HashMap(int initialCapacity) {

this(initialCapacity, DEFAULT_LOAD_FACTOR);

}

// Default constructor.

public HashMap() {

// Set the load factor.

this.loadFactor = DEFAULT_LOAD_FACTOR;

// Set the threshold: once the number of stored entries reaches it, the capacity is doubled.

threshold = (int)(DEFAULT_INITIAL_CAPACITY * DEFAULT_LOAD_FACTOR);

// Create the Entry array that holds the data.

table = new Entry[DEFAULT_INITIAL_CAPACITY];

init();

}

// Constructor that copies the mappings of another Map.

public HashMap(Map<? extends K, ? extends V> m) {

this(Math.max((int) (m.size() / DEFAULT_LOAD_FACTOR) + 1,

DEFAULT_INITIAL_CAPACITY), DEFAULT_LOAD_FACTOR);

// Add every mapping in m to this HashMap, one by one.

putAllForCreate(m);

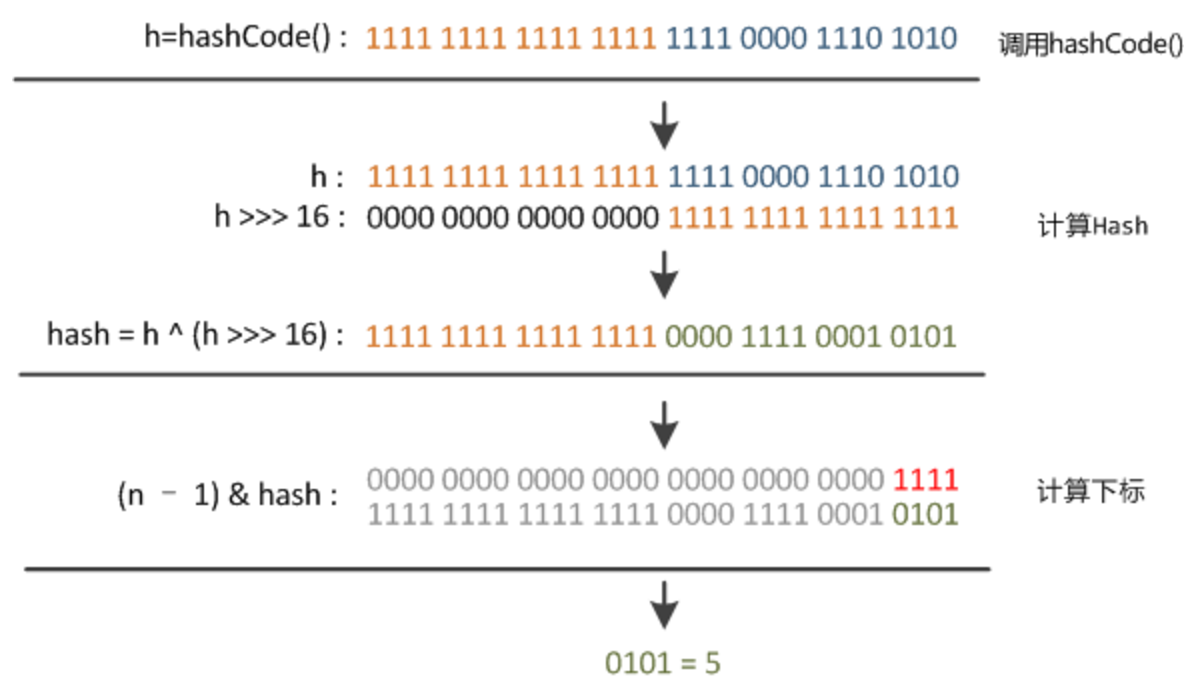

}

static int hash(int h) {

h ^= (h >>> 20) ^ (h >>> 12);

return h ^ (h >>> 7) ^ (h >>> 4);

}

// Returns the bucket index.

// Because length is a power of two, h & (length-1) always yields a value in the range [0, length).

static int indexFor(int h, int length) {

return h & (length-1);

}

public int size() {

return size;

}

public boolean isEmpty() {

return size == 0;

}

// Returns the value mapped to key.

public V get(Object key) {

if (key == null)

return getForNullKey();

// Compute the hash of the key.

int hash = hash(key.hashCode());

// Search the linked list of the bucket for this hash for an entry whose key equals key.

for (Entry<K,V> e = table[indexFor(hash, table.length)];

e != null;

e = e.next) {

Object k;

if (e.hash == hash && ((k = e.key) == key || key.equals(k)))

return e.value;

}

return null;

}

// Returns the value of the entry whose key is null.

// HashMap stores the entry with a null key in the table[0] bucket.

private V getForNullKey() {

for (Entry<K,V> e = table[0]; e != null; e = e.next) {

if (e.key == null)

return e.value;

}

return null;

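}

// Returns whether the HashMap contains key.

public boolean containsKey(Object key) {

return getEntry(key) != null;

}

// Returns the entry whose key is key.

final Entry<K,V> getEntry(Object key) {

// Compute the hash.

// A null key uses hash 0 and therefore lives in table[0]; for any other key, hash() computes the value.

int hash = (key == null) ? 0 : hash(key.hashCode());

// Search the linked list of the bucket for this hash for an entry whose key equals key.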

for (Entry<K,V> e = table[indexFor(hash, table.length)];

e != null;

e = e.next) {

Object k;

if (e.hash == hash &&

((k = e.key) == key || (key != null && key.equals(k))))

return e;

}

return null;

}

// Adds the key-value pair to the HashMap.

public V put(K key, V value) {

// If key is null, store the pair in the table[0] bucket.

if (key == null)

return putForNullKey(value);

// Otherwise compute the hash of the key and add the pair to the linked list of the matching bucket.

int hash = hash(key.hashCode());

int i = indexFor(hash, table.length);

for (Entry<K,V> e = table[i]; e != null; e = e.next) {

Object k;

// If a mapping for key already exists, replace the old value with the new one and return the old value.

if (e.hash == hash && ((k = e.key) == key || key.equals(k))) {

V oldValue = e.value;

e.value = value;

e.recordAccess(this);

return oldValue;

}

}

// If no mapping for key exists yet, add the key-value pair to the table.

modCount++;

addEntry(hash, key, value, i);

return null;

}

// putForNullKey() stores the pair whose key is null in the table[0] bucket.

private V putForNullKey(V value) {

for (Entry<K,V> e = table[0]; e != null; e = e.next) {

if (e.key == null) {

V oldValue = e.value;

e.value = value;

e.recordAccess(this);

return oldValue;

}

}

// Reached only when no entry with a null key exists yet: add a new entry to table[0].

modCount++;

addEntry(0, null, value, 0);

return null;

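}

// Insertion helper used while a HashMap is being built.

// Unlike put(), putForCreate() is an internal method; it is called by the constructors, clone(), and readObject().

// put() is the public method for adding entries to the HashMap.

private void putForCreate(K key, V value) {

int hash = (key == null) ? 0 : hash(key.hashCode());

int i = indexFor(hash, table.length);

// If an entry with this key already exists in the table, replace its value.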

for (Entry<K,V> e = table[i]; e != null; e = e.next) {

Object k;

if (e.hash == hash &&

((k = e.key) == key || (key != null && key.equals(k)))) {

e.value = value;

return;

}

}

// If no entry with this key exists in the table, add the key-value pair to the HashMap.

createEntry(hash, key, value, i);

}

// Adds every mapping in m to the HashMap.

// Called internally by the code paths that build a HashMap.

private void putAllForCreate(Map<? extends K, ? extends V> m) {

// Use an iterator to add the entries one by one.

for (Iterator<? extends Map.Entry<? extends K, ? extends V>> i = m.entrySet().iterator(); i.hasNext(); ) {

Map.Entry<? extends K, ? extends V> e = i.next();

putForCreate(e.getKey(), e.getValue());

}

}

// Resizes the HashMap; newCapacity is the capacity after resizing.

void resize(int newCapacity) {

Entry[] oldTable = table;

int oldCapacity = oldTable.length;

if (oldCapacity == MAXIMUM_CAPACITY) {

threshold = Integer.MAX_VALUE;

return;

}

// Allocate a new table, move every entry of the old table into it,

// and then make the new table the current table.

Entry[] newTable = new Entry[newCapacity];

transfer(newTable);

table = newTable;

threshold = (int)(newCapacity * loadFactor);

}

// Moves every entry of the HashMap into newTable.

void transfer(Entry[] newTable) {

Entry[] src = table;

int newCapacity = newTable.length;

for (int j = 0; j < src.length; j++) {

Entry<K,V> e = src[j];

if (e != null) {

src[j] = null;

do {

Entry<K,V> next = e.next;

int i = indexFor(e.hash, newCapacity);

e.next = newTable[i];

newTable[i] = e;

e = next;

} while (e != null);

}

}

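}

// Adds every mapping in m to the HashMap.

public void putAll(Map<? extends K, ? extends V> m) {

// Nothing to do if m is empty.

int numKeysToBeAdded = m.size();

if (numKeysToBeAdded == 0)

return;

// Check whether the capacity is sufficient.

// If the number of keys to add exceeds the threshold, keep doubling the capacity until it reaches the target capacity.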

if (numKeysToBeAdded > threshold) {

int targetCapacity = (int)(numKeysToBeAdded / loadFactor + 1);

if (targetCapacity > MAXIMUM_CAPACITY)

targetCapacity = MAXIMUM_CAPACITY;

int newCapacity = table.length;

while (newCapacity < targetCapacity)

newCapacity <<= 1;

if (newCapacity > table.length)

resize(newCapacity);

}

// Use an iterator to add the entries of m to the HashMap one by one.

for (Iterator<? extends Map.Entry<? extends K, ? extends V>> i = m.entrySet().iterator(); i.hasNext(); ) {

Map.Entry<? extends K, ? extends V> e = i.next();

put(e.getKey(), e.getValue());

}

}

// Removes the entry whose key is key and returns its value.

public V remove(Object key) {

Entry<K,V> e = removeEntryForKey(key);

return (e == null ? null : e.value);

}

// Removes and returns the entry whose key is key.

final Entry<K,V> removeEntryForKey(Object key) {

// Compute the hash: 0 if key is null, otherwise the result of hash().

int hash = (key == null) ? 0 : hash(key.hashCode());

int i = indexFor(hash, table.length);

Entry<K,V> prev = table[i];

Entry<K,V> e = prev;

// Remove the entry whose key is key from the linked list.

// This is an ordinary node removal from a singly linked list.

while (e != null) {

Entry<K,V> next = e.next;

Object k;

if (e.hash == hash &&

((k = e.key) == key || (key != null && key.equals(k)))) {

modCount++;

size--;

if (prev == e)

table[i] = next;

else

prev.next = next;

e.recordRemoval(this);

return e;

}

prev = e;

e = next;

}

return e;

}

// Removes the given key-value mapping.

final Entry<K,V> removeMapping(Object o) {

if (!(o instanceof Map.Entry))

return null;

Map.Entry<K,V> entry = (Map.Entry<K,V>) o;

Object key = entry.getKey();

int hash = (key == null) ? 0 : hash(key.hashCode());

int i = indexFor(hash, table.length);

Entry<K,V> prev = table[i];

Entry<K,V> e = prev;

// Remove the mapping that equals entry from the linked list.

// This is an ordinary node removal from a singly linked list.

while (e != null) {

Entry<K,V> next = e.next;

if (e.hash == hash && e.equals(entry)) {

modCount++;

size--;

if (prev == e)

table[i] = next;

else

prev.next = next;

e.recordRemoval(this);

return e;

}

prev = e;

e = next;

}

return e;

}

// Clears the HashMap by setting every bucket to null.

public void clear() {

modCount++;

Entry[] tab = table;

for (int i = 0; i < tab.length; i++)

tab[i] = null;

size = 0;

}

// Returns whether the HashMap contains an entry whose value is value.

public boolean containsValue(Object value) {

// If value is null, delegate to containsNullValue().

if (value == null)

return containsNullValue();

// Otherwise scan every bucket for a node whose value equals value.

Entry[] tab = table;

for (int i = 0; i < tab.length ; i++)

for (Entry e = tab[i] ; e != null ; e = e.next)

if (value.equals(e.value))

return true;

return false;

}

// Returns whether the HashMap contains a null value.

private boolean containsNullValue() {

Entry[] tab = table;

for (int i = 0; i < tab.length ; i++)

for (Entry e = tab[i] ; e != null ; e = e.next)

if (e.value == null)

return true;

return false;

}

// Clones the HashMap and returns the copy as an Object.

public Object clone() {

HashMap<K,V> result = null;

try {

result = (HashMap<K,V>)super.clone();

} catch (CloneNotSupportedException e) {

// assert false;

}

result.table = new Entry[table.length];

result.entrySet = null;

result.modCount = 0;

result.size = 0;

result.init();

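// Add all entries to the copy with putAllForCreate().

result.putAllForCreate(this);

return result;

}

// Entry is a node of a singly linked list:

// the chain that HashMap's separate chaining keeps for each bucket.

// It implements the Map.Entry interface, that is, getKey(), getValue(), setValue(V value), equals(Object o), and hashCode().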

static class Entry<K,V> implements Map.Entry<K,V> {

final K key;

V value;

// Pointer to the next node.

Entry<K,V> next;

final int hash;

// Constructor.

// Parameters: the hash (h), the key (k), the value (v), and the next node (n).

Entry(int h, K k, V v, Entry<K,V> n) {

value = v;

next = n;

key = k;

hash = h;

}

public final K getKey() {

return key;

}

public final V getValue() {

return value;

}

public final V setValue(V newValue) {

V oldValue = value;

value = newValue;

return oldValue;

}

// Tests two entries for equality:

// returns true if both the keys and the values are equal,

// and false otherwise.

public final boolean equals(Object o) {

if (!(o instanceof Map.Entry))

return false;

Map.Entry e = (Map.Entry)o;

Object k1 = getKey();

Object k2 = e.getKey();

if (k1 == k2 || (k1 != null && k1.equals(k2))) {

Object v1 = getValue();

Object v2 = e.getValue();

if (v1 == v2 || (v1 != null && v1.equals(v2)))

return true;

}

return false;

}

// Implements hashCode().

public final int hashCode() {

return (key==null ? 0 : key.hashCode()) ^

(value==null ? 0 : value.hashCode());

}

public final String toString() {

return getKey() + "=" + getValue();

}

// Called when put() overwrites the value of an entry that already exists in the HashMap.

// Does nothing here.

void recordAccess(HashMap<K,V> m) {

}

// Called when an entry is removed from the HashMap.

// Does nothing here.

void recordRemoval(HashMap<K,V> m) {

}

}

// Adds a new Entry: inserts the key-value pair into the bucket at index bucketIndex.

void addEntry(int hash, K key, V value, int bucketIndex) {

// Save the current head of the bucket in e.

Entry<K,V> e = table[bucketIndex];

// Make the new Entry the head of the bucket,

// with e as its next node.

table[bucketIndex] = new Entry<K,V>(hash, key, value, e);

// If the size before this insertion is not less than the threshold, double the capacity of the table.

if (size++ >= threshold)

resize(2 * table.length);

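}

// Creates an Entry: inserts the key-value pair into the bucket at index bucketIndex.

// It differs from addEntry() as follows:

// (01) addEntry() is used when adding an Entry may push the size of the HashMap past the threshold.

// For example, after creating a HashMap we keep adding entries with put(),

// and put() adds them through addEntry().

// In that case we cannot know in advance when the size will pass the threshold,

// so addEntry() must be called.

// (02) createEntry() is used when adding an Entry cannot push the size of the HashMap past the threshold.

// For example, the constructor that takes a Map adds all of that Map's entries to the HashMap,

// but the capacity and threshold have been computed beforehand, so adding every entry

// of the Map is known not to exceed the threshold.

// In that case calling createEntry() is enough.

void createEntry(int hash, K key, V value, int bucketIndex) {

// Save the current head of the bucket in e.

Entry<K,V> e = table[bucketIndex];

// Make the new Entry the head of the bucket,

// with e as its next node.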

table[bucketIndex] = new Entry<K,V>(hash, key, value, e);

size++;

}

// HashIterator is the abstract base class of the HashMap iterators; it implements their shared logic.

// It has three subclasses: KeyIterator, ValueIterator, and EntryIterator.

private abstract class HashIterator<E> implements Iterator<E> {

// The next entry to return.

Entry<K,V> next;

// expectedModCount implements the fail-fast mechanism.

int expectedModCount;

// The current bucket index.

int index;

// The current entry.

Entry<K,V> current;

HashIterator() {

expectedModCount = modCount;

if (size > 0) { // advance to first entry

Entry[] t = table;

// Point next at the head of the first non-empty bucket in the table.

// index starts at 0, so the loop scans forward from bucket 0 and stops at the first non-null bucket.

while (index < t.length && (next = t[index++]) == null)

;

}

}

public final boolean hasNext() {

return next != null;

}

// Returns the next entry.

final Entry<K,V> nextEntry() {

if (modCount != expectedModCount)

throw new ConcurrentModificationException();

Entry<K,V> e = next;

if (e == null)

throw new NoSuchElementException();

// Note:

// each bucket holds a singly linked list of entries.

// If the current entry has a successor, next points to that successor;

// otherwise next points to the head of the next non-empty bucket.

if ((next = e.next) == null) {

Entry[] t = table;

while (index < t.length && (next = t[index++]) == null)

;

}

current = e;

return e;

}

// Removes the current entry.

public void remove() {

if (current == null)

throw new IllegalStateException();

if (modCount != expectedModCount)

throw new ConcurrentModificationException();

Object k = current.key;

current = null;

HashMap.this.removeEntryForKey(k);

expectedModCount = modCount;

}

}

// Iterator over the values.

private final class ValueIterator extends HashIterator<V> {

public V next() {

return nextEntry().value;

}

}

// Iterator over the keys.

private final class KeyIterator extends HashIterator<K> {

public K next() {

return nextEntry().getKey();

}

}

// Iterator over the entries.

private final class EntryIterator extends HashIterator<Map.Entry<K,V>> {

public Map.Entry<K,V> next() {

return nextEntry();

}

}

// Returns a key iterator.

Iterator<K> newKeyIterator() {

return new KeyIterator();

}

// Returns a value iterator.

Iterator<V> newValueIterator() {

return new ValueIterator();

}

// Returns an entry iterator.

Iterator<Map.Entry<K,V>> newEntryIterator() {

return new EntryIterator();

}

// The set view of the HashMap's entries.

private transient Set<Map.Entry<K,V>> entrySet = null;

// Returns the set of keys; in fact it returns a KeySet object.

public Set<K> keySet() {

Set<K> ks = keySet;

return (ks != null ? ks : (keySet = new KeySet()));

}

// The set view of the keys.

// KeySet extends AbstractSet, which reflects that the keys contain no duplicates.

private final class KeySet extends AbstractSet<K> {

public Iterator<K> iterator() {

return newKeyIterator();

}

public int size() {

return size;

}

public boolean contains(Object o) {

return containsKey(o);

}

public boolean remove(Object o) {

return HashMap.this.removeEntryForKey(o) != null;

}

public void clear() {

HashMap.this.clear();

}

}

// Returns the collection of values; in fact it returns a Values object.

public Collection<V> values() {

Collection<V> vs = values;

return (vs != null ? vs : (values = new Values()));

}

// The collection view of the values.

// Values extends AbstractCollection, unlike KeySet, which extends AbstractSet:

// values may repeat, because different keys can map to the same value.

private final class Values extends AbstractCollection<V> {

public Iterator<V> iterator() {

return newValueIterator();

}

public int size() {

return size;

}

public boolean contains(Object o) {

return containsValue(o);

}

public void clear() {

HashMap.this.clear();

}

}

// Returns the set of the HashMap's entries.

public Set<Map.Entry<K,V>> entrySet() {

return entrySet0();

}

// Returns the set of the HashMap's entries; in fact it returns an EntrySet object.

private Set<Map.Entry<K,V>> entrySet0() {

Set<Map.Entry<K,V>> es = entrySet;

return es != null ? es : (entrySet = new EntrySet());

}

// The set view of the entries.

// EntrySet extends AbstractSet, which reflects that the set contains no duplicate entries.

private final class EntrySet extends AbstractSet<Map.Entry<K,V>> {

public Iterator<Map.Entry<K,V>> iterator() {

return newEntryIterator();

}

public boolean contains(Object o) {

if (!(o instanceof Map.Entry))

return false;

Map.Entry<K,V> e = (Map.Entry<K,V>) o;

Entry<K,V> candidate = getEntry(e.getKey());

return candidate != null && candidate.equals(e);

}

public boolean remove(Object o) {

return removeMapping(o) != null;

}

public int size() {

return size;

}

public void clear() {

HashMap.this.clear();

}

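}

// The write method for java.io.Serializable.

// Writes the capacity (number of buckets), the size (number of mappings), and every entry of the HashMap to the output stream.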

private void writeObject(java.io.ObjectOutputStream s)

throws IOException

{

Iterator<Map.Entry<K,V>> i =

(size > 0) ? entrySet0().iterator() : null;

// Write out the threshold, loadfactor, and any hidden stuff

s.defaultWriteObject();

// Write out number of buckets

s.writeInt(table.length);

// Write out size (number of Mappings)

s.writeInt(size);

// Write out keys and values (alternating)

if (i != null) {

while (i.hasNext()) {

Map.Entry<K,V> e = i.next();

s.writeObject(e.getKey());

s.writeObject(e.getValue());

}

}

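}

private static final long serialVersionUID = 362498820763181265L;

// The read method for java.io.Serializable: reads the data back in the order it was written.

// Reads the capacity (number of buckets), the size (number of mappings), and every entry of the HashMap in turn.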

private void readObject(java.io.ObjectInputStream s)

throws IOException, ClassNotFoundException

{

// Read in the threshold, loadfactor, and any hidden stuff

s.defaultReadObject();

// Read in number of buckets and allocate the bucket array;

int numBuckets = s.readInt();

table = new Entry[numBuckets];

init(); // Give subclass a chance to do its thing.

// Read in size (number of Mappings)

int size = s.readInt();

// Read the keys and values, and put the mappings in the HashMap

for (int i=0; i<size; i++) {

K key = (K) s.readObject();

V value = (V) s.readObject();

putForCreate(key, value);

}

}

// Returns the capacity of the HashMap (the number of buckets).

int capacity() { return table.length; }

// Returns the load factor of the HashMap.

float loadFactor() { return loadFactor; }

}

HashMap source walkthrough (JDK 1.6)
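The two static helpers in the JDK 1.6 listing above, hash(int) and indexFor(int, int), can be tried in isolation. The sketch below copies them into a hypothetical class named BucketIndexDemo (the class name and the sample keys are illustrative, not part of the JDK) and shows that, for a power-of-two table length, the bit mask gives the same result as a non-negative modulo.

```java
final class BucketIndexDemo {
    /** Supplemental hash, copied from the JDK 1.6 listing. */
    static int hash(int h) {
        h ^= (h >>> 20) ^ (h >>> 12);
        return h ^ (h >>> 7) ^ (h >>> 4);
    }

    /** Bucket index, copied from the listing; length must be a power of two. */
    static int indexFor(int h, int length) {
        return h & (length - 1);
    }

    public static void main(String[] args) {
        int length = 16;
        for (String key : new String[] {"a", "hello", "HashMap"}) {
            int h = hash(key.hashCode());
            int index = indexFor(h, length);
            // For power-of-two lengths the mask equals a non-negative modulo.
            System.out.println(key + " -> bucket " + index
                    + " (floorMod: " + Math.floorMod(h, length) + ")");
        }
        // The mask keeps the index in range even for negative hash values.
        System.out.println(indexFor(-1, length)); // 15
    }
}
```

This is also why the capacity must stay a power of two: with any other length, h & (length-1) would leave some buckets unreachable.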

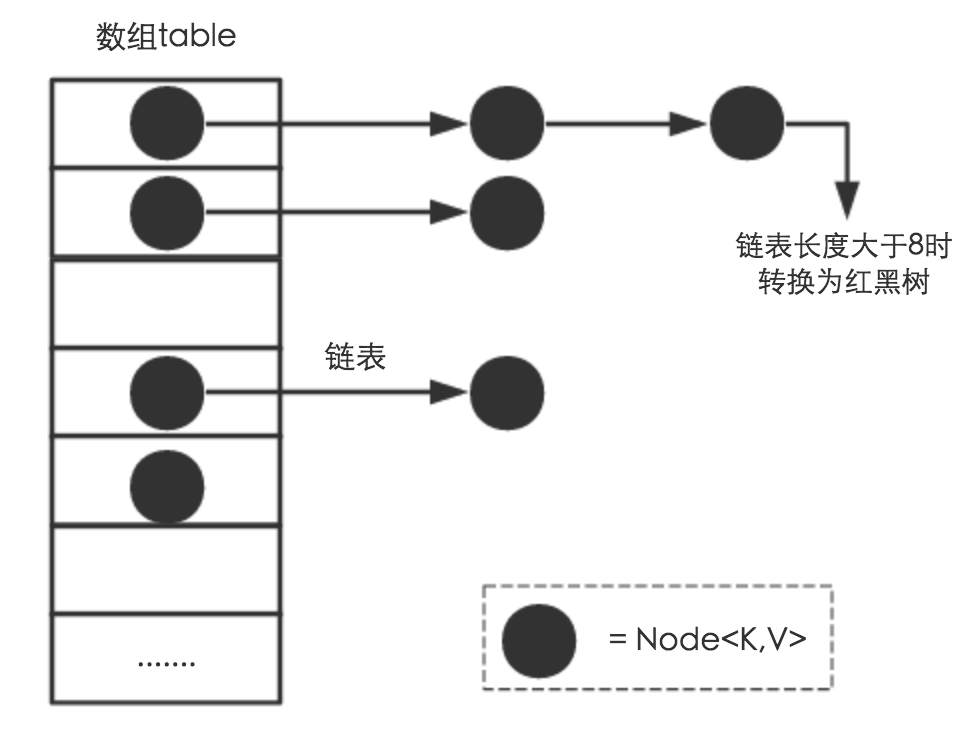

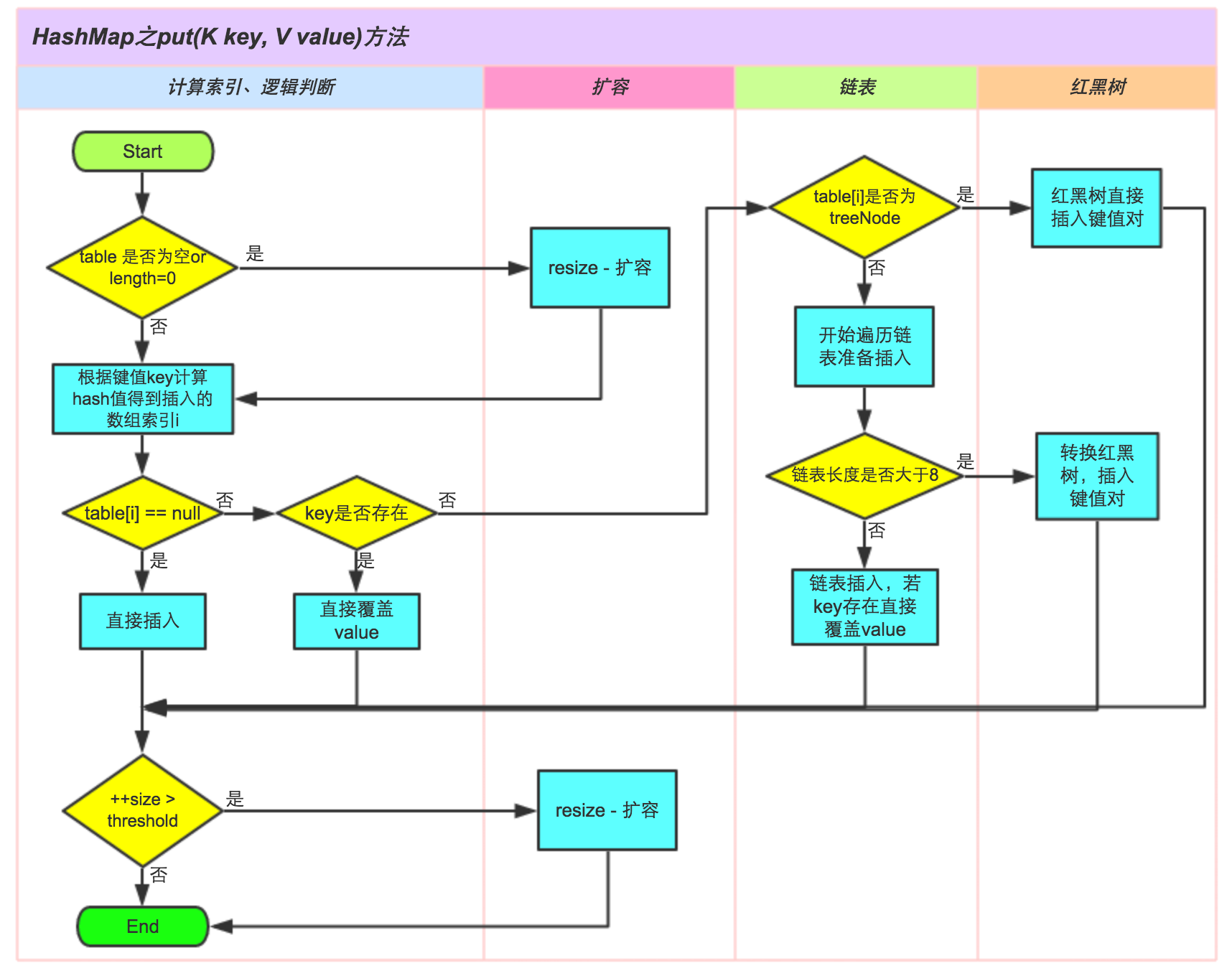

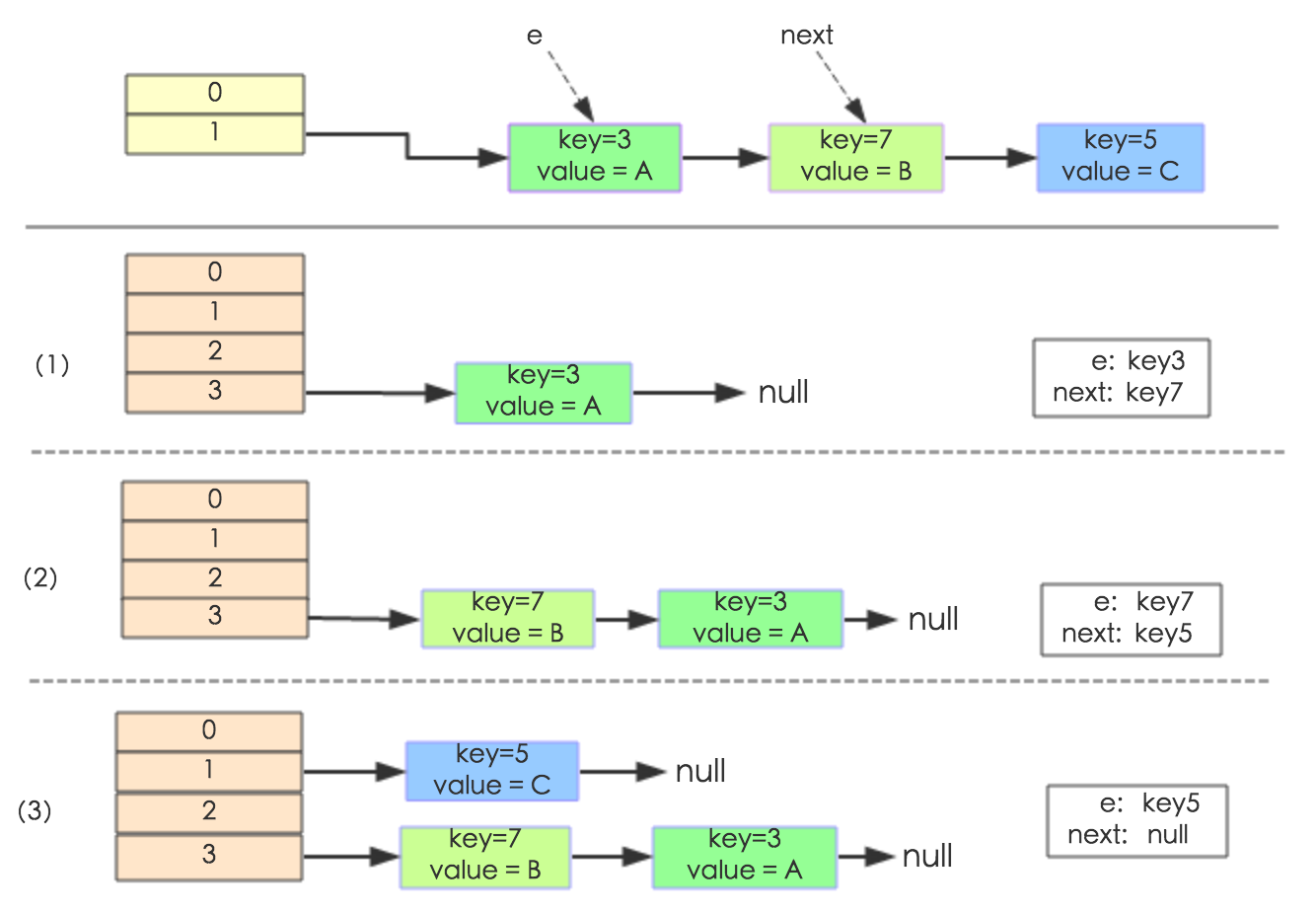

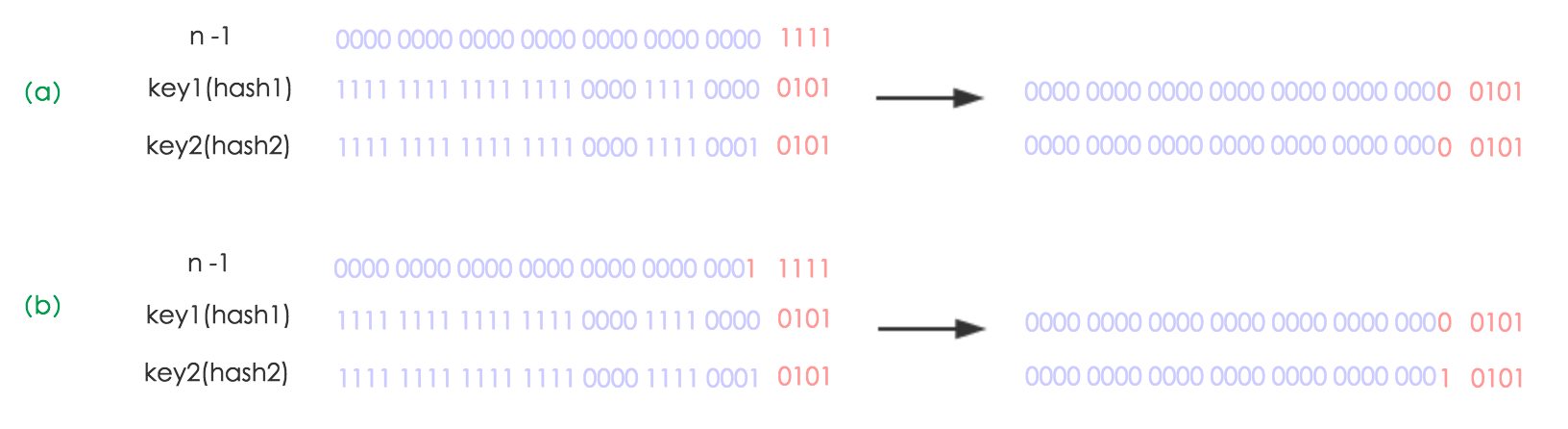

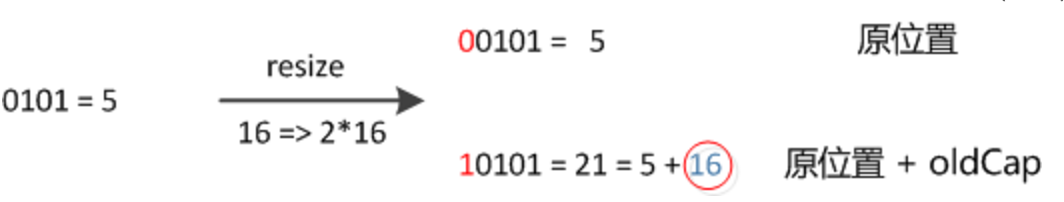

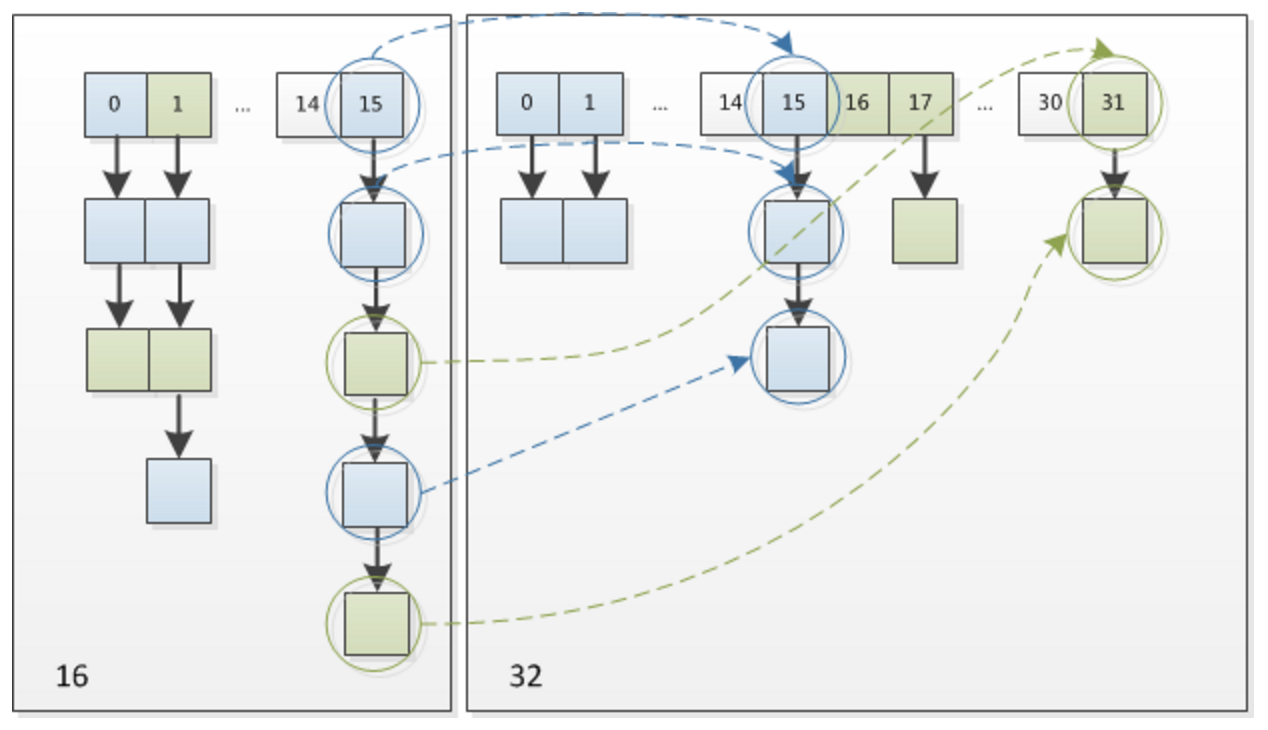
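To make the JDK 1.6 design above easier to follow, here is a heavily simplified sketch of a chained hash table with head insertion and doubling on resize. MiniChainedMap and all of its members are hypothetical names; the sketch omits the supplemental hash, the maximum-capacity check, modCount, removal, and iteration, so it illustrates the idea rather than reproducing the JDK class.

```java
final class MiniChainedMap<K, V> {
    /** One node of a bucket's singly linked list. */
    private static final class Entry<K, V> {
        final int hash;
        final K key;
        V value;
        Entry<K, V> next;

        Entry(int hash, K key, V value, Entry<K, V> next) {
            this.hash = hash;
            this.key = key;
            this.value = value;
            this.next = next;
        }
    }

    private static final float LOAD_FACTOR = 0.75f;

    private Entry<K, V>[] table = allocate(16);
    private int threshold = (int) (16 * LOAD_FACTOR);
    private int size;

    @SuppressWarnings("unchecked")
    private static <K, V> Entry<K, V>[] allocate(int capacity) {
        return (Entry<K, V>[]) new Entry[capacity];
    }

    private static int hashOf(Object key) {
        return key == null ? 0 : key.hashCode(); // a null key always lands in bucket 0
    }

    private static int indexFor(int hash, int length) {
        return hash & (length - 1);
    }

    private static boolean sameKey(Object a, Object b) {
        return a == b || (a != null && a.equals(b));
    }

    V get(Object key) {
        int hash = hashOf(key);
        for (Entry<K, V> e = table[indexFor(hash, table.length)]; e != null; e = e.next) {
            if (e.hash == hash && sameKey(key, e.key)) {
                return e.value;
            }
        }
        return null;
    }

    V put(K key, V value) {
        int hash = hashOf(key);
        int i = indexFor(hash, table.length);
        for (Entry<K, V> e = table[i]; e != null; e = e.next) {
            if (e.hash == hash && sameKey(key, e.key)) {
                V old = e.value;
                e.value = value; // existing key: overwrite and return the old value
                return old;
            }
        }
        table[i] = new Entry<>(hash, key, value, table[i]); // head insertion
        if (size++ >= threshold) {
            resize(2 * table.length);
        }
        return null;
    }

    private void resize(int newCapacity) {
        Entry<K, V>[] newTable = allocate(newCapacity);
        for (Entry<K, V> head : table) {
            Entry<K, V> e = head;
            while (e != null) { // relink every node into its new bucket
                Entry<K, V> next = e.next;
                int i = indexFor(e.hash, newCapacity);
                e.next = newTable[i];
                newTable[i] = e;
                e = next;
            }
        }
        table = newTable;
        threshold = (int) (newCapacity * LOAD_FACTOR);
    }

    int size() { return size; }

    int capacity() { return table.length; }

    public static void main(String[] args) {
        MiniChainedMap<Integer, String> map = new MiniChainedMap<>();
        for (int i = 0; i < 13; i++) {
            map.put(i, "v" + i);
        }
        // The 13th insertion finds size == threshold (12) and doubles the table.
        System.out.println(map.size() + " entries in " + map.capacity() + " buckets"); // 13 entries in 32 buckets
    }
}
```

Like the original, the sketch is not thread-safe: two threads relinking nodes in resize() at the same time can corrupt the chains.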

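Since JDK 1.8, however, HashMap also uses red-black trees: when the singly linked list in a bucket grows beyond 8 nodes and the table has at least 64 buckets, the list is converted into a red-black tree. If the table is smaller than that, it is resized instead.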
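The rule can be written down as a small decision function. This is only a sketch of the decision, not the JDK code: the class TreeifyDecision, the enum Action, and the method onInsert are hypothetical, while the two constants carry the values of TREEIFY_THRESHOLD and MIN_TREEIFY_CAPACITY from the JDK 1.8 source quoted later in this article.

```java
final class TreeifyDecision {
    static final int TREEIFY_THRESHOLD = 8;      // value taken from the JDK 1.8 source
    static final int MIN_TREEIFY_CAPACITY = 64;  // value taken from the JDK 1.8 source

    enum Action { KEEP_LIST, RESIZE_TABLE, TREEIFY }

    /**
     * Decides what happens when a node is appended to a bin.
     *
     * @param nodesAlreadyInBin number of nodes in the bin before the insertion
     * @param tableCapacity     current number of buckets
     */
    static Action onInsert(int nodesAlreadyInBin, int tableCapacity) {
        if (nodesAlreadyInBin < TREEIFY_THRESHOLD) {
            return Action.KEEP_LIST;
        }
        // A small table is resized first; only larger tables convert the bin to a tree.
        return tableCapacity < MIN_TREEIFY_CAPACITY ? Action.RESIZE_TABLE : Action.TREEIFY;
    }

    public static void main(String[] args) {
        System.out.println(onInsert(7, 64)); // KEEP_LIST
        System.out.println(onInsert(8, 16)); // RESIZE_TABLE
        System.out.println(onInsert(8, 64)); // TREEIFY
    }
}
```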

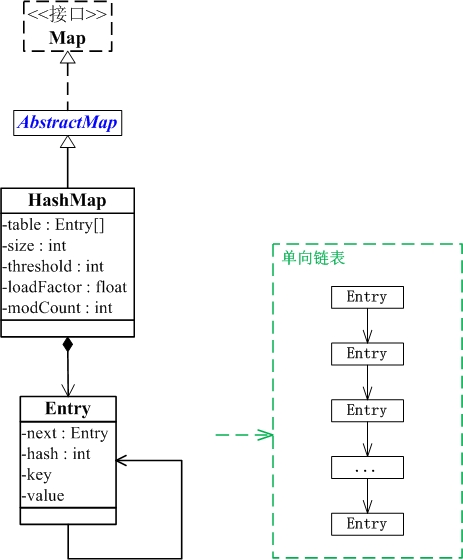

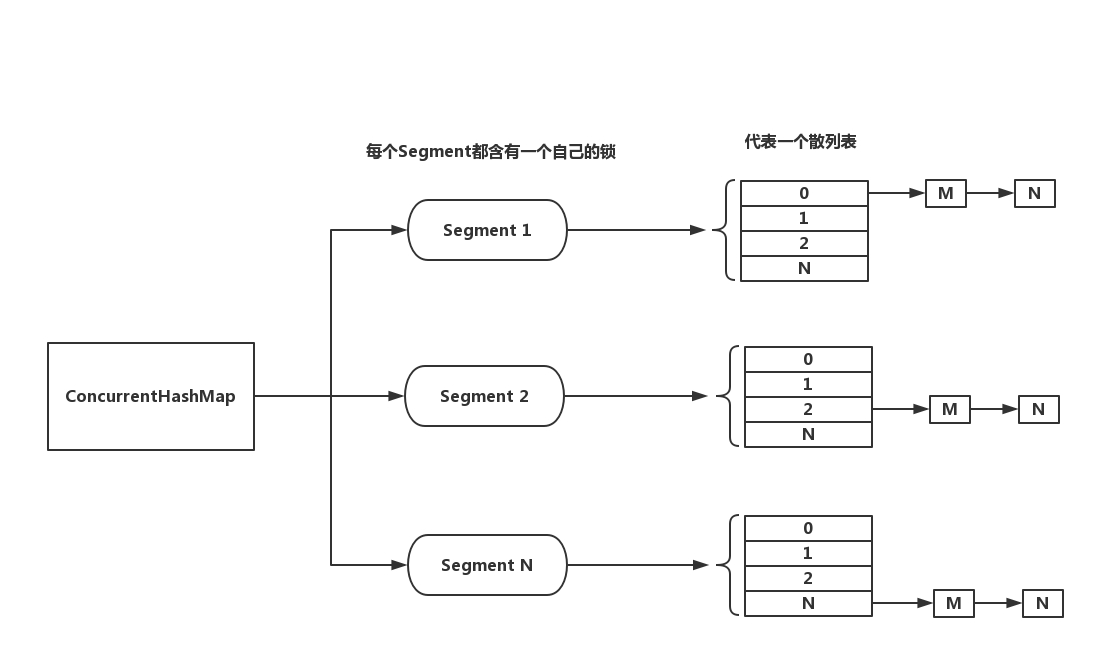

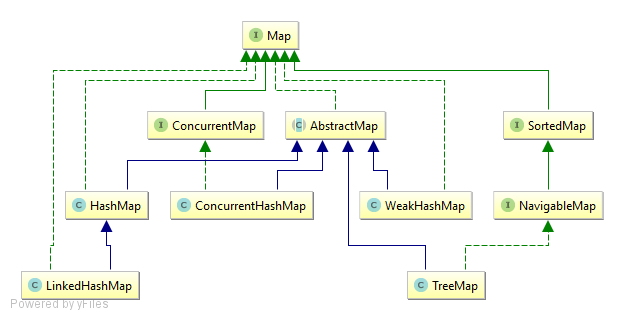

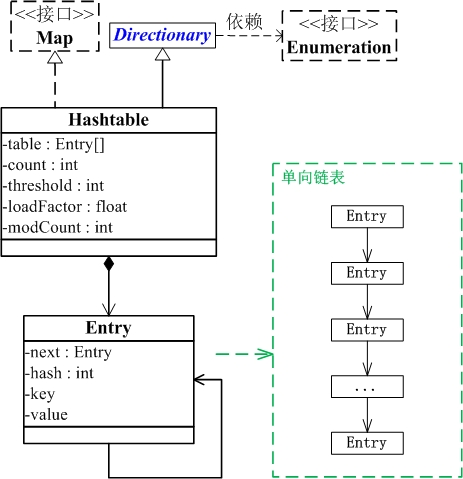

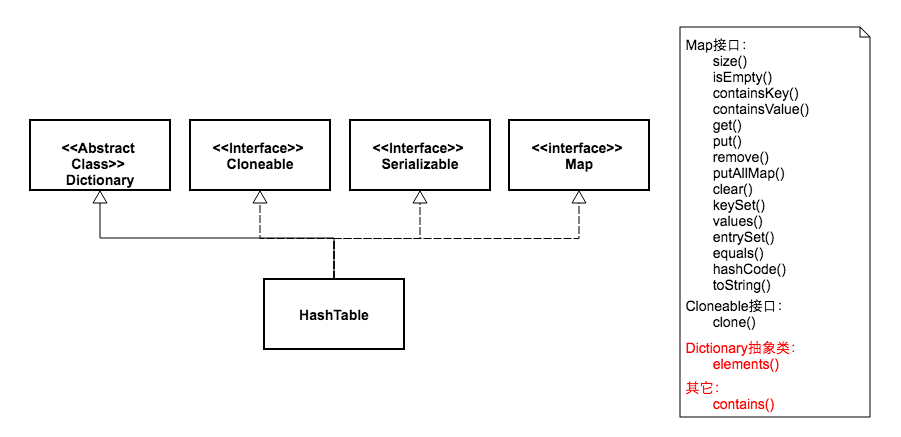

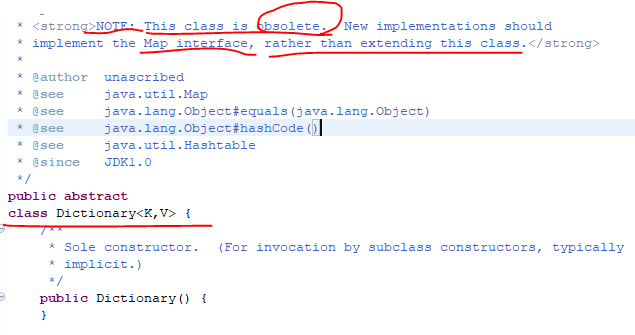

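Let us also look at the relationship between HashMap and Map:

(1) HashMap extends the AbstractMap class and implements the Map interface. Map is the interface for key-value mappings, and AbstractMap provides a skeletal implementation of its common operations.

(2) HashMap is a hash table implemented with separate chaining. Its important fields are table, size, threshold, loadFactor, and modCount.

table is an Entry[] array, and each Entry is the head node of a singly linked list. All key-value pairs of the hash table are stored in this array.

size is the size of the HashMap: the number of key-value pairs it holds.

threshold is the value used to decide whether the capacity of the HashMap must grow.

threshold equals capacity * load factor; once the number of stored entries reaches threshold, the capacity of the HashMap is doubled.

loadFactor is the load factor.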

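modCount is used to implement the fail-fast mechanism. The iterators of the general-purpose collection classes in java.util, such as HashMap and ArrayList, are fail-fast,

whereas the concurrent collections in java.util.concurrent return weakly consistent or snapshot iterators, which are often loosely called fail-safe.

A fail-fast iterator throws ConcurrentModificationException when it detects a modification; the iterators of the concurrent collections do not throw it.

A typical case is several threads operating on the same collection: while one thread is iterating over it,

another thread changes its contents (calling add, remove, clear, and similar methods changes modCount);

the iterating thread then throws ConcurrentModificationException, which is a fail-fast event. A single thread triggers the same exception if it modifies the collection during iteration other than through the iterator's own remove method.

Fail-fast is an error-detection mechanism. It should be used only to detect bugs, because the JDK does not guarantee that it will always trigger.

If a collection must be iterated and modified by several threads, prefer the classes in java.util.concurrent to their counterparts in java.util.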
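The difference can be observed even in a single thread. The following sketch, in which FailFastDemo and its helper methods are hypothetical names, removes a key through the map while iterating over its key set, once with a HashMap and once with a ConcurrentHashMap, and then shows the supported way to remove during iteration.

```java
import java.util.ConcurrentModificationException;
import java.util.HashMap;
import java.util.Iterator;
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;

final class FailFastDemo {
    /** Puts three sample entries into the given map and returns it. */
    static Map<String, Integer> fill(Map<String, Integer> map) {
        map.put("a", 1);
        map.put("b", 2);
        map.put("c", 3);
        return map;
    }

    /** Removes "b" through the map during iteration; returns true if the iterator failed fast. */
    static boolean removeDuringIteration(Map<String, Integer> map) {
        try {
            for (String key : map.keySet()) {
                map.remove("b"); // structural modification behind the iterator's back
            }
            return false;
        } catch (ConcurrentModificationException e) {
            return true;
        }
    }

    /** Removes a key safely by going through the iterator's own remove method. */
    static void removeViaIterator(Map<String, Integer> map, String key) {
        for (Iterator<String> it = map.keySet().iterator(); it.hasNext(); ) {
            if (it.next().equals(key)) {
                it.remove();
            }
        }
    }

    public static void main(String[] args) {
        System.out.println(removeDuringIteration(fill(new HashMap<>())));           // true
        System.out.println(removeDuringIteration(fill(new ConcurrentHashMap<>()))); // false

        Map<String, Integer> map = fill(new HashMap<>());
        removeViaIterator(map, "b");
        System.out.println(map.containsKey("b")); // false
    }
}
```

The exception in the HashMap case is thrown on a best-effort basis, so code should never rely on it for correctness.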

Now let us look at how HashMap is implemented in JDK 1.8:

/*

* Copyright (c) 1997, 2013, Oracle and/or its affiliates. All rights reserved.

* ORACLE PROPRIETARY/CONFIDENTIAL. Use is subject to license terms.

*/

package java.util;

import java.io.IOException;

import java.io.InvalidObjectException;

import java.io.Serializable;

import java.lang.reflect.ParameterizedType;

import java.lang.reflect.Type;

import java.util.function.BiConsumer;

import java.util.function.BiFunction;

import java.util.function.Consumer;

import java.util.function.Function;

/**

* Hash table based implementation of the <tt>Map</tt> interface. This

* implementation provides all of the optional map operations, and permits

* <tt>null</tt> values and the <tt>null</tt> key. (The <tt>HashMap</tt>

* class is roughly equivalent to <tt>Hashtable</tt>, except that it is

* unsynchronized and permits nulls.) This class makes no guarantees as to

* the order of the map; in particular, it does not guarantee that the order

* will remain constant over time.

*

* <p>This implementation provides constant-time performance for the basic

* operations (<tt>get</tt> and <tt>put</tt>), assuming the hash function

* disperses the elements properly among the buckets. Iteration over

* collection views requires time proportional to the "capacity" of the

* <tt>HashMap</tt> instance (the number of buckets) plus its size (the number

* of key-value mappings). Thus, it's very important not to set the initial

* capacity too high (or the load factor too low) if iteration performance is

* important.

*

* <p>An instance of <tt>HashMap</tt> has two parameters that affect its

* performance: <i>initial capacity</i> and <i>load factor</i>. The

* <i>capacity</i> is the number of buckets in the hash table, and the initial

* capacity is simply the capacity at the time the hash table is created. The

* <i>load factor</i> is a measure of how full the hash table is allowed to

* get before its capacity is automatically increased. When the number of

* entries in the hash table exceeds the product of the load factor and the

* current capacity, the hash table is <i>rehashed</i> (that is, internal data

* structures are rebuilt) so that the hash table has approximately twice the

* number of buckets.

*

* <p>As a general rule, the default load factor (.75) offers a good

* tradeoff between time and space costs. Higher values decrease the

* space overhead but increase the lookup cost (reflected in most of

* the operations of the <tt>HashMap</tt> class, including

* <tt>get</tt> and <tt>put</tt>). The expected number of entries in

* the map and its load factor should be taken into account when

* setting its initial capacity, so as to minimize the number of

* rehash operations. If the initial capacity is greater than the

* maximum number of entries divided by the load factor, no rehash

* operations will ever occur.

*

* <p>If many mappings are to be stored in a <tt>HashMap</tt>

* instance, creating it with a sufficiently large capacity will allow

* the mappings to be stored more efficiently than letting it perform

* automatic rehashing as needed to grow the table. Note that using

* many keys with the same {@code hashCode()} is a sure way to slow

* down performance of any hash table. To ameliorate impact, when keys

* are {@link Comparable}, this class may use comparison order among

* keys to help break ties.

*

* <p><strong>Note that this implementation is not synchronized.</strong>

* If multiple threads access a hash map concurrently, and at least one of

* the threads modifies the map structurally, it <i>must</i> be

* synchronized externally. (A structural modification is any operation

* that adds or deletes one or more mappings; merely changing the value

* associated with a key that an instance already contains is not a

* structural modification.) This is typically accomplished by

* synchronizing on some object that naturally encapsulates the map.

*

* If no such object exists, the map should be "wrapped" using the

* {@link Collections#synchronizedMap Collections.synchronizedMap}

* method. This is best done at creation time, to prevent accidental

* unsynchronized access to the map:<pre>

* Map m = Collections.synchronizedMap(new HashMap(...));</pre>

*

* <p>The iterators returned by all of this class's "collection view methods"

* are <i>fail-fast</i>: if the map is structurally modified at any time after

* the iterator is created, in any way except through the iterator's own

* <tt>remove</tt> method, the iterator will throw a

* {@link ConcurrentModificationException}. Thus, in the face of concurrent

* modification, the iterator fails quickly and cleanly, rather than risking

* arbitrary, non-deterministic behavior at an undetermined time in the

* future.

*

* <p>Note that the fail-fast behavior of an iterator cannot be guaranteed

* as it is, generally speaking, impossible to make any hard guarantees in the

* presence of unsynchronized concurrent modification. Fail-fast iterators

* throw <tt>ConcurrentModificationException</tt> on a best-effort basis.

* Therefore, it would be wrong to write a program that depended on this

* exception for its correctness: <i>the fail-fast behavior of iterators

* should be used only to detect bugs.</i>

*

* <p>This class is a member of the

* <a href="{@docRoot}/../technotes/guides/collections/index.html">

* Java Collections Framework</a>.

*

* @param <K> the type of keys maintained by this map

* @param <V> the type of mapped values

*

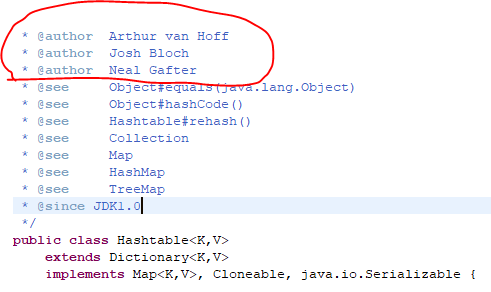

* @author Doug Lea

* @author Josh Bloch

* @author Arthur van Hoff

* @author Neal Gafter

* @see Object#hashCode()

* @see Collection

* @see Map

* @see TreeMap

* @see Hashtable

* @since 1.2

*/

public class HashMap<K,V> extends AbstractMap<K,V>

implements Map<K,V>, Cloneable, Serializable {

private static final long serialVersionUID = 362498820763181265L;

/*

* Implementation notes.

*

* This map usually acts as a binned (bucketed) hash table, but

* when bins get too large, they are transformed into bins of

* TreeNodes, each structured similarly to those in

* java.util.TreeMap. Most methods try to use normal bins, but

* relay to TreeNode methods when applicable (simply by checking

* instanceof a node). Bins of TreeNodes may be traversed and

* used like any others, but additionally support faster lookup

* when overpopulated. However, since the vast majority of bins in

* normal use are not overpopulated, checking for existence of

* tree bins may be delayed in the course of table methods.

*

* Tree bins (i.e., bins whose elements are all TreeNodes) are

* ordered primarily by hashCode, but in the case of ties, if two

* elements are of the same "class C implements Comparable<C>",

* type then their compareTo method is used for ordering. (We

* conservatively check generic types via reflection to validate

* this -- see method comparableClassFor). The added complexity

* of tree bins is worthwhile in providing worst-case O(log n)

* operations when keys either have distinct hashes or are

* orderable, Thus, performance degrades gracefully under

* accidental or malicious usages in which hashCode() methods

* return values that are poorly distributed, as well as those in

* which many keys share a hashCode, so long as they are also

* Comparable. (If neither of these apply, we may waste about a

* factor of two in time and space compared to taking no

* precautions. But the only known cases stem from poor user

* programming practices that are already so slow that this makes

* little difference.)

*

* Because TreeNodes are about twice the size of regular nodes, we

* use them only when bins contain enough nodes to warrant use

* (see TREEIFY_THRESHOLD). And when they become too small (due to

* removal or resizing) they are converted back to plain bins. In

* usages with well-distributed user hashCodes, tree bins are

* rarely used. Ideally, under random hashCodes, the frequency of

* nodes in bins follows a Poisson distribution

* (http://en.wikipedia.org/wiki/Poisson_distribution) with a

* parameter of about 0.5 on average for the default resizing

* threshold of 0.75, although with a large variance because of

* resizing granularity. Ignoring variance, the expected

* occurrences of list size k are (exp(-0.5) * pow(0.5, k) /

* factorial(k)). The first values are:

*

* 0: 0.60653066

* 1: 0.30326533

* 2: 0.07581633

* 3: 0.01263606

* 4: 0.00157952

* 5: 0.00015795

* 6: 0.00001316

* 7: 0.00000094

* 8: 0.00000006

* more: less than 1 in ten million

*

* The root of a tree bin is normally its first node. However,

* sometimes (currently only upon Iterator.remove), the root might

* be elsewhere, but can be recovered following parent links

* (method TreeNode.root()).

*

* All applicable internal methods accept a hash code as an

* argument (as normally supplied from a public method), allowing

* them to call each other without recomputing user hashCodes.

* Most internal methods also accept a "tab" argument, that is

* normally the current table, but may be a new or old one when

* resizing or converting.

*

* When bin lists are treeified, split, or untreeified, we keep

* them in the same relative access/traversal order (i.e., field

* Node.next) to better preserve locality, and to slightly

* simplify handling of splits and traversals that invoke

* iterator.remove. When using comparators on insertion, to keep a

* total ordering (or as close as is required here) across

* rebalancings, we compare classes and identityHashCodes as

* tie-breakers.

*

* The use and transitions among plain vs tree modes is

* complicated by the existence of subclass LinkedHashMap. See

* below for hook methods defined to be invoked upon insertion,

* removal and access that allow LinkedHashMap internals to

* otherwise remain independent of these mechanics. (This also

* requires that a map instance be passed to some utility methods

* that may create new nodes.)

*

* The concurrent-programming-like SSA-based coding style helps

* avoid aliasing errors amid all of the twisty pointer operations.

*/

/**

* The default initial capacity - MUST be a power of two.

*/

static final int DEFAULT_INITIAL_CAPACITY = 1 << 4; // aka 16

/**

* The maximum capacity, used if a higher value is implicitly specified

* by either of the constructors with arguments.

* MUST be a power of two <= 1<<30.

*/

static final int MAXIMUM_CAPACITY = 1 << 30;

/**

* The load factor used when none specified in constructor.

*/

static final float DEFAULT_LOAD_FACTOR = 0.75f;

/**

* The bin count threshold for using a tree rather than list for a

* bin. Bins are converted to trees when adding an element to a

* bin with at least this many nodes. The value must be greater

* than 2 and should be at least 8 to mesh with assumptions in

* tree removal about conversion back to plain bins upon

* shrinkage.

*/

static final int TREEIFY_THRESHOLD = 8;

/**

* The bin count threshold for untreeifying a (split) bin during a

* resize operation. Should be less than TREEIFY_THRESHOLD, and at

* most 6 to mesh with shrinkage detection under removal.

*/

static final int UNTREEIFY_THRESHOLD = 6;

/**

* The smallest table capacity for which bins may be treeified.

* (Otherwise the table is resized if too many nodes in a bin.)

* Should be at least 4 * TREEIFY_THRESHOLD to avoid conflicts

* between resizing and treeification thresholds.

*/

static final int MIN_TREEIFY_CAPACITY = 64;

/**

* Basic hash bin node, used for most entries. (See below for

* TreeNode subclass, and in LinkedHashMap for its Entry subclass.)

*/

static class Node<K,V> implements Map.Entry<K,V> {

final int hash;

final K key;

V value;

Node<K,V> next;

Node(int hash, K key, V value, Node<K,V> next) {

this.hash = hash;

this.key = key;

this.value = value;

this.next = next;

}

public final K getKey() { return key; }

public final V getValue() { return value; }

public final String toString() { return key + "=" + value; }

public final int hashCode() {

return Objects.hashCode(key) ^ Objects.hashCode(value);

}

public final V setValue(V newValue) {

V oldValue = value;

value = newValue;

return oldValue;

}

public final boolean equals(Object o) {

if (o == this)

return true;

if (o instanceof Map.Entry) {

Map.Entry<?,?> e = (Map.Entry<?,?>)o;

if (Objects.equals(key, e.getKey()) &&

Objects.equals(value, e.getValue()))

return true;

}

return false;

}

}

/* ---------------- Static utilities -------------- */

/**

* Computes key.hashCode() and spreads (XORs) higher bits of hash

* to lower. Because the table uses power-of-two masking, sets of

* hashes that vary only in bits above the current mask will

* always collide. (Among known examples are sets of Float keys

* holding consecutive whole numbers in small tables.) So we

* apply a transform that spreads the impact of higher bits

* downward. There is a tradeoff between speed, utility, and

* quality of bit-spreading. Because many common sets of hashes

* are already reasonably distributed (so don't benefit from

* spreading), and because we use trees to handle large sets of

* collisions in bins, we just XOR some shifted bits in the

* cheapest possible way to reduce systematic lossage, as well as

* to incorporate impact of the highest bits that would otherwise

* never be used in index calculations because of table bounds.

*/

static final int hash(Object key) {

int h;

return (key == null) ? 0 : (h = key.hashCode()) ^ (h >>> 16);

}

/**

* Returns x's Class if it is of the form "class C implements

* Comparable<C>", else null.

*/

static Class<?> comparableClassFor(Object x) {

if (x instanceof Comparable) {

Class<?> c; Type[] ts, as; Type t; ParameterizedType p;

if ((c = x.getClass()) == String.class) // bypass checks

return c;

if ((ts = c.getGenericInterfaces()) != null) {

for (int i = 0; i < ts.length; ++i) {

if (((t = ts[i]) instanceof ParameterizedType) &&

((p = (ParameterizedType)t).getRawType() ==

Comparable.class) &&

(as = p.getActualTypeArguments()) != null &&

as.length == 1 && as[0] == c) // type arg is c

return c;

}

}

}

return null;

}

/**

* Returns k.compareTo(x) if x matches kc (k's screened comparable

* class), else 0.

*/

@SuppressWarnings({"rawtypes","unchecked"}) // for cast to Comparable

static int compareComparables(Class<?> kc, Object k, Object x) {

return (x == null || x.getClass() != kc ? 0 :

((Comparable)k).compareTo(x));

}

/**

* Returns a power of two size for the given target capacity.

*/

static final int tableSizeFor(int cap) {

int n = cap - 1;

n |= n >>> 1;

n |= n >>> 2;

n |= n >>> 4;

n |= n >>> 8;

n |= n >>> 16;

return (n < 0) ? 1 : (n >= MAXIMUM_CAPACITY) ? MAXIMUM_CAPACITY : n + 1;

}

/* ---------------- Fields -------------- */

/**

* The table, initialized on first use, and resized as

* necessary. When allocated, length is always a power of two.

* (We also tolerate length zero in some operations to allow

* bootstrapping mechanics that are currently not needed.)

*/

transient Node<K,V>[] table;

/**

* Holds cached entrySet(). Note that AbstractMap fields are used

* for keySet() and values().

*/

transient Set<Map.Entry<K,V>> entrySet;

/**

* The number of key-value mappings contained in this map.

*/

transient int size;

/**

* The number of times this HashMap has been structurally modified

* Structural modifications are those that change the number of mappings in

* the HashMap or otherwise modify its internal structure (e.g.,

* rehash). This field is used to make iterators on Collection-views of

* the HashMap fail-fast. (See ConcurrentModificationException).

*/

transient int modCount;

/**

* The next size value at which to resize (capacity * load factor).

*

* @serial

*/

// (The javadoc description is true upon serialization.

// Additionally, if the table array has not been allocated, this

// field holds the initial array capacity, or zero signifying

// DEFAULT_INITIAL_CAPACITY.)

int threshold;

/**

* The load factor for the hash table.

*

* @serial

*/

final float loadFactor;

/* ---------------- Public operations -------------- */

/**

* Constructs an empty <tt>HashMap</tt> with the specified initial

* capacity and load factor.

*

* @param initialCapacity the initial capacity

* @param loadFactor the load factor

* @throws IllegalArgumentException if the initial capacity is negative

* or the load factor is nonpositive

*/

public HashMap(int initialCapacity, float loadFactor) {

if (initialCapacity < 0)

throw new IllegalArgumentException("Illegal initial capacity: " +

initialCapacity);

if (initialCapacity > MAXIMUM_CAPACITY)

initialCapacity = MAXIMUM_CAPACITY;

if (loadFactor <= 0 || Float.isNaN(loadFactor))

throw new IllegalArgumentException("Illegal load factor: " +

loadFactor);

this.loadFactor = loadFactor;

this.threshold = tableSizeFor(initialCapacity);

}

/**

* Constructs an empty <tt>HashMap</tt> with the specified initial

* capacity and the default load factor (0.75).

*

* @param initialCapacity the initial capacity.

* @throws IllegalArgumentException if the initial capacity is negative.

*/

public HashMap(int initialCapacity) {

this(initialCapacity, DEFAULT_LOAD_FACTOR);

}

/**

* Constructs an empty <tt>HashMap</tt> with the default initial capacity

* (16) and the default load factor (0.75).

*/

public HashMap() {

this.loadFactor = DEFAULT_LOAD_FACTOR; // all other fields defaulted

}

/**

* Constructs a new <tt>HashMap</tt> with the same mappings as the

* specified <tt>Map</tt>. The <tt>HashMap</tt> is created with

* default load factor (0.75) and an initial capacity sufficient to

* hold the mappings in the specified <tt>Map</tt>.

*

* @param m the map whose mappings are to be placed in this map

* @throws NullPointerException if the specified map is null

*/

public HashMap(Map<? extends K, ? extends V> m) {

this.loadFactor = DEFAULT_LOAD_FACTOR;

putMapEntries(m, false);

}

/**

* Implements Map.putAll and Map constructor

*

* @param m the map

* @param evict false when initially constructing this map, else

* true (relayed to method afterNodeInsertion).

*/

final void putMapEntries(Map<? extends K, ? extends V> m, boolean evict) {

int s = m.size();

if (s > 0) {

if (table == null) { // pre-size

float ft = ((float)s / loadFactor) + 1.0F;

int t = ((ft < (float)MAXIMUM_CAPACITY) ?

(int)ft : MAXIMUM_CAPACITY);

if (t > threshold)

threshold = tableSizeFor(t);

}

else if (s > threshold)

resize();

for (Map.Entry<? extends K, ? extends V> e : m.entrySet()) {

K key = e.getKey();

V value = e.getValue();

putVal(hash(key), key, value, false, evict);

}

}

}

/**

* Returns the number of key-value mappings in this map.

*

* @return the number of key-value mappings in this map

*/

public int size() {

return size;

}

/**

* Returns <tt>true</tt> if this map contains no key-value mappings.

*

* @return <tt>true</tt> if this map contains no key-value mappings

*/

public boolean isEmpty() {

return size == 0;

}

/**

* Returns the value to which the specified key is mapped,

* or {@code null} if this map contains no mapping for the key.

*

* <p>More formally, if this map contains a mapping from a key

* {@code k} to a value {@code v} such that {@code (key==null ? k==null :

* key.equals(k))}, then this method returns {@code v}; otherwise

* it returns {@code null}. (There can be at most one such mapping.)

*

* <p>A return value of {@code null} does not <i>necessarily</i>

* indicate that the map contains no mapping for the key; it's also

* possible that the map explicitly maps the key to {@code null}.

* The {@link #containsKey containsKey} operation may be used to

* distinguish these two cases.

*

* @see #put(Object, Object)

*/

public V get(Object key) {

Node<K,V> e;

return (e = getNode(hash(key), key)) == null ? null : e.value;

}

/**

* Implements Map.get and related methods

*

* @param hash hash for key

* @param key the key

* @return the node, or null if none

*/

final Node<K,V> getNode(int hash, Object key) {

Node<K,V>[] tab; Node<K,V> first, e; int n; K k;

if ((tab = table) != null && (n = tab.length) > 0 &&

(first = tab[(n - 1) & hash]) != null) {

if (first.hash == hash && // always check first node

((k = first.key) == key || (key != null && key.equals(k))))

return first;

if ((e = first.next) != null) {

if (first instanceof TreeNode)

return ((TreeNode<K,V>)first).getTreeNode(hash, key);

do {

if (e.hash == hash &&

((k = e.key) == key || (key != null && key.equals(k))))

return e;

} while ((e = e.next) != null);

}

}

return null;

}

/**

* Returns <tt>true</tt> if this map contains a mapping for the

* specified key.

*

* @param key The key whose presence in this map is to be tested

* @return <tt>true</tt> if this map contains a mapping for the specified

* key.

*/

public boolean containsKey(Object key) {

return getNode(hash(key), key) != null;

}

/**

* Associates the specified value with the specified key in this map.

* If the map previously contained a mapping for the key, the old

* value is replaced.

*

* @param key key with which the specified value is to be associated

* @param value value to be associated with the specified key

* @return the previous value associated with <tt>key</tt>, or

* <tt>null</tt> if there was no mapping for <tt>key</tt>.

* (A <tt>null</tt> return can also indicate that the map

* previously associated <tt>null</tt> with <tt>key</tt>.)

*/

public V put(K key, V value) {

return putVal(hash(key), key, value, false, true);

}

/**

* Implements Map.put and related methods

*

* @param hash hash for key

* @param key the key

* @param value the value to put

* @param onlyIfAbsent if true, don't change existing value

* @param evict if false, the table is in creation mode.

* @return previous value, or null if none

*/

final V putVal(int hash, K key, V value, boolean onlyIfAbsent,

boolean evict) {

Node<K,V>[] tab; Node<K,V> p; int n, i;

if ((tab = table) == null || (n = tab.length) == 0)

n = (tab = resize()).length;

if ((p = tab[i = (n - 1) & hash]) == null)

tab[i] = newNode(hash, key, value, null);

else {

Node<K,V> e; K k;

if (p.hash == hash &&

((k = p.key) == key || (key != null && key.equals(k))))

e = p;

else if (p instanceof TreeNode)

e = ((TreeNode<K,V>)p).putTreeVal(this, tab, hash, key, value);

else {

for (int binCount = 0; ; ++binCount) {

if ((e = p.next) == null) {

p.next = newNode(hash, key, value, null);

if (binCount >= TREEIFY_THRESHOLD - 1) // -1 for 1st

treeifyBin(tab, hash);

break;

}

if (e.hash == hash &&

((k = e.key) == key || (key != null && key.equals(k))))

break;

p = e;

}

}

if (e != null) { // existing mapping for key

V oldValue = e.value;

if (!onlyIfAbsent || oldValue == null)

e.value = value;

afterNodeAccess(e);

return oldValue;

}

}

++modCount;

if (++size > threshold)

resize();

afterNodeInsertion(evict);

return null;

}

/**

* Initializes or doubles table size. If null, allocates in

* accord with initial capacity target held in field threshold.

* Otherwise, because we are using power-of-two expansion, the

* elements from each bin must either stay at same index, or move

* with a power of two offset in the new table.

*

* @return the table

*/

final Node<K,V>[] resize() {

Node<K,V>[] oldTab = table;

int oldCap = (oldTab == null) ? 0 : oldTab.length;

int oldThr = threshold;

int newCap, newThr = 0;

if (oldCap > 0) {

if (oldCap >= MAXIMUM_CAPACITY) {

threshold = Integer.MAX_VALUE;

return oldTab;

}

else if ((newCap = oldCap << 1) < MAXIMUM_CAPACITY &&

oldCap >= DEFAULT_INITIAL_CAPACITY)

newThr = oldThr << 1; // double threshold

}

else if (oldThr > 0) // initial capacity was placed in threshold

newCap = oldThr;

else { // zero initial threshold signifies using defaults

newCap = DEFAULT_INITIAL_CAPACITY;

newThr = (int)(DEFAULT_LOAD_FACTOR * DEFAULT_INITIAL_CAPACITY);

}

if (newThr == 0) {

float ft = (float)newCap * loadFactor;

newThr = (newCap < MAXIMUM_CAPACITY && ft < (float)MAXIMUM_CAPACITY ?

(int)ft : Integer.MAX_VALUE);

}

threshold = newThr;

@SuppressWarnings({"rawtypes","unchecked"})

Node<K,V>[] newTab = (Node<K,V>[])new Node[newCap];

table = newTab;

if (oldTab != null) {

for (int j = 0; j < oldCap; ++j) {

Node<K,V> e;

if ((e = oldTab[j]) != null) {

oldTab[j] = null;

if (e.next == null)

newTab[e.hash & (newCap - 1)] = e;

else if (e instanceof TreeNode)

((TreeNode<K,V>)e).split(this, newTab, j, oldCap);

else { // preserve order

Node<K,V> loHead = null, loTail = null;

Node<K,V> hiHead = null, hiTail = null;

Node<K,V> next;

do {

next = e.next;

if ((e.hash & oldCap) == 0) {

if (loTail == null)

loHead = e;

else

loTail.next = e;

loTail = e;

}

else {

if (hiTail == null)

hiHead = e;

else

hiTail.next = e;

hiTail = e;

}

} while ((e = next) != null);

if (loTail != null) {

loTail.next = null;

newTab[j] = loHead;

}

if (hiTail != null) {

hiTail.next = null;

newTab[j + oldCap] = hiHead;

}

}

}

}

}

return newTab;

}

/**

* Replaces all linked nodes in bin at index for given hash unless

* table is too small, in which case resizes instead.

*/

final void treeifyBin(Node<K,V>[] tab, int hash) {

int n, index; Node<K,V> e;

if (tab == null || (n = tab.length) < MIN_TREEIFY_CAPACITY)

resize();

else if ((e = tab[index = (n - 1) & hash]) != null) {

TreeNode<K,V> hd = null, tl = null;

do {

TreeNode<K,V> p = replacementTreeNode(e, null);

if (tl == null)

hd = p;

else {

p.prev = tl;

tl.next = p;

}

tl = p;

} while ((e = e.next) != null);

if ((tab[index] = hd) != null)

hd.treeify(tab);

}

}

/**

* Copies all of the mappings from the specified map to this map.

* These mappings will replace any mappings that this map had for

* any of the keys currently in the specified map.

*

* @param m mappings to be stored in this map

* @throws NullPointerException if the specified map is null

*/

public void putAll(Map<? extends K, ? extends V> m) {

putMapEntries(m, true);

}

/**

* Removes the mapping for the specified key from this map if present.

*

* @param key key whose mapping is to be removed from the map

* @return the previous value associated with <tt>key</tt>, or

* <tt>null</tt> if there was no mapping for <tt>key</tt>.

* (A <tt>null</tt> return can also indicate that the map

* previously associated <tt>null</tt> with <tt>key</tt>.)

*/

public V remove(Object key) {

Node<K,V> e;

return (e = removeNode(hash(key), key, null, false, true)) == null ?

null : e.value;

}

/**

* Implements Map.remove and related methods

*

* @param hash hash for key

* @param key the key

* @param value the value to match if matchValue, else ignored

* @param matchValue if true only remove if value is equal

* @param movable if false do not move other nodes while removing

* @return the node, or null if none

*/

final Node<K,V> removeNode(int hash, Object key, Object value,

boolean matchValue, boolean movable) {

Node<K,V>[] tab; Node<K,V> p; int n, index;

if ((tab = table) != null && (n = tab.length) > 0 &&

(p = tab[index = (n - 1) & hash]) != null) {

Node<K,V> node = null, e; K k; V v;

if (p.hash == hash &&

((k = p.key) == key || (key != null && key.equals(k))))

node = p;

else if ((e = p.next) != null) {

if (p instanceof TreeNode)

node = ((TreeNode<K,V>)p).getTreeNode(hash, key);

else {

do {

if (e.hash == hash &&

((k = e.key) == key ||

(key != null && key.equals(k)))) {

node = e;

break;

}

p = e;

} while ((e = e.next) != null);

}

}

if (node != null && (!matchValue || (v = node.value) == value ||

(value != null && value.equals(v)))) {

if (node instanceof TreeNode)

((TreeNode<K,V>)node).removeTreeNode(this, tab, movable);

else if (node == p)

tab[index] = node.next;

else

p.next = node.next;

++modCount;

--size;

afterNodeRemoval(node);

return node;

}

}

return null;

}

/**

* Removes all of the mappings from this map.

* The map will be empty after this call returns.

*/

public void clear() {

Node<K,V>[] tab;

modCount++;

if ((tab = table) != null && size > 0) {

size = 0;

for (int i = 0; i < tab.length; ++i)

tab[i] = null;

}

}

/**

* Returns <tt>true</tt> if this map maps one or more keys to the

* specified value.

*

* @param value value whose presence in this map is to be tested

* @return <tt>true</tt> if this map maps one or more keys to the

* specified value

*/

public boolean containsValue(Object value) {

Node<K,V>[] tab; V v;

if ((tab = table) != null && size > 0) {

for (int i = 0; i < tab.length; ++i) {

for (Node<K,V> e = tab[i]; e != null; e = e.next) {

if ((v = e.value) == value ||

(value != null && value.equals(v)))

return true;

}

}

}

return false;

}

/**

* Returns a {@link Set} view of the keys contained in this map.

* The set is backed by the map, so changes to the map are

* reflected in the set, and vice-versa. If the map is modified

* while an iteration over the set is in progress (except through

* the iterator's own <tt>remove</tt> operation), the results of

* the iteration are undefined. The set supports element removal,

* which removes the corresponding mapping from the map, via the

* <tt>Iterator.remove</tt>, <tt>Set.remove</tt>,

* <tt>removeAll</tt>, <tt>retainAll</tt>, and <tt>clear</tt>

* operations. It does not support the <tt>add</tt> or <tt>addAll</tt>

* operations.

*

* @return a set view of the keys contained in this map

*/

public Set<K> keySet() {

Set<K> ks;

return (ks = keySet) == null ? (keySet = new KeySet()) : ks;

}

final class KeySet extends AbstractSet<K> {

public final int size() { return size; }

public final void clear() { HashMap.this.clear(); }

public final Iterator<K> iterator() { return new KeyIterator(); }

public final boolean contains(Object o) { return containsKey(o); }

public final boolean remove(Object key) {

return removeNode(hash(key), key, null, false, true) != null;

}

public final Spliterator<K> spliterator() {

return new KeySpliterator<>(HashMap.this, 0, -1, 0, 0);

}

public final void forEach(Consumer<? super K> action) {

Node<K,V>[] tab;

if (action == null)

throw new NullPointerException();

if (size > 0 && (tab = table) != null) {

int mc = modCount;

for (int i = 0; i < tab.length; ++i) {

for (Node<K,V> e = tab[i]; e != null; e = e.next)

action.accept(e.key);

}

if (modCount != mc)

throw new ConcurrentModificationException();

}

}

}

/**

* Returns a {@link Collection} view of the values contained in this map.

* The collection is backed by the map, so changes to the map are

* reflected in the collection, and vice-versa. If the map is

* modified while an iteration over the collection is in progress

* (except through the iterator's own <tt>remove</tt> operation),

* the results of the iteration are undefined. The collection

* supports element removal, which removes the corresponding

* mapping from the map, via the <tt>Iterator.remove</tt>,

* <tt>Collection.remove</tt>, <tt>removeAll</tt>,

* <tt>retainAll</tt> and <tt>clear</tt> operations. It does not

* support the <tt>add</tt> or <tt>addAll</tt> operations.

*

* @return a view of the values contained in this map

*/

public Collection<V> values() {

Collection<V> vs;

return (vs = values) == null ? (values = new Values()) : vs;

}

final class Values extends AbstractCollection<V> {

public final int size() { return size; }

public final void clear() { HashMap.this.clear(); }

public final Iterator<V> iterator() { return new ValueIterator(); }

public final boolean contains(Object o) { return containsValue(o); }

public final Spliterator<V> spliterator() {

return new ValueSpliterator<>(HashMap.this, 0, -1, 0, 0);

}

public final void forEach(Consumer<? super V> action) {

Node<K,V>[] tab;

if (action == null)

throw new NullPointerException();

if (size > 0 && (tab = table) != null) {

int mc = modCount;

for (int i = 0; i < tab.length; ++i) {

for (Node<K,V> e = tab[i]; e != null; e = e.next)

action.accept(e.value);

}

if (modCount != mc)

throw new ConcurrentModificationException();

}

}

}

/**

* Returns a {@link Set} view of the mappings contained in this map.

* The set is backed by the map, so changes to the map are

* reflected in the set, and vice-versa. If the map is modified

* while an iteration over the set is in progress (except through

* the iterator's own <tt>remove</tt> operation, or through the

* <tt>setValue</tt> operation on a map entry returned by the

* iterator) the results of the iteration are undefined. The set

* supports element removal, which removes the corresponding

* mapping from the map, via the <tt>Iterator.remove</tt>,

* <tt>Set.remove</tt>, <tt>removeAll</tt>, <tt>retainAll</tt> and

* <tt>clear</tt> operations. It does not support the

* <tt>add</tt> or <tt>addAll</tt> operations.

*

* @return a set view of the mappings contained in this map

*/

public Set<Map.Entry<K,V>> entrySet() {

Set<Map.Entry<K,V>> es;

return (es = entrySet) == null ? (entrySet = new EntrySet()) : es;

}

final class EntrySet extends AbstractSet<Map.Entry<K,V>> {

public final int size() { return size; }

public final void clear() { HashMap.this.clear(); }

public final Iterator<Map.Entry<K,V>> iterator() {

return new EntryIterator();

}

public final boolean contains(Object o) {

if (!(o instanceof Map.Entry))

return false;

Map.Entry<?,?> e = (Map.Entry<?,?>) o;

Object key = e.getKey();

Node<K,V> candidate = getNode(hash(key), key);

return candidate != null && candidate.equals(e);

}

public final boolean remove(Object o) {

if (o instanceof Map.Entry) {

Map.Entry<?,?> e = (Map.Entry<?,?>) o;

Object key = e.getKey();

Object value = e.getValue();

return removeNode(hash(key), key, value, true, true) != null;

}

return false;

}

public final Spliterator<Map.Entry<K,V>> spliterator() {

return new EntrySpliterator<>(HashMap.this, 0, -1, 0, 0);

}

public final void forEach(Consumer<? super Map.Entry<K,V>> action) {

Node<K,V>[] tab;

if (action == null)

throw new NullPointerException();

if (size > 0 && (tab = table) != null) {

int mc = modCount;

for (int i = 0; i < tab.length; ++i) {

for (Node<K,V> e = tab[i]; e != null; e = e.next)

action.accept(e);

}

if (modCount != mc)

throw new ConcurrentModificationException();

}

}

}

// Overrides of JDK8 Map extension methods

@Override

public V getOrDefault(Object key, V defaultValue) {

Node<K,V> e;

return (e = getNode(hash(key), key)) == null ? defaultValue : e.value;

}

@Override

public V putIfAbsent(K key, V value) {

return putVal(hash(key), key, value, true, true);

}

@Override

public boolean remove(Object key, Object value) {

return removeNode(hash(key), key, value, true, true) != null;

}

@Override

public boolean replace(K key, V oldValue, V newValue) {

Node<K,V> e; V v;

if ((e = getNode(hash(key), key)) != null &&

((v = e.value) == oldValue || (v != null && v.equals(oldValue)))) {

e.value = newValue;

afterNodeAccess(e);

return true;

}

return false;

}

@Override

public V replace(K key, V value) {

Node<K,V> e;

if ((e = getNode(hash(key), key)) != null) {

V oldValue = e.value;

e.value = value;

afterNodeAccess(e);

return oldValue;

}

return null;

}

@Override

public V computeIfAbsent(K key,

Function<? super K, ? extends V> mappingFunction) {

if (mappingFunction == null)

throw new NullPointerException();

int hash = hash(key);

Node<K,V>[] tab; Node<K,V> first; int n, i;

int binCount = 0;

TreeNode<K,V> t = null;

Node<K,V> old = null;

if (size > threshold || (tab = table) == null ||

(n = tab.length) == 0)

n = (tab = resize()).length;

if ((first = tab[i = (n - 1) & hash]) != null) {

if (first instanceof TreeNode)

old = (t = (TreeNode<K,V>)first).getTreeNode(hash, key);

else {

Node<K,V> e = first; K k;

do {

if (e.hash == hash &&

((k = e.key) == key || (key != null && key.equals(k)))) {

old = e;

break;

}

++binCount;

} while ((e = e.next) != null);

}

V oldValue;

if (old != null && (oldValue = old.value) != null) {

afterNodeAccess(old);

return oldValue;

}

}

V v = mappingFunction.apply(key);

if (v == null) {

return null;

} else if (old != null) {

old.value = v;

afterNodeAccess(old);

return v;

}

else if (t != null)

t.putTreeVal(this, tab, hash, key, v);

else {

tab[i] = newNode(hash, key, v, first);

if (binCount >= TREEIFY_THRESHOLD - 1)

treeifyBin(tab, hash);

}

++modCount;

++size;

afterNodeInsertion(true);

return v;

}

public V computeIfPresent(K key,

BiFunction<? super K, ? super V, ? extends V> remappingFunction) {

if (remappingFunction == null)

throw new NullPointerException();

Node<K,V> e; V oldValue;

int hash = hash(key);

if ((e = getNode(hash, key)) != null &&

(oldValue = e.value) != null) {

V v = remappingFunction.apply(key, oldValue);

if (v != null) {

e.value = v;

afterNodeAccess(e);

return v;

}

else

removeNode(hash, key, null, false, true);

}

return null;

}

@Override

public V compute(K key,

BiFunction<? super K, ? super V, ? extends V> remappingFunction) {

if (remappingFunction == null)

throw new NullPointerException();

int hash = hash(key);

Node<K,V>[] tab; Node<K,V> first; int n, i;

int binCount = 0;

TreeNode<K,V> t = null;

Node<K,V> old = null;

if (size > threshold || (tab = table) == null ||

(n = tab.length) == 0)

n = (tab = resize()).length;

if ((first = tab[i = (n - 1) & hash]) != null) {

if (first instanceof TreeNode)

old = (t = (TreeNode<K,V>)first).getTreeNode(hash, key);

else {

Node<K,V> e = first; K k;

do {

if (e.hash == hash &&

((k = e.key) == key || (key != null && key.equals(k)))) {

old = e;

break;

}

++binCount;

} while ((e = e.next) != null);

}

}

V oldValue = (old == null) ? null : old.value;

V v = remappingFunction.apply(key, oldValue);

if (old != null) {

if (v != null) {

old.value = v;

afterNodeAccess(old);

}

else

removeNode(hash, key, null, false, true);

}

else if (v != null) {

if (t != null)

t.putTreeVal(this, tab, hash, key, v);

else {

tab[i] = newNode(hash, key, v, first);

if (binCount >= TREEIFY_THRESHOLD - 1)

treeifyBin(tab, hash);

}

++modCount;

++size;

afterNodeInsertion(true);

}

return v;

}

@Override

public V merge(K key, V value,

BiFunction<? super V, ? super V, ? extends V> remappingFunction) {

if (value == null)

throw new NullPointerException();

if (remappingFunction == null)

throw new NullPointerException();

int hash = hash(key);

Node<K,V>[] tab; Node<K,V> first; int n, i;

int binCount = 0;

TreeNode<K,V> t = null;

Node<K,V> old = null;

if (size > threshold || (tab = table) == null ||

(n = tab.length) == 0)

n = (tab = resize()).length;

if ((first = tab[i = (n - 1) & hash]) != null) {

if (first instanceof TreeNode)

old = (t = (TreeNode<K,V>)first).getTreeNode(hash, key);

else {

Node<K,V> e = first; K k;

do {

if (e.hash == hash &&

((k = e.key) == key || (key != null && key.equals(k)))) {

old = e;

break;

}

++binCount;

} while ((e = e.next) != null);

}

}

if (old != null) {

V v;

if (old.value != null)

v = remappingFunction.apply(old.value, value);

else

v = value;

if (v != null) {

old.value = v;

afterNodeAccess(old);

}

else

removeNode(hash, key, null, false, true);

return v;

}

if (value != null) {

if (t != null)

t.putTreeVal(this, tab, hash, key, value);

else {

tab[i] = newNode(hash, key, value, first);

if (binCount >= TREEIFY_THRESHOLD - 1)

treeifyBin(tab, hash);

}

++modCount;

++size;

afterNodeInsertion(true);

}

return value;

}

@Override

public void forEach(BiConsumer<? super K, ? super V> action) {

Node<K,V>[] tab;

if (action == null)

throw new NullPointerException();

if (size > 0 && (tab = table) != null) {

int mc = modCount;

for (int i = 0; i < tab.length; ++i) {

for (Node<K,V> e = tab[i]; e != null; e = e.next)

action.accept(e.key, e.value);

}

if (modCount != mc)

throw new ConcurrentModificationException();

}

}

@Override

public void replaceAll(BiFunction<? super K, ? super V, ? extends V> function) {

Node<K,V>[] tab;

if (function == null)

throw new NullPointerException();

if (size > 0 && (tab = table) != null) {

int mc = modCount;

for (int i = 0; i < tab.length; ++i) {

for (Node<K,V> e = tab[i]; e != null; e = e.next) {

e.value = function.apply(e.key, e.value);

}

}

if (modCount != mc)

throw new ConcurrentModificationException();

}

}

/* ------------------------------------------------------------ */
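The JDK 8 extension methods overridden in the listing above (`getOrDefault`, `putIfAbsent`, `computeIfAbsent`, `compute`, `merge`) are easier to follow with a small program. The following is only a minimal sketch: the class name `MapExtensionDemo`, its helper methods, and the sample words are made up for illustration and are not part of the JDK source.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

public class MapExtensionDemo {
    // Counts word occurrences with merge(): a new key is stored with value 1,
    // an existing key has its count combined with 1 through Integer::sum.
    static Map<String, Integer> countWords(String[] words) {
        Map<String, Integer> counts = new HashMap<>();
        for (String w : words) {
            counts.merge(w, 1, Integer::sum);
        }
        return counts;
    }

    // Groups words by length with computeIfAbsent(): the list is created
    // only the first time a given length is seen.
    static Map<Integer, List<String>> groupByLength(String[] words) {
        Map<Integer, List<String>> groups = new HashMap<>();
        for (String w : words) {
            groups.computeIfAbsent(w.length(), k -> new ArrayList<>()).add(w);
        }
        return groups;
    }

    public static void main(String[] args) {
        String[] words = {"a", "bb", "a", "cc", "a"};
        Map<String, Integer> counts = countWords(words);
        System.out.println(counts.get("a"));               // 3
        System.out.println(counts.getOrDefault("zz", 0));  // 0

        // When the remapping function returns null, compute() removes the mapping.
        counts.compute("bb", (k, v) -> null);
        System.out.println(counts.containsKey("bb"));      // false

        // putIfAbsent() leaves an existing non-null value untouched.
        counts.putIfAbsent("a", 100);
        System.out.println(counts.get("a"));               // 3

        System.out.println(groupByLength(words).get(2));   // [bb, cc]
    }
}
```

Note that `compute` and `merge` treat a `null` result as "remove the mapping", which matches the `removeNode` branches visible in the listing.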

// Cloning and serialization

/**

* Returns a shallow copy of this <tt>HashMap</tt> instance: the keys and

* values themselves are not cloned.

*

* @return a shallow copy of this map

*/

@SuppressWarnings("unchecked")

@Override

public Object clone() {

HashMap<K,V> result;

try {

result = (HashMap<K,V>)super.clone();

} catch (CloneNotSupportedException e) {

// this shouldn't happen, since we are Cloneable

throw new InternalError(e);

}

result.reinitialize();

result.putMapEntries(this, false);

return result;

}

// These methods are also used when serializing HashSets

final float loadFactor() { return loadFactor; }

final int capacity() {

return (table != null) ? table.length :

(threshold > 0) ? threshold :

DEFAULT_INITIAL_CAPACITY;

}

/**

* Save the state of the <tt>HashMap</tt> instance to a stream (i.e.,

* serialize it).

*

* @serialData The <i>capacity</i> of the HashMap (the length of the

* bucket array) is emitted (int), followed by the

* <i>size</i> (an int, the number of key-value

* mappings), followed by the key (Object) and value (Object)

* for each key-value mapping. The key-value mappings are

* emitted in no particular order.

*/

private void writeObject(java.io.ObjectOutputStream s)

throws IOException {

int buckets = capacity();

// Write out the threshold, loadfactor, and any hidden stuff

s.defaultWriteObject();

s.writeInt(buckets);

s.writeInt(size);

internalWriteEntries(s);

}

/**

* Reconstitute the {@code HashMap} instance from a stream (i.e.,

* deserialize it).

*/

private void readObject(java.io.ObjectInputStream s)

throws IOException, ClassNotFoundException {

// Read in the threshold (ignored), loadfactor, and any hidden stuff

s.defaultReadObject();

reinitialize();

if (loadFactor <= 0 || Float.isNaN(loadFactor))

throw new InvalidObjectException("Illegal load factor: " +

loadFactor);

s.readInt(); // Read and ignore number of buckets

int mappings = s.readInt(); // Read number of mappings (size)

if (mappings < 0)

throw new InvalidObjectException("Illegal mappings count: " +

mappings);

else if (mappings > 0) { // (if zero, use defaults)

// Size the table using given load factor only if within

// range of 0.25...4.0

float lf = Math.min(Math.max(0.25f, loadFactor), 4.0f);

float fc = (float)mappings / lf + 1.0f;

int cap = ((fc < DEFAULT_INITIAL_CAPACITY) ?

DEFAULT_INITIAL_CAPACITY :

(fc >= MAXIMUM_CAPACITY) ?

MAXIMUM_CAPACITY :

tableSizeFor((int)fc));

float ft = (float)cap * lf;

threshold = ((cap < MAXIMUM_CAPACITY && ft < MAXIMUM_CAPACITY) ?

(int)ft : Integer.MAX_VALUE);

@SuppressWarnings({"rawtypes","unchecked"})

Node<K,V>[] tab = (Node<K,V>[])new Node[cap];

table = tab;

// Read the keys and values, and put the mappings in the HashMap

for (int i = 0; i < mappings; i++) {

@SuppressWarnings("unchecked")

K key = (K) s.readObject();

@SuppressWarnings("unchecked")

V value = (V) s.readObject();

putVal(hash(key), key, value, false, false);

}

}

}

/* ------------------------------------------------------------ */
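To make the cloning and serialization code above more concrete, here is a hedged sketch that exercises `clone()` and a serialization round trip from the outside. The class `CloneAndSerializeDemo` and its `roundTrip` helper are hypothetical names introduced for this example; only the standard `java.io` and `java.util` calls are real.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.ObjectInputStream;
import java.io.ObjectOutputStream;
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;

public class CloneAndSerializeDemo {
    // Serializes the map into a byte array and deserializes it again.
    @SuppressWarnings("unchecked")
    static <K, V> HashMap<K, V> roundTrip(HashMap<K, V> map)
            throws IOException, ClassNotFoundException {
        ByteArrayOutputStream bytes = new ByteArrayOutputStream();
        try (ObjectOutputStream out = new ObjectOutputStream(bytes)) {
            out.writeObject(map);
        }
        try (ObjectInputStream in = new ObjectInputStream(
                new ByteArrayInputStream(bytes.toByteArray()))) {
            return (HashMap<K, V>) in.readObject();
        }
    }

    public static void main(String[] args) throws Exception {
        HashMap<String, List<Integer>> original = new HashMap<>();
        List<Integer> values = new ArrayList<>();
        values.add(1);
        original.put("k", values);

        // clone() is shallow: the copy gets its own table,
        // but keys and values are the same objects as in the original.
        @SuppressWarnings("unchecked")
        HashMap<String, List<Integer>> copy =
                (HashMap<String, List<Integer>>) original.clone();
        copy.put("other", new ArrayList<>());   // not visible in the original
        copy.get("k").add(2);                   // shared list, visible in the original
        System.out.println(original.containsKey("other")); // false
        System.out.println(original.get("k"));             // [1, 2]

        // Deserialization rebuilds the table and creates new value objects.
        HashMap<String, List<Integer>> restored = roundTrip(original);
        System.out.println(restored.equals(original));              // true
        System.out.println(restored.get("k") != original.get("k")); // true
    }
}
```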

// iterators

abstract class HashIterator {

Node<K,V> next; // next entry to return

Node<K,V> current; // current entry

int expectedModCount; // for fast-fail

int index; // current slot

HashIterator() {

expectedModCount = modCount;

Node<K,V>[] t = table;

current = next = null;

index = 0;

if (t != null && size > 0) { // advance to first entry

do {} while (index < t.length && (next = t[index++]) == null);

}

}

public final boolean hasNext() {

return next != null;

}

final Node<K,V> nextNode() {

Node<K,V>[] t;

Node<K,V> e = next;

if (modCount != expectedModCount)

throw new ConcurrentModificationException();

if (e == null)

throw new NoSuchElementException();

if ((next = (current = e).next) == null && (t = table) != null) {

do {} while (index < t.length && (next = t[index++]) == null);

}

return e;

}

public final void remove() {

Node<K,V> p = current;

if (p == null)

throw new IllegalStateException();

if (modCount != expectedModCount)

throw new ConcurrentModificationException();

current = null;

K key = p.key;

removeNode(hash(key), key, null, false, false);

// Resync so this iterator's own removal does not trip the fail-fast check.

expectedModCount = modCount;

}

}

final class KeyIterator extends HashIterator

implements Iterator<K> {

public final K next() { return nextNode().key; }

}

final class ValueIterator extends HashIterator

implements Iterator<V> {

public final V next() { return nextNode().value; }

}

final class EntryIterator extends HashIterator

implements Iterator<Map.Entry<K,V>> {

public final Map.Entry<K,V> next() { return nextNode(); }

}

/* ------------------------------------------------------------ */

// spliterators

static class HashMapSpliterator<K,V> {

final HashMap<K,V> map;

Node<K,V> current; // current node

int index; // current index, modified on advance/split

int fence; // one past last index

int est; // size estimate

int expectedModCount; // for comodification checks

HashMapSpliterator(HashMap<K,V> m, int origin,

int fence, int est,

int expectedModCount) {

this.map = m;

this.index = origin;

this.fence = fence;

this.est = est;

this.expectedModCount = expectedModCount;

}

final int getFence() { // initialize fence and size on first use

int hi;

if ((hi = fence) < 0) {

HashMap<K,V> m = map;

est = m.size;

expectedModCount = m.modCount;

Node<K,V>[] tab = m.table;

hi = fence = (tab == null) ? 0 : tab.length;

}

return hi;

}

public final long estimateSize() {

getFence(); // force init

return (long) est;

}

}

static final class KeySpliterator<K,V>

extends HashMapSpliterator<K,V>

implements Spliterator<K> {

KeySpliterator(HashMap<K,V> m, int origin, int fence, int est,

int expectedModCount) {

super(m, origin, fence, est, expectedModCount);

}

public KeySpliterator<K,V> trySplit() {

int hi = getFence(), lo = index, mid = (lo + hi) >>> 1;

return (lo >= mid || current != null) ? null :

new KeySpliterator<>(map, lo, index = mid, est >>>= 1,

expectedModCount);

}

public void forEachRemaining(Consumer<? super K> action) {

int i, hi, mc;

if (action == null)

throw new NullPointerException();

HashMap<K,V> m = map;

Node<K,V>[] tab = m.table;

if ((hi = fence) < 0) {

mc = expectedModCount = m.modCount;

hi = fence = (tab == null) ? 0 : tab.length;

}

else

mc = expectedModCount;

if (tab != null && tab.length >= hi &&

(i = index) >= 0 && (i < (index = hi) || current != null)) {

Node<K,V> p = current;

current = null;

do {

if (p == null)

p = tab[i++];

else {

action.accept(p.key);

p = p.next;

}

} while (p != null || i < hi);

if (m.modCount != mc)

throw new ConcurrentModificationException();

}

}

public boolean tryAdvance(Consumer<? super K> action) {

int hi;

if (action == null)

throw new NullPointerException();

Node<K,V>[] tab = map.table;

if (tab != null && tab.length >= (hi = getFence()) && index >= 0) {

while (current != null || index < hi) {

if (current == null)

current = tab[index++];

else {

K k = current.key;

current = current.next;

action.accept(k);

if (map.modCount != expectedModCount)

throw new ConcurrentModificationException();

return true;

}

}

}

return false;

}

public int characteristics() {

return (fence < 0 || est == map.size ? Spliterator.SIZED : 0) |

Spliterator.DISTINCT;

}

}

static final class ValueSpliterator<K,V>

extends HashMapSpliterator<K,V>

implements Spliterator<V> {

ValueSpliterator(HashMap<K,V> m, int origin, int fence, int est,

int expectedModCount) {

super(m, origin, fence, est, expectedModCount);

}

public ValueSpliterator<K,V> trySplit() {

int hi = getFence(), lo = index, mid = (lo + hi) >>> 1;

return (lo >= mid || current != null) ? null :

new ValueSpliterator<>(map, lo, index = mid, est >>>= 1,

expectedModCount);

}

public void forEachRemaining(Consumer<? super V> action) {

int i, hi, mc;

if (action == null)

throw new NullPointerException();

HashMap<K,V> m = map;

Node<K,V>[] tab = m.table;

if ((hi = fence) < 0) {

mc = expectedModCount = m.modCount;

hi = fence = (tab == null) ? 0 : tab.length;

}

else

mc = expectedModCount;

if (tab != null && tab.length >= hi &&

(i = index) >= 0 && (i < (index = hi) || current != null)) {

Node<K,V> p = current;

current = null;

do {

if (p == null)

p = tab[i++];

else {

action.accept(p.value);

p = p.next;

}

} while (p != null || i < hi);

if (m.modCount != mc)

throw new ConcurrentModificationException();

}

}

public boolean tryAdvance(Consumer<? super V> action) {

int hi;

if (action == null)

throw new NullPointerException();

Node<K,V>[] tab = map.table;

if (tab != null && tab.length >= (hi = getFence()) && index >= 0) {

while (current != null || index < hi) {

if (current == null)

current = tab[index++];

else {

V v = current.value;

current = current.next;

action.accept(v);

if (map.modCount != expectedModCount)

throw new ConcurrentModificationException();

return true;

}

}

}

return false;

}

public int characteristics() {

// Values may repeat, so DISTINCT is not reported here (unlike KeySpliterator).

return (fence < 0 || est == map.size ? Spliterator.SIZED : 0);

}

}

static final class EntrySpliterator<K,V>

extends HashMapSpliterator<K,V>