ResNet实战

# Resnet.py

#!/usr/bin/env python

# -*- coding:utf-8 -*-

import tensorflow as tf

from tensorflow import keras

from tensorflow.keras import layers, Sequential

class BasicBlock(layers.Layer):

def __init__(self, filter_num, stride=1):

super(BasicBlock, self).__init__()

self.conv1 = layers.Conv2D(filter_num, (3, 3), strides=stride, padding='same')

self.bn1 = layers.BatchNormalization()

self.relu = layers.Activation('relu')

self.conv2 = layers.Conv2D(filter_num, (3, 3), strides=1, padding='same')

self.bn2 = layers.BatchNormalization()

if stride != 1:

self.downsample = Sequential()

self.downsample.add(layers.Conv2D(filter_num, (1, 1), strides=stride))

else:

self.downsample = lambda x: x

def call(self, inputs, training=None):

# [b,h,w,c]

out = self.conv1(inputs)

out = self.bn1(out)

out = self.relu(out)

out = self.conv2(out)

out = self.bn2(out)

identity = self.downsample(inputs)

output = layers.add([out, identity])

output = tf.nn.relu(output)

return out

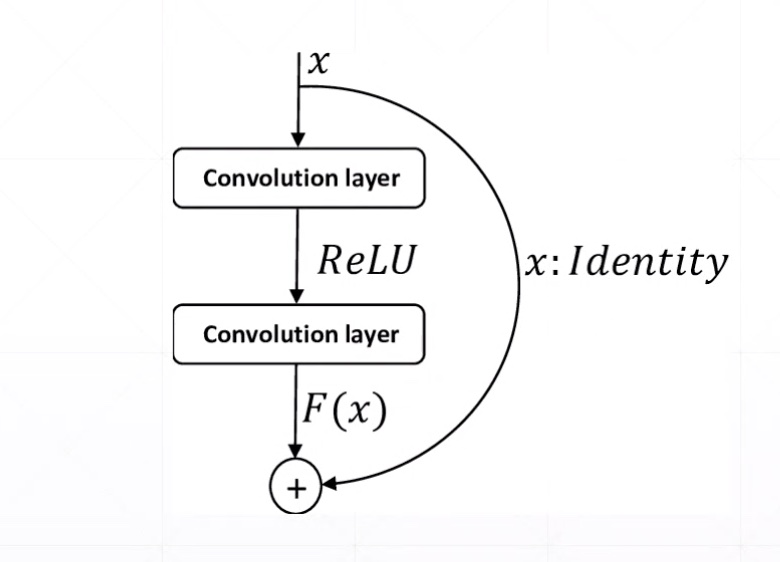

Res Block

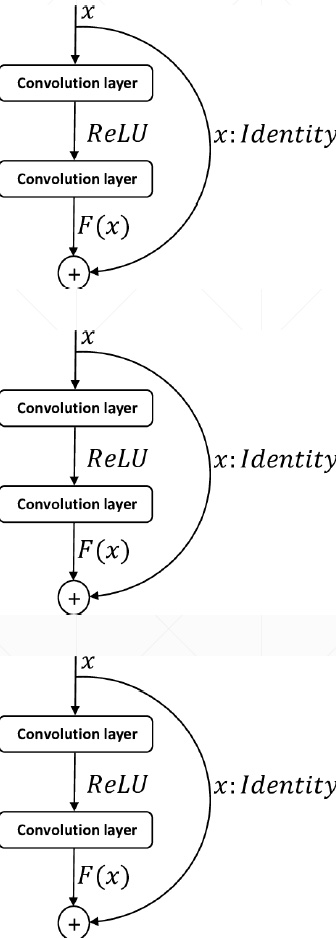

ResNet18

# Resnet.py

#!/usr/bin/env python

# -*- coding:utf-8 -*-

import tensorflow as tf

from tensorflow import keras

from tensorflow.keras import layers, Sequential

class BasicBlock(layers.Layer):

def __init__(self, filter_num, stride=1):

super(BasicBlock, self).__init__()

self.conv1 = layers.Conv2D(filter_num, (3, 3), strides=stride, padding='same')

self.bn1 = layers.BatchNormalization()

self.relu = layers.Activation('relu')

self.conv2 = layers.Conv2D(filter_num, (3, 3), strides=1, padding='same')

self.bn2 = layers.BatchNormalization()

if stride != 1:

self.downsample = Sequential()

self.downsample.add(layers.Conv2D(filter_num, (1, 1), strides=stride))

else:

self.downsample = lambda x: x

def call(self, inputs, training=None):

# [b,h,w,c]

out = self.conv1(inputs)

out = self.bn1(out)

out = self.relu(out)

out = self.conv2(out)

out = self.bn2(out)

identity = self.downsample(inputs)

output = layers.add([out, identity])

output = tf.nn.relu(output)

return out

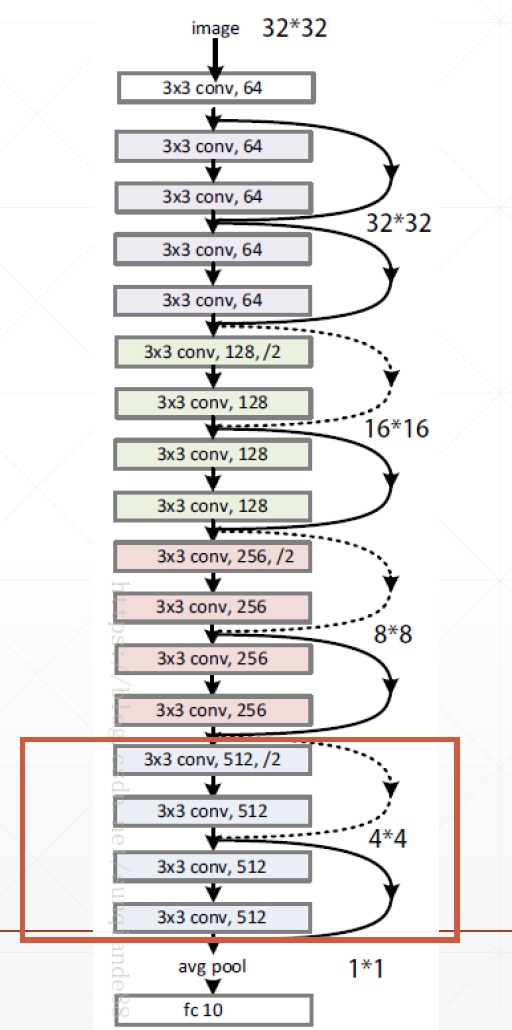

class ResNet(keras.Model):

def __init__(self, layer_dims, num_classes=100): # [2,2,2,2]

super(ResNet, self).__init__()

# 根部

self.stem = Sequential([layers.Conv2D(64, (3, 3), strides=(1, 1,)),

layers.BatchNormalization(),

layers.Activation('relu'),

layers.MaxPool2D(pool_size=(2, 2), strides=(1, 1), padding='same')

])

# 64,128,256,512是通道数

self.layer1 = self.build_resblock(64, layer_dims[0])

self.layer2 = self.build_resblock(128, layer_dims[1], stride=2)

self.layer3 = self.build_resblock(256, layer_dims[2], stride=2)

self.layer4 = self.build_resblock(512, layer_dims[3], stride=2)

# output: [b, 512, h, w]

self.avgpool = layers.GlobalAveragePooling2D()

self.fc = layers.Dense(num_classes) # 分类

def call(self, inputs, training=None):

x = self.stem(inputs)

x = self.layer1(x)

x = self.layer2(x)

x = self.layer3(x)

x = self.layer4(x)

# [b, c]

x = self.avgpool(x)

# [b]

x = self.fc(x)

return x

def build_resblock(self, filter_num, blocks, stride=1):

res_blocks = Sequential()

# may down sample

res_blocks.add(BasicBlock(filter_num, stride))

for _ in range(1, blocks):

res_blocks.add(BasicBlock(filter_num, stride=1))

return res_blocks

def resnet18():

return ResNet([2, 2, 2, 2])

def resnet34():

return ResNet([3, 4, 6, 3])

# resnet18_train.py

#!/usr/bin/env python

# -*- coding:utf-8 -*-

import tensorflow as tf

from tensorflow.keras import layers, optimizers, datasets, Sequential

import os

from Resnet import resnet18

os.environ['TF_CPP_MIN_LOG_LEVEL'] = '2'

tf.random.set_seed(2345)

def preprocess(x, y):

# [-1~1]

x = tf.cast(x, dtype=tf.float32) / 255. - 0.5

y = tf.cast(y, dtype=tf.int32)

return x, y

(x, y), (x_test, y_test) = datasets.cifar100.load_data()

y = tf.squeeze(y, axis=1)

y_test = tf.squeeze(y_test, axis=1)

print(x.shape, y.shape, x_test.shape, y_test.shape)

train_db = tf.data.Dataset.from_tensor_slices((x, y))

train_db = train_db.shuffle(1000).map(preprocess).batch(512)

test_db = tf.data.Dataset.from_tensor_slices((x_test, y_test))

test_db = test_db.map(preprocess).batch(512)

sample = next(iter(train_db))

print('sample:', sample[0].shape, sample[1].shape,

tf.reduce_min(sample[0]), tf.reduce_max(sample[0]))

def main():

# [b, 32, 32, 3] => [b, 1, 1, 512]

model = resnet18()

model.build(input_shape=(None, 32, 32, 3))

model.summary()

optimizer = optimizers.Adam(lr=1e-3)

for epoch in range(500):

for step, (x, y) in enumerate(train_db):

with tf.GradientTape() as tape:

# [b, 32, 32, 3] => [b, 100]

logits = model(x)

# [b] => [b, 100]

y_onehot = tf.one_hot(y, depth=100)

# compute loss

loss = tf.losses.categorical_crossentropy(y_onehot, logits, from_logits=True)

loss = tf.reduce_mean(loss)

grads = tape.gradient(loss, model.trainable_variables)

optimizer.apply_gradients(zip(grads, model.trainable_variables))

if step % 50 == 0:

print(epoch, step, 'loss:', float(loss))

total_num = 0

total_correct = 0

for x, y in test_db:

logits = model(x)

prob = tf.nn.softmax(logits, axis=1)

pred = tf.argmax(prob, axis=1)

pred = tf.cast(pred, dtype=tf.int32)

correct = tf.cast(tf.equal(pred, y), dtype=tf.int32)

correct = tf.reduce_sum(correct)

total_num += x.shape[0]

total_correct += int(correct)

acc = total_correct / total_num

print(epoch, 'acc:', acc)

if __name__ == '__main__':

main()

(50000, 32, 32, 3) (50000,) (10000, 32, 32, 3) (10000,)

sample: (512, 32, 32, 3) (512,) tf.Tensor(-0.5, shape=(), dtype=float32) tf.Tensor(0.5, shape=(), dtype=float32)

Model: "res_net"

_________________________________________________________________

Layer (type) Output Shape Param #

=================================================================

sequential (Sequential) multiple 2048

_________________________________________________________________

sequential_1 (Sequential) multiple 148736

_________________________________________________________________

sequential_2 (Sequential) multiple 526976

_________________________________________________________________

sequential_4 (Sequential) multiple 2102528

_________________________________________________________________

sequential_6 (Sequential) multiple 8399360

_________________________________________________________________

global_average_pooling2d (Gl multiple 0

_________________________________________________________________

dense (Dense) multiple 51300

=================================================================

Total params: 11,230,948

Trainable params: 11,223,140

Non-trainable params: 7,808

_________________________________________________________________

WARNING: Logging before flag parsing goes to stderr.

W0601 16:59:57.619546 4664264128 optimizer_v2.py:928] Gradients does not exist for variables ['sequential_2/basic_block_2/sequential_3/conv2d_7/kernel:0', 'sequential_2/basic_block_2/sequential_3/conv2d_7/bias:0', 'sequential_4/basic_block_4/sequential_5/conv2d_12/kernel:0', 'sequential_4/basic_block_4/sequential_5/conv2d_12/bias:0', 'sequential_6/basic_block_6/sequential_7/conv2d_17/kernel:0', 'sequential_6/basic_block_6/sequential_7/conv2d_17/bias:0'] when minimizing the loss.

0 0 loss: 4.60512638092041

Out of memory

- decrease batch size

- tune resnet[2,2,2,2]

- try Google CoLab

- buy new NVIDIA GPU Card

ResNet实战的更多相关文章

- TensorFlow2教程(目录)

第一篇 基本操作 01 Tensor数据类型 02 创建Tensor 03 Tensor索引和切片 04 维度变换 05 Broadcasting 06 数学运算 07 前向传播(张量)- 实战 第二 ...

- Pytorch1.0入门实战三:ResNet实现cifar-10分类,利用visdom可视化训练过程

人的理想志向往往和他的能力成正比. —— 约翰逊 最近一直在使用pytorch深度学习框架,很想用pytorch搞点事情出来,但是框架中一些基本的原理得懂!本次,利用pytorch实现ResNet神经 ...

- Pytorch1.0入门实战二:LeNet、AleNet、VGG、GoogLeNet、ResNet模型详解

LeNet 1998年,LeCun提出了第一个真正的卷积神经网络,也是整个神经网络的开山之作,称为LeNet,现在主要指的是LeNet5或LeNet-5,如图1.1所示.它的主要特征是将卷积层和下采样 ...

- [深度应用]·实战掌握PyTorch图片分类简明教程

[深度应用]·实战掌握PyTorch图片分类简明教程 个人网站--> http://www.yansongsong.cn/ 项目GitHub地址--> https://github.com ...

- 学习笔记TF033:实现ResNet

ResNet(Residual Neural Network),微软研究院 Kaiming He等4名华人提出.通过Residual Unit训练152层深神经网络,ILSVRC 2015比赛冠军,3 ...

- Reading | 《TensorFlow:实战Google深度学习框架》

目录 三.TensorFlow入门 1. TensorFlow计算模型--计算图 I. 计算图的概念 II. 计算图的使用 2.TensorFlow数据类型--张量 I. 张量的概念 II. 张量的使 ...

- 人工智能深度学习框架MXNet实战:深度神经网络的交通标志识别训练

人工智能深度学习框架MXNet实战:深度神经网络的交通标志识别训练 MXNet 是一个轻量级.可移植.灵活的分布式深度学习框架,2017 年 1 月 23 日,该项目进入 Apache 基金会,成为 ...

- 【深度学习】基于Pytorch的ResNet实现

目录 1. ResNet理论 2. pytorch实现 2.1 基础卷积 2.2 模块 2.3 使用ResNet模块进行迁移学习 1. ResNet理论 论文:https://arxiv.org/pd ...

- tensorflow学习笔记——ResNet

自2012年AlexNet提出以来,图像分类.目标检测等一系列领域都被卷积神经网络CNN统治着.接下来的时间里,人们不断设计新的深度学习网络模型来获得更好的训练效果.一般而言,许多网络结构的改进(例如 ...

随机推荐

- linux 文件 chgrp、chown、chmod

linux 默认情况下有会提供6个terminal 来让用户登录,使用[Ctrl]+[Alt] +[F1]~[F6] 组合 linux 文件 任何一个文件都具有"user,Group及Oth ...

- 贪心 UVALive 6834 Shopping

题目传送门 /* 题意:有n个商店排成一条直线,有一些商店有先后顺序,问从0出发走到n+1最少的步数 贪心:对于区间被覆盖的点只进行一次计算,还有那些要往回走的区间步数*2,再加上原来最少要走n+1步 ...

- shell脚本中定义路径变量出现的BUG

=========================================================================== if 语句中的定义路径变量 引发命令的PATH路 ...

- WAMP配置虚拟目录

1.启动wamp所有服务,输入localhost或localhost:端口号确保wamp环境正常无误. 2.设置httpd.conf 2.1打开文件:单击wamp在电脑右下角的图标=>wamp= ...

- AJPFX关于数组获取最值的思路和方法

思路分析:1.定义一个变量(max,初始值一般为数组中的第一个元素值),用来记录最大值.2.遍历数组,获取数组中的每一个元素,然后依次和max进行比较.如果当前遍历到的元素比max大,就把当前元素值给 ...

- jq星星评分

html代码 <div class="make_mark"> <h5>请为这次服务打分</h5> <div class="mar ...

- CentOS 7 下用 firewall-cmd / iptables 实现 NAT 转发供内网服务器联网

自从用 HAProxy 对服务器做了负载均衡以后,感觉后端服务器真的没必要再配置并占用公网IP资源. 而且由于托管服务器的公网 IP 资源是固定的,想上 Keepalived 的话,需要挤出来 3 个 ...

- Android拍照得到全尺寸图片并进行压缩/拍照或者图库选择 压缩后 图片 上传

http://www.jb51.net/article/77223.htm https://www.cnblogs.com/breeze1988/p/4019510.html

- STM32 (基础语法)笔记

指针遍历: char *myitoa(int value, char *string, int radix){ int i, d; int flag = 0; char *ptr = string; ...

- JS中的Promise

Promise 对象有以下两个特点. (1)对象的状态不受外界影响.Promise 对象代表一个异步操作,有三种状态:Pending(进行中).Resolved(已完成,又称 Fulfilled)和 ...