Lucene: Getting Started and Implementation

For an introduction to Lucene, see: https://blog.csdn.net/Regan_Hoo/article/details/78802897

How Lucene works

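As many sources point out, Lucene is built on an inverted index.

So what exactly is an inverted index?

After Lucene analyzes (tokenizes) the text, it maintains a mapping from each term to the IDs of the documents that contain it. When we search for a term, this mapping gives us the matching document IDs directly.

This is the reverse of a traditional forward index, which records, for each document, the words it contains. An inverted index starts from a word and tells you which documents contain it.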

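Lucene builds the inverted index ahead of time, before any search runs, so a lookup goes straight to the matching documents. This is far more efficient than a fuzzy LIKE '%keyword%' search in a database, which generally has to scan every row.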
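The following minimal in-memory sketch in plain Java makes the term-to-document mapping concrete. It is a toy model for illustration only and does not reflect how Lucene stores its index (Lucene writes compressed, segment-based files). The class name InvertedIndexSketch and its simple "split on anything that is not a letter or digit" tokenizer are assumptions.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.Set;
import java.util.TreeMap;
import java.util.TreeSet;

/** Toy inverted index: maps each term to the sorted set of IDs of documents containing it. */
class InvertedIndexSketch {
    private final Map<String, TreeSet<Integer>> postings = new TreeMap<>();
    private final List<String> docs = new ArrayList<>();

    /** Adds a document and returns its ID (its position in insertion order). */
    int add(String text) {
        int docId = docs.size();
        docs.add(text);
        // Hypothetical tokenizer: lowercase, then split on runs of non-letter/non-digit characters
        for (String token : text.toLowerCase().split("[^\\p{L}\\p{N}]+")) {
            if (!token.isEmpty()) {
                postings.computeIfAbsent(token, t -> new TreeSet<>()).add(docId);
            }
        }
        return docId;
    }

    /** Looks the term up directly instead of scanning every document. */
    Set<Integer> search(String term) {
        return postings.getOrDefault(term.toLowerCase(), new TreeSet<>());
    }

    public static void main(String[] args) {
        InvertedIndexSketch index = new InvertedIndexSketch();
        index.add("Qingdao is a beautiful city.");
        index.add("Nanjing is a city of culture.");
        index.add("Shanghai is a bustling city.");
        System.out.println(index.search("city"));    // [0, 1, 2]
        System.out.println(index.search("culture")); // [1]
    }
}
```

A search jumps straight to the term's entry instead of reading every document. A scanning LIKE query cannot do that.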

Lucene core API

Core classes used during indexing

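- Document: the entity that carries the data. It is a collection of fields (Field). Document is an abstract concept: once a record has been indexed, it is stored in the index files in the form of a Document.

- Field: every Document in the index contains one or more fields. A field is a name/value pair, and each field holds one piece of the document's data.

- IndexWriter: the core component of the indexing process. It creates a new index and adds documents to an existing index; in other words, it performs the write operations.

- Directory: the location where the index is stored. It is an abstract class whose concrete subclasses decide where the index lives (FSDirectory stores the index in a directory on disk, RAMDirectory keeps it in memory).

- Analyzer: text must pass through an analyzer before it is indexed. The analyzer extracts the tokens (terms) to be indexed from the document and discards the rest, such as stop words. Choosing the right analyzer is critical, because different analyzers can produce very different results for the same document. Analyzer is an abstract class and the base class of all analyzers.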
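A tiny hand-written pipeline can illustrate the analyzer's job: extract terms, normalize them, and drop stop words. This is a hypothetical simplification for illustration only. It is not Lucene's StandardAnalyzer, and the stop-word list is an arbitrary assumption.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.HashSet;
import java.util.List;
import java.util.Locale;
import java.util.Set;

/** Hypothetical toy analyzer: tokenize, lowercase, and remove stop words. */
class ToyAnalyzer {
    // Assumed stop-word list for this example only
    private static final Set<String> STOP_WORDS =
            new HashSet<>(Arrays.asList("a", "an", "the", "is", "of", "and"));

    static List<String> analyze(String text) {
        List<String> terms = new ArrayList<>();
        // 1. Tokenize: split on runs of characters that are not letters or digits
        for (String token : text.split("[^\\p{L}\\p{N}]+")) {
            if (token.isEmpty()) {
                continue;
            }
            // 2. Normalize: lowercase so that "City" and "city" become the same term
            String term = token.toLowerCase(Locale.ROOT);
            // 3. Filter: drop stop words, which carry little meaning for search
            if (!STOP_WORDS.contains(term)) {
                terms.add(term);
            }
        }
        return terms;
    }

    public static void main(String[] args) {
        System.out.println(analyze("Nanjing is a city of culture.")); // [nanjing, city, culture]
    }
}
```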

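Core classes used during searching

- IndexSearcher: searches the index created by IndexWriter through its search methods.

- Term: the basic unit of search, made up of a field name and the text to look for in that field.

- Query: Lucene provides many Query subclasses, each expressing a different kind of search condition. TermQuery is the most basic and simplest of them: it matches documents whose specified field (Field) contains the specified term (Term).

- TermQuery: the single-term query, one of Lucene's many Query subclasses.

- TopDocs: a simple container of pointers to the top-ranked search results, i.e. the documents that match the query, ordered by relevance score.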
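The way Term, TermQuery and TopDocs fit together can be sketched without Lucene. A term names a field and a word. The query scores every document whose field contains that word, and only the best N hits come back in score order. This toy version scores by raw term frequency, which is an assumption made for illustration. Lucene 5.x's default TF-IDF-based similarity also weighs rarer terms higher and normalizes for field length. As with a real TermQuery, the term must be given in its indexed (here: lowercase) form.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collections;
import java.util.Comparator;
import java.util.List;
import java.util.Map;

/** Toy model of TermQuery + TopDocs over documents represented as field -> text maps. */
class TopDocsSketch {
    /** A hit: document number plus score, loosely like Lucene's ScoreDoc. */
    static final class Hit {
        final int doc;
        final int score;

        Hit(int doc, int score) {
            this.doc = doc;
            this.score = score;
        }
    }

    /** Returns at most n hits whose field contains the (lowercase) term, highest score first. */
    static List<Hit> search(List<Map<String, String>> docs, String field, String term, int n) {
        List<Hit> hits = new ArrayList<>();
        for (int i = 0; i < docs.size(); i++) {
            String text = docs.get(i).getOrDefault(field, "");
            int tf = 0; // term frequency: how often the term occurs in this field
            for (String token : text.toLowerCase().split("\\W+")) {
                if (token.equals(term)) {
                    tf++;
                }
            }
            if (tf > 0) {
                hits.add(new Hit(i, tf));
            }
        }
        // Descending score; List.sort is stable, so ties keep document order
        hits.sort(Comparator.comparingInt((Hit h) -> h.score).reversed());
        return hits.subList(0, Math.min(n, hits.size()));
    }

    public static void main(String[] args) {
        List<Map<String, String>> docs = Arrays.asList(
                Collections.singletonMap("title", "Java is a good language."),
                Collections.singletonMap("title", "Java, java everywhere"),
                Collections.singletonMap("title", "Python only"));
        for (Hit h : search(docs, "title", "java", 10)) {
            System.out.println("doc=" + h.doc + " score=" + h.score);
        }
    }
}
```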

Implementing search with Lucene

- Add the Lucene dependencies to pom.xml:

```xml
<dependency>
    <groupId>org.apache.lucene</groupId>
    <artifactId>lucene-core</artifactId>
    <version>5.3.1</version>
</dependency>
<dependency>
    <groupId>org.apache.lucene</groupId>
    <artifactId>lucene-queryparser</artifactId>
    <version>5.3.1</version>
</dependency>
<dependency>
    <groupId>org.apache.lucene</groupId>
    <artifactId>lucene-analyzers-common</artifactId>
    <version>5.3.1</version>
</dependency>
```

Add a utility class, LuceneUtil.java:

```java
import java.io.IOException;
import java.nio.file.Paths;

import org.apache.lucene.analysis.Analyzer;
import org.apache.lucene.index.DirectoryReader;
import org.apache.lucene.index.IndexWriter;
import org.apache.lucene.index.IndexWriterConfig;
import org.apache.lucene.index.IndexWriterConfig.OpenMode;
import org.apache.lucene.search.IndexSearcher;
import org.apache.lucene.search.Query;
import org.apache.lucene.search.highlight.Formatter;
import org.apache.lucene.search.highlight.Highlighter;
import org.apache.lucene.search.highlight.QueryTermScorer;
import org.apache.lucene.search.highlight.Scorer;
import org.apache.lucene.search.highlight.SimpleFragmenter;
import org.apache.lucene.search.highlight.SimpleHTMLFormatter;
import org.apache.lucene.store.Directory;
import org.apache.lucene.store.FSDirectory;
import org.apache.lucene.store.RAMDirectory;

/**
 * Lucene utility class.
 *
 * @author Administrator
 */
public class LuceneUtil {

    /**
     * Opens the directory (on disk) that holds the index files.
     *
     * @param path index directory path
     * @return the directory, or null if it could not be opened
     */
    public static Directory getDirectory(String path) {
        Directory directory = null;
        try {
            directory = FSDirectory.open(Paths.get(path));
        } catch (IOException e) {
            e.printStackTrace();
        }
        return directory;
    }

    /**
     * Creates a directory that keeps the index in memory.
     */
    public static Directory getRAMDirectory() {
        return new RAMDirectory();
    }

    /**
     * Opens a reader over the index stored in the directory.
     */
    public static DirectoryReader getDirectoryReader(Directory directory) {
        DirectoryReader reader = null;
        try {
            reader = DirectoryReader.open(directory);
        } catch (IOException e) {
            e.printStackTrace();
        }
        return reader;
    }

    /**
     * Creates a searcher over an open index reader.
     */
    public static IndexSearcher getIndexSearcher(DirectoryReader reader) {
        return new IndexSearcher(reader);
    }

    /**
     * Creates an IndexWriter for writing to the index.
     */
    public static IndexWriter getIndexWriter(Directory directory, Analyzer analyzer) {
        IndexWriter iwriter = null;
        try {
            IndexWriterConfig config = new IndexWriterConfig(analyzer);
            // Create the index if it does not exist yet, otherwise append to it
            config.setOpenMode(OpenMode.CREATE_OR_APPEND);
            // Index sorting (not used here):
            // Sort sort = new Sort(new SortField("content", Type.STRING));
            // config.setIndexSort(sort);
            // Commit pending changes automatically when the writer is closed
            config.setCommitOnClose(true);
            // config.setMergeScheduler(new ConcurrentMergeScheduler());
            // config.setIndexDeletionPolicy(new SnapshotDeletionPolicy(NoDeletionPolicy.INSTANCE));
            iwriter = new IndexWriter(directory, config);
        } catch (IOException e) {
            e.printStackTrace();
        }
        return iwriter;
    }

    /**
     * Closes the index writer and its directory.
     */
    public static void close(IndexWriter indexWriter, Directory directory) {
        if (indexWriter != null) {
            try {
                indexWriter.close();
            } catch (IOException e) {
                e.printStackTrace();
            }
        }
        if (directory != null) {
            try {
                directory.close();
            } catch (IOException e) {
                e.printStackTrace();
            }
        }
    }

    /**
     * Closes the index reader and its directory.
     */
    public static void close(DirectoryReader reader, Directory directory) {
        if (reader != null) {
            try {
                reader.close();
            } catch (IOException e) {
                e.printStackTrace();
            }
        }
        if (directory != null) {
            try {
                directory.close();
            } catch (IOException e) {
                e.printStackTrace();
            }
        }
    }

    /**
     * Builds a highlighter that wraps the matched terms of fieldName in red span tags.
     */
    public static Highlighter getHighlighter(Query query, String fieldName) {
        Formatter formatter = new SimpleHTMLFormatter("<span style='color:red'>", "</span>");
        Scorer fragmentScorer = new QueryTermScorer(query, fieldName);
        Highlighter highlighter = new Highlighter(formatter, fragmentScorer);
        // Split the text into fragments of about 200 characters
        highlighter.setTextFragmenter(new SimpleFragmenter(200));
        return highlighter;
    }
}
```

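Building the index (demonstrated with the test class Demo1)

Goal: index a directory of data files and write the index files to a specified directory.

1. Constructor: create the IndexWriter
   - get the Directory object for the location where the index files are stored
   - create the index writer
   - configure the writer
   - set the analyzer in the writer's configuration
2. Close the index writer
3. Index all files under the specified path
4. Index a single file
5. Build the Document (the key information stored in the index, as key-value pairs)
6. Test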

IndexCreate.java

```java
import java.io.File;
import java.io.FileReader;
import java.nio.file.Paths;

import org.apache.lucene.analysis.Analyzer;
import org.apache.lucene.analysis.standard.StandardAnalyzer;
import org.apache.lucene.document.Document;
import org.apache.lucene.document.Field;
import org.apache.lucene.document.TextField;
import org.apache.lucene.index.IndexWriter;
import org.apache.lucene.index.IndexWriterConfig;
import org.apache.lucene.store.FSDirectory;

/**
 * Used together with Demo1.java as a Lucene "hello world".
 *
 * @author Administrator
 */
public class IndexCreate {

    private IndexWriter indexWriter;

    /**
     * 1. Constructor: creates the IndexWriter.
     *
     * @param indexDir directory the index files are written to
     */
    public IndexCreate(String indexDir) throws Exception {
        // Directory where the index files are stored
        FSDirectory dir = FSDirectory.open(Paths.get(indexDir));
        // Standard analyzer (suited to English text)
        Analyzer analyzer = new StandardAnalyzer();
        // Writer configuration, using the analyzer above
        IndexWriterConfig conf = new IndexWriterConfig(analyzer);
        // Rebuild the index on every run so repeated runs don't add duplicate documents
        conf.setOpenMode(IndexWriterConfig.OpenMode.CREATE);
        indexWriter = new IndexWriter(dir, conf);
    }

    /**
     * 2. Closes the index writer (committing pending changes).
     */
    public void closeIndexWriter() throws Exception {
        indexWriter.close();
    }

    /**
     * 3. Indexes every file directly under dataDir.
     *
     * @return the number of documents in the index
     */
    public int index(String dataDir) throws Exception {
        File[] files = new File(dataDir).listFiles();
        if (files == null) {
            throw new IllegalArgumentException("Not a readable directory: " + dataDir);
        }
        for (File file : files) {
            indexFile(file);
        }
        return indexWriter.numDocs();
    }

    /**
     * 4. Indexes a single file.
     */
    private void indexFile(File file) throws Exception {
        System.out.println("Indexing file: " + file.getCanonicalPath());
        Document doc = getDocument(file);
        indexWriter.addDocument(doc);
    }

    /**
     * 5. Builds the Document for a file: its key information as name/value fields.
     */
    private Document getDocument(File file) throws Exception {
        Document doc = new Document();
        // File contents: tokenized and indexed; a Reader-based field is never stored
        doc.add(new TextField("contents", new FileReader(file)));
        // Field.Store.YES also stores the original value, so doc.get("fullPath") can return it
        doc.add(new TextField("fullPath", file.getCanonicalPath(), Field.Store.YES));
        doc.add(new TextField("fileName", file.getName(), Field.Store.YES));
        return doc;
    }
}
```

Test: Demo1.java

```java
public class Demo1 {

    public static void main(String[] args) {
        // Where the index files will be written
        String indexDir = "D:\\lucenetemp\\lucene\\demo1";
        // Data source directory
        String dataDir = "D:\\lucenetemp\\lucene\\demo1\\data";
        IndexCreate ic = null;
        try {
            ic = new IndexCreate(indexDir);
            long start = System.currentTimeMillis();
            int num = ic.index(dataDir);
            long end = System.currentTimeMillis();
            System.out.println("Indexed " + num + " files under the given path in " + (end - start) + " ms");
        } catch (Exception e) {
            e.printStackTrace();
        } finally {
            // ic is still null if the constructor failed
            if (ic != null) {
                try {
                    ic.closeIndexWriter();
                } catch (Exception e) {
                    e.printStackTrace();
                }
            }
        }
    }
}
```

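Searching the index

Goal: retrieve data from the index files.

1. Open an index reader (through DirectoryReader)
2. Create an IndexSearcher from the reader
3. Create the Query (with a QueryParser, which is constructed with an analyzer)
4. Retrieve the top-ranked documents that contain the keyword
5. Read the contents of each matching document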

IndexUse.java

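```java
import java.nio.file.Paths;

import org.apache.lucene.analysis.Analyzer;
import org.apache.lucene.analysis.standard.StandardAnalyzer;
import org.apache.lucene.document.Document;
import org.apache.lucene.index.DirectoryReader;
import org.apache.lucene.index.IndexReader;
import org.apache.lucene.queryparser.classic.QueryParser;
import org.apache.lucene.search.IndexSearcher;
import org.apache.lucene.search.Query;
import org.apache.lucene.search.ScoreDoc;
import org.apache.lucene.search.TopDocs;
import org.apache.lucene.store.FSDirectory;

/**
 * Used together with Demo2.java as a Lucene "hello world".
 *
 * @author Administrator
 */
public class IndexUse {

    /**
     * Searches the index directory for a keyword.
     *
     * @param indexDir directory containing the index files
     * @param q        the keyword
     */
    public static void search(String indexDir, String q) throws Exception {
        FSDirectory indexDirectory = FSDirectory.open(Paths.get(indexDir));
        // Note: the index reader is not created with new; it is opened through DirectoryReader
        IndexReader indexReader = DirectoryReader.open(indexDirectory);
        // The searcher runs queries against the reader
        IndexSearcher indexSearcher = new IndexSearcher(indexReader);
        // Use the same analyzer that was used at index time
        Analyzer analyzer = new StandardAnalyzer();
        QueryParser queryParser = new QueryParser("contents", analyzer);
        // Parse the keyword into a Query against the "contents" field
        Query query = queryParser.parse(q);
        long start = System.currentTimeMillis();
        // Fetch the 10 best-scoring documents
        TopDocs topDocs = indexSearcher.search(query, 10);
        long end = System.currentTimeMillis();
        System.out.println("Matching \"" + q + "\" took " + (end - start) + " ms; "
                + topDocs.totalHits + " records found");
        for (ScoreDoc scoreDoc : topDocs.scoreDocs) {
            int docID = scoreDoc.doc;
            // The searcher loads the stored fields of a document by its ID
            Document doc = indexSearcher.doc(docID);
            System.out.println("Hit in indexed file: " + doc.get("fullPath"));
        }
        indexReader.close();
        indexDirectory.close();
    }
}
```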

Demo2.java

```java
/**
 * Index search test.
 *
 * @author Administrator
 */
public class Demo2 {

    public static void main(String[] args) {
        String indexDir = "D:\\lucenetemp\\lucene\\demo1";
        String q = "EarlyTerminating-Collector";
        try {
            IndexUse.search(indexDir, q);
        } catch (Exception e) {
            e.printStackTrace();
        }
    }
}
```

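Building the index: adding, deleting and updating documents

Note: with large data volumes, use delete-before-merge. A delete only marks the documents in the index as deleted, and the marked documents are cleaned up periodically when segments are merged.

When the data volume is not especially large, deleted documents can be removed from the index files immediately.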
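The difference between marking a document as deleted and physically removing it can be modeled with a list of "live" flags. This is a simplified sketch with assumed names, not Lucene's implementation (Lucene records deletions per segment and reclaims the space when segments merge). It reproduces the maxDoc/numDocs behavior that Demo3 prints: maxDoc still counts marked documents until a merge.

```java
import java.util.ArrayList;
import java.util.List;

/** Toy index that deletes by marking first and reclaims space on merge. */
class DeleteMarkSketch {
    private final List<String> ids = new ArrayList<>();
    private final List<Boolean> live = new ArrayList<>();

    void addDocument(String id) {
        ids.add(id);
        live.add(true);
    }

    /** Compare IndexWriter.deleteDocuments(Term): only marks matching documents. */
    void deleteDocuments(String id) {
        for (int i = 0; i < ids.size(); i++) {
            if (ids.get(i).equals(id)) {
                live.set(i, false);
            }
        }
    }

    /** Compare IndexWriter.forceMergeDeletes(): rewrites storage without marked documents. */
    void forceMergeDeletes() {
        for (int i = ids.size() - 1; i >= 0; i--) {
            if (!live.get(i)) {
                ids.remove(i);  // remove by index (int overload)
                live.remove(i);
            }
        }
    }

    /** All slots, deleted ones included (compare IndexWriter.maxDoc()). */
    int maxDoc() {
        return ids.size();
    }

    /** Live documents only (compare IndexWriter.numDocs()). */
    int numDocs() {
        int n = 0;
        for (boolean alive : live) {
            if (alive) {
                n++;
            }
        }
        return n;
    }

    public static void main(String[] args) {
        DeleteMarkSketch index = new DeleteMarkSketch();
        index.addDocument("1");
        index.addDocument("2");
        index.addDocument("3");
        index.deleteDocuments("1");
        System.out.println("maxDoc=" + index.maxDoc() + " numDocs=" + index.numDocs()); // 3, 2
        index.forceMergeDeletes();
        System.out.println("maxDoc=" + index.maxDoc() + " numDocs=" + index.numDocs()); // 2, 2
    }
}
```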

Demo3.java

```java
import java.nio.file.Paths;

import org.apache.lucene.analysis.Analyzer;
import org.apache.lucene.analysis.standard.StandardAnalyzer;
import org.apache.lucene.document.Document;
import org.apache.lucene.document.Field;
import org.apache.lucene.document.StringField;
import org.apache.lucene.document.TextField;
import org.apache.lucene.index.IndexWriter;
import org.apache.lucene.index.IndexWriterConfig;
import org.apache.lucene.index.Term;
import org.apache.lucene.store.FSDirectory;
import org.junit.Before;
import org.junit.Test;

/**
 * Building the index: adding, deleting and updating documents.
 *
 * @author Administrator
 */
public class Demo3 {
    private String ids[] = {"1", "2", "3"};
    private String citys[] = {"qingdao", "nanjing", "shanghai"};
    private String descs[] = {
            "Qingdao is a beautiful city.",
            "Nanjing is a city of culture.",
            "Shanghai is a bustling city."
    };
    private FSDirectory dir;

    /**
     * Regenerates the index before every test.
     */
    @Before
    public void setUp() throws Exception {
        dir = FSDirectory.open(Paths.get("D:\\lucenetemp\\lucene\\demo2\\indexDir"));
        IndexWriter indexWriter = getIndexWriter();
        // Start from an empty index so every test sees exactly these three documents
        indexWriter.deleteAll();
        for (int i = 0; i < ids.length; i++) {
            Document doc = new Document();
            doc.add(new StringField("id", ids[i], Field.Store.YES));
            doc.add(new StringField("city", citys[i], Field.Store.YES));
            doc.add(new TextField("desc", descs[i], Field.Store.NO));
            indexWriter.addDocument(doc);
        }
        indexWriter.close();
    }

    /**
     * Creates an index writer.
     */
    private IndexWriter getIndexWriter() throws Exception {
        Analyzer analyzer = new StandardAnalyzer();
        IndexWriterConfig conf = new IndexWriterConfig(analyzer);
        return new IndexWriter(dir, conf);
    }

    /**
     * Prints how many documents have been written to the index.
     */
    @Test
    public void getWriteDocNum() throws Exception {
        IndexWriter indexWriter = getIndexWriter();
        System.out.println("The index contains " + indexWriter.numDocs() + " documents");
        // Close the writer to release the write lock
        indexWriter.close();
    }

    /**
     * Only marks the document as deleted; it is not physically removed yet.
     */
    @Test
    public void deleteDocBeforeMerge() throws Exception {
        IndexWriter indexWriter = getIndexWriter();
        System.out.println("maxDoc: " + indexWriter.maxDoc());
        indexWriter.deleteDocuments(new Term("id", "1"));
        indexWriter.commit();
        // maxDoc still counts the deleted document; numDocs does not
        System.out.println("maxDoc: " + indexWriter.maxDoc());
        System.out.println("numDocs: " + indexWriter.numDocs());
        indexWriter.close();
    }

    /**
     * The document is physically removed from the index files,
     * but the terms indexed for this version are kept.
     */
    @Test
    public void deleteDocAfterMerge() throws Exception {
        // https://blog.csdn.net/asdfsadfasdfsa/article/details/78820030
        // org.apache.lucene.store.LockObtainFailedException: Lock held by this virtual machine.
        // Only one IndexWriter may hold the write lock on a directory at a time (a single
        // IndexWriter is thread-safe and can be shared), so always close the writers you open.
        IndexWriter indexWriter = getIndexWriter();
        System.out.println("maxDoc: " + indexWriter.maxDoc());
        indexWriter.deleteDocuments(new Term("id", "1"));
        // Force merges that reclaim deleted documents, physically removing them
        indexWriter.forceMergeDeletes();
        indexWriter.commit();
        System.out.println("maxDoc: " + indexWriter.maxDoc());
        System.out.println("numDocs: " + indexWriter.numDocs());
        indexWriter.close();
    }

    /**
     * Updates a document: deletes the documents containing the term, then adds the new one.
     */
    @Test
    public void testUpdate() throws Exception {
        IndexWriter writer = getIndexWriter();
        Document doc = new Document();
        doc.add(new StringField("id", "1", Field.Store.YES));
        doc.add(new StringField("city", "qingdao", Field.Store.YES));
        doc.add(new TextField("desc", "dsss is a city.", Field.Store.NO));
        writer.updateDocument(new Term("id", "1"), doc);
        writer.close();
    }
}
```

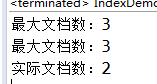

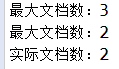

Before merge:

After merge:

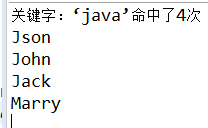

Field boosting

Demo4.java

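```java
import java.nio.file.Paths;

import org.apache.lucene.analysis.Analyzer;
import org.apache.lucene.analysis.standard.StandardAnalyzer;
import org.apache.lucene.document.Document;
import org.apache.lucene.document.Field;
import org.apache.lucene.document.StringField;
import org.apache.lucene.document.TextField;
import org.apache.lucene.index.DirectoryReader;
import org.apache.lucene.index.IndexReader;
import org.apache.lucene.index.IndexWriter;
import org.apache.lucene.index.IndexWriterConfig;
import org.apache.lucene.index.Term;
import org.apache.lucene.search.IndexSearcher;
import org.apache.lucene.search.Query;
import org.apache.lucene.search.ScoreDoc;
import org.apache.lucene.search.TermQuery;
import org.apache.lucene.search.TopDocs;
import org.apache.lucene.store.Directory;
import org.apache.lucene.store.FSDirectory;
import org.junit.Before;
import org.junit.Test;

public class Demo4 {
    private String ids[] = {"1", "2", "3", "4"};
    private String authors[] = {"Jack", "Marry", "John", "Json"};
    private String positions[] = {"accounting", "technician", "salesperson", "boss"};
    private String titles[] = {"Java is a good language.", "Java is a cross platform language", "Java powerful", "You should learn java"};
    private String contents[] = {
            "If possible, use the same JRE major version at both index and search time.",
            "When upgrading to a different JRE major version, consider re-indexing. ",
            "Different JRE major versions may implement different versions of Unicode,",
            "For example: with Java 1.4, `LetterTokenizer` will split around the character U+02C6,"
    };
    private Directory dir; // index directory

    @Before
    public void setUp() throws Exception {
        dir = FSDirectory.open(Paths.get("D:\\lucenetemp\\lucene\\demo3\\indexDir"));
        IndexWriter writer = getIndexWriter();
        // Start from an empty index so repeated runs don't accumulate duplicate documents
        writer.deleteAll();
        for (int i = 0; i < authors.length; i++) {
            Document doc = new Document();
            doc.add(new StringField("id", ids[i], Field.Store.YES));
            doc.add(new StringField("author", authors[i], Field.Store.YES));
            doc.add(new StringField("position", positions[i], Field.Store.YES));
            TextField textField = new TextField("title", titles[i], Field.Store.YES);
            // Json paid for advertising to push his ranking to the top:
            // if ("boss".equals(positions[i])) {
            //     textField.setBoost(2f); // set the boost; the default is 1
            // }
            doc.add(textField);
            // TextField is tokenized; StringField is indexed as a single untokenized term
            doc.add(new TextField("content", contents[i], Field.Store.NO));
            writer.addDocument(doc);
        }
        writer.close();
    }

    private IndexWriter getIndexWriter() throws Exception {
        Analyzer analyzer = new StandardAnalyzer();
        IndexWriterConfig conf = new IndexWriterConfig(analyzer);
        return new IndexWriter(dir, conf);
    }

    @Test
    public void index() throws Exception {
        IndexReader reader = DirectoryReader.open(dir);
        IndexSearcher searcher = new IndexSearcher(reader);
        String fieldName = "title";
        String keyWord = "java";
        Term t = new Term(fieldName, keyWord);
        Query query = new TermQuery(t);
        TopDocs hits = searcher.search(query, 10);
        System.out.println("Keyword '" + keyWord + "' matched " + hits.totalHits + " documents");
        for (ScoreDoc scoreDoc : hits.scoreDocs) {
            Document doc = searcher.doc(scoreDoc.doc);
            System.out.println(doc.get("author"));
        }
        reader.close();
    }
}
```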
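The comment in setUp about TextField versus StringField explains why a TermQuery for "java" on title matches titles that begin with "Java". The title text is tokenized and lowercased at index time. A StringField value, by contrast, is indexed as one exact term. The stdlib-only sketch below models that difference. The lowercase-and-split rule stands in for StandardAnalyzer and is an assumption.

```java
import java.util.Arrays;
import java.util.HashSet;
import java.util.Locale;
import java.util.Set;

/** Toy comparison of the terms produced for an untokenized vs a tokenized field. */
class FieldKindsSketch {
    /** StringField-like: the whole value becomes a single term, unchanged. */
    static Set<String> stringFieldTerms(String value) {
        return new HashSet<>(Arrays.asList(value));
    }

    /** TextField-like: the value is split into lowercase words (assumed analyzer behavior). */
    static Set<String> textFieldTerms(String value) {
        Set<String> terms = new HashSet<>();
        for (String t : value.toLowerCase(Locale.ROOT).split("[^\\p{L}\\p{N}]+")) {
            if (!t.isEmpty()) {
                terms.add(t);
            }
        }
        return terms;
    }

    /** A term query matches only when the exact term is among the field's indexed terms. */
    static boolean termQueryMatches(Set<String> indexedTerms, String term) {
        return indexedTerms.contains(term);
    }

    public static void main(String[] args) {
        Set<String> title = textFieldTerms("Java is a good language.");
        Set<String> author = stringFieldTerms("Jack");
        System.out.println(termQueryMatches(title, "java"));  // true: tokenized and lowercased
        System.out.println(termQueryMatches(author, "jack")); // false: indexed as exactly "Jack"
        System.out.println(termQueryMatches(author, "Jack")); // true
    }
}
```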

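After boosting the document:

Note: boosting a document raises its ranking. Search engines apply the same idea to paid placements; the big spenders always win!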

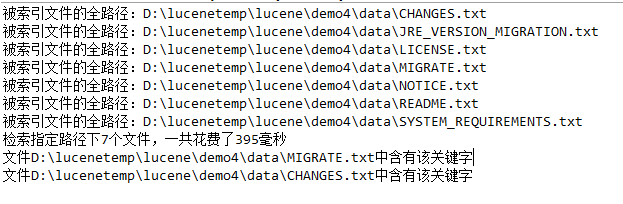
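The effect of the commented-out setBoost(2f) call can be illustrated by multiplying each document's score by its boost before sorting. This is a hypothetical simplification. In Lucene 5.x, an index-time field boost is folded into the field's norm rather than applied as a plain multiplier, but the effect on ranking is similar. The base scores below are invented for the example.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Comparator;
import java.util.List;

/** Toy ranking in which final score = base relevance score x boost (default boost 1). */
class BoostSketch {
    static final class Doc {
        final String author;
        final double baseScore; // assumed relevance score before boosting
        final float boost;      // 1f means "no boost"

        Doc(String author, double baseScore, float boost) {
            this.author = author;
            this.baseScore = baseScore;
            this.boost = boost;
        }

        double score() {
            return baseScore * boost;
        }
    }

    /** Returns the authors ordered by boosted score, highest first. */
    static List<String> rank(List<Doc> docs) {
        List<Doc> sorted = new ArrayList<>(docs);
        sorted.sort(Comparator.comparingDouble(Doc::score).reversed());
        List<String> authors = new ArrayList<>();
        for (Doc d : sorted) {
            authors.add(d.author);
        }
        return authors;
    }

    public static void main(String[] args) {
        // Json (the "boss") gets boost 2; everyone else keeps the default of 1
        List<Doc> docs = Arrays.asList(
                new Doc("Jack", 0.9, 1f), new Doc("Marry", 0.8, 1f),
                new Doc("John", 0.7, 1f), new Doc("Json", 0.6, 2f));
        System.out.println(rank(docs)); // [Json, Jack, Marry, John]
    }
}
```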

Searching the index

Term search

Demo5.java

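```java
import java.io.IOException;
import java.nio.file.Paths;

import org.apache.lucene.analysis.standard.StandardAnalyzer;
import org.apache.lucene.document.Document;
import org.apache.lucene.index.DirectoryReader;
import org.apache.lucene.index.IndexReader;
import org.apache.lucene.index.Term;
import org.apache.lucene.queryparser.classic.ParseException;
import org.apache.lucene.queryparser.classic.QueryParser;
import org.apache.lucene.search.IndexSearcher;
import org.apache.lucene.search.ScoreDoc;
import org.apache.lucene.search.TermQuery;
import org.apache.lucene.search.TopDocs;
import org.apache.lucene.store.FSDirectory;
import org.junit.Before;
import org.junit.Test;

/**
 * Term search and query expressions (QueryParser).
 *
 * @author Administrator
 */
public class Demo5 {

    @Before
    public void setUp() {
        // Where the index files will be written
        String indexDir = "D:\\lucenetemp\\lucene\\demo4";
        // Data source directory
        String dataDir = "D:\\lucenetemp\\lucene\\demo4\\data";
        IndexCreate ic = null;
        try {
            ic = new IndexCreate(indexDir);
            long start = System.currentTimeMillis();
            int num = ic.index(dataDir);
            long end = System.currentTimeMillis();
            System.out.println("Indexed " + num + " files under the given path in " + (end - start) + " ms");
        } catch (Exception e) {
            e.printStackTrace();
        } finally {
            if (ic != null) {
                try {
                    ic.closeIndexWriter();
                } catch (Exception e) {
                    e.printStackTrace();
                }
            }
        }
    }

    /**
     * Term search.
     */
    @Test
    public void testTermQuery() {
        String indexDir = "D:\\lucenetemp\\lucene\\demo4";
        String fld = "contents";
        // TermQuery is not analyzed, so the text must equal the indexed (lowercased) term
        String text = "indexformattoooldexception";
        // Field name and the exact term to match
        Term t = new Term(fld, text);
        TermQuery tq = new TermQuery(t);
        try {
            FSDirectory indexDirectory = FSDirectory.open(Paths.get(indexDir));
            // Note: the index reader is opened through DirectoryReader, not created with new
            IndexReader indexReader = DirectoryReader.open(indexDirectory);
            IndexSearcher is = new IndexSearcher(indexReader);
            TopDocs hits = is.search(tq, 100);
            // System.out.println(hits.totalHits);
            for (ScoreDoc scoreDoc : hits.scoreDocs) {
                Document doc = is.doc(scoreDoc.doc);
                System.out.println("File " + doc.get("fullPath") + " contains the keyword");
            }
            indexReader.close();
            indexDirectory.close();
        } catch (IOException e) {
            e.printStackTrace();
        }
    }

    /**
     * Query expression (QueryParser).
     */
    @Test
    public void testQueryParser() {
        String indexDir = "D:\\lucenetemp\\lucene\\demo4";
        // Query parser: which analyzer parses the query text, and the default field to search
        QueryParser queryParser = new QueryParser("contents", new StandardAnalyzer());
        try {
            FSDirectory indexDirectory = FSDirectory.open(Paths.get(indexDir));
            IndexReader indexReader = DirectoryReader.open(indexDirectory);
            IndexSearcher is = new IndexSearcher(indexReader);
            // The parser analyzes the keyword (e.g. lowercases it) before building the query
            TopDocs hits = is.search(queryParser.parse("indexformattoooldexception"), 100);
            for (ScoreDoc scoreDoc : hits.scoreDocs) {
                Document doc = is.doc(scoreDoc.doc);
                System.out.println("File " + doc.get("fullPath") + " contains the keyword");
            }
            indexReader.close();
            indexDirectory.close();
        } catch (IOException | ParseException e) {
            e.printStackTrace();
        }
    }
}
```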


指定(范围,字母)查询表达式

/**

* 指定数字范围查询

* 指定字符串开头字母查询(prefixQuery)

* @author Administrator

*

*/

public class Demo6 {

private int ids[]={1,2,3};

private String citys[]={"qingdao","nanjing","shanghai"};

private String descs[]={

"Qingdao is a beautiful city.",

"Nanjing is a city of culture.",

"Shanghai is a bustling city."

};

private FSDirectory dir; /**

* 每次都生成索引文件

* @throws Exception

*/

@Before

public void setUp() throws Exception {

dir = FSDirectory.open(Paths.get("D:\\lucenetemp\\lucene\\demo2\\indexDir"));

IndexWriter indexWriter = getIndexWriter();

for (int i = 0; i < ids.length; i++) {

Document doc = new Document();

doc.add(new IntField("id", ids[i], Field.Store.YES));

doc.add(new StringField("city", citys[i], Field.Store.YES));

doc.add(new TextField("desc", descs[i], Field.Store.YES));

indexWriter.addDocument(doc);

}

indexWriter.close();

} /**

* 获取索引输出流

* @return

* @throws Exception

*/

private IndexWriter getIndexWriter() throws Exception{

Analyzer analyzer = new StandardAnalyzer();

IndexWriterConfig conf = new IndexWriterConfig(analyzer);

return new IndexWriter(dir, conf );

} /**

* 指定数字范围查询

* @throws Exception

*/

@Test

public void testNumericRangeQuery()throws Exception{

IndexReader reader = DirectoryReader.open(dir);

IndexSearcher is = new IndexSearcher(reader); NumericRangeQuery<Integer> query=NumericRangeQuery.newIntRange("id", 1, 2, true, true);

TopDocs hits=is.search(query, 10);

for(ScoreDoc scoreDoc:hits.scoreDocs){

Document doc=is.doc(scoreDoc.doc);

System.out.println(doc.get("id"));

System.out.println(doc.get("city"));

System.out.println(doc.get("desc"));

}

} /**

* 指定字符串开头字母查询(prefixQuery)

* @throws Exception

*/

@Test

public void testPrefixQuery()throws Exception{

IndexReader reader = DirectoryReader.open(dir);

IndexSearcher is = new IndexSearcher(reader); PrefixQuery query=new PrefixQuery(new Term("city","n"));

TopDocs hits=is.search(query, 10);

for(ScoreDoc scoreDoc:hits.scoreDocs){

Document doc=is.doc(scoreDoc.doc);

System.out.println(doc.get("id"));

System.out.println(doc.get("city"));

System.out.println(doc.get("desc"));

}

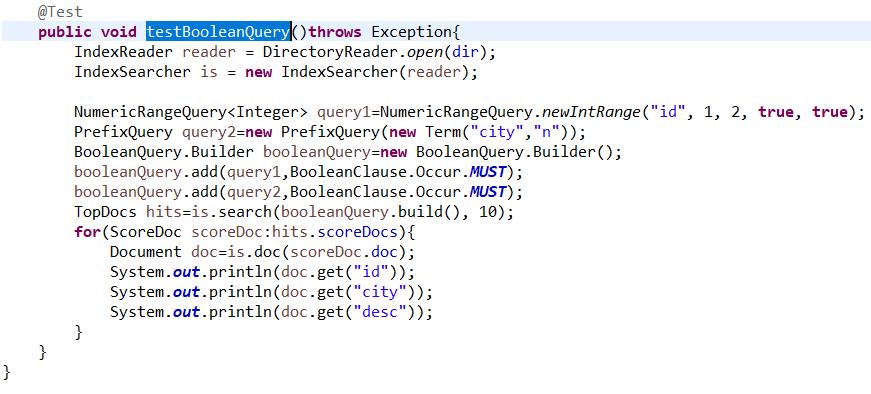

} @Test

public void testBooleanQuery()throws Exception{

IndexReader reader = DirectoryReader.open(dir);

IndexSearcher is = new IndexSearcher(reader); NumericRangeQuery<Integer> query1=NumericRangeQuery.newIntRange("id", 1, 2, true, true);

PrefixQuery query2=new PrefixQuery(new Term("city","n"));

BooleanQuery.Builder booleanQuery=new BooleanQuery.Builder();

booleanQuery.add(query1,BooleanClause.Occur.MUST);

booleanQuery.add(query2,BooleanClause.Occur.MUST);

TopDocs hits=is.search(booleanQuery.build(), 10);

for(ScoreDoc scoreDoc:hits.scoreDocs){

Document doc=is.doc(scoreDoc.doc);

System.out.println(doc.get("id"));

System.out.println(doc.get("city"));

System.out.println(doc.get("desc"));

}

}

}
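Both queries depend on ordering. A prefix query only needs to visit the run of sorted terms that begin with the prefix, and a range query visits the values between two bounds. The sketch below models this with java.util.TreeMap and TreeSet. It is a conceptual illustration with assumed names, not Lucene's implementation (Lucene's NumericRangeQuery in 5.x works on specially encoded numeric terms), and each term maps to a single document for simplicity.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.NavigableMap;
import java.util.NavigableSet;
import java.util.TreeMap;
import java.util.TreeSet;

/** Toy prefix and range lookups over sorted structures (not Lucene's implementation). */
class PrefixRangeSketch {
    /** Like PrefixQuery: IDs of documents whose term starts with the prefix. */
    static List<Integer> prefix(NavigableMap<String, Integer> termToDoc, String prefix) {
        List<Integer> docs = new ArrayList<>();
        // tailMap jumps to the first term >= prefix; because terms are sorted,
        // all terms sharing the prefix follow in one contiguous run
        for (Map.Entry<String, Integer> e : termToDoc.tailMap(prefix, true).entrySet()) {
            if (!e.getKey().startsWith(prefix)) {
                break;
            }
            docs.add(e.getValue());
        }
        return docs;
    }

    /** Like newIntRange(field, lower, upper, true, true): values in [lower, upper]. */
    static List<Integer> range(NavigableSet<Integer> values, int lower, int upper) {
        return new ArrayList<>(values.subSet(lower, true, upper, true));
    }

    public static void main(String[] args) {
        // Mirrors Demo6's data: city term -> document id, and the set of indexed ids
        NavigableMap<String, Integer> cityTerms = new TreeMap<>();
        cityTerms.put("qingdao", 1);
        cityTerms.put("nanjing", 2);
        cityTerms.put("shanghai", 3);
        NavigableSet<Integer> ids = new TreeSet<>(Arrays.asList(1, 2, 3));
        System.out.println(prefix(cityTerms, "n")); // [2]
        System.out.println(range(ids, 1, 2));       // [1, 2]
    }
}
```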

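Combined (Boolean) query

The Boolean query combines the two queries above. Both clauses use MUST, so only documents that match both the ID range and the city prefix are returned (here, id 2, nanjing).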

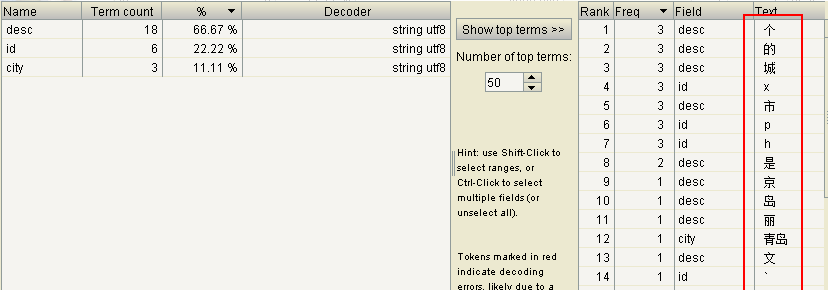
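When both clauses use BooleanClause.Occur.MUST, a document has to appear in the results of both subqueries, so the hit lists are intersected. SHOULD clauses instead behave like a union. Below is a minimal sketch of the classic merge of sorted document-ID lists. It assumes the subquery results have already been computed, and it is not Lucene's actual scorer code.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

/** Toy Boolean combination of two sorted lists of document IDs. */
class BooleanSketch {
    /** MUST + MUST: IDs present in both lists (linear merge of sorted lists). */
    static List<Integer> and(List<Integer> a, List<Integer> b) {
        List<Integer> out = new ArrayList<>();
        int i = 0, j = 0;
        while (i < a.size() && j < b.size()) {
            int x = a.get(i), y = b.get(j);
            if (x == y) {
                out.add(x);
                i++;
                j++;
            } else if (x < y) {
                i++;
            } else {
                j++;
            }
        }
        return out;
    }

    /** SHOULD + SHOULD: IDs present in either list, without duplicates. */
    static List<Integer> or(List<Integer> a, List<Integer> b) {
        List<Integer> out = new ArrayList<>();
        int i = 0, j = 0;
        while (i < a.size() || j < b.size()) {
            if (j >= b.size() || (i < a.size() && a.get(i) < b.get(j))) {
                out.add(a.get(i++));
            } else if (i >= a.size() || b.get(j) < a.get(i)) {
                out.add(b.get(j++));
            } else { // same ID in both lists
                out.add(a.get(i));
                i++;
                j++;
            }
        }
        return out;
    }

    public static void main(String[] args) {
        List<Integer> rangeHits = Arrays.asList(1, 2);  // id in [1, 2]
        List<Integer> prefixHits = Arrays.asList(2);    // city starts with "n"
        System.out.println(and(rangeHits, prefixHits)); // [2]
        System.out.println(or(rangeHits, prefixHits));  // [1, 2]
    }
}
```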

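Chinese word segmentation and highlighting

Chinese word segmentation

Switch the test data to Chinese:

```java
private Integer ids[] = {1, 2, 3};
private String citys[] = {"青岛", "南京", "上海"};
private String descs[] = {
        "青岛是个美丽的城市。",
        "南京是个有文化的城市。",
        "上海是个繁华的城市。"
};
```

StandardAnalyzer does not split these sentences into meaningful words (it emits one token per Chinese character). That is not the result we want, so let's continue.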

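Add the dependency to pom.xml:

```xml
<dependency>
    <groupId>org.apache.lucene</groupId>
    <artifactId>lucene-analyzers-smartcn</artifactId>
    <version>5.3.1</version>
</dependency>
```

This dependency provides SmartChineseAnalyzer, a Chinese analyzer that replaces the standard analyzer.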
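Chinese text has no spaces between words, so a Chinese analyzer has to decide where each word begins and ends. SmartChineseAnalyzer does this with a probabilistic, Hidden Markov Model based approach and a built-in dictionary. The sketch below instead shows a different and simpler technique, forward maximum matching, with a tiny hand-picked dictionary that is purely an assumption for this example. It only illustrates dictionary-based segmentation and is not what smartcn does internally.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.HashSet;
import java.util.List;
import java.util.Set;

/** Forward maximum matching: at each position, take the longest dictionary word. */
class ForwardMaxMatchSketch {
    static List<String> segment(String text, Set<String> dict, int maxWordLen) {
        List<String> words = new ArrayList<>();
        int pos = 0;
        while (pos < text.length()) {
            int end = Math.min(text.length(), pos + maxWordLen);
            // Shrink the window until it is a dictionary word or a single character
            while (end > pos + 1 && !dict.contains(text.substring(pos, end))) {
                end--;
            }
            words.add(text.substring(pos, end));
            pos = end;
        }
        return words;
    }

    public static void main(String[] args) {
        // Hypothetical mini dictionary for illustration only
        Set<String> dict = new HashSet<>(Arrays.asList("南京", "是", "一个", "文化", "的", "城市"));
        System.out.println(segment("南京是一个文化的城市", dict, 4));
        // [南京, 是, 一个, 文化, 的, 城市]
    }
}
```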

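Highlighting

Add the dependency:

```xml
<dependency>
    <groupId>org.apache.lucene</groupId>
    <artifactId>lucene-highlighter</artifactId>
    <version>5.3.1</version>
</dependency>
```

Steps for highlighting:

1. Create a query scorer from the query.
2. Use the scorer to create a fragmenter that picks out the matching fragments.
3. Create an HTML formatter.
4. Create Lucene's Highlighter from the HTML formatter and the query scorer.
5. Set the fragmenter from step 2 on the highlighter.
6. Get a TokenStream for the text from the analyzer.
7. Pass the token stream and the original text to the highlighter to get the highlighted fragment.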
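The core of the Highlighter's output is the original text with every matched term wrapped in the formatter's pre/post tags, and that part can be sketched with the JDK alone. This hypothetical helper matches whole words case-insensitively. It skips the scoring, fragmenting and TokenStream offset handling that Lucene's Highlighter performs. It also only works on space-separated text such as English, because Chinese text would first need segmentation.

```java
import java.util.Arrays;
import java.util.HashSet;
import java.util.Locale;
import java.util.Set;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

/** Toy highlighter: wraps each word found among the query terms in pre/post tags. */
class HighlightSketch {
    private static final Pattern WORD = Pattern.compile("[\\p{L}\\p{N}]+");

    static String highlight(String text, Set<String> queryTerms, String pre, String post) {
        StringBuilder out = new StringBuilder();
        Matcher m = WORD.matcher(text);
        int last = 0;
        while (m.find()) {
            out.append(text, last, m.start()); // copy the text between words unchanged
            String word = m.group();
            if (queryTerms.contains(word.toLowerCase(Locale.ROOT))) {
                out.append(pre).append(word).append(post); // keep the original casing
            } else {
                out.append(word);
            }
            last = m.end();
        }
        out.append(text.substring(last));
        return out.toString();
    }

    public static void main(String[] args) {
        Set<String> terms = new HashSet<>(Arrays.asList("nanjing", "culture"));
        System.out.println(highlight("Nanjing is a city of culture.", terms, "<b>", "</b>"));
        // <b>Nanjing</b> is a city of <b>culture</b>.
    }
}
```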

Demo7.java

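```java
import java.io.StringReader;
import java.nio.file.Paths;

import org.apache.lucene.analysis.Analyzer;
import org.apache.lucene.analysis.TokenStream;
import org.apache.lucene.analysis.cn.smart.SmartChineseAnalyzer;
import org.apache.lucene.document.Document;
import org.apache.lucene.document.Field;
import org.apache.lucene.document.IntField;
import org.apache.lucene.document.StringField;
import org.apache.lucene.document.TextField;
import org.apache.lucene.index.DirectoryReader;
import org.apache.lucene.index.IndexReader;
import org.apache.lucene.index.IndexWriter;
import org.apache.lucene.index.IndexWriterConfig;
import org.apache.lucene.queryparser.classic.QueryParser;
import org.apache.lucene.search.IndexSearcher;
import org.apache.lucene.search.Query;
import org.apache.lucene.search.ScoreDoc;
import org.apache.lucene.search.TopDocs;
import org.apache.lucene.search.highlight.Highlighter;
import org.apache.lucene.search.highlight.QueryScorer;
import org.apache.lucene.search.highlight.SimpleHTMLFormatter;
import org.apache.lucene.search.highlight.SimpleSpanFragmenter;
import org.apache.lucene.store.FSDirectory;
import org.junit.Before;
import org.junit.Test;

public class Demo7 {
    private Integer ids[] = {1, 2, 3};
    private String citys[] = {"青岛", "南京", "上海"};
    // private String descs[] = {
    //         "青岛是个美丽的城市。",
    //         "南京是个有文化的城市。",
    //         "上海是个繁华的城市。"
    // };
    private String descs[] = {"青岛是个美丽的城市。",
            "南京是一个文化的城市南京,简称宁,是江苏省会,地处中国东部地区,长江下游,濒江近海。全市下辖11个区,总面积6597平方公里,2013年建成区面积752.83平方公里,常住人口818.78万,其中城镇人口659.1万人。[1-4] “江南佳丽地,金陵帝王州”,南京拥有着6000多年文明史、近2600年建城史和近500年的建都史,是中国四大古都之一,有“六朝古都”、“十朝都会”之称,是中华文明的重要发祥地,历史上曾数次庇佑华夏之正朔,长期是中国南方的政治、经济、文化中心,拥有厚重的文化底蕴和丰富的历史遗存。[5-7] 南京是国家重要的科教中心,自古以来就是一座崇文重教的城市,有“天下文枢”、“东南第一学”的美誉。截至2013年,南京有高等院校75所,其中211高校8所,仅次于北京上海;国家重点实验室25所、国家重点学科169个、两院院士83人,均居中国第三。[8-10]",
            "上海是个繁华的城市。"};
    private FSDirectory dir;

    /**
     * Regenerates the index before every test.
     */
    @Before
    public void setUp() throws Exception {
        dir = FSDirectory.open(Paths.get("D:\\lucenetemp\\lucene\\demo2\\indexDir"));
        IndexWriter indexWriter = getIndexWriter();
        // Start from an empty index (Demo3 and Demo6 use the same directory)
        indexWriter.deleteAll();
        for (int i = 0; i < ids.length; i++) {
            Document doc = new Document();
            doc.add(new IntField("id", ids[i], Field.Store.YES));
            doc.add(new StringField("city", citys[i], Field.Store.YES));
            doc.add(new TextField("desc", descs[i], Field.Store.YES));
            indexWriter.addDocument(doc);
        }
        indexWriter.close();
    }

    /**
     * Creates an index writer that uses the Chinese analyzer.
     */
    private IndexWriter getIndexWriter() throws Exception {
        // Analyzer analyzer = new StandardAnalyzer();
        Analyzer analyzer = new SmartChineseAnalyzer();
        IndexWriterConfig conf = new IndexWriterConfig(analyzer);
        return new IndexWriter(dir, conf);
    }

    /**
     * Empty test: running it triggers setUp, which builds the index for inspection with Luke.
     */
    @Test
    public void testIndexCreate() throws Exception {
    }

    /**
     * Tests highlighting.
     */
    @Test
    public void testHeight() throws Exception {
        IndexReader reader = DirectoryReader.open(dir);
        IndexSearcher searcher = new IndexSearcher(reader);
        SmartChineseAnalyzer analyzer = new SmartChineseAnalyzer();
        QueryParser parser = new QueryParser("desc", analyzer);
        // Query query = parser.parse("南京文化");
        Query query = parser.parse("南京文明");
        TopDocs hits = searcher.search(query, 100);
        // 1. Scorer that rates text fragments by the query terms they contain
        QueryScorer queryScorer = new QueryScorer(query);
        // 2. Fragmenter that splits the text into fragments without breaking matched spans
        SimpleSpanFragmenter fragmenter = new SimpleSpanFragmenter(queryScorer);
        // 3. HTML markup wrapped around each highlighted term
        SimpleHTMLFormatter htmlFormatter = new SimpleHTMLFormatter("<span style='color:red'><b>", "</b></span>");
        // 4. The highlighter itself
        Highlighter highlighter = new Highlighter(htmlFormatter, queryScorer);
        // 5. Use the fragmenter from step 2
        highlighter.setTextFragmenter(fragmenter);
        for (ScoreDoc scoreDoc : hits.scoreDocs) {
            Document doc = searcher.doc(scoreDoc.doc);
            String desc = doc.get("desc");
            if (desc != null) {
                // 6. A TokenStream is the stream of tokens the analyzer produces from the field text
                TokenStream tokenStream = analyzer.tokenStream("desc", new StringReader(desc));
                // 7. Get the best-scoring fragment with the matched terms highlighted
                System.out.println("Highlighted fragment: " + highlighter.getBestFragment(tokenStream, desc));
            }
            System.out.println("Full text: " + desc);
        }
        reader.close();
    }
}
```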

Complete example:

pom.xml (all JAR dependencies):

```xml
<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/maven-v4_0_0.xsd">
    <modelVersion>4.0.0</modelVersion>
    <groupId>com.javaxl</groupId>
    <artifactId>javaxl_lunece_freemarker</artifactId>
    <packaging>war</packaging>
    <version>0.0.1-SNAPSHOT</version>
    <name>javaxl_lunece_freemarker Maven Webapp</name>
    <url>http://maven.apache.org</url>

    <properties>
        <httpclient.version>4.5.2</httpclient.version>
        <jsoup.version>1.10.1</jsoup.version>
        <!-- <lucene.version>7.1.0</lucene.version> -->
        <lucene.version>5.3.1</lucene.version>
        <ehcache.version>2.10.3</ehcache.version>
        <junit.version>4.12</junit.version>
        <log4j.version>1.2.16</log4j.version>
        <mysql.version>5.1.44</mysql.version>
        <fastjson.version>1.2.47</fastjson.version>
        <struts2.version>2.5.16</struts2.version>
        <servlet.version>4.0.1</servlet.version>
        <jstl.version>1.2</jstl.version>
        <standard.version>1.1.2</standard.version>
        <tomcat-jsp-api.version>8.0.47</tomcat-jsp-api.version>
    </properties>

    <dependencies>
        <dependency>
            <groupId>junit</groupId>
            <artifactId>junit</artifactId>
            <version>${junit.version}</version>
            <scope>test</scope>
        </dependency>
        <!-- JDBC driver -->
        <dependency>
            <groupId>mysql</groupId>
            <artifactId>mysql-connector-java</artifactId>
            <version>${mysql.version}</version>
        </dependency>
        <!-- HttpClient -->
        <dependency>
            <groupId>org.apache.httpcomponents</groupId>
            <artifactId>httpclient</artifactId>
            <version>${httpclient.version}</version>
        </dependency>
        <!-- jsoup -->
        <dependency>
            <groupId>org.jsoup</groupId>
            <artifactId>jsoup</artifactId>
            <version>${jsoup.version}</version>
        </dependency>
        <!-- Logging -->
        <dependency>
            <groupId>log4j</groupId>
            <artifactId>log4j</artifactId>
            <version>${log4j.version}</version>
        </dependency>
        <!-- Ehcache -->
        <dependency>
            <groupId>net.sf.ehcache</groupId>
            <artifactId>ehcache</artifactId>
            <version>${ehcache.version}</version>
        </dependency>
        <dependency>
            <groupId>com.alibaba</groupId>
            <artifactId>fastjson</artifactId>
            <version>${fastjson.version}</version>
        </dependency>
        <dependency>
            <groupId>org.apache.struts</groupId>
            <artifactId>struts2-core</artifactId>
            <version>${struts2.version}</version>
        </dependency>
        <dependency>
            <groupId>javax.servlet</groupId>
            <artifactId>javax.servlet-api</artifactId>
            <version>${servlet.version}</version>
            <scope>provided</scope>
        </dependency>
        <dependency>
            <groupId>org.apache.lucene</groupId>
            <artifactId>lucene-core</artifactId>
            <version>${lucene.version}</version>
        </dependency>
        <dependency>
            <groupId>org.apache.lucene</groupId>
            <artifactId>lucene-queryparser</artifactId>
            <version>${lucene.version}</version>
        </dependency>
        <!-- <dependency> <groupId>org.apache.lucene</groupId> <artifactId>lucene-analyzers-common</artifactId>
             <version>${lucene.version}</version> </dependency> -->
        <dependency>
            <groupId>org.apache.lucene</groupId>
            <artifactId>lucene-analyzers-smartcn</artifactId>
            <version>${lucene.version}</version>
        </dependency>
        <dependency>
            <groupId>org.apache.lucene</groupId>
            <artifactId>lucene-highlighter</artifactId>
            <version>${lucene.version}</version>
        </dependency>
        <!-- 5.3 jstl, standard -->
        <dependency>
            <groupId>jstl</groupId>
            <artifactId>jstl</artifactId>
            <version>${jstl.version}</version>
        </dependency>
        <dependency>
            <groupId>taglibs</groupId>
            <artifactId>standard</artifactId>
            <version>${standard.version}</version>
        </dependency>
        <!-- 5.4 tomcat-jsp-api -->
        <dependency>
            <groupId>org.apache.tomcat</groupId>
            <artifactId>tomcat-jsp-api</artifactId>
            <version>${tomcat-jsp-api.version}</version>
        </dependency>
    </dependencies>

    <build>
        <finalName>javaxl_lunece_freemarker</finalName>
        <plugins>
            <plugin>
                <groupId>org.apache.maven.plugins</groupId>
                <artifactId>maven-compiler-plugin</artifactId>
                <version>3.7.0</version>
                <configuration>
                    <source>1.8</source>
                    <target>1.8</target>
                    <encoding>UTF-8</encoding>
                </configuration>
            </plugin>
        </plugins>
    </build>
</project>
```

BlogAction.java

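```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

import javax.servlet.http.HttpServletRequest;

import org.apache.commons.lang3.StringUtils;
import org.apache.lucene.analysis.cn.smart.SmartChineseAnalyzer;
import org.apache.lucene.document.Document;
import org.apache.lucene.index.DirectoryReader;
import org.apache.lucene.queryparser.classic.QueryParser;
import org.apache.lucene.search.IndexSearcher;
import org.apache.lucene.search.Query;
import org.apache.lucene.search.ScoreDoc;
import org.apache.lucene.search.TopDocs;
import org.apache.lucene.search.highlight.Highlighter;
import org.apache.lucene.store.Directory;
import org.apache.struts2.ServletActionContext;

// BlogDao and PropertiesUtil are project classes that are not shown in this article
public class BlogAction {
    private String title;
    private BlogDao blogDao = new BlogDao();

    public String getTitle() {
        return title;
    }

    public void setTitle(String title) {
        this.title = title;
    }

    public String list() {
        Directory directory = null;
        DirectoryReader reader = null;
        try {
            HttpServletRequest request = ServletActionContext.getRequest();
            if (StringUtils.isBlank(title)) {
                // No keyword: list the blogs straight from the database
                List<Map<String, Object>> blogList = this.blogDao.list(title, null);
                request.setAttribute("blogList", blogList);
            } else {
                directory = LuceneUtil.getDirectory(PropertiesUtil.getValue("indexPath"));
                reader = LuceneUtil.getDirectoryReader(directory);
                IndexSearcher searcher = LuceneUtil.getIndexSearcher(reader);
                SmartChineseAnalyzer analyzer = new SmartChineseAnalyzer();
                // Analyze the search phrase and match its terms against the index's term dictionary
                Query query = new QueryParser("title", analyzer).parse(title);
                Highlighter highlighter = LuceneUtil.getHighlighter(query, "title");
                TopDocs topDocs = searcher.search(query, 100);
                // Collect the matching documents, best score first
                List<Map<String, Object>> blogList = new ArrayList<>();
                for (ScoreDoc scoreDoc : topDocs.scoreDocs) {
                    Map<String, Object> map = new HashMap<>();
                    Document doc = searcher.doc(scoreDoc.doc);
                    map.put("id", doc.get("id"));
                    String titleHighlighter = doc.get("title");
                    if (StringUtils.isNotBlank(titleHighlighter)) {
                        // getBestFragment returns null when no term in the text matches
                        String fragment = highlighter.getBestFragment(analyzer, "title", titleHighlighter);
                        if (fragment != null) {
                            titleHighlighter = fragment;
                        }
                    }
                    map.put("title", titleHighlighter);
                    map.put("url", doc.get("url"));
                    blogList.add(map);
                }
                request.setAttribute("blogList", blogList);
            }
        } catch (Exception e) {
            e.printStackTrace();
        } finally {
            // Release the reader and directory opened for this request
            LuceneUtil.close(reader, directory);
        }
        return "blogList";
    }
}
```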

IndexStarter.java

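```java
import java.io.IOException;
import java.nio.file.Paths;
import java.sql.SQLException;
import java.util.List;
import java.util.Map;

import org.apache.lucene.analysis.cn.smart.SmartChineseAnalyzer;
import org.apache.lucene.document.Document;
import org.apache.lucene.document.Field;
import org.apache.lucene.document.StringField;
import org.apache.lucene.document.TextField;
import org.apache.lucene.index.IndexWriter;
import org.apache.lucene.index.IndexWriterConfig;
import org.apache.lucene.index.IndexWriterConfig.OpenMode;
import org.apache.lucene.store.Directory;
import org.apache.lucene.store.FSDirectory;

/**
 * Builds the Lucene index.
 *
 * @author Administrator
 * 1. Build the index with IndexWriter
 * 2. Read the index files and get the matching fragments
 * 3. Highlight the matching fragments
 */
public class IndexStarter {
    private static BlogDao blogDao = new BlogDao();

    public static void main(String[] args) {
        IndexWriterConfig conf = new IndexWriterConfig(new SmartChineseAnalyzer());
        // Rebuild from scratch so running this twice doesn't index every blog twice
        conf.setOpenMode(OpenMode.CREATE);
        // try-with-resources closes (and commits) the writer first, then the directory
        try (Directory d = FSDirectory.open(Paths.get(PropertiesUtil.getValue("indexPath")));
             IndexWriter indexWriter = new IndexWriter(d, conf)) {
            // Index every blog row in the database
            List<Map<String, Object>> list = blogDao.list(null, null);
            for (Map<String, Object> map : list) {
                Document doc = new Document();
                doc.add(new StringField("id", (String) map.get("id"), Field.Store.YES));
                // TextField tokenizes a sentence, e.g. "java培训机构" (Java training school), into words
                doc.add(new TextField("title", (String) map.get("title"), Field.Store.YES));
                doc.add(new StringField("url", (String) map.get("url"), Field.Store.YES));
                indexWriter.addDocument(doc);
            }
        } catch (IOException | InstantiationException | IllegalAccessException | SQLException e) {
            e.printStackTrace();
        }
    }
}
```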

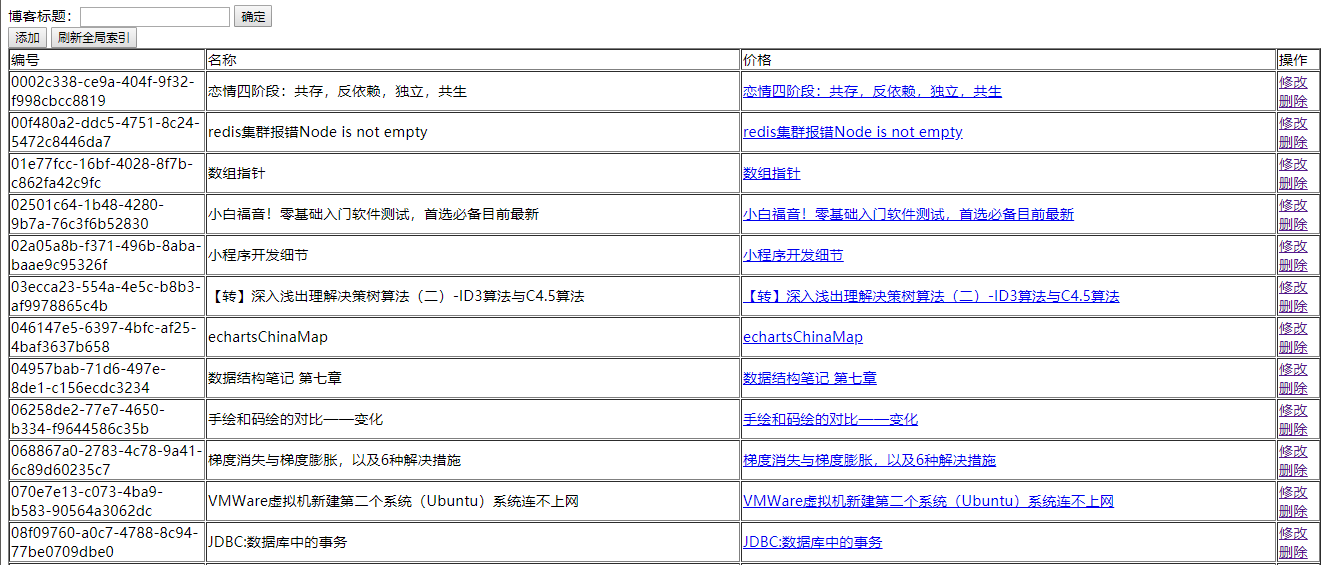

Result

Thanks for reading!
