Kubernetes Study Notes 3: Initializing a k8s Cluster with kubeadm

一、k8s集群

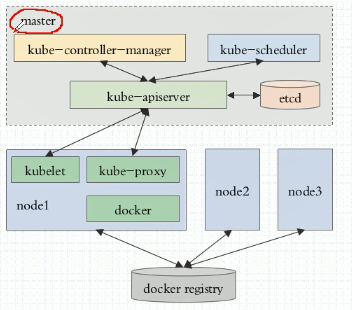

1、k8s整体架构图

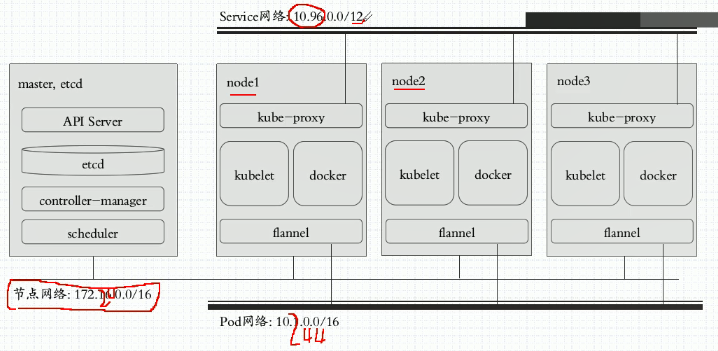

2、k8s网络架构图

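II. Steps for installing k8s with kubeadm

1. master and nodes: install kubelet, kubeadm and docker

2. master: kubeadm init

3. each node: kubeadm join

4. There are three ways to deploy k8s

a. The traditional way: every k8s component runs as a system-level daemon, that is, the four components on the master node and the three components on each node. Every step has to be done by hand, including issuing the certificates, which is tedious and complex.

b. With an ansible playbook.

c. With kubeadm, which deploys all components as pods (these are static pods). Only kubelet, docker and flannel need to be installed.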
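Static pods are defined by manifest files that kubelet reads from a directory on the host (the kubelet package lists /etc/kubernetes/manifests as its manifest directory). The sketch below only illustrates that idea: `list_static_pods` is a hypothetical helper, and the demo directory is a temporary stand-in filled with the four manifest names that kubeadm init writes in step 10.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the name of every static pod manifest in a directory.
list_static_pods() {
  local dir="$1" f
  for f in "$dir"/*.yaml; do
    [ -e "$f" ] || continue        # the glob matched nothing
    basename "$f" .yaml
  done
}

# Demo on a throwaway directory instead of /etc/kubernetes/manifests.
demo="$(mktemp -d)"
touch "$demo/etcd.yaml" "$demo/kube-apiserver.yaml" \
      "$demo/kube-controller-manager.yaml" "$demo/kube-scheduler.yaml"
list_static_pods "$demo"

# On a real master node:
#   list_static_pods /etc/kubernetes/manifests
```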

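III. Deploying k8s

1. At the time of writing, the newest docker release officially validated by k8s is 17.03 (newer releases still work, but kubeadm prints a warning, as seen in step 10)

2. Node plan

master node: 192.168.10.10

node1: 192.168.10.11

node2: 192.168.10.12

k8s version: 1.11

3. Add hosts entries on every node and configure passwordless SSH login from the master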

[root@localhost ~]# ssh-keygen # generate this node's key pair

Generating public/private rsa key pair.

Enter file in which to save the key (/root/.ssh/id_rsa):

Created directory '/root/.ssh'.

Enter passphrase (empty for no passphrase):

Enter same passphrase again:

Your identification has been saved in /root/.ssh/id_rsa.

Your public key has been saved in /root/.ssh/id_rsa.pub.

The key fingerprint is:

SHA256:1Ww6zH+E56Kmb2/+2tGguLm+a0dJtyEA+alJcoapTIE root@localhost.localdomain

The key's randomart image is:

+---[RSA ]----+

| . .o |

| E . . .o |

| . o ..o+ |

| . + ++ooo.o |

| o . =So=..++o |

| o o +o=.o |

| ..+ + .|

| ++o+ . |

| .BXO+oo |

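+----[SHA256]-----+

[root@localhost ~]# sed -i '35a StrictHostKeyChecking no' /etc/ssh/ssh_config # disable the host key confirmation prompt on login

# Distribute the public key; if the sshpass command is missing, install it with yum (the password that follows -p is omitted here)

[root@localhost ~]# sshpass -p ssh-copy-id -i /root/.ssh/id_rsa.pub 192.168.10.11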

/usr/bin/ssh-copy-id: INFO: Source of key(s) to be installed: "/root/.ssh/id_rsa.pub"

/usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed

/usr/bin/ssh-copy-id: INFO: key(s) remain to be installed -- if you are prompted now it is to install the new keys

Number of key(s) added:

Now try logging into the machine, with: "ssh '192.168.10.11'"

and check to make sure that only the key(s) you wanted were added.

[root@localhost ~]# sshpass -p ssh-copy-id -i /root/.ssh/id_rsa.pub 192.168.10.12

/usr/bin/ssh-copy-id: INFO: Source of key(s) to be installed: "/root/.ssh/id_rsa.pub"

/usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed

/usr/bin/ssh-copy-id: INFO: key(s) remain to be installed -- if you are prompted now it is to install the new keys

Number of key(s) added:

Now try logging into the machine, with: "ssh '192.168.10.12'"

and check to make sure that only the key(s) you wanted were added.

[root@localhost ~]# ssh 192.168.10.11

Last login: Wed May :: from 192.168.10.1

[root@localhost ~]# exit

logout

Connection to 192.168.10.11 closed.
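This step also calls for hosts entries, which the transcript does not show. A minimal sketch, assuming the host names k8smaster, k8snode1 and k8snode2 that appear in later prompts and in the kubectl output; `hosts_entries` is a hypothetical helper, not part of any tool.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print /etc/hosts lines for the three nodes of the node plan.
hosts_entries() {
  cat <<'EOF'
192.168.10.10 k8smaster
192.168.10.11 k8snode1
192.168.10.12 k8snode2
EOF
}

# Preview the entries.
hosts_entries

# To apply them, run as root on every node:
#   hosts_entries >> /etc/hosts
```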

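4. Synchronize the time on all nodes, and stop firewalld and disable it at boot

Open sessions to all nodes in Xshell so that the same commands are sent to every node

[root@localhost ~]# date -s "2019/5/8 10:39:00" # set the time

Wed May :: CST

[root@localhost ~]# hwclock -w # write it to the hardware clock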
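The firewalld part of this step is not shown in the transcript. The sketch below prints, and optionally runs, the commands one might use for it. Everything here is an assumption rather than the author's procedure: the `DRY_RUN` switch and the `run` and `prepare_node` helpers are hypothetical, and using `ntpdate` against pool.ntp.org in place of setting the clock by hand presumes that ntpdate is installed and the node has internet access.

```shell
#!/usr/bin/env bash
# DRY_RUN=1 (the default) only prints the commands.
# Set DRY_RUN=0 and run as root to execute them.
DRY_RUN="${DRY_RUN:-1}"

run() {
  if [ "$DRY_RUN" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

prepare_node() {
  run systemctl stop firewalld       # stop the firewall now
  run systemctl disable firewalld    # keep it off after a reboot
  run ntpdate pool.ntp.org           # assumption: sync from a public NTP pool
  run hwclock -w                     # persist the time to the hardware clock
}

prepare_node
```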

5. Configure the Aliyun docker repository on every node

[root@localhost ~]# cd /etc/yum.repos.d/

[root@localhost yum.repos.d]# wget https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

---- ::-- https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

Resolving mirrors.aliyun.com (mirrors.aliyun.com)... 118.112.14.225, 118.112.14.10, 118.112.14.8, ...

Connecting to mirrors.aliyun.com (mirrors.aliyun.com)|118.112.14.225|:... connected.

HTTP request sent, awaiting response... OK

Length: (.6K) [application/octet-stream]

Saving to: ‘docker-ce.repo’

‘docker-ce.repo’ saved

6. Configure the Aliyun k8s repository on every node

[root@localhost yum.repos.d]# cat > /etc/yum.repos.d/kubernetes.repo <<EOF

> [kubernetes]

> name=Kubernetes Repo

> baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

> gpgcheck=0

> gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg

> enabled=1

> EOF

# Import the yum-key and the rpm-package-key

[root@localhost ~]# wget https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg

---- ::-- https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg

Resolving mirrors.aliyun.com (mirrors.aliyun.com)... 118.112.14.11, 118.112.14.224, 118.112.14.10, ...

Connecting to mirrors.aliyun.com (mirrors.aliyun.com)|118.112.14.11|:... connected.

HTTP request sent, awaiting response... OK

Length: (.8K) [application/octet-stream]

Saving to: ‘yum-key.gpg’

‘yum-key.gpg’ saved

[root@localhost ~]# rpm --import yum-key.gpg

[root@localhost ~]# wget https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

---- ::-- https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

Resolving mirrors.aliyun.com (mirrors.aliyun.com)... 118.112.14.7, 118.112.14.224, 118.112.14.225, ...

Connecting to mirrors.aliyun.com (mirrors.aliyun.com)|118.112.14.7|:... connected.

HTTP request sent, awaiting response... OK

Length: [application/octet-stream]

Saving to: ‘rpm-package-key.gpg’

‘rpm-package-key.gpg’ saved

[root@localhost ~]# rpm --import rpm-package-key.gpg

7. Install the packages on every node

[root@localhost /]# yum install -y docker-ce-18.06.0.ce-3.el7 kubelet-1.11.1 kubeadm-1.11.1 kubectl-1.11.1 kubernetes-cni-0.6.0-0.x86_64

# To install a different docker version, write docker-ce-<version> instead
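kubelet, kubeadm and kubectl are all pinned to the same version in the install command. A small sketch of deriving the three package names from one version string, so they cannot drift apart; `k8s_packages` is a hypothetical helper and the script only prints the resulting command.

```shell
#!/usr/bin/env bash
# Hypothetical helper: package names for one Kubernetes version.
k8s_packages() {
  local version="$1"
  echo "kubelet-$version kubeadm-$version kubectl-$version"
}

# Print (not run) the install command for the version used in this article.
echo "yum install -y docker-ce-18.06.0.ce-3.el7 $(k8s_packages 1.11.1)"
```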

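8. Start docker. Docker pulls the required images from their registries, but those registries cannot be reached directly from mainland China, so the images would otherwise have to be imported locally first. Here a proxy provided by someone else is used for the download instead. Once the images are loaded, comment the proxy settings out again and go back to pulling other images from the domestic mirrors.

Edit /usr/lib/systemd/system/docker.service and add the following under [Service]:

# 192.168.10.1:1080 is the local proxy address and port

Environment="HTTPS_PROXY=http://192.168.10.1:1080"

Environment="HTTP_PROXY=http://192.168.10.1:1080"

Environment="NO_PROXY=127.0.0.0/8,192.168.10.0/24"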

[root@localhost ~]# systemctl daemon-reload

[root@localhost ~]# systemctl restart docker

[root@localhost ~]# docker info

Containers:

Running:

Paused:

Stopped:

Images:

Server Version: 18.09.

Storage Driver: overlay2

Backing Filesystem: xfs

Supports d_type: true

Native Overlay Diff: true

Logging Driver: json-file

Cgroup Driver: cgroupfs

Plugins:

Volume: local

Network: bridge host macvlan null overlay

Log: awslogs fluentd gcplogs gelf journald json-file local logentries splunk syslog

Swarm: inactive

Runtimes: runc

Default Runtime: runc

Init Binary: docker-init

containerd version: bb71b10fd8f58240ca47fbb579b9d1028eea7c84

runc version: 2b18fe1d885ee5083ef9f0838fee39b62d653e30

init version: fec3683

Security Options:

seccomp

Profile: default

Kernel Version: 3.10.-.el7.x86_64

Operating System: CentOS Linux (Core)

OSType: linux

Architecture: x86_64

CPUs:

Total Memory: .781GiB

Name: k8smaster

ID: RW6C:KASL:OFHE:QOAY:ZUIY:UYCF:KYA7:ACRY:7MDT:WIPV:2G4R:FV3H

Docker Root Dir: /var/lib/docker

Debug Mode (client): false

Debug Mode (server): false

HTTPS Proxy: http://www.ik8s.io:10080

No Proxy: 127.0.0.0/,172.20.0.0/

Registry: https://index.docker.io/v1/

Labels:

Experimental: false

Insecure Registries:

127.0.0.0/

Live Restore Enabled: false

Product License: Community Engine
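As an alternative to editing the packaged unit file, the proxy settings from this step could live in a systemd drop-in, which survives package upgrades and is easy to remove once the images are pulled. This is a sketch, not the author's method: `docker_proxy_dropin` is a hypothetical helper and the file name http-proxy.conf is an assumption (any *.conf name in the drop-in directory works).

```shell
#!/usr/bin/env bash
# Hypothetical helper: print a drop-in that sets proxy variables for docker.service.
# Arguments: proxy URL, comma-separated NO_PROXY list.
docker_proxy_dropin() {
  local proxy="$1" no_proxy="$2"
  printf '[Service]\n'
  printf 'Environment="HTTP_PROXY=%s"\n' "$proxy"
  printf 'Environment="HTTPS_PROXY=%s"\n' "$proxy"
  printf 'Environment="NO_PROXY=%s"\n' "$no_proxy"
}

# Preview with the values used in this step.
docker_proxy_dropin "http://192.168.10.1:1080" "127.0.0.0/8,192.168.10.0/24"

# To apply (as root):
#   mkdir -p /etc/systemd/system/docker.service.d
#   docker_proxy_dropin "http://192.168.10.1:1080" "127.0.0.0/8,192.168.10.0/24" \
#     > /etc/systemd/system/docker.service.d/http-proxy.conf
#   systemctl daemon-reload && systemctl restart docker
```

Deleting the drop-in file and reloading systemd then takes the place of commenting the lines out.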

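9. Enable kubelet at boot on all nodes (do not start it yet, starting it now would fail), and enable docker at boot

[root@localhost ~]# rpm -ql kubelet

/etc/kubernetes/manifests # manifest directory

/etc/sysconfig/kubelet # configuration file

/usr/bin/kubelet # main binary

/usr/lib/systemd/system/kubelet.service # systemd unit file

[root@localhost ~]# cat /etc/sysconfig/kubelet

KUBELET_EXTRA_ARGS= # early k8s versions refused to install or start with swap enabled; this check can now be overridden by setting a flag in this variable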

[root@localhost ~]# systemctl enable kubelet.service

Created symlink from /etc/systemd/system/multi-user.target.wants/kubelet.service to /usr/lib/systemd/system/kubelet.service.

[root@localhost ~]# systemctl enable docker.service

Created symlink from /etc/systemd/system/multi-user.target.wants/docker.service to /usr/lib/systemd/system/docker.service.
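The swap override mentioned above is set in /etc/sysconfig/kubelet as `KUBELET_EXTRA_ARGS="--fail-swap-on=false"`. A minimal sketch of setting that variable idempotently; `set_kubelet_extra_args` is a hypothetical helper, and the demo works on a temporary file rather than on the real configuration.

```shell
#!/usr/bin/env bash
# Hypothetical helper: set KUBELET_EXTRA_ARGS in a sysconfig-style file,
# replacing any existing assignment and keeping all other lines.
set_kubelet_extra_args() {
  local file="$1" args="$2" tmp
  tmp="$(mktemp)"
  if [ -f "$file" ]; then
    # grep exits non-zero when nothing is left; that is fine here.
    grep -v '^KUBELET_EXTRA_ARGS=' "$file" > "$tmp" || true
  fi
  printf 'KUBELET_EXTRA_ARGS="%s"\n' "$args" >> "$tmp"
  cat "$tmp" > "$file"
  rm -f "$tmp"
}

# Demo on a throwaway copy that starts out like the packaged file.
demo="$(mktemp)"
echo 'KUBELET_EXTRA_ARGS=' > "$demo"
set_kubelet_extra_args "$demo" "--fail-swap-on=false"
cat "$demo"

# On a real node (as root):
#   set_kubelet_extra_args /etc/sysconfig/kubelet "--fail-swap-on=false"
```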

10. Initialize the k8s master node

[root@localhost ~]# cat /etc/sysconfig/kubelet

KUBELET_EXTRA_ARGS="--fail-swap-on=false"

[root@k8smaster ~]# kubeadm init --kubernetes-version=v1.11.1 --pod-network-cidr=10.244.0.0/16 --service-cidr=10.96.0.0/12 --ignore-preflight-errors=Swap

[init] using Kubernetes version: v1.11.1

[preflight] running pre-flight checks

[WARNING Swap]: running with swap on is not supported. Please disable swap

I0508 ::18.226228 kernel_validator.go:] Validating kernel version

I0508 ::18.226357 kernel_validator.go:] Validating kernel config

[WARNING SystemVerification]: docker version is greater than the most recently validated version. Docker version: 18.06.-ce. Max validated version: 17.03

[preflight/images] Pulling images required for setting up a Kubernetes cluster

[preflight/images] This might take a minute or two, depending on the speed of your internet connection

[preflight/images] You can also perform this action in beforehand using 'kubeadm config images pull'

[kubelet] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[preflight] Activating the kubelet service

[certificates] Generated ca certificate and key.

[certificates] Generated apiserver certificate and key.

[certificates] apiserver serving cert is signed for DNS names [k8smaster kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.96.0.1 192.168.10.10]

[certificates] Generated apiserver-kubelet-client certificate and key.

[certificates] Generated sa key and public key.

[certificates] Generated front-proxy-ca certificate and key.

[certificates] Generated front-proxy-client certificate and key.

[certificates] Generated etcd/ca certificate and key.

[certificates] Generated etcd/server certificate and key.

[certificates] etcd/server serving cert is signed for DNS names [k8smaster localhost] and IPs [127.0.0.1 ::]

[certificates] Generated etcd/peer certificate and key.

[certificates] etcd/peer serving cert is signed for DNS names [k8smaster localhost] and IPs [192.168.10.10 127.0.0.1 ::]

[certificates] Generated etcd/healthcheck-client certificate and key.

[certificates] Generated apiserver-etcd-client certificate and key.

[certificates] valid certificates and keys now exist in "/etc/kubernetes/pki"

[kubeconfig] Wrote KubeConfig file to disk: "/etc/kubernetes/admin.conf"

[kubeconfig] Wrote KubeConfig file to disk: "/etc/kubernetes/kubelet.conf"

[kubeconfig] Wrote KubeConfig file to disk: "/etc/kubernetes/controller-manager.conf"

[kubeconfig] Wrote KubeConfig file to disk: "/etc/kubernetes/scheduler.conf"

[controlplane] wrote Static Pod manifest for component kube-apiserver to "/etc/kubernetes/manifests/kube-apiserver.yaml"

[controlplane] wrote Static Pod manifest for component kube-controller-manager to "/etc/kubernetes/manifests/kube-controller-manager.yaml"

[controlplane] wrote Static Pod manifest for component kube-scheduler to "/etc/kubernetes/manifests/kube-scheduler.yaml"

[etcd] Wrote Static Pod manifest for a local etcd instance to "/etc/kubernetes/manifests/etcd.yaml"

[init] waiting for the kubelet to boot up the control plane as Static Pods from directory "/etc/kubernetes/manifests"

[init] this might take a minute or longer if the control plane images have to be pulled

[apiclient] All control plane components are healthy after 45.011749 seconds

[uploadconfig] storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config-1.11" in namespace kube-system with the configuration for the kubelets in the cluster

[markmaster] Marking the node k8smaster as master by adding the label "node-role.kubernetes.io/master=''"

[markmaster] Marking the node k8smaster as master by adding the taints [node-role.kubernetes.io/master:NoSchedule]

[patchnode] Uploading the CRI Socket information "/var/run/dockershim.sock" to the Node API object "k8smaster" as an annotation

[bootstraptoken] using token: fgtp9x.z8gzf2coiouxzr1e

[bootstraptoken] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstraptoken] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstraptoken] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstraptoken] creating the "cluster-info" ConfigMap in the "kube-public" namespace

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes master has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

You can now join any number of machines by running the following on each node

as root:

kubeadm join 192.168.10.10:6443 --token fgtp9x.z8gzf2coiouxzr1e --discovery-token-ca-cert-hash sha256:eec6e45b46868097fb6dc5c1007a4ed801f67950b5ea4949d9169fcde6d018cc

# --token is the shared secret for joining the cluster and --discovery-token-ca-cert-hash is the hash of the cluster's CA certificate; run this command on any other node to join it to this k8s cluster
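The value after --discovery-token-ca-cert-hash is a SHA-256 digest of the cluster CA's public key, so it can be recomputed from the CA certificate if the init output is lost. The pipeline below follows the one given in the kubeadm documentation; `ca_cert_hash` is a hypothetical wrapper around it, and the demo runs against a throwaway self-signed certificate (assuming openssl is installed) instead of /etc/kubernetes/pki/ca.crt.

```shell
#!/usr/bin/env bash
# Hypothetical wrapper: hash of a CA certificate's public key,
# as hex digits without the "sha256:" prefix.
ca_cert_hash() {
  local crt="$1"
  openssl x509 -pubkey -in "$crt" \
    | openssl rsa -pubin -outform der 2>/dev/null \
    | openssl dgst -sha256 -hex \
    | sed 's/^.* //'
}

# Demo: create a short-lived self-signed certificate and hash it.
tmp="$(mktemp -d)"
openssl req -x509 -newkey rsa:2048 -nodes -days 1 -subj "/CN=demo-ca" \
  -keyout "$tmp/ca.key" -out "$tmp/ca.crt" 2>/dev/null
echo "sha256:$(ca_cert_hash "$tmp/ca.crt")"

# On the master node:
#   echo "sha256:$(ca_cert_hash /etc/kubernetes/pki/ca.crt)"
```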

Check the images that were pulled

[root@k8smaster ~]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

k8s.gcr.io/kube-proxy-amd64 v1.11.1 d5c25579d0ff months ago .8MB

k8s.gcr.io/kube-scheduler-amd64 v1.11.1 272b3a60cd68 months ago .8MB

k8s.gcr.io/kube-controller-manager-amd64 v1.11.1 52096ee87d0e months ago 155MB

k8s.gcr.io/kube-apiserver-amd64 v1.11.1 816332bd9d11 months ago 187MB

k8s.gcr.io/coredns 1.1. b3b94275d97c months ago .6MB

k8s.gcr.io/etcd-amd64 3.2. b8df3b177be2 months ago 219MB

k8s.gcr.io/pause 3.1 da86e6ba6ca1 months ago 742kB

Run the commands suggested by the init output

[root@k8smaster ~]# mkdir -p $HOME/.kube

[root@k8smaster ~]# cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

Check the cluster status. The node is in the NotReady state because flannel has not been installed yet

[root@k8smaster ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8smaster NotReady master 15h v1.11.1

[root@k8smaster ~]# kubectl get componentstatus # show the status of the cluster components; componentstatus can be abbreviated to cs

NAME STATUS MESSAGE ERROR

controller-manager Healthy ok

scheduler Healthy ok

etcd- Healthy {"health": "true"}
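While waiting for the node to leave NotReady, it can help to filter the `kubectl get nodes` output instead of reading it by eye. A minimal sketch: `not_ready_nodes` is a hypothetical helper that reads the table on stdin, and the sample input is the output shown above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the names of nodes whose STATUS column
# is not exactly "Ready" (the header row is skipped).
not_ready_nodes() {
  awk 'NR > 1 && $2 != "Ready" { print $1 }'
}

# Demo with the output shown above.
sample='NAME STATUS ROLES AGE VERSION
k8smaster NotReady master 15h v1.11.1'
printf '%s\n' "$sample" | not_ready_nodes

# Against a live cluster:
#   kubectl get nodes | not_ready_nodes
```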

11. Deploy flannel on the master node

[root@k8smaster ~]# kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

podsecuritypolicy.extensions/psp.flannel.unprivileged created

clusterrole.rbac.authorization.k8s.io/flannel created

clusterrolebinding.rbac.authorization.k8s.io/flannel created

serviceaccount/flannel created

configmap/kube-flannel-cfg created

daemonset.extensions/kube-flannel-ds-amd64 created

daemonset.extensions/kube-flannel-ds-arm64 created

daemonset.extensions/kube-flannel-ds-arm created

daemonset.extensions/kube-flannel-ds-ppc64le created

daemonset.extensions/kube-flannel-ds-s390x created

After a while, check whether the flannel image has been pulled

[root@k8smaster ~]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

quay.io/coreos/flannel v0.11.0-amd64 ff281650a721 months ago .6MB

k8s.gcr.io/kube-proxy-amd64 v1.11.1 d5c25579d0ff months ago .8MB

k8s.gcr.io/kube-apiserver-amd64 v1.11.1 816332bd9d11 months ago 187MB

k8s.gcr.io/kube-controller-manager-amd64 v1.11.1 52096ee87d0e months ago 155MB

k8s.gcr.io/kube-scheduler-amd64 v1.11.1 272b3a60cd68 months ago .8MB

k8s.gcr.io/coredns 1.1. b3b94275d97c months ago .6MB

k8s.gcr.io/etcd-amd64 3.2. b8df3b177be2 months ago 219MB

k8s.gcr.io/pause 3.1 da86e6ba6ca1 months ago 742kB

# The node status is now Ready

[root@k8smaster ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8smaster Ready master 15h v1.11.1

# List the pods; all of them run in the kube-system namespace

[root@k8smaster ~]# kubectl get pods --all-namespaces

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system coredns-78fcdf6894-6qs64 / Running 15h

kube-system coredns-78fcdf6894-bhzf4 / Running 15h

kube-system etcd-k8smaster / Running 1m

kube-system kube-apiserver-k8smaster / Running 1m

kube-system kube-controller-manager-k8smaster / Running 1m

kube-system kube-flannel-ds-amd64-nskmt / Running 5m

kube-system kube-proxy-fknmj / Running 15h

kube-system kube-scheduler-k8smaster / Running 1m

# List all namespaces

[root@k8smaster ~]# kubectl get namespaces # namespaces can be abbreviated to ns

NAME STATUS AGE

default Active 15h

kube-public Active 15h

kube-system Active 15h

12. Join each node to the cluster

Run the join command printed at the end of the master initialization, with --ignore-preflight-errors=Swap appended

[root@k8snode1 ~]# kubeadm join 192.168.10.10:6443 --token fgtp9x.z8gzf2coiouxzr1e --discovery-token-ca-cert-hash sha256:eec6e45b46868097fb6dc5c1007a4ed801f67950b5ea4949d9169fcde6d018cc --ignore-preflight-errors=Swap

[preflight] running pre-flight checks

[WARNING RequiredIPVSKernelModulesAvailable]: the IPVS proxier will not be used, because the following required kernel modules are not loaded: [ip_vs_rr ip_vs_wrr ip_vs_sh ip_vs] or no builtin kernel ipvs support: map[ip_vs_rr:{} ip_vs_wrr:{} ip_vs_sh:{} nf_conntrack_ipv4:{} ip_vs:{}]

you can solve this problem with following methods:

1. Run 'modprobe -- ' to load missing kernel modules;

2. Provide the missing builtin kernel ipvs support

[WARNING Swap]: running with swap on is not supported. Please disable swap

I0509 ::21.495533 kernel_validator.go:] Validating kernel version

I0509 ::21.495936 kernel_validator.go:] Validating kernel config

[WARNING SystemVerification]: docker version is greater than the most recently validated version. Docker version: 18.06.-ce. Max validated version: 17.03

[discovery] Trying to connect to API Server "192.168.10.10:6443"

[discovery] Created cluster-info discovery client, requesting info from "https://192.168.10.10:6443"

[discovery] Requesting info from "https://192.168.10.10:6443" again to validate TLS against the pinned public key

[discovery] Cluster info signature and contents are valid and TLS certificate validates against pinned roots, will use API Server "192.168.10.10:6443"

[discovery] Successfully established connection with API Server "192.168.10.10:6443"

[kubelet] Downloading configuration for the kubelet from the "kubelet-config-1.11" ConfigMap in the kube-system namespace

[kubelet] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[preflight] Activating the kubelet service

[tlsbootstrap] Waiting for the kubelet to perform the TLS Bootstrap...

[patchnode] Uploading the CRI Socket information "/var/run/dockershim.sock" to the Node API object "k8snode1" as an annotation

This node has joined the cluster:

* Certificate signing request was sent to master and a response was received.

* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the master to see this node join the cluster.

After joining, the node also pulls the images it needs. Once they are pulled and the pods have started, the node shows up as Ready on the master

[root@k8snode1 ~]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

quay.io/coreos/flannel v0.11.0-amd64 ff281650a721 months ago .6MB

k8s.gcr.io/kube-proxy-amd64 v1.11.1 d5c25579d0ff months ago .8MB

k8s.gcr.io/pause 3.1 da86e6ba6ca1 months ago 742kB
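Because the master already has passwordless SSH access to both nodes (step 3), the join could also be driven from the master. The sketch below only prints the commands it would run; `join_command` is a hypothetical helper, and the endpoint, token and hash are the values shown in this article (a real token expires, so they would need replacing).

```shell
#!/usr/bin/env bash
# Hypothetical helper: assemble the kubeadm join command line.
join_command() {
  local endpoint="$1" token="$2" ca_hash="$3"
  printf 'kubeadm join %s --token %s --discovery-token-ca-cert-hash %s --ignore-preflight-errors=Swap\n' \
    "$endpoint" "$token" "$ca_hash"
}

cmd="$(join_command "192.168.10.10:6443" "fgtp9x.z8gzf2coiouxzr1e" \
  "sha256:eec6e45b46868097fb6dc5c1007a4ed801f67950b5ea4949d9169fcde6d018cc")"

# Print what would be executed on each worker node.
for node in 192.168.10.11 192.168.10.12; do
  echo "ssh root@$node \"$cmd\""
done
```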

13. Inspect the finished simple k8s cluster

[root@k8smaster ~]# kubectl get nodes -o wide

NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME

k8smaster Ready master 16h v1.11.1 192.168.10.10 <none> CentOS Linux (Core) 3.10.-.el7.x86_64 docker://18.6.0

k8snode1 Ready <none> 18m v1.11.1 192.168.10.11 <none> CentOS Linux (Core) 3.10.-.el7.x86_64 docker://18.6.0

k8snode2 Ready <none> 4m v1.11.1 192.168.10.12 <none> CentOS Linux (Core) 3.10.-.el7.x86_64 docker://18.6.0

[root@k8smaster ~]# kubectl get pods -n kube-system -o wide

NAME READY STATUS RESTARTS AGE IP NODE

coredns-78fcdf6894-6qs64 / Running 16h 10.244.0.2 k8smaster

coredns-78fcdf6894-bhzf4 / Running 16h 10.244.0.3 k8smaster

etcd-k8smaster / Running 34m 192.168.10.10 k8smaster

kube-apiserver-k8smaster / Running 34m 192.168.10.10 k8smaster

kube-controller-manager-k8smaster / Running 34m 192.168.10.10 k8smaster

kube-flannel-ds-amd64-d22fv / Running 4m 192.168.10.12 k8snode2

kube-flannel-ds-amd64-nskmt / Running 38m 192.168.10.10 k8smaster

kube-flannel-ds-amd64-q4jvr / Running 18m 192.168.10.11 k8snode1

kube-proxy-6858m / Running 4m 192.168.10.12 k8snode2

kube-proxy-6btdl / Running 18m 192.168.10.11 k8snode1

kube-proxy-fknmj / Running 16h 192.168.10.10 k8smaster

kube-scheduler-k8smaster / Running 34m 192.168.10.10 k8smaster
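In the listing above, the coredns pods have addresses 10.244.0.2 and 10.244.0.3, while the control-plane pods, flannel and kube-proxy report the IP of their host. The sketch below checks which addresses fall inside the pod network, assuming the pod CIDR is flannel's default 10.244.0.0/16 (the prefix length is an assumption); `ip_to_int` and `ip_in_cidr` are hypothetical helpers written for bash.

```shell
#!/usr/bin/env bash
# Convert a dotted IPv4 address to an integer.
ip_to_int() {
  local IFS=. a b c d
  read -r a b c d <<< "$1"
  echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

# Succeed if the address ($1) lies inside the CIDR block ($2).
ip_in_cidr() {
  local ip="$1" net="${2%/*}" bits="${2#*/}"
  local mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
  [ $(( $(ip_to_int "$ip") & mask )) -eq $(( $(ip_to_int "$net") & mask )) ]
}

pod_cidr="10.244.0.0/16"   # assumption: flannel's default pod network
for ip in 10.244.0.2 10.244.0.3 192.168.10.10; do
  if ip_in_cidr "$ip" "$pod_cidr"; then
    echo "$ip is in the pod network"
  else
    echo "$ip is a host address"
  fi
done
```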

This completes the setup of a simple k8s cluster.
