The Meaning of settings and mappings in Elasticsearch


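Put simply:

settings control the number of shards and replicas of an index.

mappings define the fields of an index and their types.

Both can be managed through the REST API (plain URLs) or through the Java API. I recommend the URL approach, because it is much simpler.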

1. Settings in ES

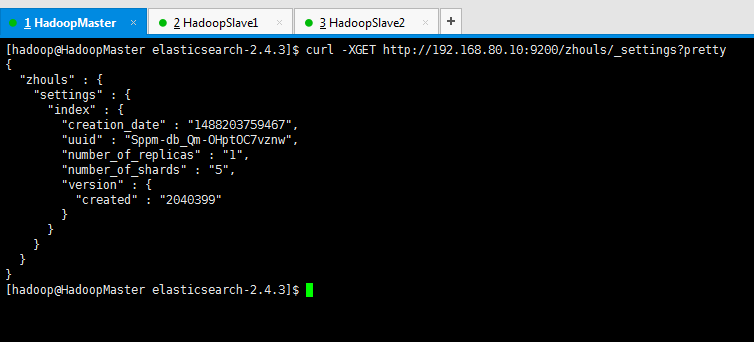

Query the settings of an index:

    [hadoop@HadoopMaster elasticsearch-2.4.3]$ curl -XGET http://192.168.80.10:9200/zhouls/_settings?pretty
    {
      "zhouls" : {
        "settings" : {
          "index" : {
            "creation_date" : "1488203759467",
            "uuid" : "Sppm-db_Qm-OHptOC7vznw",
            "number_of_replicas" : "1",
            "number_of_shards" : "5",
            "version" : {
              "created" : "2040399"
            }
          }
        }
      }
    }
    [hadoop@HadoopMaster elasticsearch-2.4.3]$

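Settings override the default configuration of an index, for example the number of shards and the number of replicas.

View: curl -XGET http://192.168.80.10:9200/zhouls/_settings?pretty

Index that does not exist yet (create it): curl -XPUT '192.168.80.10:9200/liuch/' -d'{"settings":{"number_of_shards":3,"number_of_replicas":0}}'

Index that already exists: curl -XPUT '192.168.80.10:9200/zhouls/_settings' -d'{"index":{"number_of_replicas":1}}'

Summary: while the index does not exist yet, you can specify both the shard count and the replica count when creating it. Once it exists, only the replica count can be changed.

When creating a new index you can specify the number of replicas per shard. The default is 1.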
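The two cases above can also be scripted. The following is a minimal Python sketch that only uses the standard library; the host is the one from the commands above, and the helper names (`create_index_request`, `update_replicas_request`, `send`) are made up for illustration. Nothing is sent to the cluster unless `send` is called.

```python
import json
import urllib.request

ES = "http://192.168.80.10:9200"  # host taken from the curl commands above


def create_index_request(index, shards, replicas):
    """Build (but do not send) the PUT that creates an index with explicit counts."""
    body = {"settings": {"number_of_shards": shards, "number_of_replicas": replicas}}
    return urllib.request.Request(
        f"{ES}/{index}/", data=json.dumps(body).encode("utf-8"), method="PUT"
    )


def update_replicas_request(index, replicas):
    """Build the PUT for an existing index.

    Only the replica count is included, because the shard count
    cannot be changed once the index exists.
    """
    body = {"index": {"number_of_replicas": replicas}}
    return urllib.request.Request(
        f"{ES}/{index}/_settings", data=json.dumps(body).encode("utf-8"), method="PUT"
    )


def send(request):
    """Send a prepared request and decode the JSON reply (needs a reachable cluster)."""
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read().decode("utf-8"))


if __name__ == "__main__":
    req = create_index_request("liuch", shards=3, replicas=0)
    print(req.get_method(), req.full_url, req.data.decode("utf-8"))
```

Building the request separately from sending it makes it easy to inspect the URL and body before touching a real index.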

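2. Mappings in ES

How are mappings used in ES? When do you need to define them by hand, and when can you leave them to ES?

A mapping defines the field names in an index and their data types, much like a table schema in MySQL. An ES mapping is far more flexible than a database schema, though, because ES can detect fields dynamically. Usually you do not need to specify a mapping at all, since ES infers each field's type from the data. If you need special properties on some fields (for example a different analyzer, whether the field is analyzed, or whether it is stored), you must add the mapping by hand.

When we index data into ES we do not have to declare data types. ES has an automatic mapping mechanism: strings are mapped to string, whole numbers to long, and floating-point numbers to double. Through mappings you can set the data type explicitly, as well as properties such as whether a field is stored.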
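To make the automatic mapping concrete, here is a rough Python sketch of the rule. It is a simplification, not how ES implements it: it ignores date detection on strings and does not handle arrays, and the function name `guess_dynamic_type` and the sample values are made up for illustration. The field names mirror the emp mapping queried in this section.

```python
def guess_dynamic_type(value):
    """Rough guess at the type ES 2.x assigns to a previously unseen field."""
    if isinstance(value, bool):  # check bool first: bool is a subclass of int in Python
        return "boolean"
    if isinstance(value, int):
        return "long"
    if isinstance(value, float):
        return "double"
    if isinstance(value, str):
        return "string"  # date detection is deliberately left out of this sketch
    if isinstance(value, dict):
        return "object"
    raise ValueError(f"type not covered by this sketch: {type(value).__name__}")


# Hypothetical document for the emp type
doc = {"name": "tom", "score": 95, "type": "student"}
guessed = {field: guess_dynamic_type(value) for field, value in doc.items()}
print(guessed)  # {'name': 'string', 'score': 'long', 'type': 'string'}
```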

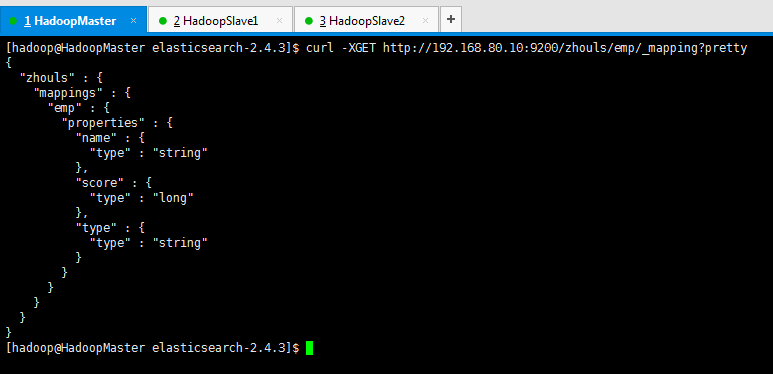

Query the mapping of an index:

    [hadoop@HadoopMaster elasticsearch-2.4.3]$ curl -XGET http://192.168.80.10:9200/zhouls/emp/_mapping?pretty
    {
      "zhouls" : {
        "mappings" : {
          "emp" : {
            "properties" : {
              "name" : {
                "type" : "string"
              },
              "score" : {
                "type" : "long"
              },
              "type" : {
                "type" : "string"
              }
            }
          }
        }
      }
    }
    [hadoop@HadoopMaster elasticsearch-2.4.3]$

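Mappings set field-level properties, for example the field type and which analyzer to use:

Note: on Elasticsearch 1.x the index-time analyzer could be written as indexAnalyzer or index_analyzer. The 2.x releases used in this post expect analyzer, as in the commands below.

Index that does not exist yet (create it with the mapping):

curl -XPUT '192.168.80.10:9200/zhouls' -d'{"mappings":{"emp":{"properties":{"name":{"type":"string","analyzer": "ik_max_word"}}}}}'

Index that already exists (new fields can be added this way, but the type or analyzer of a field that is already mapped generally cannot be changed in place):

curl -XPOST http://192.168.80.10:9200/zhouls/emp/_mapping -d'{"properties":{"name":{"type":"string","analyzer": "ik_max_word"}}}'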
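The two request bodies differ only in how deeply the properties are nested. The Python sketch below builds both so the difference is easy to see; the helper names are invented for this example, and it only prints the bodies instead of calling the cluster.

```python
import json


def analyzed_string(analyzer):
    """Mapping for a string field analyzed with the given analyzer."""
    return {"type": "string", "analyzer": analyzer}


def body_for_new_index(doc_type, properties):
    """Body for PUT /<index>: the mapping sits under "mappings" and the type name."""
    return {"mappings": {doc_type: {"properties": properties}}}


def body_for_existing_index(properties):
    """Body for POST /<index>/<type>/_mapping: the type is already in the URL."""
    return {"properties": properties}


properties = {"name": analyzed_string("ik_max_word")}
new_index_body = json.dumps(body_for_new_index("emp", properties))
existing_index_body = json.dumps(body_for_existing_index(properties))

print("PUT  /zhouls              ", new_index_body)
print("POST /zhouls/emp/_mapping ", existing_index_body)
```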

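The commands above may be hard to follow on their own, so here is a complete worked example. (This is something you need to be able to do.)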

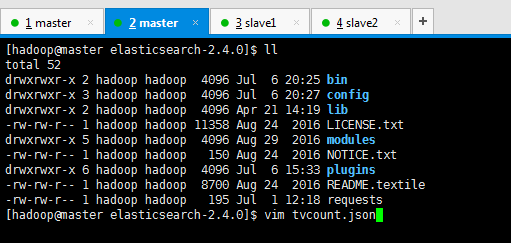

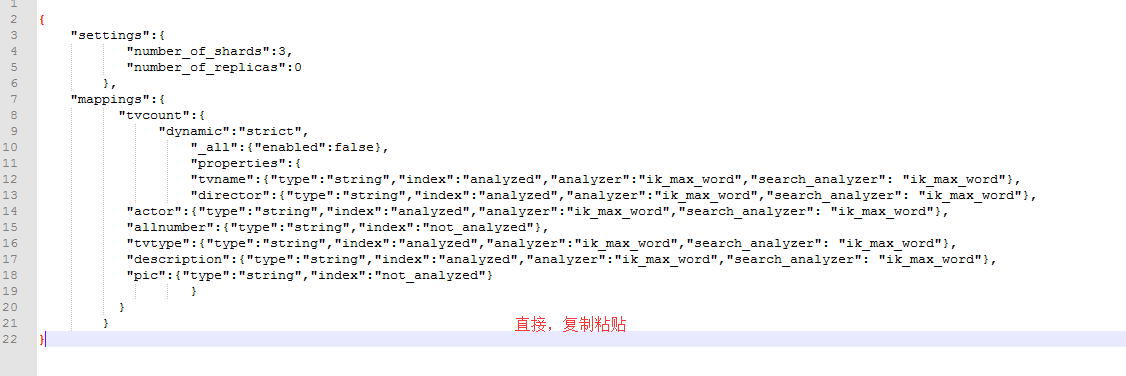

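Step 1: edit the tvcount.json file

Its contents are as follows (annotated; the # notes are explanations only and must not appear in the real file, because JSON has no comment syntax):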

- {

- "settings":{ #settings control the number of shards and replicas

- "number_of_shards":3, #3 shards

- "number_of_replicas":0 #0 replicas

- },

- "mappings":{ #mappings define the fields and their types

- "tvcount":{

- "dynamic":"strict",

- "_all":{"enabled":false},

- "properties":{

- "tvname":{"type":"string","index":"analyzed","analyzer":"ik_max_word","search_analyzer": "ik_max_word"},

- "director":{"type":"string","index":"analyzed","analyzer":"ik_max_word","search_analyzer": "ik_max_word"}, #e.g. string type, analyzed index, ik_max_word analyzer

- "actor":{"type":"string","index":"analyzed","analyzer":"ik_max_word","search_analyzer": "ik_max_word"},

- "allnumber":{"type":"string","index":"not_analyzed"},

- "tvtype":{"type":"string","index":"analyzed","analyzer":"ik_max_word","search_analyzer": "ik_max_word"},

- "description":{"type":"string","index":"analyzed","analyzer":"ik_max_word","search_analyzer": "ik_max_word"},

- "pic":{"type":"string","index":"not_analyzed"}

- }

- }

- }

- }

That is: tvname (TV show name), director (director), actor (lead actor), allnumber (total play count), tvtype (TV category), description (description).

- [hadoop@master elasticsearch-2.4.0]$ ll

- total 52

- drwxrwxr-x 2 hadoop hadoop 4096 Jul 6 20:25 bin

- drwxrwxr-x 3 hadoop hadoop 4096 Jul 6 20:27 config

- drwxrwxr-x 2 hadoop hadoop 4096 Apr 21 14:19 lib

- -rw-rw-r-- 1 hadoop hadoop 11358 Aug 24 2016 LICENSE.txt

- drwxrwxr-x 5 hadoop hadoop 4096 Aug 29 2016 modules

- -rw-rw-r-- 1 hadoop hadoop 150 Aug 24 2016 NOTICE.txt

- drwxrwxr-x 6 hadoop hadoop 4096 Jul 6 15:33 plugins

- -rw-rw-r-- 1 hadoop hadoop 8700 Aug 24 2016 README.textile

- -rw-rw-r-- 1 hadoop hadoop 195 Jul 1 12:18 requests

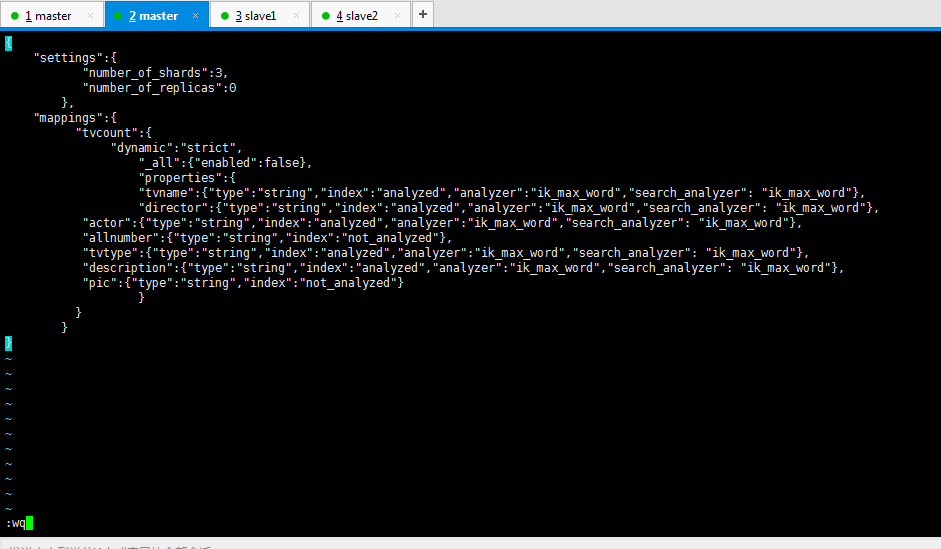

- [hadoop@master elasticsearch-2.4.0]$ vim tvcount.json

- {

- "settings":{

- "number_of_shards":3,

- "number_of_replicas":0

- },

- "mappings":{

- "tvcount":{

- "dynamic":"strict",

- "_all":{"enabled":false},

- "properties":{

- "tvname":{"type":"string","index":"analyzed","analyzer":"ik_max_word","search_analyzer": "ik_max_word"},

- "director":{"type":"string","index":"analyzed","analyzer":"ik_max_word","search_analyzer": "ik_max_word"},

- "actor":{"type":"string","index":"analyzed","analyzer":"ik_max_word","search_analyzer": "ik_max_word"},

- "allnumber":{"type":"string","index":"not_analyzed"},

- "tvtype":{"type":"string","index":"analyzed","analyzer":"ik_max_word","search_analyzer": "ik_max_word"},

- "description":{"type":"string","index":"analyzed","analyzer":"ik_max_word","search_analyzer": "ik_max_word"},

- "pic":{"type":"string","index":"not_analyzed"}

- }

- }

- }

- }
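Since five of the seven fields share the same analyzed definition, the file can also be generated instead of typed by hand. This is a small Python sketch under that assumption; the constant and function names are made up, and the values are copied from the tvcount.json shown above.

```python
import json

# Shared field definitions, copied from the tvcount.json above
ANALYZED = {
    "type": "string",
    "index": "analyzed",
    "analyzer": "ik_max_word",
    "search_analyzer": "ik_max_word",
}
NOT_ANALYZED = {"type": "string", "index": "not_analyzed"}


def build_tvcount_body():
    """Return the settings and mappings of tvcount.json as a Python dict."""
    analyzed_fields = ["tvname", "director", "actor", "tvtype", "description"]
    exact_fields = ["allnumber", "pic"]
    properties = {name: dict(ANALYZED) for name in analyzed_fields}
    properties.update({name: dict(NOT_ANALYZED) for name in exact_fields})
    return {
        "settings": {"number_of_shards": 3, "number_of_replicas": 0},
        "mappings": {
            "tvcount": {
                "dynamic": "strict",
                "_all": {"enabled": False},
                "properties": properties,
            }
        },
    }


def write_body(path):
    """Write the body to a file that curl can later send with -d @<path>."""
    with open(path, "w", encoding="utf-8") as handle:
        json.dump(build_tvcount_body(), handle, indent=2)


if __name__ == "__main__":
    print(json.dumps(build_tvcount_body(), indent=2))
```

Generating the file through `json.dump` also guarantees that it is valid JSON, which a hand-edited file with leftover notes would not be.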

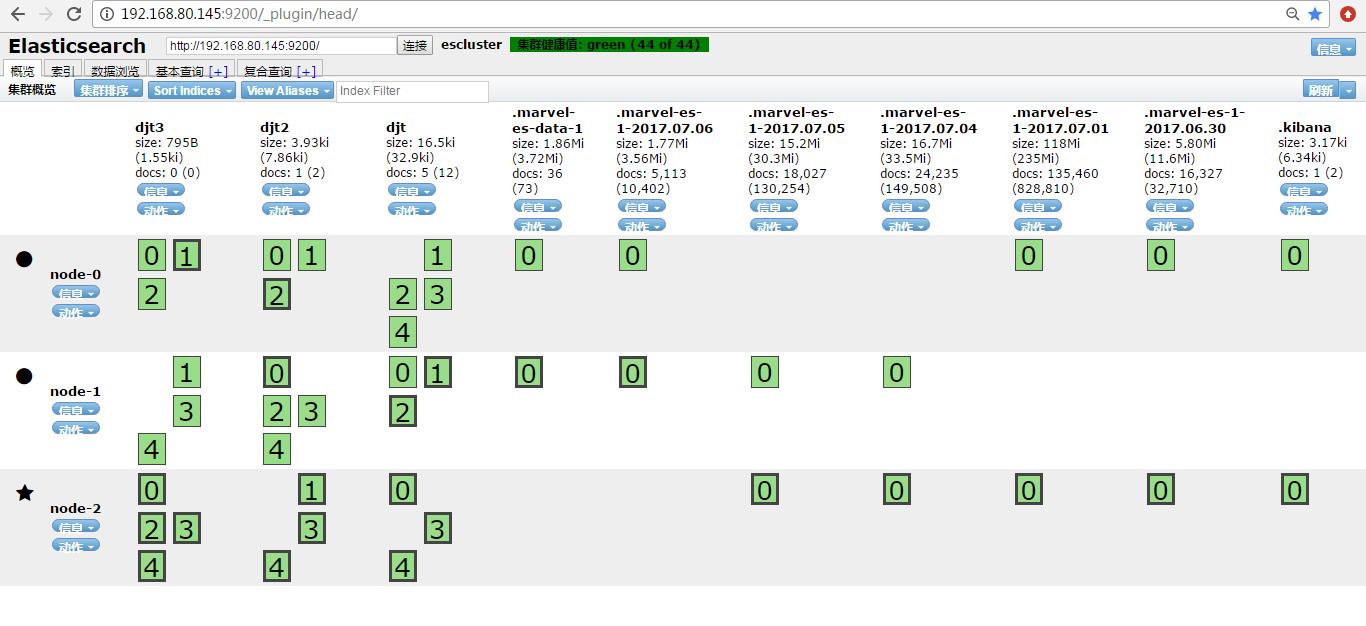

- http://192.168.80.145:9200/_plugin/head/

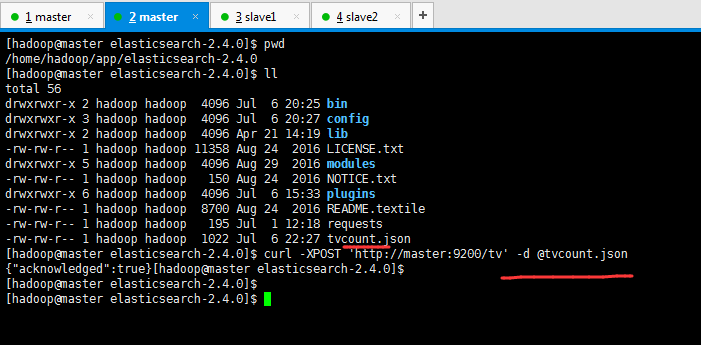

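Step 2: create the index with its mapping

We are still in /home/hadoop/app/elasticsearch-2.4.0, which contains the tvcount.json we just wrote, so the file can be referenced by its relative name:

- curl -XPOST 'http://master:9200/tv' -d @tvcount.json

From any other directory, give the absolute path to the file instead.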

- [hadoop@master elasticsearch-2.4.0]$ pwd

- /home/hadoop/app/elasticsearch-2.4.0

- [hadoop@master elasticsearch-2.4.0]$ ll

- total 56

- drwxrwxr-x 2 hadoop hadoop 4096 Jul 6 20:25 bin

- drwxrwxr-x 3 hadoop hadoop 4096 Jul 6 20:27 config

- drwxrwxr-x 2 hadoop hadoop 4096 Apr 21 14:19 lib

- -rw-rw-r-- 1 hadoop hadoop 11358 Aug 24 2016 LICENSE.txt

- drwxrwxr-x 5 hadoop hadoop 4096 Aug 29 2016 modules

- -rw-rw-r-- 1 hadoop hadoop 150 Aug 24 2016 NOTICE.txt

- drwxrwxr-x 6 hadoop hadoop 4096 Jul 6 15:33 plugins

- -rw-rw-r-- 1 hadoop hadoop 8700 Aug 24 2016 README.textile

- -rw-rw-r-- 1 hadoop hadoop 195 Jul 1 12:18 requests

- -rw-rw-r-- 1 hadoop hadoop 1022 Jul 6 22:27 tvcount.json

- [hadoop@master elasticsearch-2.4.0]$ curl -XPOST 'http://master:9200/tv' -d @tvcount.json

- {"acknowledged":true}[hadoop@master elasticsearch-2.4.0]$

- [hadoop@master elasticsearch-2.4.0]$

- [hadoop@master elasticsearch-2.4.0]$
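The same step can be sketched in Python, which also makes the relative-versus-absolute path point explicit. This is only an illustration using the standard library; `create_index_from_file` and `is_acknowledged` are invented names, and the request is built but not sent.

```python
import json
import pathlib
import urllib.request


def create_index_from_file(base_url, index, json_path):
    """Build the POST that creates an index from a settings/mappings file.

    A relative json_path is resolved against the current directory,
    which is why running from another directory needs an absolute path.
    """
    path = pathlib.Path(json_path).expanduser().resolve()
    raw = path.read_bytes()
    json.loads(raw.decode("utf-8"))  # fail early on invalid JSON, e.g. leftover # notes
    return urllib.request.Request(f"{base_url}/{index}", data=raw, method="POST")


def is_acknowledged(reply_text):
    """True if the reply looks like {"acknowledged":true}."""
    return json.loads(reply_text).get("acknowledged") is True


if __name__ == "__main__":
    print(is_acknowledged('{"acknowledged":true}'))  # True
```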


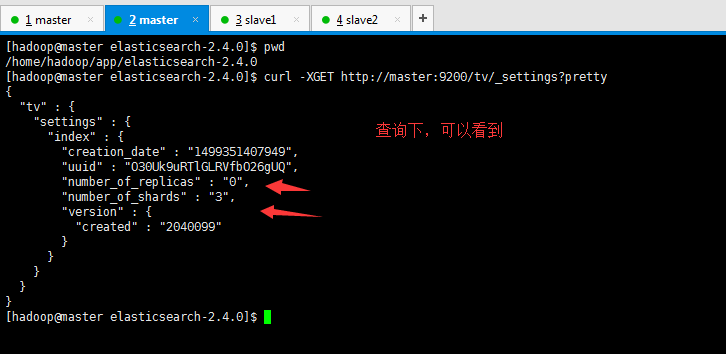

Then query the settings again:

- [hadoop@master elasticsearch-2.4.0]$ pwd

- /home/hadoop/app/elasticsearch-2.4.0

- [hadoop@master elasticsearch-2.4.0]$ curl -XGET http://master:9200/tv/_settings?pretty

- {

- "tv" : {

- "settings" : {

- "index" : {

- "creation_date" : "1499351407949",

- "uuid" : "O30Uk9uRTlGLRVfbO26gUQ",

- "number_of_replicas" : "0",

- "number_of_shards" : "3",

- "version" : {

- "created" : "2040099"

- }

- }

- }

- }

- }

- [hadoop@master elasticsearch-2.4.0]$
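Note that the reply returns the counts as strings ("3" and "0"), so a script has to convert them before doing arithmetic. A minimal Python sketch, with the reply above embedded as sample data and a made-up helper name `shard_layout`:

```python
import json

# Reply copied from the _settings query above
SAMPLE_REPLY = """
{
  "tv": {
    "settings": {
      "index": {
        "creation_date": "1499351407949",
        "uuid": "O30Uk9uRTlGLRVfbO26gUQ",
        "number_of_replicas": "0",
        "number_of_shards": "3",
        "version": {"created": "2040099"}
      }
    }
  }
}
"""


def shard_layout(reply_text, index):
    """Extract shard and replica counts and the total number of shard copies."""
    index_settings = json.loads(reply_text)[index]["settings"]["index"]
    shards = int(index_settings["number_of_shards"])
    replicas = int(index_settings["number_of_replicas"])
    return {
        "primaries": shards,
        "replicas_per_primary": replicas,
        "total_shard_copies": shards * (1 + replicas),
    }


layout = shard_layout(SAMPLE_REPLY, "tv")
print(layout)  # {'primaries': 3, 'replicas_per_primary': 0, 'total_shard_copies': 3}
```

With the defaults seen on the zhouls index earlier (5 shards, 1 replica), the same calculation gives 10 shard copies.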

Next, check the mapping (mappings define the fields and their types):

- [hadoop@master elasticsearch-2.4.0]$ pwd

- /home/hadoop/app/elasticsearch-2.4.0

- [hadoop@master elasticsearch-2.4.0]$ curl -XGET http://master:9200/tv/_mapping?pretty

- {

- "tv" : {

- "mappings" : {

- "tvcount" : {

- "dynamic" : "strict",

- "_all" : {

- "enabled" : false

- },

- "properties" : {

- "actor" : {

- "type" : "string",

- "analyzer" : "ik_max_word"

- },

- "allnumber" : {

- "type" : "string",

- "index" : "not_analyzed"

- },

- "description" : {

- "type" : "string",

- "analyzer" : "ik_max_word"

- },

- "director" : {

- "type" : "string",

- "analyzer" : "ik_max_word"

- },

- "pic" : {

- "type" : "string",

- "index" : "not_analyzed"

- },

- "tvname" : {

- "type" : "string",

- "analyzer" : "ik_max_word"

- },

- "tvtype" : {

- "type" : "string",

- "analyzer" : "ik_max_word"

- }

- }

- }

- }

- }

- }

- [hadoop@master elasticsearch-2.4.0]$
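Because the type is mapped with "dynamic": "strict", ES rejects any document that contains a field not listed under properties. The Python sketch below performs the same check on the client side before indexing. It is only an approximation of that behaviour; the helper name `unmapped_fields` and the sample document are made up, and the mapping is a trimmed copy of the reply above.

```python
# Trimmed copy of the _mapping reply above (per-field details omitted where not needed)
MAPPING_REPLY = {
    "tv": {
        "mappings": {
            "tvcount": {
                "dynamic": "strict",
                "_all": {"enabled": False},
                "properties": {
                    "actor": {"type": "string", "analyzer": "ik_max_word"},
                    "allnumber": {"type": "string", "index": "not_analyzed"},
                    "description": {"type": "string", "analyzer": "ik_max_word"},
                    "director": {"type": "string", "analyzer": "ik_max_word"},
                    "pic": {"type": "string", "index": "not_analyzed"},
                    "tvname": {"type": "string", "analyzer": "ik_max_word"},
                    "tvtype": {"type": "string", "analyzer": "ik_max_word"},
                },
            }
        }
    }
}


def unmapped_fields(document, reply, index, doc_type):
    """Return the document fields a strict mapping would refuse, sorted by name."""
    type_mapping = reply[index]["mappings"][doc_type]
    if type_mapping.get("dynamic") != "strict":
        return []  # non-strict mappings do not reject new fields
    return sorted(set(document) - set(type_mapping["properties"]))


# Hypothetical document: "year" is not part of the mapping
doc = {"tvname": "some show", "allnumber": "12345", "year": 2017}
print(unmapped_fields(doc, MAPPING_REPLY, "tv", "tvcount"))  # ['year']
```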

In short, tvcount.json has now put the initial settings and mappings in place.

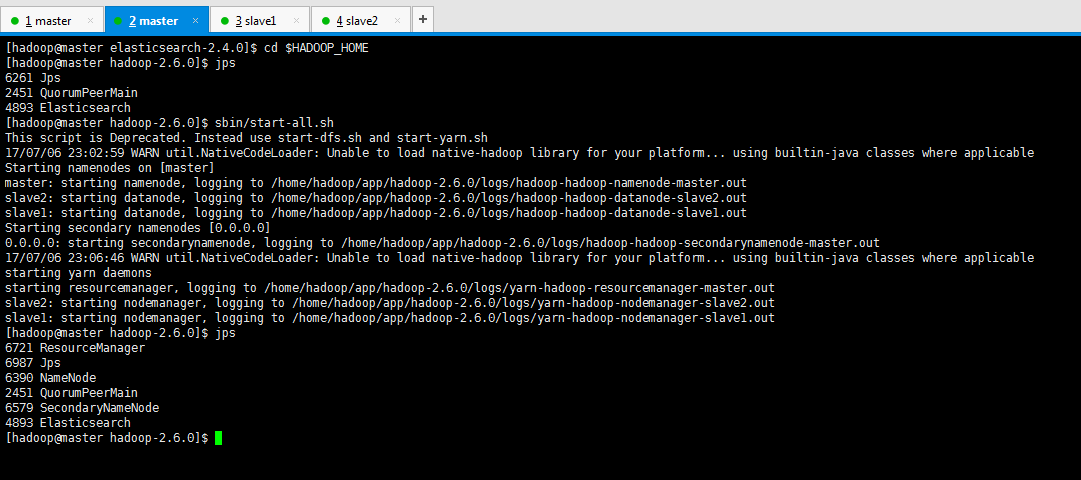

Next, start HDFS and HBase.

This is straightforward, so only the console output is shown.

- [hadoop@master elasticsearch-2.4.0]$ cd $HADOOP_HOME

- [hadoop@master hadoop-2.6.0]$ jps

- 6261 Jps

- 2451 QuorumPeerMain

- 4893 Elasticsearch

- [hadoop@master hadoop-2.6.0]$ sbin/start-all.sh

- This script is Deprecated. Instead use start-dfs.sh and start-yarn.sh

- 17/07/06 23:02:59 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

- Starting namenodes on [master]

- master: starting namenode, logging to /home/hadoop/app/hadoop-2.6.0/logs/hadoop-hadoop-namenode-master.out

- slave2: starting datanode, logging to /home/hadoop/app/hadoop-2.6.0/logs/hadoop-hadoop-datanode-slave2.out

- slave1: starting datanode, logging to /home/hadoop/app/hadoop-2.6.0/logs/hadoop-hadoop-datanode-slave1.out

- Starting secondary namenodes [0.0.0.0]

- 0.0.0.0: starting secondarynamenode, logging to /home/hadoop/app/hadoop-2.6.0/logs/hadoop-hadoop-secondarynamenode-master.out

- 17/07/06 23:06:46 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

- starting yarn daemons

- starting resourcemanager, logging to /home/hadoop/app/hadoop-2.6.0/logs/yarn-hadoop-resourcemanager-master.out

- slave2: starting nodemanager, logging to /home/hadoop/app/hadoop-2.6.0/logs/yarn-hadoop-nodemanager-slave2.out

- slave1: starting nodemanager, logging to /home/hadoop/app/hadoop-2.6.0/logs/yarn-hadoop-nodemanager-slave1.out

- [hadoop@master hadoop-2.6.0]$ jps

- 6721 ResourceManager

- 6987 Jps

- 6390 NameNode

- 2451 QuorumPeerMain

- 6579 SecondaryNameNode

- 4893 Elasticsearch

- [hadoop@master hadoop-2.6.0]$

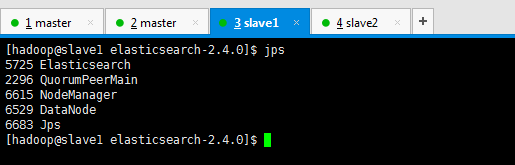

- [hadoop@slave1 elasticsearch-2.4.0]$ jps

- 5725 Elasticsearch

- 2296 QuorumPeerMain

- 6615 NodeManager

- 6529 DataNode

- 6683 Jps

- [hadoop@slave1 elasticsearch-2.4.0]$

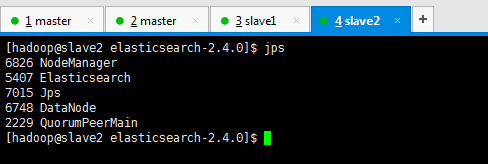

- [hadoop@slave2 elasticsearch-2.4.0]$ jps

- 6826 NodeManager

- 5407 Elasticsearch

- 7015 Jps

- 6748 DataNode

- 2229 QuorumPeerMain

- [hadoop@slave2 elasticsearch-2.4.0]$

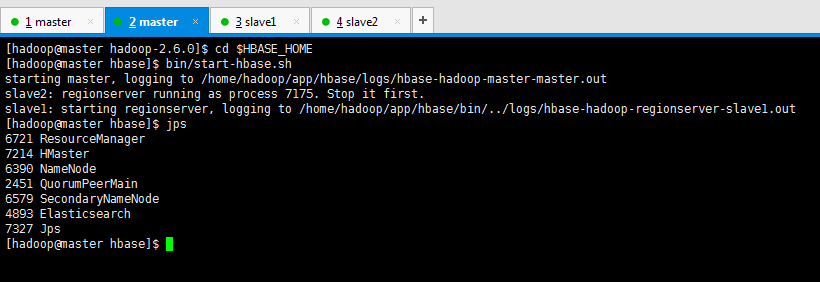

- [hadoop@master hadoop-2.6.0]$ cd $HBASE_HOME

- [hadoop@master hbase]$ bin/start-hbase.sh

- starting master, logging to /home/hadoop/app/hbase/logs/hbase-hadoop-master-master.out

- slave2: regionserver running as process 7175. Stop it first.

- slave1: starting regionserver, logging to /home/hadoop/app/hbase/bin/../logs/hbase-hadoop-regionserver-slave1.out

- [hadoop@master hbase]$ jps

- 6721 ResourceManager

- 7214 HMaster

- 6390 NameNode

- 2451 QuorumPeerMain

- 6579 SecondaryNameNode

- 4893 Elasticsearch

- 7327 Jps

- [hadoop@master hbase]$

- [hadoop@slave1 hbase]$ jps

- 7210 Jps

- 7145 HRegionServer

- 5725 Elasticsearch

- 2296 QuorumPeerMain

- 6615 NodeManager

- 6529 DataNode

- 6969 HMaster

- [hadoop@slave1 hbase]$

- [hadoop@slave2 hbase]$ jps

- 6826 NodeManager

- 5407 Elasticsearch

- 7470 Jps

- 7337 HMaster

- 6748 DataNode

- 7175 HRegionServer

- 2229 QuorumPeerMain

- [hadoop@slave2 hbase]$

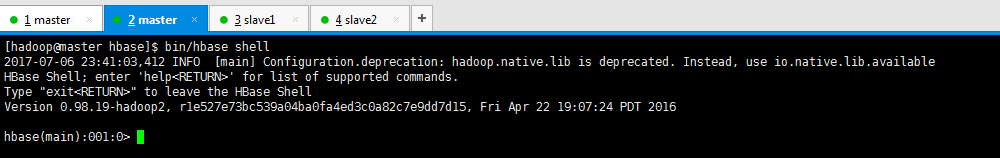

Open the HBase shell:

- [hadoop@master hbase]$ bin/hbase shell

- 2017-07-06 23:41:03,412 INFO [main] Configuration.deprecation: hadoop.native.lib is deprecated. Instead, use io.native.lib.available

- HBase Shell; enter 'help<RETURN>' for list of supported commands.

- Type "exit<RETURN>" to leave the HBase Shell

- Version 0.98.19-hadoop2, r1e527e73bc539a04ba0fa4ed3c0a82c7e9dd7d15, Fri Apr 22 19:07:24 PDT 2016

- hbase(main):001:0>

List the existing tables:

- hbase(main):001:0> list

- TABLE

- 2017-07-06 23:51:21,204 WARN [main] util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

- SLF4J: Class path contains multiple SLF4J bindings.

- SLF4J: Found binding in [jar:file:/home/hadoop/app/hbase-0.98.19/lib/slf4j-log4j12-1.6.4.jar!/org/slf4j/impl/StaticLoggerBinder.class]

- SLF4J: Found binding in [jar:file:/home/hadoop/app/hadoop-2.6.0/share/hadoop/common/lib/slf4j-log4j12-1.7.5.jar!/org/slf4j/impl/StaticLoggerBinder.class]

- SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

- 0 row(s) in 266.1210 seconds

- => []

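If a tvcount table already exists, it can be removed (disable it first, then drop it). Here it does not exist, so both commands report an error: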

- hbase(main):002:0> disable 'tvcount'

- ERROR: Table tvcount does not exist.

- Here is some help for this command:

- Start disable of named table:

- hbase> disable 't1'

- hbase> disable 'ns1:t1'

- hbase(main):003:0> drop 'tvcount'

- ERROR: Table tvcount does not exist.

- Here is some help for this command:

- Drop the named table. Table must first be disabled:

- hbase> drop 't1'

- hbase> drop 'ns1:t1'

- hbase(main):004:0> list

- TABLE

- 0 row(s) in 1.3770 seconds

- => []

- hbase(main):005:0>

Then start the MySQL database and create the database and table.

For the next steps, see

http://www.cnblogs.com/zlslch/p/6746922.html
