Building, Deploying, and Installing Kafka-manager, a Web-Based Kafka Management Tool (Supports Kafka 0.8, 0.9, and 0.10+) (Illustrated Guide) (Default Port or Any Custom Port)

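Without further ado, let's get straight to it.

Why write this post, and why install Kafka-manager at all?

The problem

Kafka does not ship with a good built-in web UI. Once a cluster is running there is no convenient way to see what it is doing, and the stock tooling is not friendly. A third-party Kafka management tool is therefore needed.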

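Features

To simplify the work of developers and service engineers who maintain Kafka clusters, Yahoo built a web-based tool called Kafka Manager. It makes it easy to spot topics that are unevenly distributed across the cluster, or partitions that are unevenly distributed across brokers.

It supports managing multiple clusters, preferred replica election, replica reassignment, and topic creation. It is also a very good tool for getting a quick overview of a cluster.

Its features are: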

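- Manage multiple Kafka clusters

- Easily inspect cluster state (topics, brokers, replica distribution, partition distribution)

- Run preferred replica election

- Run partition reassignment based on the current partition state

- Create a topic with optional topic configs (the configs differ between 0.8.1.1 and 0.8.2)

- Delete a topic (only supported on 0.8.2 and later, and delete.topic.enable=true must be set in the broker config)

- The topic list indicates which topics are marked for deletion (0.8.2 and later)

- Add partitions to an existing topic

- Update the config of an existing topic

- Run partition reassignment in batch across multiple topics

- Run partition reassignment in batch across multiple topics (optionally choosing the brokers that host the partitions)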
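The topic-deletion feature above depends on a broker-side setting, so it can be worth checking the broker config before relying on the Delete button. The following is a minimal sketch, not part of Kafka or Kafka Manager: the function name `topic_delete_enabled` is made up for illustration, and the path to `server.properties` is whatever your own broker uses.

```shell
# Hypothetical helper: succeeds only if the given broker config file
# contains an uncommented line setting delete.topic.enable to true.
topic_delete_enabled() {
  grep -Eq '^[[:space:]]*delete\.topic\.enable[[:space:]]*=[[:space:]]*true[[:space:]]*$' "$1"
}

# Example usage (the path is an assumption; use your broker's config file):
# topic_delete_enabled /path/to/kafka/config/server.properties \
#   && echo "topic deletion enabled" \
#   || echo "topic deletion NOT enabled"
```

A commented-out line such as `#delete.topic.enable=true` does not match, which is the usual reason deletion silently does nothing on these older broker versions.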

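For the build steps, see

"kafka管理器kafka-manager部署安装" (Deploying and Installing kafka-manager)

or

"Apache Kafka 入门 - Kafka-manager的基本配置和运行" (Getting Started with Apache Kafka: Basic Configuration and Running of Kafka-manager)

I won't repeat them here; just read through the process described there.

Instead, I use the package that the following blogger has already compiled and shared:

"Kafka manager安装 (支持0.10以后版本consumer)" (Installing Kafka Manager, with support for consumers on 0.10 and later)

Thanks to him!

Download link: https://pan.baidu.com/s/1jIE3YL4

If this link has expired, leave a comment under this post and I will send you the package free of charge.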

Steps:

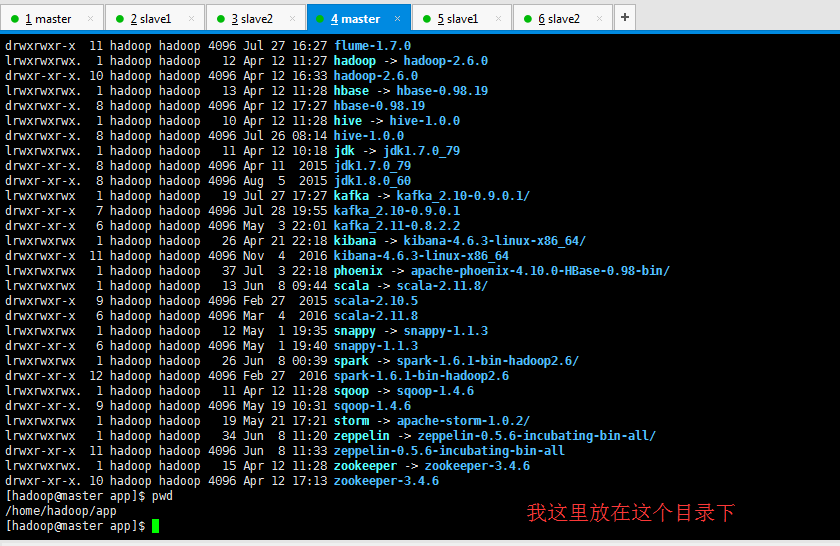

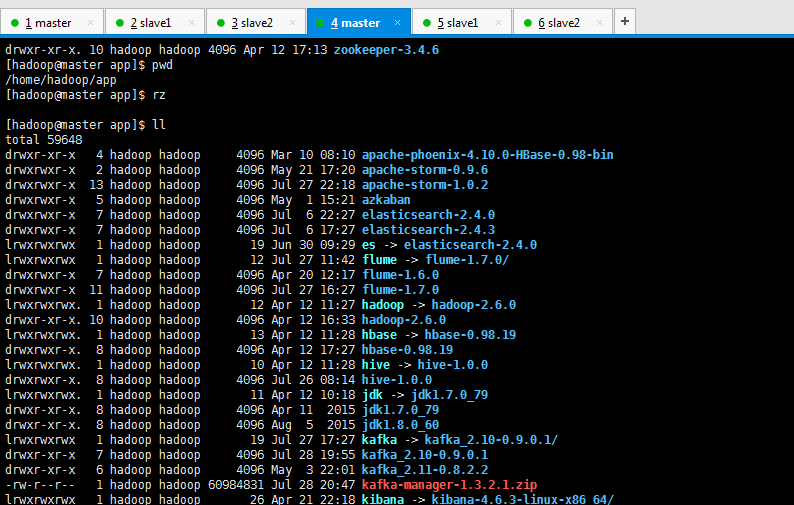

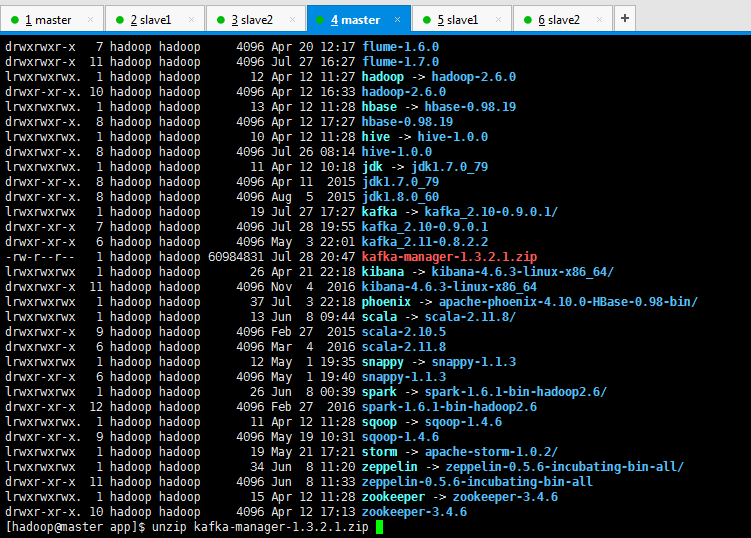

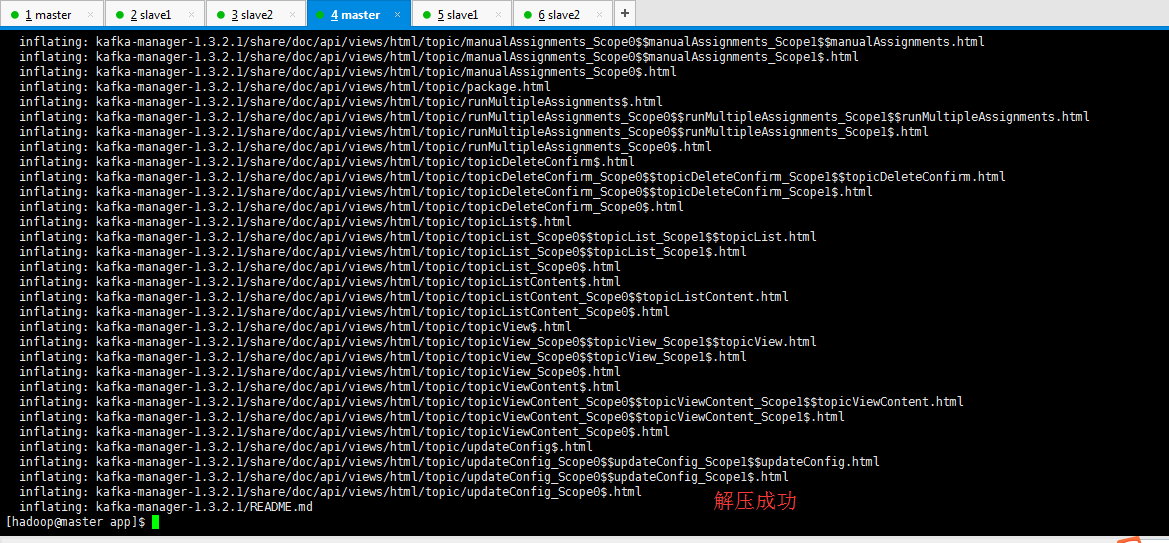

1. Unzip kafka-manager-1.3.2.1.zip

lrwxrwxrwx. hadoop hadoop Apr : hadoop -> hadoop-2.6.

drwxr-xr-x. hadoop hadoop Apr : hadoop-2.6.

lrwxrwxrwx. hadoop hadoop Apr : hbase -> hbase-0.98.

drwxrwxr-x. hadoop hadoop Apr : hbase-0.98.

lrwxrwxrwx. hadoop hadoop Apr : hive -> hive-1.0.

drwxrwxr-x. hadoop hadoop Jul : hive-1.0.

lrwxrwxrwx. hadoop hadoop Apr : jdk -> jdk1..0_79

drwxr-xr-x. hadoop hadoop Apr jdk1..0_79

drwxr-xr-x. hadoop hadoop Aug jdk1..0_60

lrwxrwxrwx hadoop hadoop Jul : kafka -> kafka_2.-0.9.0.1/

drwxr-xr-x hadoop hadoop Jul : kafka_2.-0.9.0.1

drwxr-xr-x hadoop hadoop May : kafka_2.-0.8.2.2

-rw-r--r-- hadoop hadoop Jul : kafka-manager-1.3.2.1.zip

lrwxrwxrwx hadoop hadoop Apr : kibana -> kibana-4.6.-linux-x86_64/

drwxrwxr-x hadoop hadoop Nov kibana-4.6.-linux-x86_64

lrwxrwxrwx hadoop hadoop Jul : phoenix -> apache-phoenix-4.10.-HBase-0.98-bin/

lrwxrwxrwx hadoop hadoop Jun : scala -> scala-2.11./

drwxrwxr-x hadoop hadoop Feb scala-2.10.

drwxrwxr-x hadoop hadoop Mar scala-2.11.

lrwxrwxrwx hadoop hadoop May : snappy -> snappy-1.1.

drwxr-xr-x hadoop hadoop May : snappy-1.1.

lrwxrwxrwx hadoop hadoop Jun : spark -> spark-1.6.-bin-hadoop2./

drwxr-xr-x hadoop hadoop Feb spark-1.6.-bin-hadoop2.

lrwxrwxrwx. hadoop hadoop Apr : sqoop -> sqoop-1.4.

drwxr-xr-x. hadoop hadoop May : sqoop-1.4.

lrwxrwxrwx hadoop hadoop May : storm -> apache-storm-1.0./

lrwxrwxrwx hadoop hadoop Jun : zeppelin -> zeppelin-0.5.-incubating-bin-all/

drwxr-xr-x hadoop hadoop Jun : zeppelin-0.5.-incubating-bin-all

lrwxrwxrwx. hadoop hadoop Apr : zookeeper -> zookeeper-3.4.

drwxr-xr-x. hadoop hadoop Apr : zookeeper-3.4.

[hadoop@master app]$ unzip kafka-manager-1.3.2.1.zip

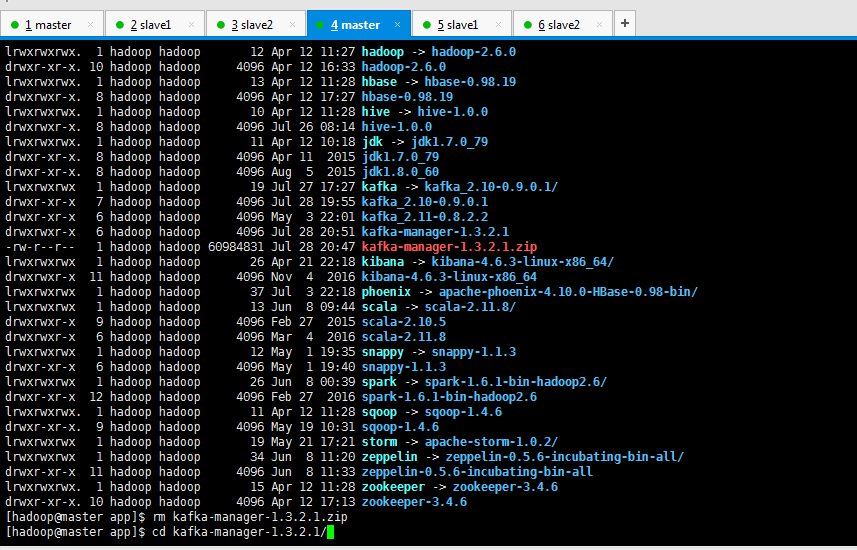

2. cd into kafka-manager-1.3.2.1

lrwxrwxrwx. hadoop hadoop Apr : hive -> hive-1.0.

drwxrwxr-x. hadoop hadoop Jul : hive-1.0.

lrwxrwxrwx. hadoop hadoop Apr : jdk -> jdk1..0_79

drwxr-xr-x. hadoop hadoop Apr jdk1..0_79

drwxr-xr-x. hadoop hadoop Aug jdk1..0_60

lrwxrwxrwx hadoop hadoop Jul : kafka -> kafka_2.-0.9.0.1/

drwxr-xr-x hadoop hadoop Jul : kafka_2.-0.9.0.1

drwxr-xr-x hadoop hadoop May : kafka_2.-0.8.2.2

drwxrwxr-x hadoop hadoop Jul : kafka-manager-1.3.2.1

-rw-r--r-- hadoop hadoop Jul : kafka-manager-1.3.2.1.zip

lrwxrwxrwx hadoop hadoop Apr : kibana -> kibana-4.6.-linux-x86_64/

drwxrwxr-x hadoop hadoop Nov kibana-4.6.-linux-x86_64

lrwxrwxrwx hadoop hadoop Jul : phoenix -> apache-phoenix-4.10.-HBase-0.98-bin/

lrwxrwxrwx hadoop hadoop Jun : scala -> scala-2.11./

drwxrwxr-x hadoop hadoop Feb scala-2.10.

drwxrwxr-x hadoop hadoop Mar scala-2.11.

lrwxrwxrwx hadoop hadoop May : snappy -> snappy-1.1.

drwxr-xr-x hadoop hadoop May : snappy-1.1.

lrwxrwxrwx hadoop hadoop Jun : spark -> spark-1.6.-bin-hadoop2./

drwxr-xr-x hadoop hadoop Feb spark-1.6.-bin-hadoop2.

lrwxrwxrwx. hadoop hadoop Apr : sqoop -> sqoop-1.4.

drwxr-xr-x. hadoop hadoop May : sqoop-1.4.

lrwxrwxrwx hadoop hadoop May : storm -> apache-storm-1.0./

lrwxrwxrwx hadoop hadoop Jun : zeppelin -> zeppelin-0.5.-incubating-bin-all/

drwxr-xr-x hadoop hadoop Jun : zeppelin-0.5.-incubating-bin-all

lrwxrwxrwx. hadoop hadoop Apr : zookeeper -> zookeeper-3.4.

drwxr-xr-x. hadoop hadoop Apr : zookeeper-3.4.

[hadoop@master app]$ rm kafka-manager-1.3.2.1.zip

[hadoop@master app]$ cd kafka-manager-1.3.2.1/

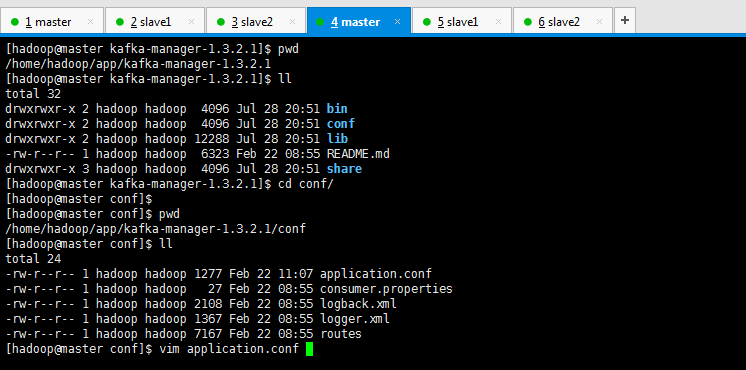

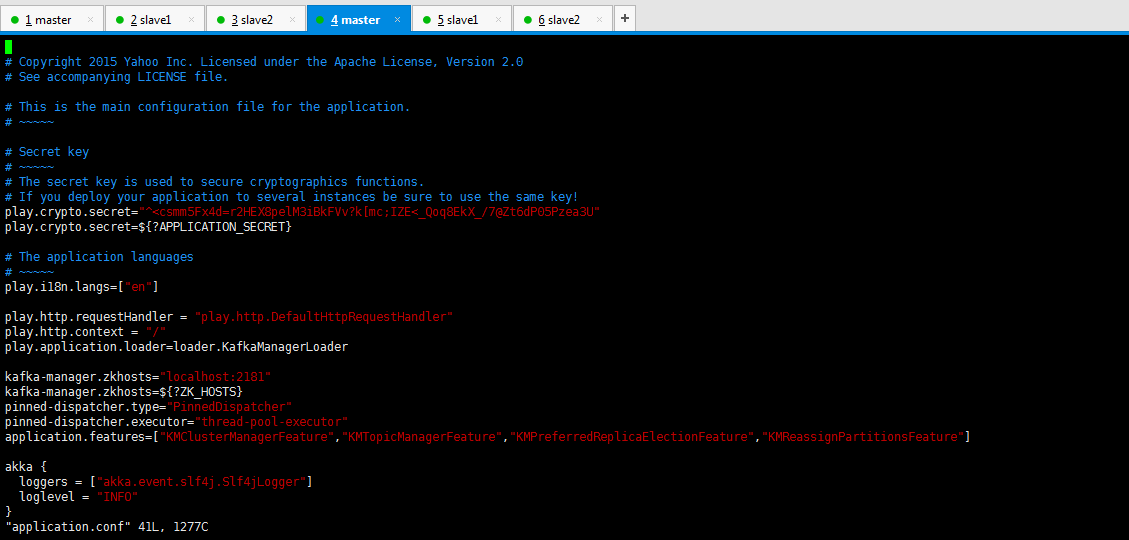

3. Edit conf/application.conf, in particular the kafka-manager.zkhosts setting

[hadoop@master kafka-manager-1.3.2.1]$ pwd

/home/hadoop/app/kafka-manager-1.3.2.1

[hadoop@master kafka-manager-1.3.2.1]$ ll

total

drwxrwxr-x hadoop hadoop Jul : bin

drwxrwxr-x hadoop hadoop Jul : conf

drwxrwxr-x hadoop hadoop Jul : lib

-rw-r--r-- hadoop hadoop Feb : README.md

drwxrwxr-x hadoop hadoop Jul : share

[hadoop@master kafka-manager-1.3.2.1]$ cd conf/

[hadoop@master conf]$

[hadoop@master conf]$ pwd

/home/hadoop/app/kafka-manager-1.3.2.1/conf

[hadoop@master conf]$ ll

total

-rw-r--r-- hadoop hadoop Feb : application.conf

-rw-r--r-- hadoop hadoop Feb : consumer.properties

-rw-r--r-- hadoop hadoop Feb : logback.xml

-rw-r--r-- hadoop hadoop Feb : logger.xml

-rw-r--r-- hadoop hadoop Feb : routes

[hadoop@master conf]$ vim application.conf

Below is the default file, pasted here for reference.

# Copyright Yahoo Inc. Licensed under the Apache License, Version 2.0

# See accompanying LICENSE file.

# This is the main configuration file for the application.

# ~~~~~

# Secret key

# ~~~~~

# The secret key is used to secure cryptographics functions.

# If you deploy your application to several instances be sure to use the same key!

play.crypto.secret="^<csmm5Fx4d=r2HEX8pelM3iBkFVv?k[mc;IZE<_Qoq8EkX_/7@Zt6dP05Pzea3U"

play.crypto.secret=${?APPLICATION_SECRET}

# The application languages

# ~~~~~

play.i18n.langs=["en"]

play.http.requestHandler = "play.http.DefaultHttpRequestHandler"

play.http.context = "/"

play.application.loader=loader.KafkaManagerLoader

kafka-manager.zkhosts="localhost:2181"

kafka-manager.zkhosts=${?ZK_HOSTS}

pinned-dispatcher.type="PinnedDispatcher"

pinned-dispatcher.executor="thread-pool-executor"

application.features=["KMClusterManagerFeature","KMTopicManagerFeature","KMPreferredReplicaElectionFeature","KMReassignPartitionsFeature"]

akka {

loggers = ["akka.event.slf4j.Slf4jLogger"]

loglevel = "INFO"

}

basicAuthentication.enabled=false

basicAuthentication.username="admin"

basicAuthentication.password="password"

basicAuthentication.realm="Kafka-Manager"

kafka-manager.consumer.properties.file=${?CONSUMER_PROPERTIES_FILE}

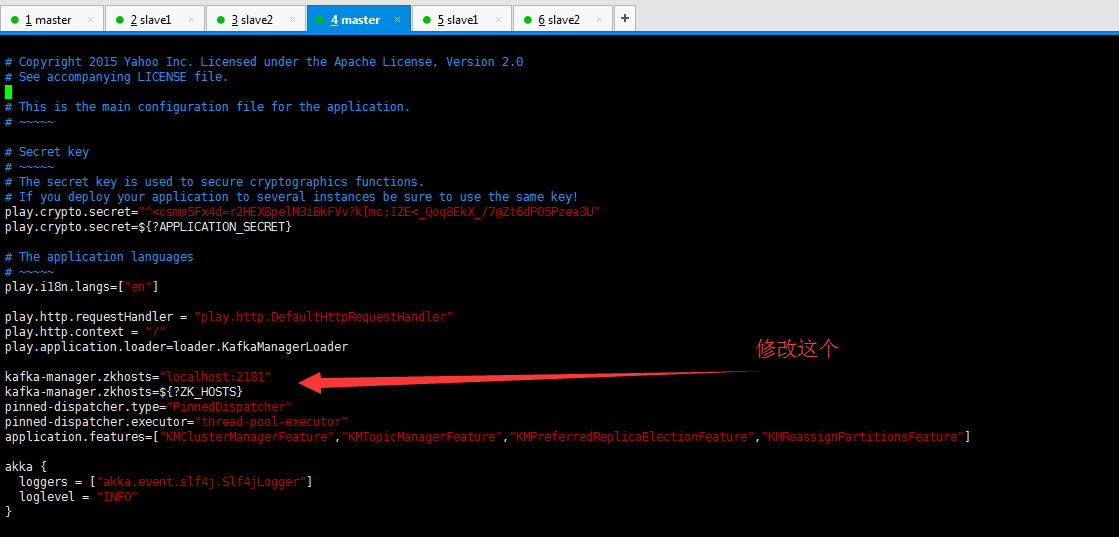

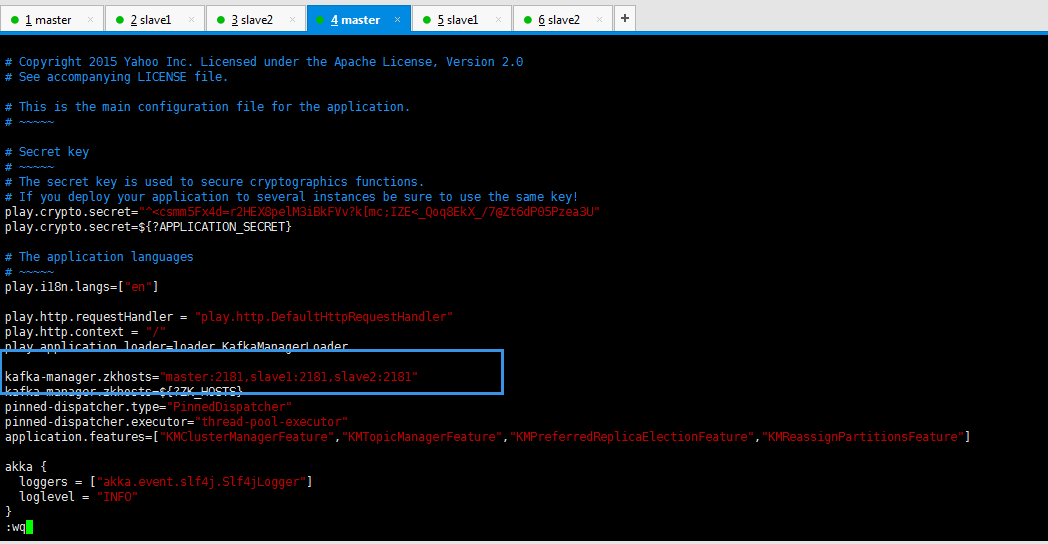

Changes

My hosts are master, slave1, and slave2; adjust the value to match your own machines.

kafka-manager.zkhosts="master:2181,slave1:2181,slave2:2181"
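If you would rather script this edit than open vim, something along these lines may work. It is only a sketch: `set_zkhosts` is a hypothetical helper name, and it assumes the file still contains the default quoted `kafka-manager.zkhosts="..."` line shown above.

```shell
# Hypothetical helper: rewrite the quoted kafka-manager.zkhosts="..." line in place.
# $1 = path to application.conf, $2 = comma-separated ZooKeeper host:port list.
set_zkhosts() {
  conf_file="$1"
  zk_hosts="$2"
  # Only the quoted literal line matches; the ${?ZK_HOSTS} override line is left alone.
  # A backup copy is kept next to the file as application.conf.bak.
  sed -i.bak "s|^kafka-manager\.zkhosts=\".*\"|kafka-manager.zkhosts=\"${zk_hosts}\"|" "$conf_file"
}

# Example usage with the hosts from this post:
# set_zkhosts /home/hadoop/app/kafka-manager-1.3.2.1/conf/application.conf \
#   "master:2181,slave1:2181,slave2:2181"
```

Because the default file also contains `kafka-manager.zkhosts=${?ZK_HOSTS}`, exporting a `ZK_HOSTS` environment variable before starting Kafka Manager should override the value without editing the file at all.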

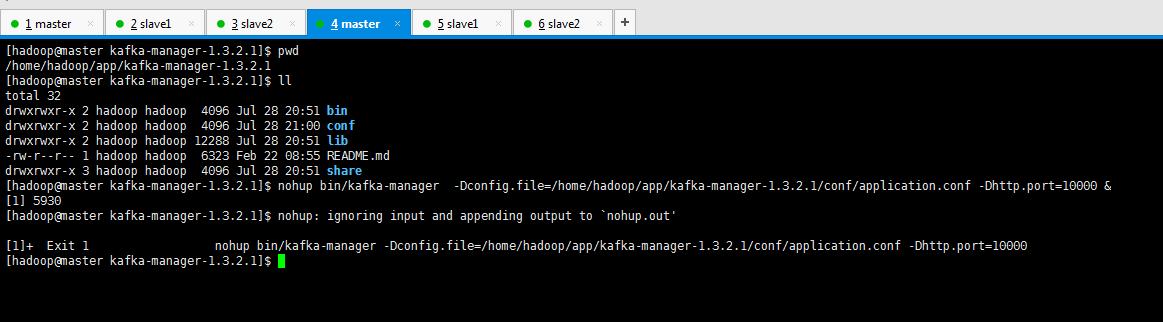

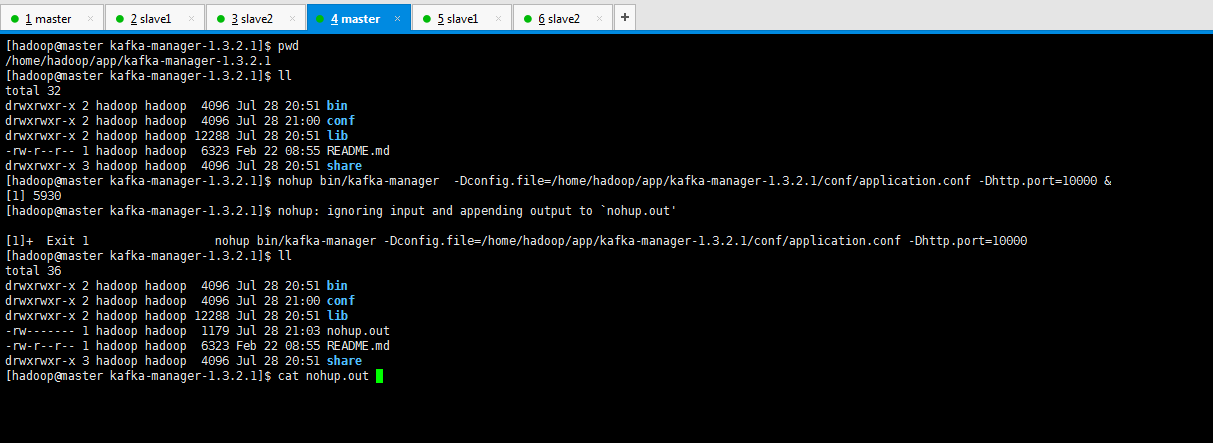

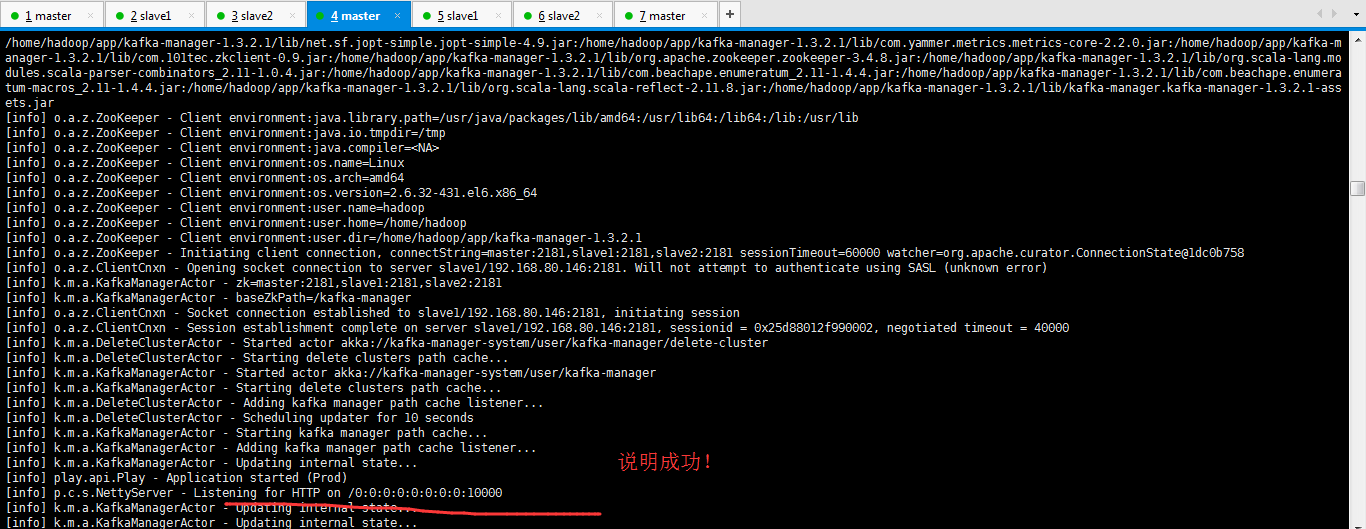

4. Run Kafka Manager

Note: the default port is 9000.

Run the command from your kafka-manager installation directory.

In my case that is /home/hadoop/app/kafka-manager-1.3.2.1

bin/kafka-manager -Dconfig.file=conf/application.conf

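or

nohup bin/kafka-manager -Dconfig.file=conf/application.conf & (runs in the background)

You can of course stay on the default port, or you can specify a different port at startup; here, for example, I use port 10000.

It is best to use an absolute path for the config file.

As before, run the command from the kafka-manager installation directory (/home/hadoop/app/kafka-manager-1.3.2.1 in my case).

nohup bin/kafka-manager -Dconfig.file=/home/hadoop/app/kafka-manager-1.3.2.1/conf/application.conf -Dhttp.port=10000 &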
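The launch command can be wrapped in a small script so that the install directory and port live in one place. This is a rough sketch under stated assumptions: `KM_HOME`, `KM_PORT`, `km_start_cmd`, and `km_start` are names invented here, and the defaults are simply the values used in this post.

```shell
# Hypothetical variables for this sketch; override them in the environment if needed.
KM_HOME="${KM_HOME:-/home/hadoop/app/kafka-manager-1.3.2.1}"
KM_PORT="${KM_PORT:-10000}"

# Print the launch command with absolute paths, so it does not depend on
# the current directory. Assumes KM_HOME contains no spaces.
km_start_cmd() {
  echo "$KM_HOME/bin/kafka-manager -Dconfig.file=$KM_HOME/conf/application.conf -Dhttp.port=$KM_PORT"
}

# Start Kafka Manager in the background from the install directory,
# appending its output to nohup.out there.
km_start() {
  cd "$KM_HOME" && nohup $(km_start_cmd) >> nohup.out 2>&1 &
}

# Example usage:
# km_start_cmd   # inspect the command first
# km_start       # then launch it
```

Printing the command separately from running it makes it easy to confirm the port and config path before anything is started.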

5. Open a browser and go to http://IP:10000

[hadoop@master kafka-manager-1.3.2.1]$ pwd

/home/hadoop/app/kafka-manager-1.3.2.1

[hadoop@master kafka-manager-1.3.2.1]$ ll

total

drwxrwxr-x hadoop hadoop Jul : bin

drwxrwxr-x hadoop hadoop Jul : conf

drwxrwxr-x hadoop hadoop Jul : lib

-rw-r--r-- hadoop hadoop Feb : README.md

drwxrwxr-x hadoop hadoop Jul : share

[hadoop@master kafka-manager-1.3.2.1]$ nohup bin/kafka-manager -Dconfig.file=/home/hadoop/app/kafka-manager-1.3.2.1/conf/application.conf -Dhttp.port=10000 &

[]

[hadoop@master kafka-manager-1.3.2.1]$ nohup: ignoring input and appending output to `nohup.out'

[]+ Exit nohup bin/kafka-manager -Dconfig.file=/home/hadoop/app/kafka-manager-1.3.2.1/conf/application.conf -Dhttp.port=10000

[hadoop@master kafka-manager-1.3.2.1]$

[hadoop@master kafka-manager-1.3.2.1]$ cat nohup.out

This application is already running (Or delete /home/hadoop/app/kafka-manager-1.3.2.1/RUNNING_PID file).

::, |-INFO in ch.qos.logback.classic.LoggerContext[default] - Could NOT find resource [logback.groovy]

::, |-INFO in ch.qos.logback.classic.LoggerContext[default] - Could NOT find resource [logback-test.xml]

::, |-INFO in ch.qos.logback.classic.LoggerContext[default] - Found resource [logback.xml] at [file:/home/hadoop/app/kafka-manager-1.3.2.1/conf/logback.xml]

::, |-INFO in ch.qos.logback.classic.joran.action.ConfigurationAction - debug attribute not set

::, |-INFO in ch.qos.logback.core.joran.action.ConversionRuleAction - registering conversion word coloredLevel with class [play.api.Logger$ColoredLevel]

::, |-INFO in ch.qos.logback.core.joran.action.AppenderAction - About to instantiate appender of type [ch.qos.logback.core.rolling.RollingFileAppender]

::, |-INFO in ch.qos.logback.core.joran.action.AppenderAction - Naming appender as [FILE]

::, |-INFO in ch.qos.logback.core.joran.action.NestedComplexPropertyIA - Assuming default type [ch.qos.logback.classic.encoder.PatternLayoutEncoder] for [encoder] property

::, |-ERROR in ch.qos.logback.core.joran.spi.Interpreter@: - no applicable action for [totalSizeCap], current ElementPath is [[configuration][appender][rollingPolicy][totalSizeCap]]

::, |-INFO in c.q.l.core.rolling.TimeBasedRollingPolicy - No compression will be used

::, |-INFO in c.q.l.core.rolling.TimeBasedRollingPolicy - Will use the pattern application.home_IS_UNDEFINED/logs/application.%d{yyyy-MM-dd}.log for the active file

::, |-INFO in c.q.l.core.rolling.DefaultTimeBasedFileNamingAndTriggeringPolicy - The date pattern is 'yyyy-MM-dd' from file name pattern 'application.home_IS_UNDEFINED/logs/application.%d{yyyy-MM-dd}.log'.

::, |-INFO in c.q.l.core.rolling.DefaultTimeBasedFileNamingAndTriggeringPolicy - Roll-over at midnight.

::, |-INFO in c.q.l.core.rolling.DefaultTimeBasedFileNamingAndTriggeringPolicy - Setting initial period to Fri Jul :: CST

::, |-INFO in ch.qos.logback.core.rolling.RollingFileAppender[FILE] - Active log file name: application.home_IS_UNDEFINED/logs/application.log

::, |-INFO in ch.qos.logback.core.rolling.RollingFileAppender[FILE] - File property is set to [application.home_IS_UNDEFINED/logs/application.log]

::, |-INFO in ch.qos.logback.core.joran.action.AppenderAction - About to instantiate appender of type [ch.qos.logback.core.ConsoleAppender]

::, |-INFO in ch.qos.logback.core.joran.action.AppenderAction - Naming appender as [STDOUT]

::, |-INFO in ch.qos.logback.core.joran.action.NestedComplexPropertyIA - Assuming default type [ch.qos.logback.classic.encoder.PatternLayoutEncoder] for [encoder] property

::, |-INFO in ch.qos.logback.core.joran.action.AppenderAction - About to instantiate appender of type [ch.qos.logback.classic.AsyncAppender]

::, |-INFO in ch.qos.logback.core.joran.action.AppenderAction - Naming appender as [ASYNCFILE]

::, |-INFO in ch.qos.logback.core.joran.action.AppenderRefAction - Attaching appender named [FILE] to ch.qos.logback.classic.AsyncAppender[ASYNCFILE]

::, |-INFO in ch.qos.logback.classic.AsyncAppender[ASYNCFILE] - Attaching appender named [FILE] to AsyncAppender.

::, |-INFO in ch.qos.logback.classic.AsyncAppender[ASYNCFILE] - Setting discardingThreshold to

::, |-INFO in ch.qos.logback.core.joran.action.AppenderAction - About to instantiate appender of type [ch.qos.logback.classic.AsyncAppender]

::, |-INFO in ch.qos.logback.core.joran.action.AppenderAction - Naming appender as [ASYNCSTDOUT]

::, |-INFO in ch.qos.logback.core.joran.action.AppenderRefAction - Attaching appender named [STDOUT] to ch.qos.logback.classic.AsyncAppender[ASYNCSTDOUT]

::, |-INFO in ch.qos.logback.classic.AsyncAppender[ASYNCSTDOUT] - Attaching appender named [STDOUT] to AsyncAppender.

::, |-INFO in ch.qos.logback.classic.AsyncAppender[ASYNCSTDOUT] - Setting discardingThreshold to

::, |-INFO in ch.qos.logback.classic.joran.action.LoggerAction - Setting level of logger [play] to INFO

::, |-INFO in ch.qos.logback.classic.joran.action.LoggerAction - Setting level of logger [application] to INFO

::, |-INFO in ch.qos.logback.classic.joran.action.LoggerAction - Setting level of logger [kafka.manager] to INFO

::, |-INFO in ch.qos.logback.classic.joran.action.LoggerAction - Setting level of logger [com.avaje.ebean.config.PropertyMapLoader] to OFF

::, |-INFO in ch.qos.logback.classic.joran.action.LoggerAction - Setting level of logger [com.avaje.ebeaninternal.server.core.XmlConfigLoader] to OFF

::, |-INFO in ch.qos.logback.classic.joran.action.LoggerAction - Setting level of logger [com.avaje.ebeaninternal.server.lib.BackgroundThread] to OFF

::, |-INFO in ch.qos.logback.classic.joran.action.LoggerAction - Setting level of logger [com.gargoylesoftware.htmlunit.javascript] to OFF

::, |-INFO in ch.qos.logback.classic.joran.action.LoggerAction - Setting level of logger [org.apache.zookeeper] to INFO

::, |-INFO in ch.qos.logback.classic.joran.action.RootLoggerAction - Setting level of ROOT logger to WARN

::, |-INFO in ch.qos.logback.core.joran.action.AppenderRefAction - Attaching appender named [ASYNCFILE] to Logger[ROOT]

::, |-INFO in ch.qos.logback.core.joran.action.AppenderRefAction - Attaching appender named [ASYNCSTDOUT] to Logger[ROOT]

::, |-INFO in ch.qos.logback.classic.joran.action.ConfigurationAction - End of configuration.

::, |-INFO in ch.qos.logback.classic.joran.JoranConfigurator@18cf1e03 - Registering current configuration as safe fallback point

[warn] o.a.c.r.ExponentialBackoffRetry - maxRetries too large (). Pinning to

[info] k.m.a.KafkaManagerActor - Starting curator...

[info] o.a.z.ZooKeeper - Client environment:zookeeper.version=3.4.--, built on // : GMT

[info] o.a.z.ZooKeeper - Client environment:host.name=master

[info] o.a.z.ZooKeeper - Client environment:java.version=1.8.0_60

[info] o.a.z.ZooKeeper - Client environment:java.vendor=Oracle Corporation

[info] o.a.z.ZooKeeper - Client environment:java.home=/home/hadoop/app/jdk1..0_60/jre

[info] o.a.z.ZooKeeper - Client environment:java.class.path=/home/hadoop/app/kafka-manager-1.3.2.1/lib/../conf/:/home/hadoop/app/kafka-manager-1.3.2.1/lib/kafka-manager.kafka-manager-1.3.2.1-sans-externalized.jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/org.scala-lang.scala-library-2.11..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/com.typesafe.play.twirl-api_2.-1.1..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/org.apache.commons.commons-lang3-3.4.jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/com.typesafe.play.play-server_2.-2.4..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/com.typesafe.play.play_2.-2.4..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/com.typesafe.play.build-link-2.4..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/com.typesafe.play.play-exceptions-2.4..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/org.javassist.javassist-3.19.-GA.jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/com.typesafe.play.play-iteratees_2.-2.4..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/org.scala-stm.scala-stm_2.-0.7.jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/com.typesafe.config-1.3..jar:/home/hadoop/app/

kafka-manager-1.3.2.1/lib/com.typesafe.play.play-json_2.-2.4..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/com.typesafe.play.play-functional_2.-2.4..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/com.typesafe.play.play-datacommons_2.-2.4..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/joda-time.joda-time-2.8..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/org.joda.joda-convert-1.7.jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/com.fasterxml.jackson.datatype.jackson-datatype-jdk8-2.5..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/com.fasterxml.jackson.datatype.jackson-datatype-jsr310-2.5..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/com.typesafe.play.play-netty-utils-2.4..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/org.slf4j.jul-to-slf4j-1.7..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/org.slf4j.jcl-over-slf4j-1.7..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/ch.qos.logback.logback-core-1.1..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/ch.qos.logback.logback-classic-1.1..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/com.typesafe.akka.akka-actor_2.-2.3..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/com.typesafe.akka.akka-slf4j_2.-2.3..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/commons-codec.commons-codec-1.10.jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/xerces.xercesImpl-2.11..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/xml-apis.xml-apis-1.4..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/javax.transaction.jta-1.1.jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/com.google.inject.guice-4.0.jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/javax.inject.javax.inject-.jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/aopalliance.aopalliance-1.0.jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/com.google.guava.guava-16.0..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/com.google.inject.extensions.guice-assistedinject-4.0.jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/com.typesafe.play.play-netty-server_2.-2.4..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib
/io.netty.netty-3.10..Final.jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/com.typesafe.netty.netty-http-pipelining-1.1..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/com.google.code.findbugs.jsr305-2.0..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/org.webjars.webjars-play_2.-2.4.-.jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/org.webjars.requirejs-2.1..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/org.webjars.webjars-locator-0.28.jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/org.webjars.webjars-locator-core-0.27.jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/org.apache.commons.commons-compress-1.9.jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/org.webjars.npm.validate.js-0.8..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/org.webjars.bootstrap-3.3..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/org.webjars.jquery-2.1..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/org.webjars.backbonejs-1.2..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/org.webjars.underscorejs-1.8..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/org.webjars.dustjs-linkedin-2.6.-.jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/org.webjars.json--.jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/org.apache.curator.curator-framework-2.10..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/org.apache.curator.curator-client-2.10..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/jline.jline-0.9..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/org.apache.curator.curator-recipes-2.10..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/org.json4s.json4s-jackson_2.-3.4..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/org.json4s.json4s-core_2.-3.4..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/org.json4s.json4s-ast_2.-3.4..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/org.json4s.json4s-scalap_2.-3.4..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/com.thoughtworks.paranamer.paranamer-2.8.jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/org.scala-lang.modules.scala-xml_2.-1.0..jar:/home/hadoop/app/kafka-manager-1.3.2.1/
lib/com.fasterxml.jackson.core.jackson-databind-2.6..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/com.fasterxml.jackson.core.jackson-annotations-2.6..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/com.fasterxml.jackson.core.jackson-core-2.6..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/org.json4s.json4s-scalaz_2.-3.4..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/org.scalaz.scalaz-core_2.-7.2..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/org.slf4j.log4j-over-slf4j-1.7..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/com.adrianhurt.play-bootstrap3_2.-0.4.-P24.jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/org.clapper.grizzled-slf4j_2.-1.0..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/org.apache.kafka.kafka_2.-0.10.1.1.jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/org.apache.kafka.kafka-clients-0.10.1.1.jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/net.jpountz.lz4.lz4-1.3..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/org.xerial.snappy.snappy-java-1.1.2.6.jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/org.slf4j.slf4j-api-1.7..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/net.sf.jopt-simple.jopt-simple-4.9.jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/com.yammer.metrics.metrics-core-2.2..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/com.101tec.zkclient-0.9.jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/org.apache.zookeeper.zookeeper-3.4..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/org.scala-lang.modules.scala-parser-combinators_2.-1.0..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/com.beachape.enumeratum_2.-1.4..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/com.beachape.enumera

tum-macros_2.-1.4..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/org.scala-lang.scala-reflect-2.11..jar:/home/hadoop/app/kafka-manager-1.3.2.1/lib/kafka-manager.kafka-manager-1.3.2.1-assets.jar

[info] o.a.z.ZooKeeper - Client environment:java.library.path=/usr/java/packages/lib/amd64:/usr/lib64:/lib64:/lib:/usr/lib

[info] o.a.z.ZooKeeper - Client environment:java.io.tmpdir=/tmp

[info] o.a.z.ZooKeeper - Client environment:java.compiler=<NA>

[info] o.a.z.ZooKeeper - Client environment:os.name=Linux

[info] o.a.z.ZooKeeper - Client environment:os.arch=amd64

[info] o.a.z.ZooKeeper - Client environment:os.version=2.6.-.el6.x86_64

[info] o.a.z.ZooKeeper - Client environment:user.name=hadoop

[info] o.a.z.ZooKeeper - Client environment:user.home=/home/hadoop

[info] o.a.z.ZooKeeper - Client environment:user.dir=/home/hadoop/app/kafka-manager-1.3.2.1

[info] o.a.z.ZooKeeper - Initiating client connection, connectString=master:2181,slave1:2181,slave2:2181 sessionTimeout= watcher=org.apache.curator.ConnectionState@1dc0b758

[info] o.a.z.ClientCnxn - Opening socket connection to server slave1/192.168.80.146:. Will not attempt to authenticate using SASL (unknown error)

[info] k.m.a.KafkaManagerActor - zk=master:,slave1:,slave2:

[info] k.m.a.KafkaManagerActor - baseZkPath=/kafka-manager

[info] o.a.z.ClientCnxn - Socket connection established to slave1/192.168.80.146:, initiating session

[info] o.a.z.ClientCnxn - Session establishment complete on server slave1/192.168.80.146:, sessionid = 0x25d88012f990002, negotiated timeout =

[info] k.m.a.DeleteClusterActor - Started actor akka://kafka-manager-system/user/kafka-manager/delete-cluster

[info] k.m.a.DeleteClusterActor - Starting delete clusters path cache...

[info] k.m.a.KafkaManagerActor - Started actor akka://kafka-manager-system/user/kafka-manager

[info] k.m.a.KafkaManagerActor - Starting delete clusters path cache...

[info] k.m.a.DeleteClusterActor - Adding kafka manager path cache listener...

[info] k.m.a.DeleteClusterActor - Scheduling updater for seconds

[info] k.m.a.KafkaManagerActor - Starting kafka manager path cache...

[info] k.m.a.KafkaManagerActor - Adding kafka manager path cache listener...

[info] k.m.a.KafkaManagerActor - Updating internal state...

[info] play.api.Play - Application started (Prod)

[info] p.c.s.NettyServer - Listening for HTTP on /0:0:0:0:0:0:0:0:10000

[info] k.m.a.KafkaManagerActor - Updating internal state...

[info] k.m.a.KafkaManagerActor - Updating internal state...

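You may run into the following error when starting Kafka Manager at this step. It means the JVM in use is older than the Java version the classes were compiled for. I cover the fix in a separate post:

"基于Web的Kafka管理器工具之Kafka-manager启动时出现Exception in thread "main" java.lang.UnsupportedClassVersionError错误解决办法(图文详解)" (Fixing the Exception in thread "main" java.lang.UnsupportedClassVersionError error when starting Kafka-manager, illustrated guide)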

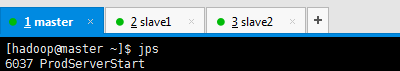

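The Kafka-manager process appears in jps as ProdServerStart:

[hadoop@master ~]$ jps

ProdServerStart

If you kill this process, for example, the web UI stops responding. Start Kafka Manager again and the page is reachable once more at

http://192.168.80.145:10000/

or

http://master:10000/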
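The nohup.out shown earlier begins with "This application is already running (Or delete /home/hadoop/app/kafka-manager-1.3.2.1/RUNNING_PID file)", which is what a restart reports when a RUNNING_PID file is left over from a killed process. A cautious way to handle it might look like the sketch below; `clear_stale_pid` is a hypothetical helper, and it assumes RUNNING_PID holds the PID of the previous run.

```shell
# Hypothetical helper: remove RUNNING_PID only when the recorded process is gone.
# $1 = kafka-manager installation directory.
clear_stale_pid() {
  pid_file="$1/RUNNING_PID"
  [ -f "$pid_file" ] || return 0          # nothing to clean up
  pid=$(cat "$pid_file")
  if kill -0 "$pid" 2>/dev/null; then     # signal 0 only probes; nothing is killed
    echo "kafka-manager still running with PID $pid" >&2
    return 1                              # leave the file alone
  fi
  rm -f "$pid_file"                       # stale file from a killed process
}

# Example usage before restarting:
# clear_stale_pid /home/hadoop/app/kafka-manager-1.3.2.1 && echo "safe to start"
```

Note that `kill -0` also fails for a process owned by another user, so run this as the same user that started Kafka Manager (hadoop in this post).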

You can also follow my personal blogs:

http://www.cnblogs.com/zlslch/, http://www.cnblogs.com/lchzls/, and http://www.cnblogs.com/sunnyDream/

For details, see: http://www.cnblogs.com/zlslch/p/7473861.html

