Spark Source Code Analysis: DAG Partitioning and Submission in DAGScheduler

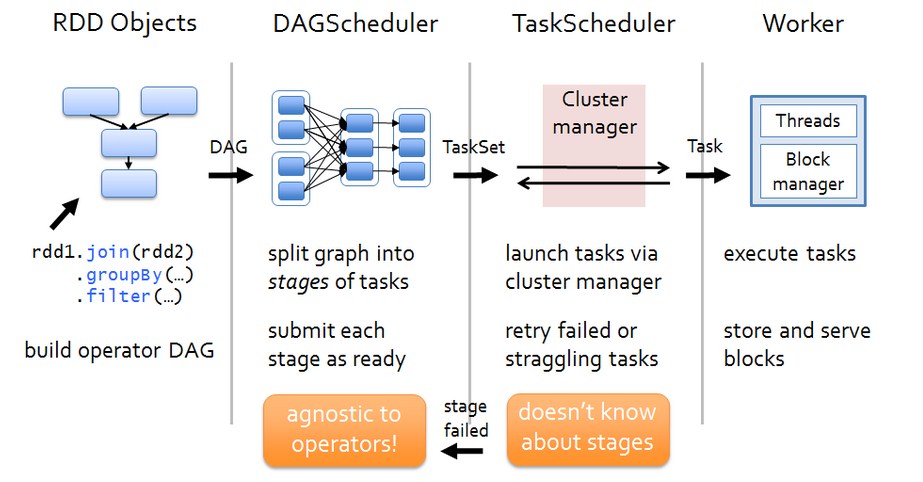

I. Spark Runtime Architecture

    def submitJob[T, U](
        rdd: RDD[T],
        func: (TaskContext, Iterator[T]) => U,
        partitions: Seq[Int],
        callSite: CallSite,
        resultHandler: (Int, U) => Unit,
        properties: Properties): JobWaiter[U] = {
      // Check to make sure we are not launching a task on a partition that does not exist.
      val maxPartitions = rdd.partitions.length
      partitions.find(p => p >= maxPartitions || p < 0).foreach { p =>
        throw new IllegalArgumentException(
          "Attempting to access a non-existent partition: " + p + ". " +
            "Total number of partitions: " + maxPartitions)
      }

      val jobId = nextJobId.getAndIncrement()
      if (partitions.size == 0) {
        // Return immediately if the job is running 0 tasks
        return new JobWaiter[U](this, jobId, 0, resultHandler)
      }

      assert(partitions.size > 0)
      val func2 = func.asInstanceOf[(TaskContext, Iterator[_]) => _]
      val waiter = new JobWaiter(this, jobId, partitions.size, resultHandler)
      // Post a JobSubmitted message to eventProcessLoop
      eventProcessLoop.post(JobSubmitted(
        jobId, rdd, func2, partitions.toArray, callSite, waiter,
        SerializationUtils.clone(properties)))
      waiter
    }

    private[scheduler] val eventProcessLoop = new DAGSchedulerEventProcessLoop(this)

    private def doOnReceive(event: DAGSchedulerEvent): Unit = event match {
      // Job submission
      case JobSubmitted(jobId, rdd, func, partitions, callSite, listener, properties) =>
        dagScheduler.handleJobSubmitted(jobId, rdd, func, partitions, callSite, listener, properties)

      case MapStageSubmitted(jobId, dependency, callSite, listener, properties) =>
        dagScheduler.handleMapStageSubmitted(jobId, dependency, callSite, listener, properties)

      case StageCancelled(stageId) =>
        dagScheduler.handleStageCancellation(stageId)

      case JobCancelled(jobId) =>
        dagScheduler.handleJobCancellation(jobId)

      case JobGroupCancelled(groupId) =>
        dagScheduler.handleJobGroupCancelled(groupId)

      case AllJobsCancelled =>
        dagScheduler.doCancelAllJobs()

      case ExecutorAdded(execId, host) =>
        dagScheduler.handleExecutorAdded(execId, host)

      case ExecutorLost(execId) =>
        dagScheduler.handleExecutorLost(execId, fetchFailed = false)

      case BeginEvent(task, taskInfo) =>
        dagScheduler.handleBeginEvent(task, taskInfo)

      case GettingResultEvent(taskInfo) =>
        dagScheduler.handleGetTaskResult(taskInfo)

      case completion: CompletionEvent =>
        dagScheduler.handleTaskCompletion(completion)

      case TaskSetFailed(taskSet, reason, exception) =>
        dagScheduler.handleTaskSetFailed(taskSet, reason, exception)

      case ResubmitFailedStages =>
        dagScheduler.resubmitFailedStages()
    }
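The two snippets above show the pattern: `submitJob` only posts a `JobSubmitted` message, and a separate loop later dispatches it through pattern matching in `doOnReceive`. The following is a minimal, self-contained sketch of that post-then-dispatch idea. It is not Spark's implementation: `EventLoopSketch`, `Loop`, `Stop`, and the simplified one-field events are hypothetical names invented for illustration, and the blocking queue plus single daemon thread is an assumption about how such a loop can be built.

```scala
import java.util.concurrent.{CountDownLatch, LinkedBlockingDeque, TimeUnit}
import scala.collection.mutable.ArrayBuffer

object EventLoopSketch {
  // Hypothetical events standing in for DAGSchedulerEvent subclasses.
  sealed trait Event
  final case class JobSubmitted(jobId: Int) extends Event
  final case class JobCancelled(jobId: Int) extends Event
  case object Stop extends Event

  // A single consumer thread drains the queue, so handlers never run concurrently.
  final class Loop(handle: Event => Unit) {
    private val queue = new LinkedBlockingDeque[Event]()
    private val stopped = new CountDownLatch(1)
    private val worker = new Thread("event-loop-sketch") {
      override def run(): Unit = {
        var running = true
        while (running) {
          queue.take() match {
            case Stop  => running = false
            case event => handle(event)
          }
        }
        stopped.countDown()
      }
    }
    worker.setDaemon(true)

    def start(): Unit = worker.start()

    // Producers return immediately; the event is handled later on the worker thread.
    def post(event: Event): Unit = queue.put(event)

    // Posts Stop behind all pending events and waits for the worker to finish.
    def stopAndWait(): Boolean = {
      post(Stop)
      stopped.await(5, TimeUnit.SECONDS)
    }
  }

  def demo(): List[String] = {
    val log = ArrayBuffer.empty[String]
    val loop = new Loop({
      case JobSubmitted(id) => log += s"submitted $id"
      case JobCancelled(id) => log += s"cancelled $id"
      case Stop             => ()
    })
    loop.start()
    loop.post(JobSubmitted(0))
    loop.post(JobCancelled(0))
    loop.stopAndWait()
    log.toList
  }
}
```

Because events are queued in order and handled by one thread, the handlers need no locking of their own, which is the main appeal of routing every scheduler action through a single loop.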

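    // Excerpt from handleJobSubmitted
    var finalStage: ResultStage = null
    try {
      // Creating a new stage may throw an exception, for example when an HDFS file
      // that the job depends on has been deleted.
      finalStage = newResultStage(finalRDD, func, partitions, jobId, callSite)
    } catch {
      case e: Exception =>
        logWarning("Creating new stage failed due to exception - job: " + jobId, e)
        listener.jobFailed(e)
        return
    }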

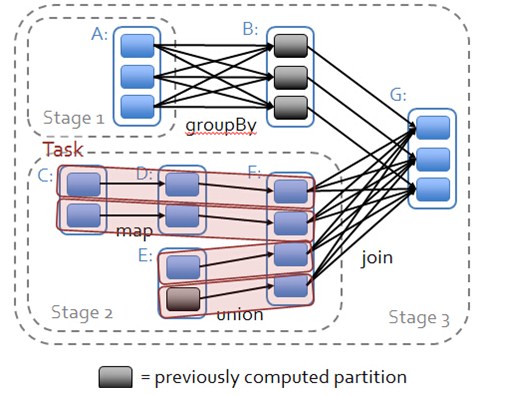

    private def getMissingParentStages(stage: Stage): List[Stage] = {
      val missing = new HashSet[Stage]   // parent stages to return
      val visited = new HashSet[RDD[_]]  // RDDs that have already been visited
      // Maintain an explicit stack so that deep lineages do not cause a
      // StackOverflowError through recursive calls.
      val waitingForVisit = new Stack[RDD[_]]

      def visit(rdd: RDD[_]): Unit = {
        if (!visited(rdd)) {
          visited += rdd
          val rddHasUncachedPartitions = getCacheLocs(rdd).contains(Nil)
          if (rddHasUncachedPartitions) {
            for (dep <- rdd.dependencies) {
              dep match {
                case shufDep: ShuffleDependency[_, _, _] =>
                  val mapStage = getShuffleMapStage(shufDep, stage.firstJobId)
                  if (!mapStage.isAvailable) {
                    missing += mapStage  // wide (shuffle) dependency: record the parent stage
                  }
                case narrowDep: NarrowDependency[_] =>
                  waitingForVisit.push(narrowDep.rdd)  // narrow dependency: push the parent RDD
              }
            }
          }
        }
      }

      // Push the RDD from which the backward traversal starts
      waitingForVisit.push(stage.rdd)
      while (waitingForVisit.nonEmpty) {
        visit(waitingForVisit.pop())
      }
      missing.toList
    }
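To make the traversal in `getMissingParentStages` easier to follow, here is a small sketch of the same idea on a toy lineage: walk backwards from the final node, keep going through narrow dependencies, and stop at each shuffle dependency, which marks a stage boundary. `Node`, `Narrow`, `Shuffle`, and `shuffleParents` are hypothetical names, not Spark classes, and the sketch deliberately leaves out the cache check (`getCacheLocs`) and the `isAvailable` check that the real method performs.

```scala
object MissingParentsSketch {
  // Hypothetical toy model: these are not Spark's RDD/Dependency classes.
  sealed trait Dep { def parent: Node }
  final case class Narrow(parent: Node) extends Dep
  final case class Shuffle(parent: Node) extends Dep
  final case class Node(name: String, deps: List[Dep])

  /** Names of the nodes directly behind a shuffle boundary reachable from `start`. */
  def shuffleParents(start: Node): Set[String] = {
    var missing = Set.empty[String]
    var visited = Set.empty[String]
    var stack = List(start) // explicit stack instead of recursion
    while (stack.nonEmpty) {
      val node = stack.head
      stack = stack.tail
      if (!visited(node.name)) {
        visited += node.name
        node.deps.foreach {
          case Shuffle(p) => missing += p.name  // stage boundary: stop walking here
          case Narrow(p)  => stack = p :: stack // same stage: keep walking back
        }
      }
    }
    missing
  }

  // textFile -> map -(shuffle)-> reduceByKey -> filter
  val textFile = Node("textFile", Nil)
  val mapped = Node("map", List(Narrow(textFile)))
  val reduced = Node("reduceByKey", List(Shuffle(mapped)))
  val filtered = Node("filter", List(Narrow(reduced)))
}
```

Starting from `filtered`, the walk passes through the narrow dependency to `reduceByKey` and stops at the shuffle, so only `map` is reported as the tail of a parent stage. Starting from `mapped`, no shuffle is crossed and the result is empty.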

    private def newOrUsedShuffleStage(
        shuffleDep: ShuffleDependency[_, _, _],
        firstJobId: Int): ShuffleMapStage = {
      val rdd = shuffleDep.rdd
      val numTasks = rdd.partitions.length
      val stage = newShuffleMapStage(rdd, numTasks, shuffleDep, firstJobId, rdd.creationSite)
      if (mapOutputTracker.containsShuffle(shuffleDep.shuffleId)) {
        // The stage has already been computed: fetch its results from the MapOutputTracker
        val serLocs = mapOutputTracker.getSerializedMapOutputStatuses(shuffleDep.shuffleId)
        val locs = MapOutputTracker.deserializeMapStatuses(serLocs)
        (0 until locs.length).foreach { i =>
          if (locs(i) ne null) {
            // locs(i) will be null if missing
            stage.addOutputLoc(i, locs(i))
          }
        }
      } else {
        // Kind of ugly: need to register RDDs with the cache and map output tracker here
        // since we can't do it in the RDD constructor because # of partitions is unknown
        logInfo("Registering RDD " + rdd.id + " (" + rdd.getCreationSite + ")")
        mapOutputTracker.registerShuffle(shuffleDep.shuffleId, rdd.partitions.length)
      }
      stage
    }

    /** Submits stage, but first recursively submits any missing parents. */
    private def submitStage(stage: Stage): Unit = {
      val jobId = activeJobForStage(stage)
      if (jobId.isDefined) {
        logDebug("submitStage(" + stage + ")")
        if (!waitingStages(stage) && !runningStages(stage) && !failedStages(stage)) {
          val missing = getMissingParentStages(stage).sortBy(_.id)
          logDebug("missing: " + missing)
          if (missing.isEmpty) {
            logInfo("Submitting " + stage + " (" + stage.rdd + "), which has no missing parents")
            // No missing parent stages: submit the tasks of the current stage
            submitMissingTasks(stage, jobId.get)
          } else {
            for (parent <- missing) {
              // Missing parent stages exist: recursively submit them first
              submitStage(parent)
            }
            waitingStages += stage
          }
        }
      } else {
        abortStage(stage, "No active job for stage " + stage.id, None)
      }
    }
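The recursion in `submitStage` determines the order in which stages start: a stage with missing parents is parked in the waiting set, and only stages without missing parents actually run. The sketch below reproduces just that ordering on a hypothetical four-stage job. `Stage`, `Scheduler`, `finished`, `running`, and `waiting` are simplified stand-ins invented for illustration. Adding an id to `running` takes the place of `submitMissingTasks`, and nothing here resubmits waiting stages once their parents finish.

```scala
import scala.collection.mutable

object SubmitStageSketch {
  // Hypothetical stage model: an id plus its parent stages.
  final case class Stage(id: Int, parents: List[Stage])

  final class Scheduler {
    val finished = mutable.Set.empty[Int]
    val running = mutable.LinkedHashSet.empty[Int] // keeps insertion order
    val waiting = mutable.LinkedHashSet.empty[Int]

    // Parents whose output is not available yet, lowest id first.
    private def missingParents(stage: Stage): List[Stage] =
      stage.parents.filterNot(p => finished(p.id)).sortBy(_.id)

    def submit(stage: Stage): Unit = {
      if (!waiting(stage.id) && !running(stage.id)) {
        val missing = missingParents(stage)
        if (missing.isEmpty) {
          running += stage.id     // stands in for submitMissingTasks
        } else {
          missing.foreach(submit) // parents first
          waiting += stage.id
        }
      }
    }
  }

  // Stages 0 and 1 feed stage 2, which feeds the final stage 3.
  def demo(): (List[Int], List[Int]) = {
    val s0 = Stage(0, Nil)
    val s1 = Stage(1, Nil)
    val s2 = Stage(2, List(s0, s1))
    val s3 = Stage(3, List(s2))
    val scheduler = new Scheduler
    scheduler.submit(s3)
    (scheduler.running.toList, scheduler.waiting.toList)
  }
}
```

Submitting the final stage starts the two root stages and leaves stages 2 and 3 waiting, which mirrors how the recursive call reaches the roots of the stage graph before any task is launched.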
