Scrapy spider for Tencent's job listings --- solved

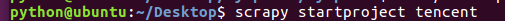

1. Create a new Scrapy project named tencent

2. Define the fields to scrape in items.py

    # -*- coding: utf-8 -*-

    # Define here the models for your scraped items
    #
    # See documentation in:
    # http://doc.scrapy.org/en/latest/topics/items.html

    import scrapy


    class TencentItem(scrapy.Item):
        # define the fields for your item here like:
        # Position name
        position_name = scrapy.Field()
        # Detail page link
        position_link = scrapy.Field()
        # Position category
        position_type = scrapy.Field()
        # Number of openings
        people_number = scrapy.Field()
        # Work location
        work_location = scrapy.Field()
        # Publish date
        publish_time = scrapy.Field()

3. From the project directory, create a spider named tencent_spider and specify the domain it is allowed to crawl

4. Open tencent_spider.py; the template has already been generated, so it only needs a few changes

    # -*- coding: utf-8 -*-

    import scrapy

    from tencent.items import TencentItem


    class TencentSpiderSpider(scrapy.Spider):
        name = "tencent_spider"
        allowed_domains = ["tencent.com"]

        url = "http://hr.tencent.com/position.php?&start="
        offset = 0
        start_urls = [
            url + str(offset),
        ]

        def parse(self, response):
            for each in response.xpath("//tr[@class='even'] | //tr[@class='odd']"):
                # Initialize the item object
                item = TencentItem()
                # Position name (the keys must match the fields declared in items.py)
                item['position_name'] = each.xpath("./td[1]/a/text()").extract()[0]
                # Detail page link
                item['position_link'] = each.xpath("./td[1]/a/@href").extract()[0]
                # Position category
                item['position_type'] = each.xpath("./td[2]/text()").extract()[0]
                # Number of openings
                item['people_number'] = each.xpath("./td[3]/text()").extract()[0]
                # Work location
                item['work_location'] = each.xpath("./td[4]/text()").extract()[0]
                # Publish date
                item['publish_time'] = each.xpath("./td[5]/text()").extract()[0]
                yield item

            # After a page has been processed, request the next one:
            # increase self.offset by 10, build the new URL, and let
            # self.parse handle the response.
            if self.offset < 1000:
                self.offset += 10
                yield scrapy.Request(self.url + str(self.offset), callback=self.parse)
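The pagination logic in the spider can be checked without running Scrapy. The following is only a sketch: the helper name page_urls and its parameters are made up for illustration, and it assumes the offset check runs once per page, after all rows of that page have been yielded.

```python
# Base URL copied from the spider; everything else here is illustrative.
BASE_URL = "http://hr.tencent.com/position.php?&start="


def page_urls(base_url, step=10, max_offset=1000):
    """Yield listing URLs in the order the spider would request them."""
    offset = 0
    yield base_url + str(offset)      # the single entry in start_urls
    while offset < max_offset:        # same condition as in parse()
        offset += step
        yield base_url + str(offset)


urls = list(page_urls(BASE_URL))
print(urls[0])
print(urls[-1])
print(len(urls))
```

Because the condition is offset < 1000 and the increment happens before the request is built, the last page requested is start=1000, not start=990.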

5. Write the items to a file in pipelines.py

    # -*- coding: utf-8 -*-

    # Define your item pipelines here
    #
    # Don't forget to add your pipeline to the ITEM_PIPELINES setting
    # See: http://doc.scrapy.org/en/latest/topics/item-pipeline.html

    import json


    class TencentPipeline(object):

        def open_spider(self, spider):
            # Opened once when the spider starts
            self.filename = open("tencent.json", "w")

        def process_item(self, item, spider):
            text = json.dumps(dict(item), ensure_ascii=False) + "\n"
            # The version that failed in step 7 was missing the closing
            # parenthesis on the next line.
            self.filename.write(text.encode("utf-8"))
            return item

        def close_spider(self, spider):
            self.filename.close()
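The write-one-JSON-object-per-line idea can be tried outside Scrapy. This is a minimal sketch, not the pipeline itself: the class name JsonLinesWriter is hypothetical, the sample item is made up, and it is written for Python 3, where the file is opened with an explicit encoding instead of calling encode() on each line.

```python
import io
import json
import os
import tempfile


class JsonLinesWriter(object):
    """Hypothetical stand-in for TencentPipeline that needs no Scrapy."""

    def __init__(self, path):
        self.path = path
        self.file = None

    def open(self):
        # Text mode with an explicit encoding, so str can be written directly
        self.file = io.open(self.path, "w", encoding="utf-8")

    def process_item(self, item):
        # ensure_ascii=False keeps Chinese text readable in the output file
        self.file.write(json.dumps(dict(item), ensure_ascii=False) + "\n")
        return item

    def close(self):
        self.file.close()


# Made-up sample item, only to exercise the writer
path = os.path.join(tempfile.mkdtemp(), "tencent.json")
writer = JsonLinesWriter(path)
writer.open()
writer.process_item({"position_name": "后台开发工程师", "work_location": "深圳"})
writer.close()

with io.open(path, encoding="utf-8") as f:
    lines = f.read().splitlines()
print(lines)
```

Each line of the resulting file is a complete JSON document, so it can be read back line by line with json.loads.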

6. In settings.py, configure the request headers and the item pipelines

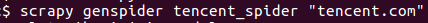
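The settings are only shown as a screenshot, so here is a rough sketch of what such a fragment of settings.py might look like. The pipeline path follows from the project and class names used above and DOWNLOAD_DELAY matches the value printed in the log below; the priority 300 and the header values are assumptions, not the author's actual configuration.

```python
# settings.py (fragment): illustrative values, see the assumptions above

# Matches 'DOWNLOAD_DELAY': 2 in the "Overridden settings" log line
DOWNLOAD_DELAY = 2

# Headers sent with every request; these particular values are assumed
DEFAULT_REQUEST_HEADERS = {
    "User-Agent": "Mozilla/5.0 (compatible; example)",
    "Accept": "text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8",
}

# Enables the pipeline from step 5; 300 is an assumed priority (0-1000)
ITEM_PIPELINES = {
    "tencent.pipelines.TencentPipeline": 300,
}
```

Without an ITEM_PIPELINES entry, Scrapy never imports pipelines.py, which is why the syntax error only shows up once the pipeline is enabled.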

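7. Run the spider from the command line (for this project, scrapy crawl tencent_spider)

The run failed with the following error, which I had not yet solved at this point...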

2017-10-03 20:03:20 [scrapy] INFO: Scrapy 1.1.1 started (bot: tencent)

2017-10-03 20:03:20 [scrapy] INFO: Overridden settings: {'NEWSPIDER_MODULE': 'tencent.spiders', 'SPIDER_MODULES': ['tencent.spiders'], 'DOWNLOAD_DELAY': 2, 'BOT_NAME': 'tencent'}

2017-10-03 20:03:20 [scrapy] INFO: Enabled extensions:

['scrapy.extensions.logstats.LogStats',

'scrapy.extensions.telnet.TelnetConsole',

'scrapy.extensions.corestats.CoreStats']

2017-10-03 20:03:20 [scrapy] INFO: Enabled downloader middlewares:

['scrapy.downloadermiddlewares.httpauth.HttpAuthMiddleware',

'scrapy.downloadermiddlewares.downloadtimeout.DownloadTimeoutMiddleware',

'scrapy.downloadermiddlewares.useragent.UserAgentMiddleware',

'scrapy.downloadermiddlewares.retry.RetryMiddleware',

'scrapy.downloadermiddlewares.defaultheaders.DefaultHeadersMiddleware',

'scrapy.downloadermiddlewares.redirect.MetaRefreshMiddleware',

'scrapy.downloadermiddlewares.httpcompression.HttpCompressionMiddleware',

'scrapy.downloadermiddlewares.redirect.RedirectMiddleware',

'scrapy.downloadermiddlewares.cookies.CookiesMiddleware',

'scrapy.downloadermiddlewares.chunked.ChunkedTransferMiddleware',

'scrapy.downloadermiddlewares.stats.DownloaderStats']

2017-10-03 20:03:20 [scrapy] INFO: Enabled spider middlewares:

['scrapy.spidermiddlewares.httperror.HttpErrorMiddleware',

'scrapy.spidermiddlewares.offsite.OffsiteMiddleware',

'scrapy.spidermiddlewares.referer.RefererMiddleware',

'scrapy.spidermiddlewares.urllength.UrlLengthMiddleware',

'scrapy.spidermiddlewares.depth.DepthMiddleware']

Unhandled error in Deferred:

2017-10-03 20:03:20 [twisted] CRITICAL: Unhandled error in Deferred: Traceback (most recent call last):

File "/usr/local/lib/python2.7/dist-packages/scrapy/commands/crawl.py", line 57, in run

self.crawler_process.crawl(spname, **opts.spargs)

File "/usr/local/lib/python2.7/dist-packages/scrapy/crawler.py", line 163, in crawl

return self._crawl(crawler, *args, **kwargs)

File "/usr/local/lib/python2.7/dist-packages/scrapy/crawler.py", line 167, in _crawl

d = crawler.crawl(*args, **kwargs)

File "/usr/local/lib/python2.7/dist-packages/twisted/internet/defer.py", line 1274, in unwindGenerator

return _inlineCallbacks(None, gen, Deferred())

--- <exception caught here> ---

File "/usr/local/lib/python2.7/dist-packages/twisted/internet/defer.py", line 1128, in _inlineCallbacks

result = g.send(result)

File "/usr/local/lib/python2.7/dist-packages/scrapy/crawler.py", line 90, in crawl

six.reraise(*exc_info)

File "/usr/local/lib/python2.7/dist-packages/scrapy/crawler.py", line 72, in crawl

self.engine = self._create_engine()

File "/usr/local/lib/python2.7/dist-packages/scrapy/crawler.py", line 97, in _create_engine

return ExecutionEngine(self, lambda _: self.stop())

File "/usr/local/lib/python2.7/dist-packages/scrapy/core/engine.py", line 69, in __init__

self.scraper = Scraper(crawler)

File "/usr/local/lib/python2.7/dist-packages/scrapy/core/scraper.py", line 71, in __init__

self.itemproc = itemproc_cls.from_crawler(crawler)

File "/usr/local/lib/python2.7/dist-packages/scrapy/middleware.py", line 58, in from_crawler

return cls.from_settings(crawler.settings, crawler)

File "/usr/local/lib/python2.7/dist-packages/scrapy/middleware.py", line 34, in from_settings

mwcls = load_object(clspath)

File "/usr/local/lib/python2.7/dist-packages/scrapy/utils/misc.py", line 44, in load_object

mod = import_module(module)

File "/usr/lib/python2.7/importlib/__init__.py", line 37, in import_module

__import__(name)

exceptions.SyntaxError: invalid syntax (pipelines.py, line 17)

2017-10-03 20:03:20 [twisted] CRITICAL:

Traceback (most recent call last):

File "/usr/local/lib/python2.7/dist-packages/twisted/internet/defer.py", line 1128, in _inlineCallbacks

result = g.send(result)

File "/usr/local/lib/python2.7/dist-packages/scrapy/crawler.py", line 90, in crawl

six.reraise(*exc_info)

File "/usr/local/lib/python2.7/dist-packages/scrapy/crawler.py", line 72, in crawl

self.engine = self._create_engine()

File "/usr/local/lib/python2.7/dist-packages/scrapy/crawler.py", line 97, in _create_engine

return ExecutionEngine(self, lambda _: self.stop())

File "/usr/local/lib/python2.7/dist-packages/scrapy/core/engine.py", line 69, in __init__

self.scraper = Scraper(crawler)

File "/usr/local/lib/python2.7/dist-packages/scrapy/core/scraper.py", line 71, in __init__

self.itemproc = itemproc_cls.from_crawler(crawler)

File "/usr/local/lib/python2.7/dist-packages/scrapy/middleware.py", line 58, in from_crawler

return cls.from_settings(crawler.settings, crawler)

File "/usr/local/lib/python2.7/dist-packages/scrapy/middleware.py", line 34, in from_settings

mwcls = load_object(clspath)

File "/usr/local/lib/python2.7/dist-packages/scrapy/utils/misc.py", line 44, in load_object

mod = import_module(module)

File "/usr/lib/python2.7/importlib/__init__.py", line 37, in import_module

__import__(name)

File "/home/python/Desktop/tencent/tencent/pipelines.py", line 17

return item

^

SyntaxError: invalid syntax

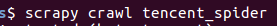

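It turned out I was simply careless: in the pipelines.py from step 5, the write(...) call was missing its closing parenthesis, so the parser only noticed the problem at the following return item statement (line 17 in the traceback). Thanks to sun shine for the help.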
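A mistake like this can be caught before starting a crawl by compiling the source on its own. The snippet below is a sketch with a shortened, made-up version of process_item rather than the real file; the helper name syntax_error_of is hypothetical.

```python
# Shortened stand-in for the faulty method: the write(...) call is never closed
BROKEN = (
    "def process_item(fh, text, item):\n"
    "    fh.write(text.encode('utf-8')\n"
    "    return item\n"
)
# Same code with the closing parenthesis added
FIXED = BROKEN.replace("encode('utf-8')", "encode('utf-8'))")


def syntax_error_of(source):
    """Return the SyntaxError raised when compiling source, or None."""
    try:
        compile(source, "pipelines.py", "exec")
    except SyntaxError as exc:
        return exc
    return None


print(syntax_error_of(BROKEN) is not None)
print(syntax_error_of(FIXED))
```

Depending on the Python version, the error is reported either on the line with the unclosed parenthesis or, as in the Python 2.7 traceback above, on the line that follows it.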

thank you !!!

