深度增强学习--A3C

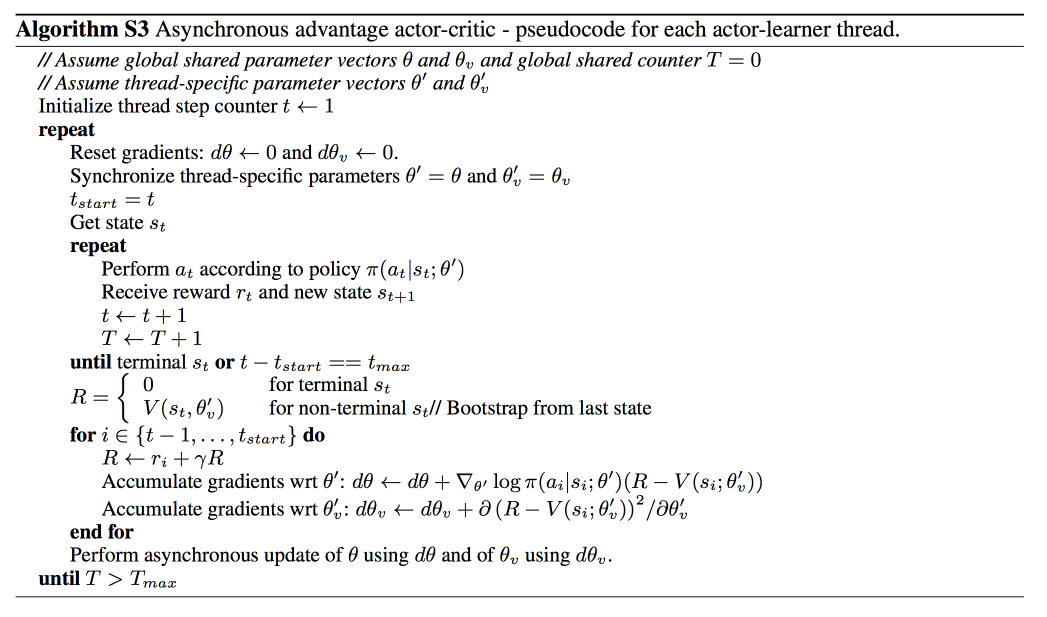

它会创建多个并行的环境, 让多个拥有副结构的 agent 同时在这些并行环境上更新主结构中的参数. 并行中的 agent 们互不干扰, 而主结构的参数更新受到副结构提交更新的不连续性干扰, 所以更新的相关性被降低, 收敛性提高

import threading

import numpy as np

import tensorflow as tf

import pylab

import time

import gym

from keras.layers import Dense, Input

from keras.models import Model

from keras.optimizers import Adam

from keras import backend as K # global variables for threading

episode = 0

scores = [] EPISODES = 2000 # This is A3C(Asynchronous Advantage Actor Critic) agent(global) for the Cartpole

# In this example, we use A3C algorithm

class A3CAgent:

def __init__(self, state_size, action_size, env_name):

# get size of state and action

self.state_size = state_size

self.action_size = action_size # get gym environment name

self.env_name = env_name # these are hyper parameters for the A3C

self.actor_lr = 0.001

self.critic_lr = 0.001

self.discount_factor = .99

self.hidden1, self.hidden2 = 24, 24

self.threads = 8 #8个线程并行 # create model for actor and critic network

self.actor, self.critic = self.build_model() # method for training actor and critic network

self.optimizer = [self.actor_optimizer(), self.critic_optimizer()] self.sess = tf.InteractiveSession()

K.set_session(self.sess)

self.sess.run(tf.global_variables_initializer()) # approximate policy and value using Neural Network

# actor -> state is input and probability of each action is output of network

# critic -> state is input and value of state is output of network

# actor and critic network share first hidden layer

def build_model(self):

state = Input(batch_shape=(None, self.state_size))

shared = Dense(self.hidden1, input_dim=self.state_size, activation='relu', kernel_initializer='glorot_uniform')(state) actor_hidden = Dense(self.hidden2, activation='relu', kernel_initializer='glorot_uniform')(shared)

action_prob = Dense(self.action_size, activation='softmax', kernel_initializer='glorot_uniform')(actor_hidden) value_hidden = Dense(self.hidden2, activation='relu', kernel_initializer='he_uniform')(shared)

state_value = Dense(1, activation='linear', kernel_initializer='he_uniform')(value_hidden) actor = Model(inputs=state, outputs=action_prob)

critic = Model(inputs=state, outputs=state_value) actor._make_predict_function()

critic._make_predict_function() actor.summary()

critic.summary() return actor, critic # make loss function for Policy Gradient

# [log(action probability) * advantages] will be input for the back prop

# we add entropy of action probability to loss

def actor_optimizer(self):

action = K.placeholder(shape=(None, self.action_size))

advantages = K.placeholder(shape=(None, )) policy = self.actor.output good_prob = K.sum(action * policy, axis=1)

eligibility = K.log(good_prob + 1e-10) * K.stop_gradient(advantages)

loss = -K.sum(eligibility) entropy = K.sum(policy * K.log(policy + 1e-10), axis=1) actor_loss = loss + 0.01*entropy optimizer = Adam(lr=self.actor_lr)

updates = optimizer.get_updates(self.actor.trainable_weights, [], actor_loss)

train = K.function([self.actor.input, action, advantages], [], updates=updates)

return train # make loss function for Value approximation

def critic_optimizer(self):

discounted_reward = K.placeholder(shape=(None, )) value = self.critic.output loss = K.mean(K.square(discounted_reward - value)) optimizer = Adam(lr=self.critic_lr)

updates = optimizer.get_updates(self.critic.trainable_weights, [], loss)

train = K.function([self.critic.input, discounted_reward], [], updates=updates)

return train # make agents(local) and start training

def train(self):

# self.load_model('./save_model/cartpole_a3c.h5')

agents = [Agent(i, self.actor, self.critic, self.optimizer, self.env_name, self.discount_factor,

self.action_size, self.state_size) for i in range(self.threads)]#建立8个local agent for agent in agents:

agent.start() while True:

time.sleep(20) plot = scores[:]

pylab.plot(range(len(plot)), plot, 'b')

pylab.savefig("./save_graph/cartpole_a3c.png") self.save_model('./save_model/cartpole_a3c.h5') def save_model(self, name):

self.actor.save_weights(name + "_actor.h5")

self.critic.save_weights(name + "_critic.h5") def load_model(self, name):

self.actor.load_weights(name + "_actor.h5")

self.critic.load_weights(name + "_critic.h5") # This is Agent(local) class for threading

class Agent(threading.Thread):

def __init__(self, index, actor, critic, optimizer, env_name, discount_factor, action_size, state_size):

threading.Thread.__init__(self) self.states = []

self.rewards = []

self.actions = [] self.index = index

self.actor = actor

self.critic = critic

self.optimizer = optimizer

self.env_name = env_name

self.discount_factor = discount_factor

self.action_size = action_size

self.state_size = state_size # Thread interactive with environment

def run(self):

global episode

env = gym.make(self.env_name)

while episode < EPISODES:

state = env.reset()

score = 0

while True:

action = self.get_action(state)

next_state, reward, done, _ = env.step(action)

score += reward self.memory(state, action, reward) state = next_state if done:

episode += 1

print("episode: ", episode, "/ score : ", score)

scores.append(score)

self.train_episode(score != 500)

break # In Policy Gradient, Q function is not available.

# Instead agent uses sample returns for evaluating policy

def discount_rewards(self, rewards, done=True):

discounted_rewards = np.zeros_like(rewards)

running_add = 0

if not done:

running_add = self.critic.predict(np.reshape(self.states[-1], (1, self.state_size)))[0]

for t in reversed(range(0, len(rewards))):

running_add = running_add * self.discount_factor + rewards[t]

discounted_rewards[t] = running_add

return discounted_rewards # save <s, a ,r> of each step

# this is used for calculating discounted rewards

def memory(self, state, action, reward):

self.states.append(state)

act = np.zeros(self.action_size)

act[action] = 1

self.actions.append(act)

self.rewards.append(reward) # update policy network and value network every episode

def train_episode(self, done):

discounted_rewards = self.discount_rewards(self.rewards, done) values = self.critic.predict(np.array(self.states))

values = np.reshape(values, len(values)) advantages = discounted_rewards - values self.optimizer[0]([self.states, self.actions, advantages])

self.optimizer[1]([self.states, discounted_rewards])

self.states, self.actions, self.rewards = [], [], [] def get_action(self, state):

policy = self.actor.predict(np.reshape(state, [1, self.state_size]))[0]

return np.random.choice(self.action_size, 1, p=policy)[0] if __name__ == "__main__":

env_name = 'CartPole-v1'

env = gym.make(env_name) state_size = env.observation_space.shape[0]

action_size = env.action_space.n env.close() global_agent = A3CAgent(state_size, action_size, env_name)

global_agent.train()

深度增强学习--A3C的更多相关文章

- 深度增强学习--DPPO

PPO DPPO介绍 PPO实现 代码DPPO

- 深度增强学习--DDPG

DDPG DDPG介绍2 ddpg输出的不是行为的概率, 而是具体的行为, 用于连续动作 (continuous action) 的预测 公式推导 推导 代码实现的gym的pendulum游戏,这个游 ...

- 深度增强学习--DQN的变形

DQN的变形 double DQN prioritised replay dueling DQN

- 深度增强学习--Actor Critic

Actor Critic value-based和policy-based的结合 实例代码 import sys import gym import pylab import numpy as np ...

- 深度增强学习--Policy Gradient

前面都是value based的方法,现在看一种直接预测动作的方法 Policy Based Policy Gradient 一个介绍 karpathy的博客 一个推导 下面的例子实现的REINFOR ...

- 深度增强学习--Deep Q Network

从这里开始换个游戏演示,cartpole游戏 Deep Q Network 实例代码 import sys import gym import pylab import random import n ...

- 常用增强学习实验环境 II (ViZDoom, Roboschool, TensorFlow Agents, ELF, Coach等) (转载)

原文链接:http://blog.csdn.net/jinzhuojun/article/details/78508203 前段时间Nature上发表的升级版Alpha Go - AlphaGo Ze ...

- 马里奥AI实现方式探索 ——神经网络+增强学习

[TOC] 马里奥AI实现方式探索 --神经网络+增强学习 儿时我们都曾有过一个经典游戏的体验,就是马里奥(顶蘑菇^v^),这次里约奥运会闭幕式,日本作为2020年东京奥运会的东道主,安倍最后也已经典 ...

- 增强学习 | AlphaGo背后的秘密

"敢于尝试,才有突破" 2017年5月27日,当今世界排名第一的中国棋手柯洁与AlphaGo 2.0的三局对战落败.该事件标志着最新的人工智能技术在围棋竞技领域超越了人类智能,借此 ...

随机推荐

- Python+Selenium 自动化实现实例-打开浏览器模拟进行搜索数据并验证

#导入模块 from selenium import webdriverfrom selenium.webdriver.common.keys import Keys #启动火狐浏览器driver = ...

- jenkins上展示html报告【转载】

转至博客:上海-悠悠 前言 在jenkins上展示html的报告,需要添加一个HTML Publisher plugin插件,把生成的html报告放到指定文件夹,这样就能用jenkins去读出指定文件 ...

- hdu 1671(字典树判断前缀)

Phone List Time Limit: 3000/1000 MS (Java/Others) Memory Limit: 32768/32768 K (Java/Others)Total ...

- centos7系统安装后的基础优化

1.更改网卡信息 1.编辑网卡 # cd /etc/sysconfig/network-scripts/ # mv ifcfg-ens33 ifcfg-eth0 # mv ifcfg-ens37 if ...

- MS SQL Server迁移至Azure SQL

SQL Server的数据目前是存在于公司服务器的,现时需要将它迁移至Azure SQL 迁移分两种 数据库结构复制 数据库结构复制与数据迁移至Azure SQL 第1种方法针对的是将现有数据库创建新 ...

- C语言数据类型64位和32机器的区别

C语言编程需要注意的64位和32机器的区别 .数据类型特别是int相关的类型在不同位数机器的平台下长度不同.C99标准并不规定具体数据类型的长度大小,只规定级别.作下比较: 32位平台 char:1字 ...

- Loj#6434「PKUSC2018」主斗地(搜索)

题面 Loj 题解 细节比较多的搜索题. 首先现将牌型暴力枚举出来,大概是\(3^{16}\)吧. 然后再看能打什么,简化后无非就三种决策:单牌,\(3+x\)和\(4+x\). 枚举网友打了几张\( ...

- Luogu P2590 树的统计(树链剖分+线段树)

题意 原文很清楚了 题解 重链剖分模板题,用线段树维护即可. #include <cstdio> #include <cstring> #include <algorit ...

- 34、Flask实战第34天:修改邮箱

修改邮箱页面布局 新建cms/cms_resetemail.html {% extends 'cms/cms_base.html' %} {% block title %}修改邮箱-CMS管理系统{% ...

- 安卓 内存 泄漏 工具 LeakCanary 使用

韩梦飞沙 yue31313 韩亚飞 han_meng_fei_sha 313134555@qq.com LeakCanary是Square开源了一个内存泄露自动探测神器 .这是项目的github仓库地 ...