Coursera, Deep Learning 2, Improving Deep Neural Networks: Hyperparameter tuning, Regularization and Optimization - week1, Course

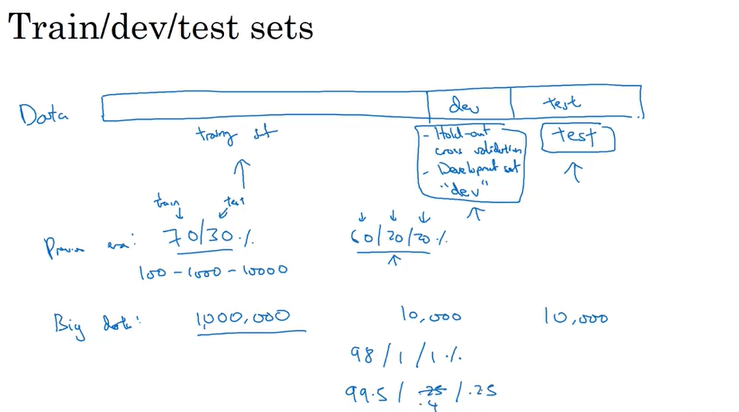

Train/Dev/Test set

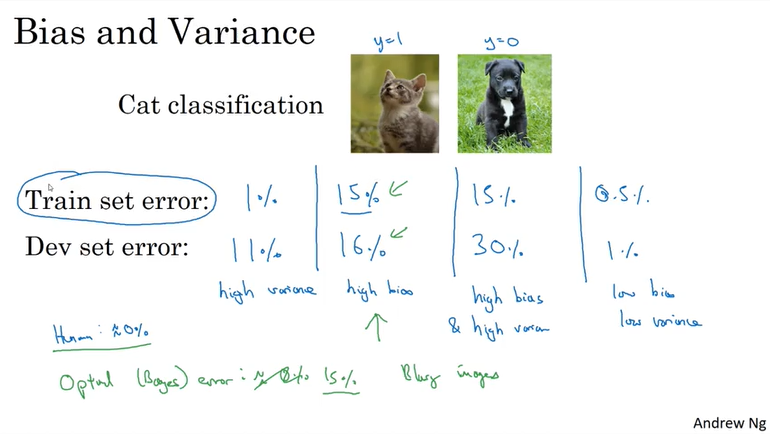

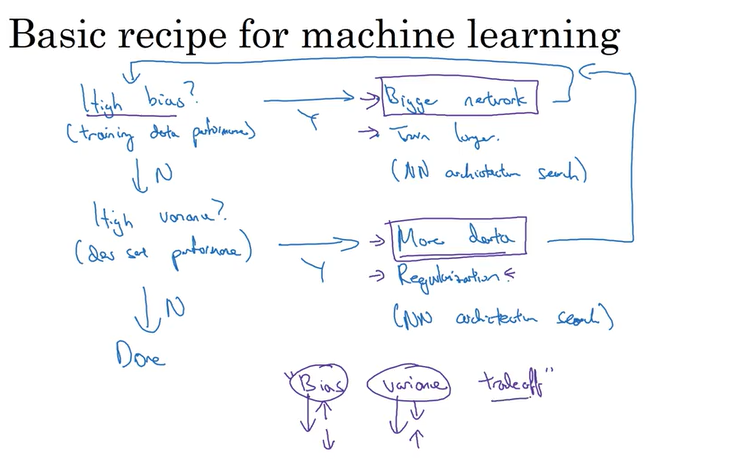

Bias/Variance

Regularization

- L2 regularization

- dropout

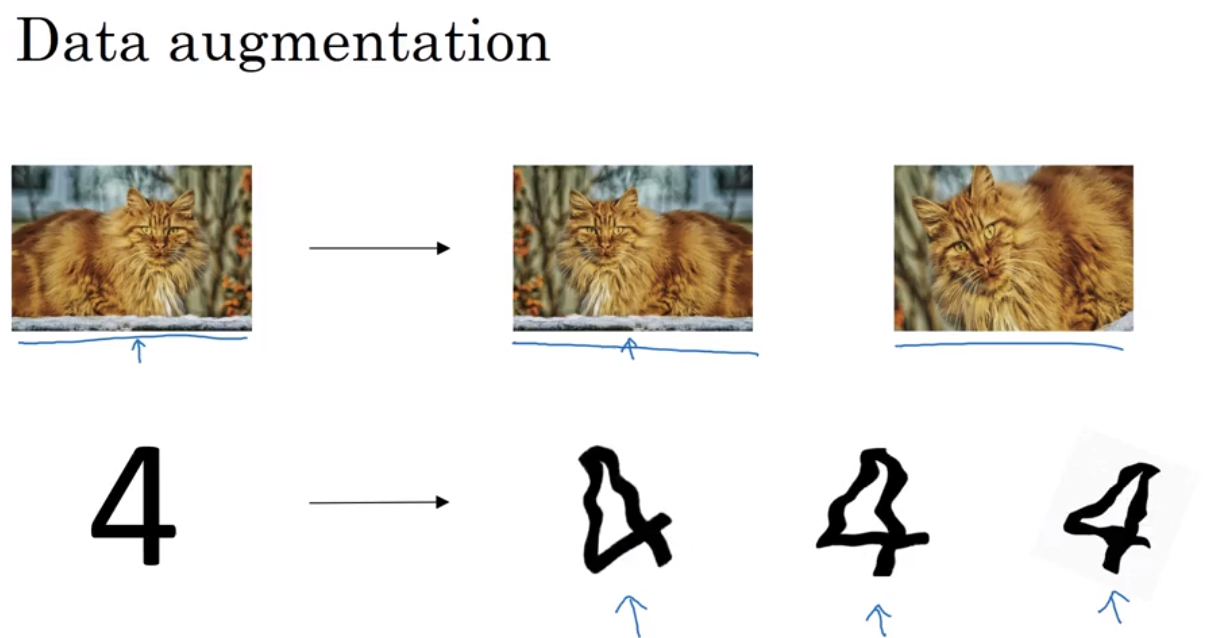

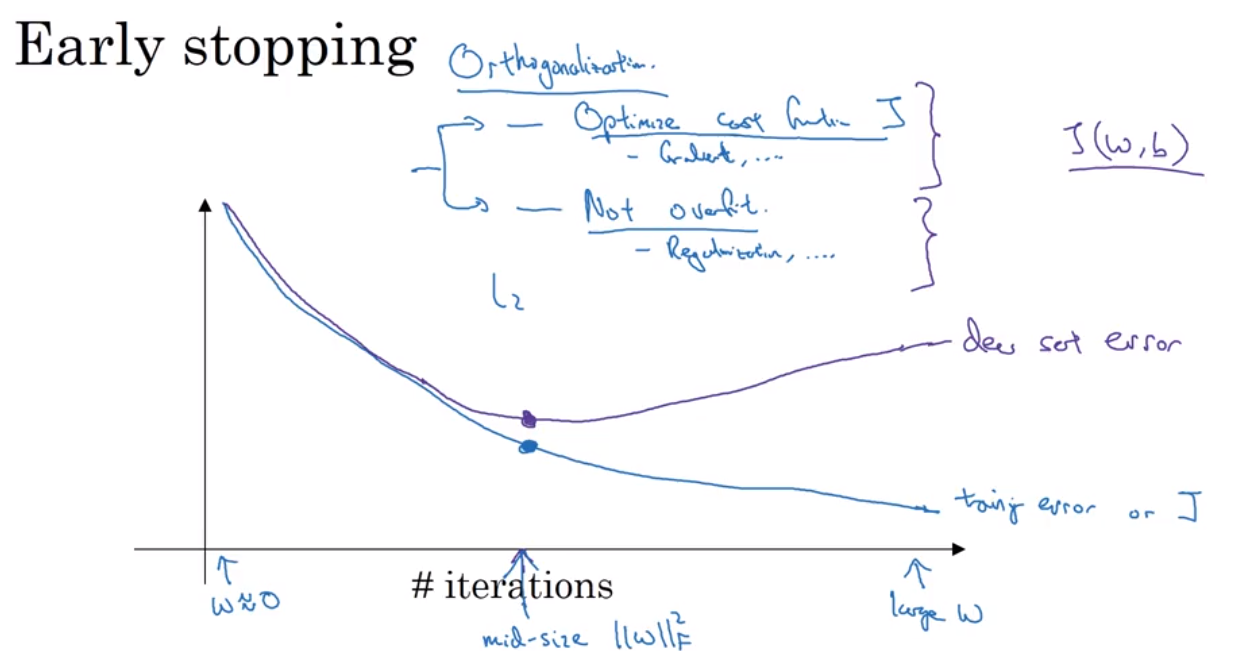

- data augmentation (e.g. flip an image to get a new example), early stopping (plot J_train and J_dev against the iteration count)

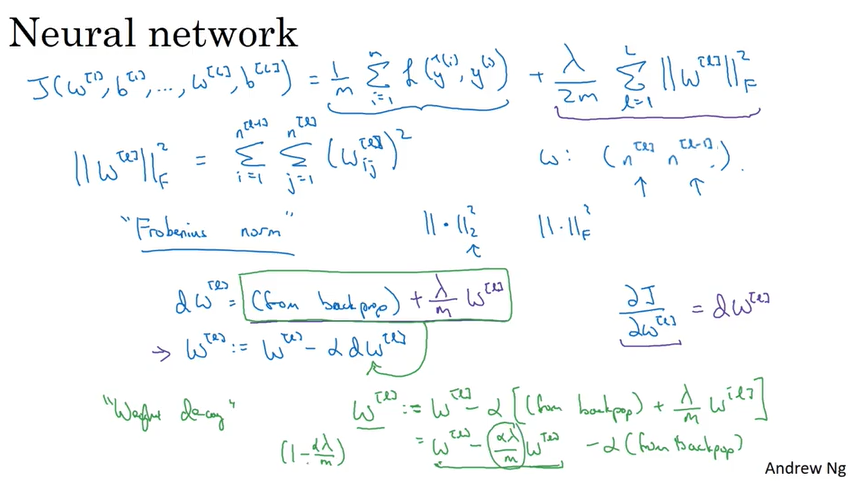

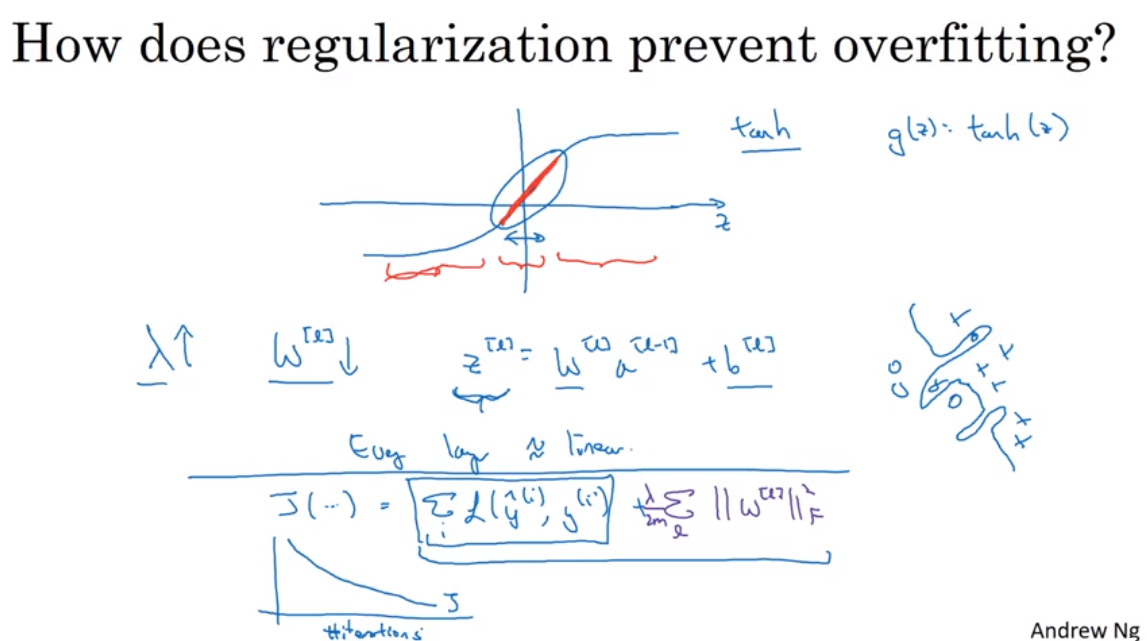

L2 regularization:

Frobenius norm.

The slide above introduces the concept of weight decay.

Weight Decay: A regularization technique (such as L2 regularization) that results in gradient descent shrinking the weights on every iteration.
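To see why L2 regularization is called weight decay, here is a minimal NumPy sketch. It assumes the usual penalty `(lambd / (2 * m)) * ||W||_F^2`, so the regularized gradient gains a `(lambd / m) * W` term. The function names `l2_update` and `weight_decay_update` are made up for this note; they are not from the course code.

```python
import numpy as np

def l2_update(W, dW_data, lr, lambd, m):
    """One gradient-descent step with an L2 (Frobenius norm) penalty.

    dW_data is the gradient of the unregularized cost; the penalty
    (lambd / (2 * m)) * ||W||_F^2 adds (lambd / m) * W to it.
    """
    dW = dW_data + (lambd / m) * W
    return W - lr * dW

def weight_decay_update(W, dW_data, lr, lambd, m):
    """The same step, rearranged so the shrink factor on W is explicit."""
    return (1 - lr * lambd / m) * W - lr * dW_data

rng = np.random.default_rng(0)
W = rng.standard_normal((3, 4))
dW_data = rng.standard_normal((3, 4))

a = l2_update(W, dW_data, lr=0.1, lambd=0.7, m=50)
b = weight_decay_update(W, dW_data, lr=0.1, lambd=0.7, m=50)
same_step = np.allclose(a, b)  # both forms give the same new weights
```

The factor `(1 - lr * lambd / m)` is slightly below 1, so W is multiplied by a number a bit smaller than 1 on every iteration, before the usual gradient step is applied.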

Why does regularization work (intuition)?

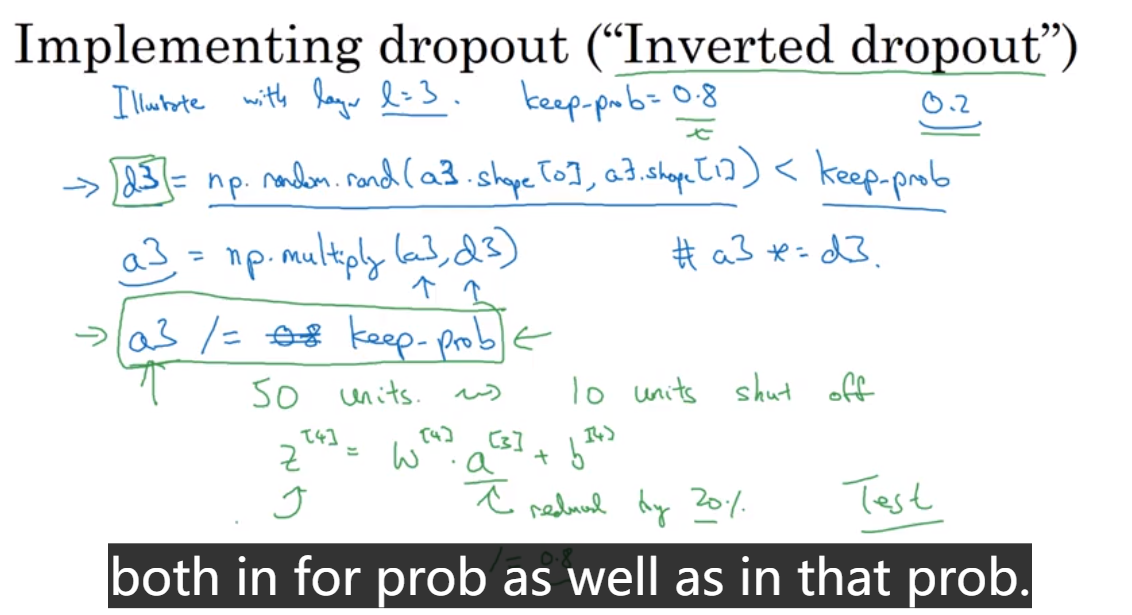

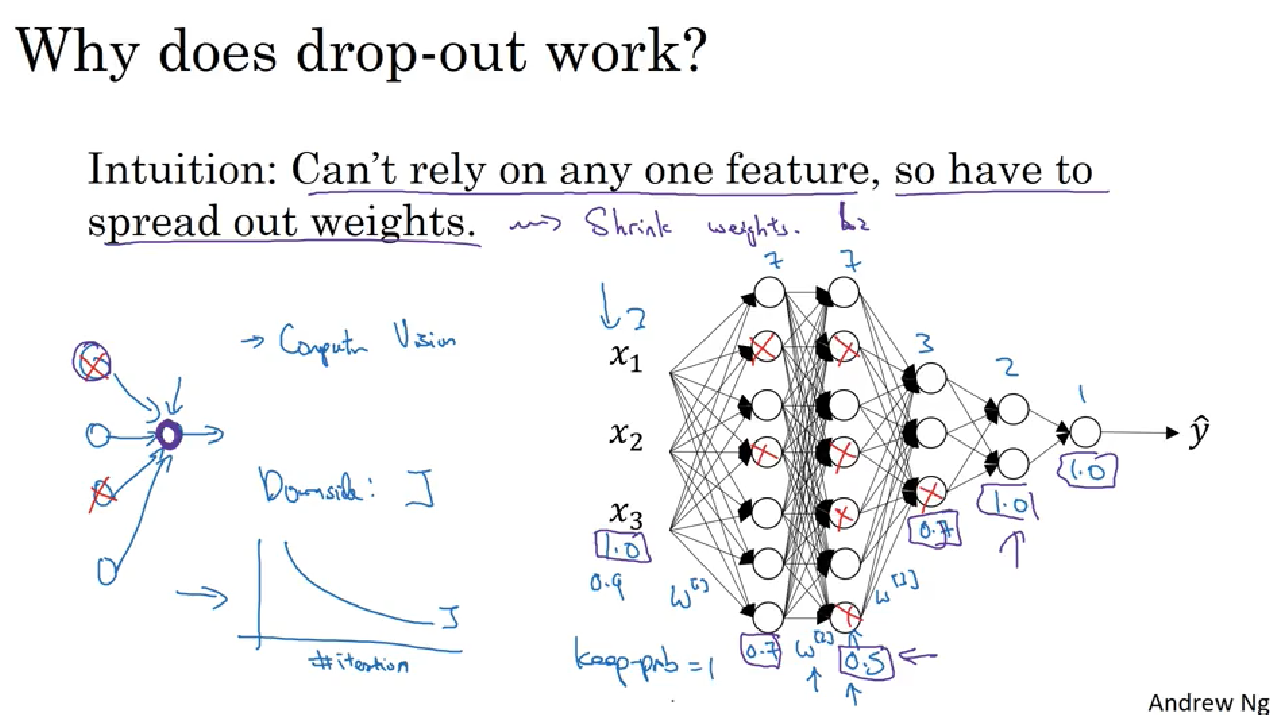

Dropout regularization:

The slide below only shows dropout being used in forward propagation; back propagation needs to apply dropout as well.

When making predictions on the test set, do not use dropout.
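A rough sketch of inverted dropout for one layer, assuming activations `A` of shape (units, examples) and a keep probability `keep_prob`. The helper names `dropout_forward` and `dropout_backward` are hypothetical. The same mask `D` is reused in back propagation, and nothing is dropped at test time.

```python
import numpy as np

def dropout_forward(A, keep_prob, rng):
    """Zero each activation with probability 1 - keep_prob, then rescale."""
    D = rng.random(A.shape) < keep_prob   # boolean mask, True = keep
    A_drop = A * D / keep_prob            # dividing keeps the expected value of A
    return A_drop, D

def dropout_backward(dA, D, keep_prob):
    """Apply the same mask and the same scaling to the gradient."""
    return dA * D / keep_prob

rng = np.random.default_rng(1)
A = np.ones((4, 1000))
A_drop, D = dropout_forward(A, keep_prob=0.8, rng=rng)
dA = dropout_backward(np.ones_like(A), D, keep_prob=0.8)

# At test time, skip both functions and use A unchanged.
```

Because of the division by `keep_prob`, the expected activation is the same with and without dropout, which is why no extra scaling is needed when predicting on the test set.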

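Early stopping: its drawback is that it violates the orthogonalization principle (each concern is handled separately so that tuning one does not affect the other), because early stopping couples two tasks, optimizing the cost function J and not overfitting, instead of solving them separately. L2 regularization is generally recommended instead; its drawback is that you have to try many values of λ, which costs more training iterations overall.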
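As an illustration of early stopping, the sketch below picks the iteration with the lowest dev cost and gives up once the dev cost has failed to improve for a few checks in a row. The `patience` parameter and the function name `early_stopping_index` are assumptions made for this note, and the cost values are made-up numbers.

```python
def early_stopping_index(dev_costs, patience=3):
    """Return the index of the best dev cost seen before patience ran out."""
    best_idx, best_cost = 0, float("inf")
    for i, cost in enumerate(dev_costs):
        if cost < best_cost:
            best_idx, best_cost = i, cost      # new best: remember it
        elif i - best_idx >= patience:
            break                              # no improvement for `patience` checks
    return best_idx

# Made-up J_dev values: falling at first, then rising as the model overfits.
dev_costs = [0.90, 0.70, 0.55, 0.50, 0.52, 0.56, 0.61, 0.40]
stop_at = early_stopping_index(dev_costs, patience=3)
```

Here the loop stops before it ever sees the last value, so the weights from index 3 are the ones that would be kept.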

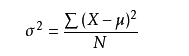

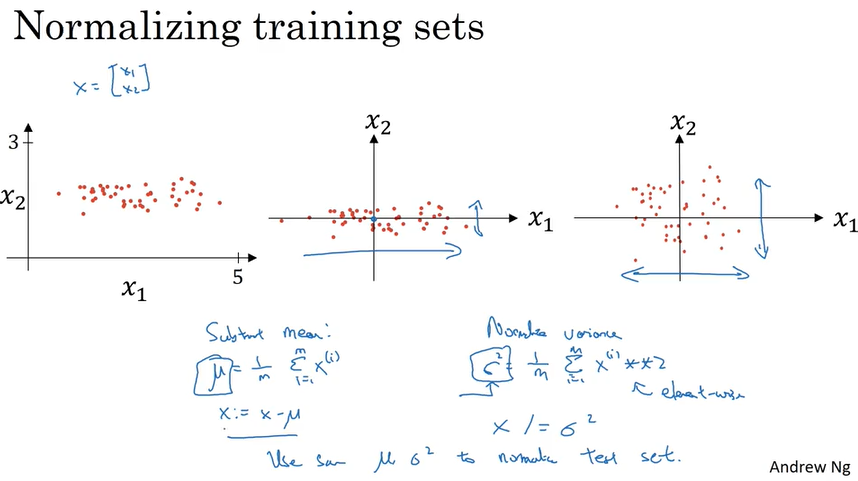

Normalizing input

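Normalize the input x to zero mean and unit variance: subtract the mean, then divide by the standard deviation. The formulas are as follows.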

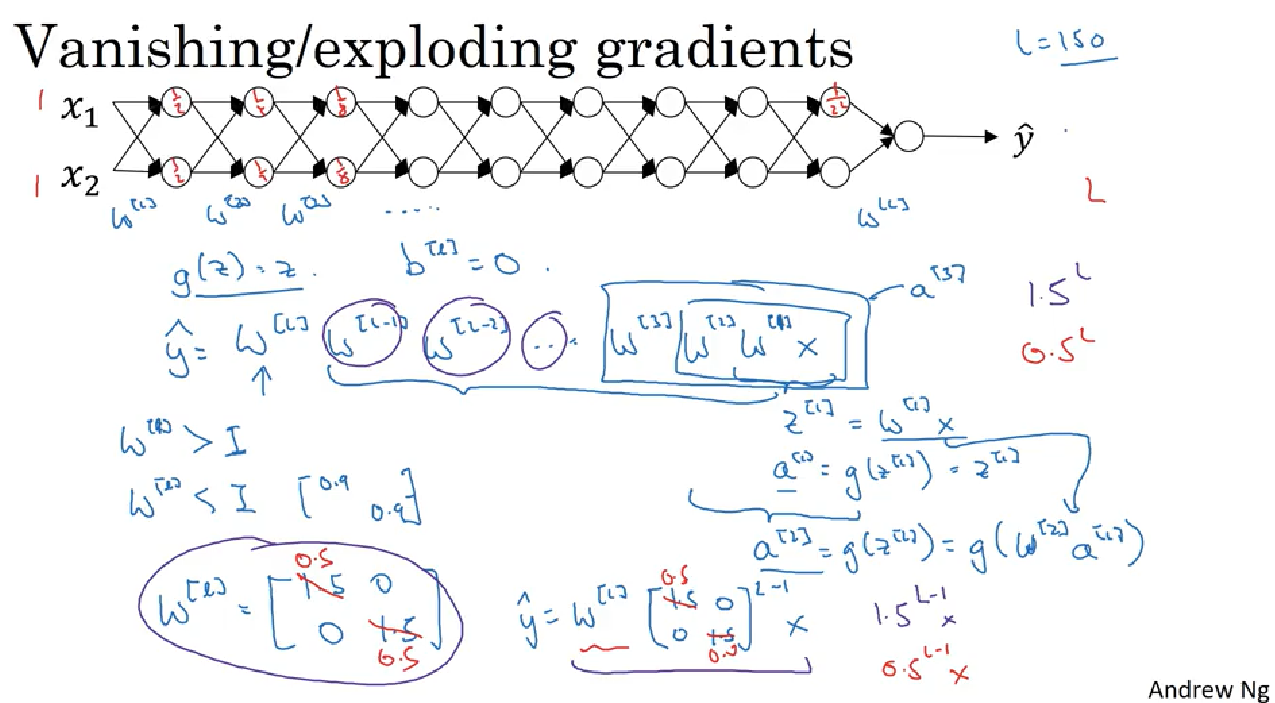
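A minimal NumPy sketch of input normalization, assuming `X` has shape (features, examples). The names `fit_normalizer` and `normalize` and the small `eps` guard against division by zero are additions for this note. The mean and standard deviation computed on the training set are reused for the dev and test sets.

```python
import numpy as np

def fit_normalizer(X_train, eps=1e-8):
    """Compute the per-feature mean and standard deviation on the training set."""
    mu = X_train.mean(axis=1, keepdims=True)
    sigma = X_train.std(axis=1, keepdims=True) + eps  # eps avoids dividing by zero
    return mu, sigma

def normalize(X, mu, sigma):
    """Shift to zero mean and scale to unit variance, feature by feature."""
    return (X - mu) / sigma

rng = np.random.default_rng(2)
X_train = rng.normal(loc=5.0, scale=3.0, size=(2, 500))
X_test = rng.normal(loc=5.0, scale=3.0, size=(2, 100))

mu, sigma = fit_normalizer(X_train)
X_train_n = normalize(X_train, mu, sigma)
X_test_n = normalize(X_test, mu, sigma)   # same mu and sigma as for training
```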

Vanishing/Exploding gradients

Deep neural networks suffer from vanishing and exploding gradients, which are a major barrier to training them.

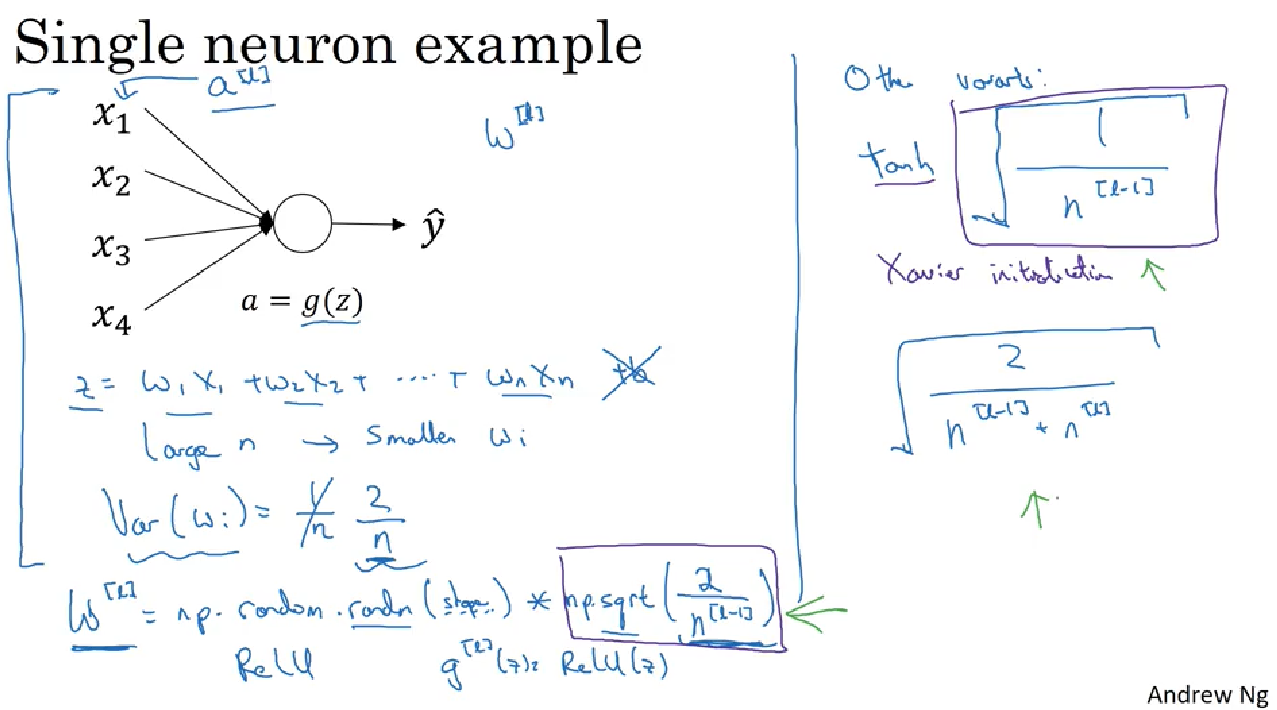

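There is a partial solution to the above problem that helps a lot: a careful choice of how you initialize the weights. The main goal is to keep each weight W[l] from being much larger or much smaller than 1, so that when the W terms are multiplied together across many layers (which grows or shrinks exponentially with depth), the vanishing and exploding problems are greatly reduced.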

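With ReLU activation, use the formula in the blue box at the lower middle of the slide (He initialization); with tanh activation, use the formula in the blue box on the right (Xavier initialization). Some people also use the second formula on the right for tanh.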
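The three variants can be sketched in NumPy as below. This assumes the commonly cited variances: 2 / n_in for He, 1 / n_in for Xavier, and 2 / (n_in + n_out) for the alternative tanh formula, where n_in and n_out are the sizes of the previous and current layers. The function name `init_weights` and the `method` strings are made up for illustration.

```python
import numpy as np

def init_weights(n_in, n_out, method, rng):
    """Draw W[l] of shape (n_out, n_in) with the variance chosen by `method`."""
    if method == "he":            # commonly paired with ReLU
        var = 2.0 / n_in
    elif method == "xavier":      # commonly paired with tanh
        var = 1.0 / n_in
    elif method == "xavier_avg":  # alternative tanh formula (assumed variant)
        var = 2.0 / (n_in + n_out)
    else:
        raise ValueError(f"unknown method: {method}")
    return rng.standard_normal((n_out, n_in)) * np.sqrt(var)

rng = np.random.default_rng(3)
W_he = init_weights(500, 400, "he", rng)
W_xavier = init_weights(500, 400, "xavier", rng)
W_avg = init_weights(500, 400, "xavier_avg", rng)
```

Scaling by the layer width keeps the variance of z = Wx roughly constant from layer to layer, so activations neither blow up nor die out as quickly in a deep network.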

Weight Initialization for Deep Networks

Xavier initialization

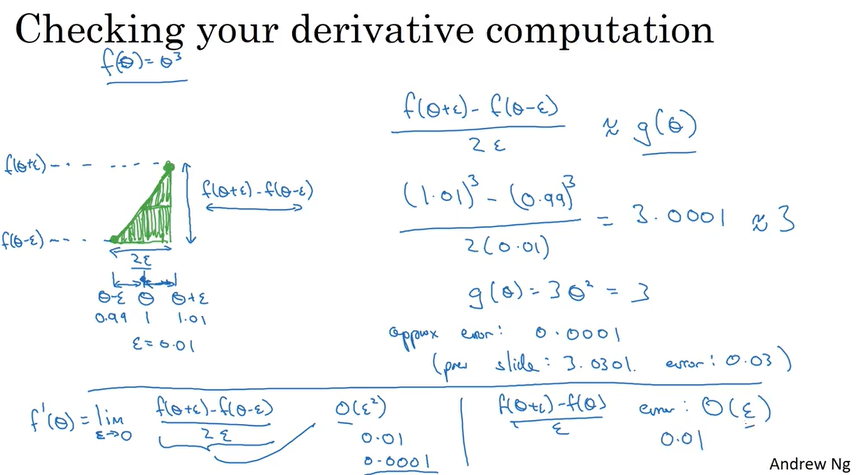

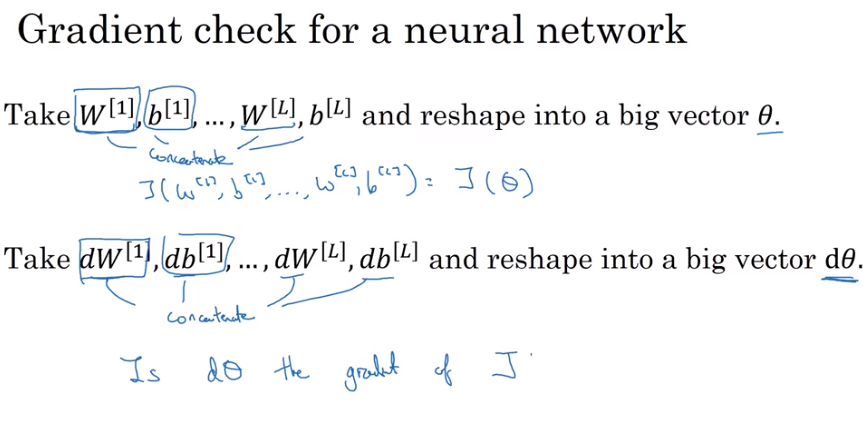

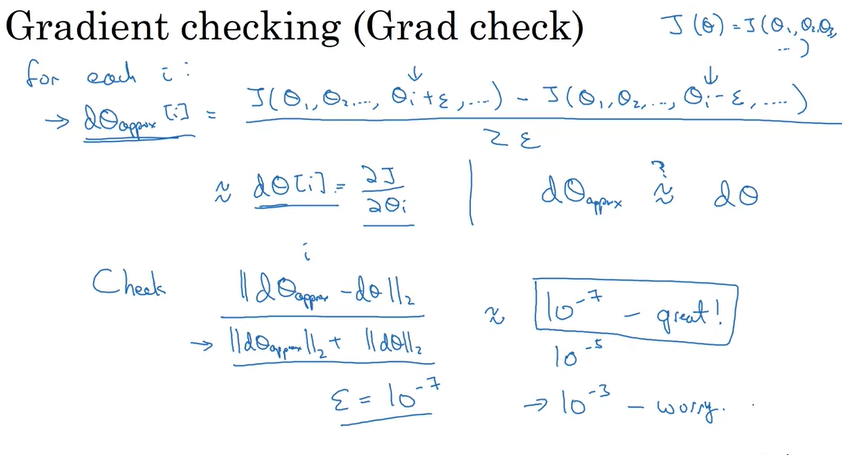

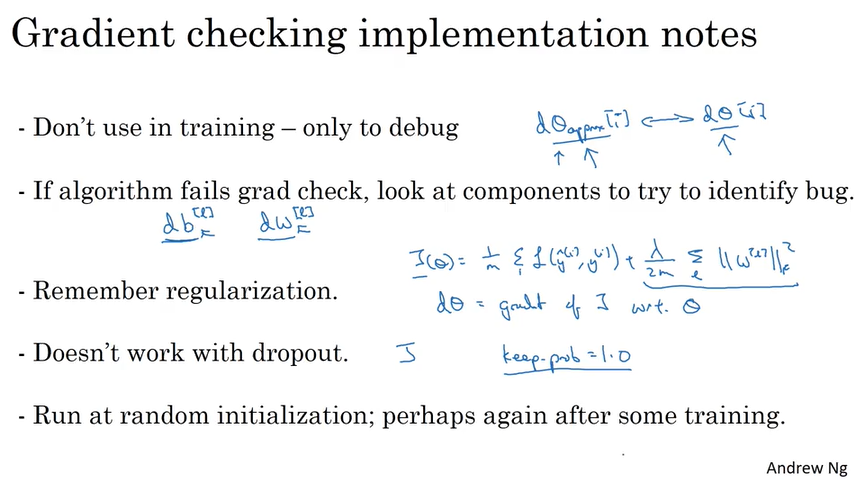

Gradient Checking
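A small sketch of the idea, assuming the parameters are flattened into a 1-D vector `theta`: compare the analytic gradient with a two-sided finite-difference estimate and look at the relative difference. The function `gradient_check` and the toy cost `f` are hypothetical examples, not the assignment code.

```python
import numpy as np

def gradient_check(f, grad_f, theta, eps=1e-7):
    """Relative difference between grad_f(theta) and a centered-difference estimate."""
    approx = np.zeros_like(theta)
    for i in range(theta.size):
        plus, minus = theta.copy(), theta.copy()
        plus[i] += eps
        minus[i] -= eps
        approx[i] = (f(plus) - f(minus)) / (2 * eps)  # two-sided difference
    analytic = grad_f(theta)
    num = np.linalg.norm(approx - analytic)
    den = np.linalg.norm(approx) + np.linalg.norm(analytic)
    return num / den

def f(t):
    return np.sum(t ** 3)       # toy cost

def grad(t):
    return 3 * t ** 2           # correct gradient of f

def bad_grad(t):
    return 2 * t ** 2           # deliberately wrong gradient

theta = np.array([1.0, -2.0, 0.5])
diff_ok = gradient_check(f, grad, theta)
diff_bad = gradient_check(f, bad_grad, theta)
```

A tiny relative difference suggests the backprop gradient is correct, while a large one such as `diff_bad` points to a bug. The check is slow, so it is meant for debugging only, not for every training iteration.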

Ref:

1. Coursera
