Kubernetes Cluster Deployment (yum Installation)

Environment Preparation

Kubernetes-Master: 192.168.37.134 # yum install kubernetes-master etcd flannel -y

Kubernetes-node1: 192.168.37.135 # yum install kubernetes-node etcd docker flannel *rhsm* -y

Kubernetes-node2: 192.168.37.136 # yum install kubernetes-node etcd docker flannel *rhsm* -y

OS version: CentOS 7.5

Disable the firewalld firewall and make sure NTP time synchronization works on every node.

【K8s-master etcd configuration】

[root@Kubernetes-master ~]# egrep -v "#|^$" /etc/etcd/etcd.conf

ETCD_DATA_DIR="/data/etcd1"

ETCD_LISTEN_PEER_URLS="http://192.168.37.134:2380"

ETCD_LISTEN_CLIENT_URLS="http://192.168.37.134:2379,http://127.0.0.1:2379"

ETCD_MAX_SNAPSHOTS=""

ETCD_NAME="etcd1"

ETCD_INITIAL_ADVERTISE_PEER_URLS="http://192.168.37.134:2380"

ETCD_ADVERTISE_CLIENT_URLS="http://192.168.37.134:2379"

ETCD_INITIAL_CLUSTER="etcd1=http://192.168.37.134:2380,etcd2=http://192.168.37.135:2380,etcd3=http://192.168.37.136:2380"

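Configuration parameters explained:

ETCD_DATA_DIR: directory where this etcd node stores its data

ETCD_NAME: name of this etcd node; it must match the node's key in ETCD_INITIAL_CLUSTER

ETCD_LISTEN_PEER_URLS: addresses this node listens on for traffic from the other etcd nodes (port 2380)

ETCD_LISTEN_CLIENT_URLS: list of addresses this node listens on for client traffic (port 2379)

ETCD_INITIAL_ADVERTISE_PEER_URLS: peer address and port this node advertises to the other members of the cluster

ETCD_ADVERTISE_CLIENT_URLS: client address and port (2379) this node advertises to clients and to the rest of the cluster

ETCD_INITIAL_CLUSTER: peer addresses (port 2380) of all members of the etcd cluster, used to bootstrap the cluster so that the members can replicate data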
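The etcd.conf files on the three nodes differ only in node name, IP address, and data directory, while ETCD_INITIAL_CLUSTER is identical everywhere. As a minimal sketch, the file could be generated instead of edited by hand. `render_etcd_conf` is a hypothetical helper name introduced here for illustration; it is not shipped with etcd.

```shell
# Hypothetical helper (not part of etcd): print an etcd.conf for one member.
# Arguments: <name> <ip> <data_dir> <initial_cluster>
render_etcd_conf() {
  cat <<EOF
ETCD_DATA_DIR="$3"
ETCD_LISTEN_PEER_URLS="http://$2:2380"
ETCD_LISTEN_CLIENT_URLS="http://$2:2379,http://127.0.0.1:2379"
ETCD_NAME="$1"
ETCD_INITIAL_ADVERTISE_PEER_URLS="http://$2:2380"
ETCD_ADVERTISE_CLIENT_URLS="http://$2:2379"
ETCD_INITIAL_CLUSTER="$4"
EOF
}

# The member list is the same on every node.
CLUSTER="etcd1=http://192.168.37.134:2380,etcd2=http://192.168.37.135:2380,etcd3=http://192.168.37.136:2380"

# Print the configuration for the second member to stdout for review.
render_etcd_conf etcd2 192.168.37.135 /data/etcd2 "$CLUSTER"
```

After reviewing the output, it can be redirected to /etc/etcd/etcd.conf on the matching node.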

[root@Kubernetes-master ~]# mkdir -p /data/etcd1/

[root@Kubernetes-master ~]# chmod 757 -R /data/etcd1/

【K8s-node1 etcd configuration】

[root@kubernetes-node1 ~]# egrep -v "#|^$" /etc/etcd/etcd.conf

ETCD_DATA_DIR="/data/etcd2"

ETCD_LISTEN_PEER_URLS="http://192.168.37.135:2380"

ETCD_LISTEN_CLIENT_URLS="http://192.168.37.135:2379,http://127.0.0.1:2379"

ETCD_MAX_SNAPSHOTS=""

ETCD_NAME="etcd2"

ETCD_INITIAL_ADVERTISE_PEER_URLS="http://192.168.37.135:2380"

ETCD_ADVERTISE_CLIENT_URLS="http://192.168.37.135:2379"

ETCD_INITIAL_CLUSTER="etcd1=http://192.168.37.134:2380,etcd2=http://192.168.37.135:2380,etcd3=http://192.168.37.136:2380"

[root@kubernetes-node1 ~]# mkdir -p /data/etcd2/

[root@kubernetes-node1 ~]# chmod 757 -R /data/etcd2/

【K8s-node2 etcd configuration】

[root@kubernetes-node2 ~]# egrep -v "#|^$" /etc/etcd/etcd.conf

ETCD_DATA_DIR="/data/etcd3"

ETCD_LISTEN_PEER_URLS="http://192.168.37.136:2380"

ETCD_LISTEN_CLIENT_URLS="http://192.168.37.136:2379,http://127.0.0.1:2379"

ETCD_MAX_SNAPSHOTS=""

ETCD_NAME="etcd3"

ETCD_INITIAL_ADVERTISE_PEER_URLS="http://192.168.37.136:2380"

ETCD_ADVERTISE_CLIENT_URLS="http://192.168.37.136:2379"

ETCD_INITIAL_CLUSTER="etcd1=http://192.168.37.134:2380,etcd2=http://192.168.37.135:2380,etcd3=http://192.168.37.136:2380"

[root@kubernetes-node2 ~]# mkdir -p /data/etcd3/

[root@kubernetes-node2 ~]# chmod 757 -R /data/etcd3/

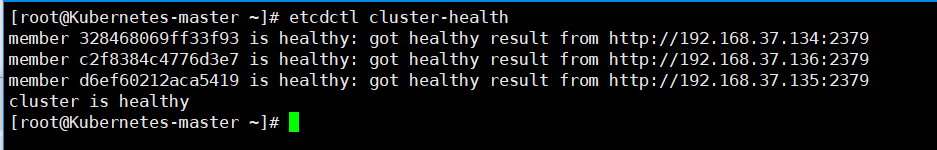

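The etcd cluster is now configured. Next, start etcd and verify that the cluster is healthy.

[root@Kubernetes-master ~]# systemctl start etcd.service # Note: start the etcd service on all three nodes and enable it at boot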

[root@Kubernetes-master ~]# systemctl enable etcd.service

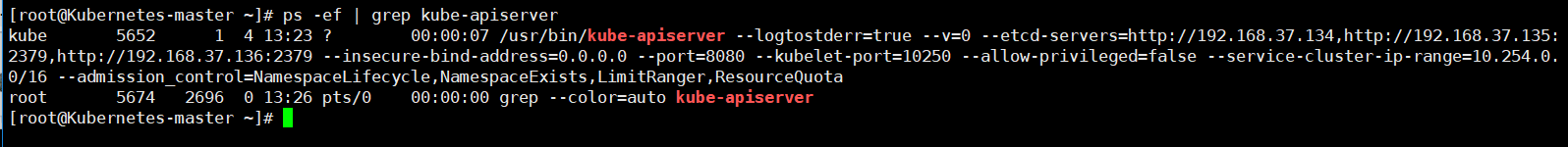

【K8s-master node: API server and config file configuration】

[root@Kubernetes-master ~]# egrep -v "#|^$" /etc/kubernetes/apiserver

KUBE_API_ADDRESS="--insecure-bind-address=0.0.0.0"

KUBE_API_PORT="--port=8080"

KUBELET_PORT="--kubelet-port=10250"

KUBE_ETCD_SERVERS="--etcd-servers=http://192.168.37.134:2379,http://192.168.37.135:2379,http://192.168.37.136:2379"

KUBE_SERVICE_ADDRESSES="--service-cluster-ip-range=10.254.0.0/16"

KUBE_ADMISSION_CONTROL="--admission-control=NamespaceLifecycle,NamespaceExists,LimitRanger,ResourceQuota"

KUBE_API_ARGS=""

[root@Kubernetes-master ~]# systemctl start kube-apiserver

[root@Kubernetes-master ~]# systemctl enable kube-apiserver

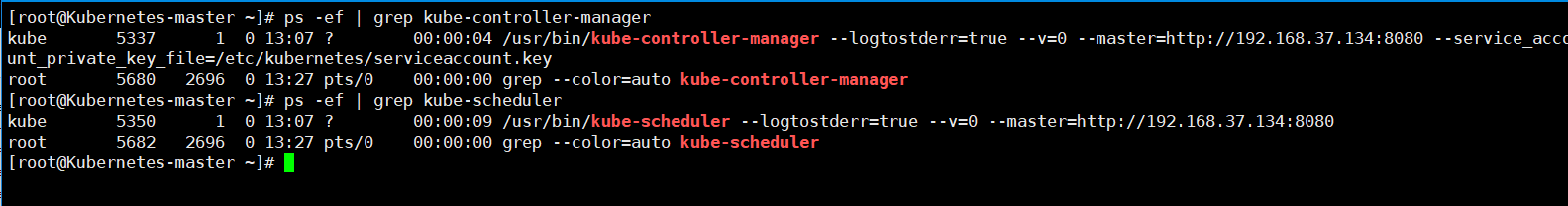
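Each entry in --etcd-servers needs a scheme and the etcd client port (2379 in this setup). The following is a small sketch, using a hypothetical helper named `endpoints_missing_port`, that lists any entry lacking an explicit port so it can be caught before kube-apiserver is started.

```shell
# Hypothetical helper: print every endpoint of a comma-separated list
# that is not of the form http(s)://host:port.
endpoints_missing_port() {
  printf '%s\n' "$1" | tr ',' '\n' | grep -Ev '^https?://[^/:]+:[0-9]+$' || true
}

# The second entry below deliberately omits the port to show what gets reported.
endpoints_missing_port "http://192.168.37.134:2379,http://192.168.37.135,http://192.168.37.136:2379"
```

An empty result means every endpoint carries a port.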

[root@Kubernetes-master ~]# egrep -v "#|^$" /etc/kubernetes/config

KUBE_LOGTOSTDERR="--logtostderr=true"

KUBE_LOG_LEVEL="--v=0"

KUBE_ALLOW_PRIV="--allow-privileged=false"

KUBE_MASTER="--master=http://192.168.37.134:8080"

[root@Kubernetes-master kubernetes]# systemctl start kube-controller-manager

[root@Kubernetes-master kubernetes]# systemctl enable kube-controller-manager

[root@Kubernetes-master kubernetes]# systemctl start kube-scheduler

[root@Kubernetes-master kubernetes]# systemctl enable kube-scheduler

【k8s-node1】

kubelet configuration file

[root@kubernetes-node1 ~]# egrep -v "#|^$" /etc/kubernetes/kubelet

KUBELET_ADDRESS="--address=0.0.0.0"

KUBELET_HOSTNAME="--hostname-override=192.168.37.135"

KUBELET_API_SERVER="--api-servers=http://192.168.37.134:8080"

KUBELET_POD_INFRA_CONTAINER="--pod-infra-container-image=registry.access.redhat.com/rhel7/pod-infrastructure:latest"

KUBELET_ARGS=""

Main config file

[root@kubernetes-node1 ~]# egrep -v "#|^$" /etc/kubernetes/config

KUBE_LOGTOSTDERR="--logtostderr=true"

KUBE_LOG_LEVEL="--v=0"

KUBE_ALLOW_PRIV="--allow-privileged=false"

KUBE_MASTER="--master=http://192.168.37.134:8080"

[root@kubernetes-node1 ~]# systemctl start kubelet

[root@kubernetes-node1 ~]# systemctl enable kubelet

[root@kubernetes-node1 ~]# systemctl start kube-proxy

[root@kubernetes-node1 ~]# systemctl enable kube-proxy

【k8s-node2】

kubelet configuration file

[root@kubernetes-node2 ~]# egrep -v "#|^$" /etc/kubernetes/kubelet

KUBELET_ADDRESS="--address=0.0.0.0"

KUBELET_HOSTNAME="--hostname-override=192.168.37.136"

KUBELET_API_SERVER="--api-servers=http://192.168.37.134:8080"

KUBELET_POD_INFRA_CONTAINER="--pod-infra-container-image=registry.access.redhat.com/rhel7/pod-infrastructure:latest"

KUBELET_ARGS=""

Main config file

[root@kubernetes-node2 ~]# egrep -v "^$|#" /etc/kubernetes/config

KUBE_LOGTOSTDERR="--logtostderr=true"

KUBE_LOG_LEVEL="--v=0"

KUBE_ALLOW_PRIV="--allow-privileged=false"

KUBE_MASTER="--master=http://192.168.37.134:8080"

[root@kubernetes-node2 ~]# systemctl start kubelet

[root@kubernetes-node2 ~]# systemctl enable kubelet

[root@kubernetes-node2 ~]# systemctl start kube-proxy

[root@kubernetes-node2 ~]# systemctl enable kube-proxy

【Kubernetes flanneld network configuration】

[root@Kubernetes-master kubernetes]# egrep -v "#|^$" /etc/sysconfig/flanneld

FLANNEL_ETCD_ENDPOINTS="http://192.168.37.134:2379"

FLANNEL_ETCD_PREFIX="/atomic.io/network"

[root@kubernetes-node1 ~]# egrep -v "#|^$" /etc/sysconfig/flanneld

FLANNEL_ETCD_ENDPOINTS="http://192.168.37.134:2379"

FLANNEL_ETCD_PREFIX="/atomic.io/network"

[root@kubernetes-node2 ~]# egrep -v "#|^$" /etc/sysconfig/flanneld

FLANNEL_ETCD_ENDPOINTS="http://192.168.37.134:2379"

FLANNEL_ETCD_PREFIX="/atomic.io/network"

[root@Kubernetes-master kubernetes]# etcdctl mk /atomic.io/network/config '{"Network":"172.17.0.0/16"}'

{"Network":"172.17.0.0/16"}

[root@Kubernetes-master kubernetes]# etcdctl get /atomic.io/network/config

{"Network":"172.17.0.0/16"}

[root@Kubernetes-master kubernetes]# systemctl restart flanneld

[root@Kubernetes-master kubernetes]# systemctl enable flanneld

[root@kubernetes-node1 ~]# systemctl start flanneld

[root@kubernetes-node1 ~]# systemctl enable flanneld

[root@kubernetes-node2 ~]# systemctl start flanneld

[root@kubernetes-node2 ~]# systemctl enable flanneld

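Note: after flanneld is restarted, etcd lists one subnet for each of the three nodes. On every node, docker must be on the same subnet as that node's flanneld. If they differ, restarting the docker service brings them back in line; otherwise the three subnets cannot ping one another.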
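The subnet comparison described above can be scripted. This is a sketch under two assumptions: flanneld hands out /24 subnets (its default), and on a real node the values would come from /run/flannel/subnet.env and the docker0 bridge. The helper names `prefix24` and `same_slash24` and the sample addresses are illustrative only.

```shell
# Print the first three octets of an IPv4 address, ignoring any /prefix suffix.
prefix24() {
  addr="${1%%/*}"
  printf '%s\n' "${addr%.*}"
}

# Return 0 when both addresses fall in the same /24 network.
same_slash24() {
  [ "$(prefix24 "$1")" = "$(prefix24 "$2")" ]
}

# Illustrative values: a flannel subnet and a docker0 address on the same node.
if same_slash24 "172.17.38.1/24" "172.17.38.1/24"; then
  echo "docker0 matches the flannel subnet"
else
  echo "mismatch: restart docker"
fi

# On a node, assuming flanneld wrote /run/flannel/subnet.env:
#   . /run/flannel/subnet.env
#   docker_ip=$(ip -4 -o addr show docker0 | awk '{print $4}')
#   same_slash24 "$FLANNEL_SUBNET" "$docker_ip" || systemctl restart docker
```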

[root@Kubernetes-master ~]# etcdctl ls /atomic.io/network/subnets

/atomic.io/network/subnets/172.17.2.0-

/atomic.io/network/subnets/172.17.23.0-

/atomic.io/network/subnets/172.17.58.0-

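Check the firewall settings on the Kubernetes nodes and see whether the FORWARD chain policy is DROP; if it is, allow forwarding with iptables -P FORWARD ACCEPT.

[root@kubernetes-node1 ~]# iptables -L -n # list the firewall rules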

Chain INPUT (policy ACCEPT)
target prot opt source destination
KUBE-FIREWALL all -- 0.0.0.0/0 0.0.0.0/0

Chain FORWARD (policy ACCEPT)
target prot opt source destination
DOCKER-ISOLATION all -- 0.0.0.0/0 0.0.0.0/0
DOCKER all -- 0.0.0.0/0 0.0.0.0/0
ACCEPT all -- 0.0.0.0/0 0.0.0.0/0 ctstate RELATED,ESTABLISHED
ACCEPT all -- 0.0.0.0/0 0.0.0.0/0
ACCEPT all -- 0.0.0.0/0 0.0.0.0/0

Chain OUTPUT (policy ACCEPT)
target prot opt source destination
KUBE-SERVICES all -- 0.0.0.0/0 0.0.0.0/0 /* kubernetes service portals */
KUBE-FIREWALL all -- 0.0.0.0/0 0.0.0.0/0

Chain DOCKER ( references)
target prot opt source destination

Chain DOCKER-ISOLATION ( references)
target prot opt source destination
RETURN all -- 0.0.0.0/0 0.0.0.0/0

Chain KUBE-FIREWALL ( references)
target prot opt source destination
DROP all -- 0.0.0.0/0 0.0.0.0/0 /* kubernetes firewall for dropping marked packets */ mark match 0x8000/0x8000

Chain KUBE-SERVICES ( references)
target prot opt source destination

Alternatively, enable IP forwarding:

echo "net.ipv4.ip_forward = 1" >>/usr/lib/sysctl.d/-default.conf
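Appending a line to a sysctl configuration file does not change the running kernel until the settings are reloaded. The sketch below checks the current state and, in comments, shows one way to apply and persist the setting. The helper name `forwarding_enabled` and the file name 99-k8s-forward.conf are assumptions made for illustration.

```shell
# Return 0 if the given file (default: the kernel's ip_forward switch) contains 1.
forwarding_enabled() {
  [ "$(cat "${1:-/proc/sys/net/ipv4/ip_forward}" 2>/dev/null)" = "1" ]
}

if forwarding_enabled; then
  echo "ip_forward is on"
else
  echo "ip_forward is off"
fi

# To turn it on immediately and keep it across reboots (run as root):
#   sysctl -w net.ipv4.ip_forward=1
#   echo "net.ipv4.ip_forward = 1" > /etc/sysctl.d/99-k8s-forward.conf   # hypothetical file name
#   sysctl --system
```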

[root@Kubernetes-master ~]# etcdctl ls /atomic.io/network/subnets # list the subnets and confirm that they can reach one another

/atomic.io/network/subnets/172.17.38.0-24

/atomic.io/network/subnets/172.17.89.0-24

/atomic.io/network/subnets/172.17.52.0-24

[root@Kubernetes-master ~]# kubectl get nodes # check the status of the Kubernetes nodes from the master

NAME STATUS AGE

192.168.37.135 Ready 5m

192.168.37.136 Ready 5m

[root@Kubernetes-master ~]# etcdctl member list # check the status of the etcd cluster members

328468069ff33f93: name=etcd1 peerURLs=http://192.168.37.134:2380 clientURLs=http://192.168.37.134:2379 isLeader=true

c2f8384c4776d3e7: name=etcd3 peerURLs=http://192.168.37.136:2380 clientURLs=http://192.168.37.136:2379 isLeader=false

d6ef60212aca5419: name=etcd2 peerURLs=http://192.168.37.135:2380 clientURLs=http://192.168.37.135:2379 isLeader=false
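The member list can also be checked by a script. This sketch parses the output format shown above; `etcd_leader` and `etcd_member_count` are hypothetical helper names, and the sample text is copied from the listing.

```shell
# Read `etcdctl member list` output on stdin and print the name of the leader.
etcd_leader() {
  awk '/isLeader=true/ {
    for (i = 1; i <= NF; i++)
      if ($i ~ /^name=/) { sub(/^name=/, "", $i); print $i }
  }'
}

# Read the same output on stdin and print the number of members.
etcd_member_count() {
  grep -c 'peerURLs='
}

sample='328468069ff33f93: name=etcd1 peerURLs=http://192.168.37.134:2380 clientURLs=http://192.168.37.134:2379 isLeader=true
c2f8384c4776d3e7: name=etcd3 peerURLs=http://192.168.37.136:2380 clientURLs=http://192.168.37.136:2379 isLeader=false
d6ef60212aca5419: name=etcd2 peerURLs=http://192.168.37.135:2380 clientURLs=http://192.168.37.135:2379 isLeader=false'

printf '%s\n' "$sample" | etcd_leader        # prints etcd1
printf '%s\n' "$sample" | etcd_member_count  # prints 3
```

On the master, the live output can be piped in the same way: `etcdctl member list | etcd_leader`.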

[root@Kubernetes-master ~]# kubectl get nodes # check the status of the k8s cluster nodes

NAME STATUS AGE

192.168.37.135 Ready 4h

192.168.37.136 Ready 1h

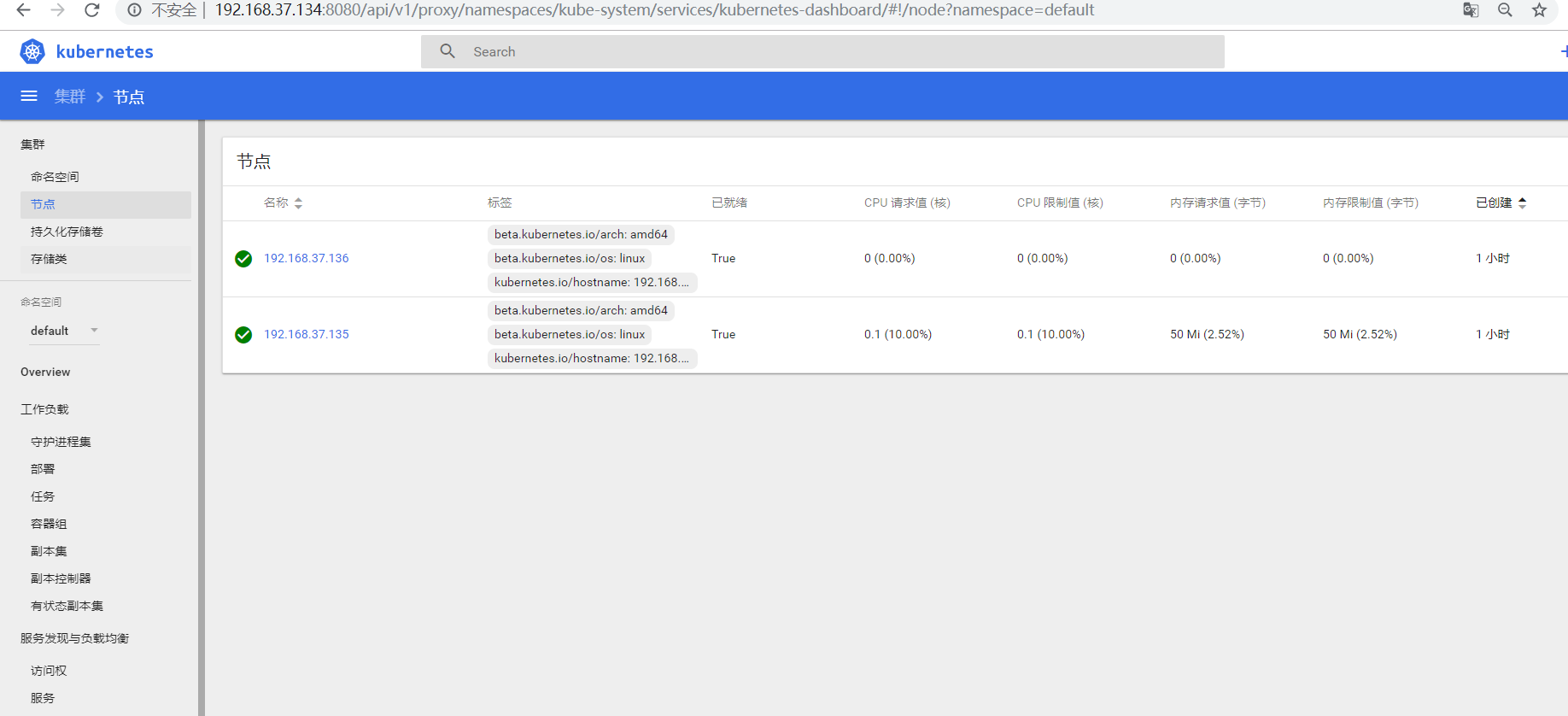
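For a quick pass/fail check, the node listing can be filtered for anything that is not Ready. This is a sketch that assumes the three-column output shown above (NAME, STATUS, AGE); `not_ready_nodes` is a hypothetical helper name, and the third node in the sample is invented to show a failing case.

```shell
# Read `kubectl get nodes` output on stdin; print nodes whose STATUS is not exactly "Ready".
# A status such as "Ready,SchedulingDisabled" is reported as well.
not_ready_nodes() {
  awk 'NR > 1 && $2 != "Ready" { print $1 }'
}

sample='NAME STATUS AGE
192.168.37.135 Ready 4h
192.168.37.136 Ready 1h
192.168.37.200 NotReady 1m'

printf '%s\n' "$sample" | not_ready_nodes   # prints 192.168.37.200
```

On the master this would be used as `kubectl get nodes | not_ready_nodes`; no output means every node is Ready.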

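【K8s Dashboard UI deployment】

Kubernetes provides unified management and scheduling of Docker container clusters; a web interface makes the cluster easier to manage and control.

Note: the images only need to be imported on node1.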

[root@kubernetes-node1 ~]# docker load < pod-infrastructure.tgz

[root@kubernetes-node1 ~]# docker tag $(docker images | grep none | awk '{print $3}') registry.access.redhat.com/rhel7/pod-infrastructure

[root@kubernetes-node1 ~]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

registry.access.redhat.com/rhel7/pod-infrastructure latest 99965fb98423 months ago MB

[root@kubernetes-node1 ~]# docker load < kubernetes-dashboard-amd64.tgz

[root@kubernetes-node1 ~]# docker tag $(docker images | grep none | awk '{print $3}') bestwu/kubernetes-dashboard-amd64:v1.6.3

[root@kubernetes-node1 ~]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

registry.access.redhat.com/rhel7/pod-infrastructure latest 99965fb98423 months ago MB

bestwu/kubernetes-dashboard-amd64 v1.6.3 9595afede088 months ago MB

【Kubernetes-master】

Edit the YAML files and create the Dashboard pods

[root@Kubernetes-master ~]# vim dashboard-controller.yaml

[root@Kubernetes-master ~]# cat dashboard-controller.yaml

apiVersion: extensions/v1beta1
kind: Deployment
metadata:
  name: kubernetes-dashboard
  namespace: kube-system
  labels:
    k8s-app: kubernetes-dashboard
    kubernetes.io/cluster-service: "true"
spec:
  selector:
    matchLabels:
      k8s-app: kubernetes-dashboard
  template:
    metadata:
      labels:
        k8s-app: kubernetes-dashboard
      annotations:
        scheduler.alpha.kubernetes.io/critical-pod: ''
        scheduler.alpha.kubernetes.io/tolerations: '[{"key":"CriticalAddonsOnly", "operator":"Exists"}]'
    spec:
      containers:
      - name: kubernetes-dashboard
        image: bestwu/kubernetes-dashboard-amd64:v1.6.3
        resources:
          # keep request = limit to keep this container in guaranteed class
          limits:
            cpu: 100m
            memory: 50Mi
          requests:
            cpu: 100m
            memory: 50Mi
        ports:
        - containerPort:
        args:
        - --apiserver-host=http://192.168.37.134:8080
        livenessProbe:
          httpGet:
            path: /
            port:
          initialDelaySeconds:
          timeoutSeconds:

[root@Kubernetes-master ~]# vim dashboard-service.yaml

apiVersion: v1
kind: Service
metadata:
  name: kubernetes-dashboard
  namespace: kube-system
  labels:
    k8s-app: kubernetes-dashboard
    kubernetes.io/cluster-service: "true"
spec:
  selector:
    k8s-app: kubernetes-dashboard
  ports:
  - port:
    targetPort:

[root@Kubernetes-master ~]# kubectl apply -f dashboard-controller.yaml

[root@Kubernetes-master ~]# kubectl apply -f dashboard-service.yaml

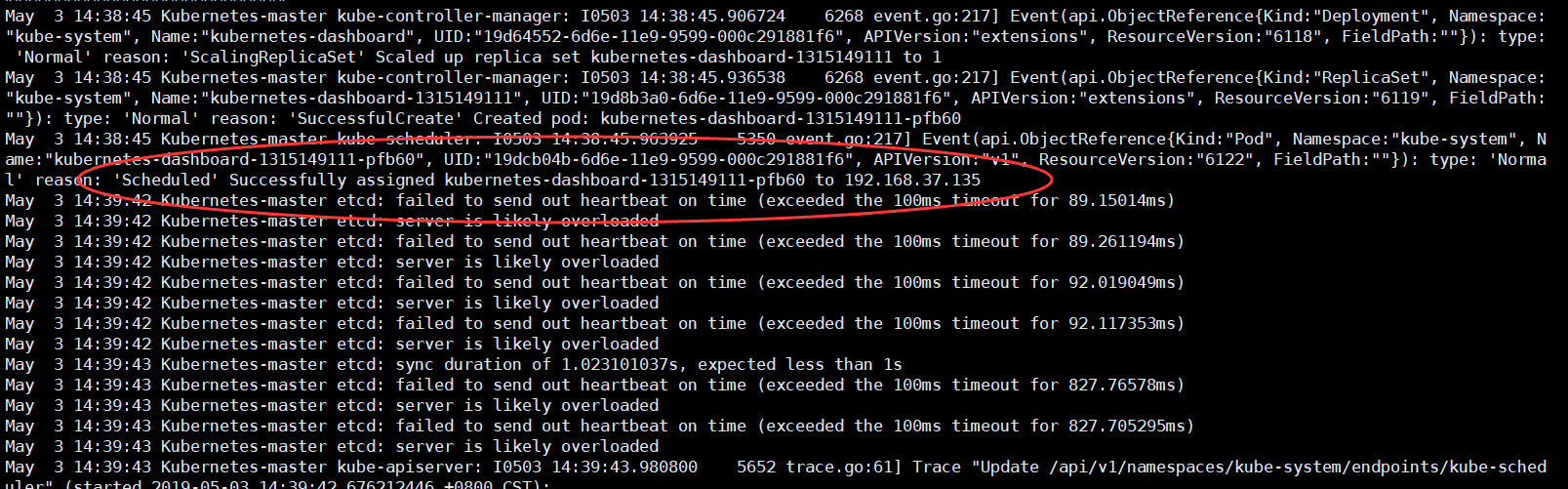

Note: while the modules are being created, check the logs for any errors.

[root@Kubernetes-master ~]# tail -f /var/log/messages

On node1 you can confirm that the containers have started:

[root@kubernetes-node1 ~]# docker ps

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS

f118f845f19f bestwu/kubernetes-dashboard-amd64:v1.6.3 "/dashboard --inse..." minutes ago Up minutes 30dc9e7f_kubernetes-dashboard--pfb60_kube-system_19dcb04b-6d6e-11e9--000c291881f6_02fd5b8e

67b7746a6d23 registry.access.redhat.com/rhel7/pod-infrastructure:latest "/usr/bin/pod" minutes ago Up minutes es-dashboard--pfb60_kube-system_19dcb04b-6d6e-11e9--000c291881f6_4e2cb565

To verify, open the k8s-master address in a browser.

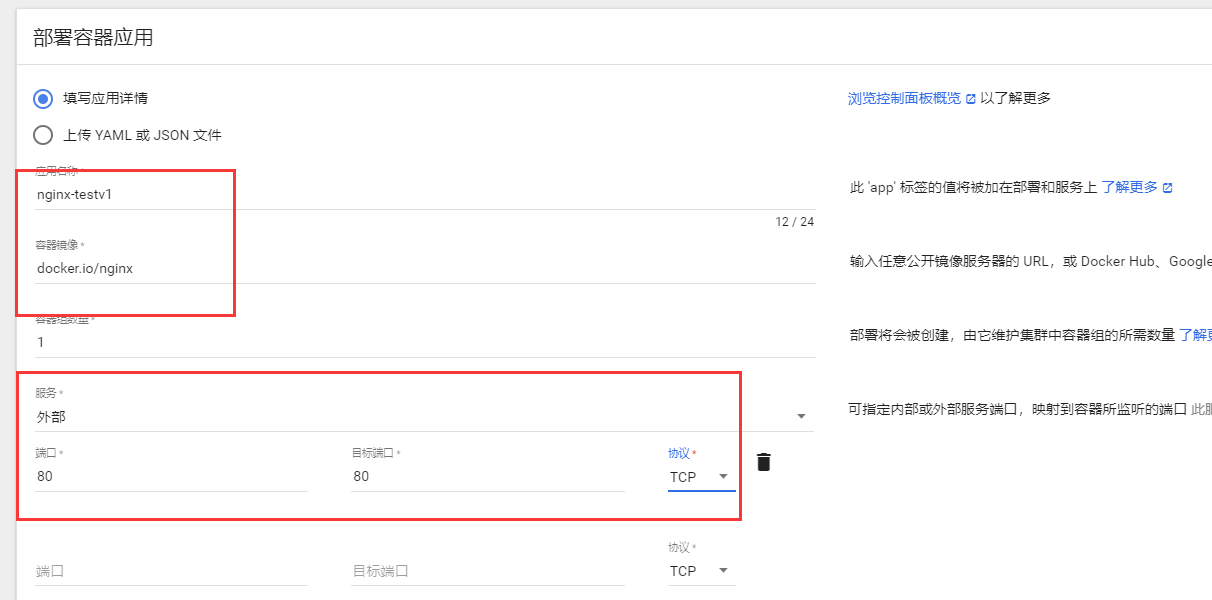

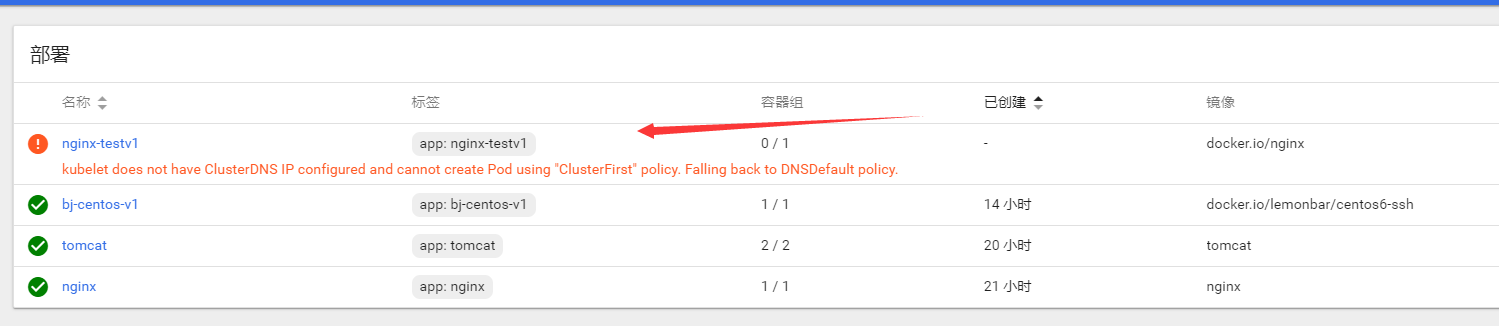

Deploy and start a simple nginx container and expose it for external access.

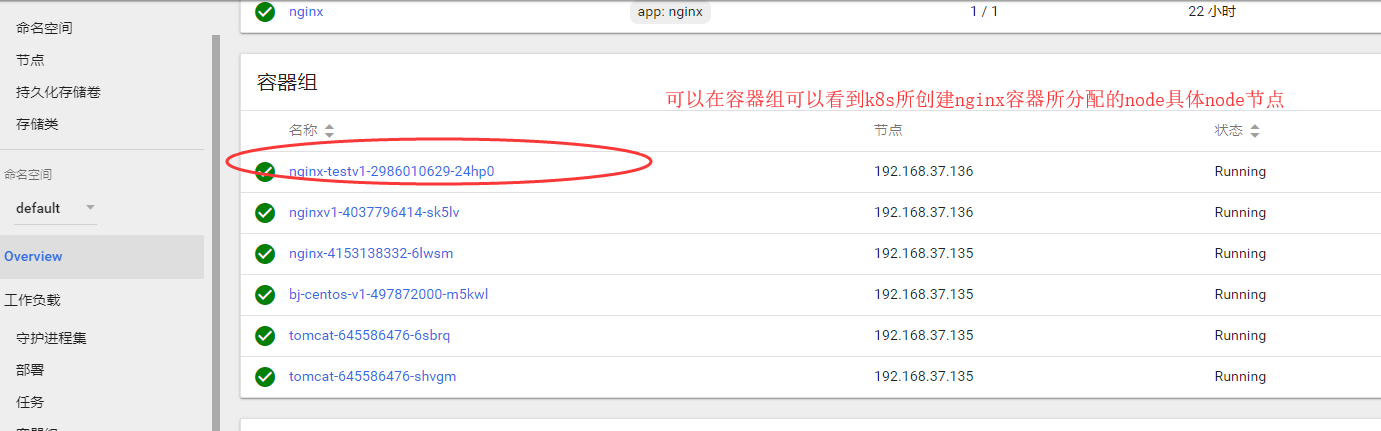

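Create an external Service. By default it is assigned a random cluster IP, and port 80 of the Service is mapped to port 80 of the backend pod containers. Inside the cluster network, the backend pods are reachable at the cluster IP on port 80; from outside, they are reachable at a node IP plus the randomly assigned port.

In a browser, open a node's IP address plus the randomly mapped port to reach the nginx container created by k8s.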
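The original shows this step only as a screenshot, so the commands below are a sketch rather than the author's exact input. The deployment name `nginx`, the image `nginx`, and the wrapper function `deploy_nginx` are all assumptions. It also assumes a kubectl version of this era, where `kubectl run` creates a Deployment; on recent versions `kubectl run` creates a single pod instead and the expose step would target that pod.

```shell
# Sketch only: names and image are assumptions, not taken from the screenshot.
deploy_nginx() {
  # Create a Deployment running an nginx container that listens on port 80.
  kubectl run nginx --image=nginx --port=80
  # Expose it as a NodePort Service: port 80 on the cluster IP,
  # plus a randomly assigned high port on every node.
  kubectl expose deployment nginx --port=80 --type=NodePort
  # Show the cluster IP and the node port that was assigned.
  kubectl get svc nginx
}

# Run on the master once the nodes are Ready:
#   deploy_nginx
```

The PORT(S) column of the last command shows a pair such as 80:<nodePort>/TCP; the second number is the port to use with a node IP in the browser.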

【Extra: deploying a local private registry】

# docker run -itd -p 5000:5000 -v /data/registry:/var/lib/registry docker.io/registry

# docker tag docker.io/tomcat 192.168.37.135:5000/tomcat

# vim /etc/sysconfig/docker

OPTIONS='--selinux-enabled --log-driver=journald --signature-verification=false --insecure-registry 192.168.37.135:5000'

ADD_REGISTRY='--add-registry 192.168.37.135:5000'

# systemctl restart docker.service

# docker push 192.168.37.135:5000/tomcat
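After the push, the registry's catalog can be queried to confirm that the image arrived. This sketch assumes the registry image implements the Registry HTTP API v2, whose `/v2/_catalog` endpoint returns JSON such as {"repositories":["tomcat"]}. `registry_has_repo` is a hypothetical helper name.

```shell
# Return 0 when the registry at <host:port> lists the given repository.
# Arguments: <host:port> <repository>
registry_has_repo() {
  curl -s "http://$1/v2/_catalog" | grep -q "\"$2\""
}

# Example, run from any host that can reach the registry:
#   registry_has_repo 192.168.37.135:5000 tomcat && echo "tomcat is in the registry"
```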
