37. Using Scrapy to handle pagination and scrape material data from the Hangzhou construction-cost website

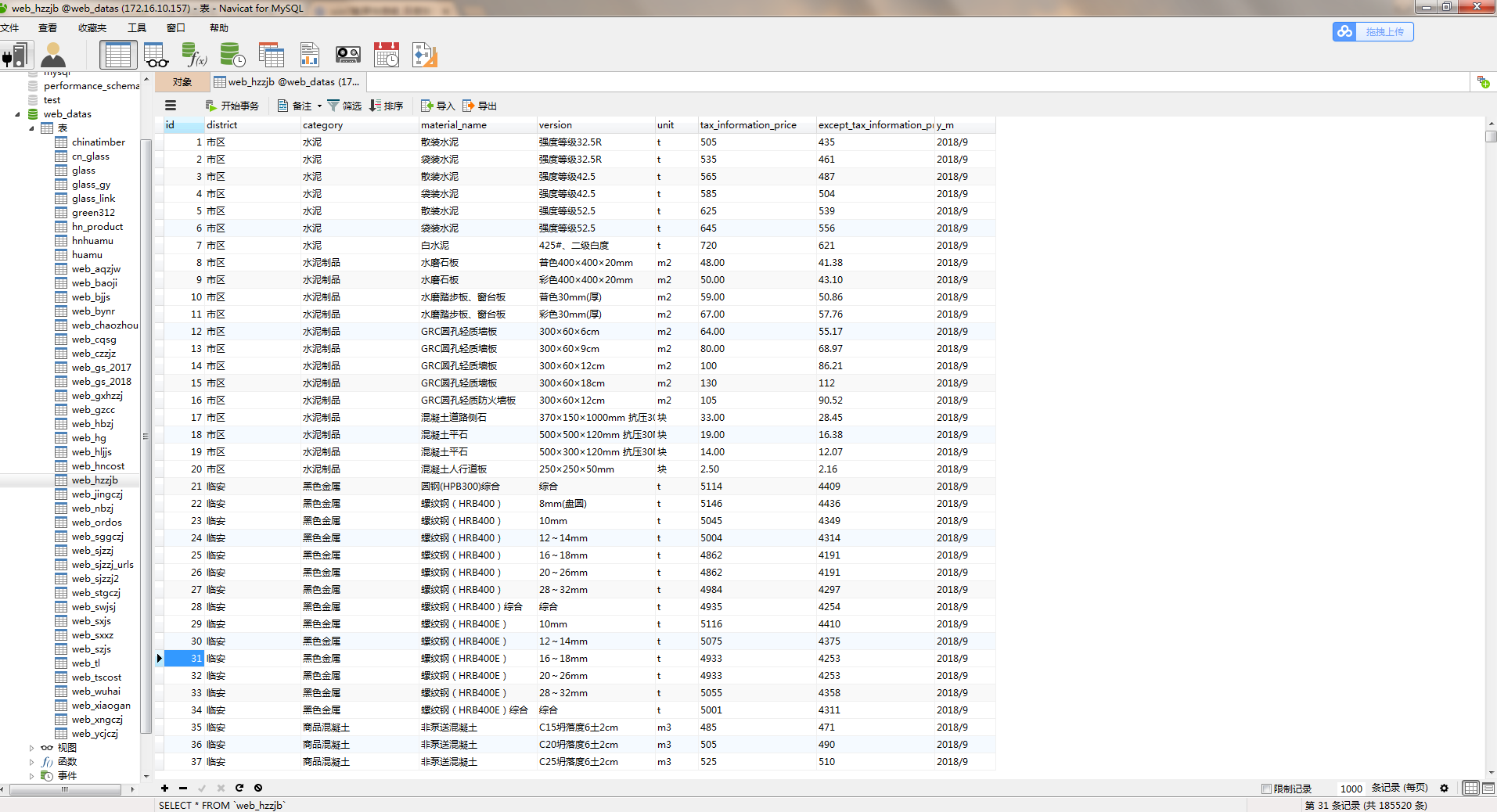

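1. Target URL: http://183.129.219.195:8081/bs/hzzjb/web/list
2. Pagination here is fairly simple: simulating a POST request whose form data carries the key parameter (`pageNumber`) is enough to fetch the next page. The way the spider extracts the table cells is not ideal, since it is older code. A better approach is to use XPath to match the whole table, take each row as a parent selector, and then run a second match for the child cell tags inside it.
3. The scraped results are shown in the screenshot above. The code is as follows: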
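Point 2 recommends matching the table first and then the cells inside each row. The sketch below illustrates that parent-then-child pattern. To stay runnable without Scrapy, it uses the standard library's `xml.etree.ElementTree`, whose limited XPath subset supports the same idea; it assumes the page fragment is well-formed XML, which real HTML often is not. The sample table and its values are made up for illustration. Inside the spider, the equivalent would be looping over `response.xpath("//table[@class='table1']//tr")` and calling `row.xpath("./td")` on each row.

```python
import xml.etree.ElementTree as ET

# Item field names in column order (taken from HzzjbWebItem)
FIELDS = [
    "district", "category", "material_name", "version", "unit",
    "tax_information_price", "except_tax_information_price", "y_m",
]

# Hypothetical, well-formed sample of the list table (values are made up)
SAMPLE = """<html><body>
<table class="table1">
  <tr><th>District</th><th>Category</th></tr>
  <tr><td>Area A</td><td>Steel</td><td>Rebar</td><td>HRB400 12mm</td>
      <td>t</td><td>100.00</td><td>90.00</td><td>2019/05</td><td><a>view</a></td></tr>
</table>
</body></html>"""


def parse_rows(html):
    """Match the table first, then each row, then the cells inside that row."""
    root = ET.fromstring(html)
    table = root.find(".//table[@class='table1']")
    if table is None:
        return []
    rows = []
    for tr in table.iter("tr"):
        # Text of each <td>, including text nested in child tags such as <a>
        cells = ["".join(td.itertext()).strip() for td in tr.findall("td")]
        if len(cells) < len(FIELDS):
            continue  # header rows (<th> only) or incomplete rows
        # zip stops after the 8 known fields, dropping the extra 9th column
        rows.append(dict(zip(FIELDS, cells)))
    return rows


if __name__ == "__main__":
    for row in parse_rows(SAMPLE):
        print(row)
```

Matching rows first keeps each record's cells together, so a missing or extra cell affects only that row instead of shifting every later record the way fixed-size slicing of a flat cell list can.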

```python
# hzzjb.py
# -*- coding: utf-8 -*-
import scrapy

from hzzjb_web.items import HzzjbWebItem

LIST_URL = 'http://183.129.219.195:8081/bs/hzzjb/web/list'

# Item fields in the column order of the info table
FIELDS = [
    'district',                      # district / region
    'category',                      # category
    'material_name',                 # material name
    'version',                       # specification and model
    'unit',                          # unit
    'tax_information_price',         # reference price including tax
    'except_tax_information_price',  # reference price excluding tax
    'y_m',                           # year/month
]


class HzzjbSpider(scrapy.Spider):
    name = 'hzzjb'
    # allowed_domains takes host names only, without scheme, port or path
    allowed_domains = ['183.129.219.195']
    start_urls = [LIST_URL]

    custom_settings = {
        "DOWNLOAD_DELAY": 0.2,
        "ITEM_PIPELINES": {
            'hzzjb_web.pipelines.MysqlPipeline': 320,
        },
        "DOWNLOADER_MIDDLEWARES": {
            'hzzjb_web.middlewares.HzzjbWebDownloaderMiddleware': 500,
        },
    }

    def parse(self, response):
        # Page 1 arrives via GET from start_urls: scrape it first
        yield from self.parse_table(response)

        # Queue pages 2-5031 only from page 1; queuing them from every page
        # would re-request every page again on each response.
        for i in range(2, 5032):
            # Pagination: POST the list form with the next pageNumber
            data = {
                'mtype': '',
                '_query.nfStart': '',
                '_query.yfStart': '',
                '_query.nfEnd': '',
                '_query.yfEnd': '',
                '_query.dqstr': '',
                '_query.dq': '',
                '_query.lbtype': '',
                '_query.clmc': '',
                '_query.ggjxh': '',
                'pageNumber': str(i),
                'pageSize': '',
                'orderColunm': '',
                'orderMode': '',
            }
            yield scrapy.FormRequest(url=LIST_URL, formdata=data,
                                     method='POST', callback=self.parse_table)

    def parse_table(self, response):
        # Info table: every <td> of every row, as raw HTML strings
        tag_list = response.xpath("//table[@class='table1']//tr/td").getall()
        # Each row has 9 cells, so split the flat list back into rows of 9
        rows = [tag_list[i:i + 9] for i in range(0, len(tag_list), 9)]

        for tag in rows:
            if len(tag) < len(FIELDS):
                continue  # skip incomplete rows instead of yielding partial items
            item = HzzjbWebItem()
            for field, cell in zip(FIELDS, tag):
                # Strip the surrounding <td></td> tags to keep the cell text
                item[field] = cell.replace('<td>', '').replace('</td>', '')
            yield item
```
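The pagination works because `FormRequest` URL-encodes `formdata` into the POST body. The sketch below builds the same form with the standard library so you can see what is sent for a given page. `build_page_form` and `encoded_body` are hypothetical helper names; the field names are copied from the spider, and only `pageNumber` changes from page to page.

```python
from urllib.parse import urlencode

# Search-form fields sent by the spider; all empty except pageNumber
FORM_FIELDS = [
    "mtype", "_query.nfStart", "_query.yfStart", "_query.nfEnd",
    "_query.yfEnd", "_query.dqstr", "_query.dq", "_query.lbtype",
    "_query.clmc", "_query.ggjxh", "pageNumber", "pageSize",
    "orderColunm", "orderMode",
]


def build_page_form(page):
    """Return the form dict for one list page (hypothetical helper)."""
    data = {field: "" for field in FORM_FIELDS}
    data["pageNumber"] = str(page)  # formdata values should be strings
    return data


def encoded_body(page):
    """URL-encode the form as an application/x-www-form-urlencoded body."""
    return urlencode(build_page_form(page))


if __name__ == "__main__":
    print(encoded_body(2))
```

Because every page produces a different body, the requests have different fingerprints, which is why the spider's page requests do not need `dont_filter=True` to get past Scrapy's duplicate filter.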

```python
# items.py
# -*- coding: utf-8 -*-

# Define here the models for your scraped items
#
# See documentation in:
# https://doc.scrapy.org/en/latest/topics/items.html

import scrapy


class HzzjbWebItem(scrapy.Item):
    district = scrapy.Field()                      # district / region
    category = scrapy.Field()                      # category
    material_name = scrapy.Field()                 # material name
    version = scrapy.Field()                       # specification and model
    unit = scrapy.Field()                          # unit
    tax_information_price = scrapy.Field()         # reference price including tax
    except_tax_information_price = scrapy.Field()  # reference price excluding tax
    y_m = scrapy.Field()                           # year/month
```

```python
# pipelines.py
# -*- coding: utf-8 -*-

# Define your item pipelines here
#
# Don't forget to add your pipeline to the ITEM_PIPELINES setting
# See: https://doc.scrapy.org/en/latest/topics/item-pipeline.html

import pymysql


class HzzjbWebPipeline(object):
    def process_item(self, item, spider):
        return item


# Save items to MySQL
class MysqlPipeline(object):
    def open_spider(self, spider):
        # `from scrapy.conf import settings` is not available in current
        # Scrapy releases; read the project settings from the spider instead
        settings = spider.settings
        self.host = settings.get('MYSQL_HOST')
        self.port = settings.getint('MYSQL_PORT', 3306)
        self.user = settings.get('MYSQL_USER')
        self.password = settings.get('MYSQL_PASSWORD')
        self.db = settings.get('MYSQL_DB')
        self.table = settings.get('TABLE')
        self.client = pymysql.connect(host=self.host, user=self.user,
                                      password=self.password, port=self.port,
                                      database=self.db, charset='utf8')

    def process_item(self, item, spider):
        item_dict = dict(item)
        values = ','.join(['%s'] * len(item_dict))
        keys = ','.join(item_dict.keys())
        sql = 'INSERT INTO {table}({keys}) VALUES ({values})'.format(
            table=self.table, keys=keys, values=values)
        try:
            with self.client.cursor() as cursor:
                # First argument is the SQL statement, second is a tuple of values
                if cursor.execute(sql, tuple(item_dict.values())):
                    spider.logger.debug('Row saved to MySQL')
            self.client.commit()
        except Exception as e:
            spider.logger.error(e)
            self.client.rollback()
        return item

    def close_spider(self, spider):
        self.client.close()
```
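`MysqlPipeline.process_item` builds its `INSERT` statement from whatever fields the item carries and passes the values separately, so PyMySQL escapes them. The same logic as a pure function (a hypothetical `build_insert`, not part of the project) can be checked without a database. Only the values are parameterized; the table and column names are formatted into the string, which is acceptable here only because they come from the settings and the item definition, not from scraped data.

```python
def build_insert(table, item_dict):
    """Build a parameterized INSERT, mirroring MysqlPipeline.process_item.

    Returns (sql, params); the %s placeholders are filled in later by
    cursor.execute(sql, params), which escapes each value.
    """
    keys = ",".join(item_dict.keys())
    placeholders = ",".join(["%s"] * len(item_dict))
    sql = "INSERT INTO {table}({keys}) VALUES ({values})".format(
        table=table, keys=keys, values=placeholders)
    return sql, tuple(item_dict.values())


if __name__ == "__main__":
    sql, params = build_insert("web_hzzjb", {"district": "Area A", "unit": "t"})
    print(sql)     # INSERT INTO web_hzzjb(district,unit) VALUES (%s,%s)
    print(params)  # ('Area A', 't')
```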

```python
# settings.py
# -*- coding: utf-8 -*-

# Scrapy settings for hzzjb_web project
#
# For simplicity, this file contains only settings considered important or
# commonly used. You can find more settings consulting the documentation:
#
#     https://doc.scrapy.org/en/latest/topics/settings.html
#     https://doc.scrapy.org/en/latest/topics/downloader-middleware.html
#     https://doc.scrapy.org/en/latest/topics/spider-middleware.html

BOT_NAME = 'hzzjb_web'

SPIDER_MODULES = ['hzzjb_web.spiders']
NEWSPIDER_MODULE = 'hzzjb_web.spiders'

# MySQL connection parameters
MYSQL_HOST = "172.16.0.55"
MYSQL_PORT = 3306
MYSQL_USER = "root"
MYSQL_PASSWORD = "concom603"
MYSQL_DB = 'web_datas'
TABLE = "web_hzzjb"

# Crawl responsibly by identifying yourself (and your website) on the user-agent
#USER_AGENT = 'hzzjb_web (+http://www.yourdomain.com)'

# Obey robots.txt rules
ROBOTSTXT_OBEY = False

# Configure maximum concurrent requests performed by Scrapy (default: 16)
#CONCURRENT_REQUESTS = 32

# Configure a delay for requests for the same website (default: 0)
# See https://doc.scrapy.org/en/latest/topics/settings.html#download-delay
# See also autothrottle settings and docs
#DOWNLOAD_DELAY = 3
# The download delay setting will honor only one of:
#CONCURRENT_REQUESTS_PER_DOMAIN = 16
#CONCURRENT_REQUESTS_PER_IP = 16

# Disable cookies (enabled by default)
#COOKIES_ENABLED = False

# Disable Telnet Console (enabled by default)
#TELNETCONSOLE_ENABLED = False

# Override the default request headers:
#DEFAULT_REQUEST_HEADERS = {
#   'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8',
#   'Accept-Language': 'en',
#}

# Enable or disable spider middlewares
# See https://doc.scrapy.org/en/latest/topics/spider-middleware.html
#SPIDER_MIDDLEWARES = {
#    'hzzjb_web.middlewares.HzzjbWebSpiderMiddleware': 543,
#}

# Enable or disable downloader middlewares
# See https://doc.scrapy.org/en/latest/topics/downloader-middleware.html
DOWNLOADER_MIDDLEWARES = {
    'hzzjb_web.middlewares.HzzjbWebDownloaderMiddleware': 500,
}

# Enable or disable extensions
# See https://doc.scrapy.org/en/latest/topics/extensions.html
#EXTENSIONS = {
#    'scrapy.extensions.telnet.TelnetConsole': None,
#}

# Configure item pipelines
# See https://doc.scrapy.org/en/latest/topics/item-pipeline.html
# (the hzzjb spider replaces this dict with MysqlPipeline via custom_settings)
ITEM_PIPELINES = {
    'hzzjb_web.pipelines.HzzjbWebPipeline': 300,
}

# Enable and configure the AutoThrottle extension (disabled by default)
# See https://doc.scrapy.org/en/latest/topics/autothrottle.html
#AUTOTHROTTLE_ENABLED = True
# The initial download delay
#AUTOTHROTTLE_START_DELAY = 5
# The maximum download delay to be set in case of high latencies
#AUTOTHROTTLE_MAX_DELAY = 60
# The average number of requests Scrapy should be sending in parallel to
# each remote server
#AUTOTHROTTLE_TARGET_CONCURRENCY = 1.0
# Enable showing throttling stats for every response received:
#AUTOTHROTTLE_DEBUG = False

# Enable and configure HTTP caching (disabled by default)
# See https://doc.scrapy.org/en/latest/topics/downloader-middleware.html#httpcache-middleware-settings
#HTTPCACHE_ENABLED = True
#HTTPCACHE_EXPIRATION_SECS = 0
#HTTPCACHE_DIR = 'httpcache'
#HTTPCACHE_IGNORE_HTTP_CODES = []
#HTTPCACHE_STORAGE = 'scrapy.extensions.httpcache.FilesystemCacheStorage'
```

```python
# middlewares.py
# -*- coding: utf-8 -*-

# Define here the models for your spider middleware
#
# See documentation in:
# https://doc.scrapy.org/en/latest/topics/spider-middleware.html

from scrapy import signals


class HzzjbWebSpiderMiddleware(object):
    # Not all methods need to be defined. If a method is not defined,
    # scrapy acts as if the spider middleware does not modify the
    # passed objects.

    @classmethod
    def from_crawler(cls, crawler):
        # This method is used by Scrapy to create your spiders.
        s = cls()
        crawler.signals.connect(s.spider_opened, signal=signals.spider_opened)
        return s

    def process_spider_input(self, response, spider):
        # Called for each response that goes through the spider
        # middleware and into the spider.

        # Should return None or raise an exception.
        return None

    def process_spider_output(self, response, result, spider):
        # Called with the results returned from the Spider, after
        # it has processed the response.

        # Must return an iterable of Request, dict or Item objects.
        for i in result:
            yield i

    def process_spider_exception(self, response, exception, spider):
        # Called when a spider or process_spider_input() method
        # (from other spider middleware) raises an exception.

        # Should return either None or an iterable of Response, dict
        # or Item objects.
        pass

    def process_start_requests(self, start_requests, spider):
        # Called with the start requests of the spider, and works
        # similarly to the process_spider_output() method, except
        # that it doesn't have a response associated.

        # Must return only requests (not items).
        for r in start_requests:
            yield r

    def spider_opened(self, spider):
        spider.logger.info('Spider opened: %s' % spider.name)


class HzzjbWebDownloaderMiddleware(object):
    # Not all methods need to be defined. If a method is not defined,
    # scrapy acts as if the downloader middleware does not modify the
    # passed objects.

    @classmethod
    def from_crawler(cls, crawler):
        # This method is used by Scrapy to create your spiders.
        s = cls()
        crawler.signals.connect(s.spider_opened, signal=signals.spider_opened)
        return s

    def process_request(self, request, spider):
        # Called for each request that goes through the downloader
        # middleware.

        # Must either:
        # - return None: continue processing this request
        # - or return a Response object
        # - or return a Request object
        # - or raise IgnoreRequest: process_exception() methods of
        #   installed downloader middleware will be called
        return None

    def process_response(self, request, response, spider):
        # Called with the response returned from the downloader.

        # Must either:
        # - return a Response object
        # - return a Request object
        # - or raise IgnoreRequest
        return response

    def process_exception(self, request, exception, spider):
        # Called when a download handler or a process_request()
        # (from other downloader middleware) raises an exception.

        # Must either:
        # - return None: continue processing this exception
        # - return a Response object: stops process_exception() chain
        # - return a Request object: stops process_exception() chain
        pass

    def spider_opened(self, spider):
        spider.logger.info('Spider opened: %s' % spider.name)
```
