第2章_神经网络入门_2-5&2-6 数据处理与模型图构建

神经元的TF实现

安装

版本: Python 2.7 tf 1.8.0 Linux

略

demo

神经网络的TF实现

# py36 tf 2.1.

# import tensorflow as tf

import tensorflow.compat.v1 as tf

tf.compat.v1.disable_eager_execution()

# https://blog.csdn.net/qq_39777550/article/details/104224296

import os

import pickle

import numpy as np

CIFAR_DIR = "./../../cifar-10-batches-py"

print(os.listdir(CIFAR_DIR))

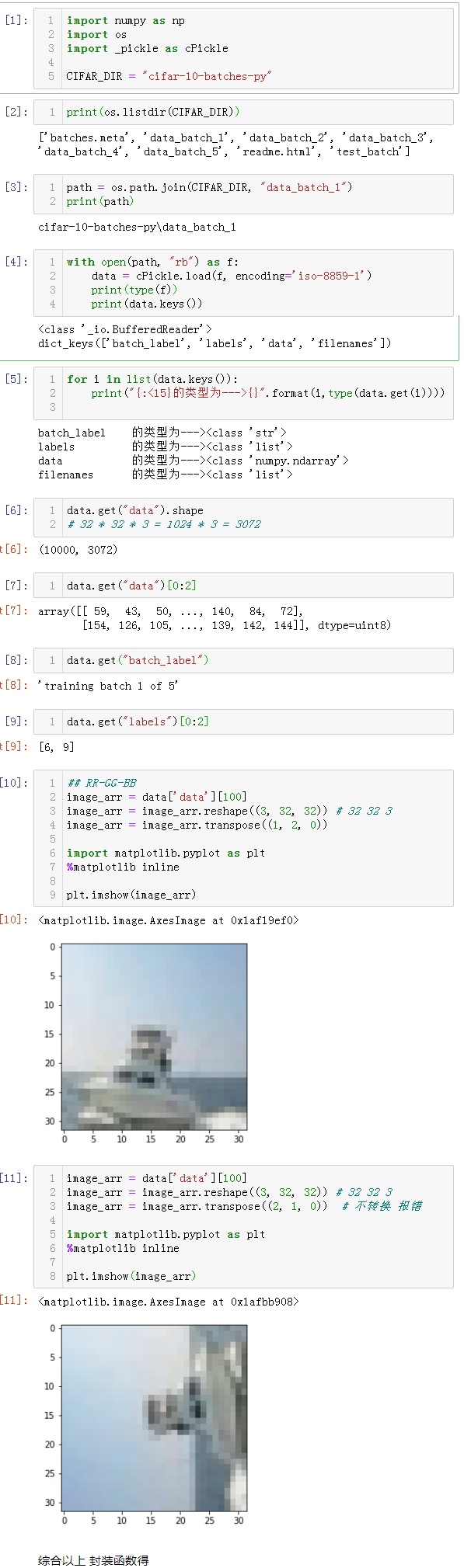

# 读取数据

def load_data(filename):

"""read data from data file."""

with open(filename, 'rb') as f:

data = pickle.load(f, encoding='bytes')

return data[b'data'], data[b'labels']

# tensorflow.Dataset.

class CifarData:

def __init__(self, filenames, need_shuffle):

all_data = []

all_labels = []

for filename in filenames:

data, labels = load_data(filename)

for item, label in zip(data, labels):

if label in [0, 1]:

all_data.append(item)

all_labels.append(label)

self._data = np.vstack(all_data)

self._data = self._data / 127.5 - 1

self._labels = np.hstack(all_labels)

print(self._data.shape)

print(self._labels.shape)

self._num_examples = self._data.shape[0]

self._need_shuffle = need_shuffle

self._indicator = 0

if self._need_shuffle:

self._shuffle_data()

def _shuffle_data(self):

# [0,1,2,3,4,5] -> [5,3,2,4,0,1]

p = np.random.permutation(self._num_examples)

self._data = self._data[p]

self._labels = self._labels[p]

def next_batch(self, batch_size):

"""return batch_size examples as a batch."""

end_indicator = self._indicator + batch_size

if end_indicator > self._num_examples:

if self._need_shuffle:

self._shuffle_data()

self._indicator = 0

end_indicator = batch_size

else:

raise Exception("have no more examples")

if end_indicator > self._num_examples:

raise Exception("batch size is larger than all examples")

batch_data = self._data[self._indicator: end_indicator]

batch_labels = self._labels[self._indicator: end_indicator]

self._indicator = end_indicator

return batch_data, batch_labels

train_filenames = [os.path.join(CIFAR_DIR, 'data_batch_%d' % i) for i in range(1, 6)]

test_filenames = [os.path.join(CIFAR_DIR, 'test_batch')]

train_data = CifarData(train_filenames, True)

test_data = CifarData(test_filenames, False)

# 搭建计算图

x = tf.placeholder(tf.float32, [None, 3072])

# [None] None表示输入样本不确定 可调整

y = tf.placeholder(tf.int64, [None])

# (3072, 1)

w = tf.get_variable('w', [x.get_shape()[-1], 1],

initializer=tf.random_normal_initializer(0, 1))

# (1, )

b = tf.get_variable('b', [1],

initializer=tf.constant_initializer(0.0))

# [None, 3072] * [3072, 1] = [None, 1]

y_ = tf.matmul(x, w) + b

# [None, 1]

p_y_1 = tf.nn.sigmoid(y_)

# [None, 1]

y_reshaped = tf.reshape(y, (-1, 1))

y_reshaped_float = tf.cast(y_reshaped, tf.float32)

loss = tf.reduce_mean(tf.square(y_reshaped_float - p_y_1))

# bool

predict = p_y_1 > 0.5

# [1,0,1,1,1,0,0,0]

correct_prediction = tf.equal(tf.cast(predict, tf.int64), y_reshaped)

accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float64))

with tf.name_scope('train_op'):

train_op = tf.train.AdamOptimizer(1e-3).minimize(loss)

init = tf.global_variables_initializer()

batch_size = 20

train_steps = 100000

test_steps = 100

with tf.Session() as sess:

sess.run(init)

for i in range(train_steps):

batch_data, batch_labels = train_data.next_batch(batch_size)

loss_val, acc_val, _ = sess.run(

[loss, accuracy, train_op],

feed_dict={

x: batch_data,

y: batch_labels})

if (i+1) % 500 == 0:

print('[Train] Step: %d, loss: %4.5f, acc: %4.5f' % (i+1, loss_val, acc_val))

if (i+1) % 5000 == 0:

test_data = CifarData(test_filenames, False)

all_test_acc_val = []

for j in range(test_steps):

test_batch_data, test_batch_labels \

= test_data.next_batch(batch_size)

test_acc_val = sess.run(

[accuracy],

feed_dict = {

x: test_batch_data,

y: test_batch_labels

})

all_test_acc_val.append(test_acc_val)

test_acc = np.mean(all_test_acc_val)

print('[Test ] Step: %d, acc: %4.5f' % (i+1, test_acc))

out:

[Train] Step: 500, loss: 0.20478, acc: 0.75000

[Train] Step: 1000, loss: 0.21155, acc: 0.75000

[Train] Step: 1500, loss: 0.43419, acc: 0.55000

[Train] Step: 2000, loss: 0.20231, acc: 0.80000

[Train] Step: 2500, loss: 0.29795, acc: 0.70000

[Train] Step: 3000, loss: 0.10000, acc: 0.90000

[Train] Step: 3500, loss: 0.20452, acc: 0.80000

[Train] Step: 4000, loss: 0.10001, acc: 0.90000

[Train] Step: 4500, loss: 0.19975, acc: 0.80000

[Train] Step: 5000, loss: 0.19999, acc: 0.80000

(2000, 3072)

(2000,)

[Test ] Step: 5000, acc: 0.79950

[Train] Step: 5500, loss: 0.24914, acc: 0.70000

[Train] Step: 6000, loss: 0.19750, acc: 0.80000

[Train] Step: 6500, loss: 0.14980, acc: 0.85000

[Train] Step: 7000, loss: 0.20100, acc: 0.80000

[Train] Step: 7500, loss: 0.15000, acc: 0.85000

[Train] Step: 8000, loss: 0.25308, acc: 0.75000

[Train] Step: 8500, loss: 0.10026, acc: 0.90000

[Train] Step: 9000, loss: 0.25647, acc: 0.75000

[Train] Step: 9500, loss: 0.23454, acc: 0.75000

[Train] Step: 10000, loss: 0.00000, acc: 1.00000

(2000, 3072)

(2000,)

[Test ] Step: 10000, acc: 0.80750

[Train] Step: 10500, loss: 0.13790, acc: 0.85000

[Train] Step: 11000, loss: 0.09943, acc: 0.90000

[Train] Step: 11500, loss: 0.29916, acc: 0.70000

[Train] Step: 12000, loss: 0.15001, acc: 0.85000

[Train] Step: 12500, loss: 0.25000, acc: 0.75000

[Train] Step: 13000, loss: 0.53574, acc: 0.45000

[Train] Step: 13500, loss: 0.20016, acc: 0.80000

[Train] Step: 14000, loss: 0.30000, acc: 0.70000

[Train] Step: 14500, loss: 0.20156, acc: 0.80000

[Train] Step: 15000, loss: 0.18963, acc: 0.80000

(2000, 3072)

(2000,)

[Test ] Step: 15000, acc: 0.82050

[Train] Step: 15500, loss: 0.12585, acc: 0.85000

[Train] Step: 16000, loss: 0.15000, acc: 0.85000

[Train] Step: 16500, loss: 0.10003, acc: 0.90000

[Train] Step: 17000, loss: 0.15028, acc: 0.85000

[Train] Step: 17500, loss: 0.20604, acc: 0.80000

[Train] Step: 18000, loss: 0.28701, acc: 0.70000

[Train] Step: 18500, loss: 0.28371, acc: 0.70000

[Train] Step: 19000, loss: 0.18576, acc: 0.80000

[Train] Step: 19500, loss: 0.17266, acc: 0.80000

[Train] Step: 20000, loss: 0.05000, acc: 0.95000

(2000, 3072)

(2000,)

[Test ] Step: 20000, acc: 0.82350

[Train] Step: 20500, loss: 0.05006, acc: 0.95000

[Train] Step: 21000, loss: 0.25004, acc: 0.75000

[Train] Step: 21500, loss: 0.10000, acc: 0.90000

[Train] Step: 22000, loss: 0.20000, acc: 0.80000

[Train] Step: 22500, loss: 0.25239, acc: 0.75000

[Train] Step: 23000, loss: 0.19881, acc: 0.80000

[Train] Step: 23500, loss: 0.15887, acc: 0.85000

[Train] Step: 24000, loss: 0.16960, acc: 0.80000

[Train] Step: 24500, loss: 0.14990, acc: 0.85000

[Train] Step: 25000, loss: 0.10783, acc: 0.90000

(2000, 3072)

(2000,)

[Test ] Step: 25000, acc: 0.82450

[Train] Step: 25500, loss: 0.06129, acc: 0.95000

[Train] Step: 26000, loss: 0.25004, acc: 0.75000

[Train] Step: 26500, loss: 0.05000, acc: 0.95000

[Train] Step: 27000, loss: 0.06949, acc: 0.90000

[Train] Step: 27500, loss: 0.15037, acc: 0.85000

[Train] Step: 28000, loss: 0.05000, acc: 0.95000

[Train] Step: 28500, loss: 0.13999, acc: 0.85000

[Train] Step: 29000, loss: 0.11145, acc: 0.90000

[Train] Step: 29500, loss: 0.16517, acc: 0.80000

[Train] Step: 30000, loss: 0.05007, acc: 0.95000

(2000, 3072)

(2000,)

[Test ] Step: 30000, acc: 0.82450

[Train] Step: 30500, loss: 0.20095, acc: 0.80000

[Train] Step: 31000, loss: 0.18170, acc: 0.80000

[Train] Step: 31500, loss: 0.19512, acc: 0.80000

[Train] Step: 32000, loss: 0.00000, acc: 1.00000

[Train] Step: 32500, loss: 0.20135, acc: 0.80000

[Train] Step: 33000, loss: 0.11704, acc: 0.85000

[Train] Step: 33500, loss: 0.05083, acc: 0.95000

[Train] Step: 34000, loss: 0.09994, acc: 0.90000

[Train] Step: 34500, loss: 0.11291, acc: 0.85000

[Train] Step: 35000, loss: 0.10000, acc: 0.90000

(2000, 3072)

(2000,)

[Test ] Step: 35000, acc: 0.82100

[Train] Step: 35500, loss: 0.09685, acc: 0.90000

[Train] Step: 36000, loss: 0.05111, acc: 0.95000

[Train] Step: 36500, loss: 0.15356, acc: 0.85000

[Train] Step: 37000, loss: 0.14997, acc: 0.85000

[Train] Step: 37500, loss: 0.08142, acc: 0.90000

[Train] Step: 38000, loss: 0.31816, acc: 0.65000

[Train] Step: 38500, loss: 0.15094, acc: 0.85000

[Train] Step: 39000, loss: 0.10831, acc: 0.90000

[Train] Step: 39500, loss: 0.14931, acc: 0.85000

[Train] Step: 40000, loss: 0.13410, acc: 0.85000

(2000, 3072)

(2000,)

[Test ] Step: 40000, acc: 0.82400

[Train] Step: 40500, loss: 0.25000, acc: 0.75000

[Train] Step: 41000, loss: 0.18313, acc: 0.80000

[Train] Step: 41500, loss: 0.25164, acc: 0.75000

[Train] Step: 42000, loss: 0.10002, acc: 0.90000

[Train] Step: 42500, loss: 0.20063, acc: 0.80000

[Train] Step: 43000, loss: 0.20210, acc: 0.80000

[Train] Step: 43500, loss: 0.14993, acc: 0.85000

[Train] Step: 44000, loss: 0.27625, acc: 0.70000

[Train] Step: 44500, loss: 0.09901, acc: 0.90000

[Train] Step: 45000, loss: 0.08543, acc: 0.90000

(2000, 3072)

(2000,)

[Test ] Step: 45000, acc: 0.82200

[Train] Step: 45500, loss: 0.15000, acc: 0.85000

[Train] Step: 46000, loss: 0.15736, acc: 0.85000

[Train] Step: 46500, loss: 0.10140, acc: 0.90000

[Train] Step: 47000, loss: 0.05000, acc: 0.95000

[Train] Step: 47500, loss: 0.14900, acc: 0.85000

[Train] Step: 48000, loss: 0.10006, acc: 0.90000

[Train] Step: 48500, loss: 0.25005, acc: 0.75000

[Train] Step: 49000, loss: 0.14953, acc: 0.85000

[Train] Step: 49500, loss: 0.00000, acc: 1.00000

[Train] Step: 50000, loss: 0.15218, acc: 0.85000

(2000, 3072)

(2000,)

[Test ] Step: 50000, acc: 0.82400

[Train] Step: 50500, loss: 0.15024, acc: 0.85000

[Train] Step: 51000, loss: 0.24747, acc: 0.75000

[Train] Step: 51500, loss: 0.10175, acc: 0.90000

[Train] Step: 52000, loss: 0.10000, acc: 0.90000

[Train] Step: 52500, loss: 0.10093, acc: 0.90000

[Train] Step: 53000, loss: 0.04343, acc: 0.90000

[Train] Step: 53500, loss: 0.25575, acc: 0.75000

[Train] Step: 54000, loss: 0.14995, acc: 0.85000

[Train] Step: 54500, loss: 0.05000, acc: 0.95000

[Train] Step: 55000, loss: 0.11438, acc: 0.90000

(2000, 3072)

(2000,)

[Test ] Step: 55000, acc: 0.82300

[Train] Step: 55500, loss: 0.15405, acc: 0.85000

[Train] Step: 56000, loss: 0.05102, acc: 0.95000

[Train] Step: 56500, loss: 0.00000, acc: 1.00000

[Train] Step: 57000, loss: 0.10003, acc: 0.90000

[Train] Step: 57500, loss: 0.10098, acc: 0.90000

[Train] Step: 58000, loss: 0.15034, acc: 0.85000

[Train] Step: 58500, loss: 0.20014, acc: 0.80000

[Train] Step: 59000, loss: 0.10171, acc: 0.90000

[Train] Step: 59500, loss: 0.20040, acc: 0.80000

[Train] Step: 60000, loss: 0.15008, acc: 0.85000

(2000, 3072)

(2000,)

[Test ] Step: 60000, acc: 0.82450

[Train] Step: 60500, loss: 0.10014, acc: 0.90000

[Train] Step: 61000, loss: 0.05013, acc: 0.95000

[Train] Step: 61500, loss: 0.05019, acc: 0.95000

[Train] Step: 62000, loss: 0.05106, acc: 0.95000

[Train] Step: 62500, loss: 0.31838, acc: 0.70000

[Train] Step: 63000, loss: 0.15213, acc: 0.85000

[Train] Step: 63500, loss: 0.21945, acc: 0.75000

[Train] Step: 64000, loss: 0.00000, acc: 1.00000

[Train] Step: 64500, loss: 0.14999, acc: 0.85000

[Train] Step: 65000, loss: 0.20631, acc: 0.80000

(2000, 3072)

(2000,)

[Test ] Step: 65000, acc: 0.82650

[Train] Step: 65500, loss: 0.10079, acc: 0.90000

[Train] Step: 66000, loss: 0.10001, acc: 0.90000

[Train] Step: 66500, loss: 0.10000, acc: 0.90000

[Train] Step: 67000, loss: 0.05000, acc: 0.95000

[Train] Step: 67500, loss: 0.06823, acc: 0.90000

[Train] Step: 68000, loss: 0.25001, acc: 0.75000

[Train] Step: 68500, loss: 0.24419, acc: 0.75000

[Train] Step: 69000, loss: 0.15000, acc: 0.85000

[Train] Step: 69500, loss: 0.15000, acc: 0.85000

[Train] Step: 70000, loss: 0.15198, acc: 0.85000

(2000, 3072)

(2000,)

[Test ] Step: 70000, acc: 0.82400

[Train] Step: 70500, loss: 0.13796, acc: 0.85000

[Train] Step: 71000, loss: 0.15036, acc: 0.85000

[Train] Step: 71500, loss: 0.24917, acc: 0.75000

[Train] Step: 72000, loss: 0.00000, acc: 1.00000

[Train] Step: 72500, loss: 0.16025, acc: 0.85000

[Train] Step: 73000, loss: 0.05124, acc: 0.95000

[Train] Step: 73500, loss: 0.05395, acc: 0.95000

[Train] Step: 74000, loss: 0.15262, acc: 0.85000

[Train] Step: 74500, loss: 0.11369, acc: 0.90000

[Train] Step: 75000, loss: 0.20410, acc: 0.80000

(2000, 3072)

(2000,)

[Test ] Step: 75000, acc: 0.82600

[Train] Step: 75500, loss: 0.20000, acc: 0.80000

[Train] Step: 76000, loss: 0.15000, acc: 0.85000

[Train] Step: 76500, loss: 0.15020, acc: 0.85000

[Train] Step: 77000, loss: 0.15000, acc: 0.85000

[Train] Step: 77500, loss: 0.15294, acc: 0.85000

[Train] Step: 78000, loss: 0.15832, acc: 0.85000

[Train] Step: 78500, loss: 0.25277, acc: 0.75000

[Train] Step: 79000, loss: 0.20001, acc: 0.80000

[Train] Step: 79500, loss: 0.12471, acc: 0.85000

[Train] Step: 80000, loss: 0.01566, acc: 1.00000

(2000, 3072)

(2000,)

[Test ] Step: 80000, acc: 0.82450

[Train] Step: 80500, loss: 0.14999, acc: 0.85000

[Train] Step: 81000, loss: 0.20000, acc: 0.80000

[Train] Step: 81500, loss: 0.10390, acc: 0.90000

[Train] Step: 82000, loss: 0.19998, acc: 0.80000

[Train] Step: 82500, loss: 0.20066, acc: 0.80000

[Train] Step: 83000, loss: 0.20394, acc: 0.80000

[Train] Step: 83500, loss: 0.10256, acc: 0.90000

[Train] Step: 84000, loss: 0.15013, acc: 0.85000

[Train] Step: 84500, loss: 0.15000, acc: 0.85000

[Train] Step: 85000, loss: 0.15002, acc: 0.85000

(2000, 3072)

(2000,)

[Test ] Step: 85000, acc: 0.82300

[Train] Step: 85500, loss: 0.10160, acc: 0.90000

[Train] Step: 86000, loss: 0.15183, acc: 0.85000

[Train] Step: 86500, loss: 0.10011, acc: 0.90000

[Train] Step: 87000, loss: 0.10062, acc: 0.90000

[Train] Step: 87500, loss: 0.10850, acc: 0.90000

[Train] Step: 88000, loss: 0.05128, acc: 0.95000

[Train] Step: 88500, loss: 0.19986, acc: 0.80000

[Train] Step: 89000, loss: 0.20000, acc: 0.80000

[Train] Step: 89500, loss: 0.20552, acc: 0.80000

[Train] Step: 90000, loss: 0.20248, acc: 0.80000

(2000, 3072)

(2000,)

[Test ] Step: 90000, acc: 0.82850

[Train] Step: 90500, loss: 0.15013, acc: 0.85000

[Train] Step: 91000, loss: 0.10157, acc: 0.90000

[Train] Step: 91500, loss: 0.05001, acc: 0.95000

[Train] Step: 92000, loss: 0.19992, acc: 0.80000

[Train] Step: 92500, loss: 0.15054, acc: 0.85000

[Train] Step: 93000, loss: 0.15037, acc: 0.85000

[Train] Step: 93500, loss: 0.05008, acc: 0.95000

[Train] Step: 94000, loss: 0.10047, acc: 0.90000

[Train] Step: 94500, loss: 0.20108, acc: 0.80000

[Train] Step: 95000, loss: 0.00000, acc: 1.00000

(2000, 3072)

(2000,)

[Test ] Step: 95000, acc: 0.82550

[Train] Step: 95500, loss: 0.12996, acc: 0.85000

[Train] Step: 96000, loss: 0.04520, acc: 0.95000

[Train] Step: 96500, loss: 0.12607, acc: 0.85000

[Train] Step: 97000, loss: 0.18041, acc: 0.80000

[Train] Step: 97500, loss: 0.10482, acc: 0.90000

[Train] Step: 98000, loss: 0.20055, acc: 0.80000

[Train] Step: 98500, loss: 0.10218, acc: 0.90000

[Train] Step: 99000, loss: 0.20037, acc: 0.80000

[Train] Step: 99500, loss: 0.15000, acc: 0.85000

[Train] Step: 100000, loss: 0.05154, acc: 0.95000

(2000, 3072)

(2000,)

[Test ] Step: 100000, acc: 0.82550

第2章_神经网络入门_2-5&2-6 数据处理与模型图构建的更多相关文章

- ArcGIS for Desktop入门教程_第七章_使用ArcGIS进行空间分析 - ArcGIS知乎-新一代ArcGIS问答社区

原文:ArcGIS for Desktop入门教程_第七章_使用ArcGIS进行空间分析 - ArcGIS知乎-新一代ArcGIS问答社区 1 使用ArcGIS进行空间分析 1.1 GIS分析基础 G ...

- ArcGIS for Desktop入门教程_第六章_用ArcMap制作地图 - ArcGIS知乎-新一代ArcGIS问答社区

原文:ArcGIS for Desktop入门教程_第六章_用ArcMap制作地图 - ArcGIS知乎-新一代ArcGIS问答社区 1 用ArcMap制作地图 作为ArcGIS for Deskto ...

- ArcGIS for Desktop入门教程_第四章_入门案例分析 - ArcGIS知乎-新一代ArcGIS问答社区

原文:ArcGIS for Desktop入门教程_第四章_入门案例分析 - ArcGIS知乎-新一代ArcGIS问答社区 1 入门案例分析 在第一章里,我们已经对ArcGIS系列软件的体系结构有了一 ...

- ArcGIS for Desktop入门教程_第一章_引言 - ArcGIS知乎-新一代ArcGIS问答社区

原文:ArcGIS for Desktop入门教程_第一章_引言 - ArcGIS知乎-新一代ArcGIS问答社区 1 引言 1.1 读者定位 我们假设用户在阅读本指南前应已具备以下知识: · 熟悉W ...

- 第十四章——循环神经网络(Recurrent Neural Networks)(第一部分)

由于本章过长,分为两个部分,这是第一部分. 这几年提到RNN,一般指Recurrent Neural Networks,至于翻译成循环神经网络还是递归神经网络都可以.wiki上面把Recurrent ...

- 第十四章——循环神经网络(Recurrent Neural Networks)(第二部分)

本章共两部分,这是第二部分: 第十四章--循环神经网络(Recurrent Neural Networks)(第一部分) 第十四章--循环神经网络(Recurrent Neural Networks) ...

- 人工神经网络入门(4) —— AFORGE.NET简介

范例程序下载:http://files.cnblogs.com/gpcuster/ANN3.rar如果您有疑问,可以先参考 FAQ 如果您未找到满意的答案,可以在下面留言:) 0 目录人工神经网络入门 ...

- C Primer Plus_第6章_循环_编程练习

1.题略 #include int main(void) { int i; char ch[26]; for (i = 97; i <= (97+25); i++) { ch[i-97] = i ...

- C Primer Plus_第5章_运算符、表达式和语句_编程练习

Practice 1. 输入分钟输出对应的小时和分钟. #include #define MIN_PER_H 60 int main(void) { int mins, hours, minutes; ...

随机推荐

- docker 安装es跟kibana

首先docker 查询es docker search elasticsearch 在docker pull elasticsearch:7.9.3 docker在查询 kibana docker ...

- 职场PUA,管理者的五宗罪

在目前的社会环境下,程序员似乎成了"弱势群体".我们经常谈论的职场PUA已经成为程序员的代名词. 我一直在想,为什么这么多管理者能力会这么差. 但最后最吃亏的还是可怜的程序员. 也 ...

- Python 中更优雅的日志记录方案

在 Python 中,一般情况下我们可能直接用自带的 logging 模块来记录日志,包括我之前的时候也是一样.在使用时我们需要配置一些 Handler.Formatter 来进行一些处理,比如把日志 ...

- 如何在Python中处理不平衡数据

Index1.到底什么是不平衡数据2.处理不平衡数据的理论方法3.Python里有什么包可以处理不平衡样本4.Python中具体如何处理失衡样本印象中很久之前有位朋友说要我写一篇如何处理不平衡数据的文 ...

- python之列表操作的几个函数

Python中的列表是可变的,这是它却别于元组和字符串最重要的特点,元组和字符串的元素不可修改.列举一些常用的列表操作的函数和方法. 1,list.append(x),将x追加到列表list末尾: 1 ...

- C# 汉字转拼音 取汉字拼音的首字母

using System.Text.RegularExpressions; namespace DotNet.Utilities { /// <summary> /// 汉字转拼音类 // ...

- java 常用时间操作类,计算到期提醒,N年后,N月后的日期

package com.zjjerp.tool; import java.text.ParseException; import java.text.ParsePosition; import jav ...

- sklearn中的SGDClassifier

常用于大规模稀疏机器学习问题上 1.优点: 高效 简单 2.可以选择损失函数 loss="hinge": (soft-margin)线性SVM. loss="modifi ...

- Ubuntu安装最新的SlickEdit软件--破解教程

最近主要工作系统转到LInux上面来了,Slickedit的安装破解也费了些事,今天将过程整理一下做个记录. 说明:SlickEdit pro V21.03 Linux 64位实测可用,MAC实测可用 ...

- Java IO流 FileOutputStream、FileInputStream的用法

FileOutputStream.FileInputStream的使用 FileOutputStream是OutputStream的继承类,它的主要功能就是向磁盘上写文件.FileOutputStre ...