Adding the Simplest All-Pass Layer, AllPassLayer, to Caffe

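Following Zhao Yongke's blog, we implement a new layer here called AllPassLayer. As the name suggests, it is an "all-pass" layer. The term is borrowed from the all-pass filter in signal processing, which passes every frequency with unchanged amplitude; this layer takes the idea to the extreme and passes its input to its output without any change.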

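Admittedly, this layer is useless by itself, but it is a very easy starting point for adding your own processing. It also suits experimentation: its Forward/Backward functions are so simple that no background in advanced math or differentiation is required. You can insert it into any existing network without affecting the accuracy of training or prediction.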
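To make "all-pass" concrete: the forward pass copies the input to the output (y = x), and because ∂y_i/∂x_i = 1, the backward pass copies the output gradient straight back to the input gradient. The sketch below shows only this arithmetic on plain `std::vector<float>` buffers. The function names are hypothetical and no Caffe API is used.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Forward pass of an all-pass (identity) layer: y_i = x_i.
// Like Caffe's Forward_cpu, it writes into a preallocated output buffer.
void all_pass_forward(const std::vector<float>& bottom_data,
                      std::vector<float>& top_data) {
  assert(top_data.size() == bottom_data.size());
  for (std::size_t i = 0; i < bottom_data.size(); ++i) {
    top_data[i] = bottom_data[i];
  }
}

// Backward pass: dy_i/dx_i = 1 (and dy_i/dx_j = 0 for j != i), so by the
// chain rule dL/dx_i = dL/dy_i and the top gradient is copied unchanged.
void all_pass_backward(const std::vector<float>& top_diff,
                       std::vector<float>& bottom_diff) {
  assert(bottom_diff.size() == top_diff.size());
  for (std::size_t i = 0; i < top_diff.size(); ++i) {
    bottom_diff[i] = top_diff[i];
  }
}
```

A layer that does real work would replace each copy with its own element-wise function y_i = f(x_i) and, in the backward pass, bottom_diff[i] = f'(x_i) * top_diff[i].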

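First, as with any ordinary layer class, split your implementation into a declaration and an implementation, placed in a .hpp file and in .cpp/.cu files respectively. Give the layer a new name that distinguishes it from the layers shipped with Caffe. Put the .hpp file in $CAFFE_ROOT/include/caffe/layers/ and the .cpp and .cu files in $CAFFE_ROOT/src/caffe/layers/. When you then run make in $CAFFE_ROOT, these files are added to the build automatically, which spares you from setting compile options by hand.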

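Second, add a new field to LayerParameter in $CAFFE_ROOT/src/caffe/proto/caffe.proto. This lets you describe the new layer in train.prototxt, test.prototxt, or deploy.prototxt, so you can easily change the network structure or swap the new layer in for another layer with the same function.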

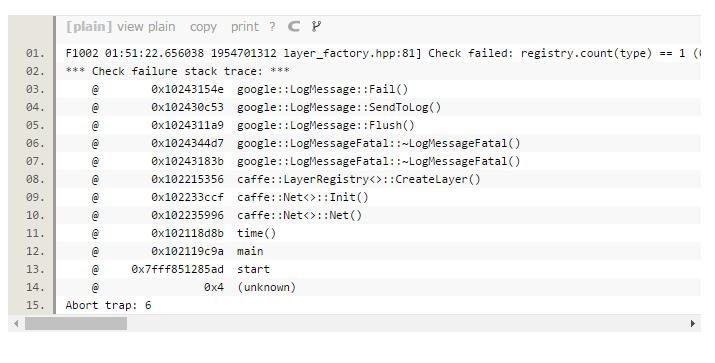

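Last, and most easily overlooked: register a creator function for the new layer with the layer factory. Otherwise Caffe fails at run time with an error saying the layer type is unknown.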
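Why registration matters: Caffe creates each layer by looking up the `type` string from the prototxt in a table of creator functions, and a static object defined by the registration macro fills that table when the program starts. The sketch below is a simplified, standalone model of that idea, not Caffe's actual `LayerRegistry` code; `Registry`, `Registerer`, `CreateLayer`, and the layer classes here are hypothetical.

```cpp
#include <cassert>
#include <functional>
#include <map>
#include <memory>
#include <stdexcept>
#include <string>
#include <utility>

// Minimal stand-in for a layer base class.
struct Layer {
  virtual ~Layer() = default;
  virtual std::string type() const = 0;
};

using Creator = std::function<std::unique_ptr<Layer>()>;

// Global table mapping a type string to a creator function.
// A function-local static avoids static initialization order problems.
std::map<std::string, Creator>& Registry() {
  static std::map<std::string, Creator> registry;
  return registry;
}

// Defining one of these at namespace scope registers a creator before main() runs.
struct Registerer {
  Registerer(const std::string& type, Creator creator) {
    assert(Registry().count(type) == 0 && "layer type registered twice");
    Registry()[type] = std::move(creator);
  }
};

// Looks up the type string, as the factory does for each prototxt layer.
std::unique_ptr<Layer> CreateLayer(const std::string& type) {
  auto it = Registry().find(type);
  if (it == Registry().end()) {
    throw std::runtime_error("Unknown layer type: " + type);
  }
  return it->second();
}

struct AllPassLayer : Layer {
  std::string type() const override { return "AllPass"; }
};

// Remove this registration and CreateLayer("AllPass") throws.
static Registerer g_all_pass_registerer(
    "AllPass", [] { return std::unique_ptr<Layer>(new AllPassLayer()); });
```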

First, the header file (all_pass_layer.hpp):

```cpp
#ifndef CAFFE_ALL_PASS_LAYER_HPP_
#define CAFFE_ALL_PASS_LAYER_HPP_

#include <vector>

#include "caffe/blob.hpp"
#include "caffe/layer.hpp"
#include "caffe/proto/caffe.pb.h"

#include "caffe/layers/neuron_layer.hpp"

namespace caffe {

// AllPassLayer: copies its single input blob to its output unchanged.
template <typename Dtype>
class AllPassLayer : public NeuronLayer<Dtype> {
 public:
  explicit AllPassLayer(const LayerParameter& param)
      : NeuronLayer<Dtype>(param) {}

  // Type string; kept identical to the name passed to REGISTER_LAYER_CLASS.
  virtual inline const char* type() const { return "AllPass"; }

 protected:
  virtual void Forward_cpu(const vector<Blob<Dtype>*>& bottom,
      const vector<Blob<Dtype>*>& top);
  virtual void Forward_gpu(const vector<Blob<Dtype>*>& bottom,
      const vector<Blob<Dtype>*>& top);
  virtual void Backward_cpu(const vector<Blob<Dtype>*>& top,
      const vector<bool>& propagate_down, const vector<Blob<Dtype>*>& bottom);
  virtual void Backward_gpu(const vector<Blob<Dtype>*>& top,
      const vector<bool>& propagate_down, const vector<Blob<Dtype>*>& bottom);
};

}  // namespace caffe

#endif  // CAFFE_ALL_PASS_LAYER_HPP_
```

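Next, the source file (all_pass_layer.cpp). At first I did not know the difference between all_pass_layer.cpp and all_pass_layer.cu and simply copy-pasted the same content into both. In Caffe, however, the .cpp file holds the CPU implementation and the .cu file holds the CUDA (GPU) implementation, which is compiled only when CPU_ONLY is off. Since this example implements only the CPU path, no .cu file is needed; a .cu copy of the .cpp would define and register the same layer twice and most likely break a GPU build.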

```cpp
#include <iostream>
#include <vector>

#include "caffe/layers/all_pass_layer.hpp"

// Debug helper: print a value or message to stdout.
#define DEBUG_AP(str) std::cout << str << std::endl

namespace caffe {

template <typename Dtype>
void AllPassLayer<Dtype>::Forward_cpu(const vector<Blob<Dtype>*>& bottom,
    const vector<Blob<Dtype>*>& top) {
  const Dtype* bottom_data = bottom[0]->cpu_data();  // cpu_data(): read-only access to CPU data
  Dtype* top_data = top[0]->mutable_cpu_data();      // mutable_cpu_data(): read/write access to CPU data
  const int count = bottom[0]->count();              // total number of elements in the Blob
  for (int i = 0; i < count; ++i) {
    top_data[i] = bottom_data[i];  // simply pass the value through: all-pass
  }
  DEBUG_AP("Here is All Pass Layer, forwarding.");
  DEBUG_AP(this->layer_param_.all_pass_param().key());  // value preset in the prototxt
}

template <typename Dtype>
void AllPassLayer<Dtype>::Backward_cpu(const vector<Blob<Dtype>*>& top,
    const vector<bool>& propagate_down, const vector<Blob<Dtype>*>& bottom) {
  // propagate_down[i] is true when a gradient must be computed for bottom[i].
  // This layer has a single bottom, so only propagate_down[0] is checked.
  if (propagate_down[0]) {
    const Dtype* top_diff = top[0]->cpu_diff();
    Dtype* bottom_diff = bottom[0]->mutable_cpu_diff();
    const int count = bottom[0]->count();
    for (int i = 0; i < count; ++i) {
      bottom_diff[i] = top_diff[i];  // identity map: dL/dx = dL/dy
    }
  }
  DEBUG_AP("Here is All Pass Layer, backwarding.");
  DEBUG_AP(this->layer_param_.all_pass_param().key());
}

#ifdef CPU_ONLY
// Defines Forward_gpu/Backward_gpu as stubs that abort if they are ever called.
STUB_GPU(AllPassLayer);
#endif

INSTANTIATE_CLASS(AllPassLayer);
REGISTER_LAYER_CLASS(AllPass);

}  // namespace caffe
```
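On `propagate_down`: its i-th entry says whether a gradient should be propagated to `bottom[i]`; it does not select between weight and bias gradients. The all-pass layer has one bottom, so only the first entry matters. The standalone sketch below shows the general pattern for a hypothetical element-wise sum layer y = x_0 + x_1 + ..., using plain vectors instead of Caffe `Blob`s.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Backward pass of a hypothetical element-wise sum layer y = x_0 + x_1 + ...
// For a sum, dL/dx_k = dL/dy for every input k.
// propagate_down[k] decides whether bottom k receives a gradient at all.
void sum_backward(const std::vector<float>& top_diff,
                  const std::vector<bool>& propagate_down,
                  std::vector<std::vector<float>>& bottom_diffs) {
  assert(propagate_down.size() == bottom_diffs.size());
  for (std::size_t k = 0; k < bottom_diffs.size(); ++k) {
    if (!propagate_down[k]) {
      continue;  // skip this bottom and leave its diff untouched
    }
    assert(bottom_diffs[k].size() == top_diff.size());
    for (std::size_t i = 0; i < top_diff.size(); ++i) {
      bottom_diffs[k][i] = top_diff[i];
    }
  }
}
```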

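Due to time constraints, I have not implemented GPU-mode forward and backward functions, so this example supports only CPU_ONLY mode (CPU_ONLY := 1 in Makefile.config).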
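A note on CPU-only layers: Caffe's `Layer` base class (in `caffe/layer.hpp`) gives `Forward_gpu`/`Backward_gpu` default implementations that simply call the CPU versions. A layer that does not declare the GPU functions at all should therefore still run, on the CPU, in a GPU build; declaring them without a definition, as the header above does, is what ties this example to CPU_ONLY mode. The standalone sketch below models only that virtual-dispatch fallback; `LayerBase` and `CpuOnlyAllPass` are hypothetical names, not Caffe classes.

```cpp
#include <cassert>
#include <string>
#include <vector>

// Simplified model of a layer base class whose GPU entry point falls back
// to the CPU implementation unless a subclass overrides it.
class LayerBase {
 public:
  virtual ~LayerBase() = default;

  // Dispatches to the CPU or GPU path; returns which implementation ran.
  std::string Forward(bool use_gpu, const std::vector<float>& bottom,
                      std::vector<float>& top) {
    return use_gpu ? Forward_gpu(bottom, top) : Forward_cpu(bottom, top);
  }

 protected:
  virtual std::string Forward_cpu(const std::vector<float>& bottom,
                                  std::vector<float>& top) = 0;

  // Default GPU path: reuse the CPU code.
  virtual std::string Forward_gpu(const std::vector<float>& bottom,
                                  std::vector<float>& top) {
    return Forward_cpu(bottom, top);
  }
};

// Overrides only the CPU path, like an all-pass layer without a .cu file.
class CpuOnlyAllPass : public LayerBase {
 protected:
  std::string Forward_cpu(const std::vector<float>& bottom,
                          std::vector<float>& top) override {
    top = bottom;  // identity copy
    return "cpu";
  }
};
```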

Edit caffe.proto, find the LayerParameter message, and add one field at the end:

```protobuf
message LayerParameter {
  optional string name = 1; // the layer name
  optional string type = 2; // the layer type
  repeated string bottom = 3; // the name of each bottom blob
  repeated string top = 4; // the name of each top blob

  // The train / test phase for computation.
  optional Phase phase = 10;

  // The amount of weight to assign each top blob in the objective.
  // Each layer assigns a default value, usually of either 0 or 1,
  // to each top blob.
  repeated float loss_weight = 5;

  // Specifies training parameters (multipliers on global learning constants,
  // and the name and other settings used for weight sharing).
  repeated ParamSpec param = 6;

  // The blobs containing the numeric parameters of the layer.
  repeated BlobProto blobs = 7;

  // Specifies on which bottoms the backpropagation should be skipped.
  // The size must be either 0 or equal to the number of bottoms.
  repeated bool propagate_down = 11;

  // Rules controlling whether and when a layer is included in the network,
  // based on the current NetState. You may specify a non-zero number of rules
  // to include OR exclude, but not both. If no include or exclude rules are
  // specified, the layer is always included. If the current NetState meets
  // ANY (i.e., one or more) of the specified rules, the layer is
  // included/excluded.
  repeated NetStateRule include = 8;
  repeated NetStateRule exclude = 9;

  // Parameters for data pre-processing.
  optional TransformationParameter transform_param = 100;

  // Parameters shared by loss layers.
  optional LossParameter loss_param = 101;

  // Layer type-specific parameters.
  //
  // Note: certain layers may have more than one computational engine
  // for their implementation. These layers include an Engine type and
  // engine parameter for selecting the implementation.
  // The default for the engine is set by the ENGINE switch at compile-time.
  optional AccuracyParameter accuracy_param = 102;
  optional ArgMaxParameter argmax_param = 103;
  optional BatchNormParameter batch_norm_param = 139;
  optional BiasParameter bias_param = 141;
  optional ConcatParameter concat_param = 104;
  optional ContrastiveLossParameter contrastive_loss_param = 105;
  optional ConvolutionParameter convolution_param = 106;
  optional CropParameter crop_param = 144;
  optional DataParameter data_param = 107;
  optional DropoutParameter dropout_param = 108;
  optional DummyDataParameter dummy_data_param = 109;
  optional EltwiseParameter eltwise_param = 110;
  optional ELUParameter elu_param = 140;
  optional EmbedParameter embed_param = 137;
  optional ExpParameter exp_param = 111;
  optional FlattenParameter flatten_param = 135;
  optional HDF5DataParameter hdf5_data_param = 112;
  optional HDF5OutputParameter hdf5_output_param = 113;
  optional HingeLossParameter hinge_loss_param = 114;
  optional ImageDataParameter image_data_param = 115;
  optional InfogainLossParameter infogain_loss_param = 116;
  optional InnerProductParameter inner_product_param = 117;
  optional InputParameter input_param = 143;
  optional LogParameter log_param = 134;
  optional LRNParameter lrn_param = 118;
  optional MemoryDataParameter memory_data_param = 119;
  optional MVNParameter mvn_param = 120;
  optional PoolingParameter pooling_param = 121;
  optional PowerParameter power_param = 122;
  optional PReLUParameter prelu_param = 131;
  optional PythonParameter python_param = 130;
  optional ReductionParameter reduction_param = 136;
  optional ReLUParameter relu_param = 123;
  optional ReshapeParameter reshape_param = 133;
  optional ScaleParameter scale_param = 142;
  optional SigmoidParameter sigmoid_param = 124;
  optional SoftmaxParameter softmax_param = 125;
  optional SPPParameter spp_param = 132;
  optional SliceParameter slice_param = 126;
  optional TanHParameter tanh_param = 127;
  optional ThresholdParameter threshold_param = 128;
  optional TileParameter tile_param = 138;
  optional WindowDataParameter window_data_param = 129;

  // Added for AllPassLayer. 155 is only an example field number:
  // use any number not already taken by another LayerParameter field.
  optional AllPassParameter all_pass_param = 155;
}
```

Note that the new field number must not duplicate a number already used by another field in LayerParameter.

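Still in caffe.proto, add the AllPassParameter message; its position in the file does not matter. I defined a single parameter, key, which lets the layer read a preset value from the prototxt.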

```protobuf
message AllPassParameter {
  // Value used when the prototxt omits key (0 here is an example default).
  optional float key = 1 [default = 0];
}
```

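In the layer code, the expression `this->layer_param_.all_pass_param().key()` reads this preset value from the prototxt.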
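For a proto2 `optional` field with a `[default = ...]`, the C++ code generated by `protoc` provides `key()`, `has_key()`, and `set_key()`, and `key()` returns the default when the prototxt does not set the field. The class below is a hand-written, simplified stand-in for that generated code (not the real `caffe.pb.h`); `AllPassParameterModel` is a hypothetical name and the default of 0 is assumed for illustration.

```cpp
#include <cassert>

// Hand-written stand-in for the protoc-generated accessors of
//   message AllPassParameter { optional float key = 1 [default = 0]; }
class AllPassParameterModel {
 public:
  bool has_key() const { return has_key_; }

  // Returns the stored value, or the default (assumed 0) if never set.
  float key() const { return has_key_ ? key_ : 0.0f; }

  void set_key(float value) {
    key_ = value;
    has_key_ = true;
  }

 private:
  float key_ = 0.0f;
  bool has_key_ = false;
};
```

Inside the layer, `this->layer_param_.all_pass_param().key()` plays the role of `key()` here: it yields 12.88 when the prototxt sets `key: 12.88`, and the default otherwise.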

Run make clean in $CAFFE_ROOT, then run make all again. To get a successful build on the first try, write your code carefully and stay alert to common mistakes.

Everything is in place except the prototxt.

That part is easy. Let's write the simplest possible deploy.prototxt, with no data layer and no softmax layer, just for fun.

```
name: "AllPassTest"
layer {
  name: "data"
  type: "Input"
  top: "data"
  # Input shape N, C, H, W; these sizes are example values, any positive sizes work.
  input_param { shape: { dim: 10 dim: 3 dim: 227 dim: 227 } }
}
layer {
  name: "ap"
  type: "AllPass"
  bottom: "data"
  top: "conv1"
  all_pass_param {
    key: 12.88
  }
}
```

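Note: the value after `type:` must be the name of the new class declared in the .hpp file with the trailing `Layer` removed (here `AllPass` for `AllPassLayer`), which is also the name passed to REGISTER_LAYER_CLASS.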
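Why the name drops the `Layer` suffix: Caffe's `REGISTER_LAYER_CLASS(type)` macro uses the preprocessor both to turn its argument into a string (`#type`, the name matched against the prototxt) and to paste `type##Layer` into the class name it instantiates. The standalone macro below, `DEMO_REGISTER` (hypothetical, not part of Caffe), demonstrates just those two preprocessor operations.

```cpp
#include <cassert>
#include <string>

class AllPassLayer {
 public:
  std::string name() const { return "AllPassLayer"; }
};

// #type turns the macro argument into a string literal ("AllPass");
// type##Layer pastes the argument and "Layer" into one identifier (AllPassLayer).
#define DEMO_REGISTER(type)                                   \
  std::string demo_prototxt_type_##type() { return #type; }  \
  type##Layer demo_make_##type() { return type##Layer(); }

DEMO_REGISTER(AllPass)
```

Passing `AllPassLayer` to such a macro would instead look for a class named `AllPassLayerLayer`, which is why the registered name, and therefore the prototxt `type`, is the class name without `Layer`.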

The prototxt above sets the key parameter to 12.88. Yes, you thought of Liu Xiang and his 110 m hurdles record, didn't you?

To check that the layer can be created and can execute forward and backward, run the caffe time command on the prototxt we just wrote:

```
$ ./build/tools/caffe.bin time -model deploy.prototxt
I1002 ::41.667682 caffe.cpp:] Use CPU.
I1002 ::41.671360 net.cpp:] Initializing net from parameters:
name: "AllPassTest"
state {
  phase: TRAIN
}
layer {
  name: "data"
  type: "Input"
  top: "data"
  input_param {
    shape {
      dim:
      dim:
      dim:
      dim:
    }
  }
}
layer {
  name: "ap"
  type: "AllPass"
  bottom: "data"
  top: "conv1"
  all_pass_param {
    key: 12.88
  }
}
I1002 ::41.671463 layer_factory.hpp:] Creating layer data
I1002 ::41.671484 net.cpp:] Creating Layer data
I1002 ::41.671499 net.cpp:] data -> data
I1002 ::41.671555 net.cpp:] Setting up data
I1002 ::41.671566 net.cpp:] Top shape: ()
I1002 ::41.671592 net.cpp:] Memory required for data:
I1002 ::41.671605 layer_factory.hpp:] Creating layer ap
I1002 ::41.671620 net.cpp:] Creating Layer ap
I1002 ::41.671630 net.cpp:] ap <- data
I1002 ::41.671644 net.cpp:] ap -> conv1
I1002 ::41.671663 net.cpp:] Setting up ap
I1002 ::41.671674 net.cpp:] Top shape: ()
I1002 ::41.671685 net.cpp:] Memory required for data:
I1002 ::41.671695 net.cpp:] ap does not need backward computation.
I1002 ::41.671705 net.cpp:] data does not need backward computation.
I1002 ::41.671710 net.cpp:] This network produces output conv1
I1002 ::41.671720 net.cpp:] Network initialization done.
I1002 ::41.671746 caffe.cpp:] Performing Forward
Here is All Pass Layer, forwarding.
12.88
I1002 ::41.679689 caffe.cpp:] Initial loss:
I1002 ::41.679714 caffe.cpp:] Performing Backward
I1002 ::41.679738 caffe.cpp:] *** Benchmark begins ***
I1002 ::41.679746 caffe.cpp:] Testing for iterations.
Here is All Pass Layer, forwarding.
12.88
Here is All Pass Layer, backwarding.
12.88
I1002 ::41.681139 caffe.cpp:] Iteration: forward-backward time: ms.
Here is All Pass Layer, forwarding.
12.88
Here is All Pass Layer, backwarding.
12.88
I1002 ::41.682394 caffe.cpp:] Iteration: forward-backward time: ms.
...
Here is All Pass Layer, forwarding.
12.88
Here is All Pass Layer, backwarding.
12.88
I1002 ::41.751124 caffe.cpp:] Iteration: forward-backward time: ms.
I1002 ::41.751147 caffe.cpp:] Average time per layer:
I1002 ::41.751157 caffe.cpp:] data forward: 0.00108 ms.
I1002 ::41.751183 caffe.cpp:] data backward: 0.001 ms.
I1002 ::41.751194 caffe.cpp:] ap forward: 1.37884 ms.
I1002 ::41.751205 caffe.cpp:] ap backward: 0.01156 ms.
I1002 ::41.751220 caffe.cpp:] Average Forward pass: 1.38646 ms.
I1002 ::41.751231 caffe.cpp:] Average Backward pass: 0.0144 ms.
I1002 ::41.751240 caffe.cpp:] Average Forward-Backward: 1.42 ms.
I1002 ::41.751250 caffe.cpp:] Total Time: ms.
I1002 ::41.751260 caffe.cpp:] *** Benchmark ends ***
```

The output shows that the layer is created, loads its preset parameter, and executes its forward and backward functions as expected.

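For a layer that implements an actual algorithm, you should also write a test case to verify that it works correctly. Since we chose an extremely simple all-pass layer, that step can be skipped here: I get to be a bit lazy, and you save some reading time.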
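If you do want a test, the usual idea (Caffe's own layer tests use a gradient checker for this) is to compare the analytic backward pass against a numerical finite-difference estimate. The standalone sketch below does this for the identity map on plain vectors. The loss L = Σ w_i·y_i with fixed weights w is a hypothetical choice that makes dL/dy = w known, and the step size and tolerance are assumptions; none of the function names come from Caffe.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Identity forward pass (y = x).
std::vector<double> identity_forward(const std::vector<double>& x) { return x; }

// Analytic backward pass for the identity: dL/dx = dL/dy.
std::vector<double> identity_backward(const std::vector<double>& top_diff) {
  return top_diff;
}

// Hypothetical scalar loss L(y) = sum_i w_i * y_i, so dL/dy = w.
double weighted_sum_loss(const std::vector<double>& y,
                         const std::vector<double>& w) {
  double sum = 0.0;
  for (std::size_t i = 0; i < y.size(); ++i) sum += w[i] * y[i];
  return sum;
}

// Returns true if the analytic gradient matches a central-difference estimate.
bool gradient_check(std::vector<double> x, const std::vector<double>& w,
                    double step = 1e-4, double tol = 1e-6) {
  assert(x.size() == w.size());
  const std::vector<double> analytic = identity_backward(w);  // top_diff = w
  for (std::size_t i = 0; i < x.size(); ++i) {
    const double original = x[i];
    x[i] = original + step;
    const double loss_plus = weighted_sum_loss(identity_forward(x), w);
    x[i] = original - step;
    const double loss_minus = weighted_sum_loss(identity_forward(x), w);
    x[i] = original;  // restore before checking the next element
    const double numeric = (loss_plus - loss_minus) / (2.0 * step);
    if (std::fabs(numeric - analytic[i]) > tol) return false;
  }
  return true;
}
```

For a non-trivial layer, only `identity_forward`/`identity_backward` would change; the perturb-and-compare loop stays the same.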

Addendum: after make all, also rebuild the Python interface with make pycaffe, then proceed as described above.

Reference: http://blog.csdn.net/kkk584520/article/details/52721838
