6.824 Lab 2: Raft 2B

Part 2B

We want Raft to keep a consistent, replicated log of operations. A call to Start() at the leader starts the process of adding a new operation to the log; the leader sends the new operation to the other servers in AppendEntries RPCs.

Implement the leader and follower code to append new log entries. This will involve implementing Start(), completing the AppendEntries RPC structs, sending them, fleshing out the AppendEntries RPC handler, and advancing the commitIndex at the leader. Your first goal should be to pass the TestBasicAgree2B() test (in test_test.go). Once you have that working, you should get all the 2B tests to pass (go test -run 2B).
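As an illustration of the last step, advancing commitIndex at the leader, here is a minimal sketch that stands apart from the lab skeleton. The helper names majorityMatch and nextCommitIndex are hypothetical, not part of the lab code. The sketch assumes the log keeps a placeholder entry at index 0 and that matchIndex holds one value per server, including the leader itself.

```go
package main

import (
	"fmt"
	"sort"
)

// LogEntry mirrors the usual lab entry type: a command plus the term in
// which the leader received it.
type LogEntry struct {
	Command interface{}
	Term    int
}

// majorityMatch returns the highest index that a majority of servers have
// replicated, given one matchIndex per server (leader included).
func majorityMatch(matchIndex []int) int {
	sorted := append([]int(nil), matchIndex...) // copy, so the caller's slice is not reordered
	sort.Ints(sorted)
	// In ascending order, the value at position (n-1)/2 is matched or
	// exceeded by at least n/2+1 servers.
	return sorted[(len(sorted)-1)/2]
}

// nextCommitIndex applies the leader's commit rule: advance to N only if
// N > commitIndex, a majority has matchIndex >= N, and log[N].Term equals
// currentTerm. Otherwise commitIndex is returned unchanged.
func nextCommitIndex(log []LogEntry, matchIndex []int, commitIndex, currentTerm int) int {
	n := majorityMatch(matchIndex)
	if n > commitIndex && n < len(log) && log[n].Term == currentTerm {
		return n
	}
	return commitIndex
}

func main() {
	log := []LogEntry{{}, {Command: "a", Term: 1}, {Command: "b", Term: 1}, {Command: "c", Term: 2}}
	fmt.Println(nextCommitIndex(log, []int{3, 3, 1}, 0, 2)) // 3: index 3 is on a majority and from term 2
	fmt.Println(nextCommitIndex(log, []int{3, 2, 1}, 0, 2)) // 0: index 2 is on a majority but from term 1
}
```

Because terms never decrease along the log, checking only the majority index is enough: if that entry is from an older term, every lower index is too.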

- You will need to implement the election restriction (section 5.4.1 in the paper): a server must not grant its vote to a candidate whose log is less up-to-date than its own.

- One way to fail the early Lab 2B tests is to hold un-needed elections, that is, an election even though the current leader is alive and can talk to all peers. This can prevent agreement in situations where the tester believes agreement is possible. Bugs in election timer management, or not sending out heartbeats immediately after winning an election, can cause un-needed elections.

- You may need to write code that waits for certain events to occur. Do not write loops that execute continuously without pausing, since that will slow your implementation enough that it fails tests. You can wait efficiently with Go's channels, or Go's condition variables, or (if all else fails) by inserting a time.Sleep(10 * time.Millisecond) in each loop iteration.

- Give yourself time to rewrite your implementation in light of lessons learned about structuring concurrent code. In later labs you'll thank yourself for having Raft code that's as clear and clean as possible. For ideas, you can re-visit our structure, locking, and guide pages.
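The election restriction from the first hint comes down to a single comparison. The following is a minimal sketch; candidateUpToDate is a hypothetical helper name, and its arguments correspond to LastLogTerm and LastLogIndex in the RequestVote arguments and to the voter's own last log entry.

```go
package main

import "fmt"

// candidateUpToDate reports whether the candidate's log is at least as
// up-to-date as the voter's. Logs are compared by the term of the last
// entry first; if the terms are equal, the longer log wins.
func candidateUpToDate(candLastTerm, candLastIndex, myLastTerm, myLastIndex int) bool {
	if candLastTerm != myLastTerm {
		return candLastTerm > myLastTerm
	}
	return candLastIndex >= myLastIndex
}

func main() {
	fmt.Println(candidateUpToDate(3, 1, 2, 9)) // true: a higher last term beats a longer log
	fmt.Println(candidateUpToDate(2, 4, 2, 5)) // false: same last term, shorter log
	fmt.Println(candidateUpToDate(2, 5, 2, 5)) // true: identical logs count as up-to-date
}
```

A voter would grant its vote only when this returns true and it has not already voted for another candidate in the same term.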

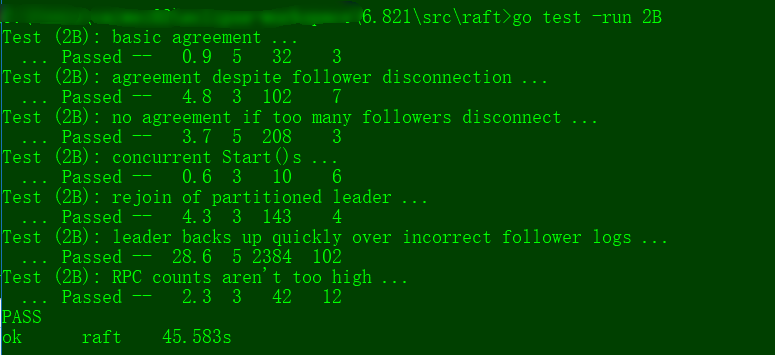

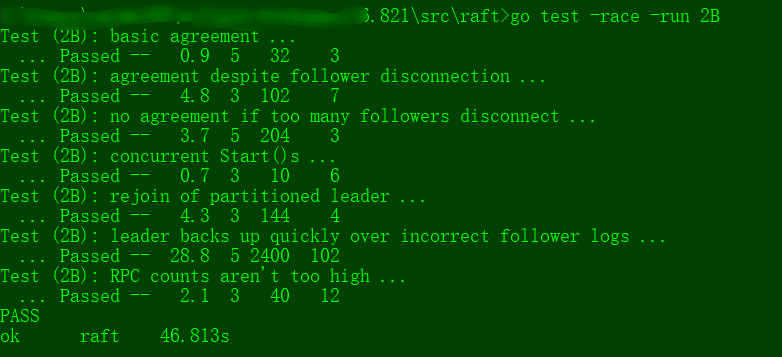

The tests for upcoming labs may fail your code if it runs too slowly. You can check how much real time and CPU time your solution uses with the time command. Here's some typical output for Lab 2B:

$ time go test -run 2B

Test (2B): basic agreement ...

... Passed -- 0.5 5 28 3

Test (2B): agreement despite follower disconnection ...

... Passed -- 3.9 3 69 7

Test (2B): no agreement if too many followers disconnect ...

... Passed -- 3.5 5 144 4

Test (2B): concurrent Start()s ...

... Passed -- 0.7 3 12 6

Test (2B): rejoin of partitioned leader ...

... Passed -- 4.3 3 106 4

Test (2B): leader backs up quickly over incorrect follower logs ...

... Passed -- 23.0 5 1302 102

Test (2B): RPC counts aren't too high ...

... Passed -- 2.2 3 30 12

PASS

ok raft 38.029s

real 0m38.511s

user 0m1.460s

sys 0m0.901s

$

The "ok raft 38.029s" means that Go measured the time taken for the 2B tests to be 38.029 seconds of real (wall-clock) time. The "user 0m1.460s" means that the code consumed 1.460 seconds of CPU time, or time spent actually executing instructions (rather than waiting or sleeping). If your solution uses much more than a minute of real time for the 2B tests, or much more than 5 seconds of CPU time, you may run into trouble later on. Look for time spent sleeping or waiting for RPC timeouts, loops that run without sleeping or waiting for conditions or channel messages, or large numbers of RPCs sent.
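One way to avoid loops that spin without waiting is a condition variable. The sketch below is not taken from the lab code: the applier type and its methods are hypothetical stand-ins for the part of a Raft peer that delivers committed entries. The goroutine that applies entries sleeps in cond.Wait() until commitIndex moves past lastApplied, so it uses no CPU while idle.

```go
package main

import (
	"fmt"
	"sync"
)

// applier is a hypothetical stand-in for the apply side of a Raft peer.
type applier struct {
	mu          sync.Mutex
	cond        *sync.Cond
	commitIndex int
	lastApplied int
}

func newApplier() *applier {
	a := &applier{}
	a.cond = sync.NewCond(&a.mu)
	return a
}

// setCommitIndex records a larger commit index and wakes any waiter.
func (a *applier) setCommitIndex(n int) {
	a.mu.Lock()
	defer a.mu.Unlock()
	if n > a.commitIndex {
		a.commitIndex = n
		a.cond.Broadcast()
	}
}

// nextBatch blocks until at least one entry is committed but not yet
// applied, then returns the inclusive index range to apply and marks
// that range as applied.
func (a *applier) nextBatch() (from, to int) {
	a.mu.Lock()
	defer a.mu.Unlock()
	for a.lastApplied >= a.commitIndex {
		a.cond.Wait() // releases mu while sleeping, reacquires it on wake-up
	}
	from, to = a.lastApplied+1, a.commitIndex
	a.lastApplied = to
	return from, to
}

func main() {
	a := newApplier()
	done := make(chan [2]int)
	go func() {
		from, to := a.nextBatch()
		done <- [2]int{from, to}
	}()
	a.setCommitIndex(3)
	fmt.Println(<-done) // [1 3]
}
```

The wait is wrapped in a for loop rather than an if, because the condition has to be rechecked after every wake-up.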

Be sure you pass the 2A and 2B tests before submitting Part 2B.

My homework code:

package raft

//

// this is an outline of the API that raft must expose to

// the service (or tester). see comments below for

// each of these functions for more details.

//

// rf = Make(...)

// create a new Raft server.

// rf.Start(command interface{}) (index, term, isleader)

// start agreement on a new log entry

// rf.GetState() (term, isLeader)

// ask a Raft for its current term, and whether it thinks it is leader

// ApplyMsg

// each time a new entry is committed to the log, each Raft peer

// should send an ApplyMsg to the service (or tester)

// in the same server.

//

import (

"labrpc"

"math/rand"

"sync"

"time"

)

// import "bytes"

// import "labgob"

//

// as each Raft peer becomes aware that successive log entries are

// committed, the peer should send an ApplyMsg to the service (or

// tester) on the same server, via the applyCh passed to Make(). set

// CommandValid to true to indicate that the ApplyMsg contains a newly

// committed log entry.

//

// in Lab 3 you'll want to send other kinds of messages (e.g.,

// snapshots) on the applyCh; at that point you can add fields to

// ApplyMsg, but set CommandValid to false for these other uses.

//

type ApplyMsg struct {

CommandValid bool

Command interface{}

CommandIndex int

}

type LogEntry struct {

Command interface{}

Term int

}

const (

Follower int = 1

Candidate int = 2

Leader int = 3

HEART_BEAT_TIMEOUT = 100 // heartbeat interval in ms; the lab allows at most 10 heartbeats per second, so one every 100 ms

)

//

// A Go object implementing a single Raft peer.

//

type Raft struct {

mu sync.Mutex // Lock to protect shared access to this peer's state

peers []*labrpc.ClientEnd // RPC end points of all peers

persister *Persister // Object to hold this peer's persisted state

me int // this peer's index into peers[]

// Your data here (2A, 2B, 2C).

// Look at the paper's Figure 2 for a description of what

// state a Raft server must maintain.

electionTimer *time.Timer // election timer

heartbeatTimer *time.Timer // heartbeat timer

state int // current role: Follower, Candidate, or Leader

voteCount int // votes received in the current election

applyCh chan ApplyMsg // channel on which committed entries are delivered

// Persistent state on all servers:

currentTerm int //latest term server has seen (initialized to 0 on first boot, increases monotonically)

votedFor int //candidateId that received vote in current term (-1 if none)

log []LogEntry //log entries; each entry contains command for state machine, and term when entry was received by leader (first index is 1)

//Volatile state on all servers:

commitIndex int //index of highest log entry known to be committed (initialized to 0, increases monotonically)

lastApplied int //index of highest log entry applied to state machine (initialized to 0, increases monotonically)

//Volatile state on leaders:(Reinitialized after election)

nextIndex []int //for each server, index of the next log entry to send to that server (initialized to leader last log index + 1)

matchIndex []int //for each server, index of highest log entry known to be replicated on server (initialized to 0, increases monotonically)

}

// return currentTerm and whether this server

// believes it is the leader.

func (rf *Raft) GetState() (int, bool) {

var term int

var isleader bool

// Your code here (2A).

rf.mu.Lock()

defer rf.mu.Unlock()

term = rf.currentTerm

isleader = rf.state == Leader

return term, isleader

}

func (rf *Raft) persist() {

// Your code here (2C).

// Example:

// w := new(bytes.Buffer)

// e := labgob.NewEncoder(w)

// e.Encode(rf.xxx)

// e.Encode(rf.yyy)

// data := w.Bytes()

// rf.persister.SaveRaftState(data)

}

//

// restore previously persisted state.

//

func (rf *Raft) readPersist(data []byte) {

if data == nil || len(data) < 1 { // bootstrap without any state?

return

}

// Your code here (2C).

// Example:

// r := bytes.NewBuffer(data)

// d := labgob.NewDecoder(r)

// var xxx

// var yyy

// if d.Decode(&xxx) != nil ||

// d.Decode(&yyy) != nil {

// error...

// } else {

// rf.xxx = xxx

// rf.yyy = yyy

// }

}

//

// example RequestVote RPC arguments structure.

// field names must start with capital letters!

//

type RequestVoteArgs struct {

// Your data here (2A, 2B).

Term int //candidate’s term

CandidateId int //candidate requesting vote

LastLogIndex int //index of candidate’s last log entry (§5.4)

LastLogTerm int //term of candidate’s last log entry (§5.4)

}

//

// example RequestVote RPC reply structure.

// field names must start with capital letters!

//

type RequestVoteReply struct {

// Your data here (2A).

Term int //currentTerm, for candidate to update itself

VoteGranted bool //true means candidate received vote

}

//

// example RequestVote RPC handler.

//

func (rf *Raft) RequestVote(args *RequestVoteArgs, reply *RequestVoteReply) {

// Your code here (2A, 2B).

rf.mu.Lock()

defer rf.mu.Unlock()

DPrintf("Candidate[raft%v][term:%v] request vote: raft%v[%v] 's term%v\n", args.CandidateId, args.Term, rf.me, rf.state, rf.currentTerm)

if args.Term < rf.currentTerm ||

(args.Term == rf.currentTerm && rf.votedFor != -1 && rf.votedFor != args.CandidateId) {

reply.Term = rf.currentTerm

reply.VoteGranted = false

return

}

if args.Term > rf.currentTerm {

rf.currentTerm = args.Term

rf.switchStateTo(Follower)

}

// 2B: the candidate's log must be at least as up-to-date as the receiver's log;

// "up-to-date" is defined in section 5.4.1 of the paper

lastLogIndex := len(rf.log) - 1

if args.LastLogTerm < rf.log[lastLogIndex].Term ||

(args.LastLogTerm == rf.log[lastLogIndex].Term &&

args.LastLogIndex < (lastLogIndex)) {

// Receiver is more up-to-date, does not grant vote

reply.Term = rf.currentTerm

reply.VoteGranted = false

return

}

rf.votedFor = args.CandidateId

reply.Term = rf.currentTerm

reply.VoteGranted = true

// reset timer after grant vote

rf.electionTimer.Reset(randTimeDuration())

}

type AppendEntriesArgs struct {

Term int //leader’s term

LeaderId int //so follower can redirect clients

PrevLogIndex int //index of log entry immediately preceding new ones

PrevLogTerm int //term of prevLogIndex entry

Entries []LogEntry //log entries to store (empty for heartbeat; may send more than one for efficiency)

LeaderCommit int //leader’s commitIndex

}

type AppendEntriesReply struct {

Term int //currentTerm, for leader to update itself

Success bool //true if follower contained entry matching prevLogIndex and prevLogTerm

}

func (rf *Raft) AppendEntries(args *AppendEntriesArgs, reply *AppendEntriesReply) {

rf.mu.Lock()

defer rf.mu.Unlock()

DPrintf("leader[raft%v][term:%v] beat term:%v [raft%v][%v]\n", args.LeaderId, args.Term, rf.currentTerm, rf.me, rf.state)

reply.Success = true

// 1. Reply false if term < currentTerm (§5.1)

if args.Term < rf.currentTerm {

reply.Success = false

reply.Term = rf.currentTerm

return

}

//If RPC request or response contains term T > currentTerm:set currentTerm = T, convert to follower (§5.1)

if args.Term > rf.currentTerm {

rf.currentTerm = args.Term

rf.switchStateTo(Follower)

}

// reset the election timer even if the log does not match:

// args.LeaderId is the current term's leader

rf.electionTimer.Reset(randTimeDuration())

// 2. Reply false if log doesn't contain an entry at prevLogIndex

// whose term matches prevLogTerm (§5.3)

lastLogIndex := len(rf.log) - 1

if lastLogIndex < args.PrevLogIndex {

reply.Success = false

reply.Term = rf.currentTerm

return

}

// 3. If an existing entry conflicts with a new one (same index

// but different terms), delete the existing entry and all that

// follow it (§5.3)

if rf.log[(args.PrevLogIndex)].Term != args.PrevLogTerm {

reply.Success = false

reply.Term = rf.currentTerm

return

}

// 4. Append any new entries not already in the log

// compare from rf.log[args.PrevLogIndex + 1]

unmatch_idx := -1

for idx := range args.Entries {

if len(rf.log) < (args.PrevLogIndex+2+idx) ||

rf.log[(args.PrevLogIndex+1+idx)].Term != args.Entries[idx].Term {

// unmatch log found

unmatch_idx = idx

break

}

}

if unmatch_idx != -1 {

// there are unmatched entries:

// truncate the follower's conflicting entries and append the leader's

rf.log = rf.log[:(args.PrevLogIndex + 1 + unmatch_idx)]

rf.log = append(rf.log, args.Entries[unmatch_idx:]...)

}

//5. If leaderCommit > commitIndex, set commitIndex = min(leaderCommit, index of last new entry)

if args.LeaderCommit > rf.commitIndex {

rf.setCommitIndex(min(args.LeaderCommit, len(rf.log)-1))

}

reply.Success = true

}

//

// example code to send a RequestVote RPC to a server.

// server is the index of the target server in rf.peers[].

// expects RPC arguments in args.

// fills in *reply with RPC reply, so caller should

// pass &reply.

// the types of the args and reply passed to Call() must be

// the same as the types of the arguments declared in the

// handler function (including whether they are pointers).

//

// The labrpc package simulates a lossy network, in which servers

// may be unreachable, and in which requests and replies may be lost.

// Call() sends a request and waits for a reply. If a reply arrives

// within a timeout interval, Call() returns true; otherwise

// Call() returns false. Thus Call() may not return for a while.

// A false return can be caused by a dead server, a live server that

// can't be reached, a lost request, or a lost reply.

//

// Call() is guaranteed to return (perhaps after a delay) *except* if the

// handler function on the server side does not return. Thus there

// is no need to implement your own timeouts around Call().

//

// look at the comments in ../labrpc/labrpc.go for more details.

//

// if you're having trouble getting RPC to work, check that you've

// capitalized all field names in structs passed over RPC, and

// that the caller passes the address of the reply struct with &, not

// the struct itself.

//

func (rf *Raft) sendRequestVote(server int, args *RequestVoteArgs, reply *RequestVoteReply) bool {

ok := rf.peers[server].Call("Raft.RequestVote", args, reply)

return ok

}

func (rf *Raft) sendAppendEntries(server int, args *AppendEntriesArgs, reply *AppendEntriesReply) bool {

ok := rf.peers[server].Call("Raft.AppendEntries", args, reply)

return ok

}

//

// the service using Raft (e.g. a k/v server) wants to start

// agreement on the next command to be appended to Raft's log. if this

// server isn't the leader, returns false. otherwise start the

// agreement and return immediately. there is no guarantee that this

// command will ever be committed to the Raft log, since the leader

// may fail or lose an election. even if the Raft instance has been killed,

// this function should return gracefully.

//

// the first return value is the index that the command will appear at

// if it's ever committed. the second return value is the current

// term. the third return value is true if this server believes it is

// the leader.

//

func (rf *Raft) Start(command interface{}) (int, int, bool) {

index := -1

term := -1

isLeader := true

// Your code here (2B).

rf.mu.Lock()

defer rf.mu.Unlock()

term = rf.currentTerm

isLeader = rf.state == Leader

if isLeader {

rf.log = append(rf.log, LogEntry{Command: command, Term: term})

index = len(rf.log) - 1

rf.matchIndex[rf.me] = index

rf.nextIndex[rf.me] = index + 1

}

return index, term, isLeader

}

//

// the tester calls Kill() when a Raft instance won't

// be needed again. you are not required to do anything

// in Kill(), but it might be convenient to (for example)

// turn off debug output from this instance.

//

func (rf *Raft) Kill() {

// Your code here, if desired.

}

//

// the service or tester wants to create a Raft server. the ports

// of all the Raft servers (including this one) are in peers[]. this

// server's port is peers[me]. all the servers' peers[] arrays

// have the same order. persister is a place for this server to

// save its persistent state, and also initially holds the most

// recent saved state, if any. applyCh is a channel on which the

// tester or service expects Raft to send ApplyMsg messages.

// Make() must return quickly, so it should start goroutines

// for any long-running work.

//

func Make(peers []*labrpc.ClientEnd, me int,

persister *Persister, applyCh chan ApplyMsg) *Raft {

rf := &Raft{}

rf.peers = peers

rf.persister = persister

rf.me = me

// Your initialization code here (2A, 2B, 2C).

rf.state = Follower

rf.votedFor = -1

rf.heartbeatTimer = time.NewTimer(HEART_BEAT_TIMEOUT * time.Millisecond)

rf.electionTimer = time.NewTimer(randTimeDuration())

rf.applyCh = applyCh

rf.log = make([]LogEntry, 1) // placeholder at index 0, so real entries start from index 1

rf.nextIndex = make([]int, len(rf.peers))

rf.matchIndex = make([]int, len(rf.peers))

// background loop driven by the two timers

go func() {

for {

select {

case <-rf.electionTimer.C:

rf.mu.Lock()

switch rf.state {

case Follower:

rf.switchStateTo(Candidate)

case Candidate:

rf.startElection()

}

rf.mu.Unlock()

case <-rf.heartbeatTimer.C:

rf.mu.Lock()

if rf.state == Leader {

rf.heartbeats()

rf.heartbeatTimer.Reset(HEART_BEAT_TIMEOUT * time.Millisecond)

}

rf.mu.Unlock()

}

}

}()

// initialize from state persisted before a crash

rf.readPersist(persister.ReadRaftState())

return rf

}

func randTimeDuration() time.Duration {

// election timeout of 3 to 4 heartbeat intervals (the multiplier 3 is an assumed value)

return time.Duration(HEART_BEAT_TIMEOUT*3+rand.Intn(HEART_BEAT_TIMEOUT)) * time.Millisecond

}

// switch to a new state; the caller must hold rf.mu

func (rf *Raft) switchStateTo(state int) {

if state == rf.state {

return

}

DPrintf("Term %d: server %d convert from %v to %v\n", rf.currentTerm, rf.me, rf.state, state)

rf.state = state

switch state {

case Follower:

rf.heartbeatTimer.Stop()

rf.electionTimer.Reset(randTimeDuration())

rf.votedFor = -1

case Candidate:

// start an election immediately after becoming a candidate

rf.startElection()

case Leader:

// initialized to leader last log index + 1

for i := range rf.nextIndex {

rf.nextIndex[i] = (len(rf.log))

}

for i := range rf.matchIndex {

rf.matchIndex[i] = 0

}

rf.electionTimer.Stop()

rf.heartbeats()

rf.heartbeatTimer.Reset(HEART_BEAT_TIMEOUT * time.Millisecond)

}

}

// send heartbeats to all peers; the caller must hold rf.mu

func (rf *Raft) heartbeats() {

for i := range rf.peers {

if i != rf.me {

go rf.heartbeat(i)

}

}

}

func (rf *Raft) heartbeat(server int) {

rf.mu.Lock()

if rf.state != Leader {

rf.mu.Unlock()

return

}

prevLogIndex := rf.nextIndex[server] - 1

// copy the entries so the RPC arguments do not alias rf.log,

// which may be modified once the lock is released

entries := make([]LogEntry, len(rf.log[(prevLogIndex+1):]))

copy(entries, rf.log[(prevLogIndex+1):])

args := AppendEntriesArgs{

Term: rf.currentTerm,

LeaderId: rf.me,

PrevLogIndex: prevLogIndex,

PrevLogTerm: rf.log[(prevLogIndex)].Term,

Entries: entries,

LeaderCommit: rf.commitIndex,

}

rf.mu.Unlock()

var reply AppendEntriesReply

if rf.sendAppendEntries(server, &args, &reply) {

rf.mu.Lock()

if rf.state != Leader {

rf.mu.Unlock()

return

}

// If last log index ≥ nextIndex for a follower: send

// AppendEntries RPC with log entries starting at nextIndex

// • If successful: update nextIndex and matchIndex for

// follower (§5.3)

// • If AppendEntries fails because of log inconsistency:

// decrement nextIndex and retry (§5.3)

if reply.Success {

// successfully replicated args.Entries

rf.matchIndex[server] = args.PrevLogIndex + len(args.Entries)

rf.nextIndex[server] = rf.matchIndex[server] + 1

// If there exists an N such that N > commitIndex, a majority

// of matchIndex[i] ≥ N, and log[N].term == currentTerm:

// set commitIndex = N (§5.3, §5.4).

for N := (len(rf.log) - 1); N > rf.commitIndex; N-- {

count := 0

for _, matchIndex := range rf.matchIndex {

if matchIndex >= N {

count += 1

}

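}

// also require log[N].Term == currentTerm, as the rule above states (§5.4.2)

if count > len(rf.peers)/2 && rf.log[N].Term == rf.currentTerm {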

// most of nodes agreed on rf.log[i]

rf.setCommitIndex(N)

break

}

}

} else {

if reply.Term > rf.currentTerm {

rf.currentTerm = reply.Term

rf.switchStateTo(Follower)

} else {

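// reaching this branch means the logs are inconsistent, so nextIndex must move back

rf.nextIndex[server] = args.PrevLogIndex - 1

if rf.nextIndex[server] < 1 {

rf.nextIndex[server] = 1 // never step back past the placeholder entry at index 0

}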

}

}

rf.mu.Unlock()

}

}

// start an election; the caller must hold rf.mu

func (rf *Raft) startElection() {

// DPrintf("raft%v is starting election\n", rf.me)

rf.currentTerm += 1

rf.votedFor = rf.me //vote for me

rf.voteCount = 1

rf.electionTimer.Reset(randTimeDuration())

for i := range rf.peers {

if i != rf.me {

go func(peer int) {

rf.mu.Lock()

lastLogIndex := len(rf.log) -

args := RequestVoteArgs{

Term: rf.currentTerm,

CandidateId: rf.me,

LastLogIndex: lastLogIndex,

LastLogTerm: rf.log[lastLogIndex].Term,

}

// DPrintf("raft%v[%v] is sending RequestVote RPC to raft%v\n", rf.me, rf.state, peer)

rf.mu.Unlock()

var reply RequestVoteReply

if rf.sendRequestVote(peer, &args, &reply) {

rf.mu.Lock()

defer rf.mu.Unlock()

if reply.Term > rf.currentTerm {

rf.currentTerm = reply.Term

rf.switchStateTo(Follower)

}

if reply.VoteGranted && rf.state == Candidate {

rf.voteCount++

if rf.voteCount > len(rf.peers)/2 {

rf.switchStateTo(Leader)

}

}

}

}(i)

}

}

}

//

// several setters, should be called with a lock

//

func (rf *Raft) setCommitIndex(commitIndex int) {

rf.commitIndex = commitIndex

// apply all entries between lastApplied and committed

// should be called after commitIndex updated

if rf.commitIndex > rf.lastApplied {

DPrintf("%v apply from index %d to %d", rf, rf.lastApplied+1, rf.commitIndex)

entriesToApply := append([]LogEntry{}, rf.log[(rf.lastApplied+1):(rf.commitIndex+1)]...)

go func(startIdx int, entries []LogEntry) {

for idx, entry := range entries {

var msg ApplyMsg

msg.CommandValid = true

msg.Command = entry.Command

msg.CommandIndex = startIdx + idx

rf.applyCh <- msg

// do not forget to update lastApplied index

// this is another goroutine, so protect it with lock

rf.mu.Lock()

if rf.lastApplied < msg.CommandIndex {

rf.lastApplied = msg.CommandIndex

}

rf.mu.Unlock()

}

}(rf.lastApplied+1, entriesToApply)

}

}

func min(x, y int) int {

if x < y {

return x

} else {

return y

}

}
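The truncate-and-append step of the AppendEntries handler above can be tried out in isolation. The following is a minimal sketch, not the code used in the handler: mergeEntries is a hypothetical helper, and it assumes the prevLogIndex/prevLogTerm consistency check has already passed, so prevLogIndex is a valid index into the log.

```go
package main

import "fmt"

// LogEntry matches the entry type used in the code above.
type LogEntry struct {
	Command interface{}
	Term    int
}

// mergeEntries walks the leader's entries starting right after
// prevLogIndex. Existing entries with the same term are kept. At the first
// mismatch, or when the local log runs out, the local log is cut at that
// point and the leader's remaining entries are appended. If every entry
// already matches, the log is returned unchanged, so a delayed RPC cannot
// truncate newer entries.
func mergeEntries(log []LogEntry, prevLogIndex int, entries []LogEntry) []LogEntry {
	for i, e := range entries {
		idx := prevLogIndex + 1 + i
		if idx >= len(log) || log[idx].Term != e.Term {
			// The three-index slice caps capacity at idx, so append
			// allocates a new array instead of overwriting the old one.
			return append(log[:idx:idx], entries[i:]...)
		}
	}
	return log
}

func main() {
	log := []LogEntry{{}, {Command: "a", Term: 1}, {Command: "b", Term: 1}, {Command: "c", Term: 2}}

	// The entry at index 3 conflicts (term 2 locally, term 3 from the leader).
	merged := mergeEntries(log, 1, []LogEntry{{Command: "b", Term: 1}, {Command: "d", Term: 3}})
	fmt.Println(len(merged), merged[3].Term) // 4 3

	// A stale RPC carrying only an already-present entry changes nothing.
	same := mergeEntries(log, 1, []LogEntry{{Command: "b", Term: 1}})
	fmt.Println(len(same)) // 4
}
```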

test

test -race
