flink02: 1. Custom sink 2. StreamingFileSink 3. Time 4. Window 5. Watermark

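1. Custom sink

In Flink, a sink is responsible for the final output of the data. Call the addSink method on a DataStream instance and pass in an instance of the custom sink class.

The class below defines a print sink whose output prefix is the real subtask index. (The built-in print() shows the subtask index + 1.)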

MyPrintSink

package cn._51doit.flink.day02;

import org.apache.flink.streaming.api.functions.sink.RichSinkFunction;
import org.apache.flink.streaming.api.functions.sink.SinkFunction;

public class MyPrintSink<T> extends RichSinkFunction<T> {

    @Override
    public void invoke(T value, Context context) throws Exception {
        // Zero-based index of the parallel subtask that runs this sink instance
        int index = getRuntimeContext().getIndexOfThisSubtask();
        System.out.println(index + " > " + value);
    }
}

MyPrintSinkDemo

package cn._51doit.flink.day02;

import org.apache.flink.api.common.functions.FlatMapFunction;
import org.apache.flink.api.java.tuple.Tuple2;
import org.apache.flink.streaming.api.datastream.DataStreamSource;
import org.apache.flink.streaming.api.datastream.SingleOutputStreamOperator;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
import org.apache.flink.util.Collector;

public class MyPrintSinkDemo {

    public static void main(String[] args) throws Exception {
        StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
        DataStreamSource<String> lines = env.socketTextStream("localhost", 8888);

        // Split each line into words and emit (word, 1)
        SingleOutputStreamOperator<Tuple2<String, Integer>> wordAndOne = lines.flatMap(new FlatMapFunction<String, Tuple2<String, Integer>>() {
            @Override
            public void flatMap(String value, Collector<Tuple2<String, Integer>> out) throws Exception {
                String[] words = value.split(" ");
                for (String word : words) {
                    out.collect(Tuple2.of(word, 1));
                }
            }
        });

        SingleOutputStreamOperator<Tuple2<String, Integer>> res = wordAndOne.keyBy(0).sum(1);
        // Use the custom sink instead of print()
        res.addSink(new MyPrintSink<>());
        env.execute();
    }
}

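2. StreamingFileSink

This sink is used a lot. It can write results to the local file system or to HDFS, and it supports exactly-once semantics when checkpointing is enabled.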

package cn._51doit.flink.day02;

import org.apache.flink.api.common.serialization.SimpleStringEncoder;
import org.apache.flink.core.fs.Path;
import org.apache.flink.streaming.api.datastream.DataStreamSource;
import org.apache.flink.streaming.api.datastream.SingleOutputStreamOperator;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
import org.apache.flink.streaming.api.functions.sink.filesystem.StreamingFileSink;
import org.apache.flink.streaming.api.functions.sink.filesystem.rollingpolicies.DefaultRollingPolicy;

import java.util.concurrent.TimeUnit;

public class StreamFileSinkDemo {

    public static void main(String[] args) throws Exception {
        StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
        DataStreamSource<String> lines = env.socketTextStream("localhost", 8888);
        SingleOutputStreamOperator<String> upper = lines.map(String::toUpperCase);

        String path = "E:\\flink";
        // Part files are finalized on checkpoints, so enable checkpointing (every 10 seconds)
        env.enableCheckpointing(10000);

        StreamingFileSink<String> sink = StreamingFileSink
                .forRowFormat(new Path(path), new SimpleStringEncoder<String>("UTF-8"))
                .withRollingPolicy(
                        DefaultRollingPolicy.builder()
                                // Maximum time a part file stays open before it is rolled
                                .withRolloverInterval(TimeUnit.SECONDS.toMillis(30))
                                // Roll the file if nothing has been written for this long
                                .withInactivityInterval(TimeUnit.SECONDS.toMillis(10))
                                // Roll the file when it exceeds 1 GB (1024 * 1024 * 1024 bytes)
                                .withMaxPartSize(1024 * 1024 * 1024)
                                .build())
                .build();

        upper.addSink(sink);
        env.execute();
    }
}
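The three rolling conditions configured above can be illustrated without Flink. The following is only a simplified sketch: the class RollingPolicySketch and its method shouldRoll are hypothetical names invented for this illustration, not part of Flink's API, and the exact comparisons inside Flink's DefaultRollingPolicy may differ.

```java
// Hypothetical, simplified model of a size/age/inactivity rolling policy (not Flink's class).
class RollingPolicySketch {
    private final long rolloverIntervalMs;   // maximum age of a part file
    private final long inactivityIntervalMs; // maximum idle time since the last write
    private final long maxPartSizeBytes;     // maximum size of a part file

    RollingPolicySketch(long rolloverIntervalMs, long inactivityIntervalMs, long maxPartSizeBytes) {
        this.rolloverIntervalMs = rolloverIntervalMs;
        this.inactivityIntervalMs = inactivityIntervalMs;
        this.maxPartSizeBytes = maxPartSizeBytes;
    }

    // A part file is rolled as soon as any one of the three conditions holds.
    boolean shouldRoll(long sizeBytes, long createdAtMs, long lastWriteAtMs, long nowMs) {
        return sizeBytes >= maxPartSizeBytes
                || nowMs - createdAtMs >= rolloverIntervalMs
                || nowMs - lastWriteAtMs >= inactivityIntervalMs;
    }

    public static void main(String[] args) {
        // Same settings as the demo: 30 s rollover, 10 s inactivity, 1 GB max size
        RollingPolicySketch policy = new RollingPolicySketch(30_000L, 10_000L, 1024L * 1024L * 1024L);
        System.out.println(policy.shouldRoll(100, 0, 5_000, 8_000));   // false: small, young, recently written
        System.out.println(policy.shouldRoll(100, 0, 5_000, 16_000));  // true: idle for 11 s
        System.out.println(policy.shouldRoll(100, 0, 29_000, 30_000)); // true: open for 30 s
    }
}
```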

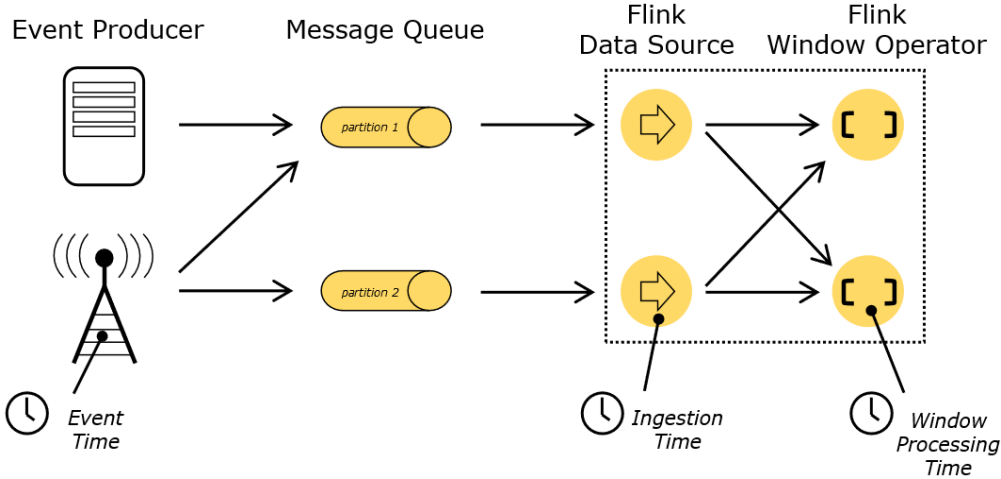

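3. Time

(1) Event Time: the time at which the event was created. It is usually described by a timestamp carried in the event; for example, in collected log data every log record stores the time it was generated. Flink reads the event timestamp through a timestamp assigner.

(2) Ingestion Time: the time at which the data enters Flink.

(3) Processing Time: the local system time of the machine on which each time-based operator runs, so it is machine-dependent. In the Flink version used here, Processing Time is the default time characteristic (since Flink 1.12 the default is Event Time).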

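4. Window

Windows fall into two categories:

(1) GlobalWindow (countWindow): a window is produced for every specified number of elements, independent of time.

(2) TimeWindow: windows are produced according to time.

A TimeWindow can be further divided into three kinds according to how the window is formed: tumbling window, sliding window, and session window.

4.1 countWindow/countWindowAll

countWindow fires based on the number of elements with the same key in the window. When it fires, only the keys whose element count has reached the window size produce a result.

(1) Tumbling window: this is the default.

- Not grouped: use countWindowAll. The window fires once the total number of input elements reaches the window size.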

package cn._51doit.flink.day02.window;

import org.apache.flink.streaming.api.datastream.AllWindowedStream;
import org.apache.flink.streaming.api.datastream.DataStreamSource;
import org.apache.flink.streaming.api.datastream.SingleOutputStreamOperator;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
import org.apache.flink.streaming.api.windowing.windows.GlobalWindow;

public class CountWindowAllDemo {

    public static void main(String[] args) throws Exception {
        StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
        DataStreamSource<String> lines = env.socketTextStream("feng05", 8888);
        SingleOutputStreamOperator<Integer> nums = lines.map(Integer::parseInt);

        // Apply the window assigner with the concrete rule: one window per 3 elements
        AllWindowedStream<Integer, GlobalWindow> window = nums.countWindowAll(3);
        SingleOutputStreamOperator<Integer> result = window.sum(0);
        result.print();
        env.execute();
    }
}
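To see what countWindowAll(3) followed by sum produces, the behaviour can be simulated in plain Java. This is a minimal sketch under the assumption that the window fires exactly when the third element arrives; the class and method names are made up for the illustration and are not Flink API.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical plain-Java simulation of a tumbling count window followed by sum.
class TumblingCountWindowSketch {

    // Sums every `size` consecutive elements; a trailing partial window never fires.
    static List<Integer> tumblingSums(int[] input, int size) {
        List<Integer> results = new ArrayList<>();
        int sum = 0;
        int count = 0;
        for (int value : input) {
            sum += value;
            count++;
            if (count == size) { // the window is full: emit the result and start a new window
                results.add(sum);
                sum = 0;
                count = 0;
            }
        }
        return results;
    }

    public static void main(String[] args) {
        // 1+2+3 and 4+5+6 fire; 7 stays buffered until two more elements arrive
        System.out.println(tumblingSums(new int[] {1, 2, 3, 4, 5, 6, 7}, 3)); // prints [6, 15]
    }
}
```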

- Grouped with keyBy: use countWindow. The window of a group fires once the number of elements in that group reaches the window size.

package cn._51doit.flink.day02.window;

import org.apache.flink.api.common.functions.MapFunction;
import org.apache.flink.api.java.tuple.Tuple;
import org.apache.flink.api.java.tuple.Tuple2;
import org.apache.flink.streaming.api.datastream.DataStreamSource;
import org.apache.flink.streaming.api.datastream.KeyedStream;
import org.apache.flink.streaming.api.datastream.SingleOutputStreamOperator;
import org.apache.flink.streaming.api.datastream.WindowedStream;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
import org.apache.flink.streaming.api.windowing.windows.GlobalWindow;

public class CountWindowDemo {

    public static void main(String[] args) throws Exception {
        StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
        DataStreamSource<String> lines = env.socketTextStream("feng05", 8888);

        // Map each input line to (word, 1)
        SingleOutputStreamOperator<Tuple2<String, Integer>> wordAndOne = lines.map(new MapFunction<String, Tuple2<String, Integer>>() {
            @Override
            public Tuple2<String, Integer> map(String value) throws Exception {
                return Tuple2.of(value, 1);
            }
        });

        // Group by key
        KeyedStream<Tuple2<String, Integer>, Tuple> keyed = wordAndOne.keyBy(0);
        // Define the window on the KeyedStream: one window per 5 elements of the same key
        WindowedStream<Tuple2<String, Integer>, Tuple, GlobalWindow> window = keyed.countWindow(5);
        SingleOutputStreamOperator<Tuple2<String, Integer>> sumed = window.sum(1);
        sumed.print();
        env.execute();
    }
}

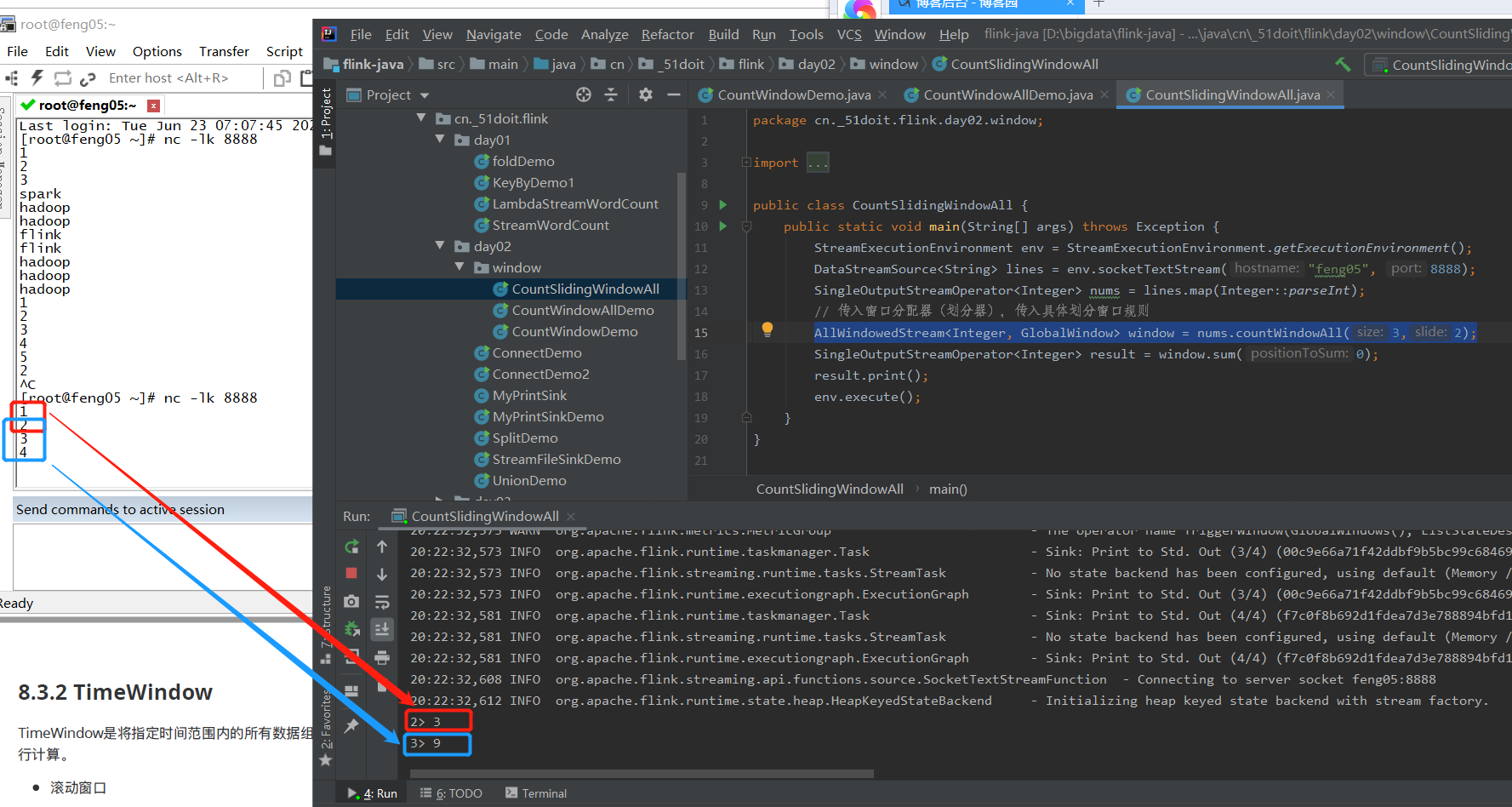

(2) Sliding window

- Not grouped: the same as (1), except that the window assignment rule changes. The changed line is:

AllWindowedStream<Integer, GlobalWindow> window = nums.countWindowAll(3,2);

- Grouped: the same applies, using countWindow with a size and a slide on the KeyedStream.
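The effect of a sliding count window with size 3 and slide 2 is easier to follow with a small simulation. The sketch below assumes the usual semantics of Flink's count windows with a slide: the window fires every `slide` elements and is evaluated over at most the last `size` elements. The class and method names are hypothetical and exist only for this illustration.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Deque;
import java.util.List;

// Hypothetical plain-Java simulation of a sliding count window followed by sum.
class SlidingCountWindowSketch {

    // Fires every `slide` elements and sums at most the last `size` elements.
    static List<Integer> slidingSums(int[] input, int size, int slide) {
        List<Integer> results = new ArrayList<>();
        Deque<Integer> lastElements = new ArrayDeque<>();
        int sinceLastFire = 0;
        for (int value : input) {
            lastElements.addLast(value);
            if (lastElements.size() > size) {
                lastElements.removeFirst(); // keep only the newest `size` elements
            }
            sinceLastFire++;
            if (sinceLastFire == slide) {
                int sum = 0;
                for (int v : lastElements) {
                    sum += v;
                }
                results.add(sum);
                sinceLastFire = 0;
            }
        }
        return results;
    }

    public static void main(String[] args) {
        // Fires after 2, 4 and 6 elements: (1+2), (2+3+4), (4+5+6)
        System.out.println(slidingSums(new int[] {1, 2, 3, 4, 5, 6}, 3, 2)); // prints [3, 9, 15]
    }
}
```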

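4.2 TimeWindow

A TimeWindow groups all data within a specified time range into one window, and the computation runs once over all the data in that window.

4.2.1 Processing Time

(1) Tumbling window

By default, Flink's time windows are divided by Processing Time: each record is assigned to a window according to the system time at which the window operator processes it.

- Not grouped

ProcessingTumblingWindowAllDemo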

package cn._51doit.flink.day02.window;

import org.apache.flink.api.common.functions.MapFunction;
import org.apache.flink.api.java.tuple.Tuple2;
import org.apache.flink.streaming.api.datastream.*;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
import org.apache.flink.streaming.api.windowing.assigners.TumblingProcessingTimeWindows;
import org.apache.flink.streaming.api.windowing.time.Time;
import org.apache.flink.streaming.api.windowing.windows.TimeWindow;

public class ProcessingTumblingWindowAllDemo {

    public static void main(String[] args) throws Exception {
        StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
        DataStreamSource<String> lines = env.socketTextStream("localhost", 8888);

        SingleOutputStreamOperator<Tuple2<String, Integer>> wordAndOne = lines.map(new MapFunction<String, Tuple2<String, Integer>>() {
            @Override
            public Tuple2<String, Integer> map(String value) throws Exception {
                return Tuple2.of(value, 1);
            }
        });

        // No keyBy grouping (Non-Keyed Stream), so call windowAll
        AllWindowedStream<Tuple2<String, Integer>, TimeWindow> window = wordAndOne.windowAll(TumblingProcessingTimeWindows.of(Time.seconds(5)));
        SingleOutputStreamOperator<Tuple2<String, Integer>> sum = window.sum(1);
        sum.print();
        env.execute();
    }
}

wordAndOne.windowAll(TumblingProcessingTimeWindows.of(Time.seconds(5)))

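This divides processing time into one window every 5 seconds.

- Grouped

The same applies: call keyBy first, then use window instead of windowAll.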
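How a timestamp maps to a 5-second tumbling window can be worked out with simple arithmetic. The sketch below assumes windows are aligned to the epoch with no offset; the class and method names are invented for this illustration and are not Flink API.

```java
// Hypothetical helper showing how a timestamp maps to an epoch-aligned tumbling window.
class TumblingWindowSketch {

    // Start (inclusive) of the window that contains the timestamp.
    static long windowStart(long timestampMs, long sizeMs) {
        return timestampMs - Math.floorMod(timestampMs, sizeMs);
    }

    // End (exclusive) of the window that contains the timestamp.
    static long windowEnd(long timestampMs, long sizeMs) {
        return windowStart(timestampMs, sizeMs) + sizeMs;
    }

    public static void main(String[] args) {
        long size = 5_000L; // 5-second windows, as in Time.seconds(5)
        for (long ts : new long[] {0L, 4_999L, 5_000L, 12_345L}) {
            System.out.println(ts + " -> [" + windowStart(ts, size) + ", " + windowEnd(ts, size) + ")");
        }
        // 0 and 4999 fall into [0, 5000); 5000 into [5000, 10000); 12345 into [10000, 15000)
    }
}
```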

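(2) Sliding window

A sliding window is declared with the same calls (window / windowAll) as a tumbling window. The difference is the assigner, which takes two parameters: the window size (window_size) and the slide interval (sliding_size).

ProcessingSlidingWindowAllDemo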

package cn._51doit.flink.day02.window;

import org.apache.flink.streaming.api.datastream.AllWindowedStream;
import org.apache.flink.streaming.api.datastream.DataStreamSource;
import org.apache.flink.streaming.api.datastream.SingleOutputStreamOperator;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
import org.apache.flink.streaming.api.windowing.assigners.SlidingProcessingTimeWindows;
import org.apache.flink.streaming.api.windowing.time.Time;
import org.apache.flink.streaming.api.windowing.windows.TimeWindow;

public class ProcessingSlidingWindowAllDemo {

    public static void main(String[] args) throws Exception {
        StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
        DataStreamSource<String> lines = env.socketTextStream("localhost", 8888);
        SingleOutputStreamOperator<Integer> nums = lines.map(Integer::parseInt);

        // No keyBy grouping (Non-Keyed Stream), so call windowAll
        // Sliding window: size 20 seconds, slide 10 seconds
        AllWindowedStream<Integer, TimeWindow> window = nums.windowAll(SlidingProcessingTimeWindows.of(Time.seconds(20), Time.seconds(10)));
        SingleOutputStreamOperator<Integer> sum = window.sum(0);
        sum.print();
        env.execute();
    }
}

ProcessingSlidingWindowDemo

package cn._51doit.flink.day02.window;

import org.apache.flink.api.common.functions.MapFunction;
import org.apache.flink.api.java.tuple.Tuple;
import org.apache.flink.api.java.tuple.Tuple2;
import org.apache.flink.streaming.api.datastream.DataStreamSource;
import org.apache.flink.streaming.api.datastream.KeyedStream;
import org.apache.flink.streaming.api.datastream.SingleOutputStreamOperator;
import org.apache.flink.streaming.api.datastream.WindowedStream;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
import org.apache.flink.streaming.api.windowing.assigners.SlidingProcessingTimeWindows;
import org.apache.flink.streaming.api.windowing.time.Time;
import org.apache.flink.streaming.api.windowing.windows.TimeWindow;

public class ProcessingSlidingWindowDemo {

    public static void main(String[] args) throws Exception {
        StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
        DataStreamSource<String> lines = env.socketTextStream("localhost", 8888);

        SingleOutputStreamOperator<Tuple2<String, Integer>> wordAndOne = lines.map(new MapFunction<String, Tuple2<String, Integer>>() {
            @Override
            public Tuple2<String, Integer> map(String value) throws Exception {
                return Tuple2.of(value, 1);
            }
        });

        KeyedStream<Tuple2<String, Integer>, Tuple> keyed = wordAndOne.keyBy(0);
        // Grouped with keyBy (Keyed Stream), so call window
        WindowedStream<Tuple2<String, Integer>, Tuple, TimeWindow> window = keyed.window(SlidingProcessingTimeWindows.of(Time.seconds(20), Time.seconds(10)));
        SingleOutputStreamOperator<Tuple2<String, Integer>> sum = window.sum(1);
        sum.print();
        env.execute();
    }
}
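With a size of 20 seconds and a slide of 10 seconds, as in the two demos above, every element belongs to size / slide = 2 overlapping windows. The following sketch lists those windows for a given timestamp. It assumes epoch-aligned windows with no offset, and the class and method names are hypothetical, chosen only for this illustration.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper listing the sliding windows that contain a timestamp.
class SlidingWindowAssignSketch {

    // Returns {start, end} pairs (end exclusive), newest window first.
    static List<long[]> assignWindows(long timestampMs, long sizeMs, long slideMs) {
        List<long[]> windows = new ArrayList<>();
        // Start of the most recent window that begins at or before the timestamp
        long lastStart = timestampMs - Math.floorMod(timestampMs, slideMs);
        // Walk backwards while the window still covers the timestamp
        for (long start = lastStart; start > timestampMs - sizeMs; start -= slideMs) {
            windows.add(new long[] {start, start + sizeMs});
        }
        return windows;
    }

    public static void main(String[] args) {
        for (long[] w : assignWindows(25_000L, 20_000L, 10_000L)) {
            System.out.println("[" + w[0] + ", " + w[1] + ")");
        }
        // prints [20000, 40000) and then [10000, 30000)
    }
}
```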

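(3) Session window

A session window consists of a series of events followed by a timeout gap of a specified length, similar to a session in a web application. If no new data arrives within that period, the current window ends and the next element starts a new window.

ProcessingSessionWindowAllDemo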

package cn._51doit.flink.day02.window;

import org.apache.flink.streaming.api.datastream.AllWindowedStream;
import org.apache.flink.streaming.api.datastream.DataStreamSource;
import org.apache.flink.streaming.api.datastream.SingleOutputStreamOperator;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
import org.apache.flink.streaming.api.windowing.assigners.ProcessingTimeSessionWindows;
import org.apache.flink.streaming.api.windowing.time.Time;
import org.apache.flink.streaming.api.windowing.windows.TimeWindow;

public class ProcessingSessionWindowAllDemo {

    public static void main(String[] args) throws Exception {
        StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
        DataStreamSource<String> lines = env.socketTextStream("feng05", 8888);

        // Not grouped, so call windowAll
        SingleOutputStreamOperator<Integer> nums = lines.map(Integer::parseInt);
        // Session window with a 5-second gap
        AllWindowedStream<Integer, TimeWindow> window = nums.windowAll(ProcessingTimeSessionWindows.withGap(Time.seconds(5)));
        SingleOutputStreamOperator<Integer> sum = window.sum(0);
        sum.print();
        env.execute();
    }
}

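Here, if the program receives no data for 5 seconds, the current session window closes and fires; the next record starts a new window.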
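The grouping rule of a session window can be sketched in plain Java. The example assumes the arrival times are already sorted and that a new session starts only when the distance to the previous element is strictly larger than the gap; that boundary choice is an assumption of this sketch. The class and method names are hypothetical.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Hypothetical plain-Java simulation of session windows over sorted timestamps.
class SessionWindowSketch {

    // Returns {start, end} pairs; end = last element time + gap (exclusive).
    static List<long[]> sessions(long[] sortedTimestampsMs, long gapMs) {
        List<long[]> result = new ArrayList<>();
        if (sortedTimestampsMs.length == 0) {
            return result;
        }
        long start = sortedTimestampsMs[0];
        long last = start;
        for (int i = 1; i < sortedTimestampsMs.length; i++) {
            long ts = sortedTimestampsMs[i];
            if (ts - last > gapMs) { // silence longer than the gap: close the current session
                result.add(new long[] {start, last + gapMs});
                start = ts;
            }
            last = ts;
        }
        result.add(new long[] {start, last + gapMs});
        return result;
    }

    public static void main(String[] args) {
        // Gap of 5 seconds: 1000, 3000, 4000 form one session, 12000 starts another
        for (long[] s : sessions(new long[] {1_000L, 3_000L, 4_000L, 12_000L}, 5_000L)) {
            System.out.println(Arrays.toString(s));
        }
        // prints [1000, 9000] and then [12000, 17000]
    }
}
```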

ProcessingSessionWindowDemo

package cn._51doit.flink.day02.window;

import org.apache.flink.api.common.functions.MapFunction;
import org.apache.flink.api.java.tuple.Tuple;
import org.apache.flink.api.java.tuple.Tuple2;
import org.apache.flink.streaming.api.datastream.DataStreamSource;
import org.apache.flink.streaming.api.datastream.KeyedStream;
import org.apache.flink.streaming.api.datastream.SingleOutputStreamOperator;
import org.apache.flink.streaming.api.datastream.WindowedStream;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
import org.apache.flink.streaming.api.windowing.assigners.ProcessingTimeSessionWindows;
import org.apache.flink.streaming.api.windowing.time.Time;
import org.apache.flink.streaming.api.windowing.windows.TimeWindow;

public class ProcessingSessionWindowDemo {

    public static void main(String[] args) throws Exception {
        StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
        DataStreamSource<String> lines = env.socketTextStream("localhost", 8888);

        SingleOutputStreamOperator<Tuple2<String, Integer>> wordAndOne = lines.map(new MapFunction<String, Tuple2<String, Integer>>() {
            @Override
            public Tuple2<String, Integer> map(String value) throws Exception {
                return Tuple2.of(value, 1);
            }
        });

        KeyedStream<Tuple2<String, Integer>, Tuple> keyed = wordAndOne.keyBy(0);
        // Grouped with keyBy (Keyed Stream), so call window
        WindowedStream<Tuple2<String, Integer>, Tuple, TimeWindow> window = keyed
                .window(ProcessingTimeSessionWindows.withGap(Time.seconds(5)));
        SingleOutputStreamOperator<Tuple2<String, Integer>> sum = window.sum(1);
        sum.print();
        env.execute();
    }
}

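4.2.2 Event Time

The principle is the same as above, except that windows are divided by the time at which each event was produced. Because Flink uses ProcessingTime as its default time characteristic here, EventTime has to be set explicitly:

env.setStreamTimeCharacteristic(TimeCharacteristic.EventTime); // use EventTime as the time characteristic

(1) Tumbling window

EventTimeTumblingWindowAllDemo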

package cn._51doit.flink.day02.window;

import org.apache.flink.api.common.functions.MapFunction;
import org.apache.flink.streaming.api.TimeCharacteristic;
import org.apache.flink.streaming.api.datastream.AllWindowedStream;
import org.apache.flink.streaming.api.datastream.DataStreamSource;
import org.apache.flink.streaming.api.datastream.SingleOutputStreamOperator;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
import org.apache.flink.streaming.api.functions.timestamps.BoundedOutOfOrdernessTimestampExtractor;
import org.apache.flink.streaming.api.windowing.assigners.TumblingEventTimeWindows;
import org.apache.flink.streaming.api.windowing.time.Time;
import org.apache.flink.streaming.api.windowing.windows.TimeWindow;

import java.text.ParseException;
import java.text.SimpleDateFormat;
import java.util.Date;

public class EventTimeTumblingWindowAllDemo {

    public static void main(String[] args) throws Exception {
        StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
        // Flink uses ProcessingTime by default; switch to EventTime
        env.setStreamTimeCharacteristic(TimeCharacteristic.EventTime);

        // The event time has to be converted to an epoch timestamp in milliseconds.
        // Sample input:
        // 2020-03-01 00:00:00,1
        // 2020-03-01 00:00:04,2
        // 2020-03-01 00:00:05,3
        DataStreamSource<String> lines = env.socketTextStream("feng05", 8888);

        // Extract the EventTime from each record (allowed delay: 0 seconds)
        SingleOutputStreamOperator<String> dataStreamWithWaterMark = lines.assignTimestampsAndWatermarks(new BoundedOutOfOrdernessTimestampExtractor<String>(Time.seconds(0)) {

            private SimpleDateFormat sdf = new SimpleDateFormat("yyyy-MM-dd HH:mm:ss");

            @Override
            public long extractTimestamp(String element) {
                String[] fields = element.split(",");
                String dateStr = fields[0];
                try {
                    Date date = sdf.parse(dateStr);
                    return date.getTime();
                } catch (ParseException e) {
                    throw new RuntimeException("Failed to parse event time: " + dateStr, e);
                }
            }
        });
        dataStreamWithWaterMark.print();

        SingleOutputStreamOperator<Integer> nums = dataStreamWithWaterMark.map(new MapFunction<String, Integer>() {
            @Override
            public Integer map(String value) throws Exception {
                String[] fields = value.split(",");
                String numStr = fields[1];
                return Integer.parseInt(numStr);
            }
        });
        nums.print();

        // No keyBy grouping (Non-Keyed Stream), so call windowAll
        AllWindowedStream<Integer, TimeWindow> window = nums
                .windowAll(TumblingEventTimeWindows.of(Time.seconds(5)));
        SingleOutputStreamOperator<Integer> sum = window.sum(0);
        sum.print();
        env.execute();
    }
}
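The timestamp extraction step can be tried on its own, outside Flink. The sketch below is an alternative illustration that uses java.time instead of SimpleDateFormat and interprets the text as UTC; the demo above uses the JVM default time zone, so absolute values may differ on a given machine. The class and method names are hypothetical.

```java
import java.time.LocalDateTime;
import java.time.ZoneOffset;
import java.time.format.DateTimeFormatter;

// Hypothetical stand-alone version of the extractTimestamp logic.
class EventTimeParseSketch {

    // DateTimeFormatter is immutable and thread-safe, unlike SimpleDateFormat
    private static final DateTimeFormatter FORMAT = DateTimeFormatter.ofPattern("yyyy-MM-dd HH:mm:ss");

    // Parses the first comma-separated field as an event time and returns epoch milliseconds.
    // Assumption for this sketch: the text is interpreted as UTC.
    static long extractTimestamp(String line) {
        String dateStr = line.split(",")[0];
        return LocalDateTime.parse(dateStr, FORMAT).toInstant(ZoneOffset.UTC).toEpochMilli();
    }

    public static void main(String[] args) {
        long first = extractTimestamp("2020-03-01 00:00:00,1");
        long second = extractTimestamp("2020-03-01 00:00:04,2");
        System.out.println(first);          // prints 1583020800000
        System.out.println(second - first); // prints 4000
    }
}
```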


EventTimeTumblingWindowDemo

package cn._51doit.flink.day02.window;

import org.apache.flink.api.common.functions.MapFunction;
import org.apache.flink.api.java.tuple.Tuple;
import org.apache.flink.api.java.tuple.Tuple2;
import org.apache.flink.streaming.api.TimeCharacteristic;
import org.apache.flink.streaming.api.datastream.*;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
import org.apache.flink.streaming.api.functions.timestamps.BoundedOutOfOrdernessTimestampExtractor;
import org.apache.flink.streaming.api.windowing.assigners.TumblingEventTimeWindows;
import org.apache.flink.streaming.api.windowing.time.Time;
import org.apache.flink.streaming.api.windowing.windows.TimeWindow;

public class EventTimeTumblingWindowDemo {

    public static void main(String[] args) throws Exception {
        StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
        // Flink uses ProcessingTime by default; switch to EventTime
        env.setStreamTimeCharacteristic(TimeCharacteristic.EventTime);

        // The event time must be an epoch timestamp in milliseconds.
        // Sample input:
        // 1000,a
        // 3000,b
        // 4000,c
        DataStreamSource<String> lines = env.socketTextStream("localhost", 8888);

        // Extract the EventTime field as a timestamp (allowed delay: 2 seconds)
        SingleOutputStreamOperator<String> dataStreamWithWaterMark = lines.assignTimestampsAndWatermarks(new BoundedOutOfOrdernessTimestampExtractor<String>(Time.seconds(2)) {
            @Override
            public long extractTimestamp(String element) {
                String[] fields = element.split(",");
                return Long.parseLong(fields[0]);
            }
        });

        SingleOutputStreamOperator<Tuple2<String, Integer>> wordAndOne = dataStreamWithWaterMark.map(new MapFunction<String, Tuple2<String, Integer>>() {
            @Override
            public Tuple2<String, Integer> map(String value) throws Exception {
                String[] fields = value.split(",");
                String word = fields[1];
                return Tuple2.of(word, 1);
            }
        });

        KeyedStream<Tuple2<String, Integer>, Tuple> keyed = wordAndOne.keyBy(0);
        WindowedStream<Tuple2<String, Integer>, Tuple, TimeWindow> window = keyed.window(TumblingEventTimeWindows.of(Time.seconds(5)));
        SingleOutputStreamOperator<Tuple2<String, Integer>> res = window.sum(1);
        res.print();
        env.execute();
    }
}

(2) Sliding window

EventTimeSlidingWindowAllDemo

package cn._51doit.flink.day02.window;

import org.apache.flink.api.common.functions.MapFunction;
import org.apache.flink.streaming.api.TimeCharacteristic;
import org.apache.flink.streaming.api.datastream.AllWindowedStream;
import org.apache.flink.streaming.api.datastream.DataStreamSource;
import org.apache.flink.streaming.api.datastream.SingleOutputStreamOperator;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
import org.apache.flink.streaming.api.functions.timestamps.BoundedOutOfOrdernessTimestampExtractor;
import org.apache.flink.streaming.api.windowing.assigners.SlidingEventTimeWindows;
import org.apache.flink.streaming.api.windowing.time.Time;
import org.apache.flink.streaming.api.windowing.windows.TimeWindow;

public class EventTimeSlidingWindowAllDemo {

    public static void main(String[] args) throws Exception {
        StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
        // Flink uses ProcessingTime by default; switch to EventTime
        env.setStreamTimeCharacteristic(TimeCharacteristic.EventTime);

        // The event time must be an epoch timestamp in milliseconds.
        // Sample input:
        // 1000,1
        // 2000,2
        // 3000,3
        DataStreamSource<String> lines = env.socketTextStream("localhost", 8888);

        // Extract the EventTime field as a timestamp (allowed delay: 0 seconds)
        SingleOutputStreamOperator<String> dataStreamWithWaterMark = lines.assignTimestampsAndWatermarks(new BoundedOutOfOrdernessTimestampExtractor<String>(Time.seconds(0)) {
            @Override
            public long extractTimestamp(String element) {
                String[] fields = element.split(",");
                return Long.parseLong(fields[0]);
            }
        });

        SingleOutputStreamOperator<Integer> nums = dataStreamWithWaterMark.map(new MapFunction<String, Integer>() {
            @Override
            public Integer map(String value) throws Exception {
                String[] fields = value.split(",");
                String numStr = fields[1];
                return Integer.parseInt(numStr);
            }
        });

        // No keyBy grouping (Non-Keyed Stream), so call windowAll.
        // windowAll on a Non-Keyed Stream returns a Non-Keyed Window (AllWindowedStream).
        AllWindowedStream<Integer, TimeWindow> window = nums
                .windowAll(SlidingEventTimeWindows.of(Time.seconds(10), Time.seconds(5)));
        SingleOutputStreamOperator<Integer> sum = window.sum(0);
        sum.print();
        env.execute();
    }
}

EventTimeSlidingWindowDemo

package cn._51doit.flink.day02.window;

import org.apache.flink.api.common.functions.MapFunction;
import org.apache.flink.api.java.tuple.Tuple;
import org.apache.flink.api.java.tuple.Tuple2;
import org.apache.flink.streaming.api.TimeCharacteristic;
import org.apache.flink.streaming.api.datastream.DataStreamSource;
import org.apache.flink.streaming.api.datastream.KeyedStream;
import org.apache.flink.streaming.api.datastream.SingleOutputStreamOperator;
import org.apache.flink.streaming.api.datastream.WindowedStream;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
import org.apache.flink.streaming.api.functions.timestamps.BoundedOutOfOrdernessTimestampExtractor;
import org.apache.flink.streaming.api.windowing.assigners.SlidingEventTimeWindows;
import org.apache.flink.streaming.api.windowing.time.Time;
import org.apache.flink.streaming.api.windowing.windows.TimeWindow;

public class EventTimeSlidingWindowDemo {

    public static void main(String[] args) throws Exception {
        StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
        // Flink uses ProcessingTime by default; switch to EventTime
        env.setStreamTimeCharacteristic(TimeCharacteristic.EventTime);

        // The event time must be an epoch timestamp in milliseconds.
        // Sample input:
        // 1000,a
        // 3000,b
        // 4000,c
        DataStreamSource<String> lines = env.socketTextStream("localhost", 8888);

        // Extract the EventTime field as a timestamp (allowed delay: 0 seconds)
        SingleOutputStreamOperator<String> dataStreamWithWaterMark = lines.assignTimestampsAndWatermarks(new BoundedOutOfOrdernessTimestampExtractor<String>(Time.seconds(0)) {
            @Override
            public long extractTimestamp(String element) {
                String[] fields = element.split(",");
                return Long.parseLong(fields[0]);
            }
        });

        SingleOutputStreamOperator<Tuple2<String, Integer>> wordAndOne = dataStreamWithWaterMark.map(new MapFunction<String, Tuple2<String, Integer>>() {
            @Override
            public Tuple2<String, Integer> map(String value) throws Exception {
                String[] fields = value.split(",");
                String word = fields[1];
                return Tuple2.of(word, 1);
            }
        });

        KeyedStream<Tuple2<String, Integer>, Tuple> keyed = wordAndOne.keyBy(0);
        WindowedStream<Tuple2<String, Integer>, Tuple, TimeWindow> window = keyed.window(SlidingEventTimeWindows.of(Time.seconds(10), Time.seconds(5)));
        SingleOutputStreamOperator<Tuple2<String, Integer>> res = window.sum(1);
        res.print();
        env.execute();
    }
}

(3) Session window

EventTimeSessionWindowAllDemo

package cn._51doit.flink.day02.window;

import org.apache.flink.api.common.functions.MapFunction;
import org.apache.flink.streaming.api.TimeCharacteristic;
import org.apache.flink.streaming.api.datastream.AllWindowedStream;
import org.apache.flink.streaming.api.datastream.DataStreamSource;
import org.apache.flink.streaming.api.datastream.SingleOutputStreamOperator;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
import org.apache.flink.streaming.api.functions.timestamps.BoundedOutOfOrdernessTimestampExtractor;
import org.apache.flink.streaming.api.windowing.assigners.EventTimeSessionWindows;
import org.apache.flink.streaming.api.windowing.time.Time;
import org.apache.flink.streaming.api.windowing.windows.TimeWindow;

public class EventTimeSessionWindowAllDemo {

    public static void main(String[] args) throws Exception {
        StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
        // Flink uses ProcessingTime by default; switch to EventTime
        env.setStreamTimeCharacteristic(TimeCharacteristic.EventTime);

        // The event time must be an epoch timestamp in milliseconds.
        // Sample input:
        // 1000,1
        // 2000,2
        // 3000,3
        DataStreamSource<String> lines = env.socketTextStream("localhost", 8888);

        // Extract the EventTime field as a timestamp (allowed delay: 0 seconds)
        SingleOutputStreamOperator<String> dataStreamWithWaterMark = lines.assignTimestampsAndWatermarks(new BoundedOutOfOrdernessTimestampExtractor<String>(Time.seconds(0)) {
            @Override
            public long extractTimestamp(String element) {
                String[] fields = element.split(",");
                return Long.parseLong(fields[0]);
            }
        });

        SingleOutputStreamOperator<Integer> nums = dataStreamWithWaterMark.map(new MapFunction<String, Integer>() {
            @Override
            public Integer map(String value) throws Exception {
                String[] fields = value.split(",");
                String numStr = fields[1];
                return Integer.parseInt(numStr);
            }
        });

        // No keyBy grouping (Non-Keyed Stream), so call windowAll.
        // windowAll on a Non-Keyed Stream returns a Non-Keyed Window (AllWindowedStream).
        AllWindowedStream<Integer, TimeWindow> window = nums
                .windowAll(EventTimeSessionWindows.withGap(Time.seconds(5)));
        SingleOutputStreamOperator<Integer> sum = window.sum(0);
        sum.print();
        env.execute();
    }
}

EventTimeSessionWindowDemo

package cn._51doit.flink.day02.window;

import org.apache.flink.api.common.functions.MapFunction;
import org.apache.flink.api.java.tuple.Tuple;
import org.apache.flink.api.java.tuple.Tuple2;
import org.apache.flink.streaming.api.TimeCharacteristic;
import org.apache.flink.streaming.api.datastream.DataStreamSource;
import org.apache.flink.streaming.api.datastream.KeyedStream;
import org.apache.flink.streaming.api.datastream.SingleOutputStreamOperator;
import org.apache.flink.streaming.api.datastream.WindowedStream;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
import org.apache.flink.streaming.api.functions.timestamps.BoundedOutOfOrdernessTimestampExtractor;
import org.apache.flink.streaming.api.windowing.assigners.EventTimeSessionWindows;
import org.apache.flink.streaming.api.windowing.time.Time;
import org.apache.flink.streaming.api.windowing.windows.TimeWindow;

public class EventTimeSessionWindowDemo {

    public static void main(String[] args) throws Exception {
        StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
        // Flink uses ProcessingTime by default; switch to EventTime
        env.setStreamTimeCharacteristic(TimeCharacteristic.EventTime);

        // The event time must be an epoch timestamp in milliseconds.
        // Sample input:
        // 1000,a
        // 3000,b
        // 4000,c
        DataStreamSource<String> lines = env.socketTextStream("localhost", 8888);

        // Extract the EventTime field as a timestamp (allowed delay: 0 seconds)
        SingleOutputStreamOperator<String> dataStreamWithWaterMark = lines.assignTimestampsAndWatermarks(new BoundedOutOfOrdernessTimestampExtractor<String>(Time.seconds(0)) {
            @Override
            public long extractTimestamp(String element) {
                String[] fields = element.split(",");
                return Long.parseLong(fields[0]);
            }
        });

        SingleOutputStreamOperator<Tuple2<String, Integer>> wordAndOne = dataStreamWithWaterMark.map(new MapFunction<String, Tuple2<String, Integer>>() {
            @Override
            public Tuple2<String, Integer> map(String value) throws Exception {
                String[] fields = value.split(",");
                String word = fields[1];
                return Tuple2.of(word, 1);
            }
        });

        KeyedStream<Tuple2<String, Integer>, Tuple> keyed = wordAndOne.keyBy(0);
        WindowedStream<Tuple2<String, Integer>, Tuple, TimeWindow> window = keyed
                .window(EventTimeSessionWindows.withGap(Time.seconds(5)));
        SingleOutputStreamOperator<Tuple2<String, Integer>> res = window.sum(1);
        res.print();
        env.execute();
    }
}

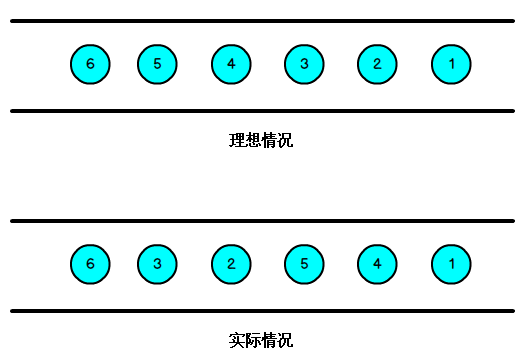

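5. Watermark

In stream processing, an event travels from the place where it is produced, through the source, and on to the operators, and this takes time. In most cases the data reaching an operator arrives in the order in which the events were produced, but network delays, backpressure and similar causes can lead to out-of-order data. Out-of-order means that the order in which Flink receives events is not strictly the order of their Event Time.

This raises a problem. Once data can arrive out of order, a window driven only by eventTime cannot know whether all of its data has arrived, yet it cannot wait indefinitely either. There has to be a mechanism which guarantees that, after a certain time, the window is triggered and computed. That mechanism is the Watermark.

Watermarks exist to handle out-of-order events, and handling them correctly is usually done by combining the Watermark mechanism with windows.

A Watermark in the data stream states that all data with a timestamp smaller than the Watermark has already arrived. For this reason the execution of a window is also triggered by the Watermark.

A Watermark can be understood as a delayed trigger mechanism. We set a delay t for the Watermark; the system keeps track of the largest event time among the data that has arrived, maxEventTime, and assumes that all data with an eventTime smaller than maxEventTime - t has arrived. A window is triggered once maxEventTime - t reaches the last timestamp the window covers, that is, its end time minus 1 ms.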
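The trigger rule can be traced with a small plain-Java model. The sketch assumes the watermark equals maxEventTime - t and that a window fires once the watermark reaches its end time minus 1 ms; the class and method names are hypothetical and only serve this illustration.

```java
// Hypothetical model of a bounded-out-of-orderness watermark and the window trigger rule.
class WatermarkSketch {
    private final long maxDelayMs;                 // the delay t
    private long maxEventTimeMs = Long.MIN_VALUE;  // largest event time seen so far

    WatermarkSketch(long maxDelayMs) {
        this.maxDelayMs = maxDelayMs;
    }

    // Records an event and returns the watermark afterwards.
    long onEvent(long eventTimeMs) {
        maxEventTimeMs = Math.max(maxEventTimeMs, eventTimeMs);
        return currentWatermark();
    }

    long currentWatermark() {
        // Before the first event there is no meaningful watermark
        return maxEventTimeMs == Long.MIN_VALUE ? Long.MIN_VALUE : maxEventTimeMs - maxDelayMs;
    }

    // A window [start, end) fires once the watermark reaches end - 1.
    static boolean windowFires(long watermarkMs, long windowEndMs) {
        return watermarkMs >= windowEndMs - 1;
    }

    public static void main(String[] args) {
        WatermarkSketch wm = new WatermarkSketch(2_000L); // t = 2 seconds
        long windowEnd = 5_000L;                          // window [0, 5000)
        for (long eventTime : new long[] {1_000L, 4_999L, 6_000L, 3_000L, 6_999L}) {
            long watermark = wm.onEvent(eventTime);
            System.out.println(eventTime + " -> watermark " + watermark
                    + ", fires: " + windowFires(watermark, windowEnd));
        }
        // The late event 3000 does not move the watermark; the window fires at event 6999 (watermark 4999)
    }
}
```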

The following code assigns timestamps and generates watermarks:

SingleOutputStreamOperator<String> dataStreamWithWaterMark = lines.assignTimestampsAndWatermarks(new BoundedOutOfOrdernessTimestampExtractor<String>(Time.seconds(0)) { // delay of 0 seconds

    private SimpleDateFormat sdf = new SimpleDateFormat("yyyy-MM-dd HH:mm:ss");

    @Override
    public long extractTimestamp(String element) {
        String[] fields = element.split(",");
        String dateStr = fields[0];
        try {
            Date date = sdf.parse(dateStr);
            return date.getTime();
        } catch (ParseException e) {
            throw new RuntimeException("Failed to parse event time: " + dateStr, e);
        }
    }
});

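In BoundedOutOfOrdernessTimestampExtractor<String>(Time.seconds(0)), the constructor argument is the delay.

A window is left-closed and right-open. For example, a window of length 5 s that starts at 0 covers [0, 5000) in milliseconds, that is, the timestamps 0 through 4999.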
