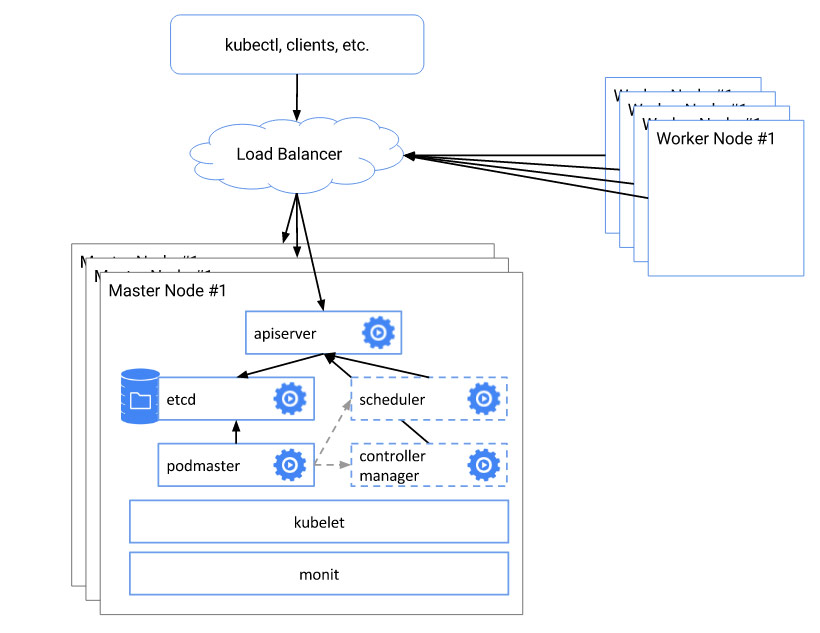

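High-availability configuration for Kubernetes master nodes

Anyone familiar with the Kubernetes architecture knows how central the master node is to the whole cluster. To keep the architecture highly available, Kubernetes provides an HA architecture. Out of interest, and to understand the architecture better, I tried it out on my own computer.

Environment:

CentOS 7.3, Kubernetes version: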

Client Version: version.Info{Major:"", Minor:"", GitVersion:"v1.5.1", GitCommit:"82450d03cb057bab0950214ef122b67c83fb11df", GitTreeState:"clean", BuildDate:"2016-12-14T00:57:05Z", GoVersion:"go1.7.4", Compiler:"gc", Platform:"linux/amd64"}

Server Version: version.Info{Major:"", Minor:"", GitVersion:"v1.5.1", GitCommit:"82450d03cb057bab0950214ef122b67c83fb11df", GitTreeState:"clean", BuildDate:"2016-12-14T00:52:01Z", GoVersion:"go1.7.4", Compiler:"gc", Platform:"linux/amd64"}

Host environment (/etc/hosts):

192.168.0.107 k8s-master1

192.168.0.108 k8s-master2

192.168.0.109 k8s-master3

1. Build the etcd cluster

- Disable SELinux and the firewall

setenforce 0

systemctl stop firewalld

systemctl disable firewalld
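Note that setenforce only changes the running mode until the next reboot. A minimal sketch of making the change persistent follows, assuming the standard CentOS 7 config file /etc/selinux/config; the helper name set_selinux_mode is hypothetical and not part of the original steps.

```shell
#!/bin/bash
# Hypothetical helper: set the SELINUX= line of an SELinux config file.
# $1 = mode (enforcing | permissive | disabled)
# $2 = config file (assumed default: /etc/selinux/config)
set_selinux_mode() {
  local mode="$1"
  local config="${2:-/etc/selinux/config}"
  local tmp
  tmp="$(mktemp)" || return 1
  # Rewrite only the SELINUX= line; SELINUXTYPE= and comments are left alone.
  if sed "s/^SELINUX=.*/SELINUX=${mode}/" "$config" > "$tmp"; then
    cat "$tmp" > "$config"
  fi
  rm -f "$tmp"
}

# Usage on a node, as root (not executed here):
#   set_selinux_mode permissive
```

The file is rewritten through a temporary copy so that the original permissions of the config file are kept.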

- Install packages

yum -y install ntpdate gcc git vim wget

- Configure periodic time synchronization

*/ * * * * /usr/sbin/ntpdate time.windows.com >/dev/null 2>&1

- Download the release package

cd /usr/src

wget https://github.com/coreos/etcd/releases/download/v3.0.15/etcd-v3.0.15-linux-amd64.tar.gz

tar -xvf etcd-v3.0.15-linux-amd64.tar.gz

cp etcd-v3.0.15-linux-amd64/etcd* /usr/local/bin
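The download steps can be parameterized so that the version string is written only once. This is a sketch under the assumption that the release URL keeps the pattern used above; the helper names etcd_tarball and etcd_release_url are hypothetical.

```shell
#!/bin/bash
# Hypothetical helpers: derive the archive name and URL from one version string.
etcd_tarball() {
  printf 'etcd-%s-linux-amd64.tar.gz\n' "$1"
}

etcd_release_url() {
  printf 'https://github.com/coreos/etcd/releases/download/%s/%s\n' \
    "$1" "$(etcd_tarball "$1")"
}

# Usage on a node (not executed here):
#   ver=v3.0.15
#   cd /usr/src
#   wget "$(etcd_release_url "$ver")"
#   tar -xvf "$(etcd_tarball "$ver")"
#   cp "etcd-${ver}-linux-amd64"/etcd* /usr/local/bin
```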

- Write a deploy-etcd.sh script and run it

#!/bin/bash

# Copyright The Kubernetes Authors.

#

# Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

## Create etcd.conf, etcd.service, and start etcd service.

ETCD_NAME=`hostname`

ETCD_DATA_DIR=/var/lib/etcd

ETCD_CONF_DIR=/etc/etcd

ETCD_CLUSTER='k8s-master1=http://192.168.0.107:2380,k8s-master2=http://192.168.0.108:2380,k8s-master3=http://192.168.0.109:2380'

ETCD_LISTEN_IP=`ip addr show enp0s3 |grep -w 'inet' |awk -F " " '{print $2}' |awk -F "/" '{print $1}'`

#useradd etcd

mkdir -p $ETCD_DATA_DIR $ETCD_CONF_DIR

chown -R etcd.etcd $ETCD_DATA_DIR

cat <<EOF >/etc/etcd/etcd.conf

# [member]

ETCD_NAME=${ETCD_NAME}

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

#ETCD_WAL_DIR=""

ETCD_SNAPSHOT_COUNT=""

ETCD_HEARTBEAT_INTERVAL=""

ETCD_ELECTION_TIMEOUT=""

ETCD_LISTEN_PEER_URLS="http://${ETCD_LISTEN_IP}:2380"

ETCD_LISTEN_CLIENT_URLS="http://${ETCD_LISTEN_IP}:2379"

ETCD_MAX_SNAPSHOTS=""

ETCD_MAX_WALS=""

#ETCD_CORS=""

#

#[cluster]

ETCD_INITIAL_ADVERTISE_PEER_URLS="http://${ETCD_LISTEN_IP}:2380"

# if you use different ETCD_NAME (e.g. test), set ETCD_INITIAL_CLUSTER value for this name, i.e. "test=http://..."

ETCD_INITIAL_CLUSTER="${ETCD_CLUSTER}"

ETCD_INITIAL_CLUSTER_STATE="new"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_ADVERTISE_CLIENT_URLS="http://${ETCD_LISTEN_IP}:2379"

#ETCD_DISCOVERY=""

#ETCD_DISCOVERY_SRV=""

#ETCD_DISCOVERY_FALLBACK="proxy"

#ETCD_DISCOVERY_PROXY=""

#ETCD_STRICT_RECONFIG_CHECK="false"

#ETCD_AUTO_COMPACTION_RETENTION=""

#

#[proxy]

#ETCD_PROXY="off"

#ETCD_PROXY_FAILURE_WAIT=""

#ETCD_PROXY_REFRESH_INTERVAL=""

#ETCD_PROXY_DIAL_TIMEOUT=""

#ETCD_PROXY_WRITE_TIMEOUT=""

#ETCD_PROXY_READ_TIMEOUT=""

#

#[security]

#ETCD_CERT_FILE=""

#ETCD_KEY_FILE=""

#ETCD_CLIENT_CERT_AUTH="false"

#ETCD_TRUSTED_CA_FILE=""

#ETCD_AUTO_TLS="false"

#ETCD_PEER_CERT_FILE=""

#ETCD_PEER_KEY_FILE=""

#ETCD_PEER_CLIENT_CERT_AUTH="false"

#ETCD_PEER_TRUSTED_CA_FILE=""

#ETCD_PEER_AUTO_TLS="false"

#

#[logging]

#ETCD_DEBUG="false"

# examples for -log-package-levels etcdserver=WARNING,security=DEBUG

#ETCD_LOG_PACKAGE_LEVELS=""

EOF

cat <<EOF >/usr/lib/systemd/system/etcd.service

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target

[Service]

Type=notify

WorkingDirectory=/var/lib/etcd/

EnvironmentFile=-/etc/etcd/etcd.conf

User=etcd

# set GOMAXPROCS to number of processors

#ExecStart=/bin/bash -c "GOMAXPROCS=$(nproc) /usr/local/bin/etcd --name=\"${ETCD_NAME}\" --data-dir=\"${ETCD_DATA_DIR}\" --listen-client-urls=\"${ETCD_LISTEN_CLIENT_URLS}\""

ExecStart=/bin/bash -c "GOMAXPROCS=$(nproc) /usr/local/bin/etcd"

Restart=on-failure

LimitNOFILE=

[Install]

WantedBy=multi-user.target

EOF
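The ETCD_CLUSTER string in the script is written out by hand. As a small sketch, it could also be assembled from name=ip pairs, assuming every member uses peer port 2380 as in the script; the helper name build_initial_cluster is hypothetical.

```shell
#!/bin/bash
# Hypothetical helper: build the ETCD_INITIAL_CLUSTER value from name=ip pairs.
# Assumes plain HTTP and peer port 2380, matching the script above.
build_initial_cluster() {
  local peer_port=2380
  local result=""
  local pair name ip
  for pair in "$@"; do
    name="${pair%%=*}"   # text before the first '='
    ip="${pair#*=}"      # text after the first '='
    # Prepend a comma only when result already holds an entry.
    result="${result:+${result},}${name}=http://${ip}:${peer_port}"
  done
  printf '%s\n' "$result"
}

# Usage:
#   ETCD_CLUSTER="$(build_initial_cluster \
#     k8s-master1=192.168.0.107 k8s-master2=192.168.0.108 k8s-master3=192.168.0.109)"
```

Each member name must equal the ETCD_NAME that node starts with (the hostname in this setup), otherwise etcd cannot find itself in the initial cluster list.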

- Run the following commands

systemctl daemon-reload

systemctl enable etcd

systemctl restart etcd

etcdctl cluster-health

- The following error appears:

[root@k8s-master1 ~]# etcdctl cluster-health

cluster may be unhealthy: failed to list members

Error: client: etcd cluster is unavailable or misconfigured

error #: dial tcp 127.0.0.1:: getsockopt: connection refused

error #: dial tcp 127.0.0.1:: getsockopt: connection refused

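The cause is that etcdctl connects to the local address (127.0.0.1) by default, while etcd here listens only on the host IP configured in ETCD_LISTEN_CLIENT_URLS. With the endpoints specified explicitly, the output is: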

[root@k8s-master1 ~]# etcdctl -endpoints "http://192.168.0.107:2379,http://192.168.0.108:2379,http://192.168.0.109:2379" cluster-health

member 1578ba76eb3abe05 is healthy: got healthy result from http://192.168.0.108:2379

member beb7fd3596aa26eb is healthy: got healthy result from http://192.168.0.109:2379

member e6bdc10e37172e00 is healthy: got healthy result from http://192.168.0.107:2379

cluster is healthy
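The health output above can also be checked by a script rather than by eye. The following is a sketch that assumes the output format shown here (one "member ... is healthy" line per member and a final "cluster is healthy" line); the helper name check_cluster_health is hypothetical.

```shell
#!/bin/bash
# Hypothetical helper: read `etcdctl cluster-health` output on stdin and succeed
# only if the cluster reports healthy and exactly $1 members are healthy.
check_cluster_health() {
  local expected="$1"
  local output healthy
  output="$(cat)"
  # Count the per-member "is healthy" lines.
  healthy="$(printf '%s\n' "$output" | grep -c '^member .* is healthy: ')"
  # Require the final summary line as well.
  printf '%s\n' "$output" | grep -qx 'cluster is healthy' || return 1
  [ "$healthy" -eq "$expected" ]
}

# Usage on a cluster node (not executed here):
#   etcdctl -endpoints "http://192.168.0.107:2379,http://192.168.0.108:2379,http://192.168.0.109:2379" \
#     cluster-health | check_cluster_health 3 && echo "etcd OK"
```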

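2. Build the Kubernetes HA environment

- By default, the master and etcd are deployed on the same machine; three machines in total provide mutual redundancy

- The offline installation media can be downloaded directly from https://pan.baidu.com/s/1i5jusip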

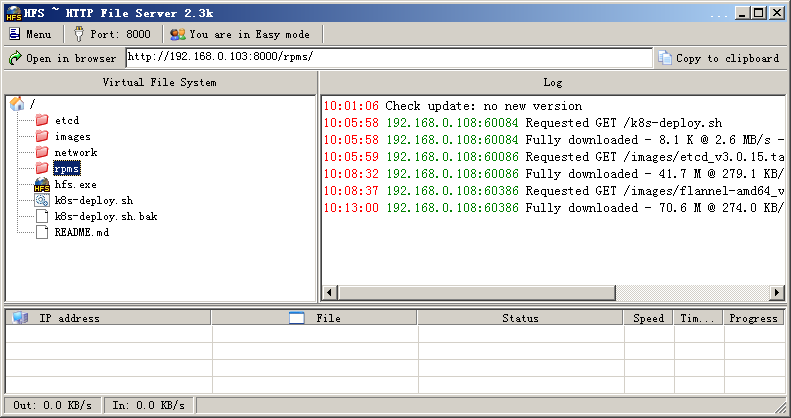

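- Start an HTTP server with HFS; the nodes being installed pull images and RPMs from it

First download HFS. My host machine runs Windows 7, so I downloaded the Windows version. After starting it, drag the downloaded directories and files into the HFS window, as shown in the figure.

Turn off the Windows firewall.

Modify the k8s-deploy.sh script; the changes are as follows: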

HTTP_SERVER=192.168.0.103:

.

.

.

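# On the master side there is no need to change this to an IP; keeping the original $(master_ip) works. On the replica side it must be changed; the exact reason still needs investigation.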

kube::copy_master_config()

{

local master_ip=$(etcdctl get ha_master)

mkdir -p /etc/kubernetes

scp -r root@192.168.0.107:/etc/kubernetes/* /etc/kubernetes/

systemctl start kubelet

}

- Master node

curl -L http://192.168.0.101:8000/k8s-deploy.sh | bash -s master \

--api-advertise-addresses=192.168.0.110 \

--external-etcd-endpoints=http://192.168.0.107:2379,http://192.168.0.108:2379,http://192.168.0.109:2379

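- 192.168.0.101:8000 is my HTTP server; remember to change the HTTP_SERVER variable in k8s-deploy.sh as well

- --api-advertise-addresses is the VIP address

- --external-etcd-endpoints is the address of your etcd cluster; with it set, kubeadm no longer generates the etcd.yaml manifest file

- Record the token from the output; the minion side needs it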
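Instead of copying the token by hand, it can be pulled out of the saved deployment output. This is a sketch that assumes the "[tokens] Generated token" line format printed by this kubeadm version; the helper name extract_token and the log file name deploy.log are hypothetical.

```shell
#!/bin/bash
# Hypothetical helper: print the first kubeadm token found in the log on stdin.
# Assumes a line of the form: [tokens] Generated token: "<token>"
extract_token() {
  sed -n 's/^\[tokens\] Generated token: "\([^"]*\)".*/\1/p' | head -n 1
}

# Usage on the first master (not executed here):
#   curl -L http://192.168.0.101:8000/k8s-deploy.sh | bash -s master ... 2>&1 | tee deploy.log
#   token="$(extract_token < deploy.log)"
```

If no matching line is found, the function prints nothing, so an empty result means the token still has to be located manually.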

Output after the run:

[init] Using Kubernetes version: v1.5.1

[tokens] Generated token: "e5029f.020306948a9c120f"

[certificates] Generated Certificate Authority key and certificate.

[certificates] Generated API Server key and certificate

[certificates] Generated Service Account signing keys

[certificates] Created keys and certificates in "/etc/kubernetes/pki"

[kubeconfig] Wrote KubeConfig file to disk: "/etc/kubernetes/kubelet.conf"

[kubeconfig] Wrote KubeConfig file to disk: "/etc/kubernetes/admin.conf"

[apiclient] Created API client, waiting for the control plane to become ready

[apiclient] All control plane components are healthy after 23.199910 seconds

[apiclient] Waiting for at least one node to register and become ready

[apiclient] First node is ready after 0.512201 seconds

[apiclient] Creating a test deployment

[apiclient] Test deployment succeeded

[token-discovery] Created the kube-discovery deployment, waiting for it to become ready

[token-discovery] kube-discovery is ready after 2.004430 seconds

[addons] Created essential addon: kube-proxy

[addons] Created essential addon: kube-dns

Your Kubernetes master has initialized successfully!

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

http://kubernetes.io/docs/admin/addons/

You can now join any number of machines by running the following on each node:

kubeadm join --token=e5029f.020306948a9c120f 192.168.0.110

+ echo -e '\033[32m 赶紧找地方记录上面的token! \033[0m'

赶紧找地方记录上面的token!

+ kubectl apply -f http://192.168.0.101:8000/network/kube-flannel.yaml --namespace=kube-system

serviceaccount "flannel" created

configmap "kube-flannel-cfg" created

daemonset "kube-flannel-ds" created

+ kubectl get po --all-namespaces

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system dummy--fjhbc / Running 7s

kube-system kube-discovery--ks84b / Running 6s

kube-system kube-dns--zg6b8 / ContainerCreating 3s

kube-system kube-flannel-ds-jzq98 / Pending 1s

kube-system kube-proxy-c0mx7 / ContainerCreating 3s

- Replica master node

curl -L http://192.168.0.103:8000/k8s-deploy.sh | bash -s replica \

--api-advertise-addresses=192.168.0.110 \

--external-etcd-endpoints=http://192.168.0.107:2379,http://192.168.0.108:2379,http://192.168.0.109:2379

Output:

++ hostname

+ grep k8s-master2

k8s-master2 Ready 30s

++ hostname

+ kubectl label node k8s-master2 kubeadm.alpha.kubernetes.io/role=master

node "k8s-master2" labeled

After the three-node HA cluster is built, run the following commands to check its state:

[root@k8s-master2 ~]# kubectl get nodes

NAME STATUS AGE

k8s-master1 Ready,master 11h

k8s-master2 Ready,master 5m

k8s-master3 Ready,master 9h

[root@k8s-master2 ~]# kubectl get pods --all-namespaces

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system dummy--fjhbc / Running 11h

kube-system kube-apiserver-k8s-master1 / Running 11h

kube-system kube-apiserver-k8s-master2 / Running 5m

kube-system kube-apiserver-k8s-master3 / Running 9h

kube-system kube-controller-manager-k8s-master1 / Running 11h

kube-system kube-controller-manager-k8s-master2 / Running 5m

kube-system kube-controller-manager-k8s-master3 / Running 9h

kube-system kube-discovery--ks84b / Running 11h

kube-system kube-dns--zg6b8 / Running 11h

kube-system kube-flannel-ds-37zsp / Running 9h

kube-system kube-flannel-ds-8kwnh / Running 5m

kube-system kube-flannel-ds-jzq98 / Running 11h

kube-system kube-proxy-c0mx7 / Running 11h

kube-system kube-proxy-r9nmw / Running 9h

kube-system kube-proxy-rbxf7 / Running 5m

kube-system kube-scheduler-k8s-master1 / Running 11h

kube-system kube-scheduler-k8s-master2 / Running 5m

kube-system kube-scheduler-k8s-master3 / Running 9h
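The node listing above can be turned into a quick scripted check. The sketch below counts nodes whose STATUS column is exactly "Ready,master", which is the format this kubectl version prints; the helper name count_ready_masters is hypothetical.

```shell
#!/bin/bash
# Hypothetical helper: read `kubectl get nodes` output on stdin and print the
# number of nodes whose STATUS column is exactly "Ready,master".
count_ready_masters() {
  # NR > 1 skips the header row; n + 0 prints 0 when nothing matched.
  awk 'NR > 1 && $2 == "Ready,master" { n++ } END { print n + 0 }'
}

# Usage on a master (not executed here):
#   [ "$(kubectl get nodes | count_ready_masters)" -eq 3 ] && echo "all masters ready"
```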

Shut down master1 and verify the VIP:

bytes from 192.168.0.110: icmp_seq= ttl= time=0.049 ms

bytes from 192.168.0.110: icmp_seq= ttl= time=0.050 ms

bytes from 192.168.0.110: icmp_seq= ttl= time=0.049 ms

bytes from 192.168.0.110: icmp_seq= ttl= time=0.049 ms

bytes from 192.168.0.110: icmp_seq= ttl= time=0.049 ms

bytes from 192.168.0.110: icmp_seq= ttl= time=0.099 ms

bytes from 192.168.0.110: icmp_seq= ttl= time=0.048 ms
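During a failover test it can help to poll the VIP in a loop rather than watch ping output. The following is a generic retry sketch, not part of the original procedure; the helper name wait_until and the retry counts in the usage line are assumptions.

```shell
#!/bin/bash
# Hypothetical helper: run a command up to <tries> times, sleeping <delay>
# seconds between attempts; returns 0 as soon as the command succeeds.
wait_until() {
  local tries="$1"
  local delay="$2"
  local i
  shift 2
  for ((i = 1; i <= tries; i++)); do
    if "$@" >/dev/null 2>&1; then
      return 0
    fi
    # Do not sleep after the final failed attempt.
    if ((i < tries)); then
      sleep "$delay"
    fi
  done
  return 1
}

# Usage after shutting down master1 (not executed here):
#   wait_until 30 1 ping -c 1 -W 1 192.168.0.110 && echo "VIP still reachable"
```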

- Minion node

curl -L http://192.168.0.103:8000/k8s-deploy.sh | bash -s join --token=e5029f.020306948a9c120f 192.168.0.110

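- The token is generated by the first master node

- 192.168.0.110 is the floating VIP

- Because of limited resources, I did not verify the minion node deployment

The verification is not finished; to be continued.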
