iSCSI Gateway Management - Storage6

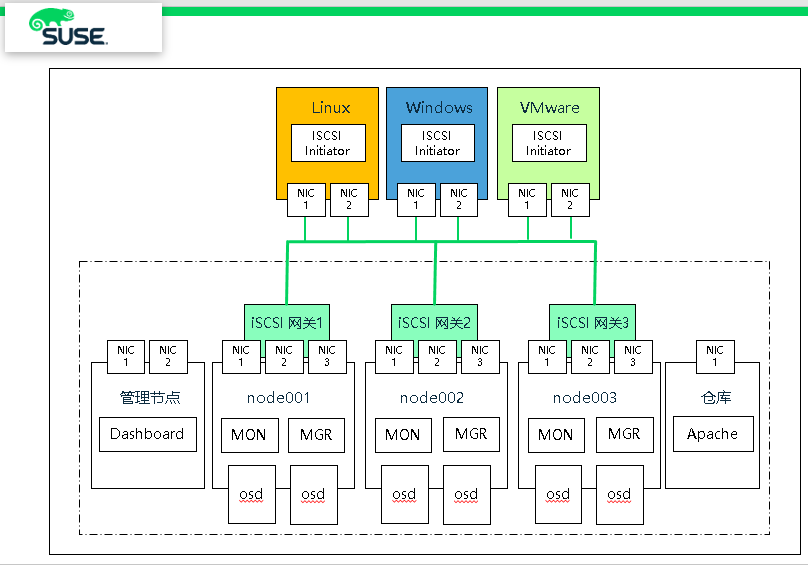

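The iSCSI gateway integrates Ceph storage with the iSCSI standard to provide a highly available (HA) iSCSI target that exports RADOS Block Device (RBD) images as SCSI disks. The iSCSI protocol lets clients (initiators) send SCSI commands to SCSI storage devices (targets) over a TCP/IP network, which allows heterogeneous clients to access the Ceph storage cluster.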

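Each iSCSI gateway runs the Linux IO target kernel subsystem (LIO) to provide iSCSI protocol support. LIO uses a user-space passthrough (TCMU) to interact with Ceph's librbd library and expose RBD images to iSCSI clients. With Ceph's iSCSI gateway you can run a fully integrated block storage infrastructure that has the features and benefits of a conventional storage area network (SAN).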

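Is RBD supported as a VMware ESXi datastore?

(1) At present, RBD cannot be used directly as a datastore.

(2) iSCSI is supported as a datastore and can provide storage for VMware ESXi virtual machines, which makes it a cost-effective choice.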

1. Create the pool and images

(1) Create the pool

# ceph osd pool create iscsi-images replicated

# ceph osd pool application enable iscsi-images rbd

(2) Create the images

# rbd --pool iscsi-images create --size= 'iscsi-gateway-image001'

# rbd --pool iscsi-images create --size= 'iscsi-gateway-image002'

# rbd --pool iscsi-images create --size= 'iscsi-gateway-image003'

# rbd --pool iscsi-images create --size= 'iscsi-gateway-image004'

(3) List the images

# rbd ls -p iscsi-images

iscsi-gateway-image001

iscsi-gateway-image002

iscsi-gateway-image003

iscsi-gateway-image004
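The four `rbd create` commands above differ only in the image number, so they can be generated by a loop. The following is a minimal sketch, not the author's procedure: the `SIZE` value and the `DRY_RUN` switch are assumptions added for illustration (the image sizes are not stated above), and by default the script only prints the commands.

```shell
#!/bin/sh
# Sketch: generate the rbd create commands for a numbered series of images.
# POOL and the image name prefix come from the steps above; SIZE and DRY_RUN
# are hypothetical knobs added for illustration.
POOL="iscsi-images"
SIZE="${SIZE:-2G}"        # assumption: replace with the size you actually need
DRY_RUN="${DRY_RUN:-1}"   # 1 = only print the commands, 0 = run them

# Print the zero-padded image name for index $1 (1 -> iscsi-gateway-image001).
image_name() {
    printf 'iscsi-gateway-image%03d\n' "$1"
}

# Create (or, in dry-run mode, print the command for) images $1 through $2.
create_images() {
    i="$1"
    while [ "$i" -le "$2" ]; do
        name="$(image_name "$i")"
        if [ "$DRY_RUN" = "1" ]; then
            echo "rbd --pool $POOL create --size $SIZE $name"
        else
            rbd --pool "$POOL" create --size "$SIZE" "$name" || return 1
        fi
        i=$((i + 1))
    done
}

create_images 1 4
```

Running it with `DRY_RUN=0` executes the commands instead of printing them.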

2. Install the iSCSI gateway with DeepSea

(1) To install on node001 and node002, edit the policy.cfg file

vim /srv/pillar/ceph/proposals/policy.cfg

......

# IGW

role-igw/cluster/node00[-]*.sls

......

(2) Run stage 2 and stage 4

# salt-run state.orch ceph.stage.2

# salt 'node001*' pillar.items

public_network:

192.168.2.0/

roles:

- mon

- mgr

- storage

- igw

time_server:

admin.example.com

# salt-run state.orch ceph.stage.4

3. Install the iSCSI gateway manually

(1) Install the iSCSI packages on node003

# zypper -n in -t pattern ceph_iscsi

# zypper -n in tcmu-runner tcmu-runner-handler-rbd \

ceph-iscsi patterns-ses-ceph_iscsi python3-Flask python3-click python3-configshell-fb \

python3-itsdangerous python3-netifaces python3-rtslib-fb \

python3-targetcli-fb python3-urwid targetcli-fb-common

(2) On the admin node, create the key and copy it to node003

# ceph auth add client.igw.node003 mon 'allow *' osd 'allow *' mgr 'allow r'

# ceph auth get client.igw.node003

client.igw.node003

key: AQC0eotdAAAAABAASZrZH9KEo0V0WtFTCW9AHQ==

caps: [mgr] allow r

caps: [mon] allow *

caps: [osd] allow *

# ceph auth get client.igw.node003 >> /etc/ceph/ceph.client.igw.node003.keyring

# scp /etc/ceph/ceph.client.igw.node003.keyring node003:/etc/ceph

(3) Start the service on node003

# systemctl start tcmu-runner.service

# systemctl enable tcmu-runner.service

(4) Create the configuration file on node003

# vim /etc/ceph/iscsi-gateway.cfg

[config]

cluster_client_name = client.igw.node003

pool = iscsi-images

trusted_ip_list = 192.168.2.42,192.168.2.40,192.168.2.41

minimum_gateways =

fqdn_enabled=true

# Additional API configuration options are as follows, defaults shown.

api_port =

api_user = admin

api_password = admin

api_secure = false

# Log level

logger_level = WARNING
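Because a missing or empty setting in this file is easy to overlook, it can help to check the keys before starting the API service. The sketch below is an illustration only: `check_gateway_cfg` is a hypothetical helper, not part of ceph-iscsi, and it checks nothing beyond whether each named key has a non-empty value.

```shell
#!/bin/sh
# Sketch: report keys that are missing or empty in an iscsi-gateway.cfg file.
# The key names are the ones used in the file above; check_gateway_cfg is a
# hypothetical helper, not part of ceph-iscsi.
# Usage: check_gateway_cfg FILE KEY...
check_gateway_cfg() {
    cfg="$1"
    shift
    missing=""
    for key in "$@"; do
        # Take the text after the first "=" on the line that sets $key, trimmed.
        value="$(awk -F= -v k="$key" '
            { name = $1; gsub(/[ \t]/, "", name) }
            name == k && index($0, "=") > 0 {
                v = substr($0, index($0, "=") + 1)
                gsub(/^[ \t]+|[ \t]+$/, "", v)
                print v
                exit
            }' "$cfg")"
        [ -n "$value" ] || missing="$missing $key"
    done
    if [ -n "$missing" ]; then
        echo "missing or empty:$missing"
        return 1
    fi
    echo "ok"
}

# Example call (path as in the step above):
# check_gateway_cfg /etc/ceph/iscsi-gateway.cfg cluster_client_name pool trusted_ip_list
```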

(5) Start the RBD target service

# systemctl start rbd-target-api.service

# systemctl enable rbd-target-api.service

(6) Show the configuration

# gwcli info

HTTP mode : http

Rest API port :

Local endpoint : http://localhost:5000/api

Local Ceph Cluster : ceph

2ndary API IP's : 192.168.2.42,192.168.2.40,192.168.2.41

# gwcli ls

o- / ...................................................................... [...]

o- cluster ...................................................... [Clusters: ]

| o- ceph ......................................................... [HEALTH_OK]

| o- pools ....................................................... [Pools: ]

| | o- iscsi-images ........ [(x3), Commit: .00Y/15718656K (%), Used: 192K]

| o- topology ............................................. [OSDs: ,MONs: ]

o- disks .................................................... [.00Y, Disks: ]

o- iscsi-targets ............................ [DiscoveryAuth: None, Targets: ]

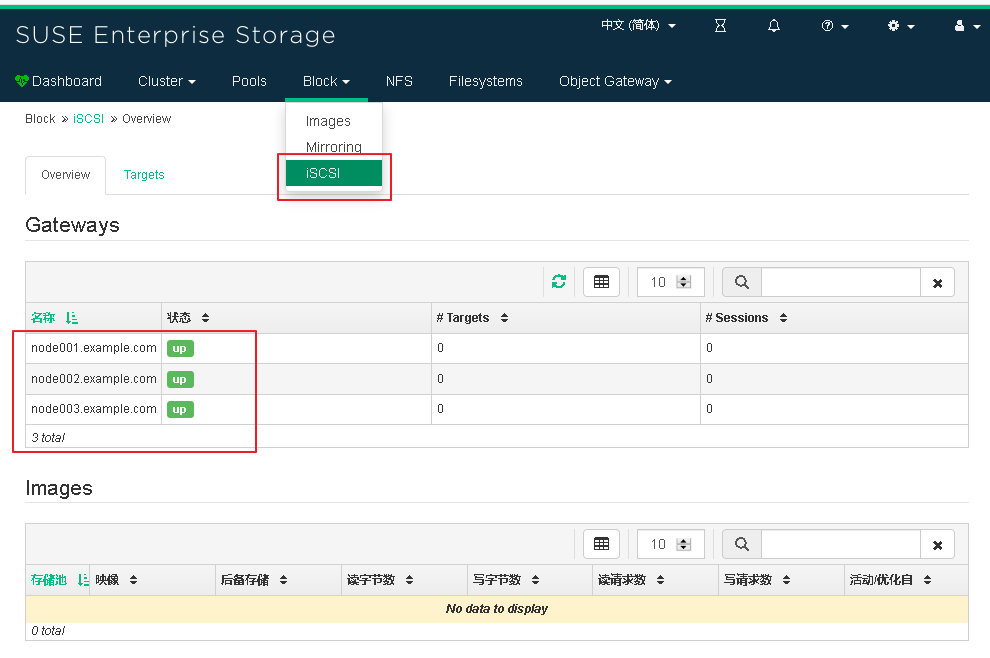

4. Add the iSCSI gateway to the Dashboard

(1) On the admin node, list the Dashboard iSCSI gateways

admin:~ # ceph dashboard iscsi-gateway-list

{"gateways": {"node002.example.com": {"service_url": "http://admin:admin@192.168.2.41:5000"},

"node001.example.com": {"service_url": "http://admin:admin@192.168.2.40:5000"}}}

(2) Add the iSCSI gateway

# ceph dashboard iscsi-gateway-add http://admin:admin@192.168.2.42:5000
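The service URL passed to `iscsi-gateway-add` appears to be composed from the API settings in iscsi-gateway.cfg (`api_user`, `api_password`, `api_port`) plus the gateway address. A small sketch of that composition follows; `gateway_url` is a hypothetical helper, and mapping `api_secure = true` to `https` is an assumption.

```shell
#!/bin/sh
# Sketch: compose the service URL for `ceph dashboard iscsi-gateway-add`
# from the API settings in iscsi-gateway.cfg. gateway_url is a hypothetical helper.
# Arguments: api_user api_password address api_port api_secure(true|false)
gateway_url() {
    scheme="http"
    if [ "$5" = "true" ]; then
        scheme="https"   # assumption: api_secure = true means the API is served over TLS
    fi
    printf '%s://%s:%s@%s:%s\n' "$scheme" "$1" "$2" "$3" "$4"
}

gateway_url admin admin 192.168.2.42 5000 false
# prints: http://admin:admin@192.168.2.42:5000
```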

# ceph dashboard iscsi-gateway-list

{"gateways": {"node002.example.com": {"service_url": "http://admin:admin@192.168.2.41:5000"},

"node001.example.com": {"service_url": "http://admin:admin@192.168.2.40:5000"},

"node003.example.com": {"service_url": "http://admin:admin@192.168.2.42:5000"}}}

(3) Log in to the Dashboard to view the iSCSI gateways

5. Export RBD Images via iSCSI

(1) Create the iSCSI target name

# gwcli

gwcli > /> cd /iscsi-targets

gwcli > /iscsi-targets> create iqn.2019-10.com.suse-iscsi.iscsi01.x86:iscsi-gateway01
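The target name follows the iSCSI qualified name layout `iqn.<yyyy-mm>.<reversed domain>[:<identifier>]`. As a rough pre-check before typing a name into gwcli, a pattern match such as the one below can catch obvious mistakes. This is a sketch under assumptions: `is_iqn` is a hypothetical helper, the pattern is deliberately simple, and gwcli applies its own validation.

```shell
#!/bin/sh
# Sketch: check that a string looks like an iSCSI qualified name,
# i.e. iqn.<yyyy-mm>.<reversed domain>[:<identifier>].
# is_iqn is a hypothetical helper; the pattern is a simplification.
is_iqn() {
    printf '%s\n' "$1" |
        grep -Eq '^iqn\.[0-9]{4}-(0[1-9]|1[0-2])\.[a-z0-9]([a-z0-9.-]*[a-z0-9])?(:[^[:space:]]+)?$'
}

if is_iqn "iqn.2019-10.com.suse-iscsi.iscsi01.x86:iscsi-gateway01"; then
    echo "valid"
fi
```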

(2) Add the iSCSI gateways

gwcli > /iscsi-targets> cd iqn.2019-10.com.suse-iscsi.iscsi01.x86:iscsi-gateway01/gateways

/iscsi-target...tvol/gateways> create node001.example.com 172.200.50.40

/iscsi-target...tvol/gateways> create node002.example.com 172.200.50.41

/iscsi-target...tvol/gateways> create node003.example.com 172.200.50.42

/iscsi-target...ay01/gateways> ls

o- gateways ......................................................... [Up: 3/3, Portals: 3]

o- node001.example.com ............................................. [172.200.50.40 (UP)]

o- node002.example.com ............................................. [172.200.50.41 (UP)]

o- node003.example.com ............................................. [172.200.50.42 (UP)]

Note: define each gateway by its hostname in the same form as above; otherwise the command fails, for example:

/iscsi-target...tvol/gateways> create node002 172.200.50.41

The first gateway defined must be the local machine

(3) Attach the RBD images

/iscsi-target...tvol/gateways> cd /disks

/disks> attach iscsi-images/iscsi-gateway-image001

/disks> attach iscsi-images/iscsi-gateway-image002

(4) Map the RBD images to the target

/disks> cd /iscsi-targets/iqn.2019-10.com.suse-iscsi.iscsi01.x86:iscsi-gateway01/disks

/iscsi-target...teway01/disks> add iscsi-images/iscsi-gateway-image001

/iscsi-target...teway01/disks> add iscsi-images/iscsi-gateway-image002

(5) Disable authentication

gwcli > /> cd /iscsi-targets/iqn.2019-10.com.suse-iscsi.iscsi01.x86:iscsi-gateway01/hosts

/iscsi-target...teway01/hosts> auth disable_acl

/iscsi-target...teway01/hosts> exit

(6) View the configuration

node001:~ # gwcli ls

o- / ............................................................................... [...]

o- cluster ............................................................... [Clusters: 1]

| o- ceph .................................................................. [HEALTH_OK]

| o- pools ................................................................ [Pools: 1]

| | o- iscsi-images .................. [(x3), Commit: 6G/15717248K (40%), Used: 1152K]

| o- topology ...................................................... [OSDs: 6,MONs: 3]

o- disks ................................................................ [6G, Disks: 2]

| o- iscsi-images .................................................. [iscsi-images (6G)]

| o- iscsi-gateway-image001 ............... [iscsi-images/iscsi-gateway-image001 (2G)]

| o- iscsi-gateway-image002 ............... [iscsi-images/iscsi-gateway-image002 (4G)]

o- iscsi-targets ..................................... [DiscoveryAuth: None, Targets: 1]

o- iqn.2019-10.com.suse-iscsi.iscsi01.x86:iscsi-gateway01 .............. [Gateways: 3]

o- disks ................................................................ [Disks: 2]

| o- iscsi-images/iscsi-gateway-image001 .............. [Owner: node001.example.com]

| o- iscsi-images/iscsi-gateway-image002 .............. [Owner: node002.example.com]

o- gateways .................................................. [Up: 3/3, Portals: 3]

| o- node001.example.com ...................................... [172.200.50.40 (UP)]

| o- node002.example.com ...................................... [172.200.50.41 (UP)]

| o- node003.example.com ...................................... [172.200.50.42 (UP)]

o- host-groups ........................................................ [Groups : 0]

o- hosts .................................................... [Hosts: 0: Auth: None]

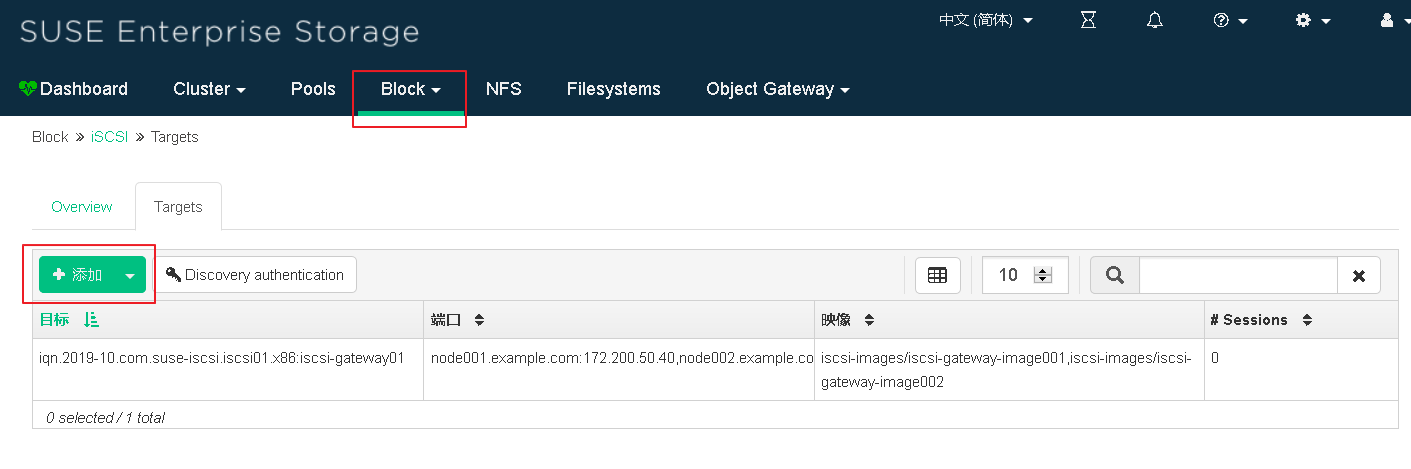

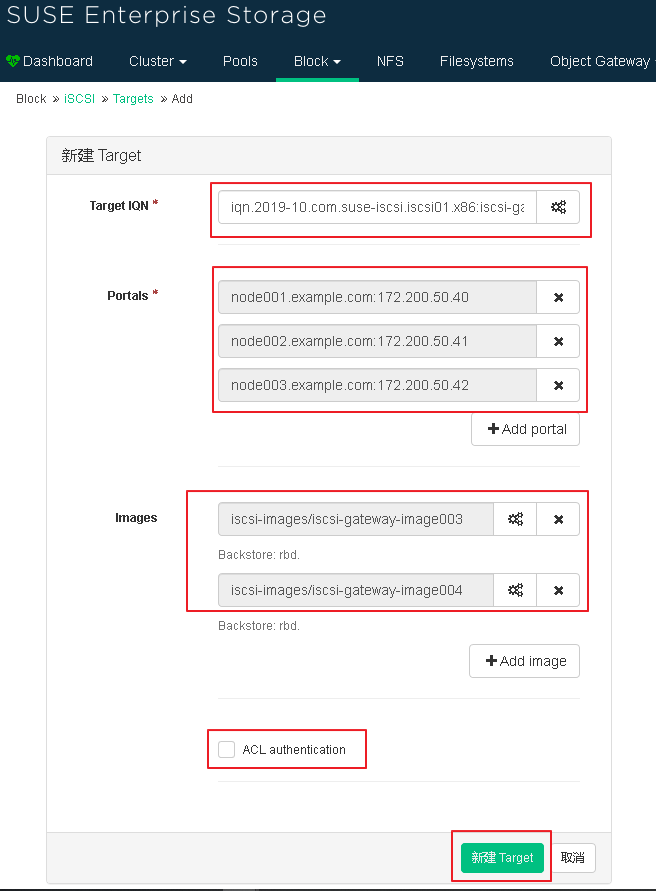

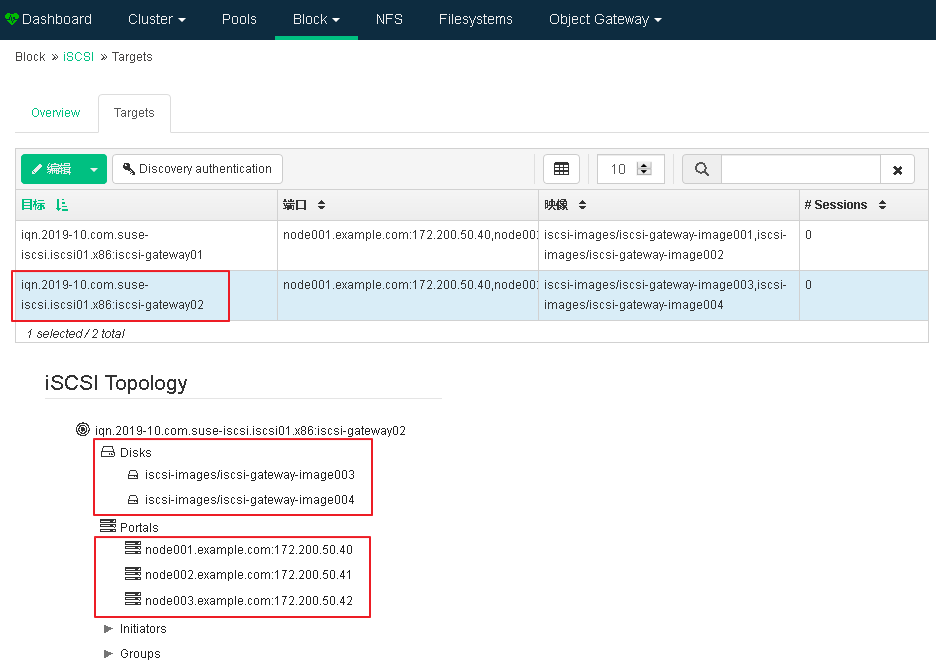

6. Export RBD images using the Dashboard

(1) Add an iSCSI target

(2) Enter the target IQN, then add the portals and images

(3) View the newly added iSCSI target

7. Linux client access

(1) Start the iscsid service

- SLES or RHEL

# systemctl start iscsid.service

# systemctl enable iscsid.service

- Debian or Ubuntu

# systemctl start open-iscsi

(2) Discover and connect to targets

# iscsiadm -m discovery -t st -p 172.200.50.40

172.200.50.40:, iqn.-.com.suse-iscsi.iscsi01.x86:iscsi-gateway01

172.200.50.41:, iqn.-.com.suse-iscsi.iscsi01.x86:iscsi-gateway01

172.200.50.42:, iqn.-.com.suse-iscsi.iscsi01.x86:iscsi-gateway01

172.200.50.40:, iqn.-.com.suse-iscsi.iscsi01.x86:iscsi-gateway02

172.200.50.41:, iqn.-.com.suse-iscsi.iscsi01.x86:iscsi-gateway02

172.200.50.42:, iqn.-.com.suse-iscsi.iscsi01.x86:iscsi-gateway02
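Discovery returns one line per portal and target, in the form `<ip>:<port>,<tpgt> <iqn>`, so the same IQN appears once for every gateway. To see at a glance which targets exist and how many portals advertise each, the output can be summarized as sketched below. `summarize_targets` is a hypothetical helper, and the port and portal group tag in the sample input are placeholders, not values taken from the output above.

```shell
#!/bin/sh
# Sketch: list each distinct target IQN from `iscsiadm -m discovery` output
# together with the number of portals that advertise it.
# Input lines have the form "<ip>:<port>,<tpgt> <iqn>".
summarize_targets() {
    awk 'NF >= 2 { count[$2]++ }
         END { for (t in count) print t, count[t] }' | sort
}

# Sample input; the port (3260) and the portal group tags are placeholders.
printf '%s\n' \
    '172.200.50.40:3260,1 iqn.2019-10.com.suse-iscsi.iscsi01.x86:iscsi-gateway01' \
    '172.200.50.41:3260,2 iqn.2019-10.com.suse-iscsi.iscsi01.x86:iscsi-gateway01' \
    '172.200.50.42:3260,3 iqn.2019-10.com.suse-iscsi.iscsi01.x86:iscsi-gateway01' |
    summarize_targets
```

On a real client the function would be fed directly, for example `iscsiadm -m discovery -t st -p 172.200.50.40 | summarize_targets`.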

(3) Log in to the targets

# iscsiadm -m node -p 172.200.50.40 --login

# iscsiadm -m node -p 172.200.50.41 --login

# iscsiadm -m node -p 172.200.50.42 --login

# lsblk

NAME MAJ:MIN RM SIZE RO TYPE MOUNTPOINT

sda : 25G disk

├─sda1 : 509M part /boot

└─sda2 : .5G part

├─vg00-lvswap : 2G lvm [SWAP]

└─vg00-lvroot : .5G lvm /

sdb : 100G disk

└─vg00-lvroot : .5G lvm /

sdc : 2G disk

sdd : 2G disk

sde : 4G disk

sdf : 4G disk

sdg : 2G disk

sdh : 4G disk

sdi : 2G disk

sdj : 4G disk

sdk : 2G disk

sdl : 2G disk

sdm : 4G disk

sdn : 4G disk

(4) If the lsscsi utility is installed, you can use it to enumerate the SCSI devices available on the system:

# lsscsi

[:::] cd/dvd NECVMWar VMware SATA CD01 1.00 /dev/sr0

[:::] disk VMware, VMware Virtual S 1.0 /dev/sda

[:::] disk VMware, VMware Virtual S 1.0 /dev/sdb

[:::] disk SUSE RBD 4.0 /dev/sdc

[:::] disk SUSE RBD 4.0 /dev/sde

[:::] disk SUSE RBD 4.0 /dev/sdd

[:::] disk SUSE RBD 4.0 /dev/sdf

[:::] disk SUSE RBD 4.0 /dev/sdg

[:::] disk SUSE RBD 4.0 /dev/sdh

[:::] disk SUSE RBD 4.0 /dev/sdi

[:::] disk SUSE RBD 4.0 /dev/sdj

[:::] disk SUSE RBD 4.0 /dev/sdk

[:::] disk SUSE RBD 4.0 /dev/sdm

[:::] disk SUSE RBD 4.0 /dev/sdl

[:::] disk SUSE RBD 4.0 /dev/sdn

(5) Configure multipath

# zypper in multipath-tools

# modprobe dm-multipath

# systemctl start multipathd.service

# systemctl enable multipathd.service

# multipath -ll

36001405863b0b3975c54c5f8d1ce0e01 dm- SUSE,RBD

size=.0G features='2 queue_if_no_path retain_attached_hw_handler' hwhandler='1 alua' wp=rw

|-+- policy='service-time 0' prio=50 status=active

| `- ::: sdh : active ready running <=== only one path is active

`-+- policy='service-time 0' prio= status=enabled

|- ::: sde : active ready running

`- ::: sdm : active ready running

3600140529260bf41c294075beede0c21 dm- SUSE,RBD

size=.0G features='2 queue_if_no_path retain_attached_hw_handler' hwhandler='1 alua' wp=rw

|-+- policy='service-time 0' prio= status=active

| `- ::: sdc : active ready running

`-+- policy='service-time 0' prio= status=enabled

|- ::: sdg : active ready running

`- ::: sdk : active ready running

360014055d00387c82104d338e81589cb dm- SUSE,RBD

size=.0G features='2 queue_if_no_path retain_attached_hw_handler' hwhandler='1 alua' wp=rw

|-+- policy='service-time 0' prio= status=active

| `- ::: sdl : active ready running

`-+- policy='service-time 0' prio= status=enabled

|- ::: sdd : active ready running

`- ::: sdi : active ready running

3600140522ec3f9612b64b45aa3e72d9c dm- SUSE,RBD

size=.0G features='2 queue_if_no_path retain_attached_hw_handler' hwhandler='1 alua' wp=rw

|-+- policy='service-time 0' prio= status=active

| `- ::: sdf : active ready running

`-+- policy='service-time 0' prio= status=enabled

|- ::: sdj : active ready running

`- ::: sdn : active ready running

(5) Edit the multipath configuration file

# vim /etc/multipath.conf

defaults {

user_friendly_names yes

}

devices {

device {

vendor "(LIO-ORG|SUSE)"

product "RBD"

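path_grouping_policy "multibus" # All valid paths are placed in one priority group.

path_checker "tur" # Issue a TEST UNIT READY command to the device.

features ""

hardware_handler "1 alua" # Module that performs hardware-specific actions when switching path groups or handling I/O errors.

prio "alua"

failback "immediate"

rr_weight "uniform" # All paths have the same weight.

no_path_retry 12 # After a path failure, retry 12 times, 5 seconds apart.

rr_min_io # Number of I/O requests routed to a path before switching to the next path in the current path group.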

}

}

# systemctl stop multipathd.service

# systemctl start multipathd.service
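A brace that is left open or closed twice in /etc/multipath.conf is an easy mistake to make when editing nested `devices { device { ... } }` sections. The sketch below checks only that braces are balanced; it is not a full syntax check, and `braces_balanced` is a hypothetical helper.

```shell
#!/bin/sh
# Sketch: check that braces in a multipath.conf-style file are balanced.
# Text after "#" is treated as a comment and ignored.
# braces_balanced is a hypothetical helper; it does not validate keywords.
braces_balanced() {
    awk '
        { sub(/#.*/, "") }                    # drop trailing comments
        {
            n = length($0)
            for (i = 1; i <= n; i++) {
                c = substr($0, i, 1)
                if (c == "{") depth++
                else if (c == "}") depth--
                if (depth < 0) bad = 1        # a close brace without an open one
            }
        }
        END { exit ((bad || depth != 0) ? 1 : 0) }
    ' "$1"
}

# Example call:
# braces_balanced /etc/multipath.conf && echo "braces balanced"
```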

(6) Check the multipath status

# multipath -ll

mpathd (3600140522ec3f9612b64b45aa3e72d9c) dm- SUSE,RBD

size=.0G features='2 queue_if_no_path retain_attached_hw_handler' hwhandler='1 alua' wp=rw

`-+- policy='service-time 0' prio= status=active

|- ::: sdf : active ready running <=== all paths are now active

|- ::: sdj : active ready running

`- ::: sdn : active ready running

mpathc (360014055d00387c82104d338e81589cb) dm- SUSE,RBD

size=.0G features='2 queue_if_no_path retain_attached_hw_handler' hwhandler='1 alua' wp=rw

`-+- policy='service-time 0' prio= status=active

|- ::: sdd : active ready running

|- ::: sdi : active ready running

`- ::: sdl : active ready running

mpathb (36001405863b0b3975c54c5f8d1ce0e01) dm- SUSE,RBD

size=.0G features='2 queue_if_no_path retain_attached_hw_handler' hwhandler='1 alua' wp=rw

`-+- policy='service-time 0' prio= status=active

|- ::: sde : active ready running

|- ::: sdh : active ready running

`- ::: sdm : active ready running

mpatha (3600140529260bf41c294075beede0c21) dm- SUSE,RBD

size=.0G features='2 queue_if_no_path retain_attached_hw_handler' hwhandler='1 alua' wp=rw

`-+- policy='service-time 0' prio= status=active

|- ::: sdc : active ready running

|- ::: sdg : active ready running

`- ::: sdk : active ready running
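With three gateways, each multipath map is expected to show three paths. Counting them by eye gets tedious as the number of LUNs grows, so the listing can be summarized with a short filter. This is a sketch that assumes the usual `multipath -ll` layout, where a map header contains `dm-<n>` and each path line contains a `host:channel:target:lun` tuple; `count_paths` is a hypothetical helper.

```shell
#!/bin/sh
# Sketch: print "<map name> <number of paths>" for each map in `multipath -ll` output.
# Assumes map header lines contain " dm-<n> " and path lines contain an
# H:C:T:L tuple such as 3:0:0:1. count_paths is a hypothetical helper.
count_paths() {
    awk '
        / dm-[0-9]+ / { map = $1; order[++n] = map; paths[map] = 0; next }
        map != "" && /[0-9]+:[0-9]+:[0-9]+:[0-9]+/ { paths[map]++ }
        END { for (i = 1; i <= n; i++) print order[i], paths[order[i]] }
    '
}

# Example call:
# multipath -ll | count_paths
```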

(7) Show the current device mapper information

# dmsetup ls --tree

mpathd (:)

├─ (:)

├─ (:)

└─ (:)

mpathc (:)

├─ (:)

├─ (:)

└─ (:)

mpathb (:)

├─ (:)

├─ (:)

└─ (:)

mpatha (:)

├─ (:)

├─ (:)

└─ (:)

vg00-lvswap (:)

└─ (:)

vg00-lvroot (:)

├─ (:)

└─ (:)

(8) View the targets with the yast iscsi-client tool on the client

Other common iSCSI operations (client)

(1) List all targets

# iscsiadm -m node

(2) Log in to all targets

# iscsiadm -m node -L all

(3) Log in to a specific target

# iscsiadm -m node -T iqn.... -p 172.29.88.62 --login

(4) Use the following command to view the configuration of a target

# iscsiadm -m node -o show -T iqn.-.com.synology:rackstation.exservice-bak

(5) Check the current iSCSI session status

# iscsiadm -m session

iscsiadm: No active sessions.

(No iSCSI target is currently connected.)

(6) Log out of all targets

# iscsiadm -m node -U all

(7) Log out of a specific target

# iscsiadm -m node -T iqn... -p 172.29.88.62 --logout

(8) Delete all node records

# iscsiadm -m node --op delete

(9) Delete a specific node record (stored under /var/lib/iscsi/nodes; log out of the session first)

# iscsiadm -m node -o delete -T iqn.-.cn.nayun:test-

(10) Delete a discovery record (stored under /var/lib/iscsi/send_targets)

# iscsiadm --mode discovery -o delete -p 172.29.88.62:
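Since a node record should only be deleted after its session has been logged out, the two commands are best issued together and in that order. The sketch below only prints them so they can be reviewed first; `forget_target_cmds` is a hypothetical helper, and the IQN and portal in the example call are the ones used earlier in this article.

```shell
#!/bin/sh
# Sketch: print the commands that remove one target record cleanly,
# logging out of the session before deleting the node record.
# forget_target_cmds is a hypothetical helper; it only prints.
# Arguments: target IQN, portal address.
forget_target_cmds() {
    target="$1"
    portal="$2"
    printf 'iscsiadm -m node -T %s -p %s --logout\n' "$target" "$portal"
    printf 'iscsiadm -m node -o delete -T %s -p %s\n' "$target" "$portal"
}

forget_target_cmds "iqn.2019-10.com.suse-iscsi.iscsi01.x86:iscsi-gateway01" 172.200.50.40
```

After checking the printed commands, they can be executed by piping the output to `sh`.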
