Fixing the ReactorNotRestartable error when calling Scrapy from Django or from other threads

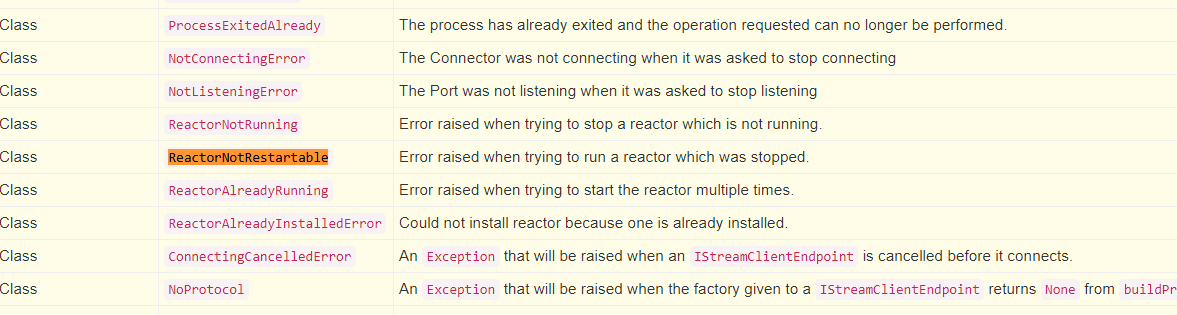

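The screenshot above is the official description of the ReactorNotRestartable error (taken from https://twistedmatrix.com/documents/16.1.0/api/twisted.internet.error.html). We will analyze the problem starting from the Scrapy source code.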

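The key is to understand the three classes in the crawler.py module of the Scrapy source, which form the core of Scrapy's startup code. Scrapy is an asynchronous crawling framework built on the event loop of Twisted (a Python network programming framework), so some familiarity with Twisted is needed. That is not covered in detail here.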

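- Crawler: starts Scrapy's crawling engine and begins the crawl

- CrawlerRunner: a helper class (not a subclass of Crawler) that creates Crawler instances, passes the settings to them, and starts and tracks them

- CrawlerProcess: a subclass of CrawlerRunner that starts and controls the Twisted reactor. The reactor is a process-wide global object: there is exactly one, it can be started only once per process, and it is normally run in the main thread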

    import six
    import signal
    import logging
    import warnings

    import sys
    from twisted.internet import reactor, defer
    from zope.interface.verify import verifyClass, DoesNotImplement

    from scrapy import Spider
    from scrapy.core.engine import ExecutionEngine
    from scrapy.resolver import CachingThreadedResolver
    from scrapy.interfaces import ISpiderLoader
    from scrapy.extension import ExtensionManager
    from scrapy.settings import overridden_settings, Settings
    from scrapy.signalmanager import SignalManager
    from scrapy.exceptions import ScrapyDeprecationWarning
    from scrapy.utils.ossignal import install_shutdown_handlers, signal_names
    from scrapy.utils.misc import load_object
    from scrapy.utils.log import (
        LogCounterHandler, configure_logging, log_scrapy_info,
        get_scrapy_root_handler, install_scrapy_root_handler)
    from scrapy import signals

    logger = logging.getLogger(__name__)


    class Crawler(object):

        def __init__(self, spidercls, settings=None):
            if isinstance(spidercls, Spider):
                raise ValueError(
                    'The spidercls argument must be a class, not an object')

            if isinstance(settings, dict) or settings is None:
                settings = Settings(settings)

            self.spidercls = spidercls
            self.settings = settings.copy()
            self.spidercls.update_settings(self.settings)

            self.signals = SignalManager(self)
            self.stats = load_object(self.settings['STATS_CLASS'])(self)

            handler = LogCounterHandler(self, level=self.settings.get('LOG_LEVEL'))
            logging.root.addHandler(handler)

            d = dict(overridden_settings(self.settings))
            logger.info("Overridden settings: %(settings)r", {'settings': d})

            if get_scrapy_root_handler() is not None:
                # scrapy root handler already installed: update it with new settings
                install_scrapy_root_handler(self.settings)
            # lambda is assigned to Crawler attribute because this way it is not
            # garbage collected after leaving __init__ scope
            self.__remove_handler = lambda: logging.root.removeHandler(handler)
            self.signals.connect(self.__remove_handler, signals.engine_stopped)

            lf_cls = load_object(self.settings['LOG_FORMATTER'])
            self.logformatter = lf_cls.from_crawler(self)
            self.extensions = ExtensionManager.from_crawler(self)

            self.settings.freeze()
            self.crawling = False
            self.spider = None
            self.engine = None

        @property
        def spiders(self):
            if not hasattr(self, '_spiders'):
                warnings.warn("Crawler.spiders is deprecated, use "
                              "CrawlerRunner.spider_loader or instantiate "
                              "scrapy.spiderloader.SpiderLoader with your "
                              "settings.",
                              category=ScrapyDeprecationWarning, stacklevel=2)
                self._spiders = _get_spider_loader(self.settings.frozencopy())
            return self._spiders

        @defer.inlineCallbacks
        def crawl(self, *args, **kwargs):
            assert not self.crawling, "Crawling already taking place"
            self.crawling = True

            try:
                self.spider = self._create_spider(*args, **kwargs)
                self.engine = self._create_engine()
                start_requests = iter(self.spider.start_requests())
                yield self.engine.open_spider(self.spider, start_requests)
                yield defer.maybeDeferred(self.engine.start)
            except Exception:
                # In Python 2 reraising an exception after yield discards
                # the original traceback (see https://bugs.python.org/issue7563),
                # so sys.exc_info() workaround is used.
                # This workaround also works in Python 3, but it is not needed,
                # and it is slower, so in Python 3 we use native `raise`.
                if six.PY2:
                    exc_info = sys.exc_info()

                self.crawling = False
                if self.engine is not None:
                    yield self.engine.close()

                if six.PY2:
                    six.reraise(*exc_info)
                raise

        def _create_spider(self, *args, **kwargs):
            return self.spidercls.from_crawler(self, *args, **kwargs)

        def _create_engine(self):
            return ExecutionEngine(self, lambda _: self.stop())

        @defer.inlineCallbacks
        def stop(self):
            """Starts a graceful stop of the crawler and returns a deferred that is
            fired when the crawler is stopped."""
            if self.crawling:
                self.crawling = False
                yield defer.maybeDeferred(self.engine.stop)


    class CrawlerRunner(object):
        """
        This is a convenient helper class that keeps track of, manages and runs
        crawlers inside an already setup Twisted `reactor`_.

        The CrawlerRunner object must be instantiated with a
        :class:`~scrapy.settings.Settings` object.

        This class shouldn't be needed (since Scrapy is responsible of using it
        accordingly) unless writing scripts that manually handle the crawling
        process. See :ref:`run-from-script` for an example.
        """

        crawlers = property(
            lambda self: self._crawlers,
            doc="Set of :class:`crawlers <scrapy.crawler.Crawler>` started by "
                ":meth:`crawl` and managed by this class."
        )

        def __init__(self, settings=None):
            if isinstance(settings, dict) or settings is None:
                settings = Settings(settings)
            self.settings = settings
            self.spider_loader = _get_spider_loader(settings)
            self._crawlers = set()
            self._active = set()
            self.bootstrap_failed = False

        @property
        def spiders(self):
            warnings.warn("CrawlerRunner.spiders attribute is renamed to "
                          "CrawlerRunner.spider_loader.",
                          category=ScrapyDeprecationWarning, stacklevel=2)
            return self.spider_loader

        def crawl(self, crawler_or_spidercls, *args, **kwargs):
            """
            Run a crawler with the provided arguments.

            It will call the given Crawler's :meth:`~Crawler.crawl` method, while
            keeping track of it so it can be stopped later.

            If ``crawler_or_spidercls`` isn't a :class:`~scrapy.crawler.Crawler`
            instance, this method will try to create one using this parameter as
            the spider class given to it.

            Returns a deferred that is fired when the crawling is finished.

            :param crawler_or_spidercls: already created crawler, or a spider class
                or spider's name inside the project to create it
            :type crawler_or_spidercls: :class:`~scrapy.crawler.Crawler` instance,
                :class:`~scrapy.spiders.Spider` subclass or string

            :param list args: arguments to initialize the spider

            :param dict kwargs: keyword arguments to initialize the spider
            """
            if isinstance(crawler_or_spidercls, Spider):
                raise ValueError(
                    'The crawler_or_spidercls argument cannot be a spider object, '
                    'it must be a spider class (or a Crawler object)')
            crawler = self.create_crawler(crawler_or_spidercls)
            return self._crawl(crawler, *args, **kwargs)

        def _crawl(self, crawler, *args, **kwargs):
            self.crawlers.add(crawler)
            d = crawler.crawl(*args, **kwargs)
            self._active.add(d)

            def _done(result):
                self.crawlers.discard(crawler)
                self._active.discard(d)
                self.bootstrap_failed |= not getattr(crawler, 'spider', None)
                return result

            return d.addBoth(_done)

        def create_crawler(self, crawler_or_spidercls):
            """
            Return a :class:`~scrapy.crawler.Crawler` object.

            * If ``crawler_or_spidercls`` is a Crawler, it is returned as-is.
            * If ``crawler_or_spidercls`` is a Spider subclass, a new Crawler
              is constructed for it.
            * If ``crawler_or_spidercls`` is a string, this function finds
              a spider with this name in a Scrapy project (using spider loader),
              then creates a Crawler instance for it.
            """
            if isinstance(crawler_or_spidercls, Spider):
                raise ValueError(
                    'The crawler_or_spidercls argument cannot be a spider object, '
                    'it must be a spider class (or a Crawler object)')
            if isinstance(crawler_or_spidercls, Crawler):
                return crawler_or_spidercls
            return self._create_crawler(crawler_or_spidercls)

        def _create_crawler(self, spidercls):
            if isinstance(spidercls, six.string_types):
                spidercls = self.spider_loader.load(spidercls)
            return Crawler(spidercls, self.settings)

        def stop(self):
            """
            Stops simultaneously all the crawling jobs taking place.

            Returns a deferred that is fired when they all have ended.
            """
            return defer.DeferredList([c.stop() for c in list(self.crawlers)])

        @defer.inlineCallbacks
        def join(self):
            """
            join()

            Returns a deferred that is fired when all managed :attr:`crawlers` have
            completed their executions.
            """
            while self._active:
                yield defer.DeferredList(self._active)


    class CrawlerProcess(CrawlerRunner):
        """
        A class to run multiple scrapy crawlers in a process simultaneously.

        This class extends :class:`~scrapy.crawler.CrawlerRunner` by adding support
        for starting a Twisted `reactor`_ and handling shutdown signals, like the
        keyboard interrupt command Ctrl-C. It also configures top-level logging.

        This utility should be a better fit than
        :class:`~scrapy.crawler.CrawlerRunner` if you aren't running another
        Twisted `reactor`_ within your application.

        The CrawlerProcess object must be instantiated with a
        :class:`~scrapy.settings.Settings` object.

        :param install_root_handler: whether to install root logging handler
            (default: True)

        This class shouldn't be needed (since Scrapy is responsible of using it
        accordingly) unless writing scripts that manually handle the crawling
        process. See :ref:`run-from-script` for an example.
        """

        def __init__(self, settings=None, install_root_handler=True):
            super(CrawlerProcess, self).__init__(settings)
            install_shutdown_handlers(self._signal_shutdown)
            configure_logging(self.settings, install_root_handler)
            log_scrapy_info(self.settings)

        def _signal_shutdown(self, signum, _):
            install_shutdown_handlers(self._signal_kill)
            signame = signal_names[signum]
            logger.info("Received %(signame)s, shutting down gracefully. Send again to force ",
                        {'signame': signame})
            reactor.callFromThread(self._graceful_stop_reactor)

        def _signal_kill(self, signum, _):
            install_shutdown_handlers(signal.SIG_IGN)
            signame = signal_names[signum]
            logger.info('Received %(signame)s twice, forcing unclean shutdown',
                        {'signame': signame})
            reactor.callFromThread(self._stop_reactor)

        def start(self, stop_after_crawl=True):
            """
            This method starts a Twisted `reactor`_, adjusts its pool size to
            :setting:`REACTOR_THREADPOOL_MAXSIZE`, and installs a DNS cache based
            on :setting:`DNSCACHE_ENABLED` and :setting:`DNSCACHE_SIZE`.

            If ``stop_after_crawl`` is True, the reactor will be stopped after all
            crawlers have finished, using :meth:`join`.

            :param boolean stop_after_crawl: stop or not the reactor when all
                crawlers have finished
            """
            if stop_after_crawl:
                d = self.join()
                # Don't start the reactor if the deferreds are already fired
                if d.called:
                    return
                d.addBoth(self._stop_reactor)

            reactor.installResolver(self._get_dns_resolver())
            tp = reactor.getThreadPool()
            tp.adjustPoolsize(maxthreads=self.settings.getint('REACTOR_THREADPOOL_MAXSIZE'))
            reactor.addSystemEventTrigger('before', 'shutdown', self.stop)
            reactor.run(installSignalHandlers=False)  # blocking call

        def _get_dns_resolver(self):
            if self.settings.getbool('DNSCACHE_ENABLED'):
                cache_size = self.settings.getint('DNSCACHE_SIZE')
            else:
                cache_size = 0
            return CachingThreadedResolver(
                reactor=reactor,
                cache_size=cache_size,
                timeout=self.settings.getfloat('DNS_TIMEOUT')
            )

        def _graceful_stop_reactor(self):
            d = self.stop()
            d.addBoth(self._stop_reactor)
            return d

        def _stop_reactor(self, _=None):
            try:
                reactor.stop()
            except RuntimeError:  # raised if already stopped or in shutdown stage
                pass


    def _get_spider_loader(settings):
        """ Get SpiderLoader instance from settings """
        cls_path = settings.get('SPIDER_LOADER_CLASS')
        loader_cls = load_object(cls_path)
        try:
            verifyClass(ISpiderLoader, loader_cls)
        except DoesNotImplement:
            warnings.warn(
                'SPIDER_LOADER_CLASS (previously named SPIDER_MANAGER_CLASS) does '
                'not fully implement scrapy.interfaces.ISpiderLoader interface. '
                'Please add all missing methods to avoid unexpected runtime errors.',
                category=ScrapyDeprecationWarning, stacklevel=2
            )
        return loader_cls.from_settings(settings.frozencopy())
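To make the reactor lifecycle concrete, here is a minimal toy model, not Twisted's actual implementation. `ToyReactor`, `run_like_crawler_process` and `simulate_two_tasks` are hypothetical names invented for this sketch, and the two exception classes are stand-ins for the ones in `twisted.internet.error`. Unlike the real `reactor.run()`, the toy `run()` does not block. The sketch only illustrates the rule that a reactor may be started once per process, so a second start/stop cycle fails.

```python
class ReactorNotRestartable(Exception):
    """Stand-in for twisted.internet.error.ReactorNotRestartable."""


class ReactorNotRunning(Exception):
    """Stand-in for twisted.internet.error.ReactorNotRunning."""


class ToyReactor:
    """Hypothetical model of the reactor lifecycle (assumption, not Twisted code)."""

    def __init__(self):
        self.running = False
        self.was_started = False

    def run(self):
        # A reactor that has been started once can never be started again.
        if self.was_started:
            raise ReactorNotRestartable()
        self.was_started = True
        self.running = True

    def stop(self):
        # Stopping a reactor that is not running is also an error.
        if not self.running:
            raise ReactorNotRunning()
        self.running = False


def run_like_crawler_process(reactor):
    """Mimic the start/stop cycle that CrawlerProcess.start() performs."""
    reactor.run()
    reactor.stop()


def simulate_two_tasks(reactor):
    """Run the same 'task' twice against one shared reactor, as a worker would."""
    results = []
    for _ in range(2):
        try:
            run_like_crawler_process(reactor)
            results.append("ok")
        except ReactorNotRestartable:
            results.append("ReactorNotRestartable")
    return results


if __name__ == "__main__":
    print(simulate_two_tasks(ToyReactor()))
```

The first task succeeds and the second one fails, which matches the behaviour seen when a long-lived worker process runs a crawl task more than once.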

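I call Scrapy from a djcelery project, inside a worker thread. Using CrawlerProcess directly fails there, because CrawlerProcess starts and stops the reactor itself and that happens in a non-main thread. Repeated runs therefore raise a series of errors such as ReactorNotRestartable and ReactorNotRunning. The only option is to start Scrapy through CrawlerRunner. However, if you run the example from the official documentation (below) as-is: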

    from twisted.internet import reactor
    import scrapy
    from scrapy.crawler import CrawlerRunner
    from scrapy.utils.log import configure_logging

    class MySpider(scrapy.Spider):
        # Your spider definition
        ...

    configure_logging({'LOG_FORMAT': '%(levelname)s: %(message)s'})
    runner = CrawlerRunner()

    d = runner.crawl(MySpider)
    d.addBoth(lambda _: reactor.stop())  # Twisted callback: fires when the crawl ends and stops the reactor
    reactor.run()  # the script will block here until the crawling is finished

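This works fine when run inside a Scrapy project (in the main thread), but in a djcelery worker thread it raises ReactorNotRestartable, meaning the reactor has already been stopped and cannot be started again. I do not know whether Twisted will address this in the future. For now, the following two lines have to be removed: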

    d.addBoth(lambda _: reactor.stop())
    reactor.run()  # the script will block here until the crawling is finished

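But the crawl cannot proceed without a running reactor, and the reactor cannot be started more than once. This is where another module, Crochet, helps. Its official description, quoted below, is clear: it runs the reactor and the event loop for us (mainly inside the setup() function), and we never have to start or stop the reactor ourselves, which avoids the problems above.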

Use Twisted Anywhere!

Crochet is an MIT-licensed library that makes it easier for blocking and threaded applications like Flask or Django to use the Twisted networking framework. Here’s an example of a program using Crochet. Notice that you get a completely blocking interface to Twisted and do not need to run the Twisted reactor, the event loop, yourself.

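Putting this together, the Twisted reactor can be used from another thread as follows. The reactor is started automatically inside setup() and stays in use for the lifetime of the process: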

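    from crochet import setup
    setup()  # starts the reactor in a background thread; it is never stopped or restarted

    from scrapy.utils.project import get_project_settings
    from scrapy.crawler import CrawlerRunner

    runner = CrawlerRunner(get_project_settings())
    runner.crawl(SpiderName)  # SpiderName is a placeholder for your spider class
    d = runner.join()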
