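MapReduce (4): Typical Programming Scenarios (2)

I. MapJoin: Using DistributedCache

1. Introduction to MapReduce joins

Joining two datasets on a common key is very common in real business scenarios. If both datasets are small, the join can be done directly in memory. With large volumes of data, however, an in-memory join is likely to run out of memory (OOM). MapReduce can be used to join datasets of that size.

MapReduce joins fall into two main categories: MapJoin (map-side join) and ReduceJoin (reduce-side join).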

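First, ReduceJoin:

(1) Map phase: the two datasets, data1 and data2, are each read by map tasks and parsed into key-value pairs, with the join field as the key and the queried fields as the value. Each record is also tagged with its source, data1 or data2.

(2) Reduce phase: each reduce task receives the records from data1 and data2 that share the same key and joins them by taking their cross product on the reduce side. The most direct consequence is heavy memory consumption, which can lead to OOM.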
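The two phases above can be simulated in plain Java to see where the memory pressure comes from. This is a minimal sketch, not Hadoop code: the class `ReduceJoinSketch`, the `Tagged` holder and the sample keys are all hypothetical, and a `TreeMap` stands in for the shuffle.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

/** Hypothetical stand-alone simulation of a reduce-side join; not Hadoop code. */
final class ReduceJoinSketch {

    /** One map output value: the source tag plus the payload. */
    static final class Tagged {
        final String source;
        final String payload;

        Tagged(String source, String payload) {
            this.source = source;
            this.payload = payload;
        }
    }

    /** Map phase plus shuffle: tag each (key, payload) row with its source and group by key. */
    static void mapAndShuffle(String source, List<String[]> rows, Map<String, List<Tagged>> groups) {
        for (String[] row : rows) {
            groups.computeIfAbsent(row[0], k -> new ArrayList<>()).add(new Tagged(source, row[1]));
        }
    }

    /** Reduce phase for one key: buffer the values by source, then emit the cross product. */
    static List<String> reduce(String key, List<Tagged> values) {
        List<String> left = new ArrayList<>();
        List<String> right = new ArrayList<>();
        for (Tagged t : values) {
            (t.source.equals("data1") ? left : right).add(t.payload);
        }
        List<String> out = new ArrayList<>();
        for (String l : left) {
            for (String r : right) {
                out.add(key + "\t" + l + "\t" + r);
            }
        }
        return out;
    }

    public static void main(String[] args) {
        Map<String, List<Tagged>> groups = new TreeMap<>();
        mapAndShuffle("data1", Arrays.asList(new String[] {"k1", "a"}, new String[] {"k2", "b"}), groups);
        mapAndShuffle("data2", Arrays.asList(new String[] {"k1", "x"}, new String[] {"k1", "y"}), groups);
        groups.forEach((key, values) -> reduce(key, values).forEach(System.out::println));
    }
}
```

In this sketch a key with m records from data1 and n from data2 yields m × n output rows, and all of them must be buffered for that key first, which is where a heavily repeated key can exhaust memory.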

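Next, MapJoin:

MapJoin suits joins in which one of the datasets is small. The small dataset is loaded into memory in full and indexed by the join key. The large dataset then serves as the MapTask input, and every call to map() looks up its record in the in-memory index to perform the join. The joined result is then emitted by key. This approach uses Hadoop's DistributedCache to distribute the small dataset to every compute node; each node that runs a map task must load the data into memory and index it by the join key.

(The map tasks read the large table. Before reading it, the small table is loaded into memory in the setup() method.)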

2. Requirements

There are two datasets, movies.dat and ratings.dat, with records in the following formats:

movies.dat:

1::Toy Story (1995)::Animation|Children's|Comedy

2::Jumanji (1995)::Adventure|Children's|Fantasy

3::Grumpier Old Men (1995)::Comedy|Romance

Fields: movieid, moviename, movietype

ratings.dat:

1::1193::5::978300760

1::661::3::978302109

1::914::3::978301968

Fields: userid, movieid, rate, timestamp

The task is to join the two tables so that each output record contains the six fields above:

movieid, userid, rate, moviename, movietype, timestamp

3. Implementation

Step 1: Wrap each joined record in a MovieRate class, which makes sorting and serialization straightforward

package com.ghgj.mr.mymapjoin;

import java.io.DataInput;
import java.io.DataOutput;
import java.io.IOException;

import org.apache.hadoop.io.WritableComparable;

public class MovieRate implements WritableComparable<MovieRate> {

    private String movieid;
    private String userid;
    private int rate;
    private String movieName;
    private String movieType;
    private long ts;

    public String getMovieid() {
        return movieid;
    }

    public void setMovieid(String movieid) {
        this.movieid = movieid;
    }

    public String getUserid() {
        return userid;
    }

    public void setUserid(String userid) {
        this.userid = userid;
    }

    public int getRate() {
        return rate;
    }

    public void setRate(int rate) {
        this.rate = rate;
    }

    public String getMovieName() {
        return movieName;
    }

    public void setMovieName(String movieName) {
        this.movieName = movieName;
    }

    public String getMovieType() {
        return movieType;
    }

    public void setMovieType(String movieType) {
        this.movieType = movieType;
    }

    public long getTs() {
        return ts;
    }

    public void setTs(long ts) {
        this.ts = ts;
    }

    // Hadoop needs a no-argument constructor to create instances during deserialization.
    public MovieRate() {
    }

    public MovieRate(String movieid, String userid, int rate, String movieName,
            String movieType, long ts) {
        this.movieid = movieid;
        this.userid = userid;
        this.rate = rate;
        this.movieName = movieName;
        this.movieType = movieType;
        this.ts = ts;
    }

    // One output line: the six required fields, tab-separated.
    @Override
    public String toString() {
        return movieid + "\t" + userid + "\t" + rate + "\t" + movieName
                + "\t" + movieType + "\t" + ts;
    }

    // Serialization: the field order here must match readFields() exactly.
    @Override
    public void write(DataOutput out) throws IOException {
        out.writeUTF(movieid);
        out.writeUTF(userid);
        out.writeInt(rate);
        out.writeUTF(movieName);
        out.writeUTF(movieType);
        out.writeLong(ts);
    }

    // Deserialization: read the fields in the order they were written.
    @Override
    public void readFields(DataInput in) throws IOException {
        this.movieid = in.readUTF();
        this.userid = in.readUTF();
        this.rate = in.readInt();
        this.movieName = in.readUTF();
        this.movieType = in.readUTF();
        this.ts = in.readLong();
    }

    // Descending order by movieid, then by userid (both compared as strings).
    @Override
    public int compareTo(MovieRate mr) {
        int it = mr.getMovieid().compareTo(this.movieid);
        if (it == 0) {
            return mr.getUserid().compareTo(this.userid);
        } else {
            return it;
        }
    }
}
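The write()/readFields() contract can be checked without a cluster, because `DataOutput` and `DataInput` are plain `java.io` interfaces. The following is a minimal sketch under stated assumptions: `RoundTripSketch` is a hypothetical two-field stand-in for MovieRate, not the class itself, and the in-memory byte buffer only imitates what the framework does when it moves a record between tasks.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInput;
import java.io.DataInputStream;
import java.io.DataOutput;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;

/** Hypothetical two-field stand-in for MovieRate; plain java.io, no Hadoop types. */
final class RoundTripSketch {
    String movieid;
    int rate;

    void write(DataOutput out) throws IOException {
        out.writeUTF(movieid);
        out.writeInt(rate);
    }

    void readFields(DataInput in) throws IOException {
        // Fields are read back in exactly the order they were written.
        movieid = in.readUTF();
        rate = in.readInt();
    }

    /** Serializes the record to bytes and deserializes it into a fresh instance. */
    static RoundTripSketch roundTrip(RoundTripSketch original) {
        try {
            ByteArrayOutputStream buffer = new ByteArrayOutputStream();
            original.write(new DataOutputStream(buffer));
            RoundTripSketch copy = new RoundTripSketch();
            copy.readFields(new DataInputStream(new ByteArrayInputStream(buffer.toByteArray())));
            return copy;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        RoundTripSketch r = new RoundTripSketch();
        r.movieid = "1193";
        r.rate = 5;
        RoundTripSketch copy = roundTrip(r);
        System.out.println(copy.movieid + "\t" + copy.rate);
    }
}
```

One practical consequence: `writeUTF` throws a `NullPointerException` when given null, so every String field has to be set before the record is written.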

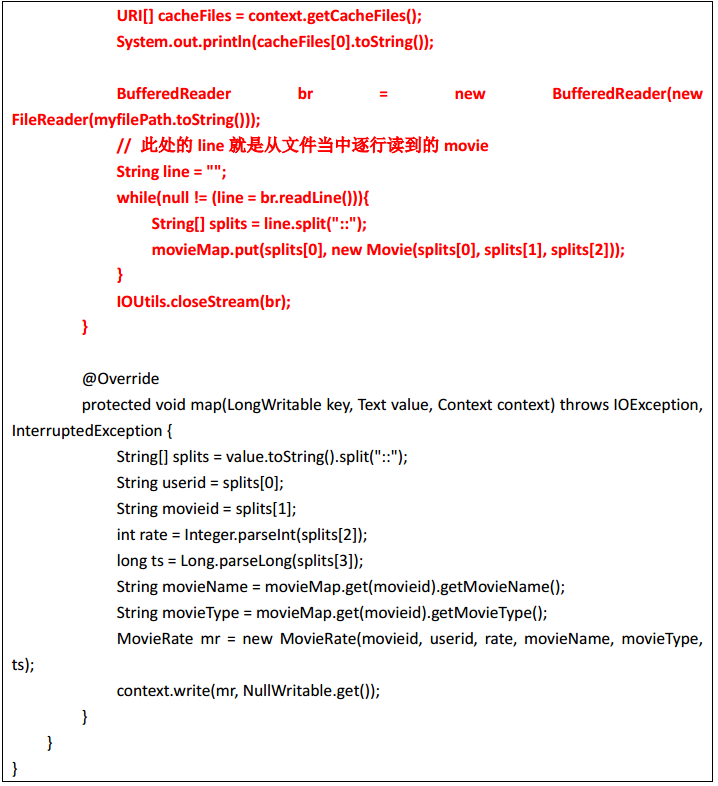

Step 2: Write the MapReduce program

package com.ghgj.mr.mymapjoin;

import java.io.BufferedReader;
import java.io.FileReader;
import java.io.IOException;
import java.net.URI;
import java.util.HashMap;
import java.util.Map;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IOUtils;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.Reducer;
import org.apache.hadoop.mapreduce.filecache.DistributedCache;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

public class MovieRatingMapJoinMR {

    public static void main(String[] args) throws Exception {
        Configuration conf = new Configuration();
        conf.set("fs.defaultFS", "hdfs://hadoop02:9000");
        System.setProperty("HADOOP_USER_NAME", "hadoop");
        Job job = Job.getInstance(conf);

        // job.setJarByClass(MovieRatingMapJoinMR.class);
        job.setJar("/home/hadoop/mrmr.jar");

        job.setMapperClass(MovieRatingMapJoinMRMapper.class);
        job.setMapOutputKeyClass(MovieRate.class);
        job.setMapOutputValueClass(NullWritable.class);

        // Map-only job: the join is completed in the mappers, so no reducer is configured.
        // job.setReducerClass(MovieRatingMapJoinMReducer.class);
        // job.setOutputKeyClass(MovieRate.class);
        // job.setOutputValueClass(NullWritable.class);
        job.setNumReduceTasks(0);

        // args[0]: small table (cached), args[1]: large table (map input), args[2]: output directory
        String minInput = args[0];
        String maxInput = args[1];
        String output = args[2];

        FileInputFormat.setInputPaths(job, new Path(maxInput));

        // Remove a previous output directory so the job can be rerun.
        Path outputPath = new Path(output);
        FileSystem fs = FileSystem.get(conf);
        if (fs.exists(outputPath)) {
            fs.delete(outputPath, true);
        }
        FileOutputFormat.setOutputPath(job, outputPath);

        // Ship the small table to every map task through the distributed cache.
        URI uri = new Path(minInput).toUri();
        job.addCacheFile(uri);

        boolean status = job.waitForCompletion(true);
        System.exit(status ? 0 : 1);
    }

    // The MovieRatingMapJoinMRMapper class referenced above is not included in this text.
}
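The driver refers to a mapper, MovieRatingMapJoinMRMapper, whose source is not shown. The sketch below is not that class. It is a plain-Java illustration, under stated assumptions, of the two things such a mapper has to do: index the small table once (the job of setup()) and join each ratings line against that index (the job of map()). The class name `MapJoinSketch`, its method names and the sample rating line are hypothetical. In a real Mapper the reader would be opened in setup() on the cached copy of the small file, for example located through the URIs returned by context.getCacheFiles().

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.StringReader;
import java.io.UncheckedIOException;
import java.util.HashMap;
import java.util.Map;

/** Hypothetical sketch of map-side join logic in plain Java; not the original mapper. */
final class MapJoinSketch {

    /** What setup() would do: index the small table (movies.dat) by movieid. */
    static Map<String, String[]> loadMovies(BufferedReader reader) {
        Map<String, String[]> movies = new HashMap<>();
        try {
            String line;
            while ((line = reader.readLine()) != null) {
                String[] f = line.split("::"); // movieid, moviename, movietype
                if (f.length == 3) {
                    movies.put(f[0], new String[] {f[1], f[2]});
                }
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return movies;
    }

    /** What map() would do for one ratings.dat line; returns null when nothing matches. */
    static String join(String ratingLine, Map<String, String[]> movies) {
        String[] f = ratingLine.split("::"); // userid, movieid, rate, timestamp
        if (f.length != 4) {
            return null; // skip malformed lines
        }
        String[] movie = movies.get(f[1]);
        if (movie == null) {
            return null; // inner join: ratings without a matching movie are dropped
        }
        // Same field order as the required output: movieid, userid, rate, moviename, movietype, timestamp
        return f[1] + "\t" + f[0] + "\t" + f[2] + "\t" + movie[0] + "\t" + movie[1] + "\t" + f[3];
    }

    public static void main(String[] args) {
        String movies = "1::Toy Story (1995)::Animation|Children's|Comedy\n"
                + "2::Jumanji (1995)::Adventure|Children's|Fantasy\n";
        Map<String, String[]> index = loadMovies(new BufferedReader(new StringReader(movies)));
        // Hypothetical rating line (user 7 rates movie 2), made up for this example.
        System.out.println(join("7::2::4::978300760", index));
    }
}
```

Because the lookup is a hash-map access per input line, no shuffle or reducer is involved, which is consistent with the driver setting the number of reduce tasks to 0.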

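II. Custom OutputFormat: Writing Data to Separate Outputs by Category

Implementation: define a custom OutputFormat, replace its RecordWriter, and override write(), the method that actually writes the output data.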

package com.ghgj.mr.score_outputformat;

import java.io.IOException;
import java.nio.charset.StandardCharsets;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.FSDataOutputStream;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IOUtils;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.RecordWriter;
import org.apache.hadoop.mapreduce.TaskAttemptContext;
import org.apache.hadoop.mapreduce.lib.output.TextOutputFormat;

public class MyScoreOutputFormat extends TextOutputFormat<Text, NullWritable> {

    @Override
    public RecordWriter<Text, NullWritable> getRecordWriter(TaskAttemptContext job)
            throws IOException, InterruptedException {
        Configuration configuration = job.getConfiguration();
        FileSystem fs = FileSystem.get(configuration);

        // Fixed output files, one per category. Every task that calls this method
        // recreates the same two files, so this only works when a single task writes output.
        Path p1 = new Path("/score1/outpu1");
        Path p2 = new Path("/score2/outpu2");
        if (fs.exists(p1)) {
            fs.delete(p1, true);
        }
        if (fs.exists(p2)) {
            fs.delete(p2, true);
        }

        FSDataOutputStream fsdout1 = fs.create(p1);
        FSDataOutputStream fsdout2 = fs.create(p2);
        return new MyRecordWriter(fsdout1, fsdout2);
    }

    static class MyRecordWriter extends RecordWriter<Text, NullWritable> {

        FSDataOutputStream dout1 = null;
        FSDataOutputStream dout2 = null;

        public MyRecordWriter(FSDataOutputStream dout1, FSDataOutputStream dout2) {
            super();
            this.dout1 = dout1;
            this.dout2 = dout2;
        }

        @Override
        public void write(Text key, NullWritable value) throws IOException,
                InterruptedException {
            // Keys look like "<tag>::<record>"; the limit of 2 keeps any "::" inside the record intact.
            String[] strs = key.toString().split("::", 2);
            // Encode as UTF-8 explicitly; writeBytes() would drop the high byte of each char.
            byte[] line = (strs[1] + "\n").getBytes(StandardCharsets.UTF_8);
            if (strs[0].equals("1")) {
                dout1.write(line);
            } else {
                dout2.write(line);
            }
        }

        @Override
        public void close(TaskAttemptContext context) throws IOException,
                InterruptedException {
            IOUtils.closeStream(dout2);
            IOUtils.closeStream(dout1);
        }
    }
}
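The routing rule inside write() is easy to exercise on its own. The following is a minimal sketch, assuming keys of the form `<tag>::<record>` as in the listing above; `RoutingWriterSketch` is a hypothetical class that swaps the two HDFS streams for in-memory buffers so it runs without Hadoop, and the sample records are made up.

```java
import java.io.ByteArrayOutputStream;
import java.nio.charset.StandardCharsets;

/** Hypothetical in-memory stand-in for a two-way RecordWriter; not Hadoop code. */
final class RoutingWriterSketch {
    final ByteArrayOutputStream out1 = new ByteArrayOutputStream();
    final ByteArrayOutputStream out2 = new ByteArrayOutputStream();

    /** Routes "<tag>::<record>" to out1 when the tag is "1", otherwise to out2. */
    void write(String key) {
        String[] parts = key.split("::", 2); // limit 2: the record may itself contain "::"
        byte[] line = (parts[1] + "\n").getBytes(StandardCharsets.UTF_8);
        ByteArrayOutputStream target = parts[0].equals("1") ? out1 : out2;
        target.write(line, 0, line.length);
    }

    /** Decodes everything written to one of the buffers so far. */
    static String text(ByteArrayOutputStream out) {
        return new String(out.toByteArray(), StandardCharsets.UTF_8);
    }

    public static void main(String[] args) {
        RoutingWriterSketch writer = new RoutingWriterSketch();
        writer.write("1::alice\t90");
        writer.write("2::bob\t60");
        System.out.print("out1: " + text(writer.out1));
        System.out.print("out2: " + text(writer.out2));
    }
}
```

The tag is stripped before writing, so the two outputs contain only the original records.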

package com.ghgj.mr.score_outputformat;

import java.io.IOException;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.conf.Configured;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.input.TextInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;
import org.apache.hadoop.util.Tool;
import org.apache.hadoop.util.ToolRunner;

public class ScoreOutputFormatMR extends Configured implements Tool {

    // This run() method plays the role of the driver.
    @Override
    public int run(String[] args) throws Exception {
        Configuration conf = new Configuration();
        conf.set("fs.defaultFS", "hdfs://hadoop02:9000");
        System.setProperty("HADOOP_USER_NAME", "hadoop");
        Job job = Job.getInstance(conf);

        job.setMapperClass(ScoreOutputFormatMRMapper.class);
        job.setMapOutputKeyClass(Text.class);
        job.setMapOutputValueClass(NullWritable.class);
        job.setNumReduceTasks(0);

        // TextInputFormat is the default input component.
        job.setInputFormatClass(TextInputFormat.class);

        // TextOutputFormat is the default output component; the custom format replaces it here.
        // job.setOutputFormatClass(TextOutputFormat.class);
        job.setOutputFormatClass(MyScoreOutputFormat.class);

        FileInputFormat.setInputPaths(job, new Path("/scorefmt"));
        Path output = new Path("/scorefmt/output");
        FileSystem fs = FileSystem.get(conf);
        if (fs.exists(output)) {
            fs.delete(output, true);
        }
        FileOutputFormat.setOutputPath(job, output);

        boolean status = job.waitForCompletion(true);
        return status ? 0 : 1;
    }

    public static void main(String[] args) throws Exception {
        // ToolRunner.run is static, so no ToolRunner instance is needed.
        int run = ToolRunner.run(new ScoreOutputFormatMR(), args);
        System.exit(run);
    }

    static class ScoreOutputFormatMRMapper extends Mapper<LongWritable, Text, Text, NullWritable> {

        @Override
        protected void map(LongWritable key, Text value, Context context)
                throws IOException, InterruptedException {
            // Tag each line "1::" when it has at least six tab-separated fields
            // beyond the first two, otherwise "2::". The RecordWriter routes on this tag.
            String[] split = value.toString().split("\t");
            if (split.length - 2 >= 6) {
                context.write(new Text("1::" + value.toString()), NullWritable.get());
            } else {
                context.write(new Text("2::" + value.toString()), NullWritable.get());
            }
        }
    }
}

III. Custom InputFormat: Merging Small Files

Step 1: Define a custom InputFormat

package com.ghgj.mr.format.input;

import java.io.IOException;

import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.InputSplit;
import org.apache.hadoop.mapreduce.JobContext;
import org.apache.hadoop.mapreduce.RecordReader;
import org.apache.hadoop.mapreduce.TaskAttemptContext;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

public class WholeFileInputFormat extends FileInputFormat<NullWritable, Text> {

    // Mark every small file as non-splittable so that one file yields exactly one key-value pair.
    @Override
    protected boolean isSplitable(JobContext context, Path file) {
        return false;
    }

    @Override
    public RecordReader<NullWritable, Text> createRecordReader(
            InputSplit split, TaskAttemptContext context) throws IOException,
            InterruptedException {
        WholeFileRecordReader reader = new WholeFileRecordReader();
        reader.initialize(split, context);
        return reader;
    }
}

Step 2: Write the custom RecordReader

package com.ghgj.mr.format.input;

import java.io.IOException;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.FSDataInputStream;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.IOUtils;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.InputSplit;
import org.apache.hadoop.mapreduce.RecordReader;
import org.apache.hadoop.mapreduce.TaskAttemptContext;
import org.apache.hadoop.mapreduce.lib.input.FileSplit;

class WholeFileRecordReader extends RecordReader<NullWritable, Text> {

    private FileSplit fileSplit;
    private Configuration conf;
    private Text value = new Text();
    private boolean processed = false;

    @Override
    public void initialize(InputSplit split, TaskAttemptContext context)
            throws IOException, InterruptedException {
        this.fileSplit = (FileSplit) split;
        this.conf = context.getConfiguration();
    }

    @Override
    public boolean nextKeyValue() throws IOException, InterruptedException {
        if (!processed) {
            // Allocate a byte array the size of the input split, which is the whole file.
            byte[] contents = new byte[(int) fileSplit.getLength()];
            // Get the location of the split's file in the file system.
            Path file = fileSplit.getPath();
            FileSystem fs = file.getFileSystem(conf);
            FSDataInputStream in = null;
            try {
                // Open an input stream on the file.
                in = fs.open(file);
                // Read the stream fully into contents, then store the bytes as the record value.
                IOUtils.readFully(in, contents, 0, contents.length);
                value.set(contents, 0, contents.length);
            } finally {
                IOUtils.closeStream(in);
            }
            processed = true;
            return true;
        }
        return false;
    }

    @Override
    public NullWritable getCurrentKey() throws IOException, InterruptedException {
        return NullWritable.get();
    }

    @Override
    public Text getCurrentValue() throws IOException, InterruptedException {
        return value;
    }

    @Override
    public float getProgress() throws IOException {
        return processed ? 1.0f : 0.0f;
    }

    @Override
    public void close() throws IOException {
        // do nothing
    }
}
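The reader above follows a simple "one record per file" protocol: nextKeyValue() returns true exactly once, after the whole file has been loaded, and false afterwards. The following is a minimal local-file analogue of that protocol, assuming nothing about Hadoop: `WholeFileReaderSketch` is a hypothetical class that uses `java.nio.file` in place of `FileSystem` and `FileSplit`.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;

/** Hypothetical local-file analogue of a "one record per file" reader; not Hadoop code. */
final class WholeFileReaderSketch {
    private final Path file;
    private byte[] value;
    private boolean processed = false;

    WholeFileReaderSketch(Path file) {
        this.file = file;
    }

    /** Returns true exactly once, after loading the whole file as the single record. */
    boolean nextKeyValue() {
        if (processed) {
            return false;
        }
        try {
            value = Files.readAllBytes(file);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        processed = true;
        return true;
    }

    byte[] getCurrentValue() {
        return value;
    }

    /** Progress is all-or-nothing because there is only one record. */
    float getProgress() {
        return processed ? 1.0f : 0.0f;
    }

    public static void main(String[] args) throws IOException {
        Path tmp = Files.createTempFile("small", ".txt");
        Files.write(tmp, "hello".getBytes(StandardCharsets.UTF_8));
        WholeFileReaderSketch reader = new WholeFileReaderSketch(tmp);
        while (reader.nextKeyValue()) {
            System.out.println(reader.getCurrentValue().length + " bytes");
        }
        Files.delete(tmp);
    }
}
```

As in the Hadoop version, the whole file is held in one byte array, so the pattern is only appropriate for small files; the `(int)` cast on the split length in the original also caps the file size at what an `int` can index.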

Step 3: Write the MapReduce program

package com.ghgj.mr.format.input;

import java.io.IOException;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.conf.Configured;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.InputSplit;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.Reducer;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.input.FileSplit;
import org.apache.hadoop.mapreduce.lib.input.TextInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;
import org.apache.hadoop.util.Tool;
import org.apache.hadoop.util.ToolRunner;

public class SmallFilesConvertToBigMR extends Configured implements Tool {

    public static void main(String[] args) throws Exception {
        int exitCode = ToolRunner.run(new SmallFilesConvertToBigMR(), args);
        System.exit(exitCode);
    }

    static class SmallFilesConvertToBigMRMapper extends
            Mapper<NullWritable, Text, Text, Text> {

        private Text filenameKey;

        // Each split is one whole file, so the file path can be resolved once per task.
        @Override
        protected void setup(Context context) throws IOException,
                InterruptedException {
            InputSplit split = context.getInputSplit();
            Path path = ((FileSplit) split).getPath();
            filenameKey = new Text(path.toString());
        }

        // Emit (file path, file content).
        @Override
        protected void map(NullWritable key, Text value, Context context)
                throws IOException, InterruptedException {
            context.write(filenameKey, value);
        }
    }

    static class SmallFilesConvertToBigMRReducer extends
            Reducer<Text, Text, NullWritable, Text> {

        // Each file path occurs once as a key, so the first value is that file's content.
        @Override
        protected void reduce(Text filename, Iterable<Text> bytes,
                Context context) throws IOException, InterruptedException {
            context.write(NullWritable.get(), bytes.iterator().next());
        }
    }

    @Override
    public int run(String[] args) throws Exception {
        Configuration conf = new Configuration();
        conf.set("fs.defaultFS", "hdfs://hadoop02:9000");
        System.setProperty("HADOOP_USER_NAME", "hadoop");
        Job job = Job.getInstance(conf, "combine small files to bigfile");

        job.setJarByClass(SmallFilesConvertToBigMR.class);

        job.setMapOutputKeyClass(Text.class);
        job.setMapOutputValueClass(Text.class);
        job.setMapperClass(SmallFilesConvertToBigMRMapper.class);

        job.setOutputKeyClass(NullWritable.class);
        job.setOutputValueClass(Text.class);
        job.setReducerClass(SmallFilesConvertToBigMRReducer.class);

        // TextInputFormat is the default input component.
        // job.setInputFormatClass(TextInputFormat.class);
        // Instead of the default, use the custom WholeFileInputFormat.
        job.setInputFormatClass(WholeFileInputFormat.class);

        Path input = new Path("/smallfiles");
        Path output = new Path("/bigfile");
        FileInputFormat.setInputPaths(job, input);
        FileSystem fs = FileSystem.get(conf);
        if (fs.exists(output)) {
            fs.delete(output, true);
        }
        FileOutputFormat.setOutputPath(job, output);

        int status = job.waitForCompletion(true) ? 0 : 1;
        return status;
    }
}
