MapReduce Case Studies: Mutual Friends + Joining Order Tables + Most Expensive Item per Order

Case 3:

Finding mutual friends

Requirements:

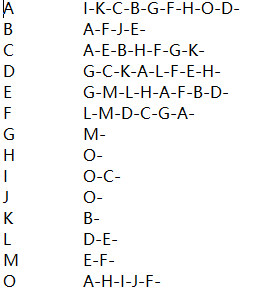

Given the following text, where each line is `user:friend list`:

A:B,C,D,F,E,O

B:A,C,E,K

C:F,A,D,I

D:A,E,F,L

E:B,C,D,M,L

F:A,B,C,D,E,O,M

G:A,C,D,E,F

H:A,C,D,E,O

I:A,O

J:B,O

K:A,C,D

L:D,E,F

M:E,F,G

O:A,H,I,J

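Find which pairs of people have mutual friends, and who those mutual friends are.

Reading each line in reverse gives `friend -user` pairs (for example, `A:B,C,D,...` yields `b -a`, `c -a`, `d -a`):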

b -a

c -a

d -a

a -b

c -b

b -e

b -j

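Approach:

Chain two MapReduce jobs.

The first job outputs, for each person, everyone who has that person in their friend list, e.g.: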

b -> a e j

c ->a b e f h

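The second job takes every pair of users from each such list and emits the pair together with the friend they share, e.g.: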

a-e b

a-j b

e-j b

a-b c

a-e c

After grouping by pair, the final output contains lines such as:

a-e b c d

a-m e f
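The data flow of the two chained jobs can be checked with a minimal in-memory sketch. It is plain Java with no Hadoop dependency: the class `MutualFriendsSketch` and its methods are hypothetical, and a `TreeMap` stands in for the shuffle by grouping values under their key and sorting the keys.

```java
import java.util.*;

// Hypothetical local simulation of the two chained jobs (no Hadoop involved).
// A TreeMap plays the role of the shuffle: it groups values by key and sorts the keys.
final class MutualFriendsSketch {

    // Job 1: "user:f1,f2,..." -> for each friend f, the users who list f (in input order)
    static TreeMap<String, List<String>> invert(List<String> lines) {
        TreeMap<String, List<String>> byFriend = new TreeMap<>();
        for (String line : lines) {
            String[] parts = line.split(":");
            String user = parts[0];
            for (String friend : parts[1].split(",")) {
                byFriend.computeIfAbsent(friend, k -> new ArrayList<>()).add(user);
            }
        }
        return byFriend;
    }

    // Job 2: every pair of users sharing friend f emits ("u1-u2", f); values are grouped by pair
    static TreeMap<String, List<String>> pairUp(TreeMap<String, List<String>> byFriend) {
        TreeMap<String, List<String>> byPair = new TreeMap<>();
        for (Map.Entry<String, List<String>> e : byFriend.entrySet()) {
            List<String> users = new ArrayList<>(e.getValue());
            Collections.sort(users); // sorted, so a pair always gets the same key ("A-B", never "B-A")
            for (int i = 0; i < users.size() - 1; i++) {
                for (int j = i + 1; j < users.size(); j++) {
                    String pair = users.get(i) + "-" + users.get(j);
                    byPair.computeIfAbsent(pair, k -> new ArrayList<>()).add(e.getKey());
                }
            }
        }
        return byPair;
    }
}
```

Fed the 14 input lines above, `pairUp(invert(lines)).get("A-E")` returns `[B, C, D]` and `get("A-M")` returns `[E, F]`, which matches the `a-e b c d` and `a-m e f` examples.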

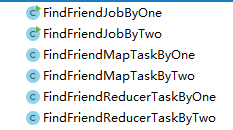

The code is as follows:

First mapper: `FindFriendMapTaskByOne`

```java
package com.gec.demo;

import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;

// Input line: "user:friend1,friend2,..."; output: (friend, user) for each friend
public class FindFriendMapTaskByOne extends Mapper<LongWritable, Text, Text, Text> {
    @Override
    protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {
        String line = value.toString();
        String[] datas = line.split(":");
        String user = datas[0];
        String[] friends = datas[1].split(",");
        // Invert the relation: key by the friend so that all users who list
        // this friend arrive in the same reduce call
        for (String friend : friends) {
            context.write(new Text(friend), new Text(user));
        }
    }
}
```

First reducer:

```java
package com.gec.demo;

import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

// Output: friend<TAB>user1-user2-...- (everyone who lists this friend)
public class FindFriendReducerTaskByOne extends Reducer<Text, Text, Text, Text> {
    @Override
    protected void reduce(Text key, Iterable<Text> values, Context context) throws IOException, InterruptedException {
        StringBuilder strBuf = new StringBuilder();
        for (Text value : values) {
            // The trailing "-" is harmless: String.split drops trailing empty strings
            strBuf.append(value).append("-");
        }
        context.write(key, new Text(strBuf.toString()));
    }
}
```

First job (driver):

```java
package com.gec.demo;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

public class FindFriendJobByOne {
    public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException {
        Configuration configuration = new Configuration();
        Job job = Job.getInstance(configuration);
        // Set the driver class
        job.setJarByClass(FindFriendJobByOne.class);
        // Mapper class to run
        job.setMapperClass(FindFriendMapTaskByOne.class);
        // Reducer class to run
        job.setReducerClass(FindFriendReducerTaskByOne.class);
        // Key type of the map output
        job.setMapOutputKeyClass(Text.class);
        // Value type of the map output
        job.setMapOutputValueClass(Text.class);
        // Key/value types of the final output
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(Text.class);
        // Location of the input data
        FileInputFormat.setInputPaths(job, "D://Bigdata//4、mapreduce//day05//homework//friendhomework.txt");
        // Location for the results (the directory must not exist yet)
        FileOutputFormat.setOutputPath(job, new Path("D://Bigdata//4、mapreduce//day05//homework//output"));
        // Submit the job and wait for it to finish
        boolean res = job.waitForCompletion(true);
        System.exit(res ? 0 : 1);
    }
}
```

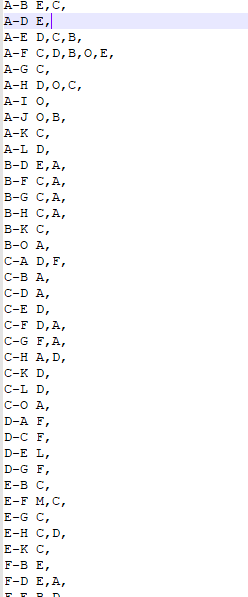

Result:

Second mapper: its input is the first job's output, one `friend<TAB>user1-user2-...-` line per friend.

```java
package com.gec.demo;

import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;

import java.io.IOException;
import java.util.Arrays;

// Output: ("userA-userB", friend) for every pair of users who share this friend
public class FindFriendMapTaskByTwo extends Mapper<LongWritable, Text, Text, Text> {
    @Override
    protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {
        String line = value.toString();
        String[] datas = line.split("\t");
        String[] userlist = datas[1].split("-");
        // Sort so that a pair always produces the same key ("A-B", never "B-A")
        Arrays.sort(userlist);
        for (int i = 0; i < userlist.length - 1; i++) {
            for (int j = i + 1; j < userlist.length; j++) {
                String user1 = userlist[i];
                String user2 = userlist[j];
                String friendkey = user1 + "-" + user2;
                context.write(new Text(friendkey), new Text(datas[0]));
            }
        }
    }
}
```
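Each user list should be sorted before pairs are generated. Hadoop does not guarantee the order in which values reach a reducer, so the first job may write `E-A-` for one friend and `A-E-` for another; without sorting, the same two people end up under both `E-A` and `A-E`, and their mutual friends are split across two output lines. The plain-Java sketch below (hypothetical class `PairKeySketch`, no Hadoop) isolates the per-line pairing logic so both behaviors can be compared.

```java
import java.util.*;

// Hypothetical plain-Java copy of the per-line pairing logic (no Hadoop).
final class PairKeySketch {
    // line format assumed from job 1's output: "friend<TAB>user1-user2-...-"
    static List<String> pairKeys(String line, boolean sortUsers) {
        String[] datas = line.split("\t");
        // String.split drops trailing empty strings, so the trailing "-" is harmless
        String[] users = datas[1].split("-");
        if (sortUsers) {
            Arrays.sort(users);
        }
        List<String> keys = new ArrayList<>();
        for (int i = 0; i < users.length - 1; i++) {
            for (int j = i + 1; j < users.length; j++) {
                keys.add(users[i] + "-" + users[j]);
            }
        }
        return keys;
    }
}
```

`pairKeys("B\tE-A-", false)` yields `[E-A]`, while `pairKeys("B\tE-A-", true)` yields `[A-E]`.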

Second reducer:

```java
package com.gec.demo;

import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Reducer;

import java.io.IOException;

// Output: "userA-userB<TAB>friend1,friend2,..." (the pair's mutual friends)
public class FindFriendReducerTaskByTwo extends Reducer<Text, Text, Text, Text> {
    @Override
    protected void reduce(Text key, Iterable<Text> values, Context context) throws IOException, InterruptedException {
        StringBuilder stringBuffer = new StringBuilder();
        for (Text value : values) {
            stringBuffer.append(value).append(",");
        }
        context.write(key, new Text(stringBuffer.toString()));
    }
}
```

Second job (driver):

```java
package com.gec.demo;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import java.io.IOException;

public class FindFriendJobByTwo {
    public static void main(String[] args) throws IOException, ClassNotFoundException, InterruptedException {
        Configuration configuration = new Configuration();
        Job job = Job.getInstance(configuration);
        // Set the driver class
        job.setJarByClass(FindFriendJobByTwo.class);
        // Mapper class to run
        job.setMapperClass(FindFriendMapTaskByTwo.class);
        // Reducer class to run
        job.setReducerClass(FindFriendReducerTaskByTwo.class);
        // Key type of the map output
        job.setMapOutputKeyClass(Text.class);
        // Value type of the map output
        job.setMapOutputValueClass(Text.class);
        // Key/value types of the final output
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(Text.class);
        // Input: this should hold the first job's output
        FileInputFormat.setInputPaths(job, "D://Bigdata//4、mapreduce//day05//homework//friendhomework3.txt");
        // Output directory: same path as the first job, so move or delete that output first
        // (the job fails if the directory already exists)
        FileOutputFormat.setOutputPath(job, new Path("D://Bigdata//4、mapreduce//day05//homework//output"));
        // Submit the job and wait for it to finish
        boolean res = job.waitForCompletion(true);
        System.exit(res ? 0 : 1);
    }
}
```

Result:

Case 4

Joining multiple tables in MapReduce

1) Requirements:

Order table `t_order`:

| id | pid | amount |
| --- | --- | --- |
| 1001 | 01 | 1 |
| 1002 | 02 | 2 |
| 1003 | 03 | 3 |

Product table `t_product`:

| id | pname |
| --- | --- |
| 01 | 小米 |
| 02 | 华为 |
| 03 | 格力 |

Join the product table into the order table on the product id.

Expected result:

| id | pname | amount |
| --- | --- | --- |
| 1001 | 小米 | 1 |
| 1001 | 小米 | 1 |
| 1002 | 华为 | 2 |
| 1002 | 华为 | 2 |
| 1003 | 格力 | 3 |
| 1003 | 格力 | 3 |

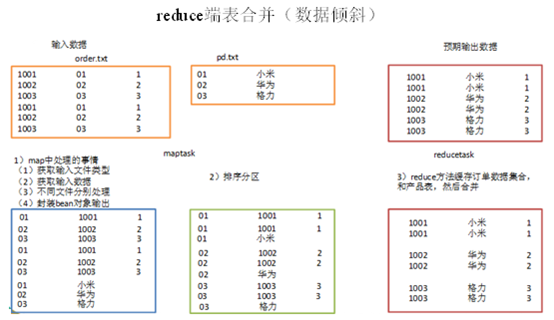

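3.4.1 Requirement 1: reduce-side join (prone to data skew)

Use the join condition (the product id) as the map output key and tag each record with the file (table) it came from. All records from both tables that satisfy the join condition are then sent to the same reduce task, which stitches them together.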
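The idea above can be tried out without a cluster. The following sketch (hypothetical class `ReduceSideJoinSketch`, plain Java, no Hadoop) tags each record with its source table using the same `"0"`/`"1"` flags as `TableMapper`, groups the records by product id in a `TreeMap` to mimic the shuffle, and joins each group the way `TableReducer` does. Like `TableMapper`, it assumes comma-separated input lines.

```java
import java.util.*;

// Hypothetical in-memory simulation of a reduce-side join (no Hadoop).
final class ReduceSideJoinSketch {

    // A tagged record: flag "0" = order row, "1" = product row
    static final class Tagged {
        final String flag;
        final String[] fields;
        Tagged(String flag, String[] fields) { this.flag = flag; this.fields = fields; }
    }

    // orders: "id,pid,amount"; products: "pid,pname"; returns "id<TAB>pname<TAB>amount"
    static List<String> join(List<String> orders, List<String> products) {
        // "Map" + "shuffle": key every record by product id and remember its source table
        Map<String, List<Tagged>> groups = new TreeMap<>();
        for (String line : orders) {
            String[] f = line.split(",");
            groups.computeIfAbsent(f[1], k -> new ArrayList<>()).add(new Tagged("0", f));
        }
        for (String line : products) {
            String[] f = line.split(",");
            groups.computeIfAbsent(f[0], k -> new ArrayList<>()).add(new Tagged("1", f));
        }
        // "Reduce": buffer the orders of one product id, note the product name, then stitch
        List<String> out = new ArrayList<>();
        for (List<Tagged> group : groups.values()) {
            List<String[]> orderRows = new ArrayList<>();
            String pname = null;
            for (Tagged t : group) {
                if ("0".equals(t.flag)) {
                    orderRows.add(t.fields);
                } else {
                    pname = t.fields[1];
                }
            }
            for (String[] o : orderRows) {
                out.add(o[0] + "\t" + pname + "\t" + o[2]);
            }
        }
        return out;
    }
}
```

Joining orders `1001,01,1`, `1002,02,2`, `1003,03,3` with products `01,Xiaomi`, `02,Huawei`, `03,Gree` yields one `id<TAB>pname<TAB>amount` line per order. Note that the reducer must buffer all orders of a key before it can emit them, which is where the memory and skew pressure comes from.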

1) Create the bean class that holds a merged order/product record

```java
package com.gec.mapreduce.table;

import java.io.DataInput;
import java.io.DataOutput;
import java.io.IOException;
import org.apache.hadoop.io.Writable;

public class TableBean implements Writable {
    private String order_id; // order id
    private String p_id;     // product id
    private int amount;      // quantity
    private String pname;    // product name
    private String flag;     // source table tag: "0" = order, "1" = product

    public TableBean() {
        super();
    }

    public TableBean(String order_id, String p_id, int amount, String pname, String flag) {
        super();
        this.order_id = order_id;
        this.p_id = p_id;
        this.amount = amount;
        this.pname = pname;
        this.flag = flag;
    }

    public String getFlag() { return flag; }
    public void setFlag(String flag) { this.flag = flag; }
    public String getOrder_id() { return order_id; }
    public void setOrder_id(String order_id) { this.order_id = order_id; }
    public String getP_id() { return p_id; }
    public void setP_id(String p_id) { this.p_id = p_id; }
    public int getAmount() { return amount; }
    public void setAmount(int amount) { this.amount = amount; }
    public String getPname() { return pname; }
    public void setPname(String pname) { this.pname = pname; }

    // Serialization: the field order must match readFields exactly
    @Override
    public void write(DataOutput out) throws IOException {
        out.writeUTF(order_id);
        out.writeUTF(p_id);
        out.writeInt(amount);
        out.writeUTF(pname);
        out.writeUTF(flag);
    }

    @Override
    public void readFields(DataInput in) throws IOException {
        this.order_id = in.readUTF();
        this.p_id = in.readUTF();
        this.amount = in.readInt();
        this.pname = in.readUTF();
        this.flag = in.readUTF();
    }

    // TableReducer stores the product name in p_id, so this prints "id<TAB>pname<TAB>amount"
    @Override
    public String toString() {
        return order_id + "\t" + p_id + "\t" + amount + "\t";
    }
}
```

2) Write the `TableMapper`

```java
package com.gec.mapreduce.table;

import java.io.IOException;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;
import org.apache.hadoop.mapreduce.lib.input.FileSplit;

public class TableMapper extends Mapper<LongWritable, Text, Text, TableBean> {
    TableBean bean = new TableBean();
    Text k = new Text();

    @Override
    protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {
        // 1 Find out which file (table) this split comes from
        FileSplit split = (FileSplit) context.getInputSplit();
        String name = split.getPath().getName();
        // 2 Read the line
        String line = value.toString();
        // 3 Handle each table differently
        if (name.startsWith("order")) { // order table
            // 3.1 Split the fields
            String[] fields = line.split(",");
            // 3.2 Fill the bean
            bean.setOrder_id(fields[0]);
            bean.setP_id(fields[1]);
            bean.setAmount(Integer.parseInt(fields[2]));
            bean.setPname("");
            bean.setFlag("0");
            k.set(fields[1]);
        } else { // product table
            // 3.3 Split the fields
            String[] fields = line.split(",");
            // 3.4 Fill the bean
            bean.setP_id(fields[0]);
            bean.setPname(fields[1]);
            bean.setFlag("1");
            bean.setAmount(0);
            bean.setOrder_id("");
            k.set(fields[0]);
        }
        // 4 Emit, keyed by product id
        context.write(k, bean);
    }
}
```

3) Write the `TableReducer`

```java
package com.gec.mapreduce.table;

import java.io.IOException;
import java.util.ArrayList;
import org.apache.commons.beanutils.BeanUtils;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Reducer;

public class TableReducer extends Reducer<Text, TableBean, TableBean, NullWritable> {
    @Override
    protected void reduce(Text key, Iterable<TableBean> values, Context context) throws IOException, InterruptedException {
        // 1 Collection for the order records
        ArrayList<TableBean> orderBeans = new ArrayList<>();
        // 2 Bean for the product record
        TableBean pdBean = new TableBean();
        for (TableBean bean : values) {
            if ("0".equals(bean.getFlag())) { // order table
                // Copy each order: Hadoop reuses the same object while iterating values
                TableBean orderBean = new TableBean();
                try {
                    BeanUtils.copyProperties(orderBean, bean);
                } catch (Exception e) {
                    e.printStackTrace();
                }
                orderBeans.add(orderBean);
            } else { // product table
                try {
                    // Copy the product record into memory
                    BeanUtils.copyProperties(pdBean, bean);
                } catch (Exception e) {
                    e.printStackTrace();
                }
            }
        }
        // 3 Join: put the product name into p_id so toString() prints it
        for (TableBean bean : orderBeans) {
            bean.setP_id(pdBean.getPname());
            // 4 Write out
            context.write(bean, NullWritable.get());
        }
    }
}
```

4) Write the `TableDriver`

```java
package com.gec.mapreduce.table;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

public class TableDriver {
    public static void main(String[] args) throws Exception {
        // 1 Get the configuration and a Job instance
        Configuration configuration = new Configuration();
        Job job = Job.getInstance(configuration);
        // 2 Locate the jar containing this program via the driver class
        job.setJarByClass(TableDriver.class);
        // 3 Set the Mapper and Reducer classes
        job.setMapperClass(TableMapper.class);
        job.setReducerClass(TableReducer.class);
        // 4 Set the mapper output key/value types
        job.setMapOutputKeyClass(Text.class);
        job.setMapOutputValueClass(TableBean.class);
        // 5 Set the final output key/value types
        job.setOutputKeyClass(TableBean.class);
        job.setOutputValueClass(NullWritable.class);
        // 6 Set the input directory and the output directory
        FileInputFormat.setInputPaths(job, new Path(args[0]));
        FileOutputFormat.setOutputPath(job, new Path(args[1]));
        // 7 Submit the job configuration and the jar with its classes to YARN and wait
        boolean result = job.waitForCompletion(true);
        System.exit(result ? 0 : 1);
    }
}
```

5) Run the program and check the result

```
1001 小米 1
1001 小米 1
1002 华为 2
1002 华为 2
1003 格力 3
1003 格力 3
```

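Drawback: in this approach the join happens entirely in the reduce phase, so the reducers carry most of the load while the map nodes do little work. Resource utilization is poor, and the reduce phase is highly prone to data skew (a popular product id sends all of its orders to a single reducer).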
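The skew follows from how keys are routed: every record with the same join key goes to the same reduce task, so a reducer's load is proportional to how often its keys occur. The sketch below (hypothetical class `SkewSketch`, plain Java, no Hadoop) counts records per reduce task with the same `(hashCode & Integer.MAX_VALUE) % numReduceTasks` formula that Hadoop's default `HashPartitioner` uses, but applied to `String.hashCode()` as a simplifying assumption; Hadoop's `Text` hashes its UTF-8 bytes, so real assignments can differ.

```java
import java.util.*;

// Hypothetical illustration of data skew under hash partitioning (no Hadoop).
final class SkewSketch {
    // HashPartitioner's formula, applied here to String.hashCode() (an assumption)
    static int partition(String key, int numReduceTasks) {
        return (key.hashCode() & Integer.MAX_VALUE) % numReduceTasks;
    }

    // How many records each reduce task would receive, given the record count per key
    static long[] recordsPerReducer(Map<String, Long> recordsPerKey, int numReduceTasks) {
        long[] load = new long[numReduceTasks];
        for (Map.Entry<String, Long> e : recordsPerKey.entrySet()) {
            load[partition(e.getKey(), numReduceTasks)] += e.getValue();
        }
        return load;
    }
}
```

With 1,000,000 orders for product `01` and 10 each for `02` and `03`, one of three reduce tasks receives all 1,000,000 records while the other two receive 10 each; adding reducers does not help, because a single key cannot be split.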

Solution: perform the join on the map side.

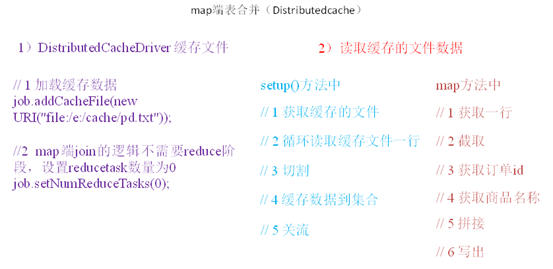

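3.4.2 Requirement 2: map-side join (DistributedCache)

1) Analysis

This applies when one of the tables being joined is small.

The small table can be distributed to every map node, so each map task can join the big-table records it reads locally and write the final result directly. This greatly increases the parallelism of the join and speeds up processing.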
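Done on the map side, the join reduces to a hash lookup. The sketch below (hypothetical class `MapSideJoinSketch`, plain Java, no Hadoop) loads the small table into a `HashMap` once, which is what `DistributedCacheMapper.setup()` does with the cached `pd.txt`, and then joins each big-table line on the spot, so no shuffle or reduce phase is needed. It assumes tab-separated lines, matching the `split("\t")` calls in `DistributedCacheMapper`.

```java
import java.util.*;

// Hypothetical in-memory simulation of a map-side join (no Hadoop).
final class MapSideJoinSketch {
    private final Map<String, String> pdMap = new HashMap<>();

    // Corresponds to setup(): load the small table, lines "pid<TAB>pname"
    MapSideJoinSketch(List<String> productLines) {
        for (String line : productLines) {
            String[] f = line.split("\t");
            pdMap.put(f[0], f[1]);
        }
    }

    // Corresponds to map(): "id<TAB>pid<TAB>amount" -> the same line + "<TAB>" + pname
    String map(String orderLine) {
        String[] f = orderLine.split("\t");
        String pname = pdMap.get(f[1]); // null if the product id is missing from the small table
        return orderLine + "\t" + pname;
    }
}
```

A product id that is missing from the small table produces the literal string `null` in the output, just as `pdMap.get` followed by string concatenation does in the mapper, so filter or default it if that matters.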

2) Hands-on example

(1) First, add the cache file in the driver

```java
package test;

import java.net.URI;
import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

public class DistributedCacheDriver {
    public static void main(String[] args) throws Exception {
        // 1 Get the job
        Configuration configuration = new Configuration();
        Job job = Job.getInstance(configuration);
        // 2 Locate the jar via the driver class
        job.setJarByClass(DistributedCacheDriver.class);
        // 3 Set the mapper
        job.setMapperClass(DistributedCacheMapper.class);
        // 4 Set the final output types
        job.setOutputKeyClass(Text.class);
        job.setOutputValueClass(NullWritable.class);
        // 5 Set the input and output paths
        FileInputFormat.setInputPaths(job, new Path(args[0]));
        FileOutputFormat.setOutputPath(job, new Path(args[1]));
        // 6 Register the small table as a cache file
        job.addCacheFile(new URI("file:/e:/cache/pd.txt"));
        // 7 A map-side join needs no reduce phase, so set the number of reduce tasks to 0
        job.setNumReduceTasks(0);
        // 8 Submit
        boolean result = job.waitForCompletion(true);
        System.exit(result ? 0 : 1);
    }
}
```

(2) Read the cached file in the mapper

```java
package test;

import java.io.BufferedReader;
import java.io.IOException;
import java.io.InputStreamReader;
import java.net.URI;
import java.util.HashMap;
import java.util.Map;
import org.apache.commons.lang.StringUtils;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;

public class DistributedCacheMapper extends Mapper<LongWritable, Text, Text, NullWritable> {
    Map<String, String> pdMap = new HashMap<>();

    @Override
    protected void setup(Context context) throws IOException, InterruptedException {
        // 1 Open the file registered with job.addCacheFile in the driver
        URI cacheUri = context.getCacheFiles()[0];
        FileSystem fs = FileSystem.get(cacheUri, context.getConfiguration());
        BufferedReader reader = new BufferedReader(new InputStreamReader(fs.open(new Path(cacheUri))));
        String line;
        while (StringUtils.isNotEmpty(line = reader.readLine())) {
            // 2 Split "pid<TAB>pname"
            String[] fields = line.split("\t");
            // 3 Cache the product id -> product name mapping
            pdMap.put(fields[0], fields[1]);
        }
        // 4 Close the stream
        reader.close();
    }

    Text k = new Text();

    @Override
    protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {
        // 1 Read a line of the order table
        String line = value.toString();
        // 2 Split it
        String[] fields = line.split("\t");
        // 3 The second field is the product id
        String pId = fields[1];
        // 4 Look up the product name
        String pdName = pdMap.get(pId);
        // 5 Append it to the order line
        k.set(line + "\t" + pdName);
        // 6 Write out
        context.write(k, NullWritable.get());
    }
}
```

Case 5

Finding the most expensive item in each order (GroupingComparator)

1) Requirements

Given the following order data:

| Order ID | Product ID | Amount |
| --- | --- | --- |
| Order_0000001 | Pdt_01 | 222.8 |
| Order_0000001 | Pdt_05 | 25.8 |
| Order_0000002 | Pdt_03 | 522.8 |
| Order_0000002 | Pdt_04 | 122.4 |
| Order_0000002 | Pdt_05 | 722.4 |
| Order_0000003 | Pdt_01 | 222.8 |
| Order_0000003 | Pdt_02 | 33.8 |

Find the most expensive item in each order.

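2) Input data

Expected output:

3) Analysis

(1) Use the pair (order id, amount) as the map output key. The map output is then partitioned by order id and sorted by order id and, within one order, by amount in descending order before it reaches the reducers.

(2) On the reduce side, a GroupingComparator puts all key/value pairs with the same order id into one group (one reduce call); the first key in each group is the most expensive item.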
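Both steps can be simulated in a few lines of plain Java. In the sketch below (hypothetical class `SecondarySortSketch`, no Hadoop), sorting with a comparator on (order id ascending, price descending) plays the role of the shuffle sort, and starting a new group only when the order id changes plays the role of the grouping comparator; the first record of each group is the answer.

```java
import java.util.*;

// Hypothetical in-memory simulation of secondary sort + grouping (no Hadoop).
final class SecondarySortSketch {
    static final class Order {
        final String orderId;
        final double price;
        Order(String orderId, double price) { this.orderId = orderId; this.price = price; }
    }

    // Sort order: order id ascending, then price descending
    static final Comparator<Order> SORT = Comparator
            .comparing((Order o) -> o.orderId)
            .thenComparing(Comparator.comparingDouble((Order o) -> o.price).reversed());

    // Returns "orderId<TAB>price" for the first (most expensive) record of each order id
    static List<String> maxPerOrder(List<Order> records) {
        List<Order> sorted = new ArrayList<>(records);
        sorted.sort(SORT);
        List<String> out = new ArrayList<>();
        String currentGroup = null;
        for (Order o : sorted) {
            // Grouping: only the order id decides where a new group starts
            if (!o.orderId.equals(currentGroup)) {
                currentGroup = o.orderId;
                out.add(o.orderId + "\t" + o.price);
            }
        }
        return out;
    }
}
```

For the seven records in the table above, in any input order, it returns `Order_0000001<TAB>222.8`, `Order_0000002<TAB>722.4` and `Order_0000003<TAB>222.8`.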

4) Implementation

Define the order bean `OrderBean`

```java
package com.gec.mapreduce.order;

import java.io.DataInput;
import java.io.DataOutput;
import java.io.IOException;
import org.apache.hadoop.io.WritableComparable;

public class OrderBean implements WritableComparable<OrderBean> {
    private String orderId;
    private double price;

    public OrderBean() {
        super();
    }

    public OrderBean(String orderId, double price) {
        super();
        this.orderId = orderId;
        this.price = price;
    }

    public String getOrderId() { return orderId; }
    public void setOrderId(String orderId) { this.orderId = orderId; }
    public double getPrice() { return price; }
    public void setPrice(double price) { this.price = price; }

    @Override
    public void readFields(DataInput in) throws IOException {
        this.orderId = in.readUTF();
        this.price = in.readDouble();
    }

    @Override
    public void write(DataOutput out) throws IOException {
        out.writeUTF(orderId);
        out.writeDouble(price);
    }

    @Override
    public int compareTo(OrderBean o) {
        // 1 Sort by order id first (ascending)
        int result = this.orderId.compareTo(o.getOrderId());
        if (result == 0) {
            // 2 Then by price (descending); Double.compare returns 0 for equal prices,
            //   which keeps compareTo consistent (a ? -1 : 1 ternary never returns 0)
            result = Double.compare(o.getPrice(), this.price);
        }
        return result;
    }

    @Override
    public String toString() {
        return orderId + "\t" + price;
    }
}
```

Write `OrderSortMapper`

```java
package com.gec.mapreduce.order;

import java.io.IOException;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;

public class OrderSortMapper extends Mapper<LongWritable, Text, OrderBean, NullWritable> {
    OrderBean bean = new OrderBean();

    @Override
    protected void map(LongWritable key, Text value, Context context) throws IOException, InterruptedException {
        // 1 Read a line
        String line = value.toString();
        // 2 Split the fields: order id, product id, amount
        String[] fields = line.split("\t");
        // 3 Fill the bean (the product id is not needed)
        bean.setOrderId(fields[0]);
        bean.setPrice(Double.parseDouble(fields[2]));
        // 4 Write out
        context.write(bean, NullWritable.get());
    }
}
```

Write `OrderSortReducer`

```java
package com.gec.mapreduce.order;

import java.io.IOException;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.mapreduce.Reducer;

public class OrderSortReducer extends Reducer<OrderBean, NullWritable, OrderBean, NullWritable> {
    @Override
    protected void reduce(OrderBean bean, Iterable<NullWritable> values, Context context) throws IOException, InterruptedException {
        // Write the key directly: with the grouping comparator, it is the first
        // (most expensive) record of this order
        context.write(bean, NullWritable.get());
    }
}
```

Write `OrderSortDriver`

```java
package com.gec.mapreduce.order;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

public class OrderSortDriver {
    public static void main(String[] args) throws Exception {
        // 1 Get the configuration
        Configuration conf = new Configuration();
        Job job = Job.getInstance(conf);
        // 2 Locate the jar via the driver class
        job.setJarByClass(OrderSortDriver.class);
        // 3 Set the map/reduce classes
        job.setMapperClass(OrderSortMapper.class);
        job.setReducerClass(OrderSortReducer.class);
        // 4 Set the map output key/value types
        job.setMapOutputKeyClass(OrderBean.class);
        job.setMapOutputValueClass(NullWritable.class);
        // 5 Set the final output key/value types
        job.setOutputKeyClass(OrderBean.class);
        job.setOutputValueClass(NullWritable.class);
        // 6 Set the input and output paths
        FileInputFormat.setInputPaths(job, new Path(args[0]));
        FileOutputFormat.setOutputPath(job, new Path(args[1]));
        // 7 Set the reduce-side grouping comparator
        job.setGroupingComparatorClass(OrderSortGroupingComparator.class);
        // 8 Set the partitioner
        job.setPartitionerClass(OrderSortPartitioner.class);
        // 9 Set the number of reduce tasks
        job.setNumReduceTasks(3);
        // 10 Submit
        boolean result = job.waitForCompletion(true);
        System.exit(result ? 0 : 1);
    }
}
```

Write `OrderSortPartitioner`

```java
package com.gec.mapreduce.order;

import org.apache.hadoop.io.NullWritable;
import org.apache.hadoop.mapreduce.Partitioner;

public class OrderSortPartitioner extends Partitioner<OrderBean, NullWritable> {
    @Override
    public int getPartition(OrderBean key, NullWritable value, int numReduceTasks) {
        // Partition by order id only, so every record of one order reaches the same reducer
        return (key.getOrderId().hashCode() & Integer.MAX_VALUE) % numReduceTasks;
    }
}
```
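The `& Integer.MAX_VALUE` in the partitioner clears the sign bit. `hashCode()` can be negative, and Java's `%` keeps the sign of the dividend (`-7 % 3` is `-1`), which would be an invalid partition number. The sketch below (hypothetical class `PartitionSketch`, plain Java) applies the same expression to a `String` order id; since the price is not part of the calculation, every record of one order lands in the same partition.

```java
// Hypothetical plain-Java copy of the expression in OrderSortPartitioner.getPartition.
final class PartitionSketch {
    // Partition by order id only; the price never affects the result
    static int partition(String orderId, int numReduceTasks) {
        return partitionOfHash(orderId.hashCode(), numReduceTasks);
    }

    // Masking with Integer.MAX_VALUE maps any int, including negative ones, to 0..MAX_VALUE
    static int partitionOfHash(int hash, int numReduceTasks) {
        return (hash & Integer.MAX_VALUE) % numReduceTasks;
    }
}
```

For example, `partitionOfHash(-1, 3)` is `1` and `partitionOfHash(Integer.MIN_VALUE, 3)` is `0`, whereas the raw remainder of a negative hash could be negative.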

Write `OrderSortGroupingComparator`

```java
package com.gec.mapreduce.order;

import org.apache.hadoop.io.WritableComparable;
import org.apache.hadoop.io.WritableComparator;

public class OrderSortGroupingComparator extends WritableComparator {
    protected OrderSortGroupingComparator() {
        // true: create OrderBean instances so compare() receives deserialized keys
        super(OrderBean.class, true);
    }

    @Override
    public int compare(WritableComparable a, WritableComparable b) {
        OrderBean abean = (OrderBean) a;
        OrderBean bbean = (OrderBean) b;
        // Treat all beans with the same orderId as one group
        return abean.getOrderId().compareTo(bbean.getOrderId());
    }
}
```
