吴裕雄 Python neural networks: implementing the LeNet-5 model in TensorFlow on the MNIST handwritten digit dataset

# Requires TensorFlow 1.x (uses tf.placeholder, tf.contrib and tensorflow.examples.tutorials).
import os

import numpy as np
import tensorflow as tf
from tensorflow.examples.tutorials.mnist import input_data

tf.reset_default_graph()

INPUT_NODE = 784
OUTPUT_NODE = 10

IMAGE_SIZE = 28
NUM_CHANNELS = 1
NUM_LABELS = 10

CONV1_DEEP = 32
CONV1_SIZE = 5

CONV2_DEEP = 64

CONV2_SIZE = 5

FC_SIZE = 512


def inference(input_tensor, train, regularizer):
    # Layer 1: convolution, stride 1, SAME padding, followed by ReLU.
    with tf.variable_scope('layer1-conv1'):
        conv1_weights = tf.get_variable("weight", [CONV1_SIZE, CONV1_SIZE, NUM_CHANNELS, CONV1_DEEP], initializer=tf.truncated_normal_initializer(stddev=0.1))
        conv1_biases = tf.get_variable("bias", [CONV1_DEEP], initializer=tf.constant_initializer(0.0))
        conv1 = tf.nn.conv2d(input_tensor, conv1_weights, strides=[1, 1, 1, 1], padding='SAME')
        relu1 = tf.nn.relu(tf.nn.bias_add(conv1, conv1_biases))

    # Layer 2: 2x2 max pooling with stride 2.
    with tf.name_scope("layer2-pool1"):
        pool1 = tf.nn.max_pool(relu1, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding="SAME")

    # Layer 3: second convolution, same layout as layer 1.
    with tf.variable_scope("layer3-conv2"):
        conv2_weights = tf.get_variable("weight", [CONV2_SIZE, CONV2_SIZE, CONV1_DEEP, CONV2_DEEP], initializer=tf.truncated_normal_initializer(stddev=0.1))
        conv2_biases = tf.get_variable("bias", [CONV2_DEEP], initializer=tf.constant_initializer(0.0))
        conv2 = tf.nn.conv2d(pool1, conv2_weights, strides=[1, 1, 1, 1], padding='SAME')
        relu2 = tf.nn.relu(tf.nn.bias_add(conv2, conv2_biases))

    # Layer 4: second pooling, then flatten each example to a vector.
    with tf.name_scope("layer4-pool2"):
        pool2 = tf.nn.max_pool(relu2, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME')
        pool_shape = pool2.get_shape().as_list()
        nodes = pool_shape[1] * pool_shape[2] * pool_shape[3]
        reshaped = tf.reshape(pool2, [pool_shape[0], nodes])

    # Layer 5: fully connected layer; only these weights are regularized.
    with tf.variable_scope('layer5-fc1'):
        fc1_weights = tf.get_variable("weight", [nodes, FC_SIZE], initializer=tf.truncated_normal_initializer(stddev=0.1))
        if regularizer is not None:
            tf.add_to_collection('losses', regularizer(fc1_weights))
        fc1_biases = tf.get_variable("bias", [FC_SIZE], initializer=tf.constant_initializer(0.1))
        fc1 = tf.nn.relu(tf.matmul(reshaped, fc1_weights) + fc1_biases)
        if train:
            fc1 = tf.nn.dropout(fc1, 0.5)

    # Layer 6: fully connected output layer producing the logits.
    with tf.variable_scope('layer6-fc2'):
        fc2_weights = tf.get_variable("weight", [FC_SIZE, NUM_LABELS], initializer=tf.truncated_normal_initializer(stddev=0.1))
        if regularizer is not None:
            tf.add_to_collection('losses', regularizer(fc2_weights))
        fc2_biases = tf.get_variable("bias", [NUM_LABELS], initializer=tf.constant_initializer(0.1))
        logit = tf.matmul(fc1, fc2_weights) + fc2_biases

    return logit


BATCH_SIZE = 100

LEARNING_RATE_BASE = 0.01

LEARNING_RATE_DECAY = 0.99

REGULARIZATION_RATE = 0.0001

TRAINING_STEPS = 6000

MOVING_AVERAGE_DECAY = 0.99 def train(mnist):

# 定义输出为4维矩阵的placeholder

x = tf.placeholder(tf.float32, [BATCH_SIZE,IMAGE_SIZE,IMAGE_SIZE,NUM_CHANNELS],name='x-input')

y_ = tf.placeholder(tf.float32, [None, OUTPUT_NODE], name='y-input')

regularizer = tf.contrib.layers.l2_regularizer(REGULARIZATION_RATE)

y = inference(x,False,regularizer)

global_step = tf.Variable(0, trainable=False) # 定义损失函数、学习率、滑动平均操作以及训练过程。

variable_averages = tf.train.ExponentialMovingAverage(MOVING_AVERAGE_DECAY, global_step)

variables_averages_op = variable_averages.apply(tf.trainable_variables())

cross_entropy = tf.nn.sparse_softmax_cross_entropy_with_logits(logits=y, labels=tf.argmax(y_, 1))

cross_entropy_mean = tf.reduce_mean(cross_entropy)

loss = cross_entropy_mean + tf.add_n(tf.get_collection('losses'))

learning_rate = tf.train.exponential_decay(LEARNING_RATE_BASE,global_step,mnist.train.num_examples / BATCH_SIZE, LEARNING_RATE_DECAY,staircase=True) train_step = tf.train.GradientDescentOptimizer(learning_rate).minimize(loss, global_step=global_step)

with tf.control_dependencies([train_step, variables_averages_op]):

train_op = tf.no_op(name='train')

# 初始化TensorFlow持久化类。

saver = tf.train.Saver()

with tf.Session() as sess:

tf.global_variables_initializer().run()

for i in range(TRAINING_STEPS):

xs, ys = mnist.train.next_batch(BATCH_SIZE)

reshaped_xs = np.reshape(xs, (BATCH_SIZE,IMAGE_SIZE,IMAGE_SIZE,NUM_CHANNELS))

_, loss_value, step = sess.run([train_op, loss, global_step], feed_dict={x: reshaped_xs, y_: ys})

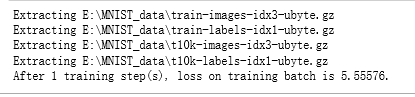

if i % 1000 == 0:

print("After %d training step(s), loss on training batch is %g." % (step, loss_value)) def main(argv=None):

mnist = input_data.read_data_sets("E:\\MNIST_data\\", one_hot=True)

train(mnist) if __name__ == '__main__':

main()
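
The flattening step in inference() reads the shape of pool2 when the graph is built, so the size of the first fully connected layer depends on how the two convolution and pooling layers shrink the 28x28 input. As a rough cross-check, here is a minimal sketch in plain Python (no TensorFlow needed). It assumes the 'SAME' padding rule, under which the spatial output size is ceil(input / stride). The helper names same_out, trace_shapes and parameter_counts are hypothetical and are not part of the original listing.

```python
# Constants copied from the listing above.
IMAGE_SIZE = 28
NUM_CHANNELS = 1
NUM_LABELS = 10
CONV1_DEEP, CONV1_SIZE = 32, 5
CONV2_DEEP, CONV2_SIZE = 64, 5
FC_SIZE = 512


def same_out(size, stride):
    """Spatial output size under 'SAME' padding: ceil(size / stride), in integer math."""
    return -(-size // stride)


def trace_shapes(image_size=IMAGE_SIZE):
    """Return (layer name, height, width, depth) after each conv/pool layer."""
    layers = [
        ("layer1-conv1", 1, CONV1_DEEP),  # convolution, stride 1
        ("layer2-pool1", 2, CONV1_DEEP),  # max pooling, stride 2
        ("layer3-conv2", 1, CONV2_DEEP),  # convolution, stride 1
        ("layer4-pool2", 2, CONV2_DEEP),  # max pooling, stride 2
    ]
    shapes = []
    size = image_size
    for name, stride, depth in layers:
        size = same_out(size, stride)
        shapes.append((name, size, size, depth))
    return shapes


def parameter_counts(nodes):
    """Weights plus biases for each trainable layer, given the flattened size."""
    return {
        "layer1-conv1": CONV1_SIZE * CONV1_SIZE * NUM_CHANNELS * CONV1_DEEP + CONV1_DEEP,
        "layer3-conv2": CONV2_SIZE * CONV2_SIZE * CONV1_DEEP * CONV2_DEEP + CONV2_DEEP,
        "layer5-fc1": nodes * FC_SIZE + FC_SIZE,
        "layer6-fc2": FC_SIZE * NUM_LABELS + NUM_LABELS,
    }


if __name__ == "__main__":
    shapes = trace_shapes()
    for name, height, width, depth in shapes:
        print(name, (height, width, depth))
    _, height, width, depth = shapes[-1]
    nodes = height * width * depth  # 7 * 7 * 64 = 3136
    print("flattened nodes:", nodes)
    print(parameter_counts(nodes))
```

Under these assumptions the spatial size goes 28, 28, 14, 14, 7, so `nodes` is 7 * 7 * 64 = 3136, and the first fully connected layer holds the large majority of the trainable parameters.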
