On Using Very Large Target Vocabulary for Neural Machine Translation; Candidate Sampling; Sampled Softmax

【An accelerator for softmax classifiers】

https://www.tensorflow.org/api_docs/python/tf/nn/sampled_softmax_loss

This is a faster way to train a softmax classifier over a huge number of classes.

【The set of output classes is too large, so train against a subset】

https://www.tensorflow.org/api_guides/python/nn#Candidate_Sampling

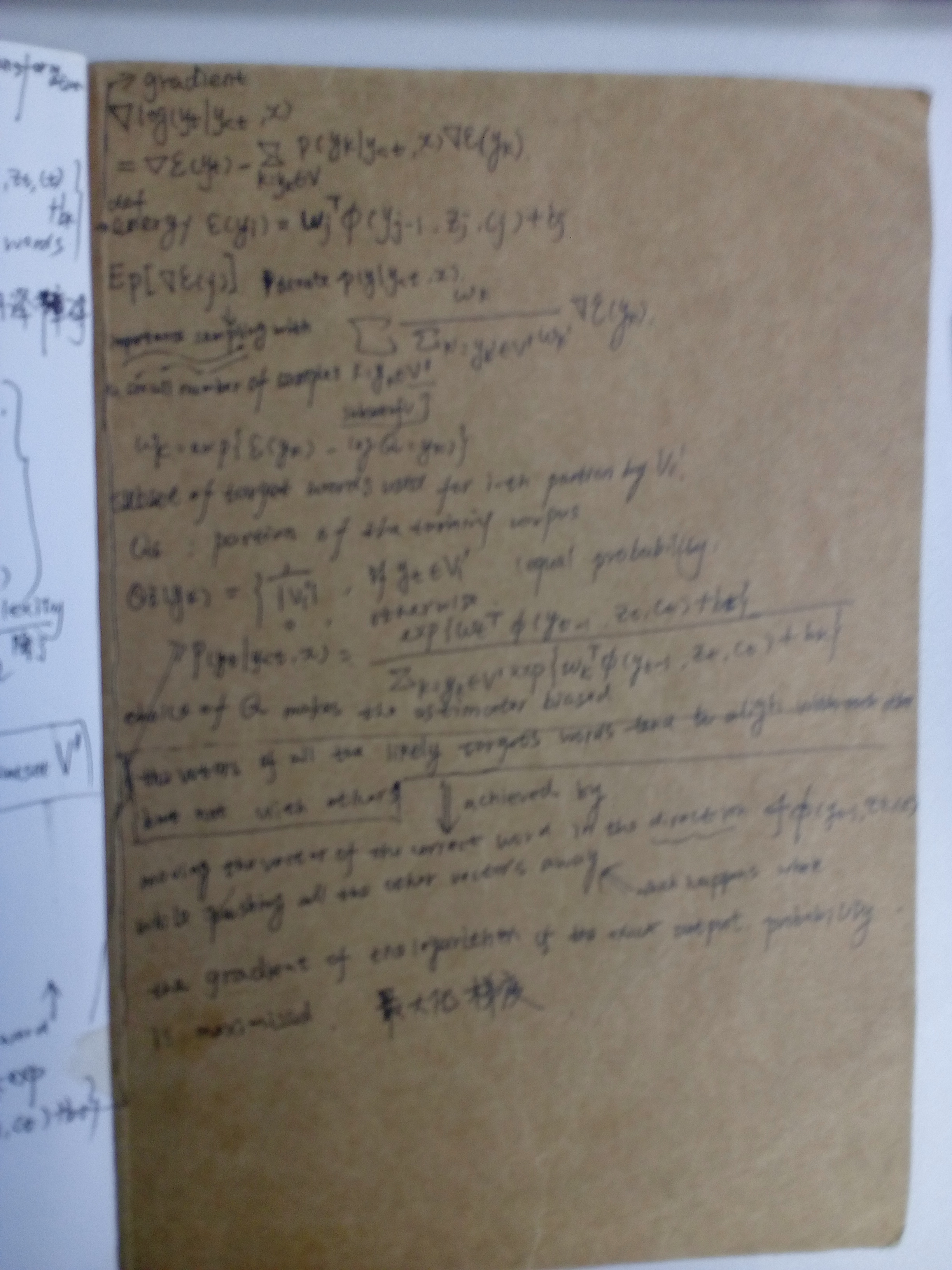

Do you want to train a multiclass or multilabel model with thousands or millions of output classes (for example, a language model with a large vocabulary)? Training with a full Softmax is slow in this case, since all of the classes are evaluated for every training example. Candidate Sampling training algorithms can speed up your step times by only considering a small randomly-chosen subset of contrastive classes (called candidates) for each batch of training examples.
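To make the "small randomly-chosen subset of contrastive classes" concrete, here is a minimal pure-Python sketch of a sampled-softmax loss for a single example. It is modelled loosely on the idea behind `tf.nn.sampled_softmax_loss`, which by default draws candidates with a log-uniform (Zipfian) sampler, but it is not TensorFlow's implementation. Assumptions: one target class per example, candidates drawn with replacement, class ids ordered by decreasing frequency, and `score_fn` is a hypothetical stand-in for F(x, y). Each candidate's score is corrected by subtracting the log of its expected count in the sample, and a sampled candidate that coincides with the target ("accidental hit") is masked out.

```python
import math
import random


def log_uniform_prob(c, num_classes):
    # Q(c) under a log-uniform (Zipfian) proposal over ids 0..num_classes-1:
    # small ids, assumed to be the most frequent words, are drawn more often.
    return (math.log(c + 2) - math.log(c + 1)) / math.log(num_classes + 1)


def sample_log_uniform(num_classes, rng):
    # Inverse-CDF draw, using P(C <= c) = log(c + 2) / log(num_classes + 1).
    v = math.exp(rng.random() * math.log(num_classes + 1))
    return min(int(v) - 1, num_classes - 1)


def sampled_softmax_loss(score_fn, true_class, num_classes, num_sampled, rng):
    """Sampled-softmax loss for one example with a single target class.

    score_fn(c) plays the role of F(x, c). It is evaluated only for the true
    class and the sampled candidates, never for all num_classes classes.
    """
    sampled = [sample_log_uniform(num_classes, rng) for _ in range(num_sampled)]
    logits = []
    for i, c in enumerate([true_class] + sampled):
        if i > 0 and c == true_class:
            # Accidental hit: a sampled candidate equals the target, so mask it.
            logits.append(-math.inf)
            continue
        # Bias correction: subtract the log of the expected count of c in the
        # sample, log(num_sampled * Q(c)), since sampling is with replacement.
        expected_count = num_sampled * log_uniform_prob(c, num_classes)
        logits.append(score_fn(c) - math.log(expected_count))
    # Softmax cross-entropy over the candidates; the target sits at index 0.
    m = max(logits)
    log_z = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_z - logits[0]


if __name__ == "__main__":
    calls = []

    def toy_score(c):
        # Hypothetical stand-in for F(x, c); records which classes are scored.
        calls.append(c)
        return 0.01 * (c % 7)

    loss = sampled_softmax_loss(toy_score, true_class=42, num_classes=100_000,
                                num_sampled=64, rng=random.Random(0))
    print(f"loss={loss:.3f}, scored {len(calls)} of 100000 classes")
```

The per-example cost is bounded by `num_sampled + 1` score evaluations regardless of the vocabulary size. The log-uniform proposal is only one choice; any distribution Q works as long as the same Q is used in the correction term.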

https://www.tensorflow.org/extras/candidate_sampling.pdf

【compute F(x, y) for every class y ∈ L for every training example: this is the time-consuming step, and it is the problem to be solved】

What is Candidate Sampling? Say we have a multiclass or multilabel problem where each training example $(x_i, T_i)$ consists of a context $x_i$ and a small (multi)set of target classes $T_i$ out of a large universe L of possible classes. For example, the problem might be predicting the next word (or the set of future words) in a sentence given the previous words.

We wish to learn a compatibility function F(x, y) which says something about the compatibility of a class y with a context x; for example, the probability of the class given the context.

“Exhaustive” training methods such as softmax and logistic regression require us to compute F(x, y) for every class y ∈ L for every training example. When |L| is very large, this can be prohibitively expensive.
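For contrast, the exhaustive case can be sketched as a plain-Python softmax cross-entropy for one example. The point is the list comprehension: F(x, y) is evaluated once for every y ∈ L, so the cost per example grows linearly with |L|. The dot-product form of F and the toy vectors in the usage part are hypothetical illustrations, not taken from the source.

```python
import math


def full_softmax_loss(score_fn, true_class, num_classes):
    """Exhaustive softmax cross-entropy -log p(true_class | x) for one example.

    score_fn(c) plays the role of F(x, c) and is evaluated for every class c
    in the universe L = {0, ..., num_classes - 1}: |L| calls per example.
    """
    logits = [score_fn(c) for c in range(num_classes)]
    m = max(logits)  # shift by the max for a numerically stable log-sum-exp
    log_z = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_z - logits[true_class]


if __name__ == "__main__":
    # Hypothetical F: dot product of a context vector with per-class vectors.
    context = [0.5, -1.0]
    class_vecs = [[0.001 * c, 0.2] for c in range(50_000)]

    def F(c):
        return sum(a * b for a, b in zip(context, class_vecs[c]))

    print(full_softmax_loss(F, true_class=7, num_classes=len(class_vecs)))
```

A quick sanity check: if every class gets the same score, the loss is exactly log |L|, i.e. the model is uniform over the vocabulary.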

【the model having a very large target vocabulary by selecting only a small subset of the whole target vocabulary: again, a subset】

https://arxiv.org/pdf/1412.2007.pdf

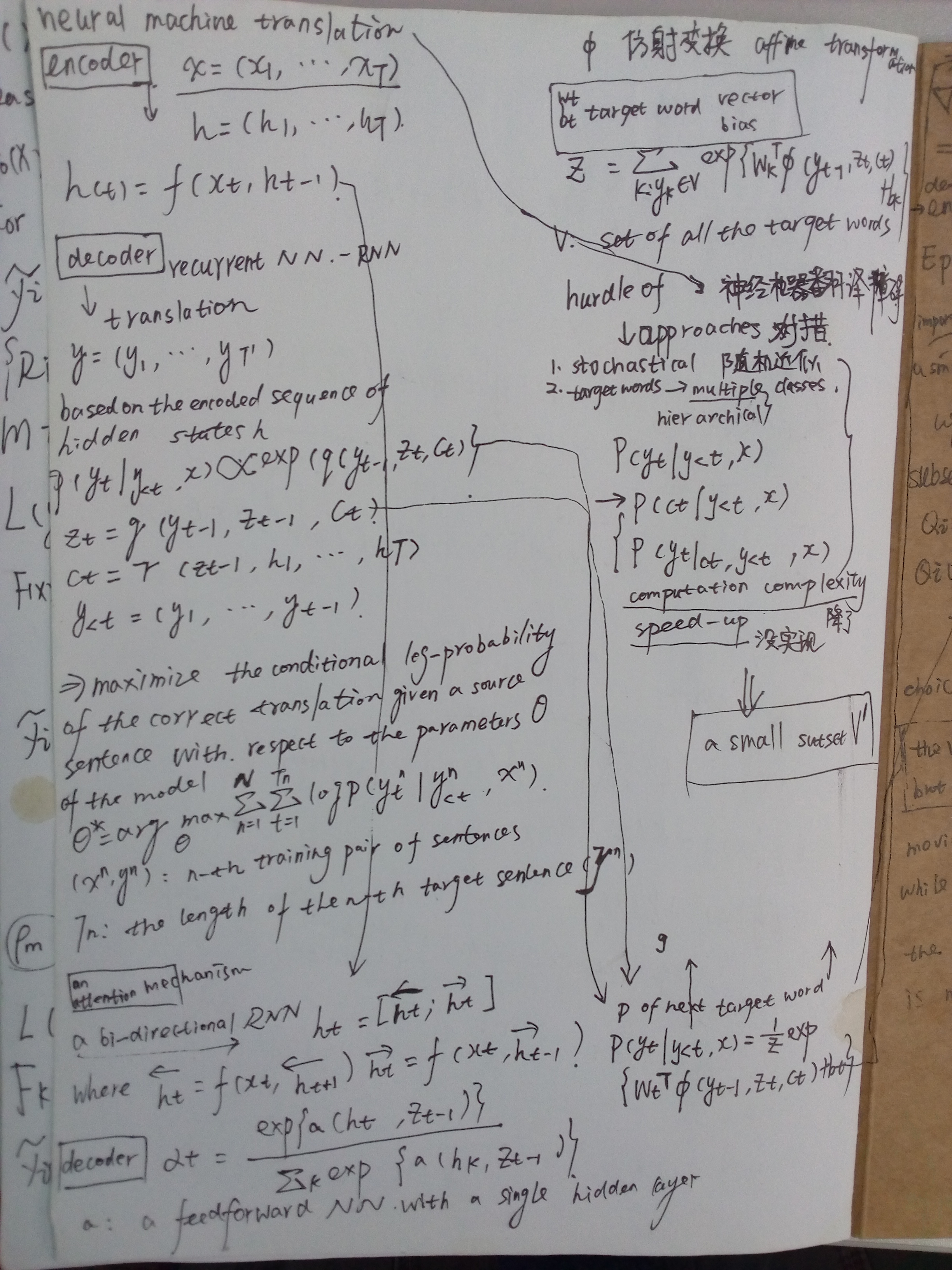

Neural machine translation, a recently proposed approach to machine translation based purely on neural networks, has shown promising results compared to the existing approaches such as phrase-based statistical machine translation. Despite its recent success, neural machine translation has its limitation in handling a larger vocabulary, as training complexity as well as decoding complexity increase proportionally to the number of target words. In this paper, we propose a method that allows us to use a very large target vocabulary without increasing training complexity, based on importance sampling. We show that decoding can be efficiently done even with the model having a very large target vocabulary by selecting only a small subset of the whole target vocabulary. The models trained by the proposed approach are empirically found to outperform the baseline models with a small vocabulary as well as the LSTM-based neural machine translation models. Furthermore, when we use the ensemble of a few models with very large target vocabularies, we achieve the state-of-the-art translation performance (measured by BLEU) on the English->German translation and almost as high performance as state-of-the-art English->French translation system.
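The decoding-time idea of "selecting only a small subset of the whole target vocabulary" can be sketched as follows. This is a hypothetical helper in the spirit of the paper's candidate list: the K most frequent target words plus the top K' dictionary translations of each source word, with the softmax normalised only over that subset. The function names, the toy dictionary and the frequency list are illustrative assumptions, not the paper's code or data.

```python
import math


def candidate_list(source_words, freq_ranked_targets, dictionary, k, k_prime):
    """Hypothetical target subset V' for decoding one source sentence."""
    cands = set(freq_ranked_targets[:k])  # K most frequent target words
    for w in source_words:
        # dictionary[w]: target words ranked by translation probability
        cands.update(dictionary.get(w, [])[:k_prime])
    return sorted(cands)


def restricted_softmax(score_fn, cands):
    # Normalise only over the subset V' instead of the whole vocabulary.
    logits = {y: score_fn(y) for y in cands}
    m = max(logits.values())
    z = sum(math.exp(v - m) for v in logits.values())
    return {y: math.exp(v - m) / z for y, v in logits.items()}


if __name__ == "__main__":
    freq = ["die", "der", "und", "in", "den"]  # toy German frequency ranking
    dic = {"the": ["die", "der", "das"], "house": ["Haus", "Gebäude", "Heim"]}
    v_prime = candidate_list(["the", "house"], freq, dic, k=3, k_prime=2)
    print(v_prime)  # ['Gebäude', 'Haus', 'der', 'die', 'und']
    probs = restricted_softmax(lambda y: 2.0 if y == "Haus" else 0.0, v_prime)
    print(max(probs, key=probs.get))  # Haus
```

Because the subset depends only on the source sentence, it is built once per sentence and every decoding step then costs O(|V'|) rather than O(|L|).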

