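Kafka single-node and cluster configuration and installation

I. Single node

1. Upload the Kafka installation package to the Linux system (CentOS 7 here).

2. Extract the archive and edit config/server.properties.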

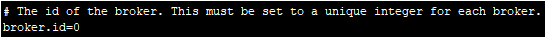
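The extraction part of step 2 might look like the following sketch. The archive location (`KAFKA_TGZ`) is a hypothetical placeholder, since the post does not give the file name; `/usr/local/soft` is the parent directory that appears in the startup log further down.

```shell
#!/usr/bin/env bash
# extract_archive ARCHIVE DEST: unpack a .tgz/.tar.gz archive into DEST.
extract_archive() {
  local archive="$1" dest="$2"
  mkdir -p "$dest" && tar -xzf "$archive" -C "$dest"
}

# Hypothetical archive path; point it at the file you actually uploaded.
KAFKA_TGZ="${KAFKA_TGZ:-$HOME/kafka.tgz}"
INSTALL_DIR="${INSTALL_DIR:-/usr/local/soft}"

if [ -f "$KAFKA_TGZ" ]; then
  extract_archive "$KAFKA_TGZ" "$INSTALL_DIR"
  ls "$INSTALL_DIR"
fi
```

The script does nothing when the archive is missing, so it is safe to run before the upload has finished.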

2.1 Configure broker.id

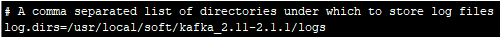

2.2 Configure log.dirs

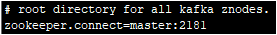

2.3 Configure zookeeper.connect

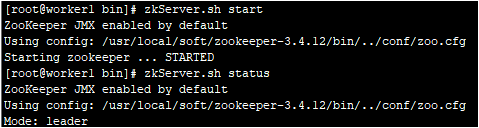
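Taken together, the three settings in 2.1 to 2.3 could be applied as in this sketch. The values are assumptions, not taken from the screenshots: `broker.id=0` is just a common choice for a first broker, the `log.dirs` path is hypothetical, and `master:2181` combines the host name seen in the startup log with ZooKeeper's default client port. The helper `set_prop` is also hypothetical and relies on GNU `sed -i`.

```shell
#!/usr/bin/env bash
# set_prop FILE KEY VALUE: replace an existing "KEY=..." line, or append one.
# Note: VALUE must not contain "|" or "&", which are special to this sed call.
set_prop() {
  local file="$1" key="$2" value="$3"
  if grep -q "^${key}=" "$file"; then
    sed -i "s|^${key}=.*|${key}=${value}|" "$file"
  else
    printf '%s=%s\n' "$key" "$value" >> "$file"
  fi
}

# Hypothetical path; run from the Kafka installation directory or override CONF.
CONF="${CONF:-config/server.properties}"

if [ -f "$CONF" ]; then
  set_prop "$CONF" broker.id 0                            # assumed id
  set_prop "$CONF" log.dirs /usr/local/soft/kafka/logs    # hypothetical path
  set_prop "$CONF" zookeeper.connect master:2181          # host from the log, default port
  grep -E '^(broker\.id|log\.dirs|zookeeper\.connect)=' "$CONF"
fi
```

Editing the file by hand works just as well; a helper like this is mainly useful when the same changes have to be repeated on several machines.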

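3. Start the ZooKeeper cluster

Note: when a ZooKeeper cluster is starting, the nodes started first may report "not running" because too few nodes are up to form a quorum. This is normal, and the message disappears once all nodes have been started.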
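Because the first nodes can report "not running" for a while, a small polling loop can be more convenient than re-running the status command by hand. The sketch below assumes that `zkServer.sh` is on the `PATH` and that `zkServer.sh status` exits with a non-zero code while the node is not serving; the `retry` helper and the attempt and delay values are illustrative only.

```shell
#!/usr/bin/env bash
# retry ATTEMPTS DELAY CMD...: run CMD until it succeeds or ATTEMPTS run out.
retry() {
  local attempts="$1" delay="$2" i
  shift 2
  for ((i = 1; i <= attempts; i++)); do
    "$@" && return 0
    # Pause between attempts, but not after the last one.
    if ((i < attempts)); then sleep "$delay"; fi
  done
  return 1
}

# Assumption: zkServer.sh is on PATH. Skipped silently when it is not installed.
if command -v zkServer.sh >/dev/null 2>&1; then
  zkServer.sh start
  retry 10 3 zkServer.sh status || echo "ZooKeeper still not serving; start the remaining nodes" >&2
fi
```

Run the same script on each node; the status check on the earlier nodes should start succeeding once enough of the others are up.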

3. Start the Kafka service

Run: ./kafka-server-start.sh ../config/server.properties &
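Starting the broker with a trailing `&` keeps it tied to the current terminal and prints the whole log to the screen. As an alternative sketch, `kafka-server-start.sh` also accepts a `-daemon` flag. The `KAFKA_HOME` path and the `run` dry-run helper below are assumptions made for illustration; by default the script only prints the commands it would execute.

```shell
#!/usr/bin/env bash
# Hypothetical install directory; set KAFKA_HOME to the real one.
KAFKA_HOME="${KAFKA_HOME:-/usr/local/soft/kafka}"

# Print the command when DRY_RUN=1 (the default here); execute it when DRY_RUN=0.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

# Start the broker in the background, detached from the terminal.
start_kafka() {
  run "$KAFKA_HOME/bin/kafka-server-start.sh" -daemon "$KAFKA_HOME/config/server.properties"
}

# Stop the broker again with the companion script.
stop_kafka() {
  run "$KAFKA_HOME/bin/kafka-server-stop.sh"
}

start_kafka
```

Set `DRY_RUN=0` to actually start the broker. In daemon mode the output should end up in files under the installation's logs directory instead of on the terminal.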

Startup log:

[root@master bin]# ./kafka-server-start.sh ../config/server.properties &

[]

[root@master bin]# [-- ::,] INFO Registered kafka:type=kafka.Log4jController MBean (kafka.utils.Log4jControllerRegistration$)

[-- ::,] INFO starting (kafka.server.KafkaServer)

[-- ::,] INFO Connecting to zookeeper on master: (kafka.server.KafkaServer)

[-- ::,] INFO [ZooKeeperClient] Initializing a new session to master:. (kafka.zookeeper.ZooKeeperClient)

[-- ::,] INFO Client environment:zookeeper.version=3.4.-2d71af4dbe22557fda74f9a9b4309b15a7487f03, built on // : GMT (org.apache.zookeeper.ZooKeeper)

[-- ::,] INFO Client environment:host.name=master (org.apache.zookeeper.ZooKeeper)

[-- ::,] INFO Client environment:java.version=1.8.0_172 (org.apache.zookeeper.ZooKeeper)

[-- ::,] INFO Client environment:java.vendor=Oracle Corporation (org.apache.zookeeper.ZooKeeper)

[-- ::,] INFO Client environment:java.home=/usr/local/soft/jdk1..0_172/jre (org.apache.zookeeper.ZooKeeper)

[-- ::,] INFO Client environment:java.class.path=.:/usr/local/soft/jdk1..0_172/jre/lib/rt.jar:/usr/local/soft/jdk1..0_172/lib/dt.jar:/usr/local/soft/jdk1..0_172/lib/tools.jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/activation-1.1..jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/aopalliance-repackaged-2.5.-b42.jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/argparse4j-0.7..jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/audience-annotations-0.5..jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/commons-lang3-3.8..jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/connect-api-2.1..jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/connect-basic-auth-extension-2.1..jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/connect-file-2.1..jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/connect-json-2.1..jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/connect-runtime-2.1..jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/connect-transforms-2.1..jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/guava-20.0.jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/hk2-api-2.5.-b42.jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/hk2-locator-2.5.-b42.jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/hk2-utils-2.5.-b42.jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/jackson-annotations-2.9..jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/jackson-core-2.9..jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/jackson-databind-2.9..jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/jackson-jaxrs-base-2.9..jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/jackson-jaxrs-json-provider-2.9..jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/jackson-module-jaxb-annotations-2.9..jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/javassist-3.22.-CR2.jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/javax.annotation-api-1.2.jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/javax.inject-.jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/javax.inject-2.5.-b42.jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/javax.servlet-api-3.1..jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/javax.ws.rs-api
-2.1..jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/javax.ws.rs-api-2.1.jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/jaxb-api-2.3..jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/jersey-client-2.27.jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/jersey-common-2.27.jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/jersey-container-servlet-2.27.jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/jersey-container-servlet-core-2.27.jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/jersey-hk2-2.27.jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/jersey-media-jaxb-2.27.jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/jersey-server-2.27.jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/jetty-client-9.4..v20180830.jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/jetty-continuation-9.4..v20180830.jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/jetty-http-9.4..v20180830.jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/jetty-io-9.4..v20180830.jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/jetty-security-9.4..v20180830.jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/jetty-server-9.4..v20180830.jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/jetty-servlet-9.4..v20180830.jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/jetty-servlets-9.4..v20180830.jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/jetty-util-9.4..v20180830.jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/jopt-simple-5.0..jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/kafka_2.-2.1..jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/kafka_2.-2.1.-sources.jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/kafka-clients-2.1..jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/kafka-log4j-appender-2.1..jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/kafka-streams-2.1..jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/kafka-streams-examples-2.1..jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/kafka-streams-scala_2.-2.1..jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/kafka-streams-test-utils-2.1..jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/kafka-tools-2.1..jar:/usr/local/soft/kafka_2.-2.1./bi
n/../libs/log4j-1.2..jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/lz4-java-1.5..jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/maven-artifact-3.6..jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/metrics-core-2.2..jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/osgi-resource-locator-1.0..jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/plexus-utils-3.1..jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/reflections-0.9..jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/rocksdbjni-5.14..jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/scala-library-2.11..jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/scala-logging_2.-3.9..jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/scala-reflect-2.11..jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/slf4j-api-1.7..jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/slf4j-log4j12-1.7..jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/snappy-java-1.1.7.2.jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/validation-api-1.1..Final.jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/zkclient-0.11.jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/zookeeper-3.4..jar:/usr/local/soft/kafka_2.-2.1./bin/../libs/zstd-jni-1.3.-.jar (org.apache.zookeeper.ZooKeeper)

[-- ::,] INFO Client environment:java.library.path=/usr/java/packages/lib/amd64:/usr/lib64:/lib64:/lib:/usr/lib (org.apache.zookeeper.ZooKeeper)

[-- ::,] INFO Client environment:java.io.tmpdir=/tmp (org.apache.zookeeper.ZooKeeper)

[-- ::,] INFO Client environment:java.compiler=<NA> (org.apache.zookeeper.ZooKeeper)

[-- ::,] INFO Client environment:os.name=Linux (org.apache.zookeeper.ZooKeeper)

[-- ::,] INFO Client environment:os.arch=amd64 (org.apache.zookeeper.ZooKeeper)

[-- ::,] INFO Client environment:os.version=3.10.-.el7.x86_64 (org.apache.zookeeper.ZooKeeper)

[-- ::,] INFO Client environment:user.name=root (org.apache.zookeeper.ZooKeeper)

[-- ::,] INFO Client environment:user.home=/root (org.apache.zookeeper.ZooKeeper)

[-- ::,] INFO Client environment:user.dir=/usr/local/soft/kafka_2.-2.1./bin (org.apache.zookeeper.ZooKeeper)

[-- ::,] INFO Initiating client connection, connectString=master: sessionTimeout= watcher=kafka.zookeeper.ZooKeeperClient$ZooKeeperClientWatcher$@351d00c0 (org.apache.zookeeper.ZooKeeper)

[-- ::,] INFO Opening socket connection to server master/192.168.245.136:. Will not attempt to authenticate using SASL (unknown error) (org.apache.zookeeper.ClientCnxn)

[-- ::,] INFO [ZooKeeperClient] Waiting until connected. (kafka.zookeeper.ZooKeeperClient)

[-- ::,] INFO Socket connection established to master/192.168.245.136:, initiating session (org.apache.zookeeper.ClientCnxn)

[-- ::,] INFO Session establishment complete on server master/192.168.245.136:, sessionid = 0x1000023437d0000, negotiated timeout = (org.apache.zookeeper.ClientCnxn)

[-- ::,] INFO [ZooKeeperClient] Connected. (kafka.zookeeper.ZooKeeperClient)

[-- ::,] INFO Cluster ID = 1AkrnNRhRiW9PWHA77R9lA (kafka.server.KafkaServer)

[-- ::,] WARN No meta.properties file under dir /usr/local/soft/kafka_2.-2.1./logs/meta.properties (kafka.server.BrokerMetadataCheckpoint)

[-- ::,] INFO KafkaConfig values:

advertised.host.name = null

advertised.listeners = null

advertised.port = null

alter.config.policy.class.name = null

alter.log.dirs.replication.quota.window.num =

alter.log.dirs.replication.quota.window.size.seconds =

authorizer.class.name =

auto.create.topics.enable = true

auto.leader.rebalance.enable = true

background.threads =

broker.id =

broker.id.generation.enable = true

broker.rack = null

client.quota.callback.class = null

compression.type = producer

connection.failed.authentication.delay.ms =

connections.max.idle.ms =

controlled.shutdown.enable = true

controlled.shutdown.max.retries =

controlled.shutdown.retry.backoff.ms =

controller.socket.timeout.ms =

create.topic.policy.class.name = null

default.replication.factor =

delegation.token.expiry.check.interval.ms =

delegation.token.expiry.time.ms =

delegation.token.master.key = null

delegation.token.max.lifetime.ms =

delete.records.purgatory.purge.interval.requests =

delete.topic.enable = true

fetch.purgatory.purge.interval.requests =

group.initial.rebalance.delay.ms =

group.max.session.timeout.ms =

group.min.session.timeout.ms =

host.name =

inter.broker.listener.name = null

inter.broker.protocol.version = 2.1-IV2

kafka.metrics.polling.interval.secs =

kafka.metrics.reporters = []

leader.imbalance.check.interval.seconds =

leader.imbalance.per.broker.percentage =

listener.security.protocol.map = PLAINTEXT:PLAINTEXT,SSL:SSL,SASL_PLAINTEXT:SASL_PLAINTEXT,SASL_SSL:SASL_SSL

listeners = null

log.cleaner.backoff.ms =

log.cleaner.dedupe.buffer.size =

log.cleaner.delete.retention.ms =

log.cleaner.enable = true

log.cleaner.io.buffer.load.factor = 0.9

log.cleaner.io.buffer.size =

log.cleaner.io.max.bytes.per.second = 1.7976931348623157E308

log.cleaner.min.cleanable.ratio = 0.5

log.cleaner.min.compaction.lag.ms =

log.cleaner.threads =

log.cleanup.policy = [delete]

log.dir = /tmp/kafka-logs

log.dirs = /usr/local/soft/kafka_2.-2.1./logs

log.flush.interval.messages =

log.flush.interval.ms = null

log.flush.offset.checkpoint.interval.ms =

log.flush.scheduler.interval.ms =

log.flush.start.offset.checkpoint.interval.ms =

log.index.interval.bytes =

log.index.size.max.bytes =

log.message.downconversion.enable = true

log.message.format.version = 2.1-IV2

log.message.timestamp.difference.max.ms =

log.message.timestamp.type = CreateTime

log.preallocate = false

log.retention.bytes = -

log.retention.check.interval.ms =

log.retention.hours =

log.retention.minutes = null

log.retention.ms = null

log.roll.hours =

log.roll.jitter.hours =

log.roll.jitter.ms = null

log.roll.ms = null

log.segment.bytes =

log.segment.delete.delay.ms =

max.connections.per.ip =

max.connections.per.ip.overrides =

max.incremental.fetch.session.cache.slots =

message.max.bytes =

metric.reporters = []

metrics.num.samples =

metrics.recording.level = INFO

metrics.sample.window.ms =

min.insync.replicas =

num.io.threads =

num.network.threads =

num.partitions =

num.recovery.threads.per.data.dir =

num.replica.alter.log.dirs.threads = null

num.replica.fetchers =

offset.metadata.max.bytes =

offsets.commit.required.acks = -

offsets.commit.timeout.ms =

offsets.load.buffer.size =

offsets.retention.check.interval.ms =

offsets.retention.minutes =

offsets.topic.compression.codec =

offsets.topic.num.partitions =

offsets.topic.replication.factor =

offsets.topic.segment.bytes =

password.encoder.cipher.algorithm = AES/CBC/PKCS5Padding

password.encoder.iterations =

password.encoder.key.length =

password.encoder.keyfactory.algorithm = null

password.encoder.old.secret = null

password.encoder.secret = null

port =

principal.builder.class = null

producer.purgatory.purge.interval.requests =

queued.max.request.bytes = -

queued.max.requests =

quota.consumer.default =

quota.producer.default =

quota.window.num =

quota.window.size.seconds =

replica.fetch.backoff.ms =

replica.fetch.max.bytes =

replica.fetch.min.bytes =

replica.fetch.response.max.bytes =

replica.fetch.wait.max.ms =

replica.high.watermark.checkpoint.interval.ms =

replica.lag.time.max.ms =

replica.socket.receive.buffer.bytes =

replica.socket.timeout.ms =

replication.quota.window.num =

replication.quota.window.size.seconds =

request.timeout.ms =

reserved.broker.max.id =

sasl.client.callback.handler.class = null

sasl.enabled.mechanisms = [GSSAPI]

sasl.jaas.config = null

sasl.kerberos.kinit.cmd = /usr/bin/kinit

sasl.kerberos.min.time.before.relogin =

sasl.kerberos.principal.to.local.rules = [DEFAULT]

sasl.kerberos.service.name = null

sasl.kerberos.ticket.renew.jitter = 0.05

sasl.kerberos.ticket.renew.window.factor = 0.8

sasl.login.callback.handler.class = null

sasl.login.class = null

sasl.login.refresh.buffer.seconds =

sasl.login.refresh.min.period.seconds =

sasl.login.refresh.window.factor = 0.8

sasl.login.refresh.window.jitter = 0.05

sasl.mechanism.inter.broker.protocol = GSSAPI

sasl.server.callback.handler.class = null

security.inter.broker.protocol = PLAINTEXT

socket.receive.buffer.bytes =

socket.request.max.bytes =

socket.send.buffer.bytes =

ssl.cipher.suites = []

ssl.client.auth = none

ssl.enabled.protocols = [TLSv1., TLSv1., TLSv1]

ssl.endpoint.identification.algorithm = https

ssl.key.password = null

ssl.keymanager.algorithm = SunX509

ssl.keystore.location = null

ssl.keystore.password = null

ssl.keystore.type = JKS

ssl.protocol = TLS

ssl.provider = null

ssl.secure.random.implementation = null

ssl.trustmanager.algorithm = PKIX

ssl.truststore.location = null

ssl.truststore.password = null

ssl.truststore.type = JKS

transaction.abort.timed.out.transaction.cleanup.interval.ms =

transaction.max.timeout.ms =

transaction.remove.expired.transaction.cleanup.interval.ms =

transaction.state.log.load.buffer.size =

transaction.state.log.min.isr =

transaction.state.log.num.partitions =

transaction.state.log.replication.factor =

transaction.state.log.segment.bytes =

transactional.id.expiration.ms =

unclean.leader.election.enable = false

zookeeper.connect = master:

zookeeper.connection.timeout.ms =

zookeeper.max.in.flight.requests =

zookeeper.session.timeout.ms =

zookeeper.set.acl = false

zookeeper.sync.time.ms =

(kafka.server.KafkaConfig)

[-- ::,] INFO [ThrottledChannelReaper-Fetch]: Starting (kafka.server.ClientQuotaManager$ThrottledChannelReaper)

[-- ::,] INFO [ThrottledChannelReaper-Produce]: Starting (kafka.server.ClientQuotaManager$ThrottledChannelReaper)

[-- ::,] INFO [ThrottledChannelReaper-Request]: Starting (kafka.server.ClientQuotaManager$ThrottledChannelReaper)

[-- ::,] INFO Loading logs. (kafka.log.LogManager)

[-- ::,] INFO Logs loading complete in ms. (kafka.log.LogManager)

[-- ::,] INFO Starting log cleanup with a period of ms. (kafka.log.LogManager)

[-- ::,] INFO Starting log flusher with a default period of ms. (kafka.log.LogManager)

[-- ::,] INFO Awaiting socket connections on 0.0.0.0:. (kafka.network.Acceptor)

[-- ::,] INFO [SocketServer brokerId=] Started acceptor threads (kafka.network.SocketServer)

[-- ::,] INFO [ExpirationReaper--Produce]: Starting (kafka.server.DelayedOperationPurgatory$ExpiredOperationReaper)

[-- ::,] INFO [ExpirationReaper--Fetch]: Starting (kafka.server.DelayedOperationPurgatory$ExpiredOperationReaper)

[-- ::,] INFO [ExpirationReaper--DeleteRecords]: Starting (kafka.server.DelayedOperationPurgatory$ExpiredOperationReaper)

[-- ::,] INFO [LogDirFailureHandler]: Starting (kafka.server.ReplicaManager$LogDirFailureHandler)

[-- ::,] INFO Creating /brokers/ids/ (is it secure? false) (kafka.zk.KafkaZkClient)

[-- ::,] INFO Result of znode creation at /brokers/ids/ is: OK (kafka.zk.KafkaZkClient)

[-- ::,] INFO Registered broker at path /brokers/ids/ with addresses: ArrayBuffer(EndPoint(master,,ListenerName(PLAINTEXT),PLAINTEXT)) (kafka.zk.KafkaZkClient)

[-- ::,] WARN No meta.properties file under dir /usr/local/soft/kafka_2.-2.1./logs/meta.properties (kafka.server.BrokerMetadataCheckpoint)

[-- ::,] INFO [ExpirationReaper--topic]: Starting (kafka.server.DelayedOperationPurgatory$ExpiredOperationReaper)

[-- ::,] INFO [ExpirationReaper--Heartbeat]: Starting (kafka.server.DelayedOperationPurgatory$ExpiredOperationReaper)

[-- ::,] INFO [ExpirationReaper--Rebalance]: Starting (kafka.server.DelayedOperationPurgatory$ExpiredOperationReaper)

[-- ::,] INFO Successfully created /controller_epoch with initial epoch (kafka.zk.KafkaZkClient)

[-- ::,] INFO [GroupCoordinator ]: Starting up. (kafka.coordinator.group.GroupCoordinator)

[-- ::,] INFO [GroupCoordinator ]: Startup complete. (kafka.coordinator.group.GroupCoordinator)

[-- ::,] INFO [GroupMetadataManager brokerId=] Removed expired offsets in milliseconds. (kafka.coordinator.group.GroupMetadataManager)

[-- ::,] INFO [ProducerId Manager ]: Acquired new producerId block (brokerId:,blockStartProducerId:,blockEndProducerId:) by writing to Zk with path version (kafka.coordinator.transaction.ProducerIdManager)

[-- ::,] INFO [TransactionCoordinator id=] Starting up. (kafka.coordinator.transaction.TransactionCoordinator)

[-- ::,] INFO [TransactionCoordinator id=] Startup complete. (kafka.coordinator.transaction.TransactionCoordinator)

[-- ::,] INFO [Transaction Marker Channel Manager ]: Starting (kafka.coordinator.transaction.TransactionMarkerChannelManager)

[-- ::,] INFO [/config/changes-event-process-thread]: Starting (kafka.common.ZkNodeChangeNotificationListener$ChangeEventProcessThread)

[-- ::,] INFO [SocketServer brokerId=] Started processors for acceptors (kafka.network.SocketServer)

[-- ::,] INFO Kafka version : 2.1. (org.apache.kafka.common.utils.AppInfoParser)

[-- ::,] INFO Kafka commitId : 21234bee31165527 (org.apache.kafka.common.utils.AppInfoParser)

[-- ::,] INFO [KafkaServer id=] started (kafka.server.KafkaServer)

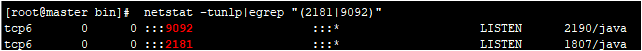

Port check:

Here 9092 is the port Kafka listens on, and 2181 is the port used by ZooKeeper.
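One way to check both ports from a script is sketched below. It uses bash's `/dev/tcp` pseudo-device, so it needs bash rather than plain `sh`, plus the `timeout` command from coreutils. The `port_open` helper and the `KAFKA_HOST` variable are hypothetical names introduced for this example.

```shell
#!/usr/bin/env bash
# port_open HOST PORT: succeed if a TCP connection can be opened within 2 seconds.
port_open() {
  # Inside the child bash, $0 is the host and $1 is the port.
  timeout 2 bash -c 'exec 3<>"/dev/tcp/$0/$1"' "$1" "$2" 2>/dev/null
}

# Hypothetical default; use the broker's host name (e.g. master) when checking remotely.
KAFKA_HOST="${KAFKA_HOST:-localhost}"

for port in 9092 2181; do
  if port_open "$KAFKA_HOST" "$port"; then
    echo "port $port: listening"
  else
    echo "port $port: not reachable"
  fi
done
```

On the server itself, listing the listening sockets with a tool such as `ss -lnt` or `netstat` gives the same information.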

4. Single-node connectivity test

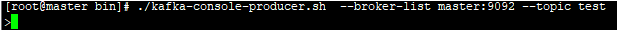

4.1 Start the producer

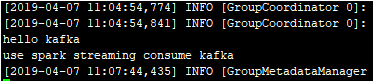

4.2 Start the consumer

4.3 Test

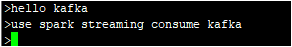
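The commands behind steps 4.1 to 4.3 are only visible in the screenshots, so the following is a sketch of what such a test typically looks like with the console tools shipped with Kafka 2.1. The topic name `test` and the `KAFKA_HOME` path are assumptions; the host `master` and ports 2181 and 9092 follow the startup log and the port check. By default the script only prints the commands.

```shell
#!/usr/bin/env bash
KAFKA_HOME="${KAFKA_HOME:-/usr/local/soft/kafka}"   # hypothetical install directory
ZK="${ZK:-master:2181}"                             # ZooKeeper address
BROKER="${BROKER:-master:9092}"                     # Kafka listener
TOPIC="${TOPIC:-test}"                              # hypothetical topic name

# Print the command when DRY_RUN=1 (the default here); execute it when DRY_RUN=0.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

# Create a single-partition, unreplicated topic for the test.
create_topic() {
  run "$KAFKA_HOME/bin/kafka-topics.sh" --create --zookeeper "$ZK" \
    --replication-factor 1 --partitions 1 --topic "$TOPIC"
}

# Console producer: every line typed on stdin becomes one message.
start_producer() {
  run "$KAFKA_HOME/bin/kafka-console-producer.sh" --broker-list "$BROKER" --topic "$TOPIC"
}

# Console consumer: prints all messages of the topic, starting from the oldest.
start_consumer() {
  run "$KAFKA_HOME/bin/kafka-console-consumer.sh" --bootstrap-server "$BROKER" \
    --topic "$TOPIC" --from-beginning
}

create_topic
start_producer
start_consumer
```

For a real test, set `DRY_RUN=0` and run the producer and the consumer in two separate terminals, since both commands block until interrupted. Lines typed into the producer should then appear in the consumer.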

The producer sends data:

The consumer receives data:

II. Kafka cluster setup

To be continued...
