HAProxy + Keepalived + PXC: a load-balanced, highly available PXC environment

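About HAProxy

HAProxy is a reverse proxy server that supports hot standby and virtual hosts, yet is simple to configure. It has solid server health checking: when a backend server it proxies fails, HAProxy removes that server automatically and adds it back once it has recovered. Its configuration is built from frontend and backend sections: a frontend matches rules against the content of any HTTP request header and then routes the request to the relevant backend.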

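About Keepalived

Keepalived is a high-availability solution for web services based on the VRRP protocol, and it can be used to avoid a single point of failure. A web service runs Keepalived on at least two servers, one as the master (MASTER) and one as the backup (BACKUP), which appear to the outside as a single virtual IP. The master sends periodic advertisement messages to the backup. When the backup stops receiving them, that is, when the master is down, the backup takes over the virtual IP and continues to serve requests, which keeps the service highly available.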

Environment:

OS: CentOS release 6.6 x86_64

MySQL version: Percona-XtraDB-Cluster-5.6.22-25.8

PXC nodes (three): 192.168.79.3:3306, 192.168.79.4:3306, 192.168.79.5:3306

HAProxy nodes: 192.168.79.128, 192.168.79.5

HAProxy version: 1.5.2

Keepalived version: keepalived-1.2.13-4.el6.x86_64

VIP: 192.168.79.166

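I. Building the PXC environment

Configure passwordless SSH login between the servers

Use ssh-keygen so that the four hosts can log in to one another without a password, and make sure the four hosts can ping each other.

1) Run on every host:

# ssh-keygen -t rsa

2) Collect every host's public key (id_rsa.pub) into /root/.ssh/authorized_keys on one host, then copy that authorized_keys file to all the other hosts: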

cat /root/.ssh/id_rsa.pub >> /root/.ssh/authorized_keys

ssh 192.168.79.4 cat /root/.ssh/id_rsa.pub >> /root/.ssh/authorized_keys

ssh 192.168.79.5 cat /root/.ssh/id_rsa.pub >> /root/.ssh/authorized_keys

scp /root/.ssh/authorized_keys 192.168.79.4:/root/.ssh/authorized_keys

scp /root/.ssh/authorized_keys 192.168.79.5:/root/.ssh/authorized_keys

Test: ssh xxxxx date

Install the packages PXC requires on all nodes

# rpm -ivh perl-DBD-MySQL-4.013-3.el6.x86_64.rpm

# rpm -ivh perl-IO-Socket-SSL-1.31-2.el6.noarch.rpm

# rpm -ivh nc-1.84-22.el6.x86_64.rpm

# rpm -ivh socat-1.7.2.4-1.el6.rf.x86_64.rpm

# rpm -ivh mysql-libs-5.1.73-3.el6_5.x86_64.rpm

# rpm -ivh zlib-1.2.3-29.el6.x86_64.rpm

# rpm -ivh zlib-devel-1.2.3-29.el6.x86_64.rpm

# rpm -ivh percona-release-0.1-3.noarch.rpm

# rpm -ivh perl-Time-HiRes-1.9721-136.el6.x86_64.rpm

# rpm -ivh percona-xtrabackup-2.2.9-5067.el6.x86_64.rpm

PS: install EPEL to get a network yum repository; yum localinstall xx.rpm resolves the dependencies of local packages.

Create the mysql user and group on all nodes

# groupadd mysql

# useradd mysql -g mysql -s /sbin/nologin -d /opt/mysql

Unpack the Percona-XtraDB-Cluster binary tarball. The installation files live in /opt/mysql, and a symbolic link named mysql points at the unpacked directory, which makes the mysql directory easier to work with.

# cd /opt/mysql

# tar -zxvf Percona-XtraDB-Cluster-5.6.22-rel72.0-25.8.978.Linux.x86_64.tar.gz

# cd /usr/local

# ln -s /opt/mysql/Percona-XtraDB-Cluster-5.6.22-rel72.0-25.8.978.Linux.x86_64 mysql

# chown -R mysql:mysql /usr/local/mysql/

# ln -sf /usr/lib64/libssl.so.10 /usr/lib64/libssl.so.6

# ln -sf /usr/lib64/libcrypto.so.10 /usr/lib64/libcrypto.so.6

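Create the MySQL data directories and set their ownership

# mkdir -p /data/mysql/mysql_3306/{data,logs,tmp}

# chown -R mysql:mysql /data/mysql/

# chown -R mysql:mysql /usr/local/mysql/

Add the environment variable so that the mysql command can be found

# echo 'export PATH=$PATH:/usr/local/mysql/bin' >> /etc/profile

# source /etc/profile

The same line also goes into /etc/bashrc. This matters when installing MHA, because Perl only picks up /root/.bashrc or /etc/bashrc:

# echo 'export PATH=$PATH:/usr/local/mysql/bin' >> /etc/bashrc

Key my.cnf parameters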

###############################

# Percona XtraDB Cluster

default_storage_engine = InnoDB

#innodb_locks_unsafe_for_binlog = 1

innodb_autoinc_lock_mode = 2

wsrep_cluster_name = pxc_taotao # name of the cluster

wsrep_cluster_address = gcomm://192.168.79.3,192.168.79.4,192.168.79.5 # IPs of all nodes in the cluster

wsrep_node_address = 192.168.79.3 # IP of the current node

wsrep_provider = /usr/local/mysql/lib/libgalera_smm.so

#wsrep_provider_options="gcache.size = 1G;debug = yes"

wsrep_provider_options="gcache.size = 1G;"

#wsrep_sst_method = rsync # for very large data sets (terabytes and up)

wsrep_sst_method = xtrabackup-v2 # for data sets of roughly 100-200 GB

wsrep_sst_auth = sst:taotao

#wsrep_sst_donor = # host name of the node to sync data from

###############################

scp /etc/my.cnf 192.168.79.4:/etc/my.cnf

scp /etc/my.cnf 192.168.79.5:/etc/my.cnf

Note: two parameters must be changed on each machine:

wsrep_node_address: the IP address of the current cluster node

server-id: a unique identifier for each node
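The two per-node values can be produced from the node's IP instead of being edited by hand after each scp. A minimal sketch, assuming (this is an illustration, not the author's method) that server-id is taken from the last octet of the address; node_overrides is a hypothetical helper name.

```shell
# Hypothetical helper: print the two settings that must differ on each node.
# Assumption: server-id is derived from the last octet of the node's IPv4 address.
node_overrides() {
    local ip="$1"
    local last_octet="${ip##*.}"   # strip everything up to the final dot
    printf 'wsrep_node_address = %s\n' "$ip"
    printf 'server-id = %s\n' "$last_octet"
}

node_overrides 192.168.79.4
```

The printed lines would then replace the corresponding entries in the copied /etc/my.cnf on that node.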

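Initialize the database on the first node, start the first cluster node, and configure the backup user

1) Initialize the database from the basedir on the first node:

# /usr/local/mysql/scripts/mysql_install_db --datadir=/data/mysql/mysql_3306/data --basedir=/usr/local/mysql

2) Start the first node of the cluster: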

# cp /usr/local/mysql/support-files/mysql.server /etc/init.d/mysqld

# /etc/init.d/mysqld bootstrap-pxc

Bootstrapping PXC (Percona XtraDB Cluster)Starting MySQL (Percona XtraDB Cluster)................[ OK ]

# ps -ef |grep mysqld

root 8114 1 0 13:11 pts/1 00:00:00 /bin/sh /usr/local/mysql/bin/mysqld_safe --datadir=/data/mysql/mysql_3306/data --pid-file=/data/mysql/mysql_3306/data/node1.localdomain.pid --wsrep-new-cluster

mysql 9326 8114 28 13:11 pts/1 00:00:15 /usr/local/mysql/bin/mysqld --basedir=/usr/local/mysql --datadir=/data/mysql/mysql_3306/data --plugin-dir=/usr/local/mysql/lib/mysql/plugin --user=mysql --wsrep-provider=/usr/local/mysql/lib/libgalera_smm.so --wsrep-new-cluster --log-error=/data/mysql/mysql_3306/logs/error.log --pid-file=/data/mysql/mysql_3306/data/node1.localdomain.pid --socket=/tmp/mysql.sock --port=3306 --wsrep_start_position=00000000-0000-0000-0000-000000000000:-1

root 9435 6397 2 13:12 pts/1 00:00:00 grep mysqld

3) Configure the backup user

# mysql

delete from mysql.user where user != 'root' or host != 'localhost';

truncate mysql.db;

drop database test;

-- this single account is enough; it is used by the local innobackupex

grant all privileges on *.* to 'sst'@'localhost' identified by 'taotao';

flush privileges;

Starting the other cluster nodes:

The other nodes do not need to initialize a database; their data is pulled from the first node.

# cp /usr/local/mysql/support-files/mysql.server /etc/init.d/mysqld

# /etc/init.d/mysqld start

Check the cluster status:

> show global status like 'wsrep%';
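The wsrep status output is long, and only a few rows decide whether a node is usable. A rough sketch of a filter, assuming the rows arrive as "name, tab, value" pairs (the format mysql prints with -N -B); wsrep_healthy is a hypothetical helper, and the three variables it checks are a common but not exhaustive choice.

```shell
# Hypothetical helper: read "name<TAB>value" rows on stdin and succeed only
# if the node reports a Primary component, Synced state and wsrep_ready = ON.
wsrep_healthy() {
    awk '
        $1 == "wsrep_cluster_status"      { status = $2 }
        $1 == "wsrep_local_state_comment" { state = $2 }
        $1 == "wsrep_ready"               { ready = $2 }
        END { exit !(status == "Primary" && state == "Synced" && ready == "ON") }
    '
}

# Example with canned rows instead of a live server.
printf 'wsrep_cluster_status\tPrimary\nwsrep_local_state_comment\tSynced\nwsrep_ready\tON\n' \
    | wsrep_healthy && echo "node is synced"
```

Against a running node the input could come from mysql -N -B -e "show global status like 'wsrep%'" piped into the function.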

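II. HAProxy load balancing

With PXC installed, HAProxy distributes client connections across the database nodes.

The following installation and configuration steps are performed on 192.168.79.128 and 192.168.79.5; they are identical on both servers.

1. Install HAProxy (if it is installed on a PXC MySQL node, watch out for port conflicts)

# tar -zxvf haproxy-1.5.2.tar.gz

# cd haproxy-1.5.2

# make TARGET=linux2628

# make install

PS: the default install location is /usr/local/sbin/; PREFIX can be used to choose a different installation path.

It can also be installed directly with yum: yum install -y haproxy

2. Configure HAProxy on the HAProxy servers

1) /etc/haproxy/haproxy.cfg is configured as follows:

# mkdir /etc/haproxy

# cp examples/haproxy.cfg /etc/haproxy/

# cat /etc/haproxy/haproxy.cfg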

global

log 127.0.0.1 local0

log 127.0.0.1 local1 notice

maxconn 4096

#ulimit-n 10240

#chroot /usr/share/haproxy

uid 99

gid 99

daemon

#nbproc

#pidfile /var/run/haproxy/haproxy.pid

#stats socket /var/run/haproxy/haproxy.sock level operator

#debug

#quiet

defaults

log global

mode http

option tcplog

option dontlognull

retries 3

option redispatch

maxconn 2000

timeout connect 50000

timeout client 50000

timeout server 50000

frontend stats-front

bind *:8088

mode http

default_backend stats-back

frontend pxc-front

bind *:3307

mode tcp

default_backend pxc-back

frontend pxc-onenode-front

bind *:3308

mode tcp

default_backend pxc-onenode-back

backend stats-back

mode http

balance roundrobin

stats uri /haproxy/stats

stats auth admin:admin

backend pxc-back

mode tcp

balance leastconn

option httpchk

server taotao 192.168.79.3:3306 check port 9200 inter 12000 rise 3 fall 3

server candidate 192.168.79.4:3306 check port 9200 inter 12000 rise 3 fall 3

server slave 192.168.79.5:3306 check port 9200 inter 12000 rise 3 fall 3

backend pxc-onenode-back

mode tcp

balance leastconn

option httpchk

server taotao 192.168.79.3:3306 check port 9200 inter 12000 rise 3 fall 3

server candidate 192.168.79.4:3306 check port 9200 inter 12000 rise 3 fall 3 backup

server slave 192.168.79.5:3306 check port 9200 inter 12000 rise 3 fall 3 backup

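2) Configure HAProxy logging:

To save the I/O cost of writing logs, HAProxy produces no log output by default after installation. The following steps turn logging on.

# rpm -qa |grep rsyslog

rsyslog-5.8.10-8.el6.x86_64

# rpm -ql rsyslog |grep conf$

# vim /etc/rsyslog.conf

...........

# HAProxy sends its logs to 127.0.0.1 over UDP, so rsyslog must listen on UDP port 514: uncomment the next two lines

$ModLoad imudp

$UDPServerRun 514

.........

# the facility must match the one used in the log lines of haproxy.cfg

local0.* /var/log/haproxy.log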

# service rsyslog restart

Shutting down system logger: [ OK ]

Starting system logger: [ OK ]

# service rsyslog status

rsyslogd (pid 11437) is running...

3. Install the MySQL health-check script on every PXC node (run these steps on each PXC node)

1) Copy the scripts

# cp /usr/local/mysql/bin/clustercheck /usr/bin/

# cp /usr/local/mysql/xinetd.d/mysqlchk /etc/xinetd.d/

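PS: both clustercheck and the mysqlchk xinetd script are used with their default values, unmodified.

2) Create the MySQL user for the health check (on any one node):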

> grant process on *.* to 'clustercheckuser'@'localhost' identified by 'clustercheckpassword!';

> flush privileges;

PS: to use a user name and password other than the clustercheck defaults, edit the MYSQL_USERNAME and MYSQL_PASSWORD values in the clustercheck script.

3) Add the mysqlchk service port to /etc/services:

# echo 'mysqlchk 9200/tcp # mysqlchk' >> /etc/services
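Because the echo above appends unconditionally, running the step twice leaves a duplicate line. A small sketch of an append that is safe to repeat; add_service_entry is a hypothetical helper, and the file path is a parameter so it can be tried on a scratch file before touching /etc/services.

```shell
# Hypothetical helper: append a service entry only if the name is not already present.
add_service_entry() {
    local file="$1"
    local name="$2"
    local port_proto="$3"
    if ! grep -q "^${name}[[:space:]]" "$file"; then
        printf '%s\t%s\t# %s\n' "$name" "$port_proto" "$name" >> "$file"
    fi
}

scratch=$(mktemp)
add_service_entry "$scratch" mysqlchk 9200/tcp
add_service_entry "$scratch" mysqlchk 9200/tcp   # second call adds nothing
cat "$scratch"
```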

4) Install the xinetd service, which manages the MySQL health-check script as a daemon

# yum -y install xinetd

# /etc/init.d/xinetd restart

Stopping xinetd: [FAILED]

Starting xinetd: [ OK ]

# chkconfig --level 2345 xinetd on

# chkconfig --list |grep xinetd

xinetd 0:off 1:off 2:on 3:on 4:on 5:on 6:off

Test the check script:

# clustercheck

HTTP/1.1 200 OK

Content-Type: text/plain

Connection: close

Content-Length: 40

Percona XtraDB Cluster Node is synced.

# curl -I 192.168.79.5:9200

HTTP/1.1 503 Service Unavailable

Content-Type: text/plain

Connection: close

Content-Length: 57

# cp /usr/local/mysql/bin/mysql /usr/bin/

# curl -I 192.168.79.5:9200

HTTP/1.1 200 OK

Content-Type: text/plain

Connection: close

Content-Length: 40

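PS: the status must be 200, otherwise the check fails. A non-200 status may mean that the MySQL service is unhealthy, or that the environment is wrong and the check cannot run the mysql client (as with the 503 above, which went away once the mysql binary was copied to /usr/bin).

HAProxy detects whether a MySQL server is alive through port 9200: an HTTP check against that port tells HAProxy the state of each PXC node.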
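The decision made from that check can be illustrated in a few lines. This is only a sketch of the rule, not HAProxy's implementation: with option httpchk a 2xx or 3xx response counts as success and anything else as failure. check_state is a hypothetical helper that takes the first line of the response.

```shell
# Hypothetical helper: map the status line of the check response to up/down,
# treating 2xx and 3xx as success the way an HTTP health check does.
check_state() {
    local status_line="$1"
    local code
    code=$(printf '%s\n' "$status_line" | awk '{ print $2 }')
    case "$code" in
        2??|3??) echo up ;;
        *)       echo down ;;
    esac
}

check_state "HTTP/1.1 200 OK"
check_state "HTTP/1.1 503 Service Unavailable"
```

Against a live node the argument could be the first line of curl -sI 192.168.79.5:9200.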

Repeat the steps above on the other nodes of the MySQL cluster and make sure every node returns status 200, as follows:

# curl -I 192.168.79.4:9200

HTTP/1.1 200 OK

Content-Type: text/plain

Connection: close

Content-Length: 40

# curl -I 192.168.79.5:9200

HTTP/1.1 200 OK

Content-Type: text/plain

Connection: close

Content-Length: 40

4. Starting and stopping HAProxy

Start the haproxy service on the HAProxy servers:

# /usr/local/sbin/haproxy -f /etc/haproxy/haproxy.cfg

Stop:

# pkill haproxy

# /usr/local/sbin/haproxy -f /etc/haproxy/haproxy.cfg

# ps -ef |grep haproxy

nobody 5751 1 0 21:18 ? 00:00:00 /usr/local/sbin/haproxy -f /etc/haproxy/haproxy.cfg

root 5754 2458 0 21:19 pts/0 00:00:00 grep haproxy

# netstat -nlap |grep haproxy

tcp 0 0 0.0.0.0:8088 0.0.0.0:* LISTEN 5751/haproxy

tcp 0 0 0.0.0.0:3307 0.0.0.0:* LISTEN 5751/haproxy

tcp 0 0 0.0.0.0:3308 0.0.0.0:* LISTEN 5751/haproxy

udp 0 0 0.0.0.0:45891 0.0.0.0:* 5751/haproxy

# cp /usr/local/sbin/haproxy /usr/sbin/haproxy

# cd /opt/soft/haproxy-1.5.3/examples

# cp haproxy.init /etc/init.d/haproxy

# chmod +x /etc/init.d/haproxy

5. Testing HAProxy

Create a test account on the MySQL PXC cluster:

> grant all privileges on *.* to 'taotao'@'%' identified by 'taotao';

#for i in `seq 1 1000`; do mysql -h 192.168.79.128 -P3307 -utaotao -ptaotao -e "select @@hostname;"; done

#for i in `seq 1 1000`; do mysql -h 192.168.79.128 -P3308 -utaotao -ptaotao -e "select @@hostname;"; done

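Note: it is actually enough to allow access only from the HAProxy hosts' IPs. Clients reach the MySQL cluster through the VIP, and HAProxy, following its balancing policy, opens the connection to the backend MySQL server from its own IP.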

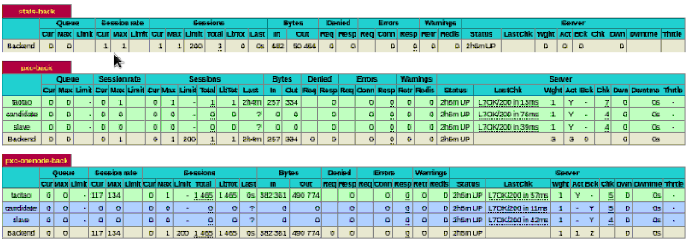
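Restricting the test account to the HAProxy hosts, as the note suggests, amounts to one grant per host instead of a single grant to '%'. A small sketch that only prints the statements; grants_for_hosts is a hypothetical helper, and the account name and password are simply reused from the test account above.

```shell
# Hypothetical helper: print one GRANT statement per allowed client host.
grants_for_hosts() {
    local user="$1"
    local password="$2"
    shift 2
    local host
    for host in "$@"; do
        printf "grant all privileges on *.* to '%s'@'%s' identified by '%s';\n" \
            "$user" "$host" "$password"
    done
}

grants_for_hosts taotao taotao 192.168.79.128 192.168.79.5
```

The output can be reviewed and then pasted into a mysql session on any PXC node.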

View the HAProxy status page:

http://192.168.79.128:8088/haproxy/stats

Log in with the user and password from the stats auth line: admin / admin

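III. Making HAProxy highly available with Keepalived

Next, install and configure Keepalived on 192.168.79.128 and 192.168.79.5 so that a single HAProxy failure does not cut off access to the database. The two servers exchange heartbeats through Keepalived; if one of them fails, the other takes over, and the whole process is transparent to users.

Install and configure on 192.168.79.128 and 192.168.79.5:

# yum install keepalived -y

# yum install MySQL-python -y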

# mv /etc/keepalived/keepalived.conf /etc/keepalived/keepalived.conf.bak

# vim /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

router_id haproxy_ha # identifier of this keepalived router

}

vrrp_script chk_haproxy {

script "/etc/keepalived/check_haproxy.sh"

interval 2

weight 2

}

vrrp_instance VI_HAPROXY {

state MASTER # BACKUP on the standby machine

#nopreempt # non-preemptive mode

interface eth0

virtual_router_id 51 # must be the same on both machines; valid range 1-255

priority 100 # the standby can use 90

advert_int 1

authentication {

auth_type PASS # password authentication; keep the password to 8 characters or fewer

auth_pass 1111

}

track_script {

chk_haproxy

}

virtual_ipaddress {

192.168.79.166/24

}

}

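To keep a recovered node from taking the MASTER role back after a failure, and so avoid disturbing the MySQL service, set state to BACKUP on both machines and enable nopreempt; keepalived only honors nopreempt when the initial state is BACKUP. With the configuration exactly as shown (state MASTER, nopreempt commented out), the higher-priority node preempts and reclaims the VIP when it comes back, which is the behavior seen in the test further down.

Because LVS is not used for load balancing, no virtual_server section is needed.

# vim /etc/keepalived/check_haproxy.sh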

#!/bin/bash

A=`ps -C haproxy --no-header |wc -l`

if [ $A -eq 0 ];then

/usr/local/sbin/haproxy -f /etc/haproxy/haproxy.cfg

sleep 3

if [ `ps -C haproxy --no-header |wc -l` -eq 0 ];then

/etc/init.d/keepalived stop

fi

fi

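# chmod 755 /etc/keepalived/check_haproxy.sh

Start keepalived

# service keepalived start

# chkconfig --level 2345 keepalived on

# tail -f /var/log/messages    # keepalived logs here

PS: start keepalived first on the machine you want to serve traffic, and confirm that the VIP is bound on that machine; keepalived there enters the MASTER state and holds the VIP.

Keepalived has three states: 1) BACKUP 2) MASTER 3) FAULT, entered when an attempt to become MASTER does not succeed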

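HAProxy high-availability test:

As check_haproxy.sh shows, stopping only the haproxy service is not enough for a test: the script restarts it automatically, and if the restart succeeds keepalived is not stopped and the VIP does not move to the standby HAProxy machine. The test therefore has to stop keepalived or shut down the server. When the master goes down, the keepalived instance in BACKUP state takes over automatically, and when the master comes back the VIP moves back to it automatically.

Stop the keepalived service on one of the machines with /etc/init.d/keepalived stop. After the 1 s heartbeat check, the VIP switches to the other machine automatically; the transition can be followed in /var/log/messages.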
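How long the switch takes follows from the VRRPv2 timers defined in RFC 3768: Skew_Time = (256 - priority) / 256 and Master_Down_Interval = 3 * advert_int + Skew_Time. On a clean stop the master announces priority 0 and the backup waits only Skew_Time; after a crash it waits the full Master_Down_Interval. A worked sketch for the backup node, assuming priority 90 and advert_int 1 as suggested in the configuration above; vrrp_timers is a hypothetical helper, and the track script's weight may shift the effective priority slightly.

```shell
# Worked example of the VRRPv2 timers (RFC 3768):
#   Skew_Time            = (256 - priority) / 256
#   Master_Down_Interval = 3 * advert_int + Skew_Time
vrrp_timers() {
    awk -v prio="$1" -v adv="$2" 'BEGIN {
        skew = (256 - prio) / 256
        printf "skew=%.3f master_down=%.3f\n", skew, 3 * adv + skew
    }'
}

vrrp_timers 90 1    # backup node: priority 90, advert_int 1
```

With these inputs the backup takes over well under a second after a clean stop and after roughly 3.6 s if the master disappears without notice.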

192.168.79.128:

# ip a

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000

link/ether 00:0c:29:7c:e0:ce brd ff:ff:ff:ff:ff:ff

inet 192.168.79.128/24 brd 192.168.79.255 scope global eth0

inet 192.168.79.166/32 scope global eth0

192.168.79.5:

# ip a

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000

link/ether 00:0c:29:d2:83:87 brd ff:ff:ff:ff:ff:ff

inet 192.168.79.5/24 brd 192.168.79.255 scope global eth0

192.168.79.128:

# /etc/init.d/keepalived stop

Stopping keepalived: [ OK ]

# ip a

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000

link/ether 00:0c:29:7c:e0:ce brd ff:ff:ff:ff:ff:ff

inet 192.168.79.128/24 brd 192.168.79.255 scope global eth0

inet6 fe80::20c:29ff:fe7c:e0ce/64 scope link

valid_lft forever preferred_lft forever

192.168.79.5:

# ip a

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000

link/ether 00:0c:29:d2:83:87 brd ff:ff:ff:ff:ff:ff

inet 192.168.79.5/24 brd 192.168.79.255 scope global eth0

inet 192.168.79.166/32 scope global eth0

inet6 fe80::20c:29ff:fed2:8387/64 scope link

valid_lft forever preferred_lft forever

192.168.79.128:

# /etc/init.d/keepalived start

Starting keepalived: [ OK ]

# ip a

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000

link/ether 00:0c:29:7c:e0:ce brd ff:ff:ff:ff:ff:ff

inet 192.168.79.128/24 brd 192.168.79.255 scope global eth0

inet 192.168.79.166/32 scope global eth0

192.168.79.5:

# ip a

2: eth0: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000

link/ether 00:0c:29:d2:83:87 brd ff:ff:ff:ff:ff:ff

inet 192.168.79.5/24 brd 192.168.79.255 scope global eth0
