Wu Yuxiong: Python Deep Learning and Practice (16)

import struct

import numpy as np

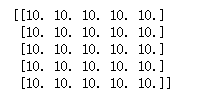

import matplotlib.pyplot as plt

dateMat = np.ones((7, 7))
kernel = np.array([[2, 1, 1], [3, 0, 1], [1, 1, 0]])

def convolve(dateMat, kernel):
    # Slide the kernel over the matrix with no padding; the kernel is not flipped.
    m, n = dateMat.shape
    km, kn = kernel.shape
    newMat = np.ones((m - km + 1, n - kn + 1))
    tempMat = np.ones((km, kn))
    for row in range(m - km + 1):
        for col in range(n - kn + 1):
            for m_k in range(km):
                for n_k in range(kn):
                    tempMat[m_k, n_k] = dateMat[row + m_k, col + n_k] * kernel[m_k, n_k]
            newMat[row, col] = np.sum(tempMat)
    return newMat

newMat = convolve(dateMat, kernel)
print(newMat)
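As a cross-check on the loop-based `convolve` above, the same sliding-window sum can be written without explicit loops. This is only a sketch: it assumes NumPy 1.20 or later for `sliding_window_view`, and the name `convolve_vectorized` is introduced here for illustration and is not part of the original post.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

def convolve_vectorized(data, kernel):
    # windows has shape (m - km + 1, n - kn + 1, km, kn)
    windows = sliding_window_view(data, kernel.shape)
    # Multiply every window by the kernel elementwise and sum over the window axes.
    return np.einsum('ijkl,kl->ij', windows, kernel)

data = np.ones((7, 7))
kernel = np.array([[2, 1, 1], [3, 0, 1], [1, 1, 0]])
result = convolve_vectorized(data, kernel)
print(result.shape)
print(result)
```

With an all-ones input, every output entry equals the sum of the kernel entries (10 here), which makes this input a convenient sanity check for either implementation.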

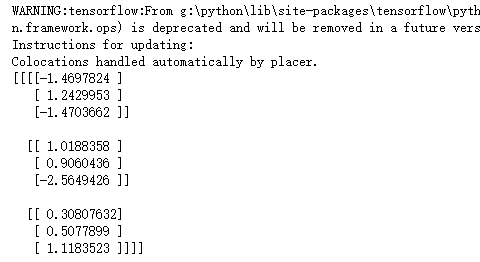

import tensorflow as tf  # TensorFlow 1.x API

input1 = tf.Variable(tf.random_normal([1, 3, 3, 1]))
filter1 = tf.Variable(tf.ones([1, 1, 1, 1]))
init = tf.global_variables_initializer()

with tf.Session() as sess:
    sess.run(init)
    conv2d = tf.nn.conv2d(input1, filter1, strides=[1, 1, 1, 1], padding='VALID')
    print(sess.run(conv2d))

import tensorflow as tf

input1 = tf.Variable(tf.random_normal([1, 5, 5, 5]))
filter1 = tf.Variable(tf.ones([3, 3, 5, 1]))
init = tf.global_variables_initializer()

with tf.Session() as sess:
    sess.run(init)
    conv2d = tf.nn.conv2d(input1, filter1, strides=[1, 1, 1, 1], padding='VALID')
    print(sess.run(conv2d))

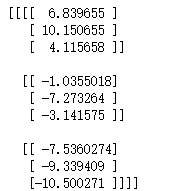

import tensorflow as tf

input1 = tf.Variable(tf.random_normal([1, 5, 5, 5]))
filter1 = tf.Variable(tf.ones([3, 3, 5, 1]))
init = tf.global_variables_initializer()

with tf.Session() as sess:
    sess.run(init)
    conv2d = tf.nn.conv2d(input1, filter1, strides=[1, 1, 1, 1], padding='SAME')
    print(sess.run(conv2d))

import tensorflow as tf

input1 = tf.Variable(tf.random_normal([1, 5, 5, 5]))
filter1 = tf.Variable(tf.ones([3, 3, 5, 1]))
init = tf.global_variables_initializer()

with tf.Session() as sess:
    sess.run(init)
    conv2d = tf.nn.conv2d(input1, filter1, strides=[1, 2, 2, 1], padding='SAME')
    print(sess.run(conv2d))
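The four `tf.nn.conv2d` calls above differ only in kernel size, stride, and padding mode, so it may help to predict the spatial size of each result before running them. The helper below is a small sketch of the output-size rules TensorFlow documents for `'VALID'` and `'SAME'` padding; the function name `conv_output_size` is hypothetical and not part of TensorFlow.

```python
import math

def conv_output_size(input_size, kernel_size, stride, padding):
    """Output size of a convolution along one spatial dimension."""
    if padding == 'VALID':
        # No padding: only positions where the kernel fits entirely.
        return math.ceil((input_size - kernel_size + 1) / stride)
    if padding == 'SAME':
        # Zero padding is added so the size depends on the stride only.
        return math.ceil(input_size / stride)
    raise ValueError("padding must be 'VALID' or 'SAME'")

sizes = [
    conv_output_size(3, 1, 1, 'VALID'),  # 3x3 input, 1x1 kernel
    conv_output_size(5, 3, 1, 'VALID'),  # 5x5 input, 3x3 kernel
    conv_output_size(5, 3, 1, 'SAME'),   # same input, SAME padding
    conv_output_size(5, 3, 2, 'SAME'),   # same input, stride 2
]
print(sizes)
```

The same rule gives 256 for a 512-pixel side with stride 2 and `'SAME'` padding.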

import cv2
import numpy as np
import tensorflow as tf

img = cv2.imread("D:\\F\\TensorFlow_deep_learn\\data\\lena.jpg")
img = np.array(img, dtype=np.float32)
x_image = tf.reshape(img, [1, 512, 512, 3])
filter1 = tf.Variable(tf.ones([7, 7, 3, 1]))
init = tf.global_variables_initializer()

with tf.Session() as sess:
    sess.run(init)
    res = tf.nn.conv2d(x_image, filter1, strides=[1, 2, 2, 1], padding='SAME')
    res_image = sess.run(tf.reshape(res, [256, 256])) / 128 + 1
    cv2.imshow("lover", res_image.astype('uint8'))
    cv2.waitKey()

import cv2
import numpy as np
import tensorflow as tf

img = cv2.imread("D:\\F\\TensorFlow_deep_learn\\data\\lena.jpg")
img = np.array(img, dtype=np.float32)
x_image = tf.reshape(img, [1, 512, 512, 3])
filter1 = tf.Variable(tf.ones([11, 11, 3, 1]))
init = tf.global_variables_initializer()

with tf.Session() as sess:
    sess.run(init)
    res = tf.nn.conv2d(x_image, filter1, strides=[1, 2, 2, 1], padding='SAME')
    res_image = sess.run(tf.reshape(res, [256, 256])) / 128 + 1
    cv2.imshow("lover", res_image.astype('uint8'))
    cv2.waitKey()

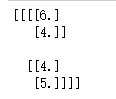

import tensorflow as tf

data = tf.constant([
    [[3.0, 2.0, 3.0, 4.0],
     [2.0, 6.0, 2.0, 4.0],
     [1.0, 2.0, 1.0, 5.0],
     [4.0, 3.0, 2.0, 1.0]]
])
data = tf.reshape(data, [1, 4, 4, 1])
maxPooling = tf.nn.max_pool(data, [1, 2, 2, 1], [1, 2, 2, 1], padding='VALID')

with tf.Session() as sess:
    print(sess.run(maxPooling))
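The 2x2, stride-2 max pooling above can be reproduced by hand in NumPy, which makes it easy to see which entry survives from each window. This is an illustrative sketch only: `max_pool_2x2` is a name introduced here, and it assumes a 2-D input whose height and width are both even.

```python
import numpy as np

def max_pool_2x2(matrix):
    # Split the matrix into non-overlapping 2x2 blocks:
    # element [i, a, j, b] is row 2*i + a, column 2*j + b of the input.
    h, w = matrix.shape
    blocks = matrix.reshape(h // 2, 2, w // 2, 2)
    # Take the maximum inside each block.
    return blocks.max(axis=(1, 3))

data = np.array([
    [3.0, 2.0, 3.0, 4.0],
    [2.0, 6.0, 2.0, 4.0],
    [1.0, 2.0, 1.0, 5.0],
    [4.0, 3.0, 2.0, 1.0],
])
pooled = max_pool_2x2(data)
print(pooled)
```

The four windows have maxima 6, 4, 4, and 5, so the pooled result is a 2x2 matrix holding those values.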

import cv2
import numpy as np
import tensorflow as tf

img = cv2.imread("D:\\F\\TensorFlow_deep_learn\\data\\lena.jpg")
img = np.array(img, dtype=np.float32)
x_image = tf.reshape(img, [1, 512, 512, 3])
filter1 = tf.Variable(tf.ones([7, 7, 3, 1]))
init = tf.global_variables_initializer()

with tf.Session() as sess:
    sess.run(init)
    res = tf.nn.conv2d(x_image, filter1, strides=[1, 2, 2, 1], padding='SAME')
    res = tf.nn.max_pool(res, [1, 2, 2, 1], [1, 2, 2, 1], padding='VALID')
    res_image = sess.run(tf.reshape(res, [128, 128])) / 128 + 1
    cv2.imshow("lover", res_image.astype('uint8'))
    cv2.waitKey()

import time
import tensorflow as tf
from tensorflow.examples.tutorials.mnist import input_data

# Placeholders for the input images and their class labels
x = tf.placeholder('float', [None, 784])
y_ = tf.placeholder('float', [None, 10])

# Reshape the flat input into 28x28 single-channel images
x_image = tf.reshape(x, [-1, 28, 28, 1])

# First convolutional layer: initialize the kernel weights and biases;
# 5x5 kernels, one input channel, 6 different kernels
filter1 = tf.Variable(tf.truncated_normal([5, 5, 1, 6]))
bias1 = tf.Variable(tf.truncated_normal([6]))
conv1 = tf.nn.conv2d(x_image, filter1, strides=[1, 1, 1, 1], padding='SAME')
h_conv1 = tf.nn.sigmoid(conv1 + bias1)

maxPool2 = tf.nn.max_pool(h_conv1, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME')

filter2 = tf.Variable(tf.truncated_normal([5, 5, 6, 16]))
bias2 = tf.Variable(tf.truncated_normal([16]))
conv2 = tf.nn.conv2d(maxPool2, filter2, strides=[1, 1, 1, 1], padding='SAME')
h_conv2 = tf.nn.sigmoid(conv2 + bias2)

maxPool3 = tf.nn.max_pool(h_conv2, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME')

filter3 = tf.Variable(tf.truncated_normal([5, 5, 16, 120]))
bias3 = tf.Variable(tf.truncated_normal([120]))
conv3 = tf.nn.conv2d(maxPool3, filter3, strides=[1, 1, 1, 1], padding='SAME')
h_conv3 = tf.nn.sigmoid(conv3 + bias3)

# Fully connected layer
# Weights
W_fc1 = tf.Variable(tf.truncated_normal([7 * 7 * 120, 80]))
# Biases
b_fc1 = tf.Variable(tf.truncated_normal([80]))
# Flatten the convolution output
h_pool2_flat = tf.reshape(h_conv3, [-1, 7 * 7 * 120])
# Fully connected computation with a sigmoid activation
h_fc1 = tf.nn.sigmoid(tf.matmul(h_pool2_flat, W_fc1) + b_fc1)

# Output layer: softmax for multi-class classification
W_fc2 = tf.Variable(tf.truncated_normal([80, 10]))
b_fc2 = tf.Variable(tf.truncated_normal([10]))
y_conv = tf.nn.softmax(tf.matmul(h_fc1, W_fc2) + b_fc2)

# Loss function
cross_entropy = -tf.reduce_sum(y_ * tf.log(y_conv))

# Adjust the parameters with the gradient descent optimizer
train_step = tf.train.GradientDescentOptimizer(0.001).minimize(cross_entropy)

sess = tf.InteractiveSession()

# Accuracy
correct_prediction = tf.equal(tf.argmax(y_conv, 1), tf.argmax(y_, 1))
accuracy = tf.reduce_mean(tf.cast(correct_prediction, "float"))

# Initialize all variables
sess.run(tf.global_variables_initializer())

# Load the MNIST data
mnist_data_set = input_data.read_data_sets("D:\\F\\TensorFlow_deep_learn\\MNIST\\", one_hot=True)

# Train
start_time = time.time()

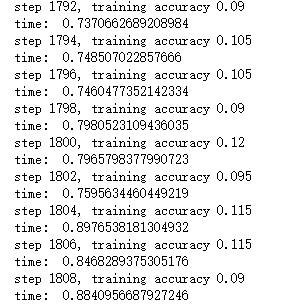

for i in range(20000):
    # Fetch a batch of training data
    batch_xs, batch_ys = mnist_data_set.train.next_batch(200)
    # Every 2 iterations, evaluate on the current training batch and print the accuracy
    if i % 2 == 0:
        train_accuracy = accuracy.eval(feed_dict={x: batch_xs, y_: batch_ys})
        print("step %d, training accuracy %g" % (i, train_accuracy))
        # Time elapsed since the previous report
        end_time = time.time()
        print('time: ', (end_time - start_time))
        start_time = end_time
    # Run one training step
    train_step.run(feed_dict={x: batch_xs, y_: batch_ys})

# Close the session
sess.close()

import time
import tensorflow as tf
from tensorflow.examples.tutorials.mnist import input_data

# Placeholders for the input images and their class labels
x = tf.placeholder('float', [None, 784])
y_ = tf.placeholder('float', [None, 10])

# Reshape the flat input into 28x28 single-channel images
x_image = tf.reshape(x, [-1, 28, 28, 1])

# First convolutional layer: initialize the kernel weights and biases;
# 5x5 kernels, one input channel, 6 different kernels
filter1 = tf.Variable(tf.truncated_normal([5, 5, 1, 6]))
bias1 = tf.Variable(tf.truncated_normal([6]))
conv1 = tf.nn.conv2d(x_image, filter1, strides=[1, 1, 1, 1], padding='SAME')
h_conv1 = tf.nn.relu(conv1 + bias1)

maxPool2 = tf.nn.max_pool(h_conv1, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME')

filter2 = tf.Variable(tf.truncated_normal([5, 5, 6, 16]))
bias2 = tf.Variable(tf.truncated_normal([16]))
conv2 = tf.nn.conv2d(maxPool2, filter2, strides=[1, 1, 1, 1], padding='SAME')
h_conv2 = tf.nn.relu(conv2 + bias2)

maxPool3 = tf.nn.max_pool(h_conv2, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME')

filter3 = tf.Variable(tf.truncated_normal([5, 5, 16, 120]))
bias3 = tf.Variable(tf.truncated_normal([120]))
conv3 = tf.nn.conv2d(maxPool3, filter3, strides=[1, 1, 1, 1], padding='SAME')
h_conv3 = tf.nn.relu(conv3 + bias3)

# Fully connected layer
# Weights
W_fc1 = tf.Variable(tf.truncated_normal([7 * 7 * 120, 80]))
# Biases
b_fc1 = tf.Variable(tf.truncated_normal([80]))
# Flatten the convolution output
h_pool2_flat = tf.reshape(h_conv3, [-1, 7 * 7 * 120])
# Fully connected computation with a ReLU activation
h_fc1 = tf.nn.relu(tf.matmul(h_pool2_flat, W_fc1) + b_fc1)

# Output layer: softmax for multi-class classification
W_fc2 = tf.Variable(tf.truncated_normal([80, 10]))
b_fc2 = tf.Variable(tf.truncated_normal([10]))
y_conv = tf.nn.softmax(tf.matmul(h_fc1, W_fc2) + b_fc2)

# Loss function
cross_entropy = -tf.reduce_sum(y_ * tf.log(y_conv))

# Adjust the parameters with the gradient descent optimizer
train_step = tf.train.GradientDescentOptimizer(0.001).minimize(cross_entropy)

sess = tf.InteractiveSession()

# Accuracy
correct_prediction = tf.equal(tf.argmax(y_conv, 1), tf.argmax(y_, 1))
accuracy = tf.reduce_mean(tf.cast(correct_prediction, "float"))

# Initialize all variables
sess.run(tf.global_variables_initializer())

# Load the MNIST data
mnist_data_set = input_data.read_data_sets("D:\\F\\TensorFlow_deep_learn\\MNIST\\", one_hot=True)

# Train
start_time = time.time()

for i in range(20000):
    # Fetch a batch of training data
    batch_xs, batch_ys = mnist_data_set.train.next_batch(200)
    # Every 2 iterations, evaluate on the current training batch and print the accuracy
    if i % 2 == 0:
        train_accuracy = accuracy.eval(feed_dict={x: batch_xs, y_: batch_ys})
        print("step %d, training accuracy %g" % (i, train_accuracy))
        # Time elapsed since the previous report
        end_time = time.time()
        print('time: ', (end_time - start_time))
        start_time = end_time
    # Run one training step
    train_step.run(feed_dict={x: batch_xs, y_: batch_ys})

# Close the session
sess.close()
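One caveat with the loss `-tf.reduce_sum(y_ * tf.log(y_conv))` used in both networks: it takes the logarithm of a softmax output, which can underflow to zero and turn the loss into `inf` or `NaN`, particularly with unscaled `truncated_normal` weights. The NumPy sketch below shows the same summed cross-entropy computed directly from the logits with the log-sum-exp shift. The function name `softmax_cross_entropy` and the sample values are made up for illustration; in TensorFlow 1.x, `tf.nn.softmax_cross_entropy_with_logits` serves the same purpose.

```python
import numpy as np

def softmax_cross_entropy(logits, labels):
    # Subtract the row maximum so that exp() cannot overflow.
    shifted = logits - logits.max(axis=1, keepdims=True)
    # log(softmax(z)) = z - log(sum(exp(z))), computed on the shifted logits.
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))
    # Sum over classes and over the batch, like -reduce_sum(y_ * log(y_conv)).
    return -(labels * log_probs).sum()

# Extreme logits for which a naive log(softmax(...)) would evaluate log(0).
logits = np.array([[1000.0, 0.0, -1000.0],
                   [0.0, 0.0, 0.0]])
labels = np.array([[0.0, 1.0, 0.0],
                   [1.0, 0.0, 0.0]])
loss = softmax_cross_entropy(logits, labels)
print(loss)
```

The result stays finite even though the first row's target class has a softmax probability that underflows to zero in floating point.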
