word2vec之tensorflow(skip-gram)实现

关于word2vec的理解,推荐文章https://www.cnblogs.com/guoyaohua/p/9240336.html

代码参考https://github.com/eecrazy/word2vec_chinese_annotation

我在其基础上修改了错误的部分,并添加了一些注释。

代码在jupyter notebook下运行。

from __future__ import print_function #表示不管哪个python版本,使用最新的print语法

import collections

import math

import numpy as np

import random

import tensorflow as tf

import zipfile

from matplotlib import pylab

from sklearn.manifold import TSNE

%matplotlib inline

下载text8.zip文件,这个文件包含了大量单词。官方地址为http://mattmahoney.net/dc/text8.zip

filename='text8.zip'

def read_data(filename):

"""Extract the first file enclosed in a zip file as a list of words"""

with zipfile.ZipFile(filename) as f:

# 里面只有一个文件text8,包含了多个单词

# f.read返回字节,tf.compat.as_str将字节转为字符

# data包含了所有单词

data = tf.compat.as_str(f.read(f.namelist()[0])).split()

return data #words里面包含了所有的单词

words = read_data(filename)

print('Data size %d' % len(words))

创建正-反词典,并将单词转换为词典索引,这里词汇表取为50000,仍然有400000多的单词标记为unknown。

#词汇表大小

vocabulary_size = 50000 def build_dataset(words):

# 表示未知,即不在词汇表里的单词,注意这里用的是列表形式而非元组形式,因为后面未知的数量需要赋值

count = [['UNK', -1]]

count.extend(collections.Counter(words).most_common(vocabulary_size - 1)) #词-索引哈希

dictionary = dict()

for word, _ in count:

# 每增加一个-->len+1,索引从0开始

dictionary[word] = len(dictionary) #用索引表示的整个text8文本

data = list()

unk_count = 0

for word in words:

if word in dictionary:

index = dictionary[word]

else:

index = 0 # dictionary['UNK']

unk_count = unk_count + 1

data.append(index) count[0][1] = unk_count

# 索引-词哈希

reverse_dictionary = dict(zip(dictionary.values(), dictionary.keys()))

return data, count, dictionary, reverse_dictionary data, count, dictionary, reverse_dictionary = build_dataset(words)

print('Most common words (+UNK)', count[:5])

print('Sample data', data[:10])

# 删除,减少内存

del words # Hint to reduce memory.

生成batch的函数

data_index = 0 # num_skips表示在两侧窗口内总共取多少个词,数量可以小于2*skip_window

# span窗口为[ skip_window target skip_window ]

# num_skips=2*skip_window

def generate_batch(batch_size, num_skips, skip_window):

global data_index #这里两个断言

assert batch_size % num_skips == 0

assert num_skips <= 2 * skip_window #初始化batch和labels,都是整形

batch = np.ndarray(shape=(batch_size), dtype=np.int32)

labels = np.ndarray(shape=(batch_size, 1), dtype=np.int32) #注意labels的形状 span = 2 * skip_window + 1 # [ skip_window target skip_window ]

#buffer这个队列太有用了,不断地保存span个单词在里面,然后不断往后滑动,而且buffer[skip_window]就是中心词

buffer = collections.deque(maxlen=span) for _ in range(span):

buffer.append(data[data_index])

data_index = (data_index + 1) % len(data) #需要多少个中心词,因为一个target对应num_skips个的单词,即一个目标单词w在num_skips=2时形成2个样本(w,left_w),(w,right_w)

# 这样描述了目标单词w的上下文

center_words_count=batch_size // num_skips

for i in range(center_words_count):

#skip_window在buffer里正好是中心词所在位置

target = skip_window # target label at the center of the buffer

targets_to_avoid = [ skip_window ]

for j in range(num_skips):

# 选取span窗口中不包含target的,且不包含已选过的

target=random.choice([i for i in range(0,span) if i not in targets_to_avoid])

targets_to_avoid.append(target)

# batch中重复num_skips次

batch[i * num_skips + j] = buffer[skip_window]

# 同一个target对应num_skips个上下文单词

labels[i * num_skips + j, 0] = buffer[target]

# buffer滑动一格

buffer.append(data[data_index])

data_index = (data_index + 1) % len(data)

return batch, labels # 打印前8个单词

print('data:', [reverse_dictionary[di] for di in data[:10]])

for num_skips, skip_window in [(2, 1), (4, 2)]:

data_index = 0

batch, labels = generate_batch(batch_size=16, num_skips=num_skips, skip_window=skip_window)

print('\nwith num_skips = %d and skip_window = %d:' % (num_skips, skip_window))

print(' batch:', [reverse_dictionary[bi] for bi in batch])

print(' labels:', [reverse_dictionary[li] for li in labels.reshape(16)])

我这里打印的结果为:可以看到batch和label的关系为,一个target单词多次对应于其上下文的单词

data: ['anarchism', 'originated', 'as', 'a', 'term', 'of', 'abuse', 'first', 'used', 'against'] with num_skips = 2 and skip_window = 1:

batch: ['originated', 'originated', 'as', 'as', 'a', 'a', 'term', 'term', 'of', 'of', 'abuse', 'abuse', 'first', 'first', 'used', 'used']

labels: ['as', 'anarchism', 'originated', 'a', 'term', 'as', 'of', 'a', 'term', 'abuse', 'of', 'first', 'abuse', 'used', 'against', 'first'] with num_skips = 4 and skip_window = 2:

batch: ['as', 'as', 'as', 'as', 'a', 'a', 'a', 'a', 'term', 'term', 'term', 'term', 'of', 'of', 'of', 'of']

labels: ['anarchism', 'originated', 'a', 'term', 'originated', 'of', 'as', 'term', 'of', 'a', 'abuse', 'as', 'a', 'term', 'first', 'abuse']

构建model,定义loss:

batch_size = 128

embedding_size = 128 # Dimension of the embedding vector.

skip_window = 1 # How many words to consider left and right.

num_skips = 2 # How many times to reuse an input to generate a label. valid_size = 16 # Random set of words to evaluate similarity on.

valid_window = 100 # Only pick dev samples in the head of the distribution.

#随机挑选一组单词作为验证集,valid_examples也就是下面的valid_dataset,是一个一维的ndarray

valid_examples = np.array(random.sample(range(valid_window), valid_size)) #trick:负采样数值

num_sampled = 64 # Number of negative examples to sample. graph = tf.Graph() with graph.as_default(), tf.device('/cpu:0'): # 训练集和标签,以及验证集(注意验证集是一个常量集合)

train_dataset = tf.placeholder(tf.int32, shape=[batch_size])

train_labels = tf.placeholder(tf.int32, shape=[batch_size, 1])

valid_dataset = tf.constant(valid_examples, dtype=tf.int32) # 定义Embedding层,初始化。

embeddings = tf.Variable(tf.random_uniform([vocabulary_size, embedding_size], -1.0, 1.0))

softmax_weights = tf.Variable(

tf.truncated_normal([vocabulary_size, embedding_size],stddev=1.0 / math.sqrt(embedding_size)))

softmax_biases = tf.Variable(tf.zeros([vocabulary_size])) # Model.

# train_dataset通过embeddings变为稠密向量,train_dataset是一个一维的ndarray

embed = tf.nn.embedding_lookup(embeddings, train_dataset) # Compute the softmax loss, using a sample of the negative labels each time.

# 计算损失,tf.reduce_mean和tf.nn.sampled_softmax_loss

loss = tf.reduce_mean(tf.nn.sampled_softmax_loss(weights=softmax_weights, biases=softmax_biases, inputs=embed,

labels=train_labels, num_sampled=num_sampled, num_classes=vocabulary_size)) # Optimizer.优化器,这里也会优化embeddings

# Note: The optimizer will optimize the softmax_weights AND the embeddings.

# This is because the embeddings are defined as a variable quantity and the

# optimizer's `minimize` method will by default modify all variable quantities

# that contribute to the tensor it is passed.

# See docs on `tf.train.Optimizer.minimize()` for more details.

optimizer = tf.train.AdagradOptimizer(1.0).minimize(loss) # 模型其实到这里就结束了,下面是在验证集上做效果验证

# Compute the similarity between minibatch examples and all embeddings.

# We use the cosine distance:先对embeddings做正则化

norm = tf.sqrt(tf.reduce_sum(tf.square(embeddings), 1, keep_dims=True))

normalized_embeddings = embeddings / norm

valid_embeddings = tf.nn.embedding_lookup(normalized_embeddings, valid_dataset)

#验证集单词与其他所有单词的相似度计算

similarity = tf.matmul(valid_embeddings, tf.transpose(normalized_embeddings))

开始训练:

num_steps = 40001

with tf.Session(graph=graph) as session:

tf.initialize_all_variables().run()

print('Initialized')

average_loss = 0

for step in range(num_steps):

batch_data, batch_labels = generate_batch(batch_size, num_skips, skip_window)

feed_dict = {train_dataset : batch_data, train_labels : batch_labels}

_, this_loss = session.run([optimizer, loss], feed_dict=feed_dict) average_loss += this_loss

# 每2000步计算一次平均loss

if step % 2000 == 0:

if step > 0:

average_loss = average_loss / 2000

# The average loss is an estimate of the loss over the last 2000 batches.

print('Average loss at step %d: %f' % (step, average_loss))

average_loss = 0 # note that this is expensive (~20% slowdown if computed every 500 steps)

if step % 10000 == 0:

sim = similarity.eval()

for i in range(valid_size):

valid_word = reverse_dictionary[valid_examples[i]]

top_k = 8 # number of nearest neighbors

# nearest = (-sim[i, :]).argsort()[1:top_k+1]

nearest = (-sim[i, :]).argsort()[0:top_k+1]#包含自己试试

log = 'Nearest to %s:' % valid_word

for k in range(top_k):

close_word = reverse_dictionary[nearest[k]]

log = '%s %s,' % (log, close_word)

print(log)

#一直到训练结束,再对所有embeddings做一次正则化,得到最后的embedding

final_embeddings = normalized_embeddings.eval()

我们可以看下训练过程中的验证情况,比如many这个单词的相似词计算:

开始时,

Nearest to many: many, originator, jeddah, maxwell, laurent, distress, interpret, bucharest,

10000步后,

Nearest to many: many, some, several, jeddah, originator, neurath, distress, songs,

40000步后,

Nearest to many: many, some, several, these, various, such, other, most,

可以看到此时单词的相似度确实很高了。

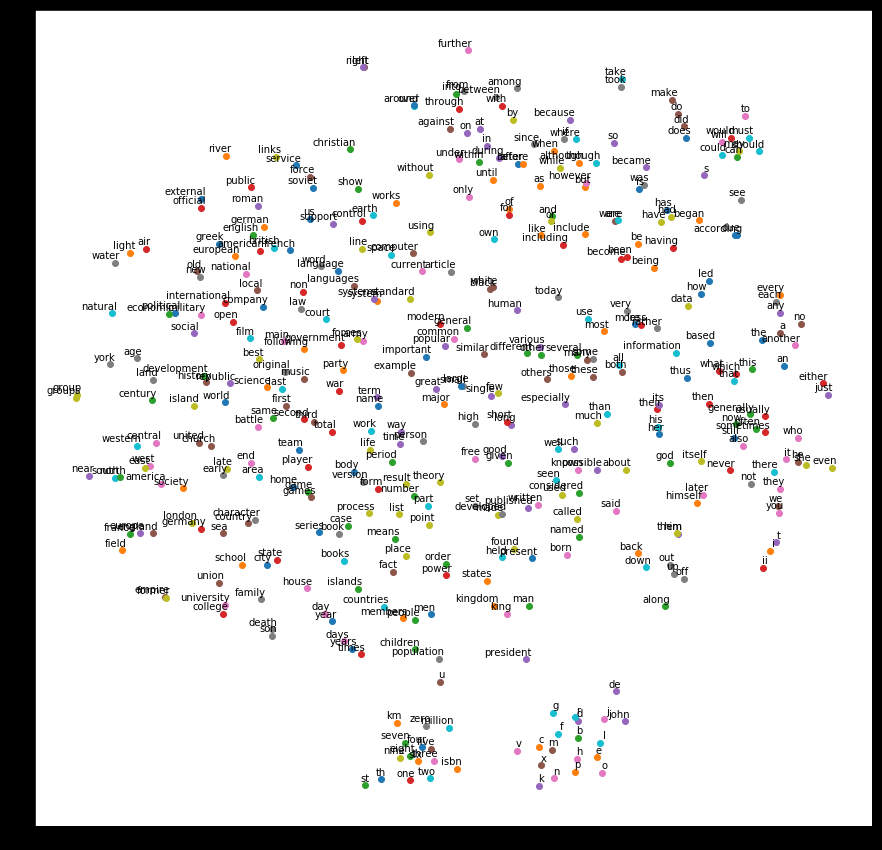

最后,我们通过降维,将单词相似情况以图示展现出来:

num_points = 400

# 降维度PCA

tsne = TSNE(perplexity=30, n_components=2, init='pca', n_iter=5000)

two_d_embeddings = tsne.fit_transform(final_embeddings[1:num_points+1, :])

def plot(embeddings, labels):

assert embeddings.shape[0] >= len(labels), 'More labels than embeddings'

pylab.figure(figsize=(15,15)) # in inches

for i, label in enumerate(labels):

x, y = embeddings[i,:]

pylab.scatter(x, y)

pylab.annotate(label, xy=(x, y), xytext=(5, 2), textcoords='offset points',

ha='right', va='bottom')

pylab.show() words = [reverse_dictionary[i] for i in range(1, num_points+1)]

plot(two_d_embeddings, words)

结果如下,随便举些例子,university和college相近,take和took相近,one、two、three等相近

总结:原始的word2vec是用c语言写的,这里用的python,结合的tensorflow。这个代码存在一些问题,首先,单词不是以索引作为输入的,应该是以one-hot形式输入。其次,负采样的比例太小,词汇表有50000,每批样本才选64个去做softmax。然后,这里也没使用到另一个trick(当然这里根本没用one-hot,这个trick也不存在了,我甚至觉得根本不需要负采样):将单词构建为二叉树(类似于从one-hot维度降低到二叉树编码(如哈夫曼树)),从而实现一种降维操作。不过,即使是这个简陋的模型,效果看起来依然不错,即方向对了,醉汉也能走到家。

word2vec之tensorflow(skip-gram)实现的更多相关文章

- Word2Vec在Tensorflow上的版本以及与Gensim之间的运行对比

接昨天的博客,这篇随笔将会对本人运行Word2Vec算法时在Gensim以及Tensorflow的不同版本下的运行结果对比.在运行中,参数的调节以及迭代的决定本人并没有很好的经验,所以希望在展出运行的 ...

- Word2vec多线程(tensorflow)

workers = [] for _ in xrange(opts.concurrent_steps): t = threading.Thread(target=self._train_thread_ ...

- [C7] Andrew Ng - Sequence Models

About this Course This course will teach you how to build models for natural language, audio, and ot ...

- Tensorflow 的Word2vec demo解析

简单demo的代码路径在tensorflow\tensorflow\g3doc\tutorials\word2vec\word2vec_basic.py Sikp gram方式的model思路 htt ...

- 利用Tensorflow进行自然语言处理(NLP)系列之二高级Word2Vec

本篇也同步笔者另一博客上(https://blog.csdn.net/qq_37608890/article/details/81530542) 一.概述 在上一篇中,我们介绍了Word2Vec即词向 ...

- 利用 TensorFlow 入门 Word2Vec

利用 TensorFlow 入门 Word2Vec 原创 2017-10-14 chen_h coderpai 博客地址:http://www.jianshu.com/p/4e16ae0aad25 或 ...

- Forward-backward梯度求导(tensorflow word2vec实例)

考虑不可分的例子 通过使用basis functions 使得不可分的线性模型变成可分的非线性模型 最常用的就是写出一个目标函数 并且使用梯度下降法 来计算 梯度的下降法的梯度 ...

- Python Tensorflow下的Word2Vec代码解释

前言: 作为一个深度学习的重度狂热者,在学习了各项理论后一直想通过项目练手来学习深度学习的框架以及结构用在实战中的知识.心愿是好的,但机会却不好找.最近刚好有个项目,借此机会练手的过程中,我发现其实各 ...

- Word2Vec总结

摘要: 1.算法概述 2.算法要点与推导 3.算法特性及优缺点 4.注意事项 5.实现和具体例子 6.适用场合 内容: 1.算法概述 Word2Vec是一个可以将语言中的字词转换为向量表达(Vecto ...

随机推荐

- HandlerMethodArgumentResolver(二):Map参数类型和固定参数类型【享学Spring MVC】

每篇一句 黄金的导电性最好,为什么电脑主板还是要用铜? 飞机最快,为什么还有人做火车? 清华大学最好,为什么还有人去普通学校? 因为资源都是有限的,我们现实生活中必须兼顾成本与产出的平衡 前言 上文 ...

- Mybatis的一级缓存和二级缓存的理解以及用法

程序中为什么使用缓存? 先了解一下缓存的概念:原始意义是指访问速度比一般随机存取存储器快的一种RAM,通常它不像系统主存那样使用DRAM技术,而使用昂贵但较快速的SRAM技术.对于我们编程来说,所谓的 ...

- 百度地图获取定位,实现拖动marker定位,返回具体的位置名

<!DOCTYPE html> <html> <head> <meta http-equiv="Content-Type" content ...

- Git学习笔记-相关命令记录

内容来自:https://www.liaoxuefeng.com/wiki/896043488029600/896067074338496 1.Linux安装Git 首先,你可以试着输入git,看看系 ...

- 基于ASP.Net Core开发的一套通用后台框架

基于ASP.Net Core开发一套通用后台框架 写在前面 这是本人在学习的过程中搭建学习的框架,如果对你有所帮助那再好不过.如果您有发现错误,请告知我,我会第一时间修改. 知其然,知其所以然,并非重 ...

- d3.js 教程 模仿echarts legend功能

上一节记录没有加上echarts的legend功能,这一小节补一下. 1. 数据 我们可以从echarts中看出,折线数据并不是我们传进入的原始数据(多数情况下我们也不会修改原始数据),而是原始数组的 ...

- HDU-1532 网络流裸题

HDU-1532 题意简单的来说就是从1点到n点,最大的流量是多少. 代码: #include<bits/stdc++.h> using namespace std; #define Fo ...

- NOIP 2016 蚯蚓 题解

一道有趣的题目,首先想到合并果子,然而发现会超时,我们可以发现首先拿出来的切掉后比后拿出来切掉后还是还长,即满足单调递增,故建立三个队列即可. 代码 #include<bits/stdc++.h ...

- Linux系统调用表(x86_64)

内核版本 Linux 4.7 Note:64位系统中,不再使用int 0x80来进行系统调用,取而代之的是syscall指令 %rax System call %rdi %rsi %rdx %r10 ...

- 【Offer】[18-1] 【在O(1)时间内删除链表节点】

题目描述 思路分析 测试用例 代码链接 题目描述 给定单向链表的头指针和一个结点指针,定义一个函数在O(1)时间删除该结点. 思路分析 一般我们删除单链表中的节点是需要遍历链表,找到要删除节点的前一个 ...