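Enterprise-Grade Kubernetes Cluster Deployment

I. Introduction to Kubernetes and Its Features

1.1 What is Kubernetes

Official website: http://www.kubernetes.io

• Kubernetes is a container cluster management system that Google open-sourced in 2014. It is commonly abbreviated as K8S.

• K8S is used to deploy, scale, and manage containerized applications.

• K8S provides container orchestration, resource scheduling, elastic scaling, deployment management, service discovery, and related features.

• The goal of Kubernetes is to make deploying containerized applications simple and efficient.

1.2 What Kubernetes can serve as

A container platform

A microservice platform

A portable cloud platform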

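1.3 Kubernetes features

- Self-healing

Restarts failed containers, and replaces and redeploys them when a node fails, so that the expected number of replicas is maintained. Containers that fail their health checks are killed, and no client requests are sent to a container until it is ready, so online services are not interrupted.

- Elastic scaling

Application instances can be scaled out and in quickly by command, through the UI, or automatically based on CPU usage. This keeps the application highly available during peak load and reclaims resources during quiet periods, so the service runs at minimum cost.

- Automated rollout and rollback

K8S updates applications with a rolling update strategy, updating one Pod at a time instead of deleting all Pods at once. If something goes wrong during the update, the change is rolled back so that the upgrade does not affect the business.

- Service discovery and load balancing

K8S gives a group of containers a single access point (an internal IP address and a DNS name) and load-balances across all associated containers, so users do not need to care about container IPs.

- Secret and configuration management

Manages secrets and application configuration without baking sensitive data into images, which improves the security of that data. Commonly used configuration can also be stored in K8S for applications to consume.

- Storage orchestration

Mounts external storage systems. Whether the storage is local, from a public cloud (such as AWS), or network storage (such as NFS, GlusterFS, Ceph), it is used as part of the cluster's resources, which greatly improves storage flexibility.

- Batch processing

Provides one-off tasks and scheduled tasks for batch data processing and analysis scenarios.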

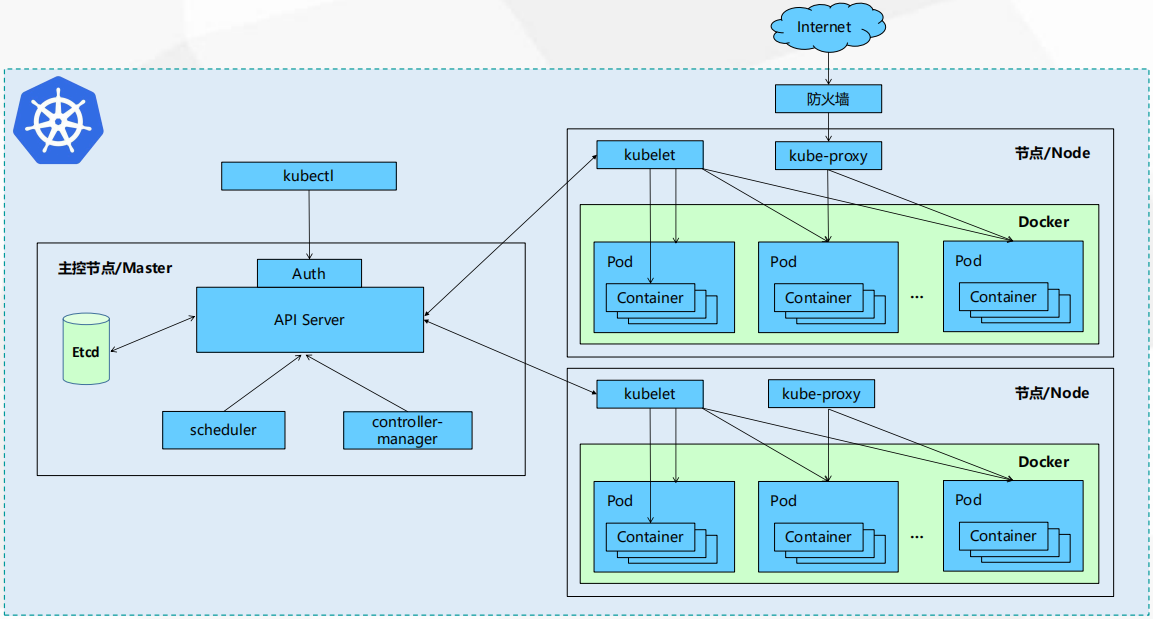

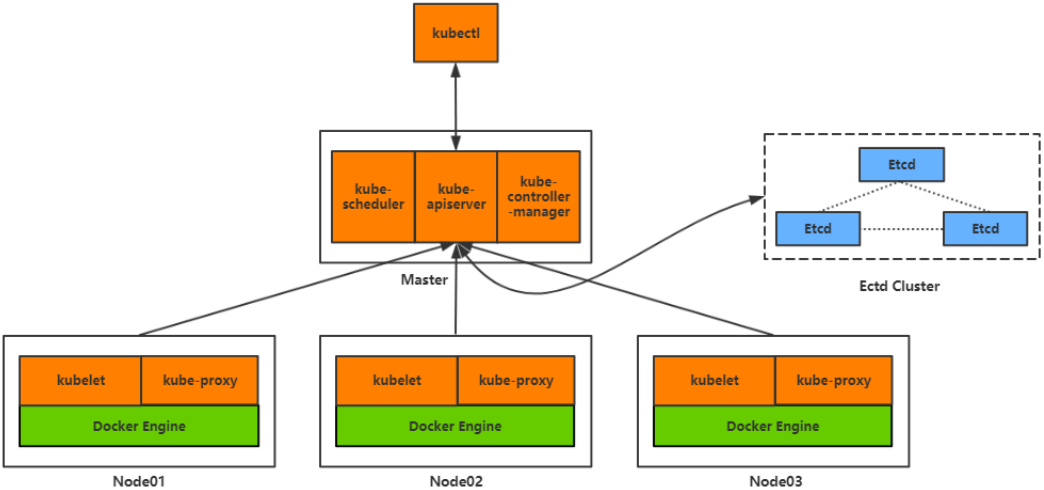

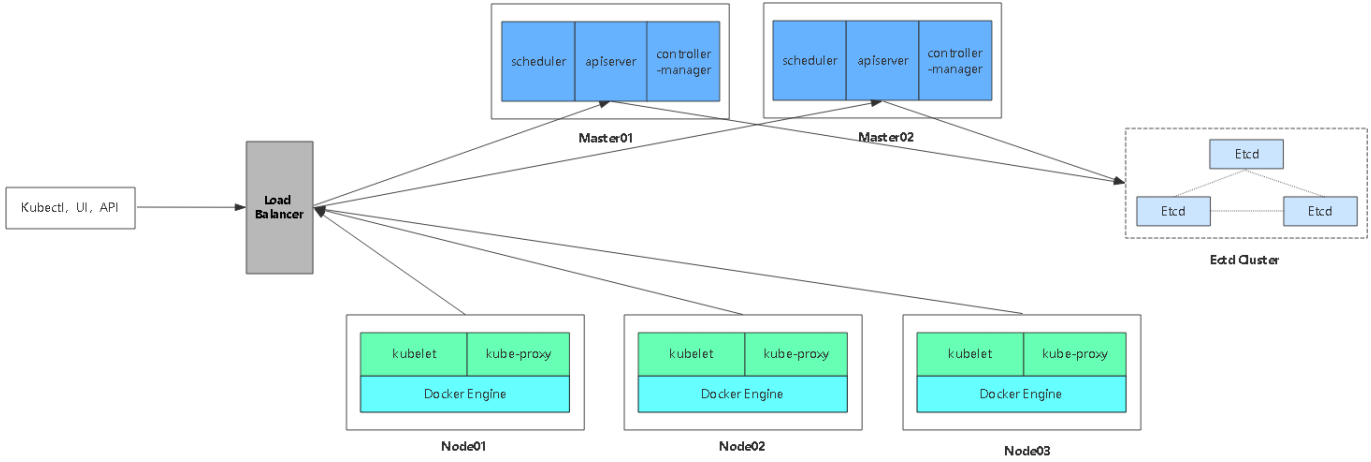

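II. Kubernetes Architecture

2.1 Overall architecture and components

1. As shown in the figure, there are three machines: one master node and two worker nodes.

2. Components on the Master side:

- API server: the unified entry point of K8S, exposing its services through a RESTful API.

- Auth: authentication and authorization; decides whether a request is permitted.

- Etcd: the backing database; stores cluster state, node information, authentication data, and so on.

- scheduler: cluster scheduling; decides which node each Pod is assigned to.

- controller manager: runs the controllers that carry out management tasks, for example the controllers for Pods and Services.

- Kubectl: the management tool; it talks directly to the API Server and passes through authentication and authorization.

3. A Node has two components:

- kubelet: receives the tasks that K8S hands down and manages container creation and lifecycle, turning a Pod into a set of containers.

- kube-proxy: the Pod network proxy; provides layer-4 load balancing and external access.

- User -> firewall -> kube-proxy -> application

Pod: the smallest unit in K8S

- Container: the environment that runs containers, that is, the container engine

- Docker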

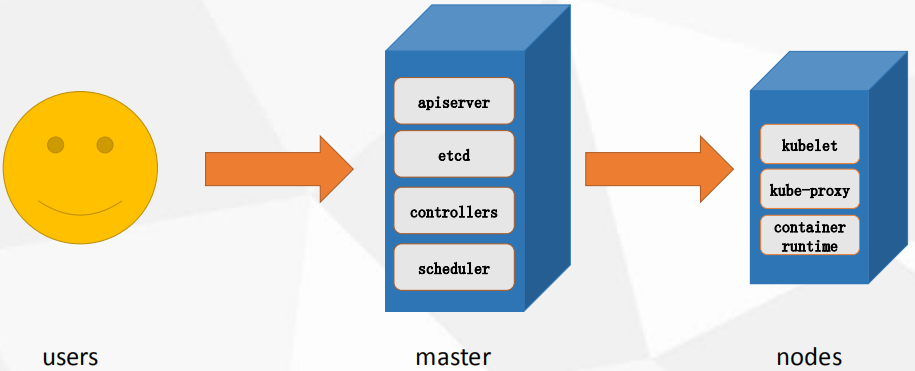

2.2 Cluster management workflow and core concepts

1. Cluster management workflow

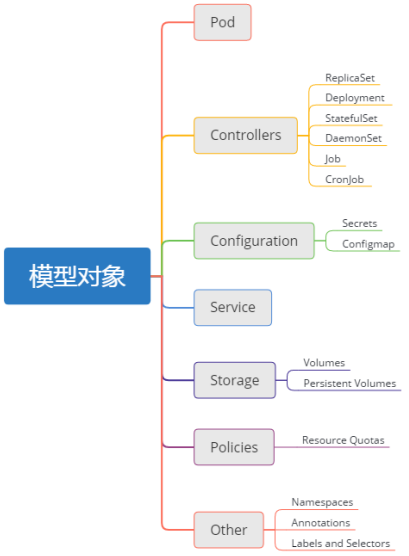

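2. Kubernetes core concepts

Pod

• The smallest deployable unit

• A group of one or more containers

• Containers in the same Pod share a network namespace

• Pods are ephemeral

Controllers

• ReplicaSet: ensures the expected number of Pod replicas

• Deployment: deploys stateless applications

• StatefulSet: deploys stateful applications

• DaemonSet: ensures that every Node runs a copy of the same Pod

• Job: one-off tasks

• CronJob: scheduled tasks

Note: controllers are higher-level objects that deploy and manage Pods.

Service

• Keeps a group of Pods reachable even as individual Pods are replaced

• Defines the access policy for a group of Pods

Label: a tag attached to a resource, used to associate, query, and filter objects

Namespaces: logically isolate objects from one another

Annotations: annotations attached to objects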

III. Kubernetes Deployment

- K8S service packages

- Baidu Cloud download: https://pan.baidu.com/s/1d1zqoil3pfeThC-v45bWkg

- Password: 0ssx

3.1 Service versions and architecture

Service versions

- centos: 7.4

- etcd-v3.3.10

- flannel-v0.10.0

- kubernetes-1.12.1

- nginx-1.16.1

- keepalived-1.3.5

- docker-19.03.1

Single-Master architecture

- k8s Master: 172.16.105.220

- k8s Node: 172.16.105.230, 172.16.105.213

- etcd: 172.16.105.220, 172.16.105.230, 172.16.105.213

Dual Master + Nginx + Keepalived

- k8s Master1: 192.168.1.108

- k8s Master2: 192.168.1.109

- k8s Node3: 192.168.1.110

- k8s Node4: 192.168.1.111

- etcd: 192.168.1.108, 192.168.1.109, 192.168.1.110, 192.168.1.111

- Nginx+keepalived1: 192.168.1.112

- Nginx+keepalived2: 192.168.1.113

- vip: 192.168.1.100

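3.2 Preparing for the Kubernetes deployment

1. Stop the firewall

systemctl stop firewalld.service

2. Disable SELinux

setenforce 0

3. Change the hostname

vim /etc/hostname

hostname ****

4. Synchronize the time

ntpdate time.windows.com

5. Environment variables

Note: it is best to set up environment variables for all of the k8s binaries used in the configuration below.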
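Steps 1 and 2 above only last until the next reboot, and step 5 does not show a concrete command. The following is a minimal sketch of how these settings could be made persistent. The function names are hypothetical, and the PATH entries assume the /opt/etcd/bin and /opt/kubernetes/bin directories that are created later in this guide.

```shell
#!/bin/sh
# Sketch only. persist_selinux_disabled rewrites the SELINUX= line of an
# SELinux config file so the change from step 2 survives a reboot.
# The file path is a parameter so the function can be tried on a copy first;
# on CentOS 7 the real file is /etc/selinux/config.
persist_selinux_disabled() {
    cfg="$1"
    # Only the SELINUX= line is changed; SELINUXTYPE= is left alone.
    sed 's/^SELINUX=.*/SELINUX=disabled/' "$cfg" > "$cfg.tmp" && mv "$cfg.tmp" "$cfg"
}

# Print a PATH line covering the binary directories used later in this guide
# (an assumption about what step 5 intends). Appending the line to
# /etc/profile would apply it to all users.
k8s_path_line() {
    printf 'export PATH=$PATH:%s:%s\n' /opt/etcd/bin /opt/kubernetes/bin
}
```

A possible use would be `persist_selinux_disabled /etc/selinux/config` and `k8s_path_line >> /etc/profile`, together with `systemctl disable firewalld.service` so that the firewall also stays off after a reboot.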

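3.3 Deploying the etcd database cluster

1. Create self-signed certificates for etcd

1. Create the k8s directory and the certificate directories

mkdir ~/k8s && cd ~/k8s

mkdir k8s-cert

mkdir etcd-cert

cd etcd-cert

2. Install the cfssl certificate tools

# cfssl: generates certificates from command-line options

curl -L https://pkg.cfssl.org/R1.2/cfssl_linux-amd64 -o /usr/local/bin/cfssl

# cfssljson: writes certificate files from the JSON output of cfssl

curl -L https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64 -o /usr/local/bin/cfssljson

# cfssl-certinfo: displays certificate information

curl -L https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64 -o /usr/local/bin/cfssl-certinfo

# Add execute permission

chmod +x /usr/local/bin/cfssl /usr/local/bin/cfssljson /usr/local/bin/cfssl-certinfo

3. Create the CA configuration file, ca-config.json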

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"www": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

vim ca-config.json

4. Create the CA certificate signing request file, ca-csr.json

{

"CN": "etcd CA",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Beijing",

"ST": "Beijing"

}

]

}

vim ca-csr.json

5. Generate the CA root certificate from the JSON files; this creates ca.pem and ca-key.pem in the current directory

cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

6. Generate the etcd server certificate: first create the JSON request file, then issue the certificate from it

{

"CN": "etcd",

"hosts": [

"172.16.105.220",

"172.16.105.230",

"172.16.105.213"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing"

}

]

}

vim server-csr.json

Note: hosts lists the IPs of the machines that run etcd.

7. Issue the etcd server certificate; this creates server.pem and server-key.pem in the current directory

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=www server-csr.json | cfssljson -bare server

8. List the generated certificates

ls *pem

ca-key.pem ca.pem server-key.pem server.pem
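Because the hosts list in server-csr.json has to match the machines that run etcd, the request file changes whenever the member list changes. As a rough sketch, the file could be generated from a list of IPs instead of being edited by hand. The function name `etcd_csr_json` is hypothetical; the field values are the ones used in step 6.

```shell
#!/bin/sh
# Hypothetical helper: print a cfssl certificate request for etcd with the
# given IPs as its hosts. Usage: etcd_csr_json IP [IP...]
etcd_csr_json() {
    hosts=""
    for ip in "$@"; do
        # Build a comma-separated list of quoted JSON strings.
        hosts="${hosts:+$hosts, }\"$ip\""
    done
    cat <<EOF
{
  "CN": "etcd",
  "hosts": [$hosts],
  "key": { "algo": "rsa", "size": 2048 },
  "names": [ { "C": "CN", "L": "BeiJing", "ST": "BeiJing" } ]
}
EOF
}
```

For the three members in this guide that would be `etcd_csr_json 172.16.105.220 172.16.105.230 172.16.105.213 > server-csr.json`, followed by the cfssl gencert command from step 7.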

2. Deploy the etcd database cluster

- etcd version used: etcd-v3.3.10-linux-amd64.tar.gz

- Binary package download: https://github.com/coreos/etcd/releases/tag/v3.3.10

1. After downloading, extract the archive and enter the extracted directory

tar zxvf etcd-v3.3.10-linux-amd64.tar.gz

cd etcd-v3.3.10-linux-amd64

2. Create a few directories to keep etcd organized, and move the binaries into them

mkdir /opt/etcd/{cfg,bin,ssl} -p

mv etcd etcdctl /opt/etcd/bin/

3. Write the etcd configuration file

#[Member]

ETCD_NAME="etcd01"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://172.16.105.220:2380"

ETCD_LISTEN_CLIENT_URLS="https://172.16.105.220:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://172.16.105.220:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://172.16.105.220:2379"

ETCD_INITIAL_CLUSTER="etcd01=https://172.16.105.220:2380,etcd02=https://172.16.105.230:2380,etcd03=https://172.16.105.213:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

vim /opt/etcd/cfg/etcd

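Parameter meanings:

· ETCD_NAME: node name

· ETCD_DATA_DIR: data directory

· ETCD_LISTEN_PEER_URLS: listen address for cluster (peer) traffic

· ETCD_LISTEN_CLIENT_URLS: listen address for client traffic

· ETCD_INITIAL_ADVERTISE_PEER_URLS: peer address advertised to the rest of the cluster

· ETCD_ADVERTISE_CLIENT_URLS: client address advertised to clients

· ETCD_INITIAL_CLUSTER: addresses of all cluster members

· ETCD_INITIAL_CLUSTER_TOKEN: cluster token

· ETCD_INITIAL_CLUSTER_STATE: state when joining; new means a new cluster, existing means joining an existing cluster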

4. Create a systemd unit to manage etcd

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target

[Service]

Type=notify

EnvironmentFile=/opt/etcd/cfg/etcd

ExecStart=/opt/etcd/bin/etcd \

--name=${ETCD_NAME} \

--data-dir=${ETCD_DATA_DIR} \

--listen-peer-urls=${ETCD_LISTEN_PEER_URLS} \

--listen-client-urls=${ETCD_LISTEN_CLIENT_URLS},http://127.0.0.1:2379 \

--advertise-client-urls=${ETCD_ADVERTISE_CLIENT_URLS} \

--initial-advertise-peer-urls=${ETCD_INITIAL_ADVERTISE_PEER_URLS} \

--initial-cluster=${ETCD_INITIAL_CLUSTER} \

--initial-cluster-token=${ETCD_INITIAL_CLUSTER_TOKEN} \

--initial-cluster-state=new \

--cert-file=/opt/etcd/ssl/server.pem \

--key-file=/opt/etcd/ssl/server-key.pem \

--peer-cert-file=/opt/etcd/ssl/server.pem \

--peer-key-file=/opt/etcd/ssl/server-key.pem \

--trusted-ca-file=/opt/etcd/ssl/ca.pem \

--peer-trusted-ca-file=/opt/etcd/ssl/ca.pem

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

vim /usr/lib/systemd/system/etcd.service

5. Copy the certificate files to the target directory

cp /root/k8s/etcd-cert/{ca,ca-key,server-key,server}.pem /opt/etcd/ssl/

6. Start etcd and enable it at boot

systemctl daemon-reload

systemctl enable etcd.service

systemctl start etcd.service

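7. After it starts, etcd may block while waiting for the other two members, so etcd has to be started on the other two nodes as well

# 1. Copy the etcd directory to the two nodes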

scp -r /opt/etcd/ root@172.16.105.230:/opt/

scp -r /opt/etcd/ root@172.16.105.213:/opt/

# 2. Copy the systemd unit file to the two nodes

scp -r /usr/lib/systemd/system/etcd.service root@172.16.105.230:/usr/lib/systemd/system/

scp -r /usr/lib/systemd/system/etcd.service root@172.16.105.213:/usr/lib/systemd/system/

8. On the two nodes, edit the /opt/etcd/cfg/etcd configuration file

#[Member]

ETCD_NAME="etcd02"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://172.16.105.230:2380"

ETCD_LISTEN_CLIENT_URLS="https://172.16.105.230:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://172.16.105.230:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://172.16.105.230:2379"

ETCD_INITIAL_CLUSTER="etcd01=https://172.16.105.220:2380,etcd02=https://172.16.105.230:2380,etcd03=https://172.16.105.213:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

The above is the configuration file for node 172.16.105.230.

#[Member]

ETCD_NAME="etcd03"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://172.16.105.213:2380"

ETCD_LISTEN_CLIENT_URLS="https://172.16.105.213:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://172.16.105.213:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://172.16.105.213:2379"

ETCD_INITIAL_CLUSTER="etcd01=https://172.16.105.220:2380,etcd02=https://172.16.105.230:2380,etcd03=https://172.16.105.213:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

The above is the configuration file for node 172.16.105.213.
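The three etcd configuration files differ only in the member name and the node's own IP, so they could be rendered from a small template rather than edited by hand on each node. This is a sketch under that assumption; `render_etcd_cfg` and `ETCD_CLUSTER` are hypothetical names, and the values are the ones used in this guide.

```shell
#!/bin/sh
# Cluster membership shared by all three members (values from this guide).
ETCD_CLUSTER="etcd01=https://172.16.105.220:2380,etcd02=https://172.16.105.230:2380,etcd03=https://172.16.105.213:2380"

# Hypothetical helper: print the content of /opt/etcd/cfg/etcd for one member.
# $1 = member name (must match a name in ETCD_CLUSTER), $2 = that node's IP.
render_etcd_cfg() {
    name="$1"
    ip="$2"
    cat <<EOF
#[Member]
ETCD_NAME="$name"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://$ip:2380"
ETCD_LISTEN_CLIENT_URLS="https://$ip:2379"
#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://$ip:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://$ip:2379"
ETCD_INITIAL_CLUSTER="$ETCD_CLUSTER"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"
EOF
}
```

On node 172.16.105.230, for example, `render_etcd_cfg etcd02 172.16.105.230 > /opt/etcd/cfg/etcd` would produce the same file as the one shown for that node.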

9. Start the service on the two nodes and enable it at boot

systemctl daemon-reload

systemctl enable etcd.service

systemctl start etcd.service

10. Check the etcd log on the first node

Aug 6 11:13:54 izbp14x4an2p4z7awyek7mz etcd: updating the cluster version from 3.0 to 3.3

Aug 6 11:13:54 izbp14x4an2p4z7awyek7mz etcd: updated the cluster version from 3.0 to 3.3

Aug 6 11:13:54 izbp14x4an2p4z7awyek7mz etcd: enabled capabilities for version 3.3

tail /var/log/messages -f

11. Check the listening ports

tcp 0 0 172.16.105.220:2379 0.0.0.0:* LISTEN 13021/etcd

tcp 0 0 127.0.0.1:2379 0.0.0.0:* LISTEN 13021/etcd

tcp 0 0 172.16.105.220:2380 0.0.0.0:* LISTEN 13021/etcd

netstat -lnpt

12. Check the running process

root 13021 1.1 1.4 10541908 28052 ? Ssl 11:13 0:02 /opt/etcd/bin/etcd --name=etcd01 --data-dir=/var/lib/etcd/default.etcd --listen-peer-urls=https://172.16.105.220:2380 --listen-client-urls=https://172.16.105.220:2379,http://127.0.0.1:2379 --advertise-client-urls=https://172.16.105.220:2379 --initial-advertise-peer-urls=https://172.16.105.220:2380 --initial-cluster=etcd01=https://172.16.105.220:2380,etcd02=https://172.16.105.230:2380,etcd03=https://172.16.105.213:2380 --initial-cluster-token=etcd-cluster --initial-cluster-state=new --cert-file=/opt/etcd/ssl/server.pem --key-file=/opt/etcd/ssl/server-key.pem --peer-cert-file=/opt/etcd/ssl/server.pem --peer-key-file=/opt/etcd/ssl/server-key.pem --trusted-ca-file=/opt/etcd/ssl/ca.pem --peer-trusted-ca-file=/opt/etcd/ssl/ca.pem

ps -aux | grep etcd

13. Verify etcd with etcdctl

# Pass the absolute paths of the certificate files and the addresses of the etcd cluster members

/opt/etcd/bin/etcdctl --ca-file=/opt/etcd/ssl/ca.pem --cert-file=/opt/etcd/ssl/server.pem --key-file=/opt/etcd/ssl/server-key.pem --endpoints="https://172.16.105.220:2379,https://172.16.105.230:2379,https://172.16.105.213:2379" cluster-health

Output like the following means the cluster is working:

member 1d5fcc16a8c9361e is healthy: got healthy result from https://172.16.105.220:2379

member 7b28469233594fbd is healthy: got healthy result from https://172.16.105.230:2379

member b2e216e703023e21 is healthy: got healthy result from https://172.16.105.213:2379

cluster is healthy
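The health check above can also be evaluated by a script instead of by eye. A minimal sketch, assuming the cluster-health output format shown in this step; the function name `etcd_all_healthy` is hypothetical.

```shell
#!/bin/sh
# Read `etcdctl cluster-health` output on stdin and succeed only if exactly
# the expected number of members report healthy. $1 = expected member count.
etcd_all_healthy() {
    expected="$1"
    # Count the per-member lines; the final "cluster is healthy" line does not match.
    healthy=$(grep -c '^member .* is healthy' || true)
    [ "$healthy" -eq "$expected" ]
}
```

It would be used by piping the etcdctl command from this step into `etcd_all_healthy 3` and checking the exit status.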

Troubleshooting:

Error: etcd: request cluster ID mismatch

# Delete the data directory on every node, then restart etcd

rm -rf /var/lib/etcd/default.etcd

3.4 Deploying Docker on the Nodes

1. Install the dependencies

yum install -y yum-utils device-mapper-persistent-data lvm2

2. Add the Docker CE repository (Aliyun mirror)

yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

3. Install the latest version of Docker

yum -y install docker-ce

4. Configure a Docker registry mirror (accelerator)

Website: https://www.daocloud.io/mirror

Command: curl -sSL https://get.daocloud.io/daotools/set_mirror.sh | sh -s http://f1361db2.m.daocloud.io

5. Restart Docker

systemctl restart docker

6. Check the Docker version: docker version

Version: 19.03.1

3.5 Deploying the Flannel network on the Nodes

- Binary packages: https://github.com/coreos/flannel/releases

1. Write the allocated subnet range into etcd for flanneld to use.

/opt/etcd/bin/etcdctl --ca-file=/opt/etcd/ssl/ca.pem --cert-file=/opt/etcd/ssl/server.pem --key-file=/opt/etcd/ssl/server-key.pem --endpoints="https://172.16.105.220:2379,https://172.16.105.230:2379,https://172.16.105.213:2379" set /coreos.com/network/config '{ "Network": "172.17.0.0/16", "Backend": {"Type": "vxlan"}}'

2. View the network configuration that was written

/opt/etcd/bin/etcdctl --ca-file=/opt/etcd/ssl/ca.pem --cert-file=/opt/etcd/ssl/server.pem --key-file=/opt/etcd/ssl/server-key.pem --endpoints="https://172.16.105.220:2379,https://172.16.105.230:2379,https://172.16.105.213:2379" get /coreos.com/network/config

3. After downloading the flannel package, extract it

tar -xvzf flannel-v0.10.0-linux-amd64.tar.gz

4. Create the directories and move the files into them

mkdir -p /opt/kubernetes/{bin,cfg,ssl}

mv flanneld mk-docker-opts.sh /opt/kubernetes/bin/

5. Create the flanneld configuration file

FLANNEL_OPTIONS="--etcd-endpoints=https://172.16.105.220:2379,https://172.16.105.230:2379,https://172.16.105.213:2379 -etcd-cafile=/opt/etcd/ssl/ca.pem -etcd-certfile=/opt/etcd/ssl/server.pem -etcd-keyfile=/opt/etcd/ssl/server-key.pem"

vim /opt/kubernetes/cfg/flanneld

6. Create a systemd unit to manage flannel

[Unit]

Description=Flanneld overlay address etcd agent

After=network-online.target network.target

Before=docker.service

[Service]

Type=notify

EnvironmentFile=/opt/kubernetes/cfg/flanneld

ExecStart=/opt/kubernetes/bin/flanneld --ip-masq $FLANNEL_OPTIONS

ExecStartPost=/opt/kubernetes/bin/mk-docker-opts.sh -k DOCKER_NETWORK_OPTIONS -d /run/flannel/subnet.env

Restart=on-failure

[Install]

WantedBy=multi-user.target

vim /usr/lib/systemd/system/flanneld.service

7. Configure Docker to start on the subnet assigned by flannel

[Unit]

Description=Docker Application Container Engine

Documentation=https://docs.docker.com

After=network-online.target firewalld.service

Wants=network-online.target

[Service]

Type=notify

EnvironmentFile=/run/flannel/subnet.env

ExecStart=/usr/bin/dockerd $DOCKER_NETWORK_OPTIONS

ExecReload=/bin/kill -s HUP $MAINPID

LimitNOFILE=infinity

LimitNPROC=infinity

LimitCORE=infinity

TimeoutStartSec=0

Delegate=yes

KillMode=process

Restart=on-failure

StartLimitBurst=3

StartLimitInterval=60s

[Install]

WantedBy=multi-user.target

vim /usr/lib/systemd/system/docker.service

8. Start flannel and Docker, and enable start at boot

systemctl daemon-reload

systemctl enable flanneld

systemctl start flanneld

systemctl restart docker

9. Confirm that docker0 and flannel.1 are in the same subnet

docker0: flags=4099<UP,BROADCAST,MULTICAST> mtu 1500

inet 172.17.26.1 netmask 255.255.255.0 broadcast 172.17.26.255

flannel.1: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450

inet 172.17.26.0 netmask 255.255.255.255 broadcast 0.0.0.0

ifconfig

10. View the routing information

# 1. List the subnet entries that were created

/opt/etcd/bin/etcdctl --ca-file=/opt/etcd/ssl/ca.pem --cert-file=/opt/etcd/ssl/server.pem --key-file=/opt/etcd/ssl/server-key.pem --endpoints="https://172.16.105.220:2379,https://172.16.105.230:2379,https://172.16.105.213:2379" ls /coreos.com/network/subnets/

Output:

/coreos.com/network/subnets/172.17.59.0-24

/coreos.com/network/subnets/172.17.23.0-24

/coreos.com/network/subnets/172.17.26.0-24

# 2. View a specific subnet entry

/opt/etcd/bin/etcdctl --ca-file=/opt/etcd/ssl/ca.pem --cert-file=/opt/etcd/ssl/server.pem --key-file=/opt/etcd/ssl/server-key.pem --endpoints="https://172.16.105.220:2379,https://172.16.105.230:2379,https://172.16.105.213:2379" get /coreos.com/network/subnets/172.17.59.0-24

Output (the mapping between the subnet and the node's IP):

{"PublicIP":"172.16.105.220","BackendType":"vxlan","BackendData":{"VtepMAC":"ae:6b:20:4a:bd:ed"}}
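Each key under /coreos.com/network/subnets/ encodes one node's subnet as ADDRESS-PREFIX, and its value names the node that owns it. A small sketch of how the two pieces could be pulled apart when checking which node holds which subnet; both function names are hypothetical, and the value parser assumes the single-line JSON format shown above.

```shell
#!/bin/sh
# Convert a flannel etcd key such as
# /coreos.com/network/subnets/172.17.59.0-24 into CIDR form 172.17.59.0/24.
subnet_key_to_cidr() {
    key="${1##*/}"                               # strip the directory part
    printf '%s/%s\n' "${key%-*}" "${key##*-}"    # address, then prefix length
}

# Read a subnet entry's JSON value on stdin and print its PublicIP field.
subnet_public_ip() {
    sed -n 's/.*"PublicIP":"\([^"]*\)".*/\1/p'
}
```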

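3.6 Deploying a single-Master Kubernetes cluster

- Binary download: https://github.com/kubernetes/kubernetes/blob/master/CHANGELOG-1.12.md

- Downloading this one package (kubernetes-server-linux-amd64.tar.gz) is enough; it contains all of the required components.

1. Generate the certificates (in the ~/k8s/k8s-cert directory)

1.1 Create the CA configuration file, ca-config.json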

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"kubernetes": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

vim ca-config.json

1.2 Create the CA certificate signing request file, ca-csr.json

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Beijing",

"ST": "Beijing",

"O": "k8s",

"OU": "System"

}

]

}

vim ca-csr.json

1.3 Generate the CA certificate

cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

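1.4 Create the apiserver certificate request file. Note: under hosts, list the IP of every Master, plus any load balancer or VIP placed in front of them; add as many as needed. Node IPs do not need to be added.

{

"CN": "kubernetes",

"hosts": [

"10.0.0.1",

"127.0.0.1",

"172.16.105.220",

"172.16.105.210",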

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

vim server-csr.json

1.5 Generate the apiserver certificate

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes server-csr.json | cfssljson -bare server

1.6 Create the kube-proxy certificate request file

{

"CN": "system:kube-proxy",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

vim kube-proxy-csr.json

1.7 Generate the kube-proxy certificate

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

1.8 List all generated certificates

ca-key.pem ca.pem kube-proxy-key.pem kube-proxy.pem server-key.pem server.pem

ls *pem

2. Deploy the Master apiserver component

1. Download the package into the k8s directory, extract it, and enter the binary directory

tar -xzvf kubernetes-server-linux-amd64.tar.gz

cd kubernetes/server/bin/

2. Create the directories

mkdir /opt/kubernetes/{bin,cfg,ssl,logs} -p

3. Copy the binaries into place

cp kube-apiserver kube-scheduler kube-controller-manager kubectl /opt/kubernetes/bin

4. Copy the generated certificates into place

cp /root/k8s/k8s-cert/{ca,ca-key,server,server-key}.pem /opt/kubernetes/ssl/

5. Create the token file

674c457d4dcf2eefe4920d7dbb6b0ddc,kubelet-bootstrap,10001,"system:kubelet-bootstrap"

vim /opt/kubernetes/cfg/token.csv

Column description:

Column 1: a random string, which you can generate yourself

Column 2: user name

Column 3: UID

Column 4: user group
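Since the first column is just a random string, it can be generated rather than copied from this guide. A minimal sketch, assuming /dev/urandom, head, od, and tr are available (they are on a stock CentOS system); the function names are hypothetical.

```shell
#!/bin/sh
# Print 16 random bytes as 32 lowercase hex characters, the same shape as
# the token in this guide.
new_bootstrap_token() {
    head -c 16 /dev/urandom | od -An -t x1 | tr -d ' \n'
}

# Print one token.csv line in the column order described above:
# token,user,uid,"group". $1 = the token.
token_csv_line() {
    printf '%s,kubelet-bootstrap,10001,"system:kubelet-bootstrap"\n' "$1"
}
```

For example, `token_csv_line "$(new_bootstrap_token)" > /opt/kubernetes/cfg/token.csv`. The same token value then has to be used as BOOTSTRAP_TOKEN when the bootstrap kubeconfig is generated.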

6. Create the apiserver configuration file; make sure the certificates are in place and that etcd is reachable

KUBE_APISERVER_OPTS="--logtostderr=false \

--log-dir=/opt/kubernetes/logs \

--v=4 \

--etcd-servers=https://172.16.105.220:2379,https://172.16.105.230:2379,https://172.16.105.213:2379 \

--bind-address=172.16.105.220 \

--secure-port=6443 \

--advertise-address=172.16.105.220 \

--allow-privileged=true \

--service-cluster-ip-range=10.0.0.0/24 \

--service-node-port-range=30000-50000 \

--enable-admission-plugins=NamespaceLifecycle,LimitRanger,SecurityContextDeny,ServiceAccount,ResourceQuota,NodeRestriction \

--authorization-mode=RBAC,Node \

--enable-bootstrap-token-auth \

--token-auth-file=/opt/kubernetes/cfg/token.csv \

--tls-cert-file=/opt/kubernetes/ssl/server.pem \

--tls-private-key-file=/opt/kubernetes/ssl/server-key.pem \

--client-ca-file=/opt/kubernetes/ssl/ca.pem \

--service-account-key-file=/opt/kubernetes/ssl/ca-key.pem \

--etcd-cafile=/opt/etcd/ssl/ca.pem \

--etcd-certfile=/opt/etcd/ssl/server.pem \

--etcd-keyfile=/opt/etcd/ssl/server-key.pem"

vim /opt/kubernetes/cfg/kube-apiserver

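Parameter notes:

· --logtostderr log to standard error instead of to files

· --v log verbosity level

· --etcd-servers etcd cluster addresses

· --bind-address listen address

· --secure-port HTTPS secure port

· --advertise-address address advertised to the cluster

· --allow-privileged allow containers to run in privileged mode

· --service-cluster-ip-range virtual IP range for Services

· --enable-admission-plugins admission control plugins

· --authorization-mode authorization modes; enables RBAC authorization and Node authorization

· --enable-bootstrap-token-auth enables TLS bootstrapping, which is covered later

· --token-auth-file token file

· --service-node-port-range port range allocated to NodePort Services

Logging:

# true: logs go to /var/log/messages by default

--logtostderr=true

# false: logs can be written to a directory of your choice

--logtostderr=false

--log-dir=/opt/kubernetes/logs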
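The 10.0.0.1 entry in the hosts list of the apiserver certificate is tied to --service-cluster-ip-range: Kubernetes assigns the first address of that range to the built-in kubernetes Service, so the certificate has to cover it. The sketch below derives that address from the range so the two settings can be kept in step. The function name is hypothetical, and it assumes the argument is a network address whose last octet is below 255.

```shell
#!/bin/sh
# Print the first usable address of an IPv4 range given as NETWORK/PREFIX,
# for example 10.0.0.0/24 -> 10.0.0.1.
first_service_ip() {
    net="${1%/*}"          # drop the /prefix part
    last="${net##*.}"      # last octet of the network address
    printf '%s.%s\n' "${net%.*}" "$((last + 1))"
}
```

If the Service range is ever changed, the address printed by `first_service_ip` for the new range is the one to list under hosts before regenerating the apiserver certificate.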

7. Create a systemd unit to manage the apiserver

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/opt/kubernetes/cfg/kube-apiserver

ExecStart=/opt/kubernetes/bin/kube-apiserver $KUBE_APISERVER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

vim /usr/lib/systemd/system/kube-apiserver.service

8. Start the service and enable it at boot

systemctl daemon-reload

systemctl enable kube-apiserver

systemctl restart kube-apiserver

9. Check the ports

tcp 0 0 127.0.0.1:8080 0.0.0.0:* LISTEN 5431/kube-apiserver

netstat -lnpt | grep 8080

tcp 0 0 172.16.105.220:6443 0.0.0.0:* LISTEN 5431/kube-apiserver

netstat -lnpt | grep 6443

3. Deploy the Master scheduler component

1. Create the scheduler configuration file

KUBE_SCHEDULER_OPTS="--logtostderr=true \

--v=4 \

--master=127.0.0.1:8080 \

--leader-elect"

vim /opt/kubernetes/cfg/kube-scheduler

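Parameter notes:

· --master address of the local apiserver to connect to

· --leader-elect when several instances of this component are running, elect a leader automatically (HA)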

2. Create a systemd unit to manage the scheduler

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/opt/kubernetes/cfg/kube-scheduler

ExecStart=/opt/kubernetes/bin/kube-scheduler $KUBE_SCHEDULER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

vim /usr/lib/systemd/system/kube-scheduler.service

3. Start the service and enable it at boot

systemctl daemon-reload

systemctl enable kube-scheduler

systemctl restart kube-scheduler

4. Check the process

root 8393 0.5 1.1 45360 21356 ? Ssl 11:23 0:00 /opt/kubernetes/bin/kube-scheduler --logtostderr=true --v=4 --master=127.0.0.1:8080 --leader-elect

ps -aux | grep kube-scheduler

4. Deploy the Master controller-manager component

1. Create the controller-manager configuration file

KUBE_CONTROLLER_MANAGER_OPTS="--logtostderr=true \

--v=4 \

--master=127.0.0.1:8080 \

--leader-elect=true \

--address=127.0.0.1 \

--service-cluster-ip-range=10.0.0.0/24 \

--cluster-name=kubernetes \

--cluster-signing-cert-file=/opt/kubernetes/ssl/ca.pem \

--cluster-signing-key-file=/opt/kubernetes/ssl/ca-key.pem \

--root-ca-file=/opt/kubernetes/ssl/ca.pem \

--service-account-private-key-file=/opt/kubernetes/ssl/ca-key.pem \

--experimental-cluster-signing-duration=87600h0m0s"

vim /opt/kubernetes/cfg/kube-controller-manager

2. Create a systemd unit to manage the controller-manager

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/opt/kubernetes/cfg/kube-controller-manager

ExecStart=/opt/kubernetes/bin/kube-controller-manager $KUBE_CONTROLLER_MANAGER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

vim /usr/lib/systemd/system/kube-controller-manager.service

3. Start the service and enable it at boot

systemctl daemon-reload

systemctl enable kube-controller-manager

systemctl restart kube-controller-manager

4. Check the process

ps -aux | grep controller-manager

5. Check the status of all components with kubectl

NAME STATUS MESSAGE ERROR

controller-manager Healthy ok

scheduler Healthy ok

etcd-2 Healthy {"health":"true"}

etcd-0 Healthy {"health":"true"}

etcd-1 Healthy {"health":"true"}

/opt/kubernetes/bin/kubectl get cs
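When this check is run repeatedly, for example after each restart, it can help to print only the components that are not healthy. A minimal sketch that filters the output format shown above; the function name `unhealthy_components` is hypothetical.

```shell
#!/bin/sh
# Read `kubectl get cs` output on stdin and print the name of every component
# whose STATUS column is not "Healthy". Prints nothing when all are healthy.
unhealthy_components() {
    # NR > 1 skips the header line; $1 is NAME, $2 is STATUS.
    awk 'NR > 1 && $2 != "Healthy" { print $1 }'
}
```

Usage would be `/opt/kubernetes/bin/kubectl get cs | unhealthy_components`; empty output means every component reported Healthy.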

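5. Deploy the kubeconfig files

Master node configuration

1. Bind the kubelet-bootstrap user, which is defined in the token file created earlier, to the system cluster role.

# This grants the minimum permissions the kubelet needs to request a certificate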

/opt/kubernetes/bin/kubectl create clusterrolebinding kubelet-bootstrap \

--clusterrole=system:node-bootstrapper \

--user=kubelet-bootstrap

2. Create the kubeconfig files: in the directory where the Kubernetes certificates were generated, run the following script to generate them:

# Create the kubelet bootstrapping kubeconfig
BOOTSTRAP_TOKEN=674c457d4dcf2eefe4920d7dbb6b0ddc
KUBE_APISERVER="https://172.16.105.220:6443"

# Set the cluster parameters
kubectl config set-cluster kubernetes \
  --certificate-authority=/root/k8s/k8s-cert/ca.pem \
  --embed-certs=true \
  --server=${KUBE_APISERVER} \
  --kubeconfig=bootstrap.kubeconfig

# Set the client authentication parameters
kubectl config set-credentials kubelet-bootstrap \
  --token=${BOOTSTRAP_TOKEN} \
  --kubeconfig=bootstrap.kubeconfig

# Set the context parameters
kubectl config set-context default \
  --cluster=kubernetes \
  --user=kubelet-bootstrap \
  --kubeconfig=bootstrap.kubeconfig

# Set the default context
kubectl config use-context default --kubeconfig=bootstrap.kubeconfig

#----------------------

# Create the kube-proxy kubeconfig file
kubectl config set-cluster kubernetes \
  --certificate-authority=/root/k8s/k8s-cert/ca.pem \
  --embed-certs=true \
  --server=${KUBE_APISERVER} \
  --kubeconfig=kube-proxy.kubeconfig

kubectl config set-credentials kube-proxy \
  --client-certificate=/root/k8s/k8s-cert/kube-proxy.pem \
  --client-key=/root/k8s/k8s-cert/kube-proxy-key.pem \
  --embed-certs=true \
  --kubeconfig=kube-proxy.kubeconfig

kubectl config set-context default \
  --cluster=kubernetes \
  --user=kube-proxy \
  --kubeconfig=kube-proxy.kubeconfig

kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig

vim kubeconfig.sh

3. Run the script

bash kubeconfig.sh

4. Copy the generated kube-proxy.kubeconfig and bootstrap.kubeconfig to the Node machines.

scp bootstrap.kubeconfig kube-proxy.kubeconfig root@172.16.105.230:/opt/kubernetes/cfg/

scp bootstrap.kubeconfig kube-proxy.kubeconfig root@172.16.105.213:/opt/kubernetes/cfg/

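6. Deploy the Node kubelet component

1. Create the directories on the Node

mkdir -p /opt/kubernetes/{cfg,bin,logs,ssl}

2. Copy the following files to the target directory

- Source: /kubernetes/server/bin/kubelet

- Source: /kubernetes/server/bin/kube-proxy

- Copy both files to /opt/kubernetes/bin/ on each Node

3. Create the kubelet configuration file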

KUBELET_OPTS="--logtostderr=false \

--log-dir=/opt/kubernetes/logs/ \

--v=4 \

--hostname-override=172.16.105.213 \

--kubeconfig=/opt/kubernetes/cfg/kubelet.kubeconfig \

--bootstrap-kubeconfig=/opt/kubernetes/cfg/bootstrap.kubeconfig \

--config=/opt/kubernetes/cfg/kubelet.config \

--cert-dir=/opt/kubernetes/ssl \

--pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google-containers/pause-amd64:3.0"

vim /opt/kubernetes/cfg/kubelet

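Parameter notes:

· --hostname-override host name shown for this node in the cluster

· --kubeconfig location of the kubeconfig file, which is generated automatically

· --bootstrap-kubeconfig the bootstrap.kubeconfig file generated earlier

· --cert-dir directory where issued certificates are stored

· --pod-infra-container-image image of the infrastructure container that manages the Pod network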

2. Create the kubelet.config configuration file

kind: KubeletConfiguration

apiVersion: kubelet.config.k8s.io/v1beta1

address: 172.16.105.213

port: 10250

readOnlyPort: 10255

cgroupDriver: cgroupfs

clusterDNS: ["10.0.0.2"]

clusterDomain: cluster.local.

failSwapOn: false

authentication:

  anonymous:

    enabled: true

vim /opt/kubernetes/cfg/kubelet.config

3. Create a systemd unit to manage the kubelet

[Unit]

Description=Kubernetes Kubelet

After=docker.service

Requires=docker.service

[Service]

EnvironmentFile=/opt/kubernetes/cfg/kubelet

ExecStart=/opt/kubernetes/bin/kubelet $KUBELET_OPTS

Restart=on-failure

KillMode=process

[Install]

WantedBy=multi-user.target

vim /usr/lib/systemd/system/kubelet.service

4. Start the service and enable it at boot

systemctl daemon-reload

systemctl enable kubelet.service

systemctl start kubelet.service

5. Check the process

root 24607 0.8 1.7 626848 69140 ? Ssl 16:03 0:05 /opt/kubernetes/bin/kubelet --logtostderr=false --log-dir=/opt/kubernetes/logs/ --v=4 --hostname-override=172.16.105.213 --kubeconfig=/opt/kubernetes/cfg/kubelet.kubeconfig --bootstrap-kubeconfig=/opt/kubernetes/cfg/bootstrap.kubeconfig --config=/opt/kubernetes/cfg/kubelet.config --cert-dir=/opt/kubernetes/ssl --pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google-containers/pause-amd64:3.0

ps -aux | grep kubelet

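6. On the Master, approve the Nodes joining the cluster:

- After the kubelet starts, the node has not yet joined the cluster; it has to be approved manually.

- On the Master, list the Nodes that are requesting a signed certificate:

7. List the Nodes requesting to join the cluster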

NAME AGE REQUESTOR CONDITION

node-csr-7ZHhg19mVh1w2gfJOh55eaBsRisA_wT8EHZQfqCLPLE 21s kubelet-bootstrap Pending

node-csr-weeFsR6VVUNIHyohOgaGvy2Hr6M9qSUIkoGjQ_mUyOo 28s kubelet-bootstrap Pending

kubectl get csr

8. Approve the requests so that the Nodes can join

kubectl certificate approve node-csr-7ZHhg19mVh1w2gfJOh55eaBsRisA_wT8EHZQfqCLPLE

kubectl certificate approve node-csr-weeFsR6VVUNIHyohOgaGvy2Hr6M9qSUIkoGjQ_mUyOo
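With more than a couple of Nodes, copying each request name by hand gets tedious. As a sketch, the pending names could be extracted from the kubectl get csr output shown in step 7; the function name `pending_csrs` is hypothetical, and the filter assumes the four-column output format above.

```shell
#!/bin/sh
# Read `kubectl get csr` output on stdin and print the NAME of every request
# whose CONDITION (last column) is exactly "Pending".
pending_csrs() {
    awk 'NR > 1 && $NF == "Pending" { print $1 }'
}
```

One way to use it is `kubectl get csr | pending_csrs | xargs -r kubectl certificate approve`. It is worth reviewing the printed names first, because approving a request admits that machine into the cluster.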

9. List the nodes that have joined

NAME STATUS ROLES AGE VERSION

172.16.105.213 Ready <none> 42s v1.12.1

172.16.105.230 Ready <none> 57s v1.12.1

kubectl get node

7. Deploy the Node kube-proxy component

1. Create the kube-proxy configuration file

KUBE_PROXY_OPTS="--logtostderr=true \

--v=4 \

--hostname-override=172.16.105.213 \

--cluster-cidr=10.0.0.0/24 \

--kubeconfig=/opt/kubernetes/cfg/kube-proxy.kubeconfig"

vim /opt/kubernetes/cfg/kube-proxy

2. Create a systemd unit to manage kube-proxy

[Unit]

Description=Kubernetes Proxy

After=network.target

[Service]

EnvironmentFile=-/opt/kubernetes/cfg/kube-proxy

ExecStart=/opt/kubernetes/bin/kube-proxy $KUBE_PROXY_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

vim /usr/lib/systemd/system/kube-proxy.service

3. Start the service and enable it at boot

systemctl daemon-reload

systemctl enable kube-proxy

systemctl start kube-proxy

4. Check the process

root 27166 0.3 0.5 41588 21332 ? Ssl 16:16 0:00 /opt/kubernetes/bin/kube-proxy --logtostderr=true --v=4 --hostname-override=172.16.105.213 --cluster-cidr=10.0.0.0/24 --kubeconfig=/opt/kubernetes/cfg/kube-proxy.kubeconfig

ps -aux | grep kube-proxy

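8. Other settings

1. Workaround: bind the anonymous user to a cluster role (this grants cluster-admin to unauthenticated requests, so it is only suitable for a test environment)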

kubectl create clusterrolebinding system:anonymous --clusterrole=cluster-admin --user=system:anonymous

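3.7 Deploying a multi-Master Kubernetes cluster

1. Configure and deploy Master2

- Note: Master2 is configured the same way as the single Master, so the identical steps are skipped here.

- Note: copying the configuration files over unchanged may cause etcd connection problems.

- Note: it is best to run the etcd members on the master nodes.

1. In Master02's configuration file, change the IPs to Master02's own IP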

--bind-address=172.16.105.210

--advertise-address=172.16.105.210

vim kube-apiserver

2. Start the Kubernetes services on Master02

systemctl start kube-apiserver

systemctl start kube-scheduler

systemctl start kube-controller-manager

3. Check the cluster status

NAME STATUS MESSAGE ERROR

etcd-1 Healthy {"health":"true"}

etcd-2 Healthy {"health":"true"}

controller-manager Healthy ok

scheduler Healthy ok

etcd-0 Healthy {"health":"true"}

kubectl get cs

5. Check the etcd connection by listing the nodes

NAME STATUS ROLES AGE VERSION

172.16.105.213 Ready <none> 41h v1.12.1

172.16.105.230 Ready <none> 41h v1.12.1

kubectl get node

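2. Deploy the Nginx load balancer

- Note: keep the system time consistent across machines so that the certificates validate correctly.

- nginx website: http://www.nginx.org

- documentation --> Installing nginx --> packages

1. Copy the official nginx repository definition into /etc/yum.repos.d/nginx.repo and set the CentOS version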

[nginx-stable]

name=nginx stable repo

baseurl=http://nginx.org/packages/centos/7/$basearch/

gpgcheck=1

enabled=1

gpgkey=https://nginx.org/keys/nginx_signing.key

[nginx-mainline]

name=nginx mainline repo

baseurl=http://nginx.org/packages/mainline/centos/7/$basearch/

gpgcheck=1

enabled=0

gpgkey=https://nginx.org/keys/nginx_signing.key

vim /etc/yum.repos.d/nginx.repo

2. Rebuild the yum cache

yum clean all

yum makecache

3. Install nginx

yum install nginx -y

4. Edit the configuration file and add a stream block at the same level as events

events {

worker_connections 1024;

}

stream {

log_format main "$remote_addr $upstream_addr - $time_local $status";

access_log /var/log/nginx/k8s-access.log main;

upstream k8s-apiserver {

server 172.16.105.220:6443;

server 172.16.105.210:6443;

}

server {

listen 172.16.105.231:6443;

proxy_pass k8s-apiserver;

}

}

vim /etc/nginx/nginx.conf

Explanation of the stream block:

# Layer-4 load balancing
stream {
    # Log format
    log_format main "$remote_addr $upstream_addr - $time_local $status";
    # Log file location
    access_log /var/log/nginx/k8s-access.log main;
    # Upstream group; k8s-apiserver is the name of the group
    upstream k8s-apiserver {
        server 172.16.105.220:6443;
        server 172.16.105.210:6443;
    }
    # Listening server
    server {
        # Local IP and port to listen on
        listen 172.16.105.231:6443;
        # Name of the upstream group to forward to; this is layer 4, so no http:// prefix is used
        proxy_pass k8s-apiserver;
    }
}

5. Start nginx so that the configuration takes effect

systemctl start nginx

6. Check the listening port

tcp 0 0 172.16.105.231:6443 0.0.0.0:* LISTEN 19067/nginx: master

netstat -lnpt | grep 6443

8. On every Node, edit the kubeconfig files so that the server address points at the load balancer.

vim bootstrap.kubeconfig

server: https://172.16.105.231:6443

vim kubelet.kubeconfig

server: https://172.16.105.231:6443

vim kube-proxy.kubeconfig

server: https://172.16.105.231:6443
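The same one-line change has to be made in three files on every Node, so it can be scripted. A minimal sketch using sed; the function name `set_kubeconfig_server` is hypothetical, and it assumes each file has a `server:` line as in the kubeconfig files generated earlier.

```shell
#!/bin/sh
# Rewrite the "server:" line of a kubeconfig file, keeping its indentation.
# $1 = kubeconfig file, $2 = new API server URL.
set_kubeconfig_server() {
    file="$1"
    url="$2"
    # '#' is used as the sed delimiter because the URL contains '/'.
    sed "s#^\([[:space:]]*server:\).*#\1 $url#" "$file" > "$file.tmp" && mv "$file.tmp" "$file"
}
```

On a Node this could be run as `for f in bootstrap kubelet kube-proxy; do set_kubeconfig_server /opt/kubernetes/cfg/$f.kubeconfig https://172.16.105.231:6443; done`. Because the file is replaced through a temporary copy, its permissions are worth checking afterwards.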

9. Restart the kubelet and kube-proxy on the Nodes

systemctl restart kubelet

systemctl restart kube-proxy

10. Check the running processes on the Node

root 23226 0.0 0.4 300552 16460 ? Ssl Aug08 0:25 /opt/kubernetes/bin/flanneld --ip-masq --etcd-endpoints=https://172.16.105.220:2379,https://172.16.105.230:2379,https://172.16.105.213:2379 -etcd-cafile=/opt/etcd/ssl/ca.pem -etcd-certfile=/opt/etcd/ssl/server.pem -etcd-keyfile=/opt/etcd/ssl/server-key.pem

root 26986 1.5 1.5 632676 60740 ? Ssl 11:30 0:01 /opt/kubernetes/bin/kubelet --logtostderr=false --log-dir=/opt/kubernetes/logs/ --v=4 --hostname-override=172.16.105.213 --kubeconfig=/opt/kubernetes/cfg/kubelet.kubeconfig --bootstrap-kubeconfig=/opt/kubernetes/cfg/bootstrap.kubeconfig --config=/opt/kubernetes/cfg/kubelet.config --cert-dir=/opt/kubernetes/ssl --pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google-containers/pause-amd64:3.0

root 27584 0.7 0.5 41588 19896 ? Ssl 11:32 0:00 /opt/kubernetes/bin/kube-proxy --logtostderr=true --v=4 --hostname-override=172.16.105.213 --cluster-cidr=10.0.0.0/24 --kubeconfig=/opt/kubernetes/cfg/kube-proxy.kubeconfig

ps -aux | grep kube

11. Restart kube-apiserver on the Master

systemctl restart kube-apiserver

12. Check the Nginx log

172.16.105.213 172.16.105.220:6443 09/Aug/2019:13:34:59 +0800 200

172.16.105.230 172.16.105.220:6443 09/Aug/2019:13:34:59 +0800 200

172.16.105.213 172.16.105.220:6443 09/Aug/2019:13:34:59 +0800 200

172.16.105.230 172.16.105.220:6443 09/Aug/2019:13:34:59 +0800 200

172.16.105.230 172.16.105.220:6443 09/Aug/2019:13:35:00 +0800 200

tail -f /var/log/nginx/k8s-access.log
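To see how requests are spread across the two apiservers, the access log can be summarized per upstream. A small sketch, assuming the log format configured above, in which the second field is the upstream address; the function name `upstream_counts` is hypothetical.

```shell
#!/bin/sh
# Read the k8s access log on stdin and print "COUNT UPSTREAM" for each
# upstream address, sorted by address.
upstream_counts() {
    awk '{ n[$2]++ } END { for (u in n) print n[u], u }' | sort -k2
}
```

Usage would be `upstream_counts < /var/log/nginx/k8s-access.log`. If only one upstream address ever appears, it is worth checking that kube-apiserver is running on the other Master.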

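3. Deploy Nginx2 + keepalived for high availability

- Note: the VIP must be one of the IPs authorized in the certificate; otherwise access through it will fail.

- Note: Nginx2 is installed the same way as the single Nginx above, so the installation is not repeated here; only the key points are covered.

1. Install keepalived on both Nginx1 and Nginx2

yum -y install keepalived

2. Edit the keepalived configuration file on Nginx1 (MASTER)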

! Configuration File for keepalived

global_defs {

# 接收邮件地址

notification_email {

acassen@firewall.loc

failover@firewall.loc

sysadmin@firewall.loc

}

# 邮件发送地址

notification_email_from Alexandre.Cassen@firewall.loc

smtp_server 127.0.0.1

smtp_connect_timeout 30

router_id NGINX_MASTER

} # 通过vrrp协议检查本机nginx服务是否正常

vrrp_script check_nginx {

script "/etc/keepalived/check_nginx.sh"

} vrrp_instance VI_1 {

state MASTER

interface ens32

virtual_router_id 51 # VRRP router ID; unique to each instance

priority 100 # Priority; set 90 on the backup server

advert_int 1 # VRRP advertisement (heartbeat) interval, default 1 second

# Password authentication

authentication {

auth_type PASS

auth_pass 1111

}

# VIP

virtual_ipaddress {

192.168.1.100/24

}

# Use the check script

track_script {

check_nginx

}

}

vim /etc/keepalived/keepalived.conf

3. Edit the keepalived main configuration file on Nginx2 (Slave)

! Configuration File for keepalived

global_defs {

# Notification recipient addresses

notification_email {

acassen@firewall.loc

failover@firewall.loc

sysadmin@firewall.loc

}

# Notification sender address

notification_email_from Alexandre.Cassen@firewall.loc

smtp_server 127.0.0.1

smtp_connect_timeout 30

router_id NGINX_BACKUP

}

# Check whether the local nginx service is healthy

vrrp_script check_nginx {

script "/etc/keepalived/check_nginx.sh"

}

vrrp_instance VI_1 {

state BACKUP

interface ens32

virtual_router_id 51 # VRRP router ID; unique to each instance

priority 90 # Priority; the backup server uses 90

advert_int 1 # VRRP advertisement (heartbeat) interval, default 1 second

# Password authentication

authentication {

auth_type PASS

auth_pass 1111

}

# VIP

virtual_ipaddress {

192.168.1.100/24

}

# Use the check script

track_script {

check_nginx

}

}

vim /etc/keepalived/keepalived.conf
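
The two configurations differ mainly in `state` and `priority`. As a rough illustration of why Nginx1 holds the VIP while both nodes are healthy, the sketch below picks the node with the highest priority from a list. It is a simplification of VRRP and not keepalived's actual code; the function name `elect_master` and the node names `nginx1`/`nginx2` are hypothetical.

```shell
#!/bin/bash
# Simplified illustration of VRRP master selection: highest priority wins.
# elect_master and the node names are hypothetical; real VRRP also handles
# advertisements, timers, and tie-breaking, which are omitted here.
elect_master() {
    # Input: lines of "<node> <priority>" on stdin.
    # Output: the name of the node with the highest priority.
    sort -k2,2nr | head -n 1 | awk '{print $1}'
}

# Both nodes healthy, with the priorities configured above.
printf 'nginx1 100\nnginx2 90\n' | elect_master

# keepalived stopped on nginx1: only nginx2 is still advertising.
printf 'nginx2 90\n' | elect_master
```

The second call corresponds to the failure case: once keepalived on Nginx1 is no longer running, Nginx2 is the only remaining candidate and takes over the VIP.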

4. Create the check script on Nginx1 and Nginx2

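#!/bin/bash

# Count nginx processes, excluding the grep itself and this script's own PID

count=$(ps -ef | grep nginx | egrep -cv "grep|$$")

# If nginx is not running, stop keepalived so the VIP moves to the other node

if [ "$count" -eq 0 ]; then

systemctl stop keepalived

fi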

vim /etc/keepalived/check_nginx.sh
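
The check above counts lines of `ps` output, so any process whose command line merely contains `nginx` is counted too. As an alternative sketch, assuming an exact match on the process name is wanted, the counting step can be isolated in a small function that reads a process list on stdin; `count_procs` is a hypothetical helper name, and the sample list only stands in for real `ps` output.

```shell
#!/bin/bash
# Hypothetical helper: count processes whose command name is exactly "$1".
# Reads one command name per line on stdin (e.g. from `ps -e -o comm=`).
count_procs() {
    # grep -c prints the count; "|| true" hides its non-zero exit on 0 matches.
    grep -cxF -- "$1" || true
}

# Sample process list standing in for `ps -e -o comm=` output.
sample='systemd
nginx
nginx
keepalived'

printf '%s\n' "$sample" | count_procs nginx      # prints 2
printf '%s\n' "$sample" | count_procs httpd      # prints 0
```

In the check script this could be used as `count=$(ps -e -o comm= | count_procs nginx)`, leaving the rest of the script unchanged.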

5. Make the script executable

chmod +x /etc/keepalived/check_nginx.sh

6. Start keepalived on Nginx1 and Nginx2

systemctl start keepalived

7. Check the processes

root 1969 0.0 0.1 118608 1396 ? Ss 09:41 0:00 /usr/sbin/keepalived -D

root 1970 0.0 0.2 120732 2832 ? S 09:41 0:00 /usr/sbin/keepalived -D

root 1971 0.0 0.2 120732 2380 ? S 09:41 0:00 /usr/sbin/keepalived -D

ps aux | grep keepalived

8. Check the virtual IP on the Master

ens32: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000

link/ether 00:0c:29:3d:1c:d0 brd ff:ff:ff:ff:ff:ff

inet 192.168.1.115/24 brd 192.168.1.255 scope global dynamic ens32

valid_lft 5015sec preferred_lft 5015sec

inet 192.168.1.100/24 scope global secondary ens32

valid_lft forever preferred_lft forever

inet6 fe80::4db8:8591:9f94:8837/64 scope link

valid_lft forever preferred_lft forever

ip addr

9. Check the virtual IP on the Slave (it is normal for it to be absent)

ens32: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000

link/ether 00:0c:29:09:b3:c4 brd ff:ff:ff:ff:ff:ff

inet 192.168.1.112/24 brd 192.168.1.255 scope global dynamic ens32

valid_lft 7200sec preferred_lft 7200sec

inet6 fe80::1dbe:11ff:f093:ef49/64 scope link

valid_lft forever preferred_lft forever

ip addr

10. Test

Test VIP failover

1. Stop Nginx on the Master (Nginx1)

pkill nginx

2. Check whether the virtual IP has moved to the Slave (Nginx2)

ip addr

2: ens32: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000

link/ether 00:0c:29:09:b3:c4 brd ff:ff:ff:ff:ff:ff

inet 192.168.1.112/24 brd 192.168.1.255 scope global dynamic ens32

valid_lft 4387sec preferred_lft 4387sec

inet 192.168.1.100/24 scope global secondary ens32

valid_lft forever preferred_lft forever

inet6 fe80::1dbe:11ff:f093:ef49/64 scope link

valid_lft forever preferred_lft forever

3. Start Nginx and keepalived on the Master (Nginx1) to test that the IP moves back

systemctl start nginx

systemctl start keepalived

4. Check the VIP on Nginx1

ip addr

2: ens32: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc pfifo_fast state UP qlen 1000

link/ether 00:0c:29:3d:1c:d0 brd ff:ff:ff:ff:ff:ff

inet 192.168.1.115/24 brd 192.168.1.255 scope global dynamic ens32

valid_lft 7010sec preferred_lft 7010sec

inet 192.168.1.100/24 scope global secondary ens32

valid_lft forever preferred_lft forever

inet6 fe80::4db8:8591:9f94:8837/64 scope link

valid_lft forever preferred_lft forever
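
Reading `ip addr` output by eye is fine for a one-off test. As a minimal sketch, assuming the VIP `192.168.1.100` from the configuration above, the same check can be scripted; `has_vip` is a hypothetical helper that scans `ip addr` output supplied on stdin.

```shell
#!/bin/bash
# Hypothetical helper: succeed if the given IP appears as an "inet" address
# in `ip addr` output supplied on stdin.
has_vip() {
    local vip="$1"
    # Match "inet <vip>/" so that 192.168.1.100 does not match 192.168.1.10.
    grep -qF -- "inet ${vip}/"
}

# Sample lines taken from the Master output shown above.
sample='inet 192.168.1.115/24 brd 192.168.1.255 scope global dynamic ens32
inet 192.168.1.100/24 scope global secondary ens32'

if printf '%s\n' "$sample" | has_vip 192.168.1.100; then
    echo "VIP present"
else
    echo "VIP absent"
fi
```

On a live node the input would come from the interface itself, for example `ip addr show dev ens32 | has_vip 192.168.1.100`.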


11. Modify the proxy listener on Nginx1 and Nginx2

stream {

log_format main "$remote_addr $upstream_addr - $time_local $status";

access_log /var/log/nginx/k8s-access.log main;

upstream k8s-apiserver {

server 192.168.1.108:6443;

server 192.168.1.109:6443;

}

server {

listen 0.0.0.0:6443;

proxy_pass k8s-apiserver;

}

}

vim /etc/nginx/nginx.conf

12. Restart nginx

systemctl restart nginx

13. Connect to K8S: change the API server IP in every Node's configuration files to the VIP

1. Edit the configuration files

vim bootstrap.kubeconfig

server: https://192.168.1.100:6443

vim kube-proxy.kubeconfig

server: https://192.168.1.100:6443
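
Editing each file by hand becomes error-prone with many Nodes. Below is a minimal sketch of the same change using `sed`, assuming each kubeconfig contains a `server: https://<ip>:6443` line as shown above. `set_apiserver` is a hypothetical helper, and the temporary file only stands in for a real kubeconfig.

```shell
#!/bin/bash
# Hypothetical helper: point the "server:" line of a kubeconfig at a new address.
# Usage: set_apiserver <file> <ip> [port]
set_apiserver() {
    local file="$1" ip="$2" port="${3:-6443}"
    # Replace only the URL after "server:", keeping the original indentation.
    sed -i -E "s#^([[:space:]]*server:[[:space:]]*)https://[^[:space:]]+#\1https://${ip}:${port}#" "$file"
}

# Demonstration on a throwaway file rather than a real kubeconfig.
demo=$(mktemp)
printf '    server: https://192.168.1.108:6443\n' > "$demo"
set_apiserver "$demo" 192.168.1.100
cat "$demo"    # prints "    server: https://192.168.1.100:6443"
rm -f "$demo"
```

On a Node this might be called as `set_apiserver /opt/kubernetes/cfg/bootstrap.kubeconfig 192.168.1.100`, and likewise for `kube-proxy.kubeconfig`. Keeping a backup copy of each file before running it is a sensible precaution.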

2. Restart the Node services

systemctl restart kubelet

systemctl restart kube-proxy

3. Check the log on the Master (Nginx1)

192.168.1.111 192.168.1.108:6443 - 22/Aug/2019:11:02:36 +0800 200

192.168.1.111 192.168.1.109:6443 - 22/Aug/2019:11:02:36 +0800 200

192.168.1.110 192.168.1.108:6443 - 22/Aug/2019:11:02:36 +0800 200

192.168.1.110 192.168.1.109:6443 - 22/Aug/2019:11:02:36 +0800 200

192.168.1.111 192.168.1.108:6443 - 22/Aug/2019:11:02:37 +0800 200

tail /var/log/nginx/k8s-access.log -f
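
The alternating upstream addresses in the log indicate that requests are being spread across both API servers. As a rough sketch, the distribution can be tallied with `awk`, assuming the upstream address is the second whitespace-separated field as in the `log_format` above; `count_upstreams` is a hypothetical helper name.

```shell
#!/bin/bash
# Hypothetical helper: count log lines per upstream address (field 2).
# Reads access-log lines on stdin; prints "<upstream> <count>" sorted by address.
count_upstreams() {
    awk '{ hits[$2]++ } END { for (u in hits) print u, hits[u] }' | sort
}

# Sample lines copied from the log output above.
count_upstreams <<'EOF'
192.168.1.111 192.168.1.108:6443 - 22/Aug/2019:11:02:36 +0800 200
192.168.1.111 192.168.1.109:6443 - 22/Aug/2019:11:02:36 +0800 200
192.168.1.110 192.168.1.108:6443 - 22/Aug/2019:11:02:36 +0800 200
192.168.1.110 192.168.1.109:6443 - 22/Aug/2019:11:02:36 +0800 200
192.168.1.111 192.168.1.108:6443 - 22/Aug/2019:11:02:37 +0800 200
EOF
```

Against the real log the call would be `count_upstreams < /var/log/nginx/k8s-access.log`. Roughly equal counts per upstream suggest that both Masters are receiving traffic.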
