阿里云恶意软件检测比赛-第三周-TextCNN

LSTM初试遇到障碍,使用较熟悉的TextCNN。

1.基础知识:

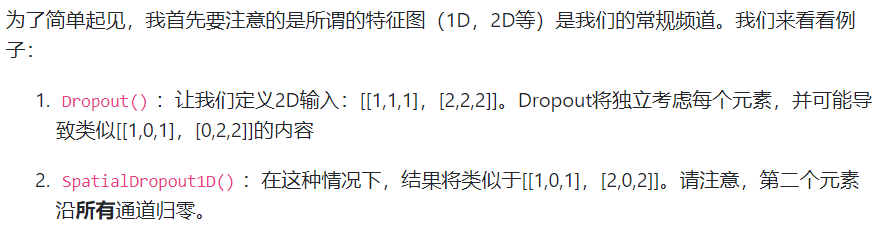

SpatialDropout1D

import pickle

from keras.preprocessing.sequence import pad_sequences

from keras_preprocessing.text import Tokenizer

from keras.models import Sequential, Model

from keras.layers import Dense, Embedding, Activation, merge, Input, Lambda, Reshape, LSTM, RNN, CuDNNLSTM, \

SimpleRNNCell, SpatialDropout1D, Add, Maximum

from keras.layers import Conv1D, Flatten, Dropout, MaxPool1D, GlobalAveragePooling1D, concatenate, AveragePooling1D

from keras import optimizers

from keras import regularizers

from keras.layers import BatchNormalization

from keras.callbacks import TensorBoard, EarlyStopping, ModelCheckpoint

from keras.utils import to_categorical

import time

import numpy as np

from keras import backend as K

from sklearn.model_selection import StratifiedKFold

import pickle

from sklearn.model_selection import train_test_split

from sklearn.feature_extraction.text import TfidfVectorizer

import time

import csv

import xgboost as xgb

import numpy as np

from sklearn.model_selection import StratifiedKFold my_security_train = './my_security_train.pkl'

my_security_test = './my_security_test.pkl'

my_result = './my_result.pkl'

my_result_csv = './my_result.csv'

inputLen=100

# config = K.tf.ConfigProto()

# # 程序按需申请内存

# config.gpu_options.allow_growth = True

# session = K.tf.Session(config = config) # 读取文件到变量中

with open(my_security_train, 'rb') as f:

train_labels = pickle.load(f)

train_apis = pickle.load(f)

with open(my_security_test, 'rb') as f:

test_files = pickle.load(f)

test_apis = pickle.load(f) # print(time.strftime("%Y-%m-%d-%H-%M-%S", time.localtime()))

# tensorboard = TensorBoard('./Logs/', write_images=1, histogram_freq=1)

# print(train_labels)

# 将标签转换为空格相隔的一维数组

train_labels = np.asarray(train_labels)

# print(train_labels) tokenizer = Tokenizer(num_words=None,

filters='!"#$%&()*+,-./:;<=>?@[\]^_`{|}~\t\n',

lower=True,

split=" ",

char_level=False)

# print(train_apis)

# 通过训练和测试数据集丰富取词器的字典,方便后续操作

tokenizer.fit_on_texts(train_apis)

# print(train_apis)

# print(test_apis)

tokenizer.fit_on_texts(test_apis)

# print(test_apis)

# print(tokenizer.word_index)

# #获取目前提取词的字典信息

# # vocal = tokenizer.word_index

train_apis = tokenizer.texts_to_sequences(train_apis)

# 通过字典信息将字符转换为对应的数字

test_apis = tokenizer.texts_to_sequences(test_apis)

# print(test_apis)

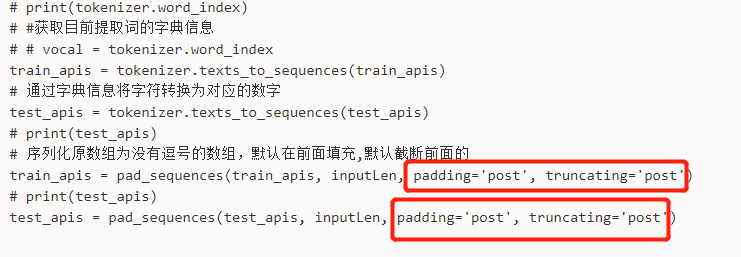

# 序列化原数组为没有逗号的数组,默认在前面填充,默认截断前面的

train_apis = pad_sequences(train_apis, inputLen, padding='post', truncating='post')

# print(test_apis)

test_apis = pad_sequences(test_apis, inputLen, padding='post', truncating='post') # print(test_apis) def SequenceModel():

# Sequential()是序列模型,其实是堆叠模型,可以在它上面堆砌网络形成一个复杂的网络结构

model = Sequential()

model.add(Dense(32, activation='relu', input_dim=6000))

model.add(Dense(8, activation='softmax'))

return model def lstm():

my_inpuy = Input(shape = (6000,), dtype = 'float64')

#在网络第一层,起降维的作用

emb = Embedding(len(tokenizer.word_index)+1, 256, input_length=6000)

emb = emb(my_inpuy)

net = Conv1D(16, 3, padding='same', kernel_initializer='glorot_uniform')(emb)

net = BatchNormalization()(net)

net = Activation('relu')(net)

net = Conv1D(32, 3, padding='same', kernel_initializer='glorot_uniform')(net)

net = BatchNormalization()(net)

net = Activation('relu')(net)

net = MaxPool1D(pool_size=4)(net) net1 = Conv1D(16, 4, padding='same', kernel_initializer='glorot_uniform')(emb)

net1 = BatchNormalization()(net1)

net1 = Activation('relu')(net1)

net1 = Conv1D(32, 4, padding='same', kernel_initializer='glorot_uniform')(net1)

net1 = BatchNormalization()(net1)

net1 = Activation('relu')(net1)

net1 = MaxPool1D(pool_size=4)(net1) net2 = Conv1D(16, 5, padding='same', kernel_initializer='glorot_uniform')(emb)

net2 = BatchNormalization()(net2)

net2 = Activation('relu')(net2)

net2 = Conv1D(32, 5, padding='same', kernel_initializer='glorot_uniform')(net2)

net2 = BatchNormalization()(net2)

net2 = Activation('relu')(net2)

net2 = MaxPool1D(pool_size=4)(net2) net = concatenate([net, net1, net2], axis=-1)

net = CuDNNLSTM(256)(net)

net = Dense(8, activation = 'softmax')(net)

model = Model(inputs=my_inpuy, outputs=net)

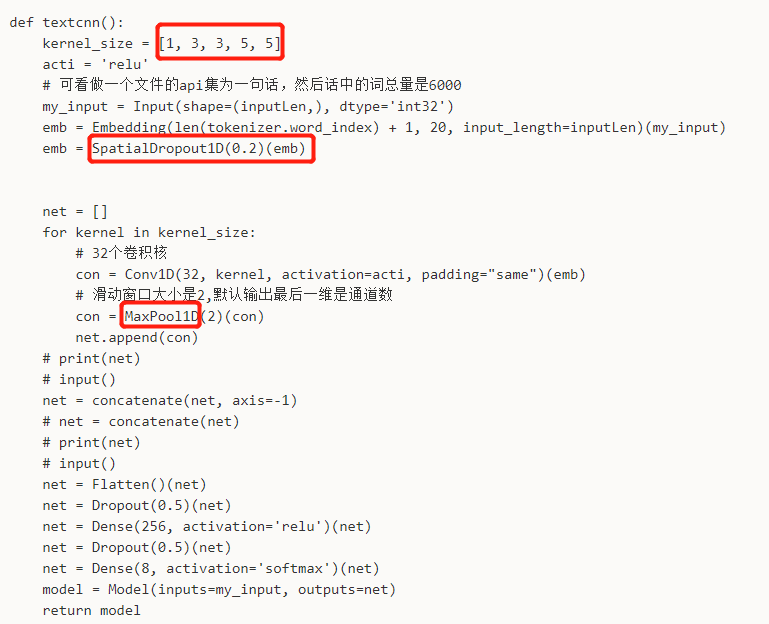

return model def textcnn():

kernel_size = [1, 3, 3, 5, 5]

acti = 'relu'

#可看做一个文件的api集为一句话,然后话中的词总量是6000

my_input = Input(shape=(inputLen,), dtype='int32')

emb = Embedding(len(tokenizer.word_index) + 1, 5, input_length=inputLen)(my_input)

emb = SpatialDropout1D(0.2)(emb) net = []

for kernel in kernel_size:

# 32个卷积核

con = Conv1D(32, kernel, activation=acti, padding="same")(emb)

# 滑动窗口大小是2,默认输出最后一维是通道数

con = MaxPool1D(2)(con)

net.append(con)

# print(net)

# input()

net = concatenate(net, axis =-1)

# net = concatenate(net)

# print(net)

# input()

net = Flatten()(net)

net = Dropout(0.5)(net)

net = Dense(256, activation='relu')(net)

net = Dropout(0.5)(net)

net = Dense(8, activation='softmax')(net)

model = Model(inputs=my_input, outputs=net)

return model # model = SequenceModel()

model = textcnn() # metrics默认只有loss,加accuracy后在model.evaluate(...)的返回值即有accuracy结果

model.compile(optimizer='adam', loss='sparse_categorical_crossentropy', metrics=['accuracy'])

# print(train_apis.shape)

# print(train_labels.shape)

# 将训练集切分成训练和验证集

skf = StratifiedKFold(n_splits=5)

for i, (train_index, valid_index) in enumerate(skf.split(train_apis, train_labels)):

model.fit(train_apis[train_index], train_labels[train_index], epochs=10, batch_size=1000,

validation_data=(train_apis[valid_index], train_labels[valid_index]))

print(train_index, valid_index) # loss, acc = model.evaluate(train_apis, train_labels)

# print(loss)

# print(acc)

# print(model.predict(train_apis))

test_apis = model.predict(test_apis)

# print(test_files)

# print(test_apis) with open(my_result, 'wb') as f:

pickle.dump(test_files, f)

pickle.dump(test_apis, f) # print(len(test_files))

# print(len(test_apis)) result = []

for i in range(len(test_files)):

# # print(test_files[i])

# #之前test_apis不带逗号的格式是矩阵格式,现在tolist转为带逗号的列表格式

# print(test_apis[i])

# print(test_apis[i].tolist())

# result.append(test_files[i])

# result.append(test_apis[i])

tmp = []

a = test_apis[i].tolist()

tmp.append(test_files[i])

# extend相比于append可以添加多个值

tmp.extend(a)

# print(tmp)

result.append(tmp)

# print(1)

# print(result) with open(my_result_csv, 'w') as f:

# f.write([1,2,3])

result_csv = csv.writer(f)

result_csv.writerow(["file_id", "prob0", "prob1", "prob2", "prob3", "prob4", "prob5", "prob6", "prob7"])

result_csv.writerows(result)

确定好它的原始文件api序列最大长度:13264587

import pickle

from keras.preprocessing.sequence import pad_sequences

from keras_preprocessing.text import Tokenizer

from keras.models import Sequential, Model

from keras.layers import Dense, Embedding, Activation, merge, Input, Lambda, Reshape, LSTM, RNN, CuDNNLSTM, \

SimpleRNNCell, SpatialDropout1D, Add, Maximum

from keras.layers import Conv1D, Flatten, Dropout, MaxPool1D, GlobalAveragePooling1D, concatenate, AveragePooling1D

from keras import optimizers

from keras import regularizers

from keras.layers import BatchNormalization

from keras.callbacks import TensorBoard, EarlyStopping, ModelCheckpoint

from keras.utils import to_categorical

import time

import numpy as np

from keras import backend as K

from sklearn.model_selection import StratifiedKFold

import pickle

from sklearn.model_selection import train_test_split

from sklearn.feature_extraction.text import TfidfVectorizer

import time

import csv

import xgboost as xgb

import numpy as np

from sklearn.model_selection import StratifiedKFold my_security_train = './my_security_train.pkl'

my_security_test = './my_security_test.pkl'

my_result = './my_result1.pkl'

my_result_csv = './my_result1.csv'

inputLen = 5000

# config = K.tf.ConfigProto()

# # 程序按需申请内存

# config.gpu_options.allow_growth = True

# session = K.tf.Session(config = config) # 读取文件到变量中

with open(my_security_train, 'rb') as f:

train_labels = pickle.load(f)

train_apis = pickle.load(f)

with open(my_security_test, 'rb') as f:

test_files = pickle.load(f)

test_apis = pickle.load(f) # print(time.strftime("%Y-%m-%d-%H-%M-%S", time.localtime()))

# tensorboard = TensorBoard('./Logs/', write_images=1, histogram_freq=1)

# print(train_labels)

# 将标签转换为空格相隔的一维数组

train_labels = np.asarray(train_labels)

# print(train_labels) tokenizer = Tokenizer(num_words=None,

filters='!"#$%&()*+,-./:;<=>?@[\]^_`{|}~\t\n',

lower=True,

split=" ",

char_level=False)

# print(train_apis)

# 通过训练和测试数据集丰富取词器的字典,方便后续操作

tokenizer.fit_on_texts(train_apis)

# print(train_apis)

# print(test_apis)

tokenizer.fit_on_texts(test_apis)

# print(test_apis)

# print(tokenizer.word_index)

# #获取目前提取词的字典信息

# # vocal = tokenizer.word_index

train_apis = tokenizer.texts_to_sequences(train_apis)

# 通过字典信息将字符转换为对应的数字

test_apis = tokenizer.texts_to_sequences(test_apis)

# print(test_apis)

# 序列化原数组为没有逗号的数组,默认在前面填充,默认截断前面的

train_apis = pad_sequences(train_apis, inputLen, padding='post', truncating='post')

# print(test_apis)

test_apis = pad_sequences(test_apis, inputLen, padding='post', truncating='post') # print(test_apis) def SequenceModel():

# Sequential()是序列模型,其实是堆叠模型,可以在它上面堆砌网络形成一个复杂的网络结构

model = Sequential()

model.add(Dense(32, activation='relu', input_dim=6000))

model.add(Dense(8, activation='softmax'))

return model def lstm():

my_inpuy = Input(shape=(6000,), dtype='float64')

# 在网络第一层,起降维的作用

emb = Embedding(len(tokenizer.word_index) + 1, 5, input_length=6000)

emb = emb(my_inpuy)

net = Conv1D(16, 3, padding='same', kernel_initializer='glorot_uniform')(emb)

net = BatchNormalization()(net)

net = Activation('relu')(net)

net = Conv1D(32, 3, padding='same', kernel_initializer='glorot_uniform')(net)

net = BatchNormalization()(net)

net = Activation('relu')(net)

net = MaxPool1D(pool_size=4)(net) net1 = Conv1D(16, 4, padding='same', kernel_initializer='glorot_uniform')(emb)

net1 = BatchNormalization()(net1)

net1 = Activation('relu')(net1)

net1 = Conv1D(32, 4, padding='same', kernel_initializer='glorot_uniform')(net1)

net1 = BatchNormalization()(net1)

net1 = Activation('relu')(net1)

net1 = MaxPool1D(pool_size=4)(net1) net2 = Conv1D(16, 5, padding='same', kernel_initializer='glorot_uniform')(emb)

net2 = BatchNormalization()(net2)

net2 = Activation('relu')(net2)

net2 = Conv1D(32, 5, padding='same', kernel_initializer='glorot_uniform')(net2)

net2 = BatchNormalization()(net2)

net2 = Activation('relu')(net2)

net2 = MaxPool1D(pool_size=4)(net2) net = concatenate([net, net1, net2], axis=-1)

net = CuDNNLSTM(256)(net)

net = Dense(8, activation='softmax')(net)

model = Model(inputs=my_inpuy, outputs=net)

return model def textcnn():

kernel_size = [1, 3, 3, 5, 5]

acti = 'relu'

# 可看做一个文件的api集为一句话,然后话中的词总量是6000

my_input = Input(shape=(inputLen,), dtype='int32')

emb = Embedding(len(tokenizer.word_index) + 1, 20, input_length=inputLen)(my_input)

emb = SpatialDropout1D(0.2)(emb) net = []

for kernel in kernel_size:

# 32个卷积核

con = Conv1D(32, kernel, activation=acti, padding="same")(emb)

# 滑动窗口大小是2,默认输出最后一维是通道数

con = MaxPool1D(2)(con)

net.append(con)

# print(net)

# input()

net = concatenate(net, axis=-1)

# net = concatenate(net)

# print(net)

# input()

net = Flatten()(net)

net = Dropout(0.5)(net)

net = Dense(256, activation='relu')(net)

net = Dropout(0.5)(net)

net = Dense(8, activation='softmax')(net)

model = Model(inputs=my_input, outputs=net)

return model test_result = np.zeros(shape=(len(test_apis),8)) # print(train_apis.shape)

# print(train_labels.shape)

# 5折交叉验证,将训练集切分成训练和验证集

skf = StratifiedKFold(n_splits=5)

for i, (train_index, valid_index) in enumerate(skf.split(train_apis, train_labels)):

# print(i)

# model = SequenceModel()

model = textcnn() # metrics默认只有loss,加accuracy后在model.evaluate(...)的返回值即有accuracy结果

model.compile(optimizer='adam', loss='sparse_categorical_crossentropy', metrics=['accuracy'])

#模型保存规则

model_save_path = './my_model/my_model_{}.h5'.format(str(i))

checkpoint = ModelCheckpoint(model_save_path, save_best_only=True, save_weights_only=True)

#早停规则

earlystop = EarlyStopping(monitor='val_loss', min_delta=0, patience=5, verbose=0, mode='min', baseline=None,

restore_best_weights=True)

#训练的过程会保存模型并早停

model.fit(train_apis[train_index], train_labels[train_index], epochs=100, batch_size=1000,

validation_data=(train_apis[valid_index], train_labels[valid_index]), callbacks=[checkpoint, earlystop])

model.load_weights(model_save_path)

# print(train_index, valid_index) test_tmpapis = model.predict(test_apis)

test_result = test_result + test_tmpapis # loss, acc = model.evaluate(train_apis, train_labels)

# print(loss)

# print(acc)

# print(model.predict(train_apis)) # print(test_files)

# print(test_apis)

test_result = test_result/5.0

with open(my_result, 'wb') as f:

pickle.dump(test_files, f)

pickle.dump(test_result, f) # print(len(test_files))

# print(len(test_apis)) result = []

for i in range(len(test_files)):

# # print(test_files[i])

# #之前test_apis不带逗号的格式是矩阵格式,现在tolist转为带逗号的列表格式

# print(test_apis[i])

# print(test_apis[i].tolist())

# result.append(test_files[i])

# result.append(test_apis[i])

tmp = []

a = test_result[i].tolist()

tmp.append(test_files[i])

# extend相比于append可以添加多个值

tmp.extend(a)

# print(tmp)

result.append(tmp)

# print(1)

# print(result) with open(my_result_csv, 'w') as f:

# f.write([1,2,3])

result_csv = csv.writer(f)

result_csv.writerow(["file_id", "prob0", "prob1", "prob2", "prob3", "prob4", "prob5", "prob6", "prob7"])

result_csv.writerows(result)

可知,增加了早停机制后,约20代程序就被截止,valid不饱和。改进方案呢?

尝试参考网上的,前向填充,这个影响大吗?

阿里云恶意软件检测比赛-第三周-TextCNN的更多相关文章

- 确保数据零丢失!阿里云数据库RDS for MySQL 三节点企业版正式商用

2019年10月23号,阿里云数据库RDS for MySQL 三节点企业版正式商用,RDS for MySQL三节点企业版基于Paxos协议实现数据库复制,每个事务日志确保至少同步两个节点,实现任意 ...

- 阿里云 Aliplayer高级功能介绍(三):多字幕

基本介绍 国际化场景下面,播放器支持多字幕,可以有效解决视频的传播障碍难题,该功能适用于视频内容在全球范围内推广,阿里云的媒体处理服务提供接口可以生成多字幕,现在先看一下具体的效果: WebVTT格式 ...

- 记一次阿里云服务器被用作DDOS攻击肉鸡

事件描述:阿里云报警 ——检测该异常事件意味着您服务器上开启了"Chargen/DNS/NTP/SNMP/SSDP"这些UDP端口服务,黑客通过向该ECS发送伪造源IP和源端口的恶 ...

- 阿里云 ecs win2016 FileZilla Server

Windows Server 2016 下使用 FileZilla Server 安装搭建 FTP 服务 一.安装 Filezilla Server 下载最新版本的 Filezilla Server ...

- 阿里云配置通用服务的坑 ssh: connect to host 47.103.101.102 port 22: Connection refused

1.~ wjw$ ssh root@47.103.101.102 ssh: connect to host 47.103.101.102 port 22: Connection refused ssh ...

- 阿里云入选Gartner 2019 WAF魔力象限,唯一亚太厂商!

近期,在全球权威咨询机构Gartner发布的2019 Web应用防火墙魔力象限中,阿里云Web应用防火墙成功入围,是亚太地区唯一一家进入该魔力象限的厂商! Web应用防火墙,简称WAF.在保护Web应 ...

- SaaS加速器,到底加速了谁? 剖析阿里云的SaaS战略:企业和ISV不可错过的好文

过去二十年,中国诞生了大批To C的高市值互联网巨头,2C的领域高速发展,而2B领域一直不温不火.近两年来,在C端流量饱和,B端数字化转型来临的背景下,中国越来越多的科技公司已经慢慢将触角延伸到了B端 ...

- 有关阿里云对SaaS行业的思考,看这一篇就够了

过去二十年,随着改革开放的深化,以及中国的人口红利等因素,中国诞生了大批To C的高市值互联网巨头,2C的领域高速发展,而2B领域一直不温不火.近两年来,在C端流量饱和,B端数字化转型来临的背景下,中 ...

- 专访阿里云资深技术专家黄省江:中国SaaS公司的成功之路

笔者采访中国SaaS厂商10多年,深感面对获客成本巨大.产品技术与功能成熟度不足.项目经营模式难以大规模复制.客户观念有待转变等诸多挑战,很多中国SaaS公司的经营状况都不容乐观. 7月26日,阿里云 ...

随机推荐

- Go interface 操作示例

原文链接:Go interface操作示例 特点: 1. interface 是一种类型 interface 是一种具有一组方法的类型,这些方法定义了 interface 的行为.go 允许不带任何方 ...

- cinderclient命令行源码解析

一.简介 openstack的各个模块中,都有相应的客户端模块实现,其作用是为用户访问具体模块提供了接口,并且也作为模块之间相互访问的途径.Cinder也一样,有着自己的cinder-client. ...

- Prefrontal cortex as a meta-reinforcement learning system

郑重声明:原文参见标题,如有侵权,请联系作者,将会撤销发布! Nature Neuroscience, 2018 Abstract 在过去的20年中,基于奖励的学习的神经科学研究已经集中在经典模型上, ...

- 6套MSP430开发板资料共享 | 免费下载

目录 1-MSP430 开发板I 2-MSP430mini板资料 3-MSP430F149开发板资料 4-KB-1B光盘资料 5-LT-1B型MSP430学习板光盘 6-MSP-EXP430F552 ...

- python爬虫之多线程、多进程+代码示例

python爬虫之多线程.多进程 使用多进程.多线程编写爬虫的代码能有效的提高爬虫爬取目标网站的效率. 一.什么是进程和线程 引用廖雪峰的官方网站关于进程和线程的讲解: 进程:对于操作系统来说,一个任 ...

- 消息型中间件之RabbitMQ集群

在上一篇博客中我们简单的介绍了下rabbitmq简介,安装配置相关指令的说明以及rabbitmqctl的相关子命令的说明:回顾请参考https://www.cnblogs.com/qiuhom-187 ...

- 为什么网站URL需要设置为静态化

http://www.wocaoseo.com/thread-95-1-1.html 为什么网站URL需要静态化?网站url静态化的好处是什么?现在很多网站的链接都是静态规的链接,但是网站 ...

- e3mall商城的归纳总结10之freemarker的使用和sso单点登录系统的简介

敬给读者的话 本节主要讲解freemarker的使用以及sso单点登录系统,两种技术都是比较先进的技术,freemarker是一个模板,主要生成一个静态静态,能更快的响应给用户,提高用户体验. 而ss ...

- 学完Python,我决定熬夜整理这篇总结

目录 了解Python Python基础语法 Python数据结构 数值 字符串 列表 元组 字典 集合 Python控制流 if 判断语句 for 循环语句 while 循环语句 break 和 c ...

- P4719 【模板】"动态 DP"&动态树分治

题目描述 给定一棵 n 个点的树,点带点权. 有 m 次操作,每次操作给定 x,y,表示修改点 x 的权值为 y. 你需要在每次操作之后求出这棵树的最大权独立集的权值大小. 输入格式 第一行有两个整数 ...