Kubernetes Binary Deployment (2): Cluster Deployment (Multiple Master Nodes Load-Balanced by HAProxy + Keepalived)

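0. Preface

- Following on from the previous article, this one works through deploying a multi-node Kubernetes cluster

- The Master nodes are load-balanced with haproxy + keepalived

1. Lab environment

- The lab environment consists of 5 virtual machines with the IP addresses 192.168.1.65, 192.168.1.66, 192.168.1.67, 192.168.1.68, and 192.168.1.69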

1.1 Node allocation

- LB nodes:

- lb1: 192.168.1.65

- lb2: 192.168.1.66

- Master nodes:

- master1: 192.168.1.67

- master2: 192.168.1.68

- master3: 192.168.1.69

- Node (worker) nodes:

- node1: 192.168.1.67

- node2: 192.168.1.68

- node3: 192.168.1.69

- Etcd nodes:

- etcd01: 192.168.1.67

- etcd02: 192.168.1.68

- etcd03: 192.168.1.69

- To save computing resources, each of the three Kubernetes machines hosts one Master node, one Node node, and one Etcd node at the same time

2. Deployment procedure

2.1 Building from source

- Install the Go environment

- Kubernetes v1.18 requires Go 1.13

$ wget https://dl.google.com/go/go1.13.8.linux-amd64.tar.gz

$ tar -zxvf go1.13.8.linux-amd64.tar.gz -C /usr/local/

- Add the following environment variables to ~/.bashrc or ~/.zshrc

# GOROOT

export GOROOT=/usr/local/go

# GOPATH

export GOPATH=$HOME/go

# GOROOT bin

export PATH=$PATH:$GOROOT/bin

# GOPATH bin

export PATH=$PATH:$GOPATH/bin

- Reload the environment variables

$ source ~/.bashrc
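Because Kubernetes v1.18 is stated above to need Go 1.13, a quick check after reloading the shell can catch a wrong toolchain early. The following is only a sketch: the helper name go_is_1_13 is hypothetical, and it assumes that go version prints its usual "go version goX.Y.Z os/arch" line.

```shell
# Hypothetical helper (not part of the original scripts): succeed only when
# the given `go version` output reports a Go 1.13.x toolchain.
go_is_1_13() {
    case "$1" in
        *" go1.13 "*|*" go1.13."*) return 0 ;;
        *) return 1 ;;
    esac
}

# Usage: the check only runs when go is actually on PATH.
if command -v go >/dev/null 2>&1; then
    if go_is_1_13 "$(go version)"; then
        echo "Go 1.13.x detected"
    else
        echo "unexpected toolchain: $(go version)"
    fi
else
    echo "go is not on PATH; check GOROOT and PATH"
fi
```

If the last message appears, the exports above were most likely added to a different rc file than the one that was sourced.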

- Download the latest Kubernetes source code from GitHub

$ git clone https://github.com/kubernetes/kubernetes.git

- Build the binaries from the root of the cloned repository

$ cd kubernetes

$ make KUBE_BUILD_PLATFORMS=linux/amd64

+++ [ ::] Building go targets for linux/amd64:

./vendor/k8s.io/code-generator/cmd/deepcopy-gen

+++ [ ::] Building go targets for linux/amd64:

./vendor/k8s.io/code-generator/cmd/defaulter-gen

+++ [ ::] Building go targets for linux/amd64:

./vendor/k8s.io/code-generator/cmd/conversion-gen

+++ [ ::] Building go targets for linux/amd64:

./vendor/k8s.io/kube-openapi/cmd/openapi-gen

+++ [ ::] Building go targets for linux/amd64:

./vendor/github.com/go-bindata/go-bindata/go-bindata

+++ [ ::] Building go targets for linux/amd64:

cmd/kube-proxy

cmd/kube-apiserver

cmd/kube-controller-manager

cmd/kubelet

cmd/kubeadm

cmd/kube-scheduler

vendor/k8s.io/apiextensions-apiserver

cluster/gce/gci/mounter

cmd/kubectl

cmd/gendocs

cmd/genkubedocs

cmd/genman

cmd/genyaml

cmd/genswaggertypedocs

cmd/linkcheck

vendor/github.com/onsi/ginkgo/ginkgo

test/e2e/e2e.test

cluster/images/conformance/go-runner

cmd/kubemark

vendor/github.com/onsi/ginkgo/ginkgo

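- KUBE_BUILD_PLATFORMS specifies the target platform of the compiled binaries, for example darwin/amd64, linux/amd64, or windows/amd64

- Running make cross builds the binaries for all platforms

- Build locally, then upload the result to the servers

- The generated _output directory holds the build output; the core binaries are in _output/local/bin/linux/amd64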

$ pwd

/root/Coding/kubernetes/_output/local/bin/linux/amd64

$ ls

apiextensions-apiserver genman go-runner kube-scheduler kubemark

e2e.test genswaggertypedocs kube-apiserver kubeadm linkcheck

gendocs genyaml kube-controller-manager kubectl mounter

genkubedocs ginkgo kube-proxy kubelet

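- Of these, kube-apiserver, kube-scheduler, kube-controller-manager, kubectl, kube-proxy, and kubelet are the binaries needed for the installation

2.2 Installing docker

- Install docker on the three virtual machines of the Kubernetes cluster: 192.168.1.67, 192.168.1.68, and 192.168.1.69

- For installation details, see the official documentation

2.3 Downloading the installation scripts

- All scripts used in the rest of the deployment have been uploaded to a GitHub repository; download them if you are interested

- On master1, master2, and master3, create the working directory k8s and the script directory k8s/scripts, then copy all scripts from the repository into the script directory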

$ git clone https://github.com/wangao1236/k8s_cluster_deploy.git

$ chmod +x k8s_cluster_deploy/scripts/*.sh

$ mkdir -p k8s/scripts

$ cp k8s_cluster_deploy/scripts/* k8s/scripts

2.4 Installing cfssl

- Install cfssl on master1, master2, and master3

- On all Kubernetes nodes, install cfssl either by running the k8s/scripts/cfssl.sh script or by running the following commands:

$ curl -L https://pkg.cfssl.org/R1.2/cfssl_linux-amd64 -o /usr/local/bin/cfssl

$ curl -L https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64 -o /usr/local/bin/cfssljson

$ curl -L https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64 -o /usr/local/bin/cfssl-certinfo

$ chmod +x /usr/local/bin/cfssl /usr/local/bin/cfssljson /usr/local/bin/cfssl-certinfo

- The k8s/scripts/cfssl.sh script reads as follows:

$ cat k8s_cluster_deploy/scripts/cfssl.sh

curl -L https://pkg.cfssl.org/R1.2/cfssl_linux-amd64 -o /usr/local/bin/cfssl

curl -L https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64 -o /usr/local/bin/cfssljson

curl -L https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64 -o /usr/local/bin/cfssl-certinfo

chmod +x /usr/local/bin/cfssl /usr/local/bin/cfssljson /usr/local/bin/cfssl-certinfo
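After the downloads it can help to confirm that all three tools are really reachable before any certificate is generated. A minimal sketch, assuming /usr/local/bin is on PATH; the function name missing_tools is hypothetical and not part of the repository scripts.

```shell
# Hypothetical helper: print every named tool that is not found on PATH.
# Prints nothing when all of them are available.
missing_tools() {
    for tool in "$@"; do
        command -v "$tool" >/dev/null 2>&1 || printf '%s\n' "$tool"
    done
}

# Usage: list whichever of the three cfssl binaries is still missing.
missing=$(missing_tools cfssl cfssljson cfssl-certinfo)
if [ -z "$missing" ]; then
    echo "cfssl tools are installed"
else
    echo "missing tools:"
    echo "$missing"
fi
```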

2.5 Installing etcd

- On one of the machines (for example etcd01), create the target directories

$ mkdir -p /opt/etcd/{cfg,bin,ssl}

- Download the etcd release package (v3.3.18 is used here)

$ wget https://github.com/etcd-io/etcd/releases/download/v3.3.18/etcd-v3.3.18-linux-amd64.tar.gz

$ tar -zxvf etcd-v3.3.18-linux-amd64.tar.gz

$ cp etcd-v3.3.18-linux-amd64/etcdctl etcd-v3.3.18-linux-amd64/etcd /opt/etcd/bin

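- Create the directory k8s/etcd-cert, where k8s is the root directory for deployment files and scripts, and etcd-cert temporarily holds the certificates for etcd HTTPS

$ mkdir -p k8s/etcd-cert

- Copy the etcd-cert.sh script into the etcd-cert directory

$ cp k8s/scripts/etcd-cert.sh k8s/etcd-cert

- The script reads as follows: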

cat > ca-config.json <<EOF

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"www": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

EOF

cat > ca-csr.json <<EOF

{

"CN": "etcd CA",

"key": {

"algo": "rsa",

"size":

},

"names": [

{

"C": "CN",

"L": "Beijing",

"ST": "Beijing"

}

]

}

EOF

cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

#-----------------------

cat > server-csr.json <<EOF

{

"CN": "etcd",

"hosts": [

"127.0.0.1",

"192.168.1.65",

"192.168.1.66",

"192.168.1.67",

"192.168.1.68",

"192.168.1.69"

],

"key": {

"algo": "rsa",

"size":

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing"

}

]

}

EOF

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=www server-csr.json | cfssljson -bare server

- Remember to change the hosts list in the server-csr.json part to 127.0.0.1 plus all IP addresses of the virtual machine cluster

- Run the script

$ ./etcd-cert.sh

// :: [INFO] generating a new CA key and certificate from CSR

// :: [INFO] generate received request

// :: [INFO] received CSR

// :: [INFO] generating key: rsa-

// :: [INFO] encoded CSR

// :: [INFO] signed certificate with serial number

// :: [INFO] generate received request

// :: [INFO] received CSR

// :: [INFO] generating key: rsa-

// :: [INFO] encoded CSR

// :: [INFO] signed certificate with serial number

// :: [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1., from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2. ("Information Requirements").

- Copy the certificates

$ cp *.pem /opt/etcd/ssl

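- Run the k8s/scripts/etcd.sh script. The first argument is the etcd node name, the second is the IP address of the node being started, and the third is the list of all members of the Etcd cluster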

$ ./k8s/scripts/etcd.sh etcd01 192.168.1.67 etcd01=https://192.168.1.67:2380,etcd02=https://192.168.1.68:2380,etcd03=https://192.168.1.69:2380
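The third argument is easy to mistype by hand. As an illustration only, it could be assembled from name=ip pairs; the function build_initial_cluster is hypothetical, and the peer port 2380 and the https scheme are taken from the command above.

```shell
# Hypothetical helper: turn "name=ip" pairs into the comma-separated
# "name=https://ip:2380" list that etcd.sh takes as its third argument.
build_initial_cluster() {
    out=""
    for pair in "$@"; do
        name=${pair%%=*}   # text before the first '='
        ip=${pair#*=}      # text after the first '='
        out="${out:+$out,}${name}=https://${ip}:2380"
    done
    printf '%s\n' "$out"
}

# Usage with the three etcd nodes of this article.
build_initial_cluster etcd01=192.168.1.67 etcd02=192.168.1.68 etcd03=192.168.1.69
```

The printed string can then be passed unchanged as the third argument on every node, so all members start with an identical cluster definition.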

- The k8s/scripts/etcd.sh script reads as follows:

#!/bin/bash

# example: ./etcd.sh etcd01 192.168.1.10 etcd01=https://192.168.1.10:2380,etcd02=https://192.168.1.11:2380,etcd03=https://192.168.1.12:2380

ETCD_NAME=$1

ETCD_IP=$2

ETCD_CLUSTER=$3

systemctl stop etcd

systemctl disable etcd

WORK_DIR=/opt/etcd

cat <<EOF >$WORK_DIR/cfg/etcd

#[Member]

ETCD_NAME="${ETCD_NAME}"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://${ETCD_IP}:2380"

ETCD_LISTEN_CLIENT_URLS="https://${ETCD_IP}:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://${ETCD_IP}:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://${ETCD_IP}:2379"

ETCD_INITIAL_CLUSTER="${ETCD_CLUSTER}"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

EOF

cat <<EOF >/usr/lib/systemd/system/etcd.service

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target

[Service]

Type=notify

EnvironmentFile=${WORK_DIR}/cfg/etcd

ExecStart=${WORK_DIR}/bin/etcd \

--name=\${ETCD_NAME} \

--data-dir=\${ETCD_DATA_DIR} \

--listen-peer-urls=\${ETCD_LISTEN_PEER_URLS} \

--listen-client-urls=\${ETCD_LISTEN_CLIENT_URLS},http://127.0.0.1:2379 \

--advertise-client-urls=\${ETCD_ADVERTISE_CLIENT_URLS} \

--initial-advertise-peer-urls=\${ETCD_INITIAL_ADVERTISE_PEER_URLS} \

--initial-cluster=\${ETCD_INITIAL_CLUSTER} \

--initial-cluster-token=\${ETCD_INITIAL_CLUSTER_TOKEN} \

--initial-cluster-state=new \

--cert-file=${WORK_DIR}/ssl/server.pem \

--key-file=${WORK_DIR}/ssl/server-key.pem \

--peer-cert-file=${WORK_DIR}/ssl/server.pem \

--peer-key-file=${WORK_DIR}/ssl/server-key.pem \

--trusted-ca-file=${WORK_DIR}/ssl/ca.pem \

--peer-trusted-ca-file=${WORK_DIR}/ssl/ca.pem

Restart=on-failure

LimitNOFILE=

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable etcd

systemctl restart etcd

- Next, copy the etcd working directory and the etcd.service file to etcd02 and etcd03

$ scp -r /opt/etcd/ root@192.168.1.68:/opt/

$ scp -r /opt/etcd/ root@192.168.1.69:/opt/

$ scp /usr/lib/systemd/system/etcd.service root@192.168.1.68:/usr/lib/systemd/system/

$ scp /usr/lib/systemd/system/etcd.service root@192.168.1.69:/usr/lib/systemd/system/

- On etcd02 and etcd03, edit the configuration file /opt/etcd/cfg/etcd so that the node name and the IP addresses match the local machine

[root@192.168.1.68] $ vim /opt/etcd/cfg/etcd

#[Member]

ETCD_NAME="etcd02"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.1.68:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.1.68:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.1.68:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.1.68:2379"

ETCD_INITIAL_CLUSTER="etcd01=https://192.168.1.67:2380,etcd02=https://192.168.1.68:2380,etcd03=https://192.168.1.69

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

[root@192.168.1.69] $ vim /opt/etcd/cfg/etcd

#[Member]

ETCD_NAME="etcd03"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.1.69:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.1.69:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.1.69:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.1.69:2379"

ETCD_INITIAL_CLUSTER="etcd01=https://192.168.1.67:2380,etcd02=https://192.168.1.68:2380,etcd03=https://192.168.1.69

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

- Start the etcd service on etcd02 and etcd03

$ sudo systemctl enable etcd.service

Created symlink /etc/systemd/system/multi-user.target.wants/etcd.service → /usr/lib/systemd/system/etcd.service.

$ sudo systemctl start etcd.service

- To check that the installation succeeded, run the following command:

$ sudo etcdctl --ca-file=/opt/etcd/ssl/ca.pem --cert-file=/opt/etcd/ssl/server.pem --key-file=/opt/etcd/ssl/server-key.pem --endpoints="https://192.168.1.67:2379,https://192.168.1.68:2379,https://192.168.1.69:2379" cluster-health

member 3143a1397990e241 is healthy: got healthy result from https://192.168.1.68:2379

member 469e7b2757c25086 is healthy: got healthy result from https://192.168.1.67:2379

member 5b1e32d0ab5e3e1b is healthy: got healthy result from https://192.168.1.69:2379

cluster is healthy
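When this check is scripted rather than read by eye, the per-member lines can be counted. A small sketch, assuming the output format shown above; the helper name count_healthy_members is hypothetical.

```shell
# Hypothetical helper: count the members reported healthy in
# `etcdctl cluster-health` output read from stdin.
count_healthy_members() {
    grep -c ' is healthy: '
}

# Usage with the sample output shown above.
sample='member 3143a1397990e241 is healthy: got healthy result from https://192.168.1.68:2379
member 469e7b2757c25086 is healthy: got healthy result from https://192.168.1.67:2379
member 5b1e32d0ab5e3e1b is healthy: got healthy result from https://192.168.1.69:2379
cluster is healthy'

healthy=$(printf '%s\n' "$sample" | count_healthy_members)
if [ "$healthy" -eq 3 ]; then
    echo "all 3 etcd members are healthy"
else
    echo "only $healthy of 3 members are healthy"
fi
```

The pattern includes the trailing colon so that the summary line "cluster is healthy" is not counted as a member.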

2.6 Deploying flannel

- Deploy flannel on each of the three nodes node1, node2, and node3

- Write the allocated subnet range into etcd for flannel to use:

$ /opt/etcd/bin/etcdctl --ca-file=/opt/etcd/ssl/ca.pem --cert-file=/opt/etcd/ssl/server.pem --key-file=/opt/etcd/ssl/server-key.pem --endpoints="https://127.0.0.1:2379" set /coreos.com/network/config '{ "Network": "172.17.0.0/16", "Backend": {"Type": "vxlan"}}'

{ "Network": "172.17.0.0/16", "Backend": {"Type": "vxlan"}}

- View what was written

$ /opt/etcd/bin/etcdctl --ca-file=/opt/etcd/ssl/ca.pem --cert-file=/opt/etcd/ssl/server.pem --key-file=/opt/etcd/ssl/server-key.pem --endpoints="https://127.0.0.1:2379" get /coreos.com/network/config

{ "Network": "172.17.0.0/16", "Backend": {"Type": "vxlan"}}
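As a rough sizing check, the number of per-host subnets that fit into this network follows from the two prefix lengths. The sketch below assumes each host receives a /24 (the size of the 172.17.89.1/24 subnet that appears later in this article); the function name subnet_count is hypothetical.

```shell
# Hypothetical helper: number of subnets of prefix length $2 that fit into a
# network of prefix length $1, that is 2^($2 - $1).
subnet_count() {
    echo $(( 1 << ($2 - $1) ))
}

# Usage: a /16 overlay network split into /24 host subnets.
subnet_count 16 24
```

For 172.17.0.0/16 this prints 256, which is far more than the three nodes used here.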

- Download the flannel release package (v0.11.0 is used here)

$ wget https://github.com/coreos/flannel/releases/download/v0.11.0/flannel-v0.11.0-linux-amd64.tar.gz

$ tar -zxvf flannel-v0.11.0-linux-amd64.tar.gz

$ mkdir -p /opt/kubernetes/{cfg,bin,ssl}

$ mv mk-docker-opts.sh flanneld /opt/kubernetes/bin/

- Run the script k8s/scripts/flannel.sh; the first argument is the list of etcd endpoints

$ ./k8s/scripts/flannel.sh https://192.168.1.67:2379,https://192.168.1.68:2379,https://192.168.1.69:2379

- The script reads as follows:

$ cat ./k8s/scripts/flannel.sh

#!/bin/bash

ETCD_ENDPOINTS=${1:-"http://127.0.0.1:2379"}

systemctl stop flanneld

systemctl disable flanneld

cat <<EOF >/opt/kubernetes/cfg/flanneld

FLANNEL_OPTIONS="--etcd-endpoints=${ETCD_ENDPOINTS} \\

-etcd-cafile=/opt/etcd/ssl/ca.pem \\

-etcd-certfile=/opt/etcd/ssl/server.pem \\

-etcd-keyfile=/opt/etcd/ssl/server-key.pem"

EOF

cat <<EOF >/usr/lib/systemd/system/flanneld.service

[Unit]

Description=Flanneld overlay address etcd agent

After=network-online.target network.target

Before=docker.service

[Service]

Type=notify

EnvironmentFile=/opt/kubernetes/cfg/flanneld

ExecStart=/opt/kubernetes/bin/flanneld --ip-masq \$FLANNEL_OPTIONS

ExecStartPost=/opt/kubernetes/bin/mk-docker-opts.sh -k DOCKER_NETWORK_OPTIONS -d /run/flannel/docker -f /run/flannel/subnet.env

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable flanneld

systemctl restart flanneld

- View the subnet assigned at startup

$ cat /run/flannel/subnet.env

FLANNEL_NETWORK=172.17.0.0/16

FLANNEL_SUBNET=172.17.89.1/24

FLANNEL_MTU=1450

FLANNEL_IPMASQ=true

$ cat /run/flannel/docker

DOCKER_OPT_BIP="--bip=172.17.89.1/24"

DOCKER_OPT_IPMASQ="--ip-masq=false"

DOCKER_OPT_MTU="--mtu=1450"

DOCKER_OPTS=" --bip=172.17.89.1/24 --ip-masq=false --mtu=1450"
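To make the relation between the two files more concrete, here is a simplified sketch of how dockerd flags can be derived from a subnet.env-style file. It is not the real mk-docker-opts.sh; the function name docker_opts_from_env is hypothetical, and the inversion of the masquerade flag is an assumption read off the output above (flannel masquerades, so docker is told not to).

```shell
# Hypothetical sketch (not the real mk-docker-opts.sh): turn a
# subnet.env-style file into dockerd network flags.
docker_opts_from_env() {
    # A subshell keeps the sourced FLANNEL_* variables out of the caller.
    (
        . "$1"
        # Assumption: when flannel already masquerades, docker should not.
        if [ "$FLANNEL_IPMASQ" = "true" ]; then ipmasq=false; else ipmasq=true; fi
        printf '%s\n' "--bip=$FLANNEL_SUBNET --ip-masq=$ipmasq --mtu=$FLANNEL_MTU"
    )
}

# Usage with a temporary file whose values are consistent with the docker
# options shown above.
env_file=$(mktemp)
cat > "$env_file" <<'EOF'
FLANNEL_NETWORK=172.17.0.0/16
FLANNEL_SUBNET=172.17.89.1/24
FLANNEL_MTU=1450
FLANNEL_IPMASQ=true
EOF
docker_opts_from_env "$env_file"
rm -f "$env_file"
```

The printed flags correspond to the DOCKER_OPTS line above: docker0 takes the first address of the flannel subnet and uses the reduced MTU of the overlay.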

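- Run vim /usr/lib/systemd/system/docker.service and modify the docker configuration so that it loads /run/flannel/docker and passes $DOCKER_NETWORK_OPTIONS to dockerd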

[Unit]

Description=Docker Application Container Engine

Documentation=https://docs.docker.com

BindsTo=containerd.service

After=network-online.target firewalld.service containerd.service

Wants=network-online.target

Requires=docker.socket

[Service]

Type=notify

# the default is not to use systemd for cgroups because the delegate issues still

# exists and systemd currently does not support the cgroup feature set required

# for containers run by docker

EnvironmentFile=/run/flannel/docker

ExecStart=/usr/bin/dockerd $DOCKER_NETWORK_OPTIONS -H unix:///var/run/docker.soc

#ExecStart=/usr/bin/dockerd -H fd:// --containerd=/run/containerd/containerd.soc

ExecReload=/bin/kill -s HUP $MAINPID

TimeoutSec=

RestartSec=

Restart=always

......

- Restart the docker service

$ systemctl daemon-reload

$ systemctl restart docker

- Check the flannel network; docker0 now sits inside the subnet assigned by flannel

$ ifconfig

docker0: flags=<UP,BROADCAST,MULTICAST> mtu

inet 172.17.89.1 netmask 255.255.255.0 broadcast 172.17.89.255

ether ::fb::3b: txqueuelen (Ethernet)

RX packets bytes (0.0 B)

RX errors dropped overruns frame

TX packets bytes (0.0 B)

TX errors dropped overruns carrier collisions

enp0s3: flags=<UP,BROADCAST,RUNNING,MULTICAST> mtu

inet 10.0.2.15 netmask 255.255.255.0 broadcast 10.0.2.255

inet6 fe80::a00:27ff:feaf:b59f prefixlen scopeid 0x20<link>

ether :::af:b5:9f txqueuelen (Ethernet)

RX packets bytes (247.1 KB)

RX errors dropped overruns frame

TX packets bytes (44.2 KB)

TX errors dropped overruns carrier collisions

enp0s8: flags=<UP,BROADCAST,RUNNING,MULTICAST> mtu

inet 192.168.1.67 netmask 255.255.255.0 broadcast 192.168.1.255

inet6 fe80::a00:27ff:fe9f:cb5c prefixlen scopeid 0x20<link>

inet6 :8a10:2e24:d130:a00:27ff:fe9f:cb5c prefixlen scopeid 0x0<global>

ether :::9f:cb:5c txqueuelen (Ethernet)

RX packets bytes (2.3 MB)

RX errors dropped overruns frame

TX packets bytes (1.0 MB)

TX errors dropped overruns carrier collisions

flannel.: flags=<UP,BROADCAST,RUNNING,MULTICAST> mtu

inet 172.17.89.0 netmask 255.255.255.255 broadcast 0.0.0.0

inet6 fe80::60c3:ecff:fe34:9d6c prefixlen scopeid 0x20<link>

ether :c3:ec::9d:6c txqueuelen (Ethernet)

RX packets bytes (0.0 B)

RX errors dropped overruns frame

TX packets bytes (0.0 B)

TX errors dropped overruns carrier collisions

lo: flags=<UP,LOOPBACK,RUNNING> mtu

inet 127.0.0.1 netmask 255.0.0.0

inet6 :: prefixlen scopeid 0x10<host>

loop txqueuelen (Local Loopback)

RX packets bytes (904.8 KB)

RX errors dropped overruns frame

TX packets bytes (904.8 KB)

TX errors dropped overruns carrier collisions

- Create a container and inspect its network

[root@adf9fc37d171 /]# yum install -y net-tools

[root@adf9fc37d171 /]# ifconfig

eth0: flags=<UP,BROADCAST,RUNNING,MULTICAST> mtu

inet 172.17.89.2 netmask 255.255.255.0 broadcast 172.17.89.255

ether ::ac::: txqueuelen (Ethernet)

RX packets bytes (13.4 MiB)

RX errors dropped overruns frame

TX packets bytes (79.4 KiB)

TX errors dropped overruns carrier collisions

lo: flags=<UP,LOOPBACK,RUNNING> mtu

inet 127.0.0.1 netmask 255.0.0.0

loop txqueuelen (Local Loopback)

RX packets bytes (0.0 B)

RX errors dropped overruns frame

TX packets bytes (0.0 B)

TX errors dropped overruns carrier collisions

[root@adf9fc37d171 /]# ping 172.17.89.1

PING 172.17.89.1 (172.17.89.1) () bytes of data.

bytes from 172.17.89.1: icmp_seq= ttl= time=0.045 ms

bytes from 172.17.89.1: icmp_seq= ttl= time=0.045 ms

bytes from 172.17.89.1: icmp_seq= ttl= time=0.050 ms

bytes from 172.17.89.1: icmp_seq= ttl= time=0.052 ms

bytes from 172.17.89.1: icmp_seq= ttl= time=0.049 ms

- The container can ping the docker0 interface, which shows that flannel is routing the traffic

2.7 Installing haproxy + keepalived

- Install haproxy and keepalived on lb1 and lb2

- Run the following command:

$ sudo apt-get -y install haproxy keepalived

- On both machines, edit /etc/haproxy/haproxy.cfg and append the following:

listen admin_stats

bind 0.0.0.0:10080

mode http

log 127.0.0.1 local0 err

stats refresh 30s

stats uri /status

stats realm welcome login\ Haproxy

stats auth admin:123456

stats hide-version

stats admin if TRUE

listen kube-master

bind 0.0.0.0:8443

mode tcp

option tcplog

balance source

server 192.168.1.67 192.168.1.67:6443 check inter fall rise weight

server 192.168.1.68 192.168.1.68:6443 check inter fall rise weight

server 192.168.1.69 192.168.1.69:6443 check inter fall rise weight

- The listening port is set to 8443 so that it does not clash with port 6443 used by kube-apiserver

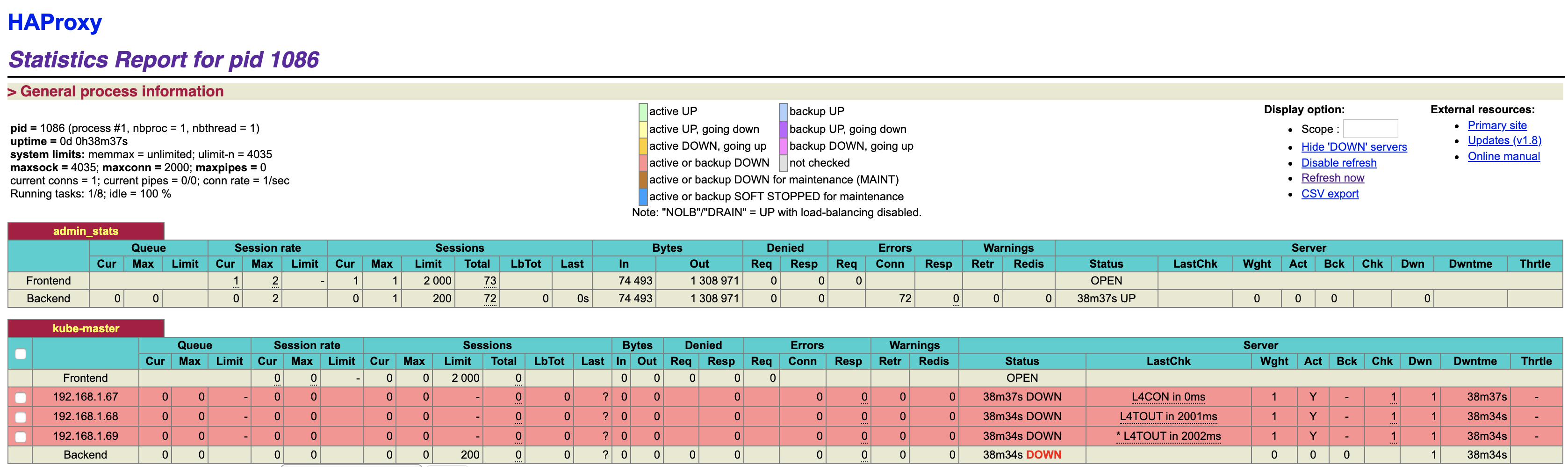

- The haproxy status page is at http://192.168.1.65:10080/status or http://192.168.1.66:10080/status; log in with the configured username and password, admin/123456

- The complete configuration file is as follows:

$ cat /etc/haproxy/haproxy.cfg

global

log /dev/log local0

log /dev/log local1 notice

chroot /var/lib/haproxy

stats socket /run/haproxy/admin.sock mode level admin expose-fd listeners

stats timeout 30s

user haproxy

group haproxy

daemon

# Default SSL material locations

ca-base /etc/ssl/certs

crt-base /etc/ssl/private

# Default ciphers to use on SSL-enabled listening sockets.

# For more information, see ciphers(1SSL). This list is from:

# https://hynek.me/articles/hardening-your-web-servers-ssl-ciphers/

# An alternative list with additional directives can be obtained from

# https://mozilla.github.io/server-side-tls/ssl-config-generator/?server=haproxy

ssl-default-bind-ciphers ECDH+AESGCM:DH+AESGCM:ECDH+AES256:DH+AES256:ECDH+AES128:DH+AES:RSA+AESGCM:RSA+AES:!aNULL:!MD5:!DSS

ssl-default-bind-options no-sslv3

defaults

log global

mode http

option httplog

option dontlognull

timeout connect

timeout client

timeout server

errorfile /etc/haproxy/errors/.http

errorfile /etc/haproxy/errors/.http

errorfile /etc/haproxy/errors/.http

errorfile /etc/haproxy/errors/.http

errorfile /etc/haproxy/errors/.http

errorfile /etc/haproxy/errors/.http

errorfile /etc/haproxy/errors/.http

listen admin_stats

bind 0.0.0.0:10080

mode http

log 127.0.0.1 local0 err

stats refresh 30s

stats uri /status

stats realm welcome login\ Haproxy

stats auth admin:123456

stats hide-version

stats admin if TRUE

listen kube-master

bind 0.0.0.0:8443

mode tcp

option tcplog

balance source

server 192.168.1.67 192.168.1.67:6443 check inter fall rise weight

server 192.168.1.68 192.168.1.68:6443 check inter fall rise weight

server 192.168.1.69 192.168.1.69:6443 check inter fall rise weight

- After editing the configuration file, run the following commands to restart the service:

$ sudo systemctl enable haproxy

$ sudo systemctl daemon-reload

$ sudo systemctl restart haproxy.service

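- The status page can then be opened in a browser (see image 1 above)

- lb1 is chosen as the keepalived master node and lb2 as the backup node

- The configuration file for lb1 is as follows: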

$ sudo vim /etc/keepalived/keepalived.conf

global_defs {

router_id lb-master

}

vrrp_script check-haproxy {

script "killall -0 haproxy"

interval

weight -

}

vrrp_instance VI-kube-master {

state MASTER

priority

dont_track_primary

interface enp0s3

virtual_router_id

advert_int

track_script {

check-haproxy

}

virtual_ipaddress {

192.168.1.99

}

}

- The configuration file for lb2 is as follows:

$ sudo vim /etc/keepalived/keepalived.conf

global_defs {

router_id lb-backup

}

vrrp_script check-haproxy {

script "killall -0 haproxy"

interval

weight -

}

vrrp_instance VI-kube-master {

state BACKUP

priority

dont_track_primary

interface enp0s3

virtual_router_id

advert_int

track_script {

check-haproxy

}

virtual_ipaddress {

192.168.1.99

}

}

- Note that the interface field in the vrrp_instance section must be the name of the corresponding network interface on lb1 and lb2

- Restart the service:

$ sudo systemctl enable keepalived

$ sudo systemctl daemon-reload

$ sudo systemctl restart keepalived.service
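Once keepalived is running, the virtual IP 192.168.1.99 should be bound on exactly one of the two LB nodes, normally the MASTER. A minimal way to check this is sketched below; the helper name has_vip is hypothetical, and it assumes the iproute2 ip command is available.

```shell
# Hypothetical helper: read `ip -o -4 addr show` output on stdin and succeed
# if the given IPv4 address is configured on any interface.
has_vip() {
    grep -qF "inet $1/"
}

# Usage: run on lb1 and on lb2; only one of them should report the VIP.
if command -v ip >/dev/null 2>&1; then
    if ip -o -4 addr show | has_vip 192.168.1.99; then
        echo "this node currently holds the VIP"
    else
        echo "VIP is not bound on this node"
    fi
fi
```

Stopping haproxy on the node that holds the VIP and running the check again on the other node is a simple way to observe the failover.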

2.8 Generating the certificates of each component with a script

- On 192.168.1.67, 192.168.1.68, and 192.168.1.69, run the following command:

$ sudo mkdir -p /opt/kubernetes/{ssl,cfg,bin,log}

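- k8s/scripts/k8s-cert.sh defines the CSRs for the HTTPS service of each component and generates the certificates

- In k8s/scripts/k8s-cert.sh, set the hosts field of the kube-apiserver-csr.json part to 127.0.0.1, the virtual IP address, the master IP addresses, and the first IP address of the range given by the kube-apiserver --service-cluster-ip-range parameter (10.254.0.0/24)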

cat > kube-apiserver-csr.json <<EOF

{

"CN": "kubernetes",

"hosts": [

"127.0.0.1",

"192.168.1.99", // virtual IP (VIP)

"192.168.1.67", // master1 IP

"192.168.1.68", // master2 IP

"192.168.1.69", // master3 IP

"10.254.0.1", // first IP address of the range given by the kube-apiserver --service-cluster-ip-range parameter (10.254.0.0/24)

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size":

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

EOF
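The 10.254.0.1 entry is the first address of the service range, which kube-apiserver assigns to the built-in kubernetes service. If a different range is chosen, the matching hosts entry can be derived as in the sketch below. The function name first_service_ip is hypothetical, and it assumes the CIDR is written with its network address and a last octet below 255.

```shell
# Hypothetical helper: print the first usable address of an IPv4 CIDR that is
# given by its network address, e.g. 10.254.0.0/24 -> 10.254.0.1.
first_service_ip() {
    net=${1%/*}          # drop the "/prefix" part
    last=${net##*.}      # last octet of the network address
    prefix=${net%.*}     # first three octets
    printf '%s.%s\n' "$prefix" "$((last + 1))"
}

# Usage with the service range used in this article.
first_service_ip 10.254.0.0/24
```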

- In k8s/scripts/k8s-cert.sh, set the hosts field of the kube-controller-manager-csr.json part to 127.0.0.1 and the master IP addresses

cat > kube-controller-manager-csr.json <<EOF

{

"CN": "system:kube-controller-manager",

"hosts": [

"127.0.0.1",

"192.168.1.67",

"192.168.1.68",

"192.168.1.69"

],

"key": {

"algo": "rsa",

"size":

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "system:kube-controller-manager",

"OU": "System"

}

]

}

EOF

- CN is system:kube-controller-manager

- O is system:kube-controller-manager

- The ClusterRoleBinding system:kube-controller-manager, predefined by the RBAC setup of kube-apiserver, binds the user system:kube-controller-manager to the ClusterRole system:kube-controller-manager

- In k8s/scripts/k8s-cert.sh, set the hosts field of the kube-scheduler-csr.json part to 127.0.0.1 and the master IP addresses

cat > kube-scheduler-csr.json <<EOF

{

"CN": "system:kube-scheduler",

"hosts": [

"127.0.0.1",

"192.168.1.67",

"192.168.1.68",

"192.168.1.69"

],

"key": {

"algo": "rsa",

"size":

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "system:kube-scheduler",

"OU": "System"

}

]

}

EOF

- The complete script reads as follows:

rm -rf master

rm -rf node

mkdir master

mkdir node

cat > ca-config.json <<EOF

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"kubernetes": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

EOF

cat > ca-csr.json <<EOF

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size":

},

"names": [

{

"C": "CN",

"L": "Beijing",

"ST": "Beijing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

#----------------------- kube-apiserver

echo "generate kube-apiserver cert"

cd master

cat > kube-apiserver-csr.json <<EOF

{

"CN": "kubernetes",

"hosts": [

"127.0.0.1",

"192.168.1.99",

"192.168.1.67",

"192.168.1.68",

"192.168.1.69",

"10.254.0.1",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size":

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

cfssl gencert -ca=../ca.pem -ca-key=../ca-key.pem -config=../ca-config.json -profile=kubernetes kube-apiserver-csr.json | cfssljson -bare kube-apiserver

cd ..

#----------------------- kubectl

echo "generate kubectl cert"

cat > admin-csr.json <<EOF

{

"CN": "admin",

"hosts": [],

"key": {

"algo": "rsa",

"size":

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "system:masters",

"OU": "System"

}

]

}

EOF

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes admin-csr.json | cfssljson -bare admin

#----------------------- kube-controller-manager

echo "generate kube-controller-manager cert"

cd master

cat > kube-controller-manager-csr.json <<EOF

{

"CN": "system:kube-controller-manager",

"hosts": [

"127.0.0.1",

"192.168.1.67",

"192.168.1.68",

"192.168.1.69"

],

"key": {

"algo": "rsa",

"size":

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "system:kube-controller-manager",

"OU": "System"

}

]

}

EOF

cfssl gencert -ca=../ca.pem -ca-key=../ca-key.pem -config=../ca-config.json -profile=kubernetes kube-controller-manager-csr.json | cfssljson -bare kube-controller-manager

cd ..

#----------------------- kube-scheduler

echo "generate kube-scheduler cert"

cd master

cat > kube-scheduler-csr.json <<EOF

{

"CN": "system:kube-scheduler",

"hosts": [

"127.0.0.1",

"192.168.1.67",

"192.168.1.68",

"192.168.1.69"

],

"key": {

"algo": "rsa",

"size":

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "system:kube-scheduler",

"OU": "System"

}

]

}

EOF

cfssl gencert -ca=../ca.pem -ca-key=../ca-key.pem -config=../ca-config.json -profile=kubernetes kube-scheduler-csr.json | cfssljson -bare kube-scheduler

cd ..

#----------------------- kube-proxy

echo "generate kube-proxy cert"

cd node

cat > kube-proxy-csr.json <<EOF

{

"CN": "system:kube-proxy",

"hosts": [],

"key": {

"algo": "rsa",

"size":

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "system:kube-proxy",

"OU": "System"

}

]

}

EOF

cfssl gencert -ca=../ca.pem -ca-key=../ca-key.pem -config=../ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

cd ..

- On one of the machines (for example 192.168.1.67), generate the certificates with the k8s/scripts/k8s-cert.sh script and copy them into /opt/kubernetes/ssl/

$ mkdir -p k8s/k8s-cert

$ cp k8s/scripts/k8s-cert.sh k8s/k8s-cert

$ cd k8s/k8s-cert

$ ./k8s-cert.sh

$ sudo cp -r ca* admin* master node /opt/kubernetes/ssl/

- Copy all certificates to the other two nodes

$ sudo scp -r /opt/kubernetes/ssl/* root@192.168.1.68:/opt/kubernetes/ssl/

$ sudo scp -r /opt/kubernetes/ssl/* root@192.168.1.69:/opt/kubernetes/ssl/

2.9 Generating the kubeconfig of each component with a script

- Create the .kube directory on 192.168.1.67, 192.168.1.68, and 192.168.1.69

$ mkdir -p ~/.kube

- On one of the machines (for example 192.168.1.67), create a working directory and copy the k8s/scripts/kubeconfig.sh script into it

$ mkdir -p k8s/kubeconfig

$ cp k8s/scripts/kubeconfig.sh k8s/kubeconfig

$ cd k8s/kubeconfig

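- Run the script to generate the kubeconfig of each component. The first argument is the virtual IP address and the second is the certificate directory under the Kubernetes installation directory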

$ sudo ./kubeconfig.sh 192.168.1.99 /opt/kubernetes/ssl

0524b3077444a437dfc662e5739bfa1a

===> generate kubectl config

Cluster "kubernetes" set.

User "admin" set.

Context "admin@kubernetes" created.

Switched to context "admin@kubernetes".

===> generate kube-controller-manager.kubeconfig

Cluster "kubernetes" set.

User "system:kube-controller-manager" set.

Context "system:kube-controller-manager@kubernetes" created.

Switched to context "system:kube-controller-manager@kubernetes".

===> generate kube-scheduler.kubeconfig

Cluster "kubernetes" set.

User "system:kube-scheduler" set.

Context "system:kube-scheduler@kubernetes" created.

Switched to context "system:kube-scheduler@kubernetes".

===> generate kubelet bootstrapping kubeconfig

Cluster "kubernetes" set.

User "kubelet-bootstrap" set.

Context "default" created.

Switched to context "default".

===> generate kube-proxy.kubeconfig

Cluster "kubernetes" set.

User "system:kube-proxy" set.

Context "system:kube-proxy@kubernetes" created.

Switched to context "system:kube-proxy@kubernetes".

- The script reads as follows:

#---------------------- Create the kube-apiserver TLS Bootstrapping Token

BOOTSTRAP_TOKEN=$(head -c 16 /dev/urandom | od -An -t x | tr -d ' ')

echo ${BOOTSTRAP_TOKEN}

cat > token.csv <<EOF

${BOOTSTRAP_TOKEN},kubelet-bootstrap,,"system:kubelet-bootstrap"

EOF

#----------------------

APISERVER=$1

SSL_DIR=$2

export KUBE_APISERVER="https://$APISERVER:8443"

#----------------------

echo "===> generate kubectl config"

# Create the kubectl config

kubectl config set-cluster kubernetes \

--certificate-authority=$SSL_DIR/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=config

kubectl config set-credentials admin \

--client-certificate=$SSL_DIR/admin.pem \

--client-key=$SSL_DIR/admin-key.pem \

--embed-certs=true \

--kubeconfig=config

kubectl config set-context admin@kubernetes \

--cluster=kubernetes \

--user=admin \

--kubeconfig=config

kubectl config use-context admin@kubernetes --kubeconfig=config

#----------------------

echo "===> generate kube-controller-manager.kubeconfig"

# Create kube-controller-manager.kubeconfig

kubectl config set-cluster kubernetes \

--certificate-authority=$SSL_DIR/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=kube-controller-manager.kubeconfig

kubectl config set-credentials system:kube-controller-manager \

--client-certificate=$SSL_DIR/master/kube-controller-manager.pem \

--client-key=$SSL_DIR/master/kube-controller-manager-key.pem \

--embed-certs=true \

--kubeconfig=kube-controller-manager.kubeconfig

kubectl config set-context system:kube-controller-manager@kubernetes \

--cluster=kubernetes \

--user=system:kube-controller-manager \

--kubeconfig=kube-controller-manager.kubeconfig

kubectl config use-context system:kube-controller-manager@kubernetes --kubeconfig=kube-controller-manager.kubeconfig

#----------------------

echo "===> generate kube-scheduler.kubeconfig"

# Create kube-scheduler.kubeconfig

kubectl config set-cluster kubernetes \

--certificate-authority=$SSL_DIR/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=kube-scheduler.kubeconfig

kubectl config set-credentials system:kube-scheduler \

--client-certificate=$SSL_DIR/master/kube-scheduler.pem \

--client-key=$SSL_DIR/master/kube-scheduler-key.pem \

--embed-certs=true \

--kubeconfig=kube-scheduler.kubeconfig

kubectl config set-context system:kube-scheduler@kubernetes \

--cluster=kubernetes \

--user=system:kube-scheduler \

--kubeconfig=kube-scheduler.kubeconfig

kubectl config use-context system:kube-scheduler@kubernetes --kubeconfig=kube-scheduler.kubeconfig

#----------------------

echo "===> generate kubelet bootstrapping kubeconfig"

# Create the kubelet bootstrapping kubeconfig

# Set the cluster parameters

kubectl config set-cluster kubernetes \

--certificate-authority=$SSL_DIR/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=bootstrap.kubeconfig

# Set the client authentication parameters

kubectl config set-credentials kubelet-bootstrap \

--token=${BOOTSTRAP_TOKEN} \

--kubeconfig=bootstrap.kubeconfig

# Set the context parameters

kubectl config set-context default \

--cluster=kubernetes \

--user=kubelet-bootstrap \

--kubeconfig=bootstrap.kubeconfig

# Set the default context

kubectl config use-context default --kubeconfig=bootstrap.kubeconfig

#----------------------

echo "===> generate kube-proxy.kubeconfig"

# Create kube-proxy.kubeconfig

kubectl config set-cluster kubernetes \

--certificate-authority=$SSL_DIR/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=kube-proxy.kubeconfig

kubectl config set-credentials system:kube-proxy \

--client-certificate=$SSL_DIR/node/kube-proxy.pem \

--client-key=$SSL_DIR/node/kube-proxy-key.pem \

--embed-certs=true \

--kubeconfig=kube-proxy.kubeconfig

kubectl config set-context system:kube-proxy@kubernetes \

--cluster=kubernetes \

--user=system:kube-proxy \

--kubeconfig=kube-proxy.kubeconfig

kubectl config use-context system:kube-proxy@kubernetes --kubeconfig=kube-proxy.kubeconfig
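The bootstrap token printed at the top of the run (0524b3077444a437dfc662e5739bfa1a) is 32 hexadecimal characters, which corresponds to 16 random bytes. The following stand-alone sketch produces a token of the same shape; the function name gen_bootstrap_token is hypothetical, and it uses od -t x1 (byte-wise output) rather than the word-wise format of the script, which is an assumption made here for a byte-order-independent result.

```shell
# Hypothetical helper: print a random token of 32 lowercase hex characters
# (16 bytes read from /dev/urandom).
gen_bootstrap_token() {
    head -c 16 /dev/urandom | od -An -t x1 | tr -d ' \n'
}

# Usage: generate a token and report its length.
token=$(gen_bootstrap_token)
echo "token length: ${#token}"
```

Whatever value is generated must be identical in token.csv on every master and in bootstrap.kubeconfig, which is why the script writes both in a single run.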

- Copy the config file into the ~/.kube directory

$ sudo chown ao:ao config

$ cp config ~/.kube

- Also copy the config file to the other two machines

$ scp config ao@192.168.1.68:/home/ao/.kube

$ scp config ao@192.168.1.69:/home/ao/.kube

- Copy the token.csv file into /opt/kubernetes/cfg

$ sudo cp token.csv /opt/kubernetes/cfg

- Copy token.csv to the other two machines

$ sudo scp token.csv root@192.168.1.68:/opt/kubernetes/cfg

$ sudo scp token.csv root@192.168.1.69:/opt/kubernetes/cfg

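- The remaining files also have to be copied to the matching directories on the matching machines; this is covered below as each component is installed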
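The same copy-to-every-node pattern recurs for each kubeconfig file in the following sections. A minimal sketch of how the repeated scp lines could be generated, assuming root SSH access as in the commands above; `scp_commands` is a hypothetical helper, not part of the original scripts, and it only prints the commands rather than running them:

```shell
#!/bin/bash
# Hypothetical helper (not from the original repository): print one scp
# command per remote host for a given file and destination directory.
scp_commands() {
  local file=$1 dest=$2 host
  shift 2
  for host in "$@"; do
    printf 'scp %s root@%s:%s\n' "$file" "$host" "$dest"
  done
}

# Show the commands for distributing token.csv to the other two machines.
scp_commands token.csv /opt/kubernetes/cfg 192.168.1.68 192.168.1.69
```

Printing first makes it easy to review the list; once it looks right, the output can be piped to `sh` to execute it.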

2.10 Installing kube-apiserver

- On 192.168.1.67, 192.168.1.68 and 192.168.1.69, copy the kube-apiserver, kubectl, kube-controller-manager, kube-scheduler, kubelet and kube-proxy binaries mentioned above into /opt/kubernetes/bin/

$ cp kube-apiserver kubectl kube-controller-manager kube-scheduler kubelet kube-proxy /opt/kubernetes/bin/

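- On each of the three machines, run the k8s/scripts/apiserver.sh script to start the kube-apiserver.service service. The first argument is the address of the Master node and the second is the list of etcd cluster endpoints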

$ sudo ./k8s/scripts/apiserver.sh 192.168.1.67 https://192.168.1.67:2379,https://192.168.1.68:2379,https://192.168.1.69:2379

$ sudo ./k8s/scripts/apiserver.sh 192.168.1.68 https://192.168.1.67:2379,https://192.168.1.68:2379,https://192.168.1.69:2379

$ sudo ./k8s/scripts/apiserver.sh 192.168.1.69 https://192.168.1.67:2379,https://192.168.1.68:2379,https://192.168.1.69:2379
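The three invocations differ only in the first argument, while the etcd endpoint list is typed out three times. A small sketch of building that list from the member IPs, assuming every etcd member listens on port 2379 as in the commands above; `etcd_endpoints` is a hypothetical helper name, not part of the original scripts:

```shell
#!/bin/bash
# Hypothetical helper: join etcd member IPs into the comma-separated
# https://IP:2379 list that apiserver.sh takes as its second argument.
etcd_endpoints() {
  local out="" ip
  for ip in "$@"; do
    # Prefix a comma only when something has already been collected.
    out="${out:+$out,}https://${ip}:2379"
  done
  printf '%s\n' "$out"
}

ETCD_SERVERS=$(etcd_endpoints 192.168.1.67 192.168.1.68 192.168.1.69)
echo "$ETCD_SERVERS"
```

On each Master the script could then be called as `sudo ./k8s/scripts/apiserver.sh <master-ip> "$ETCD_SERVERS"`.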

- The script is as follows:

#!/bin/bash

# $1: address of this Master node, $2: comma-separated etcd endpoints
MASTER_ADDRESS=$1
ETCD_SERVERS=$2

systemctl stop kube-apiserver
systemctl disable kube-apiserver

# Write the options file that the systemd unit below reads
cat <<EOF >/opt/kubernetes/cfg/kube-apiserver
KUBE_APISERVER_OPTS="--logtostderr=true \\
--v= \\
--anonymous-auth=false \\
--etcd-servers=${ETCD_SERVERS} \\
--etcd-cafile=/opt/etcd/ssl/ca.pem \\
--etcd-certfile=/opt/etcd/ssl/server.pem \\
--etcd-keyfile=/opt/etcd/ssl/server-key.pem \\
--service-cluster-ip-range=10.254.0.0/ \\
--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,ResourceQuota,NodeRestriction \\
--bind-address=${MASTER_ADDRESS} \\
--secure-port= \\
--client-ca-file=/opt/kubernetes/ssl/ca.pem \\
--token-auth-file=/opt/kubernetes/cfg/token.csv \\
--allow-privileged=true \\
--tls-cert-file=/opt/kubernetes/ssl/master/kube-apiserver.pem \\
--tls-private-key-file=/opt/kubernetes/ssl/master/kube-apiserver-key.pem \\
--service-account-key-file=/opt/kubernetes/ssl/ca-key.pem \\
--advertise-address=${MASTER_ADDRESS} \\
--authorization-mode=RBAC,Node \\
--kubelet-https=true \\
--enable-bootstrap-token-auth \\
--kubelet-certificate-authority=/opt/kubernetes/ssl/ca.pem \\
--kubelet-client-key=/opt/kubernetes/ssl/master/kube-apiserver-key.pem \\
--kubelet-client-certificate=/opt/kubernetes/ssl/master/kube-apiserver.pem \\
--service-node-port-range=-"
EOF

# Write the systemd unit file
cat <<EOF >/usr/lib/systemd/system/kube-apiserver.service
[Unit]
Description=Kubernetes API Server
Documentation=https://github.com/kubernetes/kubernetes

[Service]
EnvironmentFile=-/opt/kubernetes/cfg/kube-apiserver
ExecStart=/opt/kubernetes/bin/kube-apiserver \$KUBE_APISERVER_OPTS
Restart=on-failure

[Install]
WantedBy=multi-user.target
EOF

systemctl daemon-reload
systemctl enable kube-apiserver
systemctl restart kube-apiserver

- The script creates the kube-apiserver.service service; check its status

$ systemctl status kube-apiserver.service

● kube-apiserver.service - Kubernetes API Server

Loaded: loaded (/usr/lib/systemd/system/kube-apiserver.service; enabled; vendor preset: enabled)

Active: active (running) since Tue -- :: UTC; 2s ago

Docs: https://github.com/kubernetes/kubernetes

Main PID: (kube-apiserver)

Tasks: (limit: )

CGroup: /system.slice/kube-apiserver.service

└─ /opt/kubernetes/bin/kube-apiserver --logtostderr=true --v= --anonymous-auth=false --etcd-servers

Feb :: master1 kube-apiserver[]: I0225 ::08.782348 endpoint.go:] ccResolverWrapper: send

Feb :: master1 kube-apiserver[]: I0225 ::08.782501 reflector.go:] Listing and watching

Feb :: master1 kube-apiserver[]: I0225 ::08.788181 store.go:] Monitoring apiservices.a

Feb :: master1 kube-apiserver[]: I0225 ::08.789982 watch_cache.go:] Replace watchCache

Feb :: master1 kube-apiserver[]: I0225 ::08.790831 deprecated_insecure_serving.go:] Serv

Feb :: master1 kube-apiserver[]: I0225 ::08.794071 reflector.go:] Listing and watching

Feb :: master1 kube-apiserver[]: I0225 ::08.797572 watch_cache.go:] Replace watchCache

Feb :: master1 kube-apiserver[]: I0225 ::09.026015 client.go:] parsed scheme: "endpoint

Feb :: master1 kube-apiserver[]: I0225 ::09.026087 endpoint.go:] ccResolverWrapper: send

Feb :: master1 kube-apiserver[]: I0225 ::09.888015 aggregator.go:] Building initial Ope


- Inspect the configuration file used by kube-apiserver.service

$ cat /opt/kubernetes/cfg/kube-apiserver

KUBE_APISERVER_OPTS="--logtostderr=true \

--v= \

--anonymous-auth=false \

--etcd-servers=https://192.168.1.67:2379,https://192.168.1.68:2379,https://192.168.1.69:2379 \

--etcd-cafile=/opt/etcd/ssl/ca.pem \

--etcd-certfile=/opt/etcd/ssl/server.pem \

--etcd-keyfile=/opt/etcd/ssl/server-key.pem \

--service-cluster-ip-range=10.254.0.0/ \

--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,ResourceQuota,NodeRestriction \

--bind-address=192.168.1.67 \

--secure-port= \

--client-ca-file=/opt/kubernetes/ssl/ca.pem \

--token-auth-file=/opt/kubernetes/cfg/token.csv \

--allow-privileged=true \

--tls-cert-file=/opt/kubernetes/ssl/master/kube-apiserver.pem \

--tls-private-key-file=/opt/kubernetes/ssl/master/kube-apiserver-key.pem \

--service-account-key-file=/opt/kubernetes/ssl/ca-key.pem \

--advertise-address=192.168.1.67 \

--authorization-mode=RBAC,Node \

--kubelet-https=true \

--enable-bootstrap-token-auth \

--kubelet-certificate-authority=/opt/kubernetes/ssl/ca.pem \

--kubelet-client-key=/opt/kubernetes/ssl/master/kube-apiserver-key.pem \

--kubelet-client-certificate=/opt/kubernetes/ssl/master/kube-apiserver.pem \

--service-node-port-range=-"

- Grant the user of the kubernetes certificate permission to access the kubelet API

$ kubectl create clusterrolebinding kube-apiserver:kubelet-apis --clusterrole=system:kubelet-api-admin --user kubernetes

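2.11 Installing kube-scheduler

- On 192.168.1.67 (the machine where the kubeconfig files for all components were generated), copy kube-scheduler.kubeconfig to the target directory locally and on the other nodes: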

$ cd k8s/kubeconfig

$ sudo cp kube-scheduler.kubeconfig /opt/kubernetes/cfg

$ sudo scp kube-scheduler.kubeconfig root@192.168.1.68:/opt/kubernetes/cfg

$ sudo scp kube-scheduler.kubeconfig root@192.168.1.69:/opt/kubernetes/cfg

- On all three nodes, run the k8s/scripts/scheduler.sh script to create and start the kube-scheduler.service service

$ sudo ./k8s/scripts/scheduler.sh

- The script is as follows:

#!/bin/bash

systemctl stop kube-scheduler
systemctl disable kube-scheduler

# Write the options file that the systemd unit below reads
cat <<EOF >/opt/kubernetes/cfg/kube-scheduler
KUBE_SCHEDULER_OPTS="--logtostderr=true \\
--v= \\
--bind-address=0.0.0.0 \\
--port= \\
--secure-port= \\
--kubeconfig=/opt/kubernetes/cfg/kube-scheduler.kubeconfig \\
--requestheader-client-ca-file=/opt/kubernetes/ssl/ca.pem \\
--authentication-kubeconfig=/opt/kubernetes/cfg/kube-scheduler.kubeconfig \\
--authorization-kubeconfig=/opt/kubernetes/cfg/kube-scheduler.kubeconfig \\
--client-ca-file=/opt/kubernetes/ssl/ca.pem \\
--tls-cert-file=/opt/kubernetes/ssl/master/kube-scheduler.pem \\
--tls-private-key-file=/opt/kubernetes/ssl/master/kube-scheduler-key.pem \\
--leader-elect=true"
EOF

# Write the systemd unit file
cat <<EOF >/usr/lib/systemd/system/kube-scheduler.service
[Unit]
Description=Kubernetes Scheduler
Documentation=https://github.com/kubernetes/kubernetes

[Service]
EnvironmentFile=-/opt/kubernetes/cfg/kube-scheduler
ExecStart=/opt/kubernetes/bin/kube-scheduler \$KUBE_SCHEDULER_OPTS
Restart=on-failure

[Install]
WantedBy=multi-user.target
EOF

systemctl daemon-reload
systemctl enable kube-scheduler
systemctl restart kube-scheduler

- The script creates the kube-scheduler.service service; check its status

$ sudo systemctl status kube-scheduler.service

● kube-scheduler.service - Kubernetes Scheduler

Loaded: loaded (/usr/lib/systemd/system/kube-scheduler.service; enabled; vendor preset: enabled)

Active: active (running) since Tue -- :: UTC; 1min 7s ago

Docs: https://github.com/kubernetes/kubernetes

Main PID: (kube-scheduler)

Tasks: (limit: )

CGroup: /system.slice/kube-scheduler.service

└─ /opt/kubernetes/bin/kube-scheduler --logtostderr=true --v= --bind-address=0.0.0.0 --port= -

Feb :: master1 kube-scheduler[]: I0225 ::43.911182 reflector.go:] Listing and watching

Feb :: master1 kube-scheduler[]: E0225 ::43.913844 reflector.go:] k8s.io/kubernetes/cmd

Feb :: master1 kube-scheduler[]: I0225 ::46.438728 reflector.go:] Listing and watching

Feb :: master1 kube-scheduler[]: E0225 ::46.441883 reflector.go:] k8s.io/client-go/info

Feb :: master1 kube-scheduler[]: I0225 ::47.086981 reflector.go:] Listing and watching

Feb :: master1 kube-scheduler[]: E0225 ::47.088902 reflector.go:] k8s.io/client-go/info

Feb :: master1 kube-scheduler[]: I0225 ::09.120429 reflector.go:] Listing and watching

Feb :: master1 kube-scheduler[]: E0225 ::09.123594 reflector.go:] k8s.io/client-go/info

Feb :: master1 kube-scheduler[]: I0225 ::09.788768 reflector.go:] Listing and watching

Feb :: master1 kube-scheduler[]: E0225 ::09.790724 reflector.go:] k8s.io/client-go/info


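2.12 Installing kube-controller-manager

- On 192.168.1.67 (the machine where the kubeconfig files for all components were generated), copy kube-controller-manager.kubeconfig to the target directory locally and on the other nodes: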

$ cd k8s/kubeconfig

$ sudo cp kube-controller-manager.kubeconfig /opt/kubernetes/cfg

$ sudo scp kube-controller-manager.kubeconfig root@192.168.1.68:/opt/kubernetes/cfg

$ sudo scp kube-controller-manager.kubeconfig root@192.168.1.69:/opt/kubernetes/cfg

- On all three nodes, run the k8s/scripts/controller-manager.sh script to create and start the kube-controller-manager.service service

$ sudo ./k8s/scripts/controller-manager.sh

- The script is as follows:

#!/bin/bash

systemctl stop kube-controller-manager.service
systemctl disable kube-controller-manager.service

# Write the options file that the systemd unit below reads
cat <<EOF >/opt/kubernetes/cfg/kube-controller-manager
KUBE_CONTROLLER_MANAGER_OPTS="--logtostderr=true \\
--v= \\
--bind-address=0.0.0.0 \\
--cluster-name=kubernetes \\
--kubeconfig=/opt/kubernetes/cfg/kube-controller-manager.kubeconfig \\
--requestheader-client-ca-file=/opt/kubernetes/ssl/ca.pem \\
--authentication-kubeconfig=/opt/kubernetes/cfg/kube-controller-manager.kubeconfig \\
--authorization-kubeconfig=/opt/kubernetes/cfg/kube-controller-manager.kubeconfig \\
--leader-elect=true \\
--service-cluster-ip-range=10.254.0.0/ \\
--controllers=*,bootstrapsigner,tokencleaner \\
--tls-cert-file=/opt/kubernetes/ssl/master/kube-controller-manager.pem \\
--tls-private-key-file=/opt/kubernetes/ssl/master/kube-controller-manager-key.pem \\
--cluster-signing-cert-file=/opt/kubernetes/ssl/ca.pem \\
--cluster-signing-key-file=/opt/kubernetes/ssl/ca-key.pem \\
--root-ca-file=/opt/kubernetes/ssl/ca.pem \\
--service-account-private-key-file=/opt/kubernetes/ssl/ca-key.pem \\
--secure-port= \\
--use-service-account-credentials=true \\
--experimental-cluster-signing-duration=87600h0m0s"
EOF

# Options kept for reference but currently disabled
#--allocate-node-cidrs=true \\
#--cluster-cidr=172.17.0.0/ \\

# Write the systemd unit file
cat <<EOF >/usr/lib/systemd/system/kube-controller-manager.service
[Unit]
Description=Kubernetes Controller Manager
Documentation=https://github.com/kubernetes/kubernetes

[Service]
EnvironmentFile=-/opt/kubernetes/cfg/kube-controller-manager
ExecStart=/opt/kubernetes/bin/kube-controller-manager \$KUBE_CONTROLLER_MANAGER_OPTS
Restart=on-failure

[Install]
WantedBy=multi-user.target
EOF

systemctl daemon-reload
systemctl enable kube-controller-manager
systemctl restart kube-controller-manager

- The script creates the kube-controller-manager.service service; check its status

$ systemctl status kube-controller-manager.service

● kube-controller-manager.service - Kubernetes Controller Manager

Loaded: loaded (/usr/lib/systemd/system/kube-controller-manager.service; enabled; vendor preset: enabled)

Active: active (running) since Sun -- :: UTC; 15min ago

Docs: https://github.com/kubernetes/kubernetes

Main PID: (kube-controller)

Tasks: (limit: )

CGroup: /system.slice/kube-controller-manager.service

└─ /opt/kubernetes/bin/kube-controller-manager --logtostderr=false --v= --bind-address=192.168.1.6

Feb :: clean systemd[]: Started Kubernetes Controller Manager.

- Add the binary directory to the PATH environment variable: export PATH=$PATH:/opt/kubernetes/bin/

$ vim ~/.zshrc

......

export PATH=$PATH:/opt/kubernetes/bin/

$ source ~/.zshrc

- Run the following commands on any of the machines to check the cluster status

$ kubectl cluster-info

Kubernetes master is running at https://192.168.1.99:8443

To further debug and diagnose cluster problems, use 'kubectl cluster-info dump'.

$ kubectl get cs

NAME STATUS MESSAGE ERROR

scheduler Healthy ok

controller-manager Healthy ok

etcd-1 Healthy {"health":"true"}

etcd-2 Healthy {"health":"true"}

etcd-0 Healthy {"health":"true"}
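Reading the table by eye works for three etcd members, but the check can also be scripted. A minimal sketch, assuming the column layout shown above (NAME first, STATUS second); `unhealthy_components` is a hypothetical helper, not part of the original scripts:

```shell
#!/bin/bash
# Hypothetical helper: read `kubectl get cs` output on stdin and print the
# name of every component whose STATUS column is not "Healthy".
# The header row and empty lines are skipped.
unhealthy_components() {
  awk 'NR > 1 && NF > 0 && $2 != "Healthy" { print $1 }'
}

# Usage (requires a working kubectl, so it is left commented out here):
#   kubectl get cs | unhealthy_components
```

An empty result means every listed component reported Healthy.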

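2.13 Installing kubelet

- From this section on, every component installed is used by the Node role

- Bind the kubelet-bootstrap user to the system:node-bootstrapper role so that it can connect to kube-apiserver and request certificate signing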

$ kubectl create clusterrolebinding kubelet-bootstrap --clusterrole=system:node-bootstrapper --user=kubelet-bootstrap

clusterrolebinding.rbac.authorization.k8s.io/kubelet-bootstrap created

- On 192.168.1.67 (the machine where the kubeconfig files for all components were generated), copy bootstrap.kubeconfig to the target directory locally and on the other nodes:

$ cd k8s/kubeconfig

$ sudo cp bootstrap.kubeconfig /opt/kubernetes/cfg

$ sudo scp bootstrap.kubeconfig root@192.168.1.68:/opt/kubernetes/cfg

$ sudo scp bootstrap.kubeconfig root@192.168.1.69:/opt/kubernetes/cfg

- On all three nodes, run the k8s/scripts/kubelet.sh script to create and start the kubelet.service service. The first argument is the address of the Node and the second is the name under which the Node appears in kubernetes

$ sudo ./k8s/scripts/kubelet.sh 192.168.1.67 node1

$ sudo ./k8s/scripts/kubelet.sh 192.168.1.68 node2

$ sudo ./k8s/scripts/kubelet.sh 192.168.1.69 node3

- The script is as follows:

#!/bin/bash

# $1: address of this Node, $2: Node name shown in kubernetes,
# $3 (optional): cluster DNS server IP
NODE_ADDRESS=$1
NODE_NAME=$2
DNS_SERVER_IP=${3:-"10.254.0.2"}

systemctl stop kubelet
systemctl disable kubelet

# Write the options file that the systemd unit below reads
cat <<EOF >/opt/kubernetes/cfg/kubelet
KUBELET_OPTS="--logtostderr=true \\
--v= \\
--config=/opt/kubernetes/cfg/kubelet.config \\
--node-ip=${NODE_ADDRESS} \\
--bootstrap-kubeconfig=/opt/kubernetes/cfg/bootstrap.kubeconfig \\
--cert-dir=/opt/kubernetes/ssl/node \\
--hostname-override=${NODE_NAME} \\
--node-labels=node.kubernetes.io/k8s-master=true \\
--kubeconfig=/opt/kubernetes/cfg/kubelet.kubeconfig \\
--pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google-containers/pause-amd64:3.0"
EOF

# CNI options kept for reference but currently disabled
#--cni-bin-dir=/opt/cni/bin \\
#--cni-conf-dir=/opt/cni/net.d \\
#--network-plugin=cni \\

# Write the KubeletConfiguration file referenced by --config
cat <<EOF >/opt/kubernetes/cfg/kubelet.config
kind: KubeletConfiguration
apiVersion: kubelet.config.k8s.io/v1beta1
address: ${NODE_ADDRESS}
port:
readOnlyPort:
cgroupDriver: cgroupfs
clusterDNS:
- ${DNS_SERVER_IP}
clusterDomain: cluster.local.
failSwapOn: false
authentication:
  anonymous:
    enabled: false
  webhook:
    enabled: true
  x509:
    clientCAFile: "/opt/kubernetes/ssl/ca.pem"
authorization:
  mode: Webhook
EOF

# Write the systemd unit file
cat <<EOF >/usr/lib/systemd/system/kubelet.service
[Unit]
Description=Kubernetes Kubelet
After=docker.service
Requires=docker.service

[Service]
EnvironmentFile=/opt/kubernetes/cfg/kubelet
ExecStart=/opt/kubernetes/bin/kubelet \$KUBELET_OPTS
Restart=on-failure
KillMode=process

[Install]
WantedBy=multi-user.target
EOF

systemctl daemon-reload
systemctl enable kubelet
systemctl restart kubelet

- Check the pending certificate signing requests

$ kubectl get csr

NAME AGE REQUESTOR CONDITION

node-csr-HHJMkN9RvwkgTkWGJThtsIPPlexh1Ci5vyOcjEhwk5c 29s kubelet-bootstrap Pending

node-csr-Ih-JtbfHPzP8u0_YI0By7RWMPCEfaEpapi47kil1YbU 4s kubelet-bootstrap Pending

node-csr-eyb0y_uxEWgPHnUQ2DyEhCK09AkirUp11O3b40zFyAQ 1s kubelet-bootstrap Pending

- Approve the requests so that the certificates are issued

$ kubectl certificate approve node-csr-HHJMkN9RvwkgTkWGJThtsIPPlexh1Ci5vyOcjEhwk5c node-csr-Ih-JtbfHPzP8u0_YI0By7RWMPCEfaEpapi47kil1YbU node-csr-eyb0y_uxEWgPHnUQ2DyEhCK09AkirUp11O3b40zFyAQ

certificatesigningrequest.certificates.k8s.io/node-csr-HHJMkN9RvwkgTkWGJThtsIPPlexh1Ci5vyOcjEhwk5c approved

certificatesigningrequest.certificates.k8s.io/node-csr-Ih-JtbfHPzP8u0_YI0By7RWMPCEfaEpapi47kil1YbU approved

certificatesigningrequest.certificates.k8s.io/node-csr-eyb0y_uxEWgPHnUQ2DyEhCK09AkirUp11O3b40zFyAQ approved

$ kubectl get csr

NAME AGE REQUESTOR CONDITION

node-csr-HHJMkN9RvwkgTkWGJThtsIPPlexh1Ci5vyOcjEhwk5c 3m37s kubelet-bootstrap Approved,Issued

node-csr-Ih-JtbfHPzP8u0_YI0By7RWMPCEfaEpapi47kil1YbU 3m12s kubelet-bootstrap Approved,Issued

node-csr-eyb0y_uxEWgPHnUQ2DyEhCK09AkirUp11O3b40zFyAQ 3m9s kubelet-bootstrap Approved,Issued
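Copying the three generated request names by hand is error-prone. A minimal sketch of picking out the pending ones automatically, assuming CONDITION is the last column of `kubectl get csr` as in the output above; `pending_csrs` is a hypothetical helper, not part of the original scripts:

```shell
#!/bin/bash
# Hypothetical helper: read `kubectl get csr` output on stdin and print the
# NAME of every request whose last column (CONDITION) is exactly "Pending".
pending_csrs() {
  awk 'NR > 1 && $NF == "Pending" { print $1 }'
}

# Usage (requires a working kubectl, so it is left commented out here;
# -r is the GNU xargs flag that skips the command when there is no input):
#   kubectl get csr | pending_csrs | xargs -r kubectl certificate approve
```

Requests that are already "Approved,Issued" are left alone, so the pipeline can be re-run safely as further nodes join.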

- List the cluster nodes

$ kubectl get node

NAME STATUS ROLES AGE VERSION

node1 Ready <none> 2m36s v1.18.0-alpha.5.158+1c60045db0bd6e

node2 Ready <none> 2m36s v1.18.0-alpha.5.158+1c60045db0bd6e

node3 Ready <none> 2m36s v1.18.0-alpha.5.158+1c60045db0bd6e

- The nodes are in the Ready state, which means they joined successfully

- Because these Nodes also act as Masters, label them accordingly

$ kubectl label node node1 node2 node3 node-role.kubernetes.io/master=true

node/node1 labeled

node/node2 labeled

node/node3 labeled

$ kubectl get node

NAME STATUS ROLES AGE VERSION

node1 Ready master 3m36s v1.18.0-alpha.5.158+1c60045db0bd6e

node2 Ready master 3m36s v1.18.0-alpha.5.158+1c60045db0bd6e

node3 Ready master 3m36s v1.18.0-alpha.5.158+1c60045db0bd6e

- Allow Pods to be scheduled on the Master nodes:

$ kubectl taint nodes --all node-role.kubernetes.io/master=true:NoSchedule

$ kubectl taint nodes node1 node2 node3 node-role.kubernetes.io/master-

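- Note: in theory the second command alone should suffice, but in practice it fails with the error 'taint "node-role.kubernetes.io/master" not found', so the first command is run beforehand. The error only means that the nodes do not carry that taint yet, which is expected here because nothing in this binary deployment has added it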
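An alternative to adding the taint just to remove it again is to check whether it is present first. A minimal sketch, assuming the taint keys are read with the jsonpath expression shown in the comment; `has_taint` is a hypothetical helper, not part of the original scripts:

```shell
#!/bin/bash
# Hypothetical helper: read space-separated taint keys on stdin and succeed
# (exit status 0) only if the key given as $1 is among them.
has_taint() {
  tr ' ' '\n' | grep -qxF -- "$1"
}

# Usage (requires a working kubectl, so it is left commented out here):
#   for n in node1 node2 node3; do
#     if kubectl get node "$n" -o jsonpath='{.spec.taints[*].key}' \
#          | has_taint node-role.kubernetes.io/master; then
#       kubectl taint nodes "$n" node-role.kubernetes.io/master-
#     fi
#   done
```

With this guard the removal command only runs on nodes that actually carry the taint, so the "not found" error does not occur.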

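2.14 Installing kube-proxy

- On 192.168.1.67 (the machine where the kubeconfig files for all components were generated), copy kube-proxy.kubeconfig to the target directory locally and on the other nodes: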

$ cd k8s/kubeconfig

$ sudo cp kube-proxy.kubeconfig /opt/kubernetes/cfg

$ sudo scp kube-proxy.kubeconfig root@192.168.1.68:/opt/kubernetes/cfg

$ sudo scp kube-proxy.kubeconfig root@192.168.1.69:/opt/kubernetes/cfg

- On all three nodes, run the k8s/scripts/proxy.sh script to create and start the kube-proxy.service service. The first argument is the Node display name, which must match the Node name given to kubelet

$ ./k8s/scripts/proxy.sh node1

$ ./k8s/scripts/proxy.sh node2

$ ./k8s/scripts/proxy.sh node3

- The script is as follows:

#!/bin/bash

# $1: Node name, matching the name passed to kubelet.sh
NODE_NAME=$1

systemctl stop kube-proxy
systemctl disable kube-proxy

# Write the options file that the systemd unit below reads
cat <<EOF >/opt/kubernetes/cfg/kube-proxy
KUBE_PROXY_OPTS="--logtostderr=true \\
--v= \\
--bind-address=0.0.0.0 \\
--hostname-override=${NODE_NAME} \\
--cleanup-ipvs=true \\
--cluster-cidr=10.254.0.0/ \\
--proxy-mode=ipvs \\
--ipvs-min-sync-period=5s \\
--ipvs-sync-period=5s \\
--ipvs-scheduler=wrr \\
--masquerade-all=true \\
--kubeconfig=/opt/kubernetes/cfg/kube-proxy.kubeconfig"
EOF

# Write the systemd unit file
cat <<EOF >/usr/lib/systemd/system/kube-proxy.service
[Unit]
Description=Kubernetes Proxy
After=network.target

[Service]
EnvironmentFile=-/opt/kubernetes/cfg/kube-proxy
ExecStart=/opt/kubernetes/bin/kube-proxy \$KUBE_PROXY_OPTS
Restart=on-failure

[Install]
WantedBy=multi-user.target
EOF

systemctl daemon-reload
systemctl enable kube-proxy
systemctl restart kube-proxy

- The script creates the kube-proxy.service service; check its status

$ sudo systemctl status kube-proxy.service

● kube-proxy.service - Kubernetes Proxy

Loaded: loaded (/usr/lib/systemd/system/kube-proxy.service; enabled; vendor preset: enabled)

Active: active (running) since Tue -- :: UTC; 12s ago

Main PID: (kube-proxy)

Tasks: (limit: )

CGroup: /system.slice/kube-proxy.service

└─ /opt/kubernetes/bin/kube-proxy --logtostderr=true --v= --bind-address=0.0.0.0 --hostname-overri

Feb :: master3 kube-proxy[]: I0225 ::01.877754 config.go:] Calling handler.OnEndpoints

Feb :: master3 kube-proxy[]: I0225 ::02.027867 config.go:] Calling handler.OnEndpoints

Feb :: master3 kube-proxy[]: I0225 ::03.906364 config.go:] Calling handler.OnEndpoints

Feb :: master3 kube-proxy[]: I0225 ::04.058010 config.go:] Calling handler.OnEndpoints

Feb :: master3 kube-proxy[]: I0225 ::05.937519 config.go:] Calling handler.OnEndpoints

Feb :: master3 kube-proxy[]: I0225 ::06.081698 config.go:] Calling handler.OnEndpoints

Feb :: master3 kube-proxy[]: I0225 ::07.970036 config.go:] Calling handler.OnEndpoints

Feb :: master3 kube-proxy[]: I0225 ::08.118982 config.go:] Calling handler.OnEndpoints

Feb :: master3 kube-proxy[]: I0225 ::09.996659 config.go:] Calling handler.OnEndpoints

Feb :: master3 kube-proxy[]: I0225 ::10.148146 config.go:] Calling handler.OnEndpoints


2.15 Verifying the installation

- Create the yaml file

$ mkdir -p k8s/yamls

$ cd k8s/yamls

$ vim nginx-deployment.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-deployment
spec:
  selector:
    matchLabels:
      app: nginx
  replicas: 2
  template:
    metadata:
      labels:
        app: nginx
    spec:
      containers:
      - name: nginx
        image: nginx:1.7.9
        ports:
        - containerPort: 80

- Create the deployment object and list the resulting Pods; once they reach the Running state, they have been created successfully

$ kubectl apply -f nginx-deployment.yaml

$ kubectl get pod

NAME READY STATUS RESTARTS AGE

nginx-deployment-54f57cf6bf-6d4n5 / Running 5s

nginx-deployment-54f57cf6bf-zzdv4 / Running 5s

- Show the details of a Pod

$ kubectl describe pod nginx-deployment-54f57cf6bf-6d4n5

Name: nginx-deployment-54f57cf6bf-6d4n5

Namespace: default

Priority:

Node: node3/192.168.1.69

Start Time: Tue, Feb :: +

Labels: app=nginx

pod-template-hash=54f57cf6bf

Annotations: <none>

Status: Running

IP: 172.17.89.2

IPs:

IP: 172.17.89.2

Controlled By: ReplicaSet/nginx-deployment-54f57cf6bf

Containers:

nginx:

Container ID: docker://222b1dd1bb57fdd36b4eda31100477531f94a82c844a2f042c444f0a710faf20

Image: nginx:1.7.9

Image ID: docker-pullable://nginx@sha256:e3456c851a152494c3e4ff5fcc26f240206abac0c9d794affb40e0714846c451

Port: /TCP

Host Port: /TCP

State: Running

Started: Tue, Feb :: +

Ready: True

Restart Count:

Environment: <none>

Mounts:

/var/run/secrets/kubernetes.io/serviceaccount from default-token-p92fn (ro)

Conditions:

Type Status

Initialized True

Ready True

ContainersReady True

PodScheduled True

Volumes:

default-token-p92fn:

Type: Secret (a volume populated by a Secret)

SecretName: default-token-p92fn

Optional: false

QoS Class: BestEffort

Node-Selectors: <none>

Tolerations: node.kubernetes.io/not-ready:NoExecute for 300s

node.kubernetes.io/unreachable:NoExecute for 300s

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal Scheduled <unknown> default-scheduler Successfully assigned default/nginx-deployment-54f57cf6bf-6d4n5 to node3

Normal Pulled 117s kubelet, node3 Container image "nginx:1.7.9" already present on machine

Normal Created 117s kubelet, node3 Created container nginx

Normal Started 117s kubelet, node3 Started container nginx

- If the following error is reported while inspecting the Pod:

Error from server (Forbidden): Forbidden (user=system:anonymous, verb=get, resource=nodes, subresource=proxy)

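- then bind the cluster-admin role to the anonymous user. Note that this gives every unauthenticated request full administrative rights, so it is only acceptable in a throwaway test cluster: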

$ kubectl create clusterrolebinding system:anonymous --clusterrole=cluster-admin --user=system:anonymous

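3. Summary

- In this deployment kubelet is started without a CNI network plugin, so Pods cannot be reached across nodes; this will be added in a later article

- All of the scripts above have been uploaded to a GitHub repository

- Questions and criticism are welcome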
