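Installing Kafka and Getting Started

Introduction

Kafka has come up a lot in the projects I have been working on recently. To keep things organized, I plan to write a series of notes on Kafka starting today, and the first one, naturally, covers how to install it.

Apache Kafka is a distributed publish-subscribe messaging system.

Compared with traditional messaging systems, Apache Kafka differs in the following ways:

- It is designed as a distributed system and is easy to scale out;

- It offers high throughput for both publishing and subscribing;

- It supports multiple subscribers and automatically rebalances consumers when one fails;

- It persists messages to disk, so it can serve batch consumption such as ETL as well as real-time applications.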
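
To make the consumer rebalancing point a bit more concrete, here is a toy sketch in Python. It is not Kafka's actual assignment algorithm; the function name `assign_partitions` and the consumer names are made up for illustration. It spreads partitions round-robin over the live consumers and recomputes the assignment when one of them fails.

```python
from typing import Dict, List


def assign_partitions(partitions: List[int], consumers: List[str]) -> Dict[str, List[int]]:
    """Spread partitions round-robin over consumers (illustrative only)."""
    assignment: Dict[str, List[int]] = {c: [] for c in consumers}
    if not consumers:
        return assignment
    ordered = sorted(consumers)
    for i, p in enumerate(sorted(partitions)):
        assignment[ordered[i % len(ordered)]].append(p)
    return assignment


partitions = [0, 1, 2, 3, 4, 5]
before = assign_partitions(partitions, ["c1", "c2", "c3"])
# Simulate consumer c2 failing: the remaining consumers take over its partitions.
after = assign_partitions(partitions, ["c1", "c3"])
print(before)
print(after)
```

After the simulated failure every partition still has exactly one owner, which is the property a rebalance is meant to restore.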

Installing Kafka

Download URL: http://mirrors.tuna.tsinghua.edu.cn/apache/kafka/2.3.0/kafka_2.11-2.3.0.tgz

wget http://mirrors.tuna.tsinghua.edu.cn/apache/kafka/2.3.0/kafka_2.11-2.3.0.tgz

Extract the archive

tar -zxvf kafka_2.11-2.3.0.tgz

cd /usr/local/kafka_2.11-2.3.0/

Edit the Kafka server configuration file

vi /usr/local/kafka_2.11-2.3.0/config/server.properties

Change the following settings

broker.id=

log.dirs=/data/kafka/logs-

Of course, you can also leave these unchanged; for a demo or test, the default configuration is fine.
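
If you would rather script this edit than make it by hand in vi, something along these lines could work. This is only a sketch: the sample text is a cut-down stand-in for config/server.properties (I am assuming the stock values `broker.id=0`, `log.dirs=/tmp/kafka-logs` and `zookeeper.connect=localhost:2181`), the override values are hypothetical, and the helper names are my own. It uses the `log.dirs` key, which is the one that shows the custom path in the startup log further down.

```python
from typing import Dict


def parse_properties(text: str) -> Dict[str, str]:
    """Parse simple key=value lines, skipping blanks and # comments."""
    props: Dict[str, str] = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        key, _, value = line.partition("=")
        props[key.strip()] = value.strip()
    return props


def render_properties(props: Dict[str, str]) -> str:
    """Write the settings back out, one key=value per line."""
    return "\n".join(f"{k}={v}" for k, v in props.items()) + "\n"


# A cut-down stand-in for config/server.properties (not the full file).
sample = """
# The id of the broker.
broker.id=0
log.dirs=/tmp/kafka-logs
zookeeper.connect=localhost:2181
"""

props = parse_properties(sample)
# Hypothetical overrides; pick values that suit your machine.
props.update({"broker.id": "1", "log.dirs": "/data/kafka/logs-1"})
print(render_properties(props))
```

Note that this simple round trip drops comments, so for a real file you may prefer to edit in place.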

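Overview of Common Operations

1. Start ZooKeeper

Use the script bundled with the distribution to start a single-node ZooKeeper instance:

sh bin/zookeeper-server-start.sh -daemon config/zookeeper.properties

2. Start the Kafka service

Use kafka-server-start.sh to start the Kafka service:

sh bin/kafka-server-start.sh config/server.properties

Startup log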

[-- ::,] INFO Registered kafka:type=kafka.Log4jController MBean (kafka.utils.Log4jControllerRegistration$)

[-- ::,] INFO Registered signal handlers for TERM, INT, HUP (org.apache.kafka.common.utils.LoggingSignalHandler)

[-- ::,] INFO starting (kafka.server.KafkaServer)

[-- ::,] INFO Connecting to zookeeper on localhost: (kafka.server.KafkaServer)

[-- ::,] INFO [ZooKeeperClient Kafka server] Initializing a new session to localhost:. (kafka.zookeeper.ZooKeeperClient)

[-- ::,] INFO Client environment:zookeeper.version=3.4.-4c25d480e66aadd371de8bd2fd8da255ac140bcf, built on // : GMT (org.apache.zookeeper.ZooKeeper)

[-- ::,] INFO Client environment:host.name=localhost (org.apache.zookeeper.ZooKeeper)

[-- ::,] INFO Client environment:java.version=1.8.0_212 (org.apache.zookeeper.ZooKeeper)

[-- ::,] INFO Client environment:java.vendor=Oracle Corporation (org.apache.zookeeper.ZooKeeper)

[-- ::,] INFO Client environment:java.home=/usr/java/jdk1..0_212-amd64/jre (org.apache.zookeeper.ZooKeeper)

[-- ::,] INFO Client environment:java.class.path=.:/usr/java/default/lib/dt.jar:/usr/java/default/lib/tools.jar:/usr/local/kafka_2.-2.3./bin/../libs/activation-1.1..jar:/usr/local/kafka_2.-2.3./bin/../libs/aopalliance-repackaged-2.5..jar:/usr/local/kafka_2.-2.3./bin/../libs/argparse4j-0.7..jar:/usr/local/kafka_2.-2.3./bin/../libs/audience-annotations-0.5..jar:/usr/local/kafka_2.-2.3./bin/../libs/commons-lang3-3.8..jar:/usr/local/kafka_2.-2.3./bin/../libs/connect-api-2.3..jar:/usr/local/kafka_2.-2.3./bin/../libs/connect-basic-auth-extension-2.3..jar:/usr/local/kafka_2.-2.3./bin/../libs/connect-file-2.3..jar:/usr/local/kafka_2.-2.3./bin/../libs/connect-json-2.3..jar:/usr/local/kafka_2.-2.3./bin/../libs/connect-runtime-2.3..jar:/usr/local/kafka_2.-2.3./bin/../libs/connect-transforms-2.3..jar:/usr/local/kafka_2.-2.3./bin/../libs/guava-20.0.jar:/usr/local/kafka_2.-2.3./bin/../libs/hk2-api-2.5..jar:/usr/local/kafka_2.-2.3./bin/../libs/hk2-locator-2.5..jar:/usr/local/kafka_2.-2.3./bin/../libs/hk2-utils-2.5..jar:/usr/local/kafka_2.-2.3./bin/../libs/jackson-annotations-2.9..jar:/usr/local/kafka_2.-2.3./bin/../libs/jackson-core-2.9..jar:/usr/local/kafka_2.-2.3./bin/../libs/jackson-databind-2.9..jar:/usr/local/kafka_2.-2.3./bin/../libs/jackson-dataformat-csv-2.9..jar:/usr/local/kafka_2.-2.3./bin/../libs/jackson-datatype-jdk8-2.9..jar:/usr/local/kafka_2.-2.3./bin/../libs/jackson-jaxrs-base-2.9..jar:/usr/local/kafka_2.-2.3./bin/../libs/jackson-jaxrs-json-provider-2.9..jar:/usr/local/kafka_2.-2.3./bin/../libs/jackson-module-jaxb-annotations-2.9..jar:/usr/local/kafka_2.-2.3./bin/../libs/jackson-module-paranamer-2.9..jar:/usr/local/kafka_2.-2.3./bin/../libs/jackson-module-scala_2.-2.9..jar:/usr/local/kafka_2.-2.3./bin/../libs/jakarta.annotation-api-1.3..jar:/usr/local/kafka_2.-2.3./bin/../libs/jakarta.inject-2.5..jar:/usr/local/kafka_2.-2.3./bin/../libs/jakarta.ws.rs-api-2.1..jar:/usr/local/kafka_2.-2.3./bin/../libs/javassist-3.22.-CR2.jar:/usr/local/kafka_2.-2.3./bin/../libs/javax
.servlet-api-3.1..jar:/usr/local/kafka_2.-2.3./bin/../libs/javax.ws.rs-api-2.1..jar:/usr/local/kafka_2.-2.3./bin/../libs/jaxb-api-2.3..jar:/usr/local/kafka_2.-2.3./bin/../libs/jersey-client-2.28.jar:/usr/local/kafka_2.-2.3./bin/../libs/jersey-common-2.28.jar:/usr/local/kafka_2.-2.3./bin/../libs/jersey-container-servlet-2.28.jar:/usr/local/kafka_2.-2.3./bin/../libs/jersey-container-servlet-core-2.28.jar:/usr/local/kafka_2.-2.3./bin/../libs/jersey-hk2-2.28.jar:/usr/local/kafka_2.-2.3./bin/../libs/jersey-media-jaxb-2.28.jar:/usr/local/kafka_2.-2.3./bin/../libs/jersey-server-2.28.jar:/usr/local/kafka_2.-2.3./bin/../libs/jetty-client-9.4..v20190429.jar:/usr/local/kafka_2.-2.3./bin/../libs/jetty-continuation-9.4..v20190429.jar:/usr/local/kafka_2.-2.3./bin/../libs/jetty-http-9.4..v20190429.jar:/usr/local/kafka_2.-2.3./bin/../libs/jetty-io-9.4..v20190429.jar:/usr/local/kafka_2.-2.3./bin/../libs/jetty-security-9.4..v20190429.jar:/usr/local/kafka_2.-2.3./bin/../libs/jetty-server-9.4..v20190429.jar:/usr/local/kafka_2.-2.3./bin/../libs/jetty-servlet-9.4..v20190429.jar:/usr/local/kafka_2.-2.3./bin/../libs/jetty-servlets-9.4..v20190429.jar:/usr/local/kafka_2.-2.3./bin/../libs/jetty-util-9.4..v20190429.jar:/usr/local/kafka_2.-2.3./bin/../libs/jopt-simple-5.0..jar:/usr/local/kafka_2.-2.3./bin/../libs/jsr305-3.0..jar:/usr/local/kafka_2.-2.3./bin/../libs/kafka_2.-2.3..jar:/usr/local/kafka_2.-2.3./bin/../libs/kafka_2.-2.3.-sources.jar:/usr/local/kafka_2.-2.3./bin/../libs/kafka-clients-2.3..jar:/usr/local/kafka_2.-2.3./bin/../libs/kafka-log4j-appender-2.3..jar:/usr/local/kafka_2.-2.3./bin/../libs/kafka-streams-2.3..jar:/usr/local/kafka_2.-2.3./bin/../libs/kafka-streams-examples-2.3..jar:/usr/local/kafka_2.-2.3./bin/../libs/kafka-streams-scala_2.-2.3..jar:/usr/local/kafka_2.-2.3./bin/../libs/kafka-streams-test-utils-2.3..jar:/usr/local/kafka_2.-2.3./bin/../libs/kafka-tools-2.3..jar:/usr/local/kafka_2.-2.3./bin/../libs/log4j-1.2..jar:/usr/local/kafka_2.-2.3./bin/../libs/lz4-java-1.6..jar
:/usr/local/kafka_2.-2.3./bin/../libs/maven-artifact-3.6..jar:/usr/local/kafka_2.-2.3./bin/../libs/metrics-core-2.2..jar:/usr/local/kafka_2.-2.3./bin/../libs/osgi-resource-locator-1.0..jar:/usr/local/kafka_2.-2.3./bin/../libs/paranamer-2.8.jar:/usr/local/kafka_2.-2.3./bin/../libs/plexus-utils-3.2..jar:/usr/local/kafka_2.-2.3./bin/../libs/reflections-0.9..jar:/usr/local/kafka_2.-2.3./bin/../libs/rocksdbjni-5.18..jar:/usr/local/kafka_2.-2.3./bin/../libs/scala-library-2.11..jar:/usr/local/kafka_2.-2.3./bin/../libs/scala-logging_2.-3.9..jar:/usr/local/kafka_2.-2.3./bin/../libs/scala-reflect-2.11..jar:/usr/local/kafka_2.-2.3./bin/../libs/slf4j-api-1.7..jar:/usr/local/kafka_2.-2.3./bin/../libs/slf4j-log4j12-1.7..jar:/usr/local/kafka_2.-2.3./bin/../libs/snappy-java-1.1.7.3.jar:/usr/local/kafka_2.-2.3./bin/../libs/spotbugs-annotations-3.1..jar:/usr/local/kafka_2.-2.3./bin/../libs/validation-api-2.0..Final.jar:/usr/local/kafka_2.-2.3./bin/../libs/zkclient-0.11.jar:/usr/local/kafka_2.-2.3./bin/../libs/zookeeper-3.4..jar:/usr/local/kafka_2.-2.3./bin/../libs/zstd-jni-1.4.-.jar (org.apache.zookeeper.ZooKeeper)

[-- ::,] INFO Client environment:java.library.path=/usr/java/packages/lib/amd64:/usr/lib64:/lib64:/lib:/usr/lib (org.apache.zookeeper.ZooKeeper)

[-- ::,] INFO Client environment:java.io.tmpdir=/tmp (org.apache.zookeeper.ZooKeeper)

[-- ::,] INFO Client environment:java.compiler=<NA> (org.apache.zookeeper.ZooKeeper)

[-- ::,] INFO Client environment:os.name=Linux (org.apache.zookeeper.ZooKeeper)

[-- ::,] INFO Client environment:os.arch=amd64 (org.apache.zookeeper.ZooKeeper)

[-- ::,] INFO Client environment:os.version=3.10.-.el7.x86_64 (org.apache.zookeeper.ZooKeeper)

[-- ::,] INFO Client environment:user.name=root (org.apache.zookeeper.ZooKeeper)

[-- ::,] INFO Client environment:user.home=/root (org.apache.zookeeper.ZooKeeper)

[-- ::,] INFO Client environment:user.dir=/usr/local/kafka_2.-2.3./bin (org.apache.zookeeper.ZooKeeper)

[-- ::,] INFO Initiating client connection, connectString=localhost: sessionTimeout= watcher=kafka.zookeeper.ZooKeeperClient$ZooKeeperClientWatcher$@6302bbb1 (org.apache.zookeeper.ZooKeeper)

[-- ::,] INFO Opening socket connection to server localhost/::::::::. Will not attempt to authenticate using SASL (unknown error) (org.apache.zookeeper.ClientCnxn)

[-- ::,] INFO Socket connection established to localhost/::::::::, initiating session (org.apache.zookeeper.ClientCnxn)

[-- ::,] INFO [ZooKeeperClient Kafka server] Waiting until connected. (kafka.zookeeper.ZooKeeperClient)

[-- ::,] INFO Session establishment complete on server localhost/::::::::, sessionid = 0x1000016e9450000, negotiated timeout = (org.apache.zookeeper.ClientCnxn)

[-- ::,] INFO [ZooKeeperClient Kafka server] Connected. (kafka.zookeeper.ZooKeeperClient)

[-- ::,] INFO Cluster ID = SWLs93NzQPekqvdj7KmbmQ (kafka.server.KafkaServer)

[-- ::,] WARN No meta.properties file under dir /data/kafka/logs-/meta.properties (kafka.server.BrokerMetadataCheckpoint)

[-- ::,] INFO KafkaConfig values:

advertised.host.name = null

advertised.listeners = null

advertised.port = null

alter.config.policy.class.name = null

alter.log.dirs.replication.quota.window.num =

alter.log.dirs.replication.quota.window.size.seconds =

authorizer.class.name =

auto.create.topics.enable = true

auto.leader.rebalance.enable = true

background.threads =

broker.id =

broker.id.generation.enable = true

broker.rack = null

client.quota.callback.class = null

compression.type = producer

connection.failed.authentication.delay.ms =

connections.max.idle.ms =

connections.max.reauth.ms =

control.plane.listener.name = null

controlled.shutdown.enable = true

controlled.shutdown.max.retries =

controlled.shutdown.retry.backoff.ms =

controller.socket.timeout.ms =

create.topic.policy.class.name = null

default.replication.factor =

delegation.token.expiry.check.interval.ms =

delegation.token.expiry.time.ms =

delegation.token.master.key = null

delegation.token.max.lifetime.ms =

delete.records.purgatory.purge.interval.requests =

delete.topic.enable = true

fetch.purgatory.purge.interval.requests =

group.initial.rebalance.delay.ms =

group.max.session.timeout.ms =

group.max.size =

group.min.session.timeout.ms =

host.name =

inter.broker.listener.name = null

inter.broker.protocol.version = 2.3-IV1

kafka.metrics.polling.interval.secs =

kafka.metrics.reporters = []

leader.imbalance.check.interval.seconds =

leader.imbalance.per.broker.percentage =

listener.security.protocol.map = PLAINTEXT:PLAINTEXT,SSL:SSL,SASL_PLAINTEXT:SASL_PLAINTEXT,SASL_SSL:SASL_SSL

listeners = null

log.cleaner.backoff.ms =

log.cleaner.dedupe.buffer.size =

log.cleaner.delete.retention.ms =

log.cleaner.enable = true

log.cleaner.io.buffer.load.factor = 0.9

log.cleaner.io.buffer.size =

log.cleaner.io.max.bytes.per.second = 1.7976931348623157E308

log.cleaner.max.compaction.lag.ms =

log.cleaner.min.cleanable.ratio = 0.5

log.cleaner.min.compaction.lag.ms =

log.cleaner.threads =

log.cleanup.policy = [delete]

log.dir = /tmp/kafka-logs

log.dirs = /data/kafka/logs-

log.flush.interval.messages =

log.flush.interval.ms = null

log.flush.offset.checkpoint.interval.ms =

log.flush.scheduler.interval.ms =

log.flush.start.offset.checkpoint.interval.ms =

log.index.interval.bytes =

log.index.size.max.bytes =

log.message.downconversion.enable = true

log.message.format.version = 2.3-IV1

log.message.timestamp.difference.max.ms =

log.message.timestamp.type = CreateTime

log.preallocate = false

log.retention.bytes = -

log.retention.check.interval.ms =

log.retention.hours =

log.retention.minutes = null

log.retention.ms = null

log.roll.hours =

log.roll.jitter.hours =

log.roll.jitter.ms = null

log.roll.ms = null

log.segment.bytes =

log.segment.delete.delay.ms =

max.connections =

max.connections.per.ip =

max.connections.per.ip.overrides =

max.incremental.fetch.session.cache.slots =

message.max.bytes =

metric.reporters = []

metrics.num.samples =

metrics.recording.level = INFO

metrics.sample.window.ms =

min.insync.replicas =

num.io.threads =

num.network.threads =

num.partitions =

num.recovery.threads.per.data.dir =

num.replica.alter.log.dirs.threads = null

num.replica.fetchers =

offset.metadata.max.bytes =

offsets.commit.required.acks = -

offsets.commit.timeout.ms =

offsets.load.buffer.size =

offsets.retention.check.interval.ms =

offsets.retention.minutes =

offsets.topic.compression.codec =

offsets.topic.num.partitions =

offsets.topic.replication.factor =

offsets.topic.segment.bytes =

password.encoder.cipher.algorithm = AES/CBC/PKCS5Padding

password.encoder.iterations =

password.encoder.key.length =

password.encoder.keyfactory.algorithm = null

password.encoder.old.secret = null

password.encoder.secret = null

port =

principal.builder.class = null

producer.purgatory.purge.interval.requests =

queued.max.request.bytes = -

queued.max.requests =

quota.consumer.default =

quota.producer.default =

quota.window.num =

quota.window.size.seconds =

replica.fetch.backoff.ms =

replica.fetch.max.bytes =

replica.fetch.min.bytes =

replica.fetch.response.max.bytes =

replica.fetch.wait.max.ms =

replica.high.watermark.checkpoint.interval.ms =

replica.lag.time.max.ms =

replica.socket.receive.buffer.bytes =

replica.socket.timeout.ms =

replication.quota.window.num =

replication.quota.window.size.seconds =

request.timeout.ms =

reserved.broker.max.id =

sasl.client.callback.handler.class = null

sasl.enabled.mechanisms = [GSSAPI]

sasl.jaas.config = null

sasl.kerberos.kinit.cmd = /usr/bin/kinit

sasl.kerberos.min.time.before.relogin =

sasl.kerberos.principal.to.local.rules = [DEFAULT]

sasl.kerberos.service.name = null

sasl.kerberos.ticket.renew.jitter = 0.05

sasl.kerberos.ticket.renew.window.factor = 0.8

sasl.login.callback.handler.class = null

sasl.login.class = null

sasl.login.refresh.buffer.seconds =

sasl.login.refresh.min.period.seconds =

sasl.login.refresh.window.factor = 0.8

sasl.login.refresh.window.jitter = 0.05

sasl.mechanism.inter.broker.protocol = GSSAPI

sasl.server.callback.handler.class = null

security.inter.broker.protocol = PLAINTEXT

socket.receive.buffer.bytes =

socket.request.max.bytes =

socket.send.buffer.bytes =

ssl.cipher.suites = []

ssl.client.auth = none

ssl.enabled.protocols = [TLSv1., TLSv1., TLSv1]

ssl.endpoint.identification.algorithm = https

ssl.key.password = null

ssl.keymanager.algorithm = SunX509

ssl.keystore.location = null

ssl.keystore.password = null

ssl.keystore.type = JKS

ssl.principal.mapping.rules = [DEFAULT]

ssl.protocol = TLS

ssl.provider = null

ssl.secure.random.implementation = null

ssl.trustmanager.algorithm = PKIX

ssl.truststore.location = null

ssl.truststore.password = null

ssl.truststore.type = JKS

transaction.abort.timed.out.transaction.cleanup.interval.ms =

transaction.max.timeout.ms =

transaction.remove.expired.transaction.cleanup.interval.ms =

transaction.state.log.load.buffer.size =

transaction.state.log.min.isr =

transaction.state.log.num.partitions =

transaction.state.log.replication.factor =

transaction.state.log.segment.bytes =

transactional.id.expiration.ms =

unclean.leader.election.enable = false

zookeeper.connect = localhost:

zookeeper.connection.timeout.ms =

zookeeper.max.in.flight.requests =

zookeeper.session.timeout.ms =

zookeeper.set.acl = false

zookeeper.sync.time.ms =

(kafka.server.KafkaConfig)

[-- ::,] INFO [ThrottledChannelReaper-Fetch]: Starting (kafka.server.ClientQuotaManager$ThrottledChannelReaper)

[-- ::,] INFO [ThrottledChannelReaper-Produce]: Starting (kafka.server.ClientQuotaManager$ThrottledChannelReaper)

[-- ::,] INFO [ThrottledChannelReaper-Request]: Starting (kafka.server.ClientQuotaManager$ThrottledChannelReaper)

[-- ::,] INFO Log directory /data/kafka/logs- not found, creating it. (kafka.log.LogManager)

[-- ::,] INFO Loading logs. (kafka.log.LogManager)

[-- ::,] INFO Logs loading complete in ms. (kafka.log.LogManager)

[-- ::,] INFO Starting log cleanup with a period of ms. (kafka.log.LogManager)

[-- ::,] INFO Starting log flusher with a default period of ms. (kafka.log.LogManager)

[-- ::,] INFO Awaiting socket connections on 0.0.0.0:. (kafka.network.Acceptor)

[-- ::,] INFO [SocketServer brokerId=] Created data-plane acceptor and processors for endpoint : EndPoint(null,,ListenerName(PLAINTEXT),PLAINTEXT) (kafka.network.SocketServer)

[-- ::,] INFO [SocketServer brokerId=] Started acceptor threads for data-plane (kafka.network.SocketServer)

[-- ::,] INFO [ExpirationReaper--Produce]: Starting (kafka.server.DelayedOperationPurgatory$ExpiredOperationReaper)

[-- ::,] INFO [ExpirationReaper--Fetch]: Starting (kafka.server.DelayedOperationPurgatory$ExpiredOperationReaper)

[-- ::,] INFO [ExpirationReaper--DeleteRecords]: Starting (kafka.server.DelayedOperationPurgatory$ExpiredOperationReaper)

[-- ::,] INFO [ExpirationReaper--ElectPreferredLeader]: Starting (kafka.server.DelayedOperationPurgatory$ExpiredOperationReaper)

[-- ::,] INFO [LogDirFailureHandler]: Starting (kafka.server.ReplicaManager$LogDirFailureHandler)

[-- ::,] INFO Creating /brokers/ids/ (is it secure? false) (kafka.zk.KafkaZkClient)

[-- ::,] INFO Stat of the created znode at /brokers/ids/ is: ,,,,,,,,,,

(kafka.zk.KafkaZkClient)

[-- ::,] INFO Registered broker at path /brokers/ids/ with addresses: ArrayBuffer(EndPoint(localhost,,ListenerName(PLAINTEXT),PLAINTEXT)), czxid (broker epoch): (kafka.zk.KafkaZkClient)

[-- ::,] WARN No meta.properties file under dir /data/kafka/logs-/meta.properties (kafka.server.BrokerMetadataCheckpoint)

[-- ::,] INFO [ExpirationReaper--topic]: Starting (kafka.server.DelayedOperationPurgatory$ExpiredOperationReaper)

[-- ::,] INFO [ExpirationReaper--Heartbeat]: Starting (kafka.server.DelayedOperationPurgatory$ExpiredOperationReaper)

[-- ::,] INFO [ExpirationReaper--Rebalance]: Starting (kafka.server.DelayedOperationPurgatory$ExpiredOperationReaper)

[-- ::,] INFO Successfully created /controller_epoch with initial epoch (kafka.zk.KafkaZkClient)

[-- ::,] INFO [GroupCoordinator ]: Starting up. (kafka.coordinator.group.GroupCoordinator)

[-- ::,] INFO [GroupCoordinator ]: Startup complete. (kafka.coordinator.group.GroupCoordinator)

[-- ::,] INFO [GroupMetadataManager brokerId=] Removed expired offsets in milliseconds. (kafka.coordinator.group.GroupMetadataManager)

[-- ::,] INFO [ProducerId Manager ]: Acquired new producerId block (brokerId:,blockStartProducerId:,blockEndProducerId:) by writing to Zk with path version (kafka.coordinator.transaction.ProducerIdManager)

[-- ::,] INFO [TransactionCoordinator id=] Starting up. (kafka.coordinator.transaction.TransactionCoordinator)

[-- ::,] INFO [Transaction Marker Channel Manager ]: Starting (kafka.coordinator.transaction.TransactionMarkerChannelManager)

[-- ::,] INFO [TransactionCoordinator id=] Startup complete. (kafka.coordinator.transaction.TransactionCoordinator)

[-- ::,] INFO [/config/changes-event-process-thread]: Starting (kafka.common.ZkNodeChangeNotificationListener$ChangeEventProcessThread)

[-- ::,] INFO [SocketServer brokerId=] Started data-plane processors for acceptors (kafka.network.SocketServer)

[-- ::,] INFO Kafka version: 2.3. (org.apache.kafka.common.utils.AppInfoParser)

[-- ::,] INFO Kafka commitId: fc1aaa116b661c8a (org.apache.kafka.common.utils.AppInfoParser)

[-- ::,] INFO Kafka startTimeMs: (org.apache.kafka.common.utils.AppInfoParser)

[-- ::,] INFO [KafkaServer id=] started (kafka.server.KafkaServer)

[-- ::,] INFO [ReplicaFetcherManager on broker ] Removed fetcher for partitions Set(test-) (kafka.server.ReplicaFetcherManager)

[-- ::,] INFO [Log partition=test-, dir=/data/kafka/logs-] Loading producer state till offset with message format version (kafka.log.Log)

[-- ::,] INFO [Log partition=test-, dir=/data/kafka/logs-] Completed load of log with segments, log start offset and log end offset in ms (kafka.log.Log)

[-- ::,] INFO Created log for partition test- in /data/kafka/logs- with properties {compression.type -> producer, message.downconversion.enable -> true, min.insync.replicas -> , segment.jitter.ms -> , cleanup.policy -> [delete], flush.ms -> , segment.bytes -> , retention.ms -> , flush.messages -> , message.format.version -> 2.3-IV1, file.delete.delay.ms -> , max.compaction.lag.ms -> , max.message.bytes -> , min.compaction.lag.ms -> , message.timestamp.type -> CreateTime, preallocate -> false, min.cleanable.dirty.ratio -> 0.5, index.interval.bytes -> , unclean.leader.election.enable -> false, retention.bytes -> -, delete.retention.ms -> , segment.ms -> , message.timestamp.difference.max.ms -> , segment.index.bytes -> }. (kafka.log.LogManager)

[-- ::,] INFO [Partition test- broker=] No checkpointed highwatermark is found for partition test- (kafka.cluster.Partition)

[-- ::,] INFO Replica loaded for partition test- with initial high watermark (kafka.cluster.Replica)

[-- ::,] INFO [Partition test- broker=] test- starts at Leader Epoch from offset . Previous Leader Epoch was: - (kafka.cluster.Partition)

[-- ::,] INFO [GroupMetadataManager brokerId=] Removed expired offsets in milliseconds. (kafka.coordinator.group.GroupMetadataManager)

[-- ::,] INFO [GroupMetadataManager brokerId=] Removed expired offsets in milliseconds. (kafka.coordinator.group.GroupMetadataManager)
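
Rather than reading through the whole log, a quick way to confirm that both services came up is to check that their ports accept TCP connections. The sketch below assumes the ports from the stock config files (2181 for ZooKeeper, 9092 for the broker); `wait_for_port` is a helper name I made up, and a successful connection only shows that something is listening, not that the broker is fully healthy.

```python
import socket
import time


def wait_for_port(host: str, port: int, timeout_s: float = 30.0) -> bool:
    """Return True once a TCP connection to host:port succeeds, False on timeout."""
    deadline = time.monotonic() + timeout_s
    while time.monotonic() < deadline:
        try:
            with socket.create_connection((host, port), timeout=1.0):
                return True
        except OSError:
            time.sleep(0.5)
    return False


if __name__ == "__main__":
    # Assumed default ports; adjust if your config files use different ones.
    for name, port in [("ZooKeeper", 2181), ("Kafka broker", 9092)]:
        ok = wait_for_port("localhost", port, timeout_s=2.0)
        print(f"{name} on port {port}: {'up' if ok else 'not reachable'}")
```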

3. Create a topic

Use kafka-topics.sh to create a topic named test with a single partition and a single replica:

sh bin/kafka-topics.sh --create --zookeeper localhost: --replication-factor 1 --partitions 1 --topic test

Result

Created topic test.

List the topics:

bin/kafka-topics.sh --list --zookeeper localhost:

Result

test

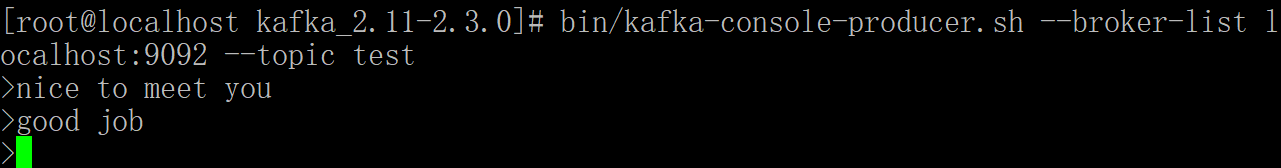
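
If topics need to be created from a script, one option is to build the same command line in Python and hand it to `subprocess`. This is a minimal sketch: the helper name `create_topic_argv` is my own, and the ZooKeeper address `localhost:2181` is an assumption based on the default client port. The block only prints the command; the commented line shows how it might be run from the Kafka directory.

```python
import shlex
from typing import List


def create_topic_argv(topic: str, partitions: int, replication_factor: int,
                      zookeeper: str = "localhost:2181") -> List[str]:
    """Build the argument list for bin/kafka-topics.sh --create."""
    if partitions < 1 or replication_factor < 1:
        raise ValueError("partitions and replication_factor must be >= 1")
    return [
        "bin/kafka-topics.sh", "--create",
        "--zookeeper", zookeeper,
        "--replication-factor", str(replication_factor),
        "--partitions", str(partitions),
        "--topic", topic,
    ]


argv = create_topic_argv("test", partitions=1, replication_factor=1)
print(" ".join(shlex.quote(a) for a in argv))
# To actually run it from the Kafka directory: subprocess.run(argv, check=True)
```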

4. Produce messages

Use kafka-console-producer.sh to send messages:

sh bin/kafka-console-producer.sh --broker-list localhost: --topic test

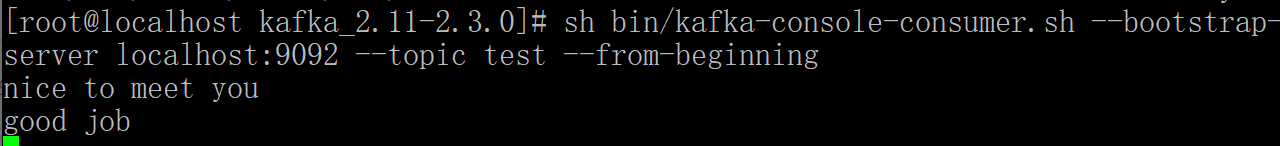

5. Consume messages

Use kafka-console-consumer.sh to receive messages and print them to the terminal:

sh bin/kafka-console-consumer.sh --zookeeper localhost: --topic test --from-beginning

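Open a new terminal window and run the command above to see the messages:

This fails with the error:

zookeeper is not a recognized option

Cause: we are using a newer version of Kafka, whose console consumer no longer accepts the --zookeeper option. Use the following command instead: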

sh bin/kafka-console-consumer.sh --bootstrap-server localhost: --topic test --from-beginning

This time, all the published messages are received by the consumer.

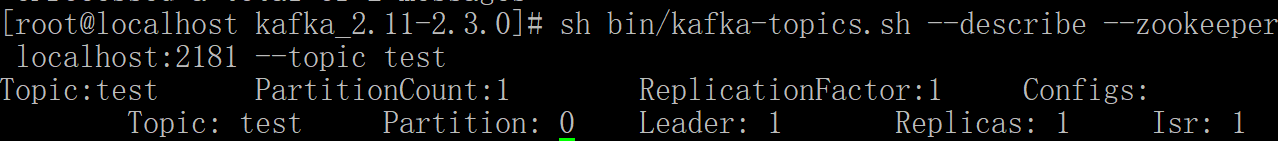
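
The effect of --from-beginning is easier to see with a toy model. The sketch below is not Kafka code; `TopicLog` is a made-up stand-in for a single-partition topic. It shows that a reader starting at offset 0 sees everything already in the log, while a reader starting at the current end of the log (what a new console consumer does without the flag) only sees messages produced afterwards.

```python
from typing import List


class TopicLog:
    """A toy append-only log standing in for a single-partition topic."""

    def __init__(self) -> None:
        self._messages: List[str] = []

    def append(self, message: str) -> int:
        self._messages.append(message)
        return len(self._messages) - 1  # offset of the new message

    def end_offset(self) -> int:
        return len(self._messages)

    def read_from(self, offset: int) -> List[str]:
        return self._messages[offset:]


log = TopicLog()
for msg in ["hello", "kafka"]:
    log.append(msg)

# A consumer started with --from-beginning reads from offset 0.
from_beginning = log.read_from(0)
# Without the flag, a new consumer starts at the current end of the log.
latest_start = log.end_offset()
log.append("new message")
only_new = log.read_from(latest_start)

print(from_beginning)
print(only_new)
```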

6. Describe a topic

sh bin/kafka-topics.sh --describe --zookeeper localhost: --topic test

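Result:

Explanation

The first line gives a summary of all partitions, and each additional line gives information about one partition. Since this topic has only one partition, there is only one such line.

“Leader”: the node responsible for all reads and writes for the given partition. Each node will be the leader for a randomly selected portion of the partitions.

“Replicas”: the list of nodes that replicate the log for this partition, regardless of whether they are the leader or even whether they are currently alive.

“Isr”: the set of “in-sync” replicas. This is the subset of the replicas list that is currently alive and caught up to the leader.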
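
The relationship between these three fields can be sketched in a few lines of Python. The names `PartitionState` and `compute_isr` and the broker ids are hypothetical, and the model is deliberately simplified: it only captures that the ISR is the subset of replicas that are alive and caught up, and that the leader is one of them.

```python
from dataclasses import dataclass
from typing import List, Set


@dataclass
class PartitionState:
    leader: int
    replicas: List[int]   # all brokers assigned to hold this partition
    isr: List[int]        # replicas that are alive and caught up to the leader

    def is_consistent(self) -> bool:
        """ISR must be a subset of replicas, and the leader must be in the ISR."""
        return set(self.isr) <= set(self.replicas) and self.leader in self.isr

    def is_under_replicated(self) -> bool:
        return len(self.isr) < len(self.replicas)


def compute_isr(replicas: List[int], alive: Set[int], caught_up: Set[int]) -> List[int]:
    """Keep only the replicas that are both alive and caught up."""
    return [b for b in replicas if b in alive and b in caught_up]


replicas = [1, 2, 0]
# Hypothetical cluster state: broker 2 is down, brokers 0 and 1 are caught up.
isr = compute_isr(replicas, alive={0, 1}, caught_up={0, 1, 2})
state = PartitionState(leader=1, replicas=replicas, isr=isr)
print(state, state.is_consistent(), state.is_under_replicated())
```

With the single-broker setup in this article, Leader, Replicas and Isr all point at the same broker, so the partition is never under-replicated in this sense.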

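Cluster Configuration

Kafka clusters can be set up in two ways: by running multiple broker instances on a single machine, or by running brokers across multiple machines.

The following shows how to set up a multi-broker cluster on a single machine. It is quite simple and only needs the configuration below.

Single-machine, multi-broker cluster configuration

Deploy multiple brokers on a single node. Each broker needs its own id, listening port, and log directory. For example: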

cp config/server.properties config/server-.properties

cp config/server.properties config/server-.properties

vim config/server-.properties

vim config/server-.properties

Change:

broker.id=

listeners = PLAINTEXT://your.host.name:9093

log.dirs=/data/kafka/logs-

and

broker.id=

listeners = PLAINTEXT://your.host.name:9094

log.dirs=/data/kafka/logs-

Start the Kafka services:

bin/kafka-server-start.sh config/server-.properties &

bin/kafka-server-start.sh config/server-.properties &

At this point, the single-machine, multi-broker cluster is configured.
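
A common mistake with copied config files is forgetting to change one of the per-broker values. The sketch below checks a set of broker settings for clashes. The helper names, the broker ids 1 and 2, the host `localhost` and the `/data/kafka/logs-<id>` path pattern are all assumptions for illustration; the ports 9093 and 9094 are the ones from the listeners lines above.

```python
from typing import Dict, List


def broker_overrides(broker_id: int, port: int, host: str = "localhost") -> Dict[str, str]:
    """Per-broker settings that must differ when several brokers share a machine."""
    return {
        "broker.id": str(broker_id),
        "listeners": f"PLAINTEXT://{host}:{port}",
        "log.dirs": f"/data/kafka/logs-{broker_id}",
    }


def find_conflicts(configs: List[Dict[str, str]]) -> List[str]:
    """Return the keys whose values are shared by two or more brokers."""
    conflicts = []
    for key in ("broker.id", "listeners", "log.dirs"):
        values = [c[key] for c in configs]
        if len(set(values)) != len(values):
            conflicts.append(key)
    return conflicts


# Hypothetical ids; every per-broker value differs, so nothing is reported.
configs = [broker_overrides(1, 9093), broker_overrides(2, 9094)]
print(find_conflicts(configs))

# A copy-paste mistake: the second file still has the first file's port.
broken = [broker_overrides(1, 9093),
          {**broker_overrides(2, 9094), "listeners": "PLAINTEXT://localhost:9093"}]
print(find_conflicts(broken))
```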

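Multi-machine, multi-broker cluster configuration

Install Kafka on each node as described above, and configure and start multiple ZooKeeper instances.

Assume the three machines have the IP addresses 192.168.153.135, 192.168.153.136, and 192.168.153.137.

Configure the Kafka service on each machine with a different broker id, and set zookeeper.connect as follows:

vim config/server.properties

Find zookeeper.connect in the file

and change it to: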

zookeeper.connect=192.168.153.135:,192.168.153.136:,192.168.153.137:
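
For more than a handful of nodes it may be easier to generate this line than to type it. The sketch below joins the three addresses from this example into the comma-separated host:port form; the port 2181 is an assumption (ZooKeeper's default client port), and `zookeeper_connect` is a helper name I made up.

```python
from typing import Iterable


def zookeeper_connect(hosts: Iterable[str], port: int = 2181) -> str:
    """Join hosts into the host:port,host:port form used by zookeeper.connect."""
    host_list = list(hosts)
    if not host_list:
        raise ValueError("at least one ZooKeeper host is required")
    return ",".join(f"{h}:{port}" for h in host_list)


hosts = ["192.168.153.135", "192.168.153.136", "192.168.153.137"]
line = "zookeeper.connect=" + zookeeper_connect(hosts)
print(line)
```

The same line goes into server.properties on every broker, so that all of them register with the same ZooKeeper ensemble.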
