Scraping the Maoyan Top 100 movies with Requests + BeautifulSoup + regular expressions (title, cast, score, cover, release date, synopsis)

```python
# encoding:utf-8
import json
import re
from multiprocessing import Pool

import requests
from requests.exceptions import RequestException


def get_one_page(url):
    """Return the page HTML, or None on a non-200 status or a request error."""
    try:
        response = requests.get(url)
        if response.status_code == 200:
            return response.text
        return None
    except RequestException:
        return None


def parse_one_page(html):
    """Yield one dict per <dd> entry on a board page."""
    pattern = re.compile(r'<dd>.*?board-index.*?>(\d+)</i>.*?data-src="(.*?)".*?name"><a'
                         r'.*?>(.*?)</a>.*?star">(.*?)</p>.*?releasetime">(.*?)</p>'
                         r'.*?integer">(.*?)</i>.*?fraction">(.*?)</i>.*?</dd>', re.S)
    items = re.findall(pattern, html)
    for item in items:
        yield {
            'index': item[0],
            'image': item[1],
            'title': item[2],
            'actor': item[3].strip()[3:],  # drop the 3-character "主演：" (Starring:) label
            'time': item[4].strip()[5:],   # drop the 5-character "上映时间：" (Release date:) label
            'score': item[5] + item[6]     # integer part + fractional part
        }


def write_to_file(content):
    # The with block closes the file, so no explicit f.close() is needed
    with open('MaoyanTop100.txt', 'a', encoding='utf-8') as f:
        f.write(json.dumps(content, ensure_ascii=False) + '\n')


def main(offset):
    url = "http://maoyan.com/board/4?offset=" + str(offset)
    html = get_one_page(url)
    if html is None:  # the request failed, so there is nothing to parse
        return
    for item in parse_one_page(html):
        print(item)
        write_to_file(item)


if __name__ == '__main__':
    # Sequential version:
    # for i in range(10):
    #     main(i * 10)

    # Speed things up: fetch the 10 pages (offsets 0, 10, ..., 90) in parallel
    with Pool() as pool:
        pool.map(main, [i * 10 for i in range(10)])
```
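In the first script every worker process appends to `MaoyanTop100.txt` by itself, so the ranks land in the file in whatever order the pages happen to finish. Below is a minimal sketch of an alternative, assuming the crawl is I/O-bound. It swaps in a thread pool (`concurrent.futures.ThreadPoolExecutor`) for `multiprocessing.Pool`, has the workers return their items instead of writing them, and keeps all writing in one place. `fetch_page` is a hypothetical stand-in for `get_one_page` plus `parse_one_page` that returns dummy items, so the sketch runs offline.

```python
import json
from concurrent.futures import ThreadPoolExecutor


def fetch_page(offset):
    """Hypothetical stand-in for get_one_page + parse_one_page.

    Returns the ten (dummy) items of one board page instead of writing them.
    """
    return [{'index': str(offset + i + 1)} for i in range(10)]


def crawl_in_order(fetch, offsets, max_workers=4):
    """Run fetch() concurrently but return the items in the order of offsets."""
    with ThreadPoolExecutor(max_workers=max_workers) as executor:
        pages = executor.map(fetch, offsets)  # results come back in input order
        return [item for page in pages for item in page]


def write_jsonl(items, path):
    """Write one JSON object per line from a single thread."""
    with open(path, 'w', encoding='utf-8') as f:
        for item in items:
            f.write(json.dumps(item, ensure_ascii=False) + '\n')


if __name__ == '__main__':
    items = crawl_in_order(fetch_page, [i * 10 for i in range(10)])
    write_jsonl(items, 'MaoyanTop100.txt')
    print(len(items), items[0], items[-1])
```

`Executor.map` yields results in the order of its input, not in completion order, so the file is ranked 1 to 100 no matter which request returns first. Opening the file with `'w'` instead of `'a'` also prevents re-runs from appending a second copy of the list.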

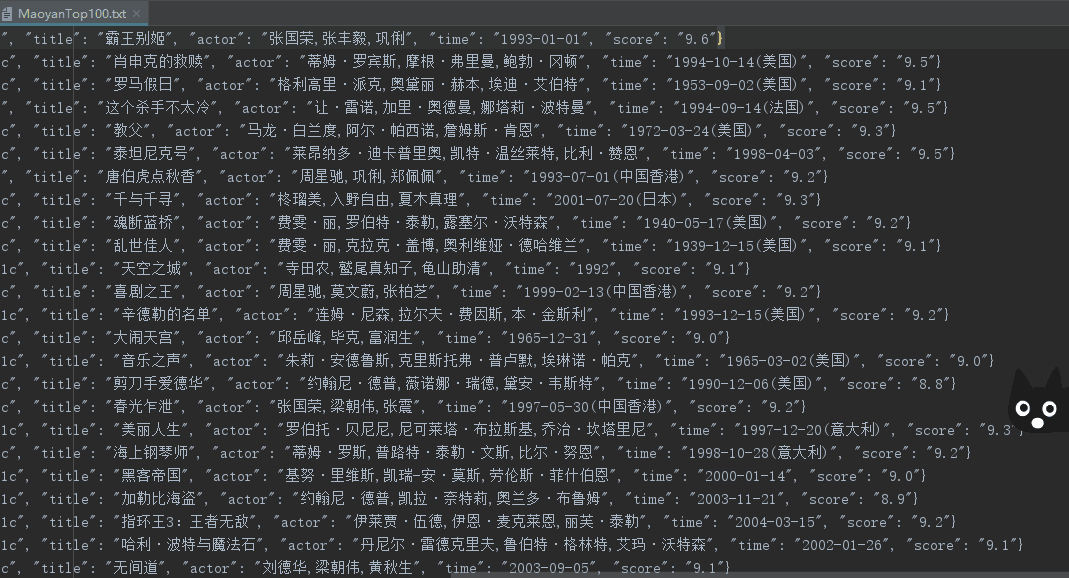

效果图:

Update (fetching covers and film synopses):

```python
# encoding:utf-8
import json
import re
from urllib import request
from urllib.error import URLError

import requests
from bs4 import BeautifulSoup
from requests.exceptions import RequestException


def get_one_page(url):
    """Return the page HTML, or None on a non-200 status or a request error."""
    try:
        response = requests.get(url)
        if response.status_code == 200:
            return response.text
        return None
    except RequestException:
        return None


def parse_one_page(html):
    """Yield one dict per <dd> entry; 'jump' is the relative link to the film page."""
    pattern = re.compile(r'<dd>.*?board-index.*?>(\d+)</i>.*?href="(.*?)".*?data-src="(.*?)".*?name"><a'
                         r'.*?>(.*?)</a>.*?star">(.*?)</p>.*?releasetime">(.*?)</p>'
                         r'.*?integer">(.*?)</i>.*?fraction">(.*?)</i>.*?</dd>', re.S)
    items = re.findall(pattern, html)
    for item in items:
        yield {
            'index': item[0],
            'jump': item[1],
            'image': item[2],
            'title': item[3],
            'actor': item[4].strip()[3:],  # drop the "主演：" (Starring:) label
            'time': item[5].strip()[5:],   # drop the "上映时间：" (Release date:) label
            'score': item[6] + item[7]
        }


def parse_summary_page(url):
    """Fetch a film page (e.g. https://maoyan.com/films/1203) and return its synopsis text."""
    # Send a browser User-Agent header so the request looks like a normal visit
    head = {
        'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) '
                      'Chrome/63.0.3239.26 Safari/537.36 Core/1.63.6788.400 QQBrowser/10.3.2843.400'
    }
    # Build the Request object and read the response body
    req = request.Request(url, headers=head)
    try:
        html = request.urlopen(req).read()
    except URLError:  # also catches HTTPError, e.g. a 403 response
        return ''
    soup = BeautifulSoup(html, 'lxml')
    # The synopsis sits in <span class="dra">
    soup_text = soup.find('span', class_='dra')
    # Return only its text, without the surrounding tag
    return soup_text.get_text(strip=True) if soup_text is not None else ''


def write_to_file(content):
    with open('newMaoyanTop100.txt', 'a', encoding='utf-8') as f:
        f.write(json.dumps(content, ensure_ascii=False) + '\n')


def main(offset):
    url = "http://maoyan.com/board/4?offset=" + str(offset * 10)
    html = get_one_page(url)
    if html is None:
        return
    for item in parse_one_page(html):
        jump_url = "https://maoyan.com" + item['jump']
        item['summary'] = parse_summary_page(jump_url)
        print(item)
        write_to_file(item)  # append to the txt file

    # Write only the titles instead:
    # for item in parse_one_page(html):
    #     write_to_file(item['title'])

    # Download the 100 cover images
    # path = 'E:\\myCode\\py_test\\MaoyanTop100\\images\\'
    # for item in parse_one_page(html):
    #     request.urlretrieve(item['image'], '{}{}.jpg'.format(path, item['index']))


if __name__ == '__main__':
    for i in range(10):
        main(i)
```
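Both the regular expression and the `dra` lookup can be checked without touching the network. The sketch below is an offline test built on assumptions. `LIST_HTML` and `DETAIL_HTML` are hand-written fragments shaped after what the pattern and `parse_summary_page` expect, not real Maoyan markup. It also swaps BeautifulSoup for the standard library's `html.parser`, so it runs with no third-party packages. The fixed slices `[3:]` and `[5:]` are replaced with `str.removeprefix` (Python 3.9+), which leaves the text untouched if the `主演：` (Starring) or `上映时间：` (Release date) label is missing, and the film-page URL is built with `urljoin`. The names `parse_list`, `DraParser` and `extract_summary` are hypothetical.

```python
import re
from html.parser import HTMLParser
from urllib.parse import urljoin

# Same pattern as the updated parse_one_page (with the extra href group)
PATTERN = re.compile(
    r'<dd>.*?board-index.*?>(\d+)</i>.*?href="(.*?)".*?data-src="(.*?)".*?name"><a'
    r'.*?>(.*?)</a>.*?star">(.*?)</p>.*?releasetime">(.*?)</p>'
    r'.*?integer">(.*?)</i>.*?fraction">(.*?)</i>.*?</dd>',
    re.S,
)

# Hypothetical fragments for illustration only, not copied from Maoyan
LIST_HTML = '''<dd>
  <i class="board-index board-index-1">1</i>
  <a href="/films/1203" class="image-link"><img data-src="https://example.com/poster.jpg" /></a>
  <p class="name"><a href="/films/1203" title="Sample Film">Sample Film</a></p>
  <p class="star">
      主演：Actor A,Actor B
  </p>
  <p class="releasetime">上映时间：2000-01-01</p>
  <p class="score"><i class="integer">9.</i><i class="fraction">1</i></p>
</dd>'''
DETAIL_HTML = '<div class="mod-content"><span class="dra">  A sample synopsis.  </span></div>'


def parse_list(html):
    """Yield one dict per <dd> entry, stripping the Chinese labels by prefix."""
    for index, jump, image, title, star, release, integer, fraction in PATTERN.findall(html):
        yield {
            'index': index,
            'jump': urljoin('https://maoyan.com/', jump),  # absolute film-page URL
            'image': image,
            'title': title,
            'actor': star.strip().removeprefix('主演：'),
            'time': release.strip().removeprefix('上映时间：'),
            'score': integer + fraction,
        }


class DraParser(HTMLParser):
    """Collect the text of the first <span class="dra"> element."""

    def __init__(self):
        super().__init__()
        self.depth = 0  # > 0 while inside the target span
        self.done = False
        self.parts = []

    def handle_starttag(self, tag, attrs):
        if self.done or tag != 'span':
            return
        if self.depth:
            self.depth += 1  # a span nested inside the synopsis
        elif 'dra' in (dict(attrs).get('class') or '').split():
            self.depth = 1

    def handle_endtag(self, tag):
        if self.depth and tag == 'span':
            self.depth -= 1
            self.done = self.depth == 0

    def handle_data(self, data):
        if self.depth:
            self.parts.append(data)


def extract_summary(html):
    """Return the stripped synopsis text, or '' if there is no dra span."""
    parser = DraParser()
    parser.feed(html)
    parser.close()
    return ''.join(parser.parts).strip()


if __name__ == '__main__':
    for item in parse_list(LIST_HTML):
        item['summary'] = extract_summary(DETAIL_HTML)
        print(item)
```

Returning the extracted text directly, as `get_text` does in the BeautifulSoup version, avoids converting the tag to a string and then deleting `<span class="dra">` and `</span>` by hand.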
