TF RNN: TensorBoard visualization of a sequence-based RNN regression example, using a blue dashed sine curve to predict a red solid cosine curve - Jason niu

import tensorflow as tf
import numpy as np
import matplotlib.pyplot as plt

BATCH_START = 0
TIME_STEPS = 20
BATCH_SIZE = 50
INPUT_SIZE = 1
OUTPUT_SIZE = 1
CELL_SIZE = 10
LR = 0.006
BATCH_START_TEST = 0


def get_batch():
    global BATCH_START, TIME_STEPS
    # xs has shape (BATCH_SIZE, TIME_STEPS)
    xs = np.arange(BATCH_START, BATCH_START+TIME_STEPS*BATCH_SIZE).reshape((BATCH_SIZE, TIME_STEPS)) / (10*np.pi)
    seq = np.sin(xs)  # input: sine curve
    res = np.cos(xs)  # target: cosine curve
    BATCH_START += TIME_STEPS
    # Add a trailing axis so seq and res have shape (BATCH_SIZE, TIME_STEPS, 1)
    return [seq[:, :, np.newaxis], res[:, :, np.newaxis], xs]


class LSTMRNN(object):
    def __init__(self, n_steps, input_size, output_size, cell_size, batch_size):
        self.n_steps = n_steps
        self.input_size = input_size
        self.output_size = output_size
        self.cell_size = cell_size
        self.batch_size = batch_size

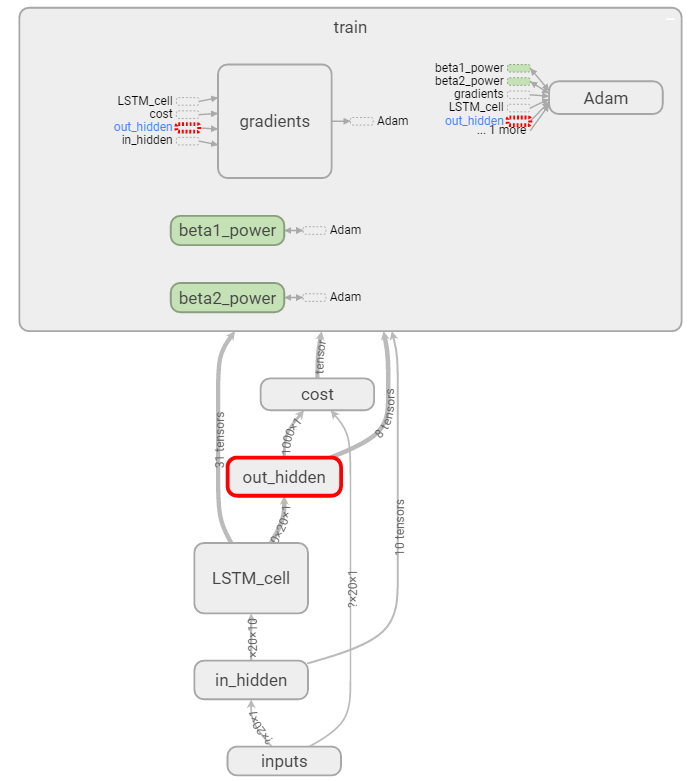

        with tf.name_scope('inputs'):
            self.xs = tf.placeholder(tf.float32, [None, n_steps, input_size], name='xs')
            self.ys = tf.placeholder(tf.float32, [None, n_steps, output_size], name='ys')
        with tf.variable_scope('in_hidden'):
            self.add_input_layer()
        with tf.variable_scope('LSTM_cell'):
            self.add_cell()
        with tf.variable_scope('out_hidden'):
            self.add_output_layer()
        with tf.name_scope('cost'):
            self.compute_cost()
        with tf.name_scope('train'):
            self.train_op = tf.train.AdamOptimizer(LR).minimize(self.cost)

    def add_input_layer(self,):
        # Flatten (batch, n_steps, input_size) to (batch * n_steps, input_size)
        l_in_x = tf.reshape(self.xs, [-1, self.input_size], name='2_2D')
        Ws_in = self._weight_variable([self.input_size, self.cell_size])
        bs_in = self._bias_variable([self.cell_size,])
        with tf.name_scope('Wx_plus_b'):
            l_in_y = tf.matmul(l_in_x, Ws_in) + bs_in
        # Restore the time axis: (batch, n_steps, cell_size)
        self.l_in_y = tf.reshape(l_in_y, [-1, self.n_steps, self.cell_size], name='2_3D')

    def add_cell(self):
        lstm_cell = tf.nn.rnn_cell.BasicLSTMCell(self.cell_size, forget_bias=1.0, state_is_tuple=True)
        with tf.name_scope('initial_state'):
            self.cell_init_state = lstm_cell.zero_state(self.batch_size, dtype=tf.float32)
        self.cell_outputs, self.cell_final_state = tf.nn.dynamic_rnn(
            lstm_cell, self.l_in_y, initial_state=self.cell_init_state, time_major=False)

    def add_output_layer(self):
        # Flatten (batch, n_steps, cell_size) to (batch * n_steps, cell_size)
        l_out_x = tf.reshape(self.cell_outputs, [-1, self.cell_size], name='2_2D')
        Ws_out = self._weight_variable([self.cell_size, self.output_size])
        bs_out = self._bias_variable([self.output_size, ])
        # shape = (batch * steps, output_size)
        with tf.name_scope('Wx_plus_b'):
            self.pred = tf.matmul(l_out_x, Ws_out) + bs_out

    def compute_cost(self):

        losses = tf.contrib.legacy_seq2seq.sequence_loss_by_example(
            [tf.reshape(self.pred, [-1], name='reshape_pred')],
            [tf.reshape(self.ys, [-1], name='reshape_target')],
            [tf.ones([self.batch_size * self.n_steps], dtype=tf.float32)],
            average_across_timesteps=True,
            softmax_loss_function=self.ms_error,
            name='losses'
        )
        with tf.name_scope('average_cost'):
            self.cost = tf.div(
                tf.reduce_sum(losses, name='losses_sum'),
                self.batch_size,
                name='average_cost')
            tf.summary.scalar('cost', self.cost)

    def ms_error(self, labels, logits):
        # tf.sub was removed in TensorFlow 1.0; tf.subtract replaces it.
        # The parameters are named labels/logits because later TF 1.x releases
        # call softmax_loss_function with those keyword arguments.
        return tf.square(tf.subtract(labels, logits))

    def _weight_variable(self, shape, name='weights'):
        initializer = tf.random_normal_initializer(mean=0., stddev=1.,)
        return tf.get_variable(shape=shape, initializer=initializer, name=name)

    def _bias_variable(self, shape, name='biases'):
        initializer = tf.constant_initializer(0.1)
        return tf.get_variable(name=name, shape=shape, initializer=initializer)


if __name__ == '__main__':
    model = LSTMRNN(TIME_STEPS, INPUT_SIZE, OUTPUT_SIZE, CELL_SIZE, BATCH_SIZE)
    sess = tf.Session()
    merged = tf.summary.merge_all()
    # Write the graph so TensorBoard can display it
    writer = tf.summary.FileWriter("niu0127/logs0127", sess.graph)
    sess.run(tf.global_variables_initializer())
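To check the data shapes and the cost definition without building the TensorFlow graph, here is a minimal NumPy-only sketch. `make_batch` and `average_cost` are hypothetical helper names, not part of the listing above: `make_batch` mirrors `get_batch` but takes the start index as an argument instead of using the global counter, and `average_cost` reflects my reading of `compute_cost` (squared errors summed over all steps, then divided by the batch size).

```python
import numpy as np

TIME_STEPS = 20
BATCH_SIZE = 50


def make_batch(batch_start, time_steps=TIME_STEPS, batch_size=BATCH_SIZE):
    """Hypothetical stateless variant of get_batch (no global counter)."""
    xs = np.arange(batch_start, batch_start + time_steps * batch_size)
    xs = xs.reshape((batch_size, time_steps)) / (10 * np.pi)
    seq = np.sin(xs)[:, :, np.newaxis]  # model input (sine)
    res = np.cos(xs)[:, :, np.newaxis]  # regression target (cosine)
    return seq, res, xs


def average_cost(pred, target, batch_size=BATCH_SIZE):
    """Sum of squared errors over every step, divided by the batch size."""
    return np.sum(np.square(target - pred)) / batch_size


seq, res, xs = make_batch(0)
print(seq.shape, res.shape, xs.shape)  # (50, 20, 1) (50, 20, 1) (50, 20)

# The start index advances by TIME_STEPS per call, so row 0 of the next
# batch covers the same x values as row 1 of the current batch.
_, _, xs_next = make_batch(TIME_STEPS)
print(np.allclose(xs_next[0], xs[1]))  # True

print(average_cost(res, res))  # 0.0 for a perfect prediction
print(average_cost(np.zeros_like(res), np.ones_like(res)))  # 20.0
```

The last line shows that, under this reading, the cost scales with the sequence length: an error of 1 at every one of the 20 steps gives a cost of 20, not 1.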
