python之scrapy的debug、shell、settings、pipelines

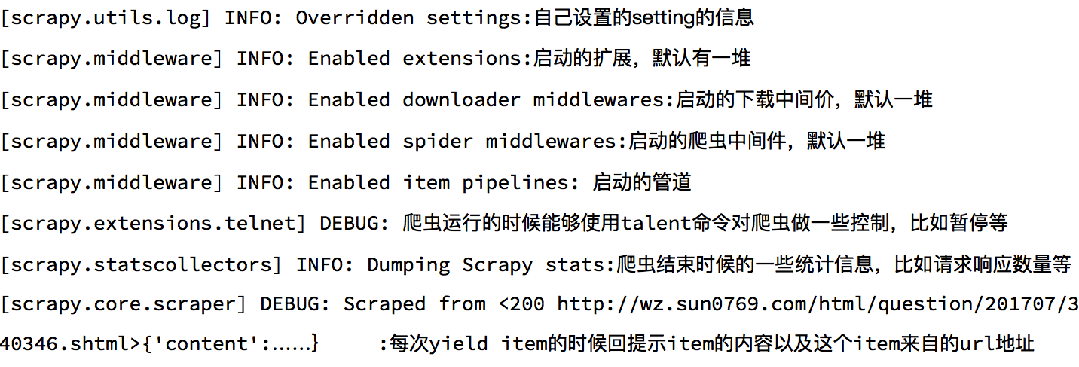

1、debug了解

2、scrapy shell了解

Scrapy shell是一个交互终端,我们可以在未启动spider的情况下尝试及调试代码,也可以用来测试XPath表达式 使用方法:

scrapy shell https://gosuncn.zhiye.com/social/ response.url:当前响应的url地址

response.request.url:当前响应对应的请求的url地址

response.headers:响应头

response.body:响应体,也就是html代码,默认是byte类型

response.requests.headers:当前响应的请求头

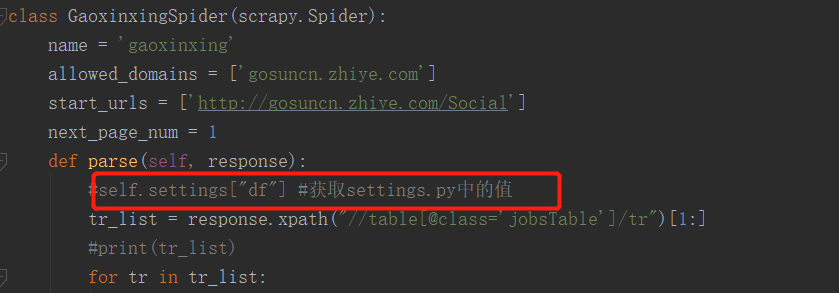

3、settings.py

# -*- coding: utf-8 -*- # Scrapy settings for gosuncn project

#

# For simplicity, this file contains only settings considered important or

# commonly used. You can find more settings consulting the documentation:

#

# https://doc.scrapy.org/en/latest/topics/settings.html

# https://doc.scrapy.org/en/latest/topics/downloader-middleware.html

# https://doc.scrapy.org/en/latest/topics/spider-middleware.html

#settings文件一般存取全局变量,如数据库的账号,密码等###

BOT_NAME = 'gosuncn' SPIDER_MODULES = ['gosuncn.spiders']

NEWSPIDER_MODULE = 'gosuncn.spiders' LOG_LEVEL="WARNING"

# Crawl responsibly by identifying yourself (and your website) on the user-agent

#USER_AGENT = 'gosuncn (+http://www.yourdomain.com)' # Obey robots.txt rules

#遵守ROBOT协议,也就是会先请求ROBOT协议

ROBOTSTXT_OBEY = True # Configure maximum concurrent requests performed by Scrapy (default: 16)

#并发数

#CONCURRENT_REQUESTS = 32 # Configure a delay for requests for the same website (default: 0)

# See https://doc.scrapy.org/en/latest/topics/settings.html#download-delay

# See also autothrottle settings and docs

#下载延迟,每次请求前,先睡3秒

#DOWNLOAD_DELAY = 3

# The download delay setting will honor only one of:

#和DOWNLOAD_DELAY配合使用

#CONCURRENT_REQUESTS_PER_DOMAIN = 16

#CONCURRENT_REQUESTS_PER_IP = 16 # Disable cookies (enabled by default)

#设置COOKIES,默认携带COOKIES信息

#COOKIES_ENABLED = False # Disable Telnet Console (enabled by default)

#TELNETCONSOLE_ENABLED = False # Override the default request headers:

#默认请求头

#DEFAULT_REQUEST_HEADERS = {

# 'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8',

# 'Accept-Language': 'en',

#} #中间件

# Enable or disable spider middlewares

# See https://doc.scrapy.org/en/latest/topics/spider-middleware.html

#SPIDER_MIDDLEWARES = {

# 'gosuncn.middlewares.GosuncnSpiderMiddleware': 543,

#} # Enable or disable downloader middlewares

# See https://doc.scrapy.org/en/latest/topics/downloader-middleware.html

#DOWNLOADER_MIDDLEWARES = {

# 'gosuncn.middlewares.GosuncnDownloaderMiddleware': 543,

#} # Enable or disable extensions

# See https://doc.scrapy.org/en/latest/topics/extensions.html

#EXTENSIONS = {

# 'scrapy.extensions.telnet.TelnetConsole': None,

#} #配置pipelines,数字为权重值,越小越先执行

# Configure item pipelines

# See https://doc.scrapy.org/en/latest/topics/item-pipeline.html

ITEM_PIPELINES = {

'gosuncn.pipelines.GosuncnPipeline': 300,

}

LOG_LEVEL ="WARNING"

LOG_FILE = "./log.log" #对爬虫进行限速

# Enable and configure the AutoThrottle extension (disabled by default)

# See https://doc.scrapy.org/en/latest/topics/autothrottle.html

#AUTOTHROTTLE_ENABLED = True

# The initial download delay

#AUTOTHROTTLE_START_DELAY = 5

# The maximum download delay to be set in case of high latencies

#AUTOTHROTTLE_MAX_DELAY = 60

# The average number of requests Scrapy should be sending in parallel to

# each remote server

#AUTOTHROTTLE_TARGET_CONCURRENCY = 1.0

# Enable showing throttling stats for every response received:

#AUTOTHROTTLE_DEBUG = False #HTTP缓存

# Enable and configure HTTP caching (disabled by default)

# See https://doc.scrapy.org/en/latest/topics/downloader-middleware.html#httpcache-middleware-settings

#HTTPCACHE_ENABLED = True

#HTTPCACHE_EXPIRATION_SECS = 0

#HTTPCACHE_DIR = 'httpcache'

#HTTPCACHE_IGNORE_HTTP_CODES = []

#HTTPCACHE_STORAGE = 'scrapy.extensions.httpcache.FilesystemCacheStorage'

4、pipelines的open_spider和close_spider函数

# -*- coding: utf-8 -*- # Define your item pipelines here

#

# Don't forget to add your pipeline to the ITEM_PIPELINES setting

# See: https://doc.scrapy.org/en/latest/topics/item-pipeline.html

import re

from gosuncn.items import GosuncnItem

class GosuncnPipeline(object): def open_spider(self,spider):

"""

爬虫启动时执行,启动数据库连接

:param spider:

:return:

"""

spider.hello = "open" def process_item(self, item, spider):

if isinstance(item,GosuncnItem):

item["content"] = self.process_content(item["content"])

print(item)

return item def process_content(self,content):

content =[re.sub(r"\r\n|' '","",i) for i in content]

content = [i for i in content if len(i)>0]

return content def close_spider(self,spider):

"""

爬虫结束时执行,关闭数据库连接

:param spider:

:return:

"""

spider.hello = "close"

# class GosuncnPipeline1(object):

# def process_item(self, item, spider):

# if isinstance(item,GosuncnItem):

# print(item)

# return item

python之scrapy的debug、shell、settings、pipelines的更多相关文章

- python之scrapy入门教程

看这篇文章的人,我假设你们都已经学会了python(派森),然后下面的知识都是python的扩展(框架). 在这篇入门教程中,我们假定你已经安装了Scrapy.如果你还没有安装,那么请参考安装指南. ...

- python爬虫scrapy项目详解(关注、持续更新)

python爬虫scrapy项目(一) 爬取目标:腾讯招聘网站(起始url:https://hr.tencent.com/position.php?keywords=&tid=0&st ...

- python爬虫scrapy学习之篇二

继上篇<python之urllib2简单解析HTML页面>之后学习使用Python比较有名的爬虫scrapy.网上搜到两篇相应的文档,一篇是较早版本的中文文档Scrapy 0.24 文档, ...

- scrapy框架之shell

scrapy shell scrapy shell是一个交互式shell,您可以在其中快速调试 scrape 代码,而不必运行spider.它本来是用来测试数据提取代码的,但实际上您可以使用它来测试任 ...

- Python之Scrapy爬虫框架安装及简单使用

题记:早已听闻python爬虫框架的大名.近些天学习了下其中的Scrapy爬虫框架,将自己理解的跟大家分享.有表述不当之处,望大神们斧正. 一.初窥Scrapy Scrapy是一个为了爬取网站数据,提 ...

- [Python爬虫] scrapy爬虫系列 <一>.安装及入门介绍

前面介绍了很多Selenium基于自动测试的Python爬虫程序,主要利用它的xpath语句,通过分析网页DOM树结构进行爬取内容,同时可以结合Phantomjs模拟浏览器进行鼠标或键盘操作.但是,更 ...

- Python使用Scrapy框架爬取数据存入CSV文件(Python爬虫实战4)

1. Scrapy框架 Scrapy是python下实现爬虫功能的框架,能够将数据解析.数据处理.数据存储合为一体功能的爬虫框架. 2. Scrapy安装 1. 安装依赖包 yum install g ...

- python爬虫Scrapy(一)-我爬了boss数据

一.概述 学习python有一段时间了,最近了解了下Python的入门爬虫框架Scrapy,参考了文章Python爬虫框架Scrapy入门.本篇文章属于初学经验记录,比较简单,适合刚学习爬虫的小伙伴. ...

- windows下使用python的scrapy爬虫框架,爬取个人博客文章内容信息

scrapy作为流行的python爬虫框架,简单易用,这里简单介绍如何使用该爬虫框架爬取个人博客信息.关于python的安装和scrapy的安装配置请读者自行查阅相关资料,或者也可以关注我后续的内容. ...

随机推荐

- linux操作系统中的常用命令以及快捷键(一)

接触了linux系统一年,总结一些常用的命令,快捷键等一些尝试 1.首先查看linux内核数量,常用于编译源码包时 用 make -j 来指定内核数来编译 grep ^processor /proc/ ...

- vue-element-admin实现模板打印

一.简介 模板打印也叫”套打“,是业务系统和后台管理系统中的常用功能,B/S系统中实现”套打“比较繁琐,所以很多的B/S系统中的打印功能一直使用的是浏览器打印,很少实现模板打印.本篇将介绍在Vue E ...

- PAT Basic 1057 数零壹 (20 分)

给定一串长度不超过 1 的字符串,本题要求你将其中所有英文字母的序号(字母 a-z 对应序号 1-26,不分大小写)相加,得到整数 N,然后再分析一下 N 的二进制表示中有多少 0.多少 1.例如给定 ...

- WebRTC基于GCC的拥塞控制算法[转载]

实时流媒体应用的最大特点是实时性,而延迟是实时性的最大敌人.从媒体收发端来讲,媒体数据的处理速度是造成延迟的重要原因:而从传输角度来讲,网络拥塞则是造成延迟的最主要原因.网络拥塞可能造成数据包丢失,也 ...

- css浮动(float)及如何清除浮动

前言: CSS浮动是现在网页布局中使用最频繁的效果之一,而浮动可以帮我们解决很多问题,那么就让我们一起来看一看如何使用浮动. 一.css浮动(float)(1)html文档流 自窗体自上而下分成一行一 ...

- JAVA-WEB-简单的四则运算

首先附上选择题目数量和每行题数的JSP代码 <!DOCTYPE HTML PUBLIC "-//W3C//DTD HTML 4.01 Transitional//EN"> ...

- mongodb的安装与使用(二)之 增删改查与索引

0.MongoDB数据库和集合创建与删除 MongoDB 创建数据库 语法: use DATABASE_NAME note:查看所有数据库使用show dbs 创建的空数据库 test并不在数据库的列 ...

- node.js http模块和fs模块上机实验·

httpserver const httpserver = require('http'); var server = httpserver.createServer(function (req,re ...

- Go位运算

目录 &(AND) |(OR) ^(XOR) &^(AND NOT) << 和 >> & 位运算 AND | 位运算 OR ^ 位运算 XOR & ...

- Centos 7 搭建蓝鲸V4.1.16稳定社区版

在本地用VMware模拟了三台主机 准备至少3台 CentOS 7 以上操作系统的机器,保证三台虚拟机都可以上网 最低配置:2核4G(我用的是这个) 建议配置: 4核12G 以上 192.168.16 ...