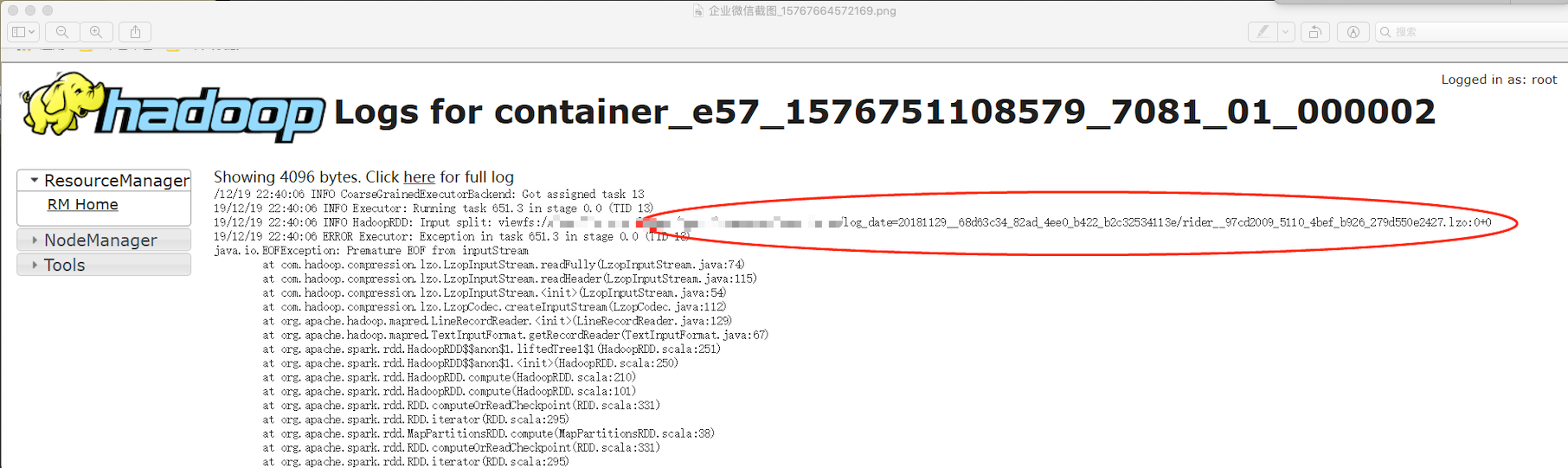

Spark job fails with java.io.EOFException: Premature EOF from inputStream

We are using Spark 2.4 and Spark 2.3 to replace the company's existing Hive jobs.

A few individual jobs fail in Spark with the following error:

```
java.io.EOFException: Premature EOF from inputStream
    at com.hadoop.compression.lzo.LzopInputStream.readFully(LzopInputStream.java:74)
    at com.hadoop.compression.lzo.LzopInputStream.readHeader(LzopInputStream.java:115)
    at com.hadoop.compression.lzo.LzopInputStream.<init>(LzopInputStream.java:54)
    at com.hadoop.compression.lzo.LzopCodec.createInputStream(LzopCodec.java:112)
    at org.apache.hadoop.mapred.LineRecordReader.<init>(LineRecordReader.java:129)
    at org.apache.hadoop.mapred.TextInputFormat.getRecordReader(TextInputFormat.java:67)
    at org.apache.spark.rdd.HadoopRDD$$anon$1.liftedTree1$1(HadoopRDD.scala:269)
    at org.apache.spark.rdd.HadoopRDD$$anon$1.<init>(HadoopRDD.scala:268)
    at org.apache.spark.rdd.HadoopRDD.compute(HadoopRDD.scala:226)
    at org.apache.spark.rdd.HadoopRDD.compute(HadoopRDD.scala:97)
    at org.apache.spark.rdd.RDD.computeOrReadCheckpoint(RDD.scala:330)
    at org.apache.spark.rdd.RDD.iterator(RDD.scala:294)
    at org.apache.spark.rdd.MapPartitionsRDD.compute(MapPartitionsRDD.scala:52)
    at org.apache.spark.rdd.RDD.computeOrReadCheckpoint(RDD.scala:330)
    at org.apache.spark.rdd.RDD.iterator(RDD.scala:294)
    at org.apache.spark.rdd.MapPartitionsRDD.compute(MapPartitionsRDD.scala:52)
    at org.apache.spark.rdd.RDD.computeOrReadCheckpoint(RDD.scala:330)
    at org.apache.spark.rdd.RDD.iterator(RDD.scala:294)
    at org.apache.spark.rdd.UnionRDD.compute(UnionRDD.scala:105)
    at org.apache.spark.rdd.RDD.computeOrReadCheckpoint(RDD.scala:330)
    at org.apache.spark.rdd.RDD.iterator(RDD.scala:294)
    at org.apache.spark.rdd.MapPartitionsRDD.compute(MapPartitionsRDD.scala:52)
    at org.apache.spark.rdd.RDD.computeOrReadCheckpoint(RDD.scala:330)
    at org.apache.spark.rdd.RDD.iterator(RDD.scala:294)
    at org.apache.spark.rdd.MapPartitionsRDD.compute(MapPartitionsRDD.scala:52)
    at org.apache.spark.rdd.RDD.computeOrReadCheckpoint(RDD.scala:330)
    at org.apache.spark.rdd.RDD.iterator(RDD.scala:294)
    at org.apache.spark.rdd.MapPartitionsRDD.compute(MapPartitionsRDD.scala:52)
    at org.apache.spark.rdd.RDD.computeOrReadCheckpoint(RDD.scala:330)
    at org.apache.spark.rdd.RDD.iterator(RDD.scala:294)
    at org.apache.spark.scheduler.ShuffleMapTask.runTask(ShuffleMapTask.scala:99)
    at org.apache.spark.scheduler.ShuffleMapTask.runTask(ShuffleMapTask.scala:55)
    at org.apache.spark.scheduler.Task.run(Task.scala:123)
    at org.apache.spark.executor.Executor$TaskRunner$$anonfun$10.apply(Executor.scala:408)
    at org.apache.spark.util.Utils$.tryWithSafeFinally(Utils.scala:1360)
    at org.apache.spark.executor.Executor$TaskRunner.run(Executor.scala:414)
    at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)
    at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)
    at java.lang.Thread.run(Thread.java:748)
```

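On investigation, the error turned out to occur when reading files of size 0. As the stack trace shows, `LzopCodec.createInputStream` constructs an `LzopInputStream`, which immediately tries to read the lzop header (`readHeader` → `readFully`). An empty file has no header, so that read hits end-of-file and throws.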
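To see why a zero-length file fails before any data is read, here is a minimal, Hadoop-free sketch that mimics only the first step of the header check: reading the 9-byte lzop magic number with `DataInputStream.readFully`. `LzopHeaderDemo`, `readMagic` and `tryReadMagic` are hypothetical names; the real `LzopInputStream` reads further header fields after the magic, so treat this as an illustration under that assumption, not as hadoop-lzo's code.

```scala
import java.io.{ByteArrayInputStream, DataInputStream, EOFException, InputStream}

// Hypothetical stand-in for the header read LzopInputStream does in its constructor
// (readHeader -> readFully in the stack trace). Only the magic-number step is mimicked.
object LzopHeaderDemo {
  // lzop file signature: 0x89 'L' 'Z' 'O' 0x00 '\r' '\n' 0x1a '\n'
  val LzopMagic: Array[Byte] =
    Array(0x89, 0x4c, 0x5a, 0x4f, 0x00, 0x0d, 0x0a, 0x1a, 0x0a).map(_.toByte)

  /** True if the stream starts with the lzop magic; throws EOFException if it is shorter. */
  def readMagic(in: InputStream): Boolean = {
    val buf = new Array[Byte](LzopMagic.length)
    new DataInputStream(in).readFully(buf) // EOFException on a short read, e.g. an empty file
    java.util.Arrays.equals(buf, LzopMagic)
  }

  /** Runs readMagic on raw bytes and reports an EOF as a Left instead of throwing. */
  def tryReadMagic(bytes: Array[Byte]): Either[String, Boolean] =
    try Right(readMagic(new ByteArrayInputStream(bytes)))
    catch { case _: EOFException => Left("EOF before header") }
}
```

An empty byte array fails with `EOFException` before any payload is touched, which matches `readHeader` → `readFully` at the top of the trace; a stream that begins with the magic bytes passes this first check.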

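I could not find an official Spark fix for this bug.

So I patched the code by hand to skip such files.

Change the corresponding part of `HadoopRDD.scala` (the iterator created in `HadoopRDD.compute`) as follows. The only change is the `if (split.inputSplit.value.getLength != 0)` check around `getRecordReader`; the rest is Spark's existing code: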

```scala
// We get our input bytes from thread-local Hadoop FileSystem statistics.
// If we do a coalesce, however, we are likely to compute multiple partitions in the same
// task and in the same thread, in which case we need to avoid override values written by
// previous partitions (SPARK-13071).
private def updateBytesRead(): Unit = {
  getBytesReadCallback.foreach { getBytesRead =>
    inputMetrics.setBytesRead(existingBytesRead + getBytesRead())
  }
}

private var reader: RecordReader[K, V] = null
private val inputFormat = getInputFormat(jobConf)
HadoopRDD.addLocalConfiguration(
  new SimpleDateFormat("yyyyMMddHHmmss", Locale.US).format(createTime),
  context.stageId, theSplit.index, context.attemptNumber, jobConf)

reader =
  try {
    if (split.inputSplit.value.getLength != 0) { // non-empty file: open a reader as usual
      inputFormat.getRecordReader(split.inputSplit.value, jobConf, Reporter.NULL)
    } else {
      logWarning(s"Skipped the file size 0 file: ${split.inputSplit}")
      finished = true // empty file: mark this iterator as finished and skip it
      null
    }
  } catch {
    case e: FileNotFoundException if ignoreMissingFiles =>
      logWarning(s"Skipped missing file: ${split.inputSplit}", e)
      finished = true
      null
    // Throw FileNotFoundException even if `ignoreCorruptFiles` is true
    case e: FileNotFoundException if !ignoreMissingFiles => throw e
    case e: IOException if ignoreCorruptFiles =>
      logWarning(s"Skipped the rest content in the corrupted file: ${split.inputSplit}", e)
      finished = true
      null
  }
// Register an on-task-completion callback to close the input stream.
context.addTaskCompletionListener[Unit] { context =>
  // Update the bytes read before closing is to make sure lingering bytesRead statistics in
  // this thread get correctly added.
  updateBytesRead()
  closeIfNeeded()
}
```
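The guard in the patch boils down to one decision: check the split length first, and only hand non-empty splits to the codec. The Hadoop-free model below isolates that decision so it can be tested on its own. `EmptySplitGuard`, `Split` and `openReader` are hypothetical names, and the `open` function stands in for `inputFormat.getRecordReader`.

```scala
// Hypothetical, Hadoop-free model of the zero-length guard added to HadoopRDD.
object EmptySplitGuard {
  // Stand-in for a Hadoop InputSplit: only the path and byte length matter here.
  final case class Split(path: String, length: Long)

  /** Opens a reader only for non-empty splits; empty ones are logged and skipped. */
  def openReader[R](split: Split, open: Split => R, log: String => Unit): Option[R] =
    if (split.length != 0) Some(open(split)) // the codec is never touched for empty files
    else {
      log(s"Skipped the file size 0 file: ${split.path}")
      None // corresponds to `finished = true; null` in the patch
    }
}
```

The model returns `Option` because that is idiomatic Scala; the real patch has to keep returning `null`, since `HadoopRDD` stores the result in `var reader: RecordReader[K, V]` and checks it against `null` elsewhere.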
