Introducing Deep Reinforcement Learning

The manuscript of Deep Reinforcement Learning is now available! It significantly improves on Deep Reinforcement Learning: An Overview, which has received 100+ citations, extending that overview's latest version, released more than a year ago, from 70 pages to 150 pages.

It draws a big picture of deep reinforcement learning (RL) with many details. It covers contemporary work in historical contexts. It endeavours to answer the following questions: 1) Why deep? 2) What is the state of the art? and 3) What are the issues and potential solutions? It attempts to help those who want to become more familiar with deep RL, and to serve as a reference for people interested in this fascinating area, such as professors, researchers, students, engineers, managers, and investors. Shortcomings and mistakes are inevitable; comments and criticisms are welcome.

The manuscript briefly introduces AI, machine learning, and deep learning, and provides a mini tutorial for reinforcement learning. The following figure illustrates the relationships among these concepts, with the major contents of machine learning and AI. Deep reinforcement learning is reinforcement learning integrated with deep learning, that is, with deep artificial neural networks. A blog is dedicated to Resources for Deep Reinforcement Learning.

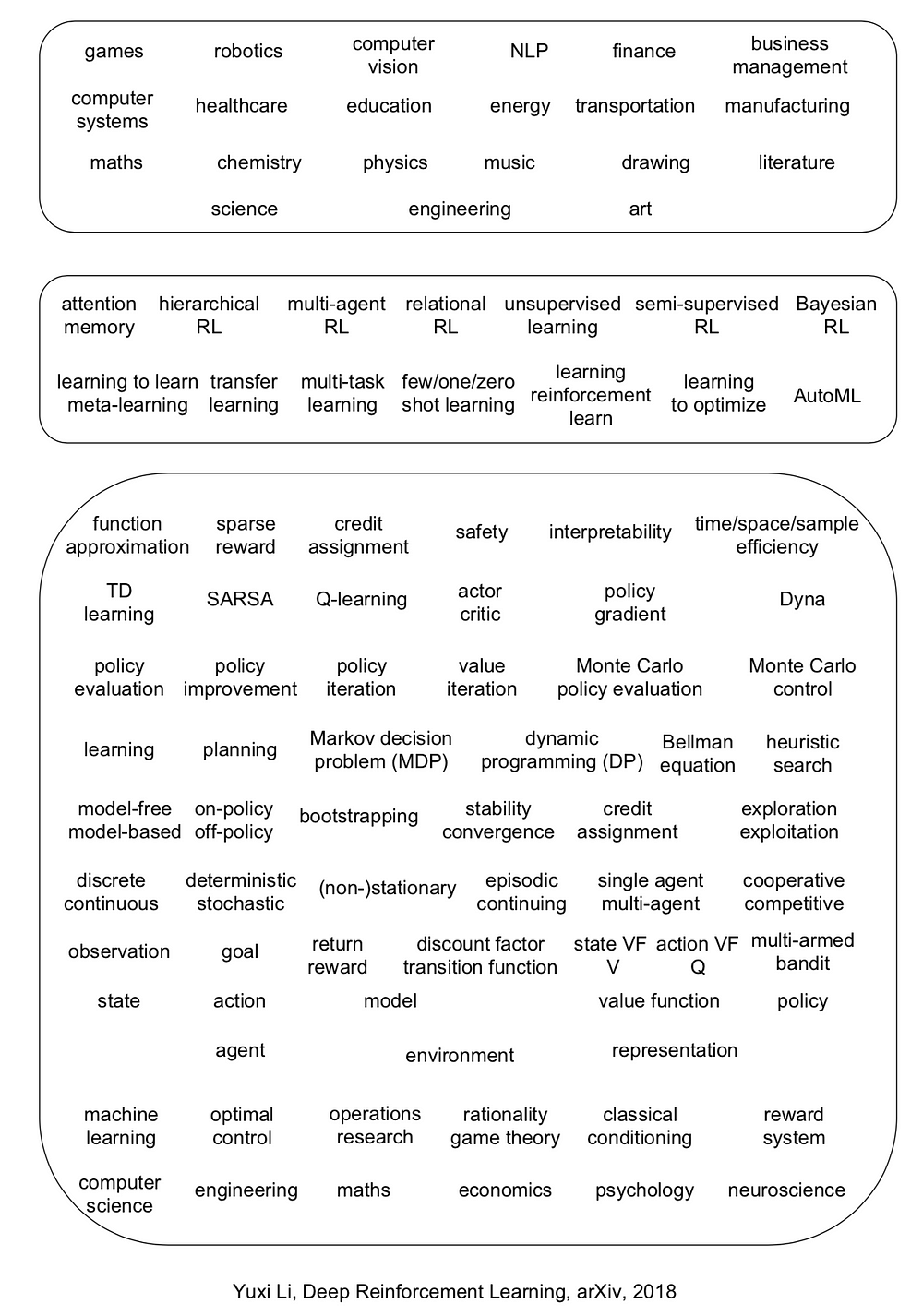

The manuscript covers six core elements: value function, policy, reward, model, exploration vs. exploitation, and representation; six important mechanisms: attention and memory, unsupervised learning, hierarchical RL, multi-agent RL, relational RL, and learning to learn; and twelve applications: games, robotics, natural language processing (NLP), computer vision, finance, business management, healthcare, education, energy, transportation, computer systems, and the combined area of science, engineering, and art.

Deep reinforcement learning has made exceptional achievements: DQN applied to Atari games ignited this wave of deep RL, and AlphaGo (Zero) and DeepStack set landmarks for AI. Deep RL has many newly invented algorithms and architectures, e.g., DQN, A3C, TRPO, PPO, DDPG, Trust-PCL, GPS, UNREAL, and DNC. Moreover, deep RL enjoys abundant and diverse applications, e.g., Capture the Flag, Dota 2, StarCraft II, robotics, character animation, conversational AI, neural architecture design (AutoML), data center cooling, recommender systems, data augmentation, model compression, combinatorial optimization, program synthesis, theorem proving, medical imaging, music, and chemical retrosynthesis. A blog is dedicated to Reinforcement Learning applications.

In general, RL is likely to help if a problem can be regarded as, or transformed into, a sequential decision making problem, and if states, actions, and possibly rewards can be constructed; sometimes a problem may not look like an RL problem on the surface. Roughly speaking, if a task involves some manually designed “strategy”, then there is a chance for reinforcement learning to help. Creativity will push the frontiers of deep RL further with respect to core elements, important mechanisms, and applications.
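To make "states, actions, and rewards can be constructed" concrete, here is a minimal sketch of tabular Q-learning on a toy problem. Everything in it is an assumption made for illustration and does not come from the manuscript: the five-state chain, the function names, and the hyperparameters. It uses a plain table rather than a deep network, so it illustrates the RL formulation only, not deep RL itself.

```python
import random

N_STATES = 5          # states 0..4; state 4 is the terminal goal (toy assumption)
ACTIONS = (0, 1)      # 0 = move left, 1 = move right
GOAL = N_STATES - 1


def step(state, action):
    """Deterministic chain dynamics: return (next_state, reward, done)."""
    next_state = max(state - 1, 0) if action == 0 else state + 1
    done = next_state == GOAL
    reward = 1.0 if done else 0.0   # reward only on reaching the goal
    return next_state, reward, done


def choose_action(q_table, state, epsilon, rng):
    """Epsilon-greedy selection; ties between equal values are broken at random."""
    if rng.random() < epsilon:
        return rng.choice(ACTIONS)
    best = max(q_table[state])
    return rng.choice([a for a in ACTIONS if q_table[state][a] == best])


def train_q_learning(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    """Learn a table of action values Q[state][action] by trial and error."""
    rng = random.Random(seed)
    q_table = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            action = choose_action(q_table, state, epsilon, rng)
            next_state, reward, done = step(state, action)
            # Terminal states have no future value to bootstrap from.
            target = reward if done else reward + gamma * max(q_table[next_state])
            q_table[state][action] += alpha * (target - q_table[state][action])
            state = next_state
    return q_table


def greedy_policy(q_table):
    """Best action in each non-terminal state according to the learned values."""
    return [max(ACTIONS, key=lambda a: q_table[s][a]) for s in range(GOAL)]
```

Calling `greedy_policy(train_q_learning())` should yield a policy that moves right in every non-terminal state. The same three ingredients (a state, a set of actions, and a reward signal) are what a less obvious problem has to be recast into before RL can be applied.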

Despite its success, deep RL faces many issues, such as credit assignment, sparse reward, sample efficiency, instability, divergence, interpretability, and safety; even reproducibility is an issue.
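As a small illustration of why sparse reward and credit assignment are hard, the sketch below computes discounted returns for one episode in which only the final step is rewarded. The function name, the episode, and the discount factor are hypothetical choices for this example, not taken from the manuscript.

```python
def discounted_returns(rewards, gamma):
    """Return G_t = r_t + gamma * G_{t+1} for every step t of one episode."""
    returns = [0.0] * len(rewards)
    running = 0.0
    # Sweep backwards so each step reuses the return of the step after it.
    for t in reversed(range(len(rewards))):
        running = rewards[t] + gamma * running
        returns[t] = running
    return returns


# A sparse-reward episode: nothing until the very last step.
sparse_episode = [0.0, 0.0, 0.0, 1.0]
print(discounted_returns(sparse_episode, gamma=0.5))  # [0.125, 0.25, 0.5, 1.0]
```

The learning signal that reaches the earliest decision is the smallest, although that decision may have mattered most; deciding how much credit each earlier action deserves is the credit assignment problem.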

Six research directions, listed below, are proposed as both challenges and opportunities. Some progress has already been made in these directions, e.g., Dopamine, TStarBots, MOREL, GQN, visual reasoning, neural-symbolic learning, UPN, causal InfoGAN, and meta-gradient RL, along with the many applications mentioned above.

- systematic, comparative study of deep RL algorithms

- “solve” multi-agent problems

- learn from entities, but not just raw inputs

- design an optimal representation for RL

- AutoRL

- develop killer applications for (deep) RL

It is desirable to integrate RL more deeply with AI: to bring more intelligence into the end-to-end mapping from raw inputs to decisions, to incorporate knowledge, to have common sense, to be more efficient and more interpretable, and to avoid obvious mistakes, rather than working as a black box.

Deep learning and reinforcement learning, selected as MIT Technology Review 10 Breakthrough Technologies in 2013 and 2017 respectively, will play crucial roles in achieving artificial general intelligence. David Silver proposed a conjecture: artificial intelligence = reinforcement learning + deep learning (AI = RL + DL). We will see both deep learning and reinforcement learning prosper in the coming years and beyond. Deep learning is exploding. It is the right time to nurture, educate, and lead the market for reinforcement learning.

Deep learning, in this third wave of AI, will have deeper influences, as we have already seen from its many achievements. Reinforcement learning, as a more general learning and decision making paradigm, will deeply influence deep learning, machine learning, and artificial intelligence in general.
