bert中的分词

直接把自己的工作文档导入的,由于是在外企工作,所以都是英文写的

chinese and english tokens result

input: "我爱中国",tokens:["我","爱","中","国"]

input: "I love china habih", tokens:["I","love","china","ha","##bi","##h"] (here "##bi","##h" are all in vocabulary)

Implementation

chinese and english text would call two tokens,one is basic_tokenizer and other one is wordpiece_tokenizer as you can see from the the code below.

basic_tokenizer

if the input is chinese, the _tokenize_chinese_chars would add whitespace between chinese charater, and then call whitespace_tokenizer which separate text with whitespace,so

if the input is the query "我爱中国",would return ["我","爱","中","国"],if the input is the english query "I love china", would return ["I","love","china"]

wordpiece_tokenizer

if the input is chinese ,if would iterate tokens from basic_tokenizer result, if the character is in vocabulary, just keep the same character ,otherwise append unk_token.

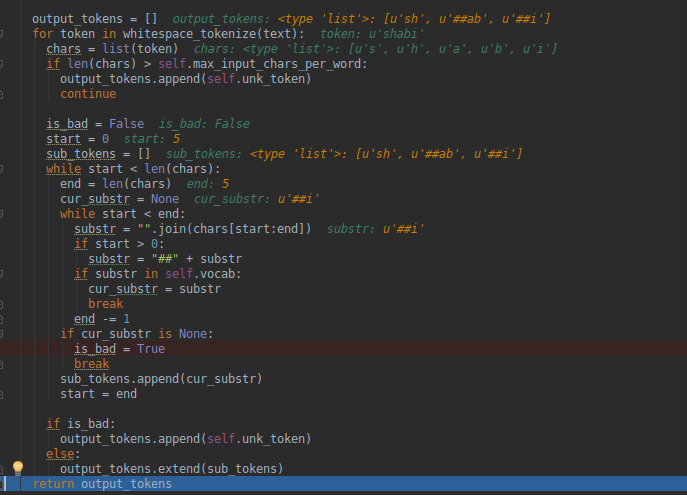

if the input is english, we would iterate over one word ,for example: the word is "shabi", while it is not in vocabulary,so the end index would rollback until it found "sh" in vocabulary,

in the following process, once it found a substr in vocabulary ,it would append "##" then append it to output tokens, so we can get ["sh","##ab","##i"] finally.

#65 How are out of vocabulary words handled for Chinese?

The top 8000 characters are character-tokenized, other characters are mapped to [UNK]. I should've commented this section better but it's here

Basically that's saying if it tries to apply WordPiece tokenization (the character tokenization happens previously), and it gets to a single character that it can't find, it maps it to unk_token.

#62 Why Chinese vocab contains ##word?

This is the character used to denote WordPieces, it's just an artifact of the WordPiece vocabulary generator that we use, but most of those words were never actually used during training (for Chinese). So you can just ignore those tokens. Note that for the English characters that appear in Chinese text they are actually used.

bert中的分词的更多相关文章

- 一文读懂BERT中的WordPiece

1. 前言 2018年最火的论文要属google的BERT,不过今天我们不介绍BERT的模型,而是要介绍BERT中的一个小模块WordPiece. 2. WordPiece原理 现在基本性能好一些的N ...

- 广告行业中那些趣事系列8:详解BERT中分类器源码

最新最全的文章请关注我的微信公众号:数据拾光者. 摘要:BERT是近几年NLP领域中具有里程碑意义的存在.因为效果好和应用范围广所以被广泛应用于科学研究和工程项目中.广告系列中前几篇文章有从理论的方面 ...

- Python中结巴分词使用手记

手记实用系列文章: 1 结巴分词和自然语言处理HanLP处理手记 2 Python中文语料批量预处理手记 3 自然语言处理手记 4 Python中调用自然语言处理工具HanLP手记 5 Python中 ...

- Elasticsearch中的分词器比较及使用方法

Elasticsearch 默认分词器和中分分词器之间的比较及使用方法 https://segmentfault.com/a/1190000012553894 介绍:ElasticSearch 是一个 ...

- ES中的分词器

基本概念: 全文搜索引擎会用某种算法对要建索引的文档进行分析, 从文档中提取出若干Token(词元), 这些算法称为Tokenizer(分词器), 这些Token会被进一步处理, 比如转成小写等, 这 ...

- 开源自然语言处理工具包hanlp中CRF分词实现详解

CRF简介 CRF是序列标注场景中常用的模型,比HMM能利用更多的特征,比MEMM更能抵抗标记偏置的问题. [gerative-discriminative.png] CRF训练 这类耗时的任务,还 ...

- sklearn中的分词函数countVectorizer()的改动--保留长度为1的字符串

1简述问题 使用countVectorizer()将文本向量化时发现,文本中长度唯一的字符串会被自动过滤掉,这对于我在做的情感分析来讲,一些表较重要的表达情感倾向的词汇被过滤掉,比如文本'没用的东西, ...

- nlp任务中的传统分词器和Bert系列伴生的新分词器tokenizers介绍

layout: blog title: Bert系列伴生的新分词器 date: 2020-04-29 09:31:52 tags: 5 categories: nlp mathjax: true ty ...

- 中文分词中的战斗机-jieba库

英文分词的第三方库NLTK不错,中文分词工具也有很多(盘古分词.Yaha分词.Jieba分词等).但是从加载自定义字典.多线程.自动匹配新词等方面来看. 大jieba确实是中文分词中的战斗机. 请随意 ...

随机推荐

- 读入字符串/字符 scanf与getchar/gets区别

1. 读入字符 scanf/getchar:空格.Tab.回车都可以读入.但要以回车作为结束符. 所以当读入字符时,注意去掉一些干扰输入的字符,如空格和回车 2. 读入字符串 scanf:不能读入空格 ...

- word宏(macro) 之 注意事项,常见语法和学习地方

宏:计算机科学里的宏(Macro),是一种批量处理的称谓.一般说来,宏是一种规则或模式,或称语法替换 ,用于说明某一特定输入(通常是字符串)如何根据预定义的规则转换成对应的输出(通常也是字符串).这种 ...

- 安装Cloudera manager agent步骤详解

安装Cloudera manager agent步骤详解 作者:尹正杰 版权声明:原创作品,谢绝转载!否则将追究法律责任. 本篇博客主要是针对:https://www.cnblogs.com/yinz ...

- Css的前世今生

Css的基础知识扫盲 作者:尹正杰 版权声明:原创作品,谢绝转载!否则将追究法律责任. HTML的用法没有什么技巧,就是死记硬背,当然你不需要都记下来,能记住20个常用的标签基本上就OK了,其他不常用 ...

- 将本地的代码推送到公网的github账号去

将本地的代码推送到公网的github账号去 作者:尹正杰 版权声明:原创作品,谢绝转载!否则将追究法律责任. 最近工作上需要用到github账号,拜读了一位叫廖雪峰的大神的文档,把git的前世今生说的 ...

- strace常用参数详解

strace常用参数详解 作者:尹正杰 版权声明:原创作品,谢绝转载!否则将追究法律责任. strace命令大家应该比我熟悉吧,如果你不知道,呵呵,会可能跟我一样被人说:“我怀疑你是假运维”,不过没关 ...

- java中常用的包及作用

1. java.awt:提供了绘图和图像类,主要用于编写GUI程序,包括按钮.标签等常用组件以及相应的事件类. 2. java.lang:java的语言包,是核心包,默认导入到用户程序,包中有obje ...

- webkitAnimationEnd事件与webkitTransitionEnd事件

写一个焦点图demo,css3动画完成以后要把它隐藏掉,这里会用到css3的事件,以前没有接触过,结果查了一下发现这是一片新天地啊,而且里面还有好多坑,比如重复动画多次触发什么的.anyway,我还是 ...

- LINQ to SQL 实现 GROUP BY、聚合、ORDER BY

Ø 前言 本示例主要实现 LINQ 查询,先分组,再聚合,最后在排序.示例很简单,但是使用 LINQ 却生成了不同的 SQL 实现. 1) 采用手动编写 SQL 实现 SELECT ROW_NU ...

- MQTT协议-MQTT协议解析(MQTT数据包结构)

协议就是通信双方的一个约定,即,表示第1位传输的什么.第2位传输的什么…….在MQTT协议中,一个MQTT数据包由:固定头(Fixed header). 可变头(Variable header). 消 ...