Kubernetes Binary Deployment (Single-Master and Multi-Master)

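- Kubernetes Binary Deployment (Single-Master and Multi-Master)
- I. Common K8S Deployment Methods
- II. Single-Master K8S Binary Deployment
- 1. Environment preparation
- 2. Deploy the etcd cluster
- 3. Deploy the Docker engine (all worker nodes)
- 4. Flannel network configuration
- 5. Deploy the master components (on master01)
- 5.1 Write apiserver.sh, scheduler.sh, and controller-manager.sh
- 5.1.1 apiserver.sh
- 5.2 Create the kubernetes working directory
- 5.3 Generate the CA certificate and the component certificates and private keys
- 5.4 Copy the CA certificate and the apiserver certificates and private keys to the ssl subdirectory of the kubernetes working directory
- 5.5 Download or upload the kubernetes package to /opt/k8s and extract it
- 5.6 Copy the key master binaries to the bin subdirectory of the kubernetes working directory
- 5.7 Create the bootstrap token authentication file
- 5.8 With the binaries, token, and certificates ready, start the apiserver service
- 5.9 Verify
- 5.10 Start the scheduler service
- 5.11 Start the controller-manager service
- 5.12 Check the master node status
- 6. Deploy the Worker Node components
- 7. Test the single-master K8S cluster
- III. Multi-Master K8S Binary Deployment (after completing the deployment above)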

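I. Common K8S Deployment Methods

1. Minikube

Minikube is a tool that quickly runs a small single-node K8S locally. It is meant only for learning and for previewing K8S features.

http://kubernetes.io/docs/setup/minikube

2. Kubeadm

Kubeadm is also a tool. It provides kubeadm init and kubeadm join for deploying a K8S cluster quickly, and is relatively simple to use.

http://kubernetes.io/docs/reference/setup-tools/kubeadm/kubeadm/

3. Binary installation

The first choice for production. Download the release binaries from the official site, deploy each component by hand, and issue self-signed TLS certificates to form a K8S cluster. Also recommended for newcomers.

http://github.com/kubernetes/kubernetes/releases

4. Summary

Kubeadm lowers the barrier to deployment but hides many details, which makes problems hard to troubleshoot. If you want more control, deploy the Kubernetes cluster from binaries. Manual deployment takes more effort, but you learn how the components work along the way, and that helps with later maintenance.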

II. Single-Master K8S Binary Deployment

1. Environment preparation

1.1 Server configuration

| Server | Hostname | IP address | Main components |
|---|---|---|---|
| master01 + etcd01 | master01 | 192.168.122.10 | kube-apiserver kube-controller-manager kube-scheduler etcd |
| node01 + etcd02 | node01 | 192.168.122.11 | kubelet kube-proxy docker flannel |
| node02 + etcd03 | node02 | 192.168.122.12 | kubelet kube-proxy docker flannel |

1.2 Disable the firewall and SELinux

systemctl disable --now firewalld

setenforce 0

sed -i 's/enforcing/disabled/' /etc/selinux/config

1.3 Set the hostname

hostnamectl set-hostname <hostname>

su

1.4 Disable swap

swapoff -a

sed -ri 's/.*swap.*/#&/' /etc/fstab
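The sed expression above comments out every /etc/fstab line that mentions swap, so swap stays off after a reboot. As a minimal sketch of what it does, the hypothetical helper below applies the same substitution to a scratch file instead of editing /etc/fstab in place; the two sample fstab lines are illustrative assumptions, not taken from a real host.

```shell
# comment_swap_lines FILE: print FILE with every line that mentions "swap"
# prefixed by '#'. In the replacement, '&' stands for the whole matched line.
# (Hypothetical helper for illustration; it never modifies FILE.)
comment_swap_lines() {
    sed -E 's/.*swap.*/#&/' "$1"
}

# Illustrative sample content, written to a temporary file.
sample=$(mktemp)
printf '%s\n' \
    '/dev/mapper/centos-root / xfs defaults 0 0' \
    '/dev/mapper/centos-swap swap swap defaults 0 0' > "$sample"

comment_swap_lines "$sample"
rm -f "$sample"
```

Only the swap entry gains a leading '#'; the root filesystem line is printed unchanged.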

1.5 Add hosts entries on the master

cat >> /etc/hosts << EOF

192.168.122.10 master01

192.168.122.11 node01

192.168.122.12 node02

EOF
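Running the cat >> command above a second time appends the same three entries again. One possible way to make the step repeatable is sketched below; add_host_entry is a hypothetical helper, not part of the original procedure, and it assumes hostnames contain no regular-expression metacharacters. The example writes to a scratch file; pass /etc/hosts to apply it for real.

```shell
# add_host_entry FILE IP NAME: append "IP NAME" to FILE unless NAME already
# appears as a whole field on a non-comment line. (Hypothetical helper.)
add_host_entry() {
    local file=$1 ip=$2 name=$3
    if grep -Eq "^[^#]*[[:space:]]${name}([[:space:]]|\$)" "$file" 2>/dev/null; then
        return 0
    fi
    printf '%s %s\n' "$ip" "$name" >> "$file"
}

hosts_file=$(mktemp)
add_host_entry "$hosts_file" 192.168.122.10 master01
add_host_entry "$hosts_file" 192.168.122.11 node01
add_host_entry "$hosts_file" 192.168.122.12 node02
add_host_entry "$hosts_file" 192.168.122.10 master01   # repeated call adds nothing
cat "$hosts_file"
rm -f "$hosts_file"
```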

1.6 Pass bridged IPv4 traffic to the iptables chains

cat > /etc/sysctl.d/k8s.conf << EOF

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

EOF

sysctl --system

1.7 Time synchronization

yum install ntpdate -y

ntpdate time.windows.com

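2. Deploy the etcd cluster

2.1 etcd overview

2.1.1 Introduction to etcd

etcd is an open-source project started by the CoreOS team in June 2013. Its goal is a highly available distributed key-value store. etcd uses the Raft protocol as its consensus algorithm and is written in Go.

2.1.2 Features of etcd

As a service discovery system, etcd has the following features:

Simple: easy to install and configure, with an HTTP API that is also easy to use

Secure: supports SSL certificate verification

Fast: a single instance supports 2k+ read/write operations per second

Reliable: uses the Raft algorithm to provide availability and consistency of data in a distributed system

2.1.3 etcd ports

By default etcd serves its HTTP API to clients on port 2379 and communicates with peers on port 2380. Both ports are officially reserved for etcd by IANA (the Internet Assigned Numbers Authority).

In production etcd is generally deployed as a cluster. Because of etcd's leader election mechanism, use an odd number of members, at least 3. A write needs a majority of members, so an even-sized cluster tolerates no more failures than the next smaller odd size.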
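The arithmetic behind the odd-member recommendation can be sketched in a few lines. The function names are hypothetical; the formulas are the usual Raft majority rule, where quorum is floor(n/2) + 1 and the number of tolerated failures is n minus quorum.

```shell
# etcd_quorum N: members that must agree for a write to commit.
etcd_quorum() { echo $(( $1 / 2 + 1 )); }

# etcd_fault_tolerance N: members that can fail while quorum is still reachable.
etcd_fault_tolerance() { echo $(( ($1 - 1) / 2 )); }

for n in 1 2 3 4 5; do
    printf 'members=%d quorum=%d tolerated_failures=%d\n' \
        "$n" "$(etcd_quorum "$n")" "$(etcd_fault_tolerance "$n")"
done
```

A 4-member cluster tolerates one failure, the same as a 3-member cluster, which is why the fourth member adds cost without adding resilience.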

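2.2 Issue certificates (master01)

CFSSL is an open-source PKI/TLS toolkit from CloudFlare. It includes a command-line tool and an HTTP API service for signing, verifying, and bundling TLS certificates, and is written in Go.

CFSSL generates certificates from configuration files, so before self-signing you need JSON configuration files in the format it recognizes. CFSSL provides convenient commands to generate them.

Here CFSSL issues the TLS certificates for etcd. It can sign three types of certificate:

- client certificate: presented by a client when it connects to a server, so that the server can verify the client's identity, for example when kube-apiserver accesses etcd;

- server certificate: presented by a server when a client connects to it, so that the client can verify the server's identity, for example when etcd serves client requests;

- peer certificate: used when members connect to each other, for example for authentication and communication between etcd nodes.

Note: this lab uses a single set of certificates for all three purposes.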

2.2.1 Download the certificate tools

[root@master01 ~]# wget https://pkg.cfssl.org/R1.2/cfssl_linux-amd64 -O /usr/local/bin/cfssl

[root@master01 ~]# wget https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64 -O /usr/local/bin/cfssljson

[root@master01 ~]# wget https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64 -O /usr/local/bin/cfssl-certinfo

[root@master01 ~]# chmod +x /usr/local/bin/cfssl*

Alternatively, download with curl -L:

curl -L https://pkg.cfssl.org/R1.2/cfssl_linux-amd64 -o /usr/local/bin/cfssl

curl -L https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64 -o /usr/local/bin/cfssljson

curl -L https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64 -o /usr/local/bin/cfssl-certinfo

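cfssl: the command-line tool that issues certificates

cfssljson: turns the certificates that cfssl outputs (as JSON) into certificate files

cfssl-certinfo: displays information about a certificate

Run "cfssl-certinfo -cert <certificate file>" to view a certificate's details.

2.2.2 Create the k8s working directory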

[root@master01 ~]# mkdir /opt/k8s

[root@master01 ~]# cd /opt/k8s

2.2.3 Write the etcd-cert.sh and etcd.sh scripts

etcd-cert.sh

[root@master01 k8s]# chmod +x etcd-cert.sh

[root@master01 k8s]# cat etcd-cert.sh

#!/bin/bash

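# Define the signing policy that tells the CA what kinds of certificates to issue; the root certificate generated below signs the certificates of the other components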

cat > ca-config.json <<EOF

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"www": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

EOF

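# ca-config.json: may define several profiles, each with its own expiry, usages, and other parameters;
# a profile is selected later when signing a certificate. This example has a single profile, www.
# expiry: the certificate validity period; 87600h is 10 years. With a one-year validity, the cluster stops working as soon as the certificates expire.
# signing: the certificate may be used to sign other certificates; the generated ca.pem has CA=TRUE;
# key encipherment: the key may be used for asymmetric key encryption, such as RSA;
# server auth: clients can use this CA to verify certificates presented by servers;
# client auth: servers can use this CA to verify certificates presented by clients;
# Watch the punctuation: the last field in a JSON object or array has no trailing comma.

#-----------------------

# Generate the CA certificate and private key (root certificate and key)
# Note: cfssl differs from openssl. With openssl you first generate a private key, then use it to create a signing request, and finally produce the signed certificate. cfssl produces the private key, the request file, and the certificate from one JSON file in a single command.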

cat > ca-csr.json <<EOF

{

"CN": "etcd",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Beijing",

"ST": "Beijing"

}

]

}

EOF

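# CN: Common Name; browsers use this field to check whether a site is legitimate, so it is usually a domain name
# key: the key algorithm, usually rsa with size 2048
# C: Country
# ST: State or province
# L: Locality or city
# O: Organization Name, such as the company name
# OU: Organizational Unit Name, such as the department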

cfssl gencert -initca ca-csr.json | cfssljson -bare ca

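# Generated files:
# ca-key.pem: root certificate private key
# ca.pem: root certificate
# ca.csr: root certificate signing request

# cfssl gencert -initca <CSRJSON>: generates a new CA certificate and private key from the CSRJSON file. Without the pipe, all certificate content is printed to the screen.
# Note: the CSRJSON file is given as a relative path, so run cfssl from the directory that contains the CSR file, or pass an absolute path instead.
# cfssljson turns the certificates that cfssl outputs (as JSON) into certificate files; -bare sets the base name of the generated files.

#-----------------------

# Generate the etcd server certificate and private key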

cat > server-csr.json <<EOF

{

"CN": "etcd",

"hosts": [

"192.168.122.10",

"192.168.122.11",

"192.168.122.12"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing"

}

]

}

EOF

# hosts: list every etcd cluster node here by IP address or hostname; network ranges are not allowed. Adding an etcd server later requires reissuing the certificate.

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=www server-csr.json | cfssljson -bare server

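# Generated files:
# server.csr: server certificate signing request
# server-key.pem: server private key
# server.pem: server certificate, signed by the CA

# -config: the signing policy file, ca-config.json
# -profile: the profile to use from the policy file, such as www in ca-config.json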
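Because the hosts list in server-csr.json must name every etcd member, it can help to generate that JSON array from a list of addresses rather than typing it by hand. The following is a minimal sketch; hosts_json is a hypothetical helper and is not part of etcd-cert.sh.

```shell
# hosts_json HOST...: print the arguments as a JSON array of strings,
# suitable for the "hosts" field of a cfssl CSR file. (Hypothetical helper;
# it assumes the host values contain no quotes or backslashes.)
hosts_json() {
    local first=1 h
    printf '['
    for h in "$@"; do
        if [ "$first" -eq 1 ]; then first=0; else printf ', '; fi
        printf '"%s"' "$h"
    done
    printf ']\n'
}

hosts_json 192.168.122.10 192.168.122.11 192.168.122.12
# → ["192.168.122.10", "192.168.122.11", "192.168.122.12"]
```

Adding a fourth etcd member later then means adding one argument and reissuing the server certificate.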

etcd.sh

[root@master01 k8s]# chmod +x etcd.sh

[root@master01 k8s]# cat etcd.sh

#!/bin/bash

# example: ./etcd.sh etcd01 192.168.122.10 etcd02=https://192.168.122.11:2380,etcd03=https://192.168.122.12:2380

# Create the etcd configuration file /opt/etcd/cfg/etcd

ETCD_NAME=$1

ETCD_IP=$2

ETCD_CLUSTER=$3

WORK_DIR=/opt/etcd

cat > $WORK_DIR/cfg/etcd <<EOF

#[Member]

ETCD_NAME="${ETCD_NAME}"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://${ETCD_IP}:2380"

ETCD_LISTEN_CLIENT_URLS="https://${ETCD_IP}:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://${ETCD_IP}:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://${ETCD_IP}:2379"

ETCD_INITIAL_CLUSTER="${ETCD_NAME}=https://${ETCD_IP}:2380,${ETCD_CLUSTER}"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

EOF

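# Member: member settings
# ETCD_NAME: member name, which must be unique within the cluster, such as etcd01
# ETCD_DATA_DIR: data directory. Stores the node ID, cluster ID, initial cluster configuration, and snapshot files; WAL files are stored here too unless --wal-dir is set. A default directory is used if this is not specified.
# ETCD_LISTEN_PEER_URLS: address on which this member listens for traffic from other members. An all-zero IP listens on every local interface.
# ETCD_LISTEN_CLIENT_URLS: address on which this member listens for client traffic. An all-zero IP listens on every local interface.

# Clustering: cluster settings
# ETCD_INITIAL_ADVERTISE_PEER_URLS: peer address advertised to the other members, which use it to reach this member. It must be reachable from every other member. With static configuration, this value must also appear in --initial-cluster.
# ETCD_ADVERTISE_CLIENT_URLS: client address advertised to etcd clients, which use it to reach this member. It must be reachable from the clients.
# ETCD_INITIAL_CLUSTER: addresses of all cluster members. This member uses the list to contact the others.
# ETCD_INITIAL_CLUSTER_TOKEN: cluster token that distinguishes clusters. Use different tokens if several clusters run locally.
# ETCD_INITIAL_CLUSTER_STATE: state when joining; new means a new cluster, existing means joining an existing cluster.

# Create the etcd.service unit file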

cat > /usr/lib/systemd/system/etcd.service <<EOF

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target

[Service]

Type=notify

EnvironmentFile=${WORK_DIR}/cfg/etcd

ExecStart=${WORK_DIR}/bin/etcd \

--name=\${ETCD_NAME} \

--data-dir=\${ETCD_DATA_DIR} \

--listen-peer-urls=\${ETCD_LISTEN_PEER_URLS} \

--listen-client-urls=\${ETCD_LISTEN_CLIENT_URLS},http://127.0.0.1:2379 \

--advertise-client-urls=\${ETCD_ADVERTISE_CLIENT_URLS} \

--initial-advertise-peer-urls=\${ETCD_INITIAL_ADVERTISE_PEER_URLS} \

--initial-cluster=\${ETCD_INITIAL_CLUSTER} \

--initial-cluster-token=\${ETCD_INITIAL_CLUSTER_TOKEN} \

--initial-cluster-state=new \

--cert-file=${WORK_DIR}/ssl/server.pem \

--key-file=${WORK_DIR}/ssl/server-key.pem \

--trusted-ca-file=${WORK_DIR}/ssl/ca.pem \

--peer-cert-file=${WORK_DIR}/ssl/server.pem \

--peer-key-file=${WORK_DIR}/ssl/server-key.pem \

--peer-trusted-ca-file=${WORK_DIR}/ssl/ca.pem

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

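# --listen-client-urls: the URLs on which etcd listens for client connections
# --advertise-client-urls: the client URLs this member advertises to clients and to the rest of the cluster. etcd requires --advertise-client-urls to be set whenever --listen-client-urls is set, so it must be given explicitly even if it matches the default.
# Options starting with --peer set the TLS certificates for traffic inside the cluster (peer certificates); this setup uses the same set of certificates throughout.
# Options without the --peer prefix set the TLS certificates for the etcd server (server certificates); this setup uses the same set of certificates throughout.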

systemctl daemon-reload

systemctl enable etcd

systemctl restart etcd
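The --initial-cluster value is a comma-separated list of name=peer-URL pairs, and every member must be given the same list. As a rough sketch, the hypothetical helper below builds that string from name=ip pairs, assuming HTTPS and the default peer port 2380 used throughout this guide.

```shell
# initial_cluster NAME=IP...: print an --initial-cluster string in which each
# member is listed as NAME=https://IP:2380. (Hypothetical helper.)
initial_cluster() {
    local pair out=""
    for pair in "$@"; do
        # ${pair%%=*} is the part before the first '=', ${pair#*=} the part after it.
        out="${out:+$out,}${pair%%=*}=https://${pair#*=}:2380"
    done
    echo "$out"
}

initial_cluster etcd01=192.168.122.10 etcd02=192.168.122.11 etcd03=192.168.122.12
```

The printed string matches the ETCD_INITIAL_CLUSTER value that ends up in /opt/etcd/cfg/etcd on all three nodes.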

2.2.4 Generate the CA certificate, etcd server certificate, and private keys

[root@master01 k8s]# mkdir /opt/k8s/etcd-cert

[root@master01 k8s]# mv etcd-cert.sh etcd-cert/

[root@master01 k8s]# cd /opt/k8s/etcd-cert/

[root@master01 etcd-cert]# ./etcd-cert.sh

# The script generates the CA certificate, the etcd server certificate, and their private keys

[root@master01 etcd-cert]# ls

ca-config.json ca.csr ca-csr.json ca-key.pem ca.pem etcd-cert.sh server.csr server-csr.json server-key.pem server.pem

2.3 Start the etcd service

2.3.1 Install etcd

etcd binary releases: https://github.com/etcd-io/etcd/releases

[root@master01 etcd-cert]# cd /opt/k8s

[root@master01 k8s]# rz -E

# Upload the etcd package etcd-v3.3.10-linux-amd64.tar.gz

rz waiting to receive.

[root@master01 k8s]# tar zxvf etcd-v3.3.10-linux-amd64.tar.gz

[root@master01 k8s]# ls etcd-v3.3.10-linux-amd64

Documentation etcd etcdctl README-etcdctl.md README.md READMEv2-etcdctl.md

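etcd is the command that starts the etcd service; it accepts various startup options

etcdctl is the command-line client for the etcd service

2.3.2 Create directories for the etcd configuration files, binaries, and certificates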

[root@master01 k8s]# mkdir -p /opt/etcd/{cfg,bin,ssl}

[root@master01 k8s]# mv /opt/k8s/etcd-v3.3.10-linux-amd64/etcd /opt/k8s/etcd-v3.3.10-linux-amd64/etcdctl /opt/etcd/bin/

[root@master01 k8s]# cp /opt/k8s/etcd-cert/*.pem /opt/etcd/ssl/

2.3.3 Run the etcd.sh script

[root@master01 k8s]# ls

etcd-cert etcd.sh etcd-v3.3.10-linux-amd64 etcd-v3.3.10-linux-amd64.tar.

[root@master01 k8s]# ./etcd.sh etcd01 192.168.122.10 etcd02=https://192.168.122.11:2380,etcd03=https://192.168.122.12:2380

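The command blocks while it waits for the other members to join. All three etcd services need to start together; if only one is started, it hangs until every etcd member in the cluster is up, so this state can be ignored for now.

Open another terminal to check that the etcd process is running: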

[root@master01 ~]# ps -ef | grep etcd

root 69202 67516 0 16:52 pts/0 00:00:00 /bin/bash ./etcd.sh etcd01 192.168.122.10 etcd02=https://192.168.122.11:2380,etcd03=https://192.168.122.12:2380

root 69261 69202 0 16:52 pts/0 00:00:00 systemctl restart etcd

root 69267 1 1 16:52 ? 00:00:00 /opt/etcd/bin/etcd --name=etcd01 --data-dir=/var/lib/etcd/default.etcd --listen-peer-urls=https://192.168.122.10:2380 --listen-client-urls=https://192.168.122.10:2379,http://127.0.0.1:2379 --advertise-client-urls=https://192.168.122.10:2379 --initial-advertise-peer-urls=https://192.168.122.10:2380 --initial-cluster=etcd01=https://192.168.122.10:2380,etcd02=https://192.168.122.11:2380,etcd03=https://192.168.122.12:2380 --initial-cluster-token=etcd-cluster --initial-cluster-state=new --cert-file=/opt/etcd/ssl/server.pem --key-file=/opt/etcd/ssl/server-key.pem --trusted-ca-file=/opt/etcd/ssl/ca.pem --peer-cert-file=/opt/etcd/ssl/server.pem --peer-key-file=/opt/etcd/ssl/server-key.pem --peer-trusted-ca-file=/opt/etcd/ssl/ca.pem

root 69330 69277 0 16:53 pts/1 00:00:00 grep --color=auto etcd

2.3.4 Copy the etcd certificates, binaries, and configuration to the other two etcd nodes

[root@master01 k8s]# scp -r /opt/etcd/ root@192.168.122.11:/opt/etcd/

etcd 100% 516 1.1MB/s 00:00

etcd 100% 18MB 98.8MB/s 00:00

etcdctl 100% 15MB 153.9MB/s 00:00

ca-key.pem 100% 1679 3.2MB/s 00:00

ca.pem 100% 1257 343.6KB/s 00:00

server-key.pem 100% 1675 1.3MB/s 00:00

server.pem 100% 1334 3.0MB/s 00:00

[root@master01 k8s]# scp -r /opt/etcd/ root@192.168.122.12:/opt/etcd/

etcd 100% 516 1.0MB/s 00:00

etcd 100% 18MB 116.2MB/s 00:00

etcdctl 100% 15MB 147.9MB/s 00:00

ca-key.pem 100% 1679 1.9MB/s 00:00

ca.pem 100% 1257 443.4KB/s 00:00

server-key.pem 100% 1675 2.7MB/s 00:00

server.pem 100% 1334 336.3KB/s 00:00

2.3.5 Copy the etcd unit file to the other two etcd nodes

[root@master01 k8s]# scp /usr/lib/systemd/system/etcd.service root@192.168.122.11:/usr/lib/systemd/system/

etcd.service 100% 923 1.3MB/s 00:00

[root@master01 k8s]# scp /usr/lib/systemd/system/etcd.service root@192.168.122.12:/usr/lib/systemd/system/

etcd.service 100% 923 1.5MB/s 00:00

2.3.6 Edit the configuration file on the other two etcd nodes

node01

[root@node01 ~]# cd /opt/etcd/cfg/

[root@node01 cfg]# ls

etcd

[root@node01 cfg]# vim etcd

#[Member]

ETCD_NAME="etcd02"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.122.11:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.122.11:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.122.11:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.122.11:2379"

ETCD_INITIAL_CLUSTER="etcd01=https://192.168.122.10:2380,etcd02=https://192.168.122.11:2380,etcd03=https://192.168.122.12:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

[root@node01 cfg]# systemctl daemon-reload

[root@node01 cfg]# systemctl enable --now etcd.service

[root@node01 cfg]# systemctl status etcd

● etcd.service - Etcd Server

Loaded: loaded (/usr/lib/systemd/system/etcd.service; enabled; vendor preset: disabled)

Active: active (running) since 三 2021-10-27 17:11:05 CST; 10s ago

......

On node02 do the same, setting ETCD_NAME="etcd03" and the corresponding IP addresses.

2.3.7 Check the cluster status (master01)

Check with the v2 API (the default)

[root@master01 k8s]# ln -s /opt/etcd/bin/etcd* /usr/local/bin

# Symlink the etcd binaries into a directory on PATH

[root@master01 k8s]# cd /opt/etcd/ssl

[root@master01 ssl]# etcdctl \

> --ca-file=ca.pem \

> --cert-file=server.pem \

> --key-file=server-key.pem \

> --endpoints="https://192.168.122.10:2379,https://192.168.122.11:2379,https://192.168.122.12:2379" \

> cluster-health

member 69d968bf93159205 is healthy: got healthy result from https://192.168.122.11:2379

member 854a51ad82cc5ac2 is healthy: got healthy result from https://192.168.122.12:2379

member aa2f014e32cf986f is healthy: got healthy result from https://192.168.122.10:2379

cluster is healthy

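--cert-file: identify the HTTPS client using this SSL certificate file

--key-file: identify the HTTPS client using this SSL key file

--ca-file: verify the certificates of HTTPS-enabled servers using this CA certificate

--endpoints: comma-separated list of member addresses in the cluster

cluster-health: check the health of the etcd cluster

Check with the v3 API

Switch to the v3 API to view the endpoint status and the member list.

The v2 and v3 commands differ slightly, and the v2 and v3 APIs are not compatible with each other. This etcdctl version defaults to v2.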

[root@master01 ~]# export ETCDCTL_API=3

[root@master01 ~]# etcdctl --write-out=table endpoint status

+----------------+------------------+---------+---------+-----------+-----------+------------+

| ENDPOINT | ID | VERSION | DB SIZE | IS LEADER | RAFT TERM | RAFT INDEX |

+----------------+------------------+---------+---------+-----------+-----------+------------+

| 127.0.0.1:2379 | aa2f014e32cf986f | 3.3.10 | 20 kB | false | 976 | 21 |

+----------------+------------------+---------+---------+-----------+-----------+------------+

[root@master01 ~]# etcdctl --write-out=table member list

+------------------+---------+--------+-----------------------------+-----------------------------+

| ID | STATUS | NAME | PEER ADDRS | CLIENT ADDRS |

+------------------+---------+--------+-----------------------------+-----------------------------+

| 69d968bf93159205 | started | etcd02 | https://192.168.122.11:2380 | https://192.168.122.11:2379 |

| 854a51ad82cc5ac2 | started | etcd03 | https://192.168.122.12:2380 | https://192.168.122.12:2379 |

| aa2f014e32cf986f | started | etcd01 | https://192.168.122.10:2380 | https://192.168.122.10:2379 |

+------------------+---------+--------+-----------------------------+-----------------------------+

[root@master01 ~]# export ETCDCTL_API=2
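With the v3 API the TLS options have different names from the v2 ones shown earlier: --cacert, --cert, and --key replace --ca-file, --cert-file, and --key-file. The sketch below only assembles and prints a v3 health-check command for review, so it can be read without a running cluster; etcdctl_v3_health_cmd is a hypothetical helper, and the certificate file names are the ones this guide places in /opt/etcd/ssl.

```shell
# etcdctl_v3_health_cmd SSL_DIR ENDPOINTS: print an etcdctl v3 command line
# that checks the health of every listed endpoint over TLS.
# (Hypothetical helper; it prints the command and does not execute it.)
etcdctl_v3_health_cmd() {
    local ssl_dir=$1 endpoints=$2
    echo "ETCDCTL_API=3 etcdctl --cacert=${ssl_dir}/ca.pem --cert=${ssl_dir}/server.pem --key=${ssl_dir}/server-key.pem --endpoints=${endpoints} endpoint health"
}

etcdctl_v3_health_cmd /opt/etcd/ssl \
    "https://192.168.122.10:2379,https://192.168.122.11:2379,https://192.168.122.12:2379"
```

Copying the printed line into a shell on master01 should report each endpoint separately, whereas the status command above queries only the local 127.0.0.1:2379 listener.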

3. Deploy the Docker engine (all worker nodes)

node01

[root@node01 ~]# yum install -y yum-utils device-mapper-persistent-data lvm2

[root@node01 ~]# yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

[root@node01 ~]# yum install -y docker-ce docker-ce-cli containerd.io

[root@node01 ~]# systemctl enable --now docker

Created symlink from /etc/systemd/system/multi-user.target.wants/docker.service to /usr/lib/systemd/system/docker.service.

Repeat on node02.

4. Flannel network configuration

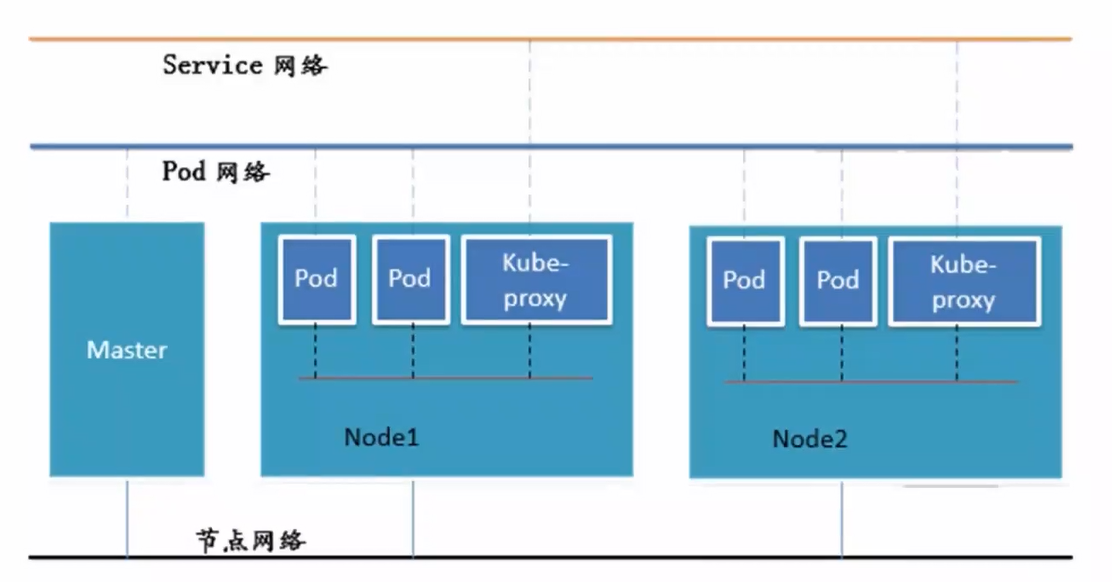

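4.1 Pod network communication in K8S

● Communication between containers in the same Pod

Containers in the same Pod (which never span hosts) share one network namespace. They behave as if they were on the same machine and can reach each other's ports through localhost.

● Communication between Pods on the same Node

Each Pod has its own real, cluster-wide IP address, and Pods on the same Node can talk to each other directly using those addresses. Pod1 and Pod2 both connect through veth pairs to the same docker0 bridge and sit in the same subnet, so they can communicate directly.

● Communication between Pods on different Nodes

Pod addresses are in the same subnet as docker0, while docker0 and the host NIC are in different subnets, and traffic between Nodes can only travel through the hosts' physical NICs.

For Pods on different Nodes to communicate, traffic has to be addressed and carried via the IP addresses of the hosts' physical NICs. Two conditions must hold: Pod IPs must not conflict, and each Pod IP must be associated with the IP of the Node it runs on, so that Pods on different Nodes can reach each other directly by their internal IP addresses.

4.2 Overlay Network

An overlay network is a virtual network layered on top of an underlying layer 2 or layer 3 network. Hosts in the overlay are connected through virtual link tunnels, similar to a VPN.

4.3 VXLAN

VXLAN encapsulates the original packet in UDP, adds an outer header with the IP/MAC addresses of the underlying network, and sends it over Ethernet. At the destination the tunnel endpoint decapsulates it and delivers the data to the target address.

4.4 Introduction to Flannel

Flannel gives the Docker containers created on different nodes of the cluster virtual IP addresses that are unique across the whole cluster.

Flannel is a kind of overlay network: it encapsulates the original packet inside another network packet for routing and forwarding. It currently supports three forwarding backends: UDP, VXLAN, and host-gw.

4.5 How Flannel works

A packet leaving the source container in a Pod on node01 is forwarded by the host's docker0 virtual interface to the flannel.1 virtual interface; the flanneld service listens on the other end of flannel.1.

Flannel maintains a routing table between nodes through etcd. The flanneld service on the source host node01 encapsulates the original data in UDP and, based on its routing table, sends it through the physical NIC to the flanneld service on the destination node node02. There the packet is unpacked and enters the flannel.1 virtual interface of the destination node, is forwarded to that host's docker0 virtual interface, and is finally delivered by docker0 to the target container just like local container traffic.

4.6 What etcd provides for Flannel

Stores and manages the IP address ranges that Flannel can allocate

Lets Flannel watch the actual address of each Pod in etcd and build and maintain a Pod-to-node routing table in memory

4.7 Deploying Flannel

4.7.1 On the master01 node

Add the flannel network configuration by writing the allocated subnet range to etcd for flannel to use.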

[root@master01 ~]# cd /opt/etcd/ssl

[root@master01 ssl]# etcdctl \

> --ca-file=ca.pem \

> --cert-file=server.pem \

> --key-file=server-key.pem \

> --endpoints="https://192.168.122.10:2379,https://192.168.122.11:2379,https://192.168.122.12:2379" \

> set /coreos.com/network/config '{"Network":"172.17.0.0/16","Backend":{"Type":"vxlan"}}'

View what was written:

[root@master01 ssl]# etcdctl \

> --ca-file=ca.pem \

> --cert-file=server.pem \

> --key-file=server-key.pem \

> --endpoints="https://192.168.122.10:2379,https://192.168.122.11:2379,https://192.168.122.12:2379" \

> get /coreos.com/network/config

{"Network":"172.17.0.0/16","Backend":{"Type":"vxlan"}}

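set: assigns a value to a key

set /coreos.com/network/config: adds a network configuration record, which flannel uses to allocate a virtual IP range to Docker on each node

get: retrieves the network configuration record; no further arguments are needed

Network: the Flannel address pool

Backend: how packets are forwarded. The default is udp; the vxlan backend performs better than the default udp.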
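The Network value is a /16 pool, and the subnet.env output later in this section shows each node receiving a /24 out of it (for example 172.17.54.1/24). A short sketch of how many node subnets such a pool can hold; subnet_count is a hypothetical helper, and the /24 per-node length is taken from that output rather than from any setting written to etcd here.

```shell
# subnet_count POOL_PREFIX NODE_PREFIX: number of /NODE_PREFIX subnets that
# fit into one /POOL_PREFIX pool, which is 2^(NODE_PREFIX - POOL_PREFIX).
subnet_count() {
    echo $(( 1 << ($2 - $1) ))
}

subnet_count 16 24   # → 256
```

So 172.17.0.0/16 with /24 node subnets leaves room for up to 256 nodes before the pool is exhausted.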

4.7.2 On all worker nodes (node01 as the example)

4.7.2.1 Install flannel

[root@node01 ~]# cd /opt

[root@node01 opt]# rz -E

# Upload the flannel package flannel-v0.10.0-linux-amd64.tar.gz to /opt

rz waiting to receive.

[root@node01 opt]# tar zxvf flannel-v0.10.0-linux-amd64.tar.gz

flanneld

# flanneld is the main executable

mk-docker-opts.sh

# mk-docker-opts.sh generates the Docker startup options

README.md

4.7.2.2 Write the flannel.sh script

[root@node01 opt]# cat flannel.sh

#!/bin/bash

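# Define the etcd cluster endpoints (IP addresses and client port 2379)

# ${var:-string}: if var is unset or empty, the expansion yields string; otherwise it yields the value of var. Here 1 refers to the positional parameter $1.

ETCD_ENDPOINTS=${1:-"http://127.0.0.1:2379"}

# Create the flanneld configuration file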

cat > /opt/kubernetes/cfg/flanneld <<EOF

FLANNEL_OPTIONS="--etcd-endpoints=${ETCD_ENDPOINTS} \\

-etcd-cafile=/opt/etcd/ssl/ca.pem \\

-etcd-certfile=/opt/etcd/ssl/server.pem \\

-etcd-keyfile=/opt/etcd/ssl/server-key.pem"

EOF

# flanneld should properly use an etcd client TLS certificate; this setup uses the same set of certificates throughout.

# Create the flanneld.service unit file

cat > /usr/lib/systemd/system/flanneld.service <<EOF

[Unit]

Description=Flanneld overlay address etcd agent

After=network-online.target network.target

Before=docker.service

[Service]

Type=notify

EnvironmentFile=/opt/kubernetes/cfg/flanneld

ExecStart=/opt/kubernetes/bin/flanneld --ip-masq \$FLANNEL_OPTIONS

ExecStartPost=/opt/kubernetes/bin/mk-docker-opts.sh -k DOCKER_NETWORK_OPTIONS -d /run/flannel/subnet.env

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

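# After flanneld starts, the mk-docker-opts.sh script generates the Docker network options

# mk-docker-opts.sh -k DOCKER_NETWORK_OPTIONS: sets the key for the combined options to the variable DOCKER_NETWORK_OPTIONS, which Docker uses at startup

# mk-docker-opts.sh -d /run/flannel/subnet.env: sets the path of the generated Docker options file, which Docker reads at startup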

systemctl daemon-reload

systemctl enable flanneld

systemctl restart flanneld
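flannel.sh relies on the ${1:-default} expansion to fall back to a local, non-TLS endpoint when no argument is given. A minimal sketch of that behavior in isolation; show_endpoints is a hypothetical function used only to demonstrate the expansion.

```shell
# show_endpoints [ENDPOINTS]: print the argument, or the same default that
# flannel.sh uses when the argument is missing or empty.
show_endpoints() {
    local endpoints=${1:-"http://127.0.0.1:2379"}
    echo "$endpoints"
}

show_endpoints                                  # prints the default
show_endpoints ""                               # empty also falls back to the default
show_endpoints "https://192.168.122.10:2379"    # prints the supplied value
```

Because of the colon in :-, an empty string is treated like a missing argument; ${1-default} without the colon would keep the empty value.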

4.7.2.3 Create the kubernetes working directory

[root@node01 opt]# mkdir -p /opt/kubernetes/{cfg,bin,ssl}

[root@node01 opt]# cd /opt

[root@node01 opt]# mv mk-docker-opts.sh flanneld /opt/kubernetes/bin/

4.7.2.4 Start the flannel service to enable the flannel network

[root@node01 opt]# chmod +x flannel.sh

[root@node01 opt]# ./flannel.sh https://192.168.122.10:2379,https://192.168.122.11:2379,https://192.168.122.12:2379

[root@node01 opt]# systemctl status flanneld.service

● flanneld.service - Flanneld overlay address etcd agent

Loaded: loaded (/usr/lib/systemd/system/flanneld.service; enabled; vendor preset: disabled)

Active: active (running) since 三 2021-10-27 19:38:31 CST; 10s ago

......

After flannel starts it generates /run/flannel/subnet.env, a file of Docker network options that Docker needs in order to communicate over flannel.

node01

[root@node01 opt]# cat /run/flannel/subnet.env

DOCKER_OPT_BIP="--bip=172.17.54.1/24"

DOCKER_OPT_IPMASQ="--ip-masq=false"

DOCKER_OPT_MTU="--mtu=1450"

DOCKER_NETWORK_OPTIONS=" --bip=172.17.54.1/24 --ip-masq=false --mtu=1450"

[root@node01 opt]# ifconfig

docker0: flags=4099<UP,BROADCAST,MULTICAST> mtu 1500

inet 172.17.0.1 netmask 255.255.0.0 broadcast 172.17.255.255

ether 02:42:8b:ec:a9:19 txqueuelen 0 (Ethernet)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

......

flannel.1: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450

inet 172.17.54.0 netmask 255.255.255.255 broadcast 0.0.0.0

inet6 fe80::ec8b:f9ff:fe2b:c8f8 prefixlen 64 scopeid 0x20<link>

ether ee:8b:f9:2b:c8:f8 txqueuelen 0 (Ethernet)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 26 overruns 0 carrier 0 collisions 0

......

node02

[root@node02 opt]# cat /run/flannel/subnet.env

DOCKER_OPT_BIP="--bip=172.17.97.1/24"

DOCKER_OPT_IPMASQ="--ip-masq=false"

DOCKER_OPT_MTU="--mtu=1450"

DOCKER_NETWORK_OPTIONS=" --bip=172.17.97.1/24 --ip-masq=false --mtu=1450"

[root@node02 opt]# ifconfig

docker0: flags=4099<UP,BROADCAST,MULTICAST> mtu 1500

inet 172.17.0.1 netmask 255.255.0.0 broadcast 172.17.255.255

ether 02:42:0f:e6:9d:50 txqueuelen 0 (Ethernet)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

......

flannel.1: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450

inet 172.17.97.0 netmask 255.255.255.255 broadcast 0.0.0.0

inet6 fe80::d821:a6ff:fef7:a4bf prefixlen 64 scopeid 0x20<link>

ether da:21:a6:f7:a4:bf txqueuelen 0 (Ethernet)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 27 overruns 0 carrier 0 collisions 0

......

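--bip: the address and subnet of the docker0 bridge, set when Docker starts

--ip-masq: ip-masq=false turns off Docker's SNAT masquerading

--mtu=1450: the MTU leaves 50 bytes for the overhead of the outer VXLAN encapsulation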
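The 50 bytes come from the headers that VXLAN adds around each inner frame. As a sketch, assuming an IPv4 underlay without IP options and a physical MTU of 1500, the hypothetical function below subtracts the outer IPv4 header (20), the UDP header (8), the VXLAN header (8), and the inner Ethernet header (14).

```shell
# vxlan_inner_mtu OUTER_MTU: largest inner IP packet that fits in one
# VXLAN-encapsulated packet on a link with the given MTU.
# Assumes an IPv4 underlay with no IP options. (Hypothetical helper.)
vxlan_inner_mtu() {
    local outer_mtu=$1
    local outer_ip=20 udp=8 vxlan=8 inner_eth=14
    echo $(( outer_mtu - outer_ip - udp - vxlan - inner_eth ))
}

vxlan_inner_mtu 1500   # → 1450
```

On an underlay with a different MTU, the same subtraction gives the value to expect in DOCKER_OPT_MTU.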

4.7.2.5 Change the docker0 subnet to match flannel

Add the Docker environment file:

[root@node01 opt]# vim /lib/systemd/system/docker.service

# Line 13: insert the environment file

EnvironmentFile=/run/flannel/subnet.env

# Line 14: add the $DOCKER_NETWORK_OPTIONS variable

ExecStart=/usr/bin/dockerd $DOCKER_NETWORK_OPTIONS -H fd:// --containerd=/run/containerd/containerd.sock

[root@node01 opt]# systemctl daemon-reload

[root@node01 opt]# systemctl restart docker
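After the restart, docker0 should carry the address that flannel wrote to subnet.env. One way to read that expected value is sketched below; flannel_bip is a hypothetical helper, and the example runs against a scratch copy of the node01 file shown earlier so that it works without flannel installed.

```shell
# flannel_bip FILE: print the bridge address from a flannel subnet.env file.
# The file is sourced in a subshell so its variables do not leak, and the
# "--bip=" prefix is stripped from DOCKER_OPT_BIP. (Hypothetical helper.)
flannel_bip() {
    ( . "$1" && echo "${DOCKER_OPT_BIP#--bip=}" )
}

# Scratch copy of the subnet.env content shown for node01.
env_file=$(mktemp)
cat > "$env_file" <<'EOF'
DOCKER_OPT_BIP="--bip=172.17.54.1/24"
DOCKER_OPT_IPMASQ="--ip-masq=false"
DOCKER_OPT_MTU="--mtu=1450"
DOCKER_NETWORK_OPTIONS=" --bip=172.17.54.1/24 --ip-masq=false --mtu=1450"
EOF

flannel_bip "$env_file"
rm -f "$env_file"
```

On a real node, flannel_bip /run/flannel/subnet.env can be compared with the inet address that ifconfig reports for docker0.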

node01

[root@node01 opt]# ifconfig

docker0: flags=4099<UP,BROADCAST,MULTICAST> mtu 1500

inet 172.17.54.1 netmask 255.255.255.0 broadcast 172.17.54.255

ether 02:42:8b:ec:a9:19 txqueuelen 0 (Ethernet)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

......

flannel.1: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450

inet 172.17.54.0 netmask 255.255.255.255 broadcast 0.0.0.0

inet6 fe80::ec8b:f9ff:fe2b:c8f8 prefixlen 64 scopeid 0x20<link>

ether ee:8b:f9:2b:c8:f8 txqueuelen 0 (Ethernet)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 26 overruns 0 carrier 0 collisions 0

......

node02

[root@node02 opt]# ifconfig

docker0: flags=4099<UP,BROADCAST,MULTICAST> mtu 1500

inet 172.17.97.1 netmask 255.255.255.0 broadcast 172.17.97.255

ether 02:42:0f:e6:9d:50 txqueuelen 0 (Ethernet)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

......

flannel.1: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450

inet 172.17.97.0 netmask 255.255.255.255 broadcast 0.0.0.0

inet6 fe80::d821:a6ff:fef7:a4bf prefixlen 64 scopeid 0x20<link>

ether da:21:a6:f7:a4:bf txqueuelen 0 (Ethernet)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 27 overruns 0 carrier 0 collisions 0

......

4.7.2.6 Cross-node container communication

node01

[root@node01 opt]# docker run -itd centos:7 bash

[root@node01 opt]# docker ps -a

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

2cec8cb71481 centos:7 "bash" 3 minutes ago Up 3 minutes sleepy_dubinsky

[root@node01 opt]# docker exec -it 2cec8cb71481 bash

[root@2cec8cb71481 /]# ifconfig

bash: ifconfig: command not found

[root@2cec8cb71481 /]# yum install -y net-tools

[root@2cec8cb71481 /]# ifconfig

eth0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450

inet 172.17.54.2 netmask 255.255.255.0 broadcast 172.17.54.255

ether 02:42:ac:11:36:02 txqueuelen 0 (Ethernet)

RX packets 26944 bytes 20616702 (19.6 MiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 13503 bytes 732575 (715.4 KiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

......

node02

[root@node02 opt]# docker run -itd centos:7 bash

[root@node02 opt]# docker ps -a

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

2a6e09908c6c centos:7 "bash" 4 minutes ago Up 4 minutes nifty_poincare

[root@node02 opt]# docker exec -it 2a6e09908c6c bash

[root@2a6e09908c6c /]# yum install -y net-tools

[root@2a6e09908c6c /]# ifconfig

eth0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450

inet 172.17.97.2 netmask 255.255.255.0 broadcast 172.17.97.255

ether 02:42:ac:11:61:02 txqueuelen 0 (Ethernet)

RX packets 26870 bytes 20613730 (19.6 MiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 12804 bytes 694756 (678.4 KiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

......

[root@2a6e09908c6c /]# ping -I 172.17.97.2 172.17.54.2

# Ping the container on the other host (node01) from this container's eth0 address

PING 172.17.54.2 (172.17.54.2) from 172.17.97.2 : 56(84) bytes of data.

64 bytes from 172.17.54.2: icmp_seq=1 ttl=62 time=0.350 ms

64 bytes from 172.17.54.2: icmp_seq=2 ttl=62 time=0.276 ms

64 bytes from 172.17.54.2: icmp_seq=3 ttl=62 time=0.342 ms

node01

[root@2cec8cb71481 /]# ping -I 172.17.54.2 172.17.97.2

PING 172.17.97.2 (172.17.97.2) from 172.17.54.2 : 56(84) bytes of data.

64 bytes from 172.17.97.2: icmp_seq=1 ttl=62 time=0.415 ms

64 bytes from 172.17.97.2: icmp_seq=2 ttl=62 time=0.316 ms

5. Deploy the master components (on master01)

5.1 Write apiserver.sh, scheduler.sh, and controller-manager.sh

5.1.1 apiserver.sh

[root@master01 ~]# cd /opt/k8s

[root@master01 k8s]# cat apiserver.sh

#!/bin/bash

#example: apiserver.sh 192.168.122.10 https://192.168.122.10:2379,https://192.168.122.11:2379,https://192.168.122.12:2379

# Create the kube-apiserver options file

MASTER_ADDRESS=$1

ETCD_SERVERS=$2

cat >/opt/kubernetes/cfg/kube-apiserver <<EOF

KUBE_APISERVER_OPTS="--logtostderr=true \\

--v=4 \\

--etcd-servers=${ETCD_SERVERS} \\

--bind-address=${MASTER_ADDRESS} \\

--secure-port=6443 \\

--advertise-address=${MASTER_ADDRESS} \\

--allow-privileged=true \\

--service-cluster-ip-range=10.0.0.0/24 \\

--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,ResourceQuota,NodeRestriction \\

--authorization-mode=RBAC,Node \\

--kubelet-https=true \\

--enable-bootstrap-token-auth \\

--token-auth-file=/opt/kubernetes/cfg/token.csv \\

--service-node-port-range=30000-50000 \\

--tls-cert-file=/opt/kubernetes/ssl/apiserver.pem \\

--tls-private-key-file=/opt/kubernetes/ssl/apiserver-key.pem \\

--client-ca-file=/opt/kubernetes/ssl/ca.pem \\

--service-account-key-file=/opt/kubernetes/ssl/ca-key.pem \\

--etcd-cafile=/opt/etcd/ssl/ca.pem \\

--etcd-certfile=/opt/etcd/ssl/server.pem \\

--etcd-keyfile=/opt/etcd/ssl/server-key.pem"

EOF

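# --logtostderr=true: log to standard error instead of to files
# --v=4: log verbosity; 4 is debug-level detail
# --etcd-servers: comma-separated list of etcd servers (format: scheme://ip:port)
# --bind-address: listen address of the HTTPS secure port, default 0.0.0.0
# --secure-port: HTTPS secure port, default 6443
# --advertise-address: IP address on which the API server is advertised to the rest of the cluster; it must be reachable from the other nodes
# --allow-privileged=true: allow privileged containers, default false
# --service-cluster-ip-range: the Service cluster IP range
# --enable-admission-plugins: the Kubernetes admission control mechanism. The plugins take effect in turn, and ServiceAccount must be included. Admission control runs after authentication and authorization as the last link in the request chain, mutating and validating requests for API objects
# --authorization-mode: use the RBAC and Node authorization modes on the secure port and reject unauthorized requests; the default is AlwaysAllow. RBAC ties users to permissions through roles. Node authorization is a special-purpose mode for API requests made by kubelets: the user name and group are first checked to confirm the request comes from a cluster node, and only such requests are authorized in Node mode
# --kubelet-https=true: use HTTPS for kubelet connections, default true
# --enable-bootstrap-token-auth: enable Bootstrap Token authentication on the apiserver
# --token-auth-file=/opt/kubernetes/cfg/token.csv: path of the token authentication file
# --service-node-port-range: NodePort port range, default 30000-32767

# Create the kube-apiserver.service unit file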

cat >/usr/lib/systemd/system/kube-apiserver.service <<EOF

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/opt/kubernetes/cfg/kube-apiserver

ExecStart=/opt/kubernetes/bin/kube-apiserver \$KUBE_APISERVER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable kube-apiserver

systemctl restart kube-apiserver
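The apiserver assigns the first address of --service-cluster-ip-range to the built-in kubernetes Service, which is why 10.0.0.1 is listed in the hosts of the apiserver certificate later in this guide. A small sketch of that derivation; first_service_ip is a hypothetical helper and assumes an IPv4 CIDR whose network address ends in an octet below 255.

```shell
# first_service_ip CIDR: print the first usable address of an IPv4 range,
# i.e. the network address with its last octet increased by one.
# (Hypothetical helper; assumes the last octet of the network address < 255.)
first_service_ip() {
    local net=${1%/*}            # drop the "/prefix" part
    local head=${net%.*}         # first three octets
    local last=${net##*.}        # last octet
    echo "${head}.$(( last + 1 ))"
}

first_service_ip 10.0.0.0/24   # → 10.0.0.1
```

If the Service range is changed in apiserver.sh, the matching address needs to be in the certificate hosts as well.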

5.1.2 Write scheduler.sh

[root@master01 k8s]# cat scheduler.sh

#!/bin/bash

# Create the kube-scheduler options file

MASTER_ADDRESS=$1

cat >/opt/kubernetes/cfg/kube-scheduler <<EOF

KUBE_SCHEDULER_OPTS="--logtostderr=true \\

--v=4 \\

--master=${MASTER_ADDRESS}:8080 \\

--leader-elect=true"

EOF

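# --master: the apiserver address and port 8080 that the scheduler connects to
# --leader-elect=true: enable leader election

# How leader election works for Controller-Manager and Scheduler: etcd stores all state of the cluster, which involves reads, writes, and replication between etcd members and demands strict consistency, so etcd uses the more complex Raft algorithm to elect the leader that commits data.
# The apiserver is the cluster entry point and a stateless web server, so requests are simply load-balanced across apiserver instances and no election is needed.
# Controller-Manager and Scheduler are task-type components. The controllers built into controller-manager watch the apiserver for the latest object changes and reconcile actual state with desired state, and the scheduler watches for Pods not yet bound to a node and picks nodes for them. Running several of these at once is unnecessary, so controller-manager and scheduler do need leader election. The logic differs from etcd's: it only has to pick one leader among the controller-manager or scheduler instances to do the work, with no data consistency or synchronization between them to consider.

# Create the kube-scheduler.service unit file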

cat >/usr/lib/systemd/system/kube-scheduler.service <<EOF

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/opt/kubernetes/cfg/kube-scheduler

ExecStart=/opt/kubernetes/bin/kube-scheduler \$KUBE_SCHEDULER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable kube-scheduler

systemctl restart kube-scheduler

5.1.3 Write controller-manager.sh

[root@master01 k8s]# cat controller-manager.sh

#!/bin/bash

# Create the kube-controller-manager options file

MASTER_ADDRESS=$1

cat >/opt/kubernetes/cfg/kube-controller-manager <<EOF

KUBE_CONTROLLER_MANAGER_OPTS="--logtostderr=true \\

--v=4 \\

--master=${MASTER_ADDRESS}:8080 \\

--leader-elect=true \\

--address=127.0.0.1 \\

--service-cluster-ip-range=10.0.0.0/24 \\

--cluster-name=kubernetes \\

--cluster-signing-cert-file=/opt/kubernetes/ssl/ca.pem \\

--cluster-signing-key-file=/opt/kubernetes/ssl/ca-key.pem \\

--root-ca-file=/opt/kubernetes/ssl/ca.pem \\

--service-account-private-key-file=/opt/kubernetes/ssl/ca-key.pem \\

--experimental-cluster-signing-duration=87600h0m0s"

EOF

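# --cluster-name=kubernetes: cluster name; matches the CN in the CA certificate

# --cluster-signing-cert-file: CA root certificate used to sign the certificates issued during TLS bootstrapping

# --root-ca-file: path of the root CA certificate used to verify the kube-apiserver certificate; only when this flag is set is the CA certificate placed in the ServiceAccount data mounted into Pod containers

# --experimental-cluster-signing-duration: validity of certificates signed during TLS bootstrapping, set here to 10 years (the default is 1 year)

# Create the kube-controller-manager.service systemd unit file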

cat >/usr/lib/systemd/system/kube-controller-manager.service <<EOF

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=-/opt/kubernetes/cfg/kube-controller-manager

ExecStart=/opt/kubernetes/bin/kube-controller-manager \$KUBE_CONTROLLER_MANAGER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable kube-controller-manager

systemctl restart kube-controller-manager
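The signing duration in controller-manager.sh is written in hours, which makes the "10 years" claim easy to misread. A minimal check of the arithmetic, assuming 365-day years; `hours_to_years` is a helper name invented for this example.

```shell
#!/bin/bash
# Convert a duration in hours to whole 365-day years (integer division).
hours_to_years() {
  local hours=$1
  echo $(( hours / (24 * 365) ))
}

hours_to_years 8760    # default signing duration, prints 1
hours_to_years 87600   # value used in controller-manager.sh, prints 10
```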

5.1.4 Write k8s-cert.sh

[root@master01 k8s]# cat k8s-cert.sh

#!/bin/bash

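# Configure the signing policy so the CA knows what kinds of certificates it may issue; it is used with the root certificate that signs the other components' certificates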

cat > ca-config.json <<EOF

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"kubernetes": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

EOF

# Generate the CA certificate and private key (the root certificate and key)

cat > ca-csr.json <<EOF

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Beijing",

"ST": "Beijing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

#-----------------------

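# Generate the apiserver certificate and private key (used when the apiserver talks to other k8s components)

# hosts must list every IP that may serve as an apiserver endpoint, including the VIP that keepalived will use later:

# 10.0.0.1 (service), 127.0.0.1 (local), 192.168.122.10 (master01), 192.168.122.20 (master02),

# 192.168.122.100 (VIP, used later by keepalived), 192.168.122.13 (load balancer01, master), 192.168.122.14 (load balancer02, backup)

# JSON does not allow comments, so these notes are kept outside the heredoc

cat > apiserver-csr.json <<EOF

{

"CN": "kubernetes",

"hosts": [

"10.0.0.1",

"127.0.0.1",

"192.168.122.10",

"192.168.122.20",

"192.168.122.100",

"192.168.122.13",

"192.168.122.14",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],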

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes apiserver-csr.json | cfssljson -bare apiserver

#-----------------------

# Generate the certificate and private key for kubectl, with admin privileges

cat > admin-csr.json <<EOF

{

"CN": "admin",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "system:masters",

"OU": "System"

}

]

}

EOF

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes admin-csr.json | cfssljson -bare admin

#-----------------------

# Generate the certificate and private key for kube-proxy

cat > kube-proxy-csr.json <<EOF

{

"CN": "system:kube-proxy",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

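5.1.5 Make the scripts above executable

[root@master01 k8s]# chmod +x *.sh

5.2 Create the kubernetes working directory

[root@master01 k8s]# mkdir -p /opt/kubernetes/{cfg,bin,ssl}

5.3 Generate the CA certificate and the component certificates and private keys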

[root@master01 k8s]# mkdir /opt/k8s/k8s-cert

[root@master01 k8s]# mv /opt/k8s/k8s-cert.sh /opt/k8s/k8s-cert

[root@master01 k8s]# cd !$

cd /opt/k8s/k8s-cert

[root@master01 k8s-cert]# ./k8s-cert.sh

# Generates the CA certificate and the component certificates and private keys

[root@master01 k8s-cert]# ls

admin.csr admin.pem apiserver-key.pem ca.csr ca.pem kube-proxy-csr.json

admin-csr.json apiserver.csr apiserver.pem ca-csr.json k8s-cert.sh kube-proxy-key.pem

admin-key.pem apiserver-csr.json ca-config.json ca-key.pem kube-proxy.csr kube-proxy.pem

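controller-manager and kube-scheduler are configured to call only the apiserver on the same machine, over 127.0.0.1:8080, so no certificates need to be issued for them

5.4 Copy the CA certificate and the apiserver certificate and private key into the ssl subdirectory of the kubernetes working directory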

[root@master01 k8s-cert]# ls *.pem

admin-key.pem admin.pem apiserver-key.pem apiserver.pem ca-key.pem ca.pem kube-proxy-key.pem kube-proxy.pem

[root@master01 k8s-cert]# cp ca*pem apiserver*pem /opt/kubernetes/ssl/
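Since every later step depends on the files produced by k8s-cert.sh, it can help to confirm that none is missing or empty before moving on. The following is a minimal sketch; the function name `check_cert_files` is hypothetical, and the file list is taken from the `ls *.pem` output above.

```shell
#!/bin/bash
# Report any expected certificate or key file that is missing or empty
# in the given directory. Returns 1 if at least one file is absent.
check_cert_files() {
  local dir=$1 missing=0 name
  for name in ca ca-key apiserver apiserver-key admin admin-key kube-proxy kube-proxy-key; do
    if [ ! -s "$dir/$name.pem" ]; then
      echo "missing: $dir/$name.pem" >&2
      missing=1
    fi
  done
  return "$missing"
}
```

For example, `check_cert_files /opt/k8s/k8s-cert && echo "all certificates present"` could be run right after the script finishes.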

5.5 Download or upload the kubernetes package to /opt/k8s and extract it

[root@master01 k8s-cert]# cd /opt/k8s/

[root@master01 k8s]# rz -E

# Upload the k8s package kubernetes-server-linux-amd64.tar.gz

rz waiting to receive.

[root@master01 k8s]# tar zxvf kubernetes-server-linux-amd64.tar.gz

5.6 Copy the key master component binaries into the bin subdirectory of the kubernetes working directory

[root@master01 k8s]# cd /opt/k8s/kubernetes/server/bin/

[root@master01 bin]# ls

apiextensions-apiserver kubeadm kube-controller-manager.docker_tag kube-proxy.docker_tag mounter

cloud-controller-manager kube-apiserver kube-controller-manager.tar kube-proxy.tar

cloud-controller-manager.docker_tag kube-apiserver.docker_tag kubectl kube-scheduler

cloud-controller-manager.tar kube-apiserver.tar kubelet kube-scheduler.docker_tag

hyperkube kube-controller-manager kube-proxy kube-scheduler.tar

[root@master01 bin]# cp kube-apiserver kubectl kube-controller-manager kube-scheduler /opt/kubernetes/bin/

[root@master01 bin]# ln -s /opt/kubernetes/bin/* /usr/local/bin/

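5.7 Create the bootstrap token authentication file

The apiserver reads this file at startup, which effectively creates this user in the cluster; the user can then be granted permissions through RBAC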

[root@master01 bin]# cd /opt/k8s/

[root@master01 k8s]# vim token.sh

#!/bin/bash

# Take the first 16 bytes of random data, print them in hexadecimal, and remove the spaces

BOOTSTRAP_TOKEN=$(head -c 16 /dev/urandom | od -An -t x | tr -d ' ')

# Generate token.csv in the format: token, user name, UID, user group

cat > /opt/kubernetes/cfg/token.csv <<EOF

${BOOTSTRAP_TOKEN},kubelet-bootstrap,10001,"system:kubelet-bootstrap"

EOF

[root@master01 k8s]# chmod +x token.sh

[root@master01 k8s]# ./token.sh

[root@master01 k8s]# cat /opt/kubernetes/cfg/token.csv

87fe12db8fc131d8e43561e3d82b9878,kubelet-bootstrap,10001,"system:kubelet-bootstrap"
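A malformed token.csv is hard to diagnose once the apiserver is running, so a quick format check can be worthwhile. This is a sketch under the assumption that the file holds one line of the form token, user, UID, quoted group as described above; `new_bootstrap_token` and `valid_token_line` are names invented here, and `od -t x1` is used instead of `-t x` so the bytes are printed one at a time.

```shell
#!/bin/bash
# Generate a 32-character hex token from 16 random bytes.
new_bootstrap_token() {
  head -c 16 /dev/urandom | od -An -t x1 | tr -d ' \n'
}

# Succeed only if the first line of the file has the form
# <32 hex chars>,<user>,<uid>,"<group>".
valid_token_line() {
  head -n 1 "$1" | grep -Eq '^[0-9a-f]{32},[^,]+,[0-9]+,"[^"]+"$'
}
```

`valid_token_line /opt/kubernetes/cfg/token.csv || echo "token.csv looks malformed"` would be one way to use it before starting the apiserver.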

5.8 With the binaries, token and certificates in place, start the apiserver service

[root@master01 k8s]# cd /opt/k8s/

[root@master01 k8s]# ./apiserver.sh 192.168.122.10 https://192.168.122.10:2379,https://192.168.122.11:2379,https://192.168.122.12:2379

Created symlink from /etc/systemd/system/multi-user.target.wants/kube-apiserver.service to /usr/lib/systemd/system/kube-apiserver.service.

5.9 Checks

5.9.1 Check that the process started

[root@master01 k8s]# ps aux | grep kube-apiserver

root 3667 12.1 15.6 405152 318232 ? Ssl 15:08 0:06 /opt/kubernetes/bin/kube-apiserver --logtostderr=true --v=4 --etcd-servers=https://192.168.122.10:2379,https://192.168.122.11:2379,https://192.168.122.12:2379 --bind-address=192.168.122.10 --secure-port=6443 --advertise-address=192.168.122.10 --allow-privileged=true --service-cluster-ip-range=10.0.0.0/24 --enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,ResourceQuota,NodeRestriction --authorization-mode=RBAC,Node --kubelet-https=true --enable-bootstrap-token-auth --token-auth-file=/opt/kubernetes/cfg/token.csv --service-node-port-range=30000-50000 --tls-cert-file=/opt/kubernetes/ssl/apiserver.pem --tls-private-key-file=/opt/kubernetes/ssl/apiserver-key.pem --client-ca-file=/opt/kubernetes/ssl/ca.pem --service-account-key-file=/opt/kubernetes/ssl/ca-key.pem --etcd-cafile=/opt/etcd/ssl/ca.pem --etcd-certfile=/opt/etcd/ssl/server.pem --etcd-keyfile=/opt/etcd/ssl/server-key.pem

root 3686 0.0 0.0 112676 984 pts/0 S+ 15:09 0:00 grep --color=auto kube-apiserver

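Kubernetes serves its API through the kube-apiserver process, which here runs on the single master node. In the version used in this guide (v1.12) it listens on two ports by default: the secure port 6443 and the local insecure port 8080. Later releases deprecated the insecure port and eventually removed it.

5.9.2 Check port 6443

The secure port 6443 accepts HTTPS requests and authenticates them, for example by token file or client certificate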

[root@master01 k8s]# netstat -natp | grep 6443

tcp 0 0 192.168.122.10:6443 0.0.0.0:* LISTEN 3667/kube-apiserver

tcp 0 0 192.168.122.10:6443 192.168.122.10:50550 ESTABLISHED 3667/kube-apiserver

tcp 0 0 192.168.122.10:50550 192.168.122.10:6443 ESTABLISHED 3667/kube-apiserver

5.9.3 Check port 8080

The local port 8080 accepts HTTP requests; requests on this port reach the API server without authentication or authorization

[root@master01 k8s]# netstat -natp | grep 8080

tcp 0 0 127.0.0.1:8080 0.0.0.0:* LISTEN 3667/kube-apiserver
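The two port checks above can be scripted so they return an exit status instead of relying on reading the output by eye. A minimal sketch, assuming the `netstat -natp` column layout shown above (local address in column 4, state in column 6); `is_listening` is a hypothetical helper name.

```shell
#!/bin/bash
# Read `netstat -natp` output on stdin and succeed if some socket is in
# LISTEN state on the given port. The port is compared against the end
# of the local address, including the colon, so 443 does not match 6443.
is_listening() {
  local port=$1
  awk -v p=":$port" '
    $6 == "LISTEN" && substr($4, length($4) - length(p) + 1) == p { found = 1 }
    END { exit !found }'
}
```

Usage would look like `netstat -natp | is_listening 6443 && echo "secure port is up"`.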

5.9.4 Check the version

The apiserver must be running normally, otherwise the server version cannot be queried

[root@master01 k8s]# kubectl version

Client Version: version.Info{Major:"1", Minor:"12", GitVersion:"v1.12.3", GitCommit:"435f92c719f279a3a67808c80521ea17d5715c66", GitTreeState:"clean", BuildDate:"2018-11-26T12:57:14Z", GoVersion:"go1.10.4", Compiler:"gc", Platform:"linux/amd64"}

Server Version: version.Info{Major:"1", Minor:"12", GitVersion:"v1.12.3", GitCommit:"435f92c719f279a3a67808c80521ea17d5715c66", GitTreeState:"clean", BuildDate:"2018-11-26T12:46:57Z", GoVersion:"go1.10.4", Compiler:"gc", Platform:"linux/amd64"}

5.10 Start the scheduler service

[root@master01 k8s]# ./scheduler.sh 127.0.0.1

Created symlink from /etc/systemd/system/multi-user.target.wants/kube-scheduler.service to /usr/lib/systemd/system/kube-scheduler.service.

[root@master01 k8s]# ps aux | grep kube-scheduler

root 3920 0.4 1.0 45616 20704 ? Ssl 15:22 0:00 /opt/kubernetes/bin/kube-scheduler --logtostderr=true --v=4 --master=127.0.0.1:8080 --leader-elect=true

root 3944 0.0 0.0 112676 984 pts/0 S+ 15:23 0:00 grep --color=auto kube-scheduler

5.11 Start the controller-manager service

[root@master01 k8s]# ./controller-manager.sh 127.0.0.1

Created symlink from /etc/systemd/system/multi-user.target.wants/kube-controller-manager.service to /usr/lib/systemd/system/kube-controller-manager.service.

[root@master01 k8s]# ps aux | grep controller-manager

root 4029 1.0 3.1 142844 64964 ? Ssl 15:24 0:01 /opt/kubernetes/bin/kube-controller-manager --logtostderr=true --v=4 --master=127.0.0.1:8080 --leader-elect=true --address=127.0.0.1 --service-cluster-ip-range=10.0.0.0/24 --cluster-name=kubernetes --cluster-signing-cert-file=/opt/kubernetes/ssl/ca.pem --cluster-signing-key-file=/opt/kubernetes/ssl/ca-key.pem --root-ca-file=/opt/kubernetes/ssl/ca.pem --service-account-private-key-file=/opt/kubernetes/ssl/ca-key.pem --experimental-cluster-signing-duration=87600h0m0s

root 4060 0.0 0.0 112676 980 pts/0 S+ 15:26 0:00 grep --color=auto controller-manager

5.12 Check the status of the master components

[root@master01 k8s]# kubectl get componentstatuses

NAME STATUS MESSAGE ERROR

controller-manager Healthy ok

scheduler Healthy ok

etcd-0 Healthy {"health":"true"}

etcd-2 Healthy {"health":"true"}

etcd-1 Healthy {"health":"true"}

or

[root@master01 k8s]# kubectl get cs

NAME STATUS MESSAGE ERROR

controller-manager Healthy ok

scheduler Healthy ok

etcd-1 Healthy {"health":"true"}

etcd-2 Healthy {"health":"true"}

etcd-0 Healthy {"health":"true"}
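When this check is run from a script, it is convenient to get just the names of components that are not healthy. The sketch below assumes the column layout of the `kubectl get cs` output shown above (name in column 1, status in column 2); `unhealthy_components` is a name chosen for this example.

```shell
#!/bin/bash
# Read `kubectl get componentstatuses` output on stdin, print the name of
# every component whose STATUS column is not "Healthy", and return 1 if
# any such component exists. The header line and blank lines are skipped.
unhealthy_components() {
  awk 'NR > 1 && NF > 0 && $2 != "Healthy" { print $1; bad = 1 }
       END { exit (bad ? 1 : 0) }'
}
```

For instance, `kubectl get cs | unhealthy_components || echo "some master components need attention"`.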

6. Deploy the Worker Node components

6.1 Copy kubelet and kube-proxy to the node machines (run on master01)

[root@master01 k8s]# cd /opt/k8s/kubernetes/server/bin

[root@master01 bin]# scp kubelet kube-proxy root@192.168.122.11:/opt/kubernetes/bin/

kubelet 100% 168MB 141.0MB/s 00:01

kube-proxy 100% 48MB 100.0MB/s 00:00

[root@master01 bin]# scp kubelet kube-proxy root@192.168.122.12:/opt/kubernetes/bin/

kubelet 100% 168MB 148.9MB/s 00:01

kube-proxy 100% 48MB 139.9MB/s 00:00

6.2 Write the scripts (run on node01)

6.2.1 Write proxy.sh

[root@node01 opt]# cat proxy.sh

#!/bin/bash

NODE_ADDRESS=$1

# Create the kube-proxy startup options file

cat >/opt/kubernetes/cfg/kube-proxy <<EOF

KUBE_PROXY_OPTS="--logtostderr=true \\

--v=4 \\

--hostname-override=${NODE_ADDRESS} \\

--cluster-cidr=172.17.0.0/16 \\

--proxy-mode=ipvs \\

--kubeconfig=/opt/kubernetes/cfg/kube-proxy.kubeconfig"

EOF

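# --hostname-override: must match the value given to kubelet; otherwise kube-proxy cannot find the Node after startup and creates no ipvs rules

# --cluster-cidr: the aggregate network range used by Pods, which is a different range from the Service cluster IP range set on the apiserver. kube-proxy uses --cluster-cidr to tell in-cluster traffic from external traffic: only when it is set does kube-proxy SNAT requests to Service IPs, that is, traffic from outside the Pod network is treated as external and is SNATed when it accesses a Service.

# --proxy-mode: use ipvs as the traffic forwarding mode

# --kubeconfig: the kubeconfig file used to connect to the apiserver

#----------------------

# Create the kube-proxy.service systemd unit file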

cat >/usr/lib/systemd/system/kube-proxy.service <<EOF

[Unit]

Description=Kubernetes Proxy

After=network.target

[Service]

EnvironmentFile=-/opt/kubernetes/cfg/kube-proxy

ExecStart=/opt/kubernetes/bin/kube-proxy \$KUBE_PROXY_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable kube-proxy

systemctl restart kube-proxy
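The `--cluster-cidr` note in proxy.sh comes down to one test: is a source address inside the Pod range or not. The sketch below shows that membership test in plain shell arithmetic. It is only an illustration of the idea, not how kube-proxy implements it, and `ip_to_int` and `ip_in_cidr` are names invented here.

```shell
#!/bin/bash
# Convert a dotted-quad IPv4 address to a 32-bit integer.
ip_to_int() {
  local IFS=.
  set -- $1
  echo $(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
}

# Succeed if the address ($1) lies inside the CIDR block ($2, e.g. 172.17.0.0/16).
ip_in_cidr() {
  local ip net bits mask
  ip=$(ip_to_int "$1")
  net=$(ip_to_int "${2%/*}")
  bits=${2#*/}
  mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
  [ $(( ip & mask )) -eq $(( net & mask )) ]
}
```

With the values used in this guide, `ip_in_cidr 172.17.54.3 172.17.0.0/16` succeeds (a Pod address), while `ip_in_cidr 10.0.0.1 172.17.0.0/16` fails because 10.0.0.1 belongs to the Service range 10.0.0.0/24.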

6.2.2 Write kubelet.sh

[root@node01 opt]# cat kubelet.sh

#!/bin/bash

NODE_ADDRESS=$1

DNS_SERVER_IP=${2:-"10.0.0.2"}

# Create the kubelet startup options file

cat >/opt/kubernetes/cfg/kubelet <<EOF

KUBELET_OPTS="--logtostderr=true \\

--v=4 \\

--hostname-override=${NODE_ADDRESS} \\

--kubeconfig=/opt/kubernetes/cfg/kubelet.kubeconfig \\

--bootstrap-kubeconfig=/opt/kubernetes/cfg/bootstrap.kubeconfig \\

--config=/opt/kubernetes/cfg/kubelet.config \\

--cert-dir=/opt/kubernetes/ssl \\

--pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google-containers/pause-amd64:3.0"

EOF

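# --hostname-override: hostname or IP address under which this kubelet node appears in the cluster; defaults to the machine's hostname. kube-proxy and kubelet must use exactly the same value

# --kubeconfig: location of kubelet.kubeconfig, which tells kubelet how to connect to the apiserver and contains the kubelet certificate; the file is generated on the node once the master has approved the request

# --bootstrap-kubeconfig: the bootstrap.kubeconfig file used to connect to the apiserver

# --config: path of the kubelet configuration file, loaded when kubelet starts

# --cert-dir: directory where the client certificate and key issued by the master are stored

# --pod-infra-container-image: image of the Pod infrastructure (pause) container. Every Pod starts one such container, which holds the network namespace shared by the Pod's containers, so the pause image must be available

#----------------------

# Create the kubelet configuration file (it is a YAML file: indent with spaces, never tabs; put a space after each colon; avoid trailing spaces at the end of a line)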

cat >/opt/kubernetes/cfg/kubelet.config <<EOF

kind: KubeletConfiguration

apiVersion: kubelet.config.k8s.io/v1beta1

address: ${NODE_ADDRESS}

port: 10250

readOnlyPort: 10255

cgroupDriver: cgroupfs

clusterDNS:

- ${DNS_SERVER_IP}

clusterDomain: cluster.local.

failSwapOn: false

authentication:

  anonymous:

    enabled: true

EOF

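# Note: when a command-line flag sets the same option as this configuration file (kubelet.config), the flag overrides the value in the file.

#----------------------

# Create the kubelet.service systemd unit file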

cat >/usr/lib/systemd/system/kubelet.service <<EOF

[Unit]

Description=Kubernetes Kubelet

After=docker.service

Requires=docker.service

[Service]

EnvironmentFile=/opt/kubernetes/cfg/kubelet

ExecStart=/opt/kubernetes/bin/kubelet \$KUBELET_OPTS

Restart=on-failure

KillMode=process

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable kubelet

systemctl restart kubelet
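The whitespace rules stated for kubelet.config (no tabs, no trailing spaces) are easy to break when the file is edited by hand. A small check along these lines could catch that before kubelet is restarted; `lint_yaml_whitespace` is a hypothetical helper, and it checks only those two rules, not YAML validity in general.

```shell
#!/bin/bash
# Print offending lines (with line numbers) and return 1 if the file
# contains a tab character or a line ending in a space.
lint_yaml_whitespace() {
  local file=$1 tab
  tab=$(printf '\t')
  if grep -n -e "$tab" -e ' $' "$file"; then
    return 1
  fi
  return 0
}
```

For example, `lint_yaml_whitespace /opt/kubernetes/cfg/kubelet.config` prints nothing and returns 0 when the file is clean.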

6.2.3 Make the scripts above executable

[root@node01 opt]# chmod +x *.sh

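6.3 On master01

6.3.1 Create the directory used to generate the kubelet configuration files

[root@master01 ~]# mkdir /opt/k8s/kubeconfig

6.3.2 Write kubeconfig.sh

kubeconfig.sh generates kubeconfig files. Each one contains cluster parameters (CA certificate, API server address), client parameters (the certificate and private key generated above) and context parameters (cluster name, user name). Kubernetes components such as kubectl and kube-proxy can be pointed at a different cluster by starting them with a different kubeconfig file, which tells them how to connect to the apiserver.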

[root@master01 ~]# cd !$

cd /opt/k8s/kubeconfig

[root@master01 kubeconfig]# cat kubeconfig.sh

#!/bin/bash

#example: kubeconfig 192.168.122.10 /opt/k8s/k8s-cert/

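# Create the bootstrap.kubeconfig file

# It embeds the token of the user in token.csv and the apiserver CA certificate. kubelet loads it on first start, uses the CA certificate to establish TLS with the apiserver, and presents the token as its identity when submitting a CSR to the apiserver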

BOOTSTRAP_TOKEN=$(awk -F ',' '{print $1}' /opt/kubernetes/cfg/token.csv)

APISERVER=$1

SSL_DIR=$2

export KUBE_APISERVER="https://$APISERVER:6443"

# Set the cluster parameters

kubectl config set-cluster kubernetes \

--certificate-authority=$SSL_DIR/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=bootstrap.kubeconfig

# --embed-certs=true: writes the ca.pem certificate into the generated bootstrap.kubeconfig file

# Set the client credentials; kubelet authenticates with the bootstrap token

kubectl config set-credentials kubelet-bootstrap \

--token=${BOOTSTRAP_TOKEN} \

--kubeconfig=bootstrap.kubeconfig

# Set the context parameters

kubectl config set-context default \

--cluster=kubernetes \

--user=kubelet-bootstrap \

--kubeconfig=bootstrap.kubeconfig

# Select the default context, completing the bootstrap.kubeconfig file

kubectl config use-context default --kubeconfig=bootstrap.kubeconfig

#----------------------

# Create the kube-proxy.kubeconfig file

# Set the cluster parameters

kubectl config set-cluster kubernetes \

--certificate-authority=$SSL_DIR/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=kube-proxy.kubeconfig

# Set the client credentials; kube-proxy authenticates with a TLS certificate

kubectl config set-credentials kube-proxy \

--client-certificate=$SSL_DIR/kube-proxy.pem \

--client-key=$SSL_DIR/kube-proxy-key.pem \

--embed-certs=true \

--kubeconfig=kube-proxy.kubeconfig

# Set the context parameters

kubectl config set-context default \

--cluster=kubernetes \

--user=kube-proxy \

--kubeconfig=kube-proxy.kubeconfig

# Select the default context, completing the kube-proxy.kubeconfig file

kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig

[root@master01 kubeconfig]# chmod +x kubeconfig.sh

6.3.3 Generate the kubelet configuration files

[root@master01 kubeconfig]# cd /opt/k8s/kubeconfig

[root@master01 kubeconfig]# chmod +x kubeconfig.sh

[root@master01 kubeconfig]# ./kubeconfig.sh 192.168.122.10 /opt/k8s/k8s-cert/

[root@master01 kubeconfig]# ls

bootstrap.kubeconfig kubeconfig.sh kube-proxy.kubeconfig

6.3.4 Copy bootstrap.kubeconfig and kube-proxy.kubeconfig to the node machines

[root@master01 kubeconfig]# cd /opt/k8s/kubeconfig

[root@master01 kubeconfig]# scp bootstrap.kubeconfig kube-proxy.kubeconfig root@192.168.122.11:/opt/kubernetes/cfg/

bootstrap.kubeconfig 100% 2168 4.4MB/s 00:00

kube-proxy.kubeconfig 100% 6274 7.7MB/s 00:00

[root@master01 kubeconfig]# scp bootstrap.kubeconfig kube-proxy.kubeconfig root@192.168.122.12:/opt/kubernetes/cfg/

bootstrap.kubeconfig 100% 2168 3.6MB/s 00:00

kube-proxy.kubeconfig 100% 6274 6.5MB/s 00:00

6.3.5 RBAC authorization and notes

Bind the preset user kubelet-bootstrap to the built-in ClusterRole system:node-bootstrapper so that it can submit CSRs

[root@master01 opt]# kubectl create clusterrolebinding kubelet-bootstrap --clusterrole=system:node-bootstrapper --user=kubelet-bootstrap

clusterrolebinding.rbac.authorization.k8s.io/kubelet-bootstrap created

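kubelet uses the TLS bootstrapping mechanism to register itself with kube-apiserver automatically, which is very useful when there are many nodes or when nodes are added by autoscaling later.

Once the master apiserver has TLS authentication enabled, the kubelet on each node must hold a valid certificate signed by the CA before it can talk to the apiserver, and signing those certificates by hand becomes tedious when there are many nodes. Kubernetes therefore introduced TLS bootstrapping to issue client certificates automatically: kubelet requests a certificate from the apiserver as a low-privilege user, and the certificate is signed dynamically on the cluster side.

On first start, kubelet loads the user token and the apiserver CA certificate from bootstrap.kubeconfig and submits its first CSR. The token is preset in token.csv on the apiserver node and identifies the kubelet-bootstrap user in the system:kubelet-bootstrap group. For that first CSR to succeed rather than being rejected by the apiserver, a ClusterRoleBinding must first bind the kubelet-bootstrap user to the built-in ClusterRole system:node-bootstrapper (visible through kubectl get clusterroles), which allows it to submit CSRs.

The certificates issued during TLS bootstrapping are actually signed by kube-controller-manager, so their validity period is controlled by that component through its --experimental-cluster-signing-duration flag. The default is 8760h0m0s (1 year); setting it to 87600h0m0s makes certificates signed during TLS bootstrapping valid for 10 years.

In other words, kubelet authenticates with the token on its first access to the API server; once the request is approved, the controller manager issues a certificate for kubelet, and all later access authenticates with that certificate.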

6.3.6 Check the cluster role

[root@master01 kubeconfig]# kubectl get clusterroles | grep system:node-bootstrapper

system:node-bootstrapper 70m

6.3.7 List the cluster role bindings

[root@master01 kubeconfig]# kubectl get clusterrolebinding

NAME AGE

cluster-admin 72m

kubelet-bootstrap 16m

system:aws-cloud-provider 72m

system:basic-user 72m

system:controller:attachdetach-controller 72m

system:controller:certificate-controller 72m

system:controller:clusterrole-aggregation-controller 72m

system:controller:cronjob-controller 72m

system:controller:daemon-set-controller 72m

system:controller:deployment-controller 72m

system:controller:disruption-controller 72m

system:controller:endpoint-controller 72m

system:controller:expand-controller 72m

system:controller:generic-garbage-collector 72m

system:controller:horizontal-pod-autoscaler 72m

system:controller:job-controller 72m

system:controller:namespace-controller 72m

system:controller:node-controller 72m

system:controller:persistent-volume-binder 72m

system:controller:pod-garbage-collector 72m

system:controller:pv-protection-controller 72m

system:controller:pvc-protection-controller 72m

system:controller:replicaset-controller 72m

system:controller:replication-controller 72m

system:controller:resourcequota-controller 72m

system:controller:route-controller 72m

system:controller:service-account-controller 72m

system:controller:service-controller 72m

system:controller:statefulset-controller 72m

system:controller:ttl-controller 72m

system:discovery 72m

system:kube-controller-manager 72m

system:kube-dns 72m

system:kube-scheduler 72m

system:node 72m

system:node-proxier 72m

system:volume-scheduler 72m

6.4 On node01

6.4.1 Start the kubelet service with kubelet.sh

[root@node01 opt]# cd /opt

[root@node01 opt]# chmod +x kubelet.sh

[root@node01 opt]# ./kubelet.sh 192.168.122.11

Created symlink from /etc/systemd/system/multi-user.target.wants/kubelet.service to /usr/lib/systemd/system/kubelet.service.

6.4.2 Check that the kubelet service started

[root@node01 opt]# ps aux | grep kubelet

root 83680 0.3 2.1 387336 42772 ? Ssl 17:33 0:00 /opt/kubernetes/bin/kubelet --logtostderr=true --v=4 --hostname-override= --kubeconfig=/opt/kubernetes/cfg/kubelet.kubeconfig --bootstrap-kubeconfig=/opt/kubernetes/cfg/bootstrap.kubeconfig --config=/opt/kubernetes/cfg/kubelet.config --cert-dir=/opt/kubernetes/ssl --pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google-containers/pause-amd64:3.0

root 83746 0.0 0.0 112676 976 pts/2 R+ 17:34 0:00 grep --color=auto kubelet

6.4.3 Look at the ssl directory

[root@node01 opt]# ls /opt/kubernetes/ssl/

kubelet-client.key.tmp kubelet.crt kubelet.key

No certificate has been generated yet, because the master has not approved the request

6.4 On master01

6.4.1 List the CSRs

[root@master01 opt]# kubectl get csr

NAME AGE REQUESTOR CONDITION

node-csr-NJKSDx9hIMMweZuDIvyRABq4mguRltOyzm0_hsSXpRg 3m31s kubelet-bootstrap Pending

There is a request from kubelet-bootstrap in the Pending state

6.4.2 Approve the CSR

[root@master01 opt]# kubectl certificate approve node-csr-NJKSDx9hIMMweZuDIvyRABq4mguRltOyzm0_hsSXpRg

certificatesigningrequest.certificates.k8s.io/node-csr-NJKSDx9hIMMweZuDIvyRABq4mguRltOyzm0_hsSXpRg approved

6.4.3 Check the CSR status again

[root@master01 opt]# kubectl get csr

NAME AGE REQUESTOR CONDITION

node-csr-NJKSDx9hIMMweZuDIvyRABq4mguRltOyzm0_hsSXpRg 6m21s kubelet-bootstrap Approved,Issued

Approved,Issued means the CSR has been approved and the certificate has been issued
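With more than a couple of nodes, copying CSR names by hand gets error-prone. The sketch below extracts the names of requests that are still Pending for a given requestor. It assumes the four-column `kubectl get csr` layout shown above (NAME, AGE, REQUESTOR, CONDITION), and `pending_csrs` is a name made up for this example.

```shell
#!/bin/bash
# Read `kubectl get csr` output on stdin and print the names of requests
# that were submitted by the given user and are still Pending.
pending_csrs() {
  local requestor=$1
  awk -v r="$requestor" 'NR > 1 && $3 == r && $4 == "Pending" { print $1 }'
}
```

One possible use is `kubectl get csr | pending_csrs kubelet-bootstrap` to review the list, then passing each name to `kubectl certificate approve`. Reviewing the names first is deliberate: approving a CSR admits that node into the cluster.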

6.4.4 Check the cluster node status

[root@master01 opt]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

node01 Ready <none> 2m30s v1.12.3

node01 has joined the cluster

6.5 On node01

6.5.1 The certificate and kubelet.kubeconfig have been generated automatically

[root@node01 opt]# ls /opt/kubernetes/cfg/kubelet.kubeconfig

/opt/kubernetes/cfg/kubelet.kubeconfig

[root@node01 opt]# ls /opt/kubernetes/ssl

kubelet-client-2021-10-28-17-39-42.pem kubelet-client-current.pem kubelet.crt kubelet.key

6.5.2 Load the ip_vs modules

[root@node01 opt]# for i in $(ls /usr/lib/modules/$(uname -r)/kernel/net/netfilter/ipvs|grep -o "^[^.]*");do echo $i; /sbin/modinfo -F filename $i > /dev/null 2>&1 && /sbin/modprobe $i;done

ip_vs_dh

ip_vs_ftp

ip_vs

ip_vs_lblc

ip_vs_lblcr

ip_vs_lc

ip_vs_nq

ip_vs_pe_sip

ip_vs_rr

ip_vs_sed

ip_vs_sh

ip_vs_wlc

ip_vs_wrr
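Because proxy.sh starts kube-proxy in ipvs mode, it can be useful to confirm that the modules are actually loaded before starting the service. The following is a sketch only: the list of required modules (`ip_vs`, `ip_vs_rr`, `ip_vs_wrr`, `ip_vs_sh`) is an assumption made for this example rather than something this guide states, and `missing_ipvs_modules` is a hypothetical name.

```shell
#!/bin/bash
# Read `lsmod` output on stdin and print each wanted module that is not
# loaded. Returns 1 if at least one module is missing.
missing_ipvs_modules() {
  awk -v want="ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh" '
    { loaded[$1] = 1 }
    END {
      n = split(want, mods, " ")
      for (i = 1; i <= n; i++)
        if (!(mods[i] in loaded)) { print mods[i]; bad = 1 }
      exit (bad ? 1 : 0)
    }'
}
```

It would typically be called as `lsmod | missing_ipvs_modules || echo "load the modules listed above first"`.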

6.5.3 Start the kube-proxy service with proxy.sh

[root@node01 opt]# cd /opt

[root@node01 opt]# chmod +x proxy.sh

[root@node01 opt]# ./proxy.sh 192.168.122.11

Created symlink from /etc/systemd/system/multi-user.target.wants/kube-proxy.service to /usr/lib/systemd/system/kube-proxy.service.

[root@node01 opt]# systemctl status kube-proxy.service

● kube-proxy.service - Kubernetes Proxy

Loaded: loaded (/usr/lib/systemd/system/kube-proxy.service; enabled; vendor preset: disabled)

Active: active (running) since 四 2021-10-28 19:09:15 CST; 35s ago

......

6.6 Deploy node02

6.6.1 Method 1

6.6.1.1 On node01

Copy kubelet.sh and proxy.sh to node02

[root@node01 opt]# cd /opt/

[root@node01 opt]# scp kubelet.sh proxy.sh root@192.168.122.12:`pwd`

6.6.1.2 On node02

Start the kubelet service with kubelet.sh

[root@node02 opt]# cd /opt

[root@node02 opt]# chmod +x kubelet.sh

[root@node02 opt]# ./kubelet.sh 192.168.122.12

Created symlink from /etc/systemd/system/multi-user.target.wants/kubelet.service to /usr/lib/systemd/system/kubelet.service.

6.6.1.3 On master01

List the CSRs

[root@master01 opt]# kubectl get csr

NAME AGE REQUESTOR CONDITION

node-csr--2F7RCWB90yyxWKIgazmTQvWCq_AN7Au56xOlOAS_-Y 57s kubelet-bootstrap Pending

node-csr-NJKSDx9hIMMweZuDIvyRABq4mguRltOyzm0_hsSXpRg 101m kubelet-bootstrap Approved,Issued

Approve the CSR

[root@master01 opt]# kubectl certificate approve node-csr--2F7RCWB90yyxWKIgazmTQvWCq_AN7Au56xOlOAS_-Y

certificatesigningrequest.certificates.k8s.io/node-csr--2F7RCWB90yyxWKIgazmTQvWCq_AN7Au56xOlOAS_-Y approved

List the CSRs again

[root@master01 opt]# kubectl get csr

NAME AGE REQUESTOR CONDITION

node-csr--2F7RCWB90yyxWKIgazmTQvWCq_AN7Au56xOlOAS_-Y 2m4s kubelet-bootstrap Approved,Issued

node-csr-NJKSDx9hIMMweZuDIvyRABq4mguRltOyzm0_hsSXpRg 103m kubelet-bootstrap Approved,Issued

Check the status of the nodes in the cluster

[root@master01 opt]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

192.168.122.12 Ready <none> 55s v1.12.3

node01 Ready <none> 98m v1.12.3

6.6.1.4 On node02

Load the ipvs modules

[root@node02 opt]# for i in $(ls /usr/lib/modules/$(uname -r)/kernel/net/netfilter/ipvs|grep -o "^[^.]*");do echo $i; /sbin/modinfo -F filename $i > /dev/null 2>&1 && /sbin/modprobe $i;done

ip_vs_dh

ip_vs_ftp

ip_vs

ip_vs_lblc

ip_vs_lblcr

ip_vs_lc

ip_vs_nq

ip_vs_pe_sip

ip_vs_rr

ip_vs_sed

ip_vs_sh

ip_vs_wlc

ip_vs_wrr

Start the kube-proxy service with proxy.sh

[root@node02 opt]# cd /opt

[root@node02 opt]# chmod +x proxy.sh

[root@node02 opt]# ./proxy.sh 192.168.122.12

Created symlink from /etc/systemd/system/multi-user.target.wants/kube-proxy.service to /usr/lib/systemd/system/kube-proxy.service.

Check the service status

[root@node02 opt]# systemctl status kube-proxy.service

● kube-proxy.service - Kubernetes Proxy

Loaded: loaded (/usr/lib/systemd/system/kube-proxy.service; enabled; vendor preset: disabled)

Active: active (running) since 四 2021-10-28 19:20:46 CST; 37s ago

......

6.6.2 Method 2

6.6.2.1 On node01

Copy the existing /opt/kubernetes directory and the kubelet and kube-proxy systemd unit files to the other node

scp -r /opt/kubernetes/ root@192.168.122.12:/opt/

scp /usr/lib/systemd/system/{kubelet,kube-proxy}.service root@192.168.122.12:/usr/lib/systemd/system/

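6.6.2.2 On node02

- First delete the copied certificates; node02 requests its own

cd /opt/kubernetes/ssl/

rm -rf *

- Change the IP addresses in the kubelet, kubelet.config and kube-proxy configuration files to this node's IP address

- Load the ipvs module

modprobe ip_vs

- Start the kubelet and kube-proxy services and enable them at boot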

systemctl enable --now kubelet.service

systemctl enable --now kube-proxy.service

6.6.2.3 On master01

List the CSRs

kubectl get csr

Approve the CSR

kubectl certificate approve [node-csr-......]
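Method 2 depends on editing the copied kubelet, kubelet.config and kube-proxy files so that they carry node02's address instead of node01's. A minimal sketch of that edit follows. It assumes GNU sed (for `-i` and `\b`), and `replace_node_ip` is a helper name invented here. The word boundaries keep 192.168.122.11 from matching inside a longer address such as 192.168.122.110.

```shell
#!/bin/bash
# Replace every occurrence of the old node IP with the new one in the
# given files. Dots in the old address are escaped so they match
# literally, and \b prevents matches inside longer addresses.
replace_node_ip() {
  local old=$1 new=$2 pattern
  shift 2
  pattern=$(printf '%s' "$old" | sed 's/\./\\./g')
  sed -i "s/\b$pattern\b/$new/g" "$@"
}
```

On node02 this could be run as `replace_node_ip 192.168.122.11 192.168.122.12 /opt/kubernetes/cfg/kubelet /opt/kubernetes/cfg/kubelet.config /opt/kubernetes/cfg/kube-proxy` before the services are started.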

7. Test the single-master K8S cluster

On master01

[root@master01 opt]# kubectl create deployment nginx-test --image=nginx:1.14

deployment.apps/nginx-test created

[root@master01 opt]# kubectl get pod

NAME READY STATUS RESTARTS AGE

nginx-test-7dc4f9dcc9-vs2p6 0/1 ContainerCreating 0 5s

# Wait for the image to be pulled

[root@master01 opt]# kubectl get pod

NAME READY STATUS RESTARTS AGE

nginx-test-7dc4f9dcc9-vs2p6 1/1 Running 0 45s

# The container has started

View the pod details

[root@master01 opt]# kubectl describe pod nginx-test-7dc4f9dcc9-vs2p6

Name: nginx-test-7dc4f9dcc9-vs2p6

Namespace: default

Priority: 0

PriorityClassName: <none>

Node: node01/192.168.122.11

Start Time: Thu, 28 Oct 2021 19:43:55 +0800

Labels: app=nginx-test

pod-template-hash=7dc4f9dcc9

Annotations: <none>

Status: Running

IP: 172.17.54.3

Controlled By: ReplicaSet/nginx-test-7dc4f9dcc9

Containers:

nginx:

Container ID: docker://7f4a2b1dcf087bb2bc3a557b51a28ab34fb46286f4f34ac1017c30f9501e2462

Image: nginx:1.14

Image ID: docker-pullable://nginx@sha256:f7988fb6c02e0ce69257d9bd9cf37ae20a60f1df7563c3a2a6abe24160306b8d

Port: <none>

Host Port: <none>

State: Running

Started: Thu, 28 Oct 2021 19:44:24 +0800

Ready: True

Restart Count: 0

Environment: <none>

Mounts:

/var/run/secrets/kubernetes.io/serviceaccount from default-token-r4pck (ro)

Conditions:

Type Status

Initialized True

Ready True

ContainersReady True

PodScheduled True

Volumes:

default-token-r4pck:

Type: Secret (a volume populated by a Secret)

SecretName: default-token-r4pck

Optional: false

QoS Class: BestEffort

Node-Selectors: <none>

Tolerations: node.kubernetes.io/not-ready:NoExecute for 300s

node.kubernetes.io/unreachable:NoExecute for 300s

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal Scheduled 2m26s default-scheduler Successfully assigned default/nginx-test-7dc4f9dcc9-vs2p6 to node01

Normal Pulling 2m20s kubelet, node01 pulling image "nginx:1.14"

Normal Pulled 117s kubelet, node01 Successfully pulled image "nginx:1.14"

Normal Created 117s kubelet, node01 Created container

Normal Started 117s kubelet, node01 Started container

View additional pod information (including its IP)

[root@master01 opt]# kubectl get pod -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE

nginx-test-7dc4f9dcc9-vs2p6 1/1 Running 0 4m3s 172.17.54.3 node01 <none>

Access the pod from any node to test it

[root@node01 opt]# curl 172.17.54.3

<!DOCTYPE html>

<html>

<head>

<title>Welcome to nginx!</title>

<style>

body {

width: 35em;

margin: 0 auto;

font-family: Tahoma, Verdana, Arial, sans-serif;

}

</style>

</head>

<body>

<h1>Welcome to nginx!</h1>

<p>If you see this page, the nginx web server is successfully installed and

working. Further configuration is required.</p>

<p>For online documentation and support please refer to

<a href="http://nginx.org/">nginx.org</a>.<br/>

Commercial support is available at

<a href="http://nginx.com/">nginx.com</a>.</p>

<p><em>Thank you for using nginx.</em></p>

</body>

</html>

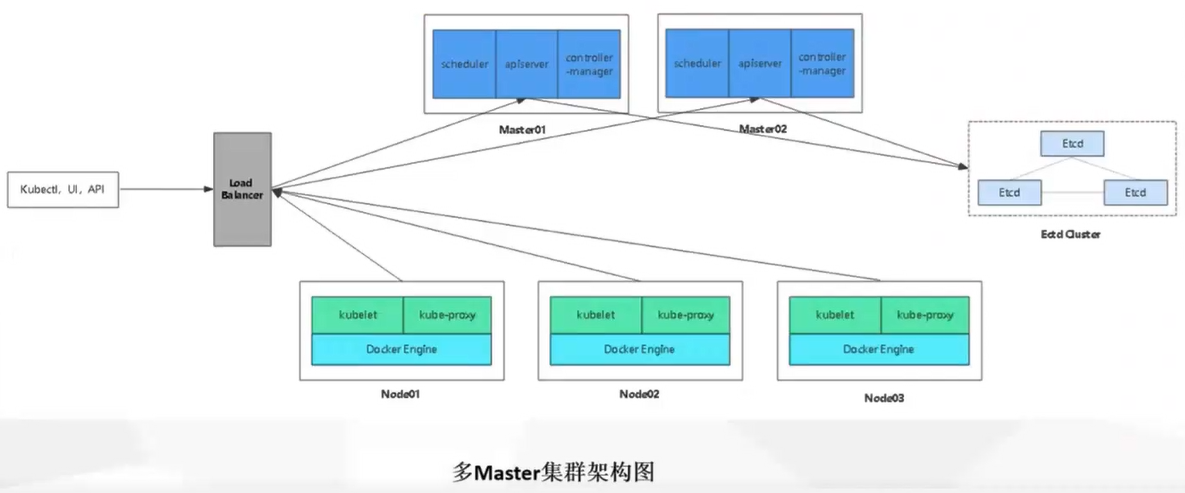

III. Binary deployment of K8S with multiple Master nodes (builds on the deployment completed above)

1. Environment preparation

1.1 Server configuration

| Server | Hostname | IP address | Main components / notes |
|---|---|---|---|
| master01 node + etcd01 node | master01 | 192.168.122.10 | kube-apiserver kube-controller-manager kube-scheduler etcd |
| master02 node | master02 | 192.168.122.20 | kube-apiserver kube-controller-manager kube-scheduler |
| node01 node + etcd02 node | node01 | 192.168.122.11 | kubelet kube-proxy docker flannel |
| node02 node + etcd03 node | node02 | 192.168.122.12 | kubelet kube-proxy docker flannel |
| nginx01 node | nginx01 | 192.168.122.13 | keepalived load balancer (primary) |
| nginx02 node | nginx02 | 192.168.122.14 | keepalived load balancer (backup) |

1.2 Prepare the master02 environment

[root@localhost ~]# hostnamectl set-hostname master02

[root@localhost ~]# su

[root@master02 ~]# cat >> /etc/hosts << EOF

> 192.168.122.10 master01

> 192.168.122.20 master02

> 192.168.122.11 node01

> 192.168.122.12 node02

> 192.168.122.13 nginx01

> 192.168.122.14 nginx02

> EOF

[root@master02 ~]# systemctl disable --now firewalld

Removed symlink /etc/systemd/system/multi-user.target.wants/firewalld.service.

Removed symlink /etc/systemd/system/dbus-org.fedoraproject.FirewallD1.service.

[root@master02 ~]# setenforce 0

[root@master02 ~]# sed -i 's/enforcing/disabled/' /etc/selinux/config

[root@master02 ~]# swapoff -a

[root@master02 ~]# sed -ri 's/.*swap.*/#&/' /etc/fstab

[root@master02 ~]# cat > /etc/sysctl.d/k8s.conf << EOF

> net.bridge.bridge-nf-call-ip6tables = 1

> net.bridge.bridge-nf-call-iptables = 1

> EOF

[root@master02 ~]# sysctl --system

* Applying /usr/lib/sysctl.d/00-system.conf ...

* Applying /usr/lib/sysctl.d/10-default-yama-scope.conf ...

kernel.yama.ptrace_scope = 0

* Applying /usr/lib/sysctl.d/50-default.conf ...

kernel.sysrq = 16

kernel.core_uses_pid = 1

net.ipv4.conf.default.rp_filter = 1

net.ipv4.conf.all.rp_filter = 1

net.ipv4.conf.default.accept_source_route = 0

net.ipv4.conf.all.accept_source_route = 0

net.ipv4.conf.default.promote_secondaries = 1

net.ipv4.conf.all.promote_secondaries = 1

fs.protected_hardlinks = 1

fs.protected_symlinks = 1

* Applying /usr/lib/sysctl.d/60-libvirtd.conf ...

fs.aio-max-nr = 1048576

* Applying /etc/sysctl.d/99-sysctl.conf ...

* Applying /etc/sysctl.d/k8s.conf ...

* Applying /etc/sysctl.conf ...

[root@master02 ~]# yum install -y ntpdate

[root@master02 ~]# ntpdate time.windows.com

29 Oct 15:00:27 ntpdate[38648]: adjust time server 52.231.114.183 offset -0.002620 sec

1.3 nginx hosts (nginx01 as the example)

[root@localhost ~]# hostnamectl set-hostname nginx01

[root@localhost ~]# su

[root@nginx01 ~]# systemctl disable --now firewalld

[root@nginx01 ~]# setenforce 0

[root@nginx01 ~]# sed -i 's/enforcing/disabled/' /etc/selinux/config

[root@nginx01 ~]# cat >> /etc/hosts << EOF

> 192.168.122.10 master01

> 192.168.122.20 master02

> 192.168.122.11 node01

> 192.168.122.12 node02

> 192.168.122.13 nginx01

> 192.168.122.14 nginx02

> EOF

1.4 Other hosts

cat >> /etc/hosts << EOF

192.168.122.10 master01

192.168.122.20 master02

192.168.122.11 node01

192.168.122.12 node02

192.168.122.13 nginx01

192.168.122.14 nginx02

EOF
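
Because `cat >> /etc/hosts` appends unconditionally, running this step twice duplicates every entry. A minimal sketch of an idempotent variant follows; the helper names `add_host_entry` and `add_cluster_hosts` are hypothetical and not part of the original procedure, while the IP/hostname pairs are the ones listed above.

```shell
#!/usr/bin/env bash
# add_host_entry (hypothetical helper): append "IP NAME" to a hosts file
# only when that exact line is not already present.
add_host_entry() {
  local ip="$1" name="$2" file="${3:-/etc/hosts}"
  # -q quiet, -x whole-line match, -F fixed string (dots stay literal)
  if ! grep -qxF "$ip $name" "$file" 2>/dev/null; then
    printf '%s %s\n' "$ip" "$name" >> "$file"
  fi
}

# add_cluster_hosts (hypothetical helper): add all six cluster hosts
# from this walkthrough to the given file (default: /etc/hosts).
add_cluster_hosts() {
  local file="${1:-/etc/hosts}" ip name
  while read -r ip name; do
    add_host_entry "$ip" "$name" "$file"
  done << 'EOF'
192.168.122.10 master01
192.168.122.20 master02
192.168.122.11 node01
192.168.122.12 node02
192.168.122.13 nginx01
192.168.122.14 nginx02
EOF
}
```

Calling `add_cluster_hosts` with no argument targets `/etc/hosts`; calling it again should leave the file unchanged.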

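2. Deploying master02

2.1 Copy files

Copy the certificate files, the configuration files of each master component, and the systemd service unit files from master01 to master02.

On master01: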

[root@master01 ~]# scp -r /opt/etcd/ root@192.168.122.20:/opt/

root@192.168.122.20's password:

etcd 100% 516 134.7KB/s 00:00

etcd 100% 18MB 80.8MB/s 00:00

etcdctl 100% 15MB 109.3MB/s 00:00

ca-key.pem 100% 1679 346.6KB/s 00:00

ca.pem 100% 1257 577.1KB/s 00:00

server-key.pem 100% 1675 3.2MB/s 00:00

server.pem 100% 1334 582.3KB/s 00:00

[root@master01 ~]# scp -r /opt/kubernetes/ root@192.168.122.20:/opt/

root@192.168.122.20's password:

token.csv 100% 85 94.0KB/s 00:00

kube-apiserver 100% 938 920.3KB/s 00:00

kube-scheduler 100% 97 183.2KB/s 00:00

kube-controller-manager 100% 480 1.1MB/s 00:00

kube-apiserver 100% 184MB 121.3MB/s 00:01

kubectl 100% 55MB 125.3MB/s 00:00

kube-controller-manager 100% 155MB 140.3MB/s 00:01

kube-scheduler 100% 55MB 126.6MB/s 00:00

ca-key.pem 100% 1675 2.2MB/s 00:00

ca.pem 100% 1359 1.5MB/s 00:00

apiserver-key.pem 100% 1679 860.5KB/s 00:00

apiserver.pem 100% 1643 1.7MB/s 00:00

[root@master01 ~]# cd /usr/lib/systemd/system

[root@master01 system]# scp kube-apiserver.service kube-controller-manager.service kube-scheduler.service root@192.168.122.20:`pwd`

root@192.168.122.20's password:

kube-apiserver.service 100% 282 297.5KB/s 00:00

kube-controller-manager.service 100% 317 576.7KB/s 00:00

kube-scheduler.service 100% 281 582.3KB/s 00:00

2.2 Change the IP addresses in the kube-apiserver configuration file

On master02:

[root@master02 ~]# vim /opt/kubernetes/cfg/kube-apiserver

KUBE_APISERVER_OPTS="--logtostderr=true \

--v=4 \

--etcd-servers=https://192.168.122.10:2379,https://192.168.122.11:2379,https://192.168.122.12:2379 \

## Annotation (not part of the file): change the bind (listen) address to the master02 host IP

--bind-address=192.168.122.20 \

--secure-port=6443 \

## Annotation (not part of the file): change the advertise address to the master02 host IP

--advertise-address=192.168.122.20 \

--allow-privileged=true \

--service-cluster-ip-range=10.0.0.0/24 \

--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,ResourceQuota,NodeRestriction \

--authorization-mode=RBAC,Node \

--kubelet-https=true \

--enable-bootstrap-token-auth \

--token-auth-file=/opt/kubernetes/cfg/token.csv \

--service-node-port-range=30000-50000 \

--tls-cert-file=/opt/kubernetes/ssl/apiserver.pem \

--tls-private-key-file=/opt/kubernetes/ssl/apiserver-key.pem \

--client-ca-file=/opt/kubernetes/ssl/ca.pem \

--service-account-key-file=/opt/kubernetes/ssl/ca-key.pem \

--etcd-cafile=/opt/etcd/ssl/ca.pem \

--etcd-certfile=/opt/etcd/ssl/server.pem \

--etcd-keyfile=/opt/etcd/ssl/server-key.pem"
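
Only two values differ from master01's copy of this file: `--bind-address` and `--advertise-address`. As an alternative to editing them by hand in vim, the change can be sketched with `sed`. The helper name `set_apiserver_ip` is hypothetical, and the sketch assumes both flags appear in the `--flag=IP` form shown above.

```shell
#!/usr/bin/env bash
# set_apiserver_ip (hypothetical helper): rewrite the IP after
# --bind-address= and --advertise-address= in a kube-apiserver config file.
# Other addresses (for example --etcd-servers) are left untouched.
set_apiserver_ip() {
  local ip="$1" cfg="${2:-/opt/kubernetes/cfg/kube-apiserver}"
  # Write to a temporary file first so a failed sed cannot truncate the config.
  sed -E "s/(--(bind|advertise)-address=)[0-9.]+/\1${ip}/" "$cfg" > "${cfg}.tmp" \
    && mv "${cfg}.tmp" "$cfg"
}
```

On master02 this would be invoked as `set_apiserver_ip 192.168.122.20`, followed by a quick `grep address /opt/kubernetes/cfg/kube-apiserver` to confirm the result.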

2.3 Start the services and enable them at boot

2.3.1 Start

[root@master02 ~]# systemctl enable --now kube-apiserver.service

Created symlink from /etc/systemd/system/multi-user.target.wants/kube-apiserver.service to /usr/lib/systemd/system/kube-apiserver.service.

[root@master02 ~]# systemctl enable --now kube-controller-manager.service

Created symlink from /etc/systemd/system/multi-user.target.wants/kube-controller-manager.service to /usr/lib/systemd/system/kube-controller-manager.service.

[root@master02 ~]# systemctl enable --now kube-scheduler.service

Created symlink from /etc/systemd/system/multi-user.target.wants/kube-scheduler.service to /usr/lib/systemd/system/kube-scheduler.service.

2.3.2 Check status

[root@master02 ~]# systemctl status kube-apiserver.service

● kube-apiserver.service - Kubernetes API Server

Loaded: loaded (/usr/lib/systemd/system/kube-apiserver.service; enabled; vendor preset: disabled)

Active: active (running) since Fri 2021-10-29 15:27:32 CST; 1min 22s ago

......

[root@master02 ~]# systemctl status kube-controller-manager.service

● kube-controller-manager.service - Kubernetes Controller Manager

Loaded: loaded (/usr/lib/systemd/system/kube-controller-manager.service; enabled; vendor preset: disabled)

Active: active (running) since Fri 2021-10-29 15:27:39 CST; 1min 43s ago

......

[root@master02 ~]# systemctl status kube-scheduler.service

● kube-scheduler.service - Kubernetes Scheduler

Loaded: loaded (/usr/lib/systemd/system/kube-scheduler.service; enabled; vendor preset: disabled)

Active: active (running) since Fri 2021-10-29 15:27:48 CST; 1min 53s ago

......
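
The three status checks above can also be condensed into one loop. This is a minimal sketch built on `systemctl is-active --quiet`, which returns a non-zero exit code when a unit is not active; the function name `check_master_services` is hypothetical.

```shell
#!/usr/bin/env bash
# check_master_services (hypothetical helper): report whether each master
# component is active; returns 1 if at least one of them is not.
check_master_services() {
  local svc failed=0
  for svc in kube-apiserver kube-controller-manager kube-scheduler; do
    if systemctl is-active --quiet "${svc}.service"; then
      echo "${svc}: active"
    else
      echo "${svc}: NOT active"
      failed=1
    fi
  done
  return "$failed"
}
```

If any service is reported as not active, `systemctl status <unit>` or `journalctl -u <unit>` gives the detail that this summary leaves out.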

2.4 Check node status

On master02:

[root@master02 ~]# ln -s /opt/kubernetes/bin/* /usr/local/bin/

[root@master02 ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

192.168.122.12 Ready <none> 20h v1.12.3

node01 Ready <none> 21h v1.12.3

[root@master02 ~]# kubectl get nodes -o wide

NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME

192.168.122.12 Ready <none> 20h v1.12.3 192.168.122.12 <none> CentOS Linux 7 (Core) 3.10.0-693.el7.x86_64 docker://20.10.10

node01 Ready <none> 21h v1.12.3 192.168.122.11 <none> CentOS Linux 7 (Core) 3.10.0-693.el7.x86_64 docker://20.10.10

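-o wide: prints additional columns; for Pods, it also shows the name of the Node each Pod runs on.

At this point, the node status shown on master02 is only information read from etcd. The nodes have not actually established a connection with master02, so a VIP is needed to associate the nodes with the master nodes.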
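
One way to see which API server a node actually talks to is to read the `server:` field of its kubeconfig files. The sketch below only parses a file; the helper name `kubeconfig_server` is hypothetical, and the kubeconfig path in the usage note is an assumption about where the node files live, not something confirmed in this section.

```shell
#!/usr/bin/env bash
# kubeconfig_server (hypothetical helper): print the API server URL(s)
# found on "server:" lines of the kubeconfig file given as $1.
kubeconfig_server() {
  awk '$1 == "server:" { print $2 }' "$1"
}
```

On a node, assuming the kubelet kubeconfig is at `/opt/kubernetes/cfg/kubelet.kubeconfig` (an assumed path), `kubeconfig_server /opt/kubernetes/cfg/kubelet.kubeconfig` prints the address the kubelet is configured to use. If it prints master01's address, the node depends on master01 alone.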

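3. Load balancer deployment (nginx hosts, using nginx01 as the example)

Configure the load balancer cluster for load balancing with active/standby failover (nginx provides the load balancing, keepalived provides the hot standby).

3.1 Install nginx

Add the official nginx online yum repository as a local repo file, then install nginx from it.

nginx hosts (using nginx01 as the example)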

[root@nginx01 ~]# cat > /etc/yum.repos.d/nginx.repo << 'EOF'

> [nginx]

> name=nginx repo

> baseurl=http://nginx.org/packages/centos/7/$basearch/

> gpgcheck=0

> EOF

[root@nginx01 ~]# yum install -y nginx

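3.2 Modify the nginx configuration file

Configure layer-4 reverse-proxy load balancing that points at the IP addresses of the two K8S master nodes on port 6443.

nginx hosts (using nginx01 as the example)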

[root@nginx01 ~]# vim /etc/nginx/nginx.conf

......

events {

worker_connections 1024;

}

stream {

log_format main '$remote_addr $upstream_addr - [$time_local] $status $upstream_bytes_sent';

access_log /var/log/nginx/k8s-access.log main;

upstream k8s-apiservers {

server 192.168.122.10:6443;

server 192.168.122.20:6443;

}

server {

listen 6443;

proxy_pass k8s-apiservers;

}

}

http {

......
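
Before relying on the proxy, it can help to confirm that each backend in the `upstream` block accepts TCP connections. The sketch below reads the backends out of the config and probes them with bash's `/dev/tcp` feature. Both function names are hypothetical, and the parsing assumes each `server` directive sits on its own line as in the excerpt above.

```shell
#!/usr/bin/env bash
# list_upstream_servers (hypothetical helper): print the host:port entries
# of one upstream block in an nginx config file.
list_upstream_servers() {
  local conf="${1:-/etc/nginx/nginx.conf}" name="${2:-k8s-apiservers}"
  awk -v name="$name" '
    $1 == "upstream" && $2 == name { inside = 1; next }
    inside && $1 == "}"            { inside = 0 }
    inside && $1 == "server"       { sub(/;$/, "", $2); print $2 }
  ' "$conf"
}

# probe_upstreams (hypothetical helper): try a TCP connection to every
# backend, with a 2-second timeout per attempt.
probe_upstreams() {
  local addr
  for addr in $(list_upstream_servers "$@"); do
    if timeout 2 bash -c "exec 3<>/dev/tcp/${addr%:*}/${addr##*:}" 2>/dev/null; then
      echo "$addr reachable"
    else
      echo "$addr unreachable"
    fi
  done
}
```

A successful TCP connection only shows that the port is open; it does not validate the API server's TLS certificate or health.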

3.3 Start nginx

3.3.1 Check the configuration file syntax

[root@nginx01 ~]# nginx -t

nginx: the configuration file /etc/nginx/nginx.conf syntax is ok

nginx: configuration file /etc/nginx/nginx.conf test is successful

3.3.2 Start nginx

[root@nginx01 ~]# systemctl enable --now nginx

Created symlink from /etc/systemd/system/multi-user.target.wants/nginx.service to /usr/lib/systemd/system/nginx.service.

3.3.3 Check status

[root@nginx01 ~]# systemctl status nginx

● nginx.service - nginx - high performance web server

Loaded: loaded (/usr/lib/systemd/system/nginx.service; enabled; vendor preset: disabled)

Active: active (running) since Fri 2021-10-29 16:44:40 CST; 1min 19s ago

......

[root@nginx01 ~]# netstat -natp | grep nginx

tcp 0 0 0.0.0.0:6443 0.0.0.0:* LISTEN 5009/nginx: master

tcp 0 0 0.0.0.0:80 0.0.0.0:* LISTEN 5009/nginx: master

4. Deploy the keepalived service (nginx hosts, using nginx01 as the example)

4.1 Install keepalived

[root@nginx01 ~]# yum install -y keepalived

4.2 Modify the keepalived configuration file

nginx01

[root@nginx01 ~]# vim /etc/keepalived/keepalived.conf

## Line 10: modify