Oozie Installation and Deployment

Without further ado, let's get straight to the point!

First, please read my earlier post, which gives a fresh overview of how Oozie is installed:

Notes on Oozie Installation

This post covers installing Oozie manually. To avoid the tedious build process required by the Apache release, it uses the CDH release directly: the prebuilt oozie-4.1.0-cdh5.5.4.tar.gz.

If you want to use the Apache release, you will have to build it yourself.

The Apache release is only 1.7 MB, while the CDH release (already built for us) is about 1.0 GB.

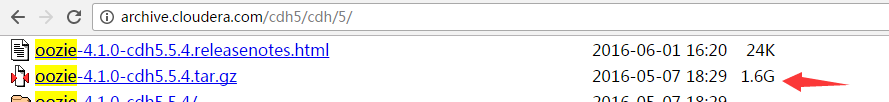

Step 1: Download oozie-4.1.0-cdh5.5.4.tar.gz

http://archive.cloudera.com/cdh5/cdh/5/

Instead of downloading it to your local machine first, as I did, you can also download it directly on the server.

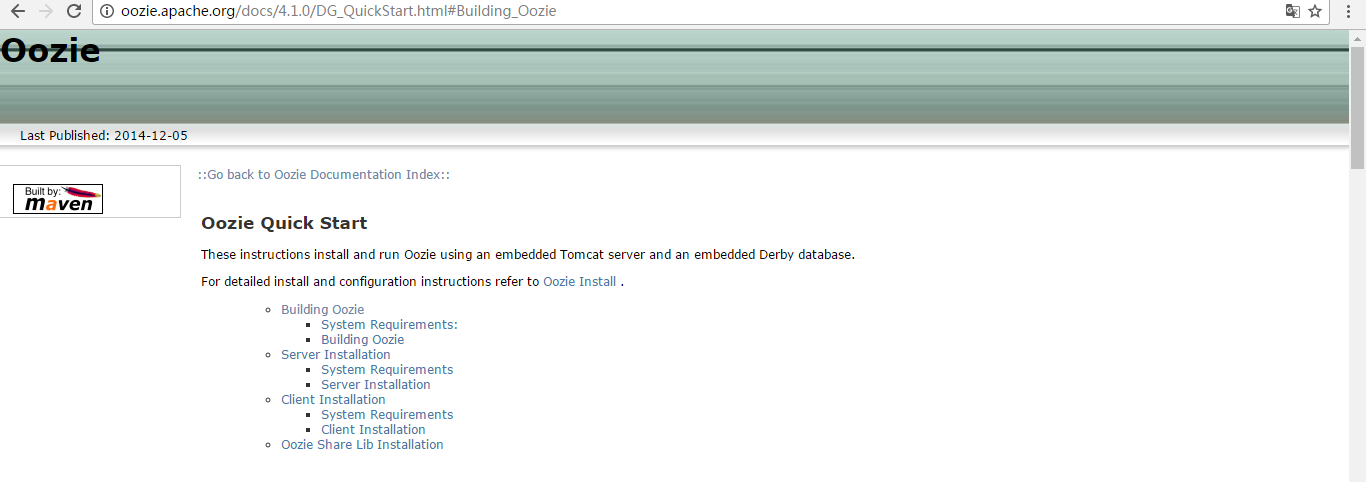

Step 2: Official documentation for building Apache Oozie 4.1.0 (left for you to try)

http://oozie.apache.org/docs/4.1.0/DG_QuickStart.html#Building_Oozie

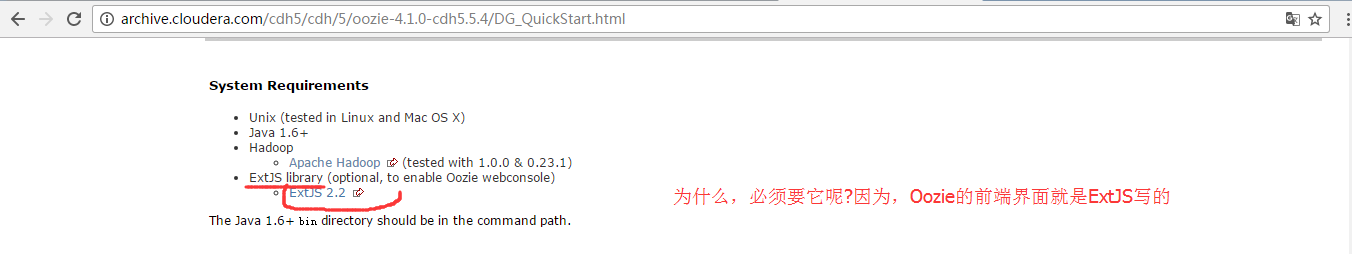

The above are the minimum requirements listed on the official site.

However, I currently recommend the following:

Environment: Hadoop 2.6.0, Oozie 4.2.0, JDK 1.7, Maven 3.3.9, Pig 0.15.0, Hive 1.2.1, Sqoop 1.99.6

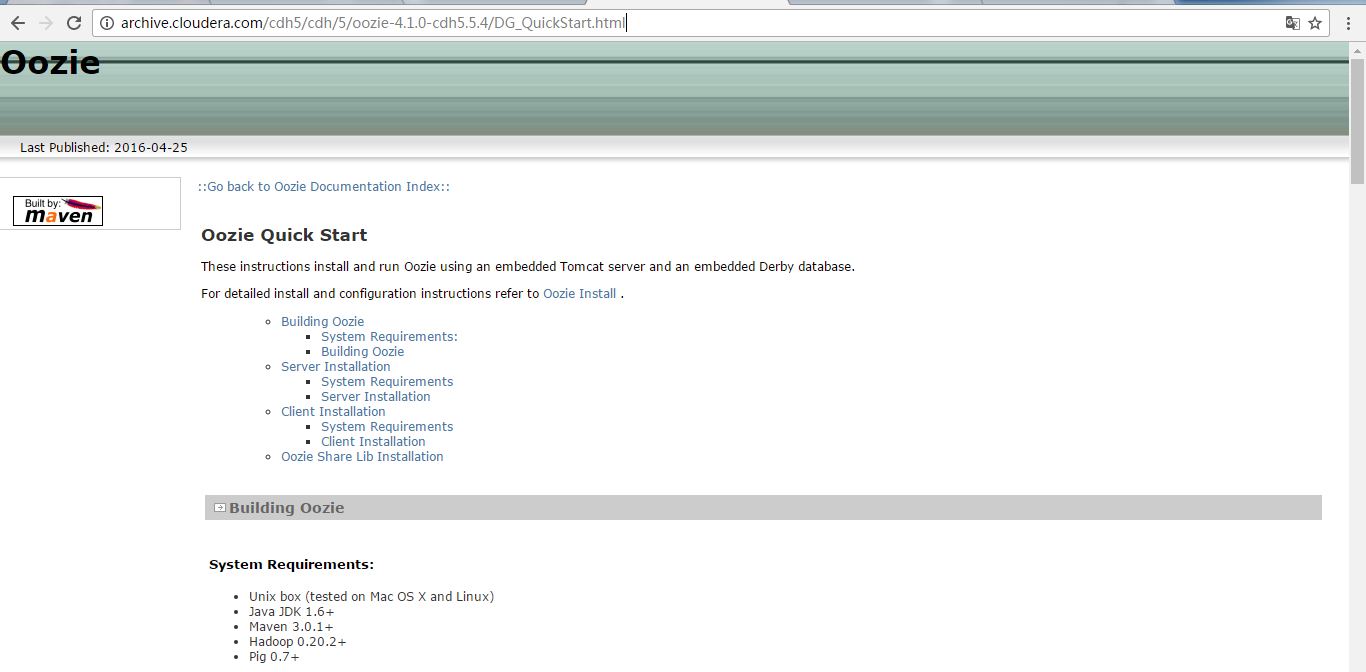

Step 3: Building Cloudera Oozie 4.1.0 (the focus of this post)

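You can also build and install Oozie from the CDH source code, which I won't cover in detail here. The main thing to watch for is the pom.xml file inside the unpacked source package.

This file matters: change the Hive, HBase, and other component versions in it to match the versions installed on your own machines instead of keeping the defaults. Get these details right and the build should work. If you are interested, try it yourself!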
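As a minimal sketch of that pom.xml edit, the function below rewrites the value of one Maven property with sed and keeps a `.bak` backup. The helper name `set_pom_property` is hypothetical, and the property names in the example (`hadoop.version`, `hive.version`) are assumptions: open your pom.xml and confirm the exact names before running it.

```shell
# Sketch: replace the value of a Maven property such as <hive.version>...</hive.version>.
# Property names are assumptions; check them in your own pom.xml first.

# set_pom_property FILE PROPERTY VALUE
set_pom_property() {
  local pom="$1" prop="$2" value="$3"
  # Escape dots so "hive.version" is matched literally by grep/sed.
  local prop_re
  prop_re=$(printf '%s' "$prop" | sed 's/\./\\./g')
  if ! grep -q "<${prop_re}>" "$pom"; then
    echo "property <${prop}> not found in ${pom}" >&2
    return 1
  fi
  # -i.bak works with both GNU and BSD sed and leaves pom.xml.bak behind.
  sed -i.bak "s|<${prop_re}>[^<]*</${prop_re}>|<${prop}>${value}</${prop}>|" "$pom"
}

# Example (hadoop version taken from the CDH 5.5.4 jar names later in this post):
# set_pom_property pom.xml hadoop.version 2.6.0-cdh5.5.4
# set_pom_property pom.xml hive.version "<your Hive version>"
```

Values containing `|` or `&` would confuse the sed expression; ordinary version strings are fine.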

Note: the Apache release of Oozie has to be built yourself. Since my environment is CDH5, I can use the CDH prebuilt release directly (i.e. oozie-4.1.0-cdh5.5.4.tar.gz).

Oozie Server Architecture

As you can see, the Oozie server runs inside Tomcat.

Installing the Oozie Server

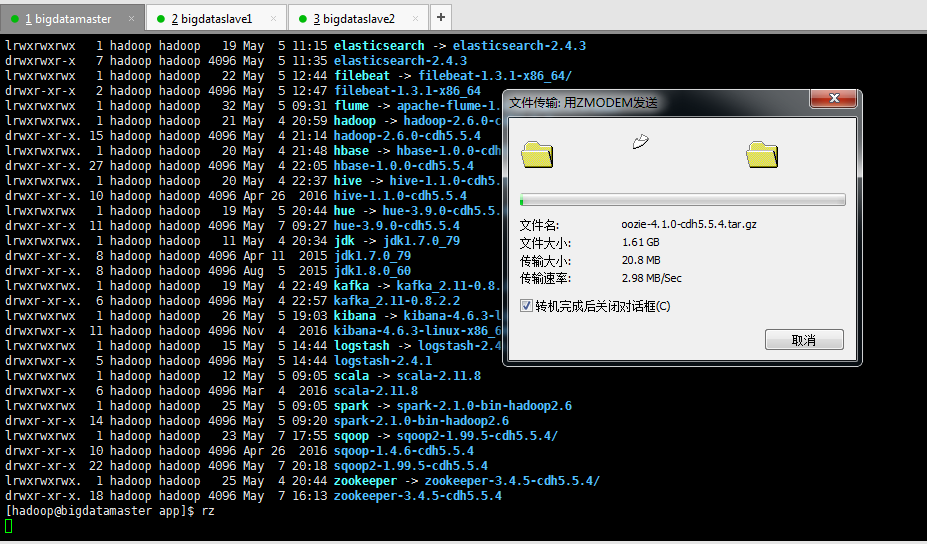

Upload

This takes a while.

[hadoop@bigdatamaster app]$ pwd

/home/hadoop/app

[hadoop@bigdatamaster app]$ ll

total

drwxr-xr-x hadoop hadoop Apr apache-flume-1.6.-cdh5.5.4-bin

lrwxrwxrwx hadoop hadoop May : elasticsearch -> elasticsearch-2.4.

drwxrwxr-x hadoop hadoop May : elasticsearch-2.4.

lrwxrwxrwx hadoop hadoop May : filebeat -> filebeat-1.3.-x86_64/

drwxr-xr-x hadoop hadoop May : filebeat-1.3.-x86_64

lrwxrwxrwx hadoop hadoop May : flume -> apache-flume-1.6.-cdh5.5.4-bin/

lrwxrwxrwx. hadoop hadoop May : hadoop -> hadoop-2.6.-cdh5.5.4

drwxr-xr-x. hadoop hadoop May : hadoop-2.6.-cdh5.5.4

lrwxrwxrwx. hadoop hadoop May : hbase -> hbase-1.0.-cdh5.5.4

drwxr-xr-x. hadoop hadoop May : hbase-1.0.-cdh5.5.4

lrwxrwxrwx. hadoop hadoop May : hive -> hive-1.1.-cdh5.5.4/

drwxr-xr-x. hadoop hadoop Apr hive-1.1.-cdh5.5.4

lrwxrwxrwx hadoop hadoop May : hue -> hue-3.9.-cdh5.5.4/

drwxr-xr-x hadoop hadoop May : hue-3.9.-cdh5.5.4

lrwxrwxrwx. hadoop hadoop May : jdk -> jdk1..0_79

drwxr-xr-x. hadoop hadoop Apr jdk1..0_79

drwxr-xr-x. hadoop hadoop Aug jdk1..0_60

lrwxrwxrwx. hadoop hadoop May : kafka -> kafka_2.-0.8.2.2/

drwxr-xr-x. hadoop hadoop May : kafka_2.-0.8.2.2

lrwxrwxrwx hadoop hadoop May : kibana -> kibana-4.6.-linux-x86_64/

drwxrwxr-x hadoop hadoop Nov kibana-4.6.-linux-x86_64

lrwxrwxrwx hadoop hadoop May : logstash -> logstash-2.4./

drwxrwxr-x hadoop hadoop May : logstash-2.4.

lrwxrwxrwx hadoop hadoop May : scala -> scala-2.11.

drwxrwxr-x hadoop hadoop Mar scala-2.11.

lrwxrwxrwx hadoop hadoop May : spark -> spark-2.1.-bin-hadoop2.

drwxr-xr-x hadoop hadoop May : spark-2.1.-bin-hadoop2.

lrwxrwxrwx hadoop hadoop May : sqoop -> sqoop2-1.99.-cdh5.5.4/

drwxr-xr-x hadoop hadoop Apr sqoop-1.4.-cdh5.5.4

drwxr-xr-x hadoop hadoop May : sqoop2-1.99.-cdh5.5.4

lrwxrwxrwx. hadoop hadoop May : zookeeper -> zookeeper-3.4.-cdh5.5.4/

drwxr-xr-x. hadoop hadoop May : zookeeper-3.4.-cdh5.5.4

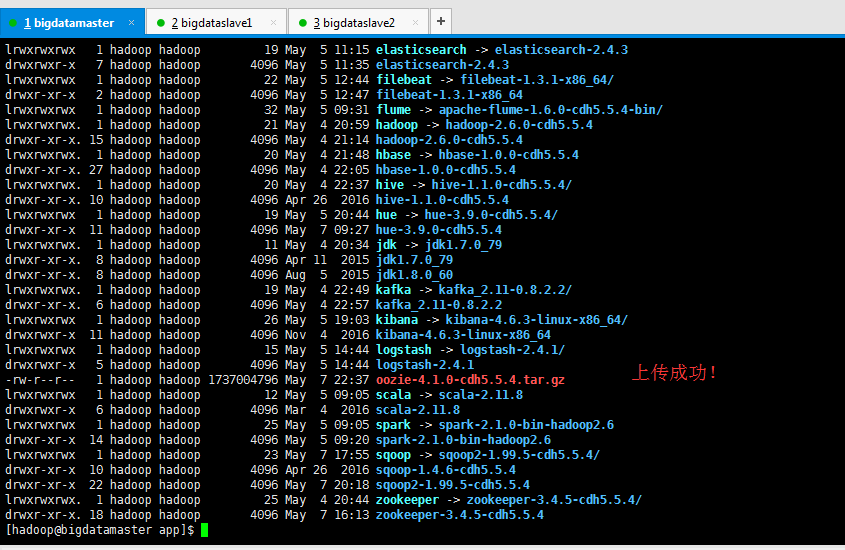

[hadoop@bigdatamaster app]$ rz

[hadoop@bigdatamaster app]$ ll

total

drwxr-xr-x hadoop hadoop Apr apache-flume-1.6.-cdh5.5.4-bin

lrwxrwxrwx hadoop hadoop May : elasticsearch -> elasticsearch-2.4.

drwxrwxr-x hadoop hadoop May : elasticsearch-2.4.

lrwxrwxrwx hadoop hadoop May : filebeat -> filebeat-1.3.-x86_64/

drwxr-xr-x hadoop hadoop May : filebeat-1.3.-x86_64

lrwxrwxrwx hadoop hadoop May : flume -> apache-flume-1.6.-cdh5.5.4-bin/

lrwxrwxrwx. hadoop hadoop May : hadoop -> hadoop-2.6.-cdh5.5.4

drwxr-xr-x. hadoop hadoop May : hadoop-2.6.-cdh5.5.4

lrwxrwxrwx. hadoop hadoop May : hbase -> hbase-1.0.-cdh5.5.4

drwxr-xr-x. hadoop hadoop May : hbase-1.0.-cdh5.5.4

lrwxrwxrwx. hadoop hadoop May : hive -> hive-1.1.-cdh5.5.4/

drwxr-xr-x. hadoop hadoop Apr hive-1.1.-cdh5.5.4

lrwxrwxrwx hadoop hadoop May : hue -> hue-3.9.-cdh5.5.4/

drwxr-xr-x hadoop hadoop May : hue-3.9.-cdh5.5.4

lrwxrwxrwx. hadoop hadoop May : jdk -> jdk1..0_79

drwxr-xr-x. hadoop hadoop Apr jdk1..0_79

drwxr-xr-x. hadoop hadoop Aug jdk1..0_60

lrwxrwxrwx. hadoop hadoop May : kafka -> kafka_2.-0.8.2.2/

drwxr-xr-x. hadoop hadoop May : kafka_2.-0.8.2.2

lrwxrwxrwx hadoop hadoop May : kibana -> kibana-4.6.-linux-x86_64/

drwxrwxr-x hadoop hadoop Nov kibana-4.6.-linux-x86_64

lrwxrwxrwx hadoop hadoop May : logstash -> logstash-2.4./

drwxrwxr-x hadoop hadoop May : logstash-2.4.

-rw-r--r-- hadoop hadoop May : oozie-4.1.0-cdh5.5.4.tar.gz

lrwxrwxrwx hadoop hadoop May : scala -> scala-2.11.

drwxrwxr-x hadoop hadoop Mar scala-2.11.

lrwxrwxrwx hadoop hadoop May : spark -> spark-2.1.-bin-hadoop2.

drwxr-xr-x hadoop hadoop May : spark-2.1.-bin-hadoop2.

lrwxrwxrwx hadoop hadoop May : sqoop -> sqoop2-1.99.-cdh5.5.4/

drwxr-xr-x hadoop hadoop Apr sqoop-1.4.-cdh5.5.4

drwxr-xr-x hadoop hadoop May : sqoop2-1.99.-cdh5.5.4

lrwxrwxrwx. hadoop hadoop May : zookeeper -> zookeeper-3.4.-cdh5.5.4/

drwxr-xr-x. hadoop hadoop May : zookeeper-3.4.-cdh5.5.4

[hadoop@bigdatamaster app]$

Extract

[hadoop@bigdatamaster app]$ tar -zxvf oozie-4.1.0-cdh5.5.4.tar.gz

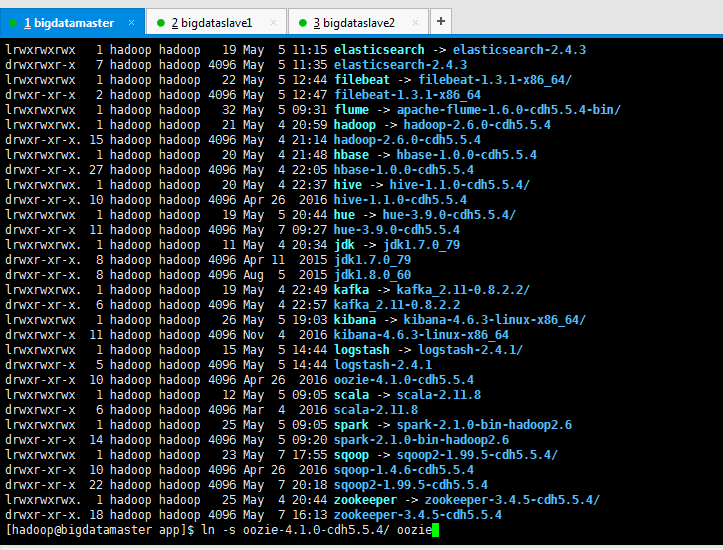

Create a symbolic link (so that different versions can be swapped in easily)

[hadoop@bigdatamaster app]$ pwd

/home/hadoop/app

[hadoop@bigdatamaster app]$ ll

total

drwxr-xr-x hadoop hadoop Apr apache-flume-1.6.-cdh5.5.4-bin

lrwxrwxrwx hadoop hadoop May : elasticsearch -> elasticsearch-2.4.

drwxrwxr-x hadoop hadoop May : elasticsearch-2.4.

lrwxrwxrwx hadoop hadoop May : filebeat -> filebeat-1.3.-x86_64/

drwxr-xr-x hadoop hadoop May : filebeat-1.3.-x86_64

lrwxrwxrwx hadoop hadoop May : flume -> apache-flume-1.6.-cdh5.5.4-bin/

lrwxrwxrwx. hadoop hadoop May : hadoop -> hadoop-2.6.-cdh5.5.4

drwxr-xr-x. hadoop hadoop May : hadoop-2.6.-cdh5.5.4

lrwxrwxrwx. hadoop hadoop May : hbase -> hbase-1.0.-cdh5.5.4

drwxr-xr-x. hadoop hadoop May : hbase-1.0.-cdh5.5.4

lrwxrwxrwx. hadoop hadoop May : hive -> hive-1.1.-cdh5.5.4/

drwxr-xr-x. hadoop hadoop Apr hive-1.1.-cdh5.5.4

lrwxrwxrwx hadoop hadoop May : hue -> hue-3.9.-cdh5.5.4/

drwxr-xr-x hadoop hadoop May : hue-3.9.-cdh5.5.4

lrwxrwxrwx. hadoop hadoop May : jdk -> jdk1..0_79

drwxr-xr-x. hadoop hadoop Apr jdk1..0_79

drwxr-xr-x. hadoop hadoop Aug jdk1..0_60

lrwxrwxrwx. hadoop hadoop May : kafka -> kafka_2.-0.8.2.2/

drwxr-xr-x. hadoop hadoop May : kafka_2.-0.8.2.2

lrwxrwxrwx hadoop hadoop May : kibana -> kibana-4.6.-linux-x86_64/

drwxrwxr-x hadoop hadoop Nov kibana-4.6.-linux-x86_64

lrwxrwxrwx hadoop hadoop May : logstash -> logstash-2.4./

drwxrwxr-x hadoop hadoop May : logstash-2.4.

drwxr-xr-x hadoop hadoop Apr oozie-4.1.0-cdh5.5.4

lrwxrwxrwx hadoop hadoop May : scala -> scala-2.11.

drwxrwxr-x hadoop hadoop Mar scala-2.11.

lrwxrwxrwx hadoop hadoop May : spark -> spark-2.1.-bin-hadoop2.

drwxr-xr-x hadoop hadoop May : spark-2.1.-bin-hadoop2.

lrwxrwxrwx hadoop hadoop May : sqoop -> sqoop2-1.99.-cdh5.5.4/

drwxr-xr-x hadoop hadoop Apr sqoop-1.4.-cdh5.5.4

drwxr-xr-x hadoop hadoop May : sqoop2-1.99.-cdh5.5.4

lrwxrwxrwx. hadoop hadoop May : zookeeper -> zookeeper-3.4.-cdh5.5.4/

drwxr-xr-x. hadoop hadoop May : zookeeper-3.4.-cdh5.5.4

[hadoop@bigdatamaster app]$ ln -s oozie-4.1.0-cdh5.5.4/ oozie

[hadoop@bigdatamaster app]$ ll

total

drwxr-xr-x hadoop hadoop Apr apache-flume-1.6.-cdh5.5.4-bin

lrwxrwxrwx hadoop hadoop May : elasticsearch -> elasticsearch-2.4.

drwxrwxr-x hadoop hadoop May : elasticsearch-2.4.

lrwxrwxrwx hadoop hadoop May : filebeat -> filebeat-1.3.-x86_64/

drwxr-xr-x hadoop hadoop May : filebeat-1.3.-x86_64

lrwxrwxrwx hadoop hadoop May : flume -> apache-flume-1.6.-cdh5.5.4-bin/

lrwxrwxrwx. hadoop hadoop May : hadoop -> hadoop-2.6.-cdh5.5.4

drwxr-xr-x. hadoop hadoop May : hadoop-2.6.-cdh5.5.4

lrwxrwxrwx. hadoop hadoop May : hbase -> hbase-1.0.-cdh5.5.4

drwxr-xr-x. hadoop hadoop May : hbase-1.0.-cdh5.5.4

lrwxrwxrwx. hadoop hadoop May : hive -> hive-1.1.-cdh5.5.4/

drwxr-xr-x. hadoop hadoop Apr hive-1.1.-cdh5.5.4

lrwxrwxrwx hadoop hadoop May : hue -> hue-3.9.-cdh5.5.4/

drwxr-xr-x hadoop hadoop May : hue-3.9.-cdh5.5.4

lrwxrwxrwx. hadoop hadoop May : jdk -> jdk1..0_79

drwxr-xr-x. hadoop hadoop Apr jdk1..0_79

drwxr-xr-x. hadoop hadoop Aug jdk1..0_60

lrwxrwxrwx. hadoop hadoop May : kafka -> kafka_2.-0.8.2.2/

drwxr-xr-x. hadoop hadoop May : kafka_2.-0.8.2.2

lrwxrwxrwx hadoop hadoop May : kibana -> kibana-4.6.-linux-x86_64/

drwxrwxr-x hadoop hadoop Nov kibana-4.6.-linux-x86_64

lrwxrwxrwx hadoop hadoop May : logstash -> logstash-2.4./

drwxrwxr-x hadoop hadoop May : logstash-2.4.

lrwxrwxrwx hadoop hadoop May : oozie -> oozie-4.1.0-cdh5.5.4/

drwxr-xr-x hadoop hadoop Apr oozie-4.1.0-cdh5.5.4

lrwxrwxrwx hadoop hadoop May : scala -> scala-2.11.

drwxrwxr-x hadoop hadoop Mar scala-2.11.

lrwxrwxrwx hadoop hadoop May : spark -> spark-2.1.-bin-hadoop2.

drwxr-xr-x hadoop hadoop May : spark-2.1.-bin-hadoop2.

lrwxrwxrwx hadoop hadoop May : sqoop -> sqoop2-1.99.-cdh5.5.4/

drwxr-xr-x hadoop hadoop Apr sqoop-1.4.-cdh5.5.4

drwxr-xr-x hadoop hadoop May : sqoop2-1.99.-cdh5.5.4

lrwxrwxrwx. hadoop hadoop May : zookeeper -> zookeeper-3.4.-cdh5.5.4/

drwxr-xr-x. hadoop hadoop May : zookeeper-3.4.-cdh5.5.4

[hadoop@bigdatamaster app]$
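The extract-then-symlink pattern above can be wrapped in a small function, sketched below under the assumption that the tarball has a single top-level directory (true for oozie-4.1.0-cdh5.5.4.tar.gz). `install_versioned` is a hypothetical helper name. `ln -sfn` replaces an existing link instead of creating a new link inside the old target directory, which is what makes switching versions a one-line operation.

```shell
# Sketch: unpack a versioned tarball and point a stable symlink at it, so that
# OOZIE_HOME=/home/hadoop/app/oozie keeps working when you switch versions.

# install_versioned TARBALL APP_DIR LINK_NAME
install_versioned() {
  local tarball="$1" app_dir="$2" link_name="$3"
  # First path component inside the archive, e.g. "oozie-4.1.0-cdh5.5.4".
  local top_dir
  top_dir=$(tar -tzf "$tarball" | head -n 1 | cut -d/ -f1)
  tar -xzf "$tarball" -C "$app_dir"
  # -n: do not follow an existing link named LINK_NAME; -f: overwrite it.
  # The target is relative, so the link resolves inside APP_DIR.
  ln -sfn "$top_dir" "$app_dir/$link_name"
}

# Example: install_versioned oozie-4.1.0-cdh5.5.4.tar.gz /home/hadoop/app oozie
```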

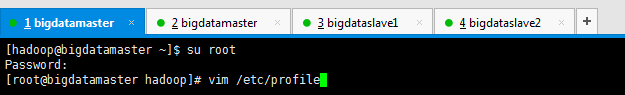

Set the environment variables

[hadoop@bigdatamaster ~]$ su root

Password:

[root@bigdatamaster hadoop]# vim /etc/profile

#oozie

export OOZIE_HOME=/home/hadoop/app/oozie

export PATH=$PATH:$OOZIE_HOME/bin

[root@bigdatamaster hadoop]# source /etc/profile
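If you script this step, it helps to make it idempotent so that running it twice does not append the same lines twice. A minimal sketch, assuming the same `#oozie` block shown above; `add_oozie_env` is a hypothetical helper and must be run as root when the target is /etc/profile.

```shell
# Sketch: append the OOZIE_HOME/PATH exports to a profile file only once.

# add_oozie_env PROFILE OOZIE_HOME_DIR
add_oozie_env() {
  local profile="$1" oozie_home="$2"
  # Skip if an OOZIE_HOME export is already present.
  if grep -q '^export OOZIE_HOME=' "$profile" 2>/dev/null; then
    return 0
  fi
  {
    echo '#oozie'
    echo "export OOZIE_HOME=${oozie_home}"
    # Single quotes: write $PATH and $OOZIE_HOME literally, expanded at login.
    echo 'export PATH=$PATH:$OOZIE_HOME/bin'
  } >> "$profile"
}

# Example (as root):
# add_oozie_env /etc/profile /home/hadoop/app/oozie && source /etc/profile
```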

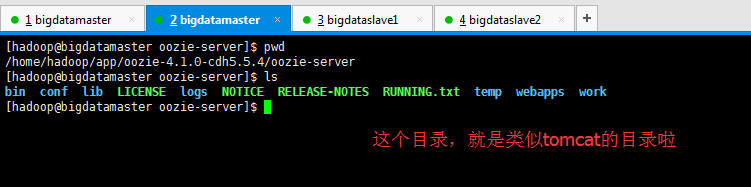

A first look at the Oozie directory structure

[hadoop@bigdatamaster app]$ cd oozie

[hadoop@bigdatamaster oozie]$ pwd

/home/hadoop/app/oozie

[hadoop@bigdatamaster oozie]$ ll

total

drwxr-xr-x hadoop hadoop Apr bin

drwxr-xr-x hadoop hadoop Apr conf

drwxr-xr-x hadoop hadoop Apr docs

drwxr-xr-x hadoop hadoop Apr lib

drwxr-xr-x hadoop hadoop Apr libtools

-rw-r--r-- hadoop hadoop Apr LICENSE.txt

-rw-r--r-- hadoop hadoop Apr NOTICE.txt

drwxr-xr-x hadoop hadoop Apr oozie-core

-rwxr-xr-x hadoop hadoop Apr oozie-examples.tar.gz

-rwxr-xr-x hadoop hadoop Apr oozie-hadooplibs-4.1.0-cdh5.5.4.tar.gz

drwxr-xr-x hadoop hadoop Apr oozie-server

-r--r--r-- hadoop hadoop Apr oozie-sharelib-4.1.0-cdh5.5.4.tar.gz

-r--r--r-- hadoop hadoop Apr oozie-sharelib-4.1.0-cdh5.5.4-yarn.tar.gz

-rwxr-xr-x hadoop hadoop Apr oozie.war

-rw-r--r-- hadoop hadoop Apr release-log.txt

drwxr-xr-x hadoop hadoop Apr src

[hadoop@bigdatamaster oozie]$

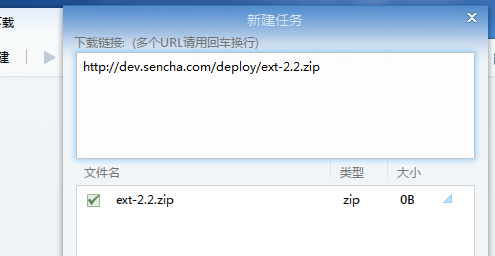

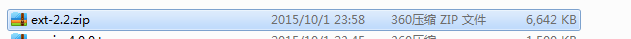

Click the link to download it, then upload it to the server.

http://dev.sencha.com/deploy/ext-2.2.zip

Using a download manager such as Xunlei (Thunder) is recommended.

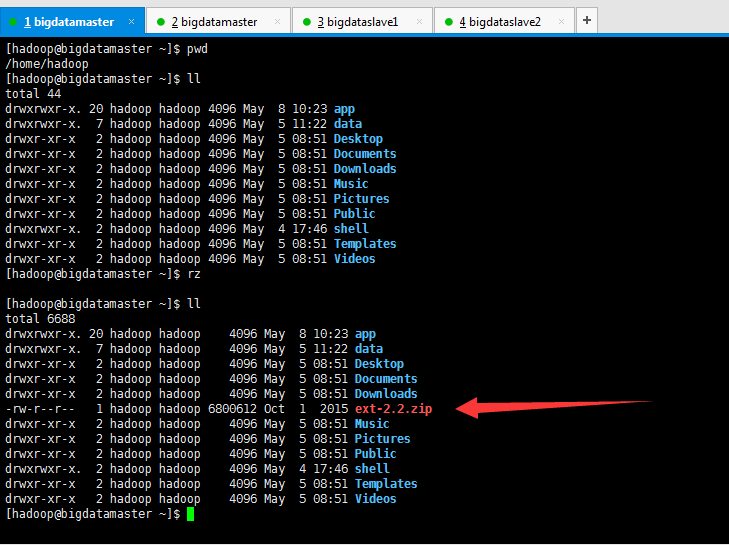

For now I uploaded it to /home/hadoop; in the end it only needs to be placed in $OOZIE_HOME/libext. (That directory does not exist yet and has to be created.)

[hadoop@bigdatamaster ~]$ pwd

/home/hadoop

[hadoop@bigdatamaster ~]$ ll

total

drwxrwxr-x. hadoop hadoop May : app

drwxrwxr-x. hadoop hadoop May : data

drwxr-xr-x hadoop hadoop May : Desktop

drwxr-xr-x hadoop hadoop May : Documents

drwxr-xr-x hadoop hadoop May : Downloads

drwxr-xr-x hadoop hadoop May : Music

drwxr-xr-x hadoop hadoop May : Pictures

drwxr-xr-x hadoop hadoop May : Public

drwxrwxr-x. hadoop hadoop May : shell

drwxr-xr-x hadoop hadoop May : Templates

drwxr-xr-x hadoop hadoop May : Videos

[hadoop@bigdatamaster ~]$ rz

[hadoop@bigdatamaster ~]$ ll

total

drwxrwxr-x. hadoop hadoop May : app

drwxrwxr-x. hadoop hadoop May : data

drwxr-xr-x hadoop hadoop May : Desktop

drwxr-xr-x hadoop hadoop May : Documents

drwxr-xr-x hadoop hadoop May : Downloads

-rw-r--r-- hadoop hadoop Oct ext-2.2.zip

drwxr-xr-x hadoop hadoop May : Music

drwxr-xr-x hadoop hadoop May : Pictures

drwxr-xr-x hadoop hadoop May : Public

drwxrwxr-x. hadoop hadoop May : shell

drwxr-xr-x hadoop hadoop May : Templates

drwxr-xr-x hadoop hadoop May : Videos

[hadoop@bigdatamaster ~]$

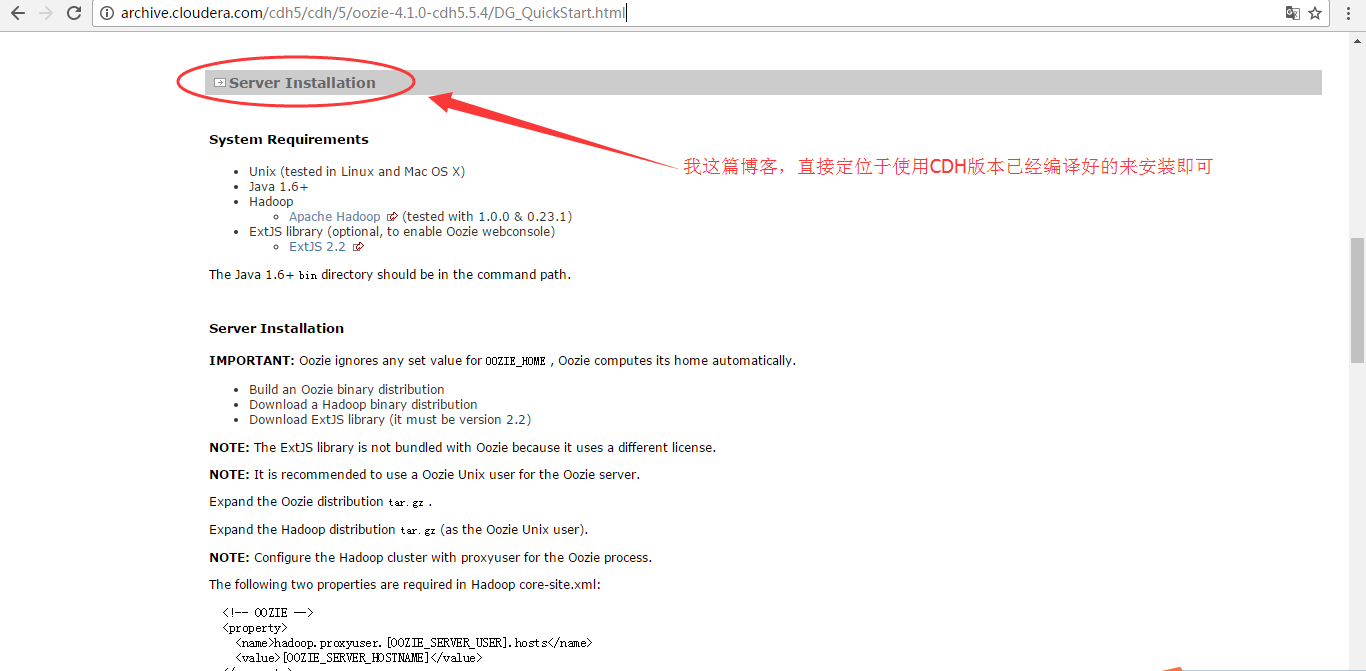

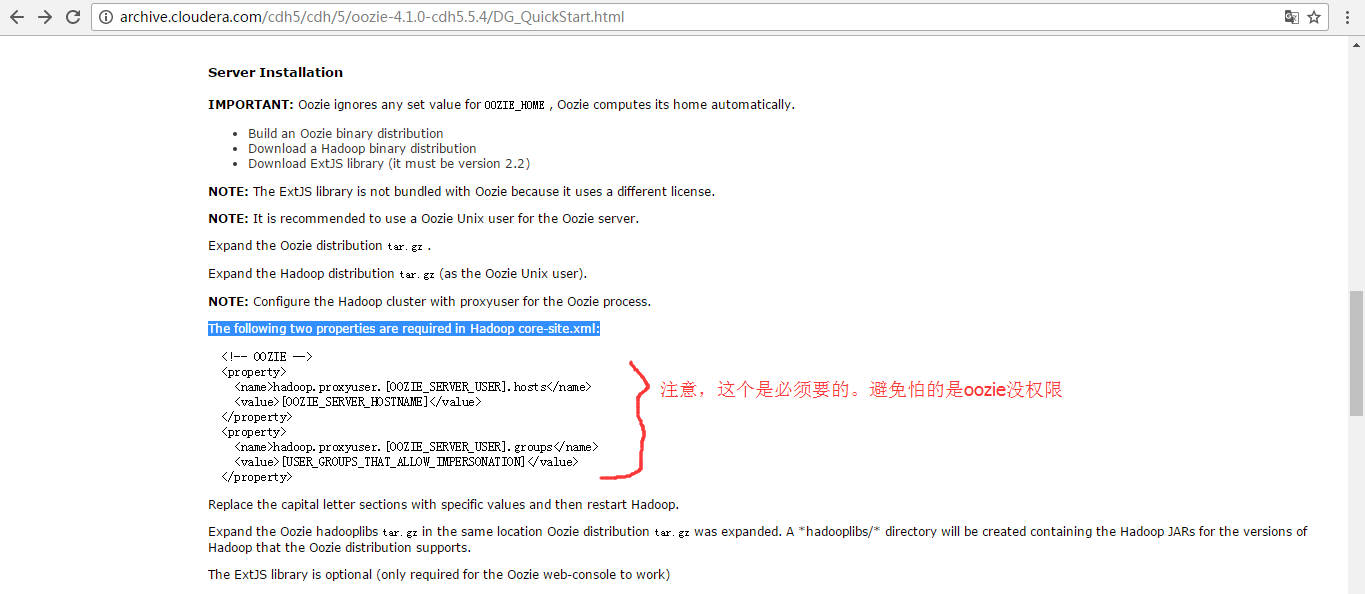

<!-- OOZIE -->

<property>

<name>hadoop.proxyuser.[OOZIE_SERVER_USER].hosts</name>

<value>[OOZIE_SERVER_HOSTNAME]</value>

</property>

<property>

<name>hadoop.proxyuser.[OOZIE_SERVER_USER].groups</name>

<value>[USER_GROUPS_THAT_ALLOW_IMPERSONATION]</value>

</property>

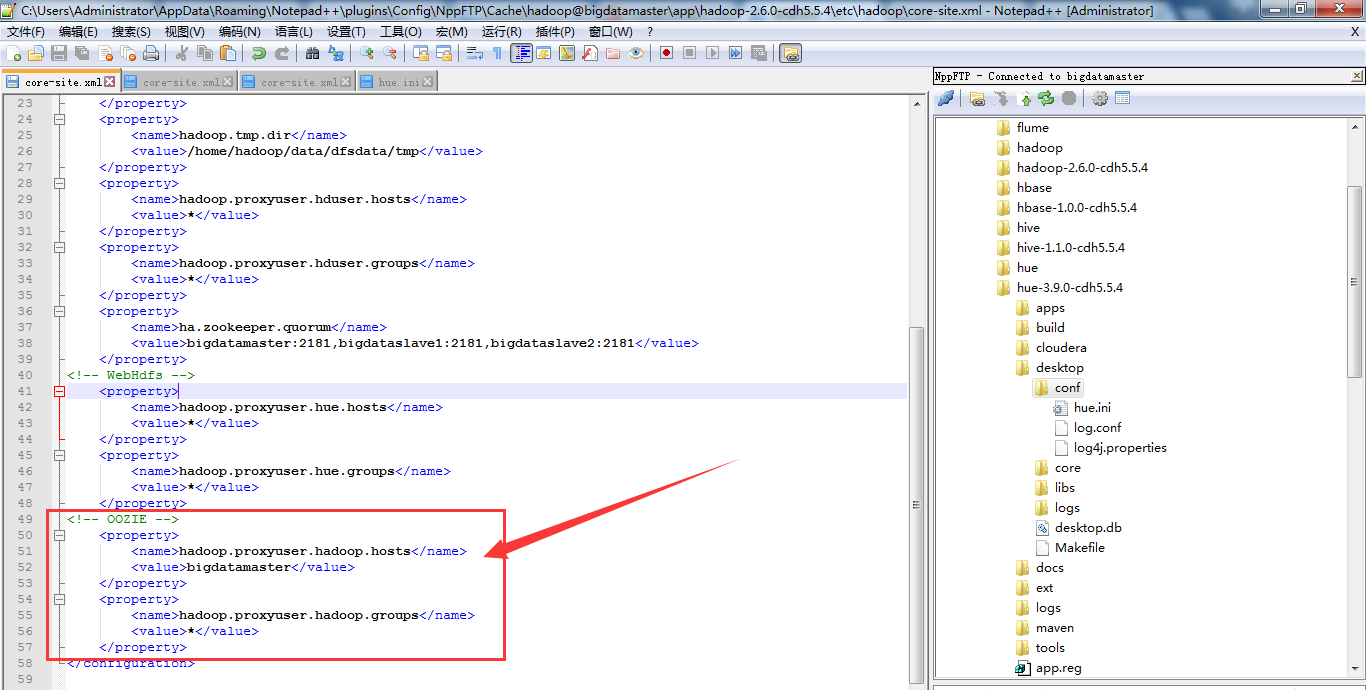

In my case:

<property>

<name>hadoop.proxyuser.hadoop.hosts</name>

<value>bigdatamaster</value>

</property>

<property>

<name>hadoop.proxyuser.hadoop.groups</name>

<value>*</value>

</property>

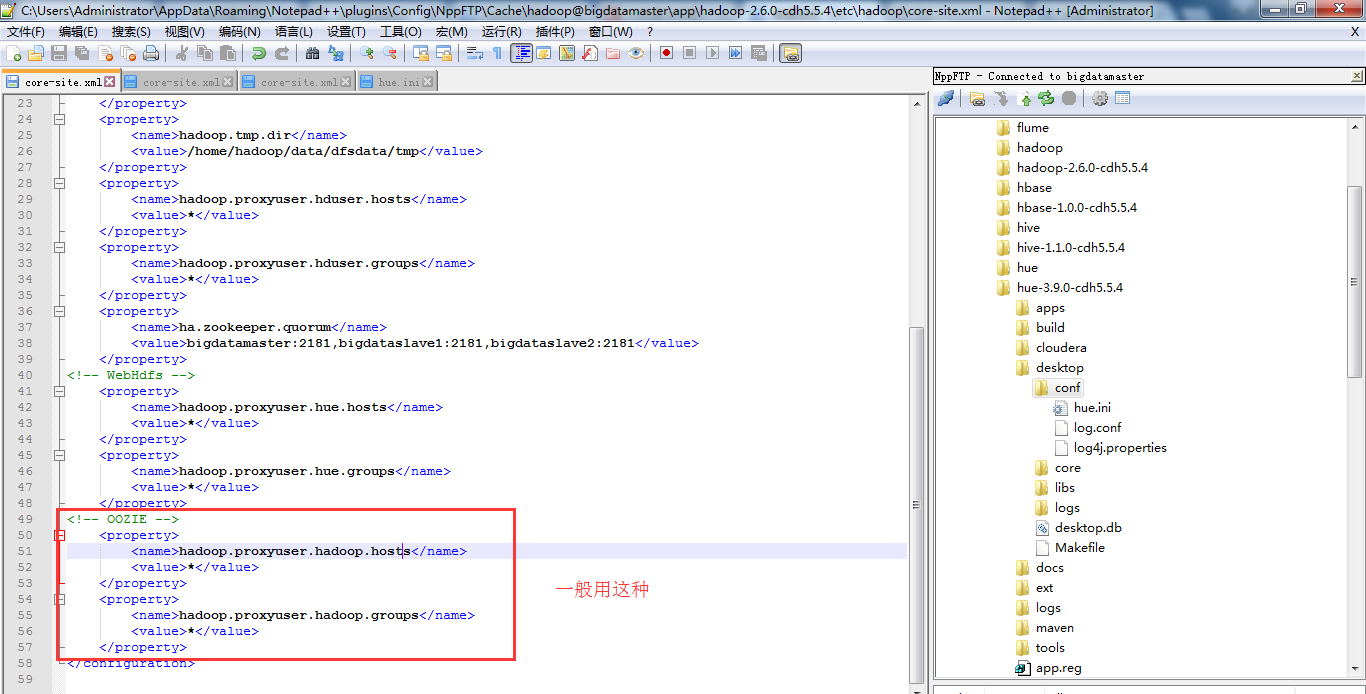

Or (the more common choice):

<property>

<name>hadoop.proxyuser.hadoop.hosts</name>

<value>*</value>

</property>

<property>

<name>hadoop.proxyuser.hadoop.groups</name>

<value>*</value>

</property>

This only needs to be set for the machine where Oozie is installed; in my setup, Oozie is installed only on bigdatamaster.
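These `hadoop.proxyuser.*` properties belong inside the `<configuration>` element of Hadoop's core-site.xml. As a small sketch, the function below prints the two properties for a given user, hosts value, and groups value; `proxyuser_xml` is a hypothetical helper, and you still merge its output into core-site.xml yourself.

```shell
# Sketch: print the proxyuser properties Oozie needs, for pasting into core-site.xml.

# proxyuser_xml USER HOSTS GROUPS
proxyuser_xml() {
  local user="$1" hosts="$2" groups="$3"
  # Unquoted EOF so ${user} etc. expand; a "*" inside a variable is not globbed here.
  cat <<EOF
<!-- OOZIE -->
<property>
  <name>hadoop.proxyuser.${user}.hosts</name>
  <value>${hosts}</value>
</property>
<property>
  <name>hadoop.proxyuser.${user}.groups</name>
  <value>${groups}</value>
</property>
EOF
}

# proxyuser_xml hadoop bigdatamaster '*'   # the "in my case" variant
# proxyuser_xml hadoop '*' '*'             # the more common variant
```

Quote the `*` on the command line so the shell does not expand it into file names.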

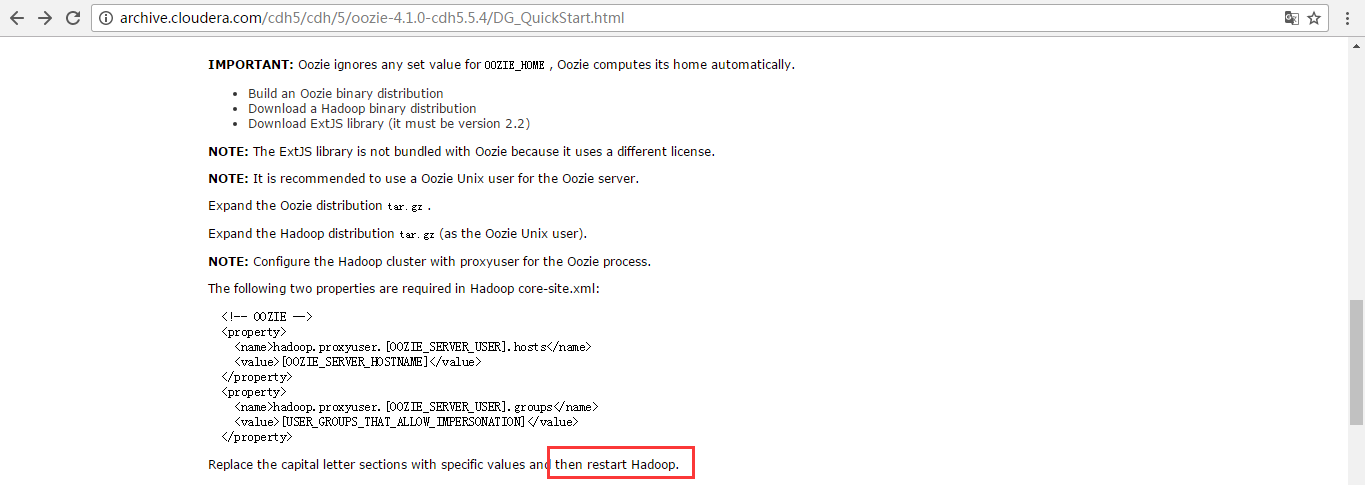

Note: make this change first and then restart Hadoop; otherwise it will not take effect.

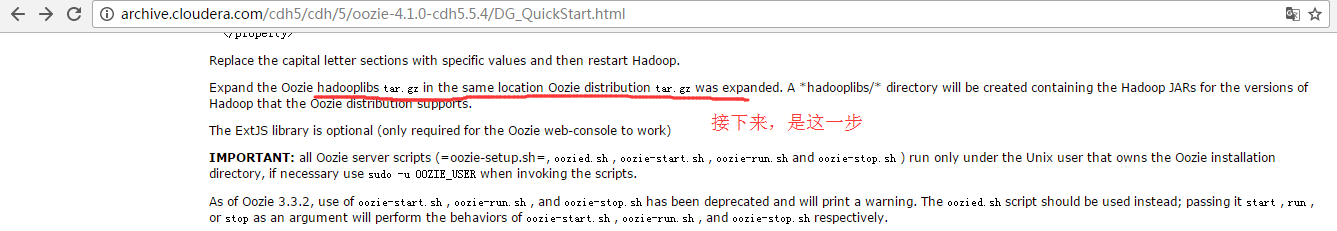

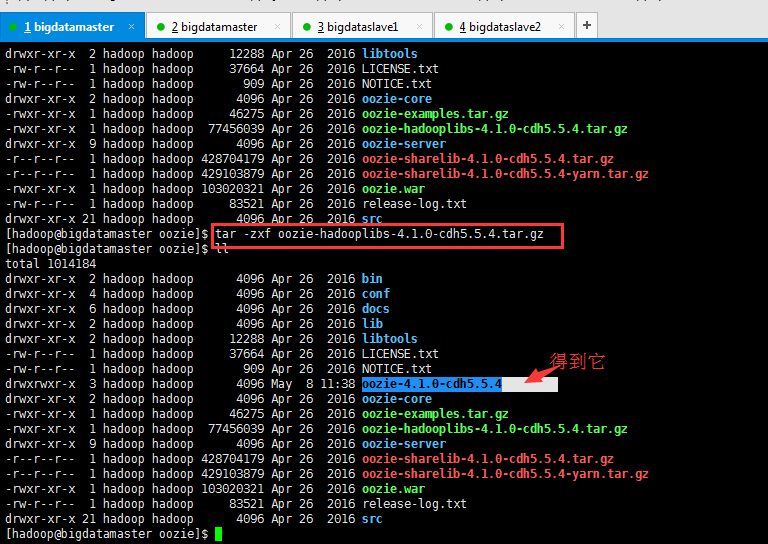

Next, extract the hadooplibs tar.gz.

Expand the Oozie hadooplibs tar.gz in the same location Oozie distribution tar.gz was expanded

In my case, this file is oozie-hadooplibs-4.1.0-cdh5.5.4.tar.gz.

[hadoop@bigdatamaster oozie]$ pwd

/home/hadoop/app/oozie

[hadoop@bigdatamaster oozie]$ ll

total

drwxr-xr-x hadoop hadoop Apr bin

drwxr-xr-x hadoop hadoop Apr conf

drwxr-xr-x hadoop hadoop Apr docs

drwxr-xr-x hadoop hadoop Apr lib

drwxr-xr-x hadoop hadoop Apr libtools

-rw-r--r-- hadoop hadoop Apr LICENSE.txt

-rw-r--r-- hadoop hadoop Apr NOTICE.txt

drwxr-xr-x hadoop hadoop Apr oozie-core

-rwxr-xr-x hadoop hadoop Apr oozie-examples.tar.gz

-rwxr-xr-x hadoop hadoop Apr oozie-hadooplibs-4.1.0-cdh5.5.4.tar.gz

drwxr-xr-x hadoop hadoop Apr oozie-server

-r--r--r-- hadoop hadoop Apr oozie-sharelib-4.1.0-cdh5.5.4.tar.gz

-r--r--r-- hadoop hadoop Apr oozie-sharelib-4.1.0-cdh5.5.4-yarn.tar.gz

-rwxr-xr-x hadoop hadoop Apr oozie.war

-rw-r--r-- hadoop hadoop Apr release-log.txt

drwxr-xr-x hadoop hadoop Apr src

[hadoop@bigdatamaster oozie]$ tar -zxf oozie-hadooplibs-4.1.0-cdh5.5.4.tar.gz

[hadoop@bigdatamaster oozie]$ ll

total

drwxr-xr-x hadoop hadoop Apr bin

drwxr-xr-x hadoop hadoop Apr conf

drwxr-xr-x hadoop hadoop Apr docs

drwxr-xr-x hadoop hadoop Apr lib

drwxr-xr-x hadoop hadoop Apr libtools

-rw-r--r-- hadoop hadoop Apr LICENSE.txt

-rw-r--r-- hadoop hadoop Apr NOTICE.txt

drwxrwxr-x hadoop hadoop May : oozie-4.1.0-cdh5.5.4

drwxr-xr-x hadoop hadoop Apr oozie-core

-rwxr-xr-x hadoop hadoop Apr oozie-examples.tar.gz

-rwxr-xr-x hadoop hadoop Apr oozie-hadooplibs-4.1.0-cdh5.5.4.tar.gz

drwxr-xr-x hadoop hadoop Apr oozie-server

-r--r--r-- hadoop hadoop Apr oozie-sharelib-4.1.0-cdh5.5.4.tar.gz

-r--r--r-- hadoop hadoop Apr oozie-sharelib-4.1.0-cdh5.5.4-yarn.tar.gz

-rwxr-xr-x hadoop hadoop Apr oozie.war

-rw-r--r-- hadoop hadoop Apr release-log.txt

drwxr-xr-x hadoop hadoop Apr src

[hadoop@bigdatamaster oozie]$

A *hadooplibs/* directory will be created containing the Hadoop JARs for the versions of Hadoop that the Oozie distribution supports.

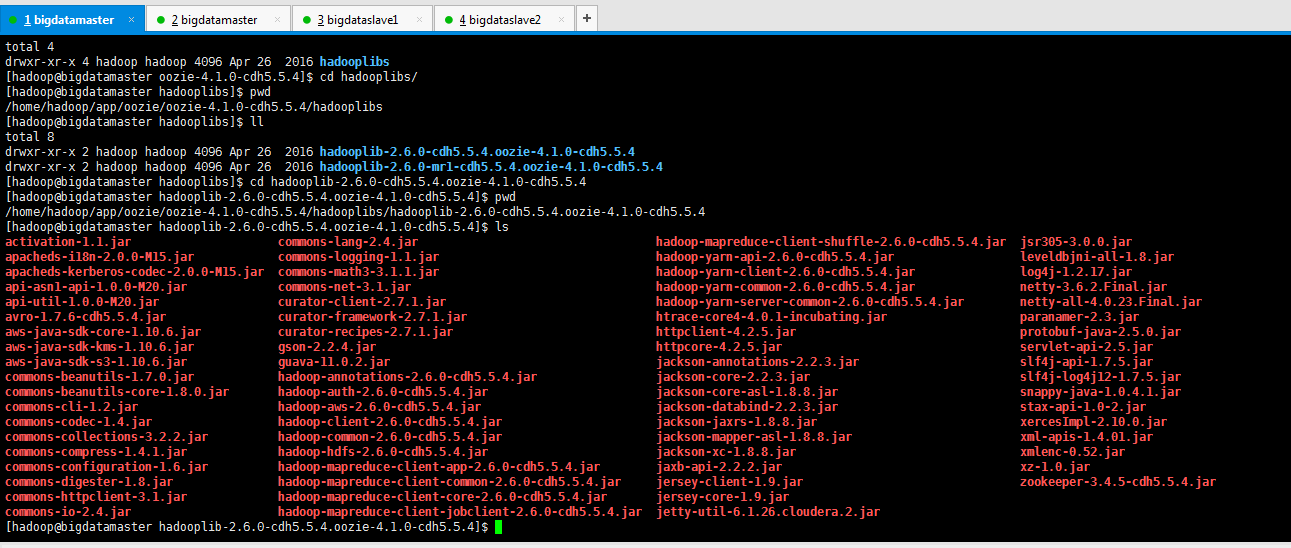

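Two library sets are provided because Oozie supports both MR1 and MR2 (YARN). Our cluster runs on YARN. The directories were generated successfully: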

[hadoop@bigdatamaster oozie-4.1.0-cdh5.5.4]$ pwd

/home/hadoop/app/oozie/oozie-4.1.0-cdh5.5.4

[hadoop@bigdatamaster oozie-4.1.0-cdh5.5.4]$ ll

total

drwxr-xr-x hadoop hadoop Apr hadooplibs

[hadoop@bigdatamaster oozie-4.1.0-cdh5.5.4]$ cd hadooplibs/

[hadoop@bigdatamaster hadooplibs]$ pwd

/home/hadoop/app/oozie/oozie-4.1.0-cdh5.5.4/hadooplibs

[hadoop@bigdatamaster hadooplibs]$ ll

total

drwxr-xr-x hadoop hadoop Apr hadooplib-2.6.0-cdh5.5.4.oozie-4.1.0-cdh5.5.4

drwxr-xr-x hadoop hadoop Apr hadooplib-2.6.0-mr1-cdh5.5.4.oozie-4.1.0-cdh5.5.4

[hadoop@bigdatamaster hadooplibs]$ cd hadooplib-2.6.0-cdh5.5.4.oozie-4.1.0-cdh5.5.4

[hadoop@bigdatamaster hadooplib-2.6.0-cdh5.5.4.oozie-4.1.0-cdh5.5.4]$ pwd

/home/hadoop/app/oozie/oozie-4.1.0-cdh5.5.4/hadooplibs/hadooplib-2.6.0-cdh5.5.4.oozie-4.1.0-cdh5.5.4

[hadoop@bigdatamaster hadooplib-2.6.0-cdh5.5.4.oozie-4.1.0-cdh5.5.4]$ ls

activation-1.1.jar commons-lang-2.4.jar hadoop-mapreduce-client-shuffle-2.6.0-cdh5.5.4.jar jsr305-3.0.0.jar

apacheds-i18n-2.0.0-M15.jar commons-logging-1.1.jar hadoop-yarn-api-2.6.0-cdh5.5.4.jar leveldbjni-all-1.8.jar

apacheds-kerberos-codec-2.0.0-M15.jar commons-math3-3.1.1.jar hadoop-yarn-client-2.6.0-cdh5.5.4.jar log4j-1.2.17.jar

api-asn1-api-1.0.0-M20.jar commons-net-3.1.jar hadoop-yarn-common-2.6.0-cdh5.5.4.jar netty-3.6.2.Final.jar

api-util-1.0.0-M20.jar curator-client-2.7.1.jar hadoop-yarn-server-common-2.6.0-cdh5.5.4.jar netty-all-4.0.23.Final.jar

avro-1.7.6-cdh5.5.4.jar curator-framework-2.7.1.jar htrace-core4-4.0.1-incubating.jar paranamer-2.3.jar

aws-java-sdk-core-1.10.6.jar curator-recipes-2.7.1.jar httpclient-4.2.5.jar protobuf-java-2.5.0.jar

aws-java-sdk-kms-1.10.6.jar gson-2.2.4.jar httpcore-4.2.5.jar servlet-api-2.5.jar

aws-java-sdk-s3-1.10.6.jar guava-11.0.2.jar jackson-annotations-2.2.3.jar slf4j-api-1.7.5.jar

commons-beanutils-1.7.0.jar hadoop-annotations-2.6.0-cdh5.5.4.jar jackson-core-2.2.3.jar slf4j-log4j12-1.7.5.jar

commons-beanutils-core-1.8.0.jar hadoop-auth-2.6.0-cdh5.5.4.jar jackson-core-asl-1.8.8.jar snappy-java-1.0.4.1.jar

commons-cli-1.2.jar hadoop-aws-2.6.0-cdh5.5.4.jar jackson-databind-2.2.3.jar stax-api-1.0-2.jar

commons-codec-1.4.jar hadoop-client-2.6.0-cdh5.5.4.jar jackson-jaxrs-1.8.8.jar xercesImpl-2.10.0.jar

commons-collections-3.2.2.jar hadoop-common-2.6.0-cdh5.5.4.jar jackson-mapper-asl-1.8.8.jar xml-apis-1.4.01.jar

commons-compress-1.4.1.jar hadoop-hdfs-2.6.0-cdh5.5.4.jar jackson-xc-1.8.8.jar xmlenc-0.52.jar

commons-configuration-1.6.jar hadoop-mapreduce-client-app-2.6.0-cdh5.5.4.jar jaxb-api-2.2.2.jar xz-1.0.jar

commons-digester-1.8.jar hadoop-mapreduce-client-common-2.6.0-cdh5.5.4.jar jersey-client-1.9.jar zookeeper-3.4.5-cdh5.5.4.jar

commons-httpclient-3.1.jar hadoop-mapreduce-client-core-2.6.0-cdh5.5.4.jar jersey-core-1.9.jar

commons-io-2.4.jar hadoop-mapreduce-client-jobclient-2.6.0-cdh5.5.4.jar jetty-util-6.1.26.cloudera.2.jar

[hadoop@bigdatamaster hadooplib-2.6.0-cdh5.5.4.oozie-4.1.0-cdh5.5.4]$
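Since hadooplibs contains one directory for YARN and one marked `mr1`, a script can pick the YARN one by skipping names containing `-mr1-`. This is a sketch based only on the two directory names shown above; `find_yarn_hadooplib` is a hypothetical helper, and if several non-MR1 directories exist it prints the last one in glob order.

```shell
# Sketch: locate the YARN (MR2) hadooplib directory under $OOZIE_HOME,
# skipping the MR1 variant whose name contains "-mr1-".

# find_yarn_hadooplib OOZIE_HOME
find_yarn_hadooplib() {
  local oozie_home="$1" dir found=""
  for dir in "$oozie_home"/oozie-*/hadooplibs/hadooplib-*; do
    [ -d "$dir" ] || continue          # glob did not match anything
    case "$(basename "$dir")" in
      *-mr1-*) continue ;;             # MR1 build, not used on YARN
    esac
    found="$dir"
  done
  if [ -z "$found" ]; then
    echo "no YARN hadooplib found under $oozie_home" >&2
    return 1
  fi
  echo "$found"
}

# Example: find_yarn_hadooplib /home/hadoop/app/oozie
```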

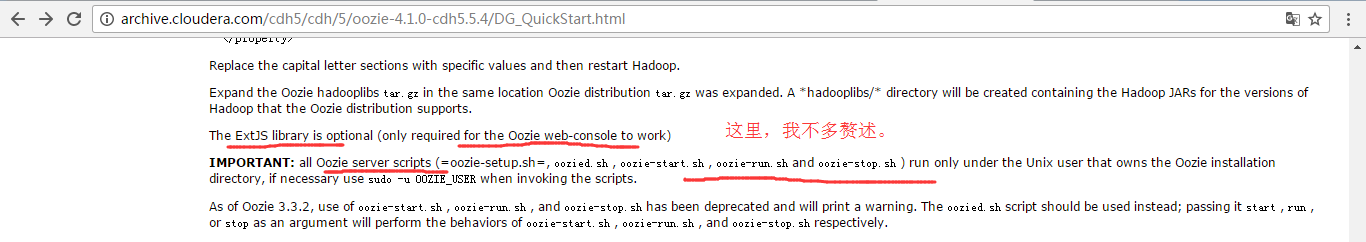

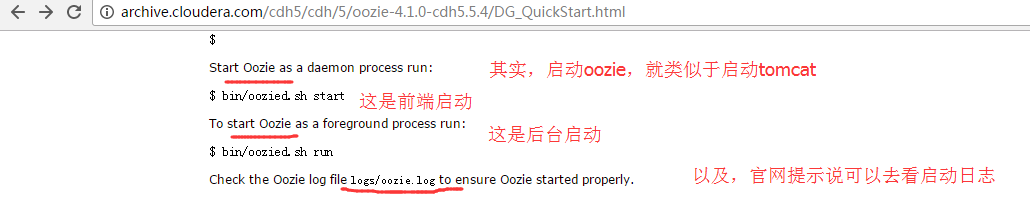

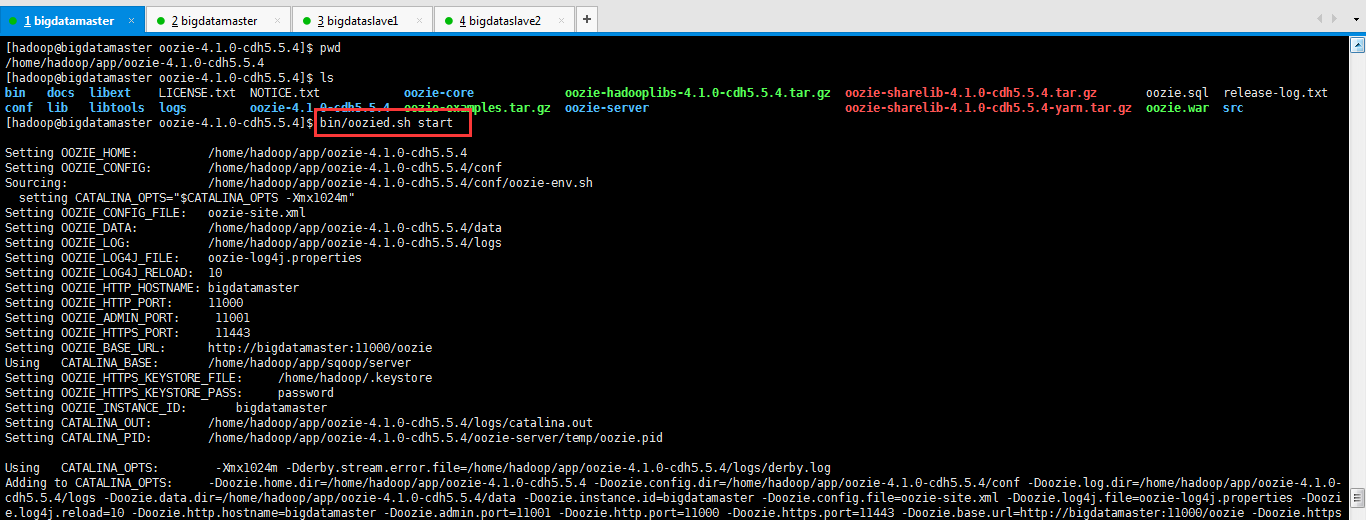

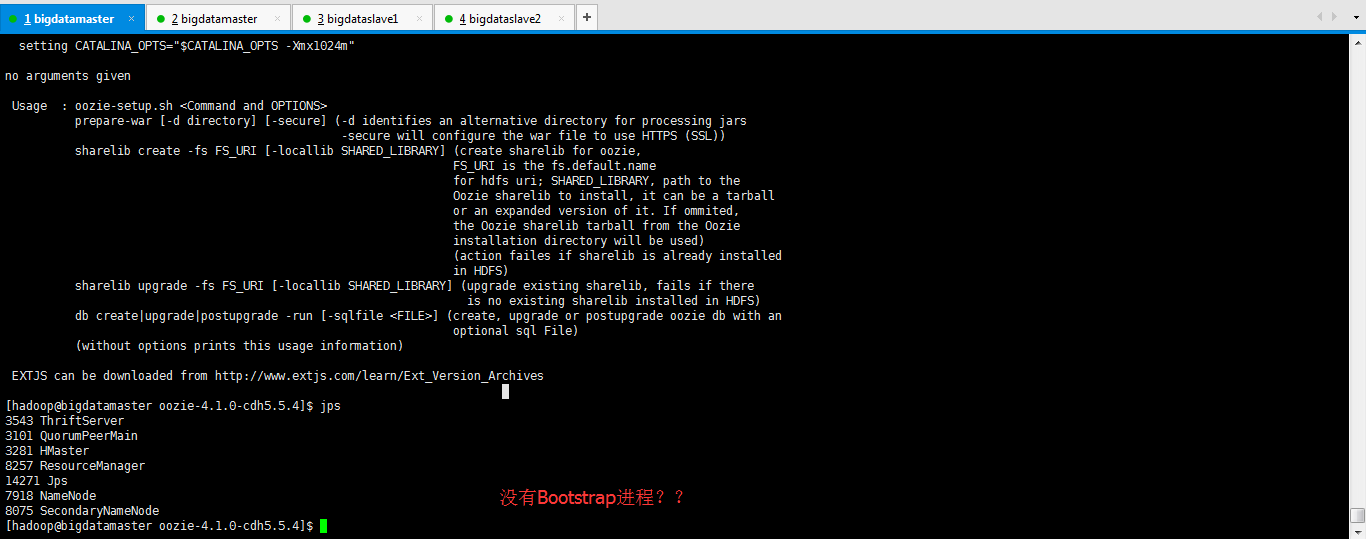

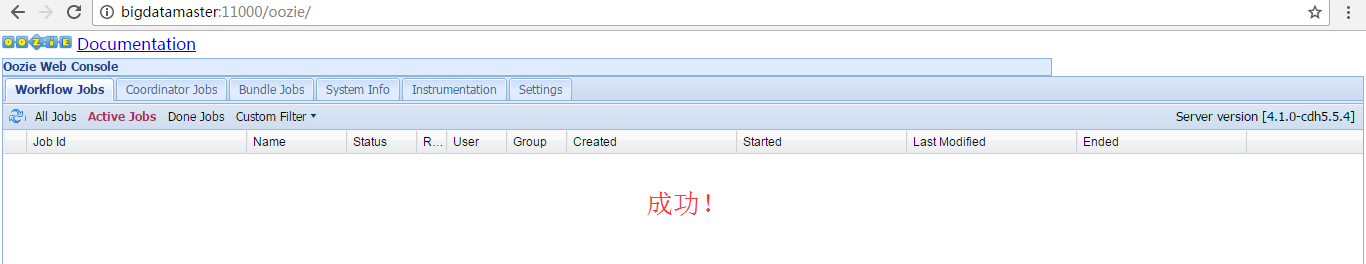

The ExtJS library is optional (only required for the Oozie web-console to work).

IMPORTANT: all Oozie server scripts (oozie-setup.sh, oozied.sh, oozie-start.sh, oozie-run.sh and oozie-stop.sh) run only under the Unix user that owns the Oozie installation directory; if necessary use sudo -u OOZIE_USER when invoking the scripts.

As of Oozie 3.3.2, use of oozie-start.sh, oozie-run.sh, and oozie-stop.sh has been deprecated and will print a warning. The oozied.sh script should be used instead; passing it start, run, or stop as an argument will perform the behaviors of oozie-start.sh, oozie-run.sh, and oozie-stop.sh respectively.

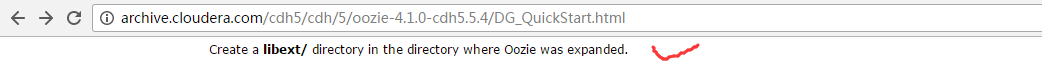

Create a libext/ directory in the directory where Oozie was expanded.

This shows that the libext directory does not exist after installation.

So we have to create it with mkdir libext:

[hadoop@bigdatamaster oozie]$ mkdir libext

[hadoop@bigdatamaster oozie]$ ll

total

drwxr-xr-x hadoop hadoop Apr bin

drwxr-xr-x hadoop hadoop Apr conf

drwxr-xr-x hadoop hadoop Apr docs

drwxr-xr-x hadoop hadoop Apr lib

drwxrwxr-x hadoop hadoop May : libext

drwxr-xr-x hadoop hadoop Apr libtools

-rw-r--r-- hadoop hadoop Apr LICENSE.txt

-rw-r--r-- hadoop hadoop Apr NOTICE.txt

drwxrwxr-x hadoop hadoop May : oozie-4.1.0-cdh5.5.4

drwxr-xr-x hadoop hadoop Apr oozie-core

-rwxr-xr-x hadoop hadoop Apr oozie-examples.tar.gz

-rwxr-xr-x hadoop hadoop Apr oozie-hadooplibs-4.1.0-cdh5.5.4.tar.gz

drwxr-xr-x hadoop hadoop Apr oozie-server

-r--r--r-- hadoop hadoop Apr oozie-sharelib-4.1.0-cdh5.5.4.tar.gz

-r--r--r-- hadoop hadoop Apr oozie-sharelib-4.1.0-cdh5.5.4-yarn.tar.gz

-rwxr-xr-x hadoop hadoop Apr oozie.war

-rw-r--r-- hadoop hadoop Apr release-log.txt

drwxr-xr-x hadoop hadoop Apr src

[hadoop@bigdatamaster oozie]$

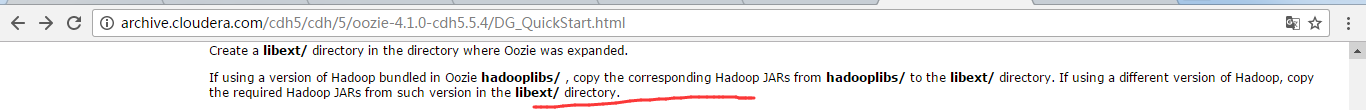

Once the directory exists, copy all the Hadoop JARs from hadooplibs into the new libext directory.

If using a version of Hadoop bundled in Oozie hadooplibs/ , copy the corresponding Hadoop JARs from hadooplibs/ to the libext/ directory. If using a different version of Hadoop, copy the required Hadoop JARs from such version in the libext/ directory.

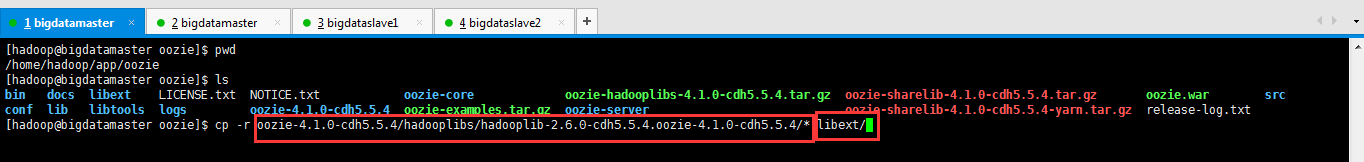

[hadoop@bigdatamaster oozie]$ pwd

/home/hadoop/app/oozie

[hadoop@bigdatamaster oozie]$ ls

bin docs libext LICENSE.txt NOTICE.txt oozie-core oozie-hadooplibs-4.1.0-cdh5.5.4.tar.gz oozie-sharelib-4.1.0-cdh5.5.4.tar.gz oozie.war src

conf lib libtools logs oozie-4.1.0-cdh5.5.4 oozie-examples.tar.gz oozie-server oozie-sharelib-4.1.0-cdh5.5.4-yarn.tar.gz release-log.txt

[hadoop@bigdatamaster oozie]$ cp -r oozie-4.1.0-cdh5.5.4/hadooplibs/hadooplib-2.6.0-cdh5.5.4.oozie-4.1.0-cdh5.5.4/* libext/

[hadoop@bigdatamaster oozie]$

Check that the copy succeeded.
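Rather than comparing two long `ls` listings by eye, a quick check can confirm that every file in the source hadooplib directory also exists in libext. A minimal sketch; `verify_copy` is a hypothetical helper that only checks file names, not contents.

```shell
# Sketch: report any file from SRC_DIR that is missing in DEST_DIR.

# verify_copy SRC_DIR DEST_DIR
verify_copy() {
  local src="$1" dest="$2" f missing=0
  for f in "$src"/*; do
    [ -e "$f" ] || continue            # empty source directory
    if [ ! -e "$dest/$(basename "$f")" ]; then
      echo "missing: $(basename "$f")" >&2
      missing=$((missing + 1))
    fi
  done
  [ "$missing" -eq 0 ]                 # exit status 0 only if nothing is missing
}

# Example (run from $OOZIE_HOME):
# verify_copy oozie-4.1.0-cdh5.5.4/hadooplibs/hadooplib-2.6.0-cdh5.5.4.oozie-4.1.0-cdh5.5.4 libext && echo "copy OK"
```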

Next, copy ext-2.2.zip, which we temporarily uploaded to /home/hadoop earlier, into the $OOZIE_HOME/libext directory.

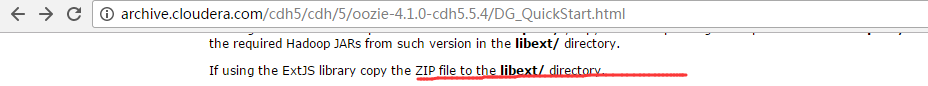

If using the ExtJS library copy the ZIP file to the libext/ directory.

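The official docs phrase this modestly with an "if", but in practice it is essential: the Oozie web console is built on ExtJS and will not work without it.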

[hadoop@bigdatamaster libext]$ pwd

/home/hadoop/app/oozie/libext

[hadoop@bigdatamaster libext]$ cp /home/hadoop/ext-2.2.zip /home/hadoop/app/oozie/libext/

[hadoop@bigdatamaster libext]$ ls

activation-1.1.jar commons-lang-2.4.jar hadoop-mapreduce-client-jobclient-2.6.0-cdh5.5.4.jar jetty-util-6.1.26.cloudera.2.jar

apacheds-i18n-2.0.0-M15.jar commons-logging-1.1.jar hadoop-mapreduce-client-shuffle-2.6.0-cdh5.5.4.jar jsr305-3.0.0.jar

apacheds-kerberos-codec-2.0.0-M15.jar commons-math3-3.1.1.jar hadoop-yarn-api-2.6.0-cdh5.5.4.jar leveldbjni-all-1.8.jar

api-asn1-api-1.0.0-M20.jar commons-net-3.1.jar hadoop-yarn-client-2.6.0-cdh5.5.4.jar log4j-1.2.17.jar

api-util-1.0.0-M20.jar curator-client-2.7.1.jar hadoop-yarn-common-2.6.0-cdh5.5.4.jar netty-3.6.2.Final.jar

avro-1.7.6-cdh5.5.4.jar curator-framework-2.7.1.jar hadoop-yarn-server-common-2.6.0-cdh5.5.4.jar netty-all-4.0.23.Final.jar

aws-java-sdk-core-1.10.6.jar curator-recipes-2.7.1.jar htrace-core4-4.0.1-incubating.jar paranamer-2.3.jar

aws-java-sdk-kms-1.10.6.jar ext-2.2.zip httpclient-4.2.5.jar protobuf-java-2.5.0.jar

aws-java-sdk-s3-1.10.6.jar gson-2.2.4.jar httpcore-4.2.5.jar servlet-api-2.5.jar

commons-beanutils-1.7.0.jar guava-11.0.2.jar jackson-annotations-2.2.3.jar slf4j-api-1.7.5.jar

commons-beanutils-core-1.8.0.jar hadoop-annotations-2.6.0-cdh5.5.4.jar jackson-core-2.2.3.jar slf4j-log4j12-1.7.5.jar

commons-cli-1.2.jar hadoop-auth-2.6.0-cdh5.5.4.jar jackson-core-asl-1.8.8.jar snappy-java-1.0.4.1.jar

commons-codec-1.4.jar hadoop-aws-2.6.0-cdh5.5.4.jar jackson-databind-2.2.3.jar stax-api-1.0-2.jar

commons-collections-3.2.2.jar hadoop-client-2.6.0-cdh5.5.4.jar jackson-jaxrs-1.8.8.jar xercesImpl-2.10.0.jar

commons-compress-1.4.1.jar hadoop-common-2.6.0-cdh5.5.4.jar jackson-mapper-asl-1.8.8.jar xml-apis-1.4.01.jar

commons-configuration-1.6.jar hadoop-hdfs-2.6.0-cdh5.5.4.jar jackson-xc-1.8.8.jar xmlenc-0.52.jar

commons-digester-1.8.jar hadoop-mapreduce-client-app-2.6.0-cdh5.5.4.jar jaxb-api-2.2.2.jar xz-1.0.jar

commons-httpclient-3.1.jar hadoop-mapreduce-client-common-2.6.0-cdh5.5.4.jar jersey-client-1.9.jar zookeeper-3.4.5-cdh5.5.4.jar

commons-io-2.4.jar hadoop-mapreduce-client-core-2.6.0-cdh5.5.4.jar jersey-core-1.9.jar

[hadoop@bigdatamaster libext]$

OK. With that done, the ext-2.2.zip under /home/hadoop is no longer needed and can be deleted.
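The whole libext preparation (create the directory, copy the YARN Hadoop JARs, copy the ExtJS zip) can be condensed into one function. This is a sketch of the manual steps above, not an Oozie tool; `populate_libext` is a hypothetical helper and the paths in the example are the ones used in this post.

```shell
# Sketch: build $OOZIE_HOME/libext from a hadooplib directory and the ExtJS zip.

# populate_libext OOZIE_HOME HADOOPLIB_DIR EXTJS_ZIP
populate_libext() {
  local oozie_home="$1" hadooplib="$2" extjs_zip="$3"
  local libext="$oozie_home/libext"
  mkdir -p "$libext"                 # libext/ does not exist after unpacking
  cp "$hadooplib"/*.jar "$libext"/   # Hadoop JARs for the cluster's version
  cp "$extjs_zip" "$libext"/         # ExtJS 2.2, used by the web console
}

# Example:
# populate_libext /home/hadoop/app/oozie \
#   /home/hadoop/app/oozie/oozie-4.1.0-cdh5.5.4/hadooplibs/hadooplib-2.6.0-cdh5.5.4.oozie-4.1.0-cdh5.5.4 \
#   /home/hadoop/ext-2.2.zip
```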

Now that all the JARs are ready, the following steps show how the contents of libext get packaged into Oozie.

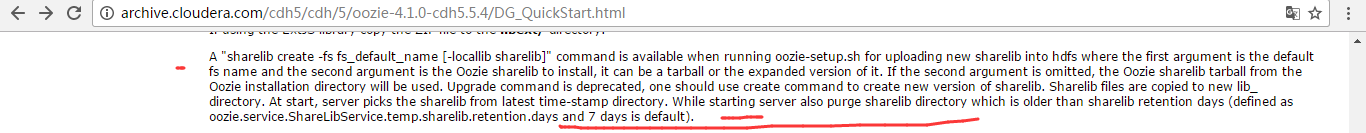

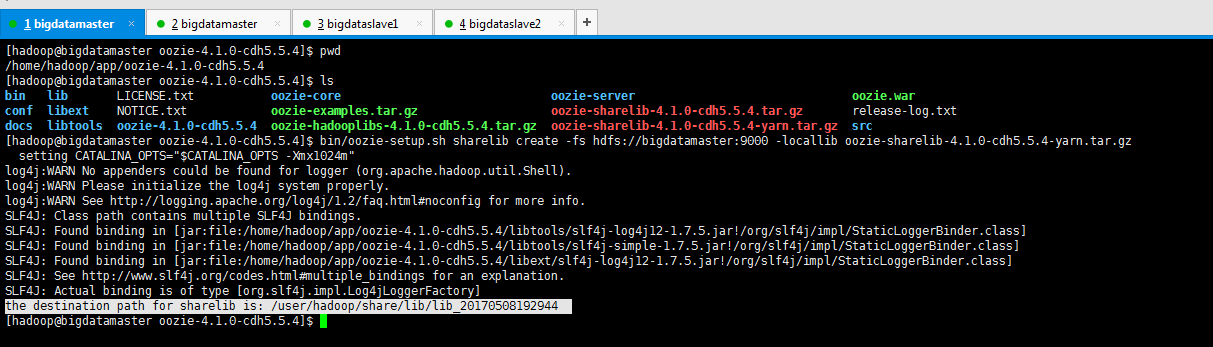

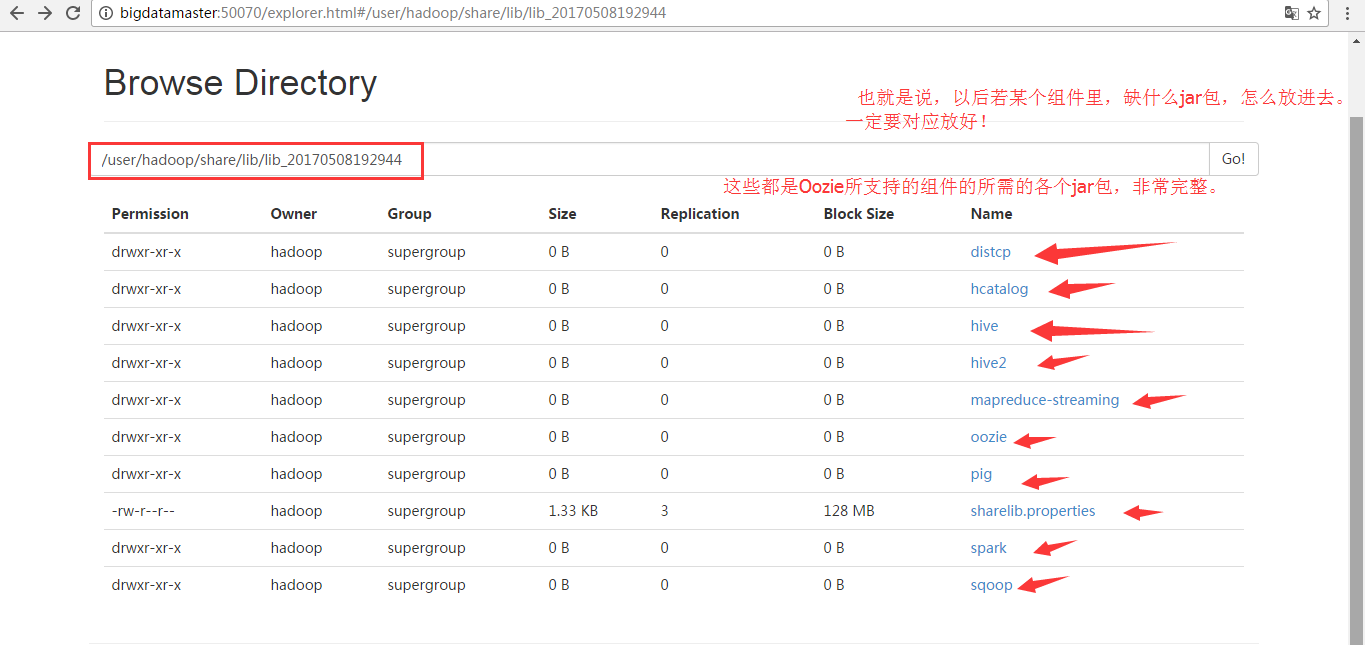

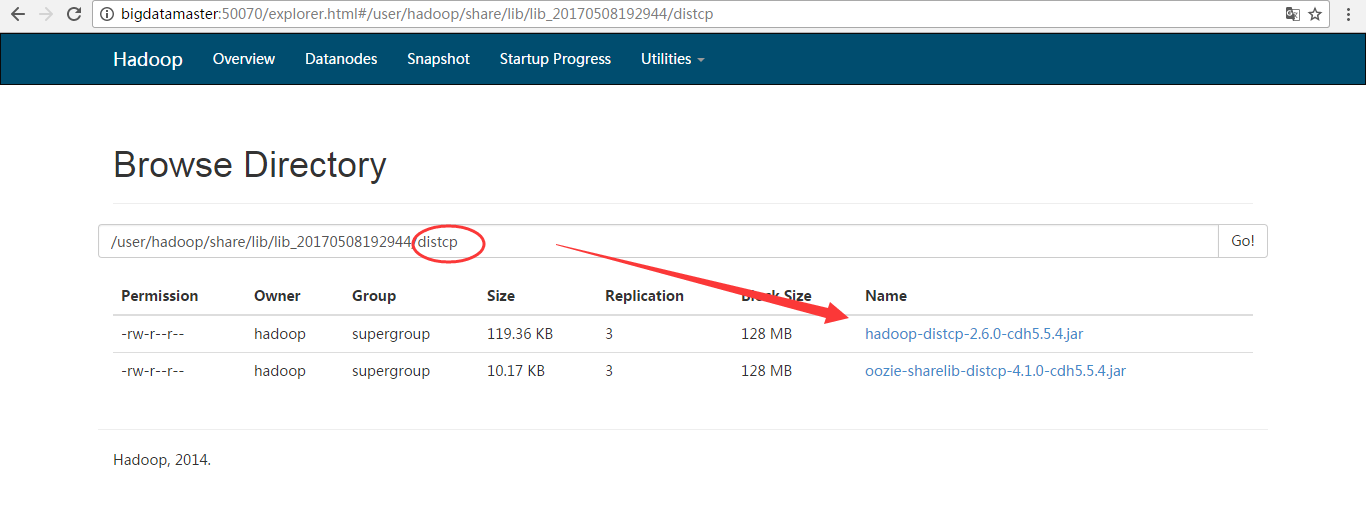

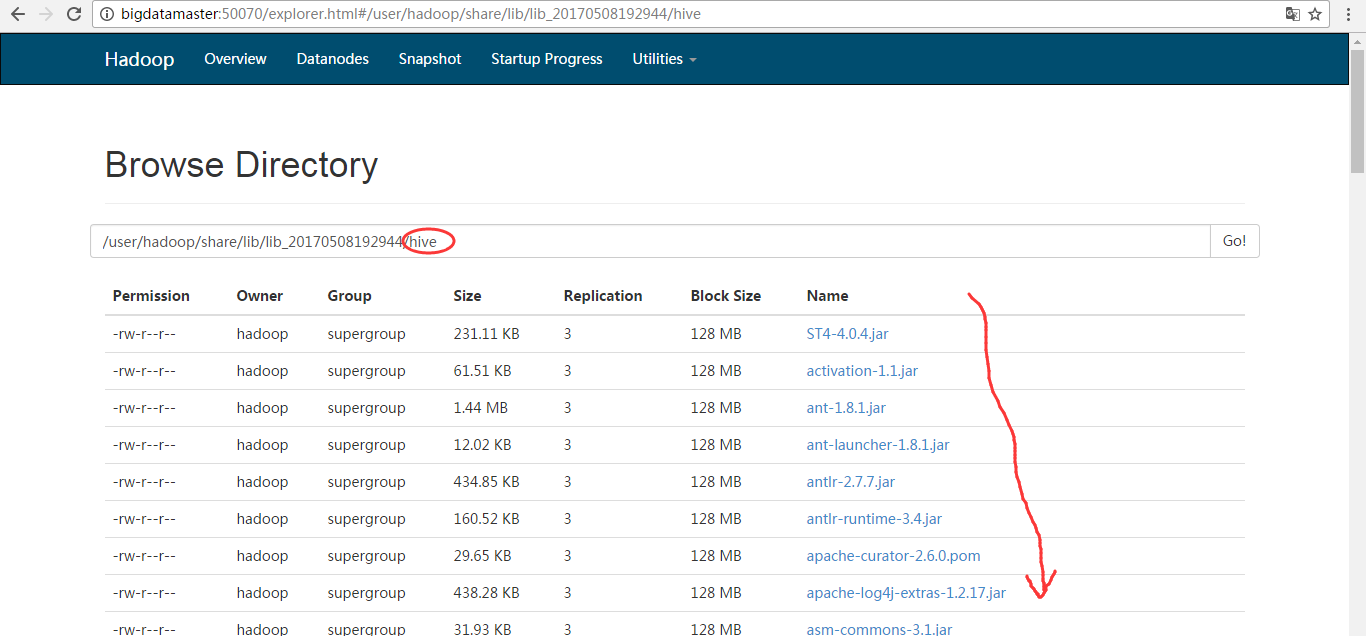

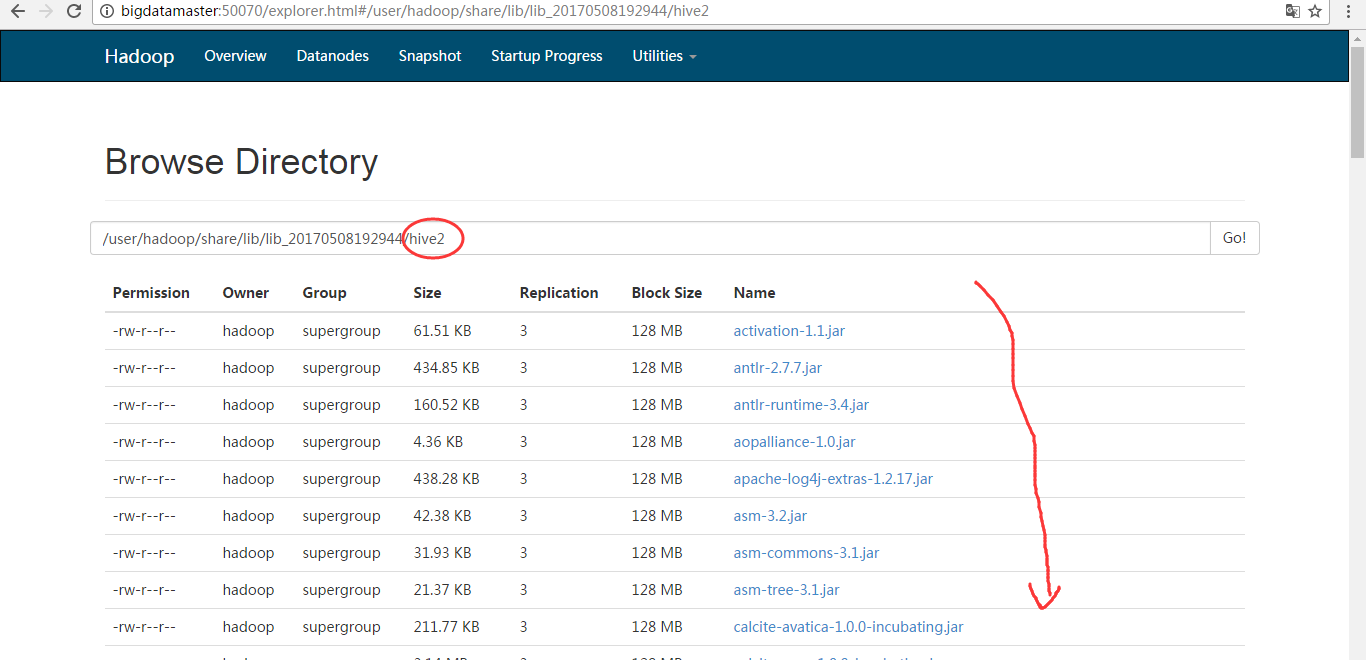

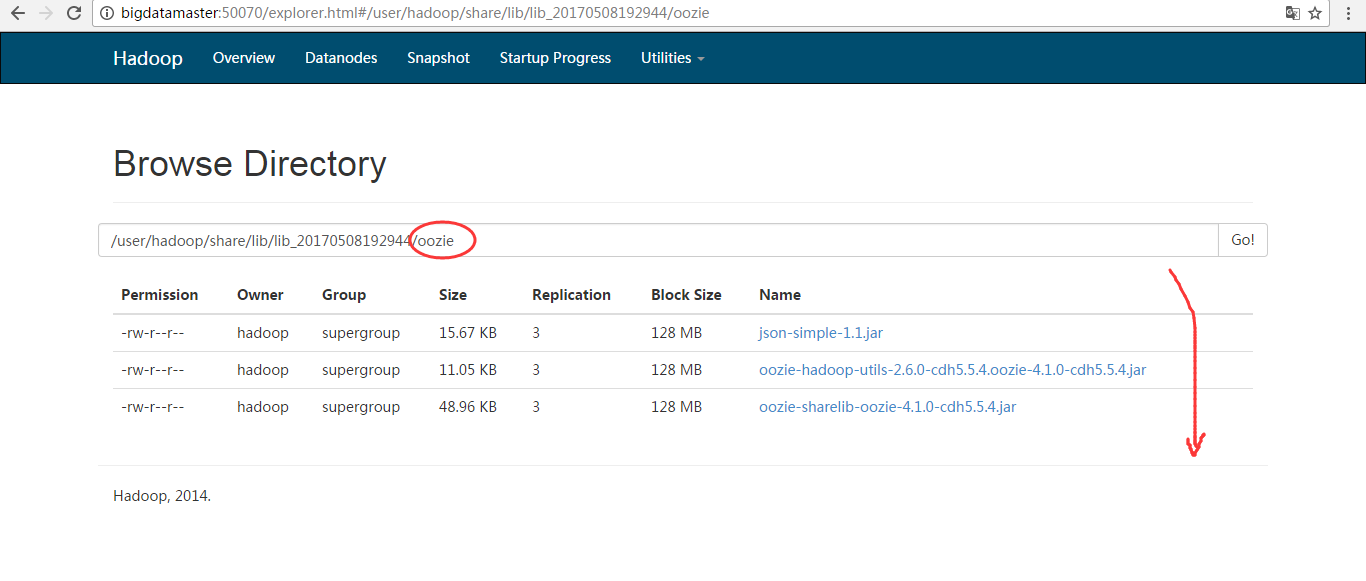

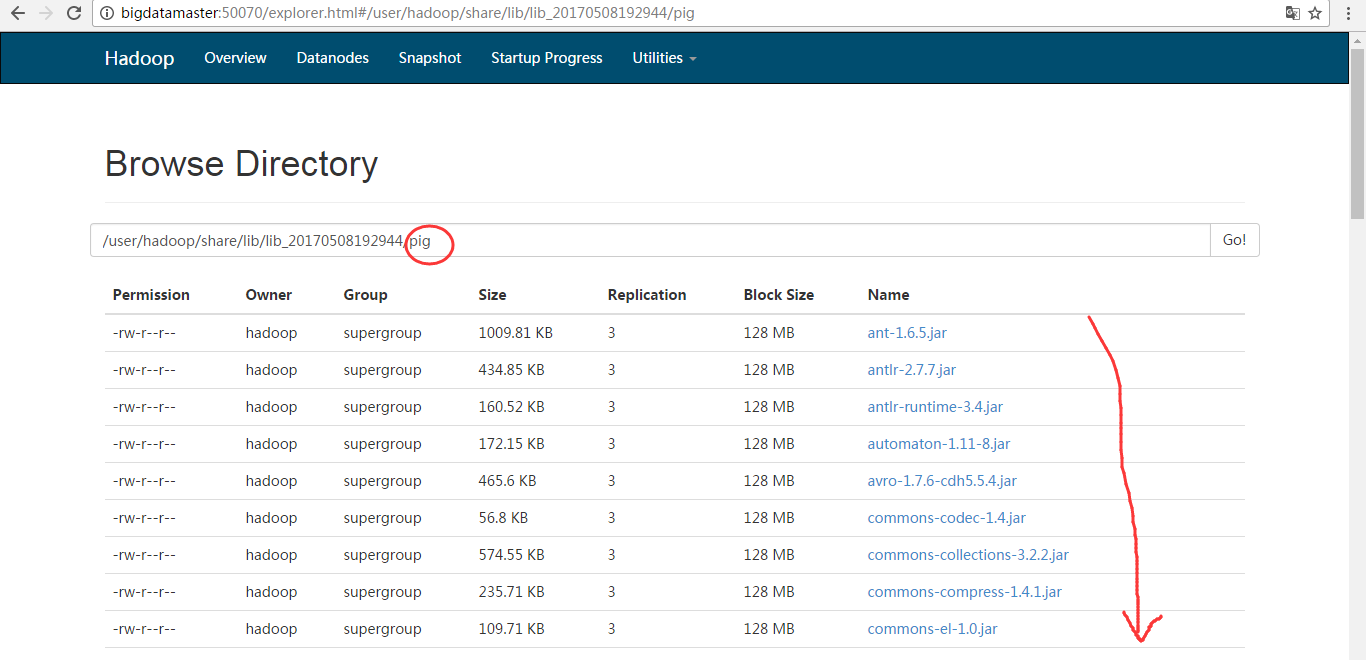

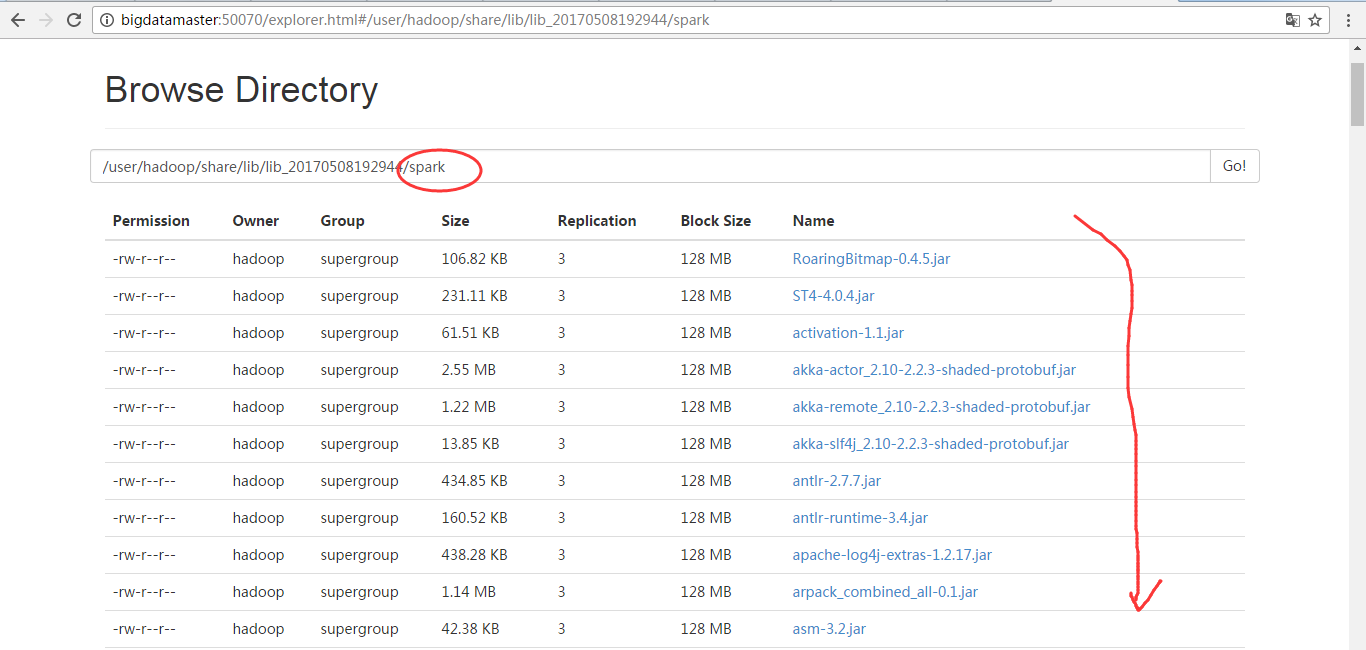

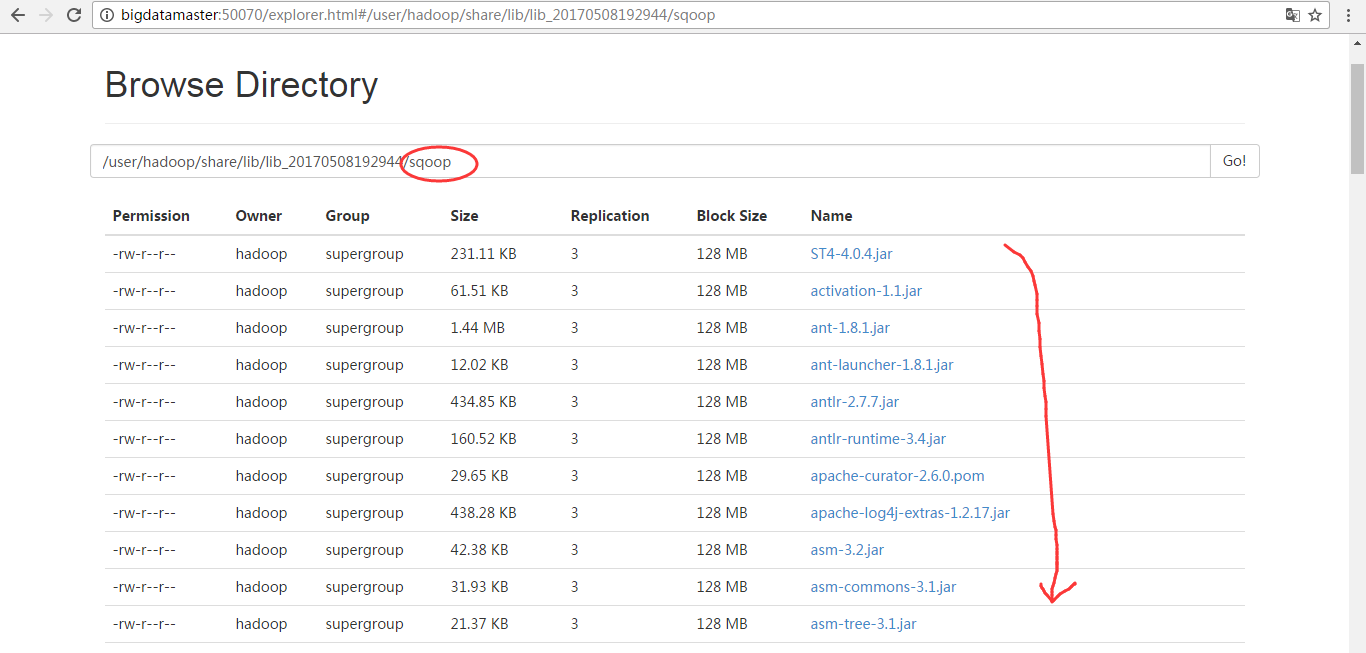

A "sharelib create -fs fs_default_name [-locallib sharelib]" command is available when running oozie-setup.sh for uploading new sharelib into hdfs where the first argument is the default fs name and the second argument is the Oozie sharelib to install, it can be a tarball or the expanded version of it. If the second argument is omitted, the Oozie sharelib tarball from the Oozie installation directory will be used. Upgrade command is deprecated, one should use create command to create new version of sharelib. Sharelib files are copied to new `lib_<timestamp>` directory. At start, server picks the sharelib from latest time-stamp directory. While starting server also purge sharelib directory which is older than sharelib retention days (defined as oozie.service.ShareLibService.temp.sharelib.retention.days and 7 days is default).
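Following the `sharelib create -fs fs_default_name [-locallib sharelib]` syntax quoted above, the sketch below only builds and prints the command so you can inspect it before running it. `sharelib_create_cmd` is a hypothetical helper, and `hdfs://bigdatamaster:9000` in the example is an assumed NameNode address; use the `fs.defaultFS` value from your own core-site.xml.

```shell
# Sketch: print (not run) the sharelib upload command documented above.

# sharelib_create_cmd FS_DEFAULT_NAME [LOCAL_SHARELIB]
sharelib_create_cmd() {
  local fs="$1" locallib="${2:-}"
  local cmd="bin/oozie-setup.sh sharelib create -fs $fs"
  # -locallib is optional; without it the tarball in $OOZIE_HOME is used.
  if [ -n "$locallib" ]; then
    cmd="$cmd -locallib $locallib"
  fi
  echo "$cmd"
}

# Example (assumed NameNode address; the -yarn tarball matches a YARN cluster):
# sharelib_create_cmd hdfs://bigdatamaster:9000 oozie-sharelib-4.1.0-cdh5.5.4-yarn.tar.gz
```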

Continuing on:

"prepare-war [-d directory]" command is for creating war files for oozie with an optional alternative directory other than libext. `db create|upgrade|postupgrade -run [-sqlfile <FILE>]` command is for create, upgrade or postupgrade oozie db with an optional sql file.

Run the oozie-setup.sh script to configure Oozie with all the components added to the libext/ directory.

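As the official docs make clear, if you have not copied all the packages into $OOZIE_HOME/libext as described above, you can still get the same result by specifying the directory explicitly (the -d option) when running the command below.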

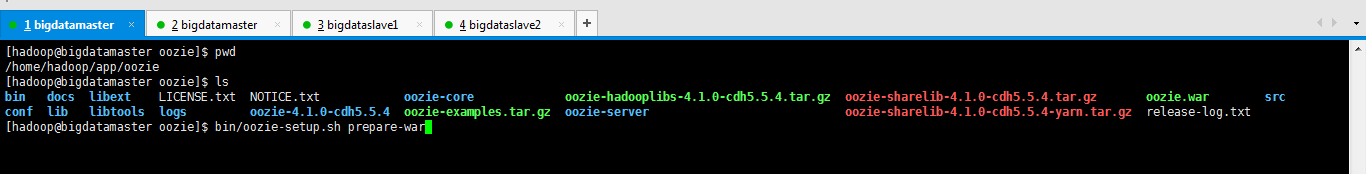

Since I have already done that, no extra arguments are needed and I can run it directly:

bin/oozie-setup.sh prepare-war

[hadoop@bigdatamaster oozie]$ pwd

/home/hadoop/app/oozie

[hadoop@bigdatamaster oozie]$ ls

bin docs libext LICENSE.txt NOTICE.txt oozie-core oozie-hadooplibs-4.1.0-cdh5.5.4.tar.gz oozie-sharelib-4.1.0-cdh5.5.4.tar.gz oozie.war src

conf lib libtools logs oozie-4.1.0-cdh5.5.4 oozie-examples.tar.gz oozie-server oozie-sharelib-4.1.0-cdh5.5.4-yarn.tar.gz release-log.txt

[hadoop@bigdatamaster oozie]$ bin/oozie-setup.sh prepare-war

setting CATALINA_OPTS="$CATALINA_OPTS -Xmx1024m"

INFO: Adding extension: /home/hadoop/app/oozie/libext/activation-1.1.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/apacheds-i18n-2.0.0-M15.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/apacheds-kerberos-codec-2.0.0-M15.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/api-asn1-api-1.0.0-M20.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/api-util-1.0.0-M20.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/avro-1.7.6-cdh5.5.4.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/aws-java-sdk-core-1.10.6.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/aws-java-sdk-kms-1.10.6.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/aws-java-sdk-s3-1.10.6.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/commons-beanutils-1.7.0.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/commons-beanutils-core-1.8.0.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/commons-cli-1.2.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/commons-codec-1.4.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/commons-collections-3.2.2.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/commons-compress-1.4.1.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/commons-configuration-1.6.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/commons-digester-1.8.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/commons-httpclient-3.1.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/commons-io-2.4.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/commons-lang-2.4.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/commons-logging-1.1.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/commons-math3-3.1.1.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/commons-net-3.1.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/curator-client-2.7.1.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/curator-framework-2.7.1.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/curator-recipes-2.7.1.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/gson-2.2.4.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/guava-11.0.2.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/hadoop-annotations-2.6.0-cdh5.5.4.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/hadoop-auth-2.6.0-cdh5.5.4.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/hadoop-aws-2.6.0-cdh5.5.4.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/hadoop-client-2.6.0-cdh5.5.4.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/hadoop-common-2.6.0-cdh5.5.4.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/hadoop-hdfs-2.6.0-cdh5.5.4.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/hadoop-mapreduce-client-app-2.6.0-cdh5.5.4.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/hadoop-mapreduce-client-common-2.6.0-cdh5.5.4.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/hadoop-mapreduce-client-core-2.6.0-cdh5.5.4.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/hadoop-mapreduce-client-jobclient-2.6.0-cdh5.5.4.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/hadoop-mapreduce-client-shuffle-2.6.0-cdh5.5.4.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/hadoop-yarn-api-2.6.0-cdh5.5.4.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/hadoop-yarn-client-2.6.0-cdh5.5.4.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/hadoop-yarn-common-2.6.0-cdh5.5.4.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/hadoop-yarn-server-common-2.6.0-cdh5.5.4.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/htrace-core4-4.0.1-incubating.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/httpclient-4.2.5.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/httpcore-4.2.5.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/jackson-annotations-2.2.3.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/jackson-core-2.2.3.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/jackson-core-asl-1.8.8.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/jackson-databind-2.2.3.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/jackson-jaxrs-1.8.8.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/jackson-mapper-asl-1.8.8.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/jackson-xc-1.8.8.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/jaxb-api-2.2.2.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/jersey-client-1.9.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/jersey-core-1.9.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/jetty-util-6.1.26.cloudera.2.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/jsr305-3.0.0.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/leveldbjni-all-1.8.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/log4j-1.2.17.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/netty-3.6.2.Final.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/netty-all-4.0.23.Final.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/paranamer-2.3.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/protobuf-java-2.5.0.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/servlet-api-2.5.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/slf4j-api-1.7.5.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/slf4j-log4j12-1.7.5.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/snappy-java-1.0.4.1.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/stax-api-1.0-2.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/xercesImpl-2.10.0.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/xml-apis-1.4.01.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/xmlenc-0.52.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/xz-1.0.jar

INFO: Adding extension: /home/hadoop/app/oozie/libext/zookeeper-3.4.5-cdh5.5.4.jar

File/Dir does no exist: /home/hadoop/app/sqoop/server/conf/ssl/server.xml

[hadoop@bigdatamaster oozie]$
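The log above lists every jar that prepare-war picked up from libext. The oozie-site.xml later in this post uses com.mysql.jdbc.Driver, so the MySQL JDBC driver needs to be among those jars. In the part of the log shown here, nothing appears between log4j-1.2.17.jar and netty-3.6.2.Final.jar, which is where a jar with the usual mysql-connector-java name would sort. Below is a minimal sketch for checking libext before rerunning prepare-war. The helper name `find_jdbc_driver` and the jar-name pattern are my own assumptions.

```bash
# Sketch: report MySQL JDBC driver jars found in an Oozie libext directory.
# The mysql-connector-java*.jar name pattern is an assumption; adjust it to
# whatever your driver jar is actually called.
find_jdbc_driver() {
  local libext_dir found jar
  libext_dir="$1"
  found=1
  for jar in "$libext_dir"/mysql-connector-java*.jar; do
    # With no match the glob stays literal, so check that the file really exists.
    if [ -f "$jar" ]; then
      echo "found: ${jar##*/}"
      found=0
    fi
  done
  if [ "$found" -ne 0 ]; then
    echo "no MySQL JDBC driver in $libext_dir"
  fi
  return "$found"
}

# Usage (path from this post):
#   find_jdbc_driver /home/hadoop/app/oozie/libext
```

If nothing is found, copy the driver jar into libext and run `bin/oozie-setup.sh prepare-war` again so that it is packaged into the war.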

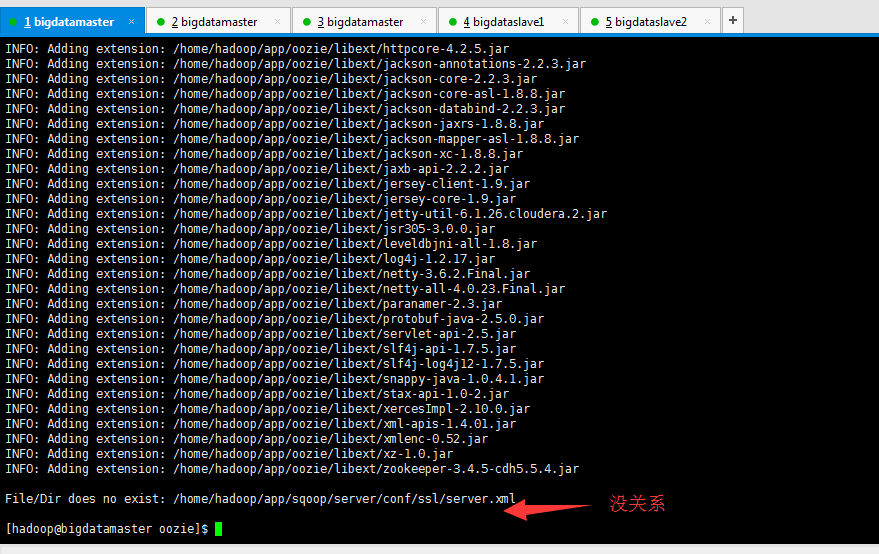

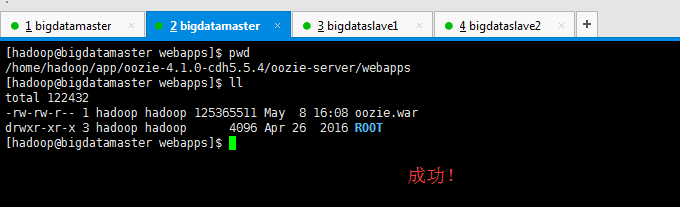

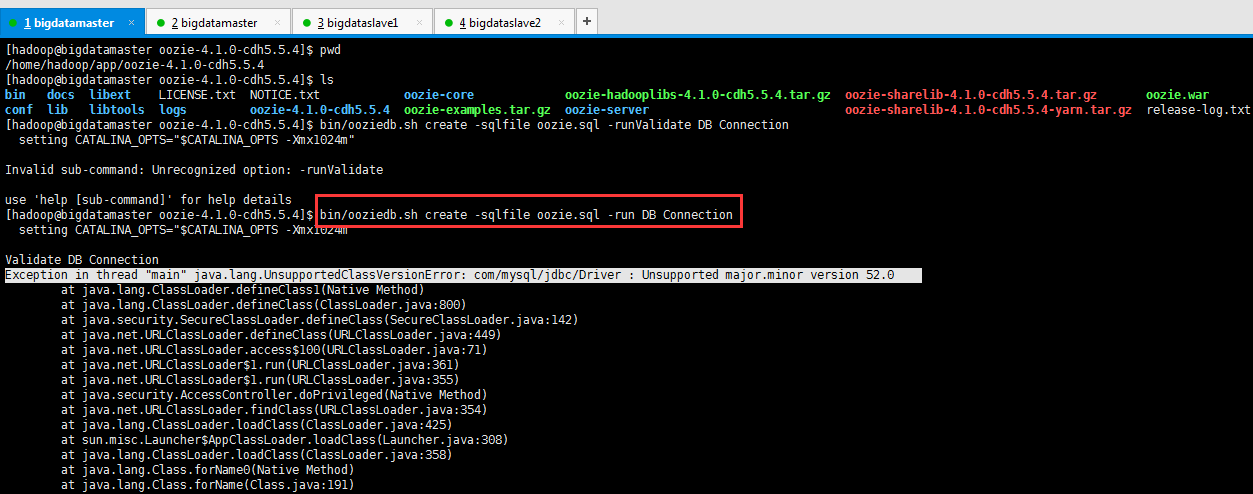

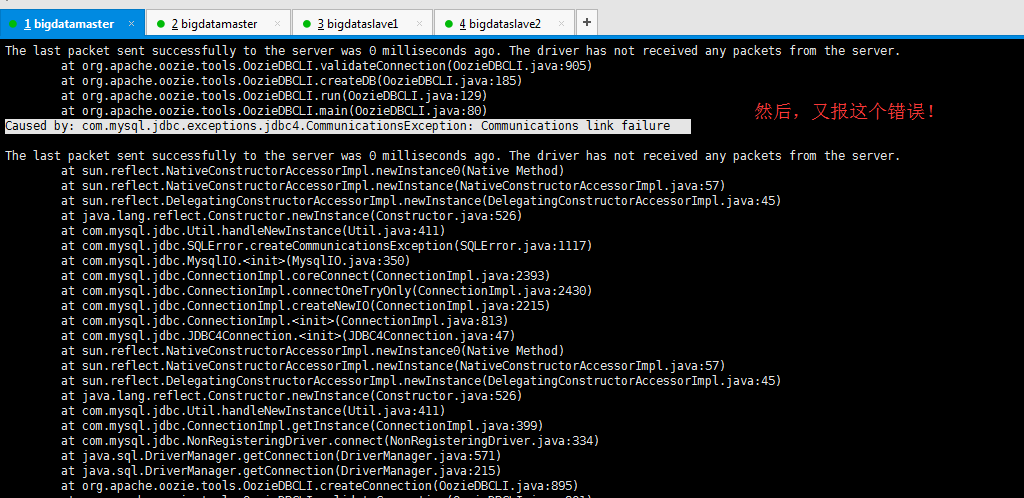

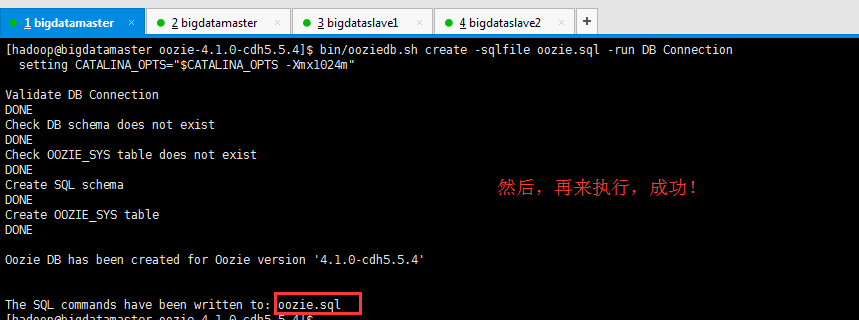

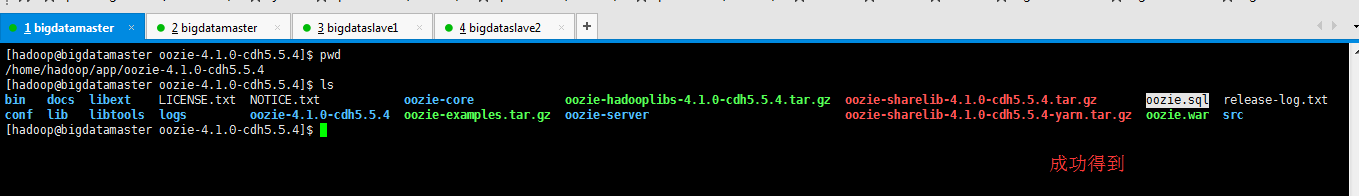

I retried this step several times, but oozie.war was never generated under oozie-server.
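The last line of the log is the telling one. prepare-war ended by complaining that it could not find server.xml under /home/hadoop/app/sqoop/server, which is Sqoop's Tomcat, not Oozie's own oozie-server directory. A likely cause is a CATALINA_BASE variable exported for Sqoop2 and then inherited by Oozie's scripts. This is an assumption and has not been verified here. The sketch below uses a hypothetical helper, `check_catalina_base`, to test for that situation.

```bash
# Sketch: warn when an inherited CATALINA_BASE points somewhere other than
# Oozie's bundled Tomcat directory, $OOZIE_HOME/oozie-server.
# Assumption: Oozie's scripts honour a pre-set CATALINA_BASE instead of
# overriding it, which is what the Sqoop path in the log suggests.
check_catalina_base() {
  local expected
  expected="${1%/}/oozie-server"
  if [ -z "${CATALINA_BASE:-}" ]; then
    echo "CATALINA_BASE unset"
  elif [ "${CATALINA_BASE%/}" = "$expected" ]; then
    echo "CATALINA_BASE ok: $CATALINA_BASE"
  else
    echo "conflict: CATALINA_BASE=$CATALINA_BASE (expected $expected)"
    return 1
  fi
}

# Usage:
#   check_catalina_base /home/hadoop/app/oozie
```

If it reports a conflict, follow these steps:

1. Run `unset CATALINA_BASE` in the current shell. Also unset CATALINA_HOME if your Sqoop setup exported it.
2. Remove the export from whichever profile file Sqoop's setup added it to.
3. Rerun `bin/oozie-setup.sh prepare-war`.
4. Confirm the result with `ls -l oozie-server/webapps/oozie.war`.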

Solution

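With the CDH build of Oozie, running bin/oozie-setup.sh prepare-war did not generate oozie.war. Once that was fixed, oozie.war shows up under oozie-server/webapps: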

[hadoop@bigdatamaster webapps]$ pwd

/home/hadoop/app/oozie-4.1.0-cdh5.5.4/oozie-server/webapps

[hadoop@bigdatamaster webapps]$ ll

total

-rw-rw-r-- hadoop hadoop May : oozie.war

drwxr-xr-x hadoop hadoop Apr ROOT

[hadoop@bigdatamaster webapps]$

[hadoop@bigdatamaster oozie-server]$ pwd

/home/hadoop/app/oozie-4.1.0-cdh5.5.4/oozie-server

[hadoop@bigdatamaster oozie-server]$ ls

bin conf lib LICENSE logs NOTICE RELEASE-NOTES RUNNING.txt temp webapps work

[hadoop@bigdatamaster oozie-server]$

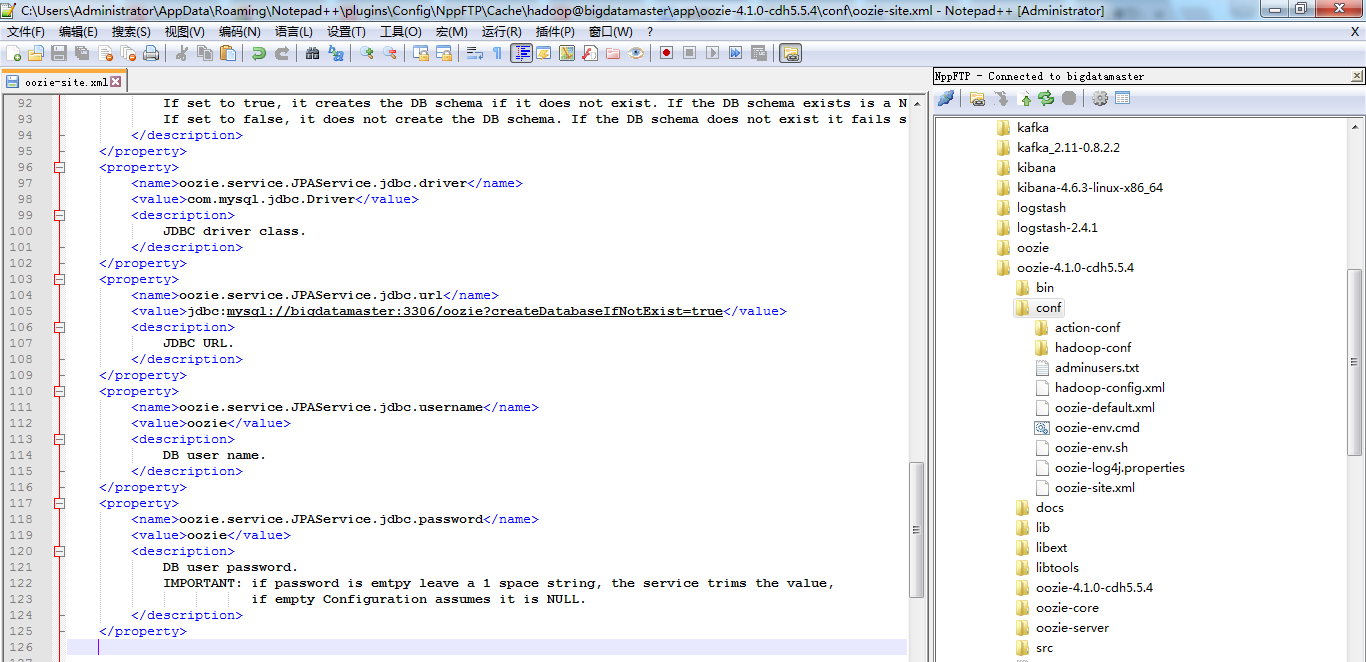

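Next, configure $OOZIE_HOME/conf/oozie-site.xml. Its companion, $OOZIE_HOME/conf/oozie-default.xml, normally does not need to be touched. It is the most complete of the two files and lists every property with its default value.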
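While editing these files, it helps to read back what a property is actually set to. Below is a rough sketch of such a reader. The helper `get_prop` is hypothetical, and it only works on files laid out like the ones in this post, with `<name>` and `<value>` on separate lines. It is a text-level helper, not an XML parser.

```bash
# Sketch: print the <value> that follows <name>NAME</name> in a *-site.xml.
# Limitations: does not skip commented-out properties, and only handles
# values that open and close on the same line.
get_prop() {
  local file name
  file="$1"
  name="$2"
  awk -v want="$name" '
    /<name>/ {
      n = $0
      sub(/.*<name>[ \t]*/, "", n)      # drop everything up to <name>
      sub(/[ \t]*<\/name>.*/, "", n)    # drop </name> and what follows
      hit = (n == want)
      next
    }
    hit && /<value>/ {
      v = $0
      sub(/.*<value>/, "", v)
      sub(/<\/value>.*/, "", v)
      print v
      exit
    }
  ' "$file"
}

# Usage:
#   get_prop "$OOZIE_HOME/conf/oozie-site.xml" oozie.service.JPAService.jdbc.url
```

Multi-line values such as oozie.services in oozie-default.xml will come back empty with this sketch. For those, open the file directly.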

Note that, out of the box, oozie-site.xml contains only the following:

<?xml version="1.0"?>

<!--

Licensed to the Apache Software Foundation (ASF) under one

or more contributor license agreements. See the NOTICE file

distributed with this work for additional information

regarding copyright ownership. The ASF licenses this file

to you under the Apache License, Version 2.0 (the

"License"); you may not use this file except in compliance

with the License. You may obtain a copy of the License at http://www.apache.org/licenses/LICENSE-2.0 Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License.

-->

<configuration> <!--

Refer to the oozie-default.xml file for the complete list of

Oozie configuration properties and their default values.

--> <!-- Proxyuser Configuration --> <!-- <property>

<name>oozie.service.ProxyUserService.proxyuser.#USER#.hosts</name>

<value>*</value>

<description>

List of hosts the '#USER#' user is allowed to perform 'doAs'

operations. The '#USER#' must be replaced with the username of the user who is

allowed to perform 'doAs' operations. The value can be the '*' wildcard or a list of hostnames. For multiple users copy this property and replace the user name

in the property name.

</description>

</property> <property>

<name>oozie.service.ProxyUserService.proxyuser.#USER#.groups</name>

<value>*</value>

<description>

List of groups the '#USER#' user is allowed to impersonate users

from to perform 'doAs' operations. The '#USER#' must be replaced with the username of the user who is

allowed to perform 'doAs' operations. The value can be the '*' wildcard or a list of groups. For multiple users copy this property and replace the user name

in the property name.

</description>

</property> --> <!-- Default proxyuser configuration for Hue --> <property>

<name>oozie.service.ProxyUserService.proxyuser.hue.hosts</name>

<value>*</value>

</property> <property>

<name>oozie.service.ProxyUserService.proxyuser.hue.groups</name>

<value>*</value>

</property> </configuration>
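The commented-out template above says that #USER# must be replaced with the user allowed to perform 'doAs' operations. It also says the property pair should be copied once per user. Here is a small sketch that prints such a pair for a given user. `proxyuser_props` is a hypothetical helper name. `hadoop` in the usage line is just the account seen in this post's shell prompt.

```bash
# Sketch: emit the two ProxyUserService properties from the commented-out
# template, with #USER# replaced by a real user name.
# Hosts and groups default to the '*' wildcard, as in the template.
proxyuser_props() {
  local user hosts groups
  user="$1"
  hosts="${2:-*}"
  groups="${3:-*}"
  cat <<EOF
<property>
  <name>oozie.service.ProxyUserService.proxyuser.${user}.hosts</name>
  <value>${hosts}</value>
</property>
<property>
  <name>oozie.service.ProxyUserService.proxyuser.${user}.groups</name>
  <value>${groups}</value>
</property>
EOF
}

# Usage: paste the output inside <configuration> in oozie-site.xml
#   proxyuser_props hadoop
```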

Then copy in the configuration below, which I put together from references found online.

The final oozie-site.xml looks like this:

<?xml version="1.0"?>

<!--

Licensed to the Apache Software Foundation (ASF) under one

or more contributor license agreements. See the NOTICE file

distributed with this work for additional information

regarding copyright ownership. The ASF licenses this file

to you under the Apache License, Version 2.0 (the

"License"); you may not use this file except in compliance

with the License. You may obtain a copy of the License at http://www.apache.org/licenses/LICENSE-2.0 Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License.

-->

<configuration> <!--

Refer to the oozie-default.xml file for the complete list of

Oozie configuration properties and their default values.

--> <!-- Proxyuser Configuration --> <!-- <property>

<name>oozie.service.ProxyUserService.proxyuser.#USER#.hosts</name>

<value>*</value>

<description>

List of hosts the '#USER#' user is allowed to perform 'doAs'

operations. The '#USER#' must be replaced with the username of the user who is

allowed to perform 'doAs' operations. The value can be the '*' wildcard or a list of hostnames. For multiple users copy this property and replace the user name

in the property name.

</description>

</property> <property>

<name>oozie.service.ProxyUserService.proxyuser.#USER#.groups</name>

<value>*</value>

<description>

List of groups the '#USER#' user is allowed to impersonate users

from to perform 'doAs' operations. The '#USER#' must be replaced with the username of the user who is

allowed to perform 'doAs' operations. The value can be the '*' wildcard or a list of groups. For multiple users copy this property and replace the user name

in the property name.

</description>

</property> --> <!-- Default proxyuser configuration for Hue --> <property>

<name>oozie.service.ProxyUserService.proxyuser.hue.hosts</name>

<value>*</value>

</property> <property>

<name>oozie.service.ProxyUserService.proxyuser.hue.groups</name>

<value>*</value>

</property> <property>

<name>oozie.db.schema.name</name>

<value>oozie</value>

<description>

Oozie DataBase Name

</description>

</property>

<property>

<name>oozie.service.JPAService.create.db.schema</name>

<value>false</value>

<description>

Creates Oozie DB.

If set to true, it creates the DB schema if it does not exist. If the DB schema exists, it is a NOP.

If set to false, it does not create the DB schema. If the DB schema does not exist it fails start up.

</description>

</property>

<property>

<name>oozie.service.JPAService.jdbc.driver</name>

<value>com.mysql.jdbc.Driver</value>

<description>

JDBC driver class.

</description>

</property>

<property>

<name>oozie.service.JPAService.jdbc.url</name>

<value>jdbc:mysql://bigdatamaster:3306/oozie?createDatabaseIfNotExist=true</value>

<description>

JDBC URL.

</description>

</property>

<property>

<name>oozie.service.JPAService.jdbc.username</name>

<value>oozie</value>

<description>

DB user name.

</description>

</property>

<property>

<name>oozie.service.JPAService.jdbc.password</name>

<value>oozie</value>

<description>

DB user password.

IMPORTANT: if password is empty leave a space string, the service trims the value,

if empty Configuration assumes it is NULL.

</description>

</property> <property>

<name>oozie.service.HadoopAccessorService.hadoop.configurations</name>

<value>*=/home/hadoop/app/hadoop-2.6.0-cdh5.5.4/etc/hadoop</value>

<description>

Comma separated AUTHORITY=HADOOP_CONF_DIR, where AUTHORITY is the HOST:PORT of

the Hadoop service (JobTracker, HDFS). The wildcard '*' configuration is

used when there is no exact match for an authority. The HADOOP_CONF_DIR contains

the relevant Hadoop *-site.xml files. If the path is relative, it is looked up within

the Oozie configuration directory; the path can also be absolute (i.e. to point

to Hadoop client conf/ directories in the local filesystem).

</description>

</property> </configuration>
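Two things referenced in the final oozie-site.xml above have to be in place outside Oozie:

- the MySQL database and account named in the JPAService JDBC properties (with create.db.schema set to false, the file itself notes that start-up fails if the schema does not exist);
- the Hadoop configuration directory named in oozie.service.HadoopAccessorService.hadoop.configurations.

Below is a sketch of helpers for both. The function names are hypothetical. The SQL assumes MySQL 5.x `GRANT ... IDENTIFIED BY` syntax and a `'%'` host, which you may want to narrow.

```bash
# 1) Print SQL that creates the database and account used in oozie-site.xml.
#    Assumes MySQL 5.x; MySQL 8 needs a separate CREATE USER before GRANT.
mysql_setup_sql() {
  local db user pass
  db="$1"
  user="$2"
  pass="$3"
  cat <<EOF
CREATE DATABASE IF NOT EXISTS \`${db}\`;
GRANT ALL PRIVILEGES ON \`${db}\`.* TO '${user}'@'%' IDENTIFIED BY '${pass}';
FLUSH PRIVILEGES;
EOF
}

# 2) Check each comma-separated AUTHORITY=HADOOP_CONF_DIR entry.
#    Only absolute paths are handled here; Oozie resolves relative ones
#    against its own conf directory.
check_hadoop_conf() {
  printf '%s\n' "$1" | tr ',' '\n' | (
    rc=0
    while IFS= read -r entry; do
      [ -n "$entry" ] || continue
      dir="${entry#*=}"   # strip the AUTHORITY= prefix, e.g. "*="
      if [ -f "$dir/core-site.xml" ]; then
        echo "ok: $dir"
      else
        echo "missing core-site.xml: $dir"
        rc=1
      fi
    done
    exit "$rc"
  )
}

# Usage:
#   mysql_setup_sql oozie oozie oozie | mysql -u root -p
#   check_hadoop_conf '*=/home/hadoop/app/hadoop-2.6.0-cdh5.5.4/etc/hadoop'
```

Oozie does not create its tables at start-up when create.db.schema is false. They are usually created with Oozie's own `bin/ooziedb.sh create -sqlfile oozie.sql -run`, which also needs the MySQL JDBC jar in libext.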

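Leave oozie-default.xml unchanged. Newer versions already ship sensible defaults for most settings. Also, as the comment at the top of the file notes, Oozie keeps this copy in the conf directory only for reference and does not load it.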

<?xml version="1.0"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<!--

Licensed to the Apache Software Foundation (ASF) under one

or more contributor license agreements. See the NOTICE file

distributed with this work for additional information

regarding copyright ownership. The ASF licenses this file

to you under the Apache License, Version 2.0 (the

"License"); you may not use this file except in compliance

with the License. You may obtain a copy of the License at http://www.apache.org/licenses/LICENSE-2.0 Unless required by applicable law or agreed to in writing, software

distributed under the License is distributed on an "AS IS" BASIS,

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

See the License for the specific language governing permissions and

limitations under the License.

-->

<configuration> <!-- ************************** VERY IMPORTANT ************************** -->

<!-- This file is in the Oozie configuration directory only for reference. -->

<!-- It is not loaded by Oozie, Oozie uses its own private copy. -->

<!-- ************************** VERY IMPORTANT ************************** --> <property>

<name>oozie.output.compression.codec</name>

<value>gz</value>

<description>

The name of the compression codec to use.

The implementation class for the codec needs to be specified through another property oozie.compression.codecs.

You can specify a comma separated list of 'Codec_name'='Codec_class' for oozie.compression.codecs

where codec class implements the interface org.apache.oozie.compression.CompressionCodec.

If oozie.compression.codecs is not specified, gz codec implementation is used by default.

</description>

</property> <property>

<name>oozie.action.mapreduce.uber.jar.enable</name>

<value>false</value>

<description>

If true, enables the oozie.mapreduce.uber.jar mapreduce workflow configuration property, which is used to specify an

uber jar in HDFS. Submitting a workflow with an uber jar requires at least Hadoop 2.2.0 or 1.2.0. If false, workflows

which specify the oozie.mapreduce.uber.jar configuration property will fail.

</description>

</property> <property>

<name>oozie.processing.timezone</name>

<value>UTC</value>

<description>

Oozie server timezone. Valid values are UTC and GMT(+/-)####, for example 'GMT+0530' would be India

timezone. All dates parsed and generated by Oozie Coordinator/Bundle will be done in the specified

timezone. The default value of 'UTC' should not be changed under normal circumstances. If for any reason

it is changed, note that GMT(+/-)#### timezones do not observe DST changes.

</description>

</property> <!-- Base Oozie URL: <SCHEME>://<HOST>:<PORT>/<CONTEXT> --> <property>

<name>oozie.base.url</name>

<value>http://localhost:8080/oozie</value>

<description>

Base Oozie URL.

</description>

</property> <!-- Services --> <property>

<name>oozie.system.id</name>

<value>oozie-${user.name}</value>

<description>

The Oozie system ID.

</description>

</property> <property>

<name>oozie.systemmode</name>

<value>NORMAL</value>

<description>

System mode for Oozie at startup.

</description>

</property> <property>

<name>oozie.delete.runtime.dir.on.shutdown</name>

<value>true</value>

<description>

If the runtime directory should be kept after Oozie shuts down.

</description>

</property> <property>

<name>oozie.services</name>

<value>

org.apache.oozie.service.SchedulerService,

org.apache.oozie.service.InstrumentationService,

org.apache.oozie.service.MemoryLocksService,

org.apache.oozie.service.UUIDService,

org.apache.oozie.service.ELService,

org.apache.oozie.service.AuthorizationService,

org.apache.oozie.service.UserGroupInformationService,

org.apache.oozie.service.HadoopAccessorService,

org.apache.oozie.service.JobsConcurrencyService,

org.apache.oozie.service.URIHandlerService,

org.apache.oozie.service.DagXLogInfoService,

org.apache.oozie.service.SchemaService,

org.apache.oozie.service.LiteWorkflowAppService,

org.apache.oozie.service.JPAService,

org.apache.oozie.service.StoreService,

org.apache.oozie.service.SLAStoreService,

org.apache.oozie.service.DBLiteWorkflowStoreService,

org.apache.oozie.service.CallbackService,

org.apache.oozie.service.ActionService,

org.apache.oozie.service.ShareLibService,

org.apache.oozie.service.CallableQueueService,

org.apache.oozie.service.ActionCheckerService,

org.apache.oozie.service.RecoveryService,

org.apache.oozie.service.PurgeService,

org.apache.oozie.service.CoordinatorEngineService,

org.apache.oozie.service.BundleEngineService,

org.apache.oozie.service.DagEngineService,

org.apache.oozie.service.CoordMaterializeTriggerService,

org.apache.oozie.service.StatusTransitService,

org.apache.oozie.service.PauseTransitService,

org.apache.oozie.service.GroupsService,

org.apache.oozie.service.ProxyUserService,

org.apache.oozie.service.XLogStreamingService,

org.apache.oozie.service.JvmPauseMonitorService,

org.apache.oozie.service.SparkConfigurationService

</value>

<description>

All services to be created and managed by Oozie Services singleton.

Class names must be separated by commas.

</description>

</property> <property>

<name>oozie.services.ext</name>

<value> </value>

<description>

To add/replace services defined in 'oozie.services' with custom implementations.

Class names must be separated by commas.

</description>

</property> <property>

<name>oozie.service.XLogStreamingService.buffer.len</name>

<value>4096</value>

<description>4K buffer for streaming the logs progressively</description>

</property> <!-- HCatAccessorService -->

<property>

<name>oozie.service.HCatAccessorService.jmsconnections</name>

<value>

default=java.naming.factory.initial#org.apache.activemq.jndi.ActiveMQInitialContextFactory;java.naming.provider.url#tcp://localhost:61616;connectionFactoryNames#ConnectionFactory

</value>

<description>

Specify the map of endpoints to JMS configuration properties. In general, endpoint

identifies the HCatalog server URL. "default" is used if no endpoint is mentioned

in the query. If some JMS property is not defined, the system will use the property

defined in jndi.properties. The jndi.properties file is retrieved from the application classpath.

Mapping rules can also be provided for mapping Hcatalog servers to corresponding JMS providers.

hcat://${1}.${2}.server.com:8020=java.naming.factory.initial#Dummy.Factory;java.naming.provider.url#tcp://broker.${2}:61616

</description>

</property> <!-- TopicService --> <property>

<name>oozie.service.JMSTopicService.topic.name</name>

<value>

default=${username}

</value>

<description>

Topic options are ${username} or ${jobId} or a fixed string which can be specified as default or for a

particular job type.

For example, to have a fixed string topic for workflows, coordinators and bundles,

specify in the following comma-separated format: {jobtype1}={some_string1}, {jobtype2}={some_string2}

where job type can be WORKFLOW, COORDINATOR or BUNDLE.

e.g. Following defines topic for workflow job, workflow action, coordinator job, coordinator action,

bundle job and bundle action

WORKFLOW=workflow,

COORDINATOR=coordinator,

BUNDLE=bundle

For jobs with no defined topic, default topic will be ${username}

</description>

</property> <!-- JMS Producer connection -->

<property>

<name>oozie.jms.producer.connection.properties</name>

<value>java.naming.factory.initial#org.apache.activemq.jndi.ActiveMQInitialContextFactory;java.naming.provider.url#tcp://localhost:61616;connectionFactoryNames#ConnectionFactory</value>

</property> <!-- JMSAccessorService -->

<property>

<name>oozie.service.JMSAccessorService.connectioncontext.impl</name>

<value>

org.apache.oozie.jms.DefaultConnectionContext

</value>

<description>

Specifies the Connection Context implementation

</description>

</property> <!-- ConfigurationService --> <property>

<name>oozie.service.ConfigurationService.ignore.system.properties</name>

<value>

oozie.service.AuthorizationService.security.enabled

</value>

<description>

Specifies "oozie.*" properties that cannot be overridden via Java system properties.

Property names must be separated by commas.

</description>

</property> <property>

<name>oozie.service.ConfigurationService.verify.available.properties</name>

<value>true</value>

<description>

Specifies whether the available configurations check is enabled or not.

</description>

</property> <!-- SchedulerService --> <property>

<name>oozie.service.SchedulerService.threads</name>

<value></value>

<description>

The number of threads to be used by the SchedulerService to run daemon tasks.

If maxed out, scheduled daemon tasks will be queued up and delayed until threads become available.

</description>

</property> <!-- AuthorizationService --> <property>

<name>oozie.service.AuthorizationService.authorization.enabled</name>

<value>false</value>

<description>

Specifies whether security (user name/admin role) is enabled or not.

If disabled any user can manage Oozie system and manage any job.

</description>

</property> <property>

<name>oozie.service.AuthorizationService.default.group.as.acl</name>

<value>false</value>

<description>

Enables old behavior where the User's default group is the job's ACL.

</description>

</property> <!-- InstrumentationService --> <property>

<name>oozie.service.InstrumentationService.logging.interval</name>

<value></value>

<description>

Interval, in seconds, at which instrumentation should be logged by the InstrumentationService.

If set to 0, it will not log instrumentation data.

</description>

</property> <!-- PurgeService -->

<property>

<name>oozie.service.PurgeService.older.than</name>

<value></value>

<description>

Completed workflow jobs older than this value, in days, will be purged by the PurgeService.

</description>

</property> <property>

<name>oozie.service.PurgeService.coord.older.than</name>

<value></value>

<description>

Completed coordinator jobs older than this value, in days, will be purged by the PurgeService.

</description>

</property> <property>

<name>oozie.service.PurgeService.bundle.older.than</name>

<value></value>

<description>

Completed bundle jobs older than this value, in days, will be purged by the PurgeService.

</description>

</property> <property>

<name>oozie.service.PurgeService.purge.old.coord.action</name>

<value>false</value>

<description>

Whether to purge completed workflows and their corresponding coordinator actions

of long running coordinator jobs if the completed workflow jobs are older than the value

specified in oozie.service.PurgeService.older.than.

</description>

</property> <property>

<name>oozie.service.PurgeService.purge.limit</name>

<value></value>

<description>

Completed Actions purge - limit each purge to this value

</description>

</property> <property>

<name>oozie.service.PurgeService.purge.interval</name>

<value></value>

<description>

Interval at which the purge service will run, in seconds.

</description>

</property> <!-- RecoveryService --> <property>

<name>oozie.service.RecoveryService.wf.actions.older.than</name>

<value></value>

<description>

Age of the actions which are eligible to be queued for recovery, in seconds.

</description>

</property> <property>

<name>oozie.service.RecoveryService.wf.actions.created.time.interval</name>

<value></value>

<description>

Created time period of the actions which are eligible to be queued for recovery in days.

</description>

</property> <property>

<name>oozie.service.RecoveryService.callable.batch.size</name>

<value></value>

<description>

This value determines the number of callables which will be batched together

to be executed by a single thread.

</description>

</property> <property>

<name>oozie.service.RecoveryService.push.dependency.interval</name>

<value></value>

<description>

This value determines the delay for push missing dependency command queueing

in Recovery Service

</description>

</property> <property>

<name>oozie.service.RecoveryService.interval</name>

<value></value>

<description>

Interval at which the RecoveryService will run, in seconds.

</description>

</property> <property>

<name>oozie.service.RecoveryService.coord.older.than</name>

<value></value>

<description>

Age of the Coordinator jobs or actions which are eligible to be queued for recovery, in seconds.

</description>

</property> <property>

<name>oozie.service.RecoveryService.bundle.older.than</name>

<value></value>

<description>

Age of the Bundle jobs which are eligible to be queued for recovery, in seconds.

</description>

</property> <!-- CallableQueueService --> <property>

<name>oozie.service.CallableQueueService.queue.size</name>

<value></value>

<description>Max callable queue size</description>

</property> <property>

<name>oozie.service.CallableQueueService.threads</name>

<value></value>

<description>Number of threads used for executing callables</description>

</property> <property>

<name>oozie.service.CallableQueueService.callable.concurrency</name>

<value></value>

<description>

Maximum concurrency for a given callable type.

Each command is a callable type (submit, start, run, signal, job, jobs, suspend,resume, etc).

Each action type is a callable type (Map-Reduce, Pig, SSH, FS, sub-workflow, etc).

All commands that use action executors (action-start, action-end, action-kill and action-check) use

the action type as the callable type.

</description>

</property> <property>

<name>oozie.service.CallableQueueService.callable.next.eligible</name>

<value>true</value>

<description>

If true, when a callable in the queue has already reached max concurrency,

Oozie continuously finds the next one which has not yet reached max concurrency.

</description>

</property> <property>

<name>oozie.service.CallableQueueService.InterruptMapMaxSize</name>

<value></value>

<description>

Maximum Size of the Interrupt Map, the interrupt element will not be inserted in the map if the size is exceeded.

</description>

</property> <property>

<name>oozie.service.CallableQueueService.InterruptTypes</name>

<value>kill,resume,suspend,bundle_kill,bundle_resume,bundle_suspend,coord_kill,coord_change,coord_resume,coord_suspend</value>

<description>

Getting the types of XCommands that are considered to be of Interrupt type

</description>

</property> <!-- CoordMaterializeTriggerService --> <property>

<name>oozie.service.CoordMaterializeTriggerService.lookup.interval

</name>

<value></value>

<description> Coordinator Job Lookup interval.(in seconds).

</description>

</property> <!-- Enable this if you want different scheduling interval for CoordMaterializeTriggerService.

By default it will use lookup interval as scheduling interval

<property>

<name>oozie.service.CoordMaterializeTriggerService.scheduling.interval

</name>

<value></value>

<description> The frequency at which the CoordMaterializeTriggerService will run.</description>

</property>

--> <property>

<name>oozie.service.CoordMaterializeTriggerService.materialization.window

</name>

<value></value>

<description> Coordinator Job Lookup command materialized each

job for this next "window" duration

</description>

</property> <property>

<name>oozie.service.CoordMaterializeTriggerService.callable.batch.size</name>

<value></value>

<description>

This value determines the number of callables which will be batched together

to be executed by a single thread.

</description>

</property> <property>

<name>oozie.service.CoordMaterializeTriggerService.materialization.system.limit</name>

<value></value>

<description>

This value determines the number of coordinator jobs to be materialized at a given time.

</description>

</property> <property>

<name>oozie.service.coord.normal.default.timeout

</name>

<value></value>

<description>Default timeout for a coordinator action input check (in minutes) for normal job.

-1 means infinite timeout</description>

</property> <property>

<name>oozie.service.coord.default.max.timeout

</name>

<value>86400</value>

<description>Default maximum timeout for a coordinator action input check (in minutes). 86400 = 60 days

</description>

</property> <property>

<name>oozie.service.coord.input.check.requeue.interval

</name>

<value></value>

<description>Command re-queue interval for coordinator data input check (in millisecond).

</description>

</property> <property>

<name>oozie.service.coord.push.check.requeue.interval

</name>

<value></value>

<description>Command re-queue interval for push dependencies (in millisecond).

</description>

</property> <property>

<name>oozie.service.coord.default.concurrency

</name>

<value></value>

<description>Default concurrency for a coordinator job to determine how many maximum actions should

be executed at the same time. -1 means infinite concurrency.</description>

</property> <property>

<name>oozie.service.coord.default.throttle

</name>

<value></value>

<description>Default throttle for a coordinator job to determine how many maximum actions should

be in WAITING state at the same time.</description>

</property> <property>

<name>oozie.service.coord.materialization.throttling.factor

</name>

<value>0.05</value>

<description>Determine how many maximum actions should be in WAITING state for a single job at any time. The value is calculated by

this factor X the total queue size.</description>

</property> <property>

<name>oozie.service.coord.check.maximum.frequency</name>

<value>true</value>

<description>

When true, Oozie will reject any coordinators with a frequency faster than 5 minutes. It is not recommended to disable

this check or submit coordinators with frequencies faster than 5 minutes: doing so can cause unintended behavior and

additional system stress.

</description>

</property> <!-- ELService -->

<!-- List of supported groups for ELService -->

<property>

<name>oozie.service.ELService.groups</name>

<value>job-submit,workflow,wf-sla-submit,coord-job-submit-freq,coord-job-submit-nofuncs,coord-job-submit-data,coord-job-submit-instances,coord-sla-submit,coord-action-create,coord-action-create-inst,coord-sla-create,coord-action-start,coord-job-wait-timeout</value>

<description>List of groups for different ELServices</description>

</property> <property>

<name>oozie.service.ELService.constants.job-submit</name>

<value>

</value>

<description>

EL constant declarations, separated by commas, format is [PREFIX:]NAME=CLASS#CONSTANT.

</description>

</property> <property>

<name>oozie.service.ELService.functions.job-submit</name>

<value>

</value>

<description>

EL functions declarations, separated by commas, format is [PREFIX:]NAME=CLASS#METHOD.

</description>

</property> <property>

<name>oozie.service.ELService.ext.constants.job-submit</name>

<value> </value>

<description>

EL constant declarations, separated by commas, format is [PREFIX:]NAME=CLASS#CONSTANT.

This property is a convenience property to add extensions without having to include all the built in ones.

</description>

</property> <property>

<name>oozie.service.ELService.ext.functions.job-submit</name>

<value> </value>

<description>

EL functions declarations, separated by commas, format is [PREFIX:]NAME=CLASS#METHOD.

This property is a convenience property to add extensions without having to include all the built in ones.

</description>

</property> <!-- Workflow specifics -->

<property>

<name>oozie.service.ELService.constants.workflow</name>

<value>

KB=org.apache.oozie.util.ELConstantsFunctions#KB,

MB=org.apache.oozie.util.ELConstantsFunctions#MB,

GB=org.apache.oozie.util.ELConstantsFunctions#GB,

TB=org.apache.oozie.util.ELConstantsFunctions#TB,

PB=org.apache.oozie.util.ELConstantsFunctions#PB,

RECORDS=org.apache.oozie.action.hadoop.HadoopELFunctions#RECORDS,

MAP_IN=org.apache.oozie.action.hadoop.HadoopELFunctions#MAP_IN,

MAP_OUT=org.apache.oozie.action.hadoop.HadoopELFunctions#MAP_OUT,

REDUCE_IN=org.apache.oozie.action.hadoop.HadoopELFunctions#REDUCE_IN,

REDUCE_OUT=org.apache.oozie.action.hadoop.HadoopELFunctions#REDUCE_OUT,

GROUPS=org.apache.oozie.action.hadoop.HadoopELFunctions#GROUPS

</value>

<description>

EL constant declarations, separated by commas, format is [PREFIX:]NAME=CLASS#CONSTANT.

</description>

</property> <property>

<name>oozie.service.ELService.ext.constants.workflow</name>

<value> </value>

<description>

EL constant declarations, separated by commas, format is [PREFIX:]NAME=CLASS#CONSTANT.

This property is a convenience property to add extensions to the built in executors without having to

include all the built in ones.

</description>

</property> <property>

<name>oozie.service.ELService.functions.workflow</name>

<value>

firstNotNull=org.apache.oozie.util.ELConstantsFunctions#firstNotNull,

concat=org.apache.oozie.util.ELConstantsFunctions#concat,

replaceAll=org.apache.oozie.util.ELConstantsFunctions#replaceAll,

appendAll=org.apache.oozie.util.ELConstantsFunctions#appendAll,

trim=org.apache.oozie.util.ELConstantsFunctions#trim,

timestamp=org.apache.oozie.util.ELConstantsFunctions#timestamp,

urlEncode=org.apache.oozie.util.ELConstantsFunctions#urlEncode,

toJsonStr=org.apache.oozie.util.ELConstantsFunctions#toJsonStr,

toPropertiesStr=org.apache.oozie.util.ELConstantsFunctions#toPropertiesStr,

toConfigurationStr=org.apache.oozie.util.ELConstantsFunctions#toConfigurationStr,

wf:id=org.apache.oozie.DagELFunctions#wf_id,

wf:name=org.apache.oozie.DagELFunctions#wf_name,

wf:appPath=org.apache.oozie.DagELFunctions#wf_appPath,

wf:conf=org.apache.oozie.DagELFunctions#wf_conf,

wf:user=org.apache.oozie.DagELFunctions#wf_user,

wf:group=org.apache.oozie.DagELFunctions#wf_group,

wf:callback=org.apache.oozie.DagELFunctions#wf_callback,

wf:transition=org.apache.oozie.DagELFunctions#wf_transition,

wf:lastErrorNode=org.apache.oozie.DagELFunctions#wf_lastErrorNode,

wf:errorCode=org.apache.oozie.DagELFunctions#wf_errorCode,

wf:errorMessage=org.apache.oozie.DagELFunctions#wf_errorMessage,

wf:run=org.apache.oozie.DagELFunctions#wf_run,

wf:actionData=org.apache.oozie.DagELFunctions#wf_actionData,

wf:actionExternalId=org.apache.oozie.DagELFunctions#wf_actionExternalId,

wf:actionTrackerUri=org.apache.oozie.DagELFunctions#wf_actionTrackerUri,

wf:actionExternalStatus=org.apache.oozie.DagELFunctions#wf_actionExternalStatus,

hadoop:counters=org.apache.oozie.action.hadoop.HadoopELFunctions#hadoop_counters,

hadoop:conf=org.apache.oozie.action.hadoop.HadoopELFunctions#hadoop_conf,

fs:exists=org.apache.oozie.action.hadoop.FsELFunctions#fs_exists,

fs:isDir=org.apache.oozie.action.hadoop.FsELFunctions#fs_isDir,

fs:dirSize=org.apache.oozie.action.hadoop.FsELFunctions#fs_dirSize,

fs:fileSize=org.apache.oozie.action.hadoop.FsELFunctions#fs_fileSize,

fs:blockSize=org.apache.oozie.action.hadoop.FsELFunctions#fs_blockSize,

hcat:exists=org.apache.oozie.coord.HCatELFunctions#hcat_exists

</value>

<description>

EL functions declarations, separated by commas, format is [PREFIX:]NAME=CLASS#METHOD.

</description>

</property> <property>

<name>oozie.service.WorkflowAppService.WorkflowDefinitionMaxLength</name>

<value></value>

<description>

The maximum length of the workflow definition in bytes

An error will be reported if the length exceeds the given maximum

</description>

</property> <property>

<name>oozie.service.ELService.ext.functions.workflow</name>

<value>

</value>

<description>

EL function declarations, separated by commas, format is [PREFIX:]NAME=CLASS#METHOD.

This property is a convenience property to add extensions to the built-in executors without having to

include all the built-in ones.

</description>
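<!-- Example (a minimal sketch; the prefix "my", the function "upper" and the
     class com.example.MyELFunctions are hypothetical): to register an extra
     workflow EL function without repeating the built-in list, override this
     property in oozie-site.xml instead of editing the defaults:
     <property>
       <name>oozie.service.ELService.ext.functions.workflow</name>
       <value>my:upper=com.example.MyELFunctions#upper</value>
     </property>
     The target method is assumed to be public static, and the JAR containing
     the class must be on the Oozie server classpath. A workflow could then
     use an expression such as ${my:upper(wf:user())}. -->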

</property> <!-- Resolve SLA information during Workflow job submission -->

<property>

<name>oozie.service.ELService.constants.wf-sla-submit</name>

<value>

MINUTES=org.apache.oozie.util.ELConstantsFunctions#SUBMIT_MINUTES,

HOURS=org.apache.oozie.util.ELConstantsFunctions#SUBMIT_HOURS,

DAYS=org.apache.oozie.util.ELConstantsFunctions#SUBMIT_DAYS

</value>

<description>

EL constant declarations, separated by commas, format is [PREFIX:]NAME=CLASS#CONSTANT.

</description>
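<!-- Example (a sketch, assuming the SLA schema uri:oozie:sla:0.2 is in use):
     MINUTES, HOURS and DAYS let an SLA block inside a workflow express time
     offsets, e.g.
     <sla:info>
       <sla:nominal-time>${nominal_time}</sla:nominal-time>
       <sla:should-start>${10 * MINUTES}</sla:should-start>
       <sla:should-end>${2 * HOURS}</sla:should-end>
     </sla:info>
     where nominal_time is a hypothetical job property. -->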

</property> <property>

<name>oozie.service.ELService.ext.constants.wf-sla-submit</name>

<value> </value>

<description>

EL constant declarations, separated by commas, format is [PREFIX:]NAME=CLASS#CONSTANT.

This property is a convenience property to add extensions to the built in executors without having to

include all the built-in ones.

</description>

</property> <property>

<name>oozie.service.ELService.functions.wf-sla-submit</name>

<value> </value>

<description>

EL function declarations, separated by commas, format is [PREFIX:]NAME=CLASS#METHOD.

</description>

</property>

<property>

<name>oozie.service.ELService.ext.functions.wf-sla-submit</name>

<value>

</value>

<description>

EL function declarations, separated by commas, format is [PREFIX:]NAME=CLASS#METHOD.

This property is a convenience property to add extensions to the built-in executors without having to

include all the built-in ones.

</description>

</property> <!-- Coordinator specifics -->

<!-- Phase resolution during job submission -->

<!-- EL Evaluator setup to resolve mainly frequency tags -->

<property>

<name>oozie.service.ELService.constants.coord-job-submit-freq</name>

<value> </value>

<description>

EL constant declarations, separated by commas, format is [PREFIX:]NAME=CLASS#CONSTANT.

</description>

</property> <property>

<name>oozie.service.ELService.ext.constants.coord-job-submit-freq</name>

<value> </value>

<description>

EL constant declarations, separated by commas, format is [PREFIX:]NAME=CLASS#CONSTANT.

This property is a convenience property to add extensions to the built in executors without having to

include all the built-in ones.

</description>

</property> <property>

<name>oozie.service.ELService.functions.coord-job-submit-freq</name>

<value>

coord:days=org.apache.oozie.coord.CoordELFunctions#ph1_coord_days,

coord:months=org.apache.oozie.coord.CoordELFunctions#ph1_coord_months,

coord:hours=org.apache.oozie.coord.CoordELFunctions#ph1_coord_hours,

coord:minutes=org.apache.oozie.coord.CoordELFunctions#ph1_coord_minutes,

coord:endOfDays=org.apache.oozie.coord.CoordELFunctions#ph1_coord_endOfDays,

coord:endOfMonths=org.apache.oozie.coord.CoordELFunctions#ph1_coord_endOfMonths,

coord:conf=org.apache.oozie.coord.CoordELFunctions#coord_conf,

coord:user=org.apache.oozie.coord.CoordELFunctions#coord_user,

hadoop:conf=org.apache.oozie.action.hadoop.HadoopELFunctions#hadoop_conf

</value>

<description>

EL function declarations, separated by commas, format is [PREFIX:]NAME=CLASS#METHOD.

</description>
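<!-- Example (a sketch; the app name, dates and schema version are
     placeholders): these functions resolve a coordinator's frequency at
     submission time. An opening tag such as
     <coordinator-app name="my-coord" frequency="${coord:days(1)}"
                      start="2017-01-01T00:00Z" end="2017-12-31T00:00Z"
                      timezone="UTC" xmlns="uri:oozie:coordinator:0.4">
     would schedule one run per day. -->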

</property> <property>

<name>oozie.service.ELService.ext.functions.coord-job-submit-freq</name>

<value>

</value>

<description>

EL function declarations, separated by commas, format is [PREFIX:]NAME=CLASS#METHOD.

This property is a convenience property to add extensions to the built-in executors without having to

include all the built-in ones.

</description>

</property> <property>

<name>oozie.service.ELService.constants.coord-job-wait-timeout</name>

<value> </value>

<description>

EL constant declarations, separated by commas, format is [PREFIX:]NAME=CLASS#CONSTANT.

</description>

</property> <property>

<name>oozie.service.ELService.ext.constants.coord-job-wait-timeout</name>

<value> </value>

<description>

EL constant declarations, separated by commas, format is [PREFIX:]NAME=CLASS#CONSTANT.

This property is a convenience property to add extensions without having to include all the built-in ones.

</description>

</property> <property>

<name>oozie.service.ELService.functions.coord-job-wait-timeout</name>

<value>

coord:days=org.apache.oozie.coord.CoordELFunctions#ph1_coord_days,

coord:months=org.apache.oozie.coord.CoordELFunctions#ph1_coord_months,

coord:hours=org.apache.oozie.coord.CoordELFunctions#ph1_coord_hours,

coord:minutes=org.apache.oozie.coord.CoordELFunctions#ph1_coord_minutes,

hadoop:conf=org.apache.oozie.action.hadoop.HadoopELFunctions#hadoop_conf

</value>

<description>

EL function declarations, separated by commas, format is [PREFIX:]NAME=CLASS#METHOD.

</description>

</property> <property>

<name>oozie.service.ELService.ext.functions.coord-job-wait-timeout</name>

<value> </value>

<description>

EL function declarations, separated by commas, format is [PREFIX:]NAME=CLASS#METHOD.

This property is a convenience property to add extensions without having to include all the built-in ones.

</description>

</property> <!-- EL Evaluator setup to resolve mainly all constants/variables - no EL functions are resolved -->

<property>

<name>oozie.service.ELService.constants.coord-job-submit-nofuncs</name>

<value>

MINUTE=org.apache.oozie.coord.CoordELConstants#SUBMIT_MINUTE,

HOUR=org.apache.oozie.coord.CoordELConstants#SUBMIT_HOUR,

DAY=org.apache.oozie.coord.CoordELConstants#SUBMIT_DAY,

MONTH=org.apache.oozie.coord.CoordELConstants#SUBMIT_MONTH,

YEAR=org.apache.oozie.coord.CoordELConstants#SUBMIT_YEAR

</value>

<description>

EL constant declarations, separated by commas, format is [PREFIX:]NAME=CLASS#CONSTANT.

</description>
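<!-- Example (a sketch; the dataset name, path and the nameNode job property
     are hypothetical): YEAR, MONTH, DAY, HOUR and MINUTE act as placeholders
     in a dataset uri-template and are resolved later for each dataset
     instance, e.g.
     <dataset name="logs" frequency="${coord:days(1)}"
              initial-instance="2017-01-01T00:00Z" timezone="UTC">
       <uri-template>${nameNode}/data/logs/${YEAR}/${MONTH}/${DAY}</uri-template>
     </dataset> -->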

</property> <property>

<name>oozie.service.ELService.ext.constants.coord-job-submit-nofuncs</name>

<value> </value>

<description>

EL constant declarations, separated by commas, format is [PREFIX:]NAME=CLASS#CONSTANT.

This property is a convenience property to add extensions to the built in executors without having to

include all the built-in ones.

</description>

</property> <property>

<name>oozie.service.ELService.functions.coord-job-submit-nofuncs</name>

<value>

coord:conf=org.apache.oozie.coord.CoordELFunctions#coord_conf,

coord:user=org.apache.oozie.coord.CoordELFunctions#coord_user,

hadoop:conf=org.apache.oozie.action.hadoop.HadoopELFunctions#hadoop_conf

</value>

<description>

EL function declarations, separated by commas, format is [PREFIX:]NAME=CLASS#METHOD.

</description>

</property> <property>

<name>oozie.service.ELService.ext.functions.coord-job-submit-nofuncs</name>

<value> </value>

<description>

EL function declarations, separated by commas, format is [PREFIX:]NAME=CLASS#METHOD.

This property is a convenience property to add extensions to the built-in executors without having to

include all the built-in ones.

</description>

</property> <!-- EL Evaluator setup to **check** whether instances/start-instance/end-instance are valid

no EL functions will be resolved -->

<property>

<name>oozie.service.ELService.constants.coord-job-submit-instances</name>

<value> </value>

<description>

EL constant declarations, separated by commas, format is [PREFIX:]NAME=CLASS#CONSTANT.

</description>

</property> <property>

<name>oozie.service.ELService.ext.constants.coord-job-submit-instances</name>

<value> </value>

<description>

EL constant declarations, separated by commas, format is [PREFIX:]NAME=CLASS#CONSTANT.

This property is a convenience property to add extensions to the built in executors without having to

include all the built-in ones.

</description>

</property> <property>

<name>oozie.service.ELService.functions.coord-job-submit-instances</name>

<value>

coord:hoursInDay=org.apache.oozie.coord.CoordELFunctions#ph1_coord_hoursInDay_echo,

coord:daysInMonth=org.apache.oozie.coord.CoordELFunctions#ph1_coord_daysInMonth_echo,

coord:tzOffset=org.apache.oozie.coord.CoordELFunctions#ph1_coord_tzOffset_echo,

coord:current=org.apache.oozie.coord.CoordELFunctions#ph1_coord_current_echo,

coord:currentRange=org.apache.oozie.coord.CoordELFunctions#ph1_coord_currentRange_echo,

coord:offset=org.apache.oozie.coord.CoordELFunctions#ph1_coord_offset_echo,

coord:latest=org.apache.oozie.coord.CoordELFunctions#ph1_coord_latest_echo,

coord:latestRange=org.apache.oozie.coord.CoordELFunctions#ph1_coord_latestRange_echo,

coord:future=org.apache.oozie.coord.CoordELFunctions#ph1_coord_future_echo,

coord:futureRange=org.apache.oozie.coord.CoordELFunctions#ph1_coord_futureRange_echo,

coord:formatTime=org.apache.oozie.coord.CoordELFunctions#ph1_coord_formatTime_echo,

coord:conf=org.apache.oozie.coord.CoordELFunctions#coord_conf,

coord:user=org.apache.oozie.coord.CoordELFunctions#coord_user,

coord:absolute=org.apache.oozie.coord.CoordELFunctions#ph1_coord_absolute_echo,

hadoop:conf=org.apache.oozie.action.hadoop.HadoopELFunctions#hadoop_conf

</value>

<description>

EL function declarations, separated by commas, format is [PREFIX:]NAME=CLASS#METHOD.

</description>
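<!-- Example (a sketch; the input and dataset names are hypothetical): at
     submission these *_echo variants only check that instance expressions
     are well formed. A coordinator input such as
     <data-in name="input" dataset="logs">
       <start-instance>${coord:current(-6)}</start-instance>
       <end-instance>${coord:current(0)}</end-instance>
     </data-in>
     would select the latest seven instances of the dataset, counting the
     current one. -->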

</property> <property>

<name>oozie.service.ELService.ext.functions.coord-job-submit-instances</name>

<value>

</value>

<description>

EL function declarations, separated by commas, format is [PREFIX:]NAME=CLASS#METHOD.

This property is a convenience property to add extensions to the built-in executors without having to

include all the built-in ones.

</description>

</property> <!-- EL Evaluator setup to **check** whether dataIn and dataOut are valid

no EL functions will be resolved --> <property>

<name>oozie.service.ELService.constants.coord-job-submit-data</name>