吴裕雄 Python neural networks: training a neural network with TensorFlow, without a hidden layer

import tensorflow as tf

from tensorflow.examples.tutorials.mnist import input_data INPUT_NODE = 784 # 输入节点

OUTPUT_NODE = 10 # 输出节点

BATCH_SIZE = 100 # 每次batch打包的样本个数 # 模型相关的参数

LEARNING_RATE_BASE = 0.01

LEARNING_RATE_DECAY = 0.99

REGULARAZTION_RATE = 0.0001

TRAINING_STEPS = 5000

MOVING_AVERAGE_DECAY = 0.99 def inference(input_tensor, avg_class, weights1, biases1):

# 不使用滑动平均类

if avg_class == None:

layer1 = tf.nn.relu(tf.matmul(input_tensor, weights1) + biases1)

return layer1

else:

# 使用滑动平均类

layer1 = tf.nn.relu(tf.matmul(input_tensor, avg_class.average(weights1)) + avg_class.average(biases1))

return layer1 def train(mnist):

x = tf.placeholder(tf.float32, [None, INPUT_NODE], name='x-input')

y_ = tf.placeholder(tf.float32, [None, OUTPUT_NODE], name='y-input')

# 生成输出层的参数。

weights1 = tf.Variable(tf.truncated_normal([INPUT_NODE, OUTPUT_NODE], stddev=0.1))

biases1 = tf.Variable(tf.constant(0.1, shape=[OUTPUT_NODE])) # 计算不含滑动平均类的前向传播结果

y = inference(x, None, weights1, biases1) # 定义训练轮数及相关的滑动平均类

global_step = tf.Variable(0, trainable=False)

variable_averages = tf.train.ExponentialMovingAverage(MOVING_AVERAGE_DECAY, global_step)

variables_averages_op = variable_averages.apply(tf.trainable_variables())

average_y = inference(x, variable_averages, weights1, biases1) # 计算交叉熵及其平均值

cross_entropy = tf.nn.sparse_softmax_cross_entropy_with_logits(logits=y, labels=tf.argmax(y_, 1))

cross_entropy_mean = tf.reduce_mean(cross_entropy) # 损失函数的计算

regularizer = tf.contrib.layers.l2_regularizer(REGULARAZTION_RATE)

regularaztion = regularizer(weights1)

loss = cross_entropy_mean + regularaztion # 设置指数衰减的学习率。

learning_rate = tf.train.exponential_decay(

LEARNING_RATE_BASE,

global_step,

mnist.train.num_examples / BATCH_SIZE,

LEARNING_RATE_DECAY,

staircase=True) # 优化损失函数

train_step = tf.train.GradientDescentOptimizer(learning_rate).minimize(loss, global_step=global_step) # 反向传播更新参数和更新每一个参数的滑动平均值

with tf.control_dependencies([train_step, variables_averages_op]):

train_op = tf.no_op(name='train') # 计算正确率

correct_prediction = tf.equal(tf.argmax(average_y, 1), tf.argmax(y_, 1))

accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32)) # 初始化会话,并开始训练过程。

with tf.Session() as sess:

tf.global_variables_initializer().run()

validate_feed = {x: mnist.validation.images, y_: mnist.validation.labels}

test_feed = {x: mnist.test.images, y_: mnist.test.labels} # 循环的训练神经网络。

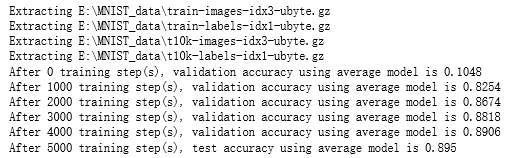

for i in range(TRAINING_STEPS):

if i % 1000 == 0:

validate_acc = sess.run(accuracy, feed_dict=validate_feed)

print("After %d training step(s), validation accuracy using average model is %g " % (i, validate_acc))

xs,ys=mnist.train.next_batch(BATCH_SIZE)

sess.run(train_op,feed_dict={x:xs,y_:ys})

test_acc=sess.run(accuracy,feed_dict=test_feed)

print(("After %d training step(s), test accuracy using average model is %g" %(TRAINING_STEPS, test_acc))) def main(argv=None):

mnist = input_data.read_data_sets("E:\\MNIST_data\\", one_hot=True)

train(mnist) if __name__=='__main__':

main()
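The loss in `train` combines two pieces. The first is the mean softmax cross-entropy: `tf.argmax(y_, 1)` turns each one-hot label into a class index because `sparse_softmax_cross_entropy_with_logits` expects integer labels. The second is an L2 penalty: `tf.contrib.layers.l2_regularizer(scale)` returns `scale * tf.nn.l2_loss(w)`, i.e. `scale * sum(w**2) / 2`. Below is a minimal pure-Python sketch of that arithmetic for checking small cases by hand. The helper names (`softmax_cross_entropy`, `argmax`, `l2_penalty`, `total_loss`) are hypothetical and are not part of TensorFlow.

```python
import math


def softmax_cross_entropy(logits, label_index):
    """Cross-entropy of one example: -log(softmax(logits)[label_index])."""
    # Subtract the largest logit before exponentiating, for numerical stability.
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[label_index]


def argmax(values):
    """Index of the largest entry, like tf.argmax along one row."""
    return max(range(len(values)), key=lambda i: values[i])


def l2_penalty(weights, scale):
    """scale * sum(w^2) / 2, the value l2_regularizer(scale) produces."""
    return scale * sum(w * w for row in weights for w in row) / 2


def total_loss(batch_logits, batch_one_hot, weights, scale):
    """Mean cross-entropy over the batch plus the L2 penalty on the weights."""
    # One-hot labels -> class indices, mirroring tf.argmax(y_, 1).
    losses = [softmax_cross_entropy(z, argmax(y))
              for z, y in zip(batch_logits, batch_one_hot)]
    return sum(losses) / len(losses) + l2_penalty(weights, scale)


if __name__ == "__main__":
    # Two equal logits give probability 0.5, so the cross-entropy part is log(2);
    # the penalty part is 0.5 * (1 + 4) / 2 = 1.25.
    print(total_loss([[0.0, 0.0]], [[1, 0]], [[1.0, 2.0]], 0.5))
```

The factor of 1/2 comes from `tf.nn.l2_loss`, so `REGULARIZATION_RATE` in the script scales half the sum of squared weights.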
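`tf.train.ExponentialMovingAverage` keeps a shadow copy of each variable and updates it as `shadow = decay * shadow + (1 - decay) * variable`. Because the script passes `global_step` as `num_updates`, the decay actually used is `min(MOVING_AVERAGE_DECAY, (1 + step) / (10 + step))`. The average therefore tracks the raw weights closely early in training and only smooths strongly later. `average_y` evaluates the model with these shadow values. The following is a hedged sketch of the update rule in plain Python, using scalars in place of tensors. The helper names and the example values are hypothetical.

```python
def effective_decay(decay, num_updates=None):
    """Decay used by tf.train.ExponentialMovingAverage for a given step count."""
    if num_updates is None:
        return decay
    # With num_updates, early steps use a smaller decay so the average warms up quickly.
    return min(decay, (1 + num_updates) / (10 + num_updates))


def update_shadow(shadow, value, decay, num_updates=None):
    """One moving-average update: shadow = d * shadow + (1 - d) * value."""
    d = effective_decay(decay, num_updates)
    return d * shadow + (1 - d) * value


if __name__ == "__main__":
    MOVING_AVERAGE_DECAY = 0.99
    # Hypothetical scenario: a weight that starts at 0.0 (so its shadow starts
    # at 0.0 too) and is then held at 5.0 by training.
    shadow = 0.0
    for step, value in enumerate([5.0, 5.0, 5.0]):
        shadow = update_shadow(shadow, value, MOVING_AVERAGE_DECAY, num_updates=step)
        print(step, effective_decay(MOVING_AVERAGE_DECAY, step), shadow)
```

At step 0 the effective decay is only 0.1, so the shadow jumps most of the way to the new value. Once `(1 + step) / (10 + step)` exceeds 0.99, the configured `MOVING_AVERAGE_DECAY` takes over.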
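With `staircase=True`, `tf.train.exponential_decay` computes `LEARNING_RATE_BASE * LEARNING_RATE_DECAY ** floor(global_step / decay_steps)`, so the rate changes once per pass over the training set. Here `decay_steps` is `mnist.train.num_examples / BATCH_SIZE`. Assuming the default 55,000-image training split produced by `input_data.read_data_sets`, that works out to 550 steps. A minimal sketch of the schedule follows. The function is a hypothetical stand-in, not the TensorFlow implementation.

```python
import math


def exponential_decay(base_lr, global_step, decay_steps, decay_rate, staircase=False):
    """base_lr * decay_rate ** (global_step / decay_steps), floored when staircase=True."""
    p = global_step / decay_steps
    if staircase:
        # Integer exponent: the rate drops in discrete steps every decay_steps steps.
        p = math.floor(p)
    return base_lr * decay_rate ** p


if __name__ == "__main__":
    # Assumes 55,000 training images and BATCH_SIZE = 100, i.e. decay_steps = 550.
    decay_steps = 55000 / 100
    for step in (0, 549, 550, 4999):
        print(step, exponential_decay(0.01, step, decay_steps, 0.99, staircase=True))
```

Under that assumption, the last training step has exponent floor(4999 / 550) = 9. Over the 5,000 steps the rate therefore falls only from 0.01 to roughly 0.0091.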
