Wu Yuxiong Python Machine Learning - NBYS (1)

import numpy as np def loadDataSet():

postingList=[['my', 'dog', 'has', 'flea', 'problems', 'help', 'please'],

['maybe', 'not', 'take', 'him', 'to', 'dog', 'park', 'stupid'],

['my', 'dalmation', 'is', 'so', 'cute', 'I', 'love', 'him'],

['stop', 'posting', 'stupid', 'worthless', 'garbage'],

['mr', 'licks', 'ate', 'my', 'steak', 'how', 'to', 'stop', 'him'],

['quit', 'buying', 'worthless', 'dog', 'food', 'stupid']]

classVec = [0,1,0,1,0,1]

return postingList,classVec def createVocabList(dataSet):

vocabSet = set([])

for document in dataSet:

vocabSet = vocabSet | set(document)

return list(vocabSet) def setOfWords2Vec(vocabList, inputSet):

returnVec = [0]*len(vocabList)

for word in inputSet:

if word in vocabList:

returnVec[vocabList.index(word)] = 1

else:

print("the word: %s is not in my Vocabulary!" % word)

return returnVec def trainNB0(trainMatrix,trainCategory):

numTrainDocs = len(trainMatrix)

numWords = len(trainMatrix[0])

pAbusive = sum(trainCategory)/float(numTrainDocs)

p0Num = np.ones(numWords)

p1Num = np.ones(numWords)

p0Denom = 2.0

p1Denom = 2.0

for i in range(numTrainDocs):

if(trainCategory[i] == 1):

p1Num += trainMatrix[i]

p1Denom += sum(trainMatrix[i])

else:

p0Num += trainMatrix[i]

p0Denom += sum(trainMatrix[i])

p1Vect = np.log(p1Num/p1Denom)

p0Vect = np.log(p0Num/p0Denom)

return p0Vect,p1Vect,pAbusive def classifyNB(vec2Classify, p0Vec, p1Vec, pClass1):

p1 = sum(vec2Classify * p1Vec) + np.log(pClass1)

p0 = sum(vec2Classify * p0Vec) + np.log(1.0 - pClass1)

if p1 > p0:

return 1

else:

return 0 def bagOfWords2VecMN(vocabList, inputSet):

returnVec = [0]*len(vocabList)

for word in inputSet:

if(word in vocabList):

returnVec[vocabList.index(word)] += 1

return returnVec def testingNB():

listOPosts,listClasses = loadDataSet()

myVocabList = createVocabList(listOPosts)

trainMat=[]

for postinDoc in listOPosts:

trainMat.append(setOfWords2Vec(myVocabList, postinDoc))

p0V,p1V,pAb = trainNB0(np.array(trainMat),np.array(listClasses))

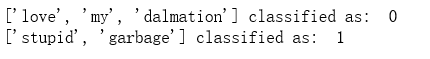

testEntry = ['love', 'my', 'dalmation']

thisDoc = np.array(setOfWords2Vec(myVocabList, testEntry))

print(testEntry,'classified as: ',classifyNB(thisDoc,p0V,p1V,pAb))

testEntry = ['stupid', 'garbage']

thisDoc = np.array(setOfWords2Vec(myVocabList, testEntry))

print(testEntry,'classified as: ',classifyNB(thisDoc,p0V,p1V,pAb)) testingNB()
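trainNB0 returns log probabilities, and classifyNB adds them up instead of multiplying raw probabilities. The following is a minimal sketch of why that matters. The probability value and the number of words are made up for illustration and do not come from the training data above.

```python
import math

# Hypothetical per-word conditional probabilities (illustrative values only).
probs = [1e-3] * 500

# Multiplying many small factors underflows to 0.0 in double precision.
naive_product = math.prod(probs)

# Summing the logarithms keeps the score finite and still comparable
# between classes.
log_score = sum(math.log(p) for p in probs)

print(naive_product)
print(log_score)
```

Because the logarithm is monotonic, comparing the summed log scores of two classes gives the same decision as comparing the products would, without the underflow.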

import re

import numpy as np


def createVocabList(dataSet):
    # Union of all words seen in any document.
    vocabSet = set([])
    for document in dataSet:
        vocabSet = vocabSet | set(document)
    return list(vocabSet)


def bagOfWords2VecMN(vocabList, inputSet):
    # Bag-of-words model: count how often each vocabulary word occurs.
    returnVec = [0] * len(vocabList)
    for word in inputSet:
        if word in vocabList:
            returnVec[vocabList.index(word)] += 1
    return returnVec


def trainNB0(trainMatrix, trainCategory):
    numTrainDocs = len(trainMatrix)
    numWords = len(trainMatrix[0])
    # Prior probability of class 1.
    pAbusive = sum(trainCategory) / float(numTrainDocs)
    # Start counts at 1 and denominators at 2 so that no word gets probability 0.
    p0Num = np.ones(numWords)
    p1Num = np.ones(numWords)
    p0Denom = 2.0
    p1Denom = 2.0
    for i in range(numTrainDocs):
        if trainCategory[i] == 1:
            p1Num += trainMatrix[i]
            p1Denom += sum(trainMatrix[i])
        else:
            p0Num += trainMatrix[i]
            p0Denom += sum(trainMatrix[i])
    p1Vect = np.log(p1Num / p1Denom)
    p0Vect = np.log(p0Num / p0Denom)
    return p0Vect, p1Vect, pAbusive


def classifyNB(vec2Classify, p0Vec, p1Vec, pClass1):
    # Same classifier as in the first listing; spamTest() calls it,
    # so it is repeated here to keep this script self-contained.
    p1 = sum(vec2Classify * p1Vec) + np.log(pClass1)
    p0 = sum(vec2Classify * p0Vec) + np.log(1.0 - pClass1)
    if p1 > p0:
        return 1
    else:
        return 0


def textParse(bigString):
    # Split on runs of non-word characters. Use \W+ rather than \W*:
    # on Python 3.7+ a pattern that can match the empty string splits
    # between every character, which would leave no token longer than 2.
    listOfTokens = re.split(r'\W+', bigString)
    return [tok.lower() for tok in listOfTokens if len(tok) > 2]


def spamTest():
    docList = []
    classList = []
    fullText = []
    # 25 spam and 25 ham e-mails; label 1 = spam, 0 = ham.
    for i in range(1, 26):
        with open('D:\\LearningResource\\machinelearninginaction\\Ch04\\email\\spam\\%d.txt' % i) as f:
            wordList = textParse(f.read())
        docList.append(wordList)
        fullText.extend(wordList)
        classList.append(1)
        with open('D:\\LearningResource\\machinelearninginaction\\Ch04\\email\\ham\\%d.txt' % i) as f:
            wordList = textParse(f.read())
        docList.append(wordList)
        fullText.extend(wordList)
        classList.append(0)
    vocabList = createVocabList(docList)

    # Hold out 10 randomly chosen documents as the test set.
    trainingSet = list(range(50))
    testSet = []
    for i in range(10):
        randIndex = int(np.random.uniform(0, len(trainingSet)))
        testSet.append(trainingSet[randIndex])
        del trainingSet[randIndex]

    trainMat = []
    trainClasses = []
    for docIndex in trainingSet:
        trainMat.append(bagOfWords2VecMN(vocabList, docList[docIndex]))
        trainClasses.append(classList[docIndex])
    p0V, p1V, pSpam = trainNB0(np.array(trainMat), np.array(trainClasses))

    errorCount = 0
    for docIndex in testSet:
        wordVector = bagOfWords2VecMN(vocabList, docList[docIndex])
        if classifyNB(np.array(wordVector), p0V, p1V, pSpam) != classList[docIndex]:
            errorCount += 1
            print("classification error", docList[docIndex])
    print('the error rate is: ', float(errorCount) / len(testSet))


spamTest()
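textParse tokenizes an e-mail by splitting on non-word characters, lowercasing, and dropping tokens of two characters or fewer. Below is a minimal sketch of that step using `\W+` as the delimiter. The function name `text_parse` and the sample sentence are hypothetical and used only for illustration. On Python 3.7 and later, a delimiter that can match the empty string (such as `\W*`) also splits between every character, so no token would survive the length filter.

```python
import re


def text_parse(big_string):
    # Split on one or more non-word characters (punctuation, whitespace).
    tokens = re.split(r'\W+', big_string)
    # Keep lowercased tokens longer than two characters.
    return [tok.lower() for tok in tokens if len(tok) > 2]


# Made-up sample text, not taken from the e-mail data set.
sample = "Hi Peter, the Python course starts at 9:00!"
print(text_parse(sample))
# → ['peter', 'the', 'python', 'course', 'starts']
```

Short tokens such as "Hi", "at", "9" and "00" are filtered out, as is the empty string that `re.split` produces after the trailing "!".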
