Here we go! Deploying a Kubernetes 1.11.7 cluster from binaries

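Kubernetes container cluster management

Introduction to Kubernetes

Kubernetes is a container cluster management system that Google open-sourced in June 2014. It is written in Go and is also known as K8S.

K8S grew out of Borg, Google's internal container cluster management system, which had been running in large-scale production at Google for about ten years.

K8S is mainly used to automate the deployment, scaling, and management of containerized applications. It provides a complete set of features, including resource scheduling, deployment management, service discovery, scaling out and in, and monitoring.

Kubernetes v1.0 was officially released in July 2015.

The goal of Kubernetes is to make deploying containerized applications simple and efficient.

Official website: www.kubernetes.io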

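Main features of Kubernetes

- Data volumes

Containers in a Pod can share data through data volumes.

- Application health checks

A service inside a container may hang and stop handling requests. Health check policies can be configured to keep the application robust.

- Replicated application instances

Controllers maintain the number of Pod replicas, ensuring that a Pod, or a group of Pods of the same kind, is always available in the desired number.

- Elastic scaling

Automatically scales the number of Pod replicas according to a configured metric (such as CPU utilization).

- Service discovery

Programs in containers can discover the access address of a Pod entry point through environment variables or a DNS add-on.

- Load balancing

A group of Pod replicas is assigned a private cluster IP address, and requests are load-balanced to the backend containers. Other Pods inside the cluster can reach the application through this ClusterIP.

- Rolling updates

Services are updated without interruption, one Pod at a time, rather than deleting the whole service at once.

- Service orchestration

Services are deployed from declarative files, which makes application deployment more efficient.

- Resource monitoring

The Node components integrate the cAdvisor resource collection tool. Heapster can aggregate resource data from all cluster nodes and store it in the InfluxDB time-series database, and Grafana displays it.

- Authentication and authorization

Supports authentication and authorization policies such as role-based access control (RBAC).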

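Basic object concepts

Basic objects:

- Pod

A Pod is the smallest deployable unit. It consists of one or more containers that share storage and network and run on the same Docker host.

- Service

A Service is an abstraction of an application service. It defines a logical set of Pods and a policy for accessing them.

A Service fronts a set of Pods as a single access entry point and is assigned a cluster IP address. Requests sent to this IP are load-balanced to the containers in the backend Pods.

A Service uses a label selector to choose the group of Pods it serves.

- Volume

A data volume, which shares data used by the containers in a Pod.

- Namespace

Namespaces logically divide objects into separate groups, for example by project or by user, so that they can be managed separately and given their own control policies. This is how multi-tenancy is achieved.

A namespace is also called a virtual cluster.

- Label

Labels are key/value pairs used to distinguish objects (such as Pods and Services). Each object can carry multiple labels, and objects are associated with each other through labels.

Higher-level abstractions built on the basic objects:

- ReplicaSet

The next-generation Replication Controller. It ensures that the specified number of Pod replicas is running at any given time and provides features such as declarative updates.

The only difference between an RC and an RS is label selector support: an RS supports the newer set-based selectors, while an RC supports only equality-based selectors.

- Deployment

A Deployment is a higher-level API object that manages ReplicaSets and Pods and provides features such as declarative updates.

The official recommendation is to manage ReplicaSets through Deployments rather than using ReplicaSets directly, which means you may never need to manipulate a ReplicaSet object yourself.

- StatefulSet

A StatefulSet suits applications that keep persistent state. It provides stable, unique network identifiers, persistent storage, and ordered deployment, scaling, deletion, and rolling updates.

- DaemonSet

A DaemonSet ensures that all (or some) nodes run a copy of the same Pod. When a node joins the Kubernetes cluster, the Pod is scheduled onto it. When a node is removed from the cluster, the DaemonSet's Pod on it is deleted. Deleting a DaemonSet cleans up all the Pods it created.

- Job

A one-off task. When it finishes, the Pod is torn down and no new container is started. Tasks can also be run on a schedule.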

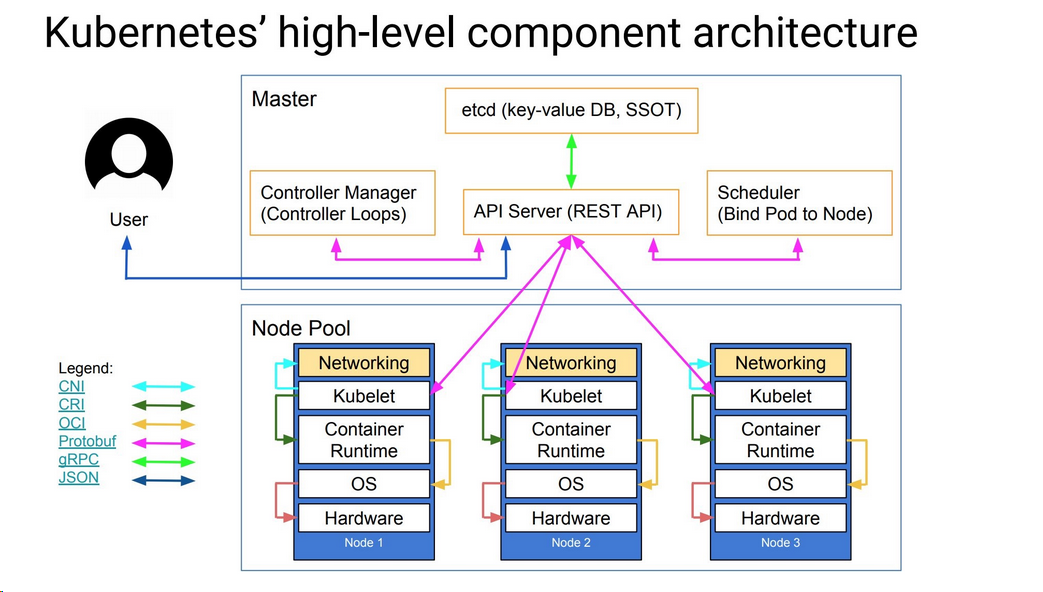

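System architecture and component functions

Master components:

- kube-apiserver

The Kubernetes API: the single entry point to the cluster and the coordinator of all components. It exposes its services as an HTTP API. Every create, read, update, delete, and watch operation on object resources is handled by the API server and then persisted to etcd.

- kube-controller-manager

Handles routine background tasks in the cluster. Each resource has a corresponding controller, and the controller manager is responsible for managing these controllers.

- kube-scheduler

Selects a Node for each newly created Pod according to the scheduling algorithm.

Node components:

- kubelet

The kubelet is the master's agent on each Node. It manages the lifecycle of the containers running on that machine: creating containers, mounting data volumes for Pods, downloading secrets, reporting container and node status, and so on. The kubelet turns each Pod into a set of containers.

- kube-proxy

Implements the Pod network proxy on each Node, maintaining network rules and layer-4 load balancing.

- docker or rocket/rkt

Runs the containers.

Third-party services:

- etcd

A distributed key-value store. It holds the cluster state, such as information about Pod and Service objects.

The architecture diagram clearly shows the design of Kubernetes and the communication protocols between its components.

Alright, enough talk...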

集群部署

1、环境规划

2、安装Docker

3、自签TLS证书

4、部署Etcd集群

5、部署Flannel网络

6、创建Node节点kubeconfig文件

7、获取K8S二进制包

8、运行Master组件

9、运行Node组件

10、查询集群状态

11、启动一个测试示例

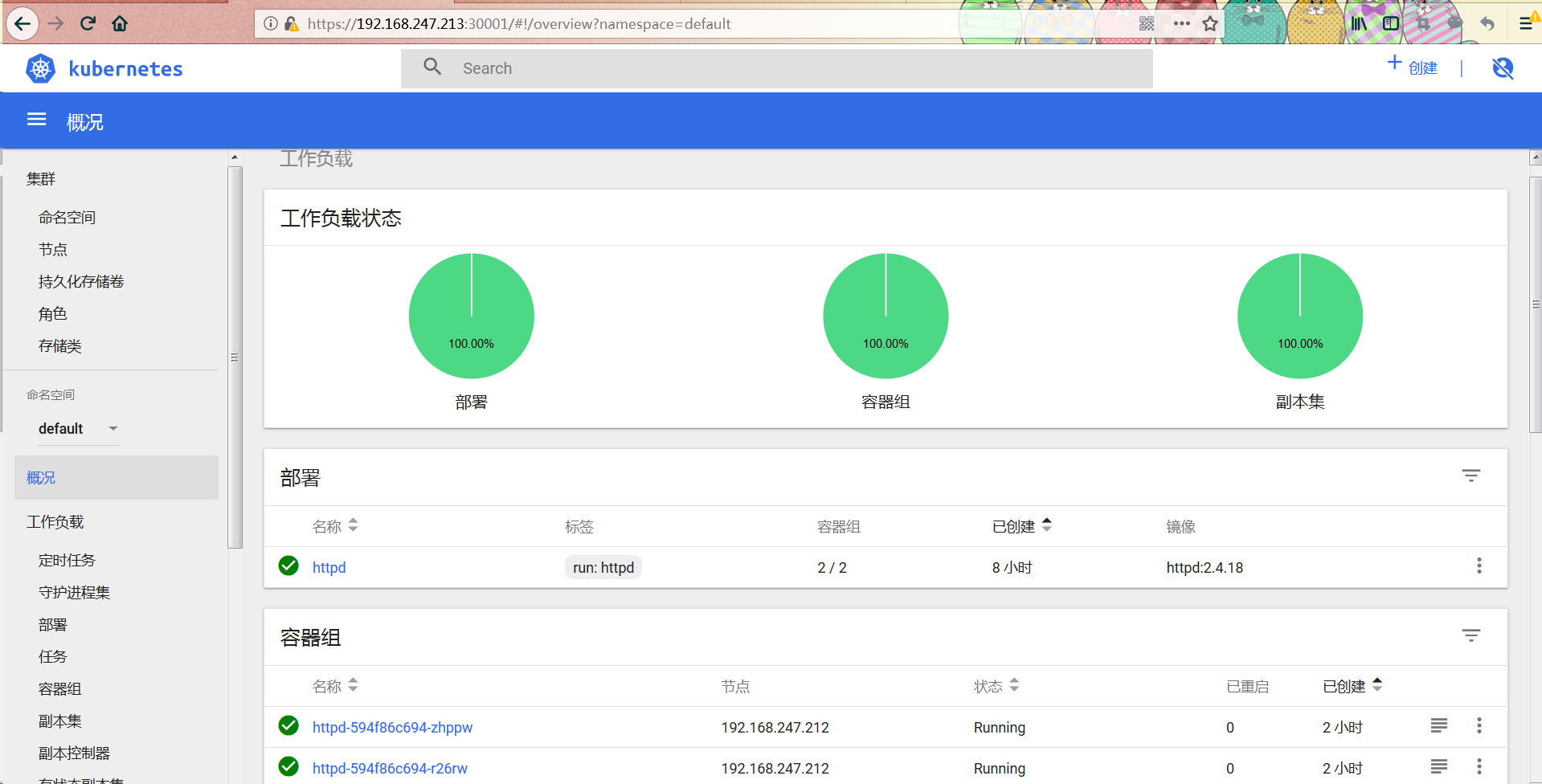

12、部署Web UI (Dashboard)


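| Role | IP | Components | Recommended configuration |
| --- | --- | --- | --- |
| master | 192.168.247.211 | kube-apiserver kube-controller-manager kube-scheduler etcd | CPU: 2+ cores, memory: 2+ GB |
| node01 | 192.168.247.212 | kubelet kube-proxy docker flannel etcd | |
| node02 | 192.168.247.213 | kubelet kube-proxy docker flannel etcd | |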

| Software | Version |
| --- | --- |
| Linux operating system | CentOS7.4_x64 |
| Kubernetes | 1.11.7 |
| Docker | 17.03.2-ce |
| Etcd | 3.2.12 |

Kubernetes releases: https://github.com/kubernetes/kubernetes/releases

System environment preparation

cat <<EOF >>/etc/hosts

192.168.247.211 master

192.168.247.212 node01

192.168.247.213 node02

EOF

systemctl stop firewalld

systemctl disable firewalld

sed -i 's/SELINUX=enforcing/SELINUX=disabled/g' /etc/selinux/config

swapoff -a

sed -i 's/\/dev\/mapper\/centos-swap/\#\/dev\/mapper\/centos-swap/g' /etc/fstab

yum -y install ntp

systemctl enable ntpd

systemctl start ntpd

ntpdate -u cn.pool.ntp.org

hwclock --systohc

timedatectl set-timezone Asia/Shanghai

yum install wget vim lsof net-tools lrzsz -y

curl -o /etc/yum.repos.d/CentOS-Base.repo http://mirrors.aliyun.com/repo/Centos-7.repo

wget -O /etc/yum.repos.d/epel.repo http://mirrors.aliyun.com/repo/epel-7.repo

yum makecache

# Set resource limits and kernel parameters

echo "* soft nofile 190000" >> /etc/security/limits.conf

echo "* hard nofile 200000" >> /etc/security/limits.conf

echo "* soft nproc 252144" >> /etc/security/limits.conf

echo "* hard nproc 262144" >> /etc/security/limits.conf

tee /etc/sysctl.conf <<-'EOF'

# System default settings live in /usr/lib/sysctl.d/00-system.conf.

# To override those settings, enter new settings here, or in an /etc/sysctl.d/<name>.conf file

#

# For more information, see sysctl.conf(5) and sysctl.d(5).

net.ipv4.tcp_tw_recycle = 0

net.ipv4.ip_local_port_range = 10000 61000

net.ipv4.tcp_syncookies = 1

net.ipv4.tcp_fin_timeout = 30

net.ipv4.ip_forward = 1

net.core.netdev_max_backlog = 2000

net.ipv4.tcp_mem = 131072 262144 524288

net.ipv4.tcp_keepalive_intvl = 30

net.ipv4.tcp_keepalive_probes = 3

net.ipv4.tcp_window_scaling = 1

net.ipv4.tcp_syncookies = 1

net.ipv4.tcp_max_syn_backlog = 2048

net.ipv4.tcp_low_latency = 0

net.core.rmem_default = 256960

net.core.rmem_max = 513920

net.core.wmem_default = 256960

net.core.wmem_max = 513920

net.core.somaxconn = 2048

net.core.optmem_max = 81920

net.ipv4.tcp_mem = 131072 262144 524288

net.ipv4.tcp_rmem = 8760 256960 4088000

net.ipv4.tcp_wmem = 8760 256960 4088000

net.ipv4.tcp_keepalive_time = 1800

net.ipv4.tcp_sack = 1

net.ipv4.tcp_fack = 1

net.ipv4.tcp_timestamps = 1

net.ipv4.tcp_syn_retries = 1

EOF

cat > /etc/sysctl.d/k8s.conf << EOF

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

EOF

sysctl --system

sysctl -p

reboot
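The `sed` command in the preparation steps only comments out a swap entry named `/dev/mapper/centos-swap`. On hosts where the swap device has a different name, a more general pattern may help. The following is a minimal sketch and not part of the original procedure: the helper name `comment_out_swap` is made up for illustration, and it prints the edited file to standard output instead of changing `/etc/fstab` in place.

```shell
#!/bin/sh
# comment_out_swap FILE
# Hypothetical helper: print FILE with every active swap entry commented out.
# A line counts as a swap entry when "swap" appears as a whitespace-delimited
# field after the device name; lines that already start with '#' are untouched.
comment_out_swap() {
    sed -E '/^[^#].*[[:space:]]swap[[:space:]]/ s/^/#/' "$1"
}

# Demo on a throwaway file rather than the real /etc/fstab.
demo_fstab=$(mktemp)
cat > "$demo_fstab" <<'EOF'
/dev/mapper/centos-root /    xfs  defaults 0 0
/dev/mapper/centos-swap swap swap defaults 0 0
EOF
comment_out_swap "$demo_fstab"
rm -f "$demo_fstab"
```

To apply it for real, one option is to write the result to a new file (for example `comment_out_swap /etc/fstab > /etc/fstab.new`), review it, and only then replace the original.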

Cluster deployment - Install Docker

# Step 1: Install the required system tools

yum install -y yum-utils device-mapper-persistent-data lvm2 unzip

# Step 2: Add the package repository

yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

# Step 3: Refresh the cache and install Docker CE

yum makecache fast

yum install https://download.docker.com/linux/centos/7/x86_64/stable/Packages/docker-ce-selinux-17.03.2.ce-1.el7.centos.noarch.rpm -y

yum install docker-ce-17.03.2.ce-1.el7.centos -y

# Step 4: Start the Docker service

service docker start

systemctl enable docker

# Notes:

# By default the official repository only enables the latest stable packages. You can edit the repository file to get other versions. For example, the test repository is not enabled by default; you can enable it as shown below, and the same applies to the other test channels.

# vim /etc/yum.repos.d/docker-ce.repo

# Change enabled=0 under [docker-ce-test] to enabled=1

#

# Installing a specific version of Docker CE:

# Step 1: List the available Docker CE versions:

# yum list docker-ce.x86_64 --showduplicates | sort -r

# Loading mirror speeds from cached hostfile

# Loaded plugins: branch, fastestmirror, langpacks

# docker-ce.x86_64 17.03.1.ce-1.el7.centos docker-ce-stable

# docker-ce.x86_64 17.03.1.ce-1.el7.centos @docker-ce-stable

# docker-ce.x86_64 17.03.0.ce-1.el7.centos docker-ce-stable

# Available Packages

# Step 2: Install the chosen version (VERSION is, for example, 17.03.0.ce.1-1.el7.centos from the list above)

# sudo yum -y install docker-ce-[VERSION]

# When installing over the classic network or a VPC intranet, replace the repository command in Step 2 with one of the following

# Classic network:

# sudo yum-config-manager --add-repo http://mirrors.aliyuncs.com/docker-ce/linux/centos/docker-ce.repo

# VPC network:

# sudo yum-config-manager --add-repo http://mirrors.cloud.aliyuncs.com/docker-ce/linux/centos/docker-ce.repo

# Configure a registry mirror

cat << EOF > /etc/docker/daemon.json

{

"registry-mirrors": [ "https://registry.docker-cn.com"],

"insecure-registries":["192.168.247.210:5000"]

}

EOF

Cluster deployment - Self-signed TLS certificates

| Component | Certificates used |
| --- | --- |
| etcd | ca.pem, server.pem, server-key.pem |
| flannel | ca.pem, server.pem, server-key.pem |
| kube-apiserver | ca.pem, server.pem, server-key.pem |
| kubelet | ca.pem, ca-key.pem |
| kube-proxy | ca.pem, kube-proxy.pem, kube-proxy-key.pem |
| kubectl | ca.pem, admin.pem, admin-key.pem |

Install the certificate generation tool cfssl on the master:

mkdir ssl;cd ssl

wget https://pkg.cfssl.org/R1.2/cfssl_linux-amd64

wget https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64 --no-check-certificate

wget https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64

chmod +x cfssl_linux-amd64 cfssljson_linux-amd64 cfssl-certinfo_linux-amd64

mv cfssl_linux-amd64 /usr/local/bin/cfssl

mv cfssljson_linux-amd64 /usr/local/bin/cfssljson

mv cfssl-certinfo_linux-amd64 /usr/bin/cfssl-certinfo

Run certificate.sh to generate the certificates:

[root@master ssl]# cat certificate.sh
cat > ca-config.json <<EOF
{
  "signing": {
    "default": {
      "expiry": "87600h"
    },
    "profiles": {
      "kubernetes": {
        "expiry": "87600h",
        "usages": [
          "signing",
          "key encipherment",
          "server auth",
          "client auth"
        ]
      }
    }
  }
}
EOF

cat > ca-csr.json <<EOF
{
  "CN": "kubernetes",
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "L": "Beijing",
      "ST": "Beijing",
      "O": "k8s",
      "OU": "System"
    }
  ]
}
EOF

cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

#-----------------------

cat > server-csr.json <<EOF
{
  "CN": "kubernetes",
  "hosts": [
    "127.0.0.1",
    "192.168.247.211",
    "192.168.247.212",
    "192.168.247.213",
    "10.10.10.1",
    "kubernetes",
    "kubernetes.default",
    "kubernetes.default.svc",
    "kubernetes.default.svc.cluster",
    "kubernetes.default.svc.cluster.local"
  ],
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "L": "BeiJing",
      "ST": "BeiJing",
      "O": "k8s",
      "OU": "System"
    }
  ]
}
EOF

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes server-csr.json | cfssljson -bare server

#-----------------------

cat > admin-csr.json <<EOF
{
  "CN": "admin",
  "hosts": [],
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "L": "BeiJing",
      "ST": "BeiJing",
      "O": "system:masters",
      "OU": "System"
    }
  ]
}
EOF

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes admin-csr.json | cfssljson -bare admin

#-----------------------

cat > kube-proxy-csr.json <<EOF
{
  "CN": "system:kube-proxy",
  "hosts": [],
  "key": {
    "algo": "rsa",
    "size": 2048
  },
  "names": [
    {
      "C": "CN",
      "L": "BeiJing",
      "ST": "BeiJing",
      "O": "k8s",
      "OU": "System"
    }
  ]
}
EOF

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

Note: make a copy of the ssl directory at this point, because the generated certificates are needed again later for RBAC authorization!

Then run the following command to keep only the pem certificates:

ls |grep -v "pem"|xargs rm -fr
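The cleanup command above parses `ls` output and keeps any name that merely contains the string "pem". A somewhat safer variant can be sketched with `find`; this is an illustrative alternative rather than the author's command. The helper name `keep_only_pem` is hypothetical, and unlike `rm -fr` it only removes regular files at the top level of the directory and leaves subdirectories alone.

```shell
#!/bin/sh
# keep_only_pem DIR
# Hypothetical helper: delete every regular file directly inside DIR whose
# name does not end in ".pem". Subdirectories are not touched.
keep_only_pem() {
    find "$1" -maxdepth 1 -type f ! -name '*.pem' -exec rm -f {} +
}

# Demo in a scratch directory.
demo_dir=$(mktemp -d)
touch "$demo_dir/ca.pem" "$demo_dir/ca-key.pem" "$demo_dir/ca.csr" "$demo_dir/ca-config.json"
keep_only_pem "$demo_dir"
ls "$demo_dir"
rm -rf "$demo_dir"
```

In this guide the equivalent call would be `keep_only_pem /root/ssl`, run only after the directory has been backed up as noted above.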

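Cluster deployment - Deploy the etcd cluster

etcd is a highly available key-value store used mainly for shared configuration and service discovery. It is developed and maintained by CoreOS, was inspired by ZooKeeper and Doozer, is written in Go, and uses the Raft consensus algorithm for log replication to guarantee strong consistency. Raft is a consensus algorithm designed for log replication in distributed systems, and it achieves consistency through leader election. Google's container cluster management system Kubernetes, the open-source PaaS platform Cloud Foundry, and CoreOS's Fleet all make extensive use of etcd.

Managing state across nodes has always been a hard problem in distributed systems, and etcd looks as if it was designed specifically for service discovery and registration in clustered environments. It provides TTL-based data expiry, watches on data changes, multiple values, directory watches, and atomic operations for distributed locks, which make it easy to track and manage the state of cluster nodes.

Features of etcd:

- Simple: a user-facing API that works with curl (HTTP + JSON)

- Secure: optional SSL client certificate authentication

- Fast: 1,000 writes per second per instance

- Reliable: uses Raft to guarantee consistency

Binary download: https://github.com/coreos/etcd/releases/tag/v3.2.12

Deployment (master, node01, node02)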

mkdir -p /opt/kubernetes/{bin,cfg,ssl}

[root@master ~]# tar -xf etcd-v3.2.12-linux-amd64.tar.gz

[root@master ~]# mv etcd-v3.2.12-linux-amd64/etcd /opt/kubernetes/bin/

[root@master ~]# mv etcd-v3.2.12-linux-amd64/etcdctl /opt/kubernetes/bin/

[root@master ~]# cat /opt/kubernetes/cfg/etcd

#[Member]

ETCD_NAME="etcd01"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.247.211:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.247.211:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.247.211:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.247.211:2379"

ETCD_INITIAL_CLUSTER="etcd01=https://192.168.247.211:2380,etcd02=https://192.168.247.212:2380,etcd03=https://192.168.247.213:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

[root@master ~]# cat /usr/lib/systemd/system/etcd.service

[Unit]
Description=Etcd Server
After=network.target
After=network-online.target
Wants=network-online.target

[Service]
Type=notify
EnvironmentFile=-/opt/kubernetes/cfg/etcd
ExecStart=/opt/kubernetes/bin/etcd \
--name=${ETCD_NAME} \
--data-dir=${ETCD_DATA_DIR} \
--listen-peer-urls=${ETCD_LISTEN_PEER_URLS} \
--listen-client-urls=${ETCD_LISTEN_CLIENT_URLS},http://127.0.0.1:2379 \
--advertise-client-urls=${ETCD_ADVERTISE_CLIENT_URLS} \
--initial-advertise-peer-urls=${ETCD_INITIAL_ADVERTISE_PEER_URLS} \
--initial-cluster=${ETCD_INITIAL_CLUSTER} \
--initial-cluster-token=${ETCD_INITIAL_CLUSTER_TOKEN} \
--initial-cluster-state=new \
--cert-file=/opt/kubernetes/ssl/server.pem \
--key-file=/opt/kubernetes/ssl/server-key.pem \
--peer-cert-file=/opt/kubernetes/ssl/server.pem \
--peer-key-file=/opt/kubernetes/ssl/server-key.pem \
--trusted-ca-file=/opt/kubernetes/ssl/ca.pem \
--peer-trusted-ca-file=/opt/kubernetes/ssl/ca.pem
Restart=on-failure
LimitNOFILE=65536

[Install]
WantedBy=multi-user.target

[root@master ~]# cp ssl/server*pem ssl/ca*.pem /opt/kubernetes/ssl/

# Set up passwordless SSH login

ssh-keygen

ssh-copy-id -i /root/.ssh/id_rsa.pub 192.168.247.212

ssh-copy-id -i /root/.ssh/id_rsa.pub 192.168.247.213

[root@master ~]# scp -r /opt/kubernetes/ 192.168.247.212:/opt/

[root@master ~]# scp -r /opt/kubernetes/ 192.168.247.213:/opt/

[root@master ~]# scp -r /usr/lib/systemd/system/etcd.service 192.168.247.212:/usr/lib/systemd/system/

[root@master ~]# scp -r /usr/lib/systemd/system/etcd.service 192.168.247.213:/usr/lib/systemd/system/

[root@master ~]# systemctl start etcd && systemctl enable etcd

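On node01 and node02, edit /opt/kubernetes/cfg/etcd: change ETCD_NAME (etcd02 and etcd03, matching ETCD_INITIAL_CLUSTER) and replace 192.168.247.211 in the listen and advertise URLs with the node's own IP. Then start etcd on those nodes as well.

etcd configuration parameters:

ETCD_NAME Node name

ETCD_DATA_DIR Data directory

ETCD_LISTEN_PEER_URLS Listen address for cluster (peer) traffic

ETCD_LISTEN_CLIENT_URLS Listen address for client access

ETCD_INITIAL_ADVERTISE_PEER_URLS Peer address advertised to the cluster

ETCD_ADVERTISE_CLIENT_URLS Client address advertised to clients

ETCD_INITIAL_CLUSTER Addresses of the cluster members

ETCD_INITIAL_CLUSTER_TOKEN Cluster token

ETCD_INITIAL_CLUSTER_STATE State when joining the cluster: new for a new cluster, existing to join an existing one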
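Because each member needs its own name and its own IP in the listen and advertise URLs, the per-node file can be generated instead of edited by hand. The following is a minimal sketch under that assumption; the function name `render_etcd_cfg` is hypothetical, and the member list simply repeats the three addresses used in this guide.

```shell
#!/bin/sh
# render_etcd_cfg NAME IP
# Hypothetical helper: print an etcd environment file for one member.
# Only ETCD_NAME and the four URLs that carry the member's own IP vary;
# the cluster-wide settings are the ones used in this guide.
render_etcd_cfg() {
    name="$1"
    ip="$2"
    cat <<EOF
#[Member]
ETCD_NAME="${name}"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://${ip}:2380"
ETCD_LISTEN_CLIENT_URLS="https://${ip}:2379"

#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://${ip}:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://${ip}:2379"
ETCD_INITIAL_CLUSTER="etcd01=https://192.168.247.211:2380,etcd02=https://192.168.247.212:2380,etcd03=https://192.168.247.213:2380"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"
EOF
}

# Example: print the file for the second member (node01).
render_etcd_cfg etcd02 192.168.247.212
```

On a node the output would be redirected to /opt/kubernetes/cfg/etcd, for example `render_etcd_cfg etcd03 192.168.247.213 > /opt/kubernetes/cfg/etcd` on node02.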

Check the cluster health:

[root@master ssl]# /opt/kubernetes/bin/etcdctl \
--ca-file=ca.pem --cert-file=server.pem --key-file=server-key.pem \
--endpoints="https://192.168.247.211:2379,https://192.168.247.212:2379,https://192.168.247.213:2379" \
cluster-health

member a6c341768b1e58b is healthy: got healthy result from https://192.168.247.211:2379

member 62b5a3c1db53387a is healthy: got healthy result from https://192.168.247.212:2379

member d0f8841f2d3e2788 is healthy: got healthy result from https://192.168.247.213:2379

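Cluster deployment - Deploy the Flannel network

Overlay network: a virtual networking technique layered on top of the underlying network, in which hosts are connected through virtual links.

VXLAN: encapsulates the original packet in UDP, using the IP/MAC of the underlying network as the outer header, and transmits it over Ethernet. At the destination, the tunnel endpoint decapsulates it and delivers the data to the target address.

Flannel: one kind of overlay network. It likewise encapsulates the original packet inside another network packet for routing and forwarding, and currently supports forwarding backends such as UDP, VXLAN, AWS VPC, and GCE routes.

Other mainstream solutions for multi-host container networking: tunnel-based (Weave, OpenvSwitch) and routing-based (Calico).

Cluster deployment - Deploy the Flannel network (node01, node02)

1) Write the allocated subnet range into etcd for flanneld to use

[root@master ssl]# /opt/kubernetes/bin/etcdctl \
--ca-file=ca.pem --cert-file=server.pem --key-file=server-key.pem \
--endpoints="https://192.168.247.211:2379,https://192.168.247.212:2379,https://192.168.247.213:2379" \
set /coreos.com/network/config '{ "Network": "172.17.0.0/16", "Backend": {"Type": "vxlan"}}'
{ "Network": "172.17.0.0/16", "Backend": {"Type": "vxlan"}}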

2) Download the binary package

# wget https://github.com/coreos/flannel/releases/download/v0.9.1/flannel-v0.9.1-linux-amd64.tar.gz

tar -xf flannel-v0.9.1-linux-amd64.tar.gz

scp flanneld mk-docker-opts.sh 192.168.247.212:/opt/kubernetes/bin/

scp flanneld mk-docker-opts.sh 192.168.247.213:/opt/kubernetes/bin/

3) Configure Flannel

[root@node01 cfg]# pwd

/opt/kubernetes/cfg

[root@node01 cfg]# cat flanneld

FLANNEL_OPTIONS="--etcd-endpoints=https://192.168.247.211:2379,https://192.168.247.212:2379,https://192.168.247.213:2379 -etcd-cafile=/opt/kubernetes/ssl/ca.pem -etcd-certfile=/opt/kubernetes/ssl/server.pem -etcd-keyfile=/opt/kubernetes/ssl/server-key.pem"

4) Manage Flannel with systemd

[root@node01 cfg]# cat /usr/lib/systemd/system/flanneld.service
[Unit]
Description=Flanneld overlay address etcd agent
After=network-online.target network.target
Before=docker.service

[Service]
Type=notify
EnvironmentFile=/opt/kubernetes/cfg/flanneld
ExecStart=/opt/kubernetes/bin/flanneld --ip-masq $FLANNEL_OPTIONS
ExecStartPost=/opt/kubernetes/bin/mk-docker-opts.sh -k DOCKER_NETWORK_OPTIONS -d /run/flannel/subnet.env
Restart=on-failure

[Install]
WantedBy=multi-user.target

5) Configure Docker to start with the assigned subnet

[root@node01 cfg]# cat /usr/lib/systemd/system/docker.service
[Unit]
Description=Docker Application Container Engine
Documentation=https://docs.docker.com
After=network-online.target firewalld.service
Wants=network-online.target

[Service]
Type=notify
EnvironmentFile=/run/flannel/subnet.env
ExecStart=/usr/bin/dockerd $DOCKER_NETWORK_OPTIONS
ExecReload=/bin/kill -s HUP $MAINPID
LimitNOFILE=infinity
LimitNPROC=infinity
LimitCORE=infinity
TimeoutStartSec=0
Delegate=yes
KillMode=process
Restart=on-failure
StartLimitBurst=3
StartLimitInterval=60s

[Install]
WantedBy=multi-user.target

6) Start the services (the order matters)

[root@node01 cfg]# systemctl daemon-reload

[root@node01 cfg]# systemctl restart flanneld && systemctl enable flanneld

[root@node01 cfg]# systemctl restart docker

Copy the files to the other nodes, then start the services there:

cd /opt/kubernetes/cfg/

scp flanneld 192.168.247.212:/opt/kubernetes/cfg/

scp flanneld 192.168.247.213:/opt/kubernetes/cfg/

scp /usr/lib/systemd/system/flanneld.service 192.168.247.212:/usr/lib/systemd/system/

scp /usr/lib/systemd/system/flanneld.service 192.168.247.213:/usr/lib/systemd/system/

scp /usr/lib/systemd/system/docker.service 192.168.247.213:/usr/lib/systemd/system/

scp /usr/lib/systemd/system/docker.service 192.168.247.212:/usr/lib/systemd/system/

7) Test

# List all subnets in the cluster
[root@master ssl]# /opt/kubernetes/bin/etcdctl \
> --ca-file=ca.pem --cert-file=server.pem --key-file=server-key.pem \
> --endpoints="https://192.168.247.211:2379,https://192.168.247.212:2379,https://192.168.247.213:2379" \
> ls /coreos.com/network/subnets
/coreos.com/network/subnets/172.17.100.0-24
/coreos.com/network/subnets/172.17.57.0-24
/coreos.com/network/subnets/172.17.88.0-24

# Look up which host a subnet is assigned to
[root@master ssl]# /opt/kubernetes/bin/etcdctl \
> --ca-file=ca.pem --cert-file=server.pem --key-file=server-key.pem \
> --endpoints="https://192.168.247.211:2379,https://192.168.247.212:2379,https://192.168.247.213:2379" \
> get /coreos.com/network/subnets/172.17.57.0-24

{"PublicIP":"192.168.247.212","BackendType":"vxlan","BackendData":{"VtepMAC":"a6:e3:be:9b:f6:b9"}

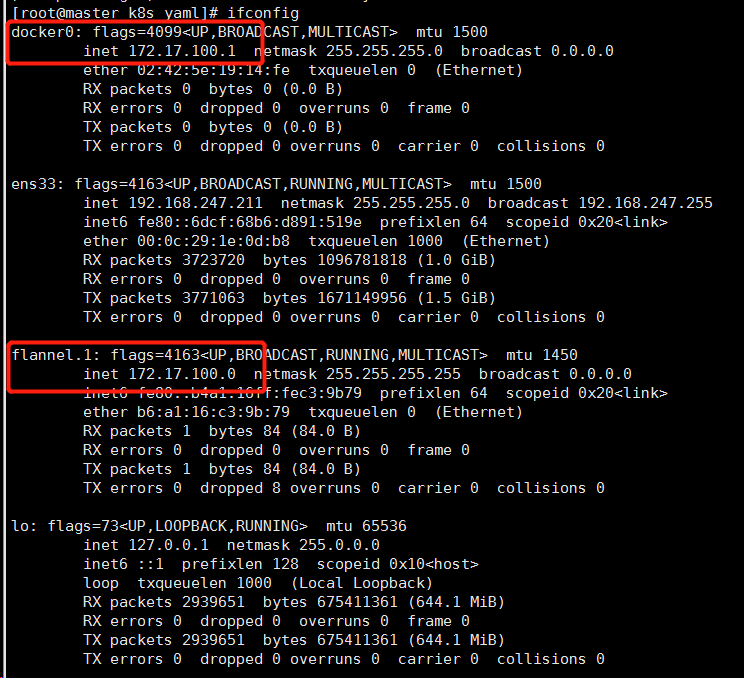

We can see that flannel.1 and docker0 are in the same network segment.

# Ping a container address in the 172.17.88.0/24 subnet

[root@node01 cfg]# ping 172.17.88.1

PING 172.17.88.1 (172.17.88.1) 56(84) bytes of data.

64 bytes from 172.17.88.1: icmp_seq=1 ttl=64 time=0.581 ms

64 bytes from 172.17.88.1: icmp_seq=2 ttl=64 time=0.871 ms

64 bytes from 172.17.88.1: icmp_seq=3 ttl=64 time=6.78 ms

64 bytes from 172.17.88.1: icmp_seq=4 ttl=64 time=0.874 ms

^C

--- 172.17.88.1 ping statistics ---

4 packets transmitted, 4 received, 0% packet loss, time 3011ms

rtt min/avg/max/mdev = 0.581/2.277/6.783/2.604 ms

Cluster deployment - Create the kubeconfig files for the Nodes

1. Create the TLS bootstrapping token

2. Create the kubelet kubeconfig

3. Create the kube-proxy kubeconfig

Download the package: https://dl.k8s.io/v1.11.7/kubernetes-server-linux-amd64.tar.gz

[root@master master_pkg]# tar -xf kubernetes-server-linux-amd64.tar.gz

[root@master master_pkg]# mv kube-apiserver kube-controller-manager kube-scheduler kubectl /opt/kubernetes/bin

[root@master bin]# pwd

/opt/kubernetes/bin

[root@master bin]# chmod +x kubectl

[root@master bin]# echo "PATH=$PATH:/opt/kubernetes/bin" >>/etc/profile

[root@master bin]# source /etc/profile

[root@master ssl]# pwd

/root/ssl

[root@master ssl]# cat kubeconfig.sh

# Create the TLS bootstrapping token
export BOOTSTRAP_TOKEN=$(head -c 16 /dev/urandom | od -An -t x | tr -d ' ')

cat > token.csv <<EOF
${BOOTSTRAP_TOKEN},kubelet-bootstrap,10001,"system:kubelet-bootstrap"
EOF

#----------------------

# Create the kubelet bootstrapping kubeconfig
export KUBE_APISERVER="https://192.168.247.211:6443"

# Set the cluster parameters
kubectl config set-cluster kubernetes \
  --certificate-authority=./ca.pem \
  --embed-certs=true \
  --server=${KUBE_APISERVER} \
  --kubeconfig=bootstrap.kubeconfig

# Set the client authentication parameters
kubectl config set-credentials kubelet-bootstrap \
  --token=${BOOTSTRAP_TOKEN} \
  --kubeconfig=bootstrap.kubeconfig

# Set the context parameters
kubectl config set-context default \
  --cluster=kubernetes \
  --user=kubelet-bootstrap \
  --kubeconfig=bootstrap.kubeconfig

# Select the default context
kubectl config use-context default --kubeconfig=bootstrap.kubeconfig

#----------------------

# Create the kube-proxy kubeconfig file
kubectl config set-cluster kubernetes \
  --certificate-authority=./ca.pem \
  --embed-certs=true \
  --server=${KUBE_APISERVER} \
  --kubeconfig=kube-proxy.kubeconfig

kubectl config set-credentials kube-proxy \
  --client-certificate=./kube-proxy.pem \
  --client-key=./kube-proxy-key.pem \
  --embed-certs=true \
  --kubeconfig=kube-proxy.kubeconfig

kubectl config set-context default \
  --cluster=kubernetes \
  --user=kube-proxy \
  --kubeconfig=kube-proxy.kubeconfig

kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig

[root@master ssl]# sh kubeconfig.sh

Cluster "kubernetes" set.

User "kubelet-bootstrap" set.

Context "default" created.

Switched to context "default".

Cluster "kubernetes" set.

User "kube-proxy" set.

Context "default" created.

Switched to context "default".

[root@master ssl]# cat token.csv

dc434e4db0f27ac84703bacbb8157540,kubelet-bootstrap,10001,"system:kubelet-bootstrap"

[root@master ssl]# cp token.csv /opt/kubernetes/cfg/
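The token step can be tried in isolation before running the whole script. The sketch below follows the same `head`/`od` approach as kubeconfig.sh but filters the output down to hex digits, so the result is always 32 characters. The function names `gen_bootstrap_token` and `token_csv_line` are made up for this illustration; the record layout (token, user name, UID, quoted group) is the one shown in token.csv above.

```shell
#!/bin/sh
# Hypothetical helper: produce a 128-bit random token as 32 lowercase hex
# characters. Everything that is not a hex digit (spaces, newlines) is dropped.
gen_bootstrap_token() {
    head -c 16 /dev/urandom | od -An -t x | tr -dc '0-9a-f'
}

# Hypothetical helper: format one token.csv record as
# token,user,uid,"group".
token_csv_line() {
    printf '%s,kubelet-bootstrap,10001,"system:kubelet-bootstrap"\n' "$1"
}

token=$(gen_bootstrap_token)
token_csv_line "$token"
```

Keeping the generation in a function makes it easy to check the token length before it is written to token.csv and embedded in bootstrap.kubeconfig, since both files must carry the same value.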

Cluster deployment - Run the master components

Install scripts for the three master components:

[root@master master_pkg]# cat apiserver.sh
#!/bin/bash

MASTER_ADDRESS=${1:-"192.168.1.195"}
ETCD_SERVERS=${2:-"http://127.0.0.1:2379"}

cat <<EOF >/opt/kubernetes/cfg/kube-apiserver
KUBE_APISERVER_OPTS="--logtostderr=true \\
--v=4 \\
--etcd-servers=${ETCD_SERVERS} \\
--insecure-bind-address=127.0.0.1 \\
--bind-address=${MASTER_ADDRESS} \\
--insecure-port=8080 \\
--secure-port=6443 \\
--advertise-address=${MASTER_ADDRESS} \\
--allow-privileged=true \\
--service-cluster-ip-range=10.10.10.0/24 \\
--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,ResourceQuota,NodeRestriction \\
--authorization-mode=RBAC,Node \\
--kubelet-https=true \\
--enable-bootstrap-token-auth \\
--token-auth-file=/opt/kubernetes/cfg/token.csv \\
--service-node-port-range=30000-50000 \\
--tls-cert-file=/opt/kubernetes/ssl/server.pem \\
--tls-private-key-file=/opt/kubernetes/ssl/server-key.pem \\
--client-ca-file=/opt/kubernetes/ssl/ca.pem \\
--service-account-key-file=/opt/kubernetes/ssl/ca-key.pem \\
--etcd-cafile=/opt/kubernetes/ssl/ca.pem \\
--etcd-certfile=/opt/kubernetes/ssl/server.pem \\
--etcd-keyfile=/opt/kubernetes/ssl/server-key.pem"
EOF

cat <<EOF >/usr/lib/systemd/system/kube-apiserver.service
[Unit]
Description=Kubernetes API Server
Documentation=https://github.com/kubernetes/kubernetes

[Service]
EnvironmentFile=-/opt/kubernetes/cfg/kube-apiserver
ExecStart=/opt/kubernetes/bin/kube-apiserver \$KUBE_APISERVER_OPTS
Restart=on-failure

[Install]
WantedBy=multi-user.target
EOF

systemctl daemon-reload
systemctl enable kube-apiserver
systemctl restart kube-apiserver

[root@master master_pkg]# cat controller-manager.sh

#!/bin/bash

MASTER_ADDRESS=${1:-"127.0.0.1"}

cat <<EOF >/opt/kubernetes/cfg/kube-controller-manager
KUBE_CONTROLLER_MANAGER_OPTS="--logtostderr=true \\
--v=4 \\
--master=${MASTER_ADDRESS}:8080 \\
--leader-elect=true \\
--address=127.0.0.1 \\
--service-cluster-ip-range=10.10.10.0/24 \\
--cluster-name=kubernetes \\
--cluster-signing-cert-file=/opt/kubernetes/ssl/ca.pem \\
--cluster-signing-key-file=/opt/kubernetes/ssl/ca-key.pem \\
--service-account-private-key-file=/opt/kubernetes/ssl/ca-key.pem \\
--root-ca-file=/opt/kubernetes/ssl/ca.pem"
EOF

cat <<EOF >/usr/lib/systemd/system/kube-controller-manager.service
[Unit]
Description=Kubernetes Controller Manager
Documentation=https://github.com/kubernetes/kubernetes

[Service]
EnvironmentFile=-/opt/kubernetes/cfg/kube-controller-manager
ExecStart=/opt/kubernetes/bin/kube-controller-manager \$KUBE_CONTROLLER_MANAGER_OPTS
Restart=on-failure

[Install]
WantedBy=multi-user.target
EOF

systemctl daemon-reload
systemctl enable kube-controller-manager
systemctl restart kube-controller-manager

[root@master master_pkg]# cat scheduler.sh

#!/bin/bash

MASTER_ADDRESS=${1:-"127.0.0.1"}

cat <<EOF >/opt/kubernetes/cfg/kube-scheduler
KUBE_SCHEDULER_OPTS="--logtostderr=true \\
--v=4 \\
--master=${MASTER_ADDRESS}:8080 \\
--leader-elect"
EOF

cat <<EOF >/usr/lib/systemd/system/kube-scheduler.service
[Unit]
Description=Kubernetes Scheduler
Documentation=https://github.com/kubernetes/kubernetes

[Service]
EnvironmentFile=-/opt/kubernetes/cfg/kube-scheduler
ExecStart=/opt/kubernetes/bin/kube-scheduler \$KUBE_SCHEDULER_OPTS
Restart=on-failure

[Install]
WantedBy=multi-user.target
EOF

systemctl daemon-reload
systemctl enable kube-scheduler
systemctl restart kube-scheduler

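apiserver configuration file

Parameter descriptions:

--logtostderr Enable logging (to standard error)

--v Log level

--etcd-servers Addresses of the etcd cluster

--bind-address Listen address

--secure-port HTTPS secure port

--advertise-address Address advertised to the cluster

--allow-privileged Allow containers to run in privileged mode

--service-cluster-ip-range Virtual IP range for Services

--enable-admission-plugins Admission control plugins

--authorization-mode Authorization modes; enables RBAC and Node authorization

--enable-bootstrap-token-auth Enable TLS bootstrapping, covered later

--token-auth-file Token file

--service-node-port-range Port range allocated to NodePort Services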
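The Service range, the certificate, and the DNS address in this guide are tied together: the API server assigns the first address of `--service-cluster-ip-range` to the built-in `kubernetes` Service, which is why 10.10.10.1 appears in the hosts list of server-csr.json, and the DNS address 10.10.10.2 passed to kubelet.sh comes from the same range. The sketch below derives that first address from a CIDR; the helper name `first_service_ip` is hypothetical, and it assumes the part before the slash is the network address with a last octet below 255.

```shell
#!/bin/sh
# first_service_ip CIDR
# Hypothetical helper: print the first usable address of a Service CIDR by
# adding 1 to the last octet of the network address. The function body runs
# in a subshell so that changing IFS does not leak into the caller.
first_service_ip() (
    net="${1%/*}"   # strip the "/prefix" part
    IFS=.
    set -- $net     # split the dotted quad into $1..$4
    echo "$1.$2.$3.$(( $4 + 1 ))"
)

first_service_ip 10.10.10.0/24   # prints 10.10.10.1
```

If the Service range is changed, the value printed here is the address that would need to be listed in the server certificate before the certificates are generated.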

Deploy the master

[root@master ~]# cp ssl/ca*pem ssl/server*pem /opt/kubernetes/ssl/

[root@master master_pkg]# chmod +x /opt/kubernetes/bin/* && chmod +x *.sh

[root@master master_pkg]# ./apiserver.sh 192.168.247.211 https://192.168.247.211:2379,https://192.168.247.212:2379,https://192.168.247.213:2379

Created symlink from /etc/systemd/system/multi-user.target.wants/kube-apiserver.service to /usr/lib/systemd/system/kube-apiserver.service.

[root@master master_pkg]# ./scheduler.sh 127.0.0.1

Created symlink from /etc/systemd/system/multi-user.target.wants/kube-scheduler.service to /usr/lib/systemd/system/kube-scheduler.service.

[root@master master_pkg]# ./controller-manager.sh 127.0.0.1

Created symlink from /etc/systemd/system/multi-user.target.wants/kube-controller-manager.service to /usr/lib/systemd/system/kube-controller-manager.service.

[root@master master_pkg]# echo "export PATH=$PATH:/opt/kubernetes/bin" >> /etc/profile

[root@master master_pkg]# source /etc/profile

Cluster deployment - Run the Node components (node01, node02)

1. Copy the Node kubeconfig files from the master to /opt/kubernetes/cfg/ on each Node

[root@master ssl]# scp *kubeconfig 192.168.247.212:/opt/kubernetes/cfg/

[root@node01 ~]#tar -xf kubernetes-server-linux-amd64.tar.gz

[root@node01 ~]# mv kubelet kube-proxy /opt/kubernetes/bin

2. Install scripts for the two Node components

[root@node01 ~]# cat kubelet.sh
#!/bin/bash

NODE_ADDRESS=${1:-"192.168.1.196"}
DNS_SERVER_IP=${2:-"10.10.10.2"}

cat <<EOF >/opt/kubernetes/cfg/kubelet
KUBELET_OPTS="--logtostderr=true \\
--v=4 \\
--address=${NODE_ADDRESS} \\
--hostname-override=${NODE_ADDRESS} \\
--kubeconfig=/opt/kubernetes/cfg/kubelet.kubeconfig \\
--experimental-bootstrap-kubeconfig=/opt/kubernetes/cfg/bootstrap.kubeconfig \\
--cert-dir=/opt/kubernetes/ssl \\
--allow-privileged=true \\
--cluster-dns=${DNS_SERVER_IP} \\
--cluster-domain=cluster.local \\
--fail-swap-on=false \\
--pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google-containers/pause-amd64:3.0"
EOF

cat <<EOF >/usr/lib/systemd/system/kubelet.service
[Unit]
Description=Kubernetes Kubelet
After=docker.service
Requires=docker.service

[Service]
EnvironmentFile=-/opt/kubernetes/cfg/kubelet
ExecStart=/opt/kubernetes/bin/kubelet \$KUBELET_OPTS
Restart=on-failure
KillMode=process

[Install]
WantedBy=multi-user.target
EOF

systemctl daemon-reload
systemctl enable kubelet
systemctl restart kubelet

[root@node01 ~]# cat proxy.sh

#!/bin/bash

NODE_ADDRESS=${1:-"192.168.1.200"}

cat <<EOF >/opt/kubernetes/cfg/kube-proxy
KUBE_PROXY_OPTS="--logtostderr=true \\
--v=4 \\
--hostname-override=${NODE_ADDRESS} \\
--kubeconfig=/opt/kubernetes/cfg/kube-proxy.kubeconfig"
EOF

cat <<EOF >/usr/lib/systemd/system/kube-proxy.service
[Unit]
Description=Kubernetes Proxy
After=network.target

[Service]
EnvironmentFile=-/opt/kubernetes/cfg/kube-proxy
ExecStart=/opt/kubernetes/bin/kube-proxy \$KUBE_PROXY_OPTS
Restart=on-failure

[Install]
WantedBy=multi-user.target
EOF

systemctl daemon-reload
systemctl enable kube-proxy
systemctl restart kube-proxy

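kubelet configuration file

Parameter descriptions:

--hostname-override Host name shown in the cluster

--kubeconfig Location of the kubeconfig file, which is generated automatically

--bootstrap-kubeconfig The bootstrap.kubeconfig file generated earlier (passed in kubelet.sh as --experimental-bootstrap-kubeconfig)

--cert-dir Directory where issued certificates are stored

--pod-infra-container-image Image that manages the Pod network

3. Deploy the Nodes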

[root@node01 ~]# chmod +x /opt/kubernetes/bin/* && chmod +x *.sh

[root@node01 ~]# ./kubelet.sh 192.168.247.212 10.10.10.2

Created symlink from /etc/systemd/system/multi-user.target.wants/kubelet.service to /usr/lib/systemd/system/kubelet.service.

[root@node01 ~]# ./proxy.sh 192.168.247.212

Created symlink from /etc/systemd/system/multi-user.target.wants/kube-proxy.service to /usr/lib/systemd/system/kube-proxy.service.

4. On the master, bind the kubelet-bootstrap user to the node bootstrapper role

[root@master ~]# kubectl create clusterrolebinding kubelet-bootstrap --clusterrole=system:node-bootstrapper --user=kubelet-bootstrap

clusterrolebinding "kubelet-bootstrap" created

[root@node01 cfg]# systemctl start kubelet && systemctl enable kubelet

[root@node01 cfg]# systemctl start kube-proxy && systemctl enable kube-proxy

[root@master ssl]# kubectl get csr

NAME AGE REQUESTOR CONDITION

node-csr-atAc1doj0IP5p48t-yz8FphTOxJYILpu_I9RY5ejL54 26s kubelet-bootstrap Pending

[root@master ssl]# kubectl certificate approve node-csr-atAc1doj0IP5p48t-yz8FphTOxJYILpu_I9RY5ejL54

certificatesigningrequest "node-csr-atAc1doj0IP5p48t-yz8FphTOxJYILpu_I9RY5ejL54" approved

[root@master ssl]# kubectl get csr

NAME AGE REQUESTOR CONDITION

node-csr-atAc1doj0IP5p48t-yz8FphTOxJYILpu_I9RY5ejL54 1m kubelet-bootstrap Approved,Issued
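With more than one Node, copying each request name by hand gets tedious. The sketch below shows one way to pick out the pending requests from the `kubectl get csr` listing; the helper name `pending_csrs` is hypothetical, and the demo input is the listing shown above rather than live cluster output.

```shell
#!/bin/sh
# pending_csrs: read `kubectl get csr` output on stdin and print the names of
# the requests whose last column (CONDITION) is exactly "Pending".
# The header line is skipped.
pending_csrs() {
    awk 'NR > 1 && $NF == "Pending" { print $1 }'
}

# Demo with the listing from this guide.
pending_csrs <<'EOF'
NAME AGE REQUESTOR CONDITION
node-csr-atAc1doj0IP5p48t-yz8FphTOxJYILpu_I9RY5ejL54 26s kubelet-bootstrap Pending
EOF
```

On the master this could be combined as `kubectl get csr | pending_csrs | xargs -r kubectl certificate approve`, assuming GNU xargs for the `-r` flag. Approving everything that is pending is only reasonable when every requesting node is expected.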

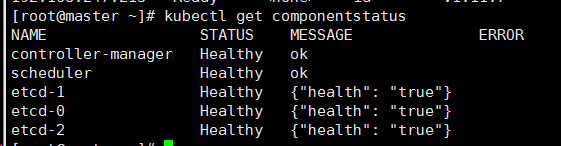

Cluster deployment - Check the cluster status

# kubectl get node

# kubectl get componentstatus

Cluster deployment - Start a test example

# kubectl run nginx --image=nginx --replicas=3

# kubectl get pod

# kubectl expose deployment nginx --port=88 --target-port=80 --type=NodePort

# kubectl get svc nginx
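In the commands above, `--port=88` is the Service port, `--target-port=80` is the container port, and the NodePort is allocated from the range given to kube-apiserver with `--service-node-port-range` (30000-50000 in this guide). `kubectl get svc` shows the mapping in its PORT(S) column as `port:nodePort/protocol`. The sketch below extracts the node port from such a value; the helper names and the value 31234 are made up for illustration.

```shell
#!/bin/sh
# node_port_of PORTS
# Hypothetical helper: extract the node port from a PORT(S) value of the
# form "port:nodePort/proto".
node_port_of() {
    ports="${1#*:}"      # drop the leading "port:"
    echo "${ports%%/*}"  # drop the trailing "/proto"
}

# in_node_port_range PORT
# Succeeds if PORT lies within 30000-50000, the range configured in this guide.
in_node_port_range() {
    [ "$1" -ge 30000 ] && [ "$1" -le 50000 ]
}

np=$(node_port_of "88:31234/TCP")   # 31234 is a made-up example value
if in_node_port_range "$np"; then
    echo "nginx should be reachable at http://<node-ip>:$np"
fi
```

The printed address uses a placeholder for the node IP; in this guide that would be 192.168.247.212 or 192.168.247.213.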

Cluster deployment - Deploy the Web UI (Dashboard)

Dashboard manifest:

[root@master k8s_yaml]# cat kubernetes-dashboard.yaml

# Copyright 2017 The Kubernetes Authors.

#

# Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License. # ------------------- Dashboard Secret ------------------- # apiVersion: v1

kind: Secret

metadata:

labels:

k8s-app: kubernetes-dashboard

name: kubernetes-dashboard-certs

namespace: kube-system

type: Opaque ---

# ------------------- Dashboard Service Account ------------------- # apiVersion: v1

kind: ServiceAccount

metadata:

labels:

k8s-app: kubernetes-dashboard

name: kubernetes-dashboard

namespace: kube-system ---

# ------------------- Dashboard Role & Role Binding ------------------- # kind: Role

apiVersion: rbac.authorization.k8s.io/v1

metadata:

name: kubernetes-dashboard-minimal

namespace: kube-system

rules:

# Allow Dashboard to create 'kubernetes-dashboard-key-holder' secret.

- apiGroups: [""]

resources: ["secrets"]

verbs: ["create"]

# Allow Dashboard to create 'kubernetes-dashboard-settings' config map.

- apiGroups: [""]

resources: ["configmaps"]

verbs: ["create"]

# Allow Dashboard to get, update and delete Dashboard exclusive secrets.

- apiGroups: [""]

resources: ["secrets"]

resourceNames: ["kubernetes-dashboard-key-holder", "kubernetes-dashboard-certs"]

verbs: ["get", "update", "delete"]

# Allow Dashboard to get and update 'kubernetes-dashboard-settings' config map.

- apiGroups: [""]

resources: ["configmaps"]

resourceNames: ["kubernetes-dashboard-settings"]

verbs: ["get", "update"]

# Allow Dashboard to get metrics from heapster.

- apiGroups: [""]

resources: ["services"]

resourceNames: ["heapster"]

verbs: ["proxy"]

- apiGroups: [""]

resources: ["services/proxy"]

resourceNames: ["heapster", "http:heapster:", "https:heapster:"]

verbs: ["get"] ---

apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: kubernetes-dashboard-minimal
  namespace: kube-system
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: kubernetes-dashboard-minimal
subjects:
  - kind: ServiceAccount
    name: kubernetes-dashboard
    namespace: kube-system

---

# ------------------- Dashboard Deployment ------------------- #

kind: Deployment
apiVersion: apps/v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kube-system
spec:
  replicas: 1
  revisionHistoryLimit: 10
  selector:
    matchLabels:
      k8s-app: kubernetes-dashboard
  template:
    metadata:
      labels:
        k8s-app: kubernetes-dashboard
    spec:
      containers:
        - name: kubernetes-dashboard
          image: registry.cn-hangzhou.aliyuncs.com/google_containers/kubernetes-dashboard-amd64:v1.10.0
          ports:
            - containerPort: 8443
              protocol: TCP
          args:
            - --auto-generate-certificates
            # Uncomment the following line to manually specify Kubernetes API server Host
            # If not specified, Dashboard will attempt to auto discover the API server and connect
            # to it. Uncomment only if the default does not work.
            # - --apiserver-host=http://my-address:port
          volumeMounts:
            - name: kubernetes-dashboard-certs
              mountPath: /certs
              # Create on-disk volume to store exec logs
            - mountPath: /tmp
              name: tmp-volume
          livenessProbe:
            httpGet:
              scheme: HTTPS
              path: /
              port: 8443
            initialDelaySeconds: 30
            timeoutSeconds: 30
      volumes:
        - name: kubernetes-dashboard-certs
          secret:
            secretName: kubernetes-dashboard-certs
        - name: tmp-volume
          emptyDir: {}
      serviceAccountName: kubernetes-dashboard
      # Comment the following tolerations if Dashboard must not be deployed on master
      tolerations:
        - key: node-role.kubernetes.io/master
          effect: NoSchedule

---

# ------------------- Dashboard Service ------------------- #

kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kube-system
spec:
  type: NodePort
  ports:
    - port: 443
      targetPort: 8443
      nodePort: 30001
  selector:
    k8s-app: kubernetes-dashboard

[root@master k8s_yaml]# cat dashboard-admin.yaml

kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1beta1
metadata:
  name: admin
  annotations:
    rbac.authorization.kubernetes.io/autoupdate: "true"
roleRef:
  kind: ClusterRole
  name: cluster-admin
  apiGroup: rbac.authorization.k8s.io
subjects:
  - kind: ServiceAccount
    name: admin
    namespace: kube-system
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: admin
  namespace: kube-system
  labels:
    kubernetes.io/cluster-service: "true"
    addonmanager.kubernetes.io/mode: Reconcile

Install the dashboard with the two commands below, then open https://192.168.247.212:30001/#! in a browser and skip the login prompt.

[root@master k8s_yaml]# kubectl apply -f kubernetes-dashboard.yaml

[root@master k8s_yaml]# kubectl apply -f dashboard-admin.yaml
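Before opening the browser, it can help to confirm that the Dashboard pod is actually running. The sketch below is one possible check, assuming the `k8s-app=kubernetes-dashboard` label and the `kube-system` namespace from the manifest above. The helper name `all_running` is made up for this example and is not part of kubectl.

```shell
#!/usr/bin/env bash
# Hypothetical helper: reads `kubectl get pods` output on stdin and succeeds
# only if at least one pod is listed and every listed pod has STATUS "Running".
all_running() {
  awk 'NR > 1 { n++; if ($3 != "Running") bad = 1 } END { exit (n == 0 || bad) }'
}

# Only talk to the cluster when kubectl is available on this machine.
if command -v kubectl >/dev/null 2>&1; then
  if kubectl -n kube-system get pods -l k8s-app=kubernetes-dashboard | all_running; then
    echo "dashboard pod is Running"
  else
    echo "dashboard pod is not Running yet" >&2
  fi
  # Shows the NodePort (30001 in the manifest above) that the Service exposes.
  kubectl -n kube-system get svc kubernetes-dashboard
fi
```

If the pod stays in a state other than Running, `kubectl -n kube-system describe pod -l k8s-app=kubernetes-dashboard` usually shows the reason, for example a failed image pull.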

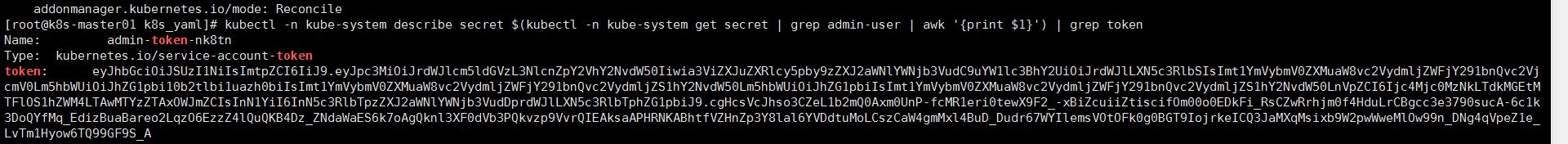

Alternatively, log in with a token:

kubectl -n kube-system describe secret $(kubectl -n kube-system get secret | grep admin-token | awk '{print $1}') | grep token

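Watch out for one pitfall here: the token wraps across several lines when you copy it, so paste it into a text editor and remove the line breaks before using it.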
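The wrapped-token problem can also be avoided by stripping the line breaks in the shell rather than in a text editor. A minimal sketch follows, assuming the token arrives on stdin. `unwrap_token` and `extract_token` are hypothetical helper names, and the `admin-token` secret prefix assumes the `admin` ServiceAccount defined in dashboard-admin.yaml.

```shell
#!/usr/bin/env bash
# Hypothetical helper: removes spaces, tabs, and line breaks from stdin so a
# token that wrapped during copy and paste becomes a single line again.
unwrap_token() {
  tr -d ' \t\r\n'
}

# Hypothetical helper: pulls the value of the "token:" field out of
# `kubectl describe secret` output read from stdin.
extract_token() {
  awk '$1 == "token:" { print $2 }'
}

# Only talk to the cluster when kubectl is available on this machine.
if command -v kubectl >/dev/null 2>&1; then
  # Assumption: the token secret of the "admin" ServiceAccount starts with "admin-token".
  secret=$(kubectl -n kube-system get secret | awk '/^admin-token/ { print $1; exit }')
  if [ -n "$secret" ]; then
    kubectl -n kube-system describe secret "$secret" | extract_token | unwrap_token
    echo
  fi
fi
```

A token that was already copied with line breaks can be repaired the same way, for example by saving it to a file and running `unwrap_token < token.txt`.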

The scripts used in this deployment can be downloaded from: https://github.com/hejianlai/Docker-Kubernetes/tree/master/Kubernetes/install
