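Hadoop Ecosystem: Flume Components, Writing a Custom Sink

Author: Yin Zhengjie (尹正杰)

Copyright notice: this is an original work. Reproduction is not permitted, and violations will be pursued legally.

This post walks through two small examples that use the sink API. For more on the API, see the official developer guide: http://flume.apache.org/FlumeDeveloperGuide.html#client-sdk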

I. Steps for writing a custom sink

1>. Write the custom sink

/*
@author :yinzhengjie
Blog:http://www.cnblogs.com/yinzhengjie/tag/Hadoop%E7%94%9F%E6%80%81%E5%9C%88/
EMAIL:y1053419035@qq.com
*/
package cn.org.yinzhengjie.sink;

import org.apache.flume.*;
import org.apache.flume.conf.Configurable;
import org.apache.flume.sink.AbstractSink;

import java.nio.charset.StandardCharsets;

/**
 * A custom sink that prints every event to standard output.
 */
public class MySink extends AbstractSink implements Configurable {

    // Name of the property to read from the sink's configuration
    private String key = "name";
    // Value used when that property is not set
    private static final String defaultValue = "yinzhengjie";
    // Value actually read from the configuration file
    private String res;

    @Override
    public void configure(Context context) {
        // context.getString(key, default): the first argument is the property name in the
        // configuration file; the second is returned when that property is absent.
        res = context.getString(key, defaultValue);
    }

    @Override
    public Status process() throws EventDeliveryException {
        // Start from READY; it only changes if the channel turns out to be empty
        Status result = Status.READY;
        // Get the channel this sink is bound to
        Channel channel = getChannel();
        // Get a transaction from the channel
        Transaction transaction = channel.getTransaction();
        // An event is Flume's basic unit of data: a byte-array body plus optional headers
        Event event = null;
        try {
            // The transaction must be open before an event is taken
            transaction.begin();
            // Take one event from the channel
            event = channel.take();
            if (event != null) {
                // "配置数据" = configured value
                System.out.println("配置数据\t" + res);
                // "真实数据" = the event's actual payload, decoded explicitly as UTF-8
                System.out.println("真实数据\t" + new String(event.getBody(), StandardCharsets.UTF_8));
                System.out.println("=====================================");
            } else {
                // The channel is empty: BACKOFF tells the caller to wait a while
                // before calling process() again
                result = Status.BACKOFF;
            }
            // Commit once the event has been handled
            transaction.commit();
        } catch (Exception ex) {
            // Roll back on failure so the event stays in the channel
            transaction.rollback();
            throw new EventDeliveryException("Failed to log event: " + event, ex);
        } finally {
            // Always close the transaction when done
            transaction.close();
        }
        return result;
    }
}
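To make the begin / take / commit / rollback flow of process() easier to see in isolation, here is a minimal sketch that runs without Flume on the classpath. ToySink, ToyChannel and ToyTransaction are hypothetical stand-ins I made up for illustration; they are not Flume classes, and real channels are considerably more involved. The point is only that a take is provisional until commit, and that a rollback puts the event back.

```java
import java.util.ArrayDeque;
import java.util.Deque;
import java.util.function.Consumer;

// Hypothetical stand-in for a sink: same control flow as process() above.
final class ToySink {
    enum Status { READY, BACKOFF }

    static Status process(ToyChannel channel, Consumer<String> handler) {
        ToyTransaction tx = channel.getTransaction();
        try {
            String body = tx.take();
            Status status;
            if (body != null) {
                handler.accept(body);      // "deliver" the event
                status = Status.READY;
            } else {
                status = Status.BACKOFF;   // nothing to do right now
            }
            tx.commit();
            return status;
        } catch (RuntimeException ex) {
            tx.rollback();                 // the event goes back to the channel
            throw ex;
        }
    }

    public static void main(String[] args) {
        ToyChannel channel = new ToyChannel();
        channel.put("hello");
        System.out.println(process(channel, body -> System.out.println("got " + body)));
        System.out.println(process(channel, body -> { }));
    }
}

// Hypothetical stand-in for a channel: a FIFO queue of event bodies.
final class ToyChannel {
    private final Deque<String> queue = new ArrayDeque<>();

    void put(String body) { queue.addLast(body); }

    int size() { return queue.size(); }

    ToyTransaction getTransaction() { return new ToyTransaction(queue); }
}

// Hypothetical stand-in for a transaction: takes are provisional until commit().
final class ToyTransaction {
    private final Deque<String> queue;
    private final Deque<String> taken = new ArrayDeque<>();

    ToyTransaction(Deque<String> queue) { this.queue = queue; }

    // Returns null when the queue is empty, mirroring the null check in the sink.
    String take() {
        String body = queue.pollFirst();
        if (body != null) {
            taken.addLast(body);
        }
        return body;
    }

    void commit() { taken.clear(); }

    // Put the taken bodies back at the head of the queue in their original order.
    void rollback() {
        while (!taken.isEmpty()) {
            queue.addFirst(taken.pollLast());
        }
    }
}
```

If the handler throws, the body is still in the queue afterwards, which is the behaviour the rollback branch of the sink relies on.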

2>. Package the project and upload the jar to /soft/flume/lib

[yinzhengjie@s101 ~]$ cd /soft/flume/lib/

[yinzhengjie@s101 lib]$ rz

[yinzhengjie@s101 lib]$

[yinzhengjie@s101 lib]$ ll | grep MyFlume

-rw-r--r-- yinzhengjie yinzhengjie Jun : MyFlume-1.0-SNAPSHOT.jar

[yinzhengjie@s101 lib]$

3>. Write the agent configuration file

[yinzhengjie@s101 ~]$ more /soft/flume/conf/yinzhengjie_mysink.conf
# Name the components on this agent
a1.sources = r1
a1.sinks = k1
a1.channels = c1

# Describe/configure the source
a1.sources.r1.type = netcat
a1.sources.r1.bind = localhost
a1.sources.r1.port =

# Describe the sink
a1.sinks.k1.type = cn.org.yinzhengjie.sink.MySink

# Use a channel which buffers events in memory
a1.channels.c1.type = memory
a1.channels.c1.capacity =
a1.channels.c1.transactionCapacity =

# Bind the source and sink to the channel
a1.sources.r1.channels = c1
a1.sinks.k1.channel = c1
[yinzhengjie@s101 ~]$
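As far as I understand it, a sink only sees the properties under its own prefix (here a1.sinks.k1.), with that prefix removed, so the key "name" in MySink corresponds to a line a1.sinks.k1.name = ... in the agent file. The file above does not set it, which is why the default value is used. The sketch below imitates that lookup with plain java.util.Properties; SinkPropertiesDemo and its two helper methods are hypothetical names for illustration, not part of Flume. It also shows that in the properties format a # only starts a comment at the beginning of a line, so a comment is safest on a line of its own.

```java
import java.io.IOException;
import java.io.StringReader;
import java.io.UncheckedIOException;
import java.util.HashMap;
import java.util.Map;
import java.util.Properties;

final class SinkPropertiesDemo {

    // Parse properties-format text, as found in an agent configuration file.
    static Properties parse(String text) {
        Properties props = new Properties();
        try {
            props.load(new StringReader(text));
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return props;
    }

    // Collect everything under a prefix such as "a1.sinks.k1.", with the prefix stripped.
    static Map<String, String> subProperties(Properties props, String prefix) {
        Map<String, String> result = new HashMap<>();
        for (String name : props.stringPropertyNames()) {
            if (name.startsWith(prefix)) {
                result.put(name.substring(prefix.length()), props.getProperty(name));
            }
        }
        return result;
    }

    public static void main(String[] args) {
        Properties props = parse(String.join("\n",
                "# Describe the sink",
                "a1.sinks.k1.type = cn.org.yinzhengjie.sink.MySink",
                "a1.sinks.k1.channel = c1 # trailing text"));
        Map<String, String> sink = subProperties(props, "a1.sinks.k1.");

        // "name" is not configured, so the fallback value is printed
        System.out.println(sink.getOrDefault("name", "yinzhengjie"));
        // The trailing "# ..." is part of the value, not a comment
        System.out.println(sink.get("channel"));
    }
}
```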

4>. Start Flume and test

a>. Start the agent process

[yinzhengjie@s101 ~]$ flume-ng agent -f /soft/flume/conf/yinzhengjie_mysink.conf -n a1

Warning: No configuration directory set! Use --conf <dir> to override.

Warning: JAVA_HOME is not set!

Info: Including Hadoop libraries found via (/soft/hadoop/bin/hadoop) for HDFS access

Info: Including HBASE libraries found via (/soft/hbase/bin/hbase) for HBASE access

Info: Including Hive libraries found via () for Hive access

+ exec /soft/jdk/bin/java -Xmx20m -cp '/soft/flume/lib/*:/soft/hadoop-2.7.3/etc/hadoop:/soft/hadoop-2.7.3/share/hadoop/common/lib/*:/soft/hadoop-2.7.3/share/hadoop/common/*:/soft/hadoop-2.7.3/share/hadoop/hdfs:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/*:/soft/hadoop-2.7.3/share/hadoop/hdfs/*:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/*:/soft/hadoop-2.7.3/share/hadoop/yarn/*:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/*:/soft/hadoop-2.7.3/share/hadoop/mapreduce/*:/contrib/capacity-scheduler/*.jar:/soft/hbase/bin/../conf:/soft/jdk//lib/tools.jar:/soft/hbase/bin/..:/soft/hbase/bin/../lib/activation-1.1.jar:/soft/hbase/bin/../lib/aopalliance-1.0.jar:/soft/hbase/bin/../lib/apacheds-i18n-2.0.0-M15.jar:/soft/hbase/bin/../lib/apacheds-kerberos-codec-2.0.0-M15.jar:/soft/hbase/bin/../lib/api-asn1-api-1.0.0-M20.jar:/soft/hbase/bin/../lib/api-util-1.0.0-M20.jar:/soft/hbase/bin/../lib/asm-3.1.jar:/soft/hbase/bin/../lib/avro-1.7.4.jar:/soft/hbase/bin/../lib/commons-beanutils-1.7.0.jar:/soft/hbase/bin/../lib/commons-beanutils-core-1.8.0.jar:/soft/hbase/bin/../lib/commons-cli-1.2.jar:/soft/hbase/bin/../lib/commons-codec-1.9.jar:/soft/hbase/bin/../lib/commons-collections-3.2.2.jar:/soft/hbase/bin/../lib/commons-compress-1.4.1.jar:/soft/hbase/bin/../lib/commons-configuration-1.6.jar:/soft/hbase/bin/../lib/commons-daemon-1.0.13.jar:/soft/hbase/bin/../lib/commons-digester-1.8.jar:/soft/hbase/bin/../lib/commons-el-1.0.jar:/soft/hbase/bin/../lib/commons-httpclient-3.1.jar:/soft/hbase/bin/../lib/commons-io-2.4.jar:/soft/hbase/bin/../lib/commons-lang-2.6.jar:/soft/hbase/bin/../lib/commons-logging-1.2.jar:/soft/hbase/bin/../lib/commons-math-2.2.jar:/soft/hbase/bin/../lib/commons-math3-3.1.1.jar:/soft/hbase/bin/../lib/commons-net-3.1.jar:/soft/hbase/bin/../lib/disruptor-3.3.0.jar:/soft/hbase/bin/../lib/findbugs-annotations-1.3.9-1.jar:/soft/hbase/bin/../lib/guava-12.0.1.jar:/soft/hbase/bin/../lib/guice-3.0.jar:/soft/hbase/bin/../lib/guice-servlet-3.0.jar:/soft/hbase/bin/../lib/hadoop-annotati
ons-2.5.1.jar:/soft/hbase/bin/../lib/hadoop-auth-2.5.1.jar:/soft/hbase/bin/../lib/hadoop-client-2.5.1.jar:/soft/hbase/bin/../lib/hadoop-common-2.5.1.jar:/soft/hbase/bin/../lib/hadoop-hdfs-2.5.1.jar:/soft/hbase/bin/../lib/hadoop-mapreduce-client-app-2.5.1.jar:/soft/hbase/bin/../lib/hadoop-mapreduce-client-common-2.5.1.jar:/soft/hbase/bin/../lib/hadoop-mapreduce-client-core-2.5.1.jar:/soft/hbase/bin/../lib/hadoop-mapreduce-client-jobclient-2.5.1.jar:/soft/hbase/bin/../lib/hadoop-mapreduce-client-shuffle-2.5.1.jar:/soft/hbase/bin/../lib/hadoop-yarn-api-2.5.1.jar:/soft/hbase/bin/../lib/hadoop-yarn-client-2.5.1.jar:/soft/hbase/bin/../lib/hadoop-yarn-common-2.5.1.jar:/soft/hbase/bin/../lib/hadoop-yarn-server-common-2.5.1.jar:/soft/hbase/bin/../lib/hbase-annotations-1.2.6.jar:/soft/hbase/bin/../lib/hbase-annotations-1.2.6-tests.jar:/soft/hbase/bin/../lib/hbase-client-1.2.6.jar:/soft/hbase/bin/../lib/hbase-common-1.2.6.jar:/soft/hbase/bin/../lib/hbase-common-1.2.6-tests.jar:/soft/hbase/bin/../lib/hbase-examples-1.2.6.jar:/soft/hbase/bin/../lib/hbase-external-blockcache-1.2.6.jar:/soft/hbase/bin/../lib/hbase-hadoop2-compat-1.2.6.jar:/soft/hbase/bin/../lib/hbase-hadoop-compat-1.2.6.jar:/soft/hbase/bin/../lib/hbase-it-1.2.6.jar:/soft/hbase/bin/../lib/hbase-it-1.2.6-tests.jar:/soft/hbase/bin/../lib/hbase-prefix-tree-1.2.6.jar:/soft/hbase/bin/../lib/hbase-procedure-1.2.6.jar:/soft/hbase/bin/../lib/hbase-protocol-1.2.6.jar:/soft/hbase/bin/../lib/hbase-resource-bundle-1.2.6.jar:/soft/hbase/bin/../lib/hbase-rest-1.2.6.jar:/soft/hbase/bin/../lib/hbase-server-1.2.6.jar:/soft/hbase/bin/../lib/hbase-server-1.2.6-tests.jar:/soft/hbase/bin/../lib/hbase-shell-1.2.6.jar:/soft/hbase/bin/../lib/hbase-thrift-1.2.6.jar:/soft/hbase/bin/../lib/htrace-core-3.1.0-incubating.jar:/soft/hbase/bin/../lib/httpclient-4.2.5.jar:/soft/hbase/bin/../lib/httpcore-4.4.1.jar:/soft/hbase/bin/../lib/jackson-core-asl-1.9.13.jar:/soft/hbase/bin/../lib/jackson-jaxrs-1.9.13.jar:/soft/hbase/bin/../lib/jackson-mapper-
asl-1.9.13.jar:/soft/hbase/bin/../lib/jackson-xc-1.9.13.jar:/soft/hbase/bin/../lib/jamon-runtime-2.4.1.jar:/soft/hbase/bin/../lib/jasper-compiler-5.5.23.jar:/soft/hbase/bin/../lib/jasper-runtime-5.5.23.jar:/soft/hbase/bin/../lib/javax.inject-1.jar:/soft/hbase/bin/../lib/java-xmlbuilder-0.4.jar:/soft/hbase/bin/../lib/jaxb-api-2.2.2.jar:/soft/hbase/bin/../lib/jaxb-impl-2.2.3-1.jar:/soft/hbase/bin/../lib/jcodings-1.0.8.jar:/soft/hbase/bin/../lib/jersey-client-1.9.jar:/soft/hbase/bin/../lib/jersey-core-1.9.jar:/soft/hbase/bin/../lib/jersey-guice-1.9.jar:/soft/hbase/bin/../lib/jersey-json-1.9.jar:/soft/hbase/bin/../lib/jersey-server-1.9.jar:/soft/hbase/bin/../lib/jets3t-0.9.0.jar:/soft/hbase/bin/../lib/jettison-1.3.3.jar:/soft/hbase/bin/../lib/jetty-6.1.26.jar:/soft/hbase/bin/../lib/jetty-sslengine-6.1.26.jar:/soft/hbase/bin/../lib/jetty-util-6.1.26.jar:/soft/hbase/bin/../lib/joni-2.1.2.jar:/soft/hbase/bin/../lib/jruby-complete-1.6.8.jar:/soft/hbase/bin/../lib/jsch-0.1.42.jar:/soft/hbase/bin/../lib/jsp-2.1-6.1.14.jar:/soft/hbase/bin/../lib/jsp-api-2.1-6.1.14.jar:/soft/hbase/bin/../lib/junit-4.12.jar:/soft/hbase/bin/../lib/leveldbjni-all-1.8.jar:/soft/hbase/bin/../lib/libthrift-0.9.3.jar:/soft/hbase/bin/../lib/log4j-1.2.17.jar:/soft/hbase/bin/../lib/metrics-core-2.2.0.jar:/soft/hbase/bin/../lib/MyHbase-1.0-SNAPSHOT.jar:/soft/hbase/bin/../lib/netty-all-4.0.23.Final.jar:/soft/hbase/bin/../lib/paranamer-2.3.jar:/soft/hbase/bin/../lib/phoenix-4.10.0-HBase-1.2-client.jar:/soft/hbase/bin/../lib/protobuf-java-2.5.0.jar:/soft/hbase/bin/../lib/servlet-api-2.5-6.1.14.jar:/soft/hbase/bin/../lib/servlet-api-2.5.jar:/soft/hbase/bin/../lib/slf4j-api-1.7.7.jar:/soft/hbase/bin/../lib/slf4j-log4j12-1.7.5.jar:/soft/hbase/bin/../lib/snappy-java-1.0.4.1.jar:/soft/hbase/bin/../lib/spymemcached-2.11.6.jar:/soft/hbase/bin/../lib/xmlenc-0.52.jar:/soft/hbase/bin/../lib/xz-1.0.jar:/soft/hbase/bin/../lib/zookeeper-3.4.6.jar:/soft/hadoop-2.7.3/etc/hadoop:/soft/hadoop-2.7.3/share/hadoop/common/lib/*:
/soft/hadoop-2.7.3/share/hadoop/common/*:/soft/hadoop-2.7.3/share/hadoop/hdfs:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/*:/soft/hadoop-2.7.3/share/hadoop/hdfs/*:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/*:/soft/hadoop-2.7.3/share/hadoop/yarn/*:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/*:/soft/hadoop-2.7.3/share/hadoop/mapreduce/*::/soft/hive/lib/*:/contrib/capacity-scheduler/*.jar:/conf:/lib/*' -Djava.library.path=:/soft/hadoop-2.7./lib/native:/soft/hadoop-2.7./lib/native org.apache.flume.node.Application -f /soft/flume/conf/yinzhengjie_mysink.conf -n a1

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/soft/apache-flume-1.8.-bin/lib/slf4j-log4j12-1.6..jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/soft/hadoop-2.7./share/hadoop/common/lib/slf4j-log4j12-1.7..jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/soft/hbase-1.2./lib/phoenix-4.10.-HBase-1.2-client.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/soft/hbase-1.2./lib/slf4j-log4j12-1.7..jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/soft/apache-hive-2.1.-bin/lib/log4j-slf4j-impl-2.4..jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

// :: INFO node.PollingPropertiesFileConfigurationProvider: Configuration provider starting

// :: INFO node.PollingPropertiesFileConfigurationProvider: Reloading configuration file:/soft/flume/conf/yinzhengjie_mysink.conf

// :: INFO conf.FlumeConfiguration: Added sinks: k1 Agent: a1

// :: INFO conf.FlumeConfiguration: Processing:k1

// :: INFO conf.FlumeConfiguration: Processing:k1

// :: INFO conf.FlumeConfiguration: Post-validation flume configuration contains configuration for agents: [a1]

// :: INFO node.AbstractConfigurationProvider: Creating channels

// :: INFO channel.DefaultChannelFactory: Creating instance of channel c1 type memory

// :: INFO node.AbstractConfigurationProvider: Created channel c1

// :: INFO source.DefaultSourceFactory: Creating instance of source r1, type netcat

// :: INFO sink.DefaultSinkFactory: Creating instance of sink: k1, type: cn.org.yinzhengjie.sink.MySink

// :: INFO node.AbstractConfigurationProvider: Channel c1 connected to [r1, k1]

// :: INFO node.Application: Starting new configuration:{ sourceRunners:{r1=EventDrivenSourceRunner: { source:org.apache.flume.source.NetcatSource{name:r1,state:IDLE} }} sinkRunners:{k1=SinkRunner: { policy:org.apache.flume.sink.DefaultSinkProcessor@618c8387 counterGroup:{ name:null counters:{} } }} channels:{c1=org.apache.flume.channel.MemoryChannel{name: c1}} }

// :: INFO node.Application: Starting Channel c1

// :: INFO instrumentation.MonitoredCounterGroup: Monitored counter group for type: CHANNEL, name: c1: Successfully registered new MBean.

// :: INFO instrumentation.MonitoredCounterGroup: Component type: CHANNEL, name: c1 started

// :: INFO node.Application: Starting Sink k1

// :: INFO node.Application: Starting Source r1

// :: INFO source.NetcatSource: Source starting

// :: INFO source.NetcatSource: Created serverSocket:sun.nio.ch.ServerSocketChannelImpl[/127.0.0.1:]


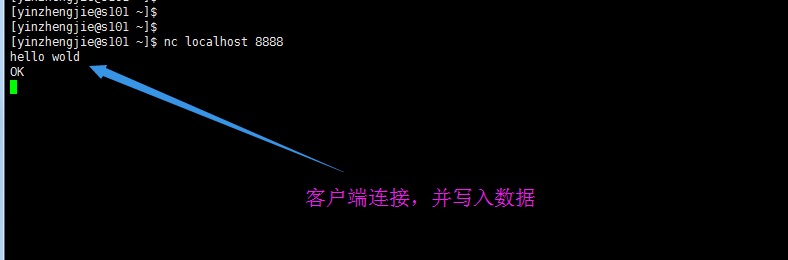

b>. Connect from a client (nc)

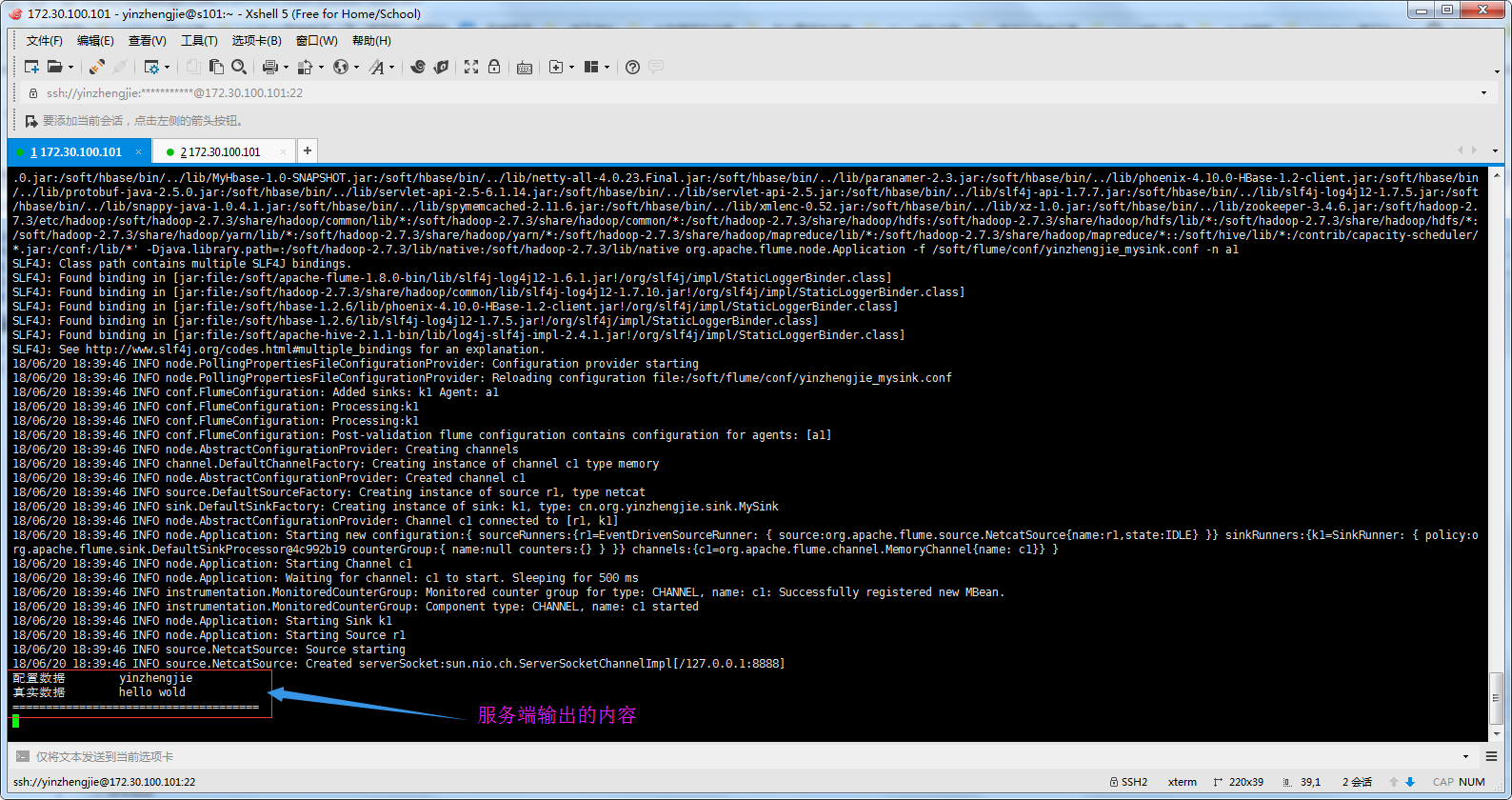

c>. Check the server side

II. A custom HDFS sink

1>. Write the custom sink

/*
@author :yinzhengjie
Blog:http://www.cnblogs.com/yinzhengjie/tag/Hadoop%E7%94%9F%E6%80%81%E5%9C%88/
EMAIL:y1053419035@qq.com
*/
package cn.org.yinzhengjie.sink;

import org.apache.flume.*;
import org.apache.flume.conf.Configurable;
import org.apache.flume.sink.AbstractSink;
import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.FSDataOutputStream;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.Path;

/**
 * A hand-written HDFS sink.
 *
 * The target path is configurable: context.getString("path", ...)
 */
public class HdfsSink extends AbstractSink implements Configurable {

    // Configuration key that holds the HDFS path to write to
    private String path = "path";
    // Path used when that key is not set
    private static final String defaultPath = "/yinzhengjie/1.txt";
    // Configuration key that holds the user to write as
    private String username = "user";
    // User used when that key is not set
    private static final String defaultUser = "yinzhengjie";

    private String HdfsPath;
    private String HdfsUser;

    // configure() reads the settings, i.e. it initializes HdfsPath and HdfsUser
    @Override
    public void configure(Context context) {
        // HDFS path to write to
        HdfsPath = context.getString(path, defaultPath);
        // User to write as
        HdfsUser = context.getString(username, defaultUser);
    }

    // process() holds the actual delivery logic
    @Override
    public Status process() throws EventDeliveryException {
        // Start from READY; it only changes if the channel turns out to be empty
        Status result = Status.READY;
        // Get the channel this sink is bound to
        Channel channel = getChannel();
        // Get a transaction from the channel
        Transaction transaction = channel.getTransaction();
        // An event is Flume's basic unit of data: a byte-array body plus optional headers
        Event event = null;
        try {
            // The transaction must be open before an event is taken
            transaction.begin();
            // Take one event from the channel
            event = channel.take();
            if (event != null) {
                // Set the user that writes to HDFS
                System.setProperty("HADOOP_USER_NAME", HdfsUser);
                Configuration conf = new Configuration();
                FileSystem fs = FileSystem.get(conf);
                Path p2 = new Path(HdfsPath);
                // Append if the file already exists, otherwise create it first.
                // try-with-resources closes the stream even when a write fails.
                try (FSDataOutputStream fos = fs.exists(p2) ? fs.append(p2) : fs.create(p2)) {
                    fos.write(event.getBody());
                    fos.write("\n".getBytes());
                }
            } else {
                // The channel is empty: BACKOFF tells the caller to wait a while
                // before calling process() again
                result = Status.BACKOFF;
            }
            // Commit once the event has been written
            transaction.commit();
        } catch (Exception ex) {
            // Roll back on failure so the event stays in the channel
            transaction.rollback();
            throw new EventDeliveryException("Failed to log event: " + event, ex);
        } finally {
            // Always close the transaction when done
            transaction.close();
        }
        return result;
    }
}
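The "append if the file exists, otherwise create it" step can be tried out without a cluster. The sketch below is only a local-filesystem analogue built on java.nio.file; it does not use the Hadoop FileSystem API, and AppendOrCreateDemo and writeEvent are hypothetical names for illustration. Opening the file with CREATE and APPEND together covers both cases in one call.

```java
import java.io.IOException;
import java.io.OutputStream;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.StandardOpenOption;

final class AppendOrCreateDemo {

    // Write one event body as one line: create the file if it is missing, append otherwise.
    static void writeEvent(Path target, byte[] body) throws IOException {
        try (OutputStream out = Files.newOutputStream(target,
                StandardOpenOption.CREATE, StandardOpenOption.APPEND)) {
            out.write(body);
            out.write('\n');
        }
    }

    public static void main(String[] args) throws IOException {
        // The file does not exist yet inside the fresh temporary directory
        Path target = Files.createTempDirectory("toy-sink").resolve("file.txt");
        writeEvent(target, "first".getBytes(StandardCharsets.UTF_8));   // creates the file
        writeEvent(target, "second".getBytes(StandardCharsets.UTF_8));  // appends to it
        System.out.println(Files.readAllLines(target));
    }
}
```

Opening and closing the file for every single event keeps the code short, but on a real HDFS cluster it is likely to be slow; writing several events per open stream would probably be the first thing to change in a production sink.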

2>. Package the project and upload the jar to /soft/flume/lib

[yinzhengjie@s101 lib]$ pwd

/soft/flume/lib

[yinzhengjie@s101 lib]$ ll | grep MyFlume

-rw-r--r-- yinzhengjie yinzhengjie Jun : MyFlume-1.0-SNAPSHOT.jar

[yinzhengjie@s101 lib]$

[yinzhengjie@s101 lib]$ rm -rf MyFlume-1.0-SNAPSHOT.jar

[yinzhengjie@s101 lib]$

[yinzhengjie@s101 lib]$ rz

[yinzhengjie@s101 lib]$

[yinzhengjie@s101 lib]$ ll | grep MyFlume

-rw-r--r-- yinzhengjie yinzhengjie Jun : MyFlume-1.0-SNAPSHOT.jar

[yinzhengjie@s101 lib]$

[yinzhengjie@s101 lib]$

3>. Write the agent configuration file

[yinzhengjie@s101 ~]$ more /soft/flume/conf/yinzhengjie_hdfssink.conf
# Name the components on this agent
a1.sources = r1
a1.sinks = k1
a1.channels = c1

# Describe/configure the source
a1.sources.r1.type = netcat
a1.sources.r1.bind = localhost
a1.sources.r1.port =

# Describe the sink
a1.sinks.k1.type = cn.org.yinzhengjie.sink.HdfsSink
a1.sinks.k1.user = yinzhengjie
a1.sinks.k1.path = /yinzhengjie/file.txt

# Use a channel which buffers events in memory
a1.channels.c1.type = memory
a1.channels.c1.capacity =
a1.channels.c1.transactionCapacity =

# Bind the source and sink to the channel
a1.sources.r1.channels = c1
a1.sinks.k1.channel = c1
[yinzhengjie@s101 ~]$

4>. Start Flume and test

a>. Start the HDFS services

[yinzhengjie@s101 ~]$ more `which xzk.sh`
#!/bin/bash
#@author :yinzhengjie
#blog:http://www.cnblogs.com/yinzhengjie
#EMAIL:y1053419035@qq.com

# Check that exactly one argument was passed
# (the message reads: "invalid argument, usage: ...")
if [ $# -ne 1 ];then
    echo "无效参数,用法为: $0 {start|stop|restart|status}"
    exit
fi

# The command given by the user
cmd=$1

# Dispatch on the command
function zookeeperManger(){
    case $cmd in
    start)
        # "start the service"
        echo "启动服务"
        remoteExecution start
        ;;
    stop)
        # "stop the service"
        echo "停止服务"
        remoteExecution stop
        ;;
    restart)
        # "restart the service"
        echo "重启服务"
        remoteExecution restart
        ;;
    status)
        # "show the status"
        echo "查看状态"
        remoteExecution status
        ;;
    *)
        echo "无效参数,用法为: $0 {start|stop|restart|status}"
        ;;
    esac
}

# Run zkServer.sh with the given command on every ZooKeeper host
function remoteExecution(){
    for (( i=102 ; i<=104 ; i++ )) ; do
        tput setaf 2
        echo ========== s$i zkServer.sh $1 ================
        tput setaf 7
        ssh s$i "source /etc/profile ; zkServer.sh $1"
    done
}

# Call the dispatcher
zookeeperManger
[yinzhengjie@s101 ~]$

[yinzhengjie@s101 ~]$

[yinzhengjie@s101 ~]$ xzk.sh start

启动服务

========== s102 zkServer.sh start ================

ZooKeeper JMX enabled by default

Using config: /soft/zk/bin/../conf/zoo.cfg

Starting zookeeper ... STARTED

========== s103 zkServer.sh start ================

ZooKeeper JMX enabled by default

Using config: /soft/zk/bin/../conf/zoo.cfg

Starting zookeeper ... STARTED

========== s104 zkServer.sh start ================

ZooKeeper JMX enabled by default

Using config: /soft/zk/bin/../conf/zoo.cfg

Starting zookeeper ... STARTED

[yinzhengjie@s101 ~]$

Start the ZooKeeper cluster first ([yinzhengjie@s101 ~]$ xzk.sh start)

[yinzhengjie@s101 ~]$ start-dfs.sh

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/soft/hadoop-2.7./share/hadoop/common/lib/slf4j-log4j12-1.7..jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/soft/apache-hive-2.1.-bin/lib/log4j-slf4j-impl-2.4..jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

SLF4J: Actual binding is of type [org.slf4j.impl.Log4jLoggerFactory]

Starting namenodes on [s101 s105]

s101: starting namenode, logging to /soft/hadoop-2.7./logs/hadoop-yinzhengjie-namenode-s101.out

s105: starting namenode, logging to /soft/hadoop-2.7./logs/hadoop-yinzhengjie-namenode-s105.out

s102: starting datanode, logging to /soft/hadoop-2.7./logs/hadoop-yinzhengjie-datanode-s102.out

s104: starting datanode, logging to /soft/hadoop-2.7./logs/hadoop-yinzhengjie-datanode-s104.out

s105: starting datanode, logging to /soft/hadoop-2.7./logs/hadoop-yinzhengjie-datanode-s105.out

s103: starting datanode, logging to /soft/hadoop-2.7./logs/hadoop-yinzhengjie-datanode-s103.out

Starting journal nodes [s102 s103 s104]

s102: starting journalnode, logging to /soft/hadoop-2.7./logs/hadoop-yinzhengjie-journalnode-s102.out

s104: starting journalnode, logging to /soft/hadoop-2.7./logs/hadoop-yinzhengjie-journalnode-s104.out

s103: starting journalnode, logging to /soft/hadoop-2.7./logs/hadoop-yinzhengjie-journalnode-s103.out

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/soft/hadoop-2.7./share/hadoop/common/lib/slf4j-log4j12-1.7..jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/soft/apache-hive-2.1.-bin/lib/log4j-slf4j-impl-2.4..jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

SLF4J: Actual binding is of type [org.slf4j.impl.Log4jLoggerFactory]

Starting ZK Failover Controllers on NN hosts [s101 s105]

s101: starting zkfc, logging to /soft/hadoop-2.7./logs/hadoop-yinzhengjie-zkfc-s101.out

s105: starting zkfc, logging to /soft/hadoop-2.7./logs/hadoop-yinzhengjie-zkfc-s105.out

[yinzhengjie@s101 ~]$

Start the HDFS distributed file system ([yinzhengjie@s101 ~]$ start-dfs.sh)

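[yinzhengjie@s101 ~]$ more `which xcall.sh`
#!/bin/bash
#@author :yinzhengjie
#blog:http://www.cnblogs.com/yinzhengjie
#EMAIL:y1053419035@qq.com

# Check that at least one argument was passed
# (the message reads: "please enter an argument")
if [ $# -lt 1 ];then
    echo "请输入参数"
    exit
fi

# The command given by the user
cmd=$@

for (( i=101;i<=105;i++ ))
do
    # Switch the terminal text to green
    tput setaf 2
    echo ============= s$i $cmd ============
    # Switch back to the original colour, i.e. white-grey
    tput setaf 7
    # Run the command on the remote host
    ssh s$i $cmd
    # Report whether the command succeeded ("命令执行成功" = "command succeeded")
    if [ $? == 0 ];then
        echo "命令执行成功"
    fi
done
[yinzhengjie@s101 ~]$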
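For readers who would rather drive the same fan-out from Java than from bash, here is a rough sketch of what xcall.sh does: build one ssh command per host and run them one after another. XcallDemo and buildCommands are hypothetical names, the host range s101 to s105 is taken from the jps output in this post, and passwordless ssh to those hosts is assumed.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

final class XcallDemo {

    // Build one "ssh s<i> <command...>" argument list per host number in [first, last].
    static List<List<String>> buildCommands(int first, int last, List<String> command) {
        List<List<String>> result = new ArrayList<>();
        for (int i = first; i <= last; i++) {
            List<String> argv = new ArrayList<>();
            argv.add("ssh");
            argv.add("s" + i);
            argv.addAll(command);
            result.add(argv);
        }
        return result;
    }

    public static void main(String[] args) throws Exception {
        if (args.length < 1) {
            System.out.println("usage: XcallDemo <command> [args...]");
            return;
        }
        for (List<String> argv : buildCommands(101, 105, Arrays.asList(args))) {
            // Header line: host name followed by the command, as in the shell script
            System.out.println("============= "
                    + String.join(" ", argv.subList(1, argv.size())) + " ============");
            // Run ssh with the child's output attached to this terminal
            int exitCode = new ProcessBuilder(argv).inheritIO().start().waitFor();
            if (exitCode == 0) {
                System.out.println("command succeeded");
            }
        }
    }
}
```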

[yinzhengjie@s101 ~]$

[yinzhengjie@s101 ~]$ xcall.sh jps

============= s101 jps ============

NameNode

DFSZKFailoverController

Jps

命令执行成功

============= s102 jps ============

Jps

DataNode

JournalNode

QuorumPeerMain

命令执行成功

============= s103 jps ============

Jps

QuorumPeerMain

DataNode

JournalNode

命令执行成功

============= s104 jps ============

Jps

DataNode

JournalNode

QuorumPeerMain

命令执行成功

============= s105 jps ============

DFSZKFailoverController

DataNode

NameNode

Jps

命令执行成功

[yinzhengjie@s101 ~]$

Check that the services started correctly ([yinzhengjie@s101 ~]$ xcall.sh jps)

b>. Start the agent process

[yinzhengjie@s101 ~]$ flume-ng agent -f /soft/flume/conf/yinzhengjie_hdfssink.conf -n a1

Warning: No configuration directory set! Use --conf <dir> to override.

Warning: JAVA_HOME is not set!

Info: Including Hadoop libraries found via (/soft/hadoop/bin/hadoop) for HDFS access

Info: Including HBASE libraries found via (/soft/hbase/bin/hbase) for HBASE access

Info: Including Hive libraries found via () for Hive access

+ exec /soft/jdk/bin/java -Xmx20m -cp '/soft/flume/lib/*:/soft/hadoop-2.7.3/etc/hadoop:/soft/hadoop-2.7.3/share/hadoop/common/lib/*:/soft/hadoop-2.7.3/share/hadoop/common/*:/soft/hadoop-2.7.3/share/hadoop/hdfs:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/*:/soft/hadoop-2.7.3/share/hadoop/hdfs/*:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/*:/soft/hadoop-2.7.3/share/hadoop/yarn/*:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/*:/soft/hadoop-2.7.3/share/hadoop/mapreduce/*:/contrib/capacity-scheduler/*.jar:/soft/hbase/bin/../conf:/soft/jdk//lib/tools.jar:/soft/hbase/bin/..:/soft/hbase/bin/../lib/activation-1.1.jar:/soft/hbase/bin/../lib/aopalliance-1.0.jar:/soft/hbase/bin/../lib/apacheds-i18n-2.0.0-M15.jar:/soft/hbase/bin/../lib/apacheds-kerberos-codec-2.0.0-M15.jar:/soft/hbase/bin/../lib/api-asn1-api-1.0.0-M20.jar:/soft/hbase/bin/../lib/api-util-1.0.0-M20.jar:/soft/hbase/bin/../lib/asm-3.1.jar:/soft/hbase/bin/../lib/avro-1.7.4.jar:/soft/hbase/bin/../lib/commons-beanutils-1.7.0.jar:/soft/hbase/bin/../lib/commons-beanutils-core-1.8.0.jar:/soft/hbase/bin/../lib/commons-cli-1.2.jar:/soft/hbase/bin/../lib/commons-codec-1.9.jar:/soft/hbase/bin/../lib/commons-collections-3.2.2.jar:/soft/hbase/bin/../lib/commons-compress-1.4.1.jar:/soft/hbase/bin/../lib/commons-configuration-1.6.jar:/soft/hbase/bin/../lib/commons-daemon-1.0.13.jar:/soft/hbase/bin/../lib/commons-digester-1.8.jar:/soft/hbase/bin/../lib/commons-el-1.0.jar:/soft/hbase/bin/../lib/commons-httpclient-3.1.jar:/soft/hbase/bin/../lib/commons-io-2.4.jar:/soft/hbase/bin/../lib/commons-lang-2.6.jar:/soft/hbase/bin/../lib/commons-logging-1.2.jar:/soft/hbase/bin/../lib/commons-math-2.2.jar:/soft/hbase/bin/../lib/commons-math3-3.1.1.jar:/soft/hbase/bin/../lib/commons-net-3.1.jar:/soft/hbase/bin/../lib/disruptor-3.3.0.jar:/soft/hbase/bin/../lib/findbugs-annotations-1.3.9-1.jar:/soft/hbase/bin/../lib/guava-12.0.1.jar:/soft/hbase/bin/../lib/guice-3.0.jar:/soft/hbase/bin/../lib/guice-servlet-3.0.jar:/soft/hbase/bin/../lib/hadoop-annotati
ons-2.5.1.jar:/soft/hbase/bin/../lib/hadoop-auth-2.5.1.jar:/soft/hbase/bin/../lib/hadoop-client-2.5.1.jar:/soft/hbase/bin/../lib/hadoop-common-2.5.1.jar:/soft/hbase/bin/../lib/hadoop-hdfs-2.5.1.jar:/soft/hbase/bin/../lib/hadoop-mapreduce-client-app-2.5.1.jar:/soft/hbase/bin/../lib/hadoop-mapreduce-client-common-2.5.1.jar:/soft/hbase/bin/../lib/hadoop-mapreduce-client-core-2.5.1.jar:/soft/hbase/bin/../lib/hadoop-mapreduce-client-jobclient-2.5.1.jar:/soft/hbase/bin/../lib/hadoop-mapreduce-client-shuffle-2.5.1.jar:/soft/hbase/bin/../lib/hadoop-yarn-api-2.5.1.jar:/soft/hbase/bin/../lib/hadoop-yarn-client-2.5.1.jar:/soft/hbase/bin/../lib/hadoop-yarn-common-2.5.1.jar:/soft/hbase/bin/../lib/hadoop-yarn-server-common-2.5.1.jar:/soft/hbase/bin/../lib/hbase-annotations-1.2.6.jar:/soft/hbase/bin/../lib/hbase-annotations-1.2.6-tests.jar:/soft/hbase/bin/../lib/hbase-client-1.2.6.jar:/soft/hbase/bin/../lib/hbase-common-1.2.6.jar:/soft/hbase/bin/../lib/hbase-common-1.2.6-tests.jar:/soft/hbase/bin/../lib/hbase-examples-1.2.6.jar:/soft/hbase/bin/../lib/hbase-external-blockcache-1.2.6.jar:/soft/hbase/bin/../lib/hbase-hadoop2-compat-1.2.6.jar:/soft/hbase/bin/../lib/hbase-hadoop-compat-1.2.6.jar:/soft/hbase/bin/../lib/hbase-it-1.2.6.jar:/soft/hbase/bin/../lib/hbase-it-1.2.6-tests.jar:/soft/hbase/bin/../lib/hbase-prefix-tree-1.2.6.jar:/soft/hbase/bin/../lib/hbase-procedure-1.2.6.jar:/soft/hbase/bin/../lib/hbase-protocol-1.2.6.jar:/soft/hbase/bin/../lib/hbase-resource-bundle-1.2.6.jar:/soft/hbase/bin/../lib/hbase-rest-1.2.6.jar:/soft/hbase/bin/../lib/hbase-server-1.2.6.jar:/soft/hbase/bin/../lib/hbase-server-1.2.6-tests.jar:/soft/hbase/bin/../lib/hbase-shell-1.2.6.jar:/soft/hbase/bin/../lib/hbase-thrift-1.2.6.jar:/soft/hbase/bin/../lib/htrace-core-3.1.0-incubating.jar:/soft/hbase/bin/../lib/httpclient-4.2.5.jar:/soft/hbase/bin/../lib/httpcore-4.4.1.jar:/soft/hbase/bin/../lib/jackson-core-asl-1.9.13.jar:/soft/hbase/bin/../lib/jackson-jaxrs-1.9.13.jar:/soft/hbase/bin/../lib/jackson-mapper-
asl-1.9.13.jar:/soft/hbase/bin/../lib/jackson-xc-1.9.13.jar:/soft/hbase/bin/../lib/jamon-runtime-2.4.1.jar:/soft/hbase/bin/../lib/jasper-compiler-5.5.23.jar:/soft/hbase/bin/../lib/jasper-runtime-5.5.23.jar:/soft/hbase/bin/../lib/javax.inject-1.jar:/soft/hbase/bin/../lib/java-xmlbuilder-0.4.jar:/soft/hbase/bin/../lib/jaxb-api-2.2.2.jar:/soft/hbase/bin/../lib/jaxb-impl-2.2.3-1.jar:/soft/hbase/bin/../lib/jcodings-1.0.8.jar:/soft/hbase/bin/../lib/jersey-client-1.9.jar:/soft/hbase/bin/../lib/jersey-core-1.9.jar:/soft/hbase/bin/../lib/jersey-guice-1.9.jar:/soft/hbase/bin/../lib/jersey-json-1.9.jar:/soft/hbase/bin/../lib/jersey-server-1.9.jar:/soft/hbase/bin/../lib/jets3t-0.9.0.jar:/soft/hbase/bin/../lib/jettison-1.3.3.jar:/soft/hbase/bin/../lib/jetty-6.1.26.jar:/soft/hbase/bin/../lib/jetty-sslengine-6.1.26.jar:/soft/hbase/bin/../lib/jetty-util-6.1.26.jar:/soft/hbase/bin/../lib/joni-2.1.2.jar:/soft/hbase/bin/../lib/jruby-complete-1.6.8.jar:/soft/hbase/bin/../lib/jsch-0.1.42.jar:/soft/hbase/bin/../lib/jsp-2.1-6.1.14.jar:/soft/hbase/bin/../lib/jsp-api-2.1-6.1.14.jar:/soft/hbase/bin/../lib/junit-4.12.jar:/soft/hbase/bin/../lib/leveldbjni-all-1.8.jar:/soft/hbase/bin/../lib/libthrift-0.9.3.jar:/soft/hbase/bin/../lib/log4j-1.2.17.jar:/soft/hbase/bin/../lib/metrics-core-2.2.0.jar:/soft/hbase/bin/../lib/MyHbase-1.0-SNAPSHOT.jar:/soft/hbase/bin/../lib/netty-all-4.0.23.Final.jar:/soft/hbase/bin/../lib/paranamer-2.3.jar:/soft/hbase/bin/../lib/phoenix-4.10.0-HBase-1.2-client.jar:/soft/hbase/bin/../lib/protobuf-java-2.5.0.jar:/soft/hbase/bin/../lib/servlet-api-2.5-6.1.14.jar:/soft/hbase/bin/../lib/servlet-api-2.5.jar:/soft/hbase/bin/../lib/slf4j-api-1.7.7.jar:/soft/hbase/bin/../lib/slf4j-log4j12-1.7.5.jar:/soft/hbase/bin/../lib/snappy-java-1.0.4.1.jar:/soft/hbase/bin/../lib/spymemcached-2.11.6.jar:/soft/hbase/bin/../lib/xmlenc-0.52.jar:/soft/hbase/bin/../lib/xz-1.0.jar:/soft/hbase/bin/../lib/zookeeper-3.4.6.jar:/soft/hadoop-2.7.3/etc/hadoop:/soft/hadoop-2.7.3/share/hadoop/common/lib/*:
/soft/hadoop-2.7.3/share/hadoop/common/*:/soft/hadoop-2.7.3/share/hadoop/hdfs:/soft/hadoop-2.7.3/share/hadoop/hdfs/lib/*:/soft/hadoop-2.7.3/share/hadoop/hdfs/*:/soft/hadoop-2.7.3/share/hadoop/yarn/lib/*:/soft/hadoop-2.7.3/share/hadoop/yarn/*:/soft/hadoop-2.7.3/share/hadoop/mapreduce/lib/*:/soft/hadoop-2.7.3/share/hadoop/mapreduce/*::/soft/hive/lib/*:/contrib/capacity-scheduler/*.jar:/conf:/lib/*' -Djava.library.path=:/soft/hadoop-2.7./lib/native:/soft/hadoop-2.7./lib/native org.apache.flume.node.Application -f /soft/flume/conf/yinzhengjie_hdfssink.conf -n a1

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/soft/apache-flume-1.8.-bin/lib/slf4j-log4j12-1.6..jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/soft/hadoop-2.7./share/hadoop/common/lib/slf4j-log4j12-1.7..jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/soft/hbase-1.2./lib/phoenix-4.10.-HBase-1.2-client.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/soft/hbase-1.2./lib/slf4j-log4j12-1.7..jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/soft/apache-hive-2.1.-bin/lib/log4j-slf4j-impl-2.4..jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

// :: INFO node.PollingPropertiesFileConfigurationProvider: Configuration provider starting

// :: INFO node.PollingPropertiesFileConfigurationProvider: Reloading configuration file:/soft/flume/conf/yinzhengjie_hdfssink.conf

// :: INFO conf.FlumeConfiguration: Added sinks: k1 Agent: a1

// :: INFO conf.FlumeConfiguration: Processing:k1

// :: INFO conf.FlumeConfiguration: Processing:k1

// :: INFO conf.FlumeConfiguration: Processing:k1

// :: INFO conf.FlumeConfiguration: Processing:k1

// :: INFO conf.FlumeConfiguration: Post-validation flume configuration contains configuration for agents: [a1]

// :: INFO node.AbstractConfigurationProvider: Creating channels

// :: INFO channel.DefaultChannelFactory: Creating instance of channel c1 type memory

// :: INFO node.AbstractConfigurationProvider: Created channel c1

// :: INFO source.DefaultSourceFactory: Creating instance of source r1, type netcat

// :: INFO sink.DefaultSinkFactory: Creating instance of sink: k1, type: cn.org.yinzhengjie.sink.HdfsSink

// :: INFO node.AbstractConfigurationProvider: Channel c1 connected to [r1, k1]

// :: INFO node.Application: Starting new configuration:{ sourceRunners:{r1=EventDrivenSourceRunner: { source:org.apache.flume.source.NetcatSource{name:r1,state:IDLE} }} sinkRunners:{k1=SinkRunner: { policy:org.apache.flume.sink.DefaultSinkProcessor@1ce60deb counterGroup:{ name:null counters:{} } }} channels:{c1=org.apache.flume.channel.MemoryChannel{name: c1}} }

// :: INFO node.Application: Starting Channel c1

// :: INFO instrumentation.MonitoredCounterGroup: Monitored counter group for type: CHANNEL, name: c1: Successfully registered new MBean.

// :: INFO instrumentation.MonitoredCounterGroup: Component type: CHANNEL, name: c1 started

// :: INFO node.Application: Starting Sink k1

// :: INFO node.Application: Starting Source r1

// :: INFO source.NetcatSource: Source starting

// :: INFO source.NetcatSource: Created serverSocket:sun.nio.ch.ServerSocketChannelImpl[/127.0.0.1:]


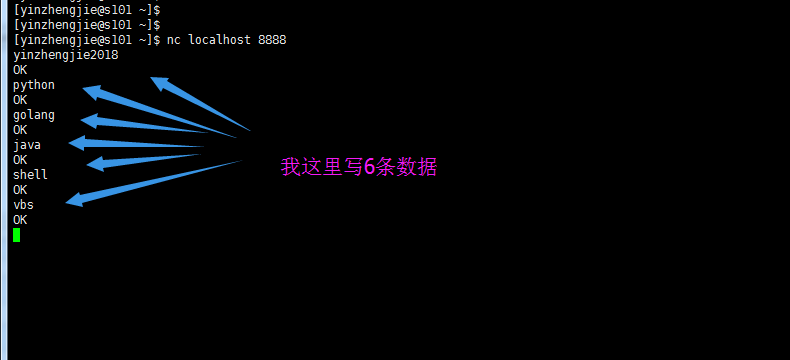

c>. Connect to the source

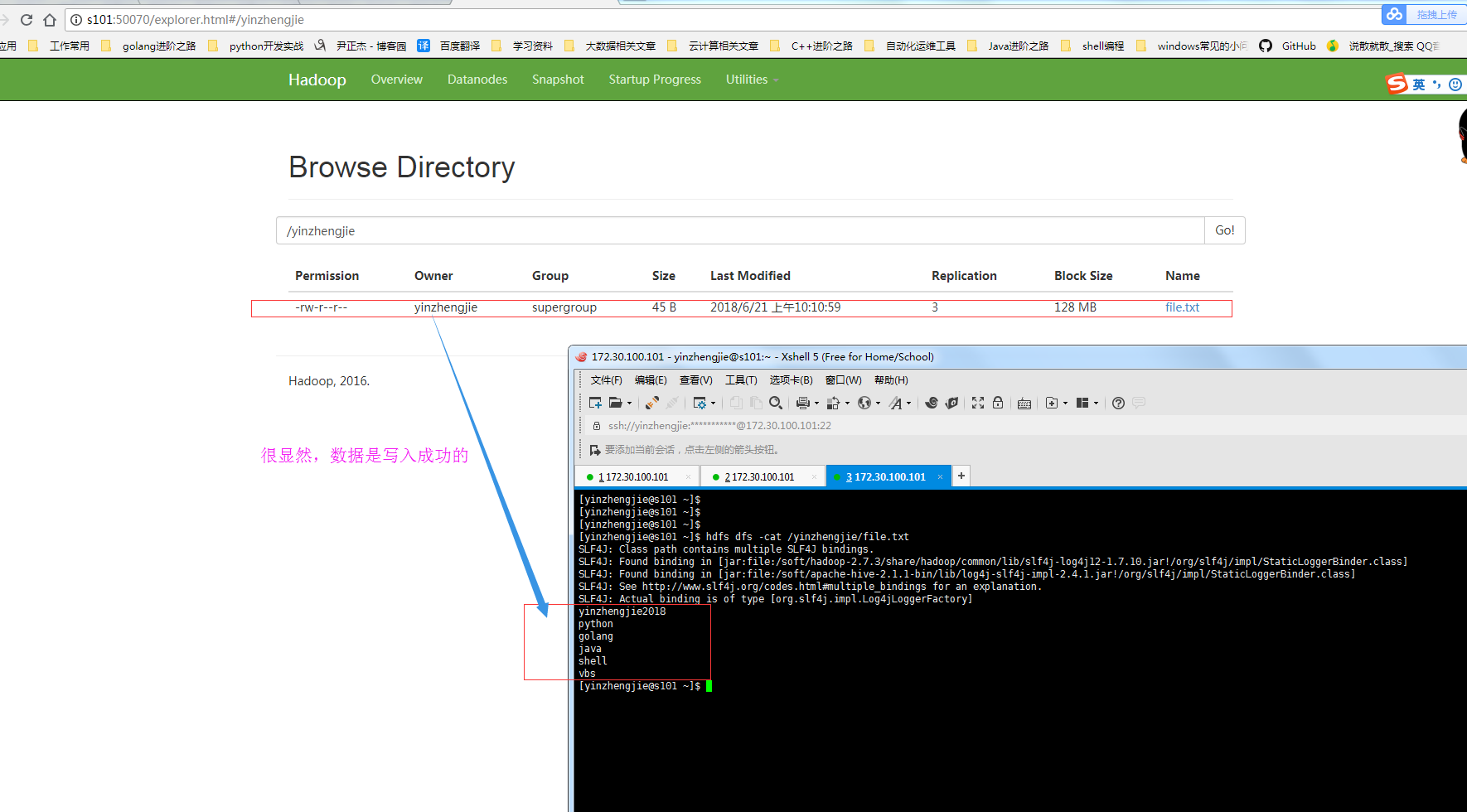

d>. Check that the data was written to HDFS

III. A custom HBase sink

(To be continued...)
